Scaling Linear Algebra Kernels using Remote Memory Access

Manoj Krishnan (High Performance Computing, PNNL); Robert R. Lewis (EECS, Washington State University); Abhinav Vishnu (High Performance Computing, PNNL)

Overview

- Programming Model
  - Remote Memory Access
- RMA-based Linear Algebra Kernels
  - Dense Matrix Multiply: dgemm
  - LU Factorization
- Experimental Results

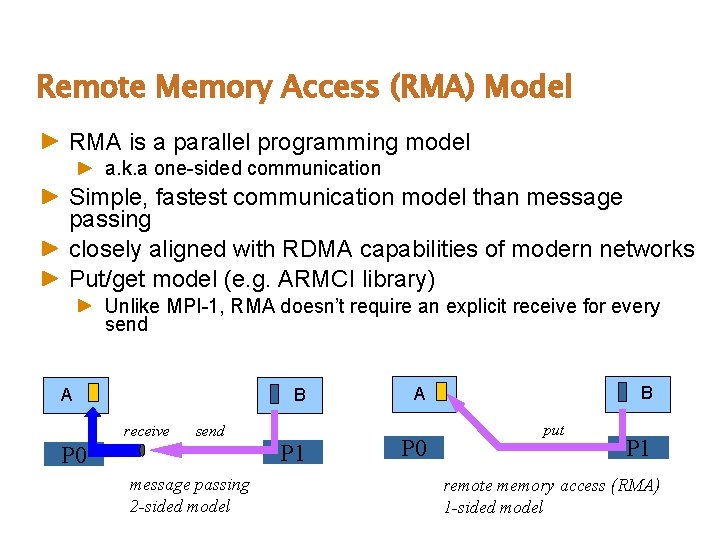

Remote Memory Access (RMA) Model

- RMA is a parallel programming model, a.k.a. one-sided communication
- Simpler, faster communication model than message passing, closely aligned with the RDMA capabilities of modern networks
- Put/get model (e.g., the ARMCI library)
- Unlike MPI-1, RMA doesn't require an explicit receive for every send

Figure: message passing, a two-sided model (P0 sends A; P1 receives it into B), vs. remote memory access (RMA), a one-sided model (P0 puts A directly into B on P1).
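The following toy model contrasts the two styles in the figure. It loosely mimics the put/get style of libraries such as ARMCI. The class and method names are hypothetical and are not the ARMCI API. In the one-sided case the target process takes no action. In the two-sided case the data stays queued until the destination posts a matching receive.

```python
from collections import deque


class GlobalMemory:
    """Toy one-sided model: every process owns a segment that any process may put/get."""

    def __init__(self, nprocs, size):
        self.segments = [[0.0] * size for _ in range(nprocs)]

    def put(self, data, dst_proc, offset):
        # The origin alone completes the transfer; the target executes no receive.
        self.segments[dst_proc][offset:offset + len(data)] = list(data)

    def get(self, src_proc, offset, count):
        # One-sided read of remote data; again, no action by src_proc is required.
        return self.segments[src_proc][offset:offset + count]


class Mailbox:
    """Toy two-sided model: a send is only consumed by a matching receive."""

    def __init__(self, nprocs):
        self.queues = [deque() for _ in range(nprocs)]

    def send(self, data, dst_proc):
        self.queues[dst_proc].append(list(data))

    def recv(self, me):
        # The destination must call recv explicitly to obtain the data.
        return self.queues[me].popleft()


# One-sided: P0 writes into P1's memory; P1 does nothing.
mem = GlobalMemory(nprocs=2, size=4)
mem.put([1.0, 2.0], dst_proc=1, offset=1)
print(mem.get(src_proc=1, offset=0, count=4))   # [0.0, 1.0, 2.0, 0.0]

# Two-sided: the data sits in a queue until P1 posts the matching receive.
box = Mailbox(nprocs=2)
box.send([1.0, 2.0], dst_proc=1)
print(box.recv(me=1))                            # [1.0, 2.0]
```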

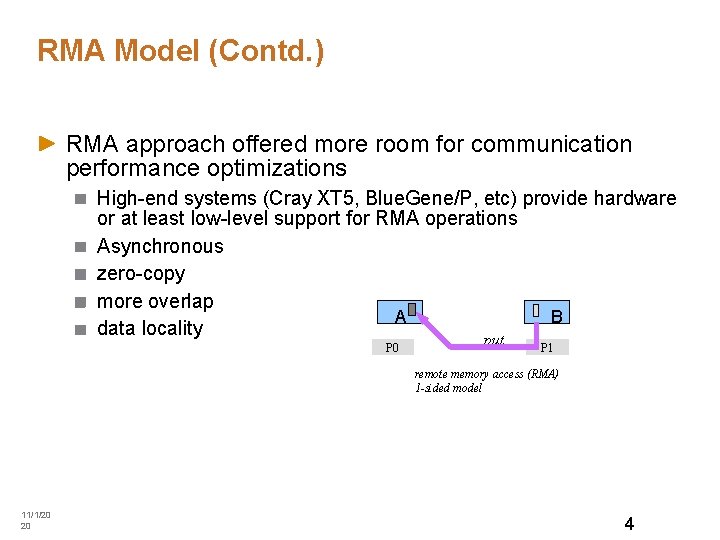

RMA Model (Contd.)

- The RMA approach offers more room for communication performance optimizations
- High-end systems (Cray XT5, Blue Gene/P, etc.) provide hardware or at least low-level support for RMA operations
- Asynchronous
- Zero-copy
- More overlap
- Data locality

Figure: RMA one-sided model (P0 puts A into B on P1).

11/1/2020

MPI-2 RMA Model

- The MPI-2 one-sided API (MPI_Get, MPI_Put, etc.), proposed eleven years ago, is too restrictive for actual application use
- MPI-2 RMA differs from vendor-specific low-level RMA interfaces
  - Differences in progress rules and semantics affect performance
  - E.g., MPI-2 RMA involves synchronization between source and destination
- MPI-2 RMA does not favor global address space languages due to excessive synchronization and complex progress rules
- MPI-3 RMA initiative: recently formed to address these limitations of MPI-2 RMA

Our RMA Model

- Our RMA model is based on simpler progress rules, which are motivated by hardware support for RMA operations on current architectures
- Unlike the MPI-2 "active" communication model, one-sided operations complete regardless of the actions taken by the remote process
- Similar to the MPI-2 "passive" model, but does not require locks
- Relaxed memory consistency model

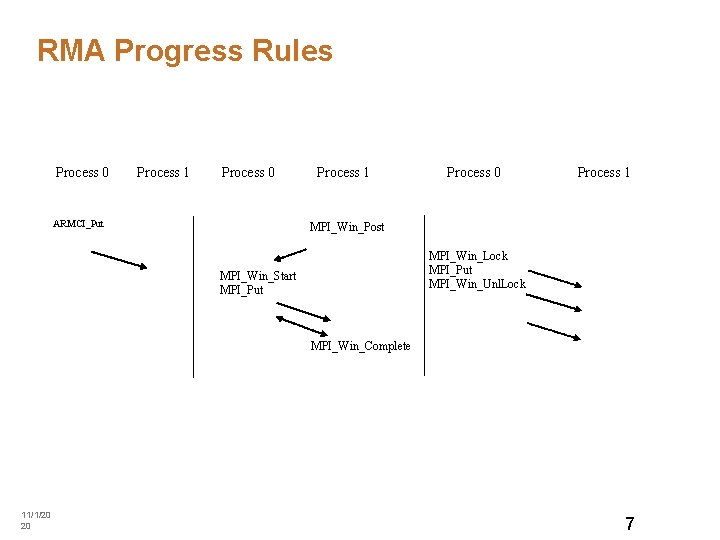

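RMA Progress Rules

Figure: timelines for Process 0 and Process 1 under three models: (1) ARMCI, where Process 0 simply calls ARMCI_Put; (2) MPI-2 passive target, where Process 0 calls MPI_Win_lock, MPI_Put, MPI_Win_unlock; (3) MPI-2 active target, where Process 1 calls MPI_Win_post and Process 0 calls MPI_Win_start, MPI_Put, MPI_Win_complete.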
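The toy model below illustrates the progress-rule difference. Under the simple rule, a put completes without any action from the target. Under an active-target (post/start/put/complete) rule, the origin cannot finish until the target has posted. `ToyWindow` and its methods are hypothetical and are not the MPI or ARMCI API. A `threading.Event` stands in for the target's post.

```python
import threading


class ToyWindow:
    """Hypothetical model of remotely accessible memory; not the MPI or ARMCI API."""

    def __init__(self, size):
        self.mem = [0] * size
        self.posted = threading.Event()  # set when the target opens an exposure epoch

    def put_any_time(self, data, offset):
        # Simple progress rule (ARMCI-like): completes whatever the target is doing.
        self.mem[offset:offset + len(data)] = data
        return True

    def put_after_post(self, data, offset, timeout):
        # Active-target style: the origin cannot complete its epoch
        # until the target has posted.
        if not self.posted.wait(timeout):
            return False
        self.mem[offset:offset + len(data)] = data
        return True


win = ToyWindow(4)
ok_simple = win.put_any_time([7, 8], 0)               # True: no target involvement
ok_no_post = win.put_after_post([9], 2, timeout=0.05)  # False: target never posted
threading.Timer(0.01, win.posted.set).start()          # target posts a little later
ok_after_post = win.put_after_post([9], 2, timeout=2.0)
print(ok_simple, ok_no_post, ok_after_post, win.mem)   # True False True [7, 8, 9, 0]
```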

One-sided vs. Message Passing

Message passing:
- Communication patterns are regular or at least predictable
- Algorithms have a high degree of synchronization
- Data consistency is straightforward

One-sided:
- Communication is irregular
- Load balancing
- Algorithms are asynchronous
- Data consistency must be explicitly managed
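One-sided communication suits irregular, load-balanced work. A process can claim the next task by atomically incrementing a shared counter, and the process that owns the counter does not have to participate. Global Arrays offers this style of operation as `NGA_Read_inc`. The sketch below is a toy Python stand-in. Threads play the role of processes, and a lock models the atomicity that the RMA runtime would provide. All names are hypothetical.

```python
import threading


class SharedCounter:
    """Stand-in for a counter that any process can atomically fetch-and-add remotely."""

    def __init__(self):
        self._value = 0
        self._lock = threading.Lock()   # models the atomicity the RMA runtime provides

    def fetch_and_add(self, inc=1):
        with self._lock:
            old = self._value
            self._value += inc
            return old


def worker(counter, ntasks, mine):
    # Each worker claims the next unclaimed task; no master process hands out work.
    while True:
        task = counter.fetch_and_add()
        if task >= ntasks:
            return
        mine.append(task)           # "execute" the task by recording it


counter = SharedCounter()
claimed = [[] for _ in range(4)]
threads = [threading.Thread(target=worker, args=(counter, 100, claimed[r]))
           for r in range(4)]
for th in threads:
    th.start()
for th in threads:
    th.join()
all_tasks = sorted(t for mine in claimed for t in mine)
print(all_tasks == list(range(100)))   # True: every task claimed exactly once
```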

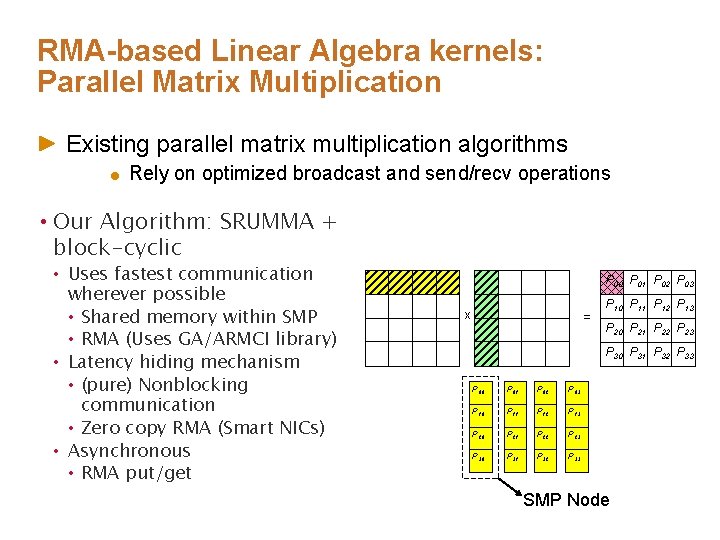

RMA-based Linear Algebra Kernels: Parallel Matrix Multiplication

- Existing parallel matrix multiplication algorithms rely on optimized broadcast and send/recv operations
- Our algorithm: SRUMMA + block-cyclic distribution
  - Uses the fastest communication wherever possible
    - Shared memory within an SMP node
    - RMA (uses the GA/ARMCI library)
  - Latency-hiding mechanisms
    - (Pure) nonblocking communication
    - Zero-copy RMA (smart NICs)
    - Asynchronous
    - RMA put/get

Figure: C = A × B with A, B, and C distributed over a 4 × 4 process grid (P00 to P33); groups of processes share an SMP node.
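The sketch below shows which blocks a process must fetch under a 2-D block-cyclic distribution. It assumes that global block (bi, bj) is owned by grid process (bi mod p, bj mod q) and that C is partitioned into nblocks × nblocks blocks. The function names are hypothetical. Take the case of a 4 × 4 grid with one block per process. Process (0, 0) then needs A blocks from its grid row, (0, 0) to (0, 3), and B blocks from its grid column, (0, 0) to (3, 0).

```python
def owner(bi, bj, p, q):
    """Grid coordinates of the process owning global block (bi, bj), 2-D block-cyclic."""
    return (bi % p, bj % q)


def tasks_for(i, j, nblocks, p, q):
    """Block products process (i, j) must perform to compute its share of C = A x B.

    Each task is (C block, A block, B block, owner of A block, owner of B block).
    """
    tasks = []
    for bi in range(i, nblocks, p):          # C block rows owned by (i, j)
        for bj in range(j, nblocks, q):      # C block columns owned by (i, j)
            for bk in range(nblocks):        # inner dimension
                tasks.append(((bi, bj), (bi, bk), (bk, bj),
                              owner(bi, bk, p, q), owner(bk, bj, p, q)))
    return tasks


# One block per process on a 4 x 4 grid (plain block distribution).
t = tasks_for(0, 0, nblocks=4, p=4, q=4)
print(sorted({task[3] for task in t}))   # [(0, 0), (0, 1), (0, 2), (0, 3)]
print(sorted({task[4] for task in t}))   # [(0, 0), (1, 0), (2, 0), (3, 0)]

# Two blocks per dimension per process (block-cyclic): 4 C blocks x 8 inner steps.
print(len(tasks_for(0, 0, nblocks=8, p=4, q=4)))   # 32
```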

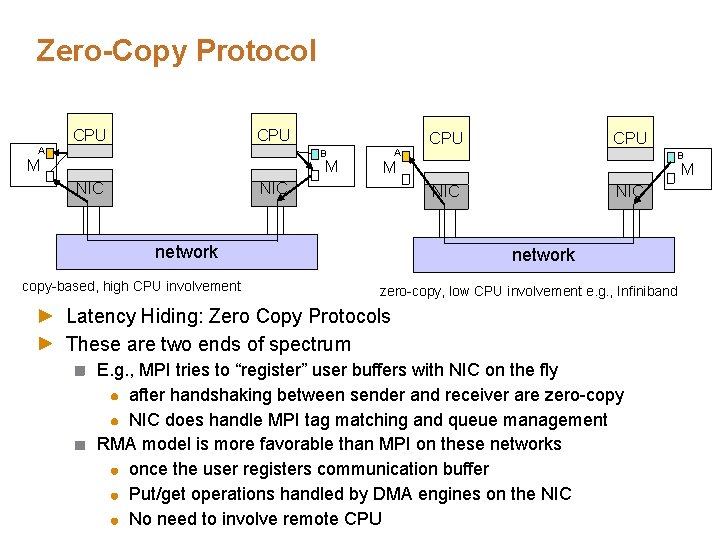

Latency Hiding: Zero-Copy Protocols

Figure (zero-copy protocol): a copy-based transfer with high CPU involvement vs. a zero-copy transfer with low CPU involvement (e.g., InfiniBand); each side shows a CPU, memory (M), and a NIC attached to the network.

- These are two ends of a spectrum
- E.g., MPI tries to "register" user buffers with the NIC on the fly; transfers are zero-copy after a handshake between sender and receiver
- The NIC does handle MPI tag matching and queue management
- The RMA model is more favorable than MPI on these networks once the user registers the communication buffer
  - Put/get operations are handled by DMA engines on the NIC
  - No need to involve the remote CPU
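Here is a deliberately crude model of host-CPU involvement in the three cases above. It covers copying through bounce buffers, registering the user buffer for every message, and registering it once up front. Network time is ignored, and all parameter values are hypothetical, chosen only to show how the costs scale. Real MPI libraries may cache registrations, so this is not a measurement of any particular implementation.

```python
def cpu_overhead(nbytes, nmsgs, memcpy_bw, reg_cost, mode):
    """Toy estimate of CPU seconds spent on nmsgs transfers of nbytes each.

    memcpy_bw: bytes/s for a CPU memory copy; reg_cost: seconds to register (pin)
    one buffer. Network transfer time is ignored; only host-CPU work is modeled.
    """
    if mode == "copy":
        # Staged through bounce buffers: one copy at the sender, one at the receiver.
        return nmsgs * 2 * nbytes / memcpy_bw
    if mode == "zero_copy_on_the_fly":
        # User buffer registered with the NIC for every message, then DMA'd directly.
        return nmsgs * reg_cost
    if mode == "zero_copy_preregistered":
        # Buffer registered once; afterwards the NIC's DMA engines do the work.
        return reg_cost
    raise ValueError(f"unknown mode: {mode}")


# Hypothetical numbers: 100 messages of 1 MB, 5 GB/s memcpy, 50 us per registration.
modes = ("copy", "zero_copy_on_the_fly", "zero_copy_preregistered")
costs = {mode: cpu_overhead(1_000_000, 100, 5e9, 50e-6, mode) for mode in modes}
for mode in modes:
    print(f"{mode:>24}: {costs[mode] * 1e3:.2f} ms of CPU time")
```

The model only captures one trend. Copy cost grows with message size. Registration cost grows with the number of registrations, and registering once amortizes it across all later puts and gets.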

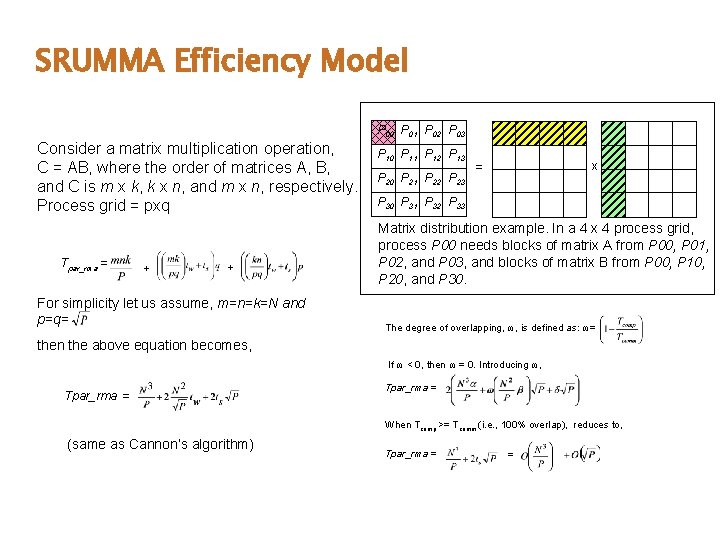

SRUMMA Efficiency Model

Consider a matrix multiplication operation, C = AB, where the orders of matrices A, B, and C are m × k, k × n, and m × n, respectively, and the process grid is p × q. The model expresses the parallel execution time, Tpar_rma, as a sum of three terms. For simplicity, assume m = n = k = N and p = q = √P, where P = pq is the total number of processes.

Figure: matrix distribution example (C = A × B on a 4 × 4 process grid, P00 to P33). In a 4 × 4 process grid, process P00 needs blocks of matrix A from P00, P01, P02, and P03, and blocks of matrix B from P00, P10, P20, and P30.

The model then introduces the degree of overlapping, ω; if ω < 0, then ω = 0. When Tcomp ≥ Tcomm (i.e., 100% overlap), Tpar_rma reduces to the same form as for Cannon's algorithm.
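To make the overlap argument concrete, here is a generic compute/communication model under stated assumptions. It is not claimed to be the exact SRUMMA equation. The assumptions are a square √P × √P grid with one (N/√P) × (N/√P) block per process, 2N³/P flops of local dgemm per process, and a latency-plus-bandwidth cost for each of the 2√P block gets. Only the communication time that computation cannot hide, max(0, Tcomm − Tcomp), is added to Tcomp. When Tcomp ≥ Tcomm the exposed part is zero, matching the 100%-overlap case. The parameter values in the usage lines are hypothetical.

```python
import math


def comp_comm_times(N, P, t_flop, t_latency, t_byte, elem_bytes=8):
    """Toy per-process compute and communication times for C = A x B, m = n = k = N.

    Assumes a square s x s grid (s = sqrt(P)) with one N/s x N/s block per process,
    so each process fetches s blocks of A (its grid row) and s blocks of B (its column).
    """
    s = math.isqrt(P)
    if s * s != P:
        raise ValueError("P must be a perfect square")
    t_comp = 2.0 * N**3 / P * t_flop        # 2N^3/P flops of local dgemm
    n_gets = 2 * s                          # s blocks of A plus s blocks of B
    nbytes = n_gets * (N / s) ** 2 * elem_bytes
    t_comm = n_gets * t_latency + nbytes * t_byte
    return t_comp, t_comm


def overlapped_time(t_comp, t_comm):
    """Add only the communication that computation cannot hide (clamped at zero)."""
    exposed = max(0.0, t_comm - t_comp)
    return t_comp + exposed


# Hypothetical machine parameters: 10 GFLOP/s per core, 5 us latency, 1 GB/s.
t_comp, t_comm = comp_comm_times(N=8192, P=64, t_flop=1e-10,
                                 t_latency=5e-6, t_byte=1e-9)
print(t_comp, t_comm, overlapped_time(t_comp, t_comm))
```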

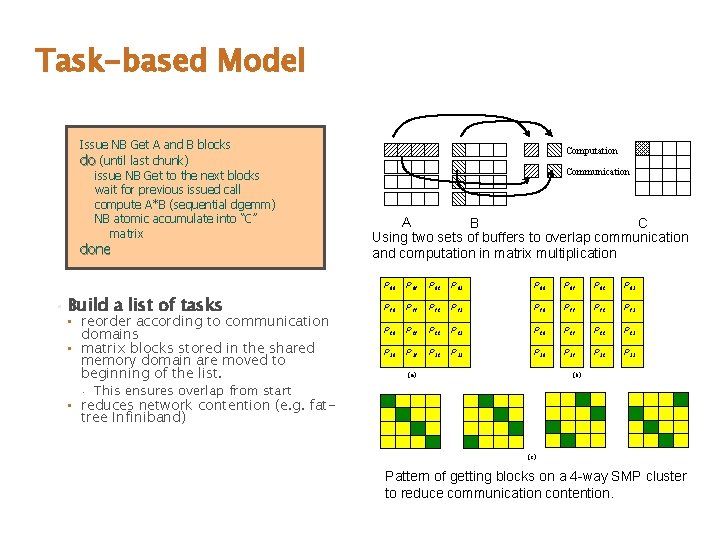

Task-based Model

Per-process loop (C = AB):
- Issue NB get for the first A and B blocks
- Do (until the last chunk):
  - Issue NB get for the next blocks
  - Wait for the previously issued call
  - Compute A*B (sequential dgemm)
  - NB atomic accumulate into the "C" matrix

Task ordering:
- Build a list of tasks
- Reorder it according to communication domains: matrix blocks stored in the shared-memory domain are moved to the beginning of the list, which ensures overlap from the start
- Reduces network contention (e.g., on fat-tree InfiniBand)

Figure: (a) using two sets of buffers to overlap communication and computation in matrix multiplication of A, B, and C; (b) a 4 × 4 process grid, P00 to P33; (c) pattern of getting blocks on a 4-way SMP cluster to reduce communication contention.
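The loop and task ordering above can be sketched in Python. A single background worker thread stands in for nonblocking one-sided gets: while the main thread multiplies block pair i, the worker fetches pair i + 1 (two buffer sets). The following are assumptions for illustration:
- The `fetch` callback.
- The task dictionaries.
- Four processes per SMP node (`rank // 4`).
- Local accumulation into C, where the slide uses an NB atomic accumulate into the distributed C matrix.

```python
from concurrent.futures import ThreadPoolExecutor


def matmul(a, b):
    """Plain-Python stand-in for the sequential dgemm on one pair of blocks."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def order_tasks(tasks, my_node, node_of):
    """Move tasks whose blocks live on this SMP node to the front (stable sort)."""
    return sorted(tasks, key=lambda t: node_of(t["owner"]) != my_node)


def pipelined_multiply(tasks, fetch, c):
    """Double-buffered loop: fetch block pair i+1 while computing on block pair i.

    fetch(task) -> (a_block, b_block) stands in for a nonblocking one-sided get.
    """
    if not tasks:
        return c
    with ThreadPoolExecutor(max_workers=1) as comm:
        pending = comm.submit(fetch, tasks[0])          # issue NB get for first blocks
        for idx in range(len(tasks)):
            nxt = (comm.submit(fetch, tasks[idx + 1])   # issue NB get for next blocks
                   if idx + 1 < len(tasks) else None)
            a_blk, b_blk = pending.result()             # wait for previously issued get
            for i, row in enumerate(matmul(a_blk, b_blk)):
                for j, v in enumerate(row):
                    c[i][j] += v                        # accumulate (locally here)
            pending = nxt
    return c


# C (2x2) = A (2x4) x B (4x2), split along k into two 2x2 block pairs.
A_blocks = {0: [[1, 2], [5, 6]], 1: [[3, 4], [7, 8]]}
B_blocks = {0: [[1, 0], [0, 1]], 1: [[1, 0], [0, 1]]}
tasks = [{"k": 0, "owner": 5}, {"k": 1, "owner": 1}]   # rank 5 is on another node
ordered = order_tasks(tasks, my_node=0, node_of=lambda rank: rank // 4)
C = pipelined_multiply(ordered, lambda t: (A_blocks[t["k"]], B_blocks[t["k"]]),
                       [[0, 0], [0, 0]])
print([t["k"] for t in ordered], C)   # [1, 0] [[4, 6], [12, 14]]
```

The on-node block pair (k = 1) is processed first. It is available almost immediately, so the pipeline starts computing while the off-node fetch is still in flight.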

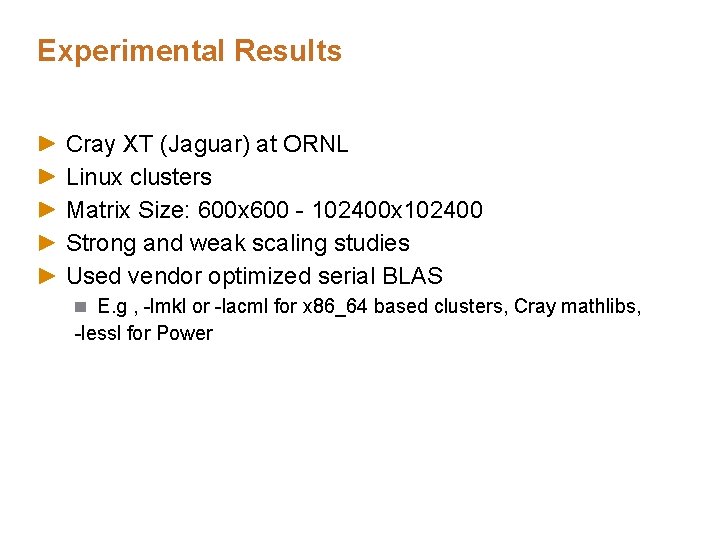

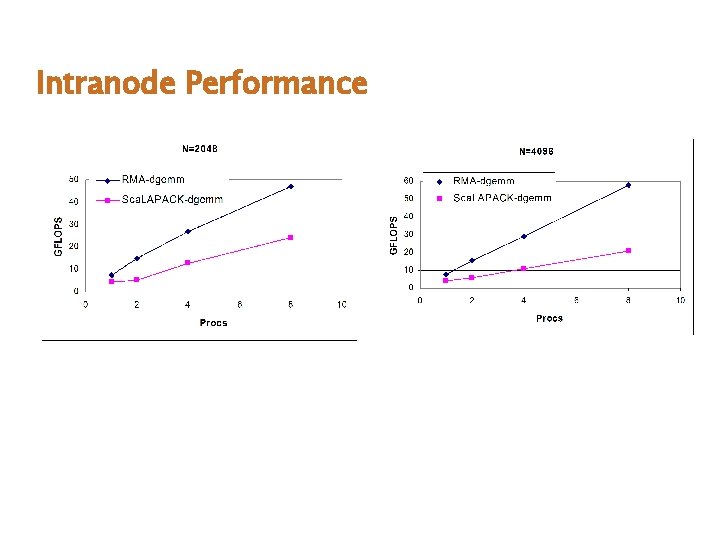

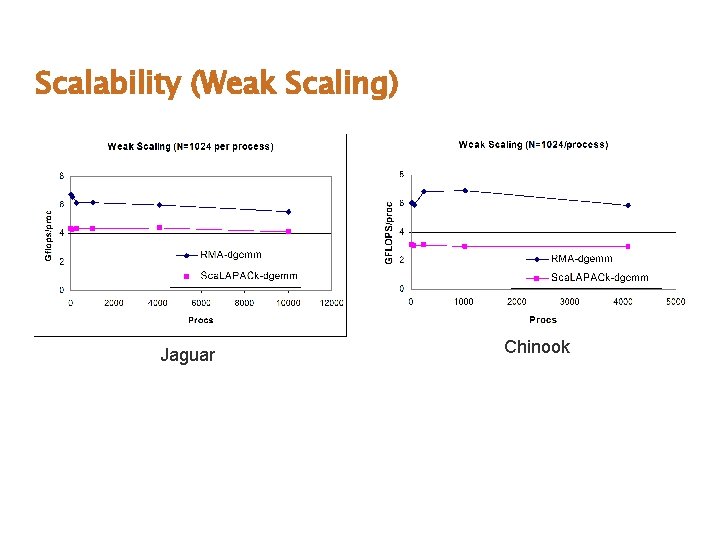

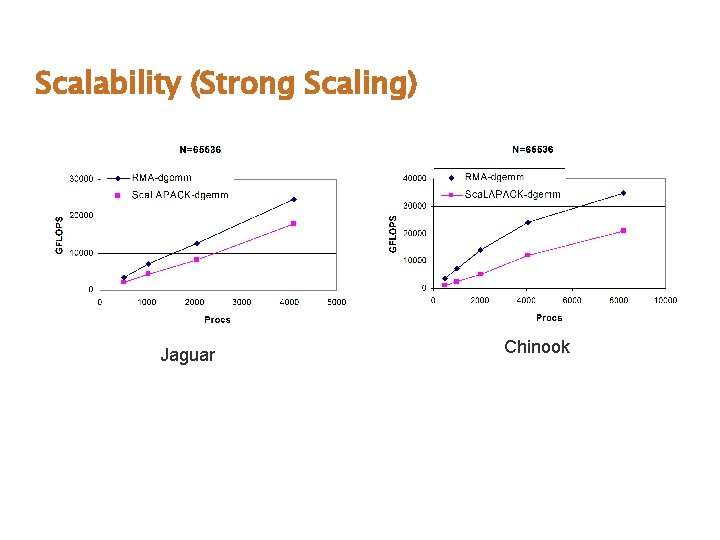

Experimental Results

- Cray XT (Jaguar) at ORNL
- Linux clusters
- Matrix sizes: 600 × 600 to 102400 × 102400
- Strong and weak scaling studies
- Used vendor-optimized serial BLAS, e.g., -lmkl or -lacml for x86_64-based clusters, Cray mathlibs, -lessl for Power

Intranode Performance

Scalability (Weak Scaling)

Figure panels: Jaguar, Chinook

Scalability (Strong Scaling)

Figure panels: Jaguar, Chinook

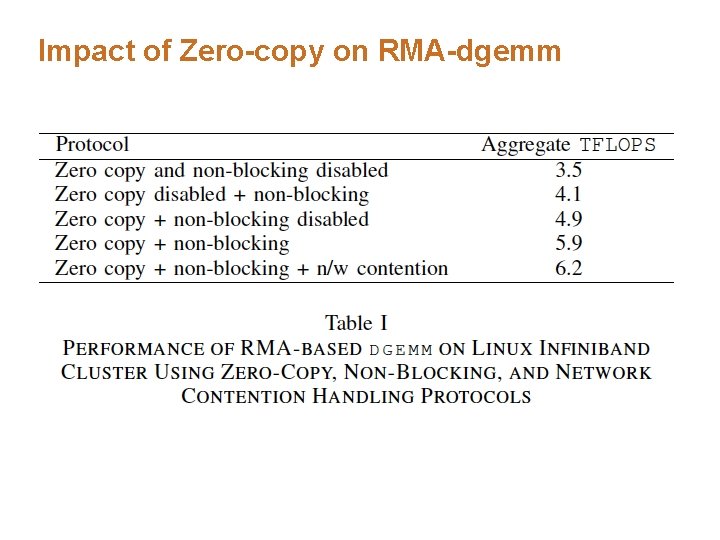

Impact of Zero-copy on RMA-dgemm

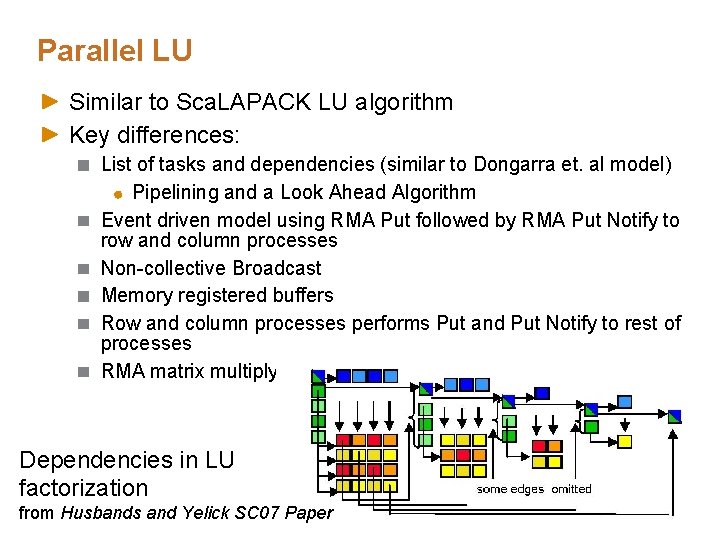

Parallel LU

- Similar to the ScaLAPACK LU algorithm
- Key differences:
  - List of tasks and dependencies (similar to the Dongarra et al. model)
  - Pipelining and a look-ahead algorithm
  - Event-driven model using RMA put followed by RMA put-notify to row and column processes
  - Non-collective broadcast
    - Memory-registered buffers
    - Row and column processes perform put and put-notify to the rest of the processes
  - RMA matrix multiply

Figure: dependencies in LU factorization, from the Husbands and Yelick SC07 paper.
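This sketch shows the task-list-with-dependencies idea and a depth-1 look-ahead. It uses the task graph of a right-looking blocked LU with nb block columns, simplified by omitting pivoting and all communication. Ready tasks run one at a time in priority order: panel factorizations first, then the update that feeds the next panel, then everything else. Task names and the priority rule are assumptions for illustration, not the authors' scheduler. Decrementing a dependency counter plays the role of the "event" that a put-notify would deliver.

```python
import heapq


def lu_task_graph(nb):
    """Dependencies of a right-looking blocked LU with nb block columns (no pivoting).

    ("P", k): factor panel k.  ("U", k, j): update block column j with panel k.
    """
    deps = {}
    for k in range(nb):
        deps[("P", k)] = [("U", k - 1, k)] if k > 0 else []
        for j in range(k + 1, nb):
            deps[("U", k, j)] = [("P", k)] + ([("U", k - 1, j)] if k > 0 else [])
    return deps


def priority(task):
    """Look-ahead rule: panels first, then the update feeding the next panel, then the rest."""
    if task[0] == "P":
        return (0, task[1])
    _, k, j = task
    return (1 if j == k + 1 else 2, k, j)


def lookahead_schedule(deps):
    """Run tasks one at a time as they become ready, picking the highest-priority one."""
    waiting = {t: len(d) for t, d in deps.items()}
    children = {t: [] for t in deps}
    for t, d in deps.items():
        for parent in d:
            children[parent].append(t)
    ready = [(priority(t), t) for t, n in waiting.items() if n == 0]
    heapq.heapify(ready)
    order = []
    while ready:
        _, task = heapq.heappop(ready)
        order.append(task)                     # "execute" the task
        for child in children[task]:
            waiting[child] -= 1
            if waiting[child] == 0:            # all inputs available: event fires
                heapq.heappush(ready, (priority(child), child))
    return order


print(lookahead_schedule(lu_task_graph(3)))
# [('P', 0), ('U', 0, 1), ('P', 1), ('U', 0, 2), ('U', 1, 2), ('P', 2)]
```

Panel 1 is factored before the remaining update of step 0 (`('U', 0, 2)`), so the critical path advances early. With several workers, those remaining updates would overlap the next panel factorization.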

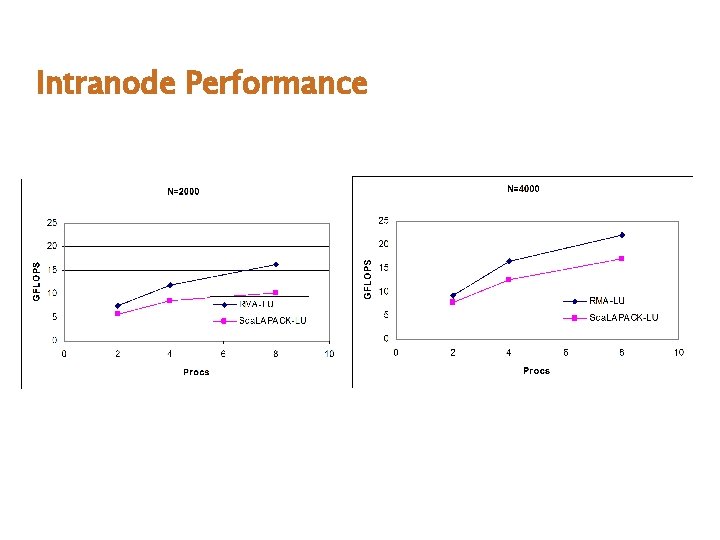

Intranode Performance

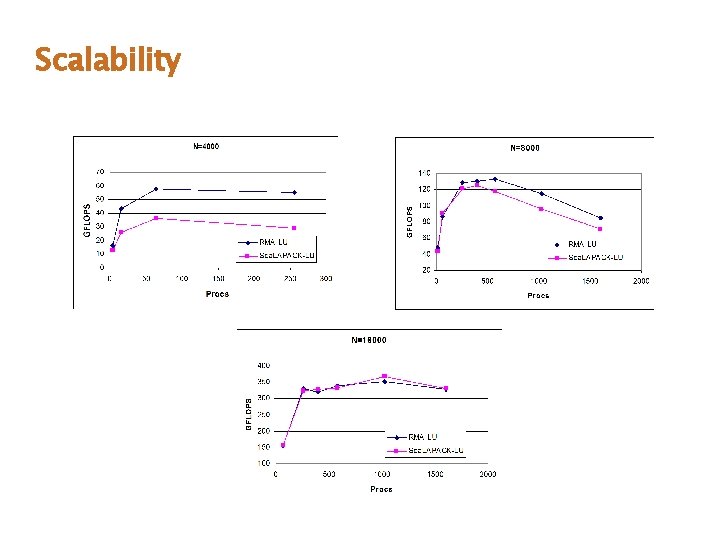

Scalability

Summary

- Programming Model
  - Remote Memory Access
- RMA-based Linear Algebra Kernels
  - Dense Matrix Multiply: dgemm
  - LU Factorization
- Experimental Results

Thank You
