
Probabilistic Reasoning

Bayes' Theorem

• In probability theory and statistics, Bayes' theorem describes the probability of an event based on prior knowledge of conditions that might be related to the event.
• When applied, the probabilities involved in Bayes' theorem may have different probability interpretations.

• Bayes' theorem is stated mathematically as the following equation:
  P(A | B) = P(B | A) P(A) / P(B), where A and B are events and P(B) ≠ 0.
• P(A) and P(B) are the probabilities of A and B without regard to each other.
• P(A | B), a conditional probability, is the probability of observing event A given that B is true.
• P(B | A) is the probability of observing event B given that A is true.
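As a small worked example of the equation above, the sketch below computes a posterior from a prior and two likelihoods. The scenario (a diagnostic test) and all numbers are hypothetical, chosen only for illustration; P(B) is obtained from the law of total probability.

```python
def bayes_posterior(p_b_given_a: float, p_a: float, p_b: float) -> float:
    """Return P(A | B) = P(B | A) * P(A) / P(B)."""
    if p_b <= 0.0:
        raise ValueError("P(B) must be positive")
    return p_b_given_a * p_a / p_b


# Hypothetical numbers: A = "patient has the disease", B = "test is positive".
p_a = 0.01              # prior P(A)
p_b_given_a = 0.95      # P(B | A)
p_b_given_not_a = 0.05  # P(B | not A)

# Law of total probability: P(B) = P(B|A) P(A) + P(B|not A) P(not A).
p_b = p_b_given_a * p_a + p_b_given_not_a * (1.0 - p_a)

posterior = bayes_posterior(p_b_given_a, p_a, p_b)
print(f"P(A | B) = {posterior:.3f}")  # prints "P(A | B) = 0.161"
```

Even with a fairly accurate test, the low prior keeps the posterior small, which is the usual point of this kind of example.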

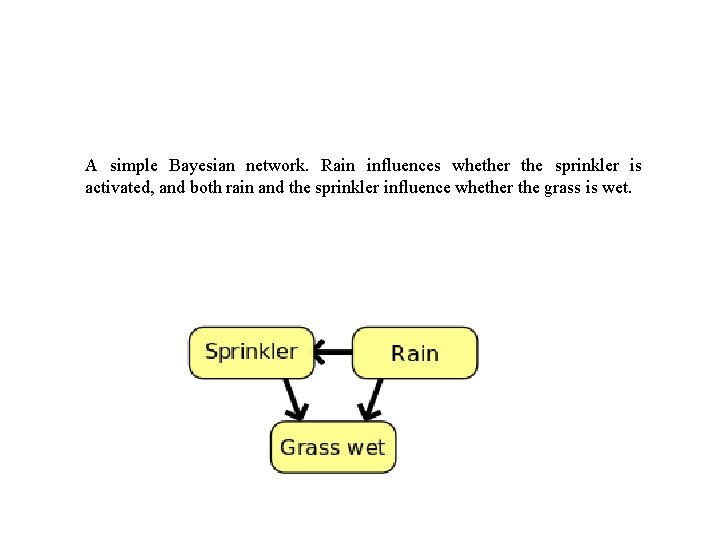

Bayesian Network

• A Bayesian network, Bayes network, belief network, Bayes(ian) model or probabilistic directed acyclic graphical model is a probabilistic graphical model (a type of statistical model) that represents a set of random variables and their conditional dependencies via a directed acyclic graph (DAG).
• For example, a Bayesian network could represent the probabilistic relationships between diseases and symptoms. Given symptoms, the network can be used to compute the probabilities of the presence of various diseases.

A simple Bayesian network. Rain influences whether the sprinkler is activated, and both rain and the sprinkler influence whether the grass is wet.

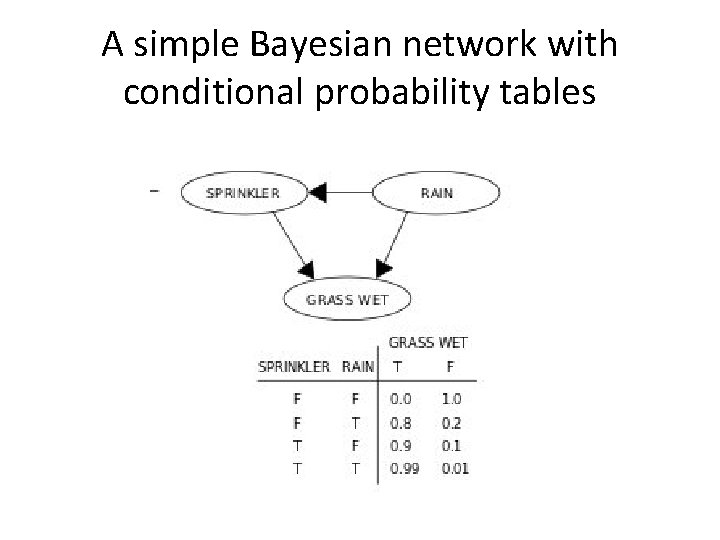

A simple Bayesian network with conditional probability tables
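The rain / sprinkler / wet-grass network can be queried by enumerating the joint distribution, which factorises along the graph as P(R, S, G) = P(R) P(S | R) P(G | S, R). The sketch below is a minimal illustration, not a general inference engine. The conditional probability values are assumed for the example, since the tables themselves are only in the figure.

```python
from itertools import product

# Assumed conditional probability tables (illustrative values only).
P_RAIN = 0.2
P_SPRINKLER_GIVEN_RAIN = {True: 0.01, False: 0.4}  # key: rain
P_WET_GIVEN = {                                    # key: (sprinkler, rain)
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.8,
    (False, False): 0.0,
}


def joint(rain: bool, sprinkler: bool, wet: bool) -> float:
    """P(rain, sprinkler, wet) from the DAG factorisation."""
    p_r = P_RAIN if rain else 1.0 - P_RAIN
    p_s = P_SPRINKLER_GIVEN_RAIN[rain]
    p_s = p_s if sprinkler else 1.0 - p_s
    p_w = P_WET_GIVEN[(sprinkler, rain)]
    p_w = p_w if wet else 1.0 - p_w
    return p_r * p_s * p_w


def prob_rain_given_wet() -> float:
    """P(rain | grass wet), summing the sprinkler variable out."""
    numerator = sum(joint(True, s, True) for s in (True, False))
    denominator = sum(
        joint(r, s, True) for r, s in product((True, False), repeat=2)
    )
    return numerator / denominator


print(f"P(rain | wet grass) = {prob_rain_given_wet():.4f}")
```

Enumeration is exponential in the number of variables, so it only suits small networks like this one.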

Dempster-Shafer theory

• Dempster-Shafer theory is an approach to combining evidence.
• Dempster (1967) developed means for combining degrees of belief derived from independent items of evidence.

• Each fact has a degree of support between 0 and 1:
  – 0: no support for the fact
  – 1: full support for the fact
• Differs from the Bayesian approach in that:
  – Belief in a fact and its negation need not sum to 1.
  – Both values can be 0 (meaning no evidence for or against the fact).

• Set of possible conclusions:
  A = {A1, A2, ..., An}
• Uses the evidence which supports subsets of the outcomes in A, for example:
  A1 ∨ A2 ∨ A3, i.e. the subset {A1, A2, A3}

• Frame of discernment and power set:
• The frame of discernment is the set A of possible conclusions. Evidence is assigned to the elements of its power set, the set of all possible subsets of A.
• E.g. if A = {A1, A2, A3}
• then the power set is:
  {Ø, {A1}, {A2}, {A3}, {A1, A2}, {A1, A3}, {A2, A3}, {A1, A2, A3}}
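The set of all subsets can be enumerated mechanically, which is a handy check when writing it out by hand: a set with n elements has 2^n subsets, so a three-element set has 8, including the empty set and every pair. A minimal sketch, where the element names are just placeholder strings:

```python
from itertools import chain, combinations


def power_set(elements):
    """Return every subset of `elements` as a list of frozensets."""
    items = list(elements)
    all_sizes = (combinations(items, r) for r in range(len(items) + 1))
    return [frozenset(c) for c in chain.from_iterable(all_sizes)]


subsets = power_set(["A1", "A2", "A3"])
for s in subsets:
    print(sorted(s))
print(len(subsets))  # 8
```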

• Ø, the empty set, has a probability of 0, since one of the outcomes has to be true.
• Each of the other elements in the power set has a probability between 0 and 1.
• The probability of {A1, A2, A3} is 1.0, since one of them has to be true.

• Mass function m(A) = proportion of all evidence that supports this element of the power set (where A is a member of the power set).
• Each m(A) is between 0 and 1.
• All m(A) sum to 1.
• m(Ø) is 0, since at least one outcome must be true.

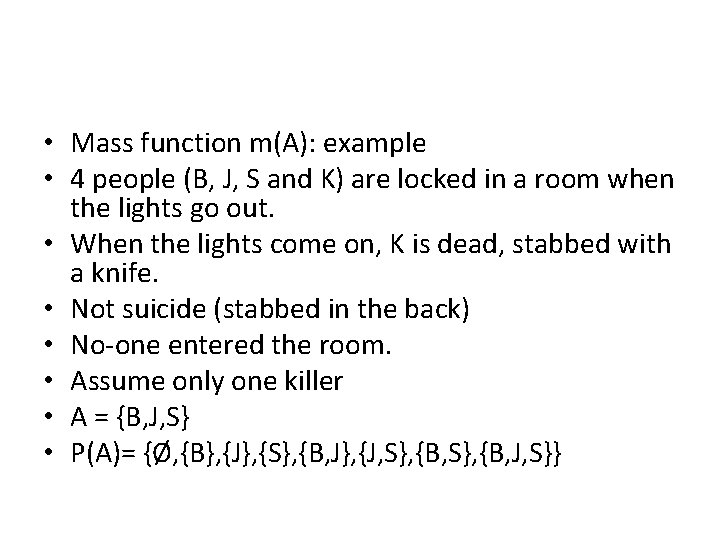

• Mass function m(A): example
• 4 people (B, J, S and K) are locked in a room when the lights go out.
• When the lights come on, K is dead, stabbed with a knife.
• Not suicide (stabbed in the back).
• No one entered the room.
• Assume only one killer.
• A = {B, J, S}
• Power set P(A) = {Ø, {B}, {J}, {S}, {B, J}, {B, S}, {J, S}, {B, J, S}}

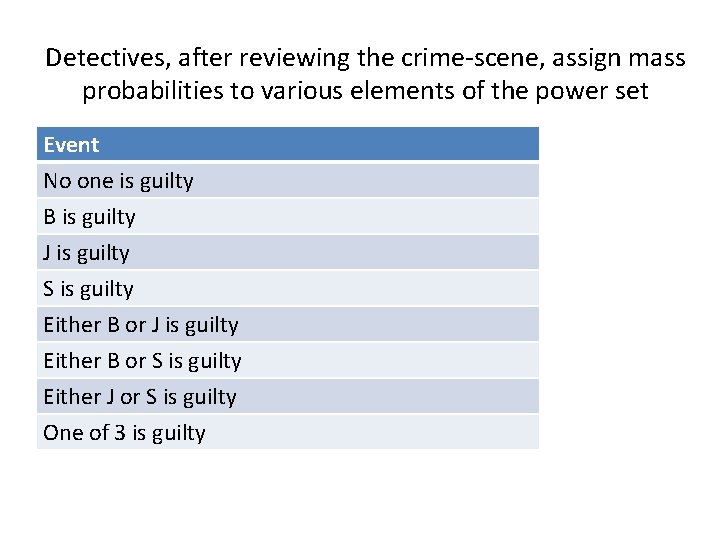

Detectives, after reviewing the crime scene, assign mass probabilities to the various elements of the power set. The events are:
• No one is guilty: Ø
• B is guilty: {B}
• J is guilty: {J}
• S is guilty: {S}
• Either B or J is guilty: {B, J}
• Either B or S is guilty: {B, S}
• Either J or S is guilty: {J, S}
• One of the 3 is guilty: {B, J, S}
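A mass assignment over these events can be checked against the mass function properties: every mass lies between 0 and 1, the empty set gets 0, and the masses sum to 1. The values below are hypothetical stand-ins, since the detectives' actual numbers are not given in the text. The helper name `is_valid_mass_function` is likewise just for this sketch.

```python
import math

FRAME = frozenset({"B", "J", "S"})

# Hypothetical masses for the eight events (not taken from the slides).
masses = {
    frozenset(): 0.0,                 # no one is guilty
    frozenset({"B"}): 0.1,
    frozenset({"J"}): 0.2,
    frozenset({"S"}): 0.1,
    frozenset({"B", "J"}): 0.1,
    frozenset({"B", "S"}): 0.1,
    frozenset({"J", "S"}): 0.3,
    frozenset({"B", "J", "S"}): 0.1,  # one of the three is guilty
}


def is_valid_mass_function(m, frame):
    """Check the mass function properties over subsets of `frame`."""
    if m.get(frozenset(), 0.0) != 0.0:
        return False  # m(empty set) must be 0
    if any(not subset <= frame for subset in m):
        return False  # every key must be a subset of the frame
    if any(not 0.0 <= value <= 1.0 for value in m.values()):
        return False  # each mass lies in [0, 1]
    return math.isclose(sum(m.values()), 1.0)  # masses sum to 1


print(is_valid_mass_function(masses, FRAME))  # True
```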

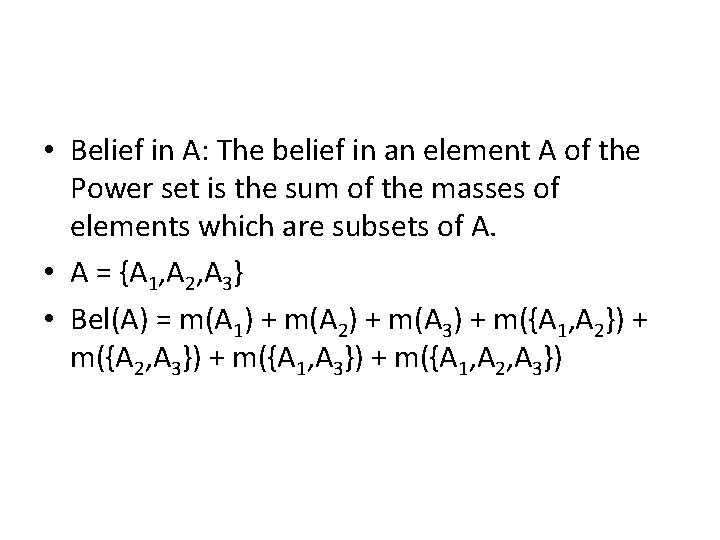

• Belief in A: the belief in an element A of the power set is the sum of the masses of the elements which are subsets of A.
• A = {A1, A2, A3}
• Bel(A) = m(A1) + m(A2) + m(A3) + m({A1, A2}) + m({A1, A3}) + m({A2, A3}) + m({A1, A2, A3})
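The belief definition translates directly into a sum over the subsets of the hypothesis. The sketch below applies it to the locked-room suspects B, J and S. The mass values are hypothetical, chosen only so that they sum to 1.

```python
# Hypothetical masses over subsets of {B, J, S} (illustrative values only).
masses = {
    frozenset(): 0.0,
    frozenset({"B"}): 0.1,
    frozenset({"J"}): 0.2,
    frozenset({"S"}): 0.1,
    frozenset({"B", "J"}): 0.1,
    frozenset({"B", "S"}): 0.1,
    frozenset({"J", "S"}): 0.3,
    frozenset({"B", "J", "S"}): 0.1,
}


def belief(m, hypothesis):
    """Bel(hypothesis): sum of the masses of all subsets of `hypothesis`."""
    target = frozenset(hypothesis)
    return sum(value for subset, value in m.items() if subset <= target)


# Bel({B, J}) = m({B}) + m({J}) + m({B, J})
print(f"{belief(masses, {'B', 'J'}):.2f}")       # 0.40
# Belief in the whole set collects every mass, so it is 1.
print(f"{belief(masses, {'B', 'J', 'S'}):.2f}")  # 1.00
```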

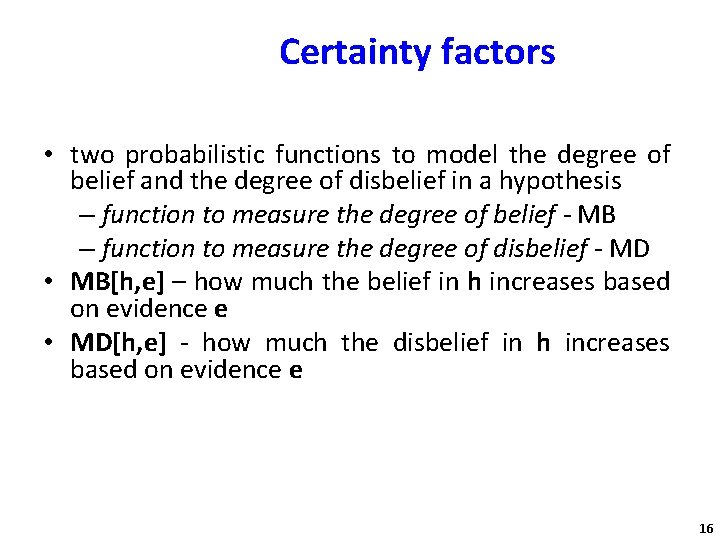

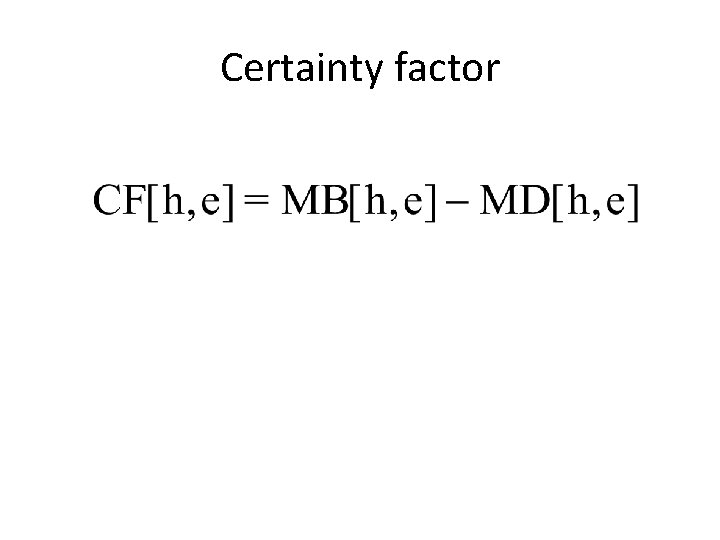

Certainty factors

• Two probabilistic functions model the degree of belief and the degree of disbelief in a hypothesis:
  – a function to measure the degree of belief: MB
  – a function to measure the degree of disbelief: MD
• MB[h, e]: how much the belief in h increases based on evidence e
• MD[h, e]: how much the disbelief in h increases based on evidence e

Certainty factor

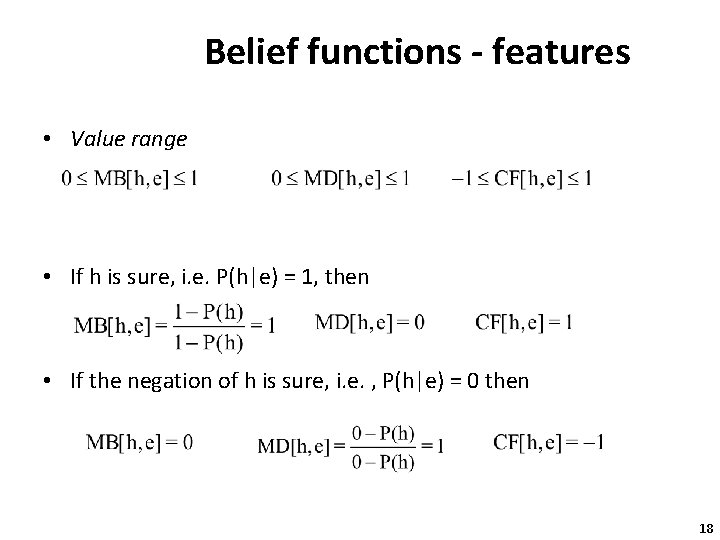

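Belief functions - features

• Value range: MB[h, e] and MD[h, e] each lie between 0 and 1.
• If h is sure, i.e. P(h|e) = 1, then MB[h, e] = 1 and MD[h, e] = 0.
• If the negation of h is sure, i.e. P(h|e) = 0, then MB[h, e] = 0 and MD[h, e] = 1.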

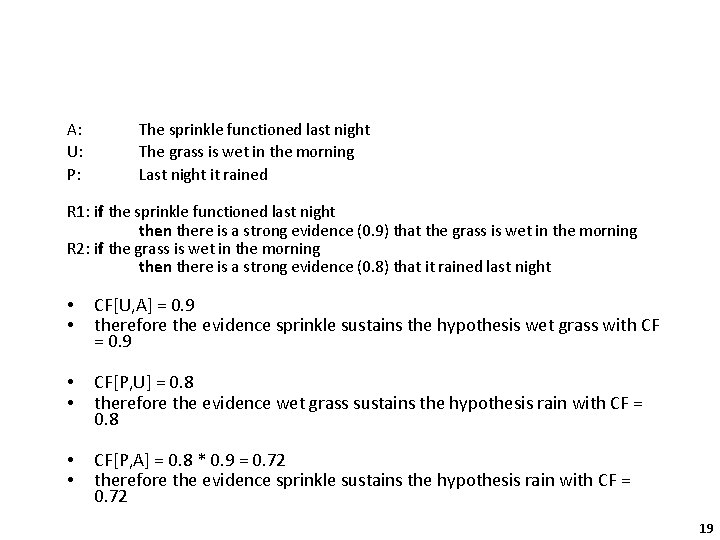
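The boundary cases for MB and MD can be checked numerically. The text does not spell out the defining formulas, so the sketch below assumes the classical MYCIN-style definitions in terms of the prior P(h) and the posterior P(h|e), together with CF = MB - MD. Treat these formulas as an assumption that may differ from the definition used in the figures.

```python
def measure_of_belief(p_h: float, p_h_given_e: float) -> float:
    """MB[h, e]: relative increase in belief in h due to e (assumed MYCIN form)."""
    if p_h == 1.0:
        return 1.0
    return (max(p_h_given_e, p_h) - p_h) / (1.0 - p_h)


def measure_of_disbelief(p_h: float, p_h_given_e: float) -> float:
    """MD[h, e]: relative increase in disbelief in h due to e (assumed MYCIN form)."""
    if p_h == 0.0:
        return 1.0
    return (p_h - min(p_h_given_e, p_h)) / p_h


def certainty_factor(p_h: float, p_h_given_e: float) -> float:
    """CF[h, e] = MB[h, e] - MD[h, e], ranging from -1 to 1."""
    return measure_of_belief(p_h, p_h_given_e) - measure_of_disbelief(
        p_h, p_h_given_e
    )


prior = 0.3  # hypothetical prior P(h)
for posterior in (1.0, 0.3, 0.0):
    print(
        posterior,
        measure_of_belief(prior, posterior),
        measure_of_disbelief(prior, posterior),
        certainty_factor(prior, posterior),
    )
```

With these definitions, evidence that leaves the probability unchanged gives MB = MD = 0, so the certainty factor is 0.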

A: The sprinkler functioned last night
U: The grass is wet in the morning
P: Last night it rained

R1: if the sprinkler functioned last night, then there is strong evidence (0.9) that the grass is wet in the morning
R2: if the grass is wet in the morning, then there is strong evidence (0.8) that it rained last night

• CF[U, A] = 0.9, therefore the evidence sprinkler sustains the hypothesis wet grass with CF = 0.9
• CF[P, U] = 0.8, therefore the evidence wet grass sustains the hypothesis rain with CF = 0.8
• CF[P, A] = 0.8 * 0.9 = 0.72, therefore the evidence sprinkler sustains the hypothesis rain with CF = 0.72
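The chaining step in this example is a plain product of the two rule certainty factors. A minimal sketch, assuming both factors are non-negative as they are here (the function name `chain_cf` is made up for this illustration):

```python
def chain_cf(cf_first_rule: float, cf_second_rule: float) -> float:
    """Propagate certainty through two chained rules by multiplying their CFs.

    Assumes both certainty factors are in [0, 1], as in the example above.
    """
    for cf in (cf_first_rule, cf_second_rule):
        if not 0.0 <= cf <= 1.0:
            raise ValueError("this sketch only handles CFs in [0, 1]")
    return cf_first_rule * cf_second_rule


cf_u_a = 0.9  # R1: sprinkler (A) supports wet grass (U)
cf_p_u = 0.8  # R2: wet grass (U) supports rain (P)

cf_p_a = chain_cf(cf_u_a, cf_p_u)
print(f"CF[P, A] = {cf_p_a:.2f}")  # prints "CF[P, A] = 0.72"
```

Because each factor is at most 1, the chained value can never exceed the weaker of the two rules, so confidence decays along longer inference chains.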
