Pattern Recognition and Machine Learning, Chapter 2: Probability Distributions

July 2018, Chonbuk National University

Probability Distributions: General

Density estimation: given a finite set $x_1, \dots, x_N$ of observations, find the distribution $p(x)$ of $x$.

Frequentist’s way: choose specific parameter values by optimizing a criterion (e.g., the likelihood).

Bayesian way: place a prior distribution over the parameters and compute the posterior distribution with Bayes’ rule.

Conjugate prior: a prior that leads to a posterior distribution of the same functional form as the prior (makes life a lot easier :)

Binary Variables: Frequentist’s Way

Given a binary random variable $x \in \{0, 1\}$ (tossing a coin) with

$$p(x = 1 \mid \mu) = \mu, \qquad p(x = 0 \mid \mu) = 1 - \mu, \tag{2.1}$$

$p(x)$ can be described by the Bernoulli distribution:

$$\mathrm{Bern}(x \mid \mu) = \mu^{x} (1 - \mu)^{1 - x}. \tag{2.2}$$

The maximum likelihood estimate for $\mu$ is:

$$\mu_{\mathrm{ML}} = \frac{m}{N}, \quad \text{with } m = (\text{number of observations of } x = 1). \tag{2.8}$$

Yet this can lead to overfitting (especially for small $N$): e.g., $N = m = 3$ yields $\mu_{\mathrm{ML}} = 1$.
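
The overfitting example can be checked numerically. The following is a minimal sketch of the estimate $\mu_{\mathrm{ML}} = m / N$; the function name `bernoulli_mle` and the sample data are illustrative assumptions, not part of the slides.

```python
def bernoulli_mle(observations):
    """Maximum likelihood estimate mu_ML = m / N for binary observations.

    `observations` is a list of 0/1 values (hypothetical helper for illustration).
    """
    n = len(observations)
    if n == 0:
        raise ValueError("need at least one observation")
    m = sum(observations)  # number of observations with x = 1
    return m / n


# Three tosses, all heads: the estimate claims tails can never occur.
print(bernoulli_mle([1, 1, 1]))        # 1.0
print(bernoulli_mle([1, 0, 1, 1, 0]))  # 0.6
```

With only three observations, the estimate assigns zero probability to $x = 0$, which is the overfitting behaviour the slide warns about.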

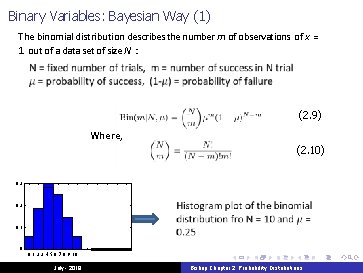

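Binary Variables: Bayesian Way (1)

The binomial distribution describes the number $m$ of observations of $x = 1$ out of a data set of size $N$:

$$\mathrm{Bin}(m \mid N, \mu) = \binom{N}{m} \mu^{m} (1 - \mu)^{N - m}, \tag{2.9}$$

where

$$\binom{N}{m} = \frac{N!}{(N - m)!\, m!}. \tag{2.10}$$

[Figure: histogram of the binomial distribution as a function of $m$, for $m = 0, \dots, 10$.]

July 2018, Bishop, Chapter 2: Probability Distributions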
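
As a rough illustration of the binomial distribution, the sketch below evaluates its probability mass function with the standard library. The values $N = 10$ and $\mu = 0.25$ are assumptions chosen only for the example.

```python
from math import comb


def binomial_pmf(m, n, mu):
    """Probability of observing x = 1 exactly m times in n trials."""
    return comb(n, m) * mu**m * (1 - mu) ** (n - m)


# Illustrative values (assumed, not taken from the slides).
n, mu = 10, 0.25
pmf = [binomial_pmf(m, n, mu) for m in range(n + 1)]

for m, p in enumerate(pmf):
    print(m, round(p, 4))
```

The probabilities over $m = 0, \dots, N$ sum to one, which is a quick sanity check on the normalization.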

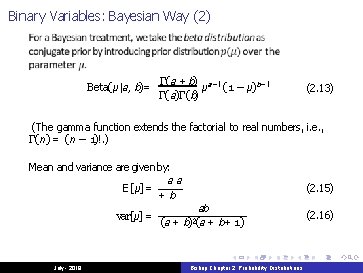

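Binary Variables: Bayesian Way (2)

The beta distribution over $\mu$ with hyperparameters $a$ and $b$ is:

$$\mathrm{Beta}(\mu \mid a, b) = \frac{\Gamma(a + b)}{\Gamma(a)\,\Gamma(b)}\, \mu^{a - 1} (1 - \mu)^{b - 1}. \tag{2.13}$$

(The gamma function extends the factorial to real numbers, i.e., $\Gamma(n) = (n - 1)!$ for positive integers $n$.)

Mean and variance are given by:

$$\mathbb{E}[\mu] = \frac{a}{a + b}, \tag{2.15}$$

$$\mathrm{var}[\mu] = \frac{ab}{(a + b)^{2} (a + b + 1)}. \tag{2.16}$$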
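
The density (2.13) and the moments (2.15) and (2.16) translate directly into code. This is a minimal sketch using `math.gamma`; the function names are illustrative assumptions.

```python
from math import gamma


def beta_pdf(mu, a, b):
    """Beta density (2.13) evaluated at mu in (0, 1)."""
    norm = gamma(a + b) / (gamma(a) * gamma(b))
    return norm * mu ** (a - 1) * (1 - mu) ** (b - 1)


def beta_mean(a, b):
    """Mean of the beta distribution, equation (2.15)."""
    return a / (a + b)


def beta_var(a, b):
    """Variance of the beta distribution, equation (2.16)."""
    return a * b / ((a + b) ** 2 * (a + b + 1))


print(beta_pdf(0.5, 1, 1))  # a = b = 1 gives the uniform density on (0, 1)
print(beta_mean(8, 4), beta_var(8, 4))
```

For large hyperparameters `math.gamma` overflows, so a practical implementation would more likely work with `math.lgamma` in log space.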

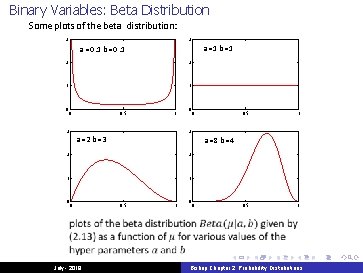

Binary Variables: Beta Distribution

Some plots of the beta distribution:

[Figure: four plots of $\mathrm{Beta}(\mu \mid a, b)$ for $\mu \in [0, 1]$, with $(a, b) = (0.1, 0.1)$, $(1, 1)$, $(2, 3)$, and $(8, 4)$.]

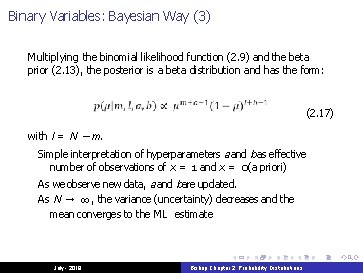

Binary Variables: Bayesian Way (3)

Multiplying the binomial likelihood function (2.9) by the beta prior (2.13) gives a posterior that is again a beta distribution, of the form:

$$p(\mu \mid m, l, a, b) \propto \mu^{m + a - 1} (1 - \mu)^{l + b - 1}, \tag{2.17}$$

with $l = N - m$.

The hyperparameters $a$ and $b$ have a simple interpretation as the effective number of observations of $x = 1$ and $x = 0$ (a priori).

As we observe new data, $a$ and $b$ are updated: $a$ increases by $m$ and $b$ increases by $l$.

As $N \to \infty$, the variance (uncertainty) decreases and the mean converges to the ML estimate.
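
The hyperparameter update can be sketched in a few lines. The helper names and the prior $a = b = 2$ below are assumptions made for the example; the update rule itself follows from (2.17).

```python
def beta_posterior(a, b, observations):
    """Update beta hyperparameters with binary observations.

    Returns (a + m, b + l), where m counts x = 1 and l counts x = 0.
    """
    m = sum(observations)
    l = len(observations) - m
    return a + m, b + l


def beta_mean(a, b):
    """Mean of Beta(mu | a, b), equation (2.15)."""
    return a / (a + b)


# Assumed prior a = b = 2, followed by three observations of x = 1.
a_post, b_post = beta_posterior(2, 2, [1, 1, 1])
print(a_post, b_post)             # 5 2
print(beta_mean(a_post, b_post))  # posterior mean 5/7, less extreme than mu_ML = 1
```

With the same three observations that push the ML estimate to 1, the posterior mean stays strictly below 1 because the prior contributes effective observations of $x = 0$.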

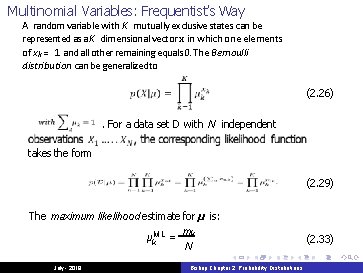

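Multinomial Variables: Frequentist’s Way

A random variable with $K$ mutually exclusive states can be represented as a $K$-dimensional vector $\mathbf{x}$ in which one element $x_k$ equals 1 and all remaining elements equal 0.

The Bernoulli distribution can be generalized to

$$p(\mathbf{x} \mid \boldsymbol{\mu}) = \prod_{k=1}^{K} \mu_k^{x_k}. \tag{2.26}$$

For a data set $\mathcal{D}$ of $N$ independent observations, the likelihood function takes the form

$$p(\mathcal{D} \mid \boldsymbol{\mu}) = \prod_{n=1}^{N} \prod_{k=1}^{K} \mu_k^{x_{nk}} = \prod_{k=1}^{K} \mu_k^{m_k}, \tag{2.29}$$

where $m_k = \sum_{n} x_{nk}$ is the number of observations of $x_k = 1$.

The maximum likelihood estimate for $\boldsymbol{\mu}$ is:

$$\mu_k^{\mathrm{ML}} = \frac{m_k}{N}. \tag{2.33}$$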
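
A small sketch of the estimate (2.33) for one-hot encoded data follows. The function name and the toy data set are assumptions for illustration only.

```python
def multinomial_mle(one_hot_rows):
    """ML estimate mu_k = m_k / N from a list of 1-of-K encoded observations."""
    n = len(one_hot_rows)
    if n == 0:
        raise ValueError("need at least one observation")
    k = len(one_hot_rows[0])
    # m_k: number of observations whose k-th component equals 1
    counts = [sum(row[j] for row in one_hot_rows) for j in range(k)]
    return [m / n for m in counts]


# Toy data set with K = 3 states and N = 4 observations (assumed values).
data = [[1, 0, 0], [0, 1, 0], [1, 0, 0], [0, 0, 1]]
print(multinomial_mle(data))  # [0.5, 0.25, 0.25]
```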

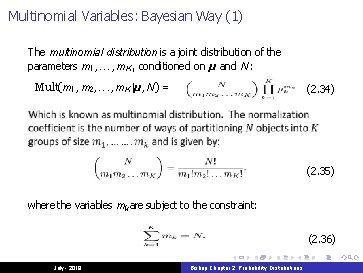

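Multinomial Variables: Bayesian Way (1)

The multinomial distribution is the joint distribution of the counts $m_1, \dots, m_K$, conditioned on $\boldsymbol{\mu}$ and $N$:

$$\mathrm{Mult}(m_1, m_2, \dots, m_K \mid \boldsymbol{\mu}, N) = \binom{N}{m_1\, m_2 \cdots m_K} \prod_{k=1}^{K} \mu_k^{m_k}, \tag{2.34}$$

$$\binom{N}{m_1\, m_2 \cdots m_K} = \frac{N!}{m_1!\, m_2! \cdots m_K!}, \tag{2.35}$$

where the variables $m_k$ are subject to the constraint:

$$\sum_{k=1}^{K} m_k = N. \tag{2.36}$$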
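
Equations (2.34) to (2.36) can be sketched as follows. Here $N$ is taken implicitly as the sum of the counts, so the constraint (2.36) holds by construction; the function names are illustrative assumptions.

```python
from math import factorial, prod


def multinomial_coefficient(counts):
    """N! / (m_1! m_2! ... m_K!) with N = sum of the counts, equation (2.35)."""
    coeff = factorial(sum(counts))
    for m in counts:
        coeff //= factorial(m)  # each partial quotient is an integer
    return coeff


def multinomial_pmf(counts, mu):
    """Probability of the count vector under parameters mu, equation (2.34)."""
    if len(counts) != len(mu):
        raise ValueError("counts and mu must have the same length")
    return multinomial_coefficient(counts) * prod(p**m for p, m in zip(mu, counts))


print(multinomial_coefficient([2, 1, 1]))   # 12
print(multinomial_pmf([1, 1], [0.5, 0.5]))  # 0.5
```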

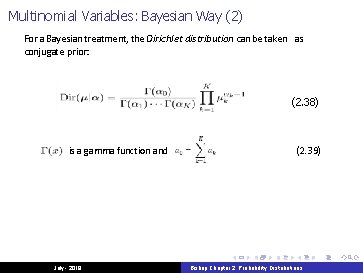

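Multinomial Variables: Bayesian Way (2)

For a Bayesian treatment, the Dirichlet distribution can be taken as the conjugate prior:

$$\mathrm{Dir}(\boldsymbol{\mu} \mid \boldsymbol{\alpha}) = \frac{\Gamma(\alpha_0)}{\Gamma(\alpha_1) \cdots \Gamma(\alpha_K)} \prod_{k=1}^{K} \mu_k^{\alpha_k - 1}, \tag{2.38}$$

where $\Gamma(\cdot)$ is the gamma function and

$$\alpha_0 = \sum_{k=1}^{K} \alpha_k. \tag{2.39}$$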

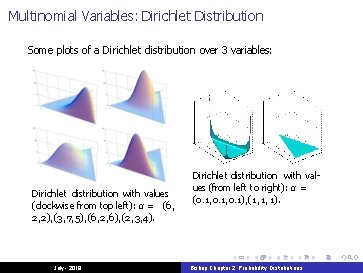

Multinomial Variables: Dirichlet Distribution

Some plots of a Dirichlet distribution over 3 variables:

Dirichlet distribution with values (clockwise from top left): α = (6, 2, 2), (3, 7, 5), (6, 2, 6), (2, 3, 4).

Dirichlet distribution with values (from left to right): α = (0.1, 0.1), (1, 1, 1).

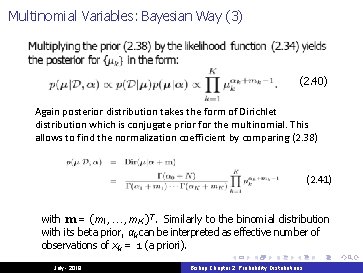

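Multinomial Variables: Bayesian Way (3)

Multiplying the likelihood by the Dirichlet prior gives the posterior:

$$p(\boldsymbol{\mu} \mid \mathcal{D}, \boldsymbol{\alpha}) \propto p(\mathcal{D} \mid \boldsymbol{\mu})\, p(\boldsymbol{\mu} \mid \boldsymbol{\alpha}) \propto \prod_{k=1}^{K} \mu_k^{\alpha_k + m_k - 1}. \tag{2.40}$$

Again the posterior distribution takes the form of a Dirichlet distribution, which is the conjugate prior for the multinomial. The normalization coefficient therefore follows by comparison with (2.38):

$$p(\boldsymbol{\mu} \mid \mathcal{D}, \boldsymbol{\alpha}) = \mathrm{Dir}(\boldsymbol{\mu} \mid \boldsymbol{\alpha} + \mathbf{m}) = \frac{\Gamma(\alpha_0 + N)}{\Gamma(\alpha_1 + m_1) \cdots \Gamma(\alpha_K + m_K)} \prod_{k=1}^{K} \mu_k^{\alpha_k + m_k - 1}, \tag{2.41}$$

with $\mathbf{m} = (m_1, \dots, m_K)^{\mathsf{T}}$.

As for the binomial distribution with its beta prior, $\alpha_k$ can be interpreted as the effective number of observations of $x_k = 1$ (a priori).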
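
The conjugate update amounts to adding the observed counts to the prior parameters. The sketch below also reports the mean of a Dirichlet distribution, $\mathbb{E}[\mu_k] = \alpha_k / \alpha_0$, which is a standard property not stated on the slides; the function names and the numbers are assumptions for illustration.

```python
def dirichlet_posterior(alpha, counts):
    """Posterior Dirichlet parameters alpha + m."""
    if len(alpha) != len(counts):
        raise ValueError("alpha and counts must have the same length")
    return [a + m for a, m in zip(alpha, counts)]


def dirichlet_mean(alpha):
    """Mean of Dir(mu | alpha): E[mu_k] = alpha_k / alpha_0 (standard result)."""
    alpha_0 = sum(alpha)
    return [a / alpha_0 for a in alpha]


# Assumed uniform prior over K = 3 states and counts m = (3, 0, 1).
posterior = dirichlet_posterior([1, 1, 1], [3, 0, 1])
print(posterior)                  # [4, 1, 2]
print(dirichlet_mean(posterior))  # state 2 keeps non-zero mass despite m_2 = 0
```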

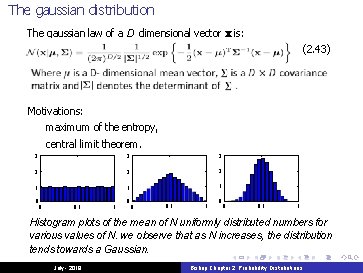

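The Gaussian distribution

The Gaussian distribution of a $D$-dimensional vector $\mathbf{x}$ is:

$$\mathcal{N}(\mathbf{x} \mid \boldsymbol{\mu}, \boldsymbol{\Sigma}) = \frac{1}{(2\pi)^{D/2}} \frac{1}{|\boldsymbol{\Sigma}|^{1/2}} \exp\left\{ -\frac{1}{2} (\mathbf{x} - \boldsymbol{\mu})^{\mathsf{T}} \boldsymbol{\Sigma}^{-1} (\mathbf{x} - \boldsymbol{\mu}) \right\}. \tag{2.43}$$

Motivations: maximum entropy, central limit theorem.

[Figure: histogram plots of the mean of $N$ uniformly distributed numbers for various values of $N$.] We observe that as $N$ increases, the distribution tends towards a Gaussian.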
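
The central limit theorem motivation can be explored with a short simulation. This is a sketch under stated assumptions: the sample sizes, the seed, and the helper names are illustrative, and the check uses the known fact that the mean of $N$ independent uniform numbers on $[0, 1)$ has mean $1/2$ and variance $1/(12N)$.

```python
import random


def sample_means(n, num_samples=5000, seed=0):
    """Draw num_samples means, each over n uniform numbers on [0, 1)."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    return [sum(rng.random() for _ in range(n)) / n for _ in range(num_samples)]


def mean_and_variance(values):
    """Empirical mean and (population) variance of a list of numbers."""
    mu = sum(values) / len(values)
    var = sum((v - mu) ** 2 for v in values) / len(values)
    return mu, var


for n in (1, 2, 10):
    mu, var = mean_and_variance(sample_means(n))
    print(n, round(mu, 3), round(var, 4), round(1 / (12 * n), 4))
```

The empirical variance shrinks roughly as $1/(12N)$, so the histogram of the means becomes narrower as well as more bell-shaped when $N$ grows.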

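The Gaussian distribution: Properties

The density depends on $\mathbf{x}$ only through the Mahalanobis distance from $\mathbf{x}$ to $\boldsymbol{\mu}$:

$$\Delta^{2} = (\mathbf{x} - \boldsymbol{\mu})^{\mathsf{T}} \boldsymbol{\Sigma}^{-1} (\mathbf{x} - \boldsymbol{\mu}). \tag{2.44}$$

The expectation of $\mathbf{x}$ under the Gaussian distribution is:

$$\mathbb{E}[\mathbf{x}] = \boldsymbol{\mu}. \tag{2.59}$$

The covariance matrix of $\mathbf{x}$ is:

$$\mathrm{cov}[\mathbf{x}] = \boldsymbol{\Sigma}. \tag{2.64}$$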
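
For intuition, the squared Mahalanobis distance (2.44) can be computed by hand in two dimensions. The sketch below assumes $D = 2$ and inverts the covariance matrix in closed form; the function name is hypothetical.

```python
def mahalanobis_sq_2d(x, mu, sigma):
    """Squared Mahalanobis distance (2.44) for D = 2.

    sigma is a 2x2 covariance matrix given as ((s11, s12), (s21, s22)).
    """
    (a, b), (c, d) = sigma
    det = a * d - b * c
    if det == 0:
        raise ValueError("covariance matrix must be invertible")
    # Closed-form inverse of a 2x2 matrix.
    inv = ((d / det, -b / det), (-c / det, a / det))
    dx = (x[0] - mu[0], x[1] - mu[1])
    return sum(dx[i] * inv[i][j] * dx[j] for i in range(2) for j in range(2))


# With the identity covariance this reduces to the squared Euclidean distance.
print(mahalanobis_sq_2d((3, 4), (0, 0), ((1, 0), (0, 1))))  # 25.0
# A larger variance along the first axis shrinks distances in that direction.
print(mahalanobis_sq_2d((2, 0), (0, 0), ((4, 0), (0, 1))))  # 1.0
```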

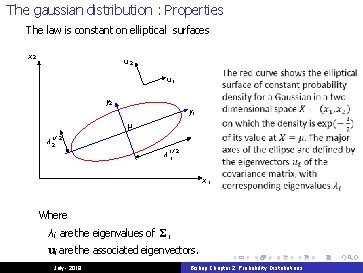

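The Gaussian distribution: Properties

The density is constant on elliptical surfaces.

[Figure: elliptical contour of constant density in the $(x_1, x_2)$ plane, centered at $\boldsymbol{\mu}$, with axes $y_1$, $y_2$ aligned with the eigenvectors $\mathbf{u}_i$ and scaled by $\lambda_1^{1/2}$ and $\lambda_2^{1/2}$.]

Here $\lambda_i$ are the eigenvalues of $\boldsymbol{\Sigma}$ and $\mathbf{u}_i$ are the associated eigenvectors.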

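The Gaussian distribution: Conditional and marginal laws

Given a Gaussian distribution $\mathcal{N}(\mathbf{x} \mid \boldsymbol{\mu}, \boldsymbol{\Sigma})$ with:

$$\mathbf{x} = \begin{pmatrix} \mathbf{x}_a \\ \mathbf{x}_b \end{pmatrix}, \qquad \boldsymbol{\mu} = \begin{pmatrix} \boldsymbol{\mu}_a \\ \boldsymbol{\mu}_b \end{pmatrix}, \tag{2.94}$$

$$\boldsymbol{\Sigma} = \begin{pmatrix} \boldsymbol{\Sigma}_{aa} & \boldsymbol{\Sigma}_{ab} \\ \boldsymbol{\Sigma}_{ba} & \boldsymbol{\Sigma}_{bb} \end{pmatrix}. \tag{2.95}$$

The conditional distribution $p(\mathbf{x}_a \mid \mathbf{x}_b)$ is again Gaussian, with parameters:

$$\boldsymbol{\mu}_{a|b} = \boldsymbol{\mu}_a + \boldsymbol{\Sigma}_{ab} \boldsymbol{\Sigma}_{bb}^{-1} (\mathbf{x}_b - \boldsymbol{\mu}_b), \tag{2.81}$$

$$\boldsymbol{\Sigma}_{a|b} = \boldsymbol{\Sigma}_{aa} - \boldsymbol{\Sigma}_{ab} \boldsymbol{\Sigma}_{bb}^{-1} \boldsymbol{\Sigma}_{ba}. \tag{2.82}$$

The marginal distribution $p(\mathbf{x}_a)$ is Gaussian with parameters $(\boldsymbol{\mu}_a, \boldsymbol{\Sigma}_{aa})$.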
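
In the simplest case, where $x_a$ and $x_b$ are both scalars, the conditional parameters (2.81) and (2.82) reduce to ordinary arithmetic. The following sketch assumes that scalar case; the function name and the numbers are illustrative.

```python
def conditional_gaussian(mu_a, mu_b, s_aa, s_ab, s_bb, x_b):
    """Mean and variance of p(x_a | x_b) for a bivariate Gaussian.

    Scalar-block special case of (2.81) and (2.82); s_ba equals s_ab
    because the covariance matrix is symmetric.
    """
    if s_bb <= 0:
        raise ValueError("s_bb must be positive")
    mean = mu_a + s_ab / s_bb * (x_b - mu_b)
    var = s_aa - s_ab * s_ab / s_bb
    return mean, var


# Zero means, unit variances, covariance 0.5, and an observed x_b = 1.
print(conditional_gaussian(0.0, 0.0, 1.0, 0.5, 1.0, 1.0))  # (0.5, 0.75)
```

Observing $x_b$ shifts the mean of $x_a$ in proportion to the covariance and always reduces (or leaves unchanged) its variance, whereas the marginal keeps $\Sigma_{aa}$.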

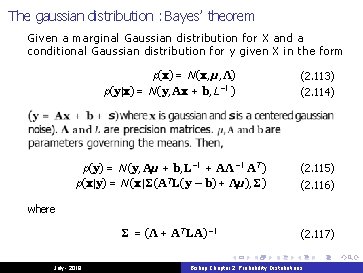

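The Gaussian distribution: Bayes’ theorem

Given a marginal Gaussian distribution for $\mathbf{x}$ and a conditional Gaussian distribution for $\mathbf{y}$ given $\mathbf{x}$ in the form

$$p(\mathbf{x}) = \mathcal{N}(\mathbf{x} \mid \boldsymbol{\mu}, \boldsymbol{\Lambda}^{-1}), \tag{2.113}$$

$$p(\mathbf{y} \mid \mathbf{x}) = \mathcal{N}(\mathbf{y} \mid \mathbf{A}\mathbf{x} + \mathbf{b}, \mathbf{L}^{-1}), \tag{2.114}$$

the marginal distribution of $\mathbf{y}$ and the conditional distribution of $\mathbf{x}$ given $\mathbf{y}$ are

$$p(\mathbf{y}) = \mathcal{N}(\mathbf{y} \mid \mathbf{A}\boldsymbol{\mu} + \mathbf{b}, \mathbf{L}^{-1} + \mathbf{A}\boldsymbol{\Lambda}^{-1}\mathbf{A}^{\mathsf{T}}), \tag{2.115}$$

$$p(\mathbf{x} \mid \mathbf{y}) = \mathcal{N}(\mathbf{x} \mid \boldsymbol{\Sigma}\{\mathbf{A}^{\mathsf{T}}\mathbf{L}(\mathbf{y} - \mathbf{b}) + \boldsymbol{\Lambda}\boldsymbol{\mu}\}, \boldsymbol{\Sigma}), \tag{2.116}$$

where

$$\boldsymbol{\Sigma} = (\boldsymbol{\Lambda} + \mathbf{A}^{\mathsf{T}}\mathbf{L}\mathbf{A})^{-1}. \tag{2.117}$$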
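
When $x$ and $y$ are scalars, equations (2.115) to (2.117) become one-line formulas. The sketch below assumes this scalar case, with `lam` and `ell` standing for the precisions $\Lambda$ and $L$; all names are illustrative.

```python
def linear_gaussian(mu, lam, a, b, ell, y):
    """Scalar version of (2.115)-(2.117).

    Prior p(x) = N(x | mu, 1/lam), likelihood p(y | x) = N(y | a*x + b, 1/ell).
    Returns (marginal mean, marginal variance, posterior mean, posterior variance).
    """
    marg_mean = a * mu + b
    marg_var = 1 / ell + a * a / lam               # L^-1 + A Lambda^-1 A^T
    post_var = 1 / (lam + a * a * ell)             # Sigma = (Lambda + A^T L A)^-1
    post_mean = post_var * (a * ell * (y - b) + lam * mu)
    return marg_mean, marg_var, post_mean, post_var


# Standard normal prior, unit-precision noise, observation y = 2.
print(linear_gaussian(0.0, 1.0, 1.0, 0.0, 1.0, 2.0))  # (0.0, 2.0, 1.0, 0.5)
```

In this example the posterior mean lies halfway between the prior mean 0 and the observation 2, because prior and likelihood carry equal precision.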

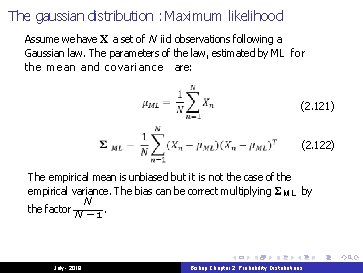

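The Gaussian distribution: Maximum likelihood

Assume we have a set $\mathbf{X}$ of $N$ i.i.d. observations drawn from a Gaussian distribution. The maximum likelihood estimates of the mean and covariance are:

$$\boldsymbol{\mu}_{\mathrm{ML}} = \frac{1}{N} \sum_{n=1}^{N} \mathbf{x}_n, \tag{2.121}$$

$$\boldsymbol{\Sigma}_{\mathrm{ML}} = \frac{1}{N} \sum_{n=1}^{N} (\mathbf{x}_n - \boldsymbol{\mu}_{\mathrm{ML}})(\mathbf{x}_n - \boldsymbol{\mu}_{\mathrm{ML}})^{\mathsf{T}}. \tag{2.122}$$

The empirical mean is unbiased, but the empirical covariance is not: $\mathbb{E}[\boldsymbol{\Sigma}_{\mathrm{ML}}] = \frac{N - 1}{N} \boldsymbol{\Sigma}$. The bias can be corrected by multiplying $\boldsymbol{\Sigma}_{\mathrm{ML}}$ by the factor $\frac{N}{N - 1}$.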
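
For one-dimensional data, the estimates and the bias correction can be sketched as follows. The function names and the sample values are assumptions for illustration.

```python
def gaussian_ml_1d(xs):
    """ML estimates of the mean and variance for scalar observations."""
    n = len(xs)
    if n == 0:
        raise ValueError("need at least one observation")
    mu = sum(xs) / n
    var_ml = sum((x - mu) ** 2 for x in xs) / n  # divides by N, hence biased
    return mu, var_ml


def unbiased_variance(xs):
    """Bias-corrected variance: the ML variance times N / (N - 1)."""
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two observations")
    _, var_ml = gaussian_ml_1d(xs)
    return var_ml * n / (n - 1)


data = [1.0, 2.0, 3.0, 4.0]
print(gaussian_ml_1d(data))     # (2.5, 1.25)
print(unbiased_variance(data))  # 1.25 * 4 / 3
```

The corrected value matches what `statistics.variance` returns for the same data, while `statistics.pvariance` corresponds to the ML estimate.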

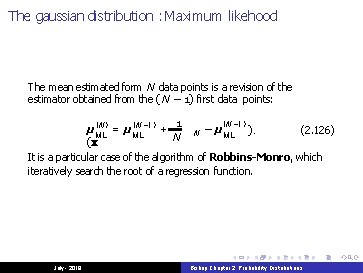

The Gaussian distribution: Maximum likelihood

The mean estimated from $N$ data points is a revision of the estimator obtained from the first $N - 1$ data points:

$$\boldsymbol{\mu}_{\mathrm{ML}}^{(N)} = \boldsymbol{\mu}_{\mathrm{ML}}^{(N-1)} + \frac{1}{N} \left( \mathbf{x}_N - \boldsymbol{\mu}_{\mathrm{ML}}^{(N-1)} \right). \tag{2.126}$$

This is a particular case of the Robbins-Monro algorithm, which iteratively searches for the root of a regression function.
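
The sequential update (2.126) can be sketched for scalar data as below. The function name is hypothetical; the loop processes one observation at a time and never stores the full data set.

```python
def sequential_mean(stream):
    """Running ML mean using mu_N = mu_{N-1} + (x_N - mu_{N-1}) / N."""
    mu = 0.0
    count = 0
    for count, x in enumerate(stream, start=1):
        mu += (x - mu) / count  # the first step sets mu to x_1 exactly
    if count == 0:
        raise ValueError("need at least one observation")
    return mu


data = [2.0, 4.0, 9.0]
print(sequential_mean(data))   # agrees with the batch mean below
print(sum(data) / len(data))   # 5.0
```

Because each step needs only the previous estimate and the new point, the same loop works on a generator or any other data source that cannot be held in memory.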
