Optimizing Batched Linear Algebra on Intel Xeon Phi

Optimizing Batched Linear Algebra on Intel® Xeon Phi™ Processors
Sarah Knepper, Murat E. Guney, Kazushige Goto, Shane Story, Arthur Araujo Mitrano, Tim Costa, and Louise Huot
Intel® Math Kernel Library (Intel® MKL)

Agenda
• Overview of Intel® Xeon® and Xeon Phi™ Processors
• Focus on Intel® Xeon Phi™ x200 Processor
• Overview of Batched Linear Algebra
• Focus on the Intel MKL batched API
• Performance on Intel® Xeon Phi™ x200 Processor

Optimization Notice Copyright © 2017, Intel Corporation. All rights reserved. *Other names and brands may be claimed as the property of others.

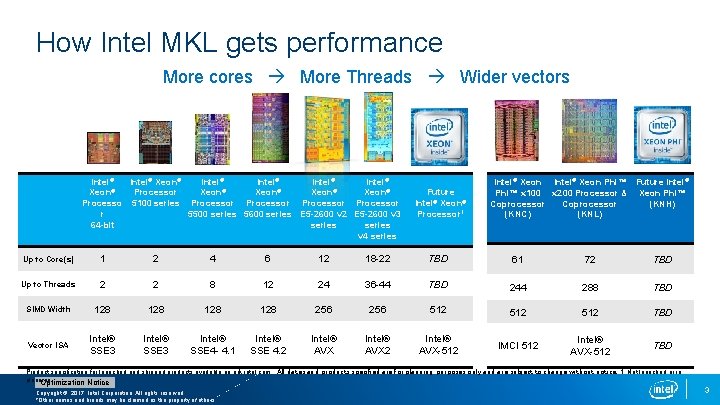

How Intel MKL gets performance: more cores, more threads, wider vectors

| Processor | Up to cores | Up to threads |
|---|---|---|
| Intel® Xeon® Processor 64-bit | 1 | 2 |
| Intel® Xeon® Processor 5100 series | 2 | 2 |
| Intel® Xeon® Processor 5500 series | 4 | 8 |
| Intel® Xeon® Processor 5600 series | 6 | 12 |
| Intel® Xeon® Processor E5-2600 v2 series | 12 | 24 |
| Intel® Xeon® Processor E5-2600 v3 / v4 series | 18-22 | 36-44 |
| Future Intel® Xeon® Processor¹ | TBD | TBD |
| Intel® Xeon Phi™ x100 Coprocessor (KNC) | 61 | 244 |
| Intel® Xeon Phi™ x200 Processor & Coprocessor (KNL) | 72 | 288 |
| Future Intel® Xeon Phi™ (KNH) | TBD | TBD |

SIMD width grows from 128 bits (Intel® SSE3, SSE4-4.1, SSE4.2) to 256 bits (Intel® AVX and AVX2 on the E5-2600 v2 to v4 generations) and 512 bits (IMCI 512 on the Xeon Phi x100 coprocessor; Intel® AVX-512 on the Xeon Phi x200 and future Xeon processors); the future Xeon Phi is TBD.

Product specification for launched and shipped products available on ark.intel.com. All dates and products specified are for planning purposes only and are subject to change without notice.
¹ Not launched or in planning.

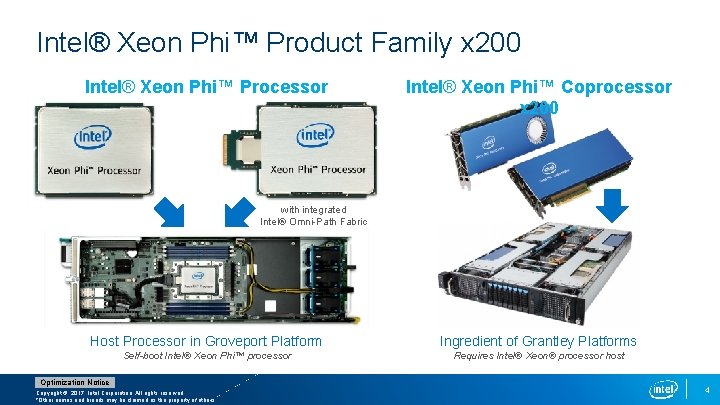

Intel® Xeon Phi™ Product Family x200
• Intel® Xeon Phi™ Processor, also available with integrated Intel® Omni-Path Fabric: host processor in the Groveport platform; self-boot Intel® Xeon Phi™ processor
• Intel® Xeon Phi™ Coprocessor x200: ingredient of Grantley platforms; requires an Intel® Xeon® processor host

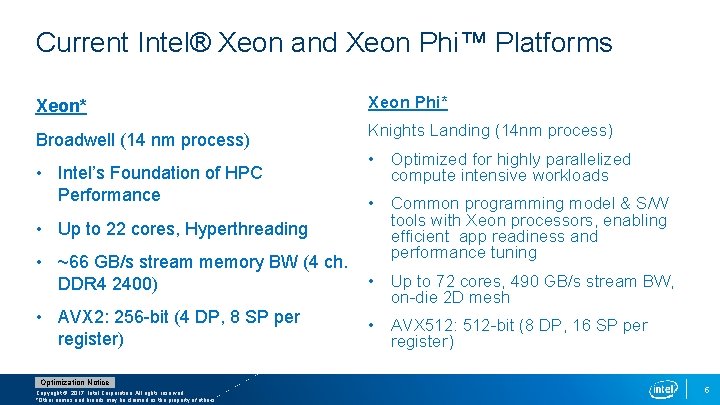

Current Intel® Xeon® and Xeon Phi™ Platforms

Xeon* Broadwell (14 nm process)
• Intel's foundation of HPC performance
• Up to 22 cores, Hyper-Threading
• ~66 GB/s STREAM memory bandwidth (4 channels DDR4-2400)
• AVX2: 256-bit (4 DP, 8 SP per register)

Xeon Phi* Knights Landing (14 nm process)
• Optimized for highly parallel, compute-intensive workloads
• Common programming model and software tools with Xeon processors, enabling efficient app readiness and performance tuning
• Up to 72 cores, 490 GB/s STREAM bandwidth, on-die 2D mesh
• AVX-512: 512-bit (8 DP, 16 SP per register)

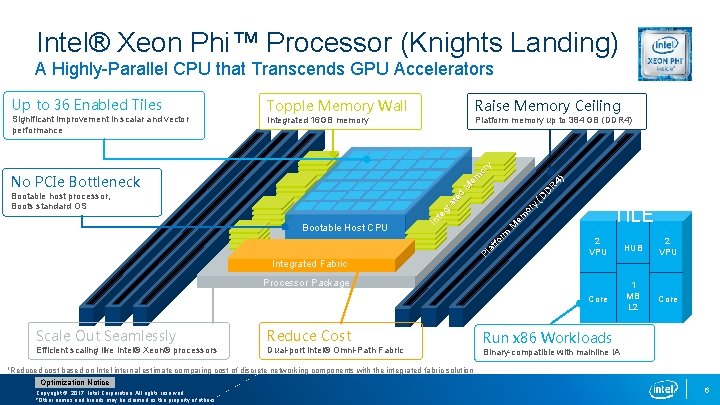

Intel® Xeon Phi™ Processor (Knights Landing): A Highly-Parallel CPU that Transcends GPU Accelerators
• Topple the memory wall: integrated 16 GB memory
• Raise the memory ceiling: platform memory up to 384 GB (DDR4)
• Bootable host CPU: bootable host processor that boots a standard OS; no PCIe bottleneck
• Significant improvement in scalar and vector performance
• Up to 36 enabled tiles; each tile holds 2 cores with 2 VPUs each, sharing a 1 MB L2 through a hub
• Scale out seamlessly: efficient scaling like Intel® Xeon® processors
• Reduce cost¹: dual-port Intel® Omni-Path Fabric integrated in the processor package
• Run x86 workloads: binary-compatible with mainline IA
¹ Reduced cost based on Intel internal estimate comparing cost of discrete networking components with the integrated fabric solution.
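The register widths quoted for Broadwell and Knights Landing, together with the two VPUs per core, give a quick upper bound on floating-point throughput. The sketch below assumes that every VPU completes one fused multiply-add (2 flops per lane) per cycle at the nominal 1.4 GHz of the Xeon Phi 7250 used in the benchmarks; sustained AVX-512 clock rates are typically lower, so treat the result as a ceiling. The function names are hypothetical.

```c
/* Number of floating-point elements that fit in one SIMD register. */
int simd_lanes(int register_bits, int element_bits)
{
    return register_bits / element_bits;
}

/* Theoretical peak in GFLOPS, assuming each VPU retires one FMA
 * (2 flops per lane) every cycle at the given clock. */
double peak_gflops(int cores, double ghz, int vpus_per_core, int lanes)
{
    const int flops_per_fma = 2;
    return cores * ghz * vpus_per_core * lanes * flops_per_fma;
}
```

For the 7250 this gives 68 × 1.4 × 2 × 8 × 2 ≈ 3046 GFLOPS in double precision and about 6093 GFLOPS in single precision.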

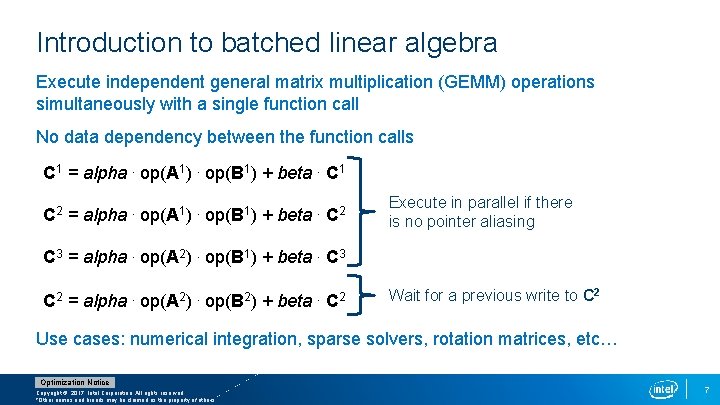

Introduction to batched linear algebra
• Execute independent general matrix multiplication (GEMM) operations simultaneously with a single function call
• No data dependency between the individual operations: these can execute in parallel as long as there is no pointer aliasing (each writes a distinct C; inputs may be shared)
  $C_1 = \alpha\,\mathrm{op}(A_1)\,\mathrm{op}(B_1) + \beta C_1$
  $C_2 = \alpha\,\mathrm{op}(A_1)\,\mathrm{op}(B_1) + \beta C_2$
  $C_3 = \alpha\,\mathrm{op}(A_2)\,\mathrm{op}(B_1) + \beta C_3$
• In contrast, this operation must wait for the previous write to $C_2$:
  $C_2 = \alpha\,\mathrm{op}(A_2)\,\mathrm{op}(B_2) + \beta C_2$
• Use cases: numerical integration, sparse solvers, rotation matrices, etc.
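The independence condition can be made concrete with a naive reference loop. This sketch, using the hypothetical names `ref_dgemm_nn`, `outputs_alias` and `naive_dgemm_batch`, handles only same-sized, no-transpose, column-major problems. It runs the batch in an OpenMP parallel loop when no two operations write the same C, and falls back to program order otherwise. It compares base pointers only, so partially overlapping C regions would not be detected.

```c
#include <stddef.h>

/* Column-major C = alpha*A*B + beta*C; A is m x k, B is k x n, C is m x n. */
void ref_dgemm_nn(int m, int n, int k, double alpha, const double *A, int lda,
                  const double *B, int ldb, double beta, double *C, int ldc)
{
    for (int j = 0; j < n; ++j)
        for (int i = 0; i < m; ++i) {
            double sum = 0.0;
            for (int p = 0; p < k; ++p)
                sum += A[i + (size_t)p * lda] * B[p + (size_t)j * ldb];
            C[i + (size_t)j * ldc] = alpha * sum + beta * C[i + (size_t)j * ldc];
        }
}

/* Returns 1 if two operations in the batch write the same C (same base pointer). */
int outputs_alias(double *const *C, int count)
{
    for (int a = 0; a < count; ++a)
        for (int b = a + 1; b < count; ++b)
            if (C[a] == C[b])
                return 1;
    return 0;
}

/* C[i] = alpha*A[i]*B[i] + beta*C[i]; A[i] and B[i] may be shared read-only. */
void naive_dgemm_batch(int m, int n, int k, double alpha,
                       const double *const *A, const double *const *B,
                       double beta, double *const *C, int count)
{
    if (outputs_alias(C, count)) {
        /* Some C is updated twice: preserve program order. */
        for (int i = 0; i < count; ++i)
            ref_dgemm_nn(m, n, k, alpha, A[i], m, B[i], k, beta, C[i], m);
        return;
    }
    /* Independent outputs: safe to run in parallel (pragma ignored without OpenMP). */
    #pragma omp parallel for
    for (int i = 0; i < count; ++i)
        ref_dgemm_nn(m, n, k, alpha, A[i], m, B[i], k, beta, C[i], m);
}
```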

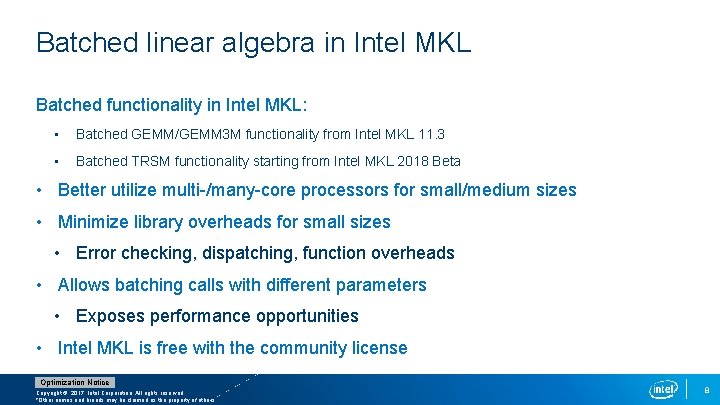

Batched linear algebra in Intel MKL
Batched functionality in Intel MKL:
• Batched GEMM/GEMM3M functionality since Intel MKL 11.3
• Batched TRSM functionality starting with Intel MKL 2018 Beta
• Better utilizes multi-/many-core processors for small/medium sizes
• Minimizes library overheads for small sizes
  • Error checking, dispatching, function-call overheads
• Allows batching calls with different parameters
  • Exposes performance opportunities
• Intel MKL is free with the community license

Intel MKL Batch API
The API allows batching calls with different parameters:
§ Group: a number of GEMM operations with the same parameters
§ Batch: a number of GEMM groups
§ GEMM_BATCH executes multiple groups simultaneously
Two additional parameters compared with the traditional GEMM functions:
§ group_count (integer): total number of groups
§ group_size (integer array): the number of GEMMs within each group
A consistent level of indirection for GEMM parameters:
§ Integer becomes array of integers
§ Matrix pointer becomes array of matrix pointers
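The indirection rule can be illustrated with a pure-C reference. The hypothetical `ref_dgemm_batch` below takes per-group arrays (length group_count) for sizes, scalars and leading dimensions, and flat pointer arrays holding every matrix of group 0, then group 1, and so on. It is a sketch only: it assumes column-major, no-transpose operands and omits the transpose arrays that the real Intel MKL batch routines also take, so consult the MKL reference for the actual signature.

```c
#include <assert.h>
#include <stddef.h>

/* Column-major C = alpha*A*B + beta*C with no transposes (reference only). */
static void gemm_nn(int m, int n, int k, double alpha, const double *A, int lda,
                    const double *B, int ldb, double beta, double *C, int ldc)
{
    for (int j = 0; j < n; ++j)
        for (int i = 0; i < m; ++i) {
            double sum = 0.0;
            for (int p = 0; p < k; ++p)
                sum += A[i + (size_t)p * lda] * B[p + (size_t)j * ldb];
            C[i + (size_t)j * ldc] = alpha * sum + beta * C[i + (size_t)j * ldc];
        }
}

/* Hypothetical group-based batch: m, n, k, alpha, beta and ld* have one entry
 * per group; A, B, C have one pointer per GEMM, stored group by group. */
void ref_dgemm_batch(const int *m, const int *n, const int *k,
                     const double *alpha, const double *const *A, const int *lda,
                     const double *const *B, const int *ldb,
                     const double *beta, double *const *C, const int *ldc,
                     int group_count, const int *group_size)
{
    assert(group_count >= 0);
    size_t idx = 0; /* position in the flat pointer arrays */
    for (int g = 0; g < group_count; ++g)                 /* one parameter set per group */
        for (int s = 0; s < group_size[g]; ++s, ++idx)    /* same parameters, new pointers */
            gemm_nn(m[g], n[g], k[g], alpha[g], A[idx], lda[g],
                    B[idx], ldb[g], beta[g], C[idx], ldc[g]);
}
```

The total number of GEMMs is the sum of group_size, which is also the length of each pointer array.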

GEMM_BATCH in Intel MKL - Group Concept
• Group: set of GEMM operations with the same input parameters (except for the matrix pointers)
  • Transpose, size, leading dimensions, alpha, beta
• One or more groups per GEMM_BATCH call
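Because a group is defined by shared parameters, a caller with a heterogeneous list of problems has to bucket them first. A minimal sketch, assuming only the sizes (m, n, k) vary across the list, every other parameter is identical, and the caller may reorder the operations; the `gemm_shape` type and `build_groups` function are hypothetical.

```c
#include <stdlib.h>

typedef struct { int m, n, k, index; } gemm_shape;

/* Orders by (m, n, k), then by original index so groups keep input order. */
static int cmp_shape(const void *pa, const void *pb)
{
    const gemm_shape *a = pa, *b = pb;
    if (a->m != b->m) return (a->m > b->m) - (a->m < b->m);
    if (a->n != b->n) return (a->n > b->n) - (a->n < b->n);
    if (a->k != b->k) return (a->k > b->k) - (a->k < b->k);
    return (a->index > b->index) - (a->index < b->index);
}

static int same_shape(const gemm_shape *a, const gemm_shape *b)
{
    return a->m == b->m && a->n == b->n && a->k == b->k;
}

/* Sorts shapes so equal (m, n, k) are adjacent, writes each run length to
 * group_size (room for `count` entries) and returns group_count. Afterwards
 * shapes[i].index is the original position of the i-th operation. */
int build_groups(gemm_shape *shapes, int count, int *group_size)
{
    qsort(shapes, (size_t)count, sizeof *shapes, cmp_shape);
    int groups = 0;
    for (int i = 0; i < count; ++i) {
        if (i == 0 || !same_shape(&shapes[i], &shapes[i - 1]))
            group_size[groups++] = 0; /* start a new group */
        group_size[groups - 1]++;
    }
    return groups;
}
```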

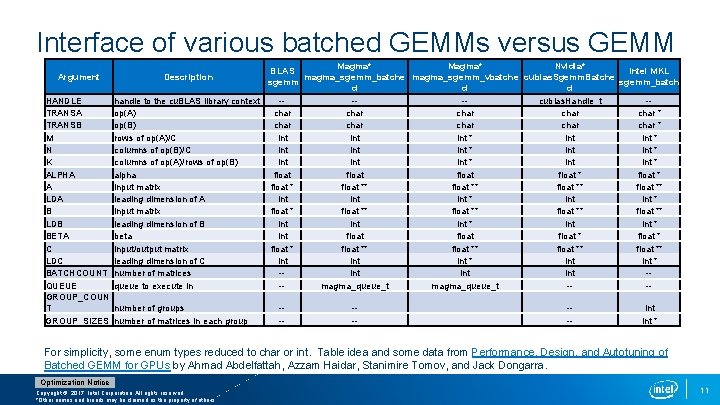

Interface of various batched GEMMs versus GEMM
Compared interfaces: magma_sgemm_batched and magma_sgemm_vbatched (MAGMA*), cublasSgemmBatched (NVIDIA* cuBLAS), and sgemm_batch (Intel MKL).

| Argument | Description | Intel MKL sgemm_batch |
|---|---|---|
| HANDLE | handle to the cuBLAS library context (cuBLAS only, cublasHandle_t) | - |
| TRANSA | op(A) | char * |
| TRANSB | op(B) | char * |
| M | rows of op(A)/C | int * |
| N | columns of op(B)/C | int * |
| K | columns of op(A)/rows of op(B) | int * |
| ALPHA | alpha | float * |
| A | input matrix | float ** |
| LDA | leading dimension of A | int * |
| B | input matrix | float ** |
| LDB | leading dimension of B | int * |
| BETA | beta | float * |
| C | input/output matrix | float ** |
| LDC | leading dimension of C | int * |
| BATCHCOUNT | number of matrices (MAGMA and cuBLAS) | - |
| QUEUE | queue to execute in (MAGMA only, magma_queue_t) | - |
| GROUP_COUNT | number of groups | int |
| GROUP_SIZES | number of matrices in each group | int * |

The fixed-size interfaces (magma_sgemm_batched, cublasSgemmBatched) take a single value for each size and leading dimension plus BATCHCOUNT; magma_sgemm_vbatched takes arrays of sizes and leading dimensions; Intel MKL takes an array for every parameter, indexed by group.
For simplicity, some enum types are reduced to char or int. Table idea and some data from "Performance, Design, and Autotuning of Batched GEMM for GPUs" by Ahmad Abdelfattah, Azzam Haidar, Stanimire Tomov, and Jack Dongarra.
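The group parameters can express both batch styles in the comparison. A sketch of the bookkeeping, assuming a fixed-size batch of `batch_count` problems maps to one group, and a variable-size batch maps, in the simplest case, to one group per problem (any parameter the variable-size interface takes as a single value is then replicated into each group's entry; merging equal sizes would give fewer groups). The helper names are hypothetical and only fill the group_count/group_size values.

```c
#include <assert.h>

/* Fixed-size batch (one parameter set, batch_count matrices): a single group. */
int groups_from_fixed_batch(int batch_count, int *group_size)
{
    assert(batch_count > 0);
    group_size[0] = batch_count;
    return 1; /* group_count */
}

/* Variable-size batch (per-matrix m, n, k): one group per matrix, so the
 * per-group parameter arrays coincide with the per-matrix arrays. */
int groups_from_variable_batch(int batch_count, int *group_size)
{
    assert(batch_count > 0);
    for (int i = 0; i < batch_count; ++i)
        group_size[i] = 1;
    return batch_count; /* group_count */
}

/* Total number of GEMMs described by group_size[0 .. group_count-1]. */
int total_gemms(int group_count, const int *group_size)
{
    int total = 0;
    for (int g = 0; g < group_count; ++g)
        total += group_size[g];
    return total;
}
```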

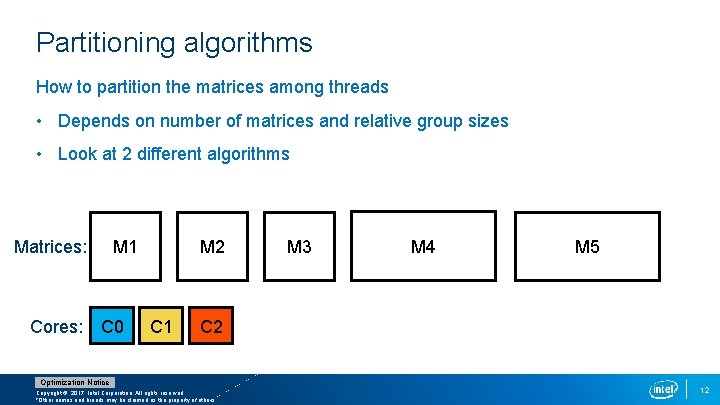

Partitioning algorithms
How to partition the matrices among threads:
• Depends on the number of matrices and the relative group sizes
• We look at 2 different algorithms
[Figure: matrices M1 to M5 to be distributed among cores C0, C1, C2]

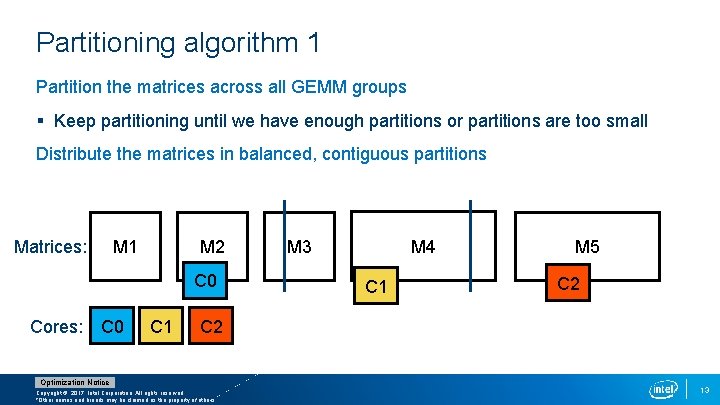

Partitioning algorithm 1
Partition the matrices across all GEMM groups:
§ Keep partitioning until there are enough partitions or the partitions become too small
Distribute the matrices in balanced, contiguous partitions.
[Figure: matrices M1 to M5 assigned in contiguous blocks to cores C0, C1, C2]
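The distribution step of algorithm 1 amounts to splitting the flat list of matrices (all groups concatenated) into as many contiguous, nearly equal ranges as there are threads. A minimal sketch with a hypothetical `contiguous_range` helper; it omits the further splitting of individual matrices described above and treats every matrix as equal work, which is only reasonable when sizes are similar.

```c
#include <assert.h>

/* Half-open range [*first, *last) of matrices owned by thread t when `count`
 * matrices are split into `threads` contiguous blocks; the first
 * count % threads threads receive one extra matrix. */
void contiguous_range(int count, int threads, int t, int *first, int *last)
{
    assert(threads > 0 && t >= 0 && t < threads);
    int base = count / threads, extra = count % threads;
    *first = t * base + (t < extra ? t : extra);
    *last = *first + base + (t < extra ? 1 : 0);
}
```

With five matrices and three threads, this yields M1-M2, M3-M4 and M5.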

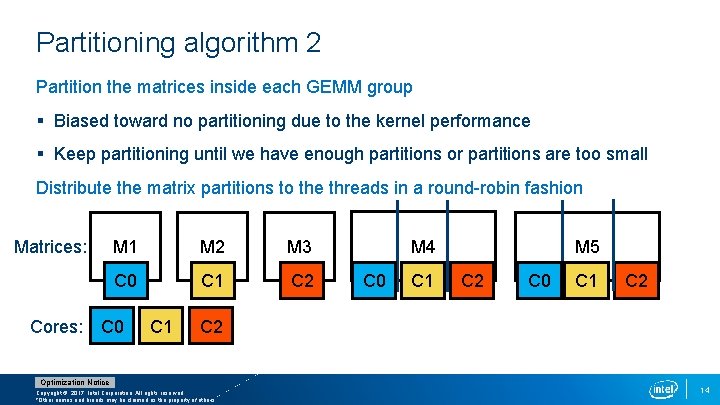

Partitioning algorithm 2
Partition the matrices inside each GEMM group:
§ Biased toward not partitioning matrices, for the sake of kernel performance
§ Keep partitioning until there are enough partitions or the partitions become too small
Distribute the matrix partitions to the threads in round-robin fashion.
[Figure: matrices M1 to M5 and their partitions assigned round-robin to cores C0, C1, C2]
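The round-robin step of algorithm 2 can be sketched in the same spirit. Here each matrix, listed in group order, has already been split into a number of partitions (the hypothetical `parts_per_matrix` input stands in for the partitioning heuristic, which prefers 1), and partitions are dealt to threads with a counter carried across groups. This is an illustration under those assumptions, not the Intel MKL scheduler.

```c
#include <assert.h>

/* parts_per_matrix[i]: partitions of matrix i (matrices in group order).
 * owner[] receives one thread id per partition, in order. Returns the
 * number of partitions written. */
int round_robin_assign(const int *parts_per_matrix, int matrices,
                       int threads, int *owner)
{
    assert(threads > 0);
    int next = 0; /* running partition counter across all groups */
    for (int i = 0; i < matrices; ++i)
        for (int p = 0; p < parts_per_matrix[i]; ++p, ++next)
            owner[next] = next % threads;
    return next;
}
```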

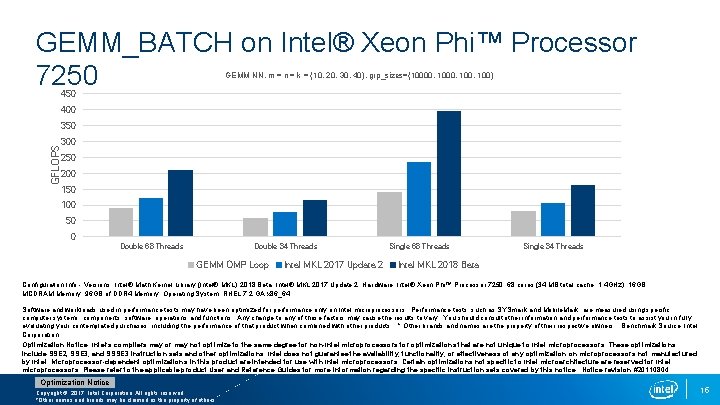

GEMM_BATCH on Intel® Xeon Phi™ Processor 7250
GEMM NN, m = n = k = {10, 20, 30, 40}, grp_sizes = {10000, 100, 100}
[Chart: GFLOPS (axis 0 to 450) for double and single precision with 68 and 34 threads, comparing a GEMM OMP loop, Intel MKL 2017 Update 2, and Intel MKL 2018 Beta]
Configuration info: Versions: Intel® Math Kernel Library (Intel® MKL) 2018 Beta, Intel® MKL 2017 Update 2; Hardware: Intel® Xeon Phi™ Processor 7250, 68 cores (34 MB total cache, 1.4 GHz), 16 GB MCDRAM memory, 96 GB of DDR4 memory; Operating System: RHEL 7.2 GA x86_64
Software and workloads used in performance tests may have been optimized for performance only on Intel microprocessors. Performance tests, such as SYSmark and MobileMark, are measured using specific computer systems, components, software, operations and functions. Any change to any of those factors may cause the results to vary. You should consult other information and performance tests to assist you in fully evaluating your contemplated purchases, including the performance of that product when combined with other products. * Other brands and names are the property of their respective owners. Benchmark Source: Intel Corporation
Optimization Notice: Intel's compilers may or may not optimize to the same degree for non-Intel microprocessors for optimizations that are not unique to Intel microprocessors. These optimizations include SSE2, SSE3, and SSSE3 instruction sets and other optimizations. Intel does not guarantee the availability, functionality, or effectiveness of any optimization on microprocessors not manufactured by Intel. Microprocessor-dependent optimizations in this product are intended for use with Intel microprocessors. Certain optimizations not specific to Intel microarchitecture are reserved for Intel microprocessors. Please refer to the applicable product User and Reference Guides for more information regarding the specific instruction sets covered by this notice. Notice revision #20110804.

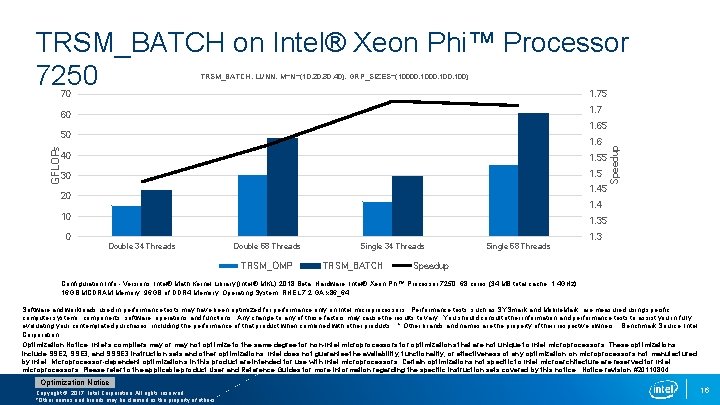

TRSM_BATCH on Intel® Xeon Phi™ Processor 7250
TRSM_BATCH, LUNN, M = N = {10, 20, 30, 40}, GRP_SIZES = {10000, 100, 100}
[Chart: GFLOPS (left axis, 0 to 70) for TRSM_OMP and TRSM_BATCH, and speedup (right axis, 1.3 to 1.75), for double and single precision with 34 and 68 threads]
Configuration info: Versions: Intel® Math Kernel Library (Intel® MKL) 2018 Beta; Hardware: Intel® Xeon Phi™ Processor 7250, 68 cores (34 MB total cache, 1.4 GHz), 16 GB MCDRAM memory, 96 GB of DDR4 memory; Operating System: RHEL 7.2 GA x86_64
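In BLAS parameter order, LUNN reads as side = Left, uplo = Upper, transa = No transpose, diag = Non-unit: each problem solves A·X = alpha·B for an upper-triangular A and overwrites B with X. A minimal reference sketch of such a batch, assuming column-major storage and a single group of equal-sized, independent problems; `ref_dtrsm_lunn_batch` is a hypothetical name, not the Intel MKL routine.

```c
#include <assert.h>
#include <stddef.h>

/* Solves A*X = alpha*B in place (B becomes X) for an upper-triangular,
 * non-unit-diagonal, column-major m x m matrix A and m x n matrix B. */
static void trsm_lunn(int m, int n, double alpha, const double *A, int lda,
                      double *B, int ldb)
{
    for (int j = 0; j < n; ++j) {
        double *b = B + (size_t)j * ldb;  /* column j of B */
        for (int i = 0; i < m; ++i)
            b[i] *= alpha;
        for (int i = m - 1; i >= 0; --i) { /* back substitution */
            b[i] /= A[i + (size_t)i * lda];
            for (int r = 0; r < i; ++r)
                b[r] -= A[r + (size_t)i * lda] * b[i];
        }
    }
}

/* One group of `count` independent LUNN solves with lda = ldb = m. */
void ref_dtrsm_lunn_batch(int m, int n, double alpha,
                          const double *const *A, double *const *B, int count)
{
    assert(m >= 0 && n >= 0 && count >= 0);
    #pragma omp parallel for
    for (int s = 0; s < count; ++s)
        trsm_lunn(m, n, alpha, A[s], m, B[s], m);
}
```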

Performance tips
• Store matrices:
  • Contiguously
  • In MCDRAM
• Choose appropriate leading dimensions
• Use 1 hardware thread per core
• KMP_AFFINITY=compact,1,0,granularity=fine
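The storage tips can be combined in a single allocation: place all matrices of a group back to back in one aligned buffer and pad the leading dimension. A minimal sketch, assuming column-major storage and a padding rule (round the leading dimension up to a multiple of 8 doubles, one 64-byte cache line) that is a common heuristic rather than documented Intel MKL guidance; `padded_ld` and `alloc_group` are hypothetical. On a Xeon Phi in flat MCDRAM mode, the buffer could instead come from a high-bandwidth allocator such as memkind's `hbw_malloc`, or the process could be bound to the MCDRAM NUMA node; plain `aligned_alloc` keeps the example portable.

```c
#include <assert.h>
#include <stdlib.h>

/* Round the leading dimension up to a multiple of 8 doubles (64 bytes). */
int padded_ld(int m)
{
    return (m + 7) / 8 * 8;
}

/* Allocates `count` column-major m x n matrices contiguously in one
 * 64-byte-aligned buffer; ptrs[i] receives the start of matrix i.
 * Returns the buffer (release with free) or NULL on failure. */
double *alloc_group(int m, int n, int count, int *ld, double **ptrs)
{
    assert(m > 0 && n > 0 && count > 0);
    *ld = padded_ld(m);
    size_t stride = (size_t)*ld * (size_t)n;                 /* doubles per matrix */
    size_t bytes = stride * (size_t)count * sizeof(double);  /* multiple of 64 */
    double *buf = aligned_alloc(64, bytes);
    if (buf == NULL)
        return NULL;
    for (int i = 0; i < count; ++i)
        ptrs[i] = buf + (size_t)i * stride;
    return buf;
}
```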

Final Remarks
• Intel® Xeon Phi™ processors are highly parallel and use general-purpose programming models
• Batching better utilizes multi- and many-core processors for small/medium matrices
• Groups contain matrices with the same parameters (size, leading dimension, etc.)
• The Intel MKL batched API combines ease of use with performance opportunities

Intel MKL resources
• Website: https://software.intel.com/en-us/intel-mkl
• License options: https://software.intel.com/en-us/articles/free-mkl
• Forum: https://software.intel.com/en-us/forums/intel-math-kernel-library
• Link line advisor: http://software.intel.com/en-us/articles/intel-mkl-link-line-advisor

Thank You!
Poster Session PP3, 4:30-6:30 PM Tuesday: Accelerating Multiplication of Small or Skinny Matrices with Intel® Math Kernel Library Packed GEMM Routines
API for the Compact Batched BLAS, Intel MKL Team: https://www.dropbox.com/s/gplop3sxhg8le3r/MKL_COMPACT_v4.docx?dl=0

Legal Disclaimer & Optimization Notice
INFORMATION IN THIS DOCUMENT IS PROVIDED “AS IS”. NO LICENSE, EXPRESS OR IMPLIED, BY ESTOPPEL OR OTHERWISE, TO ANY INTELLECTUAL PROPERTY RIGHTS IS GRANTED BY THIS DOCUMENT. INTEL ASSUMES NO LIABILITY WHATSOEVER AND INTEL DISCLAIMS ANY EXPRESS OR IMPLIED WARRANTY, RELATING TO THIS INFORMATION INCLUDING LIABILITY OR WARRANTIES RELATING TO FITNESS FOR A PARTICULAR PURPOSE, MERCHANTABILITY, OR INFRINGEMENT OF ANY PATENT, COPYRIGHT OR OTHER INTELLECTUAL PROPERTY RIGHT.
Copyright © 2017, Intel Corporation. All rights reserved. Intel, Pentium, Xeon Phi, Core, VTune, Cilk, and the Intel logo are trademarks of Intel Corporation in the U.S. and other countries.
