Open Source Cluster Application Resources (OSCAR)

Presented by Stephen L. Scott, Thomas Naughton, Geoffroy Vallée
Network and Cluster Computing, Computer Science and Mathematics Division

Open Source Cluster Application Resources (OSCAR)
• Snapshot of best known methods for building, programming and using clusters.
• Consortium of academic, research and industry members.

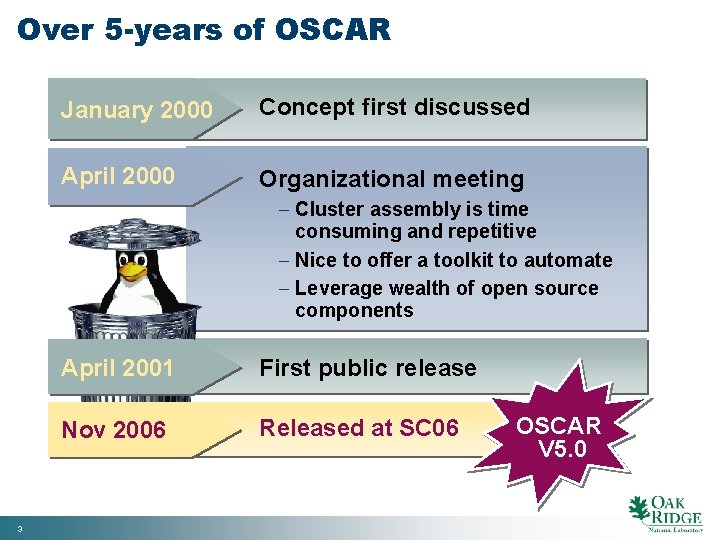

Over 5 years of OSCAR
• January 2000: Concept first discussed
• April 2000: Organizational meeting
• April 2001: First public release
• November 2006: OSCAR v5.0 released at SC06

- Cluster assembly is time consuming and repetitive
- Nice to offer a toolkit to automate
- Leverage wealth of open source components

What does OSCAR do?
· Wizard-based cluster software installation
  - Operating system
  - Cluster environment
· Automatically configures cluster components
· Increases consistency among cluster builds
· Reduces time to build / install a cluster
· Reduces need for expertise

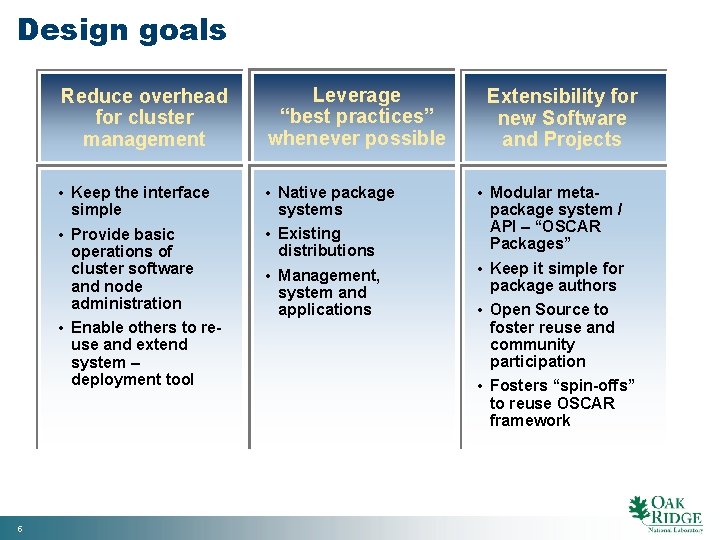

Design goals
Reduce overhead for cluster management
• Keep the interface simple
• Provide basic operations of cluster software and node administration
• Enable others to reuse and extend system – deployment tool
Leverage “best practices” whenever possible
• Native package systems
• Existing distributions
• Management, system and applications
Extensibility for new Software and Projects
• Modular metapackage system / API – “OSCAR Packages”
• Keep it simple for package authors
• Open Source to foster reuse and community participation
• Fosters “spin-offs” to reuse OSCAR framework

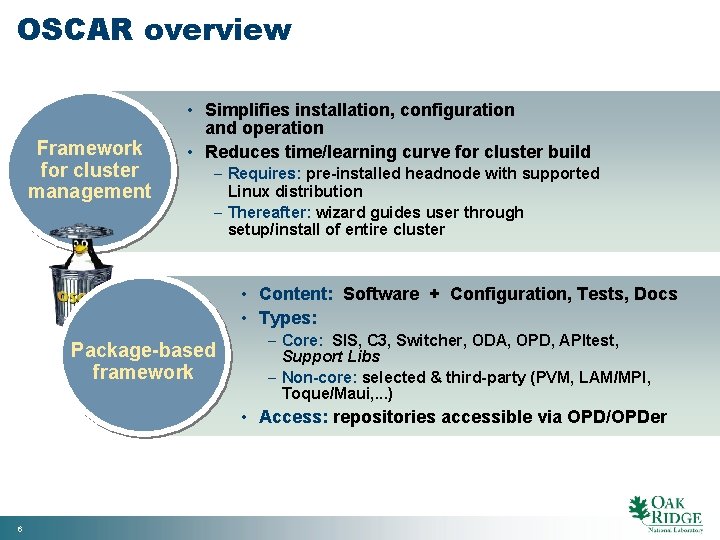

OSCAR overview
Framework for cluster management
• Simplifies installation, configuration and operation
• Reduces time/learning curve for cluster build
  – Requires: pre-installed headnode with supported Linux distribution
  – Thereafter: wizard guides user through setup/install of entire cluster
• Content: Software + Configuration, Tests, Docs
• Types: Package-based framework
  – Core: SIS, C3, Switcher, ODA, OPD, APItest, Support Libs
  – Non-core: selected & third-party (PVM, LAM/MPI, Torque/Maui, ...)
• Access: repositories accessible via OPD/OPDer

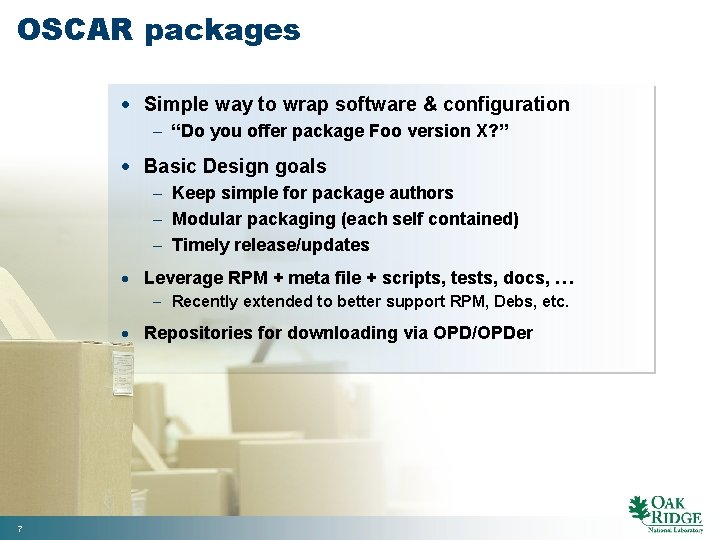

OSCAR packages
· Simple way to wrap software & configuration
  - “Do you offer package Foo version X?”
· Basic design goals
  - Keep simple for package authors
  - Modular packaging (each self contained)
  - Timely release/updates
· Leverage RPM + meta file + scripts, tests, docs, …
  - Recently extended to better support RPM, Debs, etc.
· Repositories for downloading via OPD/OPDer
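The "Do you offer package Foo version X?" question above can be pictured as a lookup against a repository index. The following is only a minimal sketch under stated assumptions: `PackageEntry`, `find_package`, the repository names and the version strings are all hypothetical, and this is not the actual OPD/OPDer metadata format or protocol.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class PackageEntry:
    """One row of a hypothetical repository index (not the real OPD format)."""
    name: str
    version: str
    repository: str


def find_package(index: List[PackageEntry], name: str, version: str) -> Optional[PackageEntry]:
    """Return the first entry matching name and version, or None if not offered."""
    for entry in index:
        if entry.name == name and entry.version == version:
            return entry
    return None


# Hypothetical index contents, for illustration only.
INDEX = [
    PackageEntry("foo", "1.0", "repo-a"),
    PackageEntry("foo", "1.1", "repo-b"),
    PackageEntry("bar", "2.0", "repo-a"),
]

if __name__ == "__main__":
    hit = find_package(INDEX, "foo", "1.1")
    print(hit.repository if hit else "not offered")
```

Keeping each index entry self-contained mirrors the modular packaging goal on the slide: a package can be added or dropped without touching the others.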

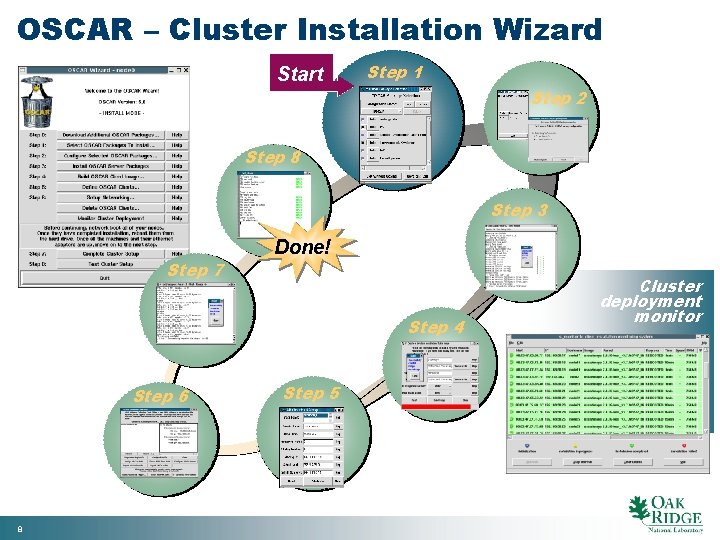

OSCAR – Cluster Installation Wizard
[Figure: installation wizard flow from Start through Step 1 to Step 8 to Done!, with the cluster deployment monitor]

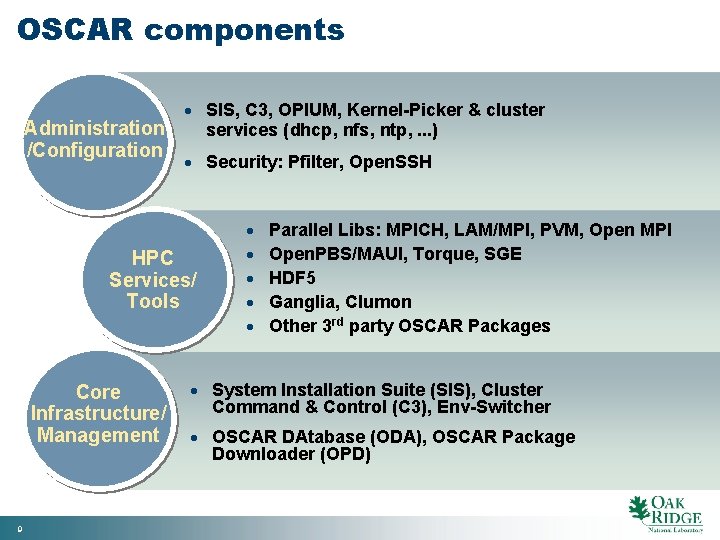

OSCAR components
Core Infrastructure/Management
· System Installation Suite (SIS), Cluster Command & Control (C3), Env-Switcher
· OSCAR DAtabase (ODA), OSCAR Package Downloader (OPD)
Administration/Configuration
· SIS, C3, OPIUM, Kernel-Picker & cluster services (dhcp, nfs, ntp, ...)
· Security: Pfilter, OpenSSH
HPC Services/Tools
· Parallel Libs: MPICH, LAM/MPI, PVM, Open MPI
· OpenPBS/MAUI, Torque, SGE
· HDF5
· Ganglia, Clumon
· Other 3rd party OSCAR Packages

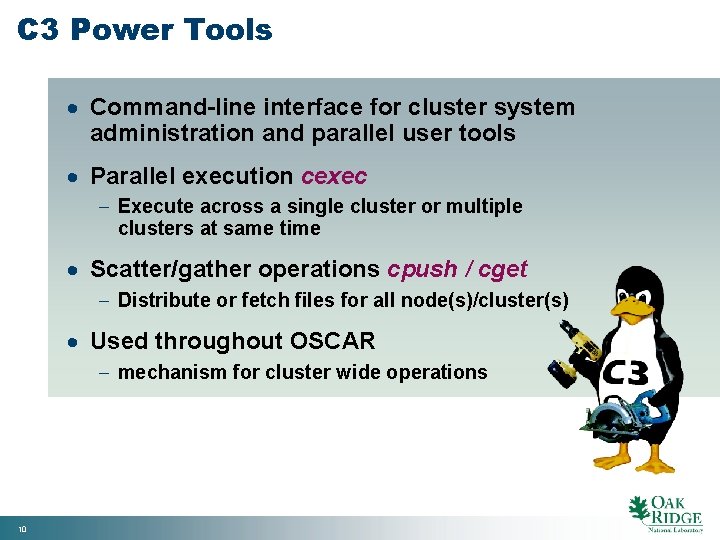

C3 Power Tools
· Command-line interface for cluster system administration and parallel user tools
· Parallel execution: cexec
  - Execute across a single cluster or multiple clusters at same time
· Scatter/gather operations: cpush / cget
  - Distribute or fetch files for all node(s)/cluster(s)
· Used throughout OSCAR
  - Mechanism for cluster-wide operations
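The parallel-execution idea behind a tool like cexec is a fan-out: the same operation is started on every node at once and the results are gathered per node. The sketch below illustrates only that pattern, under stated assumptions: it does not call C3 or reproduce its options, `fan_out` and `fake_uptime` are hypothetical names, and the "remote command" is a local stand-in function.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def fan_out(nodes: List[str], task: Callable[[str], str], max_workers: int = 8) -> Dict[str, str]:
    """Run task(node) for every node concurrently and gather results by node name."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so zip pairs each node with its own result.
        results = list(pool.map(task, nodes))
    return dict(zip(nodes, results))


def fake_uptime(node: str) -> str:
    """Stand-in for a remote command; a real tool would contact the node."""
    return f"{node}: up"


if __name__ == "__main__":
    cluster = [f"node{i}" for i in range(1, 5)]  # hypothetical node names
    for output in fan_out(cluster, fake_uptime).values():
        print(output)
```

A scatter/gather file operation in the style of cpush / cget follows the same shape, with the per-node task copying a file instead of running a command.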

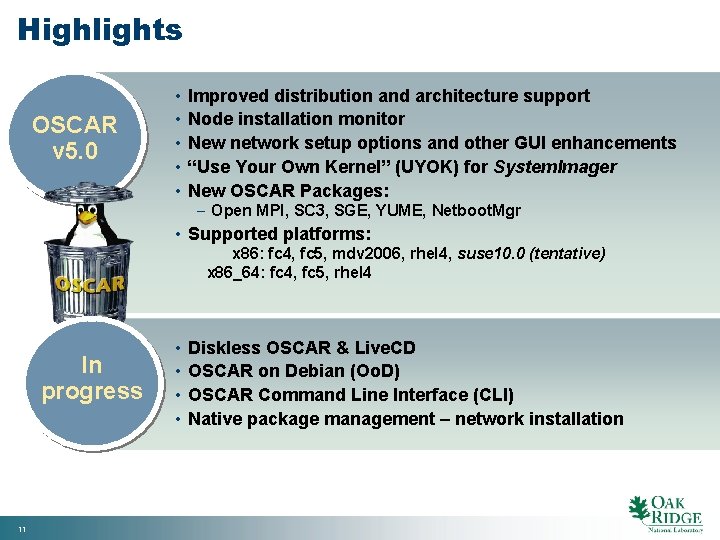

Highlights OSCAR v5.0
• Improved distribution and architecture support
• Node installation monitor
• New network setup options and other GUI enhancements
• “Use Your Own Kernel” (UYOK) for SystemImager
• New OSCAR Packages:
  – Open MPI, SC3, SGE, YUME, NetbootMgr
• Supported platforms:
  – x86: fc4, fc5, mdv2006, rhel4, suse10.0 (tentative)
  – x86_64: fc4, fc5, rhel4
In progress
• Diskless OSCAR & LiveCD
• OSCAR on Debian (OoD)
• OSCAR Command Line Interface (CLI)
• Native package management – network installation

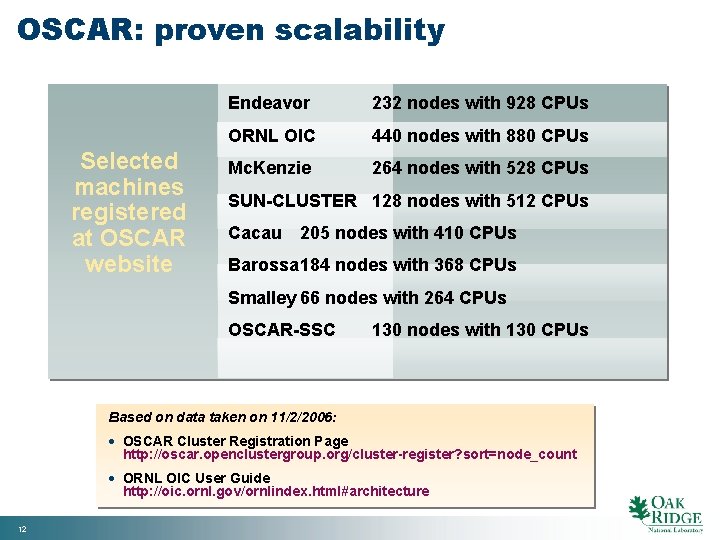

OSCAR: proven scalability
Selected machines registered at OSCAR website
· Endeavor: 232 nodes with 928 CPUs
· ORNL OIC: 440 nodes with 880 CPUs
· McKenzie: 264 nodes with 528 CPUs
· SUN-CLUSTER: 128 nodes with 512 CPUs
· Cacau: 205 nodes with 410 CPUs
· Barossa: 184 nodes with 368 CPUs
· Smalley: 66 nodes with 264 CPUs
· OSCAR-SSC: 130 nodes with 130 CPUs
Based on data taken on 11/2/2006:
· OSCAR Cluster Registration Page: http://oscar.openclustergroup.org/cluster-register?sort=node_count
· ORNL OIC User Guide: http://oic.ornl.gov/ornlindex.html#architecture
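The node and CPU counts listed above also imply a CPUs-per-node figure for each machine. As a small worked example, the sketch below recomputes that ratio and the combined totals from the slide's numbers; the helper names `cpus_per_node` and `totals` are illustrative only.

```python
from typing import List, Tuple

# (name, nodes, cpus) exactly as listed on the slide (data taken on 11/2/2006).
CLUSTERS: List[Tuple[str, int, int]] = [
    ("Endeavor", 232, 928),
    ("ORNL OIC", 440, 880),
    ("McKenzie", 264, 528),
    ("SUN-CLUSTER", 128, 512),
    ("Cacau", 205, 410),
    ("Barossa", 184, 368),
    ("Smalley", 66, 264),
    ("OSCAR-SSC", 130, 130),
]


def cpus_per_node(nodes: int, cpus: int) -> float:
    """Average number of CPUs in each node of one cluster."""
    return cpus / nodes


def totals(clusters: List[Tuple[str, int, int]]) -> Tuple[int, int]:
    """Sum of nodes and sum of CPUs over all listed clusters."""
    return sum(n for _, n, _ in clusters), sum(c for _, _, c in clusters)


if __name__ == "__main__":
    for name, nodes, cpus in CLUSTERS:
        print(f"{name:12s} {nodes:4d} nodes {cpus:4d} CPUs {cpus_per_node(nodes, cpus):.0f} CPUs/node")
    total_nodes, total_cpus = totals(CLUSTERS)
    print(f"Total: {total_nodes} nodes, {total_cpus} CPUs")
```

Every listed machine works out to 1, 2 or 4 CPUs per node.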

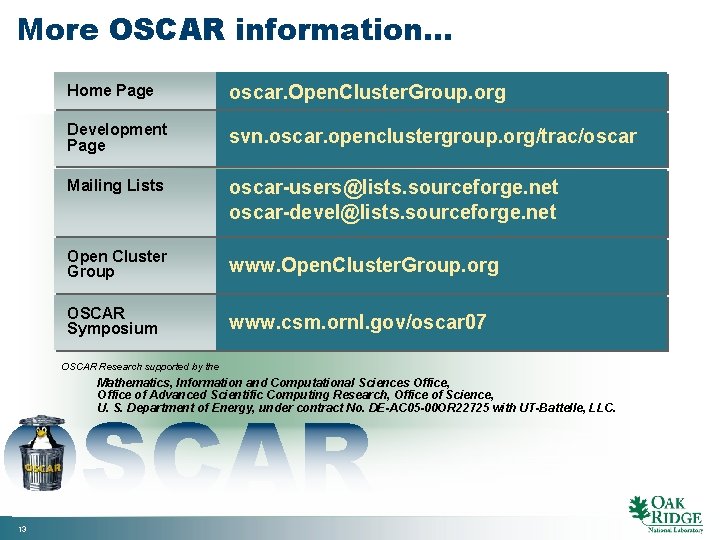

More OSCAR information…
· Home Page: oscar.OpenClusterGroup.org
· Development Page: svn.oscar.openclustergroup.org/trac/oscar
· Mailing Lists: oscar-users@lists.sourceforge.net, oscar-devel@lists.sourceforge.net
· Open Cluster Group: www.OpenClusterGroup.org
· OSCAR Symposium: www.csm.ornl.gov/oscar07

OSCAR Research supported by the Mathematics, Information and Computational Sciences Office, Office of Advanced Scientific Computing Research, Office of Science, U.S. Department of Energy, under contract No. DE-AC05-00OR22725 with UT-Battelle, LLC.

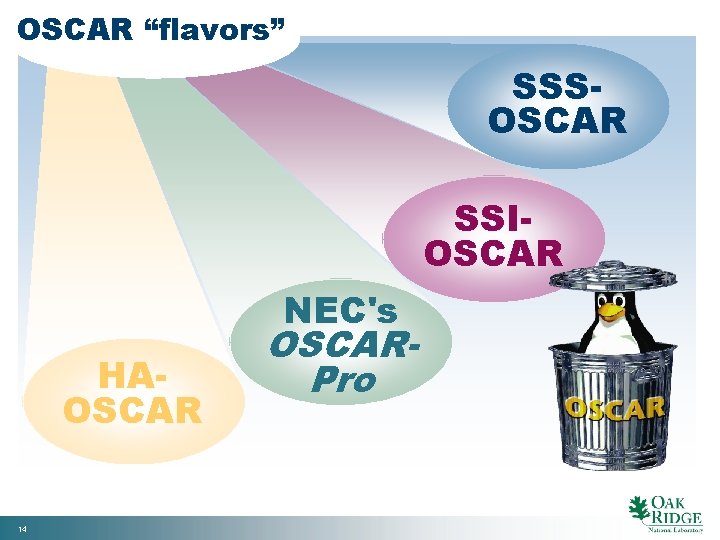

OSCAR “flavors”
· SSS-OSCAR
· SSI-OSCAR
· HA-OSCAR
· NEC's OSCAR-Pro

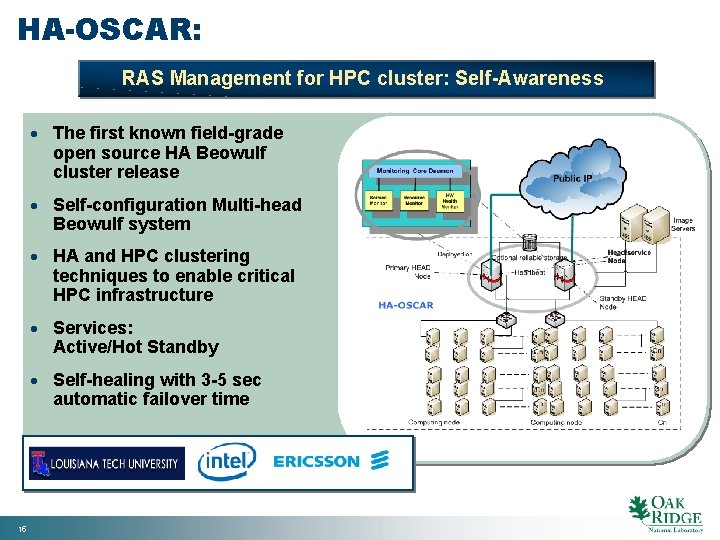

HA-OSCAR: RAS Management for HPC cluster: Self-Awareness
· The first known field-grade open source HA Beowulf cluster release
· Self-configuration Multi-head Beowulf system
· HA and HPC clustering techniques to enable critical HPC infrastructure
· Services: Active/Hot Standby
· Self-healing with 3-5 sec automatic failover time
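An active/hot-standby arrangement with automatic failover can be illustrated with a heartbeat counter: the standby head node takes over once the active one has missed a set number of consecutive heartbeats. This is a generic sketch of that technique, not HA-OSCAR's implementation; `HeadNode`, `FailoverMonitor` and the default of three missed heartbeats are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class HeadNode:
    name: str
    alive: bool = True


class FailoverMonitor:
    """Promote the standby after `missed_limit` consecutive missed heartbeats."""

    def __init__(self, active: HeadNode, standby: HeadNode, missed_limit: int = 3):
        self.active = active
        self.standby = standby
        self.missed_limit = missed_limit  # hypothetical threshold
        self.missed = 0

    def tick(self) -> str:
        """Simulate one heartbeat interval; return the node currently serving."""
        if self.active.alive:
            self.missed = 0
        else:
            self.missed += 1
            if self.missed >= self.missed_limit and self.standby.alive:
                # Swap roles: the hot standby becomes the active head node.
                self.active, self.standby = self.standby, self.active
                self.missed = 0
        return self.active.name


if __name__ == "__main__":
    monitor = FailoverMonitor(HeadNode("head1"), HeadNode("head2"))
    monitor.active.alive = False  # simulate a head node failure
    for _ in range(3):
        print(monitor.tick())
```

With a one-second heartbeat interval, a limit of three missed beats would give a detection time of about three seconds; the real interval and threshold are not stated on the slide.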

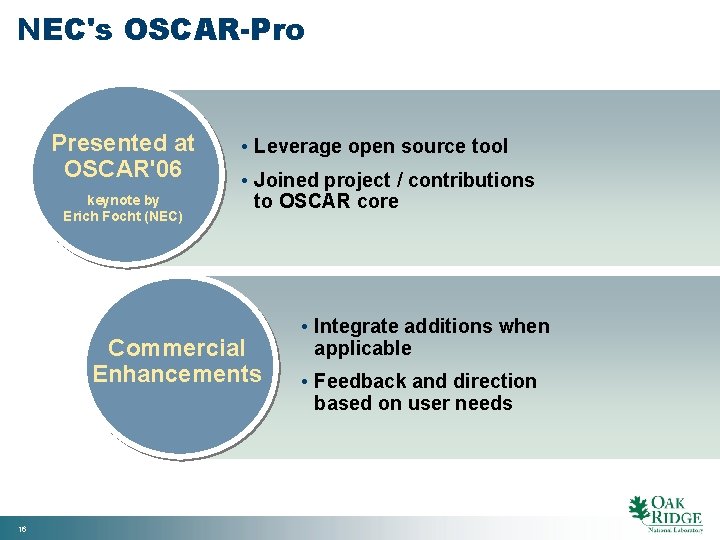

NEC's OSCAR-Pro
Presented at OSCAR'06 keynote by Erich Focht (NEC)
• Leverage open source tool
• Joined project / contributions to OSCAR core
Commercial Enhancements
• Integrate additions when applicable
• Feedback and direction based on user needs

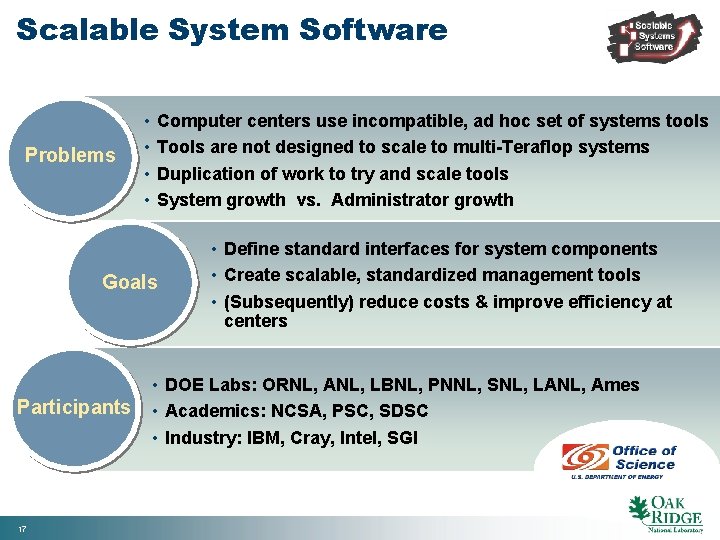

Scalable System Software
Problems
• Computer centers use incompatible, ad hoc set of systems tools
• Tools are not designed to scale to multi-Teraflop systems
• Duplication of work to try and scale tools
• System growth vs. Administrator growth
Goals
• Define standard interfaces for system components
• Create scalable, standardized management tools
• (Subsequently) reduce costs & improve efficiency at centers
Participants
• DOE Labs: ORNL, ANL, LBNL, PNNL, SNL, LANL, Ames
• Academics: NCSA, PSC, SDSC
• Industry: IBM, Cray, Intel, SGI

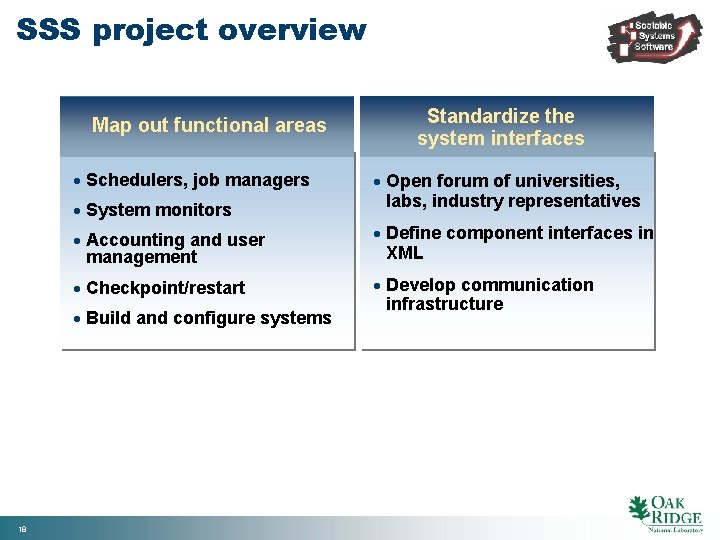

SSS project overview
Map out functional areas
· Schedulers, job managers
· System monitors
· Accounting and user management
· Checkpoint/restart
· Build and configure systems
Standardize the system interfaces
· Open forum of universities, labs, industry representatives
· Define component interfaces in XML
· Develop communication infrastructure

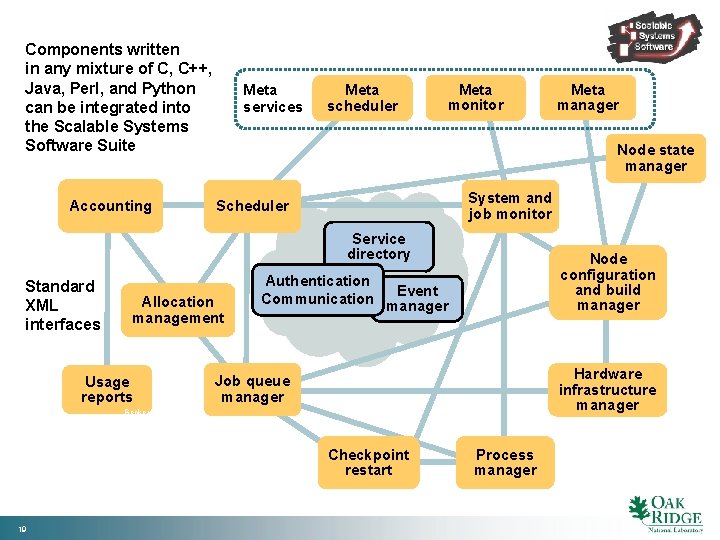

Components written in any mixture of C, C++, Java, Perl, and Python can be integrated into the Scalable Systems Software Suite
[Figure: component diagram connected through standard XML interfaces]
· Meta services: meta scheduler, meta monitor, meta manager
· Accounting, allocation management, usage reports
· Scheduler, job queue manager, process manager
· System and job monitor, node state manager
· Node configuration and build manager, hardware infrastructure manager
· Checkpoint restart
· Service directory, event manager, authentication, communication
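Because the components talk through XML interfaces, each one only needs an XML library in its own language. The sketch below shows what building and parsing such a message might look like from a Python component; the element and attribute names (`request`, `component`, `action`, `node`, `state`) are hypothetical and are not taken from the actual SSS interface specifications.

```python
import xml.etree.ElementTree as ET
from typing import Dict


def build_request(component: str, action: str, **fields: str) -> str:
    """Serialize a request as XML text (hypothetical schema, for illustration)."""
    root = ET.Element("request", {"component": component, "action": action})
    for key, value in fields.items():
        ET.SubElement(root, key).text = str(value)
    return ET.tostring(root, encoding="unicode")


def parse_request(text: str) -> Dict[str, object]:
    """Parse the XML text back into a plain dictionary."""
    root = ET.fromstring(text)
    return {
        "component": root.get("component"),
        "action": root.get("action"),
        "fields": {child.tag: child.text for child in root},
    }


if __name__ == "__main__":
    message = build_request("node-state-manager", "query", node="node1", state="up")
    print(message)
    print(parse_request(message))
```

Since only the text format is shared, a sender in one language and a receiver in another never depend on each other's implementation.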

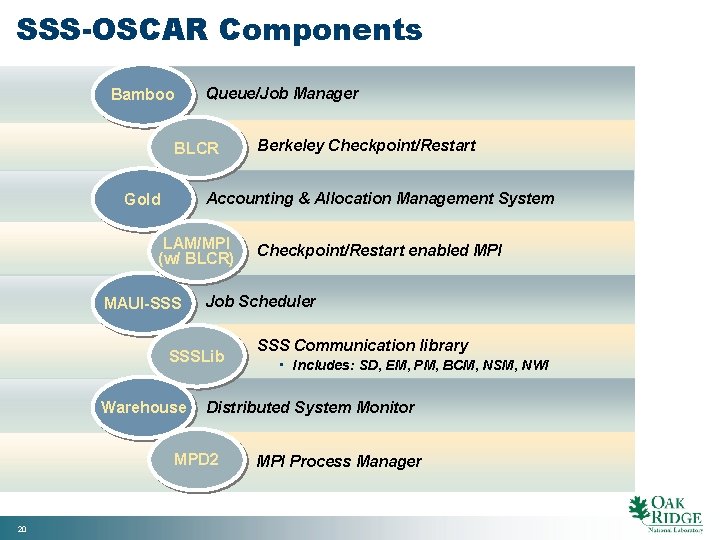

SSS-OSCAR Components
· Bamboo: Queue/Job Manager
· BLCR: Berkeley Checkpoint/Restart
· Gold: Accounting & Allocation Management System
· LAM/MPI (w/ BLCR): Checkpoint/Restart enabled MPI
· MAUI-SSS: Job Scheduler
· SSSLib: SSS Communication library
  - Includes: SD, EM, PM, BCM, NSM, NWI
· Warehouse: Distributed System Monitor
· MPD2: MPI Process Manager

Single System Image Open Source Cluster Application Resources (SSI-OSCAR)
· Easy to use thanks to SSI systems
  - SMP illusion
  - High performance
  - Fault tolerance
· Easy management thanks to OSCAR
  - Automatic cluster install/update

Contacts
· Stephen L. Scott, Network and Cluster Computing, Computer Science and Mathematics Division, (865) 574-3144, scottsl@ornl.gov
· Thomas Naughton, Network and Cluster Computing, Computer Science and Mathematics Division, (865) 576-4184, naughtont@ornl.gov
· Geoffroy Vallée, Network and Cluster Computing, Computer Science and Mathematics Division, (865) 574-3152, valleegr@ornl.gov
