ONAP: Service Mesh
Sylvain Desbureaux, Orange Labs Network


Agenda

- Service Mesh?
- Tested Service meshes implementations
- Common issues on all "sidecars" service mesh
- Details on
  - Maesh
  - Kuma
  - Consul Connect
  - Linkerd
  - Istio
- Conclusion and moving forward

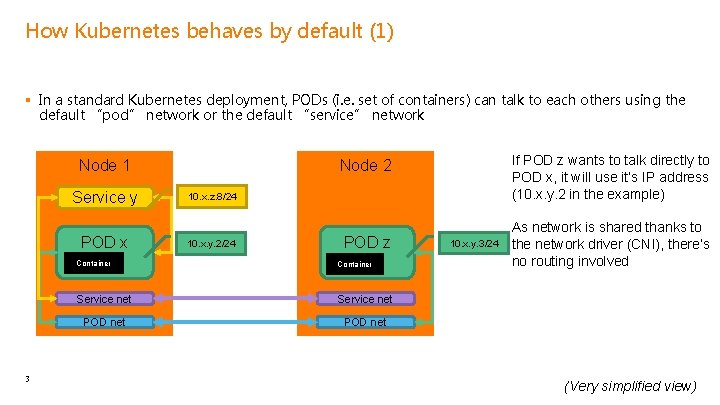

How Kubernetes behaves by default (1)

- In a standard Kubernetes deployment, PODs (i.e. sets of containers) can talk to each other using the default "pod" network or the default "service" network.
- If POD z wants to talk directly to POD x, it uses POD x's IP address (10.x.y.2 in the example).
- As the network is shared thanks to the network driver (CNI), there is no routing involved.

Figure (very simplified view): Node 1, Node 2, Service y (10.x.z.8/24), POD x (10.x.y.2/24), POD z (10.x.y.3/24), Service net, POD net.

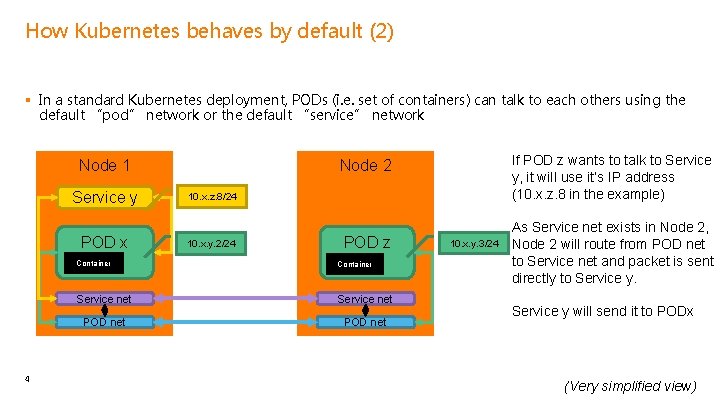

How Kubernetes behaves by default (2)

- In a standard Kubernetes deployment, PODs (i.e. sets of containers) can talk to each other using the default "pod" network or the default "service" network.
- If POD z wants to talk to Service y, it uses the Service's IP address (10.x.z.8 in the example).
- As the Service net exists on Node 2, Node 2 routes from the POD net to the Service net: the packet is sent to Service y, which then sends it to POD x.

Figure (very simplified view): Node 1, Node 2, Service y (10.x.z.8/24), POD x (10.x.y.2/24), POD z (10.x.y.3/24), Service net, POD net.

Drawbacks of the default Kubernetes behaviour

- If the CNI doesn't provide policies, every POD can talk to every other POD
  - It's also hard to know who's talking to whom
  - And then it's hard to troubleshoot when it's not working
  - "Canary" testing is also more complicated as you have to do all the work by hand
- Tracing is also very popular in a microservice world:
  - It's important to see which service is slow, not working, ...
- Integrated security (such as HTTPS everywhere) relies on a (bad) implementation per service
  - It would be interesting to have a framework helping with that in order to enforce best practices
  - Complex encryption such as mTLS (mutual TLS: the client authenticates the server and the server authenticates the client) would be integrated more easily

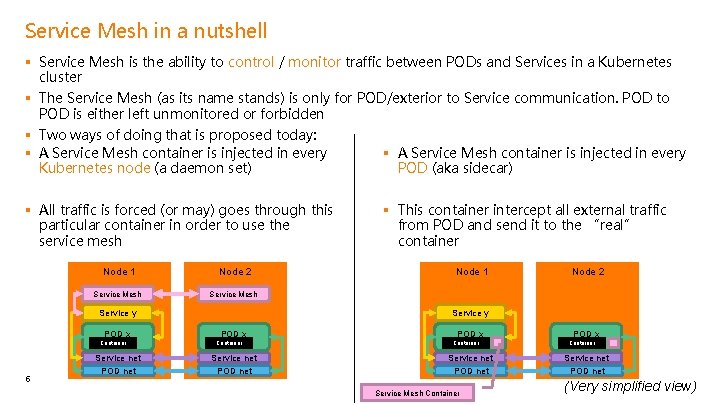

Service Mesh in a nutshell

- A Service Mesh is the ability to control / monitor traffic between PODs and Services in a Kubernetes cluster.
- The Service Mesh (as its name suggests) only covers POD/exterior to Service communication. POD to POD traffic is either left unmonitored or forbidden.
- Two ways of doing that are proposed today:
  - A Service Mesh container is injected in every Kubernetes node (a DaemonSet). All traffic is forced to (or may) go through this particular container in order to use the service mesh.
  - A Service Mesh container is injected in every POD (aka sidecar). This container intercepts all external traffic of the POD and sends it to the "real" container.

Figures (very simplified view): one diagram per approach, showing Node 1, Node 2, Service y, POD x, its container, the Service Mesh container, Service net and POD net.

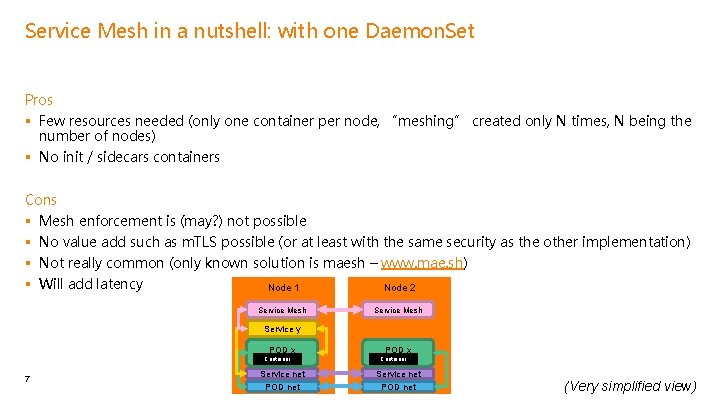

Service Mesh in a nutshell: with one DaemonSet

Pros
- Few resources needed (only one container per node; the "meshing" is created only N times, N being the number of nodes)
- No init / sidecar containers

Cons
- Mesh enforcement is (may?) not possible
- No added value such as mTLS possible (or at least not with the same security as the other implementation)
- Not really common (the only known solution is Maesh, www.mae.sh)
- Will add latency

Figure (very simplified view): Node 1, Node 2, Service Mesh, Service y, POD x, Container, Service net, POD net.

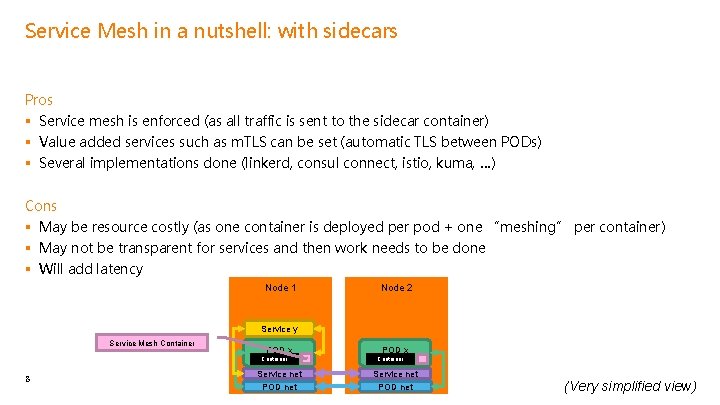

Service Mesh in a nutshell: with sidecars

Pros
- Service mesh is enforced (as all traffic is sent to the sidecar container)
- Value added services such as mTLS can be set up (automatic TLS between PODs)
- Several implementations exist (Linkerd, Consul Connect, Istio, Kuma, ...)

Cons
- May be resource costly (one container is deployed per pod, plus one "meshing" per container)
- May not be transparent for services, in which case work needs to be done
- Will add latency

Figure (very simplified view): Node 1, Node 2, Service y, Service Mesh Container, POD x, Container, Service net, POD net.

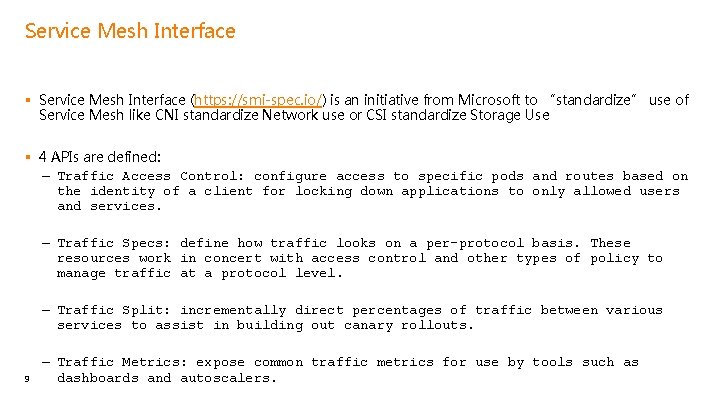

Service Mesh Interface

- Service Mesh Interface (https://smi-spec.io/) is an initiative from Microsoft to "standardize" the use of Service Meshes, like CNI standardizes network use or CSI standardizes storage use.
- 4 APIs are defined:
  - Traffic Access Control: configure access to specific pods and routes based on the identity of a client for locking down applications to only allowed users and services.
  - Traffic Specs: define how traffic looks on a per-protocol basis. These resources work in concert with access control and other types of policy to manage traffic at a protocol level.
  - Traffic Split: incrementally direct percentages of traffic between various services to assist in building out canary rollouts.
  - Traffic Metrics: expose common traffic metrics for use by tools such as dashboards and autoscalers.
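
To make the Traffic Split idea concrete, here is a minimal Python sketch of weighted routing between a stable and a canary backend. It is not the SMI API and not how any mesh proxy is implemented: the function names and the backend names `sdc-stable` and `sdc-canary` are hypothetical, chosen only for illustration.

```python
import random
from collections import Counter


def pick_backend(weights, rng):
    """Pick one backend name with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def simulate(weights, requests, seed=0):
    """Route `requests` simulated calls and count how many reach each backend."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    return Counter(pick_backend(weights, rng) for _ in range(requests))


if __name__ == "__main__":
    # Hypothetical canary rollout: roughly 10% of traffic goes to the canary.
    split = {"sdc-stable": 90, "sdc-canary": 10}
    print(simulate(split, 10_000))
```

Raising the canary weight step by step (10, 25, 50, 100) is the "incremental" part of a canary rollout. With a mesh, this split would be declared once instead of being coded by hand in each client.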

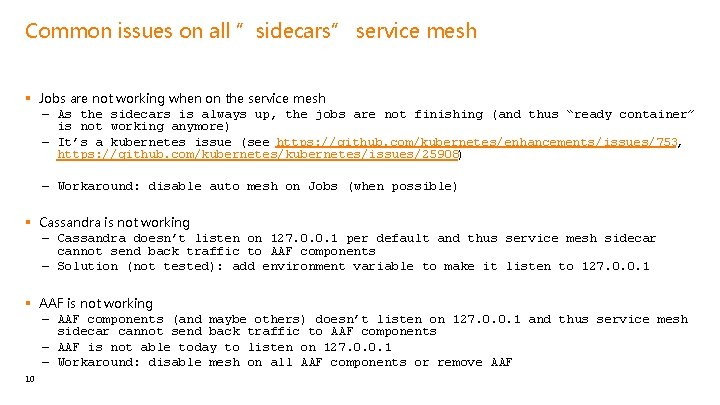

Common issues on all "sidecars" service mesh

- Jobs are not working when on the service mesh
  - As the sidecar is always up, the jobs never finish (and thus the "ready container" is not working anymore)
  - It's a Kubernetes issue (see https://github.com/kubernetes/enhancements/issues/753, https://github.com/kubernetes/issues/25908)
  - Workaround: disable auto mesh on Jobs (when possible)
- Cassandra is not working
  - Cassandra doesn't listen on 127.0.0.1 by default and thus the service mesh sidecar cannot send traffic back to Cassandra
  - Solution (not tested): add an environment variable to make it listen on 127.0.0.1
- AAF is not working
  - AAF components (and maybe others) don't listen on 127.0.0.1 and thus the service mesh sidecar cannot send traffic back to AAF components
  - AAF is not able today to listen on 127.0.0.1
  - Workaround: disable mesh on all AAF components or remove AAF
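
The 127.0.0.1 problem can be reproduced outside Kubernetes. The sketch below, under the assumption that the sidecar hands traffic to the application over the loopback address, opens a listener on a given bind address and checks whether a client connecting to 127.0.0.1 can reach it. The function name `reachable_on_loopback` is hypothetical.

```python
import socket


def reachable_on_loopback(bind_addr):
    """Listen on bind_addr (ephemeral port) and try to connect via 127.0.0.1."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        server.bind((bind_addr, 0))  # port 0: let the OS pick a free port
        server.listen(1)
        port = server.getsockname()[1]
        try:
            # Plays the role of the sidecar forwarding traffic to the app.
            with socket.create_connection(("127.0.0.1", port), timeout=1):
                return True
        except OSError:
            return False
    finally:
        server.close()


if __name__ == "__main__":
    for addr in ("127.0.0.1", "0.0.0.0"):
        print(addr, reachable_on_loopback(addr))
```

A service bound to 127.0.0.1 or to all interfaces (0.0.0.0) is reachable this way. A service bound only to its pod IP is not, which is the situation described for Cassandra and AAF. On Linux, binding to another loopback address such as 127.0.0.2 should be one way to emulate the failing case locally.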

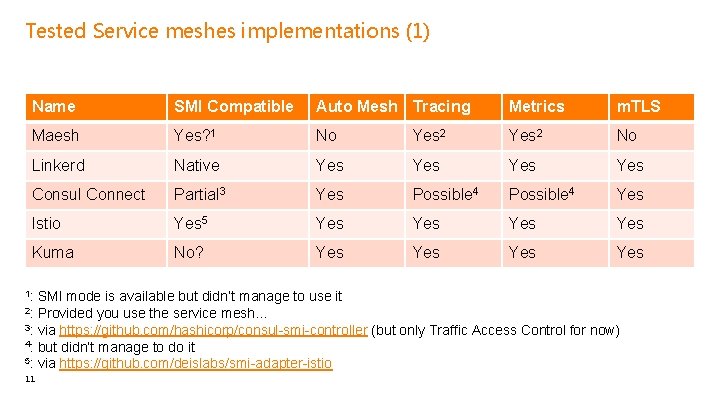

Tested Service meshes implementations (1)

Columns: Name, SMI Compatible, Auto Mesh, Tracing, Metrics, mTLS

- Maesh: Yes? (1), No, Yes (2), No
- Linkerd: Native, Yes, Yes
- Consul Connect: Partial (3), Yes, Possible (4), Yes
- Istio: Yes (5), Yes, Yes
- Kuma: No?, Yes, Yes

Notes:
- 1: SMI mode is available but didn't manage to use it
- 2: Provided you use the service mesh...
- 3: via https://github.com/hashicorp/consul-smi-controller (but only Traffic Access Control for now)
- 4: but didn't manage to do it
- 5: via https://github.com/deislabs/smi-adapter-istio

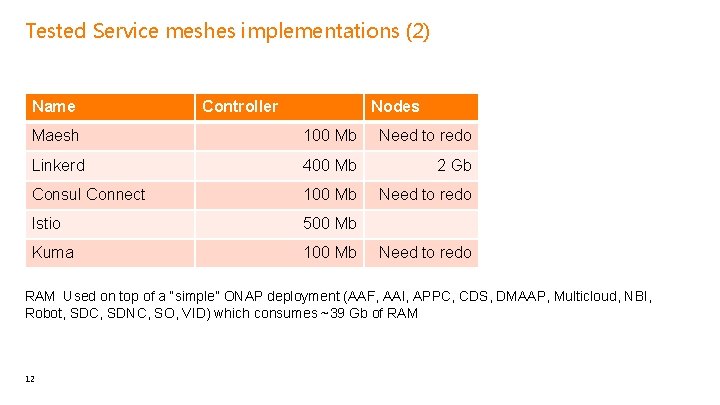

Tested Service meshes implementations (2)

| Name | Controller | Nodes |
| --- | --- | --- |
| Maesh | 100 MB | Need to redo |
| Linkerd | 400 MB | 2 GB |
| Consul Connect | 100 MB | Need to redo |
| Istio | 500 MB | |
| Kuma | 100 MB | Need to redo |

RAM used on top of a "simple" ONAP deployment (AAF, AAI, APPC, CDS, DMAAP, Multicloud, NBI, Robot, SDC, SDNC, SO, VID), which consumes ~39 GB of RAM.

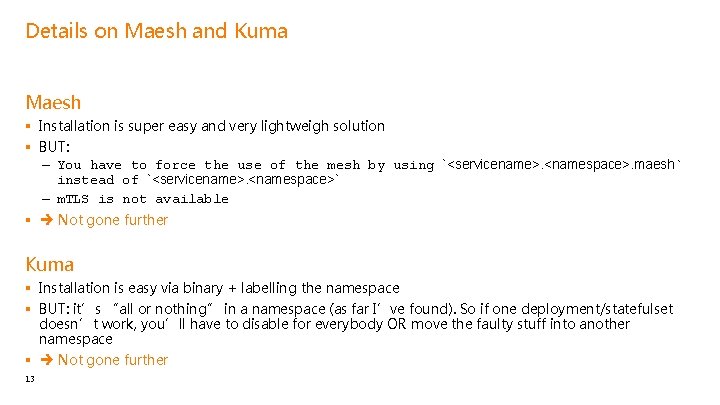

Details on Maesh and Kuma

Maesh
- Installation is super easy and it is a very lightweight solution
- BUT:
  - You have to force the use of the mesh by using `<servicename>.<namespace>.maesh` instead of `<servicename>.<namespace>`
  - mTLS is not available
- Not gone further

Kuma
- Installation is easy via a binary + labelling the namespace
- BUT: it's "all or nothing" in a namespace (as far as I've found). So if one deployment/statefulset doesn't work, you have to disable the mesh for everybody OR move the faulty workload into another namespace
- Not gone further
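
The Maesh naming constraint means every client has to be pointed at a different hostname to opt in. A minimal sketch of that rewrite, assuming hostnames are given in the short `<servicename>.<namespace>` form (the helper name `to_maesh_host` and the `aai.onap` example are hypothetical):

```python
def to_maesh_host(host):
    """Turn '<servicename>.<namespace>' into '<servicename>.<namespace>.maesh'."""
    parts = host.split(".")
    # Only the two-label short form is handled in this sketch.
    if len(parts) != 2 or not all(parts):
        raise ValueError(f"expected '<servicename>.<namespace>', got {host!r}")
    return f"{host}.maesh"


if __name__ == "__main__":
    print(to_maesh_host("aai.onap"))
```

Any client that keeps using the original name bypasses the mesh, which is why the mesh cannot be enforced with this approach.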

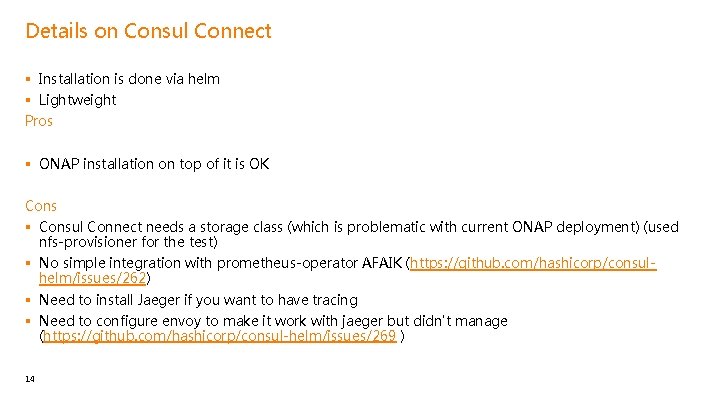

Details on Consul Connect

- Installation is done via helm

Pros
- Lightweight
- ONAP installation on top of it is OK

Cons
- Consul Connect needs a storage class, which is problematic with the current ONAP deployment (nfs-provisioner was used for the test)
- No simple integration with prometheus-operator AFAIK (https://github.com/hashicorp/consul-helm/issues/262)
- Need to install Jaeger if you want to have tracing
- Need to configure Envoy to make it work with Jaeger, but didn't manage to (https://github.com/hashicorp/consul-helm/issues/269)

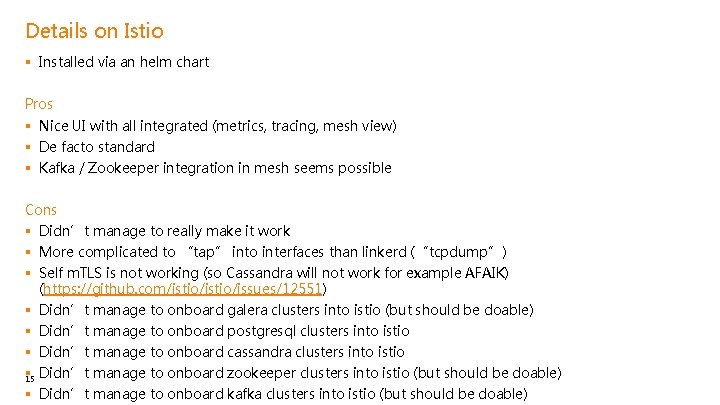

Details on Istio

- Installed via a helm chart

Pros
- Nice UI with everything integrated (metrics, tracing, mesh view)
- De facto standard
- Kafka / Zookeeper integration in the mesh seems possible

Cons
- Didn't manage to really make it work
- More complicated to "tap" into interfaces than Linkerd ("tcpdump")
- Self mTLS is not working (so Cassandra will not work, for example, AFAIK) (https://github.com/istio/issues/12551)
- Didn't manage to onboard galera clusters into Istio (but should be doable)
- Didn't manage to onboard postgresql clusters into Istio
- Didn't manage to onboard cassandra clusters into Istio
- Didn't manage to onboard zookeeper clusters into Istio (but should be doable)
- Didn't manage to onboard kafka clusters into Istio (but should be doable)

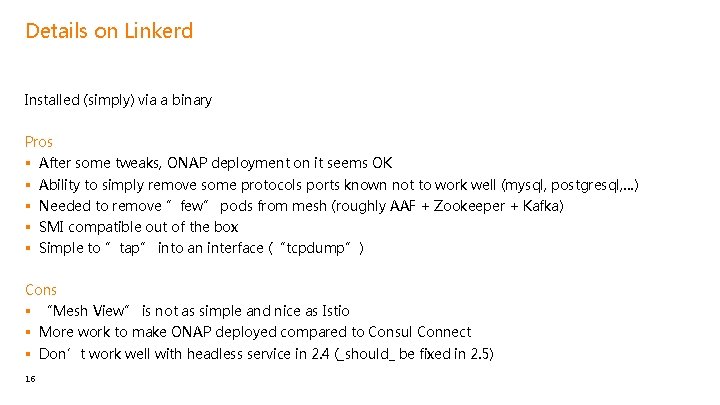

Details on Linkerd

- Installed (simply) via a binary

Pros
- After some tweaks, ONAP deployment on it seems OK
  - Ability to simply remove some protocol ports known not to work well (mysql, postgresql, ...)
  - Needed to remove a "few" pods from the mesh (roughly AAF + Zookeeper + Kafka)
- SMI compatible out of the box
- Simple to "tap" into an interface ("tcpdump")

Cons
- "Mesh View" is not as simple and nice as Istio's
- More work to get ONAP deployed compared to Consul Connect
- Doesn't work well with headless services in 2.4 (_should_ be fixed in 2.5)

Conclusion and moving forward

- Linkerd seems to be the best choice as "default" service mesh implementation for ONAP:
  - SMI compatible
  - Managed to make it work...
  - All needed features
- On the other hand, Istio is the "de facto standard", so it's hard to avoid it...
- Still to do:
  - SMI allows swagger onboarding. It would be nice to see what that means exactly
  - Create better policies based on real use inside ONAP (for example, forbid access to a DB if not a backend)
- Next step proposal (PoC in Frankfurt, release in Guilin):
  - Add a global.service.mesh toggle which will enable service meshing
  - If set, will generate information for Linkerd (disable jobs, disable mesh on some services, ...) (P1)
  - If global.service.mesh.implementation is set to istio, will generate information for Istio (disable jobs, disable mesh on some services, ...) (P2)
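
The next step proposal can be sketched as a small decision function: given the chart values and a workload, return the pod annotation that opts it in or out of sidecar injection. This is only an illustration, not the actual ONAP chart logic. The `enabled` key, the default implementation, the exclusion list and the function name are assumptions. The annotation keys `linkerd.io/inject` and `sidecar.istio.io/inject` are the injection annotations of Linkerd and Istio.

```python
# Assumed exclusion list, taken from the Linkerd slide (roughly AAF + Zookeeper + Kafka).
EXCLUDED_COMPONENTS = {"aaf", "zookeeper", "kafka"}

# implementation -> (annotation key, value to inject, value to skip injection)
INJECT_ANNOTATION = {
    "linkerd": ("linkerd.io/inject", "enabled", "disabled"),
    "istio": ("sidecar.istio.io/inject", "true", "false"),
}


def mesh_annotations(values, kind, component):
    """Return the pod annotations controlling sidecar injection for one workload."""
    mesh = values.get("global", {}).get("service", {}).get("mesh", {})
    if not mesh.get("enabled", False):  # hypothetical 'enabled' key for the toggle
        return {}
    implementation = mesh.get("implementation", "linkerd")  # assumed default
    key, inject, skip = INJECT_ANNOTATION[implementation]
    # Jobs never finish with an always-up sidecar, so they stay out of the mesh.
    meshed = kind != "Job" and component not in EXCLUDED_COMPONENTS
    return {key: inject if meshed else skip}


if __name__ == "__main__":
    values = {"global": {"service": {"mesh": {"enabled": True}}}}
    print(mesh_annotations(values, "Deployment", "sdc"))
    print(mesh_annotations(values, "Job", "sdc"))
```

Keeping the per-implementation differences in one table is one way to make the P2 step (Istio) a data change rather than a second code path.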

Thanks
