
NIMBLE PAGE MANAGEMENT FOR TIERED MEMORY SYSTEMS
Zi Yan*, Daniel Lustig*, David Nellans*, Abhishek Bhattacharjee✥
*NVIDIA Research, ✥Yale University

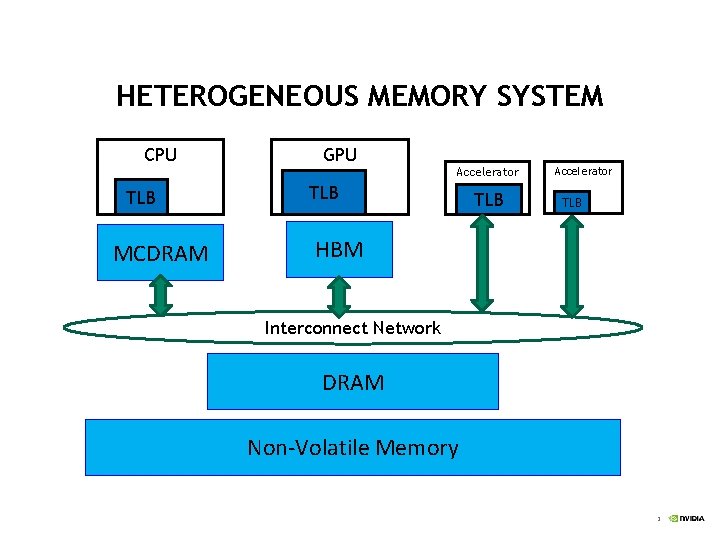

HETEROGENEOUS MEMORY SYSTEM
[Figure: a CPU, a GPU, and accelerators, each with a TLB, plus MCDRAM and HBM, connected through an interconnect network to DRAM and non-volatile memory.]

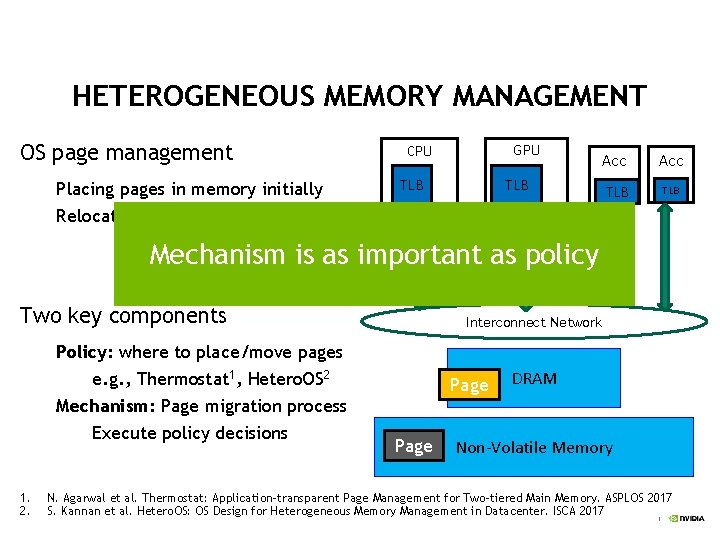

HETEROGENEOUS MEMORY MANAGEMENT
OS page management:
- Placing pages in memory initially
- Relocating pages to other memory
Two key components:
- Policy: where to place/move pages, e.g., Thermostat [1], HeteroOS [2]
- Mechanism: the page migration process, which executes policy decisions
Mechanism is as important as policy.
[Figure: pages relocated among CPU, GPU, and accelerator memories (MCDRAM, HBM), DRAM, and non-volatile memory over an interconnect network.]
1. N. Agarwal et al. Thermostat: Application-transparent Page Management for Two-tiered Main Memory. ASPLOS 2017.
2. S. Kannan et al. HeteroOS: OS Design for Heterogeneous Memory Management in Datacenter. ISCA 2017.

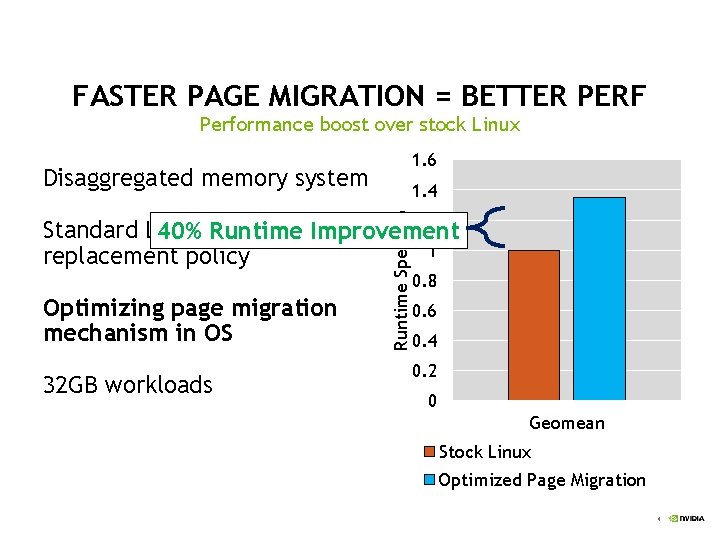

FASTER PAGE MIGRATION = BETTER PERF
Performance boost over stock Linux from optimizing the page migration mechanism in the OS:
- Disaggregated memory system: a CPU with 16 GB of fast memory and 40 GB of slow memory
- 32 GB workloads
- Standard Linux page replacement policy
[Figure: geomean runtime speedup of Stock Linux vs. Optimized Page Migration, showing a 40% runtime improvement.]

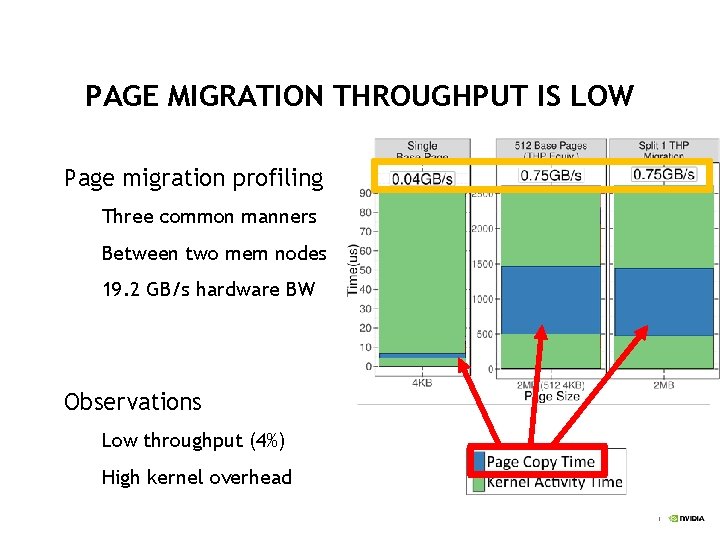

PAGE MIGRATION THROUGHPUT IS LOW
Page migration profiling:
- Three common ways of migrating 4 KB and 2 MB of data
- Between two memory nodes with 19.2 GB/s of hardware bandwidth
Observations:
- Low throughput (4% of the hardware bandwidth)
- High kernel overhead

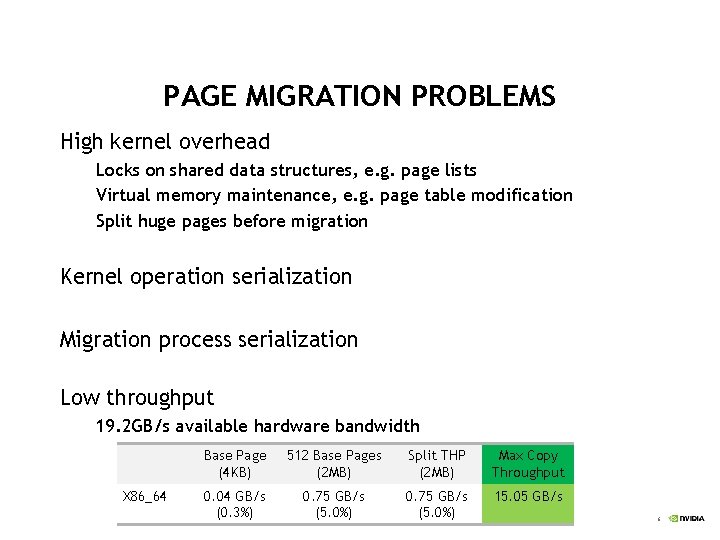

PAGE MIGRATION PROBLEMS
High kernel overhead:
- Locks on shared data structures, e.g., page lists
- Virtual memory maintenance, e.g., page table modification
- Splitting huge pages before migration
- Kernel operation serialization
- Migration process serialization
Low throughput, with 19.2 GB/s of available hardware bandwidth (x86_64):
  Migration                Throughput (% of max copy throughput)
  Base page (4 KB)         0.04 GB/s (0.3%)
  512 base pages (2 MB)    0.75 GB/s (5.0%)
  Split THP (2 MB)         …
  Max copy throughput      15.05 GB/s
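As a quick arithmetic check, the quoted percentages match the measured throughputs divided by the 15.05 GB/s max copy throughput, and that ceiling is itself only part of the 19.2 GB/s hardware bandwidth:

```latex
\[
\frac{0.04\ \text{GB/s}}{15.05\ \text{GB/s}} \approx 0.27\% \approx 0.3\%,
\qquad
\frac{0.75\ \text{GB/s}}{15.05\ \text{GB/s}} \approx 4.98\% \approx 5.0\%,
\qquad
\frac{15.05\ \text{GB/s}}{19.2\ \text{GB/s}} \approx 78.4\%
\]
```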

THE GOALS OF IMPROVING PAGE MIGRATION
- High performance: achieve throughput as high as possible and significantly reduce page migration overheads
- Ease of implementation: implement in Linux, reusing most of the existing code
- Generality: do not rely on special hardware; apply to other uses such as memory offlining and memory hot add/remove

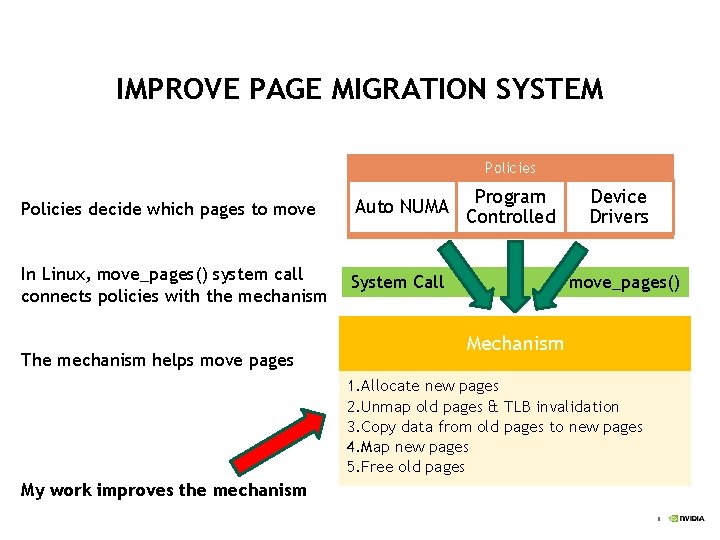

IMPROVE PAGE MIGRATION SYSTEM
- Policies decide which pages to move, e.g., AutoNUMA, program-controlled system calls, and device drivers
- In Linux, the move_pages() system call connects policies with the mechanism
- The mechanism moves the pages:
  1. Allocate new pages
  2. Unmap old pages and invalidate TLBs
  3. Copy data from old pages to new pages
  4. Map new pages
  5. Free old pages
- My work improves the mechanism
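For readers who have not used the system call, here is a minimal user-space sketch of invoking move_pages(). It assumes a Linux kernel with NUMA support and calls it through syscall(SYS_move_pages, ...) so that libnuma is not required; the helper name move_one_page is hypothetical. Passing a NULL nodes array only queries where a page lives, while passing a target node asks the kernel to run the allocate, unmap, copy, map, and free steps on that page.

```c
#define _GNU_SOURCE
#include <errno.h>
#include <stdlib.h>
#include <sys/syscall.h>
#include <unistd.h>

/* Hypothetical helper: ask the kernel to migrate the page containing addr
 * to target_node, or only report the page's current node if target_node < 0.
 * Returns the node the page is on (>= 0) or a negative errno value. */
static int move_one_page(void *addr, int target_node)
{
    void *pages[1] = { addr };
    int nodes[1] = { target_node };
    int status[1] = { -1 };

    /* pid 0 selects the calling process. A NULL nodes array turns the call
     * into a query. flags 0 omits MPOL_MF_MOVE_ALL, so pages shared with
     * other processes are left in place. */
    long rc = syscall(SYS_move_pages, 0L, 1UL, pages,
                      target_node < 0 ? (int *)NULL : nodes, status, 0L);
    if (rc < 0)
        return -errno;  /* e.g. ENOSYS without NUMA support, EPERM under a seccomp filter */
    return status[0];   /* node id, or a negative errno for this page */
}
```

On a two-node machine, move_one_page(buf, 1) would request that the page holding buf be migrated to node 1; the page must already be faulted in, or the kernel reports it as not present.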

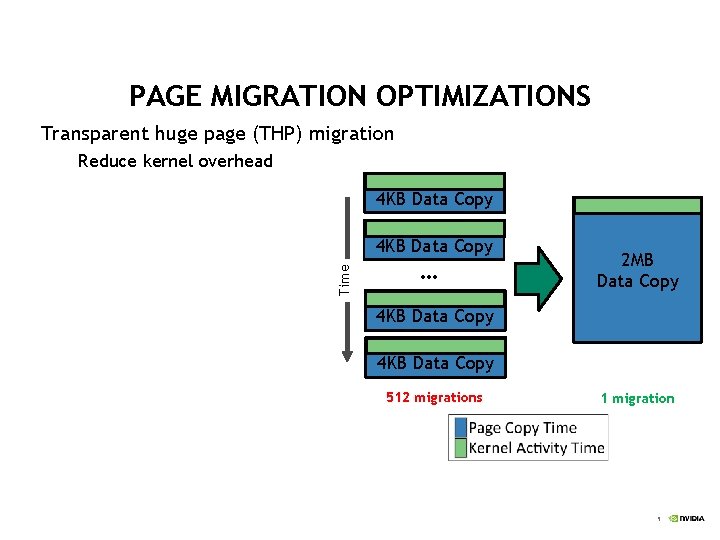

PAGE MIGRATION OPTIMIZATIONS
- Transparent huge page (THP) migration: reduce kernel overhead
[Figure: timeline comparing 512 migrations of 4 KB pages, each with its own data copy, against 1 migration of a 2 MB page with a single data copy.]
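The benefit of THP migration can be illustrated with a toy cost model in which every migration pays a fixed kernel overhead (locking, unmapping, page table updates) plus a copy time proportional to its size. The overhead and bandwidth constants below are hypothetical placeholders chosen for illustration, not measurements from this work.

```c
/* Toy cost model: hypothetical parameters, not measured values. */
#define KERNEL_OVERHEAD_US 20.0  /* assumed fixed kernel cost per migration, microseconds */
#define COPY_BW_GBPS       15.0  /* assumed data copy bandwidth, GB/s */

/* Estimated time in microseconds to migrate `count` pages of `page_bytes`
 * each, paying the fixed overhead once per migration. */
static double migration_time_us(unsigned long count, unsigned long page_bytes)
{
    double copy_us = (double)page_bytes / (COPY_BW_GBPS * 1e9) * 1e6;
    return (double)count * (KERNEL_OVERHEAD_US + copy_us);
}
```

With these placeholder values, migration_time_us(512, 4096) is about 10.4 ms, almost all of it fixed overhead, while migration_time_us(1, 2097152) is about 0.16 ms, even though both move the same 2 MB of data.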

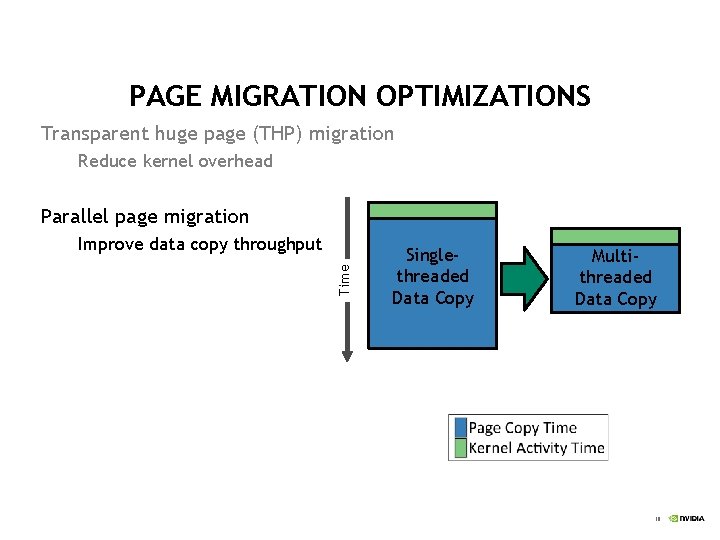

PAGE MIGRATION OPTIMIZATIONS
- Transparent huge page (THP) migration: reduce kernel overhead
- Parallel page migration: improve data copy throughput
[Figure: timeline comparing a single-threaded data copy with a multithreaded data copy.]
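The parallel copy idea can be sketched in user space by splitting one large copy into equal slices and giving each slice to its own thread. This is only an analogy built on POSIX threads; an in-kernel version would use kernel worker threads, and the names parallel_copy and MAX_COPY_THREADS are hypothetical.

```c
#include <pthread.h>
#include <stdlib.h>
#include <string.h>

#define MAX_COPY_THREADS 16  /* hypothetical upper bound on worker threads */

/* One slice of the copy, handed to a worker thread. */
struct copy_chunk {
    char *dst;
    const char *src;
    size_t len;
};

static void *copy_worker(void *arg)
{
    struct copy_chunk *c = arg;
    memcpy(c->dst, c->src, c->len);
    return NULL;
}

/* Hypothetical helper: copy len bytes using up to nthreads threads.
 * The last thread also copies any remainder that does not divide evenly. */
static void parallel_copy(char *dst, const char *src, size_t len, int nthreads)
{
    pthread_t tids[MAX_COPY_THREADS];
    struct copy_chunk chunks[MAX_COPY_THREADS];
    int launched[MAX_COPY_THREADS] = {0};

    if (nthreads < 1)
        nthreads = 1;
    if (nthreads > MAX_COPY_THREADS)
        nthreads = MAX_COPY_THREADS;
    size_t per = len / (size_t)nthreads;

    for (int i = 0; i < nthreads; i++) {
        size_t off = (size_t)i * per;
        chunks[i].dst = dst + off;
        chunks[i].src = src + off;
        chunks[i].len = (i == nthreads - 1) ? len - off : per;
        if (pthread_create(&tids[i], NULL, copy_worker, &chunks[i]) == 0)
            launched[i] = 1;
        else
            copy_worker(&chunks[i]);  /* fall back to copying in this thread */
    }
    for (int i = 0; i < nthreads; i++)
        if (launched[i])
            pthread_join(tids[i], NULL);
}
```

Copying a 2 MB page this way with 4 threads mirrors the "4 threads" configurations measured later in the deck, although actual gains depend on whether a single thread can saturate the memory bandwidth.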

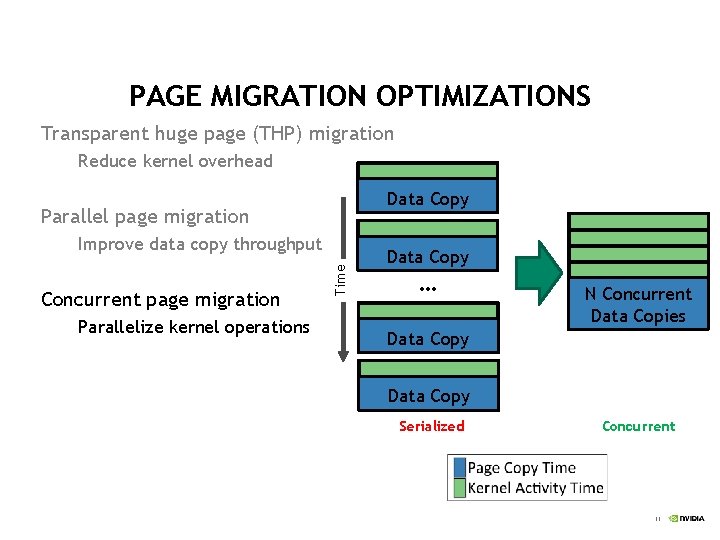

PAGE MIGRATION OPTIMIZATIONS
- Transparent huge page (THP) migration: reduce kernel overhead
- Parallel page migration: improve data copy throughput
- Concurrent page migration: parallelize kernel operations
[Figure: timeline comparing serialized data copies, one after another, with N concurrent data copies.]
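One way to picture concurrent migration is to compare a loop that migrates pages one at a time, flushing the TLB for each page, against a batched version that unmaps every page first, issues a single flush, copies all pages, and then remaps them. The sketch below simulates this in user space, with a counter standing in for TLB shootdowns; struct sim_page, flush_tlb, and both migrate functions are hypothetical, and a real kernel implementation involves considerably more than this.

```c
#include <string.h>

#define PAGE_SIZE_SIM 4096

/* Simulated page: a data buffer plus a "mapped" flag standing in for a PTE. */
struct sim_page {
    char data[PAGE_SIZE_SIM];
    int mapped;
};

static int tlb_flushes;  /* counts simulated TLB shootdowns */

static void flush_tlb(void) { tlb_flushes++; }

/* Serialized: unmap, flush, copy, and remap each page in turn. */
static void migrate_serial(struct sim_page *dst, struct sim_page *src, int n)
{
    for (int i = 0; i < n; i++) {
        src[i].mapped = 0;
        flush_tlb();
        memcpy(dst[i].data, src[i].data, PAGE_SIZE_SIM);
        dst[i].mapped = 1;
    }
}

/* Batched: unmap all pages, flush once, then copy and remap all of them.
 * The copies in the middle phase are independent of each other, so they
 * could run concurrently. */
static void migrate_batched(struct sim_page *dst, struct sim_page *src, int n)
{
    for (int i = 0; i < n; i++)
        src[i].mapped = 0;
    flush_tlb();
    for (int i = 0; i < n; i++)
        memcpy(dst[i].data, src[i].data, PAGE_SIZE_SIM);
    for (int i = 0; i < n; i++)
        dst[i].mapped = 1;
}
```

For a batch of n pages, the serialized version performs n simulated flushes while the batched version performs one, and both leave identical data in the destination pages.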

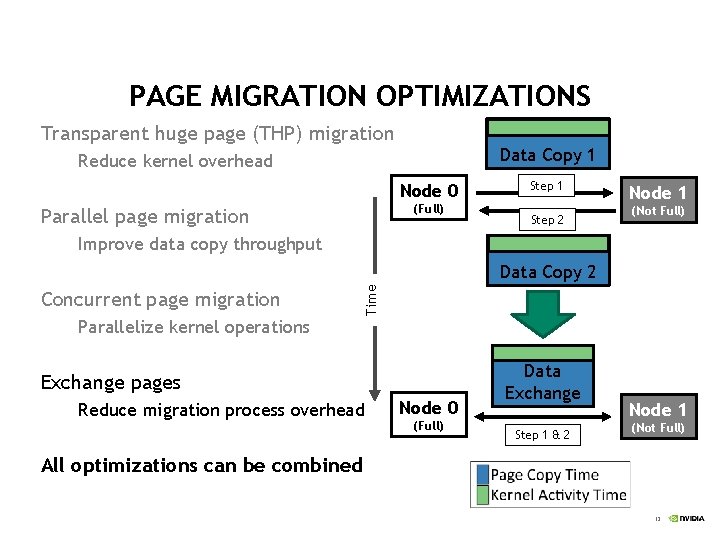

PAGE MIGRATION OPTIMIZATIONS
- Transparent huge page (THP) migration: reduce kernel overhead
- Parallel page migration: improve data copy throughput
- Concurrent page migration: parallelize kernel operations
- Exchange pages: reduce migration process overhead
[Figure: with Node 0 full and Node 1 not full, moving pages in both directions with regular migration takes Step 1 and Step 2, each with its own data copy; exchanging pages completes Steps 1 and 2 with a single data exchange.]
All optimizations can be combined.
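The data side of exchanging pages can be sketched as an in-place swap of two page-sized buffers through a small bounce buffer, so neither node needs a free page to receive data. This illustrates the idea only: the function name exchange_page_data and the 256-byte bounce buffer are assumptions, and a kernel version would also have to swap the page table mappings of both pages.

```c
#include <string.h>

#define BOUNCE_BYTES 256  /* hypothetical bounce buffer size */

/* Swap the contents of two equally sized buffers chunk by chunk,
 * using only a small temporary buffer instead of a third full page. */
static void exchange_page_data(char *a, char *b, size_t len)
{
    char tmp[BOUNCE_BYTES];
    for (size_t off = 0; off < len; off += BOUNCE_BYTES) {
        size_t n = len - off < BOUNCE_BYTES ? len - off : BOUNCE_BYTES;
        memcpy(tmp, a + off, n);
        memcpy(a + off, b + off, n);
        memcpy(b + off, tmp, n);
    }
}
```

Because the swap needs no free destination page, it fits the case where the fast node is full and a hot page must trade places with a cold one.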

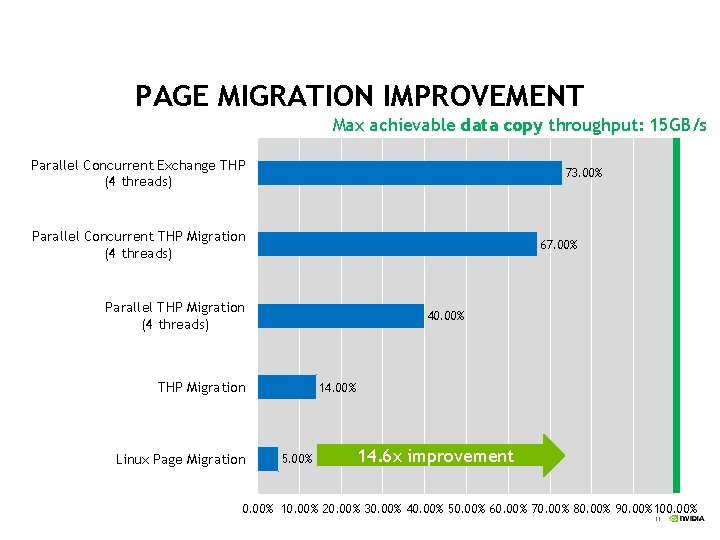

PAGE MIGRATION IMPROVEMENT
Throughput as a percentage of the max achievable data copy throughput (15 GB/s):
- Linux page migration: 5%
- THP migration: 14%
- Parallel THP migration (4 threads): 40%
- Parallel concurrent THP migration (4 threads): 67%
- Parallel concurrent exchange THP (4 threads): 73%
Overall: 14.6x improvement
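The headline ratio follows directly from the chart: the combined optimizations reach 73% of the 15 GB/s achievable copy throughput, compared with 5% for stock Linux page migration.

```latex
\[
\frac{73\%}{5\%} = 14.6\times,
\qquad
0.73 \times 15\ \text{GB/s} = 10.95\ \text{GB/s}
\quad\text{vs.}\quad
0.05 \times 15\ \text{GB/s} = 0.75\ \text{GB/s}
\]
```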

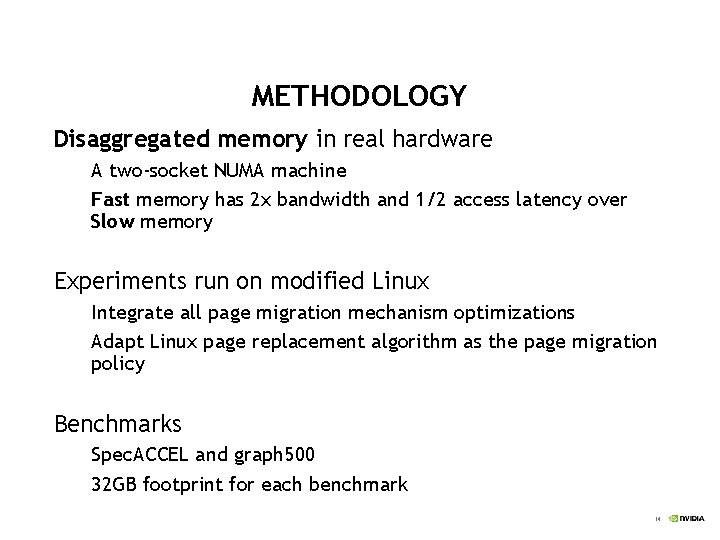

METHODOLOGY
- Disaggregated memory on real hardware: a two-socket NUMA machine in which fast memory has 2x the bandwidth and 1/2 the access latency of slow memory
- Experiments run on a modified Linux kernel that:
  - Integrates all page migration mechanism optimizations
  - Adapts the Linux page replacement algorithm as the page migration policy
- Benchmarks: SPEC ACCEL and Graph500, each with a 32 GB footprint

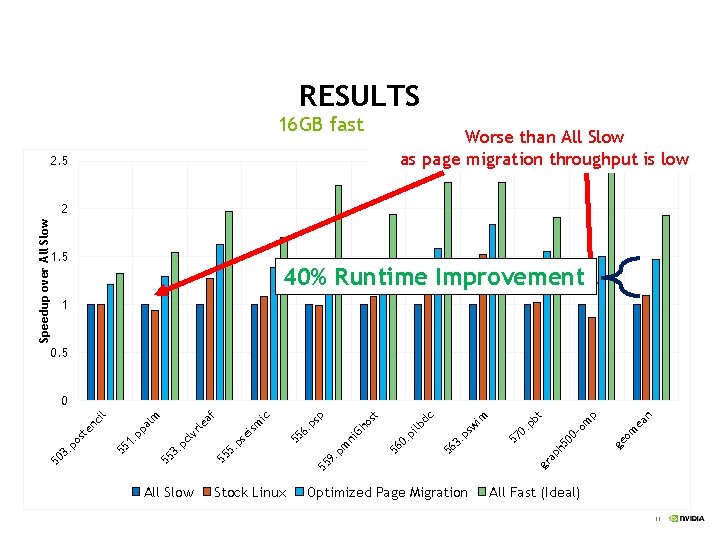

RESULTS
With 16 GB of fast memory, stock Linux can perform worse than All Slow because its page migration throughput is low.
[Figure: speedup over All Slow for All Slow, Stock Linux, Optimized Page Migration, and All Fast (ideal) across 503.postencil, 551.ppalm, 553.pclvrleaf, 555.pseismic, 556.psp, 559.pmniGhost, 560.pilbdc, 563.pswim, 570.pbt, graph500, and the geomean; optimized page migration gives a 40% runtime improvement.]

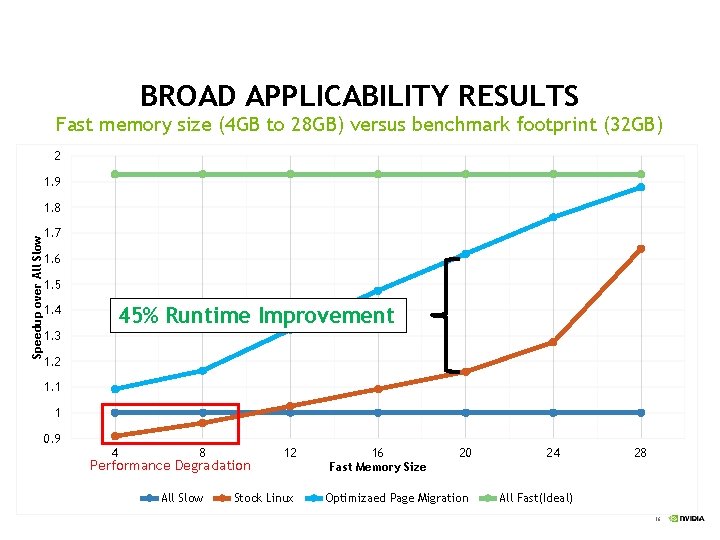

BROAD APPLICABILITY RESULTS
Fast memory size (4 GB to 28 GB) versus benchmark footprint (32 GB)
[Figure: speedup over All Slow vs. fast memory size (4 to 28 GB) for All Slow, Stock Linux, Optimized Page Migration, and All Fast (ideal); annotations mark performance degradation with stock Linux and a 45% runtime improvement with optimized page migration.]

SUMMARY
- The existing page migration mechanism is inefficient:
  - It utilizes only 4% of the hardware bandwidth
  - It can lead to performance degradation in heterogeneous memory management
- Our optimizations improve page migration throughput:
  - 14.6x page migration throughput improvement
  - Up to 45% end-to-end runtime speedup
- High-performance page migration is essential to heterogeneous memory management

QUESTIONS?
