Monte Carlo Methods and Statistical Physics Mathematical Biology

Monte Carlo Methods and Statistical Physics Mathematical Biology Lecture 4 James A. Glazier (Partially Based on Koonin and Meredith, Computational Physics, Chapter 8)

• Monte Carlo Methods Use Statistical Physics Techniques to Solve Problems that are Difficult or Inconvenient to Solve Deterministically. • Two Basic Applications: – Evaluation of Complex Multidimensional Integrals (e. g. in Statistical Mechanics) [1950 s] – Optimization of Problems where the Deterministic Problem is Algorithmically Hard (NP Complete—e. g. the Traveling Salesman Problem) [1970 s]. • Both Applications Important in Biology.

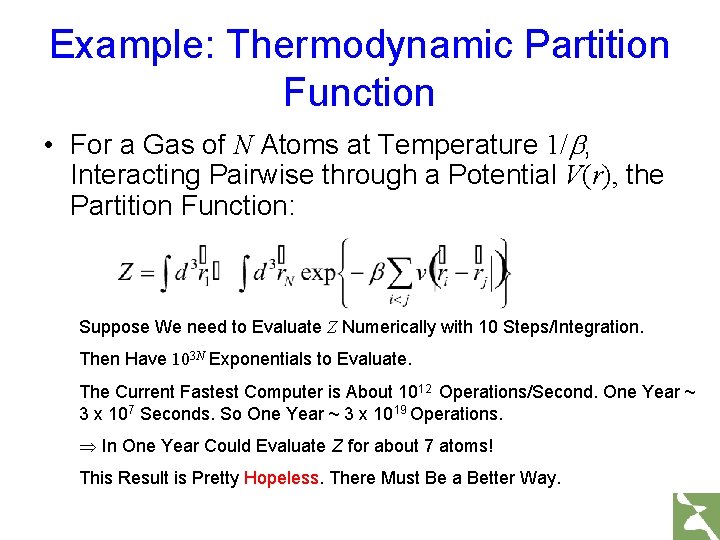

Example: Thermodynamic Partition Function • For a Gas of N Atoms at Temperature 1/b, Interacting Pairwise through a Potential V(r), the Partition Function: Suppose We need to Evaluate Z Numerically with 10 Steps/Integration. Then Have 103 N Exponentials to Evaluate. The Current Fastest Computer is About 1012 Operations/Second. One Year ~ 3 x 107 Seconds. So One Year ~ 3 x 1019 Operations. Þ In One Year Could Evaluate Z for about 7 atoms! This Result is Pretty Hopeless. There Must Be a Better Way.

![Normal Deterministic Integration • Consider the Integral: • Subdivide [0, 1] into N Evenly Normal Deterministic Integration • Consider the Integral: • Subdivide [0, 1] into N Evenly](http://slidetodoc.com/presentation_image_h2/53cd61607af919b0e77a3720549b5f2a/image-4.jpg)

Normal Deterministic Integration • Consider the Integral: • Subdivide [0, 1] into N Evenly Spaced Intervals of width Dx=1/N. Then:

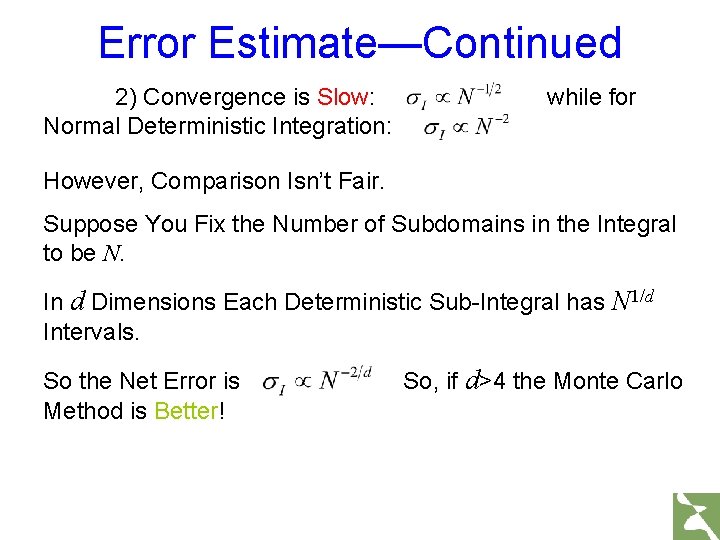

Error Estimate—Continued 2) Convergence is Slow: Normal Deterministic Integration: while for However, Comparison Isn’t Fair. Suppose You Fix the Number of Subdomains in the Integral to be N. In d Dimensions Each Deterministic Sub-Integral has N 1/d Intervals. So the Net Error is Method is Better! So, if d>4 the Monte Carlo

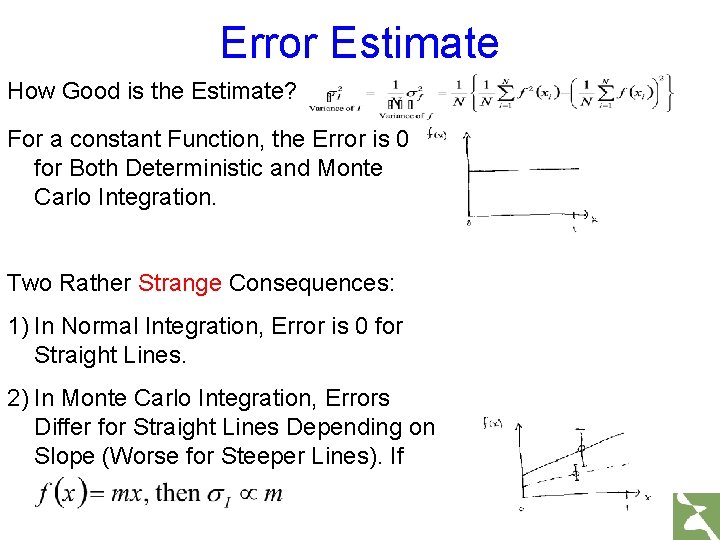

Error Estimate How Good is the Estimate? For a constant Function, the Error is 0 for Both Deterministic and Monte Carlo Integration. Two Rather Strange Consequences: 1) In Normal Integration, Error is 0 for Straight Lines. 2) In Monte Carlo Integration, Errors Differ for Straight Lines Depending on Slope (Worse for Steeper Lines). If

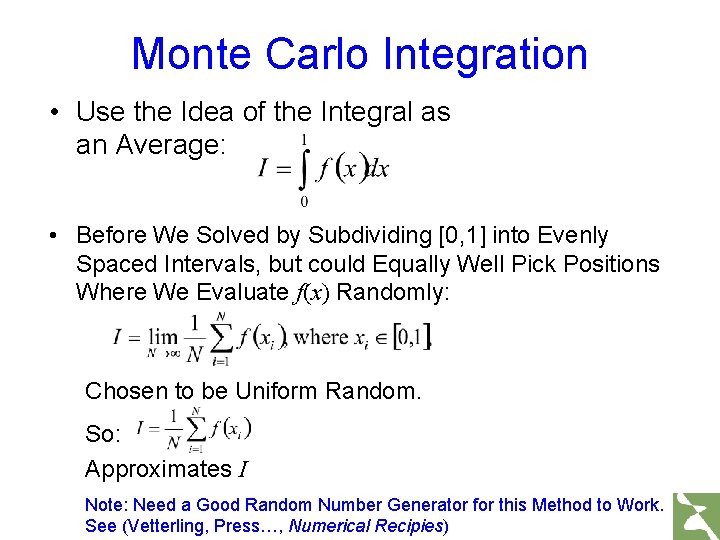

Monte Carlo Integration • Use the Idea of the Integral as an Average: • Before We Solved by Subdividing [0, 1] into Evenly Spaced Intervals, but could Equally Well Pick Positions Where We Evaluate f(x) Randomly: Chosen to be Uniform Random. So: Approximates I Note: Need a Good Random Number Generator for this Method to Work. See (Vetterling, Press…, Numerical Recipies)

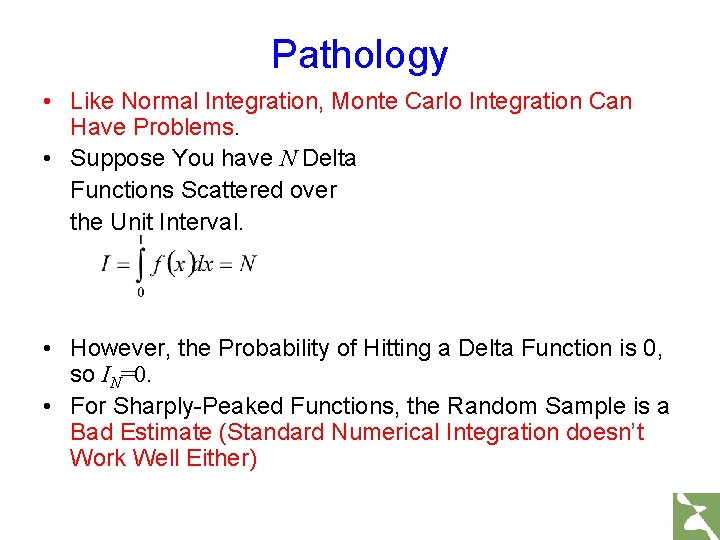

Pathology • Like Normal Integration, Monte Carlo Integration Can Have Problems. • Suppose You have N Delta Functions Scattered over the Unit Interval. • However, the Probability of Hitting a Delta Function is 0, so IN=0. • For Sharply-Peaked Functions, the Random Sample is a Bad Estimate (Standard Numerical Integration doesn’t Work Well Either)

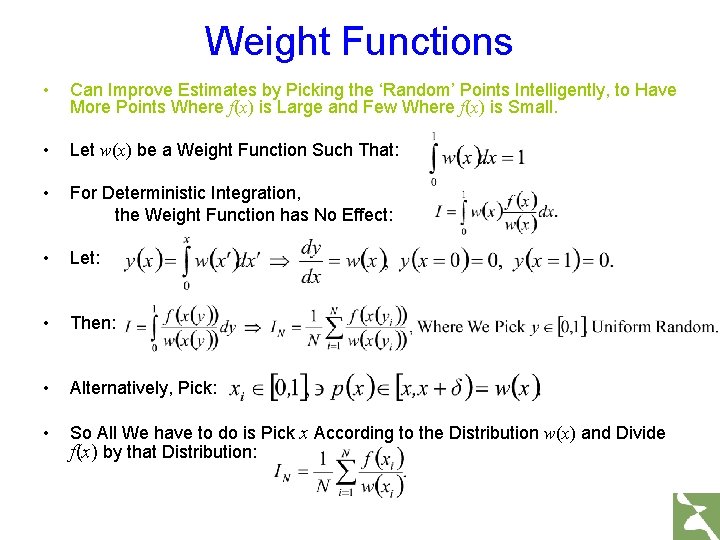

Weight Functions • Can Improve Estimates by Picking the ‘Random’ Points Intelligently, to Have More Points Where f(x) is Large and Few Where f(x) is Small. • Let w(x) be a Weight Function Such That: • For Deterministic Integration, the Weight Function has No Effect: • Let: • Then: • Alternatively, Pick: • So All We have to do is Pick x According to the Distribution w(x) and Divide f(x) by that Distribution:

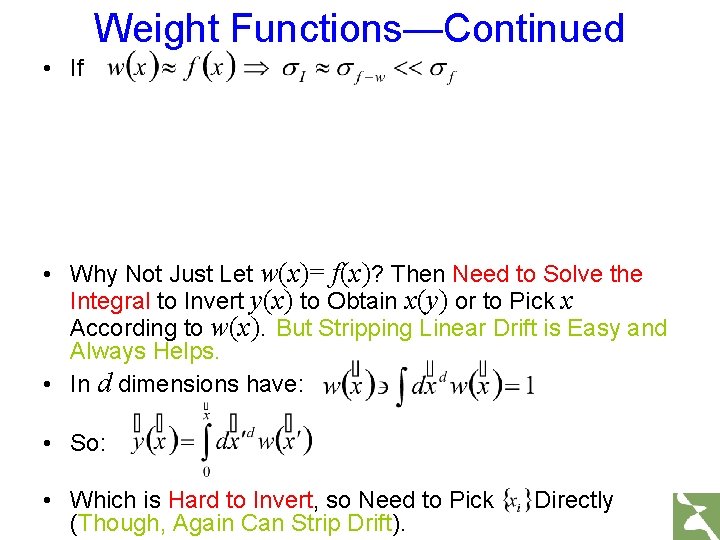

Weight Functions—Continued • If • Why Not Just Let w(x)= f(x)? Then Need to Solve the Integral to Invert y(x) to Obtain x(y) or to Pick x According to w(x). But Stripping Linear Drift is Easy and Always Helps. • In d dimensions have: • So: • Which is Hard to Invert, so Need to Pick (Though, Again Can Strip Drift). Directly

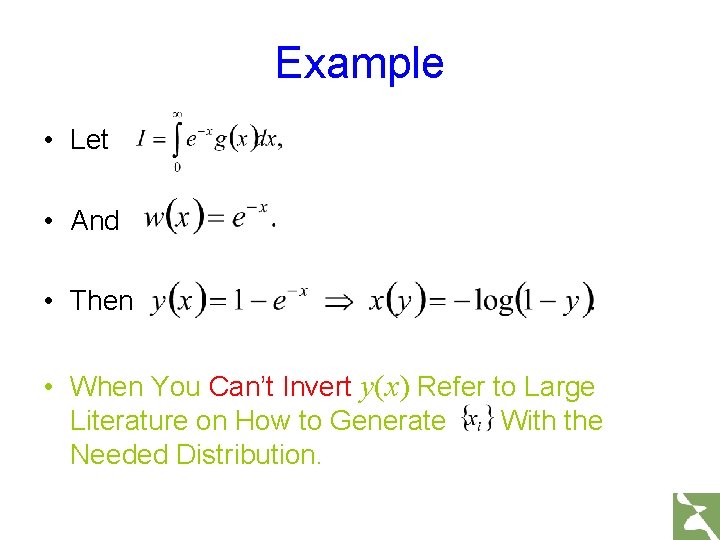

Example • Let • And • Then • When You Can’t Invert y(x) Refer to Large Literature on How to Generate With the Needed Distribution.

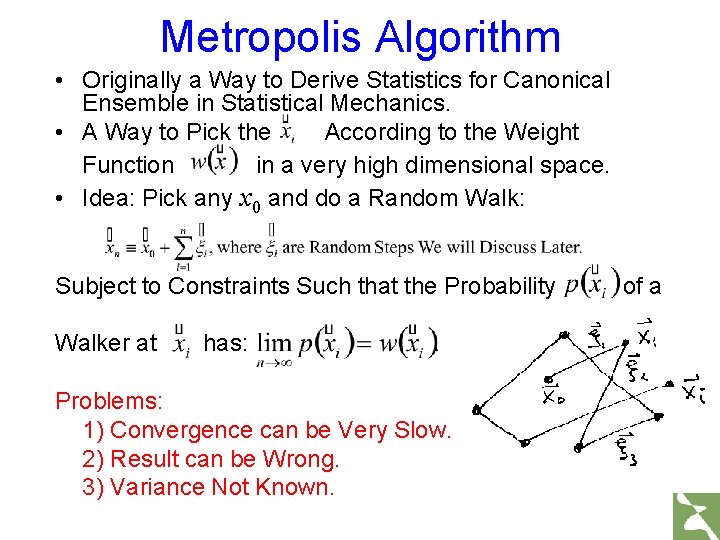

Metropolis Algorithm • Originally a Way to Derive Statistics for Canonical Ensemble in Statistical Mechanics. • A Way to Pick the According to the Weight Function in a very high dimensional space. • Idea: Pick any x 0 and do a Random Walk: Subject to Constraints Such that the Probability Walker at has: Problems: 1) Convergence can be Very Slow. 2) Result can be Wrong. 3) Variance Not Known. of a

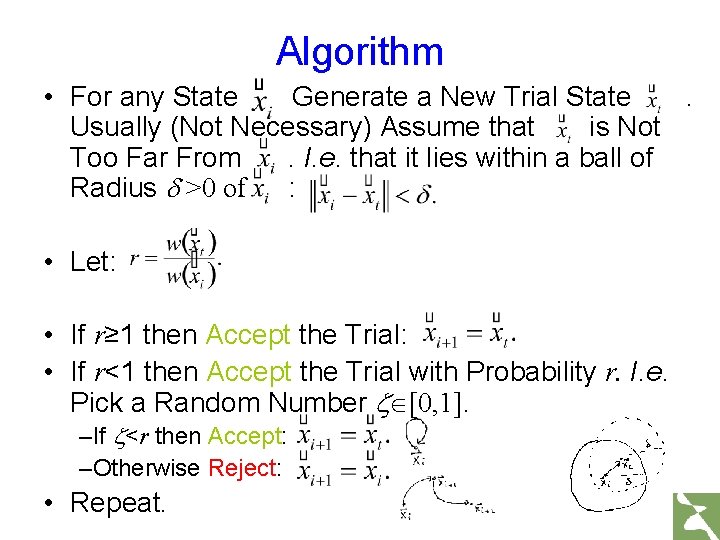

Algorithm • For any State Generate a New Trial State. Usually (Not Necessary) Assume that is Not Too Far From. I. e. that it lies within a ball of Radius d >0 of : • Let: • If r≥ 1 then Accept the Trial: • If r<1 then Accept the Trial with Probability r. I. e. Pick a Random Number [0, 1]. –If <r then Accept: –Otherwise Reject: • Repeat.

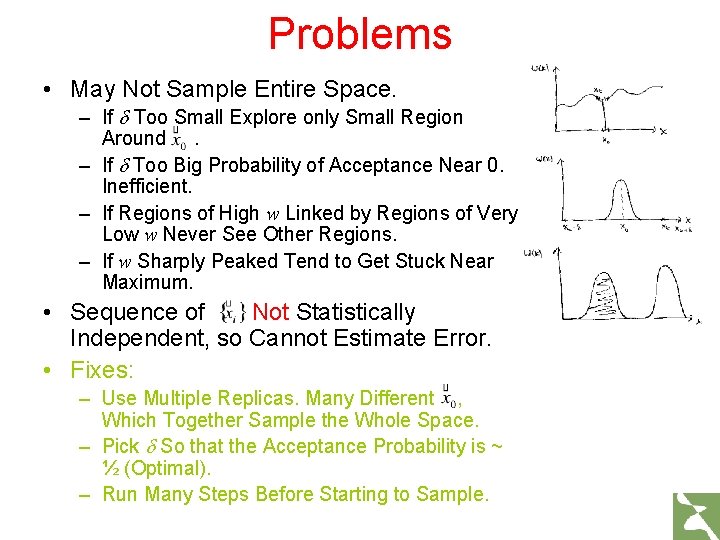

Problems • May Not Sample Entire Space. – If d Too Small Explore only Small Region Around. – If d Too Big Probability of Acceptance Near 0. Inefficient. – If Regions of High w Linked by Regions of Very Low w Never See Other Regions. – If w Sharply Peaked Tend to Get Stuck Near Maximum. • Sequence of Not Statistically Independent, so Cannot Estimate Error. • Fixes: – Use Multiple Replicas. Many Different , Which Together Sample the Whole Space. – Pick d So that the Acceptance Probability is ~ ½ (Optimal). – Run Many Steps Before Starting to Sample.

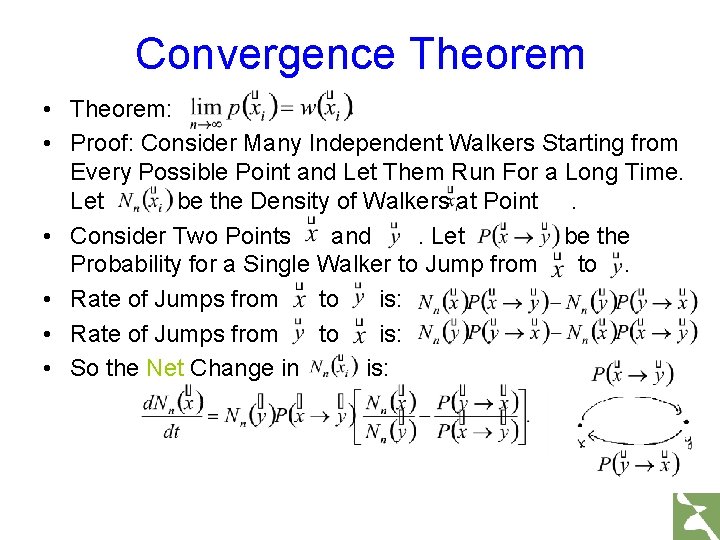

Convergence Theorem • Theorem: • Proof: Consider Many Independent Walkers Starting from Every Possible Point and Let Them Run For a Long Time. Let be the Density of Walkers at Point. • Consider Two Points and. Let be the Probability for a Single Walker to Jump from to. • Rate of Jumps from to is: • So the Net Change in is:

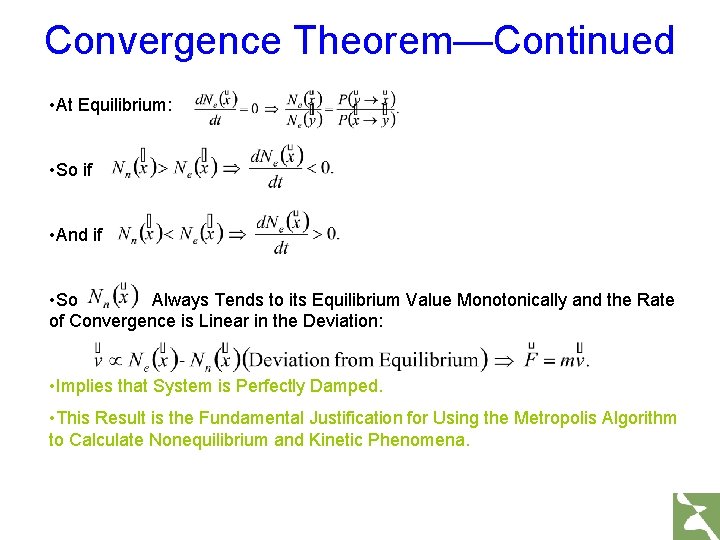

Convergence Theorem—Continued • At Equilibrium: • So if • And if • So Always Tends to its Equilibrium Value Monotonically and the Rate of Convergence is Linear in the Deviation: • Implies that System is Perfectly Damped. • This Result is the Fundamental Justification for Using the Metropolis Algorithm to Calculate Nonequilibrium and Kinetic Phenomena.

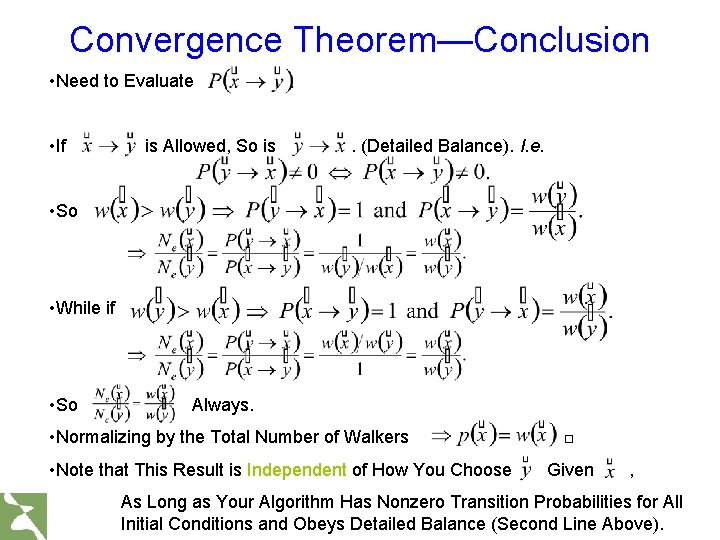

Convergence Theorem—Conclusion • Need to Evaluate • If is Allowed, So is . (Detailed Balance). I. e. • So • While if • So Always. • Normalizing by the Total Number of Walkers • Note that This Result is Independent of How You Choose □ Given , As Long as Your Algorithm Has Nonzero Transition Probabilities for All Initial Conditions and Obeys Detailed Balance (Second Line Above).

- Slides: 17