Machine Learning ICS 178 Instructor Max Welling visualization

Machine Learning ICS 178 Instructor: Max Welling visualization & k nearest neighbors

Types of Learning

- Supervised learning
  - Labels are provided; there is a strong learning signal.
  - e.g. classification, regression.
- Semi-supervised learning
  - Only part of the data have labels.
  - e.g. a child growing up.
- Reinforcement learning
  - The learning signal is a (scalar) reward and may come with a delay.
  - e.g. trying to learn to play chess, a mouse in a maze.
- Unsupervised learning
  - There is no direct learning signal. We are simply trying to find structure in data.
  - e.g. clustering, dimensionality reduction.

Ingredients

- Data: what kind of data do we have?
- Prior assumptions: what do we know a priori about the problem?
- Representation: how do we represent the data?
- Model / hypothesis space: what hypotheses are we willing to entertain to explain the data?
- Feedback / learning signal: what kind of learning signal do we have (delayed, labels)?
- Learning algorithm: how do we update the model (or set of hypotheses) from feedback?
- Evaluation: how well did we do, and should we change the model?

Data Preprocessing

- Before you start modeling the data, you want to have a look at it to get a “feel”.
- What are the “modalities” of the data? e.g.
  - Netflix: users and movies
  - Text: word-tokens and documents
  - Video: pixels, frames, color-index (R, G, B)
- What is the domain?
  - Netflix: rating values [1, 2, 3, 4, 5, ?]
  - Text: number of times a word appears: [0, 1, 2, 3, ...]
  - Video: brightness value: [0, ..., 255] or real-valued.
- Are there missing data entries?
- Are there outliers in the data? (Perhaps a typo?)
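The last two questions can be checked mechanically once the domain is known. The sketch below is only an illustration: it assumes the missing rating "?" is stored as `None`, and the function name `audit_ratings` and the sample list are made up for this example.

```python
def audit_ratings(ratings, valid=(1, 2, 3, 4, 5)):
    """Return the positions of missing entries and of values outside the domain."""
    # Assumption: a missing rating ("?") is represented as None.
    missing = [i for i, r in enumerate(ratings) if r is None]
    out_of_domain = [
        i for i, r in enumerate(ratings) if r is not None and r not in valid
    ]
    return missing, out_of_domain


# Made-up sample: two missing entries and one likely typo (55 instead of 5).
sample = [5, 3, None, 4, 55, 1, None, 2]
missing, suspicious = audit_ratings(sample)
print("missing at positions:", missing)
print("outside the rating domain at positions:", suspicious)
```

Values flagged this way are only candidates for inspection; whether to drop, correct, or keep them is a modeling decision.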

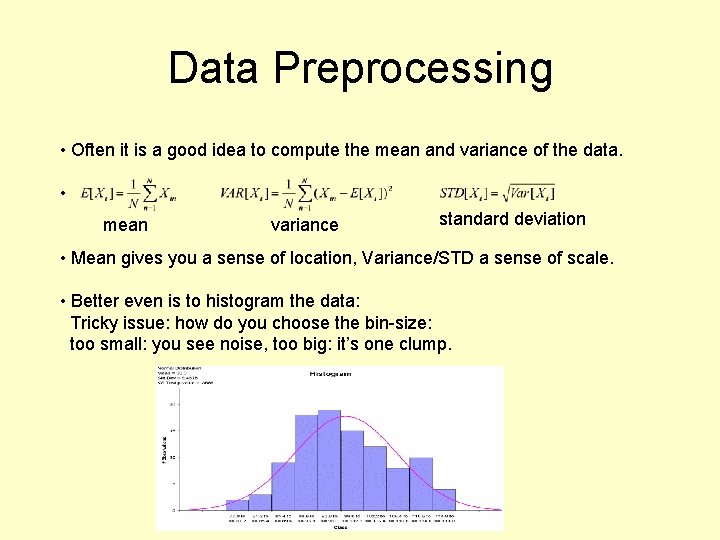

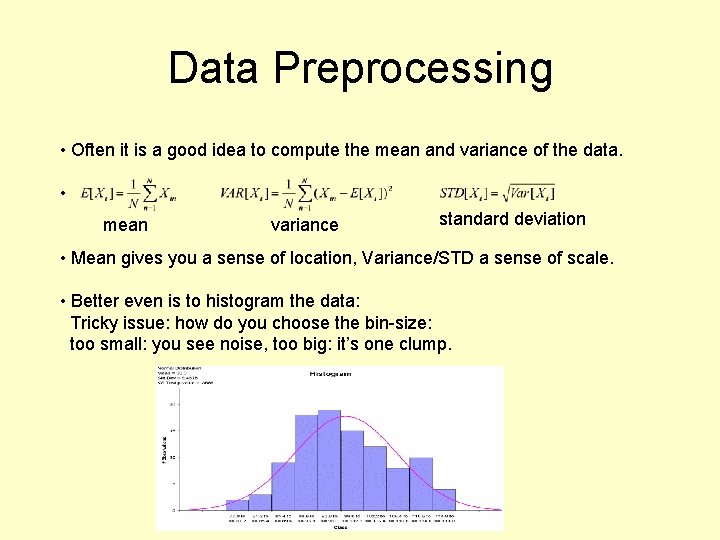

Data Preprocessing

- Often it is a good idea to compute the mean and variance of the data.
  - Mean, variance, standard deviation.
- The mean gives you a sense of location, the variance/STD a sense of scale.
- Even better is to histogram the data.
- Tricky issue: how do you choose the bin size? Too small and you see noise; too big and it is one clump.
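As a rough sketch of these summaries, the code below assumes the usual definitions: mean $\mu = \frac{1}{N}\sum_n x_n$, variance $\sigma^2 = \frac{1}{N}\sum_n (x_n - \mu)^2$, and standard deviation $\sigma$. The $1/N$ normalization (rather than $1/(N-1)$) is an assumption, and the helper names and the ratings list are invented for illustration. The same data is histogrammed with a small and a large bin width to show the trade-off.

```python
import math
from collections import Counter


def summarize(values):
    """Return (mean, variance, standard deviation) using the 1/N convention."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    return mean, variance, math.sqrt(variance)


def histogram(values, bin_width, origin=0.0):
    """Count values per bin; bin k covers [origin + k*w, origin + (k+1)*w)."""
    counts = Counter(math.floor((v - origin) / bin_width) for v in values)
    return dict(sorted(counts.items()))


ratings = [1, 2, 2, 3, 3, 3, 4, 4, 5, 5]  # made-up data
mean, variance, std = summarize(ratings)

fine = histogram(ratings, bin_width=0.5)    # many bins, several stay empty
coarse = histogram(ratings, bin_width=5.0)  # almost everything in one clump
print(mean, variance, std)
print(fine)
print(coarse)
```

With the coarse bins nearly all values land in a single bin, while the fine bins leave gaps between occupied bins; neither shows the shape of the distribution as clearly as a bin width of 1 would here.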

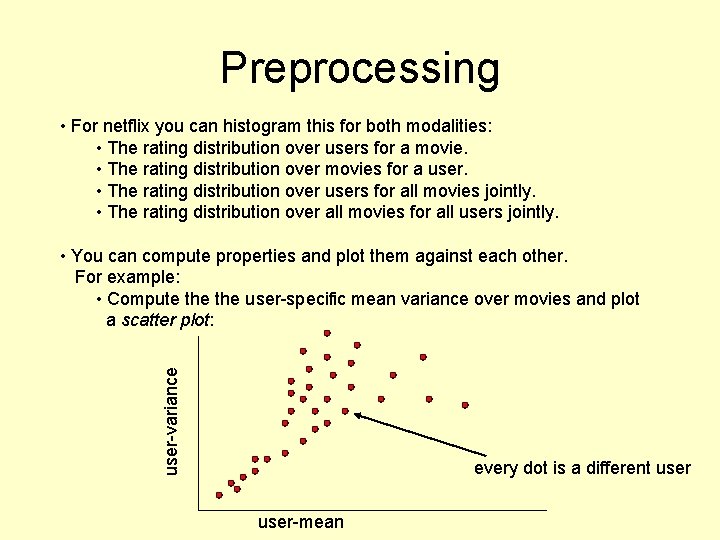

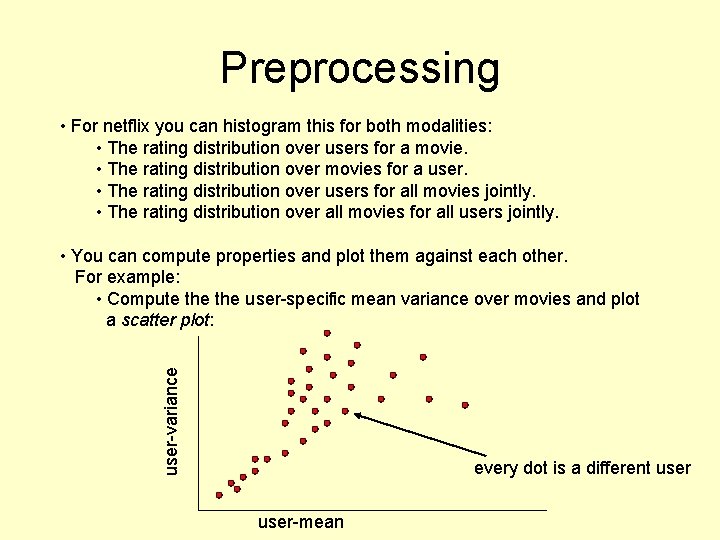

Preprocessing

- For Netflix you can histogram the ratings for both modalities:
  - The rating distribution over users for a movie.
  - The rating distribution over movies for a user.
  - The rating distribution over users for all movies jointly.
  - The rating distribution over all movies for all users jointly.
- You can compute properties and plot them against each other. For example:
  - Compute the user-specific mean and variance over movies and draw a scatter plot of user-variance against user-mean: every dot is a different user.
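The per-user statistics could be computed along these lines. This is a minimal sketch that assumes the ratings arrive as (user, movie, rating) triples; that layout, the function name `per_user_stats`, and the toy data are assumptions, not the actual Netflix format. Each resulting (mean, variance) pair is one dot in the scatter plot.

```python
from collections import defaultdict


def per_user_stats(triples):
    """Map each user to (mean, variance) of that user's ratings (1/N convention)."""
    by_user = defaultdict(list)
    for user, _movie, rating in triples:
        by_user[user].append(rating)

    stats = {}
    for user, ratings in by_user.items():
        mean = sum(ratings) / len(ratings)
        variance = sum((r - mean) ** 2 for r in ratings) / len(ratings)
        stats[user] = (mean, variance)
    return stats


# Toy data, invented for illustration.
triples = [
    ("u1", "m1", 5), ("u1", "m2", 3),
    ("u2", "m1", 4), ("u2", "m2", 4), ("u2", "m3", 4),
    ("u3", "m3", 1),
]
points = per_user_stats(triples)
for user, (mean, variance) in points.items():
    print(user, mean, variance)
```

Swapping the roles of user and movie in the grouping step gives the corresponding per-movie statistics.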

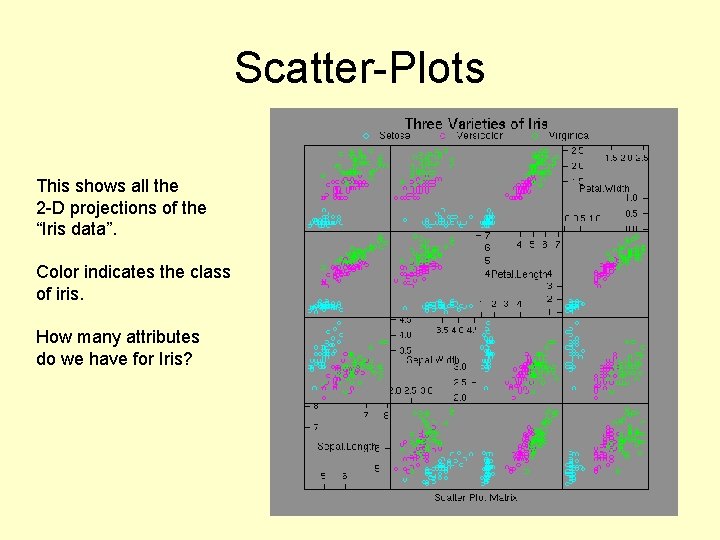

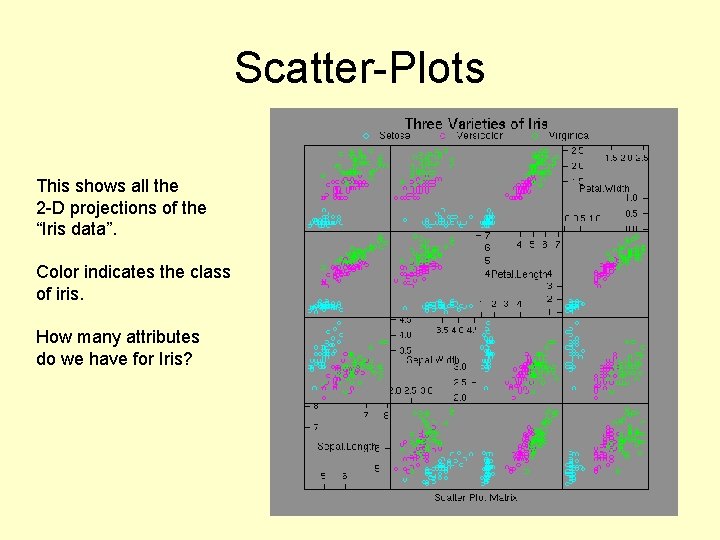

Scatter-Plots

This shows all the 2-D projections of the “Iris data”. Color indicates the class of iris. How many attributes do we have for Iris?
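One way to see how many distinct 2-D projections a data set has is to enumerate the attribute pairs. The sketch below uses the four measurements of the classic Iris data set; with d attributes there are d(d-1)/2 unordered pairs, and a full scatter-plot matrix shows each of them twice (once with the axes swapped).

```python
from itertools import combinations

# The four measurements recorded per flower in the classic Iris data set.
attributes = ["sepal length", "sepal width", "petal length", "petal width"]

# Each unordered pair of attributes gives one distinct 2-D projection.
projections = list(combinations(attributes, 2))
for x_name, y_name in projections:
    print(f"{x_name} vs {y_name}")
print("number of distinct 2-D projections:", len(projections))
```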

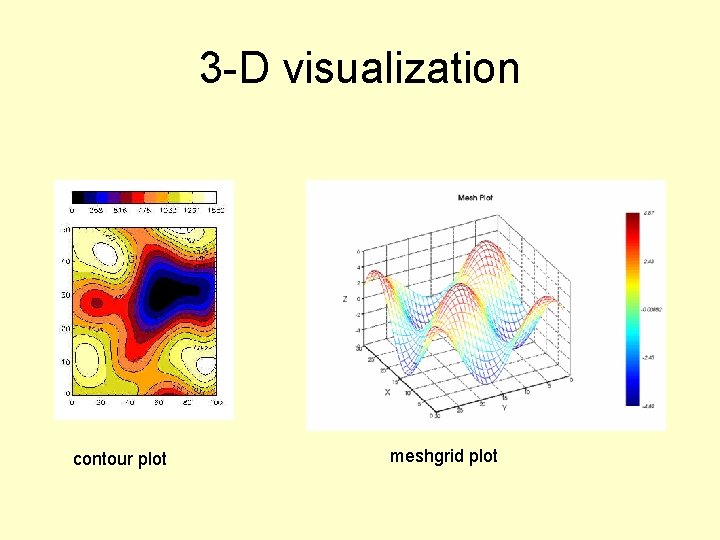

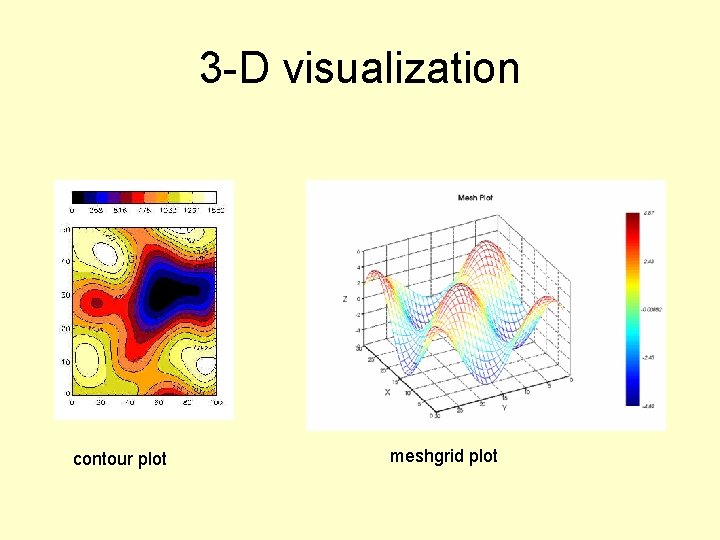

3-D visualization: contour plot, meshgrid plot

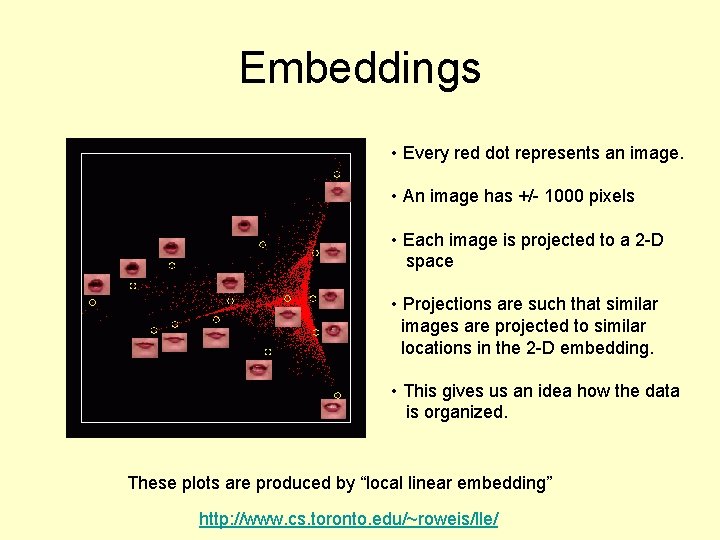

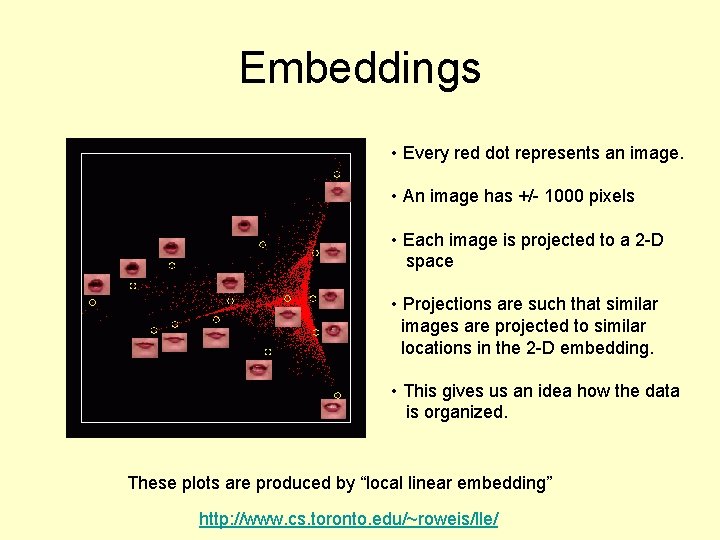

Embeddings

- Every red dot represents an image.
- An image has roughly 1000 pixels.
- Each image is projected to a 2-D space.
- The projections are such that similar images are projected to similar locations in the 2-D embedding.
- This gives us an idea of how the data is organized.

These plots are produced by “locally linear embedding” (LLE): http://www.cs.toronto.edu/~roweis/lle/

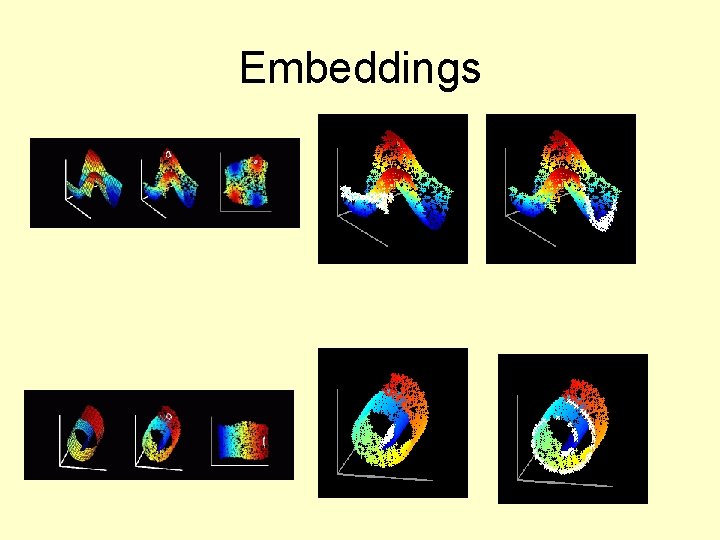

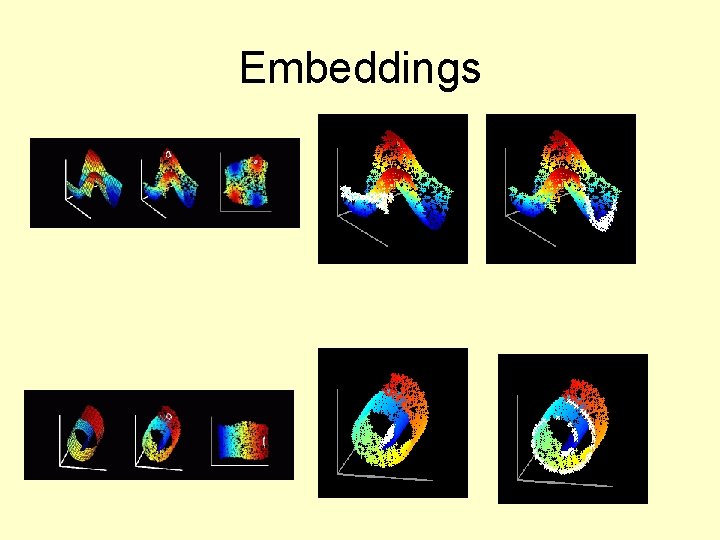

Embeddings

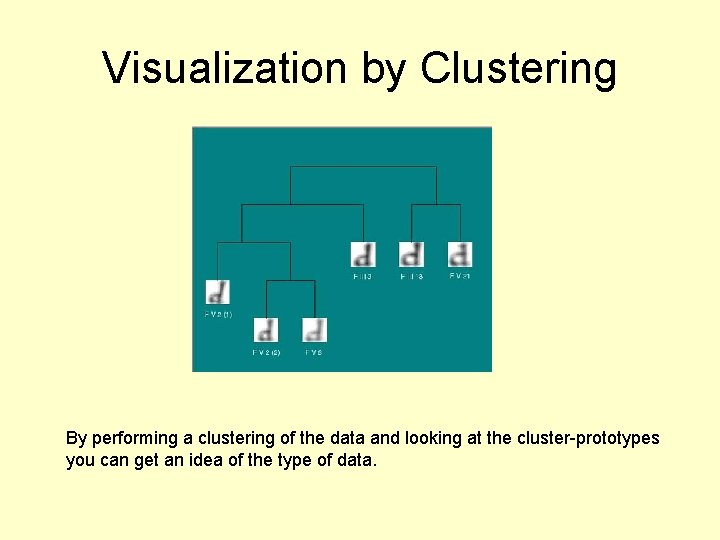

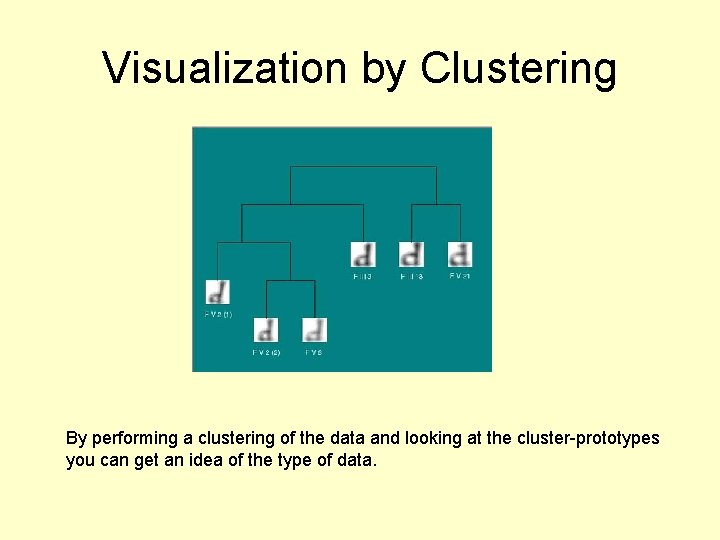

Visualization by Clustering

By clustering the data and looking at the cluster prototypes, you can get an idea of the type of data.
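The slide does not say which clustering algorithm is meant. As one possible illustration, the sketch below uses plain k-means, where the prototype of a cluster is the mean of its members. The choice of k-means, the deterministic initialization from the first k points, and the toy 2-D data are all assumptions made for this example.

```python
def kmeans(points, k, iterations=10):
    """Plain k-means on tuples; initial prototypes are the first k points."""
    prototypes = [tuple(p) for p in points[:k]]
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest prototype.
        clusters = [[] for _ in range(k)]
        for p in points:
            distances = [
                sum((a - b) ** 2 for a, b in zip(p, c)) for c in prototypes
            ]
            clusters[distances.index(min(distances))].append(p)
        # Update step: move each prototype to the mean of its members.
        for j, members in enumerate(clusters):
            if members:  # keep the old prototype if a cluster is empty
                prototypes[j] = tuple(
                    sum(col) / len(members) for col in zip(*members)
                )
    return prototypes


# Toy data: two well-separated groups, invented for illustration.
points = [
    (0.0, 0.0), (10.0, 10.0), (0.0, 1.0),
    (1.0, 0.0), (10.0, 11.0), (11.0, 10.0),
]
prototypes = kmeans(points, k=2)
print(prototypes)
```

For image data each prototype is itself an image-sized vector, so it can be displayed directly to see what a typical member of the cluster looks like.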

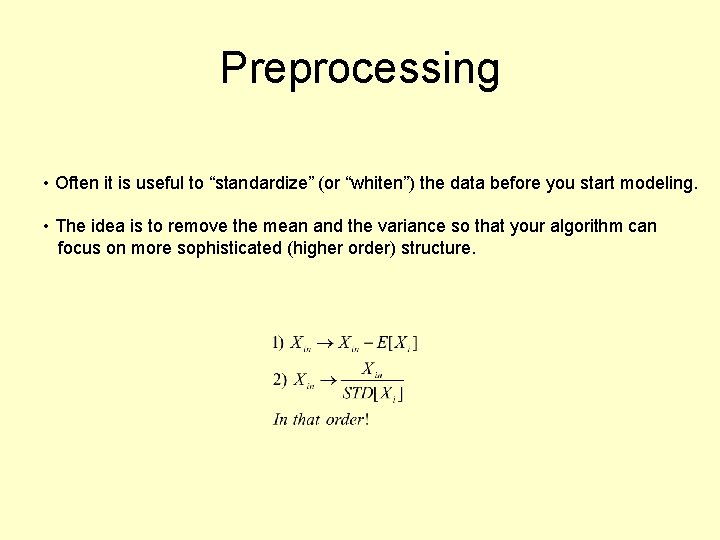

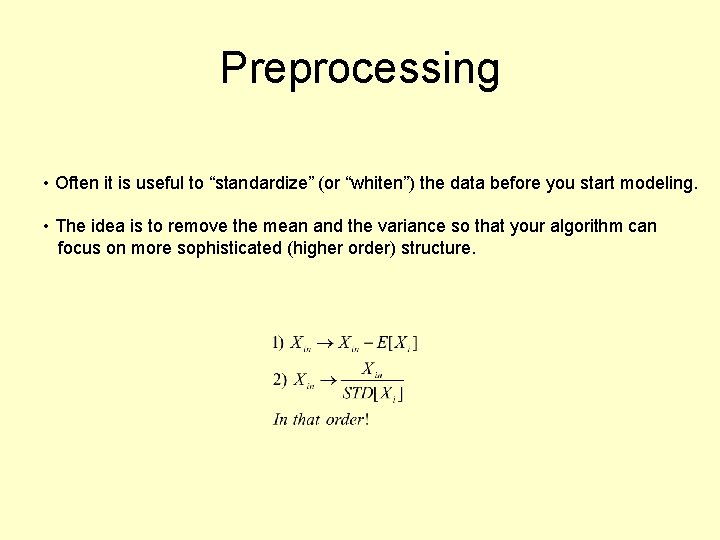

Preprocessing

- Often it is useful to “standardize” (or “whiten”) the data before you start modeling.
- The idea is to subtract the mean and rescale to unit variance, so that your algorithm can focus on more sophisticated (higher-order) structure.
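A minimal sketch of standardizing a single feature is given below. It assumes the 1/N variance convention and handles only one column at a time; full whitening in the stricter sense would also remove correlations between features, which this example does not attempt. The function name and the data are illustrative.

```python
import math


def standardize(column):
    """Shift a list of numbers to zero mean and scale it to unit variance."""
    n = len(column)
    mean = sum(column) / n
    std = math.sqrt(sum((x - mean) ** 2 for x in column) / n)
    if std == 0:
        # A constant feature carries no scale information; map it to zeros.
        return [0.0 for _ in column]
    return [(x - mean) / std for x in column]


# Made-up feature values with mean 5 and standard deviation 2.
z = standardize([2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0])
print(z)
```

After this step the feature has mean 0 and variance 1, so features measured in very different units become comparable.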

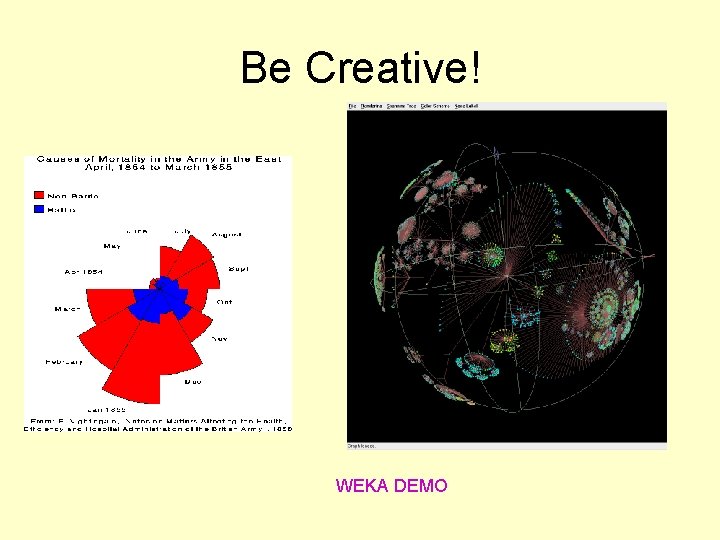

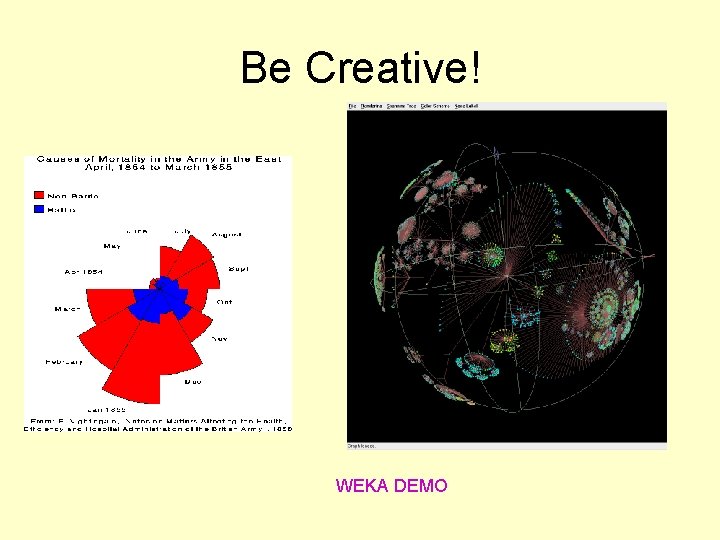

Be Creative! WEKA DEMO