Infiniband in the Data Center

Steven Carter, Cisco Systems (stevenca@cisco.com)
Makia Minich, Nageswara Rao, Oak Ridge National Laboratory ({minich, rao}@ornl.gov)

© 2007 Cisco Systems, Inc. All rights reserved. Cisco Public

Agenda
§ Overview
§ Infiniband: The Good, The Bad, and The Ugly
§ IB LAN Case Study: Oak Ridge National Laboratory Center for Computational Sciences
§ IB WAN Case Study: Department of Energy’s UltraScience Network

Overview
§ Data movement requirements are, once again, exploding in the HPC community (sensors produce more data, larger computers compute with higher accuracy, disk subsystems are bigger and faster, etc.)
§ The requirement to move hundreds of GB/s (the rates currently proposed for many of the new petascale systems) within the data center necessitates something more than the Ethernet community currently provides
§ There is also a requirement to move large amounts of data between data centers. TCP/IP does not adequately meet this need because of its poor wide-area characteristics.
§ This talk gives a high-level overview of the pros and cons of using Infiniband to meet these needs, with two case studies to reinforce them
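
The slide does not spell out which wide-area shortcomings of TCP it means. One commonly cited one, assumed here, is that a single TCP flow can have at most one window of unacknowledged data in flight, so its throughput is capped at window / RTT. The minimal sketch below works through that arithmetic. The 70 ms RTT and 10 Gb/s rate are illustrative assumptions, not measurements from this talk. 65,535 bytes is the largest window TCP can advertise without the window-scale option.

```python
# Sketch: window-limited throughput of a single TCP flow (window / RTT).
# RTT_S and LINK_BPS are illustrative assumptions, not measured values.

def window_limited_throughput_bps(window_bytes: int, rtt_s: float) -> float:
    """Upper bound for one flow: at most one window delivered per round trip."""
    return window_bytes * 8 / rtt_s


def required_window_bytes(rate_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to sustain rate_bps."""
    return rate_bps * rtt_s / 8


CLASSIC_WINDOW = 65_535  # largest TCP window without the window-scale option
RTT_S = 0.070            # hypothetical 70 ms wide-area round trip
LINK_BPS = 10e9          # hypothetical 10 Gb/s path

cap = window_limited_throughput_bps(CLASSIC_WINDOW, RTT_S)
need = required_window_bytes(LINK_BPS, RTT_S)
print(f"64 KiB window over 70 ms RTT: {cap / 1e6:.1f} Mb/s")
print(f"Window needed for 10 Gb/s:    {need / 1e6:.1f} MB")
```

With these assumed numbers, an untuned flow tops out near 7.5 Mb/s, while filling the path needs roughly 87.5 MB in flight. Closing that gap takes window scaling and host tuning on both ends.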

The Good
§ Cool name (Marketing gets an A+: who doesn’t want infinite bandwidth?)
§ Unified fabric/IO virtualization:
  – Low-latency interconnect: nanoseconds, not low microseconds (not necessarily important in a data center)
  – Storage: using SRP (SCSI RDMA Protocol) or iSER (iSCSI Extensions for RDMA)
  – IP: using IPoIB; newer versions run over Connected Mode, giving better throughput
  – Gateways give access to legacy Ethernet (careful) and Fibre Channel networks
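
One way to see why IPoIB Connected Mode helps throughput is to count packets. This is a sketch under stated assumptions. The values come from the Linux IPoIB driver documentation, not from these slides. In datagram mode the IP MTU is the IB link MTU minus a 4-byte IPoIB header, so it is 2044 bytes on a 2K-MTU fabric. In connected mode the IP MTU can be raised to 65,520 bytes. Fewer, larger packets mean less per-packet work on the host.

```python
import math

IPOIB_HEADER_BYTES = 4        # IPoIB encapsulation header per packet
CONNECTED_MODE_MTU = 65_520   # maximum IP MTU commonly used in IPoIB connected mode


def datagram_mode_mtu(ib_mtu: int) -> int:
    """Datagram (UD) mode: IP MTU is the IB link MTU minus the IPoIB header."""
    return ib_mtu - IPOIB_HEADER_BYTES


def packets_needed(payload_bytes: int, mtu: int) -> int:
    """MTU-sized packets needed for a payload (ignores IP/TCP header overhead)."""
    return math.ceil(payload_bytes / mtu)


ONE_GIB = 1 << 30
ud = packets_needed(ONE_GIB, datagram_mode_mtu(2048))  # assumes a 2K IB MTU
cm = packets_needed(ONE_GIB, CONNECTED_MODE_MTU)
print(f"datagram mode:  {ud:,} packets per GiB")
print(f"connected mode: {cm:,} packets per GiB ({ud / cm:.1f}x fewer)")
```

The roughly 32x reduction in packet count is a simplification. Real throughput also depends on the CPU, interrupt handling, and offloads.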

The Good (Cont.)
§ Faster link speeds:
  – 1x Single Data Rate (SDR) = 2.5 Gb/s (2 Gb/s of data after 8b/10b encoding)
  – Four 1x links can be aggregated into a single 4x link
  – Three 4x links can be aggregated into a single 12x link (a single 12x link is also available)
  – Double Data Rate (DDR) currently available, Quad Data Rate (QDR) on the horizon
  – Many link speeds available: 8 Gb/s, 16 Gb/s, 24 Gb/s, 32 Gb/s, 48 Gb/s, etc.
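
The link speeds listed above follow from three factors: lane count (1x, 4x, 12x), the per-lane signalling rate (2.5 Gb/s for SDR, doubled for DDR, quadrupled for QDR), and 8b/10b encoding, which carries 8 data bits per 10 line bits. A minimal sketch of that arithmetic follows. The QDR row simply applies the 4x multiplier, since QDR was still "on the horizon" when these slides were written.

```python
SDR_LANE_SIGNAL_GBPS = 2.5                   # per-lane signalling rate at SDR
ENCODING_EFFICIENCY = 8 / 10                 # 8b/10b: 8 data bits per 10 line bits
RATE_MULTIPLIER = {"SDR": 1, "DDR": 2, "QDR": 4}


def data_rate_gbps(width: int, rate: str) -> float:
    """Usable data rate of an IB link with `width` lanes at SDR, DDR, or QDR."""
    return width * SDR_LANE_SIGNAL_GBPS * RATE_MULTIPLIER[rate] * ENCODING_EFFICIENCY


for rate in ("SDR", "DDR", "QDR"):
    for width in (1, 4, 12):
        print(f"{width:2d}x {rate}: {data_rate_gbps(width, rate):g} Gb/s")
```

4x SDR gives 8 Gb/s, 4x DDR 16, 12x SDR 24, 4x QDR 32, and 12x DDR 48, which matches the speeds listed on the slide.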

The Good (Cont.)
§ The HCA does much of the heavy lifting:
  – Much of the protocol is handled on the Host Channel Adapter (HCA), heavily leveraging DMA
  – Remote Direct Memory Access (RDMA) makes it possible to transfer data between hosts with very little CPU overhead
  – RDMA capability is EXTREMELY important because it provides significantly greater capability from the same hardware
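
The following toy, in-process model illustrates why RDMA saves CPU on the receiving host. It is not the verbs API, and every class and function name in it is hypothetical. The receiver registers a memory region and hands the peer a key. The "adapter" then places incoming data directly into that region. The conventional socket-style path is modeled instead as the receiver's CPU copying data out of a kernel buffer.

```python
class RegisteredRegion:
    """Toy stand-in for a registered memory region (hypothetical, not the verbs API)."""

    def __init__(self, size: int, rkey: int):
        self.buf = bytearray(size)
        self.rkey = rkey  # hypothetical remote key handed to the peer


class Host:
    def __init__(self, name: str):
        self.name = name
        self.cpu_bytes_copied = 0  # receive-side bytes moved by this host's CPU
        self.regions = {}          # rkey -> RegisteredRegion

    def register(self, size: int, rkey: int) -> RegisteredRegion:
        region = RegisteredRegion(size, rkey)
        self.regions[rkey] = region
        return region

    def socket_receive(self, data: bytes, region: RegisteredRegion, offset: int = 0) -> None:
        # Conventional path: data lands in a kernel buffer, then the CPU copies it out.
        kernel_buffer = bytes(data)
        region.buf[offset:offset + len(kernel_buffer)] = kernel_buffer
        self.cpu_bytes_copied += len(kernel_buffer)


def rdma_write(target: Host, rkey: int, offset: int, data: bytes) -> None:
    """Toy adapter placing data directly into the target's registered memory.
    No code runs on the target's CPU, so target.cpu_bytes_copied is unchanged."""
    region = target.regions[rkey]  # an unknown rkey raises KeyError (access refused)
    if offset + len(data) > len(region.buf):
        raise ValueError("write would run past the registered region")
    region.buf[offset:offset + len(data)] = data
```

For example, after `socket_receive(b"hello", r)` the receiver's counter reads 5. A following `rdma_write(b, rkey, 5, b"world")` fills the next five bytes without changing it.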

The Good (Cont.)
§ Nearly 10x less cost for similar bandwidth:
  – Because IB switches are simpler, they cost less. Oddly enough, IB HCAs are more complex than 10G NICs, yet they are also less expensive.
  – Roughly $500 per switch port and $500 for a dual-port DDR HCA
  – Because of RDMA, there are infrastructure savings as well (i.e., you can do more with fewer hosts)
§ Higher port density switches:
  – Switches are available with 288 (or more) full-rate ports in a single chassis
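
A back-of-the-envelope sketch of per-host cost using only the slide's rough prices follows. It assumes each cabled HCA port consumes one switch port and that a 4x DDR port carries 16 Gb/s of data after 8b/10b encoding. The slides give no 10GE prices, so to compare, plug your own 10GE switch-port and NIC quotes into the same functions.

```python
def ib_cost_per_host(switch_port_usd: float, hca_usd: float, ports_used: int = 1) -> float:
    """Cost to attach one host: one HCA plus one switch port per cabled HCA port."""
    return hca_usd + switch_port_usd * ports_used


def cost_per_gbps(cost_usd: float, data_rate_gbps: float) -> float:
    return cost_usd / data_rate_gbps


SWITCH_PORT_USD = 500    # approximate price from the slide
DDR_HCA_USD = 500        # dual-port DDR HCA, approximate price from the slide
DDR_4X_DATA_GBPS = 16.0  # 4x DDR: 20 Gb/s signalling, 16 Gb/s after 8b/10b

one_port = ib_cost_per_host(SWITCH_PORT_USD, DDR_HCA_USD, ports_used=1)
two_ports = ib_cost_per_host(SWITCH_PORT_USD, DDR_HCA_USD, ports_used=2)
print(one_port, cost_per_gbps(one_port, DDR_4X_DATA_GBPS))        # one port cabled
print(two_ports, cost_per_gbps(two_ports, 2 * DDR_4X_DATA_GBPS))  # both ports cabled
```

Under these assumptions, that is about $1,000 per host ($62.50 per Gb/s) with one port cabled, and $1,500 ($46.88 per Gb/s) with both ports cabled. This ignores cables, which the "Ugly" slides suggest are not free.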

The Bad
§ IB sounds too much like IP (conversations can quickly degrade into a “Who’s on First?” routine)
§ IB is not well understood by networking folks
§ Lacks some Ethernet features that are important in the data center:
  – Router: there is no way to natively connect two separate fabrics. The IB Subnet Manager (SM) is integral to the operation of the network (it detects hosts, programs routes into the switches, etc.). Without a router, you cannot have two different SMs for different operational or administrative domains (this can be worked around at the application layer).
  – Firewall: there is no way to dictate who talks to whom by protocol (partitions exist, but are too coarse-grained)
  – Protocol analyzers: they exist but are hard to come by, and it is difficult to “roll your own” because the protocol is embedded in the HCA
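
Partitions are coarse-grained because the check is all-or-nothing. The following sketch follows the partition-key rule as defined in the InfiniBand Architecture Specification. A P_Key is 16 bits. The top bit marks full (1) or limited (0) membership, and the low 15 bits name the partition. Two ports may exchange packets if their partition numbers match and at least one of them is a full member. Nothing in the check looks at protocol, service, or port number, so it cannot express "allow storage traffic but not IP".

```python
FULL_MEMBER_BIT = 0x8000  # bit 15: 1 = full member, 0 = limited member
PARTITION_MASK = 0x7FFF   # low 15 bits: partition number


def pkeys_match(a: int, b: int) -> bool:
    """True if two ports holding P_Keys a and b may exchange packets."""
    same_partition = (a & PARTITION_MASK) == (b & PARTITION_MASK)
    at_least_one_full = bool((a | b) & FULL_MEMBER_BIT)
    return same_partition and at_least_one_full


print(pkeys_match(0xFFFF, 0x7FFF))  # default partition: full member + limited member
print(pkeys_match(0x7FFF, 0x7FFF))  # two limited members cannot talk
print(pkeys_match(0x8001, 0x8002))  # different partitions
```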

The Ugly
§ Cabling options:
  – Heavy-gauge copper cables with clunky CX4 connectors
    • Short distance (< 20 meters)
    • If mishandled, they have a propensity to fail
    • Heavy connectors can become disengaged
  – Electrical-to-optical converter
    • Long distance (up to 150 meters)
    • Uses multi-core ribbon fiber (hard to debug)
    • Expensive
    • Heavy connectors can become disengaged

The Ugly (Continued)
§ Cabling options:
  – Electrical-to-optical converter built onto the cable
    • Long distance (up to 100 meters)
    • Uses multi-core ribbon fiber (hard to debug)
    • More cost-effective than the other solutions
    • Heavy connectors can become disengaged

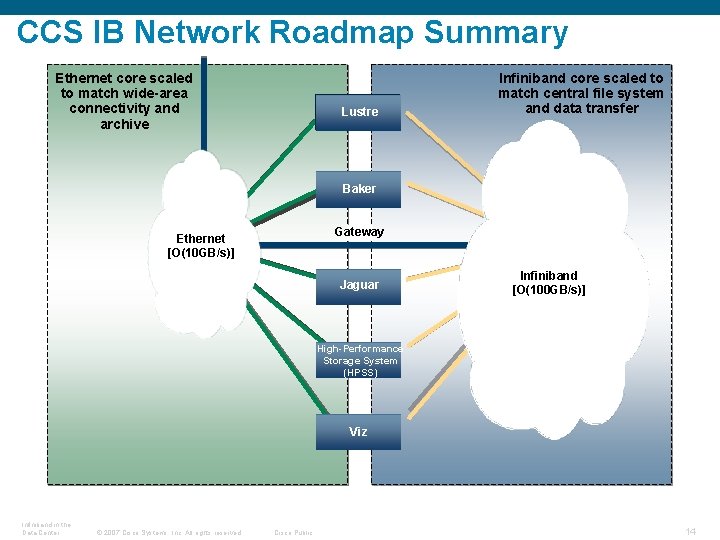

Case Study: ORNL Center for Computational Sciences (CCS)
§ The Department of Energy established the Leadership Computing Facility at ORNL’s Center for Computational Sciences to field a 1 PF supercomputer
§ The design chosen, the Cray XT series, includes an internal Lustre filesystem capable of sustaining reads and writes of 240 GB/s
§ The problem with making the filesystem part of the machine is that it limits the flexibility of the Lustre filesystem and increases the complexity of the Cray
§ The problem with decoupling the filesystem from the machine is the high cost of connecting it via 10GE at the required speeds
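
To make "the high cost of connecting it via 10GE" concrete, count the links needed to carry 240 GB/s. This is an idealized sketch. It assumes decimal units, perfect load balancing, and full line-rate utilization by default. The 4x DDR figure uses the 16 Gb/s data rate after 8b/10b encoding. Real deployments need headroom, which the optional `efficiency` parameter lets you model.

```python
import math


def links_needed(target_gbytes_per_s: float, link_gbps: float, efficiency: float = 1.0) -> int:
    """Minimum number of links to carry target_gbytes_per_s at `efficiency` of line rate."""
    target_gbps = target_gbytes_per_s * 8  # GB/s -> Gb/s
    return math.ceil(target_gbps / (link_gbps * efficiency))


LUSTRE_TARGET_GBYTES = 240  # GB/s, from the slide

print("10GE links:      ", links_needed(LUSTRE_TARGET_GBYTES, 10.0))
print("4x DDR IB links: ", links_needed(LUSTRE_TARGET_GBYTES, 16.0))
```

Even in this best case, 10GE needs 192 links against 120 for 4x DDR IB. The per-port price gap discussed earlier multiplies across every one of them.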

CCS IB Network Roadmap Summary
§ Ethernet core [O(10 GB/s)] scaled to match wide-area connectivity and archive
§ Infiniband core [O(100 GB/s)] scaled to match the central file system and data transfer
§ Diagram components: Jaguar, Baker, Viz, Gateway, Lustre, High-Performance Storage System (HPSS)

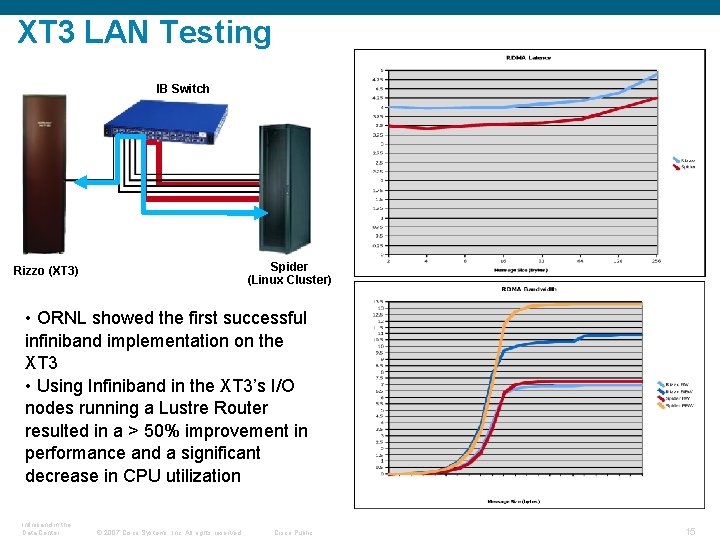

XT3 LAN Testing
Diagram: IB switch, Spider (Linux cluster), Rizzo (XT3)
§ ORNL showed the first successful Infiniband implementation on the XT3
§ Using Infiniband in the XT3’s I/O nodes running a Lustre router resulted in a >50% improvement in performance and a significant decrease in CPU utilization

Observations
§ The XT3’s RDMA performance is good (better than 10GE)
§ The XT3’s poor performance compared to the generic x86_64 host is likely a result of its PCI-X HCA (known to be sub-optimal)
§ In its role as a Lustre router, IB allows significantly better performance per I/O node, allowing CCS to achieve the required throughput with fewer nodes than would be needed using 10GE
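
Comparing theoretical peak bus rates shows why a PCI-X HCA is "known to be sub-optimal". The slides do not say which PCI-X variant the XT3 used or what bus the generic x86_64 host had. The sketch below assumes 64-bit PCI-X at 133 MHz and PCIe x8 Gen 1 purely for comparison. PCI-X is a shared parallel bus, so its peak covers both directions combined. PCIe Gen 1 lanes run at 2.5 GT/s with 8b/10b encoding, giving 2 Gb/s of data per lane in each direction.

```python
def pci_x_peak_gbps(bus_bits: int = 64, clock_mhz: float = 133.33) -> float:
    """Shared parallel bus: one bus_bits-wide transfer per clock, both directions combined."""
    return bus_bits * clock_mhz * 1e6 / 1e9


def pcie_gen1_peak_gbps(lanes: int) -> float:
    """2.5 GT/s per lane with 8b/10b encoding -> 2 Gb/s of data per lane, per direction."""
    return lanes * 2.5 * 8 / 10


print(f"PCI-X 133 (both directions combined): {pci_x_peak_gbps():.2f} Gb/s")
print(f"PCIe x8 Gen 1 (each direction):       {pcie_gen1_peak_gbps(8):.1f} Gb/s")
```

Under these assumptions, PCI-X peaks near 8.5 Gb/s before protocol overhead. That is barely enough for a 4x SDR link (8 Gb/s of data) and well short of 4x DDR (16 Gb/s).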

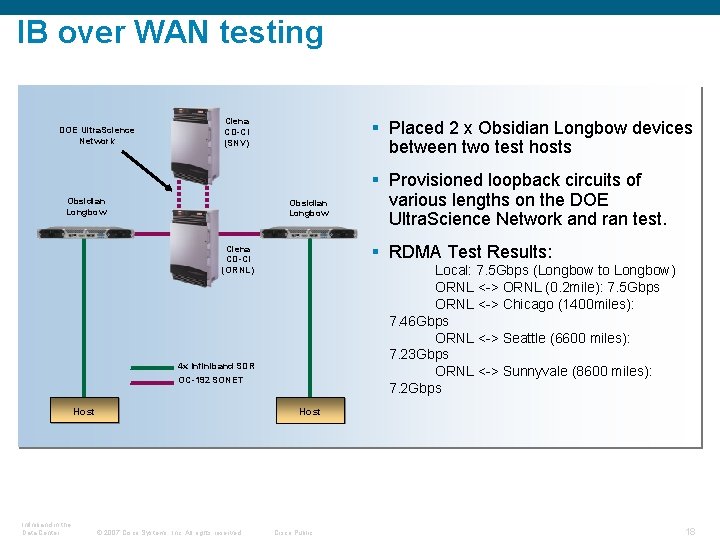

IB over WAN Testing: DOE UltraScience Network
§ Placed two Obsidian Longbow devices between two test hosts
§ Provisioned loopback circuits of various lengths on the DOE UltraScience Network and ran tests
§ Diagram labels: hosts, Obsidian Longbows, Ciena CD-CI at ORNL and at Sunnyvale (SNV), 4x Infiniband SDR, OC-192 SONET
§ RDMA test results:
  – Local (Longbow to Longbow): 7.5 Gbps
  – ORNL <-> ORNL (0.2 miles): 7.5 Gbps
  – ORNL <-> Chicago (1400 miles): 7.46 Gbps
  – ORNL <-> Seattle (6600 miles): 7.23 Gbps
  – ORNL <-> Sunnyvale (8600 miles): 7.2 Gbps
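
These results can be read as a fraction of what the 4x SDR host link can carry (8 Gb/s of data after 8b/10b encoding), alongside the rough fiber propagation delay of each circuit. The sketch makes two assumptions. It takes about 5 µs per km of fiber, and it treats the quoted mileage as the full path the data traverses. The measured rates are copied from the slide.

```python
SDR_4X_DATA_GBPS = 8.0  # 4x SDR host link: 10 Gb/s signalling, 8 Gb/s of data
KM_PER_MILE = 1.609344
FIBER_US_PER_KM = 5.0   # assumed ~5 microseconds per km of optical fiber

# (path, quoted circuit length in miles, measured RDMA Gbps), from the slide
RESULTS = [
    ("ORNL <-> ORNL", 0.2, 7.5),
    ("ORNL <-> Chicago", 1400, 7.46),
    ("ORNL <-> Seattle", 6600, 7.23),
    ("ORNL <-> Sunnyvale", 8600, 7.2),
]


def efficiency(gbps: float) -> float:
    """Fraction of the 4x SDR data rate achieved."""
    return gbps / SDR_4X_DATA_GBPS


def propagation_ms(miles: float) -> float:
    """Rough one-way fiber propagation delay, treating `miles` as the full path length."""
    return miles * KM_PER_MILE * FIBER_US_PER_KM / 1000


for path, miles, gbps in RESULTS:
    print(f"{path:20s} {gbps:5.2f} Gbps  {efficiency(gbps):6.1%} of 4x SDR  "
          f"~{propagation_ms(miles):5.1f} ms propagation")
```

Even the 8600-mile loop, with roughly 69 ms of propagation delay under these assumptions, still reaches about 90% of the host link's data rate.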

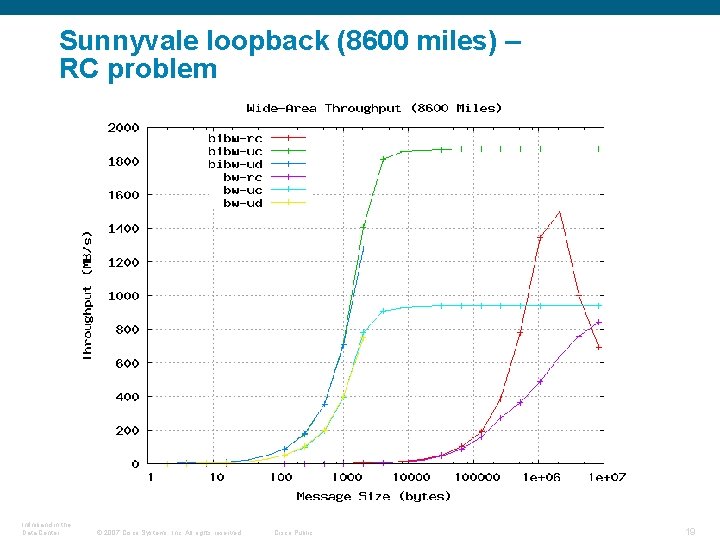

Sunnyvale Loopback (8600 miles): RC Problem

Observations
§ The Obsidian Longbows appear to be extending sufficient link-level credits
§ Native IB transports do not appear to suffer from the same wide-area shortcomings as TCP (i.e., full rate with no tuning)
§ With the Arbel-based HCAs, we saw problems:
  – RC (Reliable Connected transport) only performs well at large message sizes
  – There seems to be a maximum number of messages allowed in flight (~250)
  – RC performance does not increase rapidly enough even when the message cap is not an issue
§ The problems seem to be fixed with the new Hermon-based HCAs…
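
A cap on messages in flight behaves like a fixed window, which explains why RC only does well with large messages. At most N messages can be outstanding per round trip, so throughput is at most N × message size / RTT. The sketch below uses the ~250 cap from the slide. The 140 ms RTT is a hypothetical value for a very long loopback circuit, not a measurement. The model does not capture the separate slow ramp-up the slide also reports.

```python
def capped_throughput_gbps(max_in_flight: int, msg_bytes: int, rtt_s: float,
                           link_gbps: float) -> float:
    """Throughput when at most max_in_flight messages may be outstanding per round trip."""
    window_gbps = max_in_flight * msg_bytes * 8 / rtt_s / 1e9
    return min(window_gbps, link_gbps)


def min_message_bytes_for_rate(max_in_flight: int, rtt_s: float, link_gbps: float) -> float:
    """Smallest message size that lets the capped window fill the link."""
    return link_gbps * 1e9 * rtt_s / 8 / max_in_flight


MAX_IN_FLIGHT = 250  # approximate cap seen with the Arbel-based HCAs (from the slide)
RTT_S = 0.14         # hypothetical round trip for a very long loopback circuit
LINK_GBPS = 8.0      # 4x SDR data rate

for size in (4 << 10, 64 << 10, 1 << 20):
    rate = capped_throughput_gbps(MAX_IN_FLIGHT, size, RTT_S, LINK_GBPS)
    print(f"{size >> 10:5d} KiB messages: {rate:.2f} Gb/s")
print(f"Full rate needs messages of at least "
      f"{min_message_bytes_for_rate(MAX_IN_FLIGHT, RTT_S, LINK_GBPS) / 1024:.0f} KiB")
```

With these assumptions, 64 KiB messages reach under 1 Gb/s, while 1 MiB messages saturate the link. This matches the qualitative behavior on the slide.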

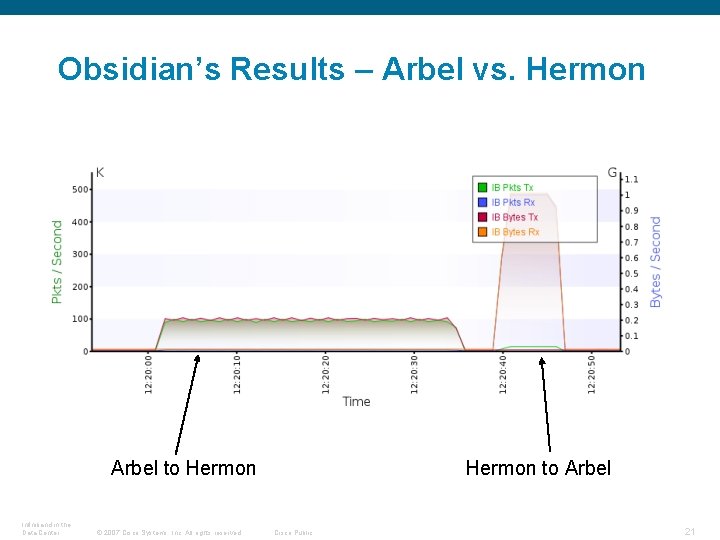

Obsidian’s Results: Arbel vs. Hermon (panels: Arbel to Hermon; Hermon to Arbel)

Summary
§ Infiniband has the potential to make a great data center interconnect because it provides a unified fabric, faster link speeds, a mature RDMA implementation, and lower cost
§ There does not appear to be the same intrinsic problem with IB in the wide area as there is with IP/Ethernet, making IB a good candidate for transferring data between data centers

The End
Questions? Comments? Criticisms?
For more information:
Steven Carter, Cisco Systems (stevenca@cisco.com)
Makia Minich, Nageswara Rao, Oak Ridge National Laboratory ({minich, rao}@ornl.gov)