Filling the Data Lake Chuck Yarbrough Director Pentaho

Filling the Data Lake: Chuck Yarbrough, Director, Pentaho Solutions; Mark Burnette, Enterprise Sales Engineer

The Data Lake

© 2015, Pentaho. All rights reserved. pentaho.com. Worldwide +1 (866) 660-7555

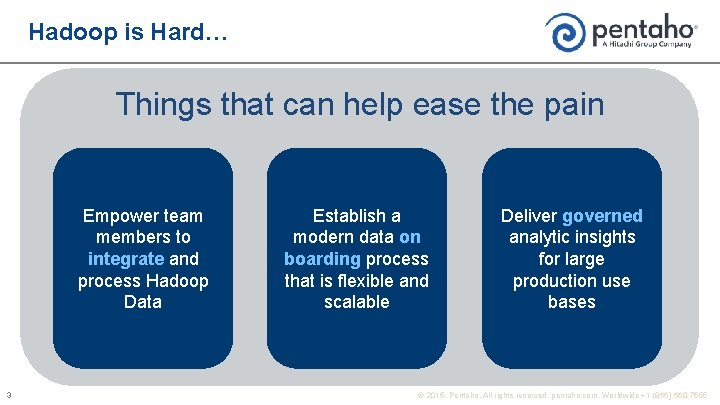

Hadoop is Hard…

Things that can help ease the pain:

- Empower team members to integrate and process Hadoop data
- Establish a modern data onboarding process that is flexible and scalable
- Deliver governed analytic insights for large production user bases

Proper Care and Feeding of the Data Lake

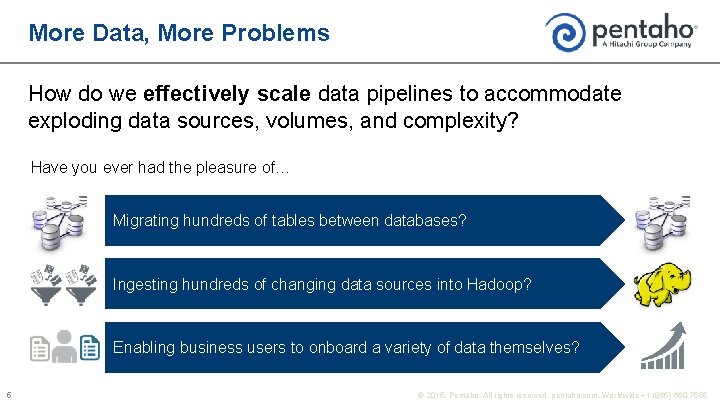

More Data, More Problems

How do we effectively scale data pipelines to accommodate exploding data sources, volumes, and complexity?

Have you ever had the pleasure of…

- Migrating hundreds of tables between databases?
- Ingesting hundreds of changing data sources into Hadoop?
- Enabling business users to onboard a variety of data themselves?

More Data, More Problems

Modern data onboarding is more than just “connecting” or “loading”. It includes:

- Managing a changing array of data sources
- Establishing repeatable processes at scale
- Maintaining control and governance

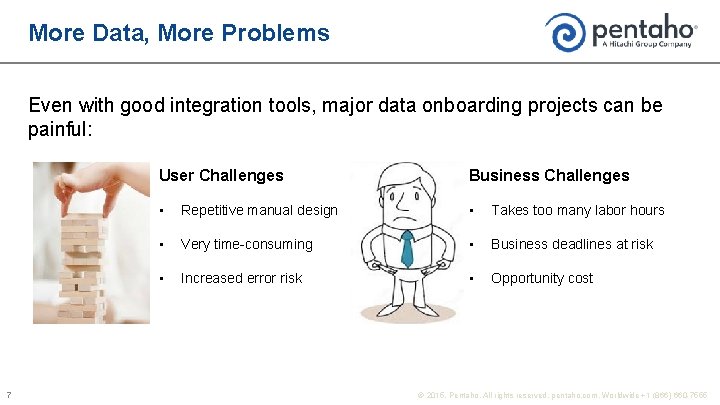

More Data, More Problems

Even with good integration tools, major data onboarding projects can be painful.

User Challenges:

- Repetitive manual design
- Very time-consuming
- Increased error risk

Business Challenges:

- Takes too many labor hours
- Business deadlines at risk
- Opportunity cost

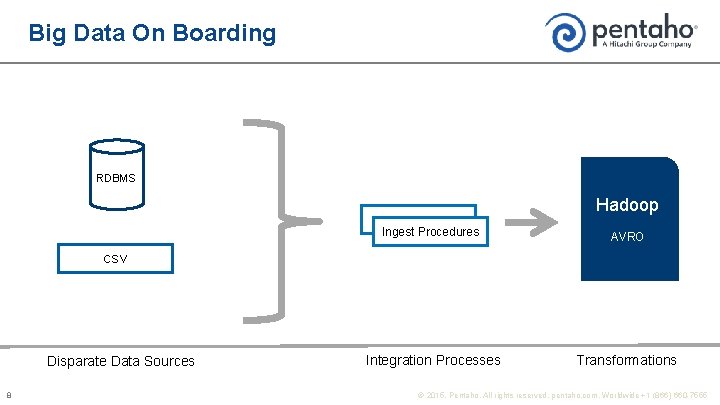

Big Data Onboarding

[Diagram labels: Disparate Data Sources (RDBMS, AVRO, CSV); Ingest Procedures; Integration Processes; Transformations; Hadoop]

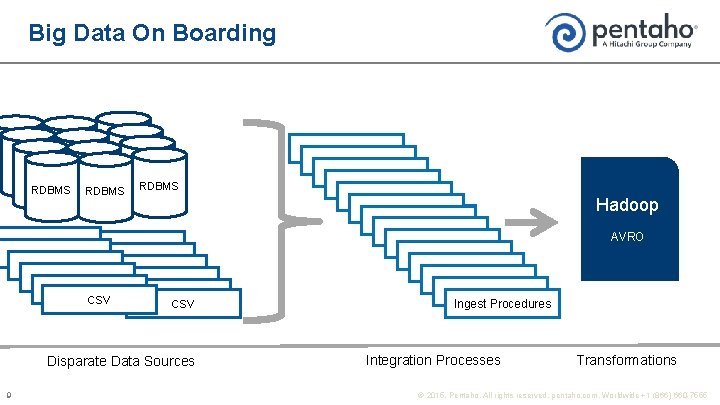

Big Data Onboarding

[Diagram labels: Disparate Data Sources (RDBMS, AVRO, CSV); Ingest Procedures; Integration Processes; Transformations; Hadoop]

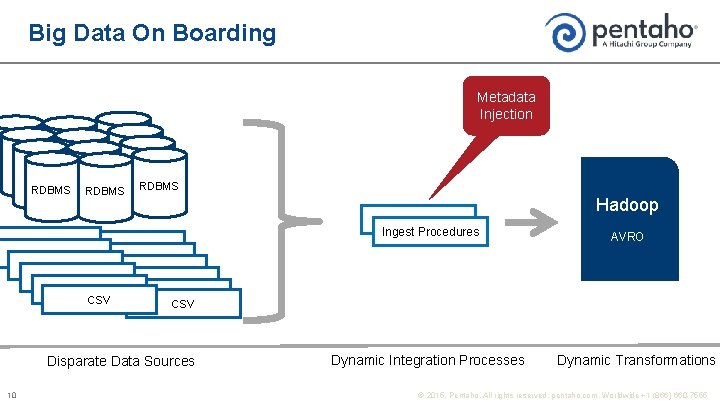

Big Data Onboarding: Metadata Injection

[Diagram labels: Disparate Data Sources (RDBMS, AVRO, CSV); Ingest Procedures; Dynamic Integration Processes; Dynamic Transformations; Hadoop]

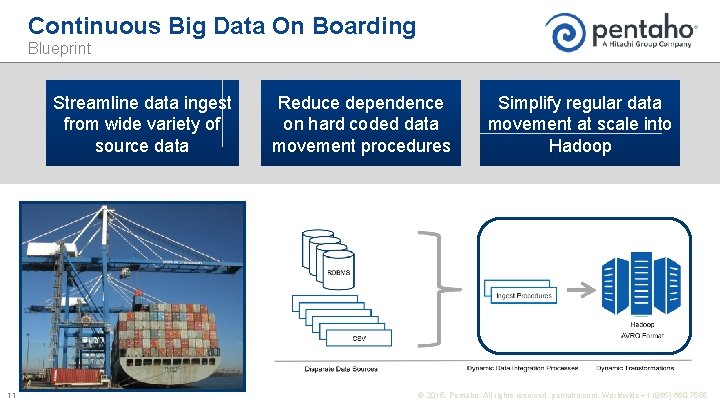

Continuous Big Data Onboarding Blueprint

- Streamline data ingest from a wide variety of source data
- Reduce dependence on hard-coded data movement procedures
- Simplify regular data movement at scale into Hadoop

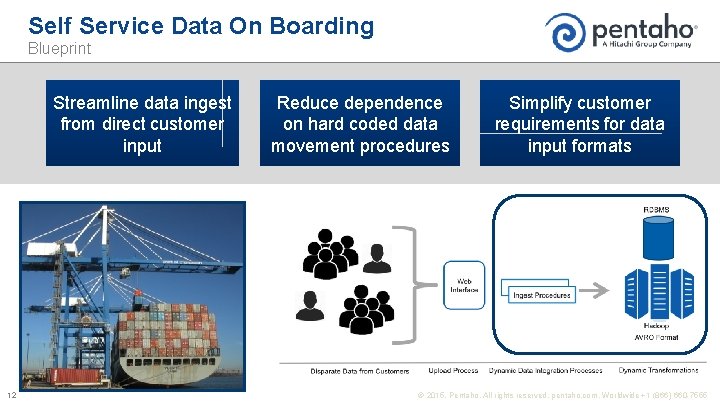

Self-Service Data Onboarding Blueprint

- Streamline data ingest from direct customer input
- Reduce dependence on hard-coded data movement procedures
- Simplify customer requirements for data input formats

The Analytic Data Pipeline: Management and Automation

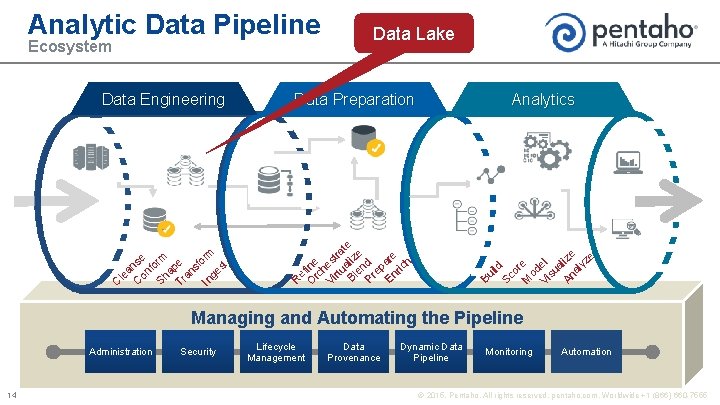

Analytic Data Pipeline

[Diagram labels. Stages: Data Lake, Data Engineering, Data Preparation, Analytics, Ecosystem. Steps: Ingest, Cleanse, Conform, Shape, Transform, Refine, Orchestrate, Virtualize, Prepare, Enrich, Blend, Build, Score, Model, Visualize, Analyze]

Managing and Automating the Pipeline: Administration, Security, Lifecycle Management, Data Provenance, Dynamic Data Pipeline, Monitoring, Automation

Metadata Injection

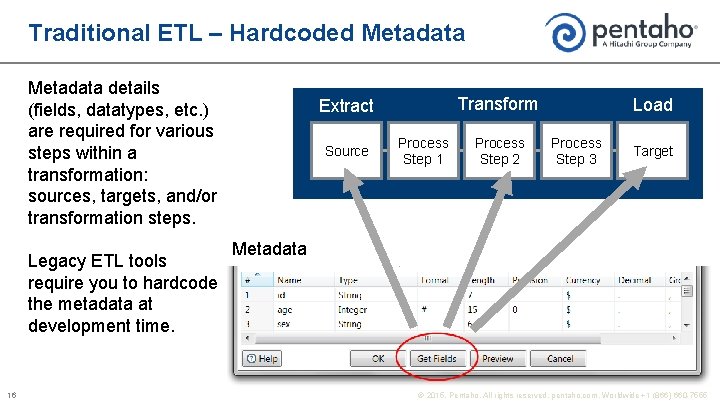

Traditional ETL – Hardcoded

Metadata details (fields, data types, etc.) are required for various steps within a transformation: sources, targets, and/or transformation steps. Legacy ETL tools require you to hardcode the metadata at development time.

[Diagram labels: Extract (Source); Transform (Process Step 1, Process Step 2, Process Step 3); Load (Target); Metadata]

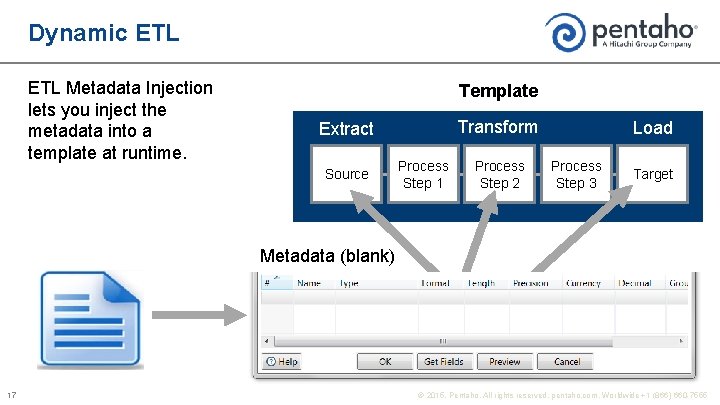

Dynamic ETL

Metadata Injection lets you inject the metadata into a template at runtime.

[Diagram labels: Template; Extract (Source); Transform (Process Step 1, Process Step 2, Process Step 3); Load (Target); Metadata (blank)]
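The idea of a template whose metadata is left blank until runtime can be sketched in plain Python. This is an illustration of the concept only, not Pentaho's implementation or API; `FieldMeta`, `run_template`, and the sample order data are hypothetical names introduced for the example.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class FieldMeta:
    """Hypothetical description of one field: its name and a type converter."""
    name: str
    convert: type


def run_template(source_text: str, fields: list[FieldMeta]) -> list[dict]:
    """Template transformation: the steps are fixed, the metadata is not.

    Extract: parse CSV rows from the source text.
    Transform: cast each listed field using the injected metadata.
    Load: return the rows (a stand-in for writing to a real target).
    """
    reader = csv.DictReader(io.StringIO(source_text))
    return [{f.name: f.convert(row[f.name]) for f in fields} for row in reader]


# The metadata is supplied at runtime instead of being hardcoded in the template.
orders_meta = [FieldMeta("order_id", int), FieldMeta("amount", float)]
orders_csv = "order_id,amount\n1,9.50\n2,20.00\n"
orders = run_template(orders_csv, orders_meta)
print(orders)
```

The same `run_template` function can then be called with a different field list for a different source, which is the contrast with the hardcoded approach on the previous slide.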

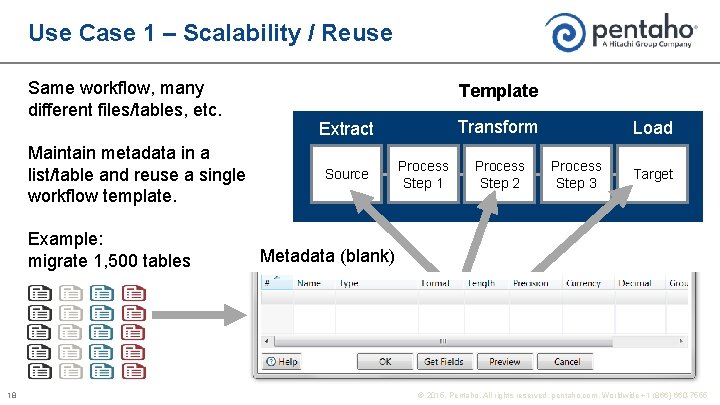

Use Case 1 – Scalability / Reuse

Same workflow, many different files, tables, etc. Maintain metadata in a list or table and reuse a single workflow template.

Example: migrate 1,500 tables

[Diagram labels: Template; Extract (Source); Transform (Process Step 1, Process Step 2, Process Step 3); Load (Target); Metadata (blank)]
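A minimal sketch of this pattern, assuming the metadata is kept as a list of table descriptions and the "workflow" is a single copy function. It uses in-memory SQLite databases as stand-ins for the real source and target; `MIGRATIONS`, `migrate_table`, and `build_demo_source` are hypothetical names, and none of this is Pentaho code.

```python
import sqlite3

# Hypothetical metadata list: one entry per table to migrate.
MIGRATIONS = [
    {"table": "customers", "columns": ["id", "name"]},
    {"table": "orders", "columns": ["id", "customer_id", "total"]},
]


def migrate_table(src, dst, table, columns):
    """Single reusable workflow: copy the listed columns of one table.

    Table and column names are interpolated into SQL, so they must come
    from trusted metadata, never from raw user input.
    """
    col_list = ", ".join(columns)
    placeholders = ", ".join("?" for _ in columns)
    dst.execute(f"CREATE TABLE {table} ({col_list})")
    rows = src.execute(f"SELECT {col_list} FROM {table}").fetchall()
    dst.executemany(f"INSERT INTO {table} VALUES ({placeholders})", rows)
    return len(rows)


def build_demo_source():
    """Create a small in-memory source database for the demonstration."""
    src = sqlite3.connect(":memory:")
    src.execute("CREATE TABLE customers (id, name)")
    src.execute("CREATE TABLE orders (id, customer_id, total)")
    src.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ann"), (2, "Bo")])
    src.execute("INSERT INTO orders VALUES (10, 1, 99.5)")
    return src


source = build_demo_source()
target = sqlite3.connect(":memory:")
# One loop over the metadata drives every migration; no per-table code.
copied = {
    m["table"]: migrate_table(source, target, m["table"], m["columns"])
    for m in MIGRATIONS
}
print(copied)
```

Adding another table to the migration means adding one entry to the metadata list, not designing another workflow.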

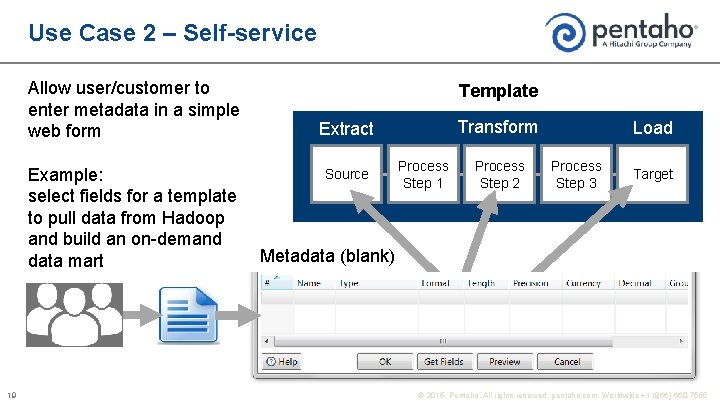

Use Case 2 – Self-service

Allow the user or customer to enter metadata in a simple web form.

Example: select fields for a template to pull data from Hadoop and build an on-demand data mart

[Diagram labels: Template; Extract (Source); Transform (Process Step 1, Process Step 2, Process Step 3); Load (Target); Metadata (blank)]
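One way to picture the self-service case: the form submits a list of field names, which is validated against a catalog and then handed to the template as metadata. The sketch below assumes a small in-memory catalog and record set in place of Hadoop and a web form; `AVAILABLE_FIELDS`, `metadata_from_form`, and `build_data_mart` are hypothetical.

```python
# Hypothetical catalog of fields available in the source data, with their types.
AVAILABLE_FIELDS = {"customer_id": int, "region": str, "revenue": float}


def metadata_from_form(selected: list[str]) -> list[tuple[str, type]]:
    """Turn a user's field selection into metadata for a template.

    Unknown field names are rejected, so control stays with the catalog
    rather than with free-form user input.
    """
    unknown = [name for name in selected if name not in AVAILABLE_FIELDS]
    if unknown:
        raise ValueError(f"unknown fields: {unknown}")
    return [(name, AVAILABLE_FIELDS[name]) for name in selected]


def build_data_mart(records: list[dict], metadata: list[tuple[str, type]]) -> list[dict]:
    """Template step: keep only the selected fields, cast to the catalog types."""
    return [{name: cast(rec[name]) for name, cast in metadata} for rec in records]


records = [
    {"customer_id": "7", "region": "EMEA", "revenue": "1200.5", "notes": "n/a"},
    {"customer_id": "8", "region": "APAC", "revenue": "300", "notes": ""},
]
form_input = ["region", "revenue"]  # the fields the user selected in the form
mart = build_data_mart(records, metadata_from_form(form_input))
print(mart)
```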

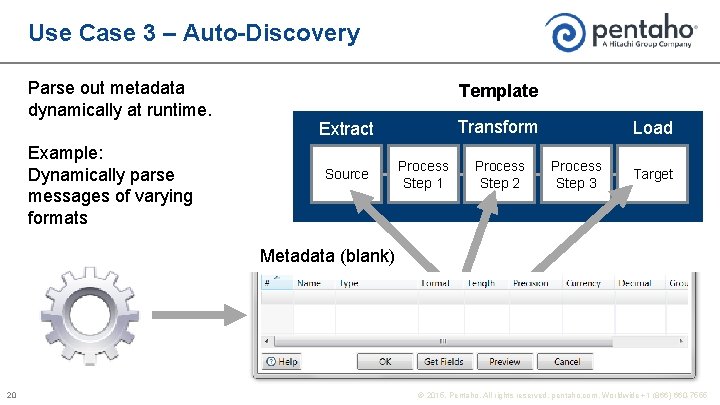

Use Case 3 – Auto-Discovery

Parse out metadata dynamically at runtime.

Example: dynamically parse messages of varying formats

[Diagram labels: Template; Extract (Source); Transform (Process Step 1, Process Step 2, Process Step 3); Load (Target); Metadata (blank)]
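As a rough sketch of auto-discovery, the code below derives field names and types from each message at runtime and then uses that discovered metadata to parse the message. The two message formats (a JSON object and space-separated `key=value` pairs) and the type-guessing rule are assumptions chosen for illustration; the slides do not specify them.

```python
import json


def raw_pairs(message: str) -> dict[str, str]:
    """Extract name/value pairs as strings from one of two assumed formats."""
    if message.lstrip().startswith("{"):
        return {key: str(value) for key, value in json.loads(message).items()}
    return dict(token.split("=", 1) for token in message.split())


def infer_type(value: str) -> type:
    """Guess int, then float, and fall back to str."""
    for candidate in (int, float):
        try:
            candidate(value)
            return candidate
        except ValueError:
            continue
    return str


def discover_metadata(pairs: dict[str, str]) -> dict[str, type]:
    """Build the metadata (field name to type) from the message itself."""
    return {name: infer_type(raw) for name, raw in pairs.items()}


def parse(message: str) -> dict:
    """Parse a message using metadata discovered at runtime."""
    pairs = raw_pairs(message)
    meta = discover_metadata(pairs)
    return {name: meta[name](pairs[name]) for name in pairs}


json_event = parse('{"user": "ann", "bytes": 512}')
kv_event = parse("src=10.0.0.1 port=443 latency=0.25")
print(json_event)
print(kv_event)
```

No field list is written ahead of time for either message; the downstream steps receive whatever metadata the message yields.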

Demo

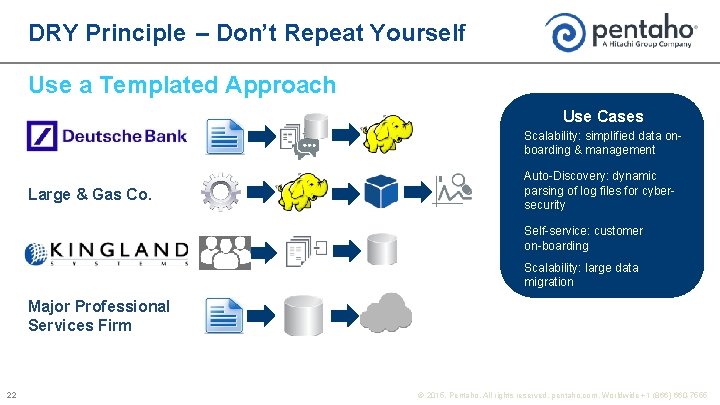

DRY Principle – Don’t Repeat Yourself

Use a Templated Approach

Use Cases:

- Scalability: simplified data onboarding & management
- Auto-Discovery: dynamic parsing of log files for cybersecurity
- Self-service: customer onboarding
- Scalability: large data migration

Customers: Large & Gas Co.; Major Professional Services Firm

Q&A

- Slides: 24