Data-Intensive Distributed Computing, CS 431/631 451/651 (Fall 2019)

Part 4: Analyzing Graphs (2/2)

October 8, 2019

Ali Abedi

Thanks to Jure Leskovec, Anand Rajaraman, Jeff Ullman (Stanford University)

These slides are available at https://www.student.cs.uwaterloo.ca/~cs451/

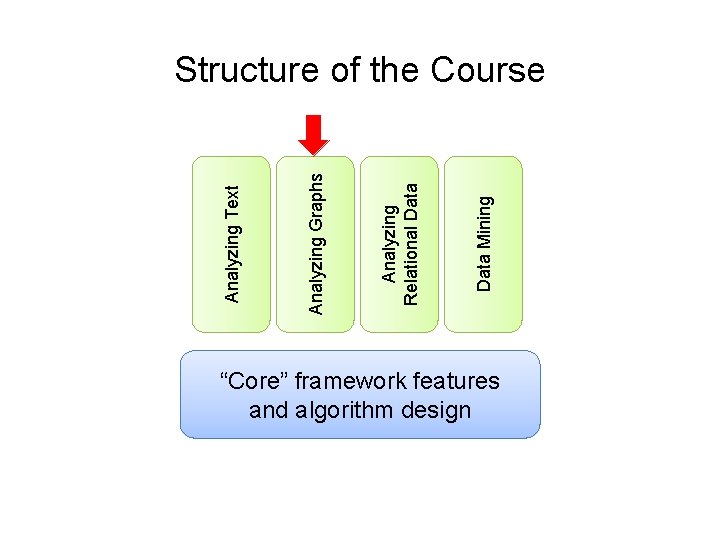

Structure of the Course

- Data Mining
- Analyzing Relational Data
- Analyzing Graphs
- Analyzing Text
- “Core” framework features and algorithm design

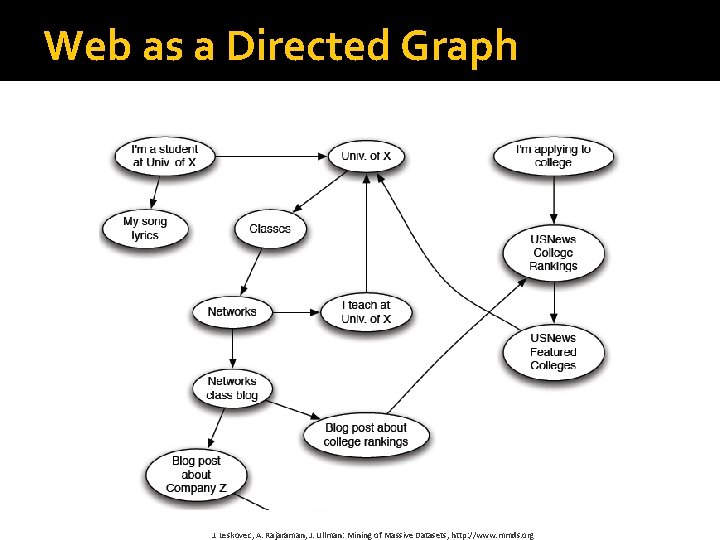

Web as a Directed Graph

J. Leskovec, A. Rajaraman, J. Ullman: Mining of Massive Datasets, http://www.mmds.org

Broad Question

- How to organize the Web?
- First try: Human-curated Web directories
  - Yahoo, DMOZ, LookSmart
- Second try: Web Search
  - Information Retrieval investigates: find relevant docs in a small and trusted set
    - Newspaper articles, patents, etc.
  - But: the Web is huge, full of untrusted documents, random things, web spam, etc.

Web Search: 2 Challenges

- (1) The Web contains many sources of information. Who to “trust”?
  - Trick: Trustworthy pages may point to each other!
- (2) What is the “best” answer to the query “newspaper”?
  - No single right answer
  - Trick: Pages that actually know about newspapers might all be pointing to many newspapers

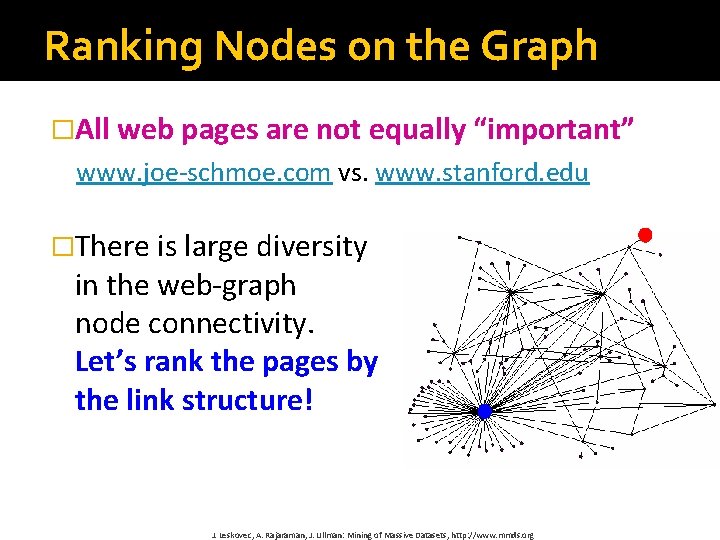

Ranking Nodes on the Graph

- Not all web pages are equally “important”: www.joe-schmoe.com vs. www.stanford.edu
- There is large diversity in web-graph node connectivity. Let’s rank the pages by the link structure!

PageRank: The “Flow” Formulation

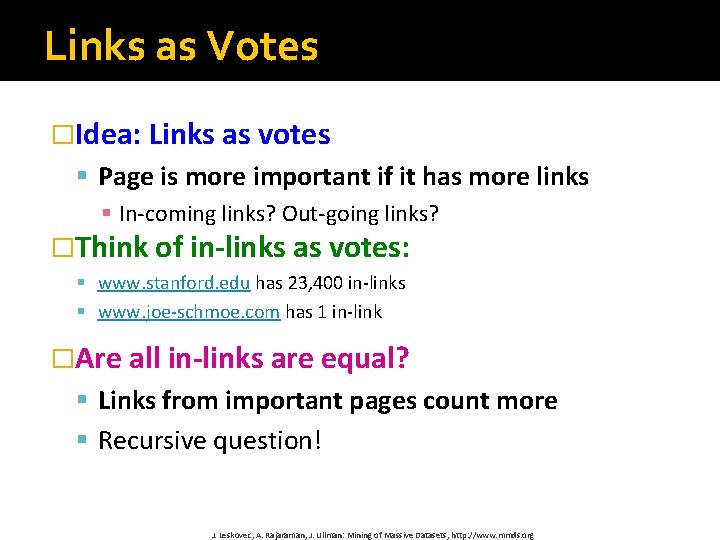

Links as Votes

- Idea: Links as votes
  - A page is more important if it has more links
  - In-coming links? Out-going links?
- Think of in-links as votes:
  - www.stanford.edu has 23,400 in-links
  - www.joe-schmoe.com has 1 in-link
- Are all in-links equal?
  - Links from important pages count more
  - Recursive question!

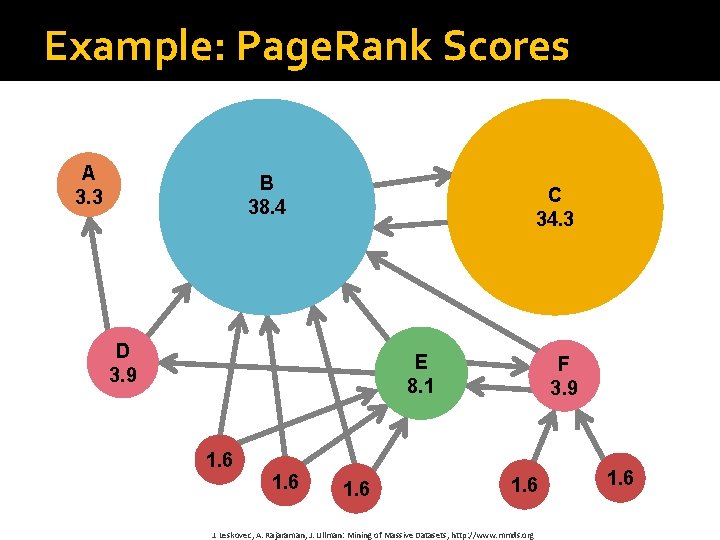

Example: PageRank Scores

[Figure: example graph with PageRank scores A 3.3, B 38.4, C 34.3, D 3.9, E 8.1, F 3.9; the unlabeled nodes score 1.6 each.]

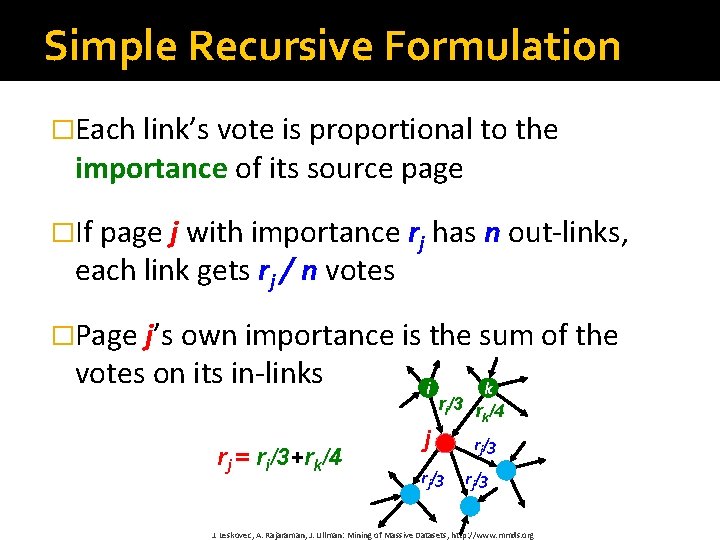

Simple Recursive Formulation

- Each link’s vote is proportional to the importance of its source page
- If page $j$ with importance $r_j$ has $n$ out-links, each link gets $r_j / n$ votes
- Page $j$’s own importance is the sum of the votes on its in-links

[Figure: page $i$ sends $r_i/3$ to $j$, page $k$ sends $r_k/4$ to $j$, and $j$ sends $r_j/3$ along each of its out-links.]

$$r_j = \frac{r_i}{3} + \frac{r_k}{4}$$

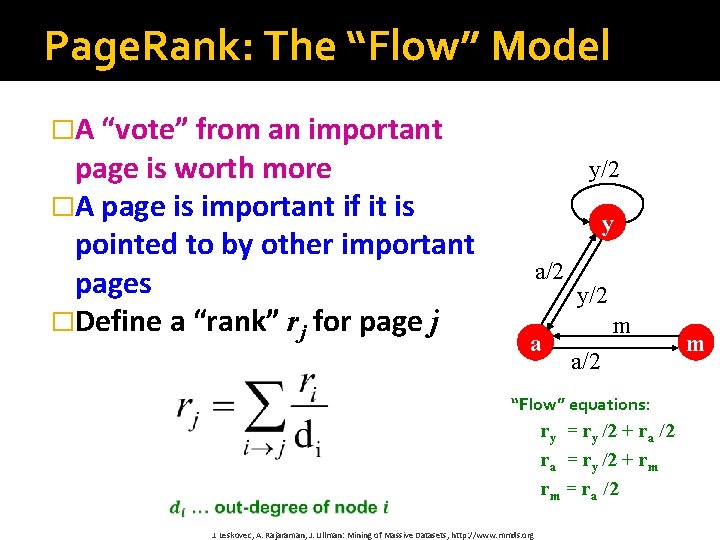

PageRank: The “Flow” Model

- A “vote” from an important page is worth more
- A page is important if it is pointed to by other important pages
- Define a “rank” $r_j$ for page $j$

[Figure: three-page graph y, a, m. Page y sends y/2 to itself and y/2 to a; page a sends a/2 to y and a/2 to m; page m sends all of its rank to a.]

“Flow” equations:

$$r_y = r_y/2 + r_a/2$$
$$r_a = r_y/2 + r_m$$
$$r_m = r_a/2$$

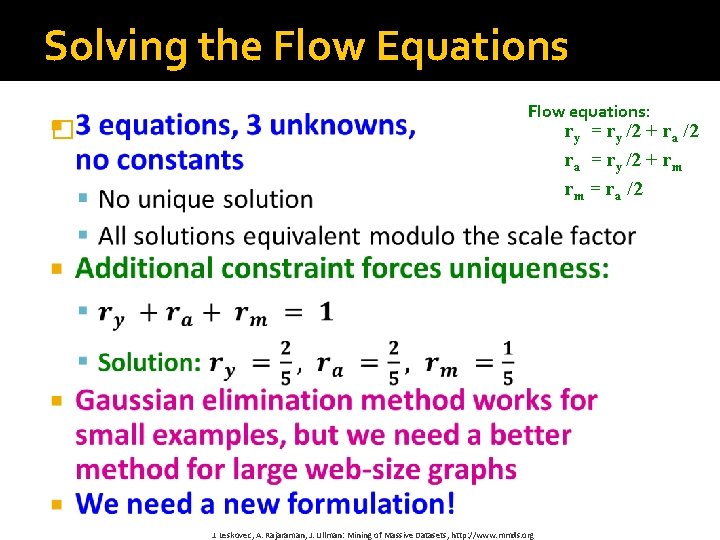

Solving the Flow Equations

Flow equations:

$$r_y = r_y/2 + r_a/2$$
$$r_a = r_y/2 + r_m$$
$$r_m = r_a/2$$
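The three flow equations alone only fix the ranks up to a scale factor, so a normalization is needed to get one answer. The sketch below assumes the usual constraint $r_y + r_a + r_m = 1$ (an assumption, not stated in the extracted slide text) and solves the resulting system exactly with a small Gauss-Jordan routine. The helper name `gauss_solve` is illustrative only.

```python
from fractions import Fraction as F


def gauss_solve(a, b):
    """Solve a x = b by Gauss-Jordan elimination using exact fractions."""
    n = len(b)
    rows = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        # Find a row with a non-zero entry in this column and move it up.
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        pivot_value = rows[col][col]
        rows[col] = [v / pivot_value for v in rows[col]]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [v - factor * p for v, p in zip(rows[r], rows[col])]
    return [rows[i][n] for i in range(n)]


# Unknowns are ordered (r_y, r_a, r_m).
coeffs = [
    [F(1, 2), F(-1, 2), F(0)],  # r_y - (r_y/2 + r_a/2) = 0
    [F(-1, 2), F(1), F(-1)],    # r_a - (r_y/2 + r_m) = 0
    [F(1), F(1), F(1)],         # assumed normalization: r_y + r_a + r_m = 1
]
rhs = [F(0), F(0), F(1)]

r_y, r_a, r_m = gauss_solve(coeffs, rhs)
print(r_y, r_a, r_m)
```

The third flow equation, $r_m = r_a/2$, is implied by the other two plus the normalization, which is why it can be swapped out. Direct elimination like this is fine for three pages but does not scale to a web-sized graph.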

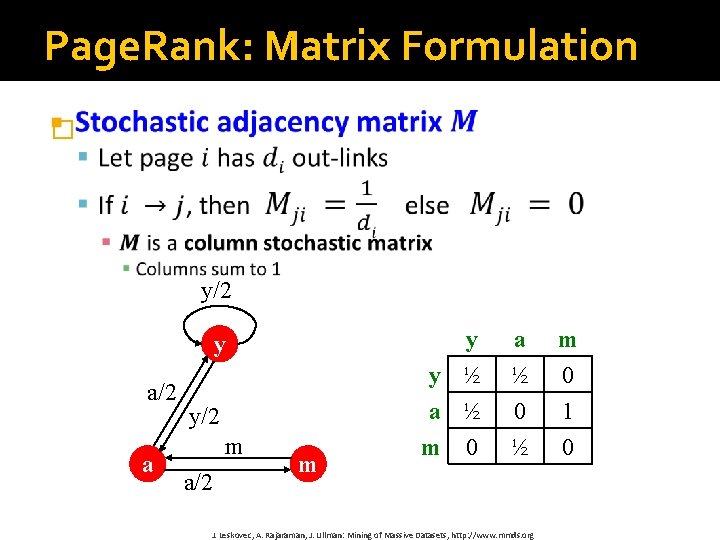

PageRank: Matrix Formulation

[Figure: the y, a, m graph with edge flows y/2, y/2, a/2, a/2, and m.]

Matrix for this graph (columns are source pages, rows are destination pages):

|   | y | a | m |
|---|---|---|---|
| y | ½ | ½ | 0 |
| a | ½ | 0 | 1 |
| m | 0 | ½ | 0 |
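Written out, the flow equations for the y, a, m graph are a single matrix-vector product. This sketch assumes the usual convention that entry $M_{ji}$ is $1/d_i$ when page $i$ links to page $j$ (with $d_i$ the out-degree of $i$) and $0$ otherwise; the symbols $M$ and $r$ are introduced here for illustration.

```latex
\begin{pmatrix} r_y \\ r_a \\ r_m \end{pmatrix}
=
\begin{pmatrix}
  1/2 & 1/2 & 0 \\
  1/2 & 0   & 1 \\
  0   & 1/2 & 0
\end{pmatrix}
\begin{pmatrix} r_y \\ r_a \\ r_m \end{pmatrix},
\qquad \text{i.e.} \qquad r = M r .
```

Each column of $M$ sums to 1, because every page splits its whole rank over its out-links.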

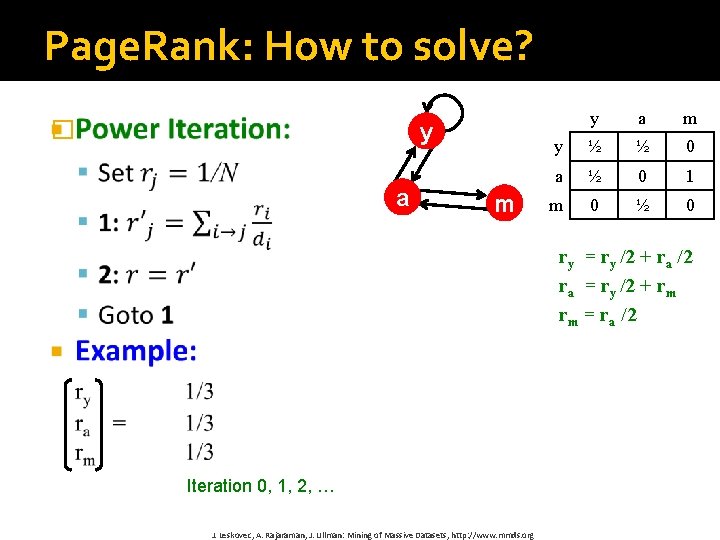

PageRank: How to solve?

|   | y | a | m |
|---|---|---|---|
| y | ½ | ½ | 0 |
| a | ½ | 0 | 1 |
| m | 0 | ½ | 0 |

$$r_y = r_y/2 + r_a/2$$
$$r_a = r_y/2 + r_m$$
$$r_m = r_a/2$$

Iteration 0, 1, 2, …
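One way to read "Iteration 0, 1, 2, …" is as repeated application of the flow equations to a starting guess. The sketch below assumes a uniform start of $1/N$ per page and stops when the total change drops below a tolerance; both choices, and the function name `power_iteration`, are assumptions for illustration.

```python
def power_iteration(m, tol=1e-10, max_iters=1000):
    """Repeatedly apply r <- M r, starting from the uniform vector."""
    n = len(m)
    r = [1.0 / n] * n
    iteration = 0
    for iteration in range(1, max_iters + 1):
        # Row j of M collects the shares that flow into page j.
        r_next = [sum(m[j][i] * r[i] for i in range(n)) for j in range(n)]
        change = sum(abs(x - y) for x, y in zip(r_next, r))
        r = r_next
        if change < tol:
            break
    return r, iteration


# Rows and columns are ordered y, a, m.
M = [
    [0.5, 0.5, 0.0],
    [0.5, 0.0, 1.0],
    [0.0, 0.5, 0.0],
]

ranks, iterations = power_iteration(M)
print(ranks, iterations)
```

For this graph the iterates approach $r_y = r_a = 2/5$ and $r_m = 1/5$.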

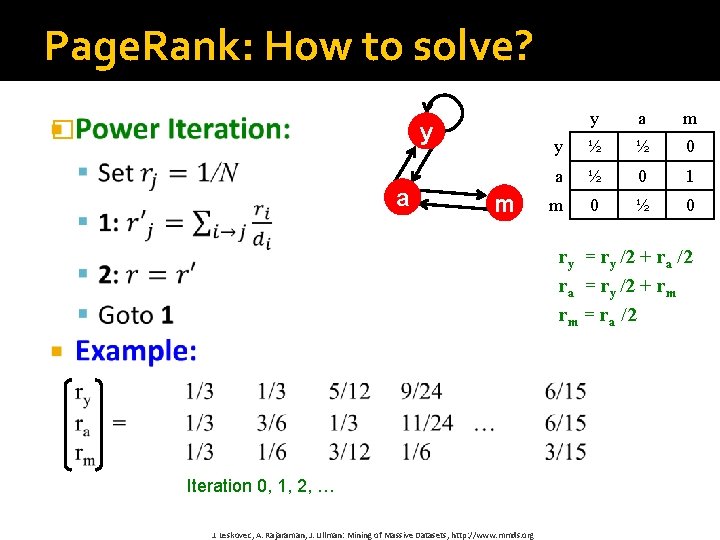

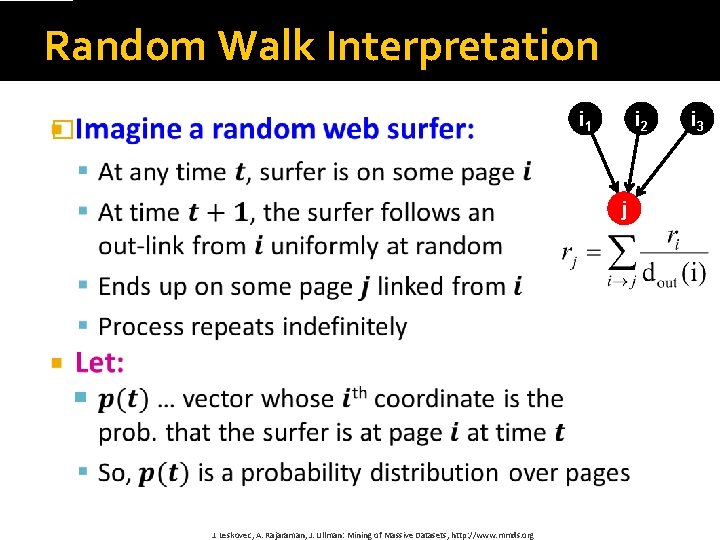

Random Walk Interpretation

[Figure: pages $i_1$, $i_2$, $i_3$ linking to page $j$.]

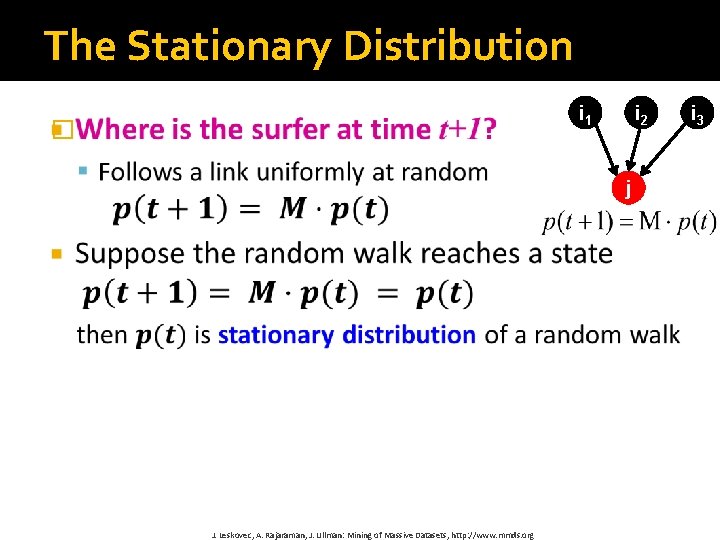

The Stationary Distribution

[Figure: pages $i_1$, $i_2$, $i_3$ linking to page $j$.]

Existence and Uniqueness

A central result from the theory of random walks (a.k.a. Markov processes): for graphs that satisfy certain conditions (for a finite graph, being strongly connected and aperiodic suffices), the stationary distribution is unique and will eventually be reached no matter what the initial probability distribution is at time $t = 0$.
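In symbols, the random-walk view can be sketched as follows. The notation is an assumption for illustration: $p(t)$ is the vector whose $i$-th entry is the probability that the surfer is at page $i$ at time $t$, and $M$ is the column-stochastic link matrix from the flow formulation.

```latex
p(t+1) = M \, p(t),
\qquad
p(t+1) = p(t) \;\Longrightarrow\; p(t) \text{ is a stationary distribution of the walk.}
```

A rank vector satisfying $r = M r$ with entries summing to 1 is therefore a stationary distribution of the random walk.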

PageRank: The Google Formulation

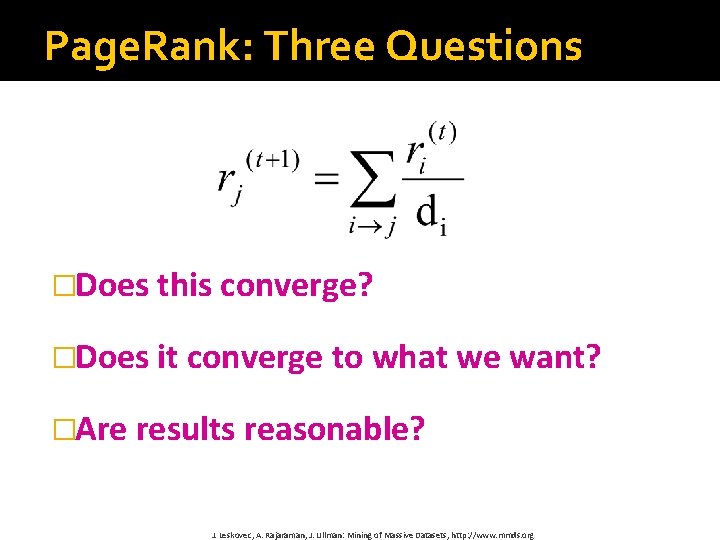

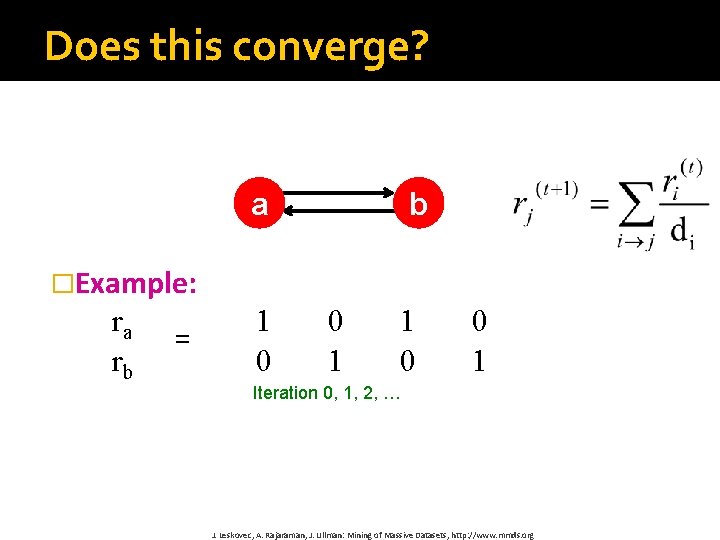

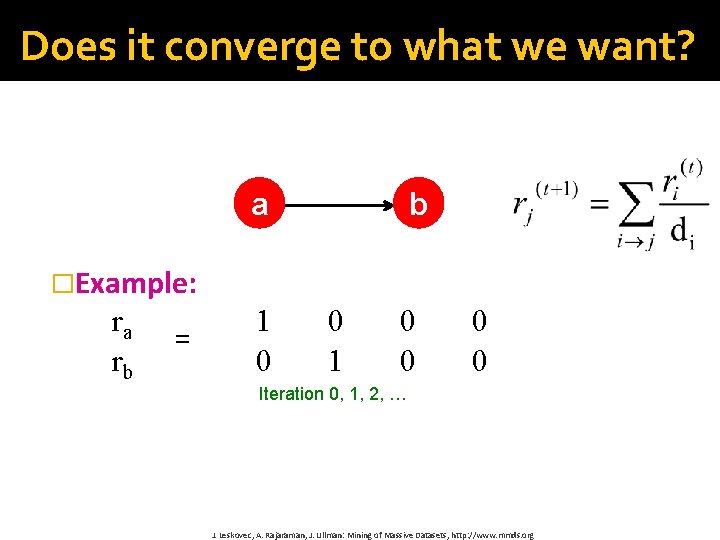

PageRank: Three Questions

- Does this converge?
- Does it converge to what we want?
- Are results reasonable?

Does this converge?

Example (two nodes, a and b):

| Iteration | 0 | 1 | … |
|-----------|---|---|---|
| $r_a$     | 1 | 0 | … |
| $r_b$     | 0 | 1 | … |

Does it converge to what we want?

Example (two nodes, a and b): starting from $r_a = 1$, $r_b = 0$, the scores at iterations 0, 1, 2, … shrink to $r_a = 0$, $r_b = 0$.
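Both two-node examples can be replayed in a few lines. The graph structures below are assumptions, since the figures did not survive extraction: a two-cycle (a links to b, b links to a) for the first question, and a chain into a dead end (a links to b, b has no out-links) for the second.

```python
def step(m, r):
    """One update r <- M r for a small dense matrix."""
    n = len(r)
    return [sum(m[j][i] * r[i] for i in range(n)) for j in range(n)]


def iterate(m, r, steps):
    """Return the list of rank vectors for iterations 0..steps."""
    history = [r]
    for _ in range(steps):
        r = step(m, r)
        history.append(r)
    return history


# Rows and columns are ordered a, b; column = source, row = destination.
CYCLE = [[0, 1], [1, 0]]     # assumed: a -> b and b -> a
DEAD_END = [[0, 0], [1, 0]]  # assumed: a -> b, b has no out-links

cycle_history = iterate(CYCLE, [1, 0], 3)
dead_end_history = iterate(DEAD_END, [1, 0], 3)

print(cycle_history)     # the scores keep swapping and never settle
print(dead_end_history)  # the scores drain away to zero
```

In the first case the iteration oscillates forever; in the second it converges, but to an all-zero vector that says nothing about importance.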

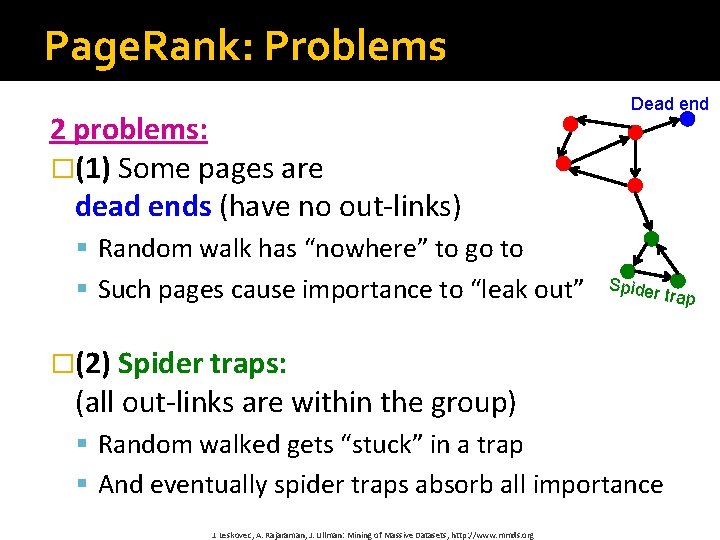

PageRank: Problems

2 problems:

- (1) Some pages are dead ends (have no out-links)
  - Random walk has “nowhere” to go to
  - Such pages cause importance to “leak out”
- (2) Spider traps (all out-links are within the group)
  - Random walker gets “stuck” in a trap
  - And eventually spider traps absorb all importance

[Figure: example graph with a dead end and a spider trap labeled.]

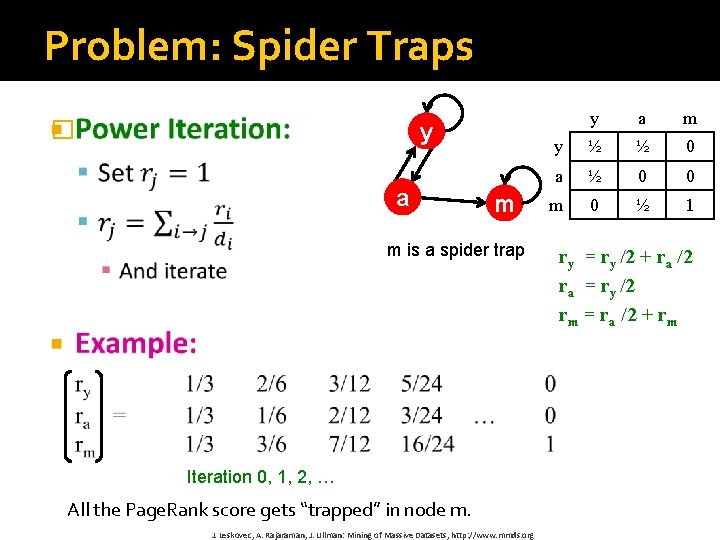

Problem: Spider Traps

[Figure: the y, a, m graph in which m links only to itself.]

m is a spider trap:

|   | y | a | m |
|---|---|---|---|
| y | ½ | ½ | 0 |
| a | ½ | 0 | 0 |
| m | 0 | ½ | 1 |

$$r_y = r_y/2 + r_a/2$$
$$r_a = r_y/2$$
$$r_m = r_a/2 + r_m$$

Iteration 0, 1, 2, …

All the PageRank score gets “trapped” in node m.
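Iterating the spider-trap equations numerically shows the trapping effect. This is a minimal sketch that assumes a uniform starting vector and an arbitrary choice of 100 iterations.

```python
def step(m, r):
    """One update r <- M r for a small dense matrix."""
    n = len(r)
    return [sum(m[j][i] * r[i] for i in range(n)) for j in range(n)]


# Rows and columns are ordered y, a, m; m links only to itself.
TRAP = [
    [0.5, 0.5, 0.0],
    [0.5, 0.0, 0.0],
    [0.0, 0.5, 1.0],
]

r = [1 / 3, 1 / 3, 1 / 3]  # assumed uniform start
for _ in range(100):
    r = step(TRAP, r)

print(r)  # nearly all of the score sits on m
```

The total score stays at 1 because every column still sums to 1; it simply drains from y and a into m.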

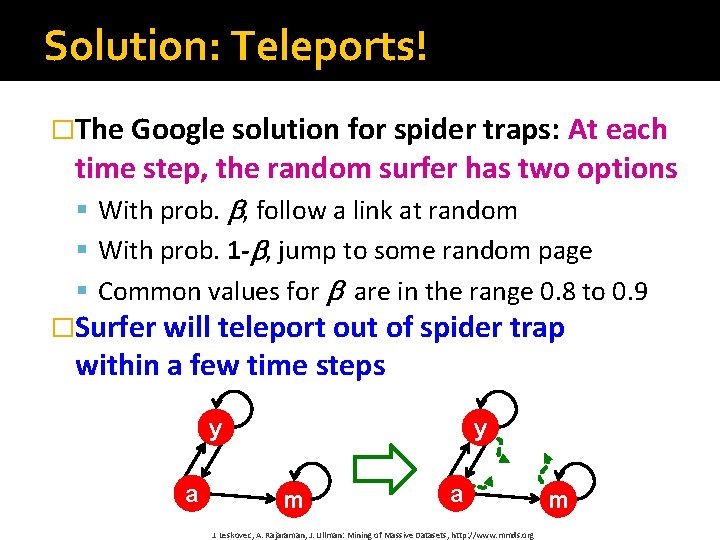

Solution: Teleports!

- The Google solution for spider traps: at each time step, the random surfer has two options
  - With prob. $\beta$, follow a link at random
  - With prob. $1 - \beta$, jump to some random page
  - Common values for $\beta$ are in the range 0.8 to 0.9
- Surfer will teleport out of a spider trap within a few time steps

[Figure: the y, a, m graph before and after adding teleport links.]

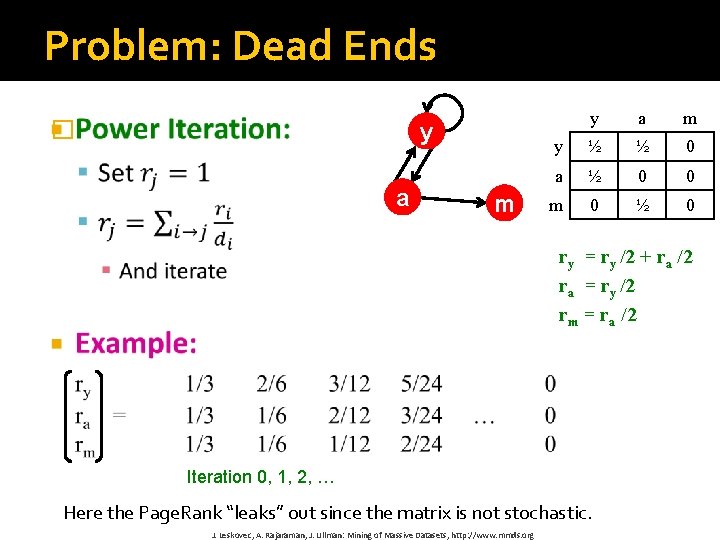

Problem: Dead Ends

|   | y | a | m |
|---|---|---|---|
| y | ½ | ½ | 0 |
| a | ½ | 0 | 0 |
| m | 0 | ½ | 0 |

$$r_y = r_y/2 + r_a/2$$
$$r_a = r_y/2$$
$$r_m = r_a/2$$

Iteration 0, 1, 2, …

Here the PageRank “leaks” out since the matrix is not stochastic.

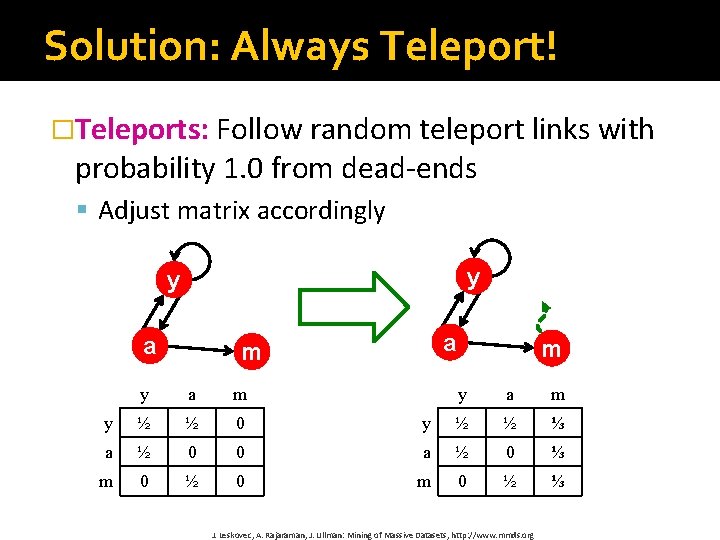

Solution: Always Teleport!

- Teleports: follow random teleport links with probability 1.0 from dead ends
  - Adjust matrix accordingly

Before (m is a dead end):

|   | y | a | m |
|---|---|---|---|
| y | ½ | ½ | 0 |
| a | ½ | 0 | 0 |
| m | 0 | ½ | 0 |

After:

|   | y | a | m |
|---|---|---|---|
| y | ½ | ½ | ⅓ |
| a | ½ | 0 | ⅓ |
| m | 0 | ½ | ⅓ |
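The matrix adjustment amounts to replacing every all-zero column with a uniform column of $1/N$. The sketch below does that for a small dense matrix; the function name `patch_dead_ends` is hypothetical, and a dense matrix is used only for readability.

```python
from fractions import Fraction


def patch_dead_ends(m):
    """Return a copy of m in which every all-zero column becomes uniform 1/N."""
    n = len(m)
    patched = [row[:] for row in m]
    for col in range(n):
        if all(m[row][col] == 0 for row in range(n)):
            for row in range(n):
                patched[row][col] = Fraction(1, n)
    return patched


half = Fraction(1, 2)
zero = Fraction(0)

# Rows and columns are ordered y, a, m; m is a dead end (zero column).
DEAD_END = [
    [half, half, zero],
    [half, zero, zero],
    [zero, half, zero],
]

fixed = patch_dead_ends(DEAD_END)
column_sums = [sum(fixed[row][col] for row in range(3)) for col in range(3)]
print(fixed, column_sums)
```

After the patch every column sums to 1, so the matrix is column stochastic again.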

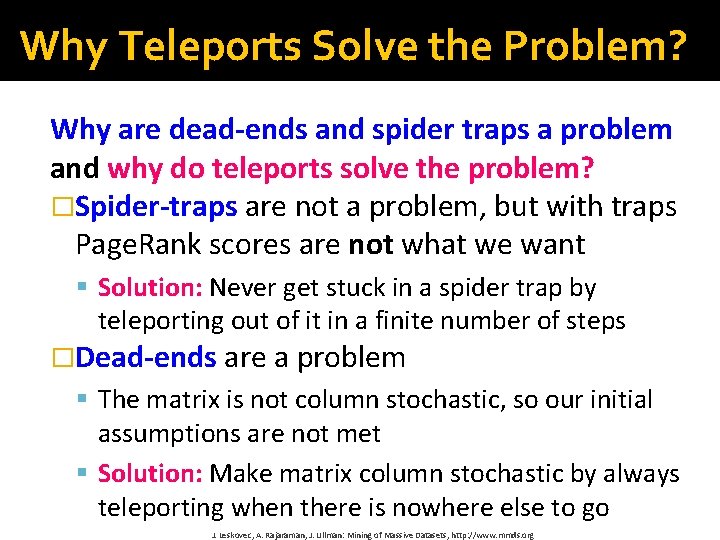

Why Do Teleports Solve the Problem?

Why are dead ends and spider traps a problem, and why do teleports solve the problem?

- Spider traps are not a problem, but with traps the PageRank scores are not what we want
  - Solution: never get stuck in a spider trap by teleporting out of it in a finite number of steps
- Dead ends are a problem
  - The matrix is not column stochastic, so our initial assumptions are not met
  - Solution: make the matrix column stochastic by always teleporting when there is nowhere else to go

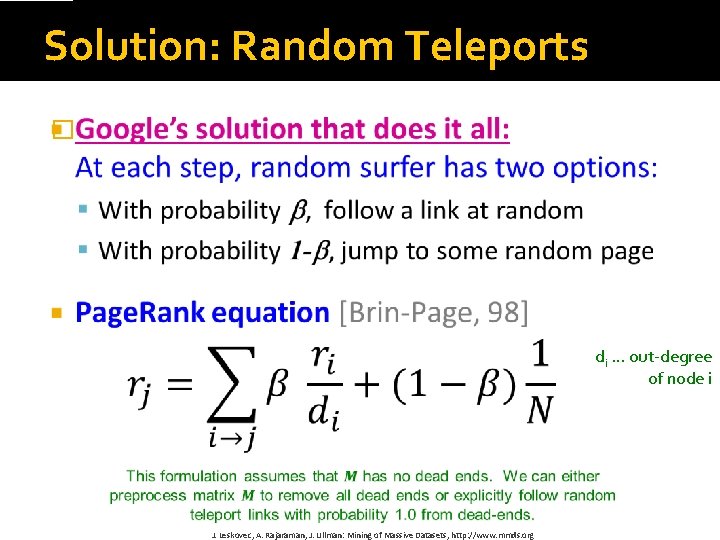

Solution: Random Teleports

$d_i$ … out-degree of node $i$
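With random teleports, the rank equation is commonly written as below. This is a sketch of the standard formulation rather than a transcription of the slide: $\beta$ is the probability of following a link, $N$ is the number of pages, $M$ is the column-stochastic link matrix, and the graph is assumed to have no dead ends (or to have had them patched).

```latex
r_j = \sum_{i \to j} \beta \, \frac{r_i}{d_i} + (1 - \beta) \, \frac{1}{N}
\qquad \Longleftrightarrow \qquad
r = A r, \quad
A = \beta M + (1 - \beta) \left[ \tfrac{1}{N} \right]_{N \times N}
```

The first term is the share of rank arriving over links; the second is the share arriving by teleport, which is the same for every page.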

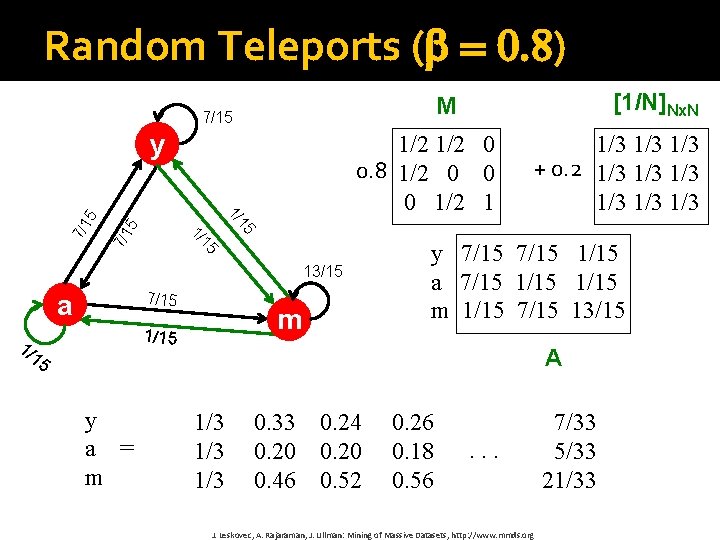

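Random Teleports ($\beta = 0.8$)

$$A = 0.8 \cdot M + 0.2 \cdot \left[ \tfrac{1}{N} \right]_{N \times N}$$

$$A = 0.8 \begin{pmatrix} 1/2 & 1/2 & 0 \\ 1/2 & 0 & 0 \\ 0 & 1/2 & 1 \end{pmatrix} + 0.2 \begin{pmatrix} 1/3 & 1/3 & 1/3 \\ 1/3 & 1/3 & 1/3 \\ 1/3 & 1/3 & 1/3 \end{pmatrix} = \begin{pmatrix} 7/15 & 7/15 & 1/15 \\ 7/15 & 1/15 & 1/15 \\ 1/15 & 7/15 & 13/15 \end{pmatrix}$$

[Figure: the y, a, m graph with edges weighted by the entries of $A$ (7/15, 1/15, 13/15).]

| Iteration | 0   | 1    | 2    | 3    | … | limit |
|-----------|-----|------|------|------|---|-------|
| y         | 1/3 | 0.33 | 0.28 | 0.26 | … | 7/33  |
| a         | 1/3 | 0.20 | 0.20 | 0.18 | … | 5/33  |
| m         | 1/3 | 0.47 | 0.52 | 0.56 | … | 21/33 |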
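The numbers in this example can be checked with exact arithmetic. The sketch below builds $A = \beta M + (1 - \beta)[1/N]_{N \times N}$ for the spider-trap matrix with $\beta = 4/5$, runs three iterations from the uniform vector, and confirms that $(7/33, 5/33, 21/33)$ is a fixed point. Function names are illustrative.

```python
from fractions import Fraction as F


def google_matrix(m, beta):
    """A = beta * M + (1 - beta) * [1/N], for a small dense matrix."""
    n = len(m)
    return [[beta * m[j][i] + (1 - beta) / n for i in range(n)] for j in range(n)]


def step(a, r):
    """One update r <- A r."""
    n = len(r)
    return [sum(a[j][i] * r[i] for i in range(n)) for j in range(n)]


# Rows and columns are ordered y, a, m; m is a spider trap.
M = [
    [F(1, 2), F(1, 2), F(0)],
    [F(1, 2), F(0), F(0)],
    [F(0), F(1, 2), F(1)],
]
A = google_matrix(M, F(4, 5))

r = [F(1, 3)] * 3
trace = [r]
for _ in range(3):
    r = step(A, r)
    trace.append(r)

stationary = [F(7, 33), F(5, 33), F(21, 33)]
is_fixed_point = step(A, stationary) == stationary

for t, vec in enumerate(trace):
    print(t, [round(float(x), 3) for x in vec])
print(is_fixed_point)
```

With teleports, m still ends up with the largest score, but y and a keep a non-zero share instead of being drained completely.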

PageRank MapReduce Implementation

Simplified PageRank

First, tackle the simple case:

- No random jump factor
- No dangling (dead-end) nodes

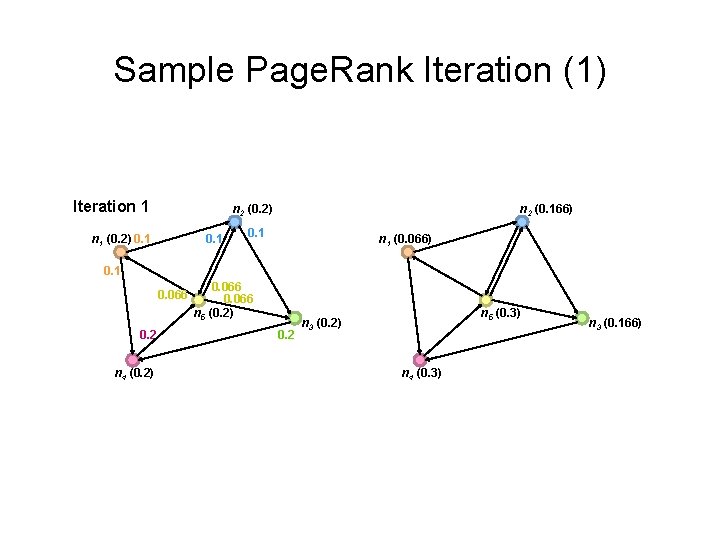

Sample PageRank Iteration (1)

Iteration 1:

| Node | Before | After |
|------|--------|-------|
| n1   | 0.2    | 0.066 |
| n2   | 0.2    | 0.166 |
| n3   | 0.2    | 0.166 |
| n4   | 0.2    | 0.3   |
| n5   | 0.2    | 0.3   |

Mass sent along each out-link: 0.1 from n1 and from n2, 0.2 from n3 and from n4, 0.066 from n5.

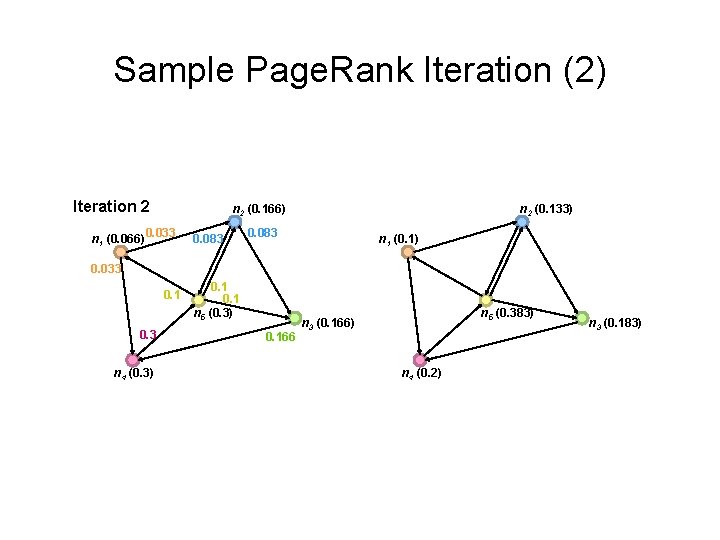

Sample PageRank Iteration (2)

Iteration 2:

| Node | Before | After |
|------|--------|-------|
| n1   | 0.066  | 0.1   |
| n2   | 0.166  | 0.133 |
| n3   | 0.166  | 0.183 |
| n4   | 0.3    | 0.2   |
| n5   | 0.3    | 0.383 |

Mass sent along each out-link: 0.033 from n1, 0.083 from n2, 0.166 from n3, 0.3 from n4, 0.1 from n5.

![PageRank in MapReduce](http://slidetodoc.com/presentation_image_h2/bf6b814b4d4cbe620a3a3c90432278aa/image-35.jpg)

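PageRank in MapReduce

Input (node and its adjacency list):

- n1 [n2, n4]
- n2 [n3, n5]
- n3 [n4]
- n4 [n5]
- n5 [n1, n2, n3]

Map: each node emits PageRank mass keyed by its destinations (n1 to n2 and n4; n2 to n3 and n5; n3 to n4; n4 to n5; n5 to n1, n2, and n3).

Shuffle: emitted values are grouped by destination node (n1, n2, n3, n4, n5).

Reduce: each node is written back out with its adjacency list:

- n1 [n2, n4]
- n2 [n3, n5]
- n3 [n4]
- n4 [n5]
- n5 [n1, n2, n3]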

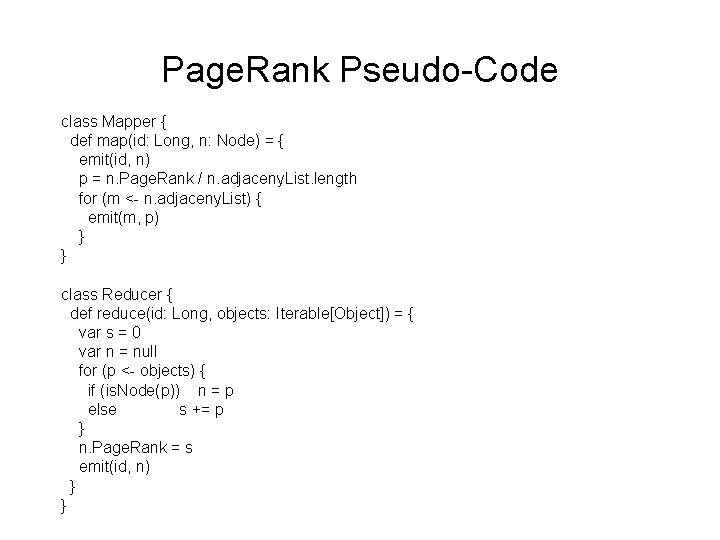

PageRank Pseudo-Code

    class Mapper {
      def map(id: Long, n: Node) = {
        // Pass the graph structure along to the reducer.
        emit(id, n)
        // Split this node's PageRank evenly over its out-links.
        val p = n.PageRank / n.adjacencyList.length
        for (m <- n.adjacencyList) {
          emit(m, p)
        }
      }
    }

    class Reducer {
      def reduce(id: Long, objects: Iterable[Object]) = {
        var s = 0.0
        var n = null
        for (p <- objects) {
          if (isNode(p)) n = p  // recover the graph structure
          else s += p           // accumulate incoming PageRank mass
        }
        n.PageRank = s
        emit(id, n)
      }
    }
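The pseudo-code can be exercised without a cluster by simulating the map, shuffle, and reduce phases in memory. The sketch below is a plain-Python stand-in, not Hadoop or Spark code: nodes are represented as dictionaries with hypothetical keys `rank` and `adj`, and the five-node graph and the starting value of 0.2 come from the sample iteration slides.

```python
from collections import defaultdict


def map_phase(node_id, node):
    """Emit the node itself (graph structure) plus one share per out-link."""
    yield node_id, node
    share = node["rank"] / len(node["adj"])
    for dest in node["adj"]:
        yield dest, share


def reduce_phase(node_id, values):
    """Sum incoming shares and reattach them to the node structure."""
    total = 0.0
    node = None
    for value in values:
        if isinstance(value, dict):
            node = value    # the node structure passed through by the mapper
        else:
            total += value  # a PageRank share from an in-link
    return node_id, {"rank": total, "adj": node["adj"]}


def run_iteration(graph):
    """One simplified PageRank iteration: map, group by key, reduce."""
    groups = defaultdict(list)
    for node_id, node in graph.items():
        for key, value in map_phase(node_id, node):
            groups[key].append(value)
    return dict(reduce_phase(key, values) for key, values in groups.items())


ADJ = {
    "n1": ["n2", "n4"],
    "n2": ["n3", "n5"],
    "n3": ["n4"],
    "n4": ["n5"],
    "n5": ["n1", "n2", "n3"],
}
graph = {node: {"rank": 0.2, "adj": adj} for node, adj in ADJ.items()}

after_one = run_iteration(graph)
after_two = run_iteration(after_one)
for node in sorted(after_two):
    print(node, round(after_one[node]["rank"], 3), round(after_two[node]["rank"], 3))
```

Emitting the node structure alongside the shares is what lets the reducer write out a complete graph for the next iteration. It is also the reason the whole graph is shuffled on every pass.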

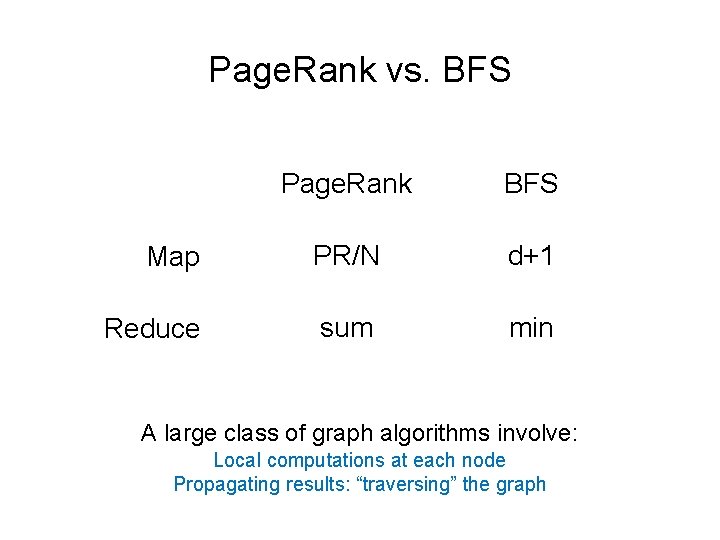

PageRank vs. BFS

|        | PageRank | BFS |
|--------|----------|-----|
| Map    | PR/N     | d+1 |
| Reduce | sum      | min |

A large class of graph algorithms involve:

- Local computations at each node
- Propagating results: “traversing” the graph

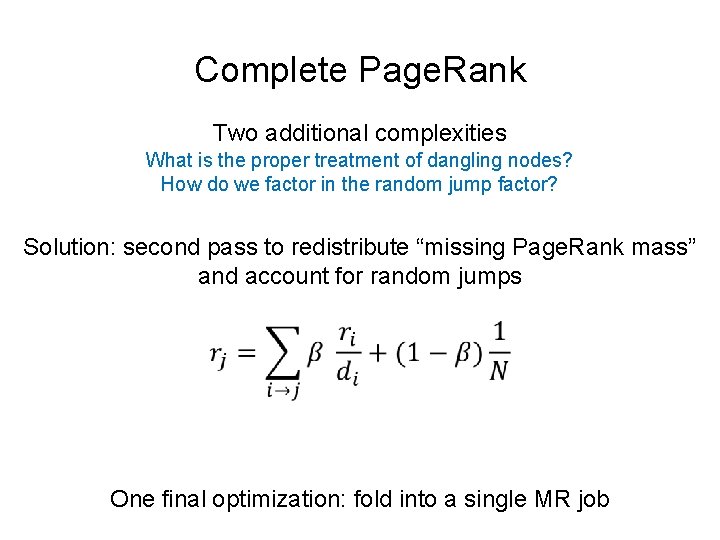

Complete PageRank

Two additional complexities:

- What is the proper treatment of dangling nodes?
- How do we factor in the random jump factor?

Solution: a second pass to redistribute the “missing PageRank mass” and account for random jumps

One final optimization: fold into a single MR job
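One common way to realize the second pass is sketched below; it is an illustration under stated assumptions, not necessarily the exact update used in the course. The first pass distributes mass along links and records what dangling nodes could not pass on. The second pass spreads that missing mass uniformly and mixes in a random jump with probability `jump_prob` (this corresponds to $1 - \beta$ in the teleport formulation). All function and variable names are hypothetical.

```python
def first_pass(graph, ranks):
    """Distribute rank along out-links; collect mass stuck at dangling nodes."""
    received = {node: 0.0 for node in graph}
    missing = 0.0
    for node, adj in graph.items():
        if adj:
            share = ranks[node] / len(adj)
            for dest in adj:
                received[dest] += share
        else:
            missing += ranks[node]  # dangling node: nowhere to send its mass
    return received, missing


def second_pass(received, missing, jump_prob):
    """Spread the missing mass uniformly and account for random jumps."""
    n = len(received)
    return {
        node: jump_prob / n + (1 - jump_prob) * (missing / n + p)
        for node, p in received.items()
    }


# Assumed toy graph: a -> b -> c, where c is a dangling node.
graph = {"a": ["b"], "b": ["c"], "c": []}
ranks = {node: 1 / 3 for node in graph}

received, missing = first_pass(graph, ranks)
updated = second_pass(received, missing, jump_prob=0.15)
print(missing, updated)
```

The total rank is 1 again after the second pass, which is the point of redistributing the missing mass.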

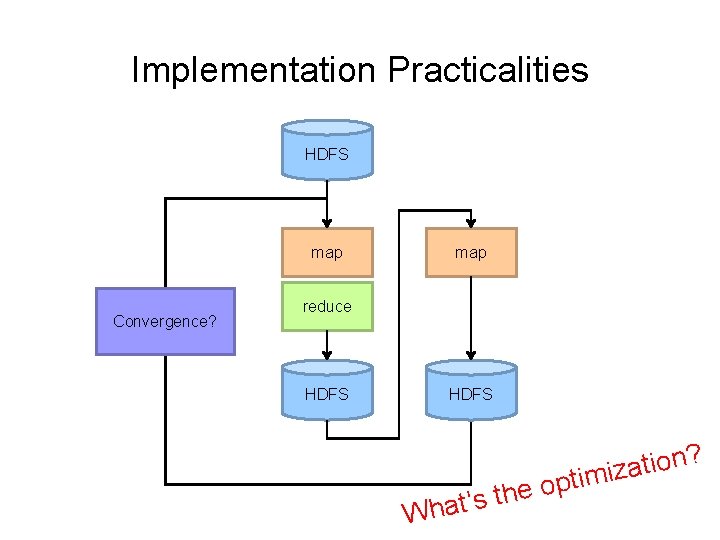

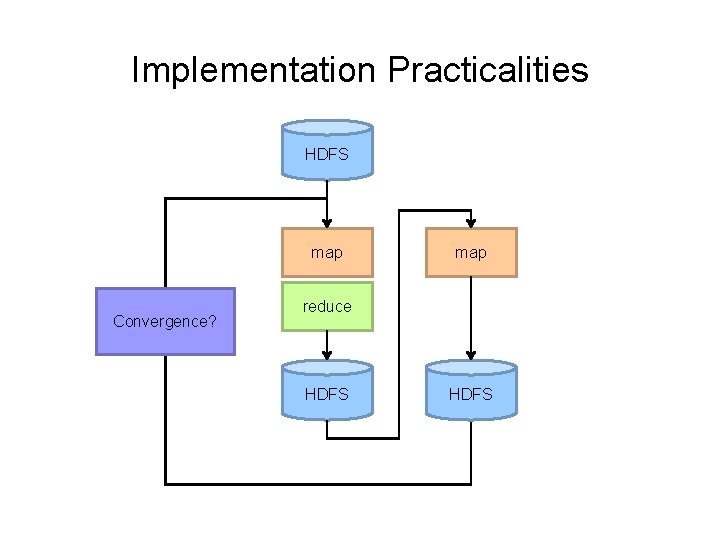

Implementation Practicalities

[Figure: iterative dataflow with stages HDFS, map, map, reduce, HDFS, and a “Convergence?” check.]

What’s the optimization?

PageRank Convergence

Alternative convergence criteria:

- Iterate until PageRank values don’t change
- Iterate until PageRank rankings don’t change
- Fixed number of iterations
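The three criteria can each be expressed as a small predicate over the previous and current rank vectors. This is a sketch with assumed details: "don't change" is read as "total absolute change below a tolerance" for values and "same node ordering" for rankings, and the tolerance and iteration limit are arbitrary illustrative defaults.

```python
def values_converged(old, new, tol=1e-6):
    """True when the total absolute change in PageRank values is below tol."""
    return sum(abs(new[node] - old[node]) for node in old) < tol


def rankings_converged(old, new):
    """True when sorting nodes by score gives the same order before and after."""
    def order(ranks):
        # Break ties by node name so the ordering is deterministic.
        return sorted(ranks, key=lambda node: (-ranks[node], node))
    return order(old) == order(new)


def fixed_iterations_done(completed, limit=10):
    """True once a preset number of iterations has been run."""
    return completed >= limit


previous = {"a": 0.50, "b": 0.30, "c": 0.20}
current = {"a": 0.45, "b": 0.35, "c": 0.20}

print(values_converged(previous, current))    # values still moving
print(rankings_converged(previous, current))  # order a, b, c is unchanged
print(fixed_iterations_done(10))
```

The example shows why the criteria differ: the ordering can be stable well before the values themselves stop changing.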

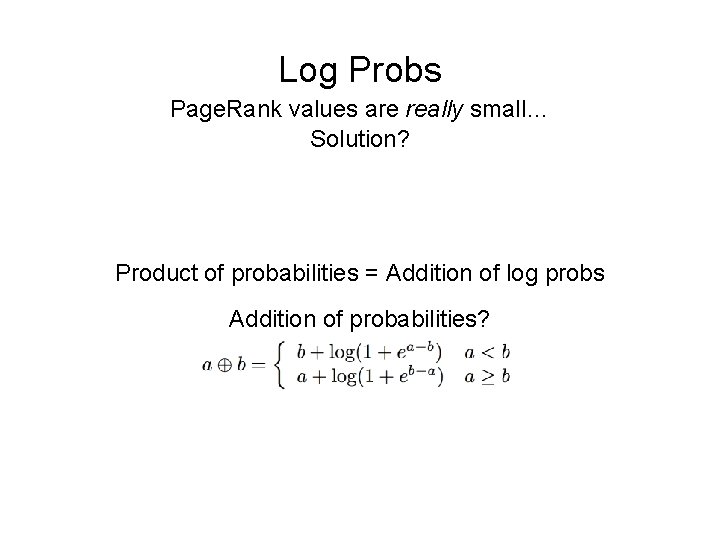

Log Probs

PageRank values are really small…

Solution?

- Product of probabilities = addition of log probs
- Addition of probabilities?
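Adding two probabilities that are stored as logs can be done without leaving log space, using the identity $\log(a + b) = \log a + \log(1 + e^{\log b - \log a})$ with $a \ge b$. The sketch below is one standard way to do this; the function name `log_add` is illustrative.

```python
import math


def log_add(log_a, log_b):
    """Return log(a + b) given log(a) and log(b), without computing a or b."""
    # Keep the larger value in log_a so the exponent below is never positive.
    if log_a < log_b:
        log_a, log_b = log_b, log_a
    if log_b == -math.inf:
        return log_a  # adding a zero probability changes nothing
    return log_a + math.log1p(math.exp(log_b - log_a))


total = log_add(math.log(0.2), math.log(0.3))
print(math.exp(total))

# Values far too small to exponentiate directly can still be added.
tiny = log_add(-1000.0, -1000.0)
print(tiny)
```

Factoring out the larger term is the design choice that matters: `math.exp(-1000.0)` underflows to zero, but the difference of the two logs is always safe to exponentiate.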

Beyond PageRank

Variations of PageRank:

- Weighted edges
- Personalized PageRank (A4)

Variants on graph random walks:

- Hubs and authorities (HITS)
- SALSA

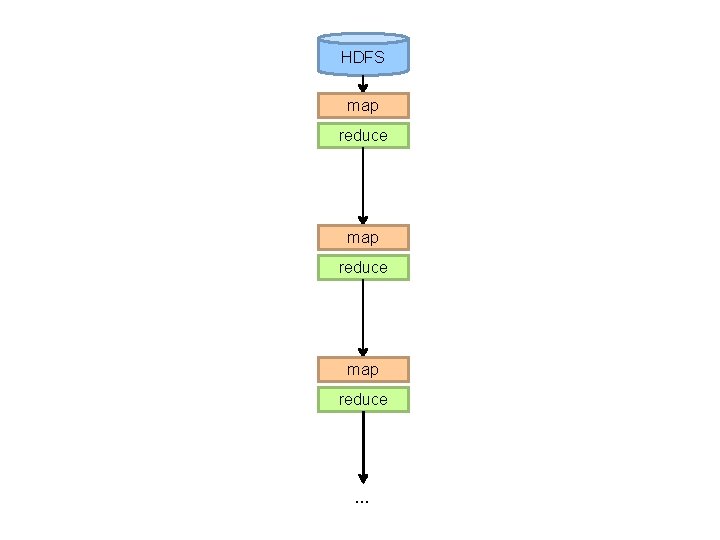

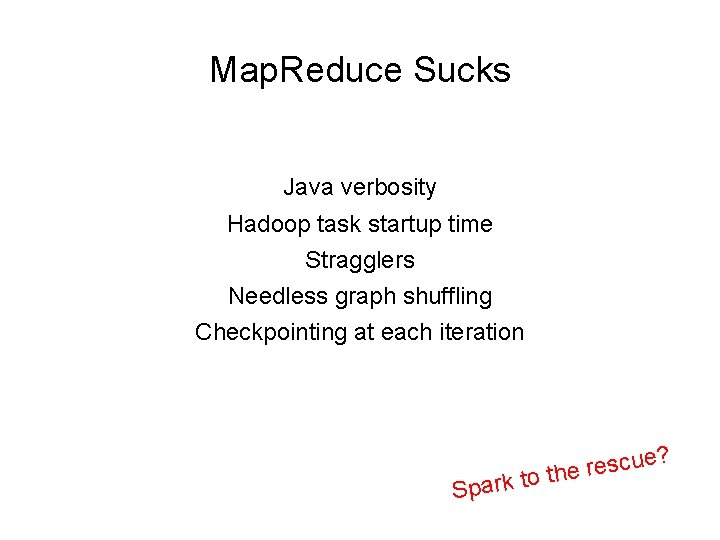

MapReduce Sucks

- Java verbosity
- Hadoop task startup time
- Stragglers
- Needless graph shuffling
- Checkpointing at each iteration

Spark to the rescue?

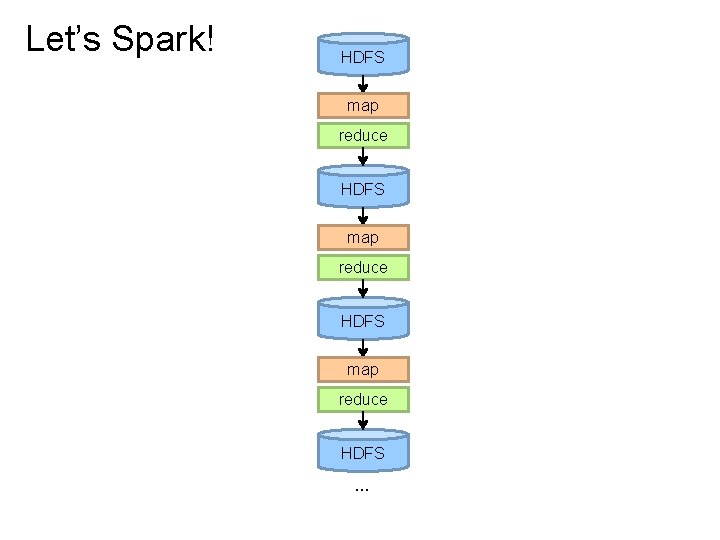

Let’s Spark!

[Figure: HDFS → map → reduce → HDFS → …]

[Figure: HDFS → map → reduce → …]

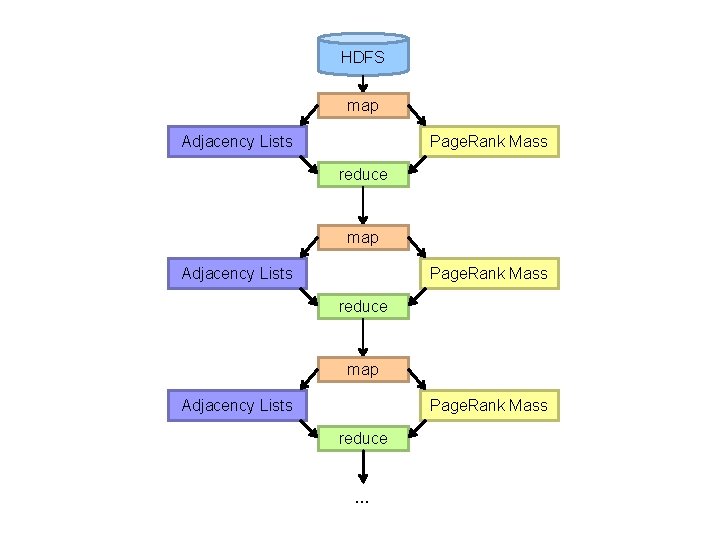

[Figure: HDFS → map → reduce → …, with the data flows labeled “Adjacency Lists” and “PageRank Mass”.]

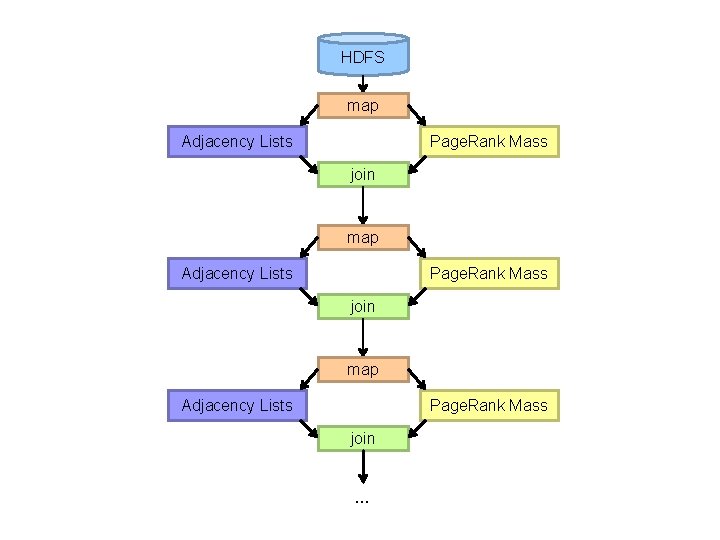

[Figure: HDFS → map → join → …, with the data flows labeled “Adjacency Lists” and “PageRank Mass”.]

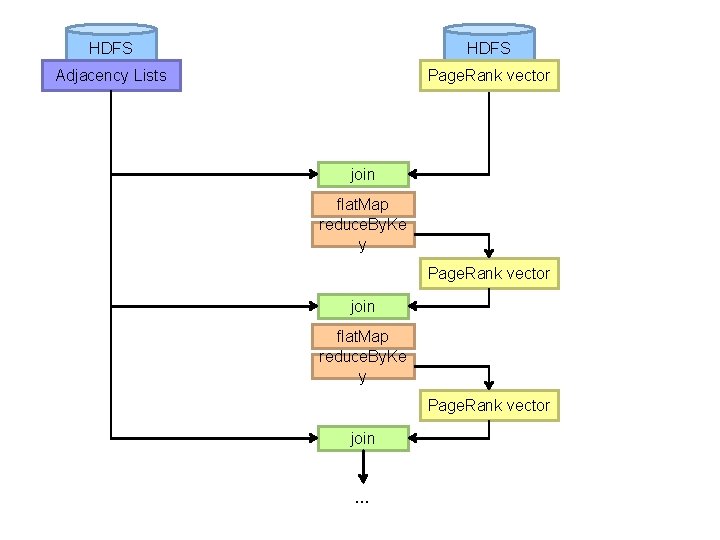

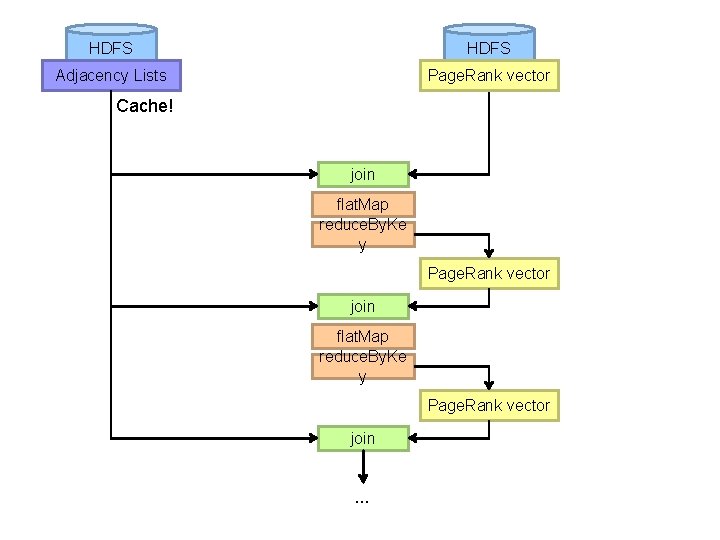

[Figure: HDFS → (Adjacency Lists, PageRank vector) → join → flatMap → reduceByKey → PageRank vector → join → …]

[Figure: HDFS → (Adjacency Lists, PageRank vector) → join → flatMap → reduceByKey → PageRank vector → join → …, annotated “Cache!”]

MapReduce vs. Spark

Source: http://ampcamp.berkeley.edu/wp-content/uploads/2012/06/matei-zaharia-part-2-amp-camp-2012-standalone-programs.pdf
