Data-Driven Dependency Parsing
Kenji Sagae
CSCI-544

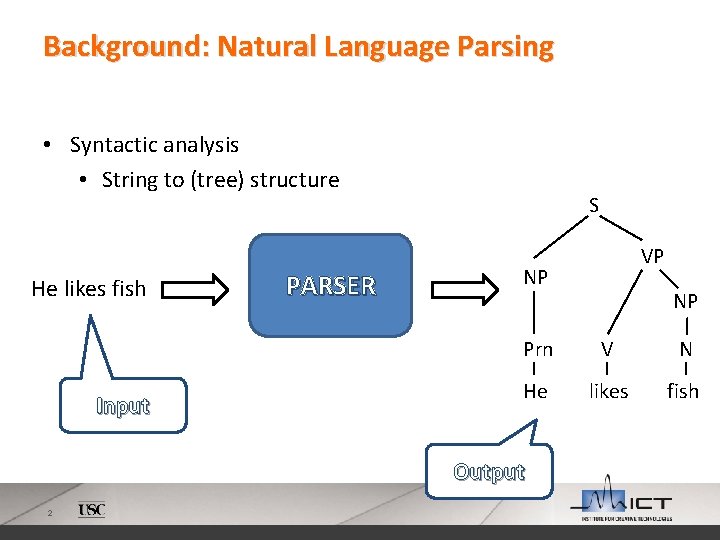

Background: Natural Language Parsing
• Syntactic analysis
• String to (tree) structure

[Figure: the input string "He likes fish" goes into the PARSER, which outputs the tree (S (NP (Prn He)) (VP (V likes) (NP (N fish)))).]

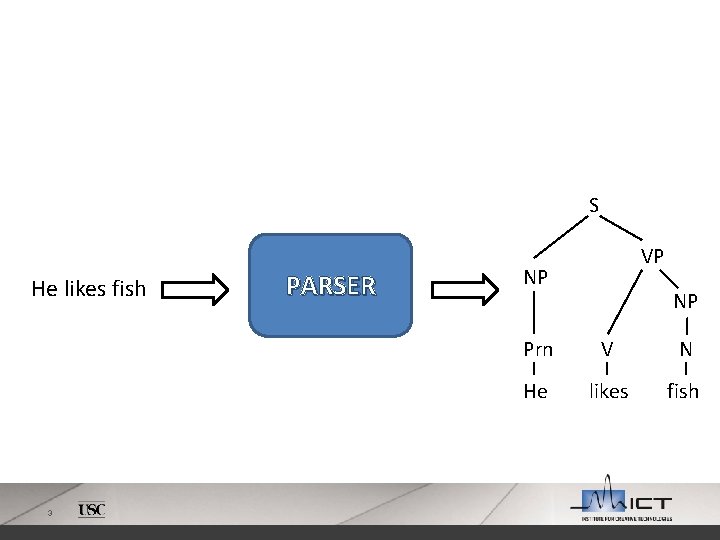

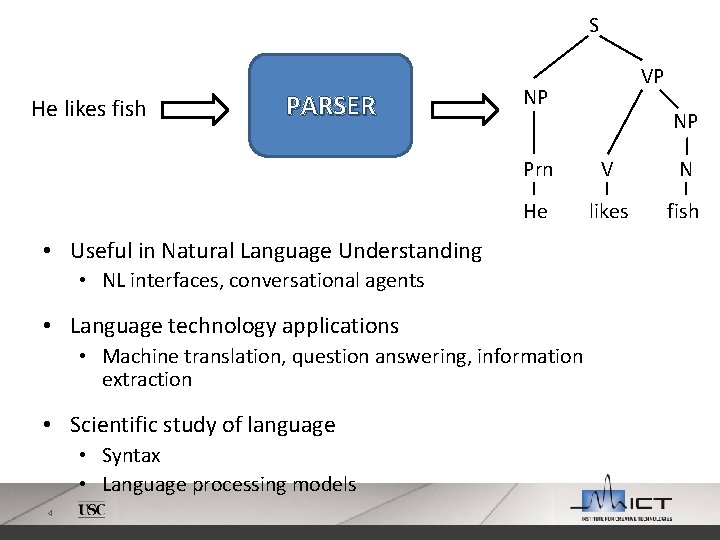

• Useful in Natural Language Understanding
  • NL interfaces, conversational agents
• Language technology applications
  • Machine translation, question answering, information extraction
• Scientific study of language
  • Syntax
  • Language processing models

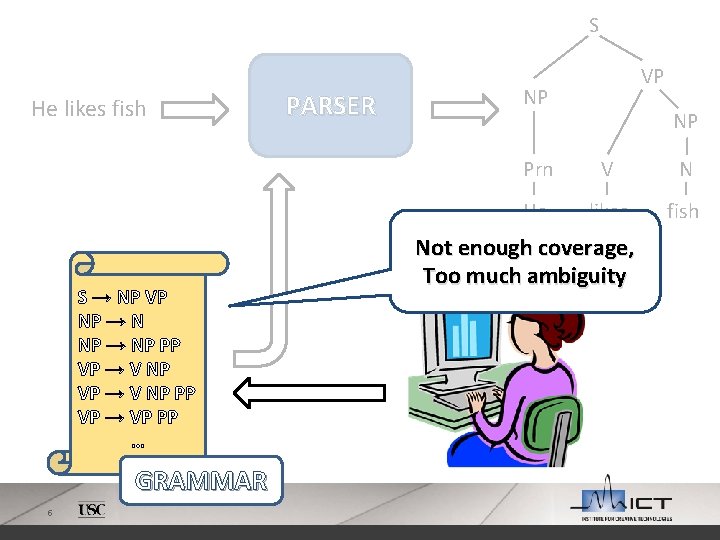

[Figure: a GRAMMAR (S → NP VP, NP → NP PP, VP → VP PP, …) is given to the PARSER, which maps "He likes fish" to its parse tree.]
Not enough coverage, too much ambiguity

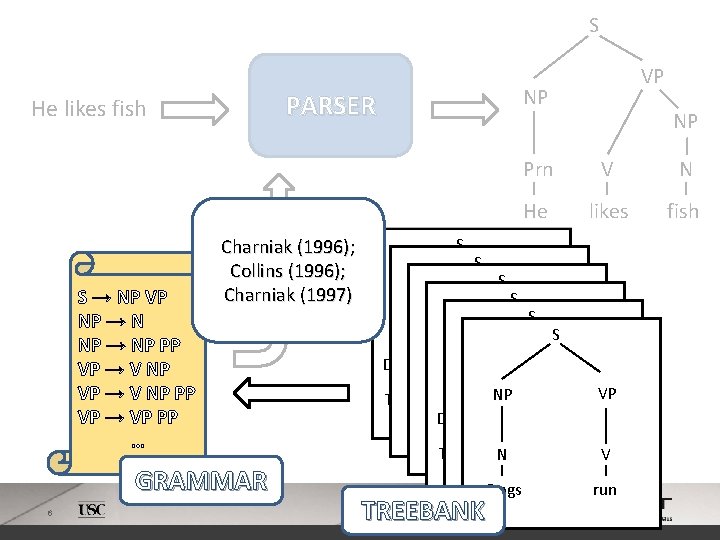

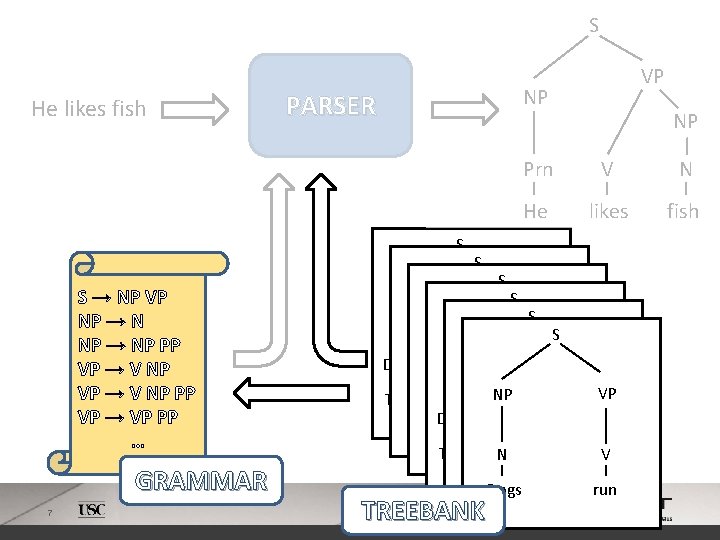

[Figure: a TREEBANK of parsed sentences ("The boy runs fast.", "Dogs run fast", "Dogs run") shown alongside the GRAMMAR (S → NP VP, NP → NP PP, VP → VP PP, …) and the PARSER, which maps "He likes fish" to its parse tree.]
Charniak (1996); Collins (1996); Charniak (1997)

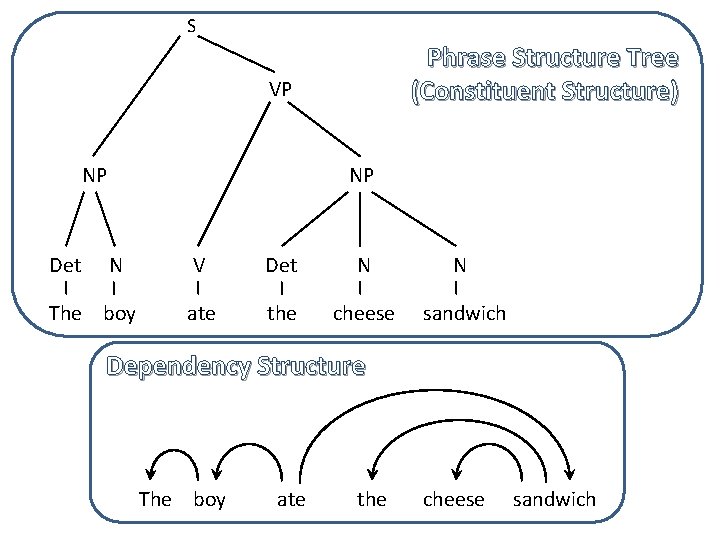

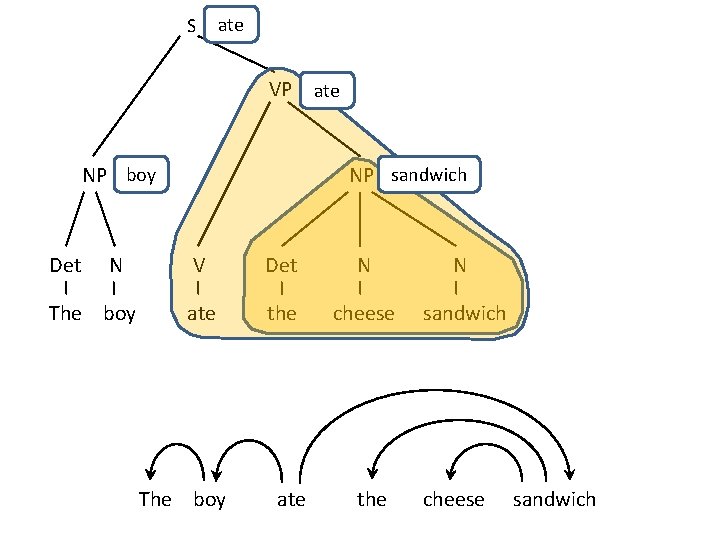

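Phrase Structure Tree (Constituent Structure)
[Figure: phrase structure tree for "The boy ate the cheese sandwich": (S (NP (Det The) (N boy)) (VP (V ate) (NP (Det the) (N cheese) (N sandwich)))).]
Dependency Structure
[Figure: dependency arcs drawn over the words of "The boy ate the cheese sandwich".]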

[Figure: the phrase structure tree for "The boy ate the cheese sandwich" with the head word marked on each phrase: S (ate), VP (ate), NP (boy), NP (sandwich).]

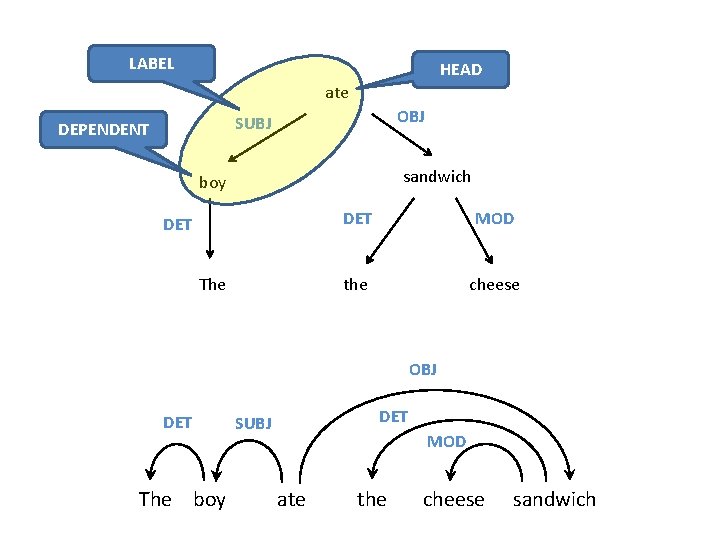

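[Figure: labeled dependency structure for "The boy ate the cheese sandwich". Each arc links a HEAD to a DEPENDENT and carries a LABEL: SUBJ (ate → boy), OBJ (ate → sandwich), DET (boy → The), DET (sandwich → the), MOD (sandwich → cheese).]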
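A labeled dependency structure like the one in the figure can be stored as a flat list of (head, dependent, label) arcs. The sketch below is only an illustration: the `Arc` type and the helper names are assumptions, and the arcs are read off the figure text for "The boy ate the cheese sandwich".

```python
from typing import NamedTuple


class Arc(NamedTuple):
    """One labeled dependency link (hypothetical helper type)."""
    head: str
    dependent: str
    label: str


# Arcs as read from the figure; words are used directly as node names,
# which works here because "The" and "the" differ in capitalization.
arcs = [
    Arc("ate", "boy", "SUBJ"),
    Arc("ate", "sandwich", "OBJ"),
    Arc("boy", "The", "DET"),
    Arc("sandwich", "the", "DET"),
    Arc("sandwich", "cheese", "MOD"),
]


def dependents_of(head, arcs):
    """Return (dependent, label) pairs attached to the given head word."""
    return [(a.dependent, a.label) for a in arcs if a.head == head]


def root_of(arcs):
    """The root is the only head word that is never a dependent."""
    heads = {a.head for a in arcs}
    dependents = {a.dependent for a in arcs}
    (root,) = heads - dependents
    return root
```

In a real parser the nodes would normally be word positions rather than word strings, so that repeated words stay distinct.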

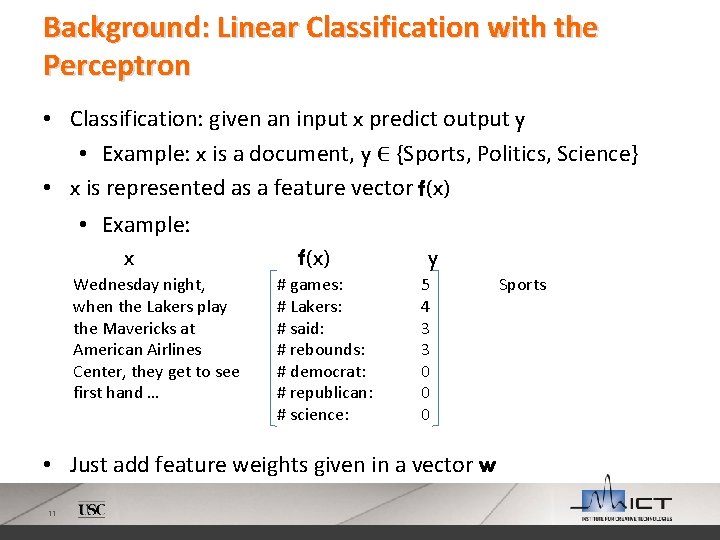

Background: Linear Classification with the Perceptron
• Classification: given an input x predict output y
  • Example: x is a document, y ∈ {Sports, Politics, Science}
• x is represented as a feature vector f(x)
  • Example:
    x: "Wednesday night, when the Lakers play the Mavericks at American Airlines Center, they get to see first hand …"
    f(x): # games: 5, # Lakers: 4, # said: 3, # rebounds: 3, # democrat: 0, # republican: 0, # science: 0
    y: Sports
• Just add feature weights given in a vector w
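"Adding feature weights" amounts to a dot product w · f(x). Here is a minimal sketch using the feature counts from the example document. The weight values, and the choice of one weight vector per class, are made up for illustration and do not come from the slides.

```python
def score(weights, features):
    """Dot product w . f(x) over sparse feature dictionaries."""
    return sum(weights.get(name, 0.0) * value for name, value in features.items())


# Feature counts from the example document.
f_x = {"games": 5, "Lakers": 4, "said": 3, "rebounds": 3,
       "democrat": 0, "republican": 0, "science": 0}

# Hypothetical weights, one vector per class (assumed values).
w = {
    "Sports":   {"games": 1.0, "Lakers": 2.0, "rebounds": 1.5},
    "Politics": {"democrat": 2.0, "republican": 2.0, "said": 0.5},
    "Science":  {"science": 3.0, "said": 0.2},
}

scores = {label: score(w_c, f_x) for label, w_c in w.items()}
prediction = max(scores, key=scores.get)  # class with the highest score
```

With these assumed weights the Sports score is 5·1.0 + 4·2.0 + 3·1.5 = 17.5, well above the other two classes.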

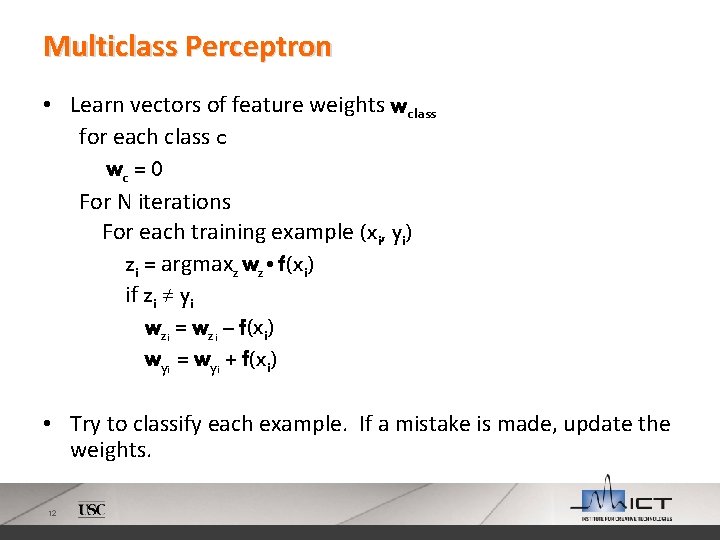

Multiclass Perceptron
• Learn a vector of feature weights w_c for each class c

    w_c = 0 for every class c
    For N iterations
        For each training example (x_i, y_i)
            z_i = argmax_z w_z · f(x_i)
            if z_i ≠ y_i
                w_{z_i} = w_{z_i} – f(x_i)
                w_{y_i} = w_{y_i} + f(x_i)

• Try to classify each example. If a mistake is made, update the weights.
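The pseudocode above translates almost line for line into Python. This is a minimal sketch that assumes sparse feature dictionaries; the function names and the three toy training documents are invented for illustration.

```python
from collections import defaultdict


def dot(w, f):
    """Sparse dot product between a weight dict and a feature dict."""
    return sum(w[name] * value for name, value in f.items())


def predict(w, classes, f_x):
    """argmax over classes of w_c . f(x); ties go to the earlier class."""
    return max(classes, key=lambda c: dot(w[c], f_x))


def train_perceptron(examples, classes, n_iterations=10):
    """examples: list of (feature_dict, gold_class) pairs."""
    w = {c: defaultdict(float) for c in classes}  # w_c = 0
    for _ in range(n_iterations):
        for f_x, y in examples:
            z = predict(w, classes, f_x)
            if z != y:  # mistake: demote the predicted class, promote the gold one
                for name, value in f_x.items():
                    w[z][name] -= value
                    w[y][name] += value
    return w


# Toy training set (assumed data, not from the slides).
classes = ["Sports", "Politics", "Science"]
examples = [
    ({"games": 5, "Lakers": 4}, "Sports"),
    ({"democrat": 3, "said": 2}, "Politics"),
    ({"science": 4, "said": 1}, "Science"),
]
w = train_perceptron(examples, classes)
```

On this toy set the weights stop changing after the second pass, because every training example is then classified correctly.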

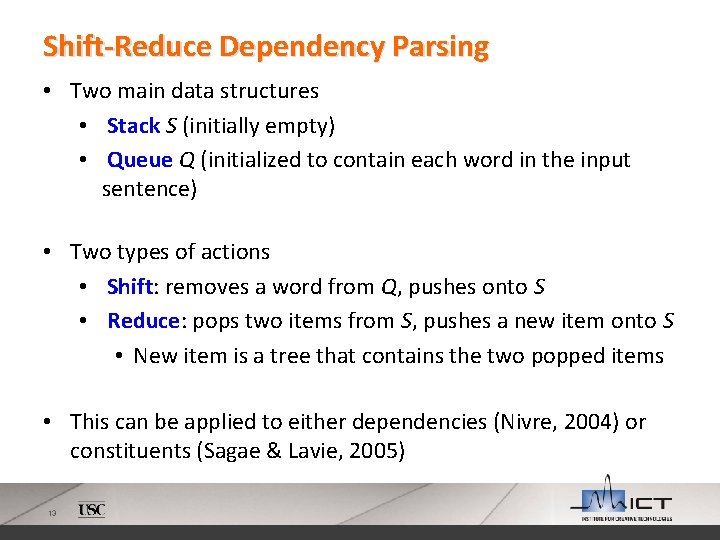

Shift-Reduce Dependency Parsing
• Two main data structures
  • Stack S (initially empty)
  • Queue Q (initialized to contain each word in the input sentence)
• Two types of actions
  • Shift: removes a word from Q, pushes onto S
  • Reduce: pops two items from S, pushes a new item onto S
    • New item is a tree that contains the two popped items
• This can be applied to either dependencies (Nivre, 2004) or constituents (Sagae & Lavie, 2005)
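The two actions can be sketched with a Python list as the stack and a `deque` as the queue. This sketch makes two assumptions. Each new item is represented by its head word, with the links recorded in a separate `arcs` list rather than as a tree object. And a "right" reduce makes the top stack item the head, which is a convention inferred from the Reduce-Right examples in these slides. The function names are made up.

```python
from collections import deque


def shift_action(stack, queue):
    """Shift: remove the next word from the queue and push it onto the stack."""
    stack.append(queue.popleft())


def reduce_action(stack, arcs, direction, label):
    """Reduce: pop two items, link them, and push the head back as the new item."""
    top = stack.pop()
    below = stack.pop()
    if direction == "right":   # assumed convention: top item is the head
        head, dependent = top, below
    else:                      # "left": the item below the top is the head
        head, dependent = below, top
    arcs.append((head, dependent, label))
    stack.append(head)


# Parse "He likes fish" with a fixed action sequence.
stack, queue, arcs = [], deque(["He", "likes", "fish"]), []
shift_action(stack, queue)                   # stack: He
shift_action(stack, queue)                   # stack: He likes
reduce_action(stack, arcs, "right", "SUBJ")  # likes -> He
shift_action(stack, queue)                   # stack: likes fish
reduce_action(stack, arcs, "left", "OBJ")    # likes -> fish
```

Each word is shifted once and each reduce removes one item from the stack, so a sentence of n words needs n shifts and n − 1 reduces. That is why the parser runs in linear time.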

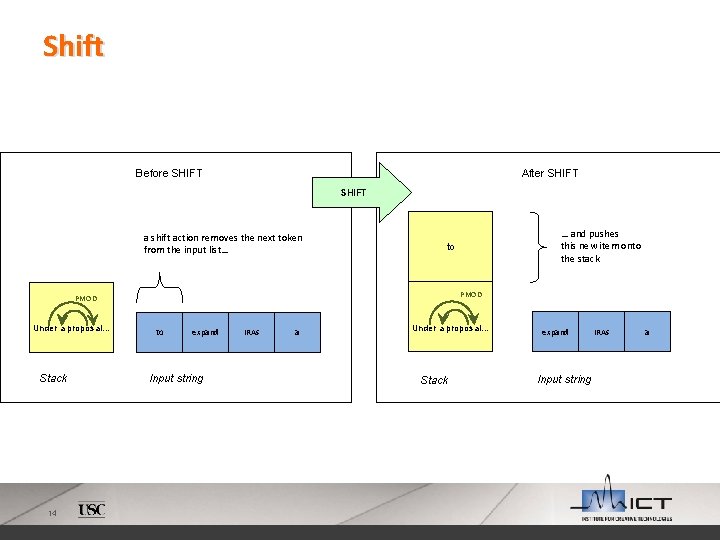

Shift
[Figure: Before SHIFT, the stack holds "Under a proposal…" (already linked by a PMOD dependency) and the input string is "to expand IRAs a …". A shift action removes the next token from the input list and pushes this new item onto the stack. After SHIFT, "to" is on top of the stack and the input string is "expand IRAs a …".]

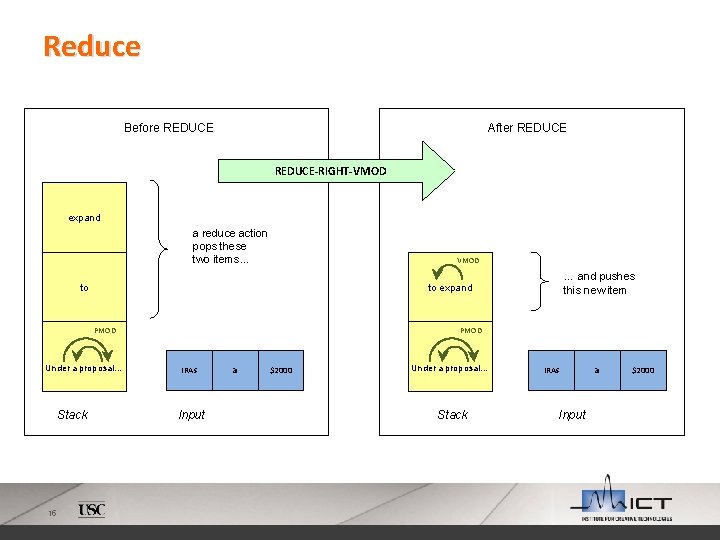

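Reduce
[Figure: Before REDUCE, the top two stack items are "to" and "expand", and the input is "IRAs a $2000 …". A reduce action pops these two items and pushes this new item: "expand" with "to" attached by a VMOD dependency. After REDUCE-RIGHT-VMOD, the rest of the stack ("Under a proposal…", with its PMOD dependency) and the input are unchanged.]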

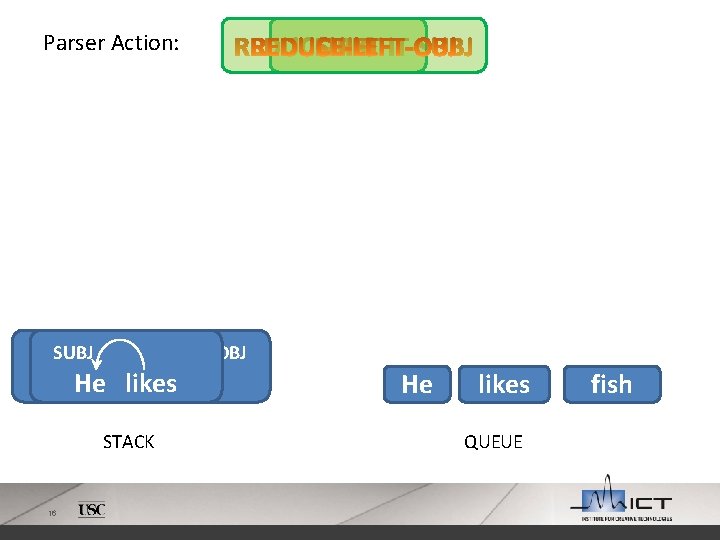

[Figure: step-by-step shift-reduce parse of "He likes fish", showing the current parser action, the STACK, the QUEUE, and the resulting SUBJ and OBJ dependencies.]

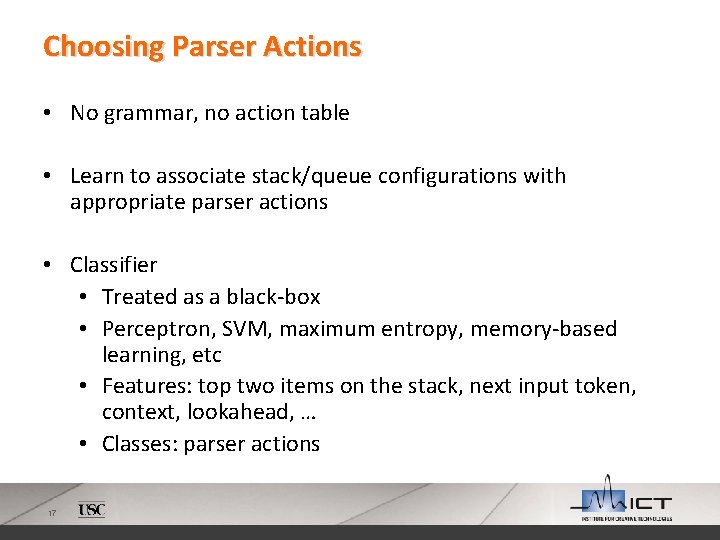

Choosing Parser Actions
• No grammar, no action table
• Learn to associate stack/queue configurations with appropriate parser actions
• Classifier
  • Treated as a black-box
  • Perceptron, SVM, maximum entropy, memory-based learning, etc.
  • Features: top two items on the stack, next input token, context, lookahead, …
  • Classes: parser actions

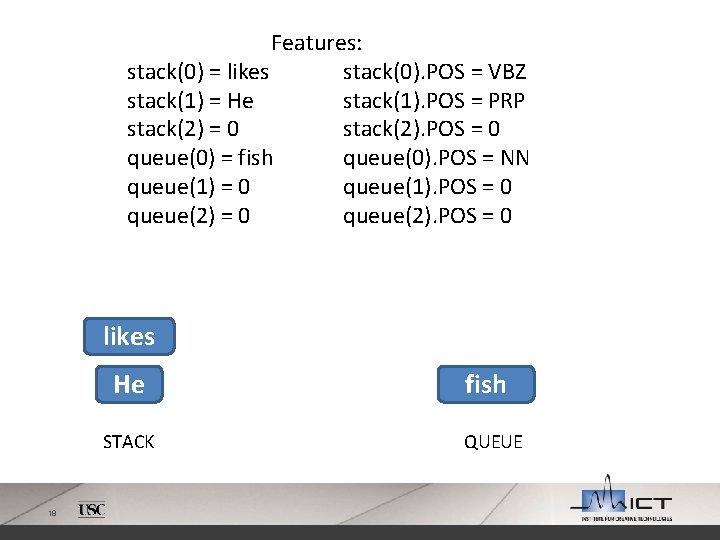

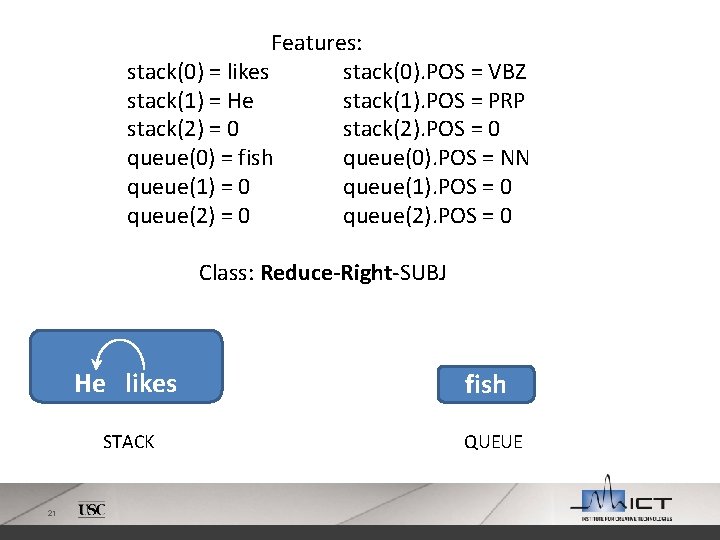

[Figure: the STACK holds "He" and "likes" (top); the QUEUE holds "fish".]
Features:
  stack(0) = likes
  stack(0).POS = VBZ
  stack(1) = He
  stack(1).POS = PRP
  stack(2) = 0
  stack(2).POS = 0
  queue(0) = fish
  queue(0).POS = NN
  queue(1) = 0
  queue(1).POS = 0
  queue(2).POS = 0

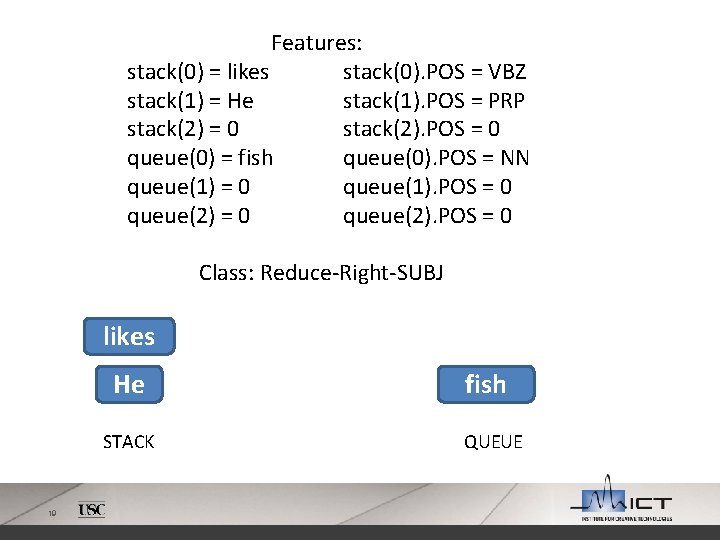

Class: Reduce-Right-SUBJ (for the configuration with stack(0) = likes, stack(1) = He, queue(0) = fish and the features listed for it)
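Turning a stack/queue configuration into the features shown on the slide could look like the following. This is only a sketch: the function name, the `(word, POS)` tuple representation, and the window size `n` are assumptions. Empty positions are filled with 0, as in the slide.

```python
def extract_features(stack, queue, n=3):
    """Build slide-style features from a parser configuration.

    stack: list of (word, pos) pairs, top of the stack last.
    queue: list of (word, pos) pairs, next input token first.
    Positions past the end of either structure get the value 0.
    """
    feats = {}
    for i in range(n):
        word, pos = stack[-1 - i] if i < len(stack) else (0, 0)
        feats[f"stack({i})"] = word
        feats[f"stack({i}).POS"] = pos
        word, pos = queue[i] if i < len(queue) else (0, 0)
        feats[f"queue({i})"] = word
        feats[f"queue({i}).POS"] = pos
    return feats


# Configuration from the slide: "He" and "likes" on the stack, "fish" in the queue.
feats = extract_features(
    stack=[("He", "PRP"), ("likes", "VBZ")],
    queue=[("fish", "NN")],
)
gold_action = "Reduce-Right-SUBJ"  # class paired with this configuration in the slides
```

Each `(feats, gold_action)` pair is one training example for the action classifier, so any of the classifiers listed earlier can be trained on pairs collected by replaying the correct action sequences on a treebank.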

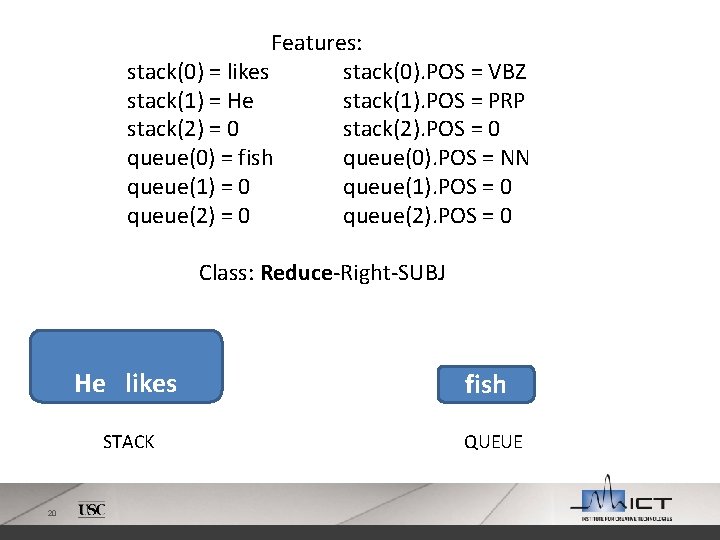

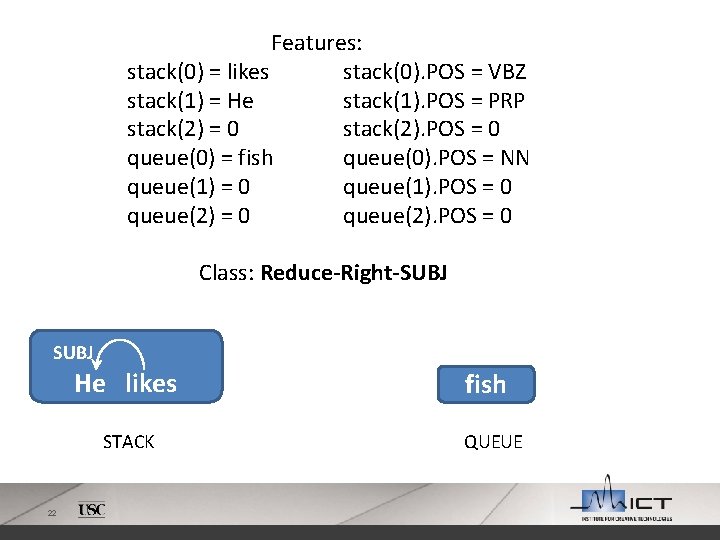

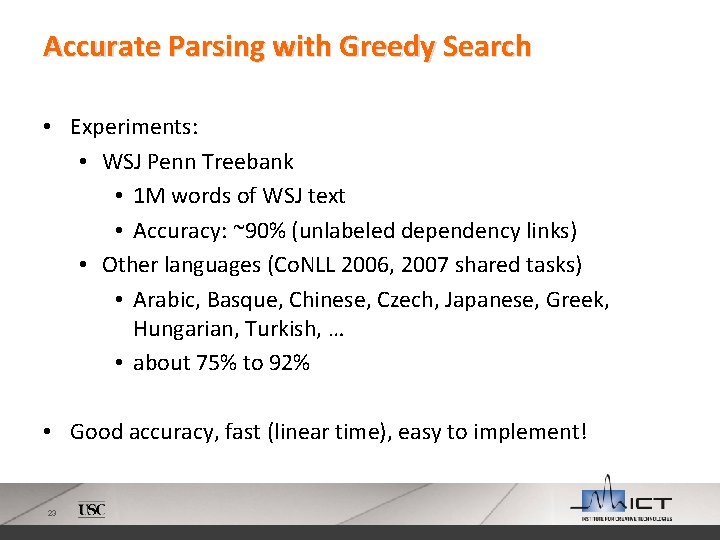

Accurate Parsing with Greedy Search
• Experiments:
  • WSJ Penn Treebank
    • 1M words of WSJ text
    • Accuracy: ~90% (unlabeled dependency links)
  • Other languages (CoNLL 2006, 2007 shared tasks)
    • Arabic, Basque, Chinese, Czech, Japanese, Greek, Hungarian, Turkish, …
    • about 75% to 92%
• Good accuracy, fast (linear time), easy to implement!

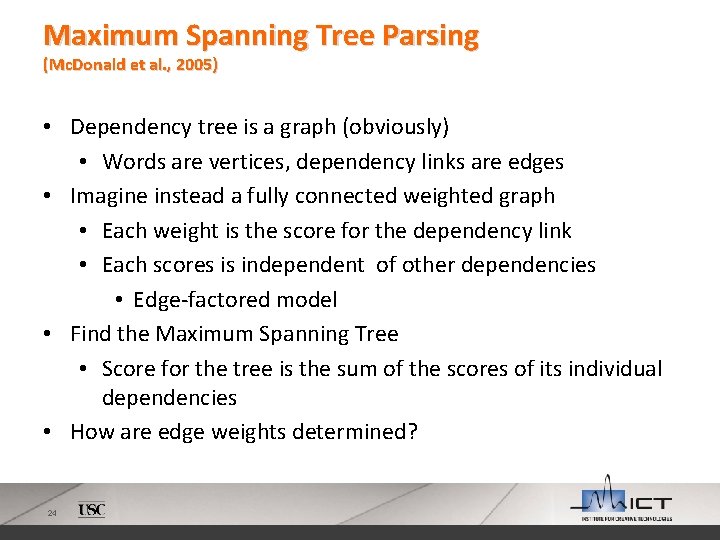

Maximum Spanning Tree Parsing (McDonald et al., 2005)
• Dependency tree is a graph (obviously)
  • Words are vertices, dependency links are edges
• Imagine instead a fully connected weighted graph
  • Each weight is the score for the dependency link
  • Each score is independent of other dependencies
    • Edge-factored model
• Find the Maximum Spanning Tree
  • Score for the tree is the sum of the scores of its individual dependencies
• How are edge weights determined?
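To make "score for the tree is the sum of the scores of its individual dependencies" concrete, the sketch below enumerates every possible head assignment for a four-word sentence and keeps the highest-scoring valid tree. This brute-force search stands in for a real maximum spanning tree algorithm and is only usable for tiny inputs. The score matrix is made up, and the function names are assumptions.

```python
from itertools import product


def is_tree(heads):
    """heads[d] is the head of node d (d >= 1); node 0 is the root.

    The assignment is a tree if every node reaches node 0 without a cycle.
    """
    for d in range(1, len(heads)):
        seen = set()
        node = d
        while node != 0:
            if node in seen:
                return False
            seen.add(node)
            node = heads[node]
    return True


def best_tree(score):
    """score[h][d] is the score of the edge h -> d. Returns (heads, total)."""
    n = len(score)
    best, best_total = None, float("-inf")
    for choice in product(range(n), repeat=n - 1):
        heads = (0,) + choice  # heads[0] is an unused placeholder
        if not is_tree(heads):
            continue
        total = sum(score[heads[d]][d] for d in range(1, n))  # edge-factored score
        if total > best_total:
            best, best_total = heads, total
    return best, best_total


# Hypothetical edge scores for 0 (root), 1 (I), 2 (ate), 3 (a), 4 (sandwich).
score = [
    # dependent: 0   1   2   3   4
    [0, 3, 10, 1, 2],   # head 0 (root)
    [0, 0, 2, 1, 1],    # head 1 (I)
    [0, 9, 0, 3, 9],    # head 2 (ate)
    [0, 1, 1, 0, 2],    # head 3 (a)
    [0, 2, 3, 8, 0],    # head 4 (sandwich)
]
heads, total = best_tree(score)
```

Here `heads[d]` gives the head of word `d`, so the result attaches "I" and "sandwich" to "ate", "a" to "sandwich", and "ate" to the root.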

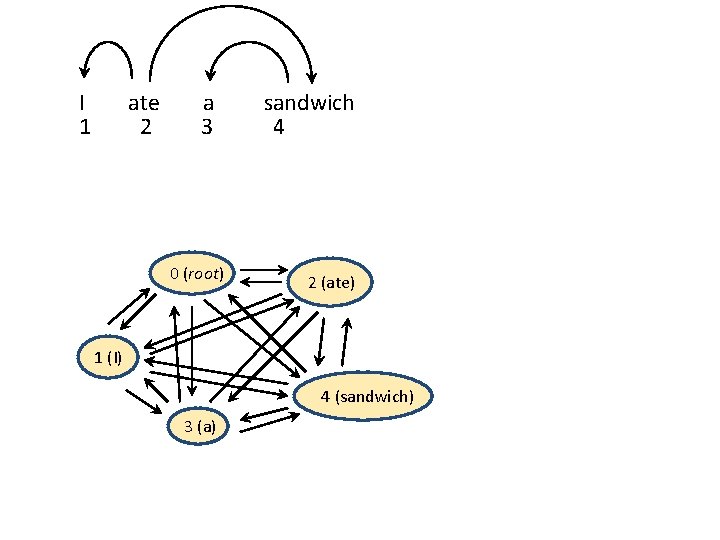

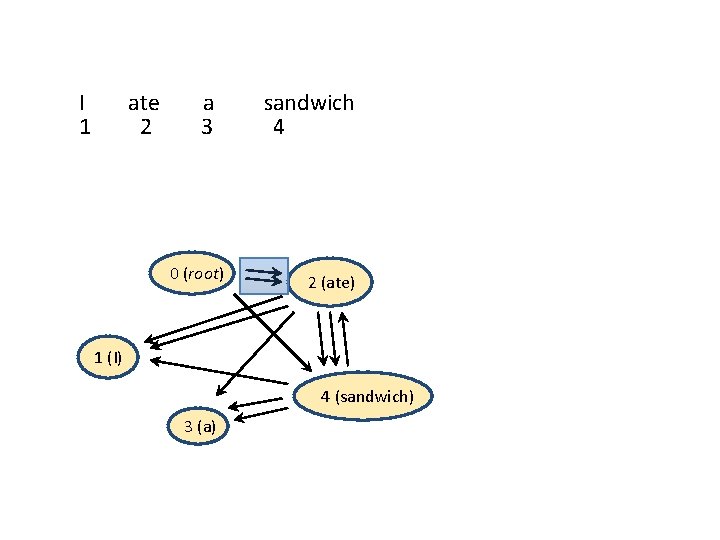

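[Figure: the sentence "I ate a sandwich" with word positions I (1), ate (2), a (3), sandwich (4), drawn as a graph with nodes 0 (root), 1 (I), 2 (ate), 3 (a), 4 (sandwich).]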

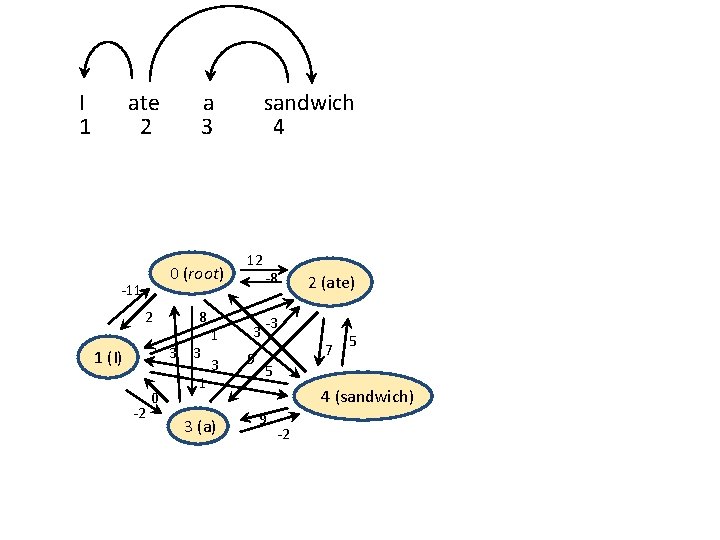

[Figure: the same nodes as a fully connected directed graph, with a numeric score on each edge.]

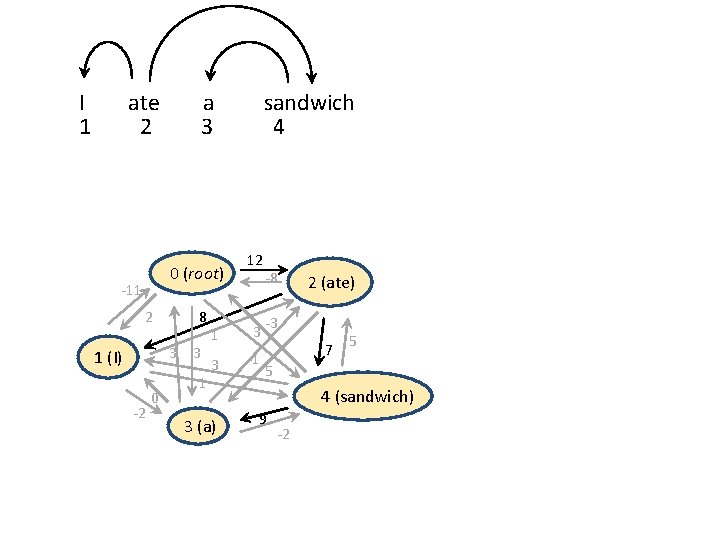

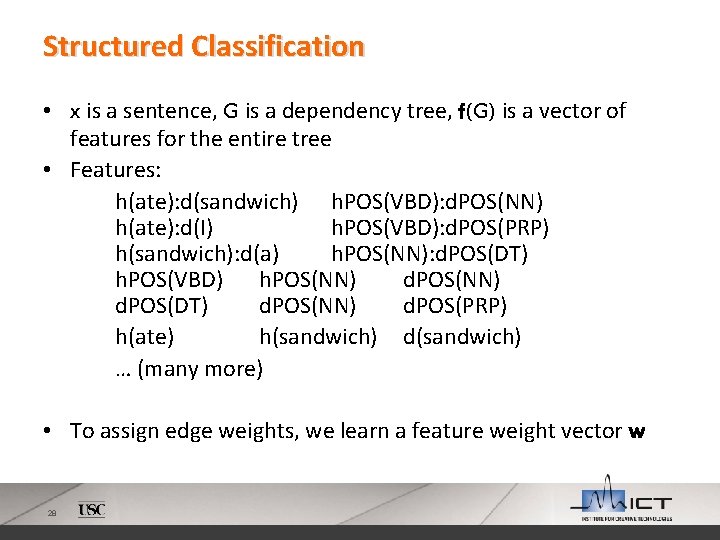

Structured Classification
• x is a sentence, G is a dependency tree, f(G) is a vector of features for the entire tree
• Features:
  h(ate):d(sandwich)
  h.POS(VBD):d.POS(NN)
  h(ate):d(I)
  h.POS(VBD):d.POS(PRP)
  h(sandwich):d(a)
  h.POS(NN):d.POS(DT)
  h.POS(VBD)
  h.POS(NN)
  d.POS(DT)
  d.POS(NN)
  d.POS(PRP)
  h(ate)
  h(sandwich)
  d(sandwich)
  … (many more)
• To assign edge weights, we learn a feature weight vector w
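In an edge-factored model, f(G) is the sum of the feature vectors of the individual edges. The sketch below builds features in the style of the list above. The function names, the token representation, and the inclusion of features for the root edge are assumptions made for illustration.

```python
from collections import Counter


def edge_features(head, dep):
    """Features for one dependency link; head and dep are (word, pos) pairs."""
    (hw, hp), (dw, dp) = head, dep
    return [
        f"h({hw}):d({dw})",
        f"h.POS({hp}):d.POS({dp})",
        f"h({hw})",
        f"d({dw})",
        f"h.POS({hp})",
        f"d.POS({dp})",
    ]


def tree_features(tokens, heads):
    """f(G): sum of edge feature vectors over the whole tree.

    tokens[0] is an artificial root token; heads[d] is the index of the
    head of token d (heads[0] is unused).
    """
    f = Counter()
    for d in range(1, len(tokens)):
        f.update(edge_features(tokens[heads[d]], tokens[d]))
    return f


tokens = [("ROOT", "ROOT"), ("I", "PRP"), ("ate", "VBD"), ("a", "DT"), ("sandwich", "NN")]
heads = (0, 2, 0, 4, 2)  # I <- ate, ate <- root, a <- sandwich, sandwich <- ate
f_G = tree_features(tokens, heads)
```

Because "ate" heads two words in this tree, features such as `h(ate)` and `h.POS(VBD)` get a count of 2, while pair features such as `h(ate):d(sandwich)` appear once.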

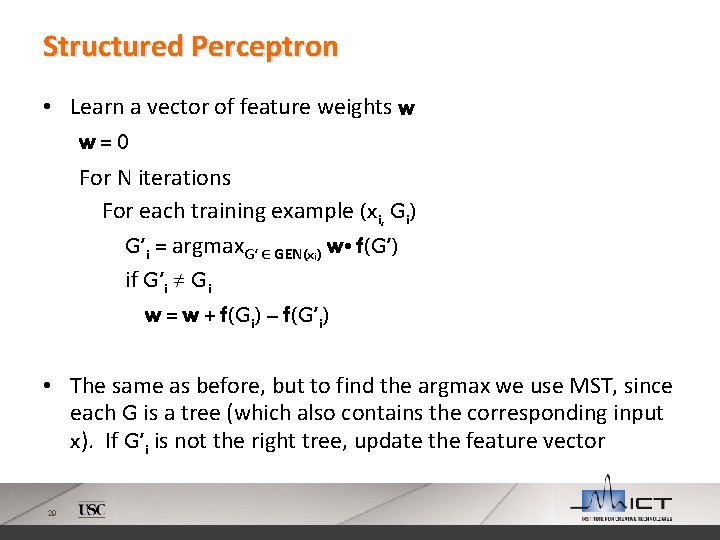

Structured Perceptron
• Learn a vector of feature weights w

    w = 0
    For N iterations
        For each training example (x_i, G_i)
            G'_i = argmax_{G' ∈ GEN(x_i)} w · f(G')
            if G'_i ≠ G_i
                w = w + f(G_i) – f(G'_i)

• The same as before, but to find the argmax we use MST, since each G is a tree (which also contains the corresponding input x). If G'_i is not the right tree, update the weight vector.
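The update rule can be tried end to end on a single sentence. This sketch makes several simplifying assumptions: GEN(x) is enumerated by brute force rather than searched with an MST algorithm, only two feature templates are used, and the training set is one hand-annotated tree. All function names are made up.

```python
from collections import Counter
from itertools import product


def tree_feats(tokens, heads):
    """f(G): word-pair and POS-pair features summed over all edges."""
    f = Counter()
    for d in range(1, len(tokens)):
        (hw, hp), (dw, dp) = tokens[heads[d]], tokens[d]
        f.update([f"h({hw}):d({dw})", f"h.POS({hp}):d.POS({dp})"])
    return f


def reaches_root(heads, d):
    """True if following heads from d arrives at node 0 without a cycle."""
    seen = set()
    while d != 0:
        if d in seen:
            return False
        seen.add(d)
        d = heads[d]
    return True


def gen(n):
    """GEN(x): all dependency trees over nodes 0..n-1 rooted at 0 (brute force)."""
    for choice in product(range(n), repeat=n - 1):
        heads = (0,) + choice  # heads[0] is an unused placeholder
        if all(reaches_root(heads, d) for d in range(1, n)):
            yield heads


def decode(w, tokens):
    """argmax over GEN(x) of w . f(G'); stands in for the MST search."""
    def total(heads):
        return sum(w[name] * v for name, v in tree_feats(tokens, heads).items())
    return max(gen(len(tokens)), key=total)


def train(data, n_iterations=5):
    w = Counter()  # w = 0
    for _ in range(n_iterations):
        for tokens, gold in data:
            pred = decode(w, tokens)
            if pred != gold:
                w.update(tree_feats(tokens, gold))    # w = w + f(G_i)
                w.subtract(tree_feats(tokens, pred))  # w = w - f(G'_i)
    return w


tokens = [("ROOT", "ROOT"), ("I", "PRP"), ("ate", "VBD"), ("a", "DT"), ("sandwich", "NN")]
gold = (0, 2, 0, 4, 2)
w = train([(tokens, gold)])
```

With all weights at zero the first decode returns the tree that attaches every word to the root. One update is enough here for the gold tree to become the unique highest-scoring tree.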

Question: Are there trees that an MST parser can find, but a Shift-Reduce parser* can’t?
(*the shift-reduce parser as described in slides 13-19, from "Shift-Reduce Dependency Parsing" through the "Choosing Parser Actions" feature example)

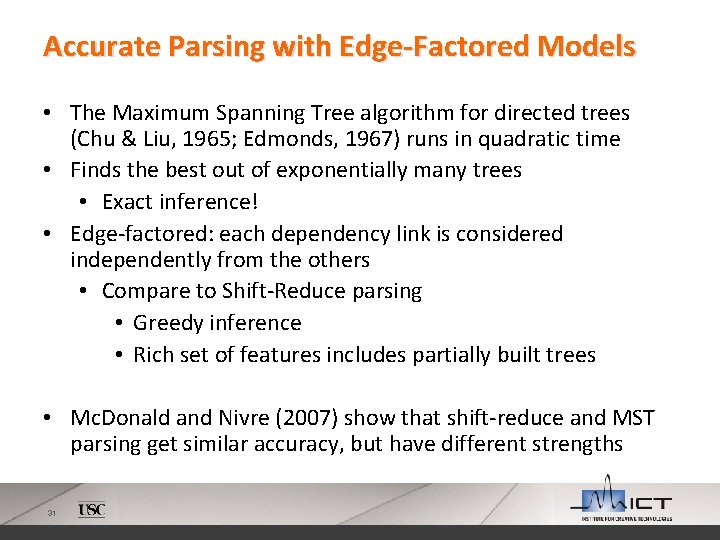

Accurate Parsing with Edge-Factored Models
• The Maximum Spanning Tree algorithm for directed trees (Chu & Liu, 1965; Edmonds, 1967) runs in quadratic time
  • Finds the best out of exponentially many trees
  • Exact inference!
• Edge-factored: each dependency link is considered independently from the others
  • Compare to Shift-Reduce parsing
    • Greedy inference
    • Rich set of features includes partially built trees
• McDonald and Nivre (2007) show that shift-reduce and MST parsing get similar accuracy, but have different strengths

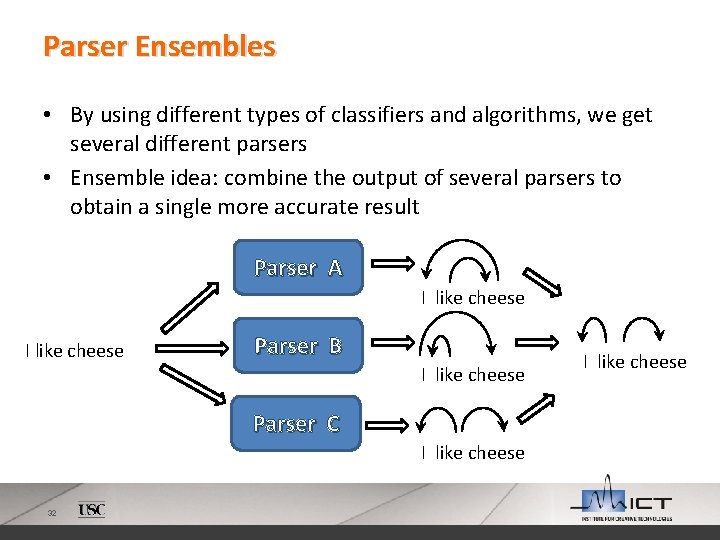

Parser Ensembles
• By using different types of classifiers and algorithms, we get several different parsers
• Ensemble idea: combine the output of several parsers to obtain a single more accurate result

[Figure: Parser A, Parser B, and Parser C each produce a dependency structure for "I like cheese"; the three outputs are combined into a single structure.]

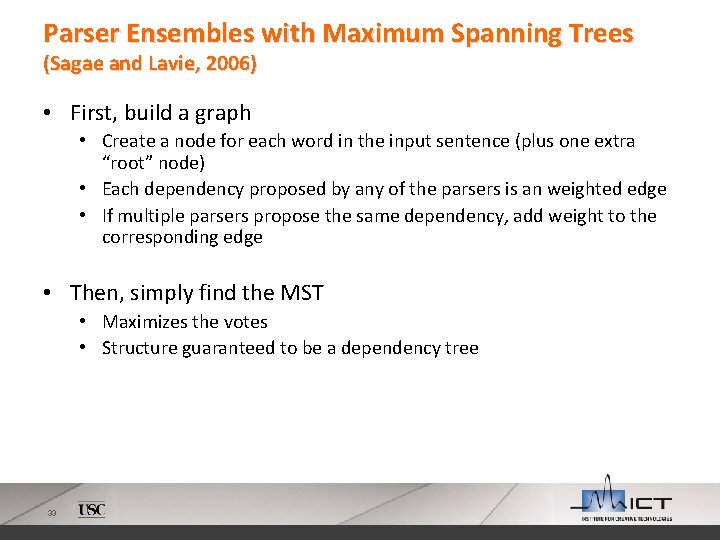

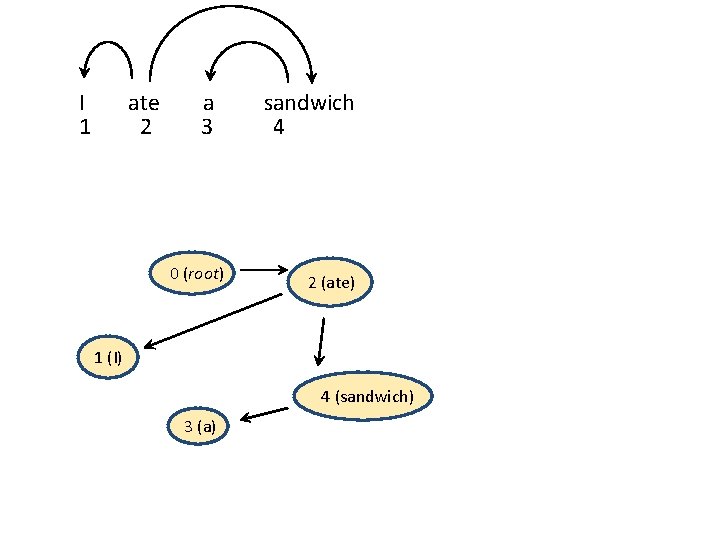

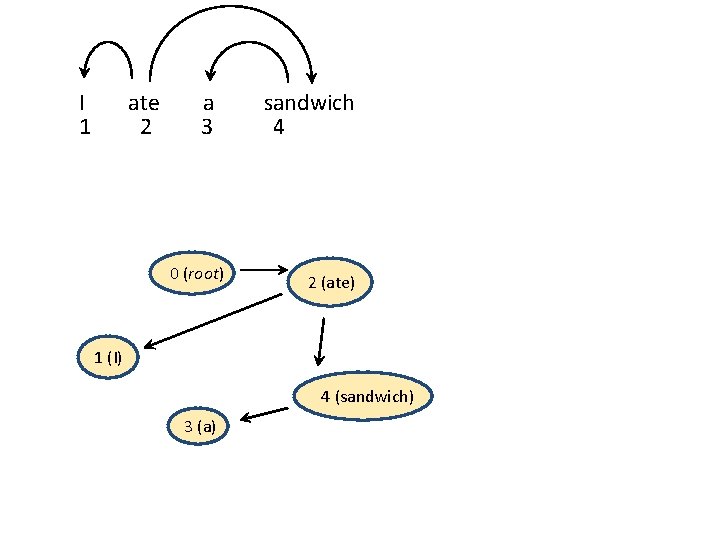

Parser Ensembles with Maximum Spanning Trees (Sagae and Lavie, 2006)
• First, build a graph
  • Create a node for each word in the input sentence (plus one extra “root” node)
  • Each dependency proposed by any of the parsers is a weighted edge
  • If multiple parsers propose the same dependency, add weight to the corresponding edge
• Then, simply find the MST
  • Maximizes the votes
  • Structure guaranteed to be a dependency tree
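The graph-building step can be sketched as vote counting over (head, dependent) pairs. This example makes several assumptions. Every parser contributes weight 1 per proposed dependency, although a weighted scheme is equally possible. The three parser outputs are invented. The final tree is found by brute-force enumeration instead of a real MST algorithm, which only works for tiny sentences.

```python
from collections import Counter
from itertools import product


def vote_graph(parses):
    """Count votes per edge. Each parse is a tuple with heads[d] = head of word d."""
    votes = Counter()
    for heads in parses:
        for d in range(1, len(heads)):  # heads[0] is an unused placeholder
            votes[(heads[d], d)] += 1
    return votes


def reaches_root(heads, d):
    """True if following heads from d arrives at node 0 without a cycle."""
    seen = set()
    while d != 0:
        if d in seen:
            return False
        seen.add(d)
        d = heads[d]
    return True


def combine(parses):
    """Return the tree with the most total votes (brute-force stand-in for MST)."""
    n = len(parses[0])
    votes = vote_graph(parses)
    candidates = (
        (0,) + choice
        for choice in product(range(n), repeat=n - 1)
    )
    trees = [h for h in candidates if all(reaches_root(h, d) for d in range(1, n))]
    return max(trees, key=lambda h: sum(votes[(h[d], d)] for d in range(1, n)))


# Hypothetical outputs for "I ate a sandwich": 0 (root), 1 (I), 2 (ate), 3 (a), 4 (sandwich).
parser_a = (0, 2, 0, 4, 2)
parser_b = (0, 2, 0, 2, 2)  # attaches "a" to "ate"
parser_c = (0, 0, 1, 4, 2)  # attaches "I" to the root and "ate" to "I"
votes = vote_graph([parser_a, parser_b, parser_c])
combined = combine([parser_a, parser_b, parser_c])
```

Searching for a maximum spanning tree, rather than taking the majority head for each word independently, is what guarantees that the combined output is a well-formed tree even when the individual majority choices would form a cycle.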

[Figure: graph for "I ate a sandwich" with nodes 0 (root), 1 (I), 2 (ate), 3 (a), 4 (sandwich).]

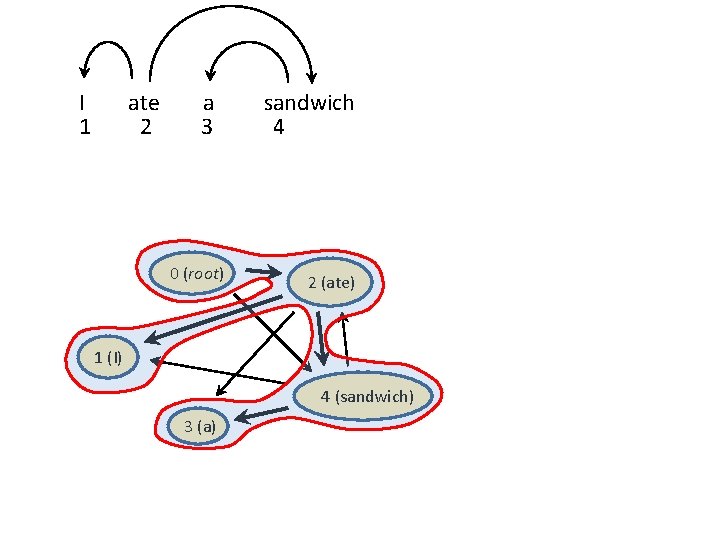

[Figure: the same graph with edges for the dependencies proposed by Parser A, Parser B, and Parser C.]

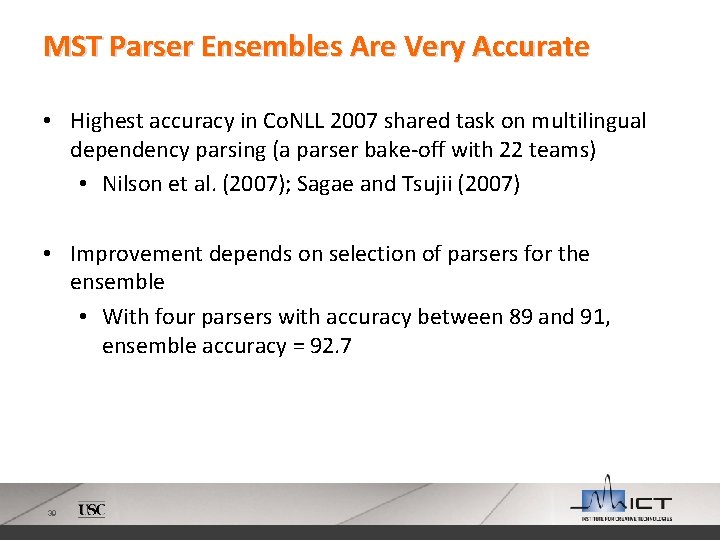

MST Parser Ensembles Are Very Accurate
• Highest accuracy in CoNLL 2007 shared task on multilingual dependency parsing (a parser bake-off with 22 teams)
  • Nilson et al. (2007); Sagae and Tsujii (2007)
• Improvement depends on selection of parsers for the ensemble
  • With four parsers with accuracy between 89 and 91, ensemble accuracy = 92.7

- Slides: 39