CPSC 503 Computational Linguistics
Intro to Probability and Information Theory
Lecture 5
Giuseppe Carenini
1/1/2022 CPSC 503 Spring 2004

Today 28/1
• Why do we need probabilities and information theory?
• Basic Probability Theory
• Basic Information Theory

Why do we need probabilities?
• For spelling errors: what is the most probable correct word?
• For real-word spelling errors, speech and handwriting recognition: what is the most probable next word?
• Part-of-speech tagging, word-sense disambiguation, probabilistic parsing
Basic question: what is the probability of a sequence of words (e.g., of a sentence)?

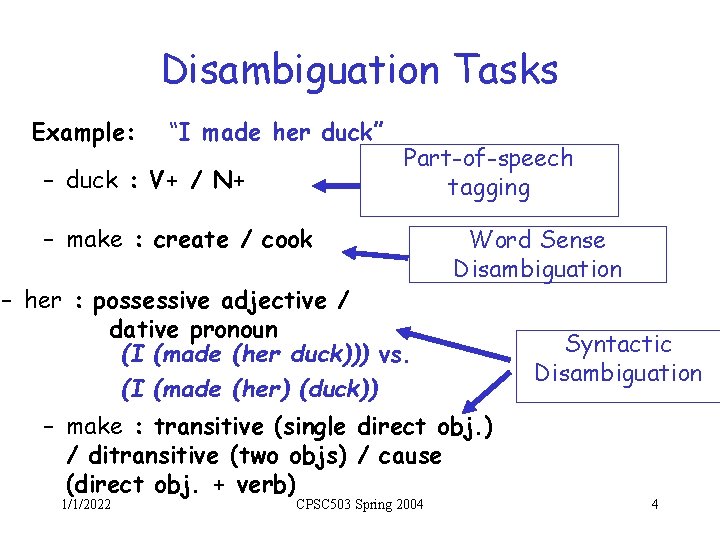

Disambiguation Tasks
Example: “I made her duck”
Part-of-speech tagging
– duck: V+ / N+
– her: possessive adjective / dative pronoun
Word sense disambiguation
– make: create / cook
Syntactic disambiguation
– make: transitive (single direct object) / ditransitive (two objects) / cause (direct object + verb)
– (I (made (her duck))) vs. (I (made (her) (duck)))

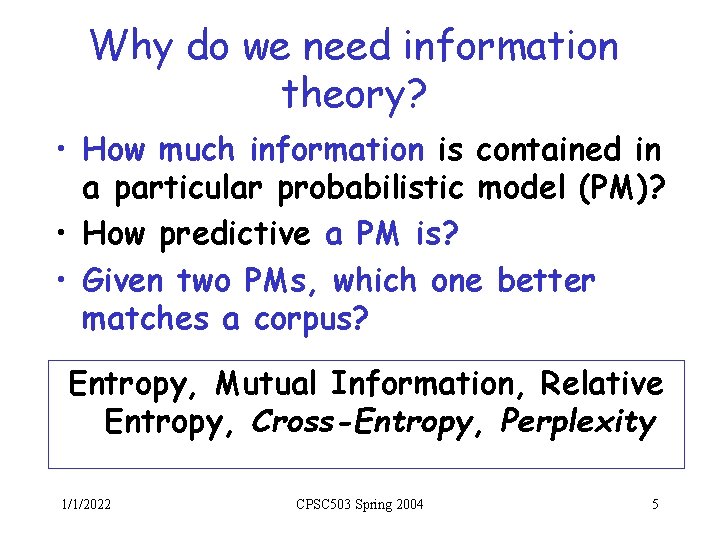

Why do we need information theory?
• How much information is contained in a particular probabilistic model (PM)?
• How predictive is a PM?
• Given two PMs, which one better matches a corpus?
Entropy, Mutual Information, Relative Entropy, Cross-Entropy, Perplexity

Basic Probability/Info Theory
• An overview (not complete! sometimes imprecise!)
• Clarify basic concepts you may encounter in NLP
• Try to address common misunderstandings

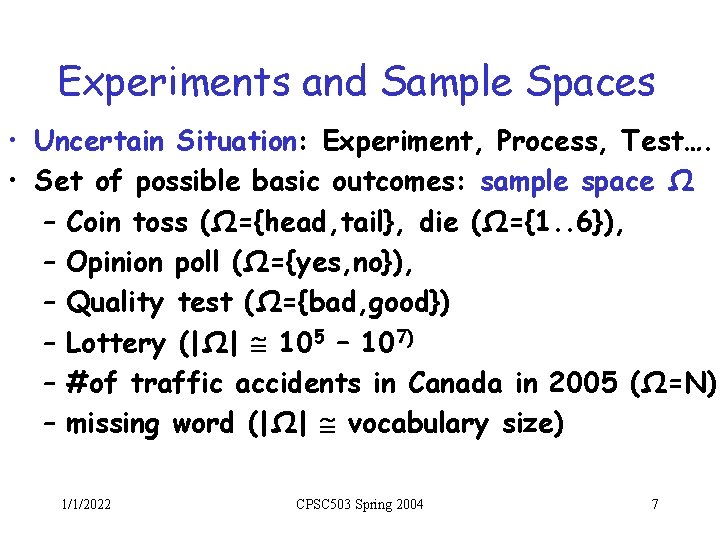

Experiments and Sample Spaces
• Uncertain situation: experiment, process, test, …
• Set of possible basic outcomes: sample space $\Omega$
– Coin toss ($\Omega = \{\text{head}, \text{tail}\}$), die ($\Omega = \{1, \dots, 6\}$)
– Opinion poll ($\Omega = \{\text{yes}, \text{no}\}$)
– Quality test ($\Omega = \{\text{bad}, \text{good}\}$)
– Lottery ($|\Omega| \approx 10^5$ to $10^7$)
– Number of traffic accidents in Canada in 2005 ($\Omega = \mathbb{N}$)
– Missing word ($|\Omega| \approx$ vocabulary size)

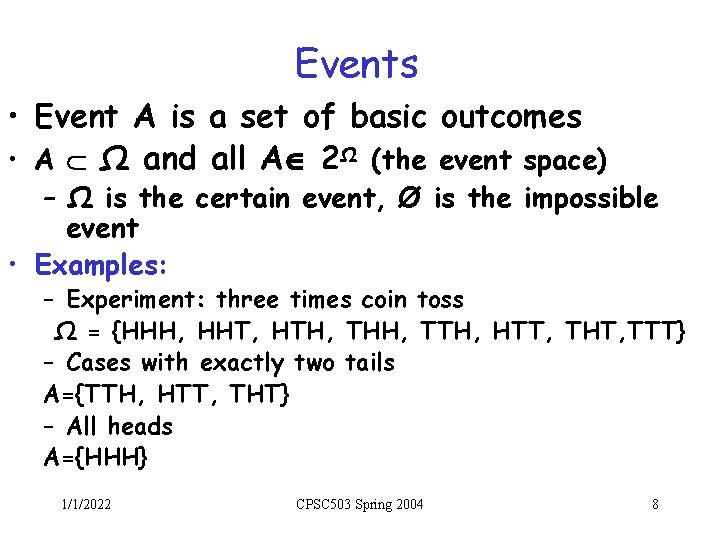

Events
• Event $A$ is a set of basic outcomes
• $A \subseteq \Omega$, and all $A \in 2^{\Omega}$ (the event space)
– $\Omega$ is the certain event, $\emptyset$ is the impossible event
• Examples:
– Experiment: three coin tosses, $\Omega = \{HHH, HHT, HTH, THH, TTH, HTT, THT, TTT\}$
– Cases with exactly two tails: $A = \{TTH, HTT, THT\}$
– All heads: $A = \{HHH\}$

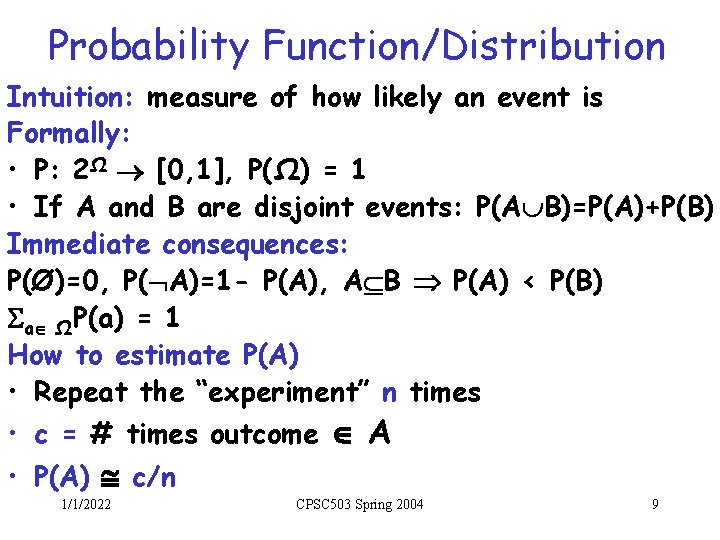
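The coin-toss example can be checked by enumeration. The following is a minimal Python sketch; the variable names (`omega`, `exactly_two_tails`, `all_heads`) are illustrative choices, not part of the slides.

```python
from itertools import product

# Sample space for three coin tosses: every length-3 string over {H, T}.
omega = {"".join(tosses) for tosses in product("HT", repeat=3)}

# An event is any subset of the sample space.
exactly_two_tails = {o for o in omega if o.count("T") == 2}
all_heads = {o for o in omega if o.count("T") == 0}

print(sorted(exactly_two_tails))  # ['HTT', 'THT', 'TTH']
print(sorted(all_heads))          # ['HHH']

# The event space 2^Omega contains 2^|Omega| events.
print(len(omega), 2 ** len(omega))  # 8 256
```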

Probability Function/Distribution
Intuition: measure of how likely an event is
Formally:
• $P: 2^{\Omega} \to [0, 1]$, $P(\Omega) = 1$
• If $A$ and $B$ are disjoint events: $P(A \cup B) = P(A) + P(B)$
Immediate consequences: $P(\emptyset) = 0$, $P(\bar{A}) = 1 - P(A)$, $A \subseteq B \Rightarrow P(A) \le P(B)$, $\sum_{a \in \Omega} P(a) = 1$
How to estimate $P(A)$:
• Repeat the “experiment” $n$ times
• $c$ = number of times the outcome falls in $A$
• $P(A) \approx c/n$
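The relative-frequency estimate $c/n$ can be sketched as a small simulation. This assumes a fair coin and reuses the "exactly two tails in three tosses" event; the function names and the fixed seed are illustrative assumptions.

```python
import random

def estimate_probability(event, trial, n, seed=0):
    """Return c/n, where c counts the runs of `trial` whose outcome is in `event`."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    c = sum(1 for _ in range(n) if event(trial(rng)))
    return c / n

def toss_three_coins(rng):
    """One run of the experiment: three tosses of a fair coin (assumed)."""
    return "".join(rng.choice("HT") for _ in range(3))

def exactly_two_tails(outcome):
    return outcome.count("T") == 2

estimate = estimate_probability(exactly_two_tails, toss_three_coins, n=100_000)
print(estimate)  # close to the exact value 3/8 = 0.375
```

The estimate approaches 3/8 as $n$ grows; with a small $n$ it can be far off.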

Missing Word from Book

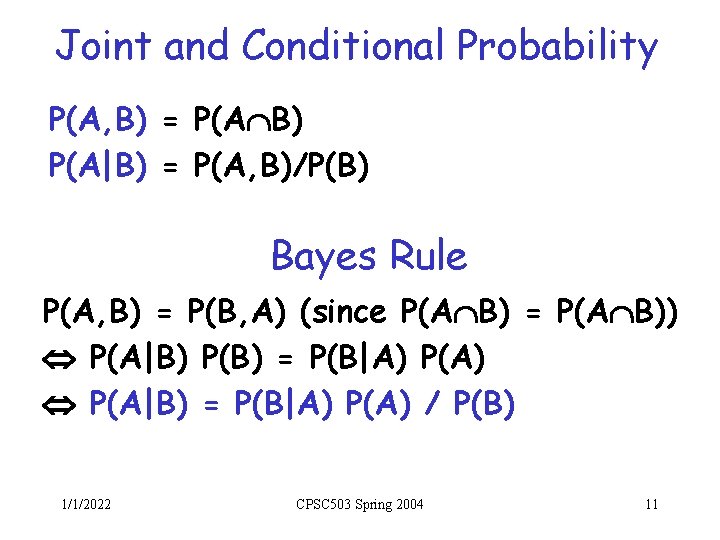

Joint and Conditional Probability
$P(A, B) = P(A \cap B)$
$P(A|B) = P(A, B) / P(B)$
Bayes Rule
$P(A, B) = P(B, A)$ (since $P(A \cap B) = P(B \cap A)$)
$P(A|B) P(B) = P(B|A) P(A)$
$P(A|B) = P(B|A) P(A) / P(B)$
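As a worked check of these identities, the sketch below computes joint and conditional probabilities on the three-coin-toss sample space, assuming a fair coin (uniform outcomes). The events `exactly_two_tails` and `first_is_tail` are illustrative choices.

```python
from fractions import Fraction
from itertools import product

omega = ["".join(t) for t in product("HT", repeat=3)]

def prob(event):
    """P(event) under a uniform distribution over omega (fair coin assumed)."""
    return Fraction(sum(1 for o in omega if event(o)), len(omega))

def exactly_two_tails(o):  # event A
    return o.count("T") == 2

def first_is_tail(o):      # event B
    return o[0] == "T"

p_a = prob(exactly_two_tails)
p_b = prob(first_is_tail)
p_joint = prob(lambda o: exactly_two_tails(o) and first_is_tail(o))  # P(A, B)

p_a_given_b = p_joint / p_b          # definition of conditional probability
p_b_given_a = p_joint / p_a
bayes = p_b_given_a * p_a / p_b      # Bayes rule: should equal P(A|B)

print(p_joint, p_a_given_b, p_b_given_a, bayes)  # 1/4 1/2 2/3 1/2
```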

Missing Word: Independence

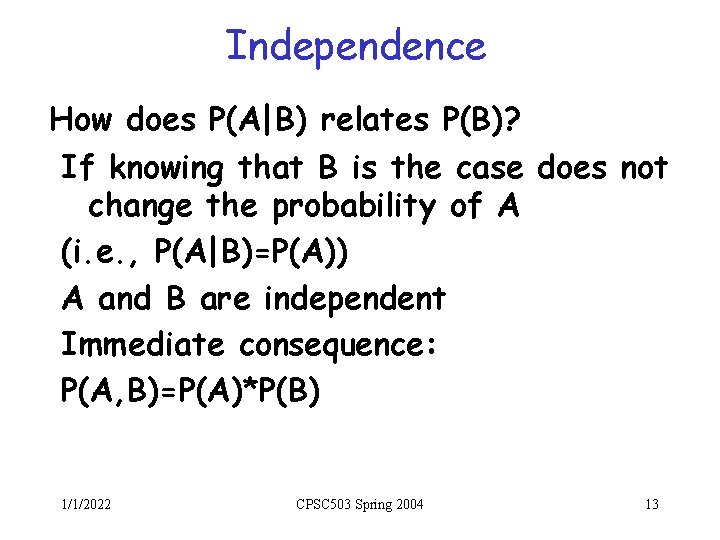

Independence
How does $P(A|B)$ relate to $P(A)$?
If knowing that $B$ is the case does not change the probability of $A$ (i.e., $P(A|B) = P(A)$), then $A$ and $B$ are independent.
Immediate consequence: $P(A, B) = P(A) P(B)$

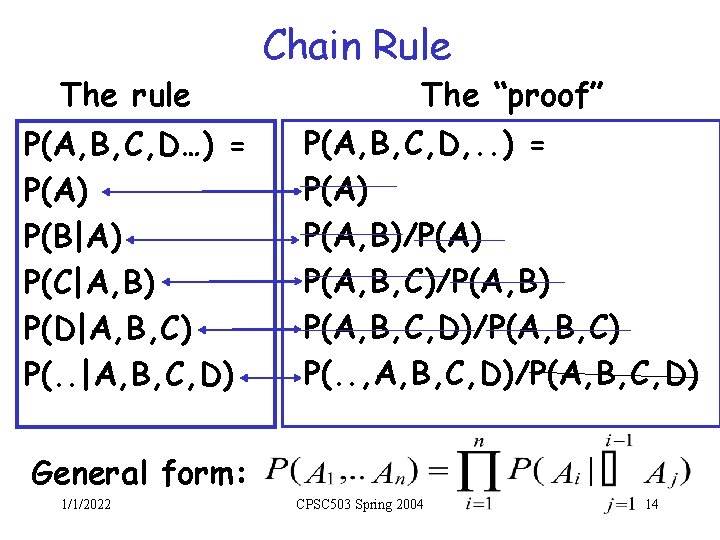
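The product test $P(A, B) = P(A) P(B)$ is easy to run on a small sample space. A minimal sketch, again assuming three tosses of a fair coin; the event names are illustrative.

```python
from fractions import Fraction
from itertools import product

omega = ["".join(t) for t in product("HT", repeat=3)]

def prob(event):
    """P(event) under a uniform distribution over omega (fair coin assumed)."""
    return Fraction(sum(1 for o in omega if event(o)), len(omega))

def first_is_tail(o):
    return o[0] == "T"

def second_is_tail(o):
    return o[1] == "T"

def exactly_two_tails(o):
    return o.count("T") == 2

def independent(e1, e2):
    """True when P(e1, e2) equals P(e1) * P(e2)."""
    return prob(lambda o: e1(o) and e2(o)) == prob(e1) * prob(e2)

separate_tosses = independent(first_is_tail, second_is_tail)    # 1/4 == 1/2 * 1/2
toss_and_count = independent(first_is_tail, exactly_two_tails)  # 1/4 != 1/2 * 3/8
print(separate_tosses, toss_and_count)  # True False
```

Separate tosses are independent, while "first toss is a tail" and "exactly two tails" are not: knowing the first toss changes the probability of the count.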

Chain Rule
The rule: $P(A, B, C, D, \dots) = P(A) \, P(B|A) \, P(C|A, B) \, P(D|A, B, C) \, P(\dots|A, B, C, D)$
The “proof”: $P(A, B, C, D, \dots) = P(A) \cdot \frac{P(A, B)}{P(A)} \cdot \frac{P(A, B, C)}{P(A, B)} \cdot \frac{P(A, B, C, D)}{P(A, B, C)} \cdot \frac{P(A, B, C, D, \dots)}{P(A, B, C, D)}$
General form: $P(A_1, \dots, A_n) = \prod_{i=1}^{n} P(A_i | A_1, \dots, A_{i-1})$

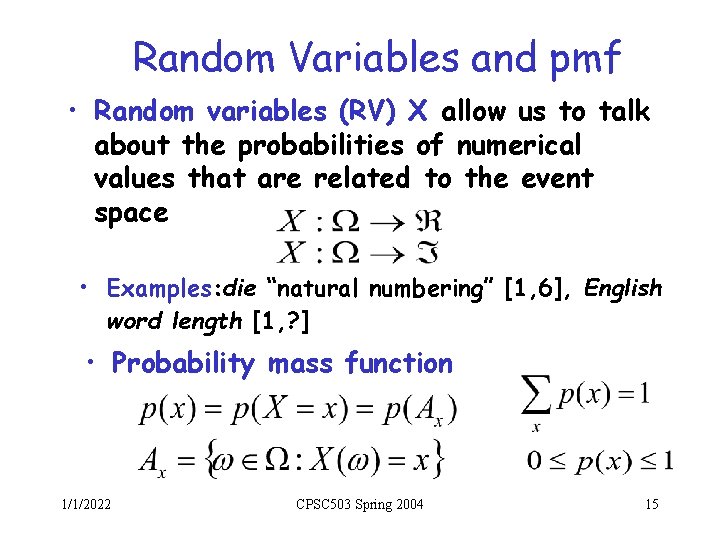
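The telescoping product in the “proof” can be verified numerically. The sketch below assumes three tosses of a fair coin and three deliberately dependent events; `prob_all` and `chain_rule_product` are hypothetical helper names.

```python
from fractions import Fraction
from itertools import product

omega = ["".join(t) for t in product("HT", repeat=3)]

def prob_all(events):
    """Joint probability that every event in the list holds (uniform outcomes assumed)."""
    return Fraction(sum(1 for o in omega if all(e(o) for e in events)), len(omega))

def chain_rule_product(events):
    """P(A1) * P(A2|A1) * ... with each factor computed as a ratio of joint probabilities."""
    result = Fraction(1)
    for i in range(len(events)):
        # P(A_i | A_1..A_{i-1}) = P(A_1..A_i) / P(A_1..A_{i-1}); the empty prefix has probability 1.
        result *= prob_all(events[: i + 1]) / prob_all(events[:i])
    return result

def first_is_tail(o):
    return o[0] == "T"

def at_least_two_tails(o):
    return o.count("T") >= 2

def exactly_two_tails(o):
    return o.count("T") == 2

events = [first_is_tail, at_least_two_tails, exactly_two_tails]
print(prob_all(events), chain_rule_product(events))  # 1/4 1/4
```

Here the factors are 1/2, 3/4 and 2/3, whose product matches the joint probability 1/4.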

Random Variables and pmf
• Random variables (RV) $X$ allow us to talk about the probabilities of numerical values that are related to the event space
• Examples: die with “natural numbering” $[1, 6]$, English word length $[1, ?]$
• Probability mass function

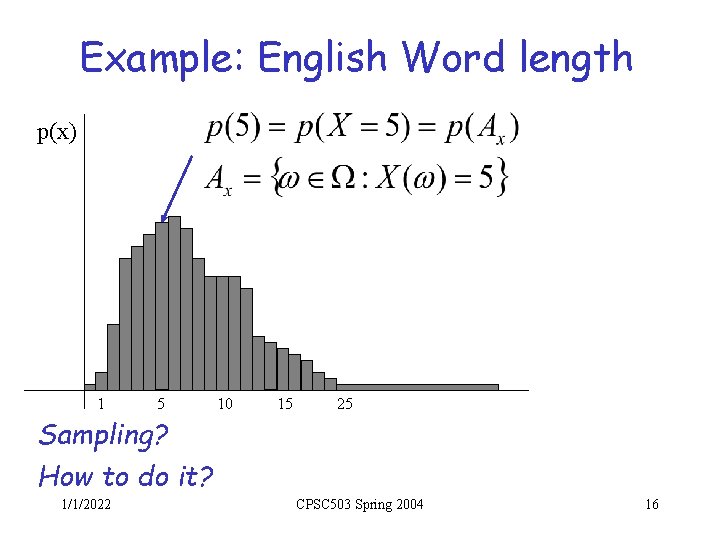

Example: English Word Length
[Figure: $p(x)$ plotted against word length $x$, with axis ticks from 1 to 25]
Sampling? How to do it?

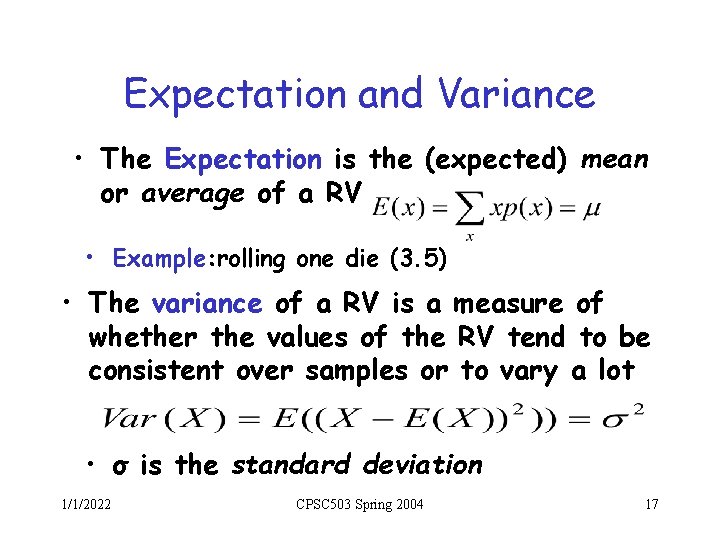
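One way to approach the sampling question is to take a text sample and use relative frequencies of word lengths as the estimated pmf. The sketch below is an assumption about how this might be done, not the lecture's procedure; the sample sentence and the tokenization (whitespace split, punctuation stripped) are illustrative.

```python
from collections import Counter

def word_length_pmf(text):
    """Estimate p(x) = P(word length = x) by relative frequency in `text`."""
    words = [w.strip(".,;:!?\"'()") for w in text.split()]
    lengths = [len(w) for w in words if w]  # drop tokens that were only punctuation
    counts = Counter(lengths)
    n = len(lengths)
    return {length: counts[length] / n for length in sorted(counts)}

sample = "Why do we need probabilities and information theory?"
pmf = word_length_pmf(sample)
print(pmf)  # {2: 0.25, 3: 0.25, 4: 0.125, 6: 0.125, 11: 0.125, 13: 0.125}
```

A sample this small gives a very rough estimate; a realistic pmf needs a large and representative corpus.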

Expectation and Variance
• The expectation is the (expected) mean or average of a RV
• Example: rolling one die (3.5)
• The variance of a RV is a measure of whether the values of the RV tend to be consistent over samples or to vary a lot
• $\sigma$ is the standard deviation

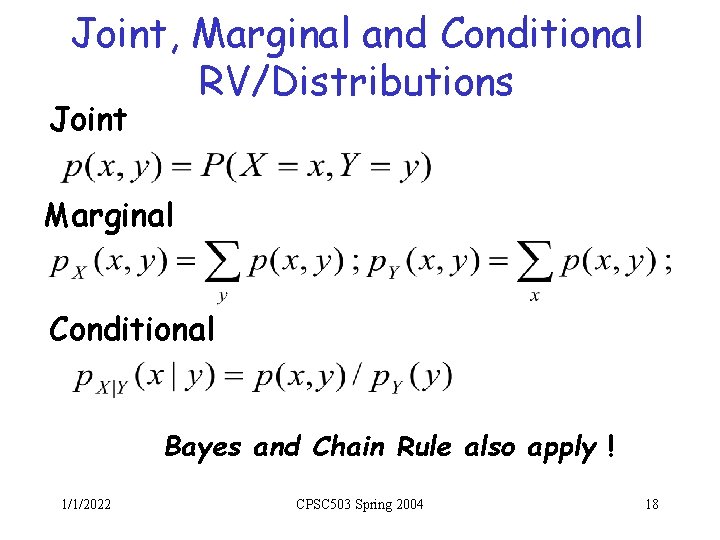
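The die example can be reproduced directly. This sketch assumes the standard definitions $E(X) = \sum_x x \, p(x)$ and $Var(X) = E((X - E(X))^2)$, and a fair die; the function names are illustrative.

```python
from fractions import Fraction

def expectation(pmf):
    """E(X) = sum over x of x * p(x)."""
    return sum(x * p for x, p in pmf.items())

def variance(pmf):
    """Var(X) = sum over x of p(x) * (x - E(X))^2."""
    mu = expectation(pmf)
    return sum(p * (x - mu) ** 2 for x, p in pmf.items())

die = {face: Fraction(1, 6) for face in range(1, 7)}  # fair die assumed

mean = expectation(die)
var = variance(die)
sigma = float(var) ** 0.5  # standard deviation

print(mean, var, round(sigma, 3))  # 7/2 35/12 1.708
```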

Joint, Marginal and Conditional RV/Distributions
• Joint
• Marginal
• Conditional
Bayes and Chain Rule also apply!

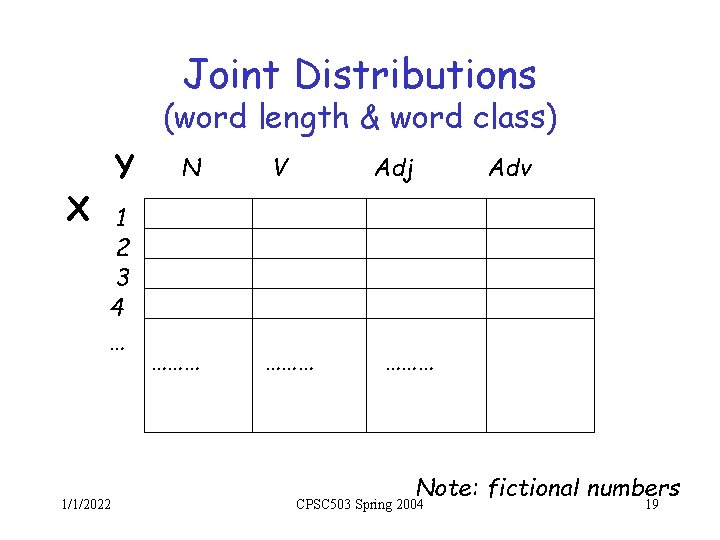

Joint Distributions (word length & word class)
[Table: joint distribution of $X$ (word length: 1, 2, 3, 4, …) and $Y$ (word class: N, V, Adj, Adv)]
Note: fictional numbers

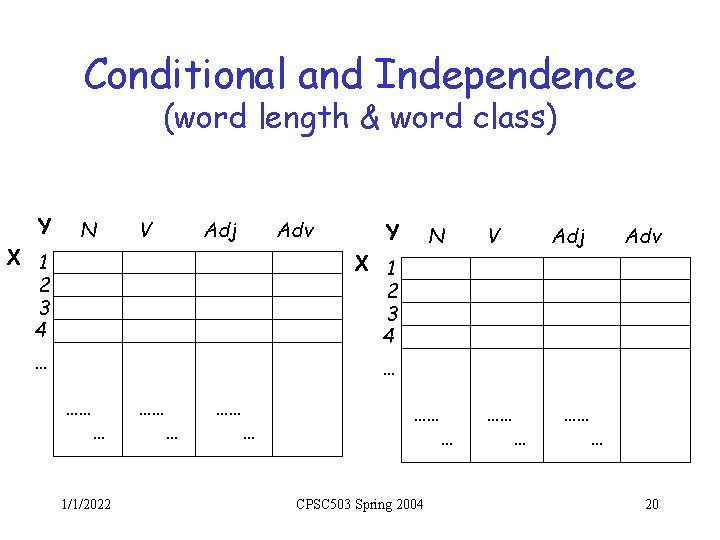

Conditional and Independence (word length & word class)
[Two tables over $X$ (word length: 1, 2, 3, 4, …) and $Y$ (word class: N, V, Adj, Adv)]
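Marginal and conditional distributions can be read off a joint table by summing and normalizing. The sketch below uses made-up counts: they are hypothetical and are not the figures from the slides.

```python
from fractions import Fraction

# Hypothetical counts of (word class, word length) pairs; not the slide's numbers.
counts = {
    "N":   {1: 2, 2: 8, 3: 15, 4: 15},
    "V":   {1: 3, 2: 7, 3: 10, 4: 10},
    "Adj": {1: 1, 2: 4, 3: 7, 4: 8},
    "Adv": {1: 1, 2: 3, 3: 3, 4: 3},
}
total = sum(c for row in counts.values() for c in row.values())

# Joint distribution p(y, x): word class y, word length x.
joint = {(y, x): Fraction(c, total) for y, row in counts.items() for x, c in row.items()}

def marginal_x(x):
    """p(x): sum the joint over all word classes."""
    return sum(p for (_, xi), p in joint.items() if xi == x)

def marginal_y(y):
    """p(y): sum the joint over all word lengths."""
    return sum(p for (yi, _), p in joint.items() if yi == y)

def conditional_x_given_y(x, y):
    """p(x | y) = p(x, y) / p(y)."""
    return joint[(y, x)] / marginal_y(y)

# X and Y are independent only if p(x, y) = p(x) p(y) in every cell.
is_independent = all(p == marginal_y(y) * marginal_x(x) for (y, x), p in joint.items())

print(marginal_y("N"), marginal_x(3), conditional_x_given_y(3, "N"), is_independent)
# 2/5 7/20 3/8 False
```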

Standard Distributions
Discrete
• Binomial
• Multinomial
Continuous
• Normal
Go back to your Stats textbook …

Today 28/1
• Why do we need probabilities and information theory?
• Basic Probability Theory
• Basic Information Theory

Entropy
• Def 1. Measure of uncertainty
• Def 2. Measure of the information that we need to resolve an uncertain situation
• Def 3. Measure of the information that we obtain from an experiment that resolves an uncertain situation
– Let $p(x) = P(X = x)$, where $x \in X$
– $H(p) = H(X) = -\sum_{x \in X} p(x) \log_2 p(x)$
– It is normally measured in bits
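The entropy formula translates into a few lines of Python. In this sketch the example distributions are illustrative, and terms with $p(x) = 0$ are skipped, following the usual convention that they contribute nothing.

```python
import math

def entropy(pmf):
    """H(p) = -sum_x p(x) log2 p(x), in bits; zero-probability terms are skipped."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

fair_coin = {"head": 0.5, "tail": 0.5}
biased_coin = {"head": 0.9, "tail": 0.1}
fair_die = {face: 1 / 6 for face in range(1, 7)}

print(entropy(fair_coin))    # 1.0 bit: maximal uncertainty for two outcomes
print(entropy(biased_coin))  # about 0.469 bits: the outcome is easier to predict
print(entropy(fair_die))     # about 2.585 bits, i.e. log2(6)
```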

Entropy (extra slides)
– Using the formula: example with a binary outcome
– The limits (why exactly that formula?)
  • Entropy and expectation
  • Coding interpretation
– Joint and conditional entropy
– Summary of key properties

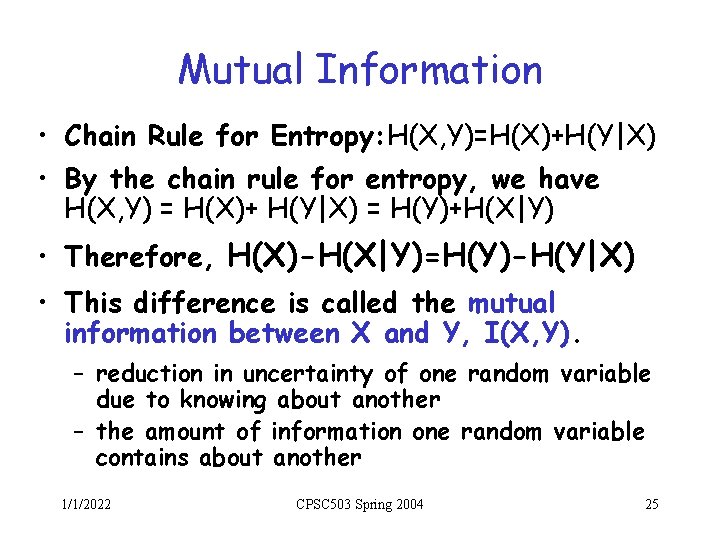

Mutual Information
• Chain rule for entropy: $H(X, Y) = H(X) + H(Y|X)$
• By the chain rule for entropy, we have $H(X, Y) = H(X) + H(Y|X) = H(Y) + H(X|Y)$
• Therefore, $H(X) - H(X|Y) = H(Y) - H(Y|X)$
• This difference is called the mutual information between $X$ and $Y$, $I(X, Y)$
– the reduction in uncertainty of one random variable due to knowing about another
– the amount of information one random variable contains about another

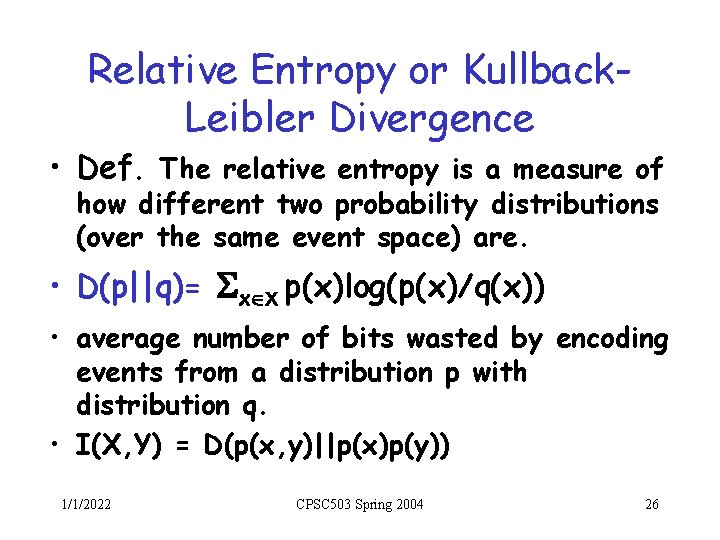
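The symmetry $H(X) - H(X|Y) = H(Y) - H(Y|X)$ can be checked numerically. In this sketch the joint distribution is hypothetical, and conditional entropy is obtained from the chain rule as $H(X|Y) = H(X, Y) - H(Y)$.

```python
import math

def entropy(pmf):
    """H(p) in bits; zero-probability terms are skipped."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

def marginal(joint, axis):
    """Sum the joint p(x, y) over the other variable (axis 0 gives p(x), axis 1 gives p(y))."""
    out = {}
    for pair, p in joint.items():
        out[pair[axis]] = out.get(pair[axis], 0.0) + p
    return out

# Hypothetical joint distribution over two binary variables that tend to agree.
joint = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.1, (1, 1): 0.4}

p_x = marginal(joint, 0)
p_y = marginal(joint, 1)
h_xy = entropy(joint)

h_x_given_y = h_xy - entropy(p_y)  # chain rule: H(X, Y) = H(Y) + H(X|Y)
h_y_given_x = h_xy - entropy(p_x)  # chain rule: H(X, Y) = H(X) + H(Y|X)

mi_from_x = entropy(p_x) - h_x_given_y
mi_from_y = entropy(p_y) - h_y_given_x
print(round(mi_from_x, 4), round(mi_from_y, 4))  # 0.2781 0.2781
```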

Relative Entropy or Kullback-Leibler Divergence
• Def. The relative entropy is a measure of how different two probability distributions (over the same event space) are.
• $D(p \| q) = \sum_{x \in X} p(x) \log_2 \frac{p(x)}{q(x)}$
• Average number of bits wasted by encoding events from a distribution $p$ with a code based on distribution $q$.
• $I(X, Y) = D(p(x, y) \| p(x) p(y))$
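A short sketch of relative entropy, including the mutual-information identity on the last bullet. The distributions are hypothetical, base-2 logarithms are assumed so that the result is in bits, and $q(x) > 0$ is required wherever $p(x) > 0$.

```python
import math

def kl_divergence(p, q):
    """D(p||q) = sum_x p(x) log2(p(x)/q(x)); requires q(x) > 0 wherever p(x) > 0."""
    return sum(px * math.log2(px / q[x]) for x, px in p.items() if px > 0)

fair = {"head": 0.5, "tail": 0.5}
biased = {"head": 0.9, "tail": 0.1}

d_fair_biased = kl_divergence(fair, biased)  # about 0.737 bits
d_biased_fair = kl_divergence(biased, fair)  # about 0.531 bits: D is not symmetric
d_same = kl_divergence(fair, fair)           # 0.0: identical distributions

# Mutual information as D(p(x, y) || p(x) p(y)) for a hypothetical joint distribution.
joint = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.1, (1, 1): 0.4}
p_x = {0: 0.5, 1: 0.5}  # marginals of the joint above
p_y = {0: 0.5, 1: 0.5}
independent_model = {(x, y): p_x[x] * p_y[y] for (x, y) in joint}
mutual_information = kl_divergence(joint, independent_model)  # about 0.278 bits

print(round(d_fair_biased, 3), round(d_biased_fair, 3), d_same, round(mutual_information, 3))
```

Because $D(p \| q) \neq D(q \| p)$ in general, relative entropy is not a distance in the metric sense.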

Next Time
• Probabilistic models applied to spelling
• Read Ch. 5 up to p. 156
