CII’s 10-10 Performance Assessment Campaign: Implementation Session

- Slides: 31

CII’s 10-10 Performance Assessment Campaign: Implementation Session

CII Performance Assessment Committee

2013 Annual Conference, July 29–31 • Orlando

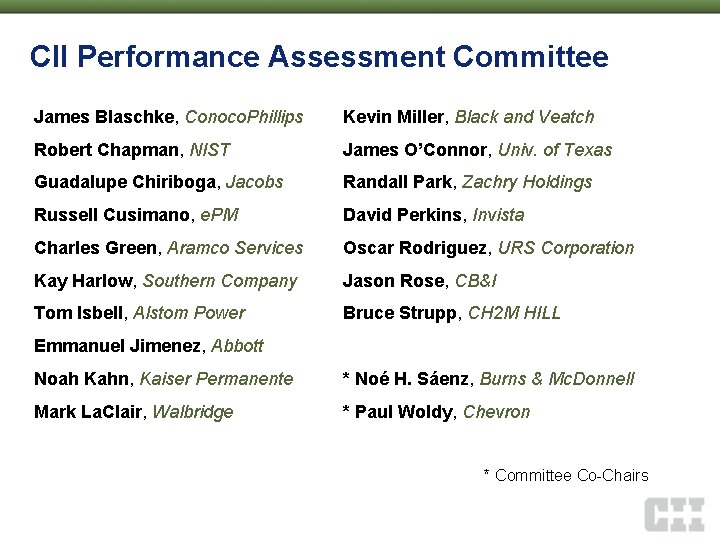

CII Performance Assessment Committee

- James Blaschke, ConocoPhillips
- Kevin Miller, Black and Veatch
- Robert Chapman, NIST
- James O’Connor, Univ. of Texas
- Guadalupe Chiriboga, Jacobs
- Randall Park, Zachry Holdings
- Russell Cusimano, ePM
- David Perkins, Invista
- Charles Green, Aramco Services
- Oscar Rodriguez, URS Corporation
- Kay Harlow, Southern Company
- Jason Rose, CB&I
- Tom Isbell, Alstom Power
- Bruce Strupp, CH2M HILL
- Emmanuel Jimenez, Abbott
- Noah Kahn, Kaiser Permanente
- *Noé H. Sáenz, Burns & McDonnell
- Mark LaClair, Walbridge
- *Paul Woldy, Chevron

*Committee Co-Chairs

Agenda

Moderator: Noé H. Sáenz, Burns & McDonnell

Panel:
- Theory: Stephen Mulva, CII
- Application: Mike Elliott, Phillips 66
- Results: Bruce Strupp, CH2M HILL

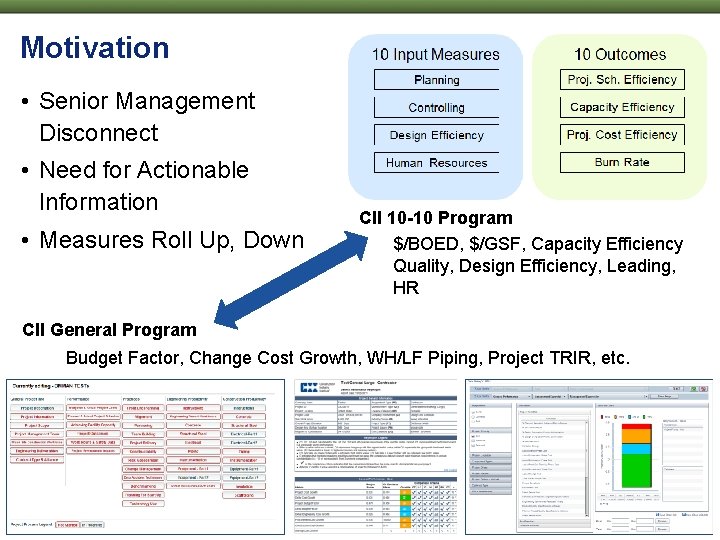

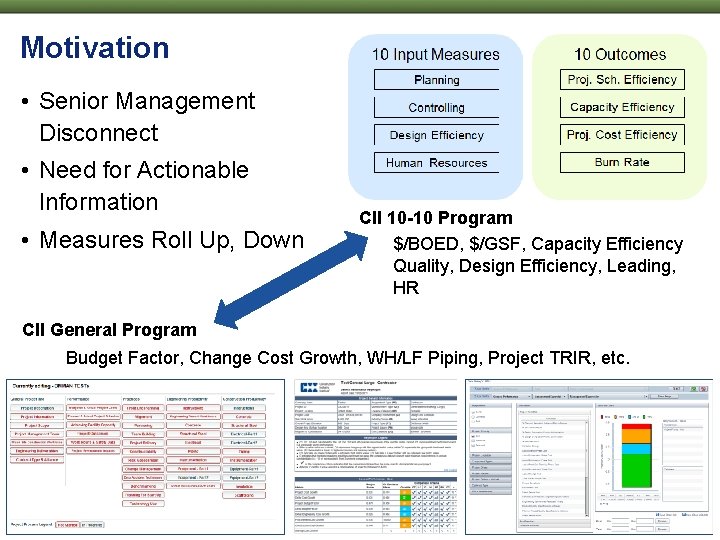

Motivation

- Senior Management Disconnect
- Need for Actionable Information
- Measures Roll Up, Down
  - CII 10-10 Program: $/BOED, $/GSF, Capacity Efficiency, Quality, Design Efficiency, Leading, HR
  - CII General Program: Budget Factor, Change Cost Growth, WH/LF Piping, Project TRIR, etc.

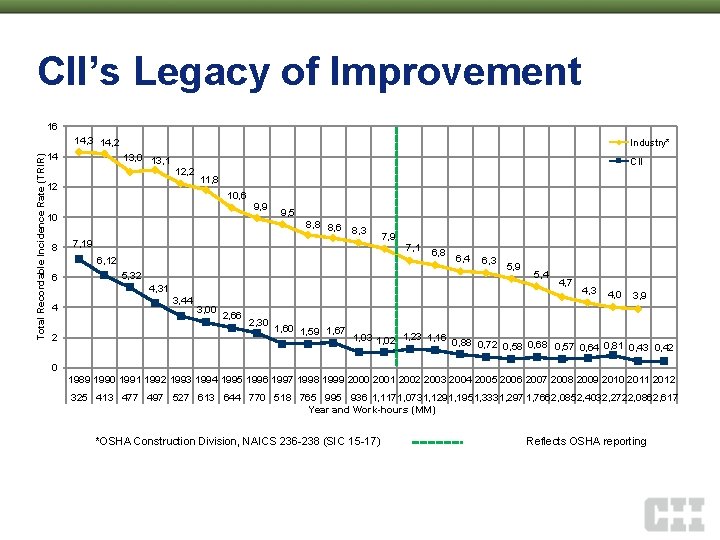

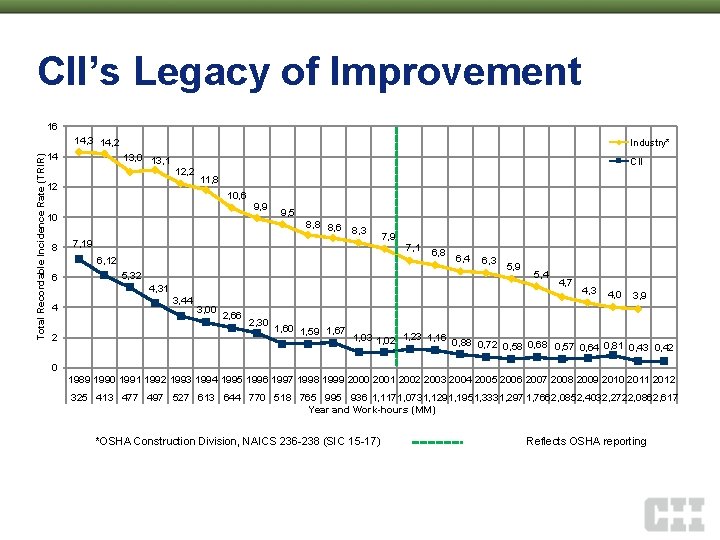

CII’s Legacy of Improvement

[Chart: Total Recordable Incidence Rate (TRIR), Industry* vs. CII, 1989–2012. Horizontal axis: Year and Work-hours (MM).]

*OSHA Construction Division, NAICS 236-238 (SIC 15-17). Reflects OSHA reporting.

Theory: Stephen Mulva, CII

We Stand for the Project!

- Project – Company – Individual
- “Governing Dynamics” (courtesy Universal Pictures)

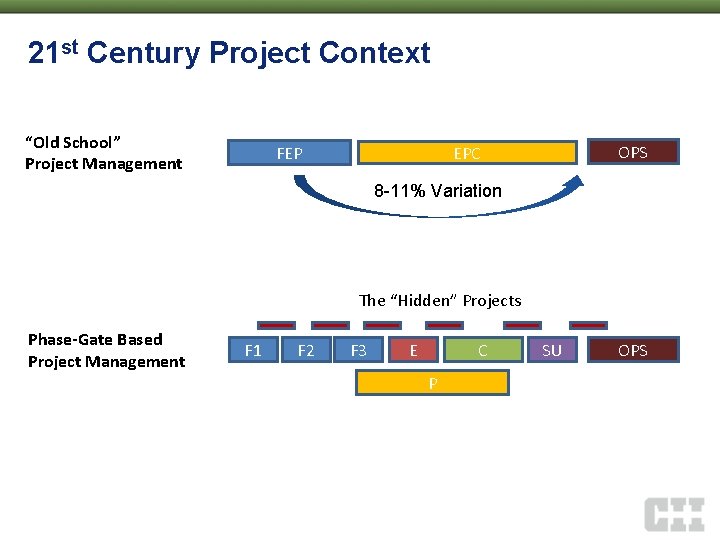

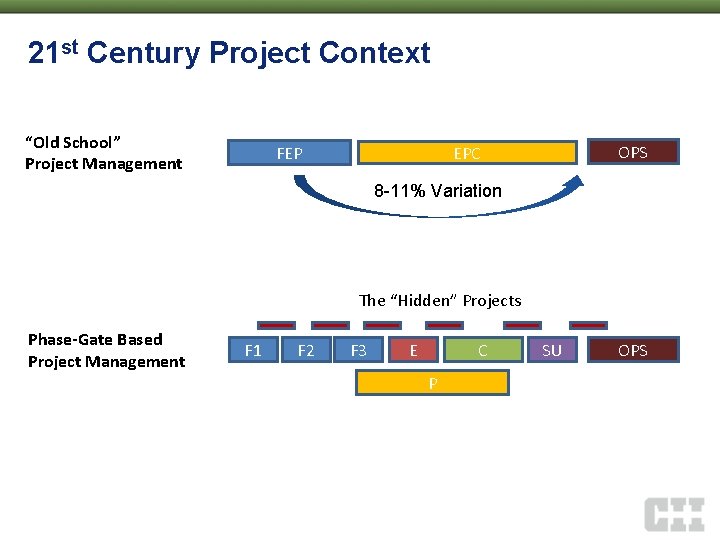

21st Century Project Context

[Diagram: “Old School” Project Management (FEP, EPC, OPS; 8-11% Variation; The “Hidden” Projects) compared with Phase-Gate Based Project Management (F1, F2, F3, E, C, P, SU, OPS).]

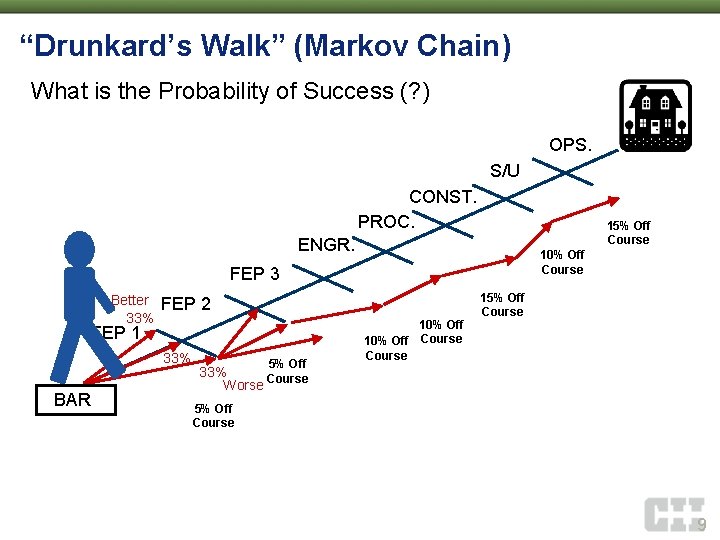

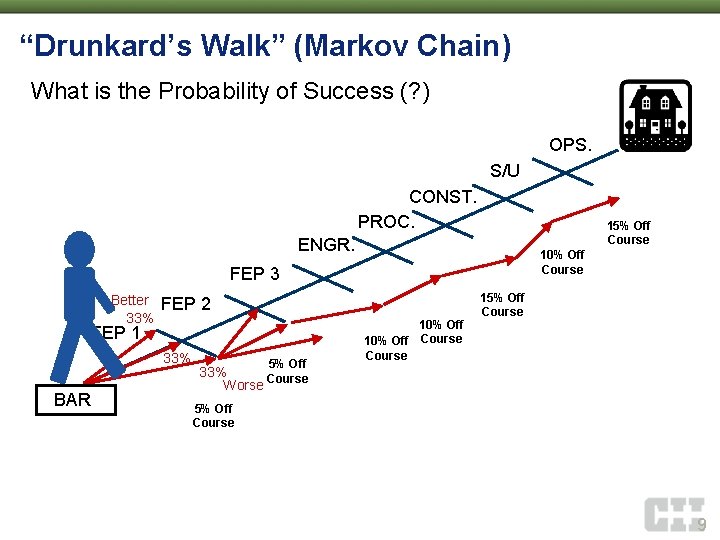

“Drunkard’s Walk” (Markov Chain)

What is the Probability of Success (?)

[Diagram: a project path through the phases FEP 1, FEP 2, FEP 3, ENGR., PROC., CONST., S/U, and OPS, drawn relative to the BAR. Branches are labeled Better (33%), BAR (33%), and Worse (33%), with bands marked 5%, 10%, and 15% Off Course on either side.]
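One way to make the question on the “Drunkard’s Walk” slide concrete is to treat each phase as one step of a simple random walk. The Python sketch below is illustrative only and is not taken from the presentation. It assumes that every phase moves the project one step better, leaves it on its current course, or moves it one step worse with probability 1/3 each, and that one step equals 5% off course. The names `position_distribution` and `prob_within` are hypothetical helpers introduced for this example.

```python
from fractions import Fraction

# Phase names as they appear on the slide.
PHASES = ["FEP 1", "FEP 2", "FEP 3", "ENGR.", "PROC.", "CONST.", "S/U", "OPS"]

# Assumption: each phase moves the project one step better (-1), leaves it
# unchanged (0), or moves it one step worse (+1), each with probability 1/3.
STEP_PROBS = {-1: Fraction(1, 3), 0: Fraction(1, 3), 1: Fraction(1, 3)}

# Assumption: one step corresponds to 5% off course.
STEP_SIZE_PCT = 5


def position_distribution(n_phases):
    """Return {position: probability} after n_phases steps, starting at 0."""
    dist = {0: Fraction(1)}
    for _ in range(n_phases):
        nxt = {}
        for pos, p in dist.items():
            for step, q in STEP_PROBS.items():
                nxt[pos + step] = nxt.get(pos + step, Fraction(0)) + p * q
        dist = nxt
    return dist


def prob_within(dist, max_off_course_pct):
    """Probability of ending no more than max_off_course_pct from the bar."""
    max_steps = max_off_course_pct // STEP_SIZE_PCT
    return sum(p for pos, p in dist.items() if abs(pos) <= max_steps)


final = position_distribution(len(PHASES))
for band in (0, 5, 10, 15):
    print(f"within {band}% of the bar: {float(prob_within(final, band)):.3f}")
```

Exact fractions are used instead of random sampling so the result is reproducible. Under different step sizes or unequal probabilities the numbers change, so the output should be read as a property of these assumptions, not as a CII finding.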

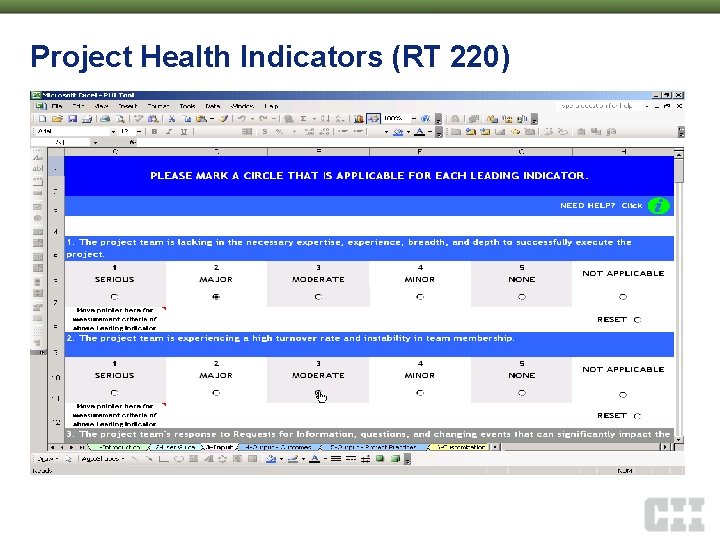

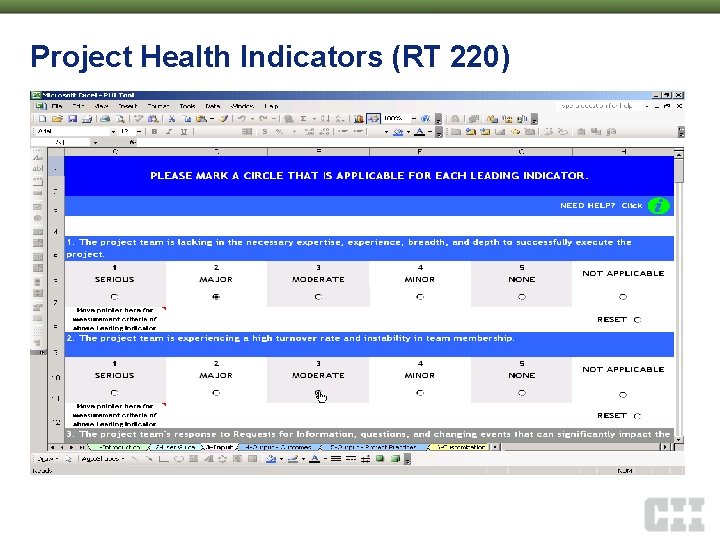

Project Health Indicators (RT 220)

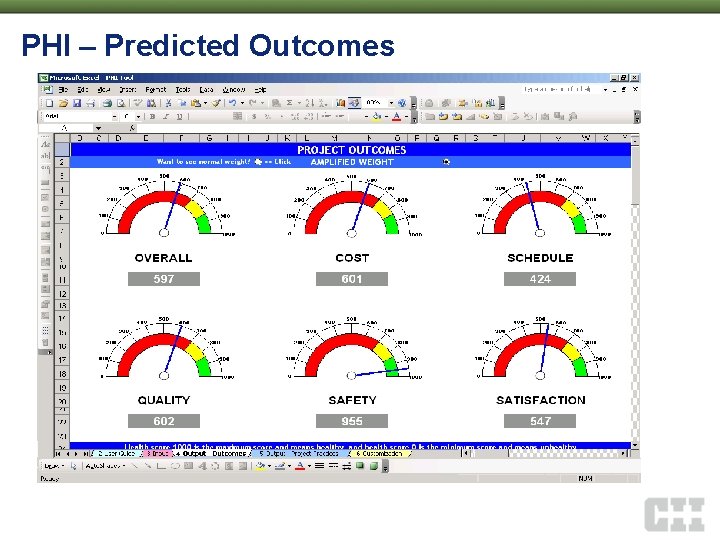

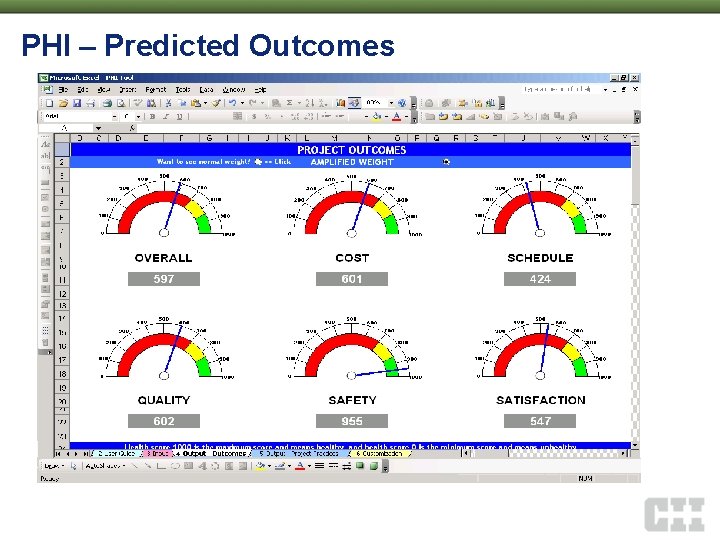

PHI – Predicted Outcomes

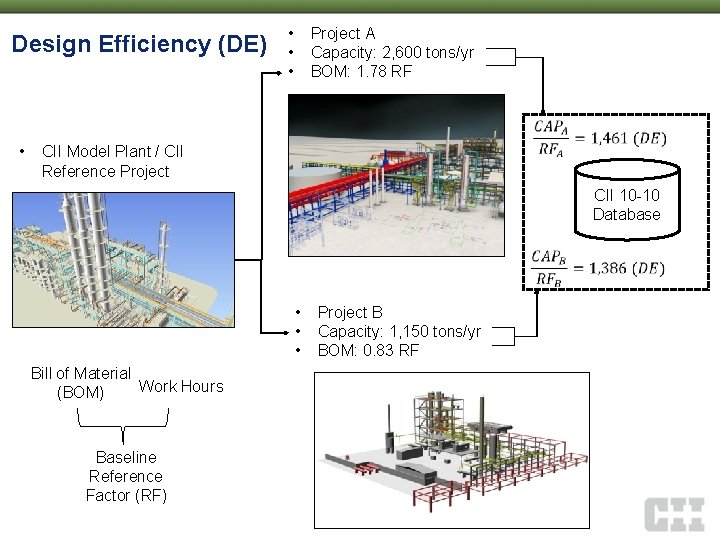

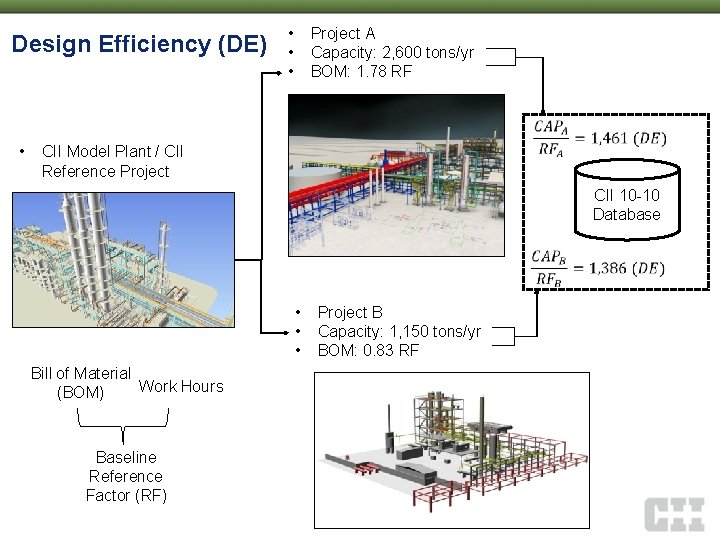

Design Efficiency (DE)

- Project A
  - Capacity: 2,600 tons/yr
  - BOM: 1.78 RF
- Project B
  - Capacity: 1,150 tons/yr
  - BOM: 0.83 RF
- CII Model Plant / CII Reference Project (CII 10-10 Database): Bill of Material Work Hours (BOM) Baseline Reference Factor (RF)

“Famous” Construction Quotes

“Construction would be easy, if it weren’t for all the people involved” (Ted VanWyck)

“When we pay for benchmarking, we typically tend to find the data being asked” (Sanat Doshi)

Application: Mike Elliott, Phillips 66

10-10 Questionnaires

- Practice-Based
  - Yes/No
  - 5-point scales (strongly agree to strongly disagree)
- Phase-Based
  - Help for current projects
  - Answered as project nears phase completion
- Quantitative, yet simple to answer
- Research-based, empirically tested
- Paper-Based (2013-2014)
- Examples…

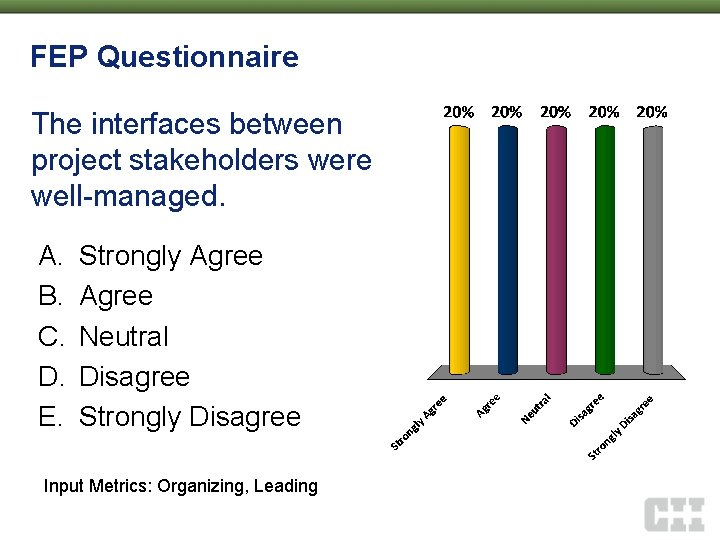

FEP Questionnaire

The interfaces between project stakeholders were well-managed.

A. Strongly Agree  B. Agree  C. Neutral  D. Disagree  E. Strongly Disagree

Input Metrics: Organizing, Leading

Engineering Questionnaire

The equipment procurement and vendor schedules were a significant challenge or problem for this project.

A. Strongly Agree  B. Agree  C. Neutral  D. Disagree  E. Strongly Disagree

Input Metrics: Planning, Controlling, Partnering & Supply Chain Management

Procurement Questionnaire

Preferred suppliers were used effectively to streamline the procurement process.

A. Strongly Agree  B. Agree  C. Neutral  D. Disagree  E. Strongly Disagree

Input Metrics: Planning, Controlling, Quality, and Partnering & Supply Chain Management (SCM)

Construction Questionnaire

The availability and competency of craft labor was adequate.

A. Strongly Agree  B. Agree  C. Neutral  D. Disagree  E. Strongly Disagree

Input Metrics: Planning, Controlling, Quality, HR and Safety

Start-Up Questionnaire

The project experienced an excessive number of project management team personnel changes.

A. Strongly Agree  B. Agree  C. Neutral  D. Disagree  E. Strongly Disagree

Input Metrics: Organizing, Leading, and Human Resources (HR)

Start-Up Questionnaire

- Which of the following statements characterize the decisions made by the manager(s) of this project? (please check all that apply)
  - Considered final and not revisited
  - Collaborative and inclusive
  - Made at the lowest appropriate level in the organization
  - Communicated promptly to the team
  - Made in a timely and effective manner
  - Consistent with the delegation of authority
- Input Measure: Leading

Results: Bruce Strupp, CH2M HILL

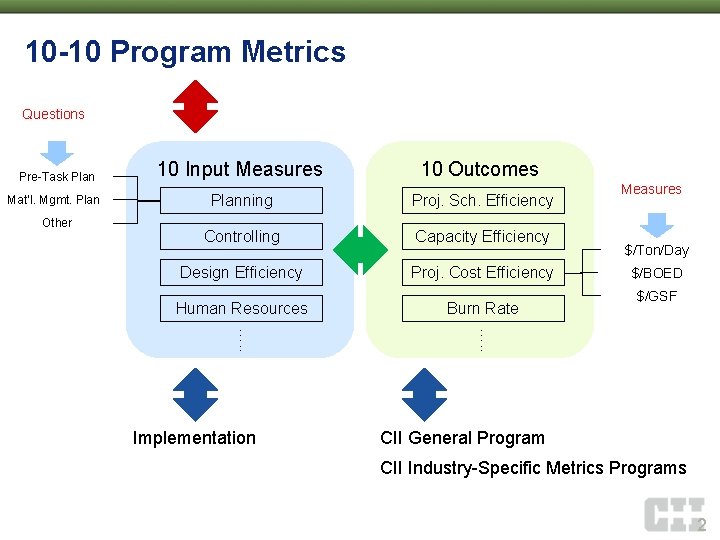

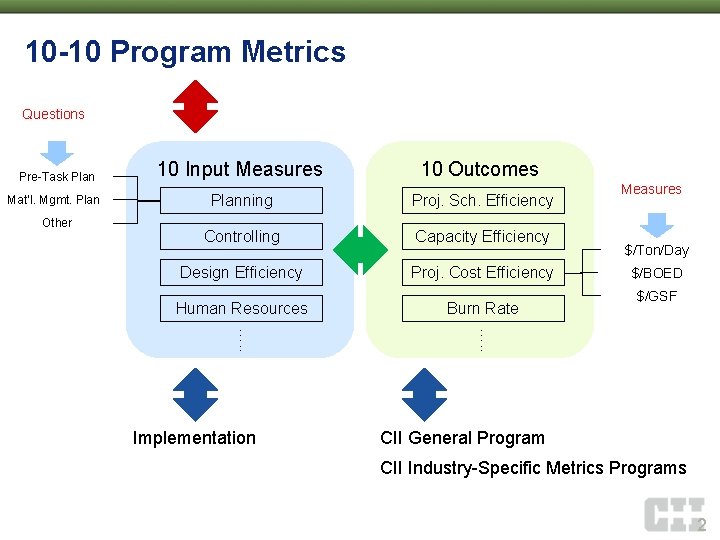

10-10 Program Metrics

[Diagram relating Questions, 10 Input Measures, and 10 Outcomes. Labels shown include Pre-Task Plan, Mat’l. Mgmt., Planning, Controlling, Design Efficiency, Human Resources, Proj. Sch. Efficiency, Capacity Efficiency, Proj. Cost Efficiency, Burn Rate, Other, $/Ton/Day, $/BOED, $/GSF, Implementation Measures, CII General Program, and CII Industry-Specific Metrics Programs.]

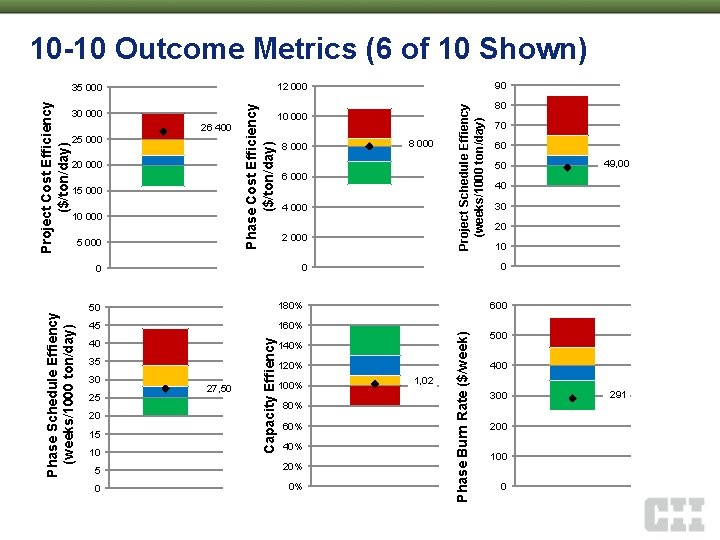

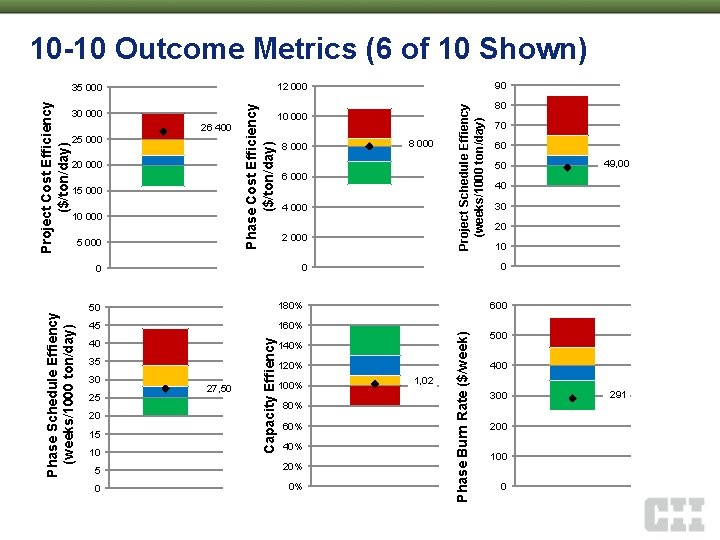

10-10 Outcome Metrics (6 of 10 Shown)

[Charts: Project Cost Efficiency ($/ton/day), Phase Cost Efficiency ($/ton/day), Project Schedule Efficiency (weeks/1000 ton/day), Phase Schedule Efficiency (weeks/1000 ton/day), Capacity Efficiency (%), and Phase Burn Rate ($/week).]
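The units on the outcome metrics above suggest simple ratios of cost, duration, and capacity. The Python sketch below shows that arithmetic for three of them. It is an assumption based only on the units printed on the slide, not CII’s published definitions. The `PhaseRecord` fields and the function names are hypothetical, and the input numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class PhaseRecord:
    """Hypothetical record for one project phase (all field names assumed)."""
    cost_usd: float              # phase cost in dollars
    duration_weeks: float        # phase duration in weeks
    capacity_ton_per_day: float  # facility capacity in tons per day


def cost_efficiency(rec):
    """Dollars per unit of capacity ($/ton/day)."""
    return rec.cost_usd / rec.capacity_ton_per_day


def schedule_efficiency(rec):
    """Weeks per 1000 ton/day of capacity."""
    return rec.duration_weeks / (rec.capacity_ton_per_day / 1000.0)


def burn_rate(rec):
    """Dollars spent per week ($/week)."""
    return rec.cost_usd / rec.duration_weeks


# Made-up numbers, chosen only to make the arithmetic easy to follow.
example = PhaseRecord(cost_usd=2_000_000, duration_weeks=40, capacity_ton_per_day=500)
print(cost_efficiency(example))      # dollars per ton/day
print(schedule_efficiency(example))  # weeks per 1000 ton/day
print(burn_rate(example))            # dollars per week
```

Normalizing by capacity is what would let projects of different sizes be compared on one scale, which appears to be the intent of the per-ton/day units.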

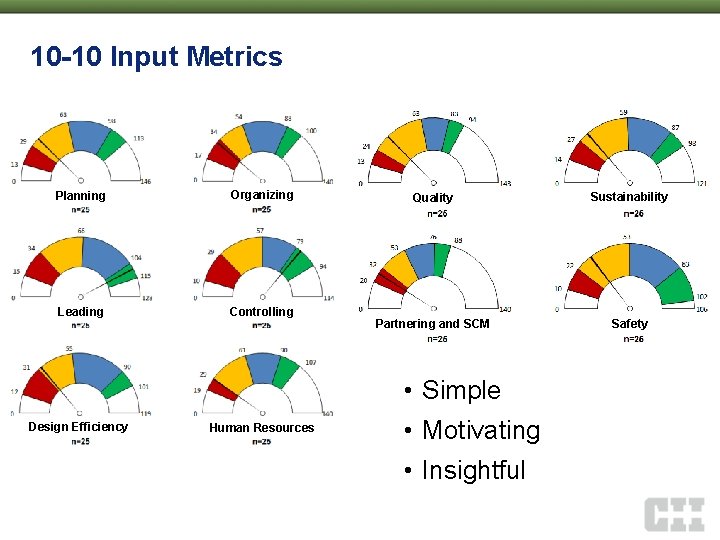

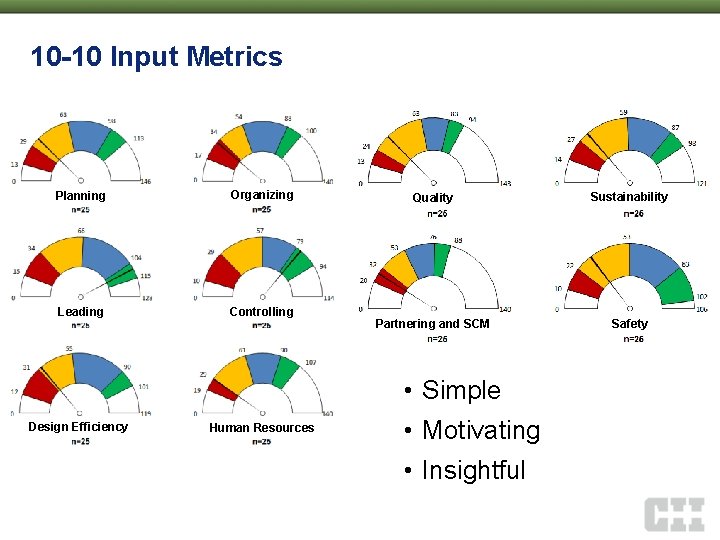

10-10 Input Metrics

Planning, Organizing, Leading, Controlling, Quality, Sustainability, Partnering and SCM, Safety, Design Efficiency, Human Resources

- Simple
- Motivating
- Insightful

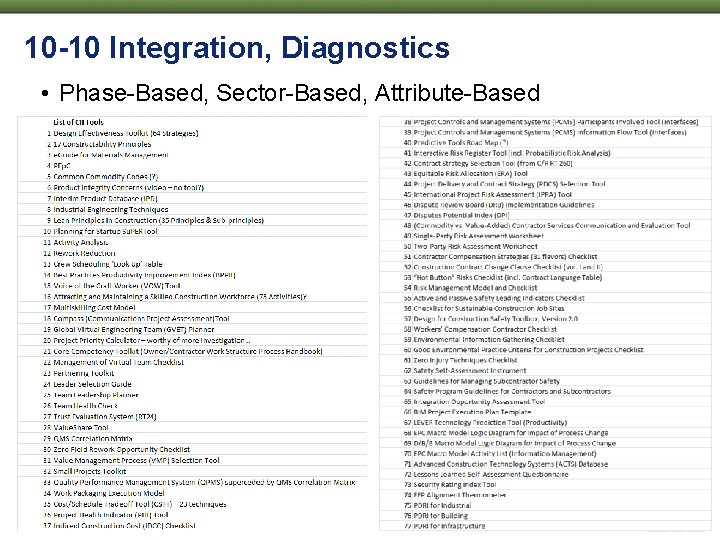

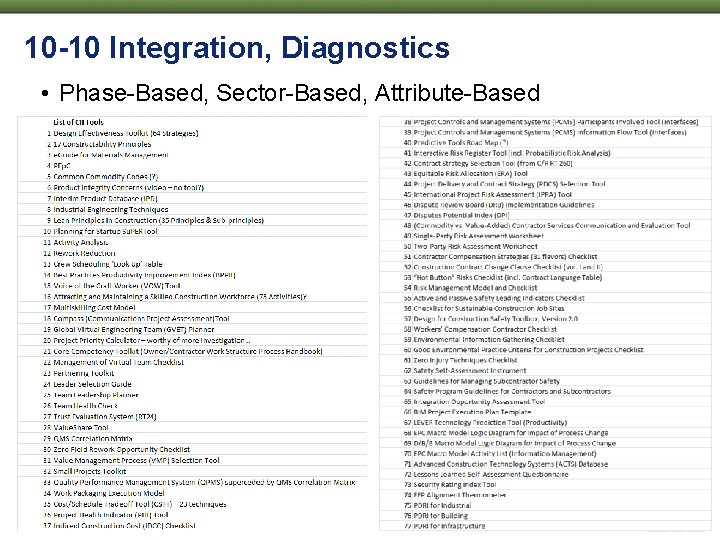

10-10 Integration, Diagnostics

- Phase-Based, Sector-Based, Attribute-Based

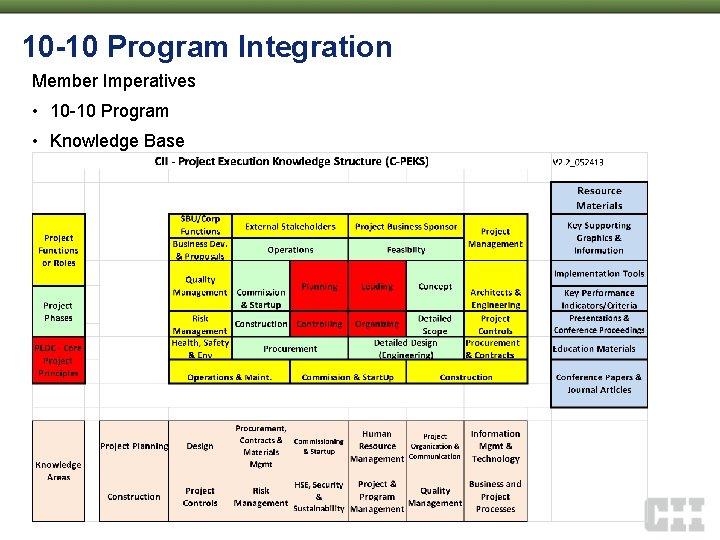

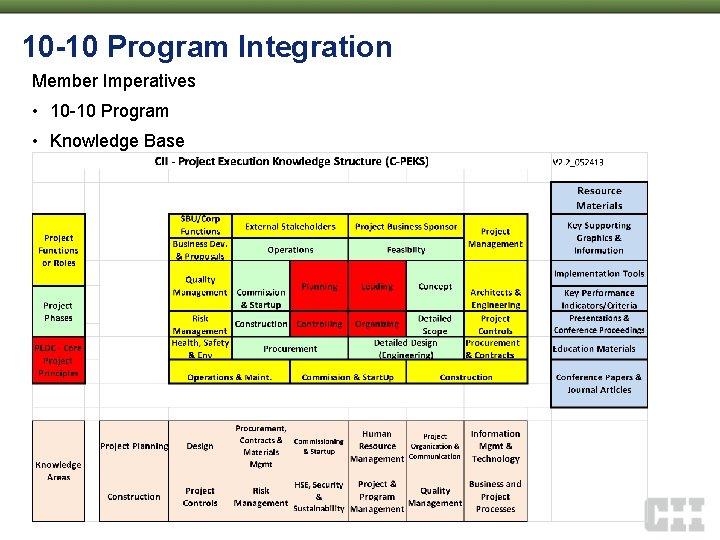

10-10 Program Integration

Member Imperatives

- 10-10 Program
- Knowledge Base

Campaign: Noé H. Sáenz, Burns & McDonnell

10-10 Program Campaign

- August 2013 – April 2014
  - Anonymous Data Collection (~1,200 PhaseProjects)
  - 10-10 Automation
- July 21-23, 2014 CII Annual Conference
- 2014 and Beyond
  - Company-level dashboard
  - Self-help vs. facilitated evaluation and implementation
- It starts NOW!

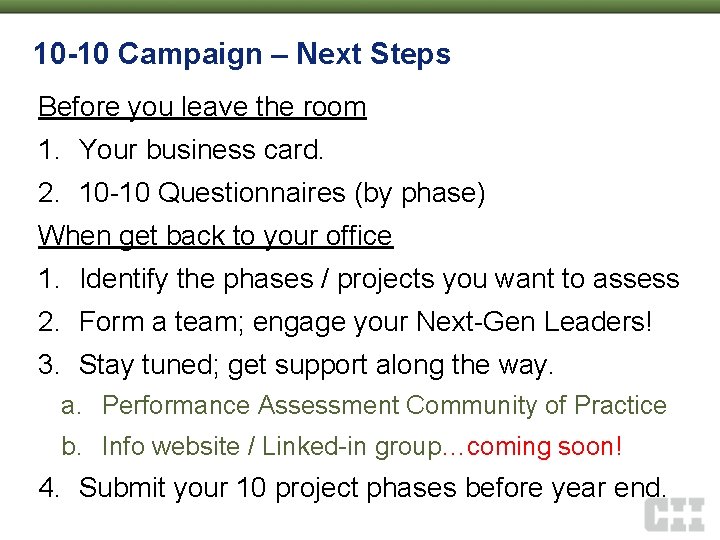

10-10 Campaign – Next Steps

Before you leave the room:

1. Your business card.
2. 10-10 Questionnaires (by phase)

When you get back to your office:

1. Identify the phases / projects you want to assess.
2. Form a team; engage your Next-Gen Leaders!
3. Stay tuned; get support along the way.
   a. Performance Assessment Community of Practice
   b. Info website / LinkedIn group…coming soon!
4. Submit your 10 project phases before year end.

Questions?

Moderator: Noé H. Sáenz, Burns & McDonnell

Panel:
- Theory: Stephen Mulva, CII
- Application: Mike Elliott, Phillips 66
- Results: Bruce Strupp, CH2M HILL