CAT Patterns for adopting dataflows in Power BI

CAT Patterns for adopting dataflows in Power BI Matthew Roche Principal Program Manager Microsoft @SQLAll. Father | https: //ssbipolar. com/ CUSTOMER ENGAGEMENT We remove deep technical issues to drive revenue, satisfaction, and adoption from our top customers EVANGELIZATION PATTERNS & PRACTICES We drive significant product awareness across many different channels We curate “How-to” & “Best practices” content for Power BI FIELD & PARTNER ENABLEMENT PRODUCT FEEDBACK We enable thousands of sellers/partners to drive revenue, satisfaction and adoption We influence the product roadmap through engineering feedback rhythms

Matthew Roche • It’s that purple-haired CAT guy! • Didn’t he take his shirt off that one time at PASS? • I wonder if he brought any swords… • I’m glad there isn’t a Manowar show tonight, because I want to learn dataflow stuff.

Introducing Power BI dataflows

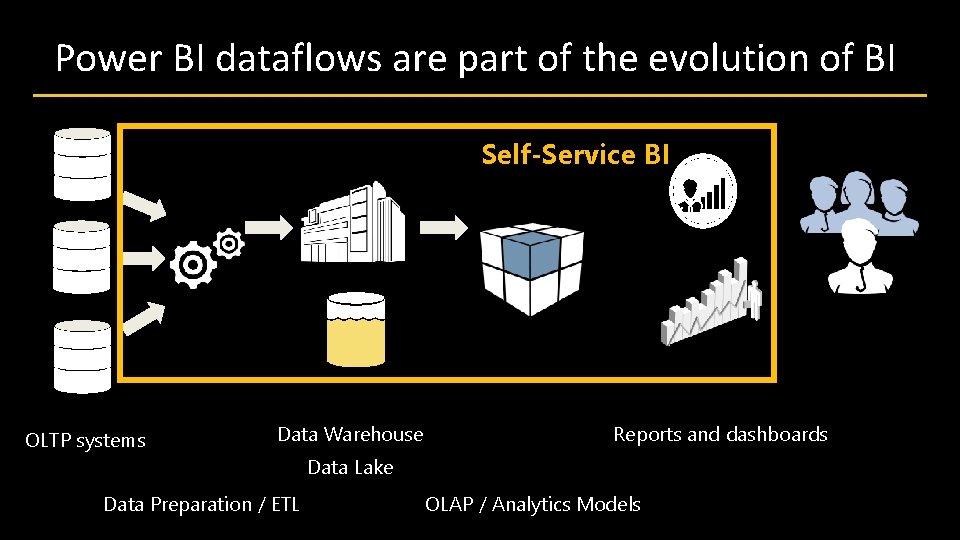

Power BI dataflows are part of the evolution of BI Self-Service BI OLTP systems Data Warehouse Reports and dashboards Data Lake Data Preparation / ETL OLAP / Analytics Models

Positioning dataflows

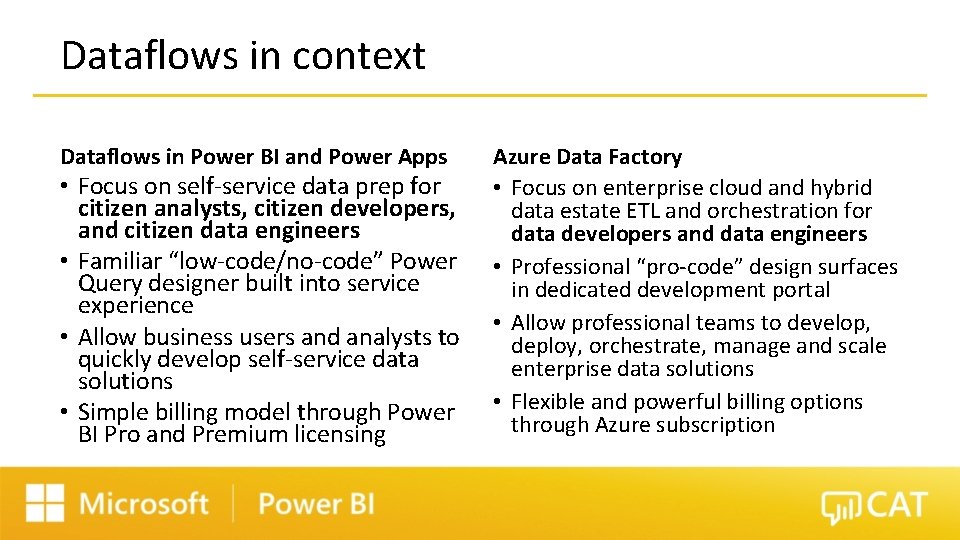

Dataflows in context Dataflows in Power BI and Power Apps • Focus on self-service data prep for citizen analysts, citizen developers, and citizen data engineers • Familiar “low-code/no-code” Power Query designer built into service experience • Allow business users and analysts to quickly develop self-service data solutions • Simple billing model through Power BI Pro and Premium licensing Azure Data Factory • Focus on enterprise cloud and hybrid data estate ETL and orchestration for data developers and data engineers • Professional “pro-code” design surfaces in dedicated development portal • Allow professional teams to develop, deploy, orchestrate, manage and scale enterprise data solutions • Flexible and powerful billing options through Azure subscription

Dataflow usage patterns

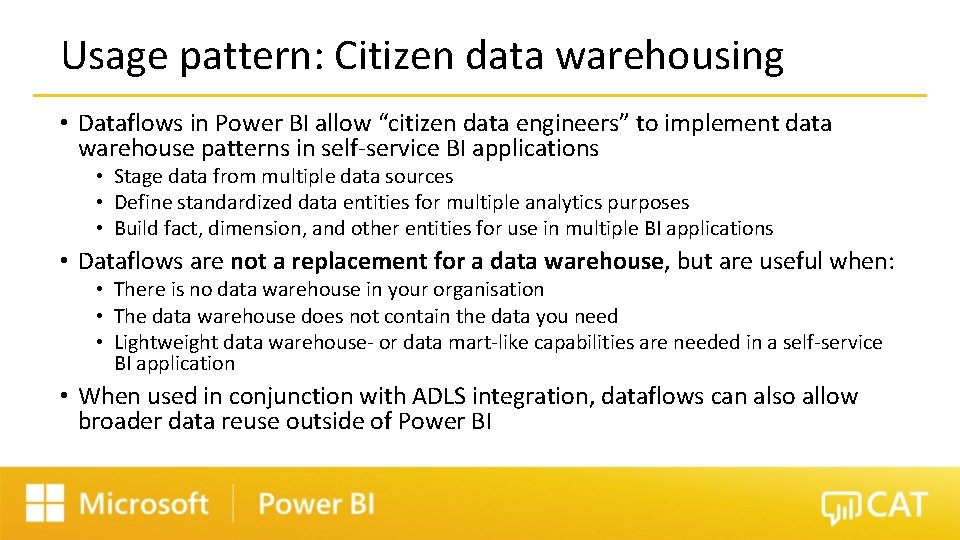

Usage pattern: Citizen data warehousing • Dataflows in Power BI allow “citizen data engineers” to implement data warehouse patterns in self-service BI applications • Stage data from multiple data sources • Define standardized data entities for multiple analytics purposes • Build fact, dimension, and other entities for use in multiple BI applications • Dataflows are not a replacement for a data warehouse, but are useful when: • There is no data warehouse in your organisation • The data warehouse does not contain the data you need • Lightweight data warehouse- or data mart-like capabilities are needed in a self-service BI application • When used in conjunction with ADLS integration, dataflows can also allow broader data reuse outside of Power BI

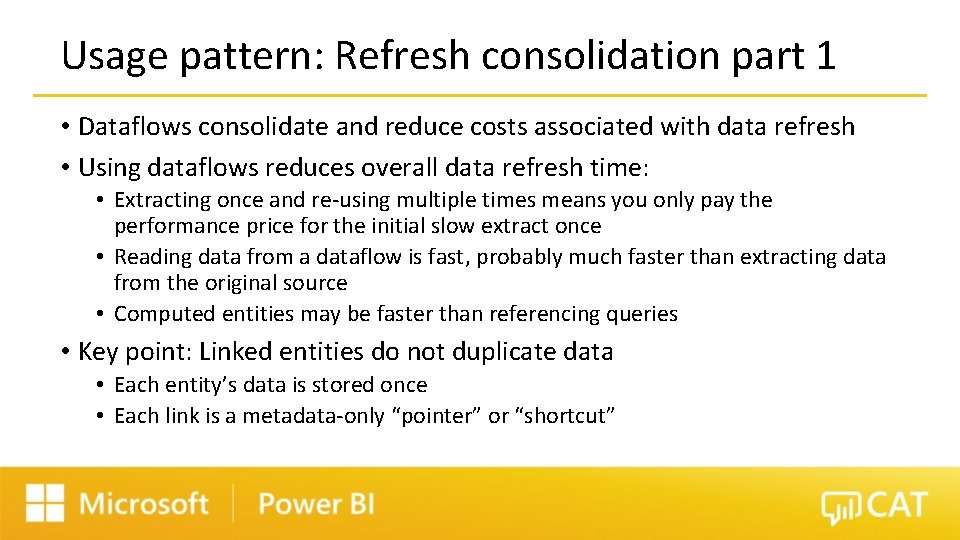

Usage pattern: Refresh consolidation part 1 • Dataflows consolidate and reduce costs associated with data refresh • Using dataflows reduces overall data refresh time: • Extracting once and re-using multiple times means you only pay the performance price for the initial slow extract once • Reading data from a dataflow is fast, probably much faster than extracting data from the original source • Computed entities may be faster than referencing queries • Key point: Linked entities do not duplicate data • Each entity’s data is stored once • Each link is a metadata-only “pointer” or “shortcut”

Usage pattern: Refresh consolidation part 2 • Dataflows consolidate and reduce costs associated with data refresh • Dataflow refresh can be scheduled independently of dataset refresh • Each dataflow can have its own refresh schedule • Each dataflow’s refresh schedule can be aligned with the data sources it references • Increases control and reduces number of calls to source system • Refresh against line-of-business database needing to align with load window / hours of operation • Refreshes against API data sources when there is a limit on the number of calls to the API, or costs associated with surplus API calls • Datasets refresh against dataflows without affecting source systems

Usage pattern: Data consistency • Dataflows increase consistency between datasets • Using dataflows helps increase structural consistency by reducing the chance that different users will make different decisions when preparing data • Dataflow refresh helps increase temporal consistency by having a single set of data extracted from source systems at a single point in time • Share complex M queries that some users would not be able to write • Share tables that have no source, such as a standard Date dimension generated programmatically using Power Query

Usage pattern: Simplify discovery and connectivity • Dataflows simplify the data source discovery and connection experience • Dataflows provide a consolidated discovery experience in “Get data” • All dataflows are visible using a single integrated experience • Dataflows are organized by workspace • Previews are available for all entities • Dataflows hide the complexity of connecting to a data source • No connection strings or URLs to remember • No additional authentication required – log in once to Power BI service using AAD • No drivers or specialized software to install

Dataflow adoption patterns

Adoption patterns: Authors • Dataflows are not broadly created by “citizen analysts” • If a user primarily creates reports for themselves, they’re unlikely to think about data reuse • If a user primarily creates a small number of reports for a small audience, they’re unlikely to think about data reuse • Because the benefits of dataflows are derived in large part from the reuse of data and data preparation logic, self-service dataflow authors are typically “citizen data engineers” who understand the benefits of reuse • Not every Power BI author needs to be a dataflow author • The current dataflow authoring experience raises the bar for adoption – most analysts will not leave one tool to perform related tasks in another tool • The most successful organizations are deliberately building these skills and competencies in their communities of practice

Adoption patterns: Authoring • Two common patterns for dataflow authoring • Citizen data engineers • ETL developers in IT / Center of Excellence • Three common patterns for dataflow creation • Project proactive – BI project team or citizen data engineer identifies key reusable data entities as part of project scope • Enterprise proactive – Central BI team or COE identifies key reusable data entities for analytics as independent strategic effort • Enterprise reactive – Central BI team or COE identifies redundancies and inefficiencies in Power BI lineage

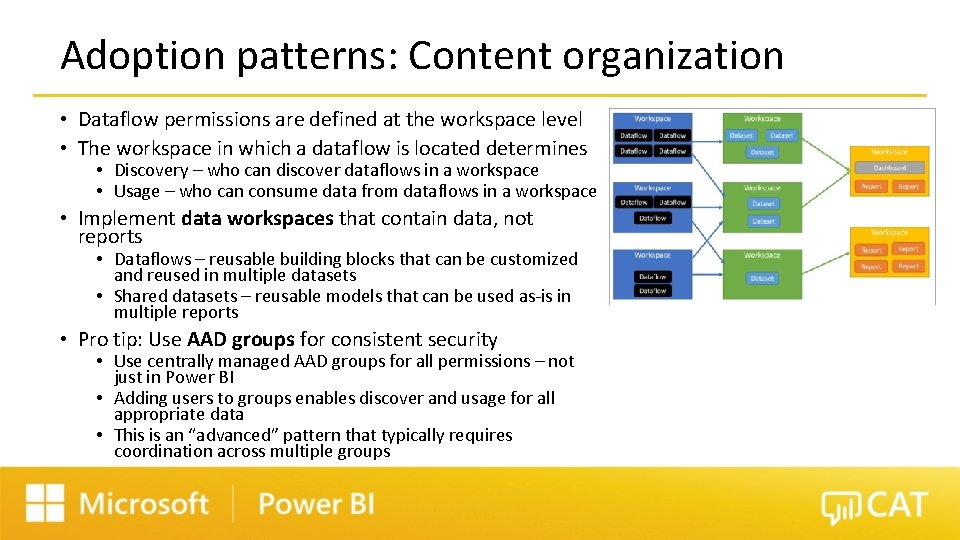

Adoption patterns: Content organization • Dataflow permissions are defined at the workspace level • The workspace in which a dataflow is located determines • Discovery – who can discover dataflows in a workspace • Usage – who can consume data from dataflows in a workspace • Implement data workspaces that contain data, not reports • Dataflows – reusable building blocks that can be customized and reused in multiple datasets • Shared datasets – reusable models that can be used as-is in multiple reports • Pro tip: Use AAD groups for consistent security • Use centrally managed AAD groups for all permissions – not just in Power BI • Adding users to groups enables discover and usage for all appropriate data • This is an “advanced” pattern that typically requires coordination across multiple groups

Adoption patterns: Hiding data source complexity • Some sources are more complex than others, such as API sources and ERP systems • Create dataflows to hide complexity of data sources • Typically IT-developed and -managed dataflows • Treated as a supported application / data source • Expose common data entities for larger groups of analysts • Horizontal partitioning of entity data • Upstream dataflows contain all data, available only to small group of privileged users • Downstream dataflows contain data curated subsets of data, available to members of appropriate AAD groups, implemented with linked and computed entities • Refresh is manually orchestrated – Composable ETL works within workspace boundaries

Adoption patterns: Promotion and community • Power BI authors won’t know about dataflows unless someone tells them • Most people will keep doing what they already do, because they are comfortable • Most people need a reason to change their behavior • Promote dataflows as a feature • Let Power BI authors know about dataflows and what they do • Encourage users to explore • Promote specific data sources • Let Power BI authors know about specific high-value dataflows • Use lineage to understand who is directly accessing data sources, bypassing dataflows • Promote opportunities to create dataflows • Include dataflows content in “advanced” Power BI author training • Make it obvious that dataflows are not just for IT to create • Visit https: //ssbipolar. com/building-a-data-culture/ for more ideas and guidance

Summary and resources

Related content from the Power BI CAT team • Official CAT team content • Power BI guidance documentation: https: //docs. microsoft. com/en-us/powerbi/guidance/ • Dataflows best practices: https: //docs. microsoft. com/en-us/power-bi/transform -model/service-dataflows-best-practices • Matthew Roche’s blog: • https: //ssbipolar. com/dataflows-in-power-bi/ • https: //ssbipolar. com/building-a-data-culture/ • Chris Webb’s blog: • https: //blog. crossjoin. co. uk/category/dataflows/

Q&A

- Slides: 21