Akhil Langer Harshit Dokania Laxmikant Kale Udatta Palekar



Akhil Langer, Harshit Dokania, Laxmikant Kale, Udatta Palekar\*

Parallel Programming Laboratory, Department of Computer Science, University of Illinois at Urbana-Champaign

\*Department of Business Administration, University of Illinois at Urbana-Champaign

http://charm.cs.uiuc.edu/research/energy

29th May 2015, The Eleventh Workshop on High-Performance, Power-Aware Computing (HPPAC), Hyderabad, India

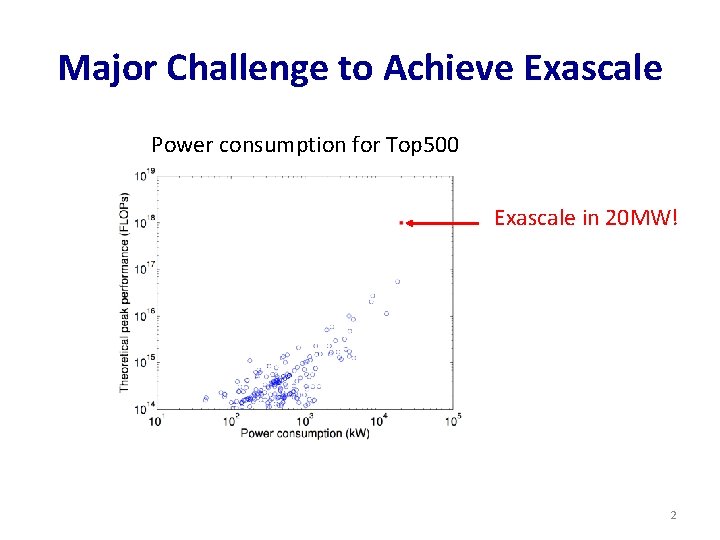

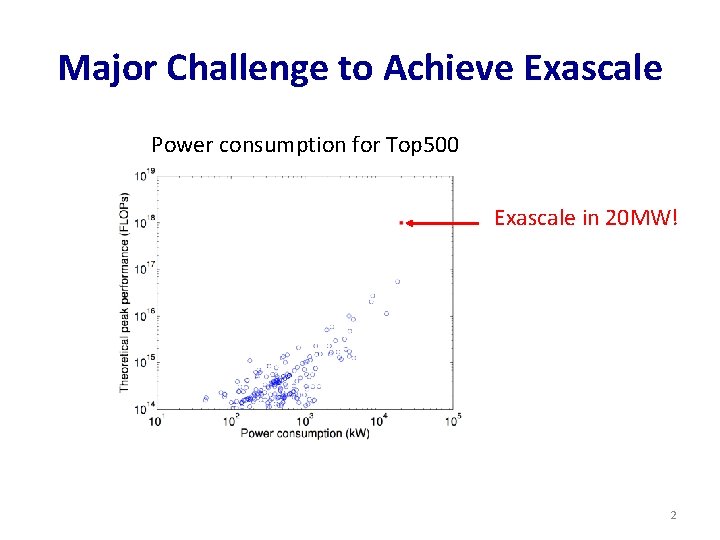

Major Challenge to Achieve Exascale

- Power consumption for Top 500
- Exascale in 20 MW!

Data Center Power

How is the power demand of a data center calculated?

- Using Thermal Design Power (TDP)!
- However, TDP is hardly reached!

Constraining CPU/Memory power

- Intel Sandy Bridge: Running Average Power Limit (RAPL) library
  - measure and set CPU/memory power

Constraining CPU/Memory power

Power caps are achieved using a combination of P-states and clock throttling:

- Performance states (or P-states) correspond to the processor's voltage and frequency, e.g. P0: 3 GHz, P1: 2.66 GHz, P2: 2.33 GHz, P3: 2 GHz
- Clock throttling: the processor is forced to be idle
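RAPL reports energy through cumulative counters, so measuring power comes down to differencing two readings over a time interval. The following is a minimal sketch of that step only, not the RAPL library used in this work. It assumes readings in microjoules and a known counter range, as exposed for example by the `energy_uj` and `max_energy_range_uj` files of the Linux powercap interface; the function name and parameters are hypothetical.

```python
def average_power_watts(energy_start_uj, energy_end_uj, elapsed_s, max_range_uj):
    """Average power (W) between two cumulative energy readings.

    Assumption: readings are in microjoules and the counter wraps back
    to zero once it passes max_range_uj, at most once per interval.
    """
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    delta_uj = energy_end_uj - energy_start_uj
    if delta_uj < 0:
        # The counter wrapped around between the two readings.
        delta_uj += max_range_uj
    # Convert microjoules to joules, then divide by seconds to get watts.
    return delta_uj / 1e6 / elapsed_s


# Example: 40 J consumed over 1 s corresponds to a 40 W average draw.
print(average_power_watts(1_000_000, 41_000_000, 1.0, 2**32))
```

The sampling interval should be short enough that the counter cannot wrap more than once between readings.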

Solution to Data Center Power

- Constrain power consumption of nodes
- Overprovisioning: use more nodes than a conventional data center for the same power budget

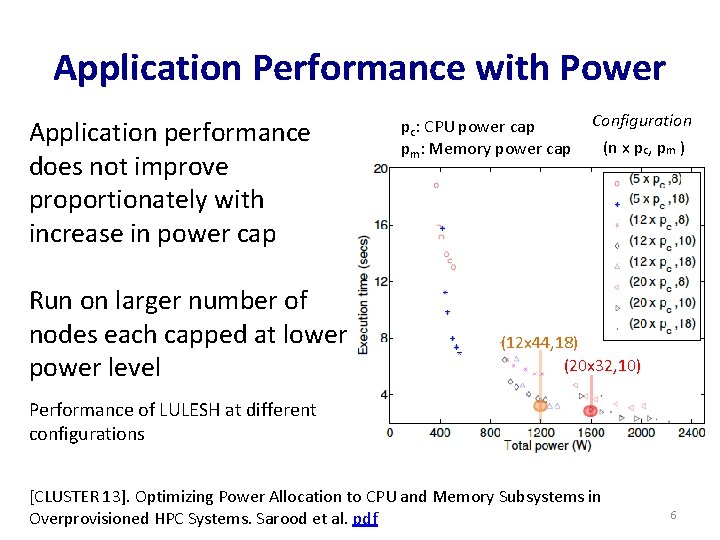

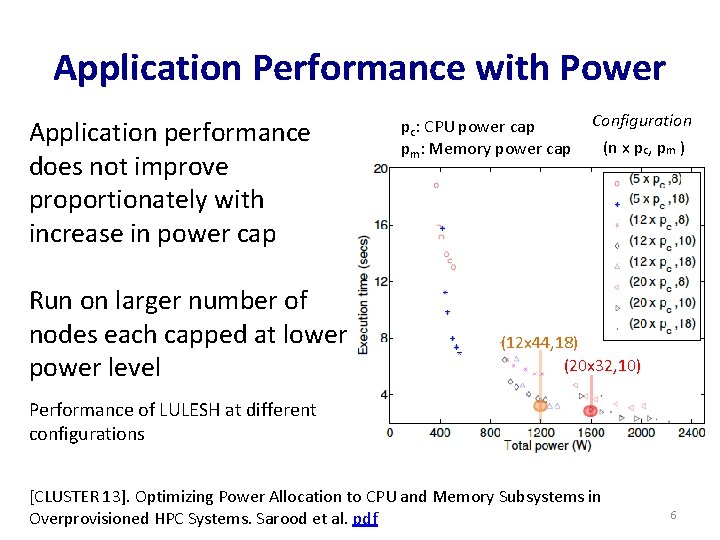

Application Performance with Power

- Application performance does not improve proportionately with an increase in power cap
- Run on a larger number of nodes, each capped at a lower power level

Configuration (n x pc, pm), where pc is the CPU power cap and pm is the memory power cap: (12 x 44, 18), (20 x 32, 10)

Performance of LULESH at different configurations

[CLUSTER 13]. Optimizing Power Allocation to CPU and Memory Subsystems in Overprovisioned HPC Systems. Sarood et al.

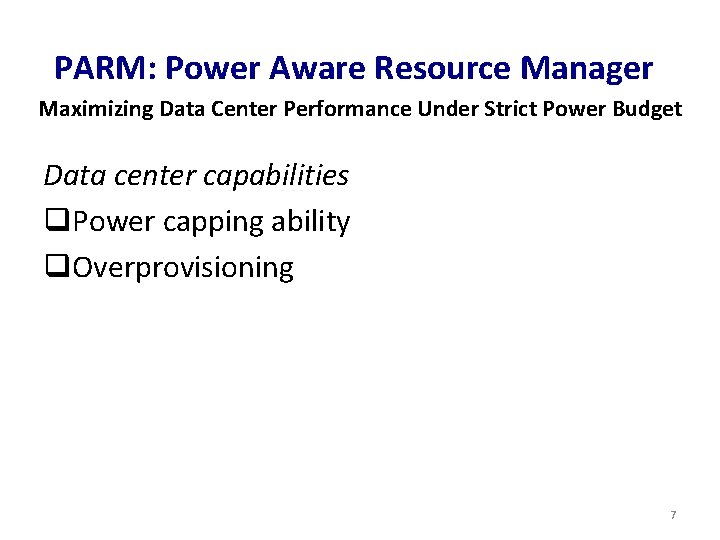

PARM: Power Aware Resource Manager

Maximizing Data Center Performance Under Strict Power Budget

Data center capabilities

- Power capping ability
- Overprovisioning

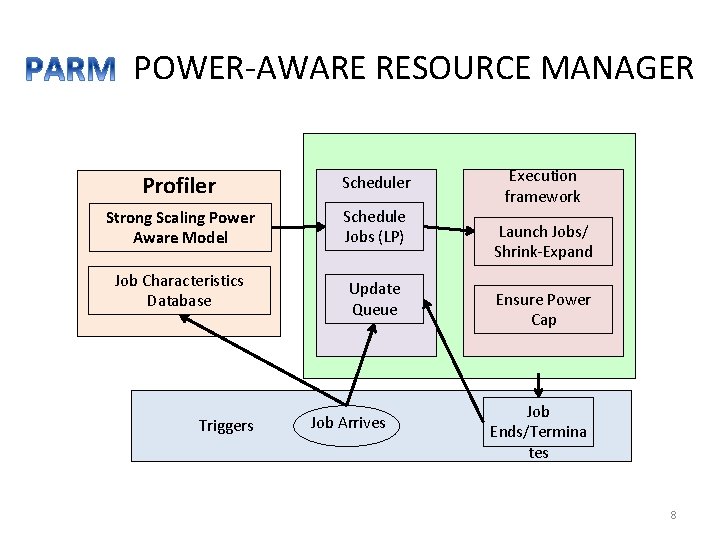

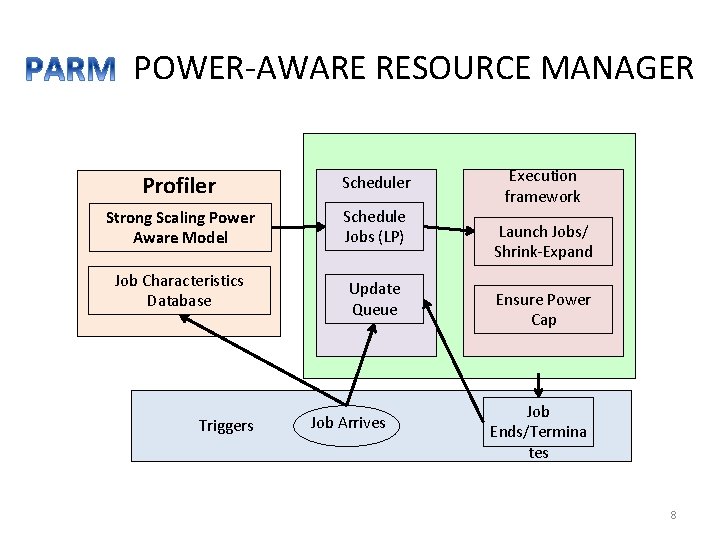

POWER-AWARE RESOURCE MANAGER (architecture diagram)

- Profiler: Strong Scaling Power Aware Model, Job Characteristics Database
- Scheduler: Schedule Jobs (LP), Update Queue
- Execution framework: Launch Jobs/Shrink-Expand, Ensure Power Cap
- Triggers: Job Arrives, Job Ends/Terminates

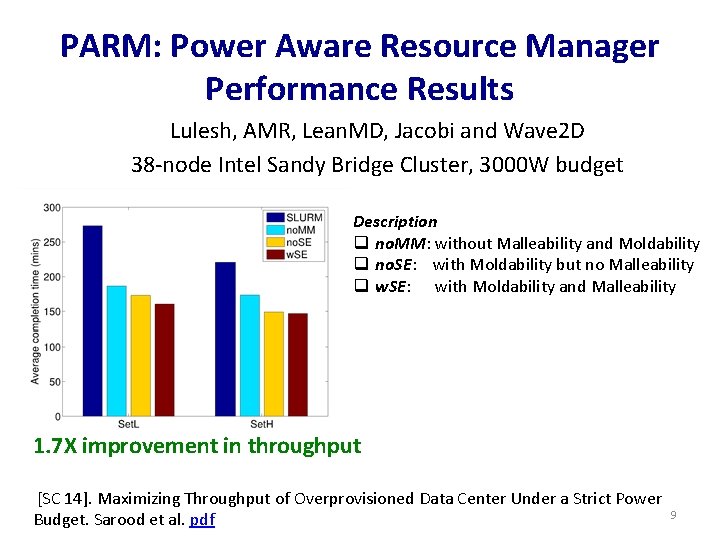

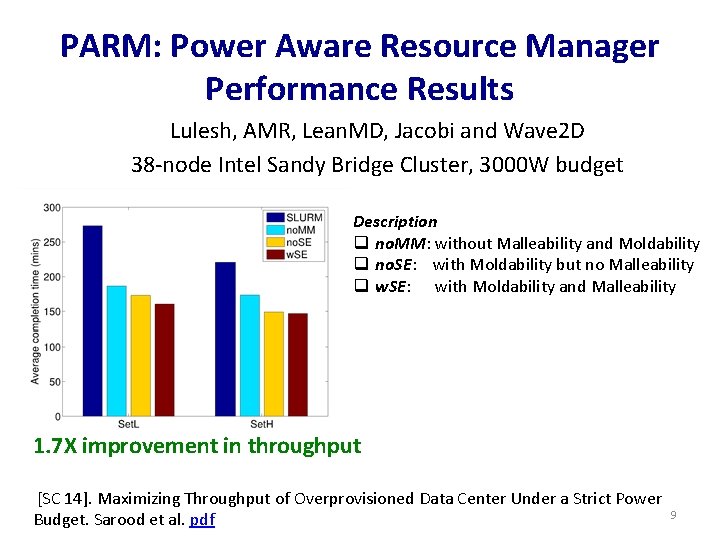

PARM: Power Aware Resource Manager

Performance Results

- Applications: Lulesh, AMR, LeanMD, Jacobi and Wave2D
- 38-node Intel Sandy Bridge cluster, 3000 W budget

Description

- noMM: without Malleability and Moldability
- noSE: with Moldability but no Malleability
- wSE: with Moldability and Malleability

1.7X improvement in throughput

[SC 14]. Maximizing Throughput of Overprovisioned Data Center Under a Strict Power Budget. Sarood et al.

Energy Consumption Analysis

- Although power is a critical constraint, high energy consumption can lead to excessive electricity costs
  - 20 MW power @ $0.07/kWh = USD 1M/month
- In the future, users may be charged in terms of energy units instead of core hours!
- Selecting the right configuration is important for a desirable energy-vs-time tradeoff
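The monthly cost figure follows from simple arithmetic. The sketch below reproduces it, assuming a constant 20 MW draw and a 30-day month; the 30-day month and the function name are assumptions made here for illustration.

```python
HOURS_PER_MONTH = 24 * 30  # assumption: a 30-day month


def monthly_energy_cost_usd(power_mw, price_per_kwh, hours=HOURS_PER_MONTH):
    """Electricity cost in USD for a constant power draw over `hours`."""
    power_kw = power_mw * 1000       # MW -> kW
    energy_kwh = power_kw * hours    # kW * h -> kWh
    return energy_kwh * price_per_kwh


cost = monthly_energy_cost_usd(power_mw=20, price_per_kwh=0.07)
# Roughly one million USD per month, in line with the slide's estimate.
print(f"{cost / 1e6:.2f} million USD per month")
```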

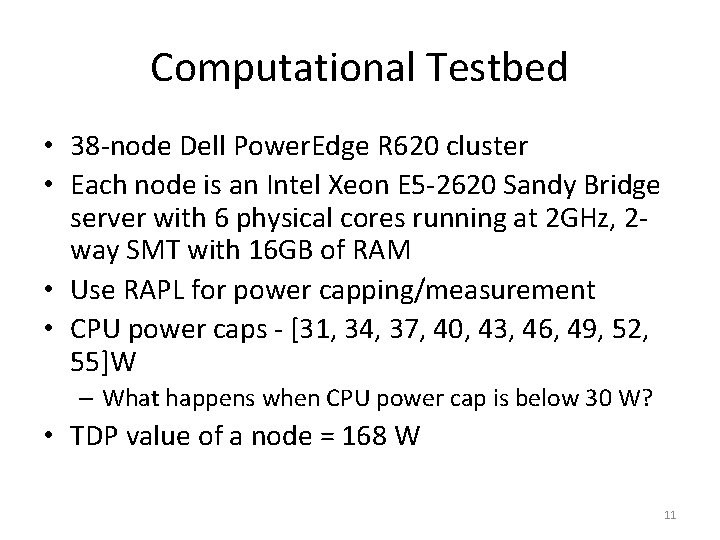

Computational Testbed

- 38-node Dell PowerEdge R620 cluster
- Each node is an Intel Xeon E5-2620 Sandy Bridge server with 6 physical cores running at 2 GHz, 2-way SMT, and 16 GB of RAM
- Use RAPL for power capping/measurement
- CPU power caps: [31, 34, 37, 40, 43, 46, 49, 52, 55] W
  - What happens when the CPU power cap is below 30 W?
- TDP value of a node = 168 W

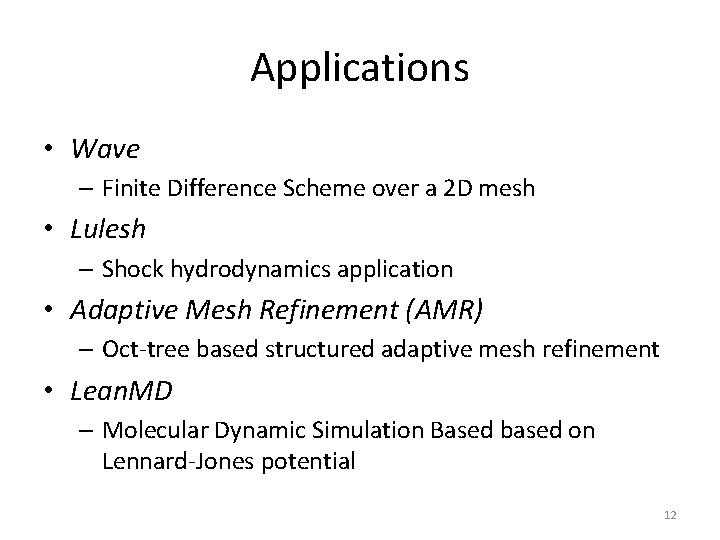

Applications

- Wave: finite difference scheme over a 2D mesh
- Lulesh: shock hydrodynamics application
- Adaptive Mesh Refinement (AMR): oct-tree based structured adaptive mesh refinement
- LeanMD: molecular dynamics simulation based on the Lennard-Jones potential

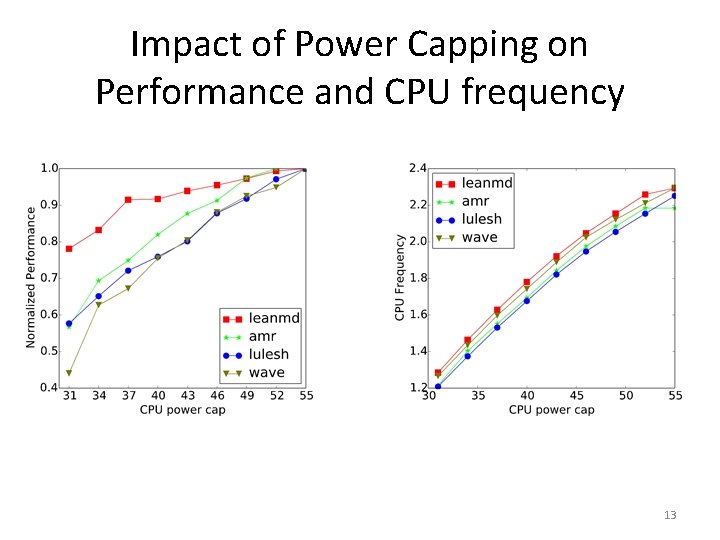

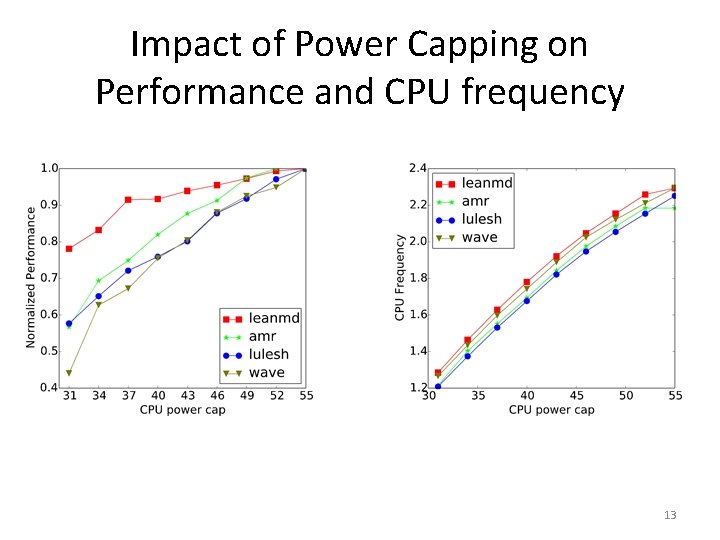

Impact of Power Capping on Performance and CPU frequency

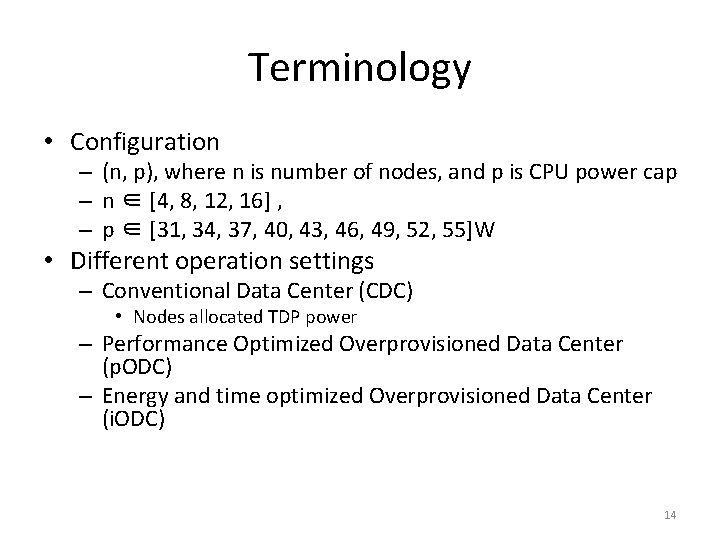

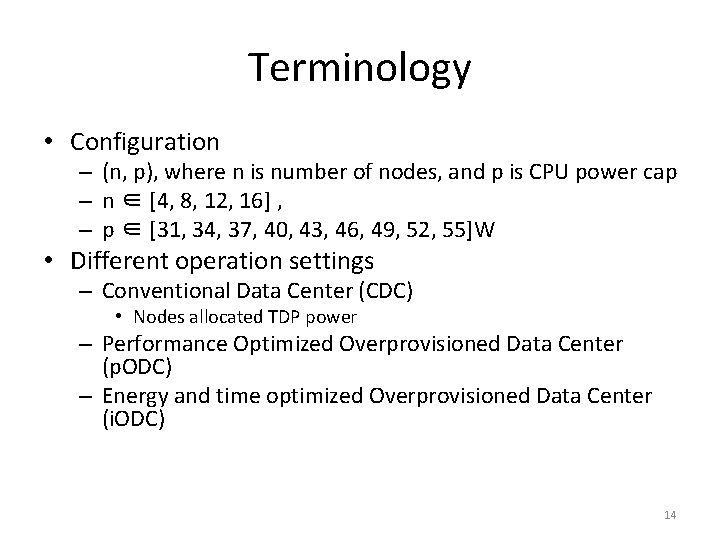

Terminology

- Configuration: (n, p), where n is the number of nodes and p is the CPU power cap
  - n ∈ [4, 8, 12, 16]
  - p ∈ [31, 34, 37, 40, 43, 46, 49, 52, 55] W
- Different operation settings
  - Conventional Data Center (CDC): nodes allocated TDP power
  - Performance Optimized Overprovisioned Data Center (pODC)
  - Energy and time optimized Overprovisioned Data Center (iODC)

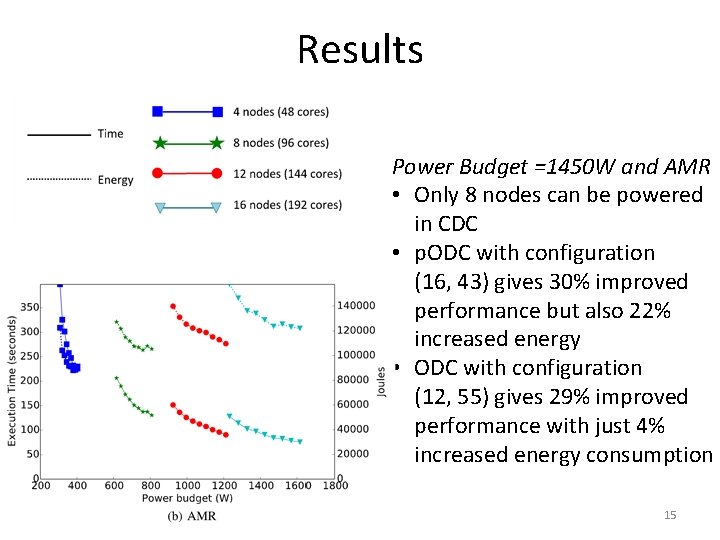

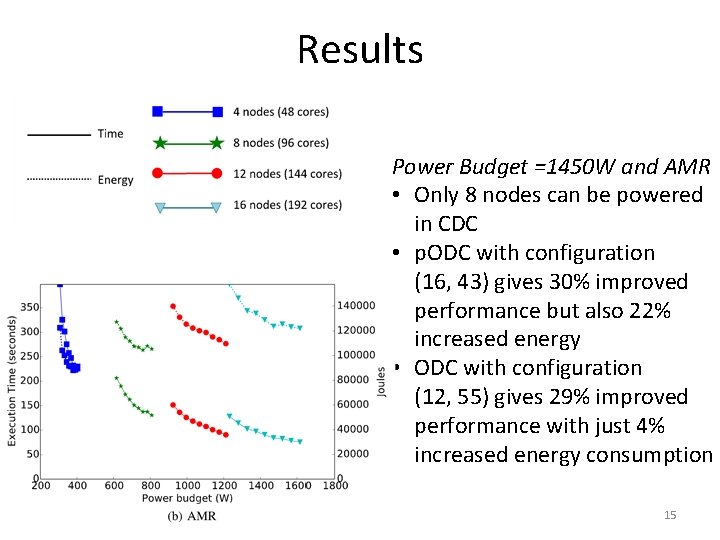

Results: Power Budget = 1450 W and AMR

- Only 8 nodes can be powered in CDC
- pODC with configuration (16, 43) gives 30% improved performance but also 22% increased energy
- iODC with configuration (12, 55) gives 29% improved performance with just 4% increased energy consumption
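The CDC node count can be checked with a short calculation: in a conventional data center each node is allocated its TDP (168 W on this testbed), so the number of nodes that can be powered is the budget divided by the TDP, rounded down. This is a sketch with a hypothetical function name; the budgets are those used in the results slides, and only the 1450 W case is stated explicitly in the talk.

```python
TDP_PER_NODE_W = 168  # node TDP reported for the testbed


def cdc_node_count(power_budget_w, tdp_w=TDP_PER_NODE_W):
    """Nodes that fit under the budget when each is allocated its TDP."""
    if tdp_w <= 0:
        raise ValueError("tdp_w must be positive")
    return power_budget_w // tdp_w


# 1450 W -> 8 nodes, matching the figure quoted for the AMR experiment.
for budget_w in (1200, 1450, 1500, 1550):
    print(budget_w, "W ->", cdc_node_count(budget_w), "nodes")
```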

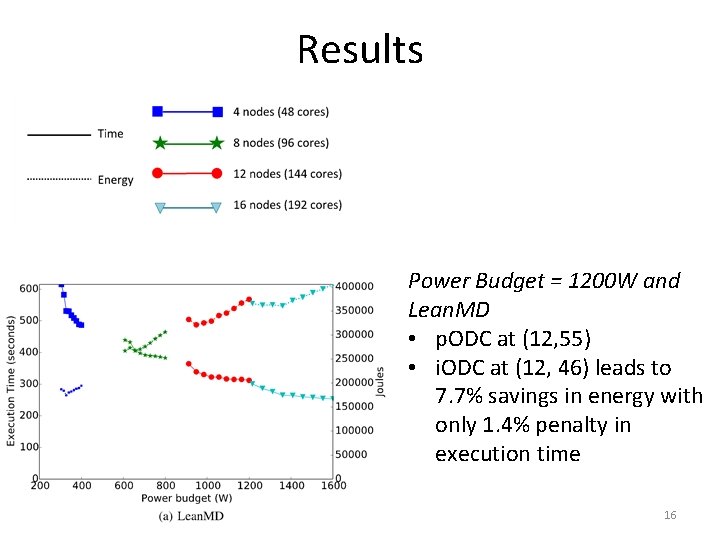

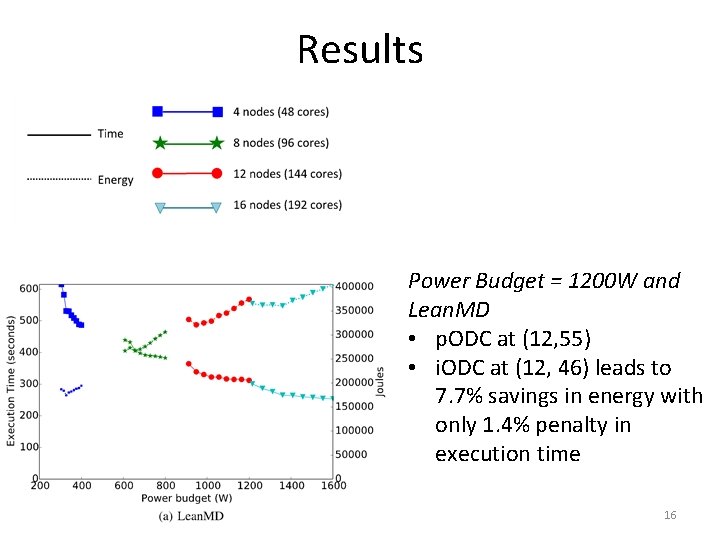

Results: Power Budget = 1200 W and LeanMD

- pODC at (12, 55)
- iODC at (12, 46) leads to 7.7% savings in energy with only 1.4% penalty in execution time

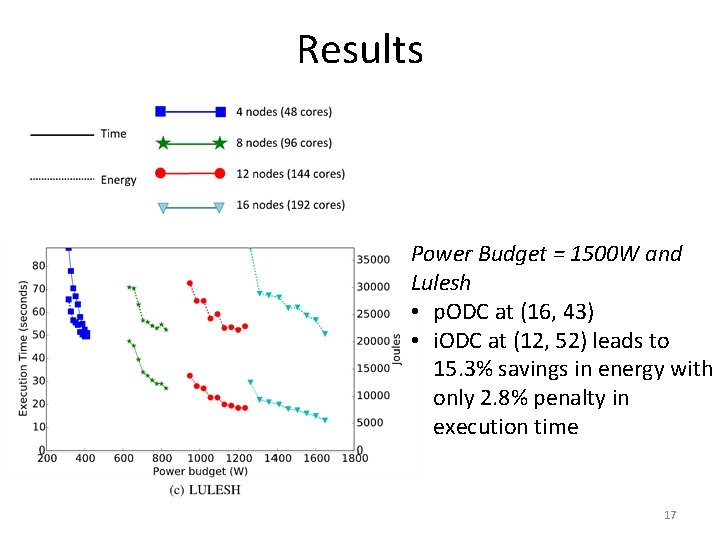

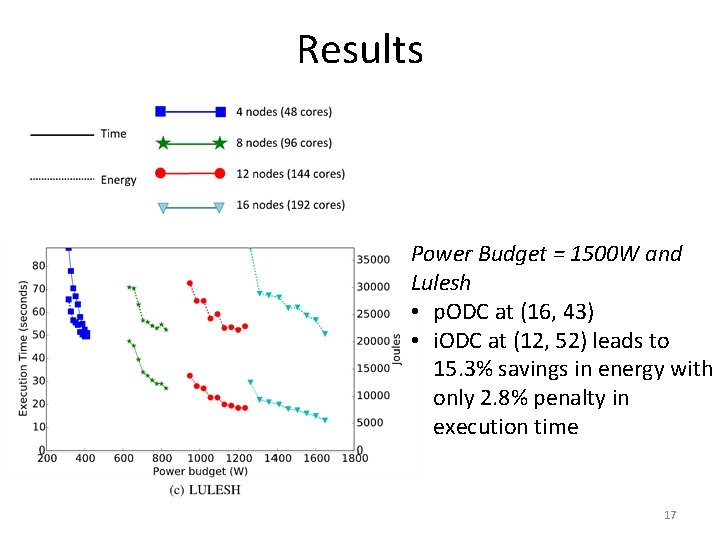

Results: Power Budget = 1500 W and Lulesh

- pODC at (16, 43)
- iODC at (12, 52) leads to 15.3% savings in energy with only 2.8% penalty in execution time

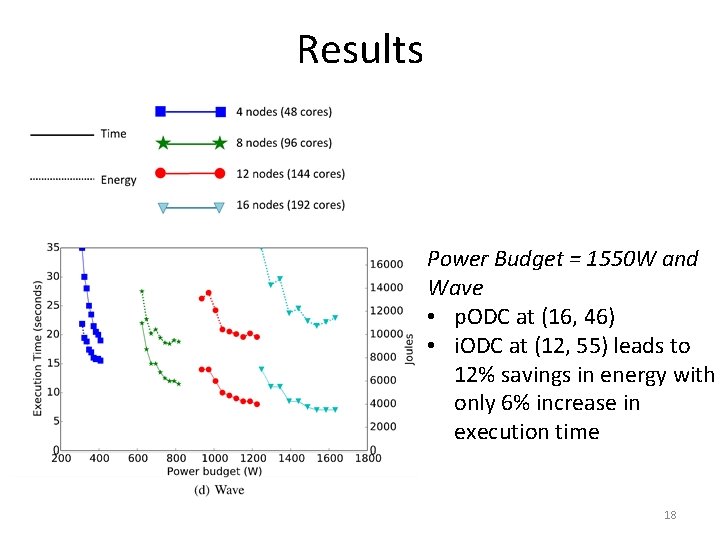

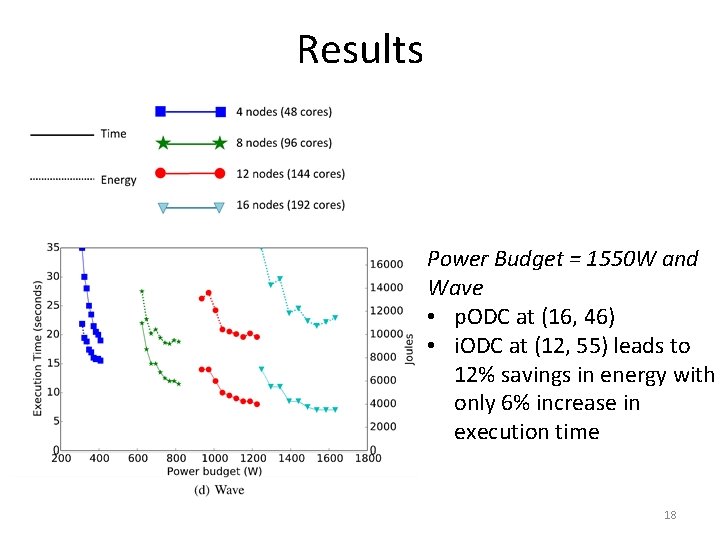

Results: Power Budget = 1550 W and Wave

- pODC at (16, 46)
- iODC at (12, 55) leads to 12% savings in energy with only 6% increase in execution time

Results

Note: Configuration choice is currently limited by the profiled samples. Better configurations can be obtained through performance modeling that can predict performance and energy for any configuration.
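One way to pick configurations from a set of profiled samples is sketched below. The talk does not state the exact rule used to choose the iODC point, so the rule here (lowest energy among configurations whose execution time is within a tolerance of the fastest one) is an assumption, as are all names. The sample values are made-up placeholders in arbitrary units, not measurements, and every sample is assumed to already fit within the power budget.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    nodes: int       # n
    cpu_cap_w: int   # p, CPU power cap in watts
    time: float      # profiled execution time (arbitrary units)
    energy: float    # profiled energy (arbitrary units)


def select_podc(samples):
    """Performance-optimized choice: the fastest profiled configuration."""
    return min(samples, key=lambda s: s.time)


def select_iodc(samples, max_time_penalty=0.05):
    """Assumed rule: least energy within a time penalty of the fastest."""
    limit = select_podc(samples).time * (1 + max_time_penalty)
    candidates = [s for s in samples if s.time <= limit]
    return min(candidates, key=lambda s: s.energy)


# Placeholder samples for illustration only (not data from the talk).
samples = [
    Sample(nodes=16, cpu_cap_w=40, time=10.0, energy=50.0),
    Sample(nodes=12, cpu_cap_w=49, time=10.3, energy=42.0),
    Sample(nodes=8, cpu_cap_w=55, time=14.0, energy=38.0),
]
print("pODC:", select_podc(samples))
print("iODC:", select_iodc(samples))
```

A performance model, as proposed in the note, would replace the fixed sample list with predicted time and energy for any (n, p), so the same selection could range over configurations that were never profiled.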

Future Work

- Automate the selection of configurations for iODC using performance modeling and energy-vs-time tradeoff metrics
- Incorporate CPU temperature and data center cooling energy consumption into the analysis

Takeaways

- Overprovisioned data centers can lead to significant performance improvements under a strict power budget
- However, energy consumption can be excessive in a purely performance-optimized overprovisioned data center
- Intelligent selection of configuration can lead to significant energy savings with minimal impact on performance

![Publications](https://slidetodoc.com/presentation_image_h2/a69d4befc3ffbc6e0faf3eb089c4ab2e/image-22.jpg)

Publications

http://charm.cs.uiuc.edu/research/energy

- [PMAM 15]. Energy-efficient Computing for HPC Workloads on Heterogeneous Many-core Chips. Langer et al.
- [SC 14]. Maximizing Throughput of Overprovisioned Data Center Under a Strict Power Budget. Sarood et al.
- [TOPC 14]. Power Management of Extreme-scale Networks with On/Off Links in Runtime Systems. Ehsan et al.
- [SC 14]. Using an Adaptive Runtime System to Reconfigure the Cache Hierarchy. Ehsan et al.
- [SC 13]. A Cool Way of Improving the Reliability of HPC Machines. Sarood et al.
- [CLUSTER 13]. Optimizing Power Allocation to CPU and Memory Subsystems in Overprovisioned HPC Systems. Sarood et al.
- [CLUSTER 13]. Thermal Aware Automated Load Balancing for HPC Applications. Harshitha et al.
- [IEEE TC 12]. Cool Load Balancing for High Performance Computing Data Centers. Sarood et al.
- [SC 12]. A Cool Load Balancer for Parallel Applications. Sarood et al.
- [CLUSTER 12]. Meta-Balancer: Automated Load Balancing Invocation Based on Application Characteristics. Harshitha et al.
