A Unified Continuous Greedy Algorithm for Submodular Maximization

Moran Feldman, Joseph (Seffi) Naor, Roy Schwartz (Technion – Israel Institute of Technology)

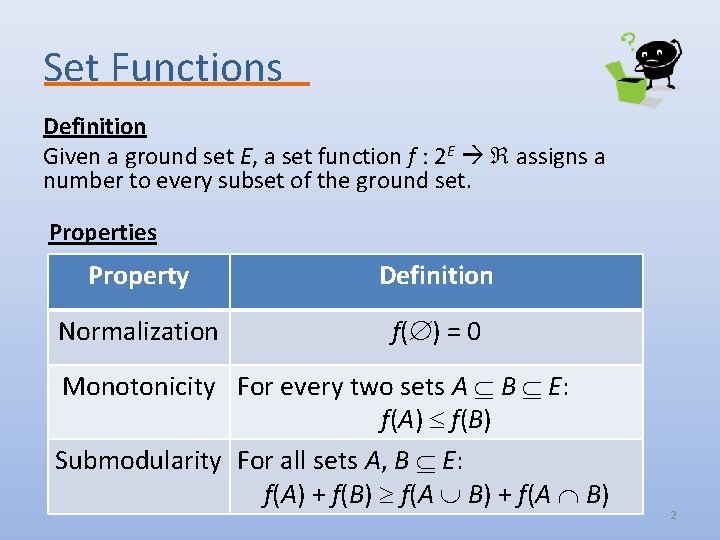

Set Functions

Definition
• Given a ground set $E$, a set function $f : 2^E \to \mathbb{R}$ assigns a number to every subset of the ground set.

Properties

| Property | Definition |
| --- | --- |
| Normalization | $f(\varnothing) = 0$ |
| Monotonicity | For every two sets $A \subseteq B \subseteq E$: $f(A) \le f(B)$ |
| Submodularity | For all sets $A, B \subseteq E$: $f(A) + f(B) \ge f(A \cup B) + f(A \cap B)$ |
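
The three properties can be checked by brute force on a small ground set. The sketch below is only an illustration: the coverage instance `COLLECTION` and all function names are hypothetical, and enumerating every pair of subsets is feasible only for tiny ground sets.

```python
from itertools import combinations

# Hypothetical toy instance: the ground set is a collection of named sets.
COLLECTION = {"s1": {1, 2}, "s2": {2, 3}, "s3": {3, 4, 5}}

def coverage(chosen):
    """f(S) = number of elements in the union of the chosen sets."""
    covered = set()
    for name in chosen:
        covered |= COLLECTION[name]
    return len(covered)

def subsets(ground):
    """Yield every subset of `ground` as a frozenset."""
    items = sorted(ground)
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            yield frozenset(combo)

def is_normalized(f):
    # f(empty set) = 0
    return f(frozenset()) == 0

def is_monotone(f, ground):
    # f(A) <= f(B) whenever A is a subset of B
    all_sets = list(subsets(ground))
    return all(f(a) <= f(b) for a in all_sets for b in all_sets if a <= b)

def is_submodular(f, ground):
    # f(A) + f(B) >= f(A | B) + f(A & B) for all A, B
    all_sets = list(subsets(ground))
    return all(f(a) + f(b) >= f(a | b) + f(a & b)
               for a in all_sets for b in all_sets)

print(is_normalized(coverage),
      is_monotone(coverage, COLLECTION),
      is_submodular(coverage, COLLECTION))
```

For contrast, `lambda s: len(s) ** 2` fails the submodularity check, since its marginal gains grow as the set grows.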

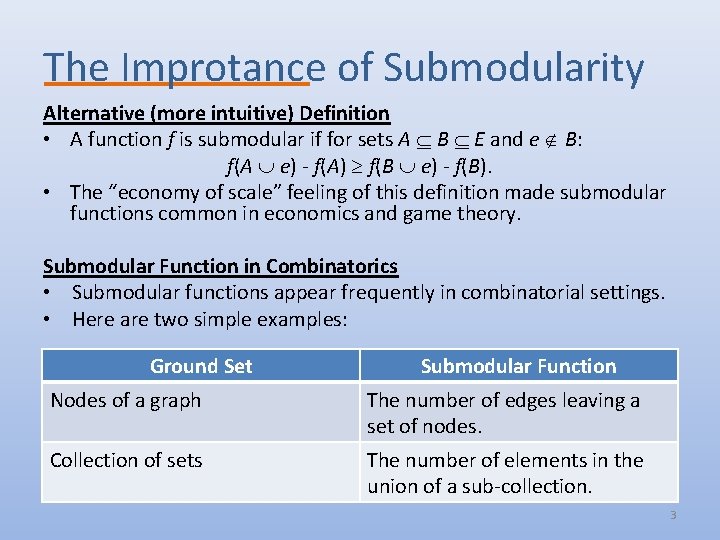

The Importance of Submodularity

Alternative (more intuitive) Definition
• A function $f$ is submodular if for all sets $A \subseteq B \subseteq E$ and every element $e \in E \setminus B$: $f(A \cup \{e\}) - f(A) \ge f(B \cup \{e\}) - f(B)$.
• The "economy of scale" feel of this definition has made submodular functions common in economics and game theory.

Submodular Functions in Combinatorics
• Submodular functions appear frequently in combinatorial settings.
• Here are two simple examples:

| Ground Set | Submodular Function |
| --- | --- |
| Nodes of a graph | The number of edges leaving a set of nodes. |
| Collection of sets | The number of elements in the union of a sub-collection. |
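
The graph example can be made concrete with a short sketch. The graph `EDGES` and the helper names are hypothetical. The check confirms diminishing returns for the cut function on this instance, and the printed values show that the cut function is not monotone.

```python
from itertools import combinations

# Hypothetical undirected graph on four nodes.
EDGES = [("a", "b"), ("b", "c"), ("c", "d"), ("a", "c")]
NODES = {"a", "b", "c", "d"}

def cut_value(s):
    """Number of edges with exactly one endpoint in s (edges leaving s)."""
    return sum(1 for u, v in EDGES if (u in s) != (v in s))

def marginal(f, s, e):
    """Marginal gain f(s + e) - f(s)."""
    return f(s | {e}) - f(s)

def has_diminishing_returns(f, ground):
    """Check f(A + e) - f(A) >= f(B + e) - f(B) for all A <= B and e outside B."""
    items = sorted(ground)
    all_sets = [frozenset(c) for r in range(len(items) + 1)
                for c in combinations(items, r)]
    for a in all_sets:
        for b in all_sets:
            if a <= b:
                for e in ground - b:
                    if marginal(f, a, e) < marginal(f, b, e):
                        return False
    return True

print(has_diminishing_returns(cut_value, NODES))
print(cut_value({"a"}), cut_value(NODES))  # the value drops back to 0 on the full node set
```

Adding node `c` to the empty set gains three edges, while adding it to `{a, b}` loses one, which is the diminishing-returns pattern in an extreme form.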

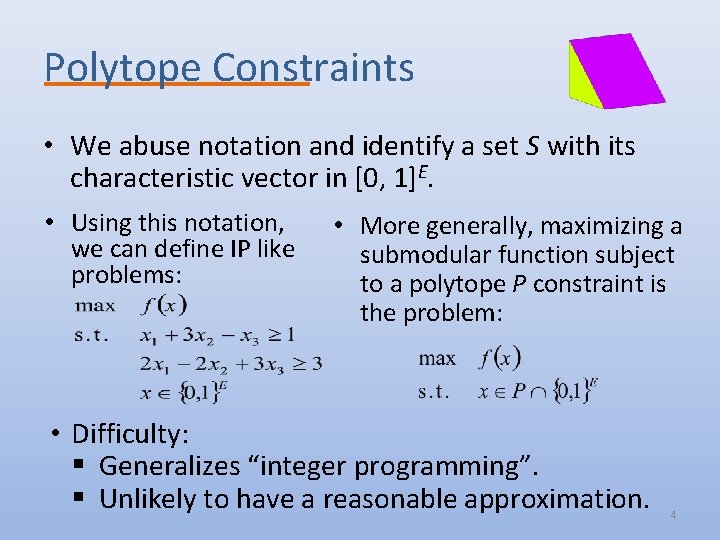

Polytope Constraints

• We abuse notation and identify a set $S$ with its characteristic vector in $[0, 1]^E$.
• Using this notation, we can define IP-like problems.
• More generally, maximizing a submodular function subject to a polytope constraint $P$ is the problem $\max \{ f(x) : x \in P \cap \{0, 1\}^E \}$.
• Difficulty:
  – Generalizes integer programming.
  – Unlikely to have a reasonable approximation.

![Relaxation](http://slidetodoc.com/presentation_image_h2/46104b13b914cc0196407d2a5060e1cc/image-5.jpg)

Relaxation

• Replace the constraint $x \in \{0, 1\}^E$ with $x \in [0, 1]^E$.
• Use the multilinear extension $F$ (a.k.a. extension by expectation) [Calinescu et al. 07] as the objective.
  – Given a vector $x$, let $R(x)$ denote a random set containing every element $e \in E$ independently with probability $x_e$.
  – $F(x) = \mathbb{E}[f(R(x))]$.

The Problem
• Approximating the relaxed program.

Motivation
• For many polytopes, a fractional solution can be rounded without losing too much in the objective.
  – Matroid polytopes: no loss [Calinescu et al. 07].
  – Constant number of knapsacks: $(1 - \varepsilon)$ loss [Kulik et al. 09].
  – Unsplittable flow in trees: $O(1)$ loss [Chekuri et al. 11].
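
The multilinear extension can be evaluated exactly by summing over all subsets, or estimated by sampling $R(x)$. The sketch below shows both on a hypothetical coverage instance. The function names, the sample count and the seed are illustrative choices, and the exact version is exponential in $|E|$.

```python
import random
from itertools import combinations

# Hypothetical toy instance: f(S) = size of the union of the chosen sets.
COLLECTION = {"s1": {1, 2}, "s2": {2, 3}, "s3": {3, 4, 5}}

def coverage(chosen):
    covered = set()
    for name in chosen:
        covered |= COLLECTION[name]
    return len(covered)

def multilinear_exact(f, x):
    """F(x) = sum over S of f(S) * prod_{e in S} x_e * prod_{e not in S} (1 - x_e)."""
    items = sorted(x)
    total = 0.0
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            chosen = frozenset(combo)
            prob = 1.0
            for e in items:
                prob *= x[e] if e in chosen else 1.0 - x[e]
            total += prob * f(chosen)
    return total

def multilinear_sampled(f, x, samples=20000, seed=0):
    """Monte Carlo estimate of E[f(R(x))]: include each e independently with prob. x_e."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(samples):
        r_x = frozenset(e for e in x if rng.random() < x[e])
        total += f(r_x)
    return total / samples

x = {"s1": 0.5, "s2": 0.5, "s3": 0.5}
print(multilinear_exact(coverage, x))    # expected coverage under x
print(multilinear_sampled(coverage, x))  # sampling estimate of the same quantity
```

On a 0/1 vector the extension agrees with $f$ itself, which is why it is a legitimate relaxation of the original objective.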

![The Continuous Greedy Algorithm](http://slidetodoc.com/presentation_image_h2/46104b13b914cc0196407d2a5060e1cc/image-6.jpg)

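The Continuous Greedy Algorithm

The Algorithm [Vondrak 08]
• Let $\delta > 0$ be a small number.

1. Initialize: $y(0) \leftarrow \varnothing$ (the all-zeros vector) and $t \leftarrow 0$.
2. While $t < 1$ do:
3. For every $e \in E$, let $w_e = F(y(t) \vee \mathbf{1}_e) - F(y(t))$, where $y(t) \vee \mathbf{1}_e$ is $y(t)$ with coordinate $e$ set to 1.
4. Find a solution $x$ in $P \subseteq [0, 1]^E$ maximizing $w \cdot x$.
5. $y(t + \delta) \leftarrow y(t) + \delta \cdot x$.
6. Set $t \leftarrow t + \delta$.
7. Return $y(t)$.

Remark
• If $w_e$ cannot be evaluated directly, it can be approximated arbitrarily well via sampling.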
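
The steps of the algorithm can be sketched in Python under some simplifying assumptions that are not part of the slides: the polytope is the cardinality polytope $\{x \in [0,1]^E : \sum_e x_e \le k\}$ (so step 4 reduces to picking the $k$ largest positive weights), $F$ is computed exactly by enumeration rather than by sampling, and the coverage instance is hypothetical.

```python
import math
from itertools import combinations

# Hypothetical toy instance: choose at most K sets to maximize coverage.
COLLECTION = {"s1": {1, 2, 3}, "s2": {3, 4}, "s3": {5}, "s4": {1, 2}}
K = 2

def coverage(chosen):
    """Normalized monotone submodular f: size of the union of the chosen sets."""
    covered = set()
    for name in chosen:
        covered |= COLLECTION[name]
    return len(covered)

def multilinear_exact(f, y):
    """F(y) = E[f(R(y))], by enumerating all subsets (tiny inputs only)."""
    items = sorted(y)
    total = 0.0
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            chosen = frozenset(combo)
            prob = 1.0
            for e in items:
                prob *= y[e] if e in chosen else 1.0 - y[e]
            total += prob * f(chosen)
    return total

def best_direction(w, k):
    """Maximize w . x over {x in [0,1]^E : sum(x) <= k} (assumed polytope)."""
    ranked = sorted(w, key=lambda e: (-w[e], e))
    top = {e for e in ranked[:k] if w[e] > 0}
    return {e: (1.0 if e in top else 0.0) for e in w}

def continuous_greedy(f, ground, k, steps=100):
    delta = 1.0 / steps
    y = {e: 0.0 for e in ground}      # step 1: y(0) is the all-zeros vector
    for _ in range(steps):            # step 2: run until t reaches 1
        base = multilinear_exact(f, y)
        w = {}
        for e in ground:              # step 3: w_e = F(y v 1_e) - F(y)
            raised = dict(y)
            raised[e] = 1.0
            w[e] = multilinear_exact(f, raised) - base
        x = best_direction(w, k)      # step 4: best direction in P
        for e in ground:              # step 5: y <- y + delta * x
            y[e] += delta * x[e]
    return y

y = continuous_greedy(coverage, COLLECTION, K)
value = multilinear_exact(coverage, y)
opt = max(coverage(c) for r in range(K + 1) for c in combinations(COLLECTION, r))
print(value, opt, value / opt)
```

Counting steps with an integer loop instead of accumulating `t += delta` avoids floating-point drift in the stopping condition. The output is a fractional point of the polytope, which still has to be rounded.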

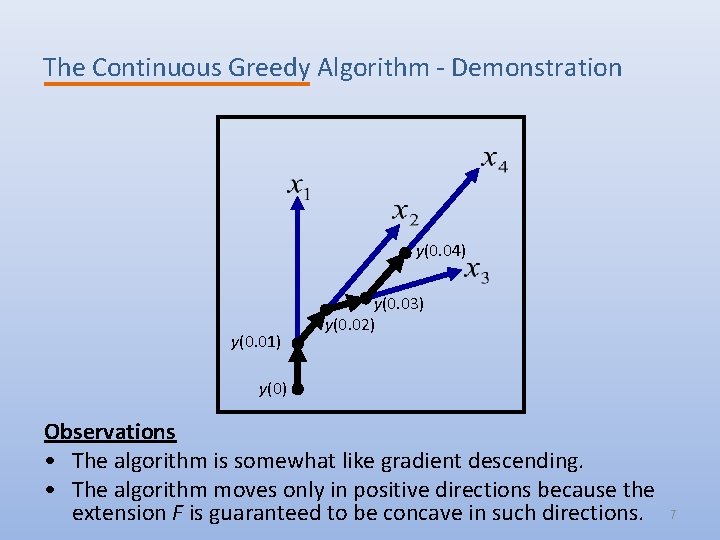

The Continuous Greedy Algorithm - Demonstration

[Figure: the successive points y(0), y(0.01), y(0.02), y(0.03), y(0.04).]

Observations
• The algorithm is somewhat like gradient ascent.
• The algorithm moves only in positive directions, because the extension $F$ is guaranteed to be concave along such directions.

The Continuous Greedy Algorithm - Analysis

Theorem
• Assuming:
  – $f$ is a normalized monotone submodular function.
  – $P$ is a solvable polytope.
• The continuous greedy algorithm gives a $1 - 1/e - o(n^{-1})$ approximation.

There are two important lemmata in the proof of the theorem.

Lemma 1
There is a good direction, i.e., $w \cdot x \ge f(OPT) - F(y(t))$.

Proof Idea
$OPT$ itself is a feasible direction, and its value is at least $f(OPT) - F(y(t))$.

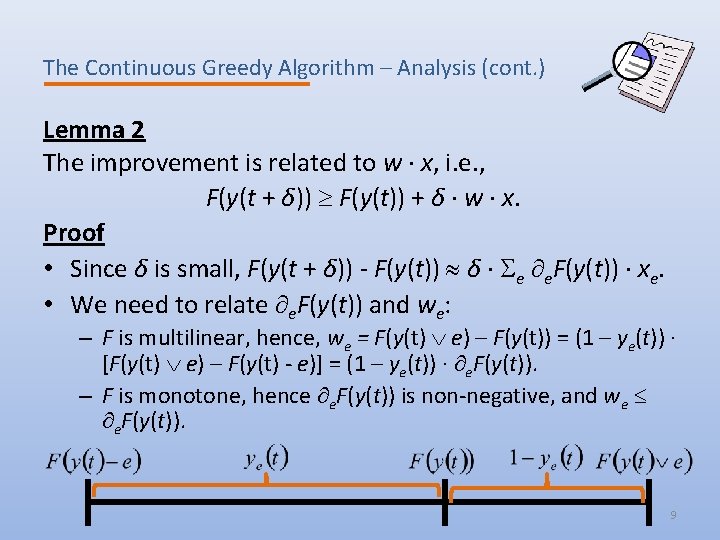

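The Continuous Greedy Algorithm – Analysis (cont.)

Lemma 2
The improvement is related to $w \cdot x$, i.e., $F(y(t + \delta)) \ge F(y(t)) + \delta \cdot w \cdot x$.

Proof
• Since $\delta$ is small, $F(y(t + \delta)) - F(y(t)) \approx \delta \cdot \sum_e \partial_e F(y(t)) \cdot x_e$.
• We need to relate $\partial_e F(y(t))$ and $w_e$:
  – $F$ is multilinear, hence $w_e = F(y(t) \vee \mathbf{1}_e) - F(y(t)) = (1 - y_e(t)) \cdot [F(y(t) \vee \mathbf{1}_e) - F(y(t) \wedge \mathbf{1}_{E \setminus \{e\}})] = (1 - y_e(t)) \cdot \partial_e F(y(t))$, where $y(t) \wedge \mathbf{1}_{E \setminus \{e\}}$ is $y(t)$ with coordinate $e$ set to 0.
  – $F$ is monotone, hence $\partial_e F(y(t))$ is non-negative, and $w_e \le \partial_e F(y(t))$.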
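
The multilinearity step in the proof can be spelled out. The derivation below writes $y$ for $y(t)$ and assumes the notation $y \vee \mathbf{1}_e$ and $y \wedge \mathbf{1}_{E \setminus \{e\}}$ for $y$ with coordinate $e$ set to 1 and to 0 respectively. This notation is an assumption made here for readability. The first line only uses the fact that $F$ is linear in the single coordinate $y_e$.

```latex
\begin{align*}
F(y) &= y_e \, F(y \vee \mathbf{1}_e) + (1 - y_e) \, F(y \wedge \mathbf{1}_{E \setminus \{e\}}), \\
\partial_e F(y) &= F(y \vee \mathbf{1}_e) - F(y \wedge \mathbf{1}_{E \setminus \{e\}}), \\
w_e = F(y \vee \mathbf{1}_e) - F(y)
  &= (1 - y_e) \left[ F(y \vee \mathbf{1}_e) - F(y \wedge \mathbf{1}_{E \setminus \{e\}}) \right]
   = (1 - y_e) \, \partial_e F(y).
\end{align*}
```

Since $0 \le 1 - y_e \le 1$, the bound $w_e \le \partial_e F(y)$ holds exactly when $\partial_e F(y) \ge 0$, which is where monotonicity enters.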

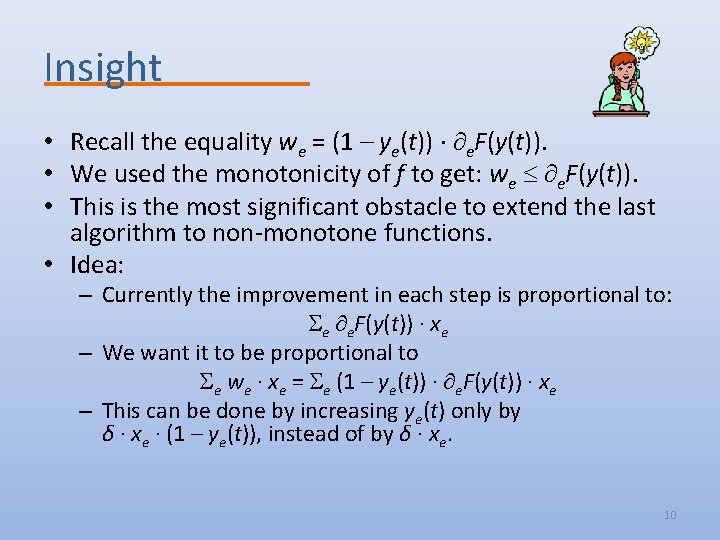

Insight

• Recall the equality $w_e = (1 - y_e(t)) \cdot \partial_e F(y(t))$.
• We used the monotonicity of $f$ to get $w_e \le \partial_e F(y(t))$.
• This is the most significant obstacle to extending the last algorithm to non-monotone functions.
• Idea:
  – Currently, the improvement in each step is proportional to $\sum_e \partial_e F(y(t)) \cdot x_e$.
  – We want it to be proportional to $\sum_e w_e \cdot x_e = \sum_e (1 - y_e(t)) \cdot \partial_e F(y(t)) \cdot x_e$.
  – This can be done by increasing $y_e(t)$ only by $\delta \cdot x_e \cdot (1 - y_e(t))$, instead of by $\delta \cdot x_e$.

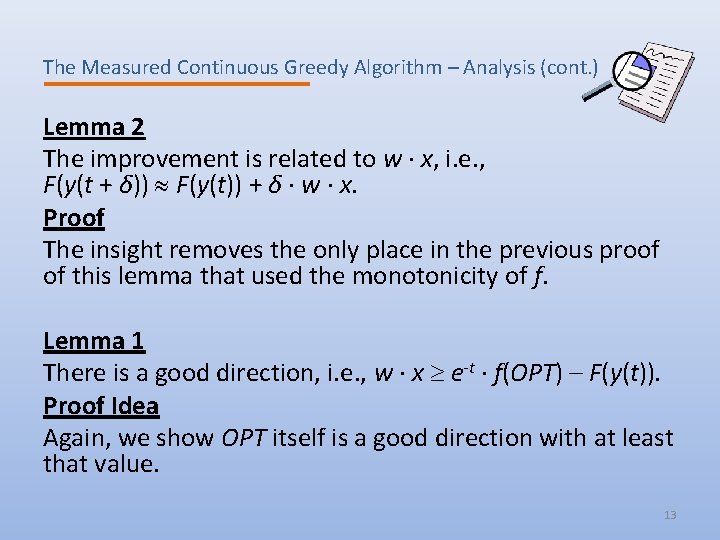

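The Measured Continuous Greedy Algorithm

The Algorithm
• Let $\delta > 0$ be a small number.

1. Initialize: $y(0) \leftarrow \varnothing$ (the all-zeros vector) and $t \leftarrow 0$.
2. While $t < T$ do:
3. For every $e \in E$, let $w_e = F(y(t) \vee \mathbf{1}_e) - F(y(t))$, where $y(t) \vee \mathbf{1}_e$ is $y(t)$ with coordinate $e$ set to 1.
4. Find a solution $x$ in $P \subseteq [0, 1]^E$ maximizing $w \cdot x$.
5. For every $e \in E$, $y_e(t + \delta) \leftarrow y_e(t) + \delta \cdot x_e \cdot (1 - y_e(t))$.
6. Set $t \leftarrow t + \delta$.
7. Return $y(t)$.

Remark
• The algorithm never leaves the box $[0, 1]^E$, so it can be used with arbitrary values of $T$.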
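
The measured update can be sketched on a non-monotone objective. As assumptions for illustration only: the objective is the cut function of a small hypothetical graph, the down-monotone polytope is the cardinality polytope $\{x \in [0,1]^E : \sum_e x_e \le k\}$, and $F$ is computed exactly by enumeration. The only change from the plain continuous greedy loop is the factor $(1 - y_e)$ in the update.

```python
import math
from itertools import combinations

# Hypothetical undirected graph; the objective is the (non-monotone) cut function.
EDGES = [("a", "b"), ("b", "c"), ("c", "d"), ("a", "c")]
NODES = ["a", "b", "c", "d"]
K = 2

def cut_value(s):
    """Number of edges with exactly one endpoint in s."""
    return sum(1 for u, v in EDGES if (u in s) != (v in s))

def multilinear_exact(f, y):
    """F(y) = E[f(R(y))], by enumerating all subsets (tiny inputs only)."""
    items = sorted(y)
    total = 0.0
    for r in range(len(items) + 1):
        for combo in combinations(items, r):
            chosen = frozenset(combo)
            prob = 1.0
            for e in items:
                prob *= y[e] if e in chosen else 1.0 - y[e]
            total += prob * f(chosen)
    return total

def best_direction(w, k):
    """Maximize w . x over {x in [0,1]^E : sum(x) <= k}; negative weights get x_e = 0."""
    ranked = sorted(w, key=lambda e: (-w[e], e))
    top = {e for e in ranked[:k] if w[e] > 0}
    return {e: (1.0 if e in top else 0.0) for e in w}

def measured_continuous_greedy(f, ground, k, T=1.0, steps=100):
    delta = T / steps
    y = {e: 0.0 for e in ground}
    for _ in range(steps):
        base = multilinear_exact(f, y)
        w = {}
        for e in ground:                          # w_e = F(y v 1_e) - F(y)
            raised = dict(y)
            raised[e] = 1.0
            w[e] = multilinear_exact(f, raised) - base
        x = best_direction(w, k)
        for e in ground:                          # measured step: damped by (1 - y_e)
            y[e] += delta * x[e] * (1.0 - y[e])
    return y

y = measured_continuous_greedy(cut_value, NODES, K)
value = multilinear_exact(cut_value, y)
opt = max(cut_value(set(c)) for r in range(K + 1) for c in combinations(NODES, r))
print(value, opt, value / opt)
```

Because each coordinate moves only a $\delta$ fraction of its remaining distance to 1, no coordinate can reach 1, which matches the remark that the algorithm never leaves the box.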

The Measured Continuous Greedy Algorithm - Analysis

Theorem
• Assuming:
  – $f$ is a non-negative submodular function.
  – $P$ is a solvable down-monotone polytope.
• The approximation ratio of the measured continuous greedy algorithm with $T = 1$ is $1/e - o(n^{-1})$.

Remarks
• The solution is no longer a convex combination of points of $P$.
• For $T \le 1$, the output is in $P$ since $P$ is down-monotone.

The Measured Continuous Greedy Algorithm – Analysis (cont.)

Lemma 2
The improvement is related to $w \cdot x$, i.e., $F(y(t + \delta)) \ge F(y(t)) + \delta \cdot w \cdot x$.

Proof
The insight removes the only place in the previous proof of this lemma that used the monotonicity of $f$.

Lemma 1
There is a good direction, i.e., $w \cdot x \ge e^{-t} \cdot f(OPT) - F(y(t))$.

Proof Idea
Again, we show that $OPT$ itself is a good direction with at least that value.

Result for Monotone Functions

• For non-monotone functions, the approximation ratio is maximized for $T = 1$.
• For monotone $f$, we get an approximation ratio of $1 - e^{-T}$.
  – For $T = 1$, this is the same ratio as that of the previous algorithm.
  – The approximation ratio improves as $T$ increases.
• In general, $T > 1$ might cause the algorithm to produce solutions outside the polytope.
• However, for some polytopes, somewhat larger values of $T$ can be used.
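
Both ratios can be recovered from the two lemmata by a short calculation. This is a sketch in the limit $\delta \to 0$ that ignores the lower-order error terms. Write $g(t) = F(y(t))$, so that Lemma 2 reads $g'(t) \ge w \cdot x$ and $g(0) \ge 0$. For monotone $f$ it assumes Lemma 1 holds in the form $w \cdot x \ge f(OPT) - F(y(t))$, as in the analysis of the first algorithm.

```latex
\begin{align*}
\text{Non-monotone:}\quad
  & g'(t) + g(t) \ge e^{-t} f(OPT)
  \;\Longrightarrow\; \frac{d}{dt}\!\left[ e^{t} g(t) \right] \ge f(OPT)
  \;\Longrightarrow\; g(T) \ge T e^{-T} f(OPT), \\
\text{Monotone:}\quad
  & g'(t) + g(t) \ge f(OPT)
  \;\Longrightarrow\; \frac{d}{dt}\!\left[ e^{t} g(t) \right] \ge e^{t} f(OPT)
  \;\Longrightarrow\; g(T) \ge \left( 1 - e^{-T} \right) f(OPT).
\end{align*}
```

The function $T e^{-T}$ is maximized at $T = 1$, where it equals $1/e$, while $1 - e^{-T}$ keeps increasing with $T$.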

The Submodular Welfare Problem

Instance
• A set $P$ of $n$ players and a set $Q$ of $m$ items.
• A normalized monotone submodular utility function $w_j : 2^Q \to \mathbb{R}^+$ for each player.

Objective
• Let $Q_j \subseteq Q$ denote the set of items the $j$-th player gets.
• The utility of the $j$-th player is $w_j(Q_j)$.
• Distribute the items among the players, maximizing the sum of utilities.

Approximation
• Can be represented as a problem of the previous form.
• The algorithm can be executed until time $-n \cdot \ln(1 - 1/n)$.
• The expected value of the solution is at least a $1 - (1 - 1/n)^n$ fraction of the optimum.
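
The stopping time and the resulting ratio are linked through the monotone bound $1 - e^{-T}$: substituting $T = -n \ln(1 - 1/n)$ gives exactly $1 - (1 - 1/n)^n$. A small numeric sketch, with hypothetical function names:

```python
import math

def stopping_time(n):
    """T_n = -n * ln(1 - 1/n), defined for n >= 2 players."""
    if n < 2:
        raise ValueError("need at least two players")
    return -n * math.log(1.0 - 1.0 / n)

def welfare_ratio(n):
    """The bound 1 - (1 - 1/n)^n from the slide."""
    return 1.0 - (1.0 - 1.0 / n) ** n

for n in (2, 3, 10, 100):
    t_n = stopping_time(n)
    # 1 - exp(-T_n) coincides with welfare_ratio(n) up to rounding error
    print(n, round(t_n, 4), round(welfare_ratio(n), 4), round(1 - math.exp(-t_n), 4))
```

The stopping time is always above 1 and the ratio is always above $1 - 1/e$. Both approach those limits as $n$ grows, so the gain over $T = 1$ is largest when there are few players.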

Open Problem

• The measured continuous greedy algorithm provides a tight approximation for monotone functions [Vondrak 06].
• Is this also the case for non-monotone functions?
• The current approximation ratio of $e^{-1}$ is a "natural" number.
