A Style-Based Generator Architecture for Generative Adversarial Networks

Jiaman Chen

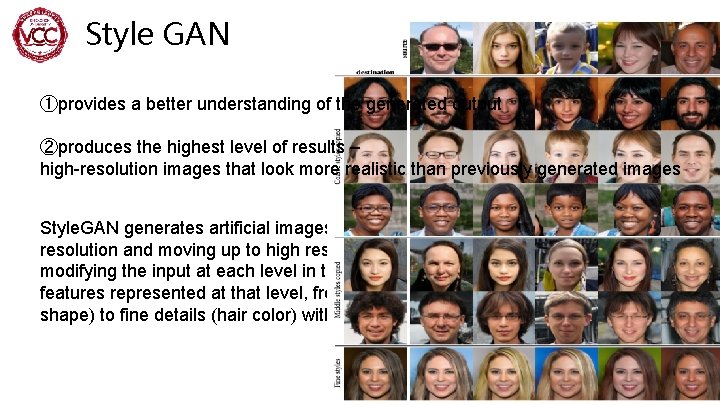

StyleGAN ① provides a better understanding of the generated output and ② produces state-of-the-art results: high-resolution images that look more realistic than previously generated images. StyleGAN generates artificial images step by step, starting at very low resolution and moving up to high resolution (1024 x 1024). By individually modifying the input at each level of the network, it can control the visual features represented at that level, from coarse features (pose, face shape) to fine details (hair color), without affecting the other levels.

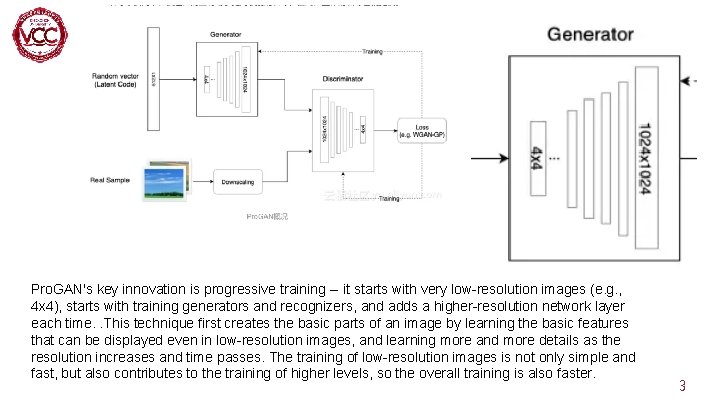

ProGAN's key innovation is progressive training: it starts by training the generator and the discriminator on very low-resolution images (e.g., 4 x 4) and adds a higher-resolution layer at each stage. This technique first creates the basic structure of an image by learning the features that are visible even at low resolution, then learns more and more detail as the resolution increases. Training on low-resolution images is not only simple and fast, it also helps the training of the higher-resolution levels, so the overall training is faster as well.
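
The resolution schedule of progressive training can be sketched as follows. This is a minimal illustration, assuming each stage doubles the previous resolution between 4 x 4 and 1024 x 1024; the function name `progressive_resolutions` is hypothetical and no actual training is performed.

```python
def progressive_resolutions(start=4, final=1024):
    """Return the resolutions visited when each stage doubles the previous one."""
    resolutions = []
    res = start
    while res <= final:
        resolutions.append(res)
        res *= 2  # assumption: every new stage doubles the resolution
    return resolutions


schedule = progressive_resolutions()
for stage, res in enumerate(schedule):
    # In real training, generator and discriminator layers for this
    # resolution would be added and trained at this point.
    print(f"stage {stage}: train at {res} x {res}")
```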

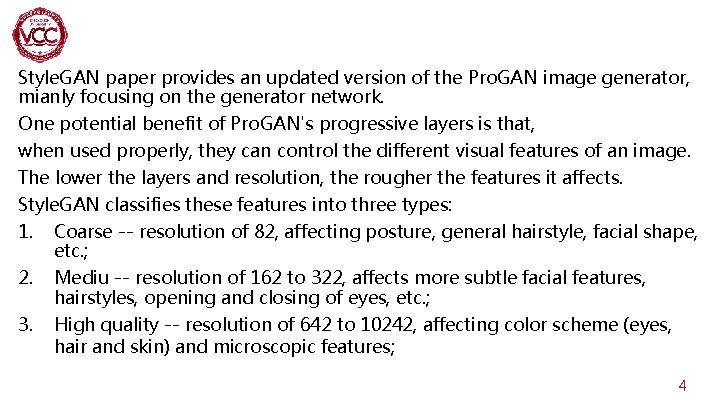

The StyleGAN paper presents an upgraded version of the ProGAN image generator, focusing mainly on the generator network. One potential benefit of ProGAN's progressive layers is that, when used properly, they can control different visual features of an image. The lower the layer and its resolution, the coarser the features it affects. StyleGAN classifies these features into three types:

1. Coarse: resolutions up to 8 x 8, affecting pose, general hairstyle, face shape, etc.
2. Middle: resolutions of 16 x 16 to 32 x 32, affecting finer facial features, hairstyle, eyes open or closed, etc.
3. Fine: resolutions of 64 x 64 to 1024 x 1024, affecting color scheme (eyes, hair and skin) and micro features.
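
The three feature types can be written as a small lookup from layer resolution to level. This is only a sketch of the grouping listed on this slide; the helper name `style_level` is hypothetical.

```python
def style_level(resolution):
    """Map a layer resolution (4, 8, ..., 1024) to the feature type it affects."""
    if resolution <= 8:
        return "coarse"   # pose, general hairstyle, face shape
    if resolution <= 32:
        return "middle"   # finer facial features, eyes open or closed
    return "fine"         # color scheme and micro features


levels = {res: style_level(res) for res in (4, 8, 16, 32, 64, 128, 256, 512, 1024)}
for res, level in levels.items():
    print(f"{res} x {res}: {level}")
```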

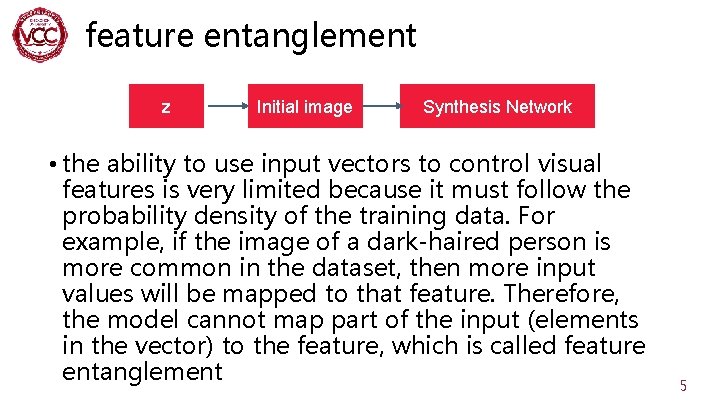

Feature entanglement • The generator's ability to use the input vector to control visual features is very limited, because the input must follow the probability density of the training data. For example, if images of dark-haired people are more common in the dataset, more input values will be mapped to that feature. As a result, the model cannot map parts of the input (individual elements of the vector) to individual features. This is called feature entanglement.

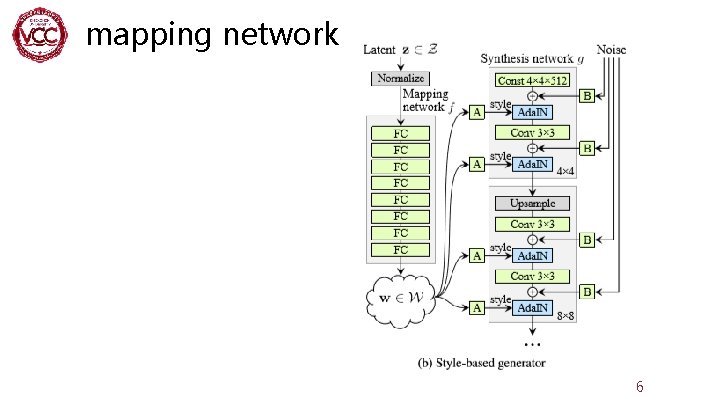

Mapping network

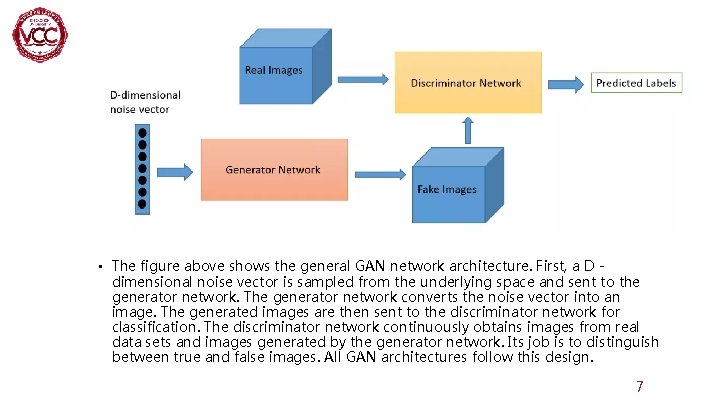

• The figure above shows the general GAN architecture. First, a D-dimensional noise vector is sampled from the latent space and fed to the generator network, which converts it into an image. The generated images are then sent to the discriminator network for classification. The discriminator continuously receives both images from the real dataset and images produced by the generator, and its job is to distinguish real images from fake ones. All GAN architectures follow this basic design.
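
The data flow described here can be traced with a toy NumPy sketch. Everything in it is an assumption made for illustration: the sizes, the single linear layer standing in for each network, and the random "real" images. It shows only the forward pass, not training.

```python
import numpy as np

rng = np.random.default_rng(0)
D = 16             # latent dimensionality (hypothetical value)
IMAGE_PIXELS = 64  # a flattened 8 x 8 toy "image"

# Stand-ins for the two networks: one random linear layer each.
W_g = rng.normal(scale=0.1, size=(D, IMAGE_PIXELS))
W_d = rng.normal(scale=0.1, size=(IMAGE_PIXELS, 1))


def generator(z):
    """Convert noise vectors into images with pixel values in (-1, 1)."""
    return np.tanh(z @ W_g)


def discriminator(images):
    """Return the estimated probability that each image is real."""
    logits = images @ W_d
    return 1.0 / (1.0 + np.exp(-logits))


z = rng.normal(size=(4, D))                               # sample from the latent space
fake_images = generator(z)                                # generator output
real_images = rng.uniform(-1, 1, size=(4, IMAGE_PIXELS))  # placeholder for dataset images
p_fake = discriminator(fake_images)
p_real = discriminator(real_images)
print(p_fake.ravel(), p_real.ravel())
```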

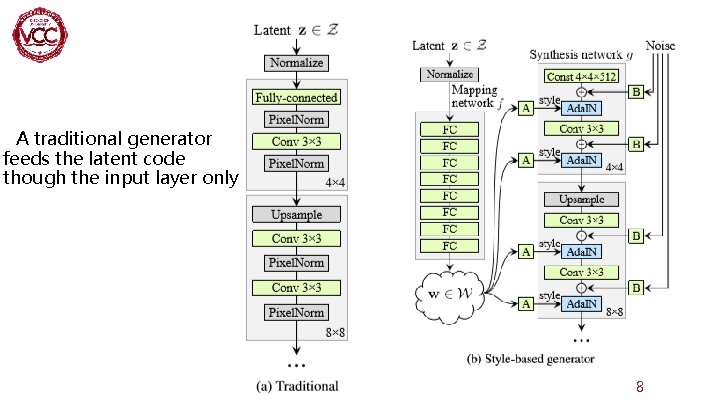

A traditional generator feeds the latent code through the input layer only.

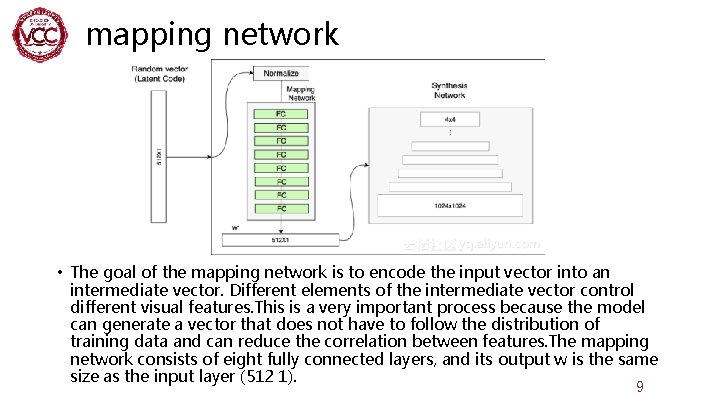

Mapping network • The goal of the mapping network is to encode the input vector into an intermediate vector whose different elements control different visual features. This step matters because the intermediate vector does not have to follow the distribution of the training data, which reduces the correlation between features. The mapping network consists of eight fully connected layers, and its output w has the same size as the input (512 x 1).
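
A minimal NumPy sketch of such a mapping network is shown below: eight fully connected layers that keep the 512-dimensional size. The random weights, the initialization scale, and the leaky ReLU activation are assumptions made for illustration; in a trained model the weights are learned.

```python
import numpy as np

LATENT_DIM = 512
NUM_LAYERS = 8


def init_mapping_network(rng):
    """Create eight (weight, bias) pairs; random weights stand in for learned ones."""
    scale = np.sqrt(2.0 / LATENT_DIM)  # assumed initialization scale
    return [
        (rng.normal(scale=scale, size=(LATENT_DIM, LATENT_DIM)), np.zeros(LATENT_DIM))
        for _ in range(NUM_LAYERS)
    ]


def leaky_relu(x, slope=0.2):
    return np.where(x > 0, x, slope * x)


def mapping_network(z, layers):
    """Map input vectors z of shape (batch, 512) to intermediate vectors w of the same shape."""
    w = z
    for weight, bias in layers:
        w = leaky_relu(w @ weight + bias)
    return w


rng = np.random.default_rng(0)
layers = init_mapping_network(rng)
z = rng.normal(size=(2, LATENT_DIM))
w = mapping_network(z, layers)
print(w.shape)
```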

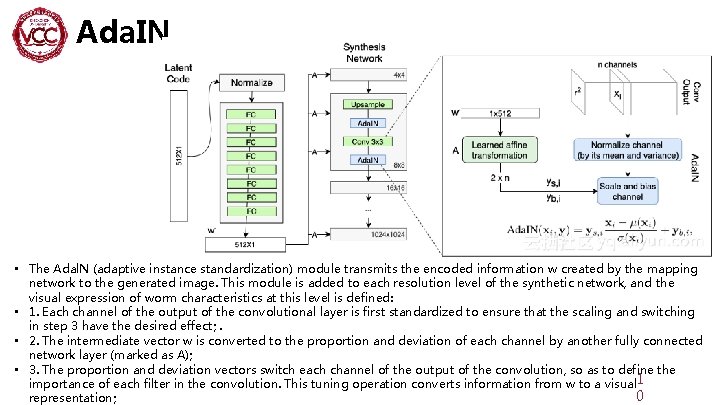

AdaIN • The AdaIN (adaptive instance normalization) module transfers the encoded information w, created by the mapping network, into the generated image. The module is added at each resolution level of the synthesis network and defines the visual expression of the features at that level:

1. Each channel of the convolution layer's output is first normalized, so that the scaling and shifting in step 3 have the expected effect.
2. The intermediate vector w is converted into a scale and a bias for each channel by another fully connected layer (marked A).
3. The scale and bias vectors scale and shift each channel of the convolution output, thereby defining the importance of each filter in the convolution. This adjustment translates the information in w into a visual representation.
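
The three steps can be sketched in NumPy for a single feature map. The names `adain`, `affine_weight` and `affine_bias` are hypothetical, the tiny sizes are chosen for readability, and the random affine weights stand in for the learned layer A.

```python
import numpy as np


def adain(x, w, affine_weight, affine_bias, eps=1e-8):
    """Apply AdaIN to a feature map x of shape (C, H, W) using the intermediate vector w."""
    num_channels = x.shape[0]
    # Step 1: normalize each channel to zero mean and unit standard deviation.
    mean = x.mean(axis=(1, 2), keepdims=True)
    std = x.std(axis=(1, 2), keepdims=True)
    x_norm = (x - mean) / (std + eps)
    # Step 2: the fully connected layer A turns w into one scale and one bias per channel.
    style = w @ affine_weight + affine_bias  # shape (2 * C,)
    scale = style[:num_channels].reshape(num_channels, 1, 1)
    bias = style[num_channels:].reshape(num_channels, 1, 1)
    # Step 3: scale and shift every normalized channel.
    return scale * x_norm + bias


rng = np.random.default_rng(0)
x = rng.normal(size=(3, 4, 4))              # 3 channels of a 4 x 4 feature map
w = rng.normal(size=8)                      # toy intermediate vector
affine_weight = rng.normal(size=(8, 6))     # stand-in for the learned layer A
affine_bias = np.concatenate([np.ones(3), np.zeros(3)])
out = adain(x, w, affine_weight, affine_bias)
print(out.shape)
```

After the operation, the mean of each output channel equals its bias and the standard deviation equals the magnitude of its scale, so the statistics of every channel are set entirely by w.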

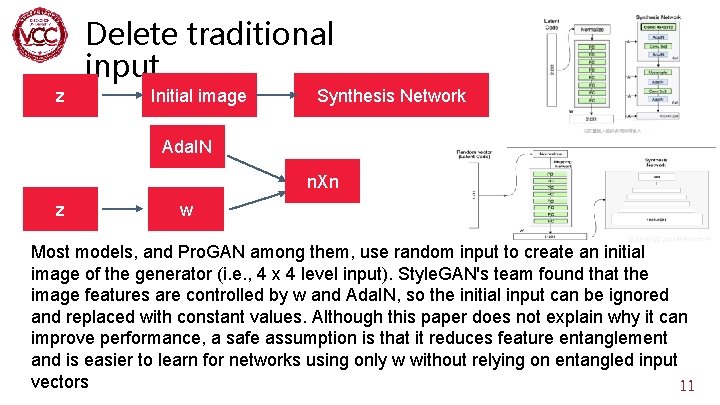

Removing the traditional input • Most models, ProGAN among them, use the random input to create the generator's initial image (i.e., the input of the 4 x 4 level). The StyleGAN team found that the image features are controlled by w and AdaIN, so the initial input can be dropped and replaced with constant values. Although the paper does not explain why this improves performance, a reasonable assumption is that it reduces feature entanglement: it is easier for the network to learn using only w, without relying on an entangled input vector.
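
A short sketch of what a constant input means in practice: every image in a batch starts from the same 4 x 4 tensor, and all variation has to come from w and the noise added later. The channel count of 512 and the random values are assumptions for illustration; in a trained model the constant is part of the learned parameters.

```python
import numpy as np

rng = np.random.default_rng(0)
CHANNELS = 512  # assumed channel count of the 4 x 4 level

# One fixed tensor shared by all images (random here, learned in a real model).
const_input = rng.normal(size=(CHANNELS, 4, 4))


def synthesis_start(batch_size):
    """Return the starting 4 x 4 feature maps: the same constant for every image."""
    return np.broadcast_to(const_input, (batch_size, CHANNELS, 4, 4)).copy()


start = synthesis_start(3)
print(start.shape)
```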

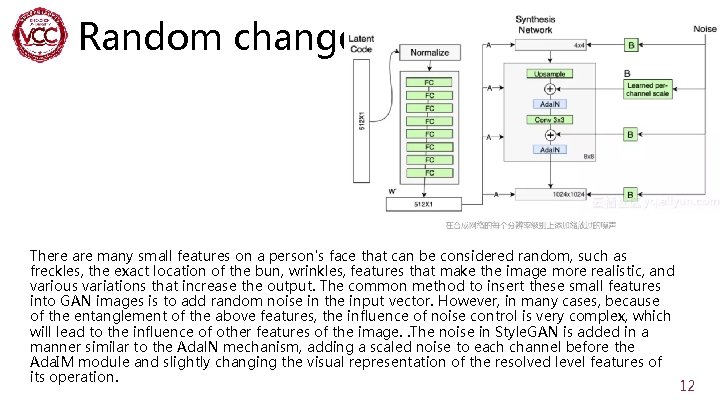

Stochastic variation • Many small aspects of a person's face can be considered random, such as freckles, the exact placement of hairs, and wrinkles. These features make the image more realistic and increase the variety of the output. The common way to insert such small features into GAN images is to add random noise to the input vector. In many cases, however, because of the feature entanglement described above, the effect of the noise is hard to control and ends up affecting other features of the image. In StyleGAN the noise is added in a manner similar to the AdaIN mechanism: scaled noise is added to each channel before the AdaIN module, slightly changing the visual expression of the features at the resolution level it operates on.
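
The noise step can be sketched in NumPy as below. It assumes a single-channel noise image that is shared by all channels and multiplied by a per-channel scaling factor; the function name `add_noise` and the example scales are hypothetical, and in a trained model the scales are learned.

```python
import numpy as np


def add_noise(x, noise_scale, rng):
    """Add per-channel scaled noise to a feature map x of shape (C, H, W)."""
    # One noise image, broadcast to every channel.
    noise = rng.normal(size=(1, x.shape[1], x.shape[2]))
    # Each channel decides how strongly the noise affects it.
    return x + noise_scale.reshape(-1, 1, 1) * noise


rng = np.random.default_rng(0)
features = rng.normal(size=(3, 8, 8))
noisy = add_noise(features, np.array([0.0, 0.1, 0.5]), rng)
print(np.abs(noisy - features).mean(axis=(1, 2)))
```

A channel with scale 0 is left untouched, while channels with larger scales change more. The result would then be passed to the AdaIN module of the same level.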

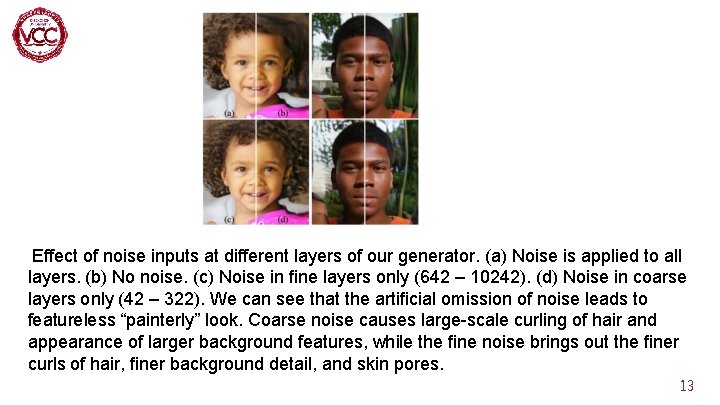

Effect of noise inputs at different layers of our generator. (a) Noise is applied to all layers. (b) No noise. (c) Noise in fine layers only (64 x 64 to 1024 x 1024). (d) Noise in coarse layers only (4 x 4 to 32 x 32). We can see that the artificial omission of noise leads to a featureless “painterly” look. Coarse noise causes large-scale curling of hair and appearance of larger background features, while the fine noise brings out the finer curls of hair, finer background detail, and skin pores.

Reference • A Style-Based Generator Architecture for Generative Adversarial Networks • https://www.jianshu.com/p/5dc2486c70cf

Downloads at http://vcc.szu.edu.cn. Thank you!
