
A Generalized Portable SHMEM Library

- Krzysztof Parzyszek, Ames Laboratory
- Jarek Nieplocha, Pacific Northwest National Laboratory
- Ricky Kendall, Ames Laboratory

PDCS-2000, November 9, 2000

Overview

- Introduction
  - global address space programming model
  - one-sided communication
- Cray SHMEM
- GPSHMEM: Generalized Portable SHMEM
- Implementation Approach
- Experimental Results
- Conclusions

PDCS-2000, Pacific Northwest National Laboratory, Ames Laboratory

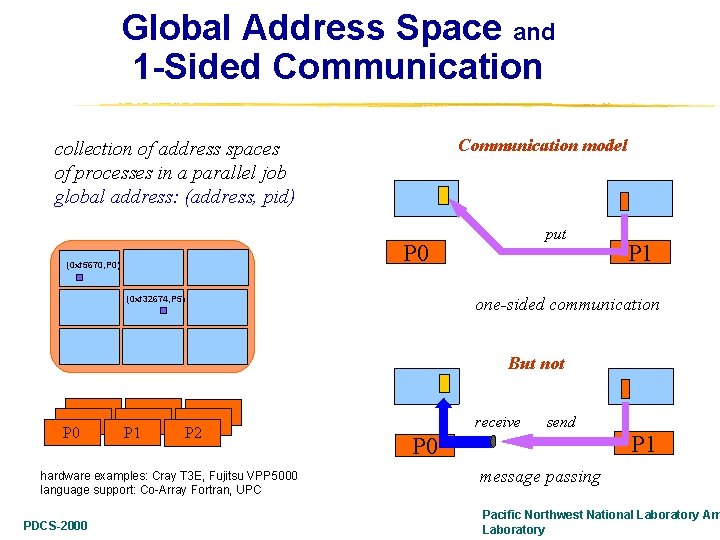

Global Address Space and 1-Sided Communication

- Global address space model: the collection of address spaces of all processes in a parallel job
  - global address: (address, pid), e.g. (0xf5670, P0), (0xf32674, P5)
- One-sided communication: a put from P0 to P1
- Message passing: a send on P0 paired with a receive on P1
- Hardware examples: Cray T3E, Fujitsu VPP5000
- Language support: Co-Array Fortran, UPC

[Figure: a put from P0 to P1 (one-sided communication) contrasted with a send/receive pair between P0 and P1 (message passing); a further diagram labeled "But not" involves P0, P1, and P2]
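The (address, pid) pair and the one-sided put can be illustrated with a small single-process simulation. This is only a sketch: the names `global_addr`, `sim_put`, `sim_get`, `NPROCS`, and `SPACE_BYTES` are hypothetical and not part of SHMEM or GPSHMEM, and each "process" is modelled as a plain byte array rather than a real address space.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define NPROCS 4         /* hypothetical number of simulated processes */
#define SPACE_BYTES 256  /* hypothetical size of each simulated address space */

/* One private address space per simulated process. */
static unsigned char space[NPROCS][SPACE_BYTES];

/* A global address is the pair (address, pid). */
typedef struct {
    size_t addr;  /* offset inside the owner's address space */
    int pid;      /* owning process */
} global_addr;

/* Check that [addr, addr + nbytes) lies inside an existing process. */
int in_range(global_addr g, size_t nbytes)
{
    return g.pid >= 0 && g.pid < NPROCS &&
           g.addr <= SPACE_BYTES && nbytes <= SPACE_BYTES - g.addr;
}

/* One-sided put: the initiator names both source and destination;
   no code runs on behalf of the target. Returns 0 on success, -1 on error. */
int sim_put(global_addr dst, const void *src, size_t nbytes)
{
    if (!in_range(dst, nbytes)) return -1;
    memcpy(&space[dst.pid][dst.addr], src, nbytes);
    return 0;
}

/* One-sided get: the initiator reads remote data the same way. */
int sim_get(void *dst, global_addr src, size_t nbytes)
{
    if (!in_range(src, nbytes)) return -1;
    memcpy(dst, &space[src.pid][src.addr], nbytes);
    return 0;
}
```

In contrast to message passing, neither call has a matching operation on the owning process: the global address alone identifies where the data lives.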

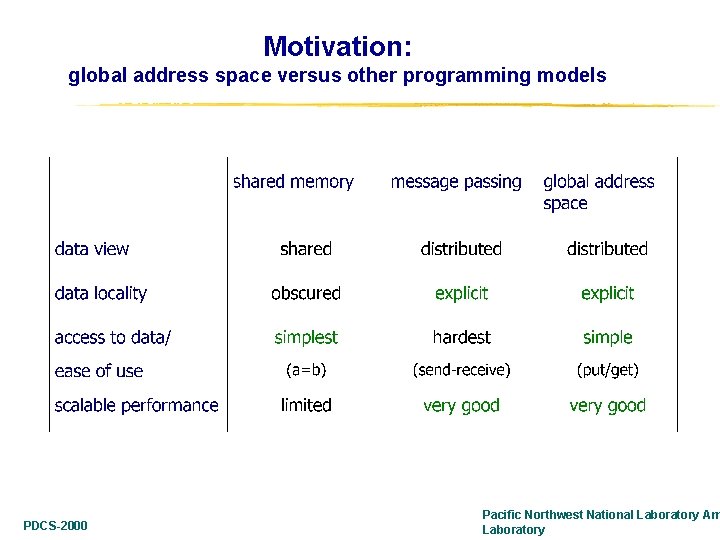

Motivation: global address space versus other programming models

One-sided communication interfaces

- First commercial implementation: SHMEM on the Cray T3D
  - put, get, scatter, gather, atomic swap
  - memory consistency issues (solved on the T3E)
  - maps well to the Cray T3E hardware: excellent application performance
- Vendor-specific interfaces
  - IBM LAPI, Fujitsu MPlib, NEC Parlib/CJ, Hitachi RDMA, Quadrics Elan
- Portable interfaces
  - MPI-2 1-sided (related but rather restrictive model)
  - ARMCI one-sided communication library
  - SHMEM (some platforms)
  - GPSHMEM: first fully portable implementation of SHMEM

History of SHMEM

- Introduced on the Cray T3D in 1993
  - one-sided operations: put, get, scatter, gather, atomic swap
  - collective operations: synchronization, reduction
  - cache not coherent w.r.t. SHMEM operations (problem solved on the T3E)
  - highest level of performance on any MPP at that time
- Increased availability
  - SGI, after purchasing Cray, ported it to IRIX systems and Cray vector systems
    - but not always with full functionality (no atomic ops on vector systems like the Cray J90)
    - extensions to match more datatypes (the SHMEM API is datatype oriented)
  - HPVM project led by Andrew Chien (UIUC/UCSD)
    - ported and extended a subset of SHMEM
    - on top of Fast Messages for Linux (later dropped) and Windows clusters
  - Quadrics/Compaq port to Elan
    - available on Linux and Tru64 clusters with QSW switch
  - subset on top of LAPI for the IBM SP
    - internal porting tool by the IBM ACTS group at Watson

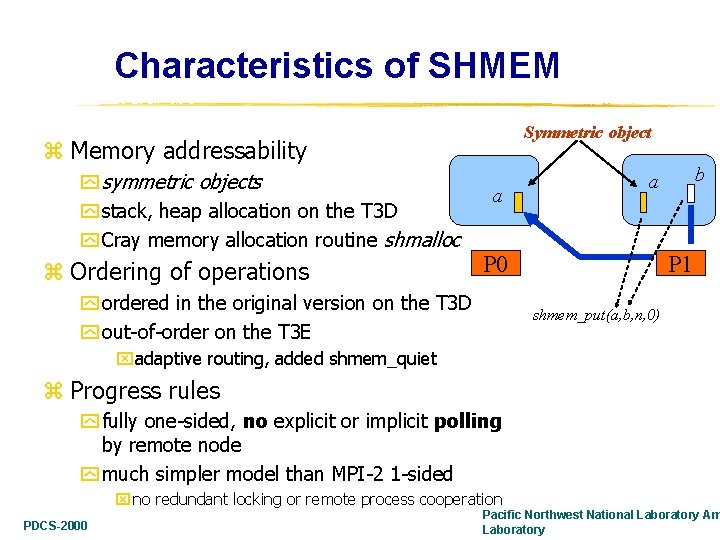

Characteristics of SHMEM

- Memory addressability
  - symmetric objects
  - stack, heap allocation on the T3D
  - Cray memory allocation routine shmalloc
- Ordering of operations
  - ordered in the original version on the T3D
  - out-of-order on the T3E
    - adaptive routing, added shmem_quiet
- Progress rules
  - fully one-sided, no explicit or implicit polling by remote node
  - much simpler model than MPI-2 1-sided
    - no redundant locking or remote process cooperation

[Figure: symmetric object a exists on both P0 and P1; P1 calls shmem_put(a, b, n, 0) to copy its local b into a on P0]

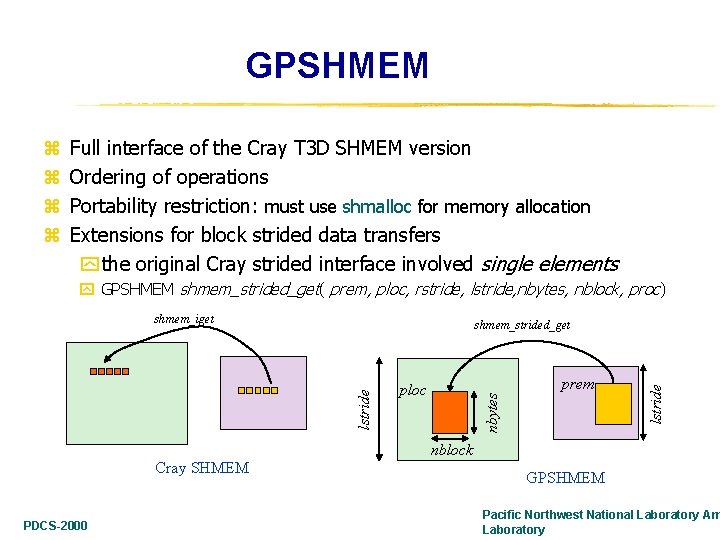

GPSHMEM

- Full interface of the Cray T3D SHMEM version
- Ordering of operations
- Portability restriction: must use shmalloc for memory allocation
- Extensions for block strided data transfers
  - the original Cray strided interface involved single elements
  - GPSHMEM: shmem_strided_get(prem, ploc, rstride, lstride, nbytes, nblock, proc)

[Figure: Cray SHMEM shmem_iget moves single elements at a stride; GPSHMEM shmem_strided_get moves nblock blocks of nbytes each between prem and ploc, using strides rstride and lstride]
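The data movement implied by the block strided interface can be sketched with a purely local stand-in. The function name `strided_get_sketch` is hypothetical, the parameter order simply follows the slide, and two points are assumptions rather than documented behavior: strides are taken to be in bytes, and `proc` is ignored because the copy happens inside one process.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Local stand-in for a block strided get (not the GPSHMEM implementation).
   Copies nblock blocks of nbytes each: block i is read from
   prem + i * rstride and written to ploc + i * lstride.
   Assumption: rstride and lstride are byte strides. */
void strided_get_sketch(const void *prem, void *ploc,
                        size_t rstride, size_t lstride,
                        size_t nbytes, size_t nblock, int proc)
{
    const unsigned char *src = prem;  /* "remote" source, local in this sketch */
    unsigned char *dst = ploc;        /* local destination */
    (void)proc;                       /* no real remote process in this sketch */

    for (size_t i = 0; i < nblock; i++)
        memcpy(dst + i * lstride, src + i * rstride, nbytes);
}
```

For example, fetching a 2x3 sub-block from a row-major 4x5 matrix of doubles would use `rstride = 5 * sizeof(double)`, `lstride = nbytes = 3 * sizeof(double)`, and `nblock = 2`: one call covers what would otherwise take a separate single-element strided call per column.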

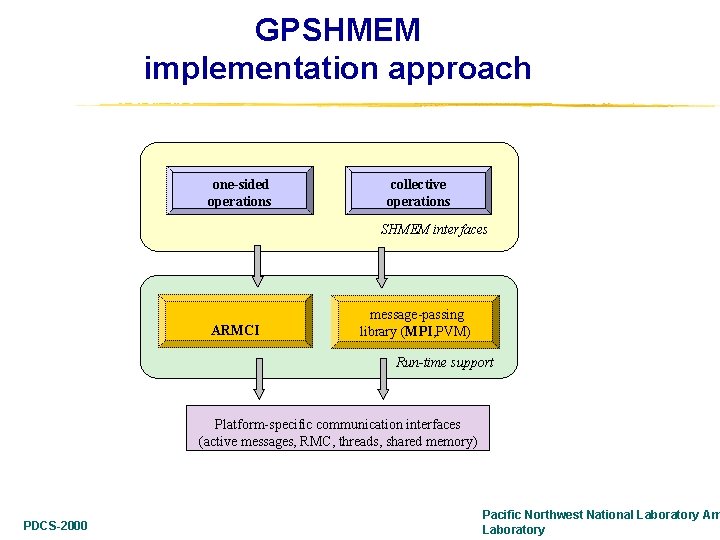

GPSHMEM implementation approach

[Figure: layered architecture, top to bottom]

- SHMEM interfaces: one-sided operations, collective operations
- ARMCI; message-passing library (MPI, PVM)
- Run-time support
- Platform-specific communication interfaces (active messages, RMC, threads, shared memory)

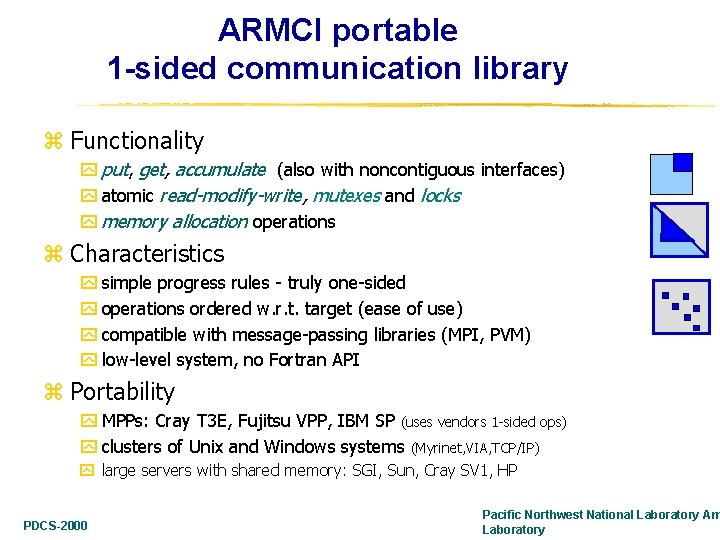

ARMCI portable 1-sided communication library

- Functionality
  - put, get, accumulate (also with noncontiguous interfaces)
  - atomic read-modify-write, mutexes and locks
  - memory allocation operations
- Characteristics
  - simple progress rules: truly one-sided
  - operations ordered w.r.t. target (ease of use)
  - compatible with message-passing libraries (MPI, PVM)
  - low-level system, no Fortran API
- Portability
  - MPPs: Cray T3E, Fujitsu VPP, IBM SP (uses vendors' 1-sided ops)
  - clusters of Unix and Windows systems (Myrinet, VIA, TCP/IP)
  - large servers with shared memory: SGI, Sun, Cray SV1, HP

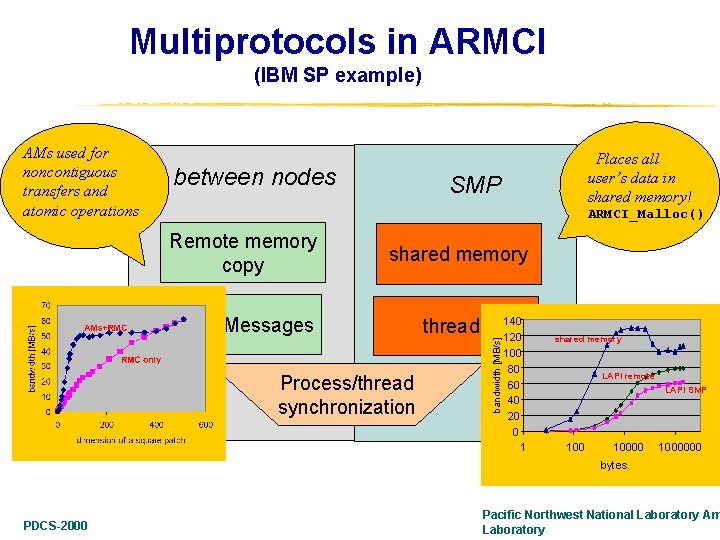

Multiprotocols in ARMCI (IBM SP example)

- Between nodes: remote memory copy, Active Messages, threads
- SMP: shared memory, process/thread synchronization
- ARMCI_Malloc() places all user's data in shared memory
- AMs used for noncontiguous transfers and atomic operations

[Figure: bandwidth [MB/s] (axis 0 to 140) versus message size (1 to 1000000 bytes) for shared memory, LAPI remote, and LAPI SMP]

Experience

- Performance studies
  - GPSHMEM overhead over SHMEM on the Cray T3E
  - comparison to MPI-2 1-sided on the Fujitsu VX-4
- Applications (see paper)
  - matrix multiplication on a Linux cluster
  - porting Cray T3E codes

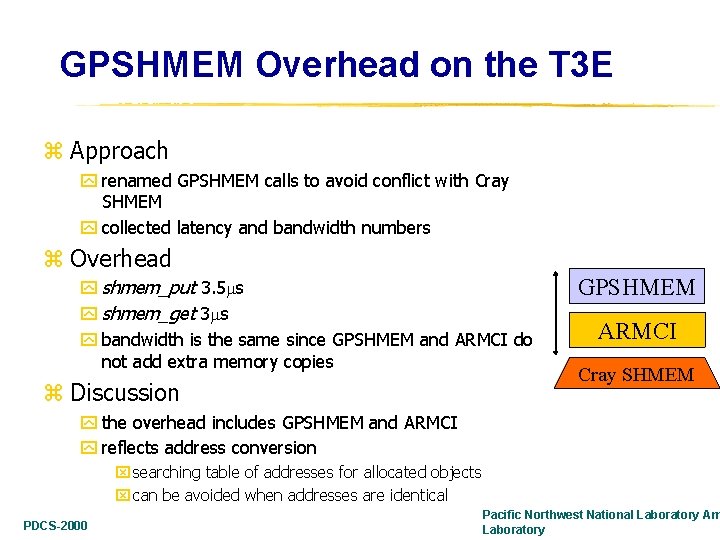

GPSHMEM Overhead on the T3E

- Approach
  - renamed GPSHMEM calls to avoid conflict with Cray SHMEM
  - collected latency and bandwidth numbers
- Overhead
  - shmem_put: 3.5 µs
  - shmem_get: 3 µs
  - bandwidth is the same since GPSHMEM and ARMCI do not add extra memory copies
- Discussion
  - the overhead includes GPSHMEM and ARMCI
  - reflects address conversion
    - searching table of addresses for allocated objects
    - can be avoided when addresses are identical

[Figure: software stack of GPSHMEM over ARMCI over Cray SHMEM]
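The address conversion step mentioned in the discussion can be sketched as a table lookup. This is an illustration under assumptions, not the GPSHMEM code: the types `sym_object` and `addr_table`, the function `translate`, and the `identical` flag are all hypothetical, and the table is assumed to record the base address of every allocated object on every process.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MAX_PROCS 4  /* hypothetical limits for the sketch */
#define MAX_OBJS  8

/* One allocated object: its base address on each process and its size. */
typedef struct {
    uintptr_t base[MAX_PROCS];
    size_t size;
} sym_object;

typedef struct {
    sym_object objs[MAX_OBJS];
    int nobjs;
    int identical;  /* nonzero if every object has the same base everywhere */
} addr_table;

/* Convert an address valid on process `me` into the corresponding
   address on process `proc`. Returns 0 if the address does not fall
   inside any registered object. */
uintptr_t translate(const addr_table *t, uintptr_t local, int me, int proc)
{
    if (t->identical)
        return local;  /* fast path: no table search needed */

    for (int i = 0; i < t->nobjs; i++) {
        uintptr_t b = t->objs[i].base[me];
        if (local >= b && local - b < t->objs[i].size)
            return t->objs[i].base[proc] + (local - b);
    }
    return 0;
}
```

The linear search is the kind of per-call cost that would show up as added latency but not as reduced bandwidth, and the `identical` fast path corresponds to the case where the search can be skipped.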

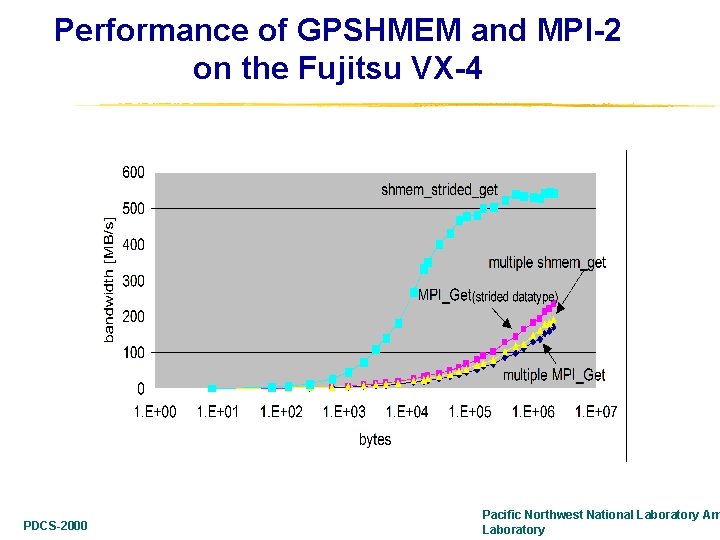

Performance of GPSHMEM and MPI-2 on the Fujitsu VX-4

Conclusions

- Described a fully portable implementation of a SHMEM-like library
  - SHMEM becomes a viable alternative to MPI-2 1-sided
  - good performance, closely tied to ARMCI
  - offers potentially wide portability to other tools based on SHMEM
    - e.g. Co-Array Fortran
- Cray SHMEM API incomplete for strided data structures
  - extensions for block strided transfers improve performance
- More work with applications needed to drive future extensions and development
- Code availability: rickyk@ameslab.gov

- Slides: 15