Web-Mining Agents: Augmenting Probabilistic Graphical Models with Ontology Information

Web-Mining Agents
Augmenting Probabilistic Graphical Models with Ontology Information: Object Classification
Prof. Dr. Ralf Möller, Dr. Özgür L. Özçep
Universität zu Lübeck, Institut für Informationssysteme
Tanya Braun (Exercises)

Based on: Large-Scale Object Recognition using Label Relation Graphs
Jia Deng (1, 2), Nan Ding (2), Yangqing Jia (2), Andrea Frome (2), Kevin Murphy (2), Samy Bengio (2), Yuan Li (2), Hartmut Neven (2), Hartwig Adam (2)
(1) University of Michigan, (2) Google

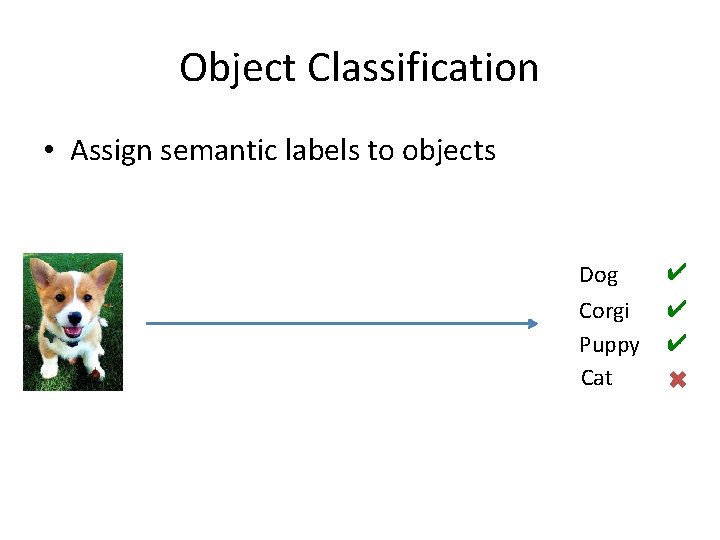

Object Classification
• Assign semantic labels to objects
Example labels: Dog ✔, Corgi ✔, Puppy ✔, Cat ✖

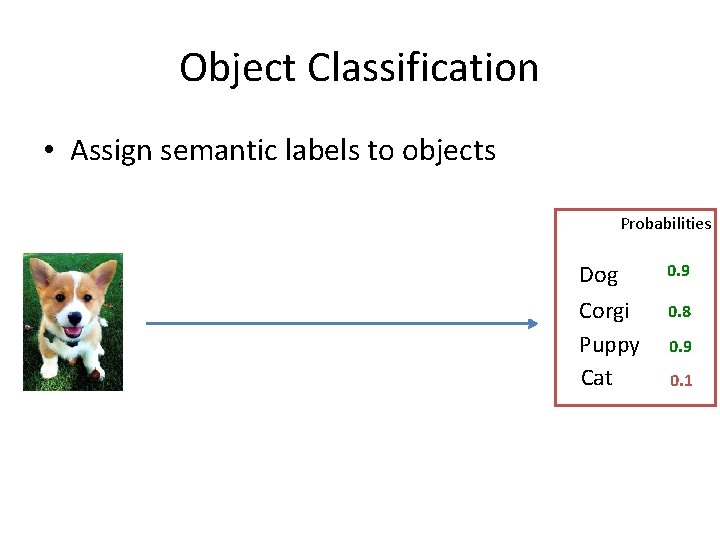

Object Classification
• Assign semantic labels to objects
Probabilities: Dog 0.9, Corgi 0.8, Puppy 0.9, Cat 0.1

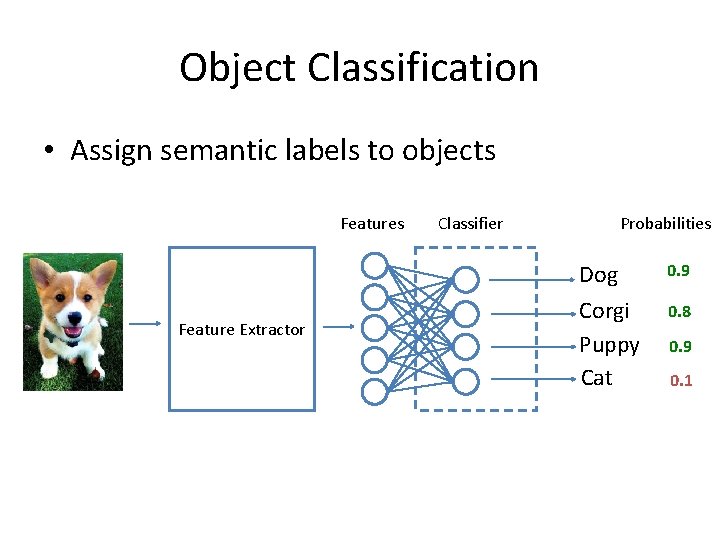

Object Classification
• Assign semantic labels to objects
Pipeline: Feature Extractor → Classifier → Probabilities
Probabilities: Dog 0.9, Corgi 0.8, Puppy 0.9, Cat 0.1

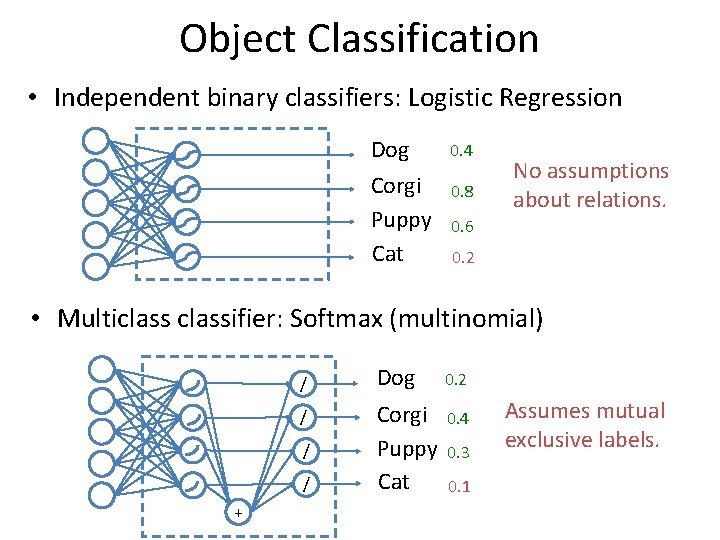

Object Classification
• Independent binary classifiers: Logistic Regression
  Dog 0.4, Corgi 0.8, Puppy 0.6, Cat 0.2
  No assumptions about relations.
• Multiclass classifier: Softmax (multinomial)
  Dog 0.2, Corgi 0.4, Puppy 0.3, Cat 0.1
  Assumes mutually exclusive labels.
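The contrast between the two baselines can be sketched in a few lines. This is only an illustration: the raw scores below are made up and are not the values shown on the slide. Independent sigmoids give each label its own probability, while softmax forces the probabilities to compete and sum to one.

```python
import math

def sigmoid(z):
    """Logistic function: probability for one independent binary label."""
    return 1.0 / (1.0 + math.exp(-z))

def softmax(scores):
    """Softmax over all labels: assumes exactly one label is correct."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

labels = ["Dog", "Corgi", "Puppy", "Cat"]
scores = [1.0, 2.0, 0.5, -1.0]  # hypothetical raw classifier scores

independent = [sigmoid(s) for s in scores]  # need not sum to 1
exclusive = softmax(scores)                 # always sums to 1

for name, p_ind, p_exc in zip(labels, independent, exclusive):
    print(f"{name}: logistic={p_ind:.2f} softmax={p_exc:.2f}")
```

Neither variant can express that Corgi implies Dog, which is the gap the HEX model is meant to close.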

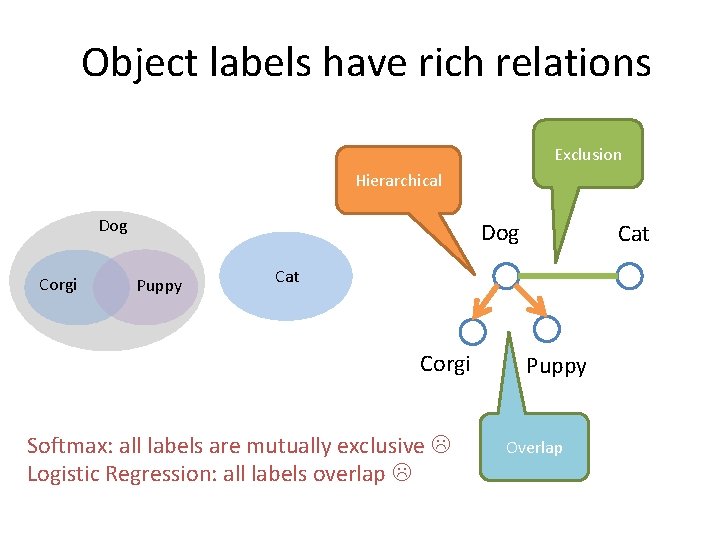

Object labels have rich relations
• Hierarchical: Dog and Corgi
• Exclusion: Dog and Cat
• Overlap: Corgi and Puppy
Softmax: all labels are mutually exclusive
Logistic Regression: all labels overlap

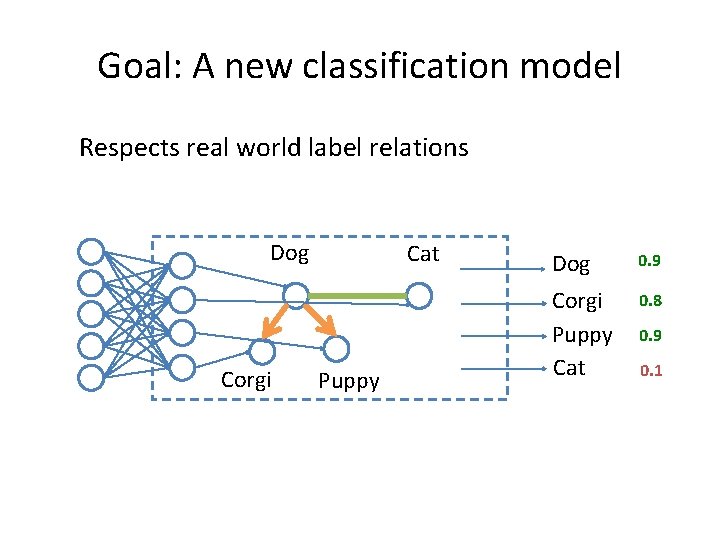

Goal: A new classification model
• Respects real-world label relations (Dog, Corgi, Cat, Puppy)
Probabilities: Dog 0.9, Corgi 0.8, Puppy 0.9, Cat 0.1

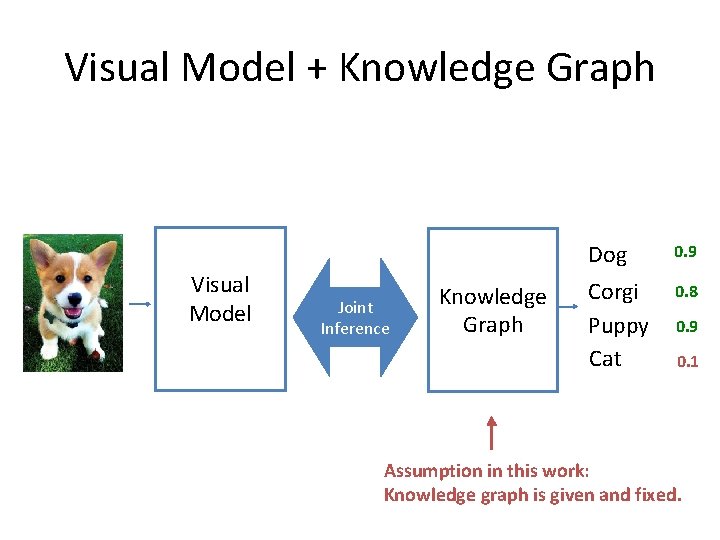

Visual Model + Knowledge Graph
• Visual Model + Knowledge Graph → Joint Inference
Probabilities: Dog 0.9, Corgi 0.8, Puppy 0.9, Cat 0.1
Assumption in this work: the knowledge graph is given and fixed.

Agenda
• Encoding prior knowledge (HEX graph)
• Classification model
• Efficient Exact Inference


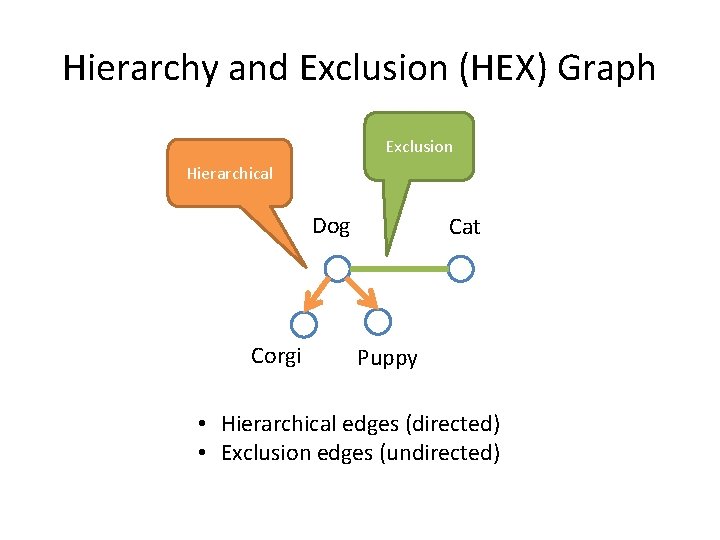

Hierarchy and Exclusion (HEX) Graph
• Hierarchical edges (directed)
• Exclusion edges (undirected)
Example nodes: Dog, Corgi, Cat, Puppy

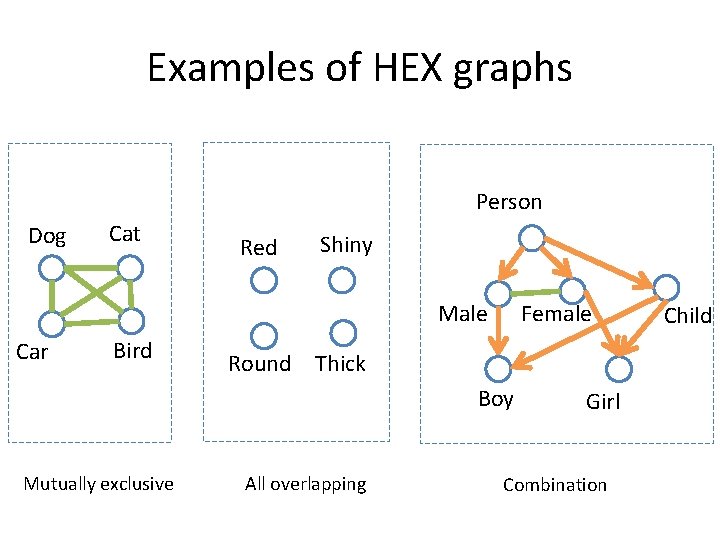

Examples of HEX graphs
• Mutually exclusive: Dog, Cat, Car, Bird
• All overlapping: Red, Shiny, Round, Thick
• Combination: Person, Female, Male, Boy, Girl, Child

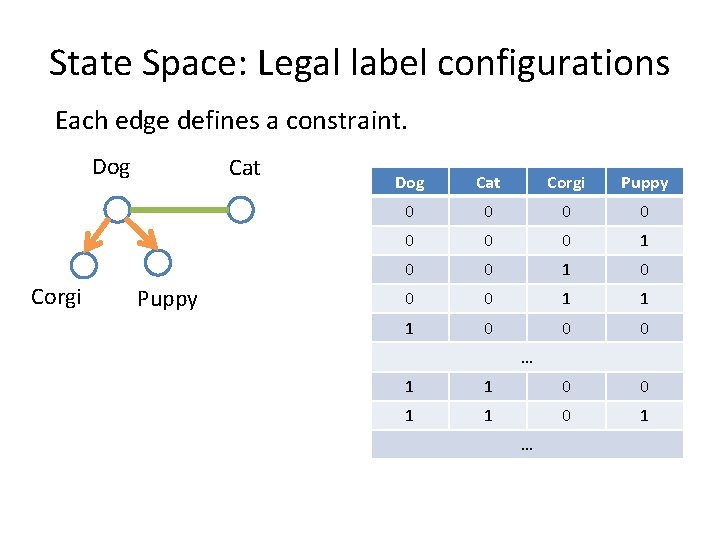

State Space: Legal label configurations
• Each edge defines a constraint.
[Figure: HEX graph over Dog, Corgi, Cat, Puppy next to a table of binary configurations of (Dog, Cat, Corgi, Puppy)]

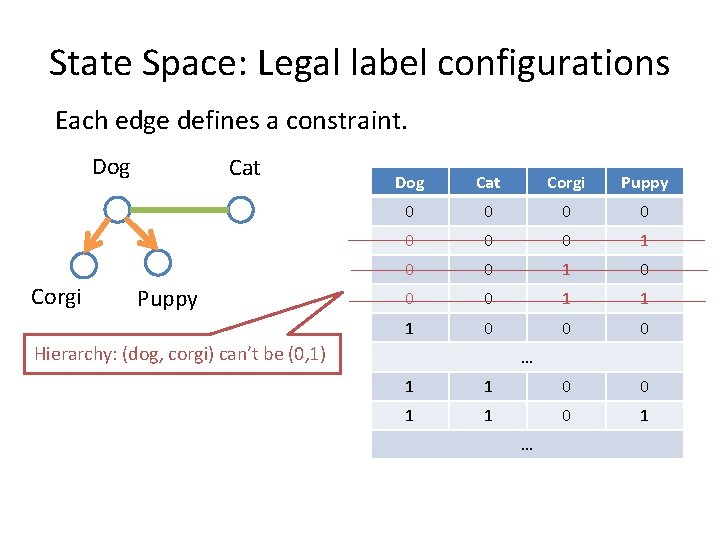

State Space: Legal label configurations
• Each edge defines a constraint.
• Hierarchy: (dog, corgi) can’t be (0, 1)
[Figure: HEX graph over Dog, Corgi, Cat, Puppy next to a table of binary configurations of (Dog, Cat, Corgi, Puppy)]

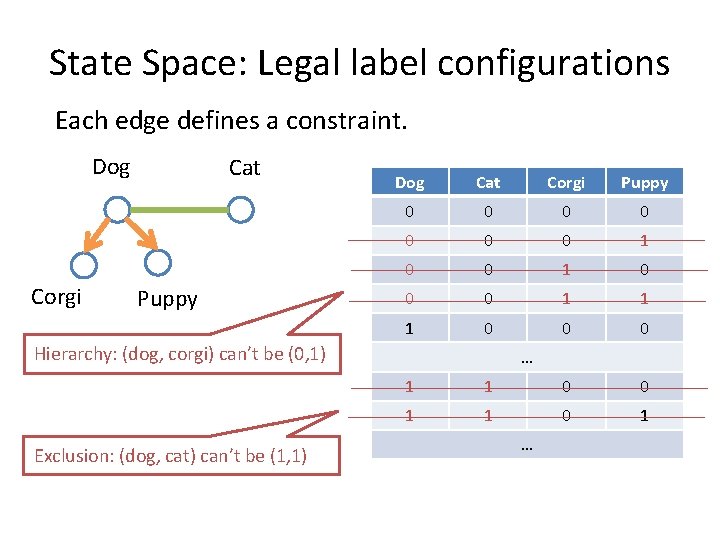

State Space: Legal label configurations
• Each edge defines a constraint.
• Hierarchy: (dog, corgi) can’t be (0, 1)
• Exclusion: (dog, cat) can’t be (1, 1)
[Figure: HEX graph over Dog, Corgi, Cat, Puppy next to a table of binary configurations of (Dog, Cat, Corgi, Puppy)]
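The two constraint types can be checked by brute force on a small graph. The sketch below is an assumption-laden illustration: the edge set (Dog above Corgi and Puppy, Dog excluding Cat) is a guess at the example graph, and `legal_states` is a hypothetical helper, not code from the paper.

```python
from itertools import product

LABELS = ["Dog", "Cat", "Corgi", "Puppy"]
# Assumed example edges; the slide's exact graph is not recoverable.
HIERARCHY = [("Dog", "Corgi"), ("Dog", "Puppy")]  # (parent, child)
EXCLUSION = [("Dog", "Cat")]

def legal_states(labels, hierarchy, exclusion):
    """Return every 0/1 assignment that violates no edge constraint."""
    result = []
    for values in product((0, 1), repeat=len(labels)):
        state = dict(zip(labels, values))
        # Hierarchy: (parent, child) can't be (0, 1).
        if any(state[p] == 0 and state[c] == 1 for p, c in hierarchy):
            continue
        # Exclusion: the pair can't be (1, 1).
        if any(state[a] == 1 and state[b] == 1 for a, b in exclusion):
            continue
        result.append(state)
    return result

states = legal_states(LABELS, HIERARCHY, EXCLUSION)
for s in states:
    print(s)
print(len(states), "legal states out of", 2 ** len(LABELS))
```

Under these assumed edges only 6 of the 16 possible configurations remain legal.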


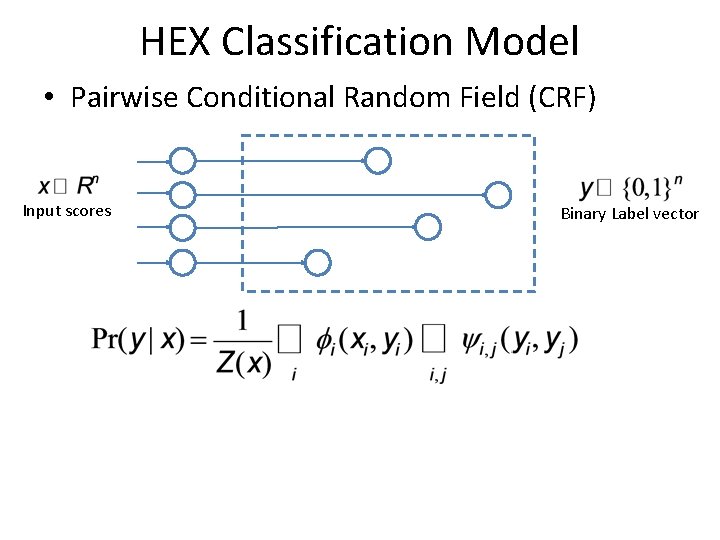

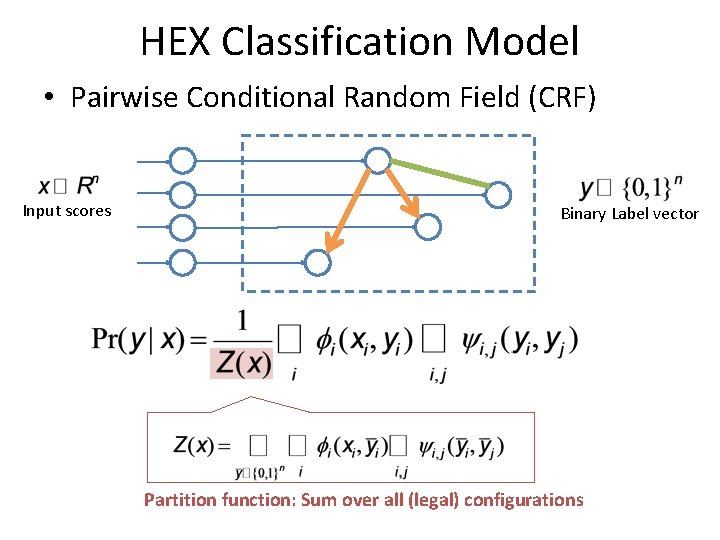

HEX Classification Model
• Pairwise Conditional Random Field (CRF)
• Formula annotations: input scores; binary label vector

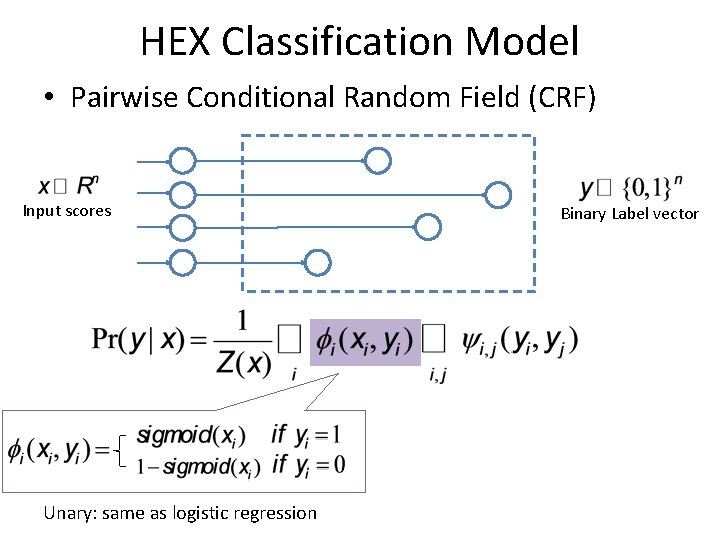

HEX Classification Model
• Pairwise Conditional Random Field (CRF)
• Formula annotations: input scores; binary label vector
• Unary: same as logistic regression

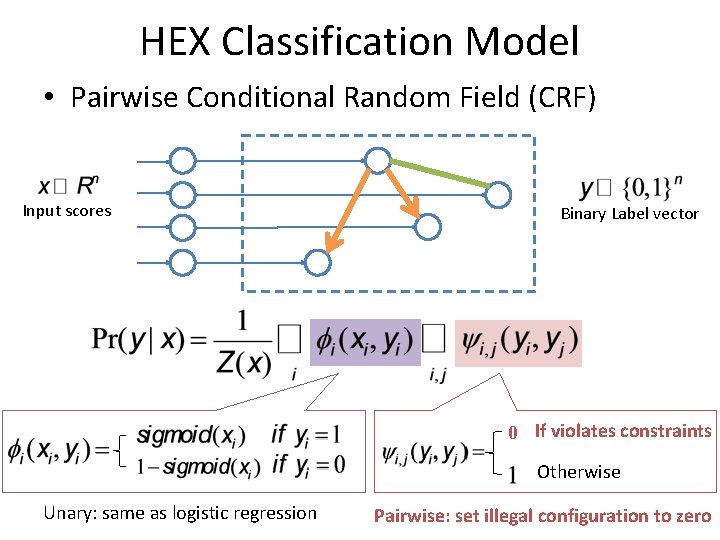

HEX Classification Model
• Pairwise Conditional Random Field (CRF)
• Formula annotations: input scores; binary label vector
• Unary: same as logistic regression
• Pairwise: set illegal configurations to zero (the potential is 0 if the pair violates a constraint, 1 otherwise)

HEX Classification Model
• Pairwise Conditional Random Field (CRF)
• Formula annotations: input scores; binary label vector
• Partition function: sum over all (legal) configurations

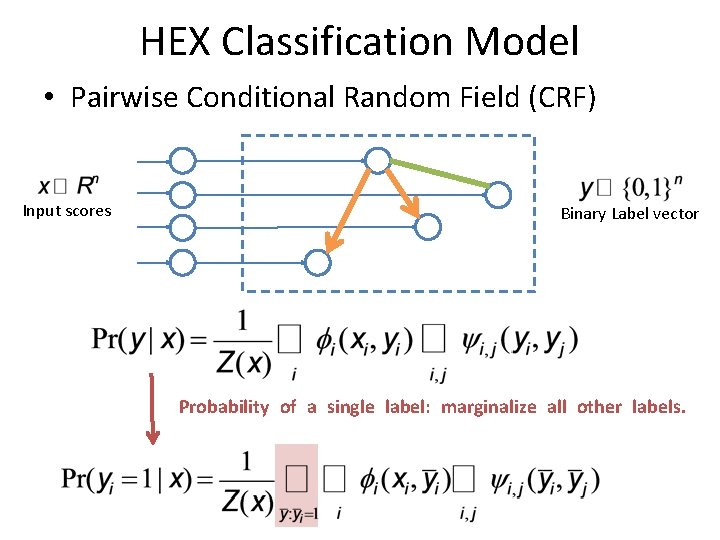

HEX Classification Model
• Pairwise Conditional Random Field (CRF)
• Formula annotations: input scores; binary label vector
• Probability of a single label: marginalize all other labels.
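A brute-force version of the model may help make the pieces concrete. It is a minimal sketch under stated assumptions: the unary weight of a state is taken to be exp of the summed scores of its active labels, illegal states get weight zero, and the label indices, edges, and scores are invented for illustration. It is not the efficient inference procedure discussed later.

```python
import math
from itertools import product

def legal_states(n, hierarchy, exclusion):
    """Enumerate binary label vectors that satisfy every edge constraint."""
    states = []
    for y in product((0, 1), repeat=n):
        child_without_parent = any(y[c] == 1 and y[p] == 0 for p, c in hierarchy)
        both_exclusive_on = any(y[a] == 1 and y[b] == 1 for a, b in exclusion)
        if not child_without_parent and not both_exclusive_on:
            states.append(y)
    return states

def hex_marginals(scores, hierarchy, exclusion):
    """Marginal P(y_i = 1 | scores) for each label, by full enumeration."""
    states = legal_states(len(scores), hierarchy, exclusion)
    # Unnormalized weight: exp(sum of the scores of the active labels).
    weights = [math.exp(sum(f for f, yi in zip(scores, y) if yi == 1))
               for y in states]
    z = sum(weights)  # partition function over legal states only
    return [sum(w for w, y in zip(weights, states) if y[i] == 1) / z
            for i in range(len(scores))]

# Hypothetical indices: 0 = Dog, 1 = Cat, 2 = Corgi, 3 = Puppy.
scores = [2.0, -1.0, 1.0, 0.5]   # made-up input scores
hierarchy = [(0, 2), (0, 3)]     # assumed (parent, child) edges
exclusion = [(0, 1)]             # assumed exclusion edge

marginals = hex_marginals(scores, hierarchy, exclusion)
print([round(p, 3) for p in marginals])
```

By construction the marginal of a child can never exceed that of its parent, and the marginals of two exclusive labels sum to at most one.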

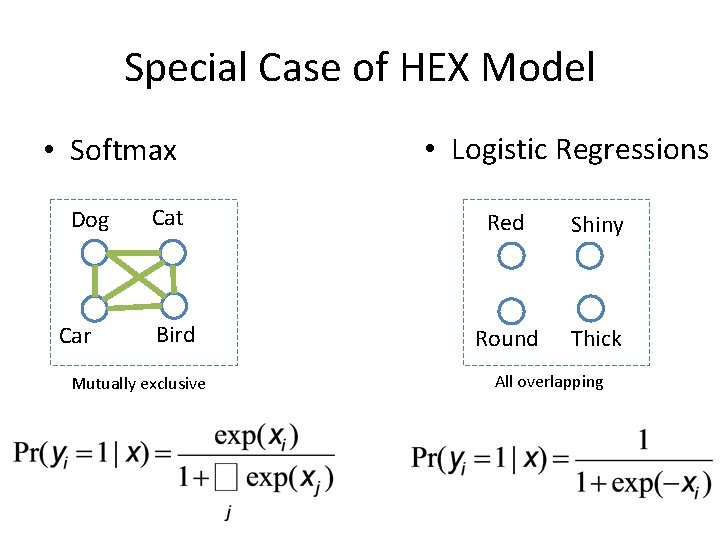

Special Cases of the HEX Model
• Softmax: Dog, Car, Cat, Bird (mutually exclusive)
• Logistic Regressions: Red, Shiny, Round, Thick (all overlapping)
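Both special cases can be derived in a few lines. The derivation below is a sketch under assumptions not shown in the extracted slide text: $f_i$ denotes the input score of label $i$, and the unary weight of an active label is $e^{f_i}$. Note that in the all-exclusive case the all-zero vector is also legal, so the result is a softmax with one extra "none of the labels" option of score 0.

```latex
% No edges (all labels overlap): every y in {0,1}^n is legal, so Z factorizes.
Z = \sum_{y \in \{0,1\}^n} \prod_{i=1}^{n} e^{f_i y_i}
  = \prod_{i=1}^{n} \left(1 + e^{f_i}\right),
\qquad
P(y_i = 1 \mid x) = \frac{e^{f_i}}{1 + e^{f_i}} = \sigma(f_i).

% All pairs exclusive: the legal states are the zero vector and the n one-hot vectors.
Z = 1 + \sum_{j=1}^{n} e^{f_j},
\qquad
P(y_i = 1 \mid x) = \frac{e^{f_i}}{1 + \sum_{j=1}^{n} e^{f_j}}.
```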

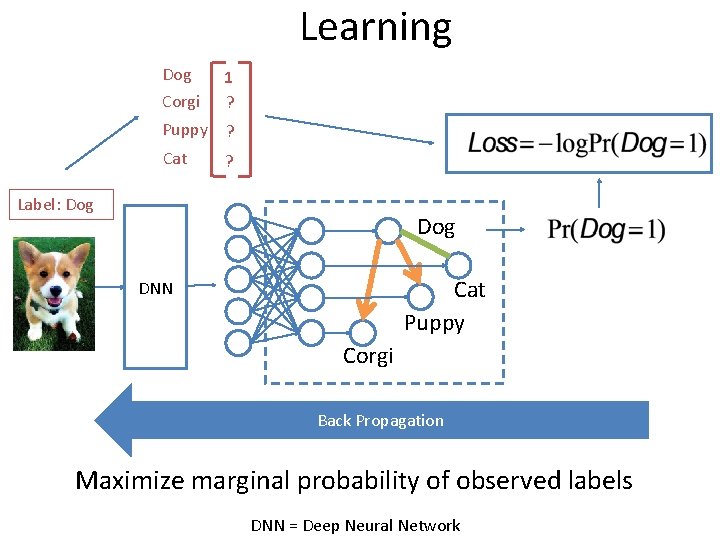

Learning
• Training label: Dog
• Observed: Dog = 1; Corgi = ?, Puppy = ?, Cat = ?
• The scores come from a DNN trained with back propagation
• Maximize the marginal probability of the observed labels
DNN = Deep Neural Network
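The training objective can be illustrated by enumeration: sum the weights of all legal states that agree with the observed labels and divide by the partition function. This is a hedged sketch, not the paper's training code; `observed_log_likelihood`, the label indices, the edges, and the scores are all hypothetical, and a real system would backpropagate this quantity through the DNN.

```python
import math
from itertools import product

def observed_log_likelihood(scores, observed, hierarchy, exclusion):
    """log P(observed labels | scores), marginalizing unobserved labels.

    `observed` maps a label index to its known value (0 or 1).
    """
    z = 0.0           # weight of all legal states
    consistent = 0.0  # weight of legal states that match the observation
    for y in product((0, 1), repeat=len(scores)):
        if any(y[c] == 1 and y[p] == 0 for p, c in hierarchy):
            continue  # child on without its parent
        if any(y[a] == 1 and y[b] == 1 for a, b in exclusion):
            continue  # two exclusive labels both on
        w = math.exp(sum(f for f, yi in zip(scores, y) if yi == 1))
        z += w
        if all(y[i] == v for i, v in observed.items()):
            consistent += w
    if consistent == 0.0:
        return float("-inf")  # observation contradicts the graph
    return math.log(consistent / z)

# Hypothetical indices: 0 = Dog, 1 = Cat, 2 = Corgi, 3 = Puppy.
scores = [2.0, -1.0, 1.0, 0.5]
hierarchy = [(0, 2), (0, 3)]
exclusion = [(0, 1)]

# Only "Dog" is observed; Corgi, Puppy and Cat stay unknown.
loss = -observed_log_likelihood(scores, {0: 1}, hierarchy, exclusion)
print(f"negative log-likelihood: {loss:.4f}")
```

Minimizing this negative log-likelihood is equivalent to maximizing the marginal probability of the observed labels.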


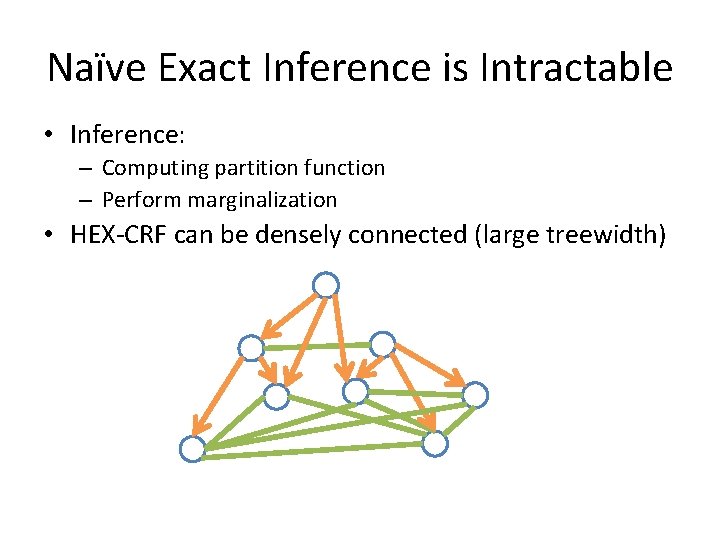

Naïve Exact Inference is Intractable
• Inference:
  – Computing the partition function
  – Performing marginalization
• HEX-CRF can be densely connected (large treewidth)

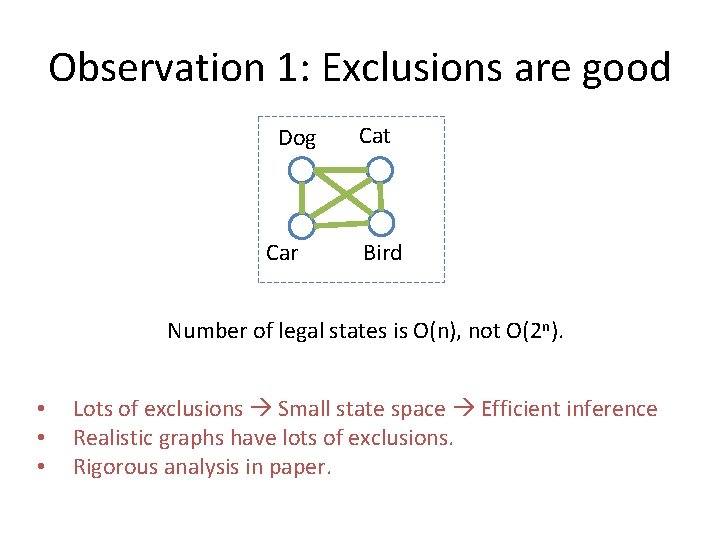

Observation 1: Exclusions are good
• Example: Dog, Car, Cat, Bird (mutually exclusive). The number of legal states is O(n), not O(2^n).
• Lots of exclusions → small state space → efficient inference
• Realistic graphs have lots of exclusions.
• Rigorous analysis in the paper.
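The state-space claim is easy to verify by counting. In this small sketch (the helper `count_legal` is hypothetical), n pairwise-exclusive labels leave only the all-zero vector and the n one-hot vectors, whereas n unconstrained labels allow all 2^n vectors.

```python
from itertools import combinations, product

def count_legal(n, exclusion):
    """Count binary vectors of length n with no exclusive pair both set."""
    return sum(
        1
        for y in product((0, 1), repeat=n)
        if not any(y[a] == 1 and y[b] == 1 for a, b in exclusion)
    )

n = 10
all_exclusive = list(combinations(range(n), 2))  # every pair is exclusive

exclusive_count = count_legal(n, all_exclusive)  # n + 1 states
free_count = count_legal(n, [])                  # 2 ** n states
print(exclusive_count, free_count)
```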

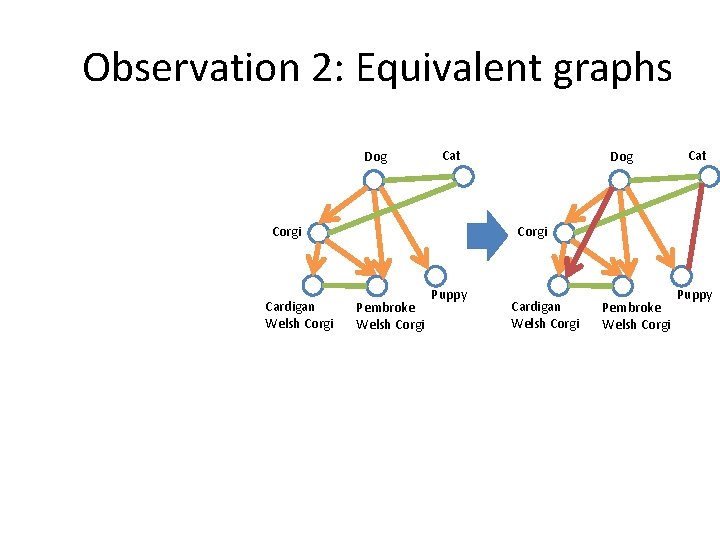

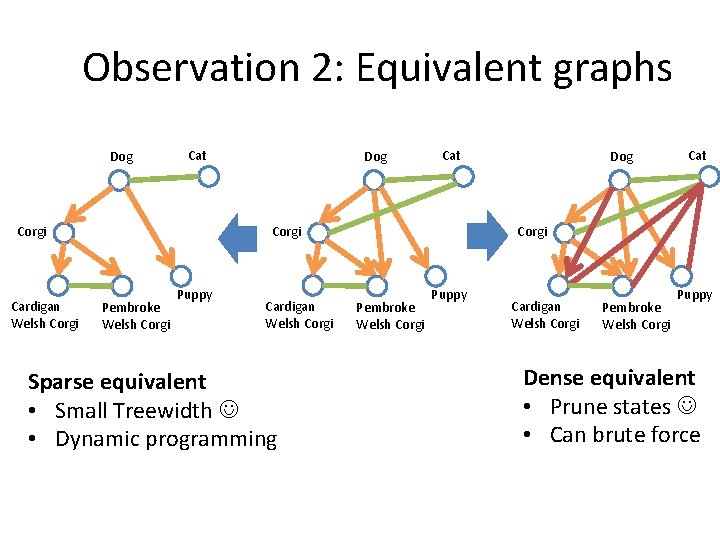

Observation 2: Equivalent graphs
[Figure: two HEX graphs over Dog, Cat, Corgi, Puppy, Cardigan Welsh Corgi, Pembroke Welsh Corgi]

Observation 2: Equivalent graphs
Sparse equivalent
• Small treewidth
• Dynamic programming
Dense equivalent
• Prune states
• Can brute force
[Figure: equivalent HEX graphs over Dog, Cat, Corgi, Puppy, Cardigan Welsh Corgi, Pembroke Welsh Corgi]

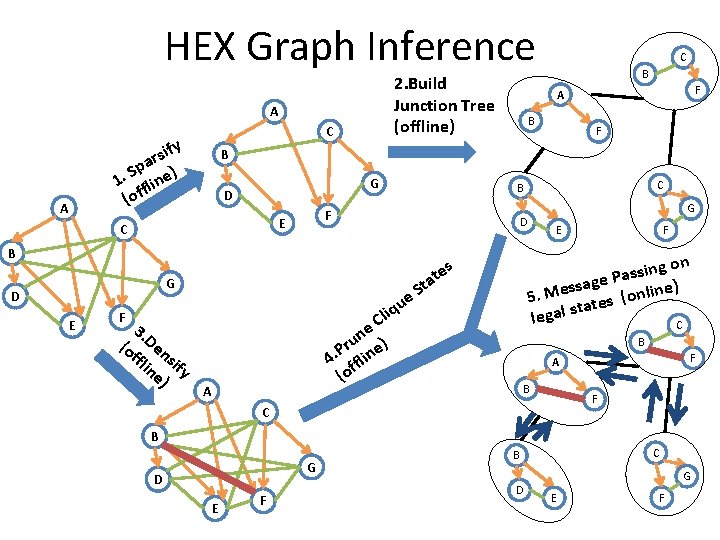

HEX Graph Inference
1. Sparsify (offline)
2. Build Junction Tree (offline)
3. Densify (offline)
4. Prune Clique State (offline)
5. Message Passing on legal states (online)
[Figure: example graph over nodes A to G and the cliques of its junction tree]

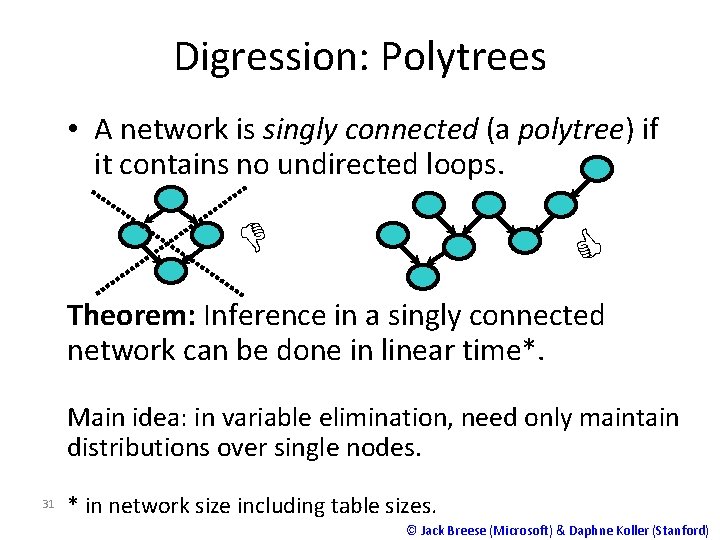

Digression: Polytrees
• A network is singly connected (a polytree) if it contains no undirected loops.
Theorem: Inference in a singly connected network can be done in linear time*.
Main idea: in variable elimination, need only maintain distributions over single nodes.
* in network size including table sizes.
© Jack Breese (Microsoft) & Daphne Koller (Stanford)

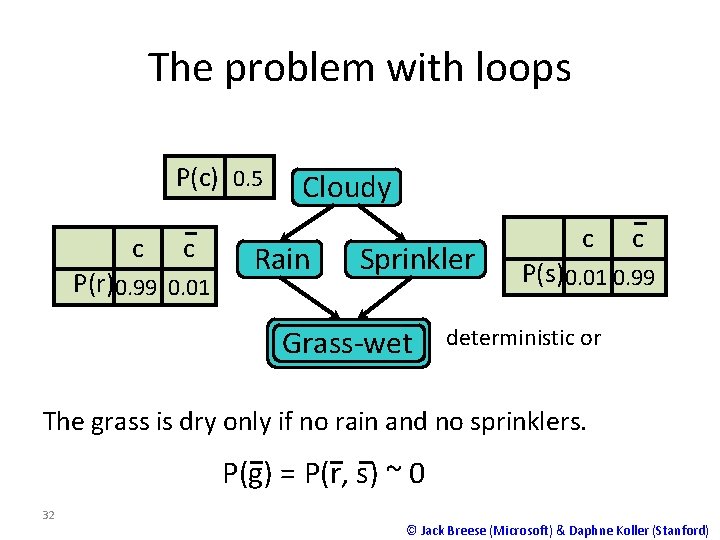

The problem with loops
Network: Cloudy → Rain, Cloudy → Sprinkler, Rain and Sprinkler → Grass-wet
• $P(c) = 0.5$
• $P(r \mid c) = 0.99$, $P(r \mid \bar{c}) = 0.01$
• $P(s \mid c) = 0.01$, $P(s \mid \bar{c}) = 0.99$
• Grass-wet is a deterministic OR: the grass is dry only if there is no rain and no sprinklers.
$P(\bar{g}) = P(\bar{r}, \bar{s}) \approx 0$

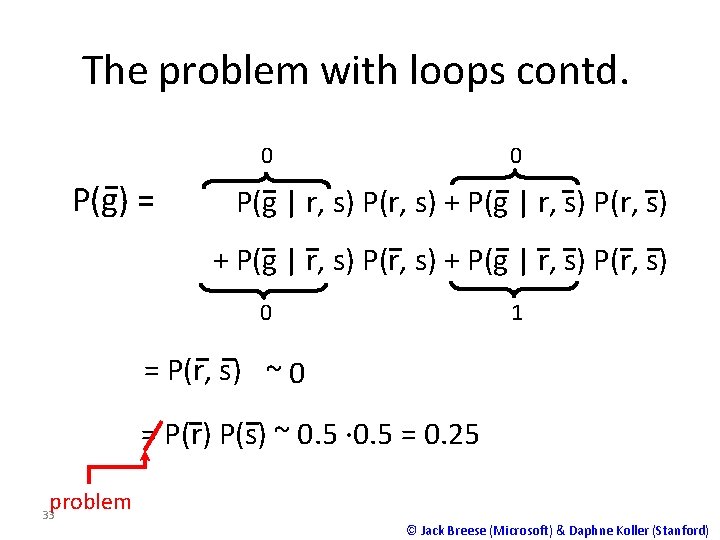

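The problem with loops contd.
$P(\bar{g}) = \underbrace{P(\bar{g} \mid r, s)}_{0} P(r, s) + \underbrace{P(\bar{g} \mid r, \bar{s})}_{0} P(r, \bar{s}) + \underbrace{P(\bar{g} \mid \bar{r}, s)}_{0} P(\bar{r}, s) + \underbrace{P(\bar{g} \mid \bar{r}, \bar{s})}_{1} P(\bar{r}, \bar{s})$
$= P(\bar{r}, \bar{s}) \approx 0$
Treating Rain and Sprinkler as independent instead gives $P(\bar{r}) P(\bar{s}) \approx 0.5 \cdot 0.5 = 0.25$. This is the problem.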
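The gap between the two numbers can be reproduced directly. The sketch assumes the conditional probability tables read as P(rain | cloudy) = 0.99, P(rain | not cloudy) = 0.01, and the reverse for the sprinkler; the variable names are illustrative.

```python
# Assumed CPTs, keyed by whether it is cloudy.
p_cloudy = {True: 0.5, False: 0.5}
p_rain = {True: 0.99, False: 0.01}       # P(rain | cloudy), P(rain | not cloudy)
p_sprinkler = {True: 0.01, False: 0.99}  # P(sprinkler | cloudy), P(sprinkler | not cloudy)

# Exact: rain and sprinkler are independent only given Cloudy, so sum over it.
p_dry_exact = sum(
    p_cloudy[c] * (1 - p_rain[c]) * (1 - p_sprinkler[c]) for c in (True, False)
)

# Wrong: multiply the marginals as if rain and sprinkler were independent.
p_no_rain = sum(p_cloudy[c] * (1 - p_rain[c]) for c in (True, False))
p_no_sprinkler = sum(p_cloudy[c] * (1 - p_sprinkler[c]) for c in (True, False))
p_dry_wrong = p_no_rain * p_no_sprinkler

print(f"exact P(dry) = {p_dry_exact:.4f}")            # about 0.0099
print(f"independence shortcut = {p_dry_wrong:.4f}")   # about 0.25
```

The shortcut is off by a factor of about 25 because the loop through Cloudy makes Rain and Sprinkler strongly anti-correlated.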

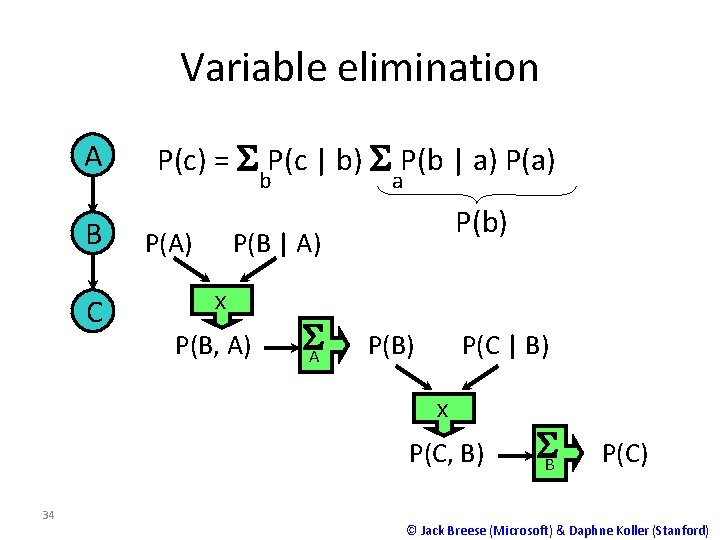

Variable elimination
Chain: A → B → C
$P(c) = \sum_b P(c \mid b) \sum_a P(b \mid a) P(a)$, where the inner sum equals $P(b)$.
• $P(A) \times P(B \mid A) = P(B, A)$; summing out A gives $P(B)$
• $P(B) \times P(C \mid B) = P(C, B)$; summing out B gives $P(C)$
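The chain computation above can be sketched with plain lists. The probability tables are invented for illustration, and `eliminate` is a hypothetical helper that multiplies a prior into a conditional table and sums out the parent.

```python
# Hypothetical CPTs for a binary chain A -> B -> C.
p_a = [0.6, 0.4]                        # P(A)
p_b_given_a = [[0.7, 0.3], [0.2, 0.8]]  # rows: a, columns: b
p_c_given_b = [[0.9, 0.1], [0.5, 0.5]]  # rows: b, columns: c

def eliminate(prior, cpt):
    """Multiply P(X) into P(Y | X), then sum out X to obtain P(Y)."""
    n_out = len(cpt[0])
    return [
        sum(prior[x] * cpt[x][y] for x in range(len(prior)))
        for y in range(n_out)
    ]

p_b = eliminate(p_a, p_b_given_a)  # sum over a of P(b | a) P(a)
p_c = eliminate(p_b, p_c_given_b)  # sum over b of P(c | b) P(b)
print(p_b, p_c)
```

Only distributions over single nodes are ever stored, which is why the cost stays linear in the length of the chain.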

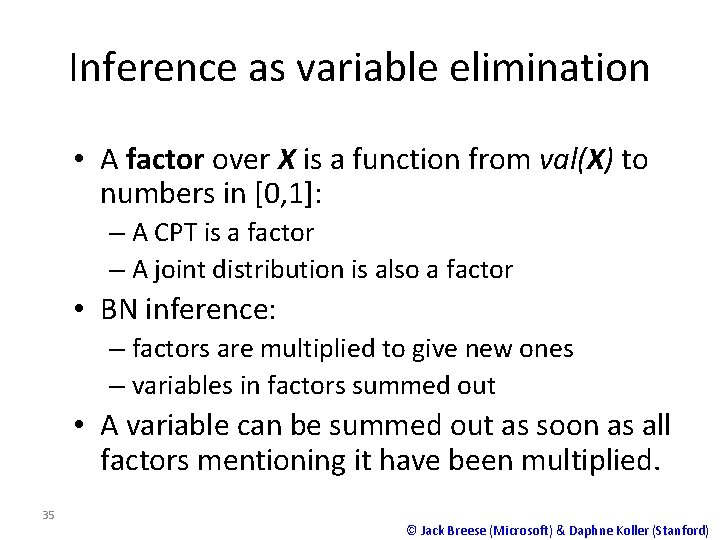

Inference as variable elimination
• A factor over X is a function from val(X) to numbers in [0, 1]:
  – A CPT is a factor
  – A joint distribution is also a factor
• BN inference:
  – factors are multiplied to give new ones
  – variables in factors are summed out
• A variable can be summed out as soon as all factors mentioning it have been multiplied.

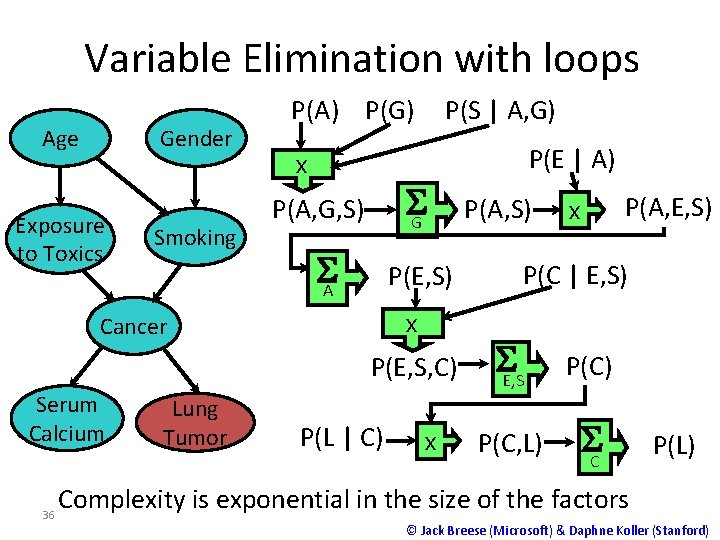

Variable Elimination with loops
Network: Age (A), Gender (G), Exposure to Toxics (E), Smoking (S), Cancer (C), Serum Calcium, Lung Tumor (L)
• $P(A) \times P(G) \times P(S \mid A, G) = P(A, G, S)$; summing out G gives $P(A, S)$
• $P(A, S) \times P(E \mid A) = P(A, E, S)$; summing out A gives $P(E, S)$
• $P(E, S) \times P(C \mid E, S) = P(E, S, C)$; summing out E and S gives $P(C)$
• $P(C) \times P(L \mid C) = P(C, L)$; summing out C gives $P(L)$
Complexity is exponential in the size of the factors.
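The growth of intermediate factors can be traced symbolically, without any numbers. The sketch below tracks only factor scopes; `elimination_scopes` is a hypothetical helper, Serum Calcium is left out because the slide's computation does not use it, and E and S are eliminated one after the other rather than jointly.

```python
def elimination_scopes(factor_scopes, order):
    """Return the scope of each product factor formed during elimination,
    plus the scopes of the factors that remain at the end."""
    factors = [frozenset(scope) for scope in factor_scopes]
    intermediate = []
    for var in order:
        touching = [f for f in factors if var in f]
        rest = [f for f in factors if var not in f]
        product_scope = frozenset().union(*touching)  # multiply factors
        intermediate.append(product_scope)
        factors = rest + [product_scope - {var}]      # sum out `var`
    return intermediate, factors

# Scopes of P(A), P(G), P(S|A,G), P(E|A), P(C|E,S), P(L|C).
scopes = [{"A"}, {"G"}, {"S", "A", "G"}, {"E", "A"}, {"C", "E", "S"}, {"L", "C"}]
order = ["G", "A", "E", "S", "C"]

intermediate, remaining = elimination_scopes(scopes, order)
largest = max(len(scope) for scope in intermediate)
for var, scope in zip(order, intermediate):
    print(f"eliminate {var}: product factor over {sorted(scope)}")
print("largest factor has", largest, "variables")
```

With binary variables the largest table here has 2^3 entries; a worse elimination order or a denser graph makes this exponent grow.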

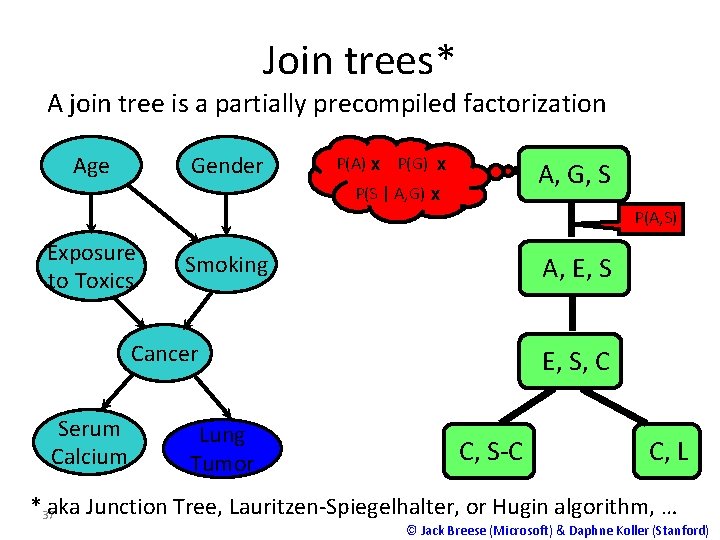

Join trees*
A join tree is a partially precompiled factorization.
Network: Age (A), Gender (G), Exposure to Toxics (E), Smoking (S), Cancer (C), Serum Calcium (S-C), Lung Tumor (L)
Cliques: (A, G, S), (A, E, S), (E, S, C), (C, S-C), (C, L)
Clique (A, G, S): $P(A) \times P(G) \times P(S \mid A, G)$, which yields $P(A, S)$
* aka Junction Tree, Lauritzen-Spiegelhalter, or Hugin algorithm, …

Junction Tree Algorithm
• Converts a Bayes Net into an undirected tree
  – Joint probability remains unchanged
  – Exact marginals can be computed
• Why?
  – Uniform treatment of Bayes Nets and Markov random fields (MRFs)
  – Efficient inference is possible for undirected trees
