Toward Cache-Friendly Hardware Accelerators

Yakun Sophia Shao, Sam Xi, Viji Srinivasan, Gu-Yeon Wei, David Brooks

![More accelerators: out-of-core accelerators. Die photo from Chipworks; accelerators annotated by Sophia Shao @ Harvard.](https://slidetodoc.com/presentation_image_h2/6269b53cef796ffc0a00ff8ef57768cd/image-2.jpg)

More accelerators. Out-of-Core Accelerators. Maltiel Consulting estimates; Shao (Harvard) estimates. [Die photo from Chipworks] [Accelerators annotated by Sophia Shao @ Harvard]

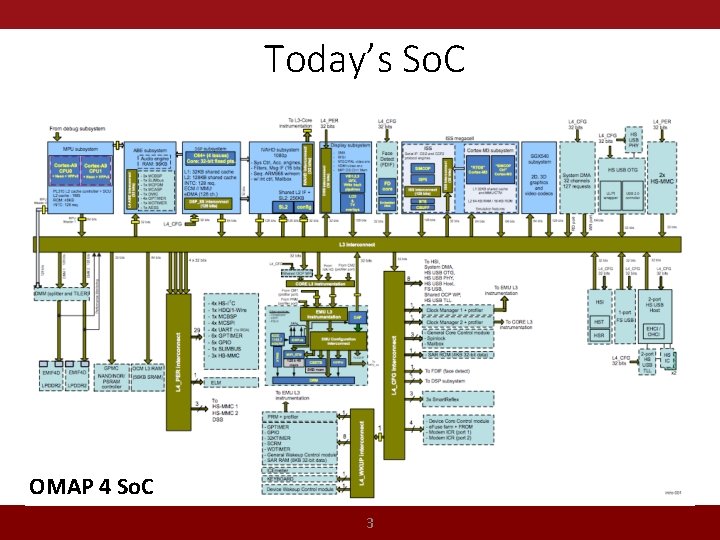

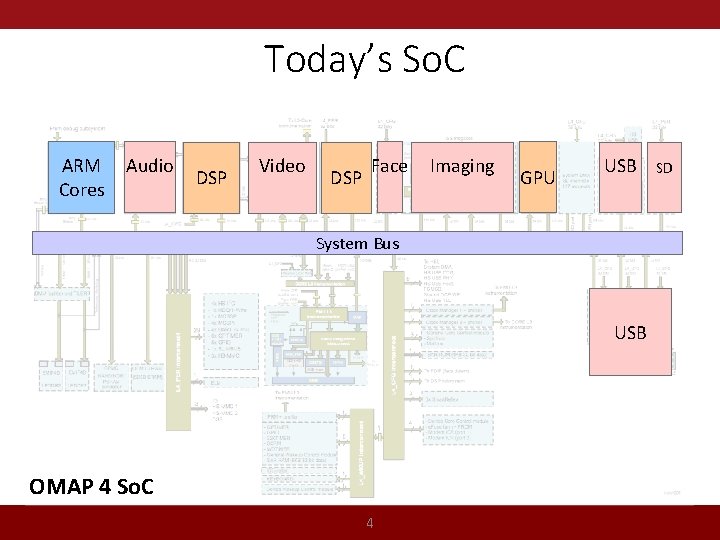

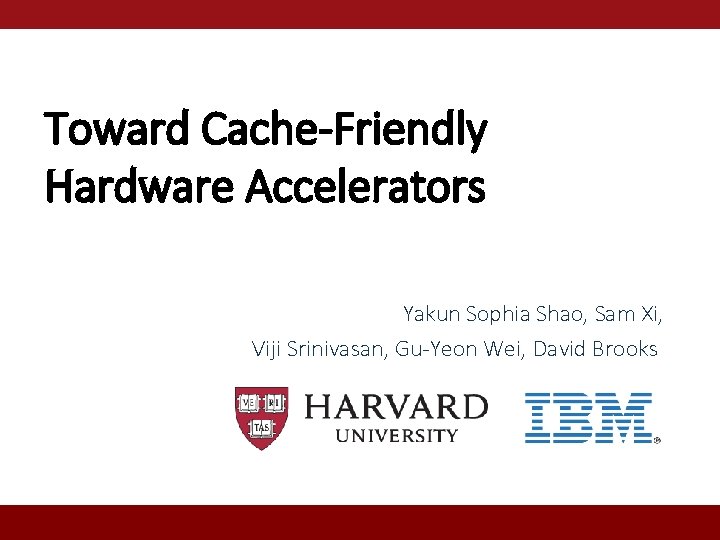

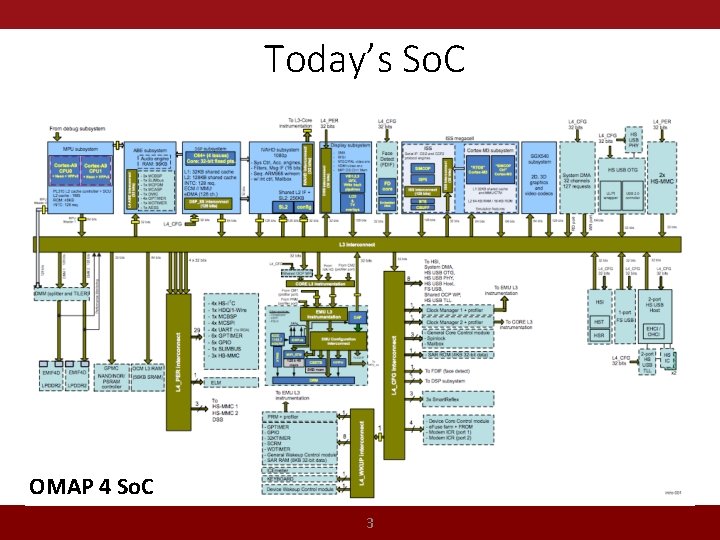

Today’s SoC: OMAP 4 SoC

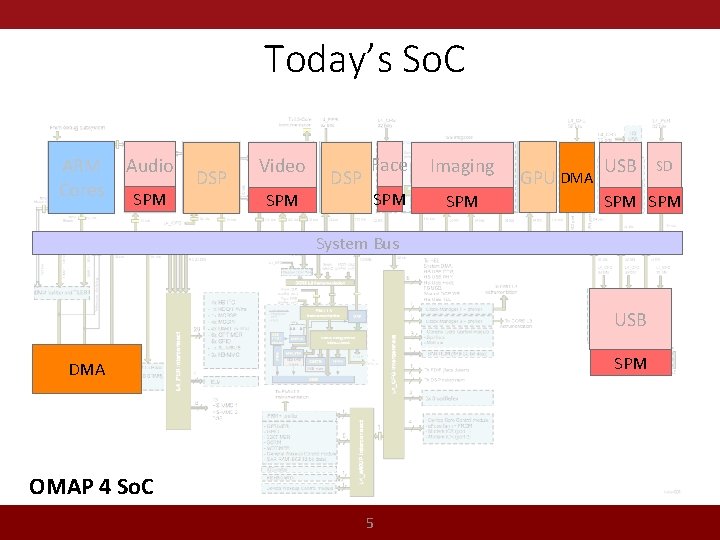

Today’s SoC: OMAP 4 SoC. Diagram labels: ARM Cores, GPU, DSP, Audio, Video, Face, Imaging, USB, SD, System Bus.

Today’s SoC: OMAP 4 SoC. Diagram labels: ARM Cores, GPU, DSP, Audio, Video, Face, Imaging, USB, SD, System Bus, with SPM (scratchpad memory) and DMA labels added to the diagram.

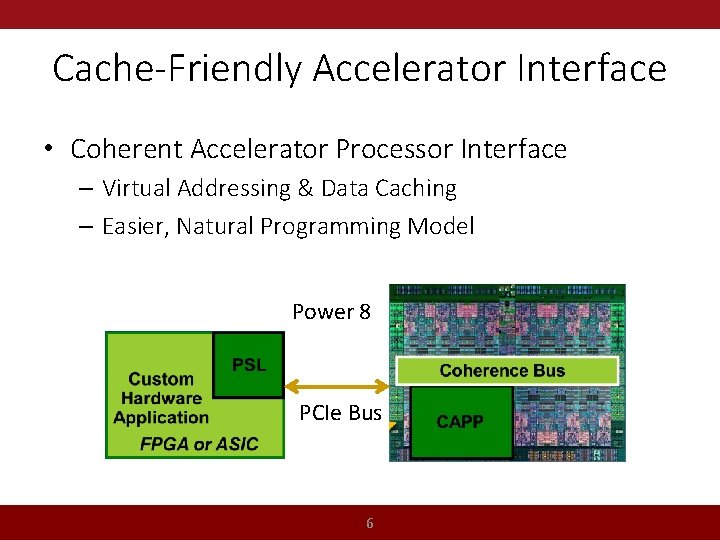

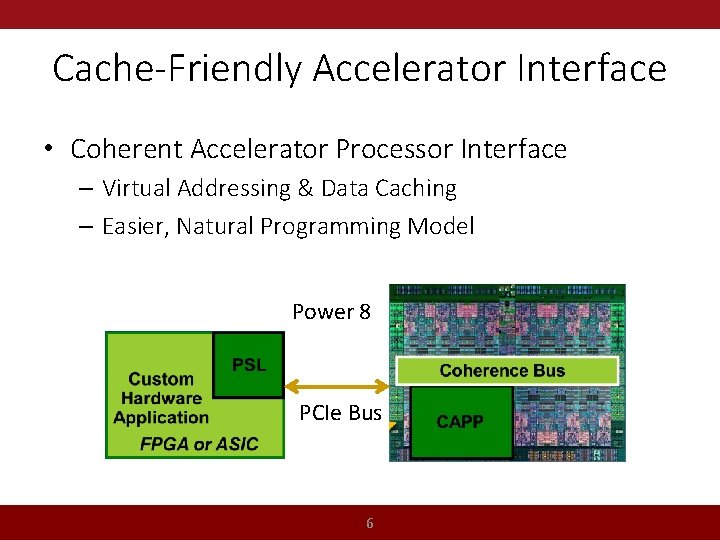

Cache-Friendly Accelerator Interface

- Coherent Accelerator Processor Interface
  - Virtual Addressing & Data Caching
  - Easier, Natural Programming Model

Diagram labels: POWER8, PCIe Bus.

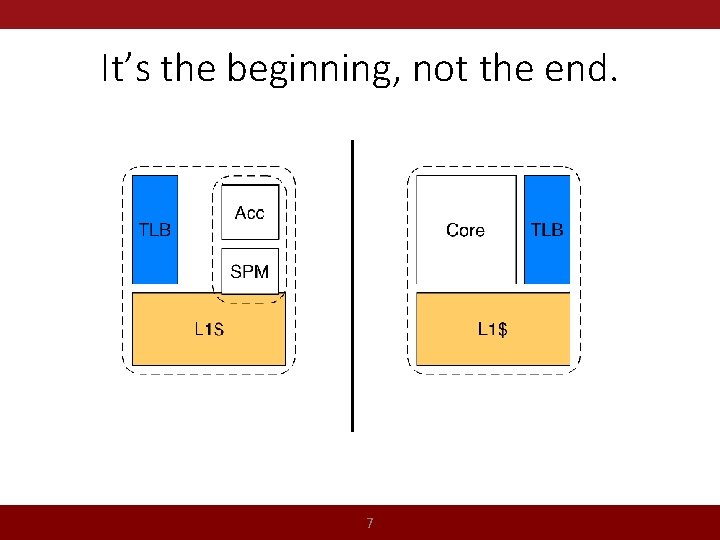

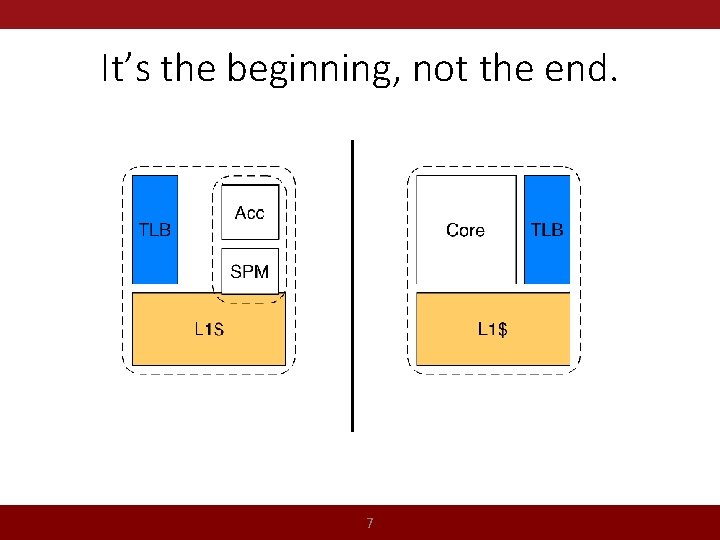

It’s the beginning, not the end.

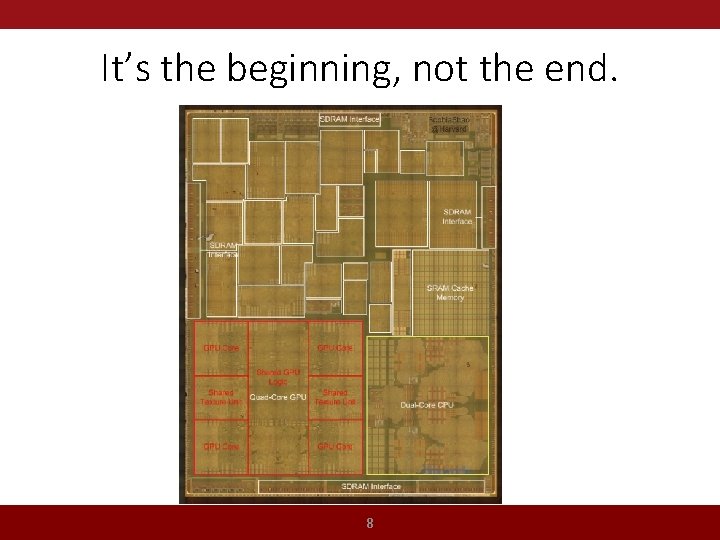

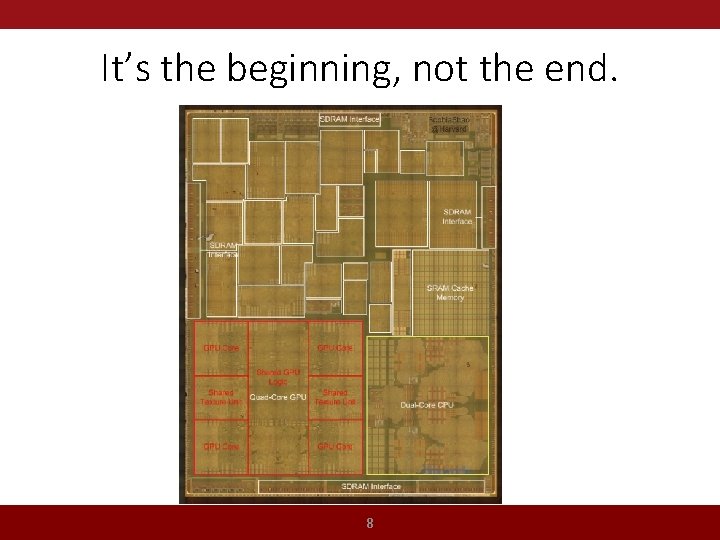

It’s the beginning, not the end.

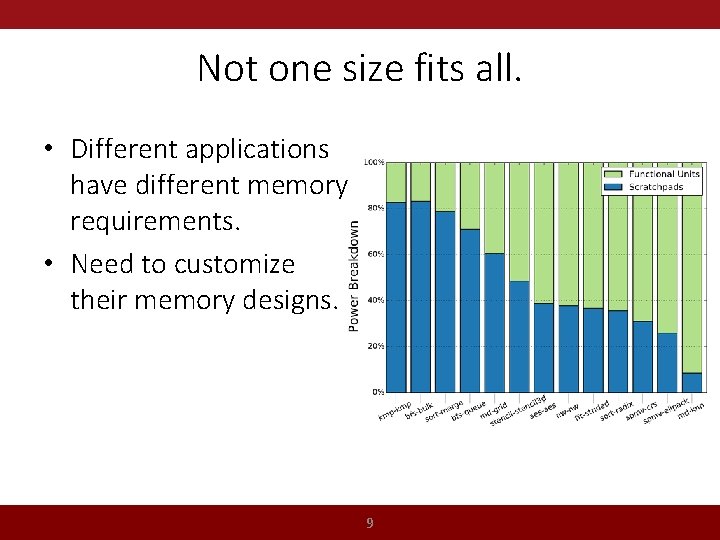

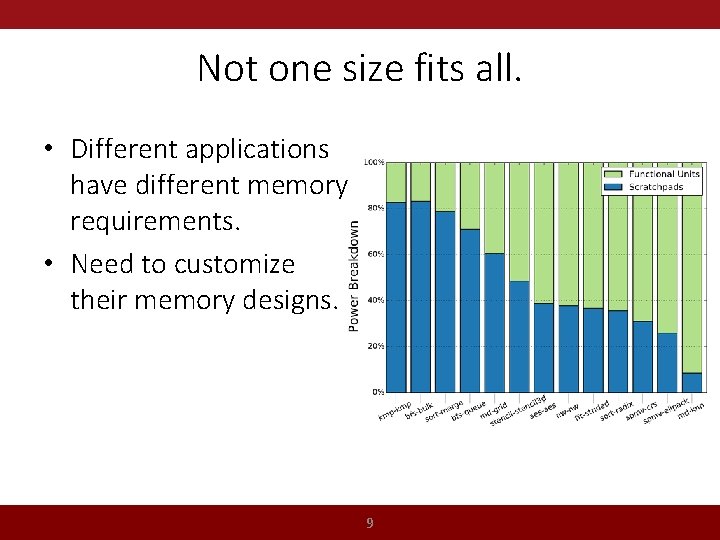

Not one size fits all.

- Different applications have different memory requirements.
- Need to customize their memory designs.

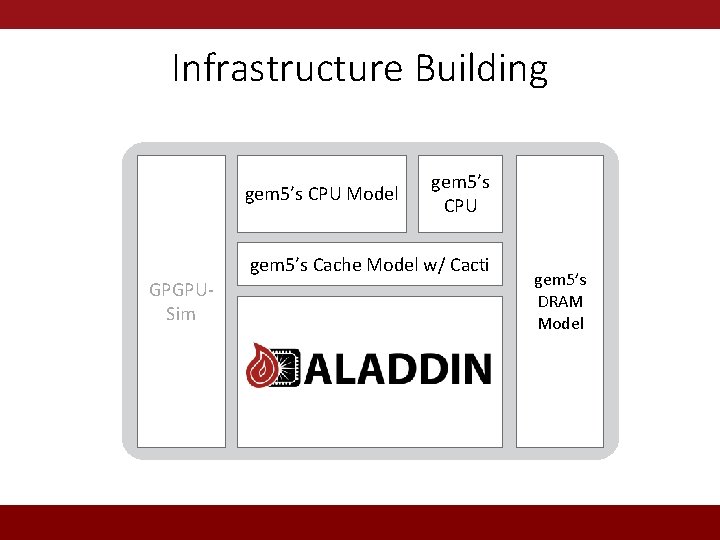

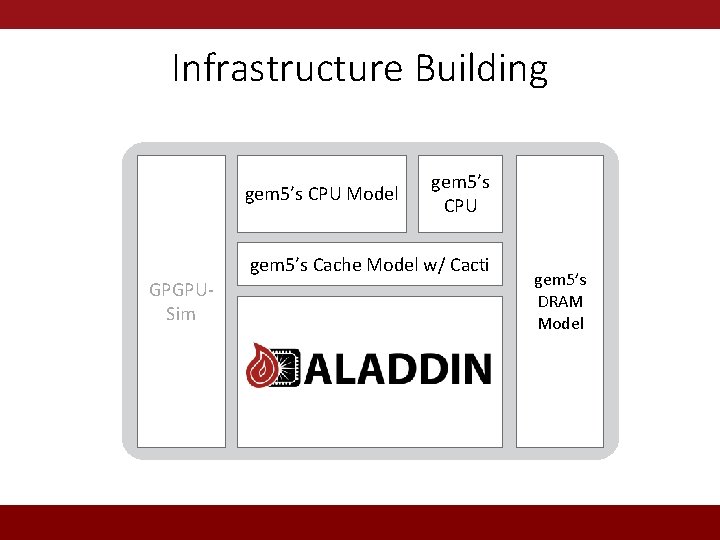

Infrastructure Building

- Big Cores: gem5’s CPU Model
- Small Cores: gem5’s CPU Model
- GPU: GPGPU-Sim
- Accelerators
- Shared Resources: gem5’s Cache Model w/ CACTI
- Memory Interface: gem5’s DRAM Model

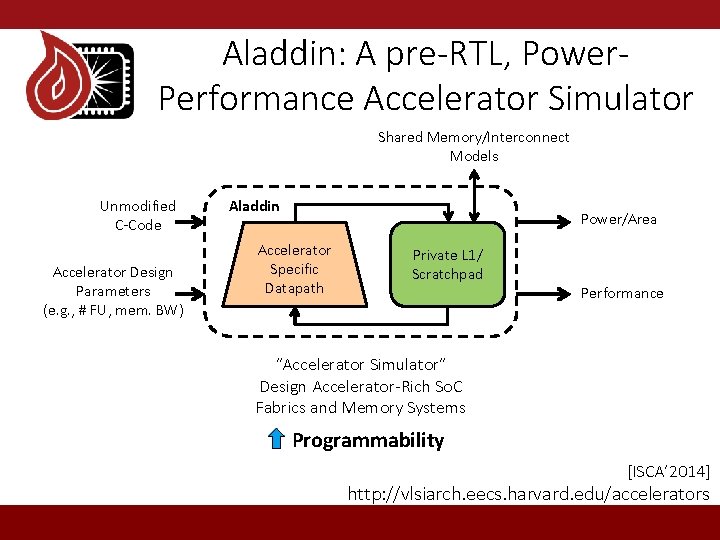

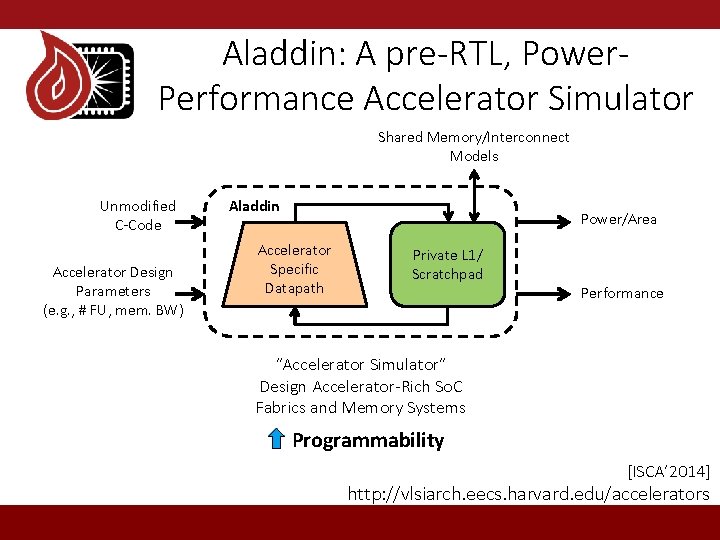

Aladdin: A Pre-RTL, Power-Performance Accelerator Simulator

- Inputs: Unmodified C-Code; Accelerator Design Parameters (e.g., # FU, mem. BW)
- Aladdin: Accelerator Specific Datapath; Private L1/Scratchpad; Shared Memory/Interconnect Models
- Outputs: Power/Area; Performance
- “Accelerator Simulator”: Design Accelerator-Rich SoC Fabrics and Memory Systems; Programmability

[ISCA 2014] http://vlsiarch.eecs.harvard.edu/accelerators

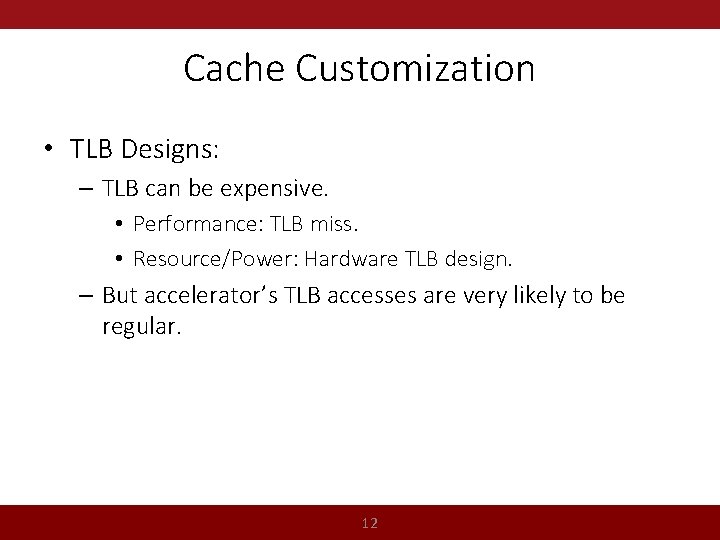

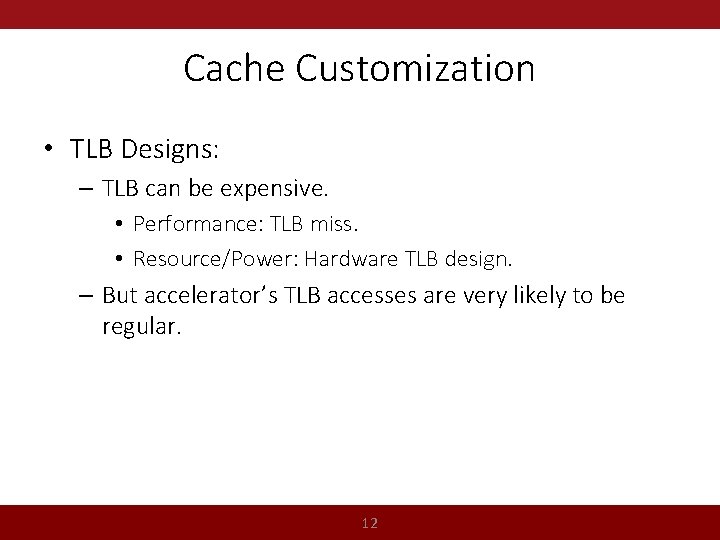

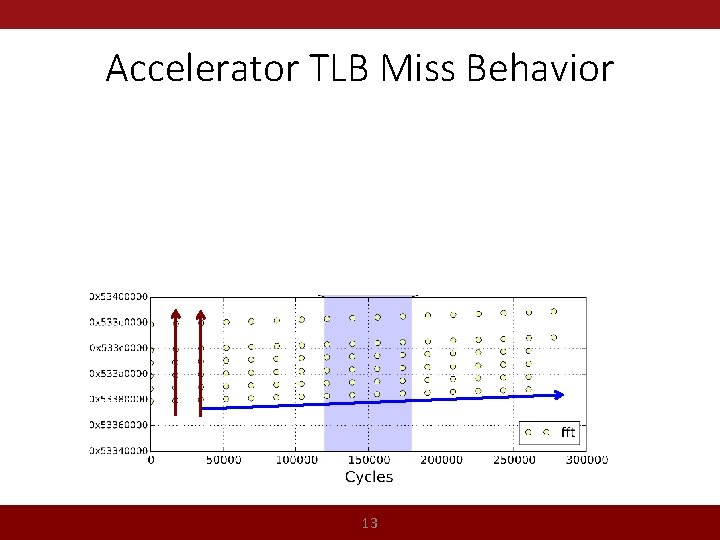

Cache Customization

- TLB Designs:
  - TLB can be expensive.
    - Performance: TLB miss.
    - Resource/Power: Hardware TLB design.
  - But accelerator’s TLB accesses are very likely to be regular.
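To make the point about regular TLB accesses concrete, here is a minimal sketch that counts misses in a toy TLB for a streaming access pattern and for a scattered one. It is not taken from the slides: the 4 KiB page size, the 8-entry fully associative LRU organization, the 1 MiB array, and the name `tlb_misses` are all assumptions chosen for illustration.

```python
from collections import OrderedDict
import random

PAGE_SIZE = 4096  # bytes; assumed page size, not stated in the slides


def tlb_misses(addresses, entries=8, page_size=PAGE_SIZE):
    """Count misses in a toy fully associative TLB with LRU replacement."""
    tlb = OrderedDict()  # virtual page number -> None, ordered by recency
    misses = 0
    for addr in addresses:
        vpn = addr // page_size
        if vpn in tlb:
            tlb.move_to_end(vpn)  # refresh recency on a hit
        else:
            misses += 1
            tlb[vpn] = None
            if len(tlb) > entries:
                tlb.popitem(last=False)  # evict the least recently used entry
    return misses


# Regular pattern: stream through a 1 MiB array, 8 bytes at a time.
ARRAY_BYTES = 1 << 20
streaming = range(0, ARRAY_BYTES, 8)

# Irregular pattern: the same number of accesses at random 8-byte offsets.
rng = random.Random(0)
scattered = [rng.randrange(0, ARRAY_BYTES, 8) for _ in range(len(streaming))]

stream_misses = tlb_misses(streaming)    # one miss per page touched
scatter_misses = tlb_misses(scattered)   # misses on most accesses
print(stream_misses, scatter_misses)
```

In this toy model the streaming pattern misses exactly once per page, so even a very small TLB suffices, while the scattered pattern misses on most accesses. That gap is the kind of regularity a customized accelerator TLB could exploit.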

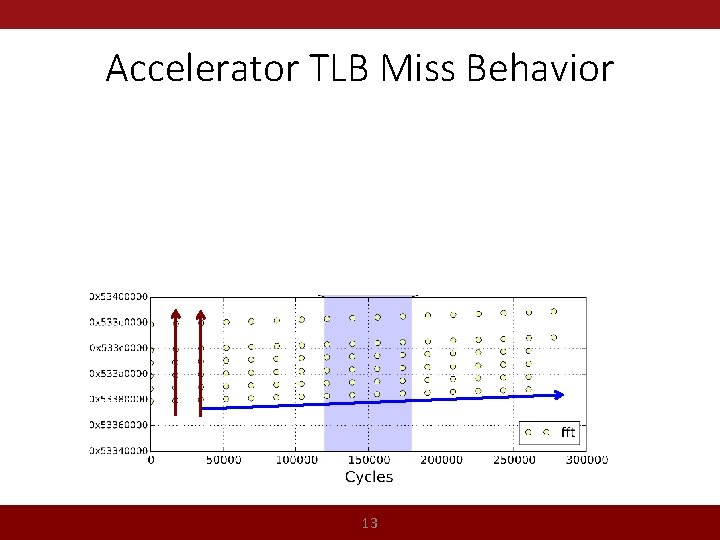

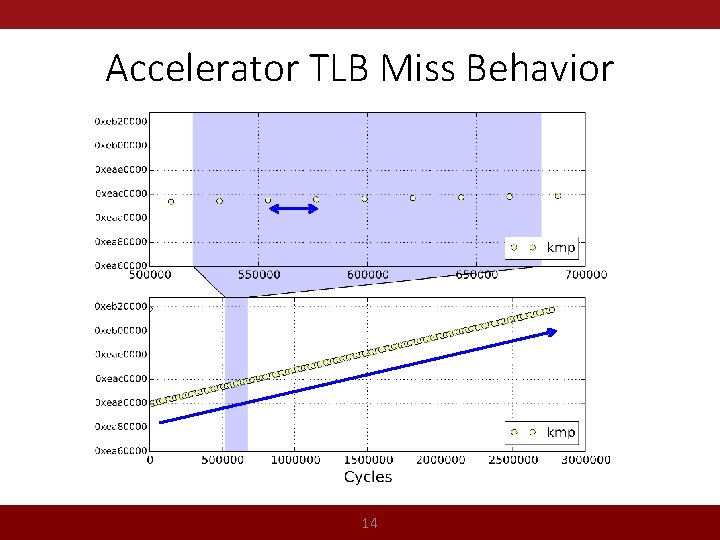

Accelerator TLB Miss Behavior

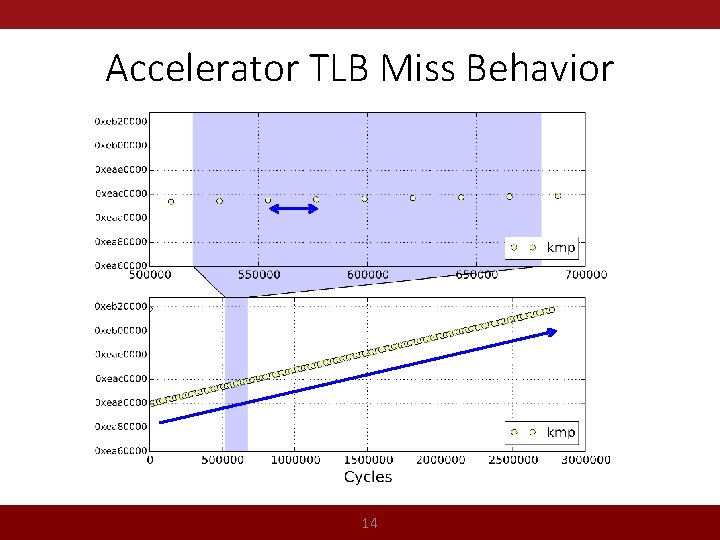

Accelerator TLB Miss Behavior

Cache Customization

- TLB Designs:
  - TLB can be expensive.
    - Performance: TLB miss.
    - Resource/Power: Hardware TLB design.
  - But accelerator’s TLB accesses are very likely to be regular.
- Cache Prefetcher Designs:

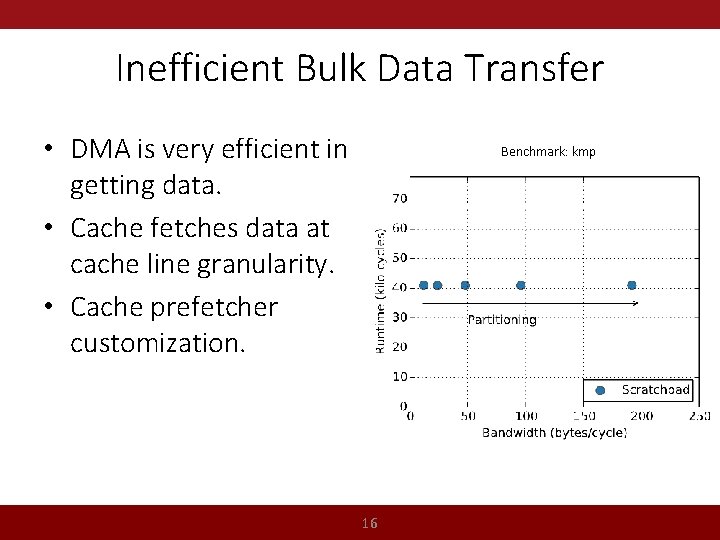

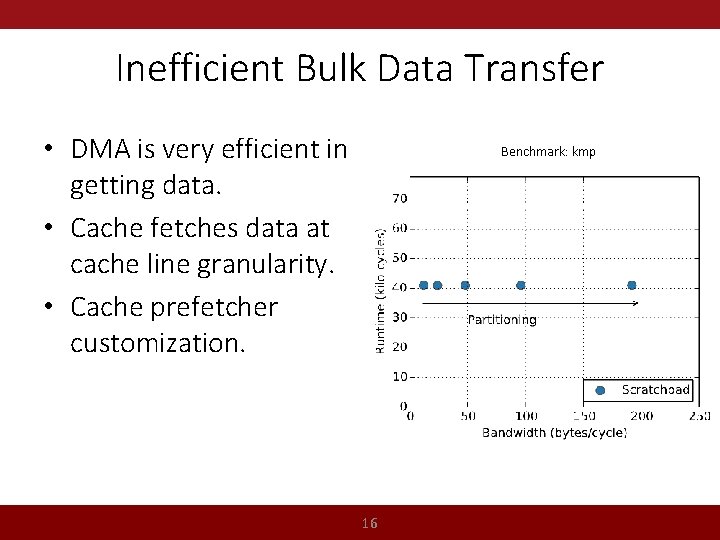

Inefficient Bulk Data Transfer

- DMA is very efficient at fetching bulk data.
- Cache fetches data at cache line granularity.
- Cache prefetcher customization.

Benchmark: kmp
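The cost of line-granularity fetching, and what a prefetcher can recover, can be sketched with a toy model. The code below is an illustration under stated assumptions, not the authors' method: it assumes 64-byte lines, an unbounded cache, no timing, and a hypothetical next-line prefetcher that fetches the following `prefetch_degree` lines on every demand miss.

```python
LINE_SIZE = 64  # bytes; assumed cache line size, not stated in the slides


def demand_misses(addresses, prefetch_degree=0, line_size=LINE_SIZE):
    """Count demand misses in a toy unbounded cache.

    On each demand miss, the missing line is fetched and a hypothetical
    next-line prefetcher also brings in the next `prefetch_degree` lines.
    """
    cached = set()
    misses = 0
    for addr in addresses:
        line = addr // line_size
        if line not in cached:
            misses += 1
            cached.add(line)
            for k in range(1, prefetch_degree + 1):
                cached.add(line + k)  # prefetched lines arrive "for free" here
    return misses


# Bulk read of a 4 KiB buffer, 4 bytes at a time.
BUFFER_BYTES = 4096
stream = range(0, BUFFER_BYTES, 4)

no_prefetch = demand_misses(stream)                       # one miss per line
with_prefetch = demand_misses(stream, prefetch_degree=3)  # one miss per 4 lines
print(no_prefetch, with_prefetch)
```

Without prefetching, the 4 KiB buffer costs 64 separate line fills where a DMA engine would issue a single bulk transfer. With a degree-3 next-line prefetcher the demand misses drop to 16 in this model. Real prefetchers also have to be timely, which this sketch ignores.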

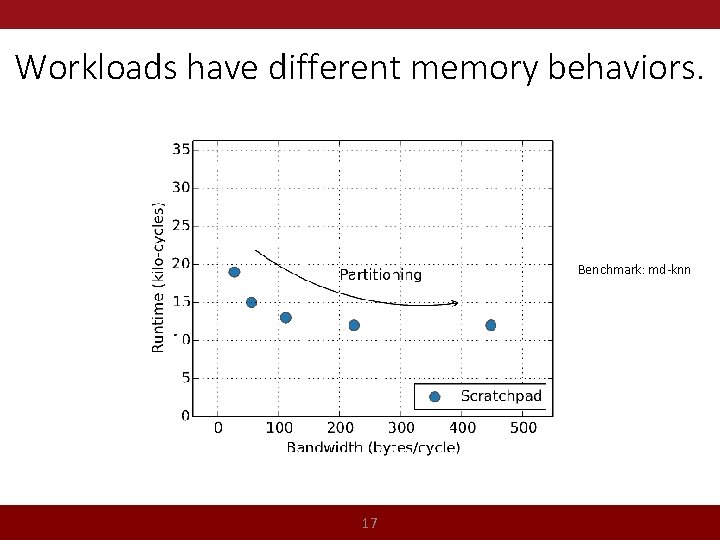

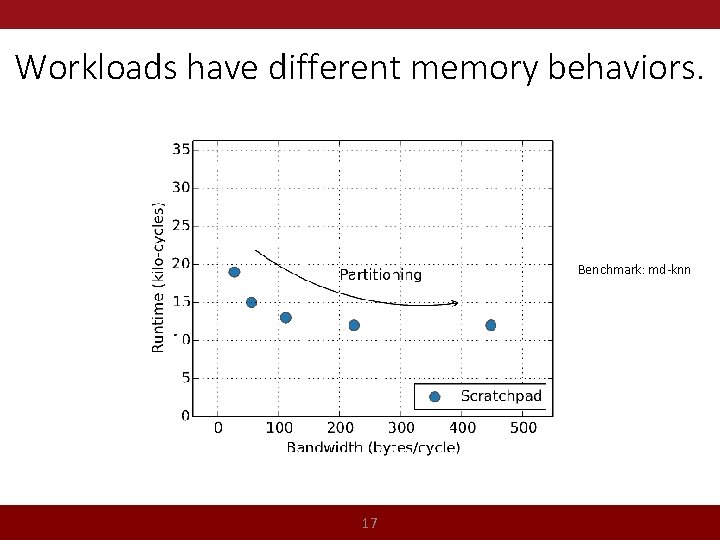

Workloads have different memory behaviors. Benchmark: md-knn

Toward Cache-Friendly Hardware Accelerators

- With more accelerators on SoCs, programming them will become challenging.
- Shared address space and caching make programming accelerators easier.
- Leveraging the application-specific nature of accelerators can reduce the overhead of caches.