Third SIGHAN Bakeoff Named Entity Task: Using Ensemble Methods in CNER

Chia-Wei Wu, Shyh-Yi Jan, Richard Tzong-Han Tsai, Wen-Lian Hsu
Intelligent Agent Systems Lab (IASL), Institute of Information Science, Academia Sinica
Chiawei (Terry) Wu

Introduction

- Conditional Random Fields (CRFs)
  - Advantages:
    - The ability to handle overlapping features, e.g. the combination of multiple states and characters.
  - Disadvantages:
    - More complex feature types need more computing resources.
    - We cannot apply all useful features in one model.
- Integrate systems with different feature sets
  - Different feature types make different contributions.
  - Character-based model.

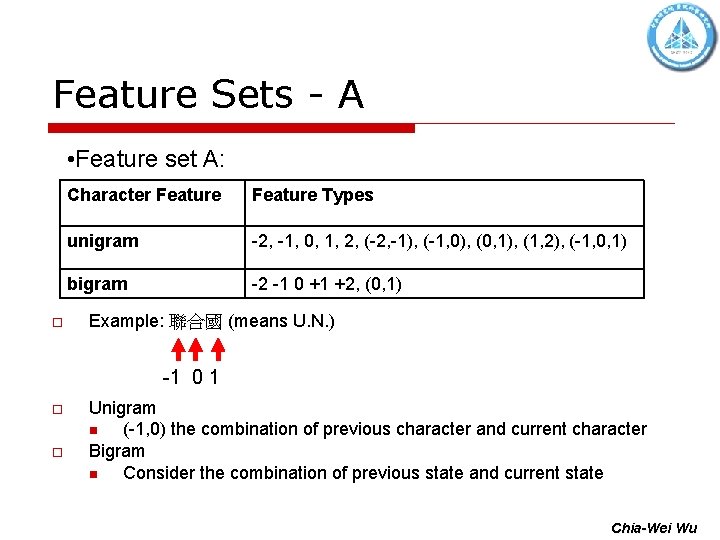

Feature Sets - A

Feature set A: character feature types

| Feature type | Offsets |
|---|---|
| unigram | -2, -1, 0, 1, 2, (-2, -1), (-1, 0), (0, 1), (1, 2), (-1, 0, 1) |
| bigram | -2, -1, 0, +1, +2, (0, 1) |

Example: 聯合國 (meaning U.N.), with its three characters at offsets -1, 0, 1.

- Unigram
  - (-1, 0): the combination of the previous character and the current character
- Bigram
  - Considers the combination of the previous state and the current state
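To make the offset notation concrete, here is a minimal sketch of how the unigram templates of feature set A could be expanded at one character position. The function names, the `U[...]` feature naming, and the `_` padding symbol are assumptions made for illustration, not the authors' implementation. The bigram type (previous state combined with current state) is left out because it concerns output labels rather than input characters.

```python
# Illustrative sketch only: names, feature format, and padding are assumptions.
UNIGRAM_TEMPLATES = [
    (-2,), (-1,), (0,), (1,), (2,),
    (-2, -1), (-1, 0), (0, 1), (1, 2),
    (-1, 0, 1),
]

PAD = "_"  # assumed symbol for offsets that fall outside the sentence


def char_at(chars, i):
    """Return the character at index i, or PAD when i is out of range."""
    return chars[i] if 0 <= i < len(chars) else PAD


def unigram_features(chars, pos):
    """Expand every unigram template around position pos."""
    feats = []
    for template in UNIGRAM_TEMPLATES:
        value = "/".join(char_at(chars, pos + off) for off in template)
        name = ",".join(str(off) for off in template)
        feats.append(f"U[{name}]={value}")
    return feats


# Current character is 合 (offset 0); 聯 is at -1 and 國 is at +1.
features = unigram_features("聯合國", 1)
for feat in features:
    print(feat)
```

With 合 as the current character, the (-1, 0) template combines 聯 and 合, which matches the slide's description of "previous character and current character".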

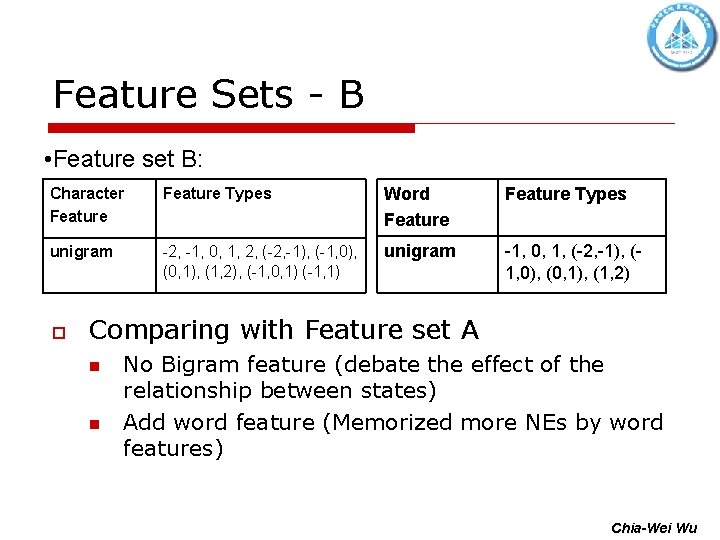

Feature Sets - B

Feature set B:

| Feature type | Template | Offsets |
|---|---|---|
| Character | unigram | -2, -1, 0, 1, 2, (-2, -1), (-1, 0), (0, 1), (1, 2), (-1, 0, 1), (-1, 1) |
| Word | unigram | -1, 0, 1, (-2, -1), (1, 0), (0, 1), (1, 2) |

- Compared with feature set A
  - No bigram feature (to examine the effect of the relationship between states)
  - Adds word features (more NEs are memorized through word features)

Ensemble Methods

GOAL: Integrate the tagging results of feature sets A and B.

- Weighted majority vote
- Memory-based learner: memorizes the tagged NEs

Weighted Majority Vote

- Weighted majority vote
  - Integrates the results by letting the scores generated by the different classifiers compete.
- Example:
  - Classifier A tags "楊" as B_PER with a score of 0.957.
  - Classifier B tags "楊" as B_ORG with a score of 0.662.
  - Comparing the scores, we take classifier A's result as the output.
  - We then apply Viterbi to the new output sequence.
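The score competition can be sketched as follows. This is a minimal illustration, assuming each classifier returns one `(tag, score)` pair per character and that ties go to classifier A; both are assumptions, since the slides do not specify them. The subsequent Viterbi pass is omitted because the slides give no transition scores to decode with.

```python
def weighted_vote(outputs_a, outputs_b):
    """For each character, keep the tag from the classifier with the higher score.

    Assumption: ties are resolved in favour of classifier A.
    """
    merged = []
    for (tag_a, score_a), (tag_b, score_b) in zip(outputs_a, outputs_b):
        if score_a >= score_b:
            merged.append((tag_a, score_a))
        else:
            merged.append((tag_b, score_b))
    return merged


# The slide's example for the character 楊.
outputs_a = [("B_PER", 0.957)]
outputs_b = [("B_ORG", 0.662)]
result = weighted_vote(outputs_a, outputs_b)
print(result)
```

Because 0.957 is greater than 0.662, the merged sequence keeps B_PER from classifier A, as in the slide.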

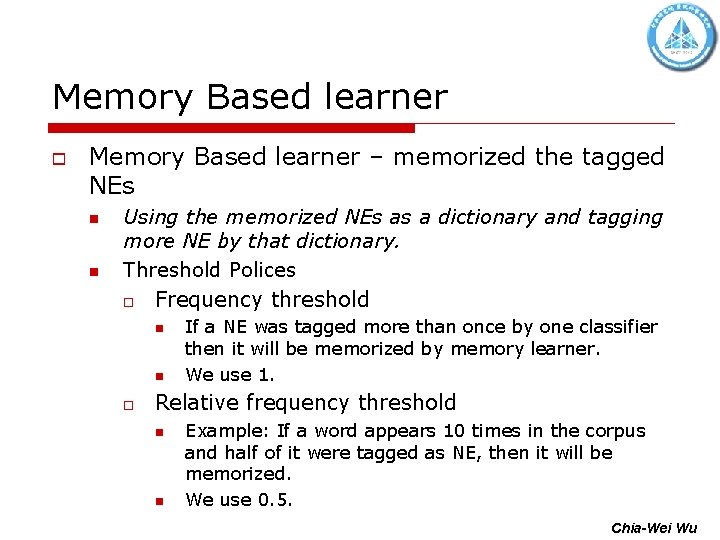

Memory-Based Learner

- Memory-based learner: memorizes the tagged NEs
  - Uses the memorized NEs as a dictionary and tags more NEs with that dictionary.
  - Threshold policies
    - Frequency threshold
      - If an NE was tagged more than once by one classifier, it is memorized by the memory learner.
      - We use 1.
    - Relative frequency threshold
      - Example: if a word appears 10 times in the corpus and half of its occurrences were tagged as an NE, it is memorized.
      - We use 0.5.
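The two threshold policies can be sketched as simple filters over counts. The function names, the dictionary-of-counts input format, and the sample counts below are hypothetical. The comparison operators follow one reading of the slide: "more than once" becomes a strict `>` against 1, and "half of its occurrences" becomes `>=` against 0.5.

```python
def memorize_by_frequency(tagged_counts, threshold=1):
    """Keep strings that one classifier tagged as an NE more than `threshold` times."""
    return {s for s, n in tagged_counts.items() if n > threshold}


def memorize_by_relative_frequency(tagged_counts, corpus_counts, threshold=0.5):
    """Keep strings whose tagged share of all corpus occurrences reaches `threshold`."""
    memory = set()
    for s, n in tagged_counts.items():
        total = corpus_counts.get(s, 0)
        if total > 0 and n / total >= threshold:
            memory.add(s)
    return memory


# Made-up counts for illustration: times tagged as an NE vs. times seen in the corpus.
tagged_counts = {"聯合國": 5, "楊": 1}
corpus_counts = {"聯合國": 10, "楊": 8}

by_frequency = memorize_by_frequency(tagged_counts)
by_relative = memorize_by_relative_frequency(tagged_counts, corpus_counts)
print(by_frequency, by_relative)
```

The memorized set then acts as the dictionary for tagging further occurrences; that lookup step is not shown here.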

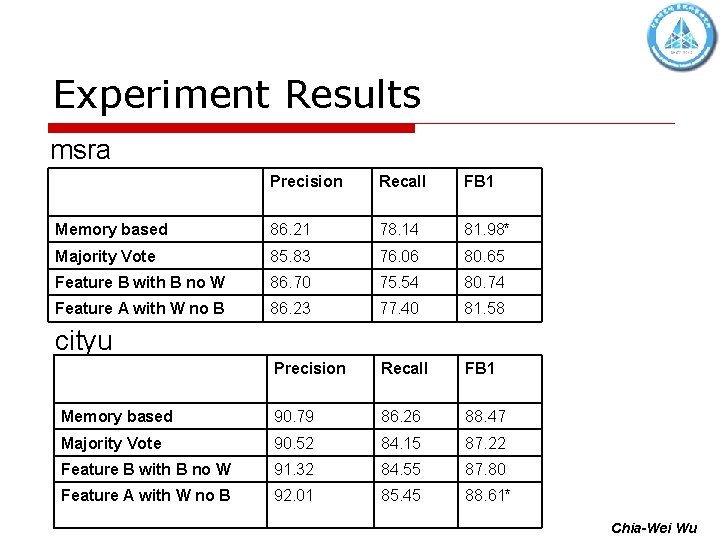

Experiment Results

msra

| Method | Precision | Recall | Fβ=1 |
|---|---|---|---|
| Memory based | 86.21 | 78.14 | 81.98* |
| Majority Vote | 85.83 | 76.06 | 80.65 |
| Feature B with B no W | 86.70 | 75.54 | 80.74 |
| Feature A with W no B | 86.23 | 77.40 | 81.58 |

cityu

| Method | Precision | Recall | Fβ=1 |
|---|---|---|---|
| Memory based | 90.79 | 86.26 | 88.47 |
| Majority Vote | 90.52 | 84.15 | 87.22 |
| Feature B with B no W | 91.32 | 84.55 | 87.80 |
| Feature A with W no B | 92.01 | 85.45 | 88.61* |
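The F column can be recomputed from precision and recall with the standard F-measure, which for β = 1 reduces to the harmonic mean 2PR / (P + R). The short check below applies it to two msra rows; the function and variable names are illustrative.

```python
def f_score(precision, recall, beta=1.0):
    """Standard F-measure; beta=1 gives the harmonic mean of precision and recall."""
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Precision and recall taken from the msra rows of the results table.
msra_memory = f_score(86.21, 78.14)
msra_vote = f_score(85.83, 76.06)

print(round(msra_memory, 2))  # agrees with the reported 81.98
print(round(msra_vote, 2))    # agrees with the reported 80.65
```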

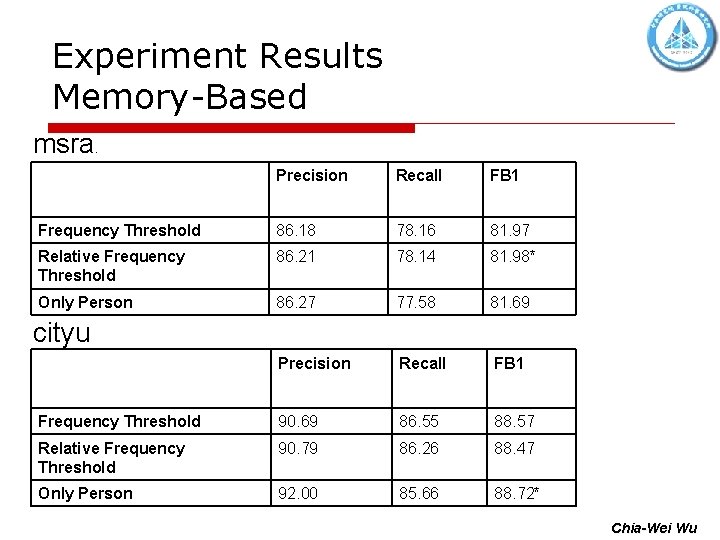

Experiment Results: Memory-Based

msra

| Policy | Precision | Recall | Fβ=1 |
|---|---|---|---|
| Frequency Threshold | 86.18 | 78.16 | 81.97 |
| Relative Frequency Threshold | 86.21 | 78.14 | 81.98* |
| Only Person | 86.27 | 77.58 | 81.69 |

cityu

| Policy | Precision | Recall | Fβ=1 |
|---|---|---|---|
| Frequency Threshold | 90.69 | 86.55 | 88.57 |
| Relative Frequency Threshold | 90.79 | 86.26 | 88.47 |
| Only Person | 92.00 | 85.66 | 88.72* |

Summary

- The bigram feature is more useful than the word feature.
- Ensemble methods do not guarantee an improvement on every corpus.
- If we apply the ensemble method only to person-type NEs (PER), which have relatively high precision, performance improves on both cityu and msra.

Thank you.
