The Need for an Improved PAUSE Mitch Gusat

- Slides: 19

The Need for an Improved PAUSE
Mitch Gusat and Cyriel Minkenberg
IEEE 802, Dallas, Nov. 2006
IBM Zurich Research Lab GmbH

Outline
I) Overcoming PAUSE-induced Deadlocks
  - PAUSE exposed to circular dependencies
  - Two deadlock-free PAUSE solutions
II) PAUSE Interaction with Congestion Management
III) Conclusions

PAUSE Issues
• PAUSE-related issues interfere with BCN simulations
• Correctness
  - Deadlocks
    - cycles in the routing graph (if multipath adaptivity is enabled): multiple solutions exist
    - circular dependencies (in bidirectional fabrics)
  - BCN can’t help this => solutions required
• Performance (to be elaborated in a future report)
  - Low-order HOL-blocking and memory hogging
    - Non-selective PAUSE causes hogging, i.e., monopolization of common resources: e.g., shared memory may be monopolized by frames for a congested port (as shown here)
    - Consequences
      - best: reduced throughput
      - worst: unfairness, starvation, saturation tree, collapse
    - Properly tuned, BCN can address this problem

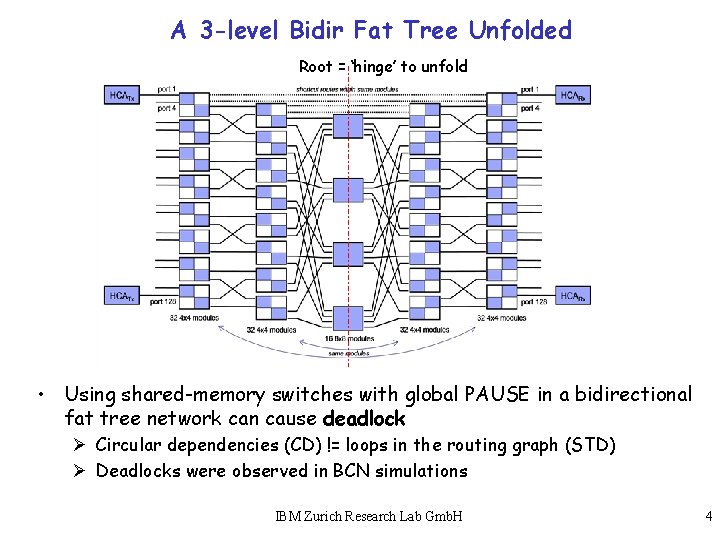

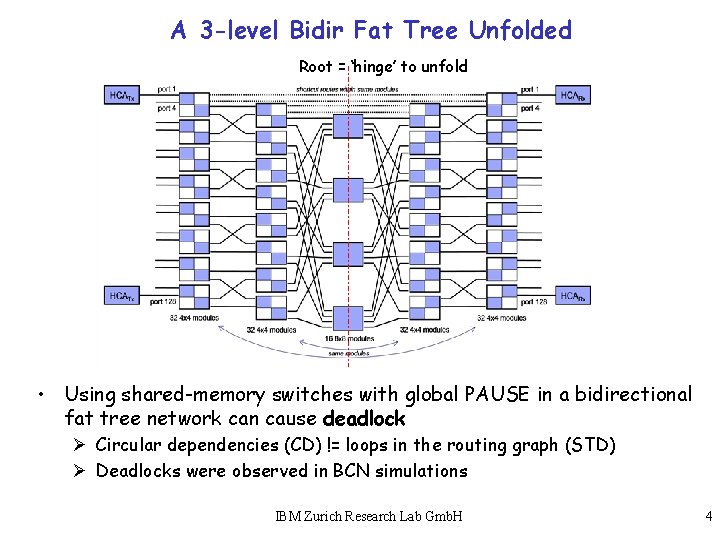

A 3-level Bidir Fat Tree Unfolded
Root = ‘hinge’ to unfold
• Using shared-memory switches with global PAUSE in a bidirectional fat tree network can cause deadlock
  - Circular dependencies (CD) != loops in the routing graph (STD)
  - Deadlocks were observed in BCN simulations

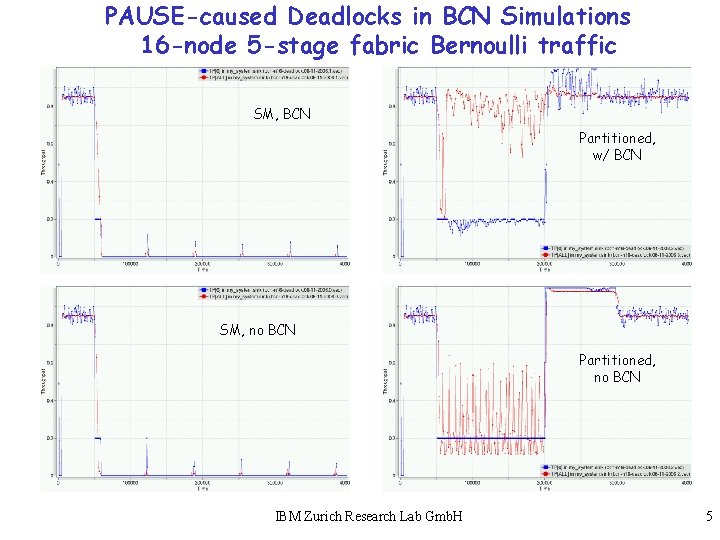

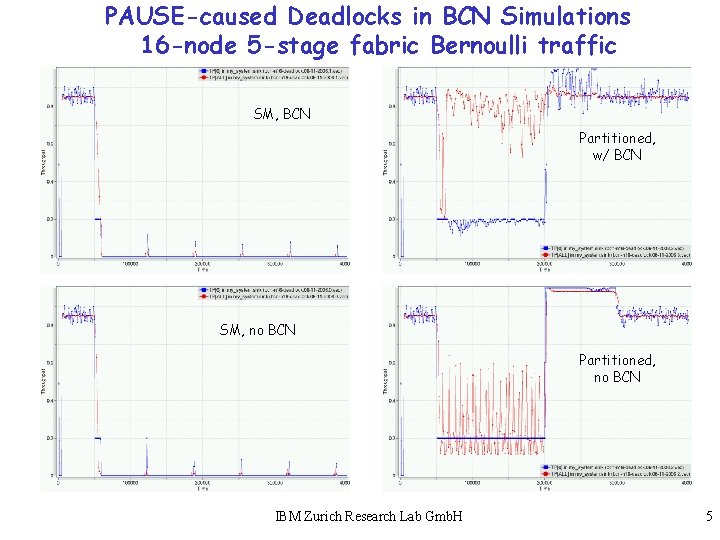

PAUSE-caused Deadlocks in BCN Simulations
16-node, 5-stage fabric, Bernoulli traffic
[Figure panels: SM, BCN; Partitioned, w/ BCN; SM, no BCN; Partitioned, no BCN]

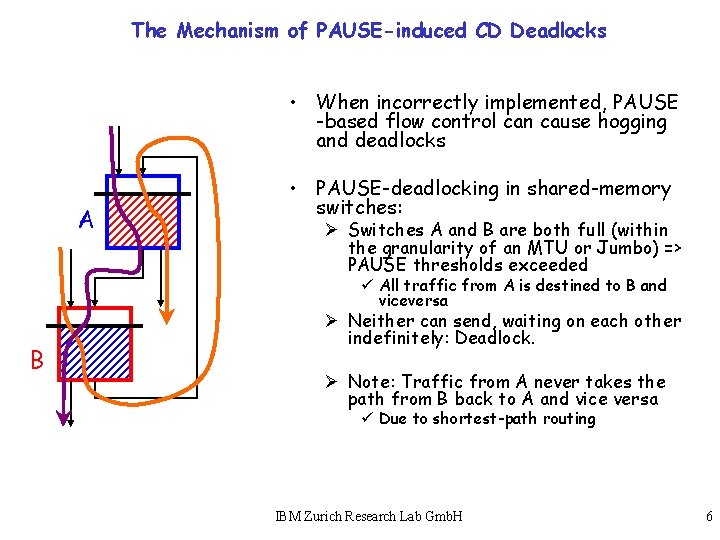

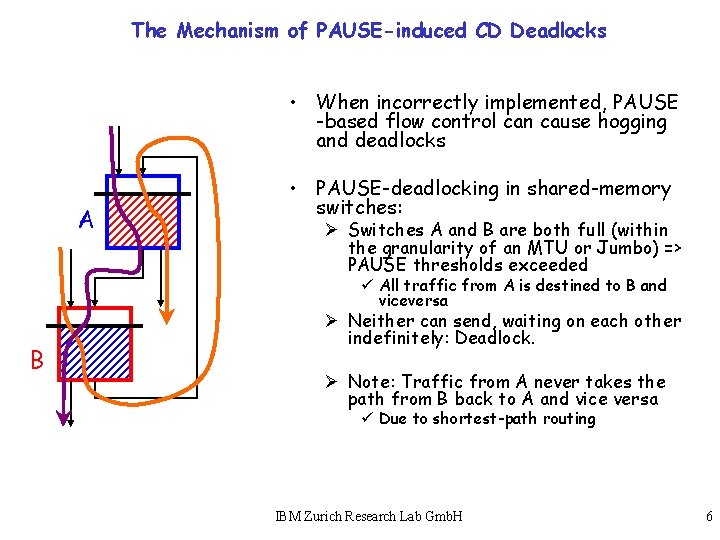

The Mechanism of PAUSE-induced CD Deadlocks
• When incorrectly implemented, PAUSE-based flow control can cause hogging and deadlocks
• PAUSE-deadlocking in shared-memory switches:
  - Switches A and B are both full (within the granularity of an MTU or Jumbo) => PAUSE thresholds exceeded
    - All traffic from A is destined to B and vice versa
  - Neither can send, waiting on each other indefinitely: deadlock.
  - Note: Traffic from A never takes the path from B back to A and vice versa
    - Due to shortest-path routing

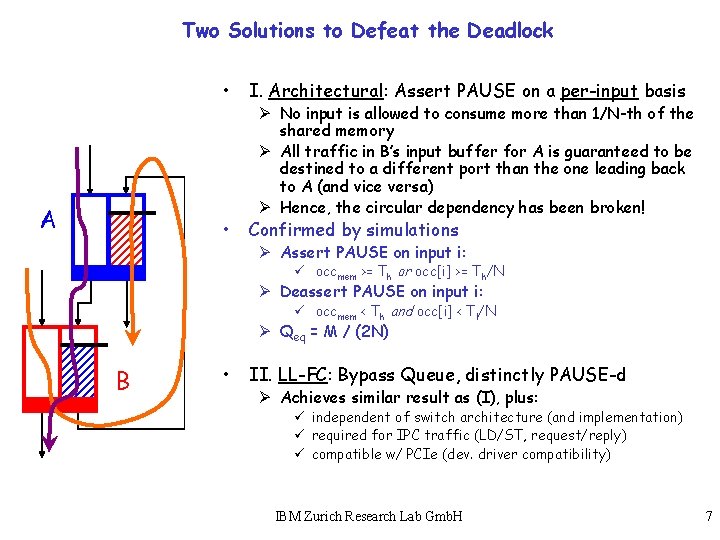

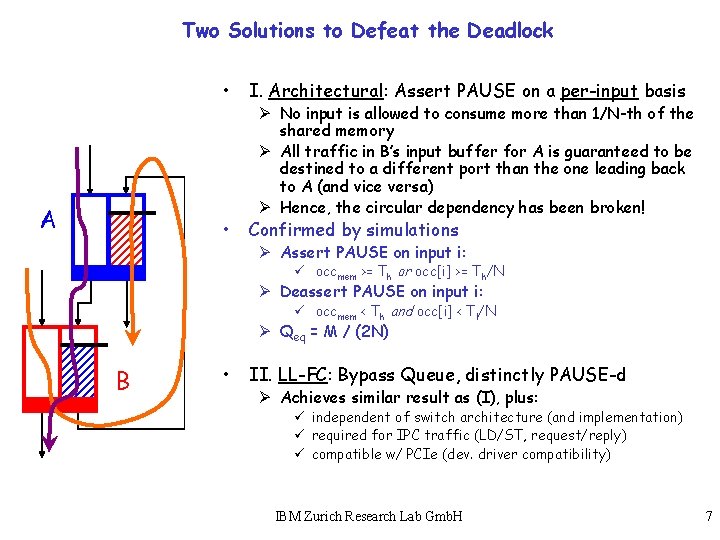

Two Solutions to Defeat the Deadlock
• I. Architectural: Assert PAUSE on a per-input basis
• Confirmed by simulations
  - No input is allowed to consume more than 1/N-th of the shared memory
  - All traffic in B’s input buffer for A is guaranteed to be destined to a different port than the one leading back to A (and vice versa)
  - Hence, the circular dependency has been broken!
  - Assert PAUSE on input i:
    - occmem >= Th or occ[i] >= Th/N
  - Deassert PAUSE on input i:
    - occmem < Th and occ[i] < Tl/N
  - Qeq = M / (2N)
• II. LL-FC: Bypass Queue, distinctly PAUSE-d
  - Achieves similar result as (I), plus:
    - independent of switch architecture (and implementation)
    - required for IPC traffic (LD/ST, request/reply)
    - compatible w/ PCIe (dev. driver compatibility)
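The per-input assert/deassert rule of solution (I) can be sketched as a small state update. This is a minimal illustration, not the simulated switch model: the function name `update_pause`, the choice to hold the previous state when neither condition is met, and the threshold values in the usage lines are all assumptions; only `occmem`, `occ[i]`, `Th`, `Tl` and `N` come from the slide.

```python
def update_pause(paused, occ, th, tl):
    """Return the new per-input PAUSE state for a shared-memory switch.

    paused: current PAUSE state per input (list of bool)
    occ:    frames held in shared memory per input (occ[i] on the slide)
    th, tl: high and low thresholds (Th and Tl on the slide)
    """
    n = len(occ)
    occmem = sum(occ)  # total shared-memory occupancy
    new_state = []
    for i in range(n):
        if occmem >= th or occ[i] >= th / n:
            new_state.append(True)       # assert PAUSE on input i
        elif occmem < th and occ[i] < tl / n:
            new_state.append(False)      # deassert PAUSE on input i
        else:
            new_state.append(paused[i])  # between thresholds: keep state (assumed)
    return new_state


# Hypothetical numbers: 4 inputs, Th = 64 frames, Tl = 32 frames.
state = [False] * 4
state = update_pause(state, [20, 2, 2, 2], th=64, tl=32)  # input 0 reaches Th/N = 16
state = update_pause(state, [10, 2, 2, 2], th=64, tl=32)  # input 0 still above Tl/N = 8
```

Only the hogging input is paused here, which is the property that breaks the circular dependency: the other inputs keep draining.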

Simulation of BCN with Deadlock-free PAUSE
• Observations
  - Qeq should be set to partition the shared memory
    - Setting it higher promotes hogging
    - Setting it lower wastes memory space
  - BCN works best with large buffers per port
    - Buffer size per port should be significantly larger than mean burst size
    - 256 frames per port

PAUSE Interaction with Congestion Management
• What is the effect of deadlock-free PAUSE on BCN?
• Memory partitioning ‘stiffens’ the feedback loop
• PAUSE triggers backpressure tree earlier
  - Backrolling propagation speed depends not only on the available memory, but also on the switch service discipline
• Next: Static analysis of PAUSE-BCN interference, function of the switch service discipline
Note: To visualise the analytical iterations, enable animation.

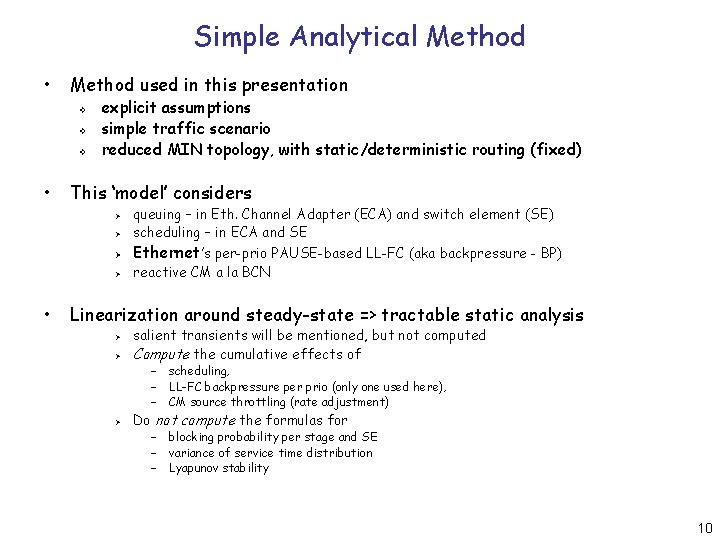

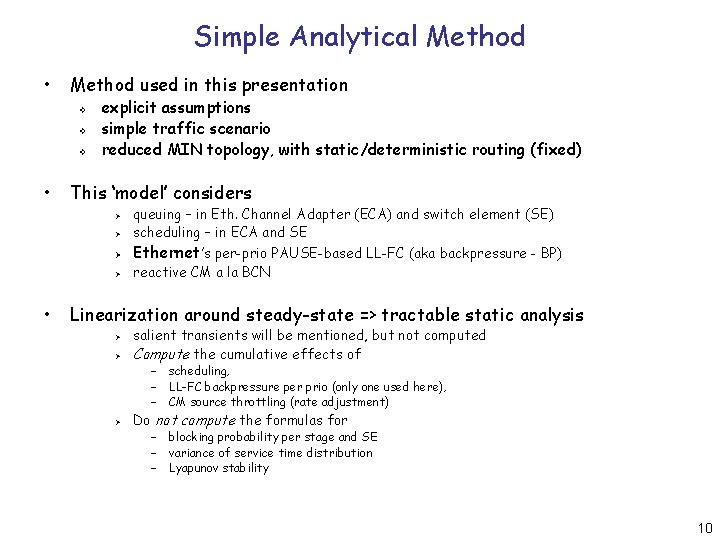

Simple Analytical Method
• Method used in this presentation
  - explicit assumptions
  - simple traffic scenario
  - reduced MIN topology, with static/deterministic routing (fixed)
• This ‘model’ considers
  - queuing: in Eth. Channel Adapter (ECA) and switch element (SE)
  - scheduling: in ECA and SE
  - Ethernet’s per-prio PAUSE-based LL-FC (aka backpressure, BP)
  - reactive CM a la BCN
• Linearization around steady-state => tractable static analysis
  - salient transients will be mentioned, but not computed
  - Compute the cumulative effects of
    - scheduling,
    - LL-FC backpressure per prio (only one used here),
    - CM source throttling (rate adjustment)
  - Do not compute the formulas for
    - blocking probability per stage and SE
    - variance of service time distribution
    - Lyapunov stability

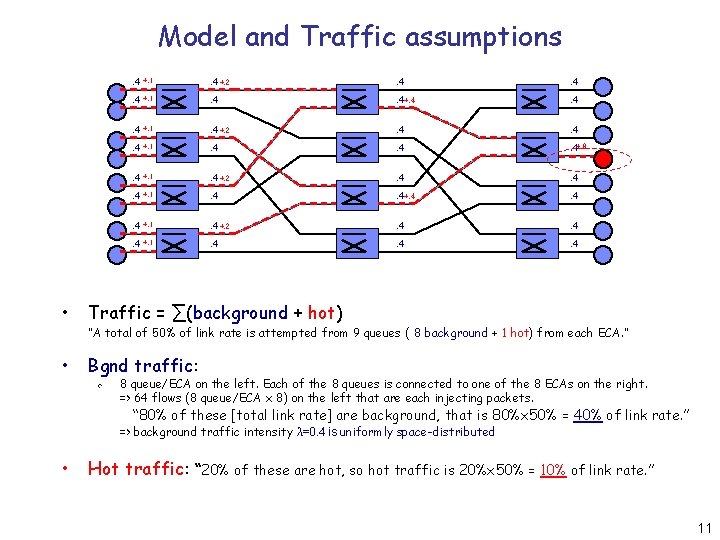

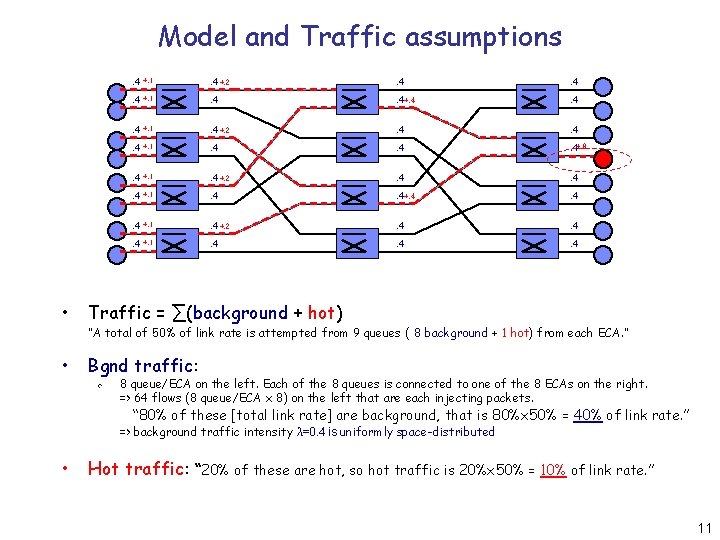

Model and Traffic Assumptions
[Figure: network model annotated with per-link loads: .4 background plus hot contributions of +.1, +.2 and +.8]
• Traffic = ∑(background + hot)
• Bgnd traffic: “A total of 50% of link rate is attempted from 9 queues (8 background + 1 hot) from each ECA.”
  - 8 queues/ECA on the left. Each of the 8 queues is connected to one of the 8 ECAs on the right. => 64 flows (8 queues/ECA x 8) on the left that are each injecting packets.
  - “80% of these [total link rate] are background, that is 80% x 50% = 40% of link rate.” => background traffic intensity λ = 0.4 is uniformly space-distributed
• Hot traffic: “20% of these are hot, so hot traffic is 20% x 50% = 10% of link rate.”

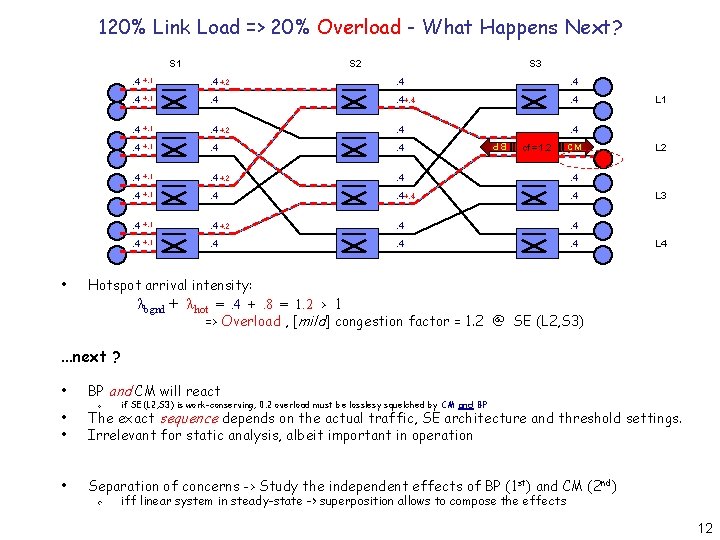

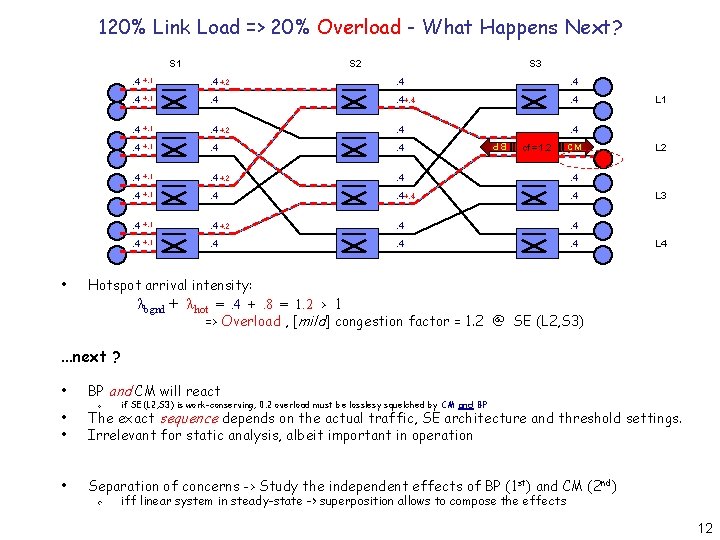

120% Link Load => 20% Overload - What Happens Next?
[Figure: stages S1-S3 and levels L1-L4, per-link loads of .4 background plus hot contributions +.1, +.2, +.4; hotspot annotated with cf = 1.2, BP, and CM .4+.6]
• Hotspot arrival intensity: λbgnd + λhot = .4 + .8 = 1.2 > 1 => Overload, [mild] congestion factor = 1.2 @ SE(L2, S3) ... next?
• BP and CM will react
  - if SE(L2, S3) is work-conserving, the 0.2 overload must be losslessly squelched by CM and BP
• The exact sequence depends on the actual traffic, SE architecture and threshold settings. Irrelevant for static analysis, albeit important in operation
• Separation of concerns -> Study the independent effects of BP (1st) and CM (2nd)
  - iff linear system in steady-state -> superposition allows composing the effects
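The load arithmetic behind the 1.2 congestion factor can be retraced in a few lines. This is a sketch under one stated assumption: that the .8 hot load at the hotspot is the sum of the 10% hot flows of all 8 ECAs. The constant and function names are illustrative, not taken from the slides.

```python
LINK_ATTEMPTED = 0.5  # each ECA attempts 50% of link rate
BGND_SHARE = 0.8      # 80% of the attempted traffic is background
HOT_SHARE = 0.2       # 20% of the attempted traffic is hot
N_ECAS = 8            # assumption: all 8 hot flows converge on one output


def congestion_factor(arrivals, service_rate=1.0):
    """Ratio of aggregate arrival intensity to the output service rate."""
    return sum(arrivals) / service_rate


bgnd = LINK_ATTEMPTED * BGND_SHARE        # background intensity per link
hot_per_eca = LINK_ATTEMPTED * HOT_SHARE  # hot intensity per ECA
hot_total = N_ECAS * hot_per_eca          # hot intensity at the hotspot

cf = congestion_factor([bgnd, hot_total])
overload = bgnd + hot_total - 1.0         # excess that BP and CM must remove

print(f"bgnd={bgnd:.1f} hot={hot_total:.1f} cf={cf:.1f} overload={overload:.1f}")
```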

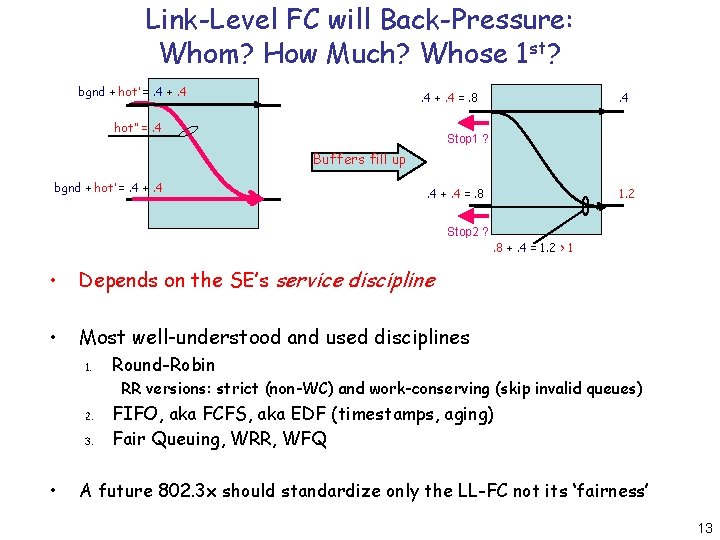

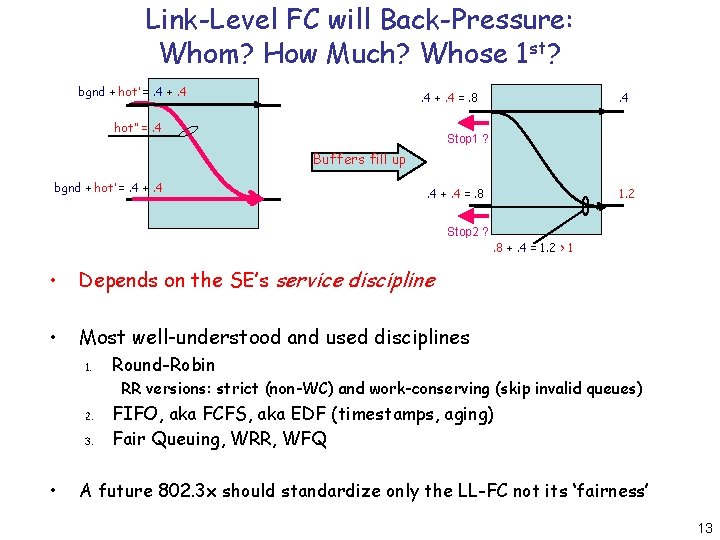

Link-Level FC will Back-Pressure: Whom? How Much? Whose 1st?
[Figure: two inputs feed the hotspot, one carrying bgnd + hot’ = .4 + .4 = .8 (Stop 1?) and one carrying hot” = .4 (Stop 2?); .8 + .4 = 1.2 > 1, so buffers fill up]
• Depends on the SE’s service discipline
• Most well-understood and used disciplines
  1. Round-Robin (RR) versions: strict (non-WC) and work-conserving (skip invalid queues)
  2. FIFO, aka FCFS, aka EDF (timestamps, aging)
  3. Fair Queuing, WRR, WFQ
• A future 802.3x should standardize only the LL-FC, not its ‘fairness’

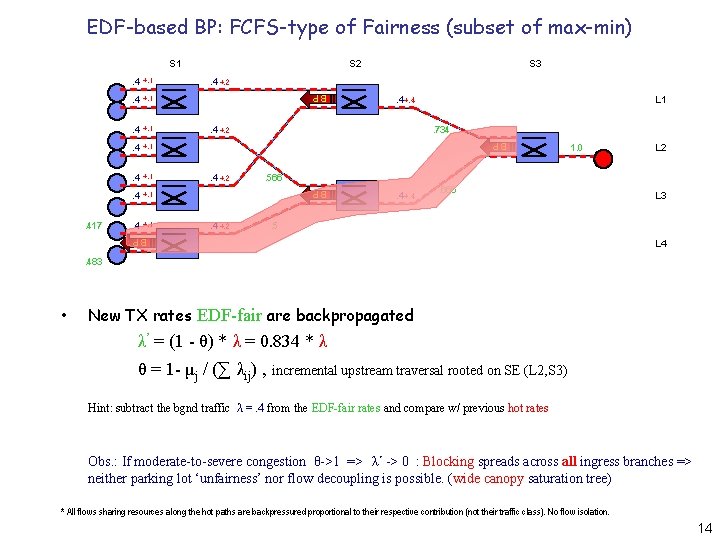

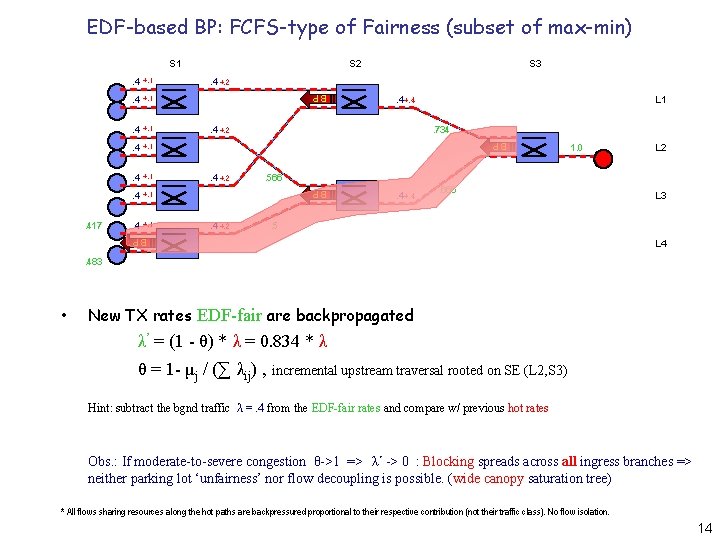

EDF-based BP: FCFS-type of Fairness (subset of max-min)
[Figure: stages S1-S3 and levels L1-L4 with BP arrows; link rates after EDF-fair backpressure: .734, .666, .566, .5, .483, .417; hotspot output at 1.0]
• New TX rates, EDF-fair, are backpropagated
  - λ’ = (1 - θ) * λ = 0.834 * λ
  - θ = 1 - μj / (∑ λij), incremental upstream traversal rooted on SE(L2, S3)
Hint: subtract the bgnd traffic λ = .4 from the EDF-fair rates and compare w/ previous hot rates
Obs.: If moderate-to-severe congestion, θ -> 1 => λ’ -> 0: blocking spreads across all ingress branches => neither parking lot ‘unfairness’ nor flow decoupling is possible (wide canopy saturation tree).
* All flows sharing resources along the hot paths are backpressured proportional to their respective contribution (not their traffic class). No flow isolation.
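A minimal sketch of one step of the EDF-fair scaling, applied at the root SE only. The function name `edf_backpressure`, the default μ = 1, and the guard for the non-overloaded case are assumptions; the input rates .8 and .4 are the two hotspot arrivals from the earlier slide. The full method repeats this step while traversing upstream, which the sketch does not do.

```python
def edf_backpressure(arrivals, mu=1.0):
    """Scale all arrival rates by (1 - theta), theta = 1 - mu / sum(arrivals).

    Returns (theta, new_rates). Without overload, theta is 0 and the
    rates are returned unchanged (assumed behaviour).
    """
    total = sum(arrivals)
    if total <= mu:
        return 0.0, list(arrivals)
    theta = 1.0 - mu / total
    return theta, [(1.0 - theta) * lam for lam in arrivals]


theta, rates = edf_backpressure([0.8, 0.4])
print(f"theta={theta:.3f} rates={[round(r, 3) for r in rates]}")
```

With these inputs the scaling factor is 1/1.2, roughly 0.833, in line with the 0.834 quoted on the slide; every input is throttled in proportion to its contribution, so no branch is isolated.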

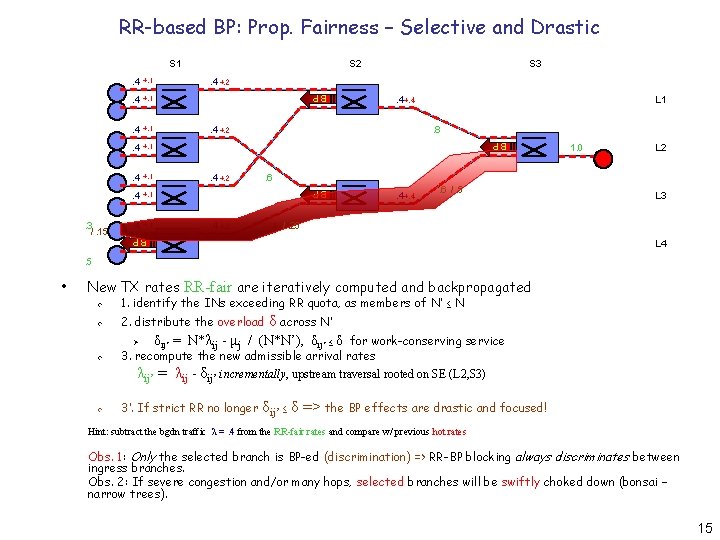

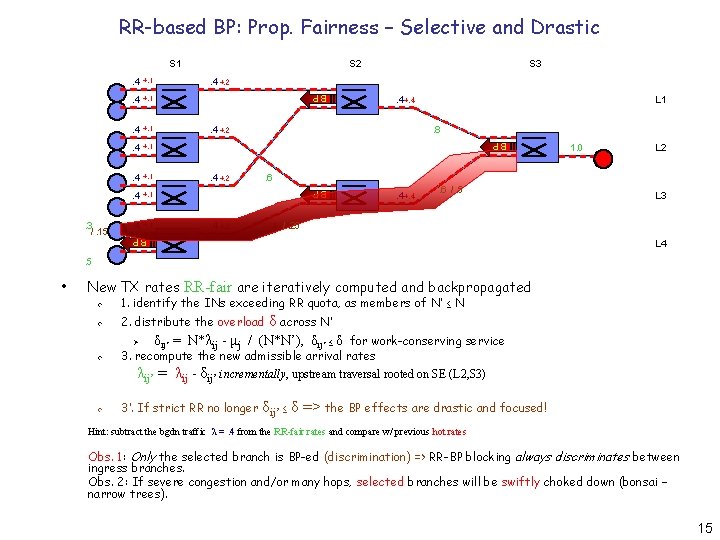

RR-based BP: Prop. Fairness – Selective and Drastic
[Figure: stages S1-S3 and levels L1-L4 with BP arrows; link rates after RR-fair backpressure: .8, .6 / .5, .4 / .25, .3 / .15, .6, .5, .4; hotspot output at 1.0]
• New TX rates, RR-fair, are iteratively computed and backpropagated
  1. identify the INs exceeding the RR quota, as members of N’ ≤ N
  2. distribute the overload δ across N’
     - δij’ = (N*λij - μj) / (N*N’), δij’ ≤ δ for work-conserving service
  3. recompute the new admissible arrival rates λij’ = λij - δij’ incrementally, upstream traversal rooted on SE(L2, S3)
  3’. If strict RR, δij’ ≤ δ no longer holds => the BP effects are drastic and focused!
Hint: subtract the bgnd traffic λ = .4 from the RR-fair rates and compare w/ previous hot rates
Obs. 1: Only the selected branch is BP-ed (discrimination) => RR-BP blocking always discriminates between ingress branches.
Obs. 2: If severe congestion and/or many hops, selected branches will be swiftly choked down (bonsai – narrow trees).
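The three RR steps can be sketched for a single SE as follows. This is an illustration under assumptions: the function name `rr_backpressure` is hypothetical, the RR quota is taken as μ/N, the reduction is read as (N*λ - μ) / (N*N’), and only one pass at the root is shown rather than the iterative upstream traversal. The inputs .8 and .4 are the two hotspot arrivals from the earlier slide.

```python
def rr_backpressure(arrivals, mu=1.0, work_conserving=True):
    """One RR-fair backpressure step at a single switch element.

    arrivals:        arrival rate per input (lambda_ij)
    mu:              output service rate (mu_j)
    work_conserving: if True, cap each reduction at the overload delta
    """
    n = len(arrivals)
    quota = mu / n                           # assumed RR quota per input
    overload = max(0.0, sum(arrivals) - mu)  # delta
    # Step 1: inputs exceeding their RR quota form the set N'.
    offenders = [i for i, lam in enumerate(arrivals) if lam > quota]
    new_rates = list(arrivals)
    if not offenders:
        return new_rates
    n_prime = len(offenders)
    for i in offenders:
        # Step 2: reduction for input i.
        delta = (n * arrivals[i] - mu) / (n * n_prime)
        if work_conserving:
            delta = min(delta, overload)
        # Step 3: new admissible arrival rate.
        new_rates[i] = arrivals[i] - delta
    return new_rates


wc = rr_backpressure([0.8, 0.4], work_conserving=True)
strict = rr_backpressure([0.8, 0.4], work_conserving=False)
print([round(r, 3) for r in wc], [round(r, 3) for r in strict])
```

Under this reading only the .8 input is throttled, to about .6 with work-conserving service and about .5 with strict RR, while the .4 input is left untouched.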

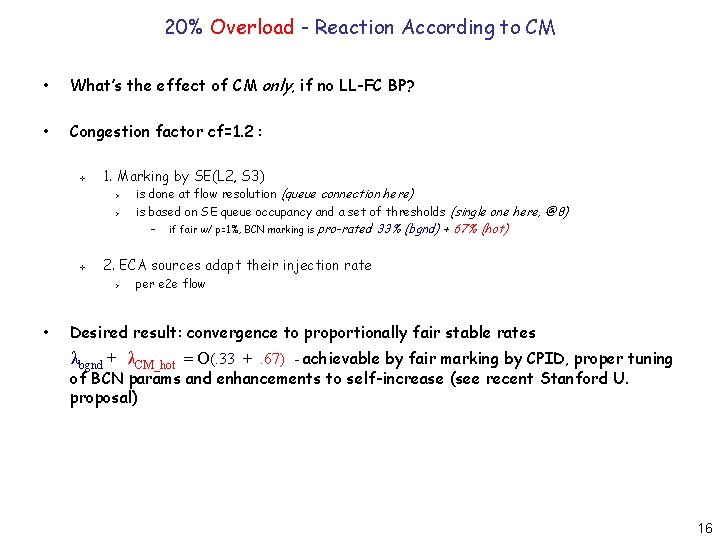

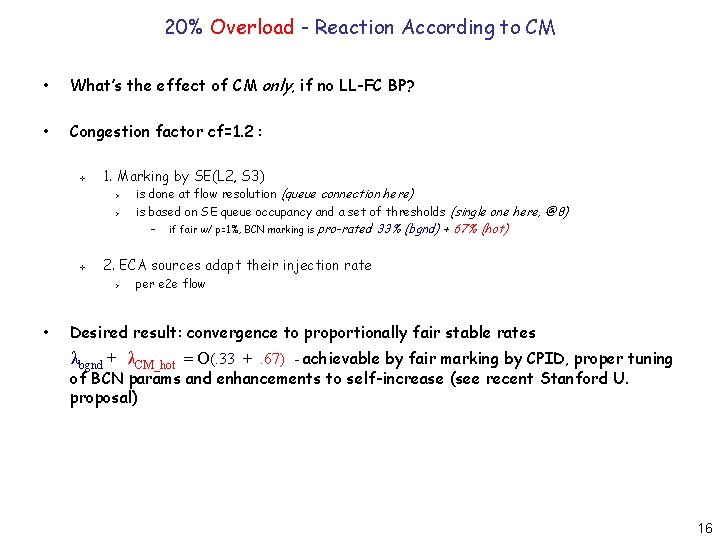

20% Overload - Reaction According to CM
• What’s the effect of CM only, if no LL-FC BP?
• Congestion factor cf = 1.2:
  1. Marking by SE(L2, S3)
     - is done at flow resolution (queue connection here)
     - is based on SE queue occupancy and a set of thresholds (single one here, @8)
       - pro-rated 33% (bgnd) + 67% (hot)
  2. ECA sources adapt their injection rate
     - if fair w/ p=1%, BCN marking is per e2e flow
• Desired result: convergence to proportionally fair stable rates λbgnd + λCM_hot = O(.33 + .67), achievable by fair marking by CPID, proper tuning of BCN params and enhancements to self-increase (see recent Stanford U. proposal)

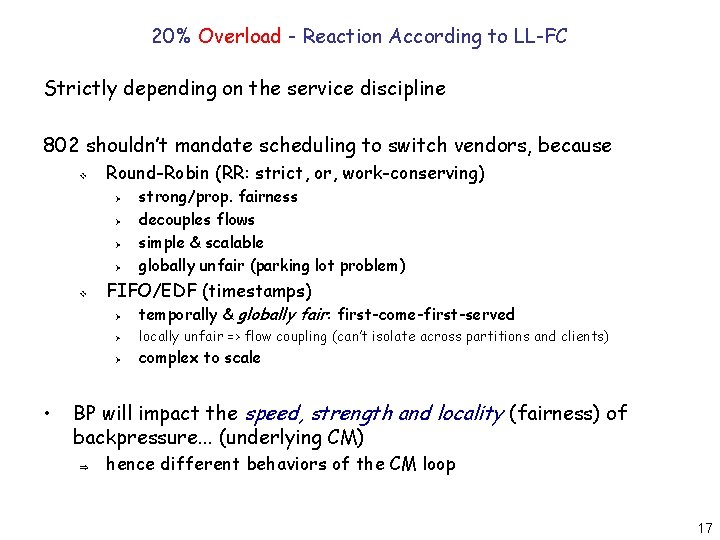

20% Overload - Reaction According to LL-FC
Strictly depending on the service discipline
• 802 shouldn’t mandate scheduling to switch vendors, because
  - Round-Robin (RR: strict or work-conserving)
    - strong/prop. fairness decouples flows
    - simple & scalable
    - globally unfair (parking lot problem)
  - FIFO/EDF (timestamps)
    - temporally & globally fair: first-come-first-served
    - locally unfair => flow coupling (can’t isolate across partitions and clients)
    - complex to scale
• BP will impact the speed, strength and locality (fairness) of backpressure ... (underlying CM)
  => hence different behaviors of the CM loop

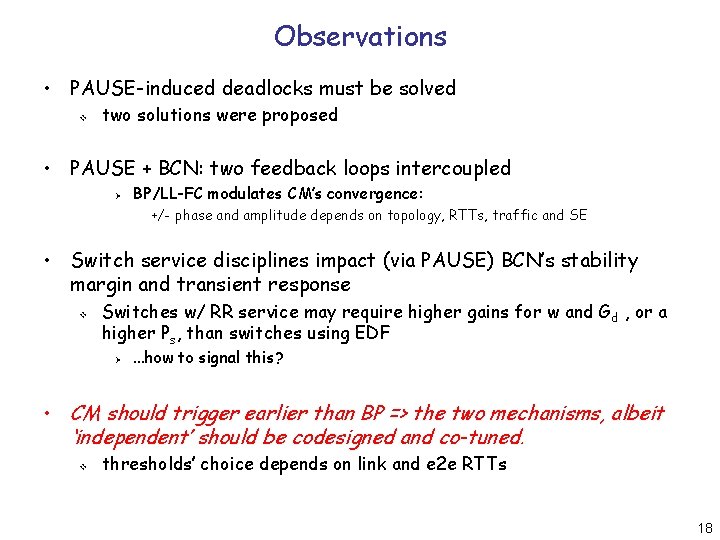

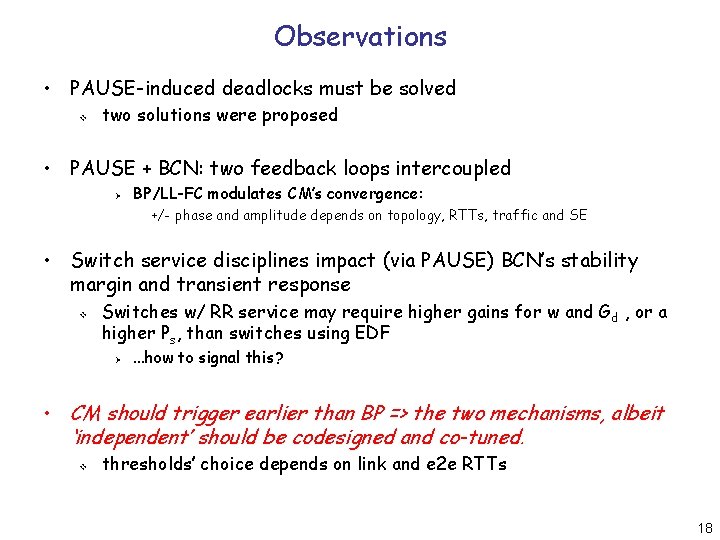

Observations
• PAUSE-induced deadlocks must be solved
  - two solutions were proposed
• PAUSE + BCN: two feedback loops intercoupled
  - BP/LL-FC modulates CM’s convergence: +/- phase and amplitude depend on topology, RTTs, traffic and SE
• Switch service disciplines impact (via PAUSE) BCN’s stability margin and transient response
  - Switches w/ RR service may require higher gains for w and Gd, or a higher Ps, than switches using EDF
    - ... how to signal this?
• CM should trigger earlier than BP => the two mechanisms, albeit ‘independent’, should be co-designed and co-tuned.
  - thresholds’ choice depends on link and e2e RTTs

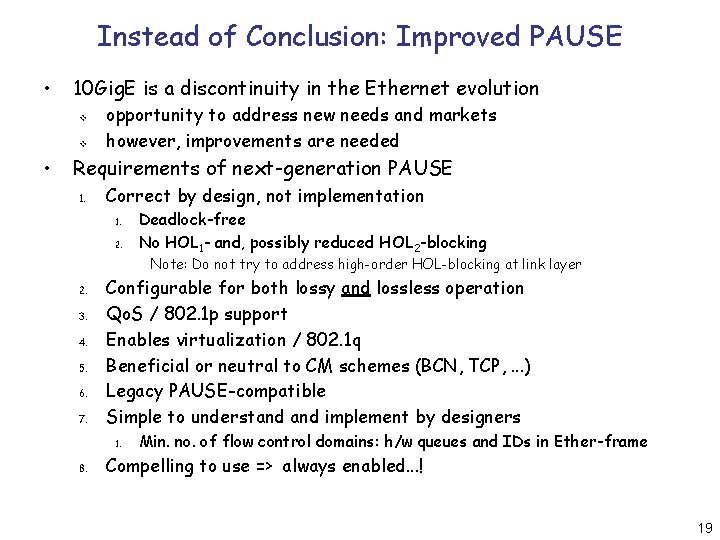

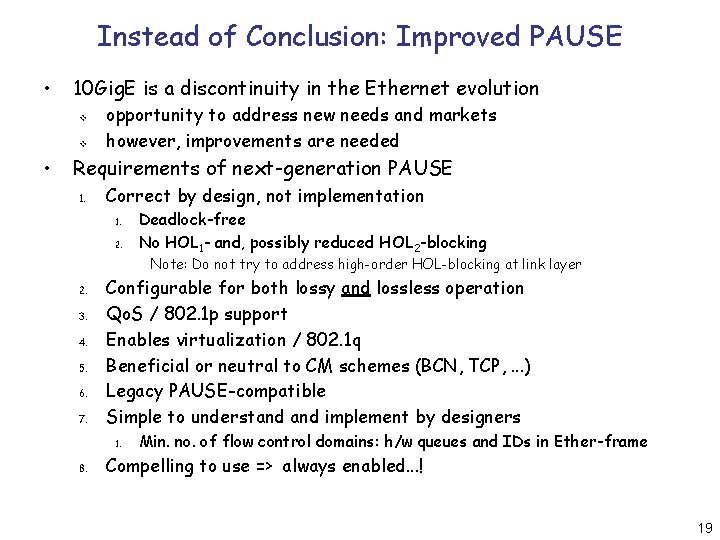

Instead of Conclusion: Improved PAUSE
• 10GigE is a discontinuity in the Ethernet evolution
  - opportunity to address new needs and markets
  - however, improvements are needed
• Requirements of next-generation PAUSE
  1. Correct by design, not implementation
     1. Deadlock-free
     2. No HOL1- and, possibly, reduced HOL2-blocking
     Note: Do not try to address high-order HOL-blocking at link layer
  2. Configurable for both lossy and lossless operation
  3. QoS / 802.1p support
  4. Enables virtualization / 802.1q
  5. Beneficial or neutral to CM schemes (BCN, TCP, ...)
  6. Legacy PAUSE-compatible
  7. Simple to understand and implement by designers
     1. Min. no. of flow control domains: h/w queues and IDs in Ether-frame
  8. Compelling to use => always enabled...!