System Level Benchmarking Analysis of the Cortex-A9

System Level Benchmarking Analysis of the Cortex™-A9 MPCore™
Anirban Lahiri, Technology Researcher
Adya Shrotriya, Nicolas Zea, Interns
John Goodacre, Director, Program Management, ARM Processor Division
October 2009
This project in ARM is in part funded by ICT-eMuCo, a European project supported under the Seventh Framework Programme (7FP) for research and technological development.

Agenda
§ Look at leading ARM platforms – PBX-A9, V2-A9
§ Observe and analyse phenomena related to execution behaviour, especially memory bandwidth and latency
§ Understand the benefits offered by ARM MPCore technology
§ Strategies for optimization

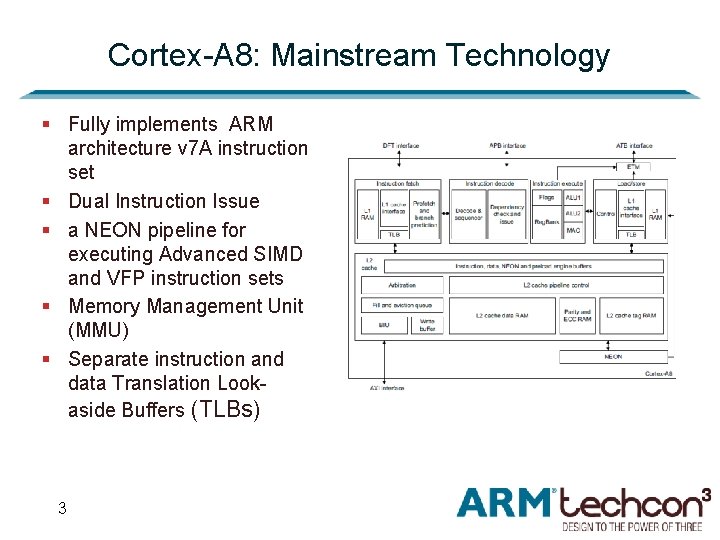

Cortex-A8: Mainstream Technology
§ Fully implements the ARMv7-A architecture instruction set
§ Dual instruction issue
§ NEON pipeline for executing Advanced SIMD and VFP instruction sets
§ Memory Management Unit (MMU)
§ Separate instruction and data Translation Lookaside Buffers (TLBs)

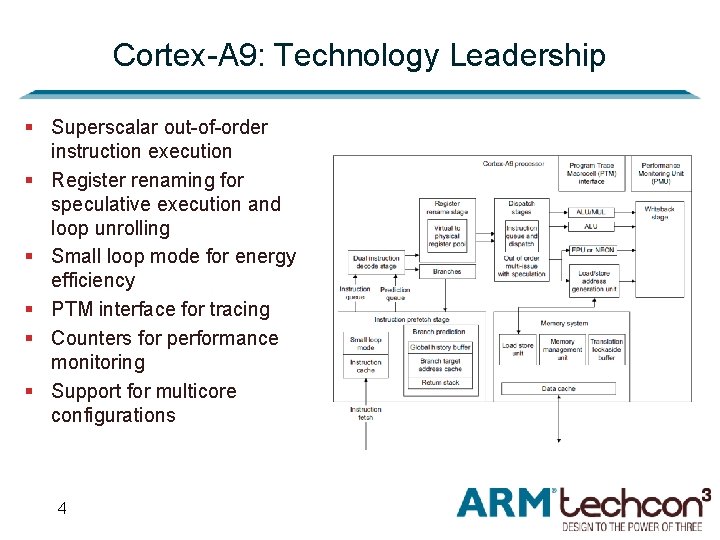

Cortex-A9: Technology Leadership
§ Superscalar out-of-order instruction execution
§ Register renaming for speculative execution and loop unrolling
§ Small loop mode for energy efficiency
§ PTM interface for tracing
§ Counters for performance monitoring
§ Support for multicore configurations

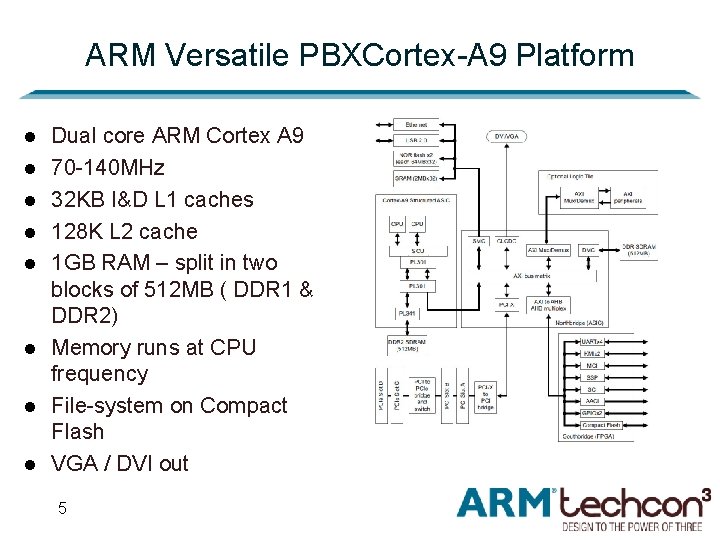

ARM Versatile PBX Cortex-A9 Platform
§ Dual-core ARM Cortex-A9, 70-140 MHz
§ 32 KB I&D L1 caches
§ 128 KB L2 cache
§ 1 GB RAM, split in two blocks of 512 MB (DDR1 & DDR2)
§ Memory runs at CPU frequency
§ File system on Compact Flash
§ VGA / DVI out

ARM Versatile 2-A9 Platform (V2)
§ ARM-NEC Cortex-A9 test chip, ~400 MHz
§ 4 x Cortex-A9
§ 4 x NEON/FPU
§ 32 KB individual I&D L1 caches
§ 512 KB L2 cache
§ 1 GB RAM (32-bit DDR2)
Note: ARM recently announced a 2 GHz dual-core implementation

Software Framework
§ Linux kernel 2.6.28
§ Debian 5.0 “Lenny” Linux file system compiled for ARMv4T
§ LMBench 3.0-a9
  § Includes STREAM benchmarks
  § Single instance and multiple instances

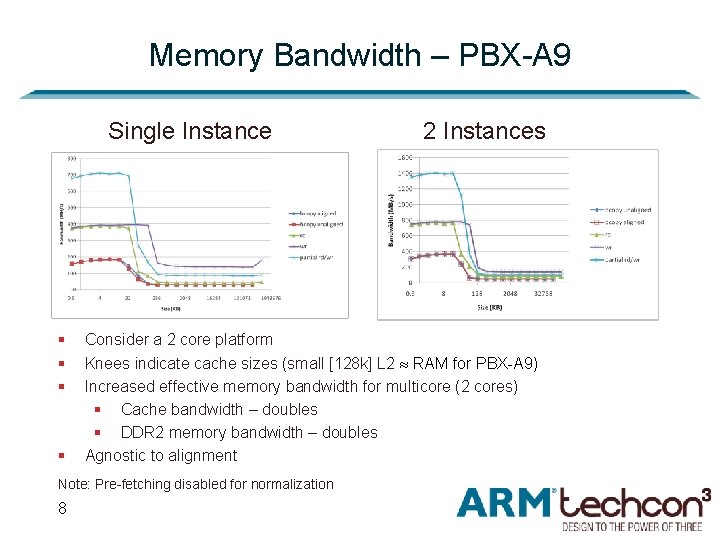

Memory Bandwidth – PBX-A9
[Plot panels: Single Instance, 2 Instances]
§ Consider a 2-core platform
§ Knees indicate cache sizes (small [128 KB] L2 RAM for PBX-A9)
§ Increased effective memory bandwidth for multicore (2 cores)
  § Cache bandwidth doubles
  § DDR2 memory bandwidth doubles
§ Agnostic to alignment
Note: Pre-fetching disabled for normalization

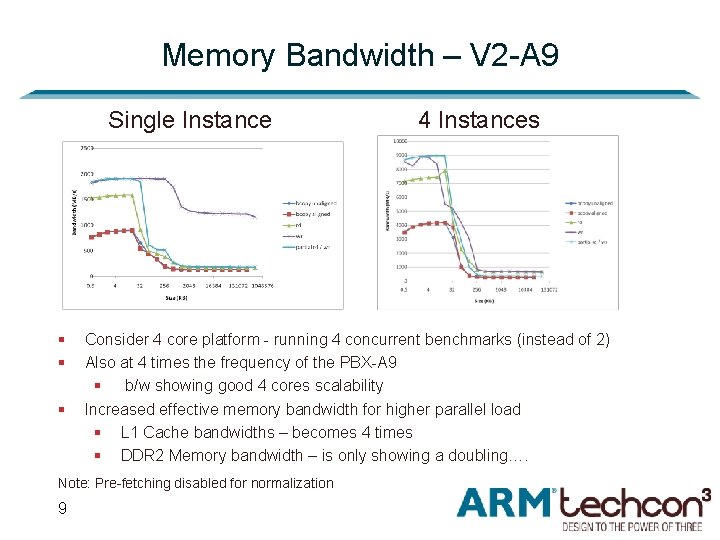

Memory Bandwidth – V2-A9
[Plot panels: Single Instance, 4 Instances]
§ Consider a 4-core platform running 4 concurrent benchmarks (instead of 2)
§ Also at 4 times the frequency of the PBX-A9
§ Bandwidth shows good 4-core scalability
§ Increased effective memory bandwidth for higher parallel load
  § L1 cache bandwidth becomes 4 times that of a single instance
  § DDR2 memory bandwidth only doubles
Note: Pre-fetching disabled for normalization
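
The scaling figures on this slide can be read as a parallel efficiency: the observed bandwidth multiple divided by the number of concurrent instances. The sketch below is only an illustration; `scaling_efficiency` is a hypothetical helper, and its inputs are the ratios quoted on the slide (4 times for L1, a doubling for DDR2), not measured values.

```python
def scaling_efficiency(speedup: float, instances: int) -> float:
    """Fraction of ideal linear scaling achieved by `instances` concurrent benchmarks."""
    if instances <= 0:
        raise ValueError("instances must be positive")
    return speedup / instances


# Ratios quoted on the slide for 4 concurrent instances on the V2-A9.
l1_efficiency = scaling_efficiency(4.0, 4)    # L1 bandwidth becomes 4 times
ddr2_efficiency = scaling_efficiency(2.0, 4)  # DDR2 bandwidth only doubles

print(f"L1: {l1_efficiency:.0%}, DDR2: {ddr2_efficiency:.0%}")
```

Read this way, the L1 caches scale linearly because each core has its own, while the shared DDR2 path delivers about half of the ideal aggregate.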

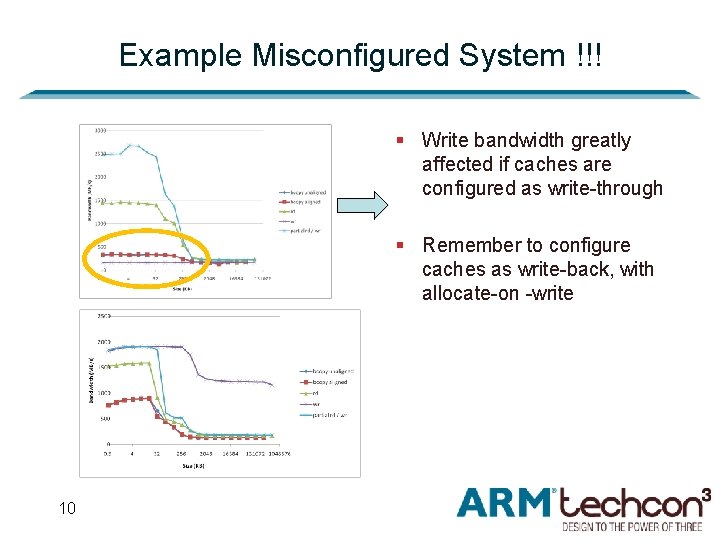

Example: Misconfigured System!
§ Write bandwidth is greatly affected if caches are configured as write-through
§ Remember to configure caches as write-back, with allocate-on-write
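
To see why the write policy matters so much, the following toy model counts how many write transactions reach memory under each policy. It is a sketch under stated assumptions: a fully associative LRU cache with write-allocate, stores only, and hypothetical sizes (`line_size`, `cache_lines`) that do not describe the actual Cortex-A9 or PL310 configuration.

```python
from collections import OrderedDict


def memory_writes(addresses, line_size=32, cache_lines=8, write_back=True):
    """Count write transactions reaching memory for a sequence of store addresses.

    Toy model only: fully associative LRU cache with write-allocate.
    """
    if not write_back:
        return len(addresses)          # write-through: every store goes to memory
    dirty = OrderedDict()              # resident dirty lines, least recently used first
    writes = 0
    for addr in addresses:
        line = addr // line_size
        if line in dirty:
            dirty.move_to_end(line)    # hit: line stays dirty, no memory traffic
        else:
            if len(dirty) == cache_lines:
                dirty.popitem(last=False)  # evict the LRU dirty line to memory
                writes += 1
            dirty[line] = True         # allocate the line on write
    return writes + len(dirty)         # flush the remaining dirty lines


# 10 passes of word-sized stores over a 256-byte buffer (8 lines of 32 bytes).
stores = [i * 4 for i in range(64)] * 10
print(memory_writes(stores, write_back=False))  # one memory write per store
print(memory_writes(stores, write_back=True))   # one line write-back per dirty line
```

In this model the write-through cache issues 640 word-sized writes, while the write-back cache issues 8 line-sized write-backs, because repeated stores to a resident line are absorbed by the cache.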

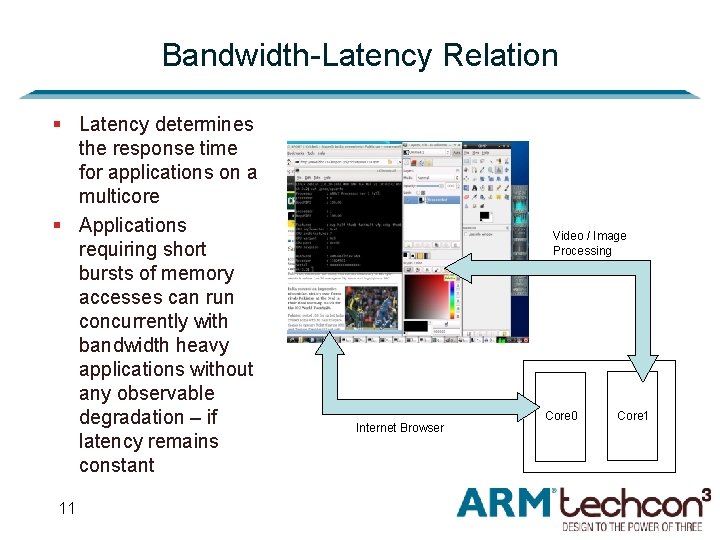

Bandwidth-Latency Relation
§ Latency determines the response time for applications on a multicore
§ Applications requiring short bursts of memory accesses can run concurrently with bandwidth-heavy applications without any observable degradation, provided latency remains constant
[Diagram labels: Video / Image Processing, Internet Browser, Core 0, Core 1]

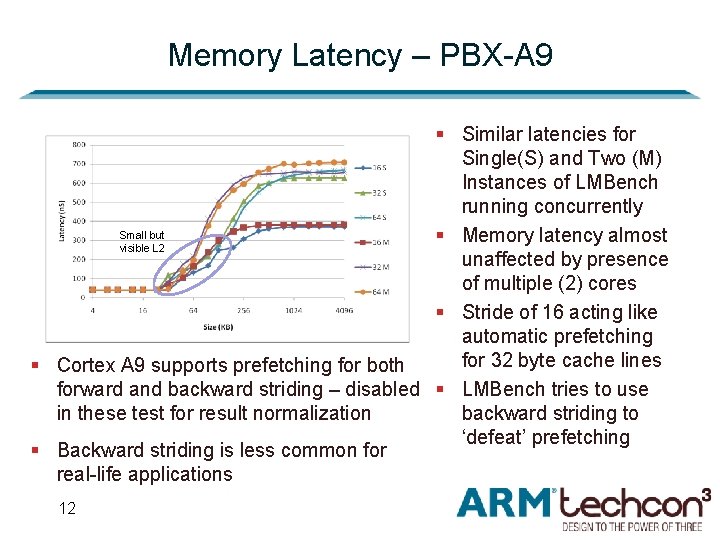

Memory Latency – PBX-A9
[Plot annotation: small but visible L2]
§ Similar latencies for single (S) and two (M) instances of LMBench running concurrently
§ Memory latency almost unaffected by the presence of multiple (2) cores
§ Stride of 16 acts like automatic prefetching for 32-byte cache lines
§ Cortex-A9 supports prefetching for both forward and backward striding; it is disabled in these tests for result normalization
§ LMBench tries to use backward striding to ‘defeat’ prefetching
§ Backward striding is less common in real-life applications
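
The remark about a stride of 16 on 32-byte lines can be checked with a small calculation: when the stride is half the line size, every other access falls in a line that the previous access already fetched. The sketch below assumes the stride is expressed in bytes and models only which line each access touches; `new_line_fraction` is a hypothetical helper, not part of LMBench.

```python
def new_line_fraction(stride: int, line_size: int, accesses: int = 1024) -> float:
    """Fraction of strided accesses that touch a different cache line than the previous access."""
    new_lines = 0
    previous_line = None
    for i in range(accesses):
        line = (i * stride) // line_size
        if line != previous_line:
            new_lines += 1
        previous_line = line
    return new_lines / accesses


print(new_line_fraction(16, 32))  # half of the accesses reuse the previous line
print(new_line_fraction(32, 32))  # every access touches a new line
```

With a 16-byte stride only half of the accesses can miss, so the average latency looks as if a prefetcher were fetching the second half of each line ahead of time.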

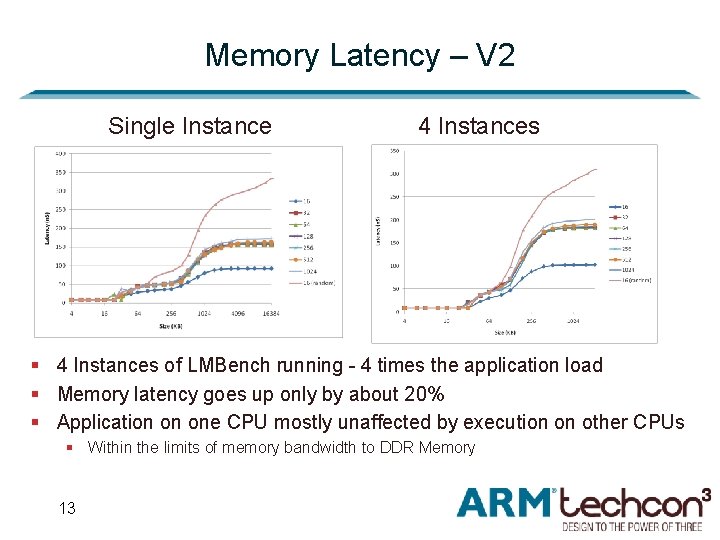

Memory Latency – V2
[Plot panels: Single Instance, 4 Instances]
§ 4 instances of LMBench running: 4 times the application load
§ Memory latency goes up by only about 20%
§ Application on one CPU mostly unaffected by execution on other CPUs
  § Within the limits of memory bandwidth to DDR memory

Summary and Recommendations
§ Running multiple memory-intensive applications on a single CPU can be detrimental because of cache conflicts
§ Spreading memory- and CPU-intensive applications over the multiple cores provides better performance
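
One way to act on this recommendation on Linux is to pin each heavy process to its own core. The sketch below plans a round-robin placement and shows how a process could pin itself with `os.sched_setaffinity`, which is available only on Linux. The task names are hypothetical, and the slides do not prescribe any particular placement policy.

```python
import os


def spread_over_cores(task_names, cores):
    """Assign tasks to cores round-robin; returns {task: core}."""
    cores = sorted(cores)
    if not cores:
        raise ValueError("no cores available")
    return {name: cores[i % len(cores)] for i, name in enumerate(task_names)}


def pin_current_process(core: int) -> None:
    """Pin the calling process to one core (no-op where the call is unavailable)."""
    if hasattr(os, "sched_setaffinity"):
        os.sched_setaffinity(0, {core})


# Hypothetical workload: two memory-heavy tasks and one bursty task on 2 cores.
plan = spread_over_cores(["video_decode", "browser", "stream_copy"], [0, 1])
print(plan)
```

Each worker would call `pin_current_process(plan[name])` at start-up; a real scheduler policy would also weigh how much cache each task needs.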

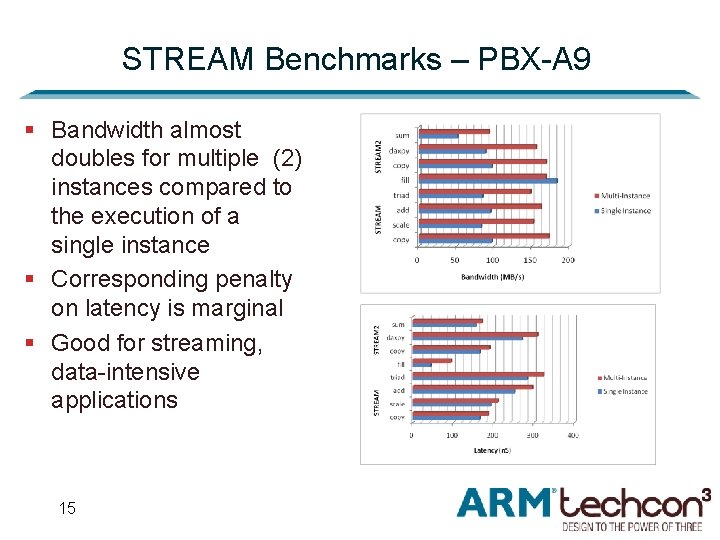

STREAM Benchmarks – PBX-A9
§ Bandwidth almost doubles for multiple (2) instances compared to the execution of a single instance
§ Corresponding penalty on latency is marginal
§ Good for streaming, data-intensive applications
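
For readers unfamiliar with STREAM, the benchmark times four simple vector kernels (Copy, Scale, Add, Triad) and converts the bytes moved into a bandwidth figure. The sketch below reproduces the kernels and the byte accounting in pure Python, counting 8 bytes per element as STREAM does for doubles. It is meant to show what is measured, not to produce meaningful bandwidth numbers: interpreter overhead dominates, and the array size `n` is an arbitrary choice.

```python
import time


def stream_kernels(n=100_000, scalar=3.0):
    """Run the four STREAM kernels once; return ({kernel: MB/s}, (a, b, c))."""
    a, b, c = [1.0] * n, [2.0] * n, [0.0] * n
    word = 8  # bytes per double-precision element, as counted by STREAM
    rates = {}

    def timed(name, words_per_iter, kernel):
        start = time.perf_counter()
        kernel()
        elapsed = max(time.perf_counter() - start, 1e-9)
        rates[name] = words_per_iter * word * n / elapsed / 1e6

    def copy():   # c[i] = a[i]: one read, one write
        c[:] = a

    def scale():  # b[i] = scalar * c[i]: one read, one write
        b[:] = [scalar * x for x in c]

    def add():    # c[i] = a[i] + b[i]: two reads, one write
        c[:] = [x + y for x, y in zip(a, b)]

    def triad():  # a[i] = b[i] + scalar * c[i]: two reads, one write
        a[:] = [y + scalar * z for y, z in zip(b, c)]

    timed("Copy", 2, copy)
    timed("Scale", 2, scale)
    timed("Add", 3, add)
    timed("Triad", 3, triad)
    return rates, (a, b, c)


rates, (a, b, c) = stream_kernels()
for name, rate in rates.items():
    print(f"{name}: {rate:.1f} MB/s")
```

Running two such instances on separate cores and summing the rates mirrors the single-instance versus two-instance comparison on the slide.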

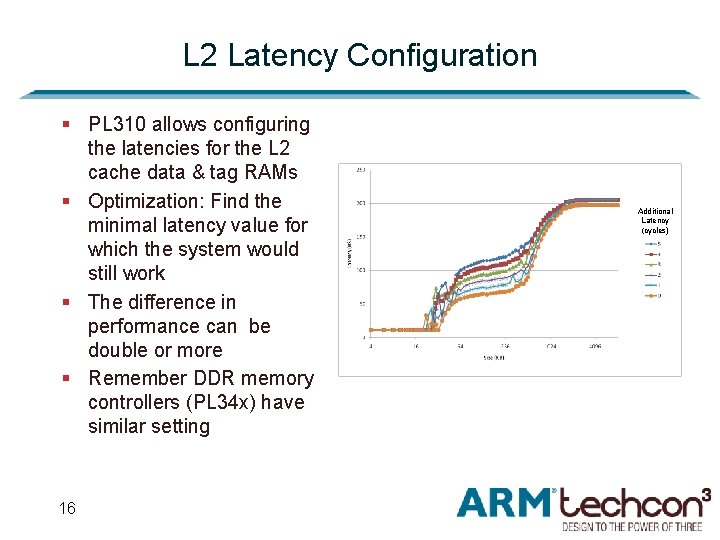

L2 Latency Configuration
[Plot axis: Additional Latency (cycles)]
§ PL310 allows configuring the latencies for the L2 cache data and tag RAMs
§ Optimization: find the minimal latency value for which the system still works
§ The difference in performance can be double or more
§ Remember that DDR memory controllers (PL34x) have similar settings
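
As an illustration of what "configuring the latencies" involves, the sketch below packs setup, read and write latencies into a register value. The register offsets and the field layout (setup in bits [2:0], read in [6:4], write in [10:8], each holding cycles minus one) are assumptions based on how the PL310 is commonly programmed and are not stated in the slides; verify them against the controller's Technical Reference Manual before use. `pl310_latency_value` is a hypothetical helper.

```python
TAG_RAM_LATENCY_CTRL = 0x108   # offset from the PL310 base (assumption, check the TRM)
DATA_RAM_LATENCY_CTRL = 0x10C  # offset from the PL310 base (assumption, check the TRM)


def pl310_latency_value(setup: int, read: int, write: int) -> int:
    """Pack RAM latencies (in cycles, 1 to 8) into a latency control register value.

    Assumed layout: setup in bits [2:0], read in bits [6:4], write in bits [10:8],
    each field holding (cycles - 1).
    """
    for cycles in (setup, read, write):
        if not 1 <= cycles <= 8:
            raise ValueError("latency must be between 1 and 8 cycles")
    return (setup - 1) | ((read - 1) << 4) | ((write - 1) << 8)


# Example: sweep the data RAM read latency while keeping setup and write at 1 cycle.
for read_cycles in range(1, 5):
    value = pl310_latency_value(1, read_cycles, 1)
    print(f"read={read_cycles} cycles -> write 0x{value:03X} to offset 0x{DATA_RAM_LATENCY_CTRL:X}")
```

A tuning run would step the latency down from a safe value, rerun the bandwidth and latency benchmarks at each step, and stop at the lowest setting that remains stable.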

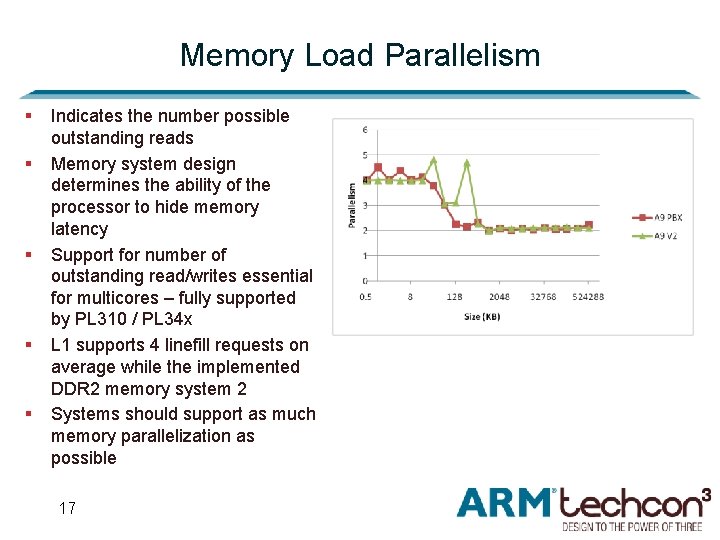

Memory Load Parallelism
§ Indicates the number of possible outstanding reads
§ Memory system design determines the ability of the processor to hide memory latency
§ Support for a number of outstanding reads/writes is essential for multicores; this is fully supported by PL310 / PL34x
§ L1 supports 4 linefill requests on average, while the implemented DDR2 memory system supports 2
§ Systems should support as much memory parallelization as possible
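
The link between outstanding requests and latency hiding follows from Little's law: sustained bandwidth is bounded by (outstanding requests × line size) / latency. The sketch below applies that bound to the 4 and 2 outstanding linefills mentioned on the slide. The 100 ns latency is a hypothetical figure chosen for the example, not a measurement from these platforms.

```python
def max_bandwidth_mb_s(outstanding: int, line_bytes: int, latency_ns: float) -> float:
    """Upper bound on sustained bandwidth from Little's law, in MB/s."""
    if latency_ns <= 0:
        raise ValueError("latency must be positive")
    # bytes per nanosecond equals GB/s; multiply by 1e3 for MB/s
    return outstanding * line_bytes / latency_ns * 1e3


LINE_BYTES = 32      # cache line size used throughout the slides
LATENCY_NS = 100.0   # hypothetical memory latency, for illustration only

four = max_bandwidth_mb_s(4, LINE_BYTES, LATENCY_NS)
two = max_bandwidth_mb_s(2, LINE_BYTES, LATENCY_NS)
print(f"4 outstanding: {four:.0f} MB/s, 2 outstanding: {two:.0f} MB/s")
```

At equal latency, a memory system that accepts only 2 outstanding requests caps bandwidth at half of what 4 outstanding linefills could sustain.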

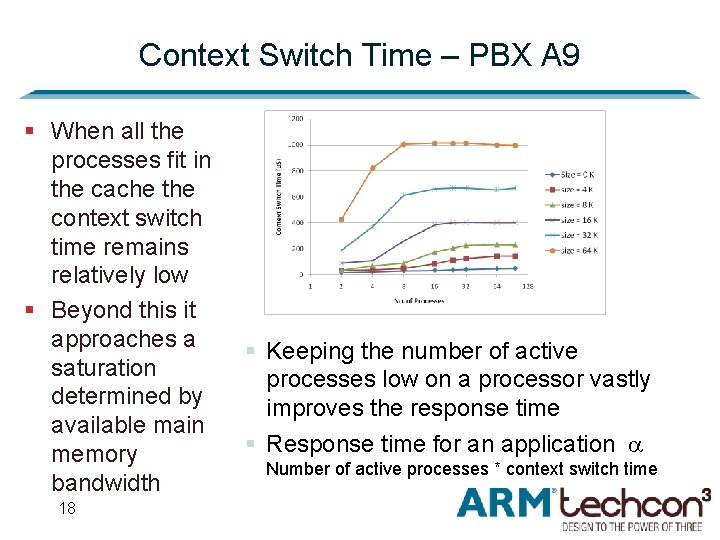

Context Switch Time – PBX-A9
§ When all the processes fit in the cache, the context switch time remains relatively low
§ Beyond this it approaches a saturation level determined by available main memory bandwidth
§ Keeping the number of active processes on a processor low vastly improves the response time
§ Response time for an application ≈ number of active processes × context switch time
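
The relation on this slide, response time ≈ number of active processes × context switch time, can be turned into a quick estimate. In the sketch below the 50 µs context switch time is a hypothetical figure, and `response_time_us` is a hypothetical helper; the point is only how the estimate changes when the same processes are split across two cores.

```python
def response_time_us(active_processes: int, context_switch_us: float) -> float:
    """Rough response-time estimate: active processes times context switch time."""
    if active_processes < 0:
        raise ValueError("active_processes must be non-negative")
    return active_processes * context_switch_us


CONTEXT_SWITCH_US = 50.0  # hypothetical value, for illustration only

single_core = response_time_us(8, CONTEXT_SWITCH_US)  # 8 processes on one core
per_core = response_time_us(4, CONTEXT_SWITCH_US)     # same load split over 2 cores
print(single_core, per_core)
```

Halving the number of active processes per core halves the estimate, as long as the context switch time itself stays roughly constant.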

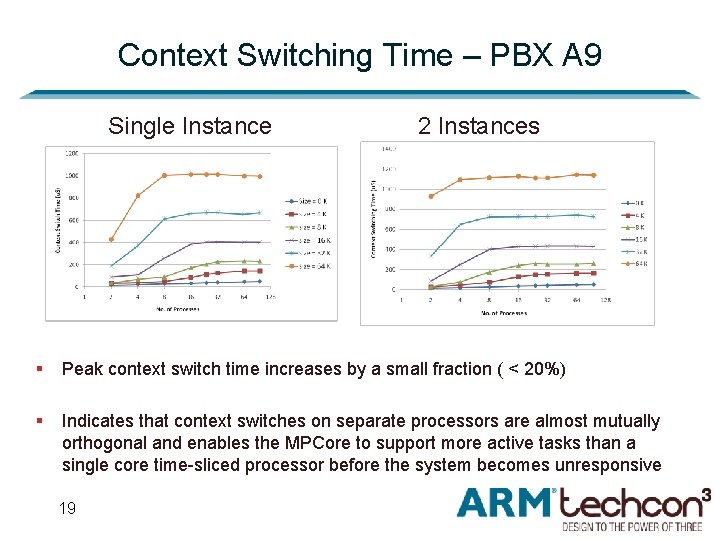

Context Switching Time – PBX-A9
[Plot panels: Single Instance, 2 Instances]
§ Peak context switch time increases by a small fraction (< 20%)
§ Indicates that context switches on separate processors are almost mutually orthogonal, which enables the MPCore to support more active tasks than a single-core time-sliced processor before the system becomes unresponsive

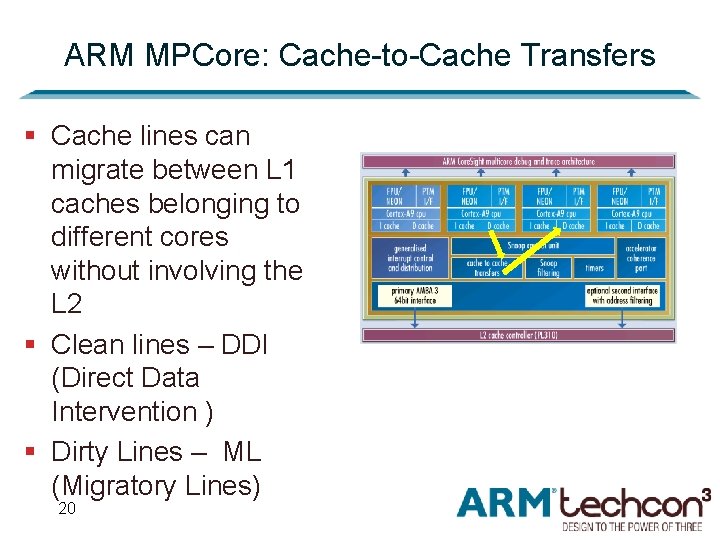

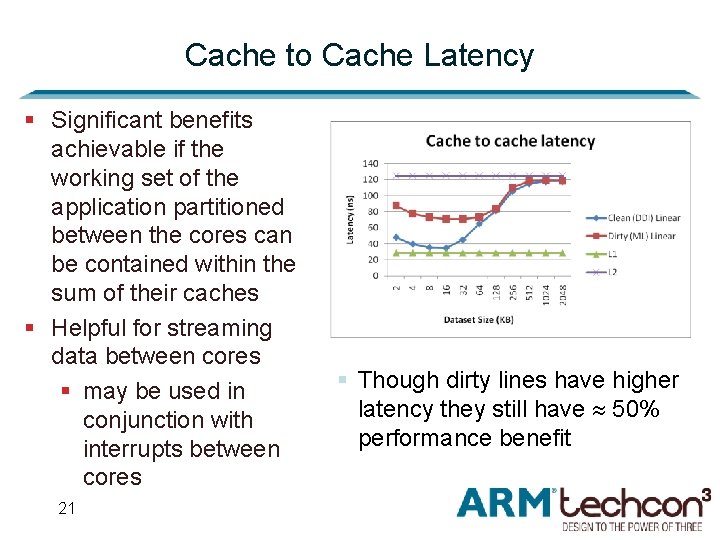

ARM MPCore: Cache-to-Cache Transfers
§ Cache lines can migrate between L1 caches belonging to different cores without involving the L2
  § Clean lines – DDI (Direct Data Intervention)
  § Dirty lines – ML (Migratory Lines)

Cache-to-Cache Latency
§ Significant benefits are achievable if the working set of the application, partitioned between the cores, can be contained within the sum of their caches
§ Helpful for streaming data between cores
  § May be used in conjunction with interrupts between cores
§ Though dirty lines have higher latency, they still show a 50% performance benefit

Conclusion
§ Illustration of typical system behaviours for multicores
§ Explored the potential benefits of multicores
§ Insights to avoid common system bottlenecks and pitfalls
§ Optimization strategies for system designers and OS architects

Thank you !!!
