Streaming Architectures and GPUs

Ian Buck, Bill Dally & Pat Hanrahan
Stanford University
February 11, 2004

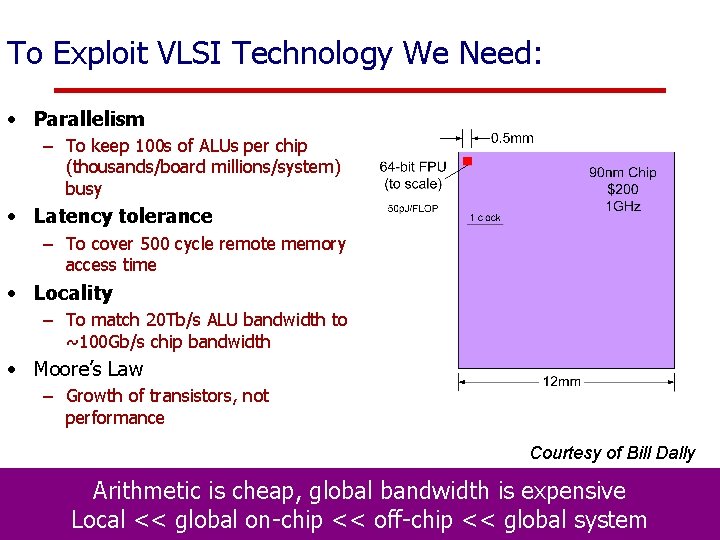

To Exploit VLSI Technology We Need:
• Parallelism
  – To keep 100s of ALUs per chip (thousands/board, millions/system) busy
• Latency tolerance
  – To cover 500-cycle remote memory access time
• Locality
  – To match 20 Tb/s ALU bandwidth to ~100 Gb/s chip bandwidth
• Moore’s Law
  – Growth of transistors, not performance

Arithmetic is cheap, global bandwidth is expensive
Local << global on-chip << off-chip << global system

Courtesy of Bill Dally

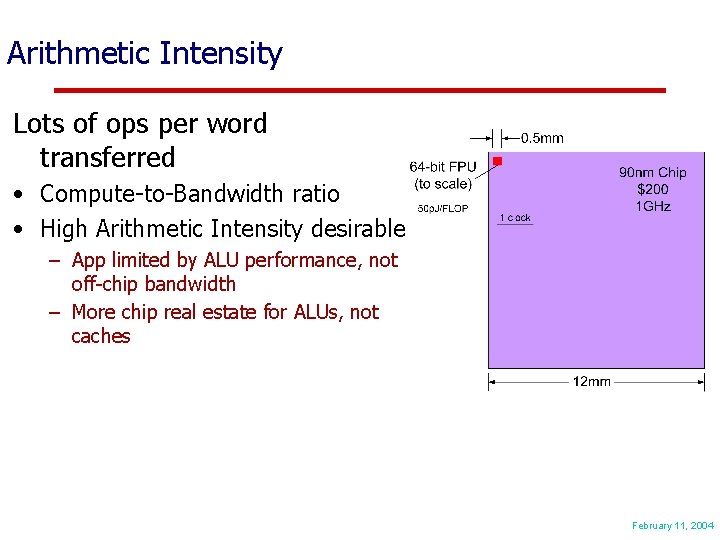

Arithmetic Intensity
Lots of ops per word transferred
• Compute-to-bandwidth ratio
• High arithmetic intensity desirable
  – App limited by ALU performance, not off-chip bandwidth
  – More chip real estate for ALUs, not caches

Courtesy of Pat Hanrahan
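As a rough illustration of the compute-to-bandwidth ratio, the sketch below treats arithmetic intensity as operations per word transferred and checks whether a kernel would be limited by the ALUs or by off-chip bandwidth. The function names and the example bandwidth of 4 Gwords/s are assumptions made for illustration; they do not come from the talk.

```python
def arithmetic_intensity(ops, words_transferred):
    """Operations performed per word moved on or off chip."""
    return ops / words_transferred


def attainable_gops(intensity, peak_gops, bandwidth_gwords):
    """Throughput is capped by the ALUs or by bandwidth, whichever is lower."""
    return min(peak_gops, intensity * bandwidth_gwords)


def is_alu_limited(intensity, peak_gops, bandwidth_gwords):
    """True when bandwidth can feed the ALUs at their peak rate."""
    return intensity * bandwidth_gwords >= peak_gops


# Hypothetical machine: 64 GOPS peak, 4 Gwords/s off-chip (assumed figures).
low = arithmetic_intensity(ops=8, words_transferred=4)     # 2 ops per word
high = arithmetic_intensity(ops=100, words_transferred=4)  # 25 ops per word
print(attainable_gops(low, 64.0, 4.0), is_alu_limited(low, 64.0, 4.0))
print(attainable_gops(high, 64.0, 4.0), is_alu_limited(high, 64.0, 4.0))
```

With these assumed figures, the low-intensity kernel is bandwidth-bound and the high-intensity kernel is ALU-bound, which is the case the slide calls desirable.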

Brook: Stream Programming Model
– Enforce data parallel computing
– Encourage arithmetic intensity
– Provide fundamental ops for stream computing

Streams & Kernels
• Streams
  – Collection of records requiring similar computation
    • Vertex positions, voxels, FEM cells, …
  – Provide data parallelism
• Kernels
  – Functions applied to each element in stream
    • Transforms, PDEs, …
  – No dependencies between stream elements
  – Encourage high arithmetic intensity
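The stream and kernel idea can be sketched in a few lines of Python. This is not Brook syntax; the `Particle` record, the `advance` kernel, and the `map_kernel` helper are hypothetical names chosen for illustration. The point is that the kernel sees only its own input record, so elements can be processed in any order or in parallel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Particle:
    """One record of the stream (hypothetical example type)."""
    position: float
    velocity: float


def advance(p: Particle, dt: float = 0.5) -> Particle:
    """Kernel: reads only its own record and produces one output record."""
    return Particle(p.position + p.velocity * dt, p.velocity)


def map_kernel(kernel, stream):
    """Apply the kernel to every element; no element depends on another."""
    return [kernel(record) for record in stream]


stream = [Particle(0.0, 1.0), Particle(2.0, -1.0), Particle(5.0, 0.5)]
result = map_kernel(advance, stream)

# Because elements are independent, processing order does not matter.
reversed_result = list(reversed(map_kernel(advance, list(reversed(stream)))))
print(result == reversed_result)
```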

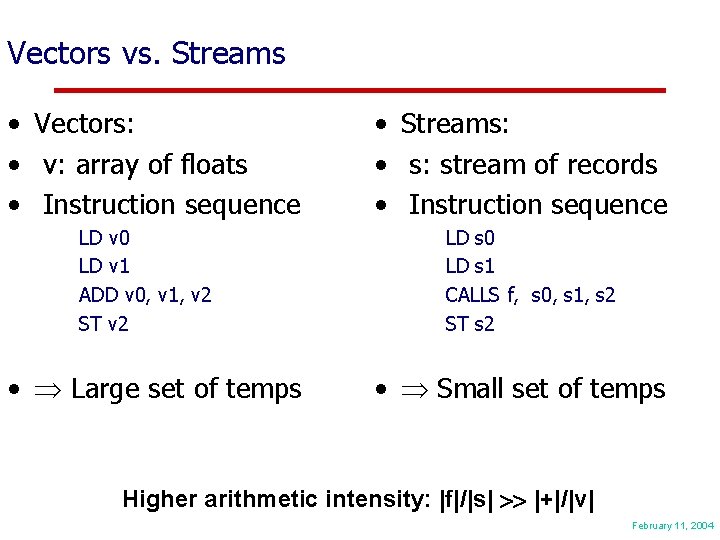

Vectors vs. Streams
• Vectors:
  – v: array of floats
  – Instruction sequence:
      LD v0
      LD v1
      ADD v0, v1, v2
      ST v2
  – Large set of temps
• Streams:
  – s: stream of records
  – Instruction sequence:
      LD s0
      LD s1
      CALLS f, s0, s1, s2
      ST s2
  – Small set of temps

Higher arithmetic intensity: |f|/|s| >> |+|/|v|
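To make the contrast concrete, the following sketch computes `a*b + c` two ways and counts the words moved. The word-counting model is an assumption made for illustration: each vector instruction is taken to load its operand arrays and store a full result array, while the stream kernel keeps its temporary in a local variable.

```python
def vector_style(a, b, c):
    """One whole-array instruction at a time; the temp is a full array."""
    words = 0
    temp = [x * y for x, y in zip(a, b)]    # LD a, LD b, MUL, ST temp
    words += 3 * len(a)
    out = [t + z for t, z in zip(temp, c)]  # LD temp, LD c, ADD, ST out
    words += 3 * len(a)
    return out, words


def stream_style(a, b, c):
    """One kernel call per record; the temp stays inside the kernel."""
    words = 0
    out = []
    for x, y, z in zip(a, b, c):            # load one record: 3 words
        t = x * y                           # temp never leaves the kernel
        out.append(t + z)                   # store one result: 1 word
        words += 4
    return out, words


a, b, c = [1.0, 2.0, 3.0, 4.0], [2.0, 2.0, 2.0, 2.0], [0.5, 0.5, 0.5, 0.5]
vec_out, vec_words = vector_style(a, b, c)
str_out, str_words = stream_style(a, b, c)
ops = 2 * len(a)                            # one MUL and one ADD per element
print(ops / vec_words, ops / str_words)     # ops per word for each style
```

Both styles produce the same result, but the stream version moves fewer words for the same operations, and the gap widens as the kernel does more work per record.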

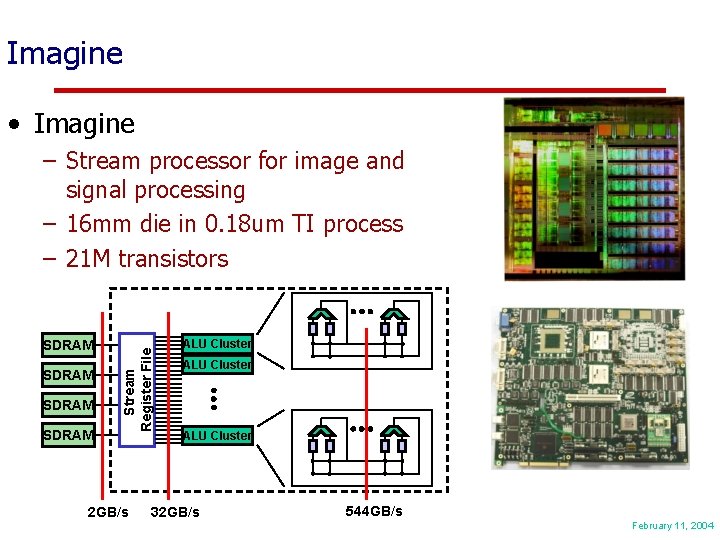

Imagine
• Imagine
  – Stream processor for image and signal processing
  – 16 mm die in 0.18 µm TI process
  – 21M transistors

[Diagram: SDRAM, Stream Register File, and ALU Cluster, with bandwidth labels 2 GB/s, 32 GB/s, and 544 GB/s]

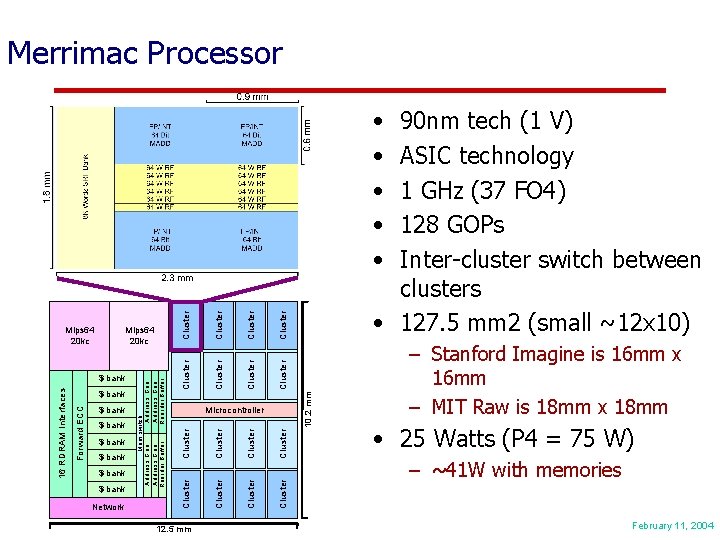

Merrimac Processor
• 90 nm tech (1 V)
• ASIC technology
• 1 GHz (37 FO4)
• 128 GOPs
• Inter-cluster switch between clusters
• 127.5 mm² (small, ~12 x 10)
  – Stanford Imagine is 16 mm x 16 mm
  – MIT Raw is 18 mm x 18 mm
• 25 Watts (P4 = 75 W)
  – ~41 W with memories

[Floorplan diagram, 12.5 mm x 10.2 mm: clusters, $ banks, microcontroller, two MIPS64 20Kc cores, network, memory switch, address generators, reorder buffers, forward ECC, 16 RDRAM interfaces]

Merrimac Streaming Supercomputer

Streaming Applications
• Finite volume - StreamFLO (from TFLO)
• Finite element - StreamFEM
• Molecular dynamics code (ODEs) - StreamMD
• Model (elliptic, hyperbolic and parabolic) PDEs
• PCA Applications: FFT, Matrix Mul, SVD, Sort

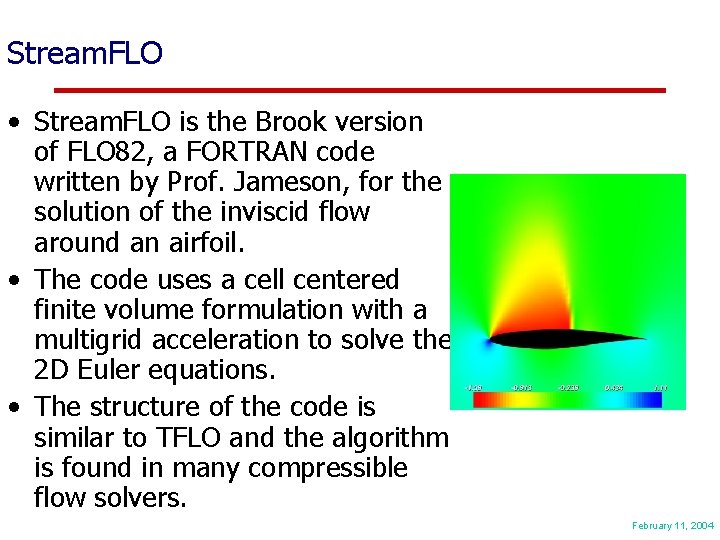

StreamFLO
• StreamFLO is the Brook version of FLO82, a FORTRAN code written by Prof. Jameson for solving the inviscid flow around an airfoil.
• The code uses a cell-centered finite volume formulation with multigrid acceleration to solve the 2D Euler equations.
• The structure of the code is similar to TFLO, and the algorithm is found in many compressible flow solvers.

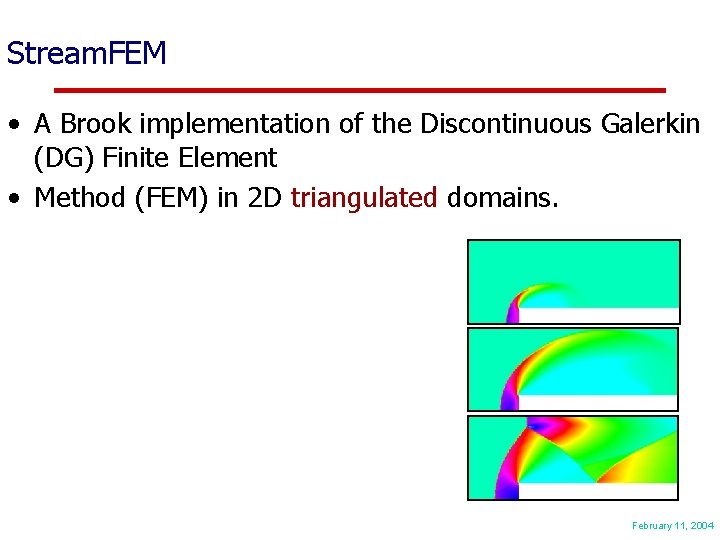

StreamFEM
• A Brook implementation of the Discontinuous Galerkin (DG) Finite Element Method (FEM) in 2D triangulated domains.

StreamMD: motivation
• Application: study the folding of human proteins.
• Molecular dynamics: computer simulation of the dynamics of macromolecules.
• Why this application?
  – Expect high arithmetic intensity.
  – Requires variable-length neighbor lists.
  – Molecular dynamics can be used in engine simulation to model spray, e.g. droplet formation and breakup, drag, and droplet deformation.
• Test case chosen for initial evaluation: box of water molecules.

[Images: DNA molecule; human immunodeficiency virus (HIV)]

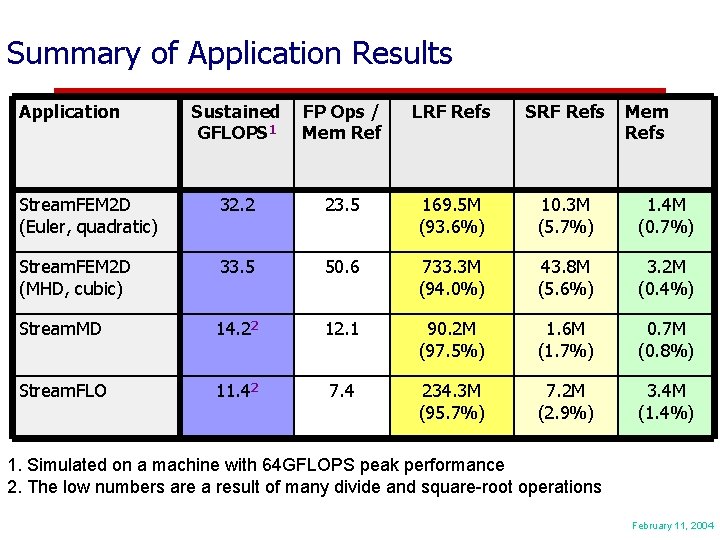

Summary of Application Results

| Application | Sustained GFLOPS (1) | FP Ops / Mem Ref | LRF Refs | SRF Refs | Mem Refs |
|---|---|---|---|---|---|
| StreamFEM2D (Euler, quadratic) | 32.2 | 23.5 | 169.5M (93.6%) | 10.3M (5.7%) | 1.4M (0.7%) |
| StreamFEM2D (MHD, cubic) | 33.5 | 50.6 | 733.3M (94.0%) | 43.8M (5.6%) | 3.2M (0.4%) |
| StreamMD | 14.2 (2) | 12.1 | 90.2M (97.5%) | 1.6M (1.7%) | 0.7M (0.8%) |
| StreamFLO | 11.4 (2) | 7.4 | 234.3M (95.7%) | 7.2M (2.9%) | 3.4M (1.4%) |

(1) Simulated on a machine with 64 GFLOPS peak performance
(2) The low numbers are a result of many divide and square-root operations
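The table figures can be put in context with a little arithmetic. The sketch below computes the fraction of the 64 GFLOPS simulated peak each application sustains, and recomputes the StreamMD reference shares from the raw counts. The variable and function names are illustrative; all numbers are taken from the table.

```python
PEAK_GFLOPS = 64.0  # simulated peak, from the table footnote

sustained_gflops = {
    "StreamFEM2D (Euler, quadratic)": 32.2,
    "StreamFEM2D (MHD, cubic)": 33.5,
    "StreamMD": 14.2,
    "StreamFLO": 11.4,
}

percent_of_peak = {
    app: round(100.0 * gflops / PEAK_GFLOPS, 1)
    for app, gflops in sustained_gflops.items()
}


def reference_shares(lrf_m, srf_m, mem_m):
    """Percentage of references served by LRF, SRF, and memory."""
    total = lrf_m + srf_m + mem_m
    return tuple(round(100.0 * x / total, 1) for x in (lrf_m, srf_m, mem_m))


# StreamMD reference counts in millions, from the table.
streammd_shares = reference_shares(90.2, 1.6, 0.7)

for app, pct in percent_of_peak.items():
    print(f"{app}: {pct}% of peak")
print("StreamMD LRF/SRF/Mem shares:", streammd_shares)
```

The recomputed shares show the locality argument in numbers: the large majority of references are served by local register files, and only a small fraction reach memory.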

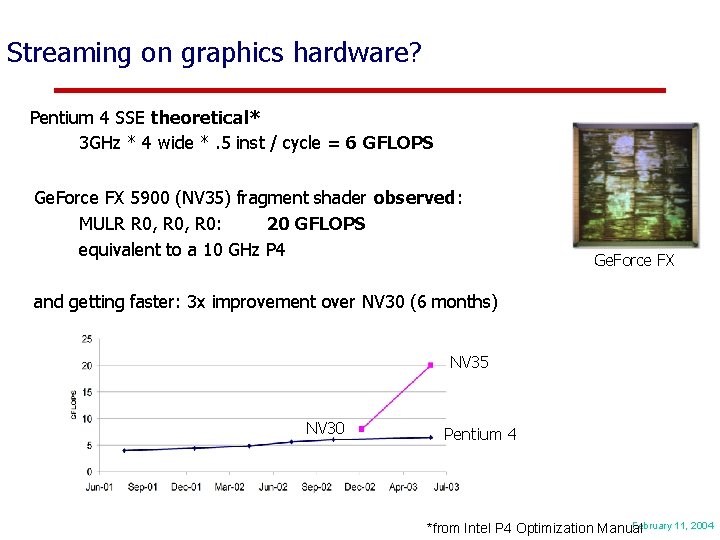

Streaming on graphics hardware?
• Pentium 4 SSE theoretical*
  – 3 GHz * 4 wide * 0.5 inst/cycle = 6 GFLOPS
• GeForce FX 5900 (NV35) fragment shader observed:
  – MULR R0, R0: 20 GFLOPS
  – Equivalent to a 10 GHz P4
• And getting faster: 3x improvement over NV30 (6 months)

[Chart labels: GeForce FX, NV35, NV30, Pentium 4]

*from Intel P4 Optimization Manual
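The arithmetic behind the comparison is simple enough to check directly. The helper names below are illustrative; the inputs are the slide's figures.

```python
def peak_gflops(clock_ghz, simd_width, inst_per_cycle):
    """Theoretical peak: clock rate x SIMD width x instructions per cycle."""
    return clock_ghz * simd_width * inst_per_cycle


def equivalent_clock_ghz(observed_gflops, simd_width, inst_per_cycle):
    """Clock a CPU would need to match an observed GFLOPS figure."""
    return observed_gflops / (simd_width * inst_per_cycle)


p4_peak = peak_gflops(clock_ghz=3.0, simd_width=4, inst_per_cycle=0.5)
p4_equivalent = equivalent_clock_ghz(20.0, simd_width=4, inst_per_cycle=0.5)
print(p4_peak)        # Pentium 4 SSE theoretical peak in GFLOPS
print(p4_equivalent)  # P4 clock in GHz needed to reach the NV35 figure
```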

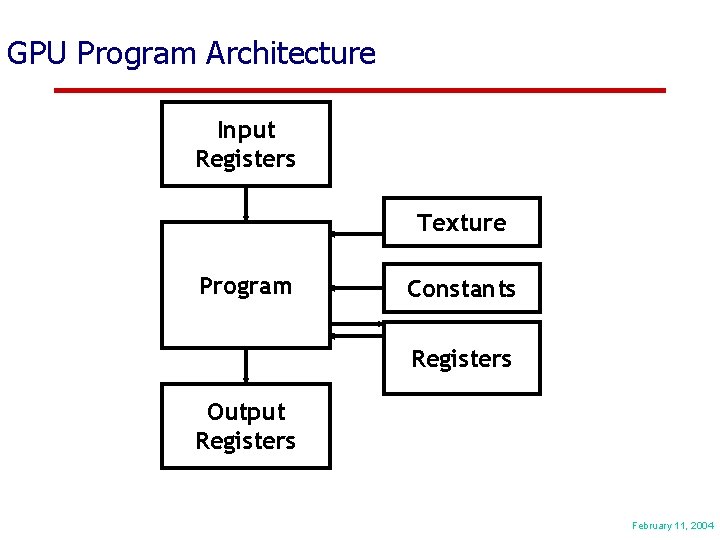

GPU Program Architecture

[Diagram labels: Input Registers, Program, Texture, Constants, Registers, Output Registers]

![Example Program: Simple Specular and Diffuse Lighting](http://slidetodoc.com/presentation_image/40e8be9489987988f367769d106690e6/image-17.jpg)

Example Program
Simple Specular and Diffuse Lighting

    !!VP1.0
    #
    # c[0-3]  = modelview projection (composite) matrix
    # c[4-7]  = modelview inverse transpose
    # c[32]   = eye-space light direction
    # c[33]   = constant eye-space half-angle vector (infinite viewer)
    # c[35].x = pre-multiplied monochromatic diffuse light color & diffuse mat.
    # c[35].y = pre-multiplied monochromatic ambient light color & diffuse mat.
    # c[36]   = specular color
    # c[38].x = specular power
    # outputs homogenous position and color
    #
    DP4 o[HPOS].x, c[0], v[OPOS];          # Compute position.
    DP4 o[HPOS].y, c[1], v[OPOS];
    DP4 o[HPOS].z, c[2], v[OPOS];
    DP4 o[HPOS].w, c[3], v[OPOS];
    DP3 R0.x, c[4], v[NRML];               # Compute normal.
    DP3 R0.y, c[5], v[NRML];
    DP3 R0.z, c[6], v[NRML];               # R0 = N' = transformed normal
    DP3 R1.x, c[32], R0;                   # R1.x = Ldir DOT N'
    DP3 R1.y, c[33], R0;                   # R1.y = H DOT N'
    MOV R1.w, c[38].x;                     # R1.w = specular power
    LIT R2, R1;                            # Compute lighting values
    MAD R3, c[35].x, R2.y, c[35].y;        # diffuse + ambient
    MAD o[COL0].xyz, c[36], R2.z, R3;      # + specular
    END
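For readers unfamiliar with vertex program assembly, here is a rough Python rendering of what the program above computes for a single vertex. It is a sketch under stated assumptions: `shade_vertex`, `dot`, and `lit` are hypothetical helper names, the constant bank `c` is modeled as a dictionary of tuples, and `lit` is a simplified version of the LIT instruction that omits the hardware's clamping of the specular exponent.

```python
def dot(a, b, n):
    """n-component dot product (DP3 when n=3, DP4 when n=4)."""
    return sum(a[i] * b[i] for i in range(n))


def lit(src):
    """Simplified LIT: returns (1, diffuse, specular, 1).

    Assumption: exponent clamping done by real hardware is omitted.
    """
    n_dot_l, n_dot_h, power = src[0], src[1], src[3]
    diffuse = max(n_dot_l, 0.0)
    specular = max(n_dot_h, 0.0) ** power if n_dot_l > 0.0 else 0.0
    return (1.0, diffuse, specular, 1.0)


def shade_vertex(c, opos, nrml):
    """Mirror the instruction sequence for one vertex."""
    hpos = tuple(dot(c[i], opos, 4) for i in range(4))        # DP4 x 4
    n = tuple(dot(c[4 + i], nrml, 3) for i in range(3))       # DP3 x 3
    r1 = (dot(c[32], n, 3), dot(c[33], n, 3), 0.0, c[38][0])  # DP3, DP3, MOV
    r2 = lit(r1)                                              # LIT
    r3 = c[35][0] * r2[1] + c[35][1]                          # MAD: diffuse + ambient
    col = tuple(c[36][i] * r2[2] + r3 for i in range(3))      # MAD: + specular
    return hpos, col
```

Each vertex is processed with the same short instruction sequence and reads only its own attributes plus shared constants, which is what makes the program a kernel in the stream sense.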

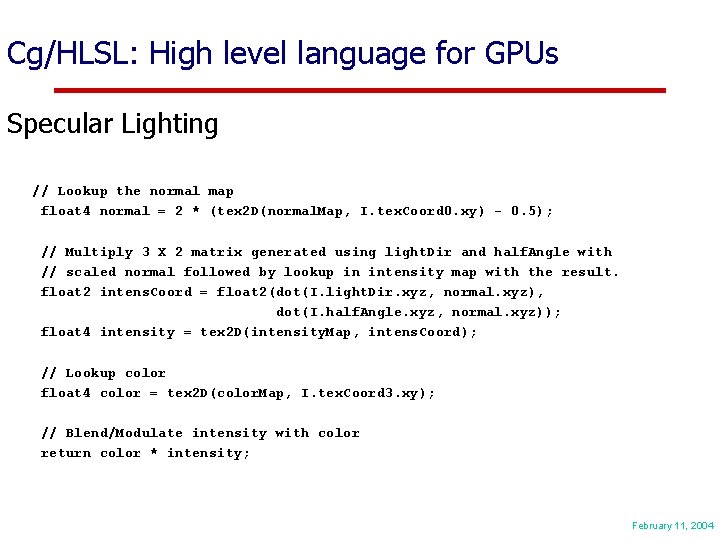

Cg/HLSL: High-level language for GPUs
Specular Lighting

    // Lookup the normal map
    float4 normal = 2 * (tex2D(normalMap, I.texCoord0.xy) - 0.5);

    // Multiply 3 X 2 matrix generated using lightDir and halfAngle with
    // scaled normal followed by lookup in intensity map with the result.
    float2 intensCoord = float2(dot(I.lightDir.xyz, normal.xyz),
                                dot(I.halfAngle.xyz, normal.xyz));
    float4 intensity = tex2D(intensityMap, intensCoord);

    // Lookup color
    float4 color = tex2D(colorMap, I.texCoord3.xy);

    // Blend/Modulate intensity with color
    return color * intensity;

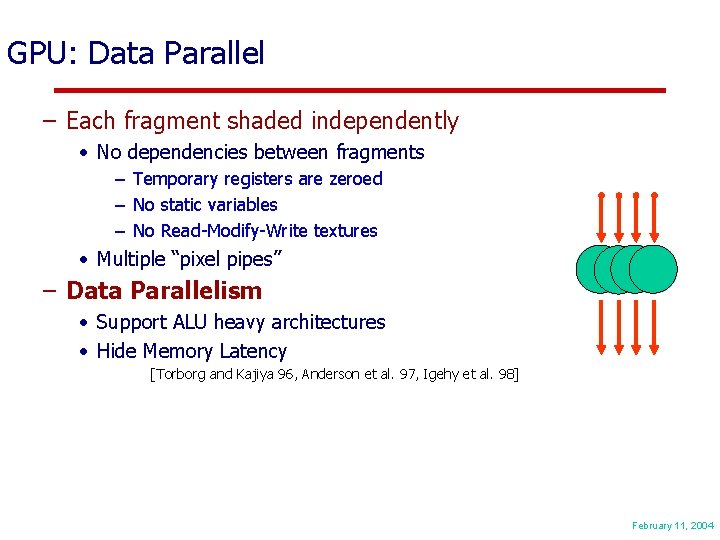

GPU: Data Parallel
– Each fragment shaded independently
  • No dependencies between fragments
    – Temporary registers are zeroed
    – No static variables
    – No read-modify-write textures
  • Multiple “pixel pipes”
– Data parallelism
  • Support ALU-heavy architectures
  • Hide memory latency

[Torborg and Kajiya 96, Anderson et al. 97, Igehy et al. 98]

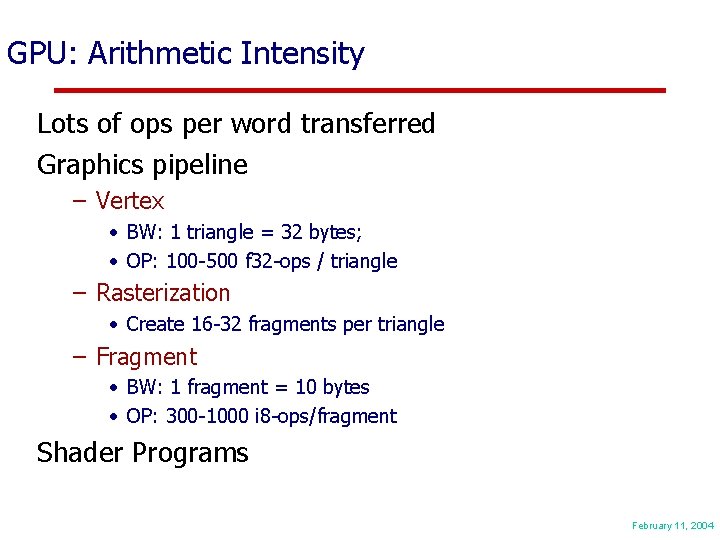

GPU: Arithmetic Intensity
Lots of ops per word transferred

Graphics pipeline
– Vertex
  • BW: 1 triangle = 32 bytes
  • OP: 100-500 f32-ops / triangle
– Rasterization
  • Create 16-32 fragments per triangle
– Fragment
  • BW: 1 fragment = 10 bytes
  • OP: 300-1000 i8-ops / fragment

[Diagram label: Shader Programs]

Courtesy of Pat Hanrahan
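The pipeline figures above translate into operations per byte as follows. The helper name is illustrative, and note that the vertex stage counts f32 operations while the fragment stage counts i8 operations, so the two ratios are not directly comparable.

```python
def ops_per_byte(ops_range, bytes_per_item):
    """Return (low, high) operations per byte for one pipeline stage."""
    low, high = ops_range
    return low / bytes_per_item, high / bytes_per_item


vertex = ops_per_byte((100, 500), bytes_per_item=32)     # f32-ops per byte
fragment = ops_per_byte((300, 1000), bytes_per_item=10)  # i8-ops per byte

# Rasterization expands each triangle into 16-32 fragments, so the
# fragment work generated by a single triangle is:
fragment_ops_per_triangle = (16 * 300, 32 * 1000)

print(vertex, fragment, fragment_ops_per_triangle)
```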

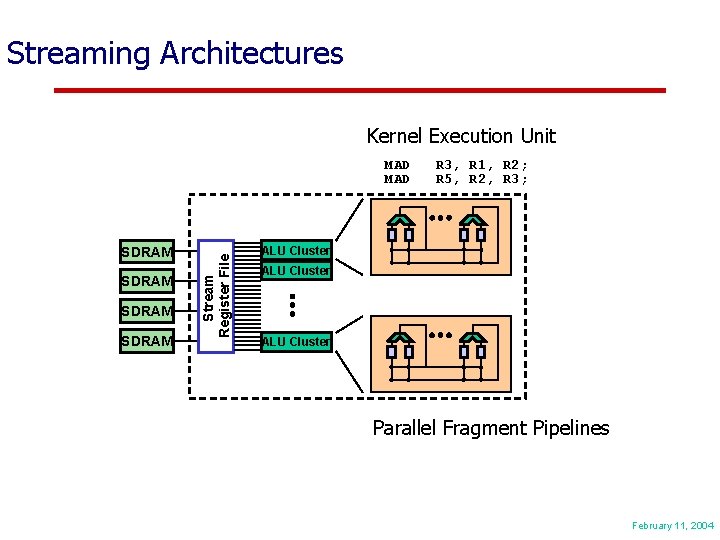

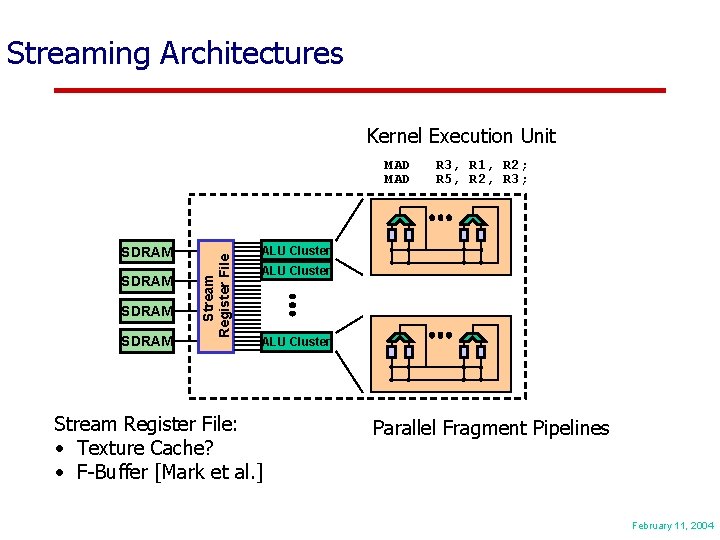

Streaming Architectures

[Diagram: SDRAM, Stream Register File, ALU Cluster, annotated with GPU counterparts]
• Kernel Execution Unit: MAD R3, R1, R2; R5, R2, R3;
• Parallel Fragment Pipelines
• Stream Register File:
  – Texture Cache?
  – F-Buffer [Mark et al.]

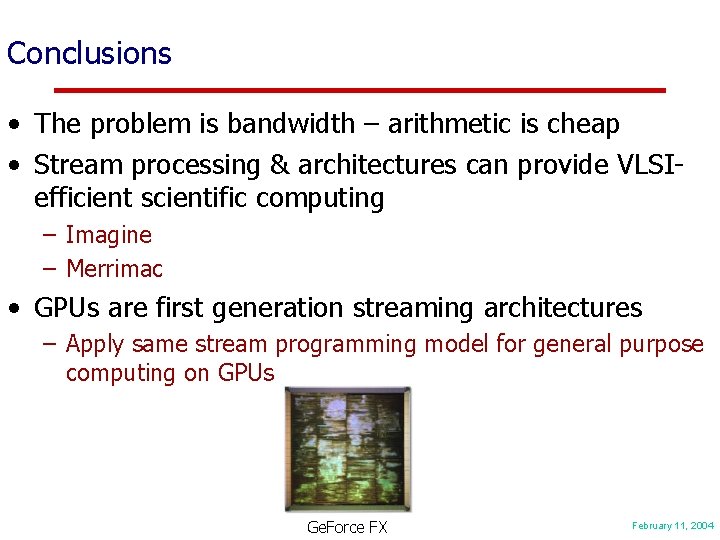

Conclusions
• The problem is bandwidth; arithmetic is cheap
• Stream processing & architectures can provide VLSI-efficient scientific computing
  – Imagine
  – Merrimac
• GPUs are first generation streaming architectures
  – Apply same stream programming model for general purpose computing on GPUs

[Image: GeForce FX]
