Star Schema Pentaho PDI Lab 2 Sakila ER

Star Schema Pentaho PDI Lab 2

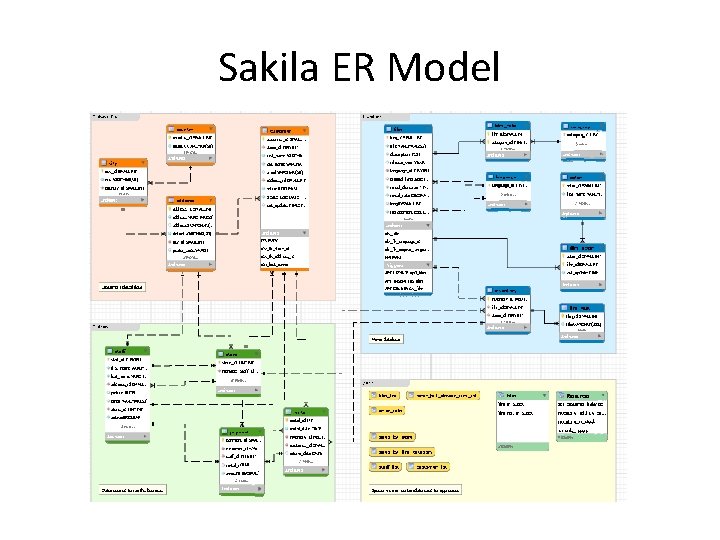

Sakila ER Model

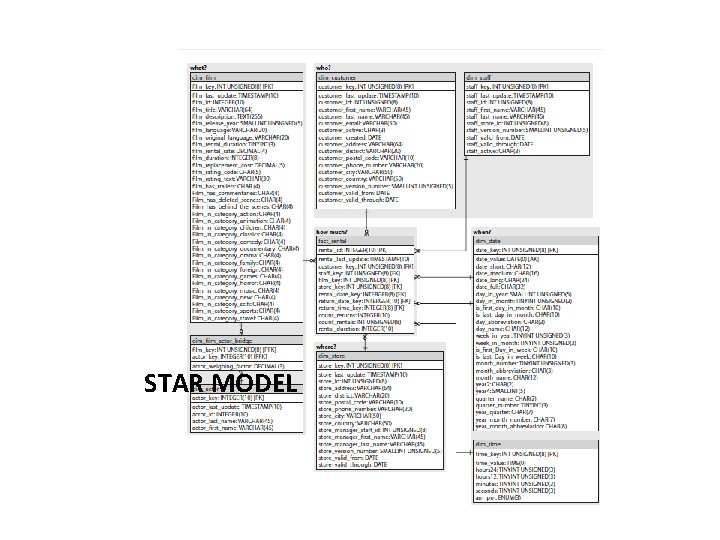

STAR MODEL

Step 1 • Explain the “Logic Layer” (ER model): –Load Sakila model. (sakila-data. sql) –Emptying all tables before! SET FOREIGN_KEY_CHECKS=0; TRUNCATE actor; SET FOREIGN_KEY_CHECKS=1; • Star Model: –Identify the fact table. –Identify relationships. –Identify the level of granularity. –Load the star schema model. –sakila_dwh_schema. sql

Step 2: Create users Sakila: CREATE USER 'sakila'@'localhost' IDENTIFIED BY 'sakila'; GRANT ALL PRIVILEGES ON sakila. * TO 'sakila'@'localhost' ; Sakila_DWH: CREATE USER 'sakila_dwh'@'localhost' IDENTIFIED BY 'sakila_dwh'; GRANT ALL PRIVILEGES ON sakila_dwh. * TO 'sakila_dwh'@'localhost';

Step 3: Dim_date Transformation • The dim_date and dim_time dimension tables are static: They are initially loaded with a generated dataset and do not need to be periodically reloaded from the sakila sample database. • The transformation file for loading the dim_date dimension table is called load_dim_date. ktr

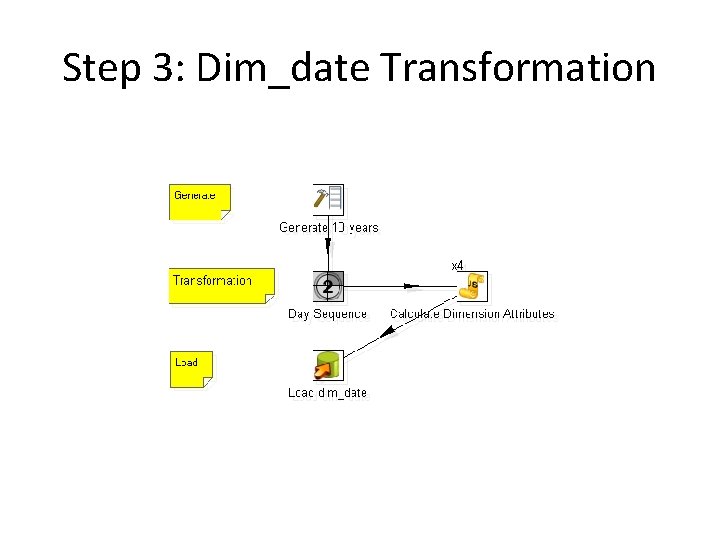

Step 3: Dim_date Transformation

Step 3: Dim_date Transformation • Generate 10 years: The transformation works by first generating about 10 years worth of rows (10 × 366 =3660) using a step of the Generate Rows type, which is labeled “Generate 10 years”. The generated rows have a few fields with constant values, and one of these constants is the initial date, which was set at 2000 -01 -01. Other constants include the language and country code. • Day Sequence: The generated rows are fed into the Day Sequence step in Figure 4 -4. This step is of the Sequence type, and its purpose is to generate an incrementing number for each row passed by the incoming stream. In the subsequent step, this sequence number will be added to the initial date in order to generate a series of consecutive calendar dates. • Calculate Dimension Attributes: The Calculate Dimension Attributes step is the heart of the transformation. This is a step of the Modified Java Script Value type. In this step, the field values from the stream incoming from the Day Sequence step are bound to Java. Script variables, which are then used to compute various representations of the date, in different formats.

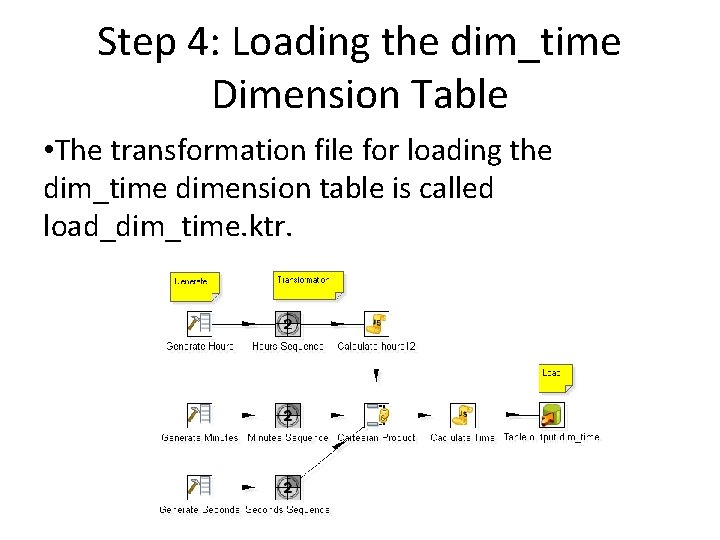

Step 4: Loading the dim_time Dimension Table • The transformation file for loading the dim_time dimension table is called load_dim_time. ktr.

Step 4: Loading the dim_time Dimension Table • Explain the steps involved in the transformation. • How many records are generated in the table? , Why?

Step 5: The load_rentals Job • The entire ETL procedure for the rental star schema is consolidated into one single Kettle job called load_rentals. kjb. This does all the work of updating and maintaining the dimension tables and loading the fact table.

Step 5: The load_rentals Job

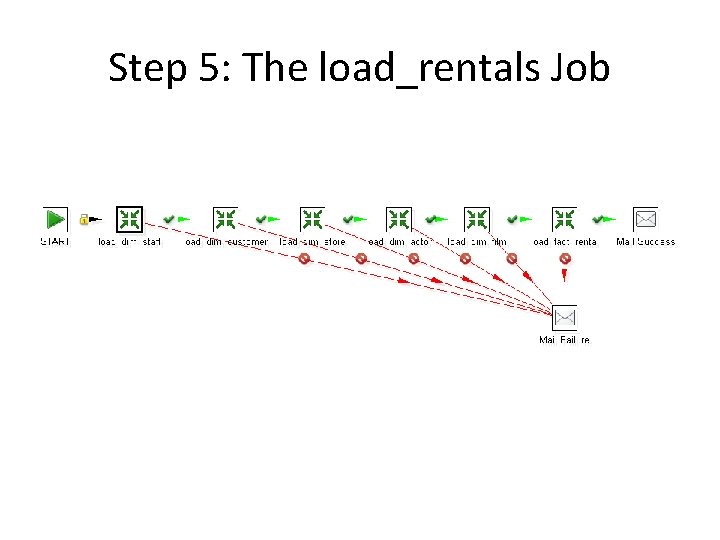

Step 5: The load_rentals Job • The START job entry is connected to a sequence of transformation job entries. The hop between the START job entry and the first transformation job entry is adorned with a little lock: This symbol indicates an unconditional hop, which means that the execution path of the job as a whole will always follow this path.

Step 5: The load_rentals Job • The transformation job entries that follow the START job entry all refer to a particular transformation that performs a distinct part of the process. For example, the load_dim_staff job entry executes the load_dim_staff. ktr transformation (which loads the dim_staff dimension table), and the subsequent load_dim_customer job entry executes the load_dim_customer. ktr transformation (which loads the dim_customer dimension table), and

Step 5: The load_rentals Job • Explain each transformations (in Detail) involved in the job and his steps. • Run the Job and check that the DW is loaded • Note: Run transformations independently also works. • Deliver the lab report on Feb 24, 2015: on Drive

- Slides: 15