
Rule-Based Anomaly Detection on IP Flows Nick Duffield, Patrick Haffner, Balachander Krishnamurthy, Haakon Ringberg

Unwanted traffic detection

- Intrusion Detection Systems (IDSes) protect the edge of a network
  - Inspect IP packets
  - Look for worms, DoS, scans, instant messaging, etc.
- Many IDSes leverage known signatures of traffic
  - e.g., Slammer packets contain “MS-SQL” (say) in the payload
  - or AOL IM packets use specific TCP ports and application headers
- Figure labels: Enterprise; packet layout of IP header, TCP header, App header, Payload

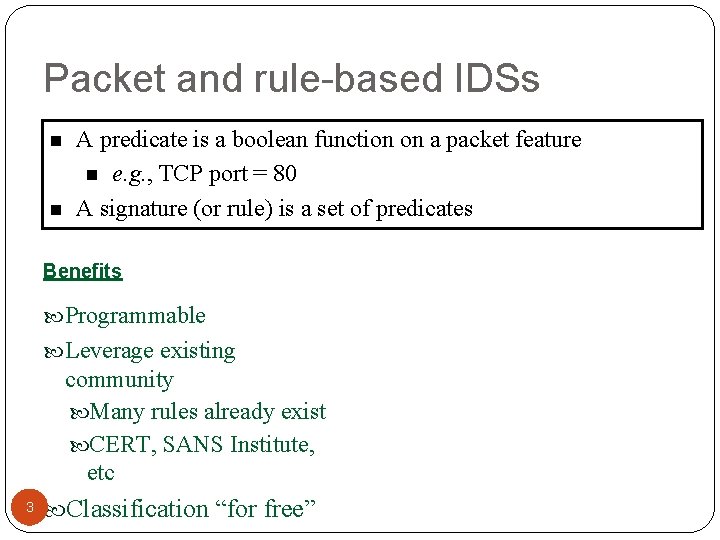

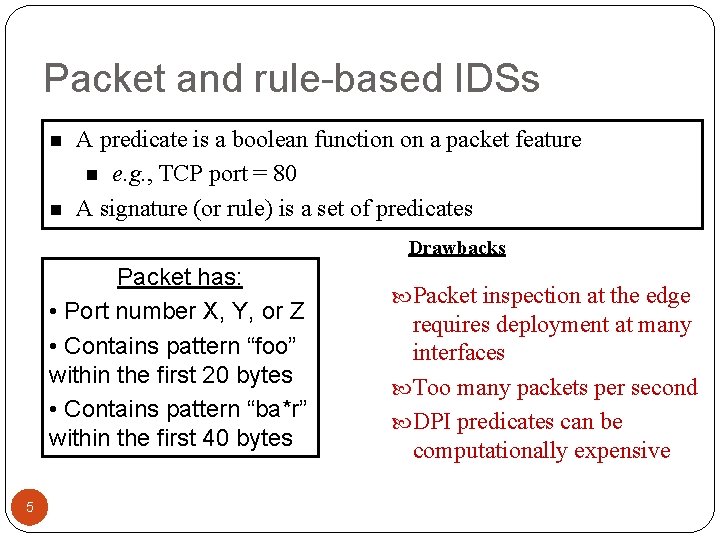

Packet and rule-based IDSes

- A predicate is a boolean function on a packet feature, e.g., TCP port = 80
- A signature (or rule) is a set of predicates
- Benefits
  - Programmable
  - Leverage existing community: many rules already exist (CERT, SANS Institute, etc.)
  - Classification “for free”

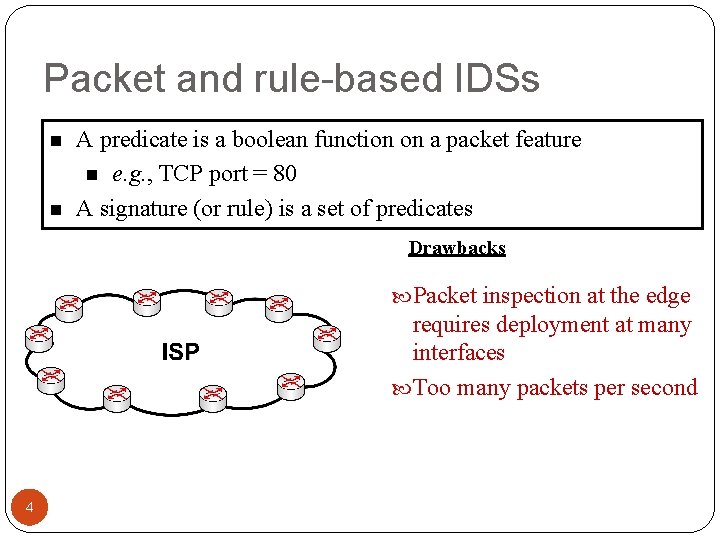

Packet and rule-based IDSes

- A predicate is a boolean function on a packet feature, e.g., TCP port = 80
- A signature (or rule) is a set of predicates
- Drawbacks
  - Packet inspection at the edge requires deployment at many interfaces
  - Too many packets per second

Packet and rule-based IDSes

- A predicate is a boolean function on a packet feature, e.g., TCP port = 80
- A signature (or rule) is a set of predicates
- Drawbacks
  - Packet inspection at the edge requires deployment at many interfaces
  - Too many packets per second
  - DPI predicates can be computationally expensive
- Example rule. Packet has:
  - Port number X, Y, or Z
  - Contains pattern “foo” within the first 20 bytes
  - Contains pattern “ba*r” within the first 40 bytes
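The example rule on this slide can be sketched as a list of predicates over a packet. This is only an illustration: the dictionary packet layout, the helper names, and the concrete port numbers standing in for X, Y, and Z are assumptions, and treating a rule as the conjunction of its predicates is assumed rather than stated on the slide.

```python
import re
from typing import Callable, Dict, List

Packet = Dict[str, object]            # hypothetical packet representation
Predicate = Callable[[Packet], bool]  # a boolean function on a packet feature


def port_in(ports) -> Predicate:
    # Fixed-offset header predicate: cheap to evaluate.
    return lambda pkt: pkt["dst_port"] in ports


def contains_within(pattern: bytes, depth: int) -> Predicate:
    # Payload predicate: literal pattern within the first `depth` bytes.
    return lambda pkt: pattern in pkt["payload"][:depth]


def regex_within(pattern: bytes, depth: int) -> Predicate:
    # Payload predicate: regular expression within the first `depth` bytes.
    compiled = re.compile(pattern)
    return lambda pkt: compiled.search(pkt["payload"][:depth]) is not None


def rule_matches(predicates: List[Predicate], pkt: Packet) -> bool:
    # Assumption: the rule fires only when every predicate holds.
    return all(p(pkt) for p in predicates)


# Ports 80, 8000, 8080 are placeholders for the slide's X, Y, Z.
example_rule = [
    port_in({80, 8000, 8080}),
    contains_within(b"foo", 20),
    regex_within(rb"ba*r", 40),
]

hit = {"dst_port": 8080, "payload": b"--foo--baaar--"}
miss = {"dst_port": 22, "payload": b"--foo--baaar--"}
print(rule_matches(example_rule, hit), rule_matches(example_rule, miss))
```

The two payload predicates are the expensive part: they must touch packet bytes, which is exactly what a flow record no longer carries.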

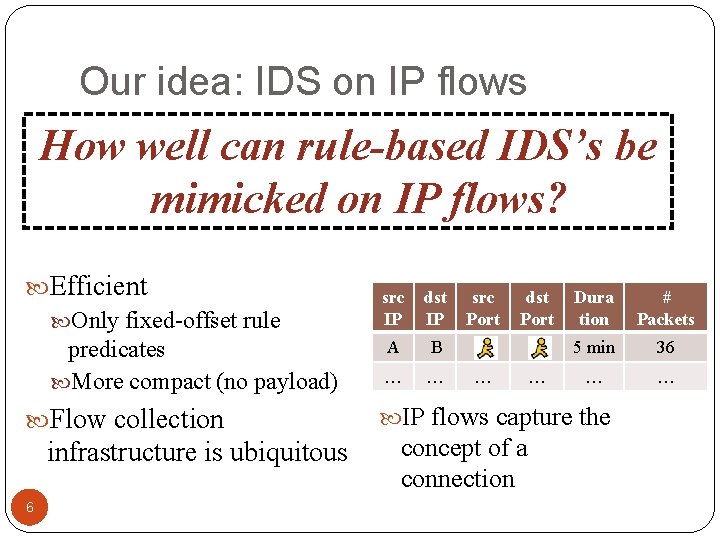

Our idea: IDS on IP flows

- How well can rule-based IDSes be mimicked on IP flows?
- Efficient: only fixed-offset rule predicates
- More compact (no payload)
- Flow collection infrastructure is ubiquitous
- IP flows capture the concept of a connection

| src IP | dst IP | src Port | dst Port | Duration | # Packets |
|--------|--------|----------|----------|----------|-----------|
| A      | B      | …        | …        | 5 min    | 36        |
| …      | …      | …        | …        | …        | …         |
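To make the flow record concrete, here is a minimal sketch of how packets might be aggregated into records like the one in the table. The field names, the four-field key, and the absence of timeouts are simplifying assumptions; real flow exporters also key on protocol and expire flows after inactivity.

```python
def packets_to_flows(packets):
    """Group packets by (src IP, dst IP, src port, dst port) into flow records."""
    flows = {}
    for pkt in packets:
        key = (pkt["src_ip"], pkt["dst_ip"], pkt["src_port"], pkt["dst_port"])
        rec = flows.get(key)
        if rec is None:
            # First packet of the flow: start a new record (no payload kept).
            flows[key] = {"first": pkt["ts"], "last": pkt["ts"],
                          "packets": 1, "bytes": pkt["size"]}
        else:
            rec["first"] = min(rec["first"], pkt["ts"])
            rec["last"] = max(rec["last"], pkt["ts"])
            rec["packets"] += 1
            rec["bytes"] += pkt["size"]
    for rec in flows.values():
        rec["duration"] = rec["last"] - rec["first"]
    return flows


# Hypothetical packets: timestamps in seconds, sizes in bytes.
packets = [
    {"src_ip": "A", "dst_ip": "B", "src_port": 1025, "dst_port": 80, "ts": 0.0, "size": 60},
    {"src_ip": "A", "dst_ip": "B", "src_port": 1025, "dst_port": 80, "ts": 2.5, "size": 1500},
    {"src_ip": "C", "dst_ip": "B", "src_port": 4000, "dst_port": 53, "ts": 1.0, "size": 80},
]
flows = packets_to_flows(packets)
print(flows[("A", "B", 1025, 80)])
```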

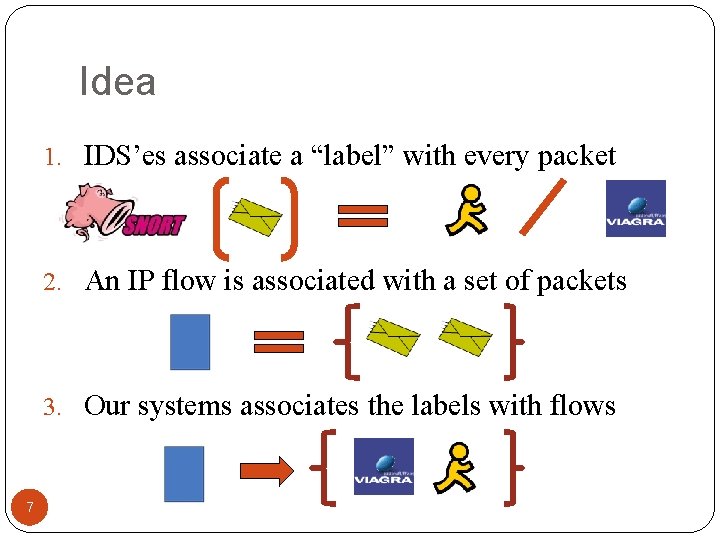

Idea

1. IDSes associate a “label” with every packet
2. An IP flow is associated with a set of packets
3. Our system associates the labels with flows
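The three steps can be sketched as follows. The rule used for a flow's label (a flow is positive if any of its packets raised the alarm) is an assumption made for illustration, as are the field names and the toy alarm function.

```python
def flow_key(pkt):
    # Hypothetical flow key; real exporters also include the protocol.
    return (pkt["src_ip"], pkt["dst_ip"], pkt["src_port"], pkt["dst_port"])


def label_flows(packets, alarm):
    """Carry per-packet IDS labels over to the flows that contain the packets."""
    labels = {}
    for pkt in packets:
        key = flow_key(pkt)
        # Assumption: one alarming packet is enough to label the whole flow.
        labels[key] = labels.get(key, False) or bool(alarm(pkt))
    return labels


def toy_alarm(pkt):
    # Stand-in for a packet-level IDS rule.
    return b"foo" in pkt["payload"]


packets = [
    {"src_ip": "A", "dst_ip": "B", "src_port": 1025, "dst_port": 80, "payload": b"hello"},
    {"src_ip": "A", "dst_ip": "B", "src_port": 1025, "dst_port": 80, "payload": b"a foo b"},
    {"src_ip": "C", "dst_ip": "B", "src_port": 4000, "dst_port": 80, "payload": b"bar"},
]
labels = label_flows(packets, toy_alarm)
print(labels)
```

The labeled flows then serve as training examples for a classifier that sees only flow features.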

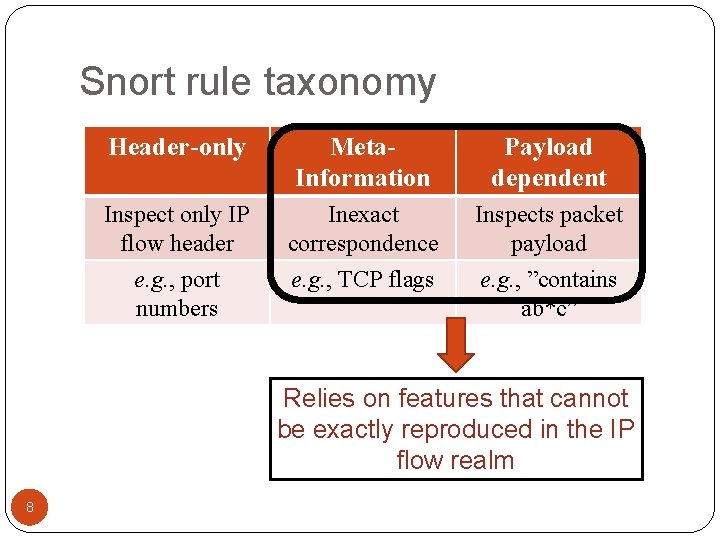

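Snort rule taxonomy

| Rule class        | Description                      | Example                |
|-------------------|----------------------------------|------------------------|
| Header-only       | Inspects only the IP flow header | e.g., port numbers     |
| Meta-information  | Inexact correspondence           | e.g., TCP flags        |
| Payload-dependent | Inspects packet payload          | e.g., “contains ab*c”  |

- Relies on features that cannot be exactly reproduced in the IP flow realm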

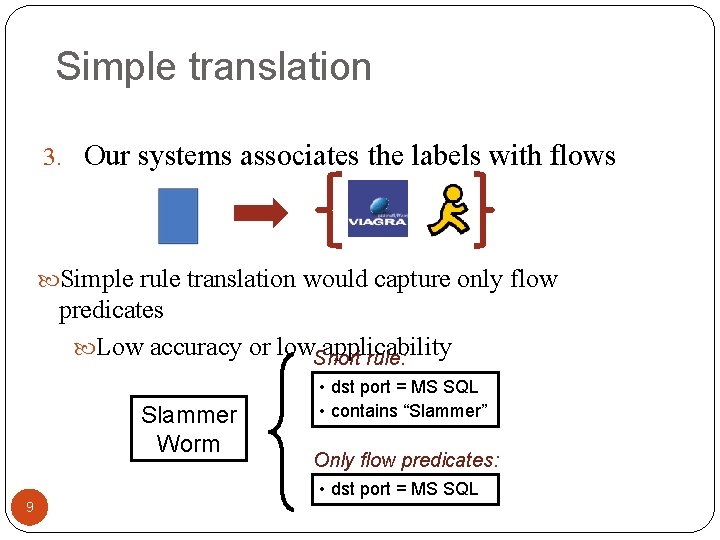

Simple translation

- Step 3: our system associates the labels with flows
- Simple rule translation would capture only flow predicates
  - Low accuracy or low applicability
- Example: Slammer worm
  - Snort rule: dst port = MS SQL; contains “Slammer”
  - Only flow predicates: dst port = MS SQL
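A minimal sketch of this naive translation, assuming a rule is stored as (feature, operator, value) triples. The triple format, the set of flow features, and the use of port 1434 for "MS SQL" are illustrative assumptions, and “Slammer” is the slide's placeholder pattern rather than a real signature.

```python
# Features assumed to be available in a flow record (hypothetical list).
FLOW_FEATURES = {"src_port", "dst_port", "packets", "bytes", "duration"}

# The slide's Slammer example as (feature, operator, value) triples.
snort_rule = [
    ("dst_port", "==", 1434),            # assumed port for "MS SQL"
    ("payload", "contains", "Slammer"),  # payload predicate, lost in translation
]


def translate_to_flow_rule(rule):
    """Keep only the predicates that can be evaluated on a flow record."""
    return [pred for pred in rule if pred[0] in FLOW_FEATURES]


flow_rule = translate_to_flow_rule(snort_rule)
print(flow_rule)
```

The translated rule matches every flow to the MS SQL port, which is why simple translation gives low accuracy when payload predicates carry most of the rule's meaning.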

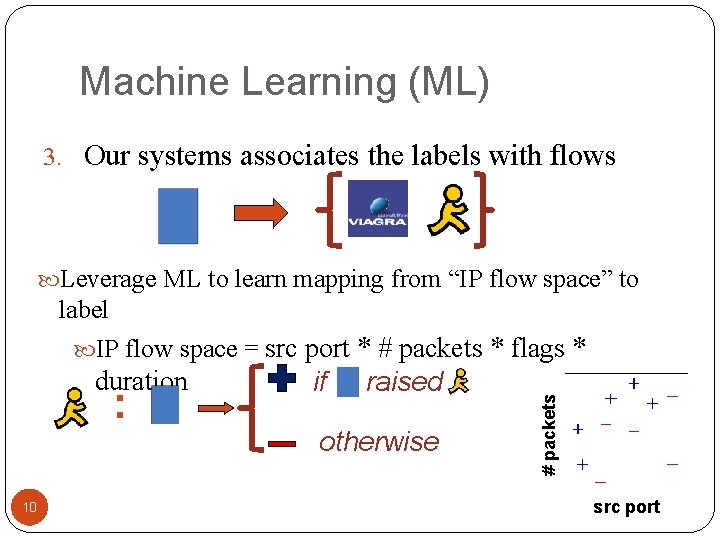

Machine Learning (ML)

- Step 3: our system associates the labels with flows
- Leverage ML to learn a mapping from “IP flow space” to a label that indicates whether the alarm is raised or not
- IP flow space is the product of flow features such as src port, # packets, flags, and duration
- Figure axes: duration, # packets

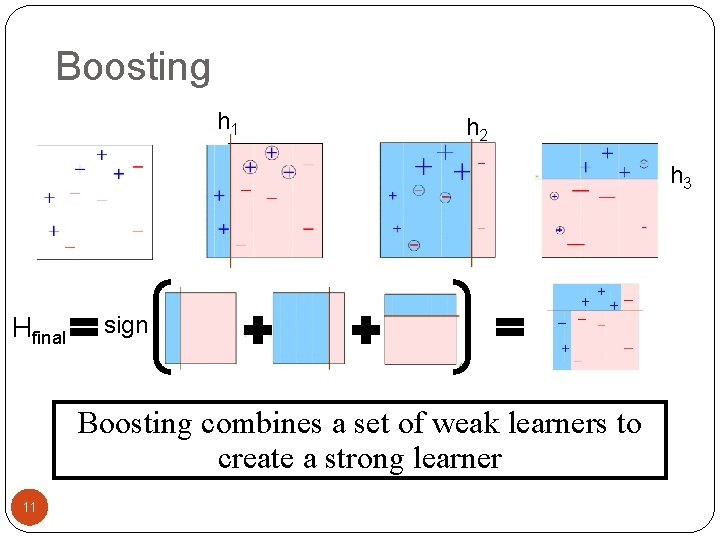

Boosting

- Weak learners h1, h2, h3 are combined through a sign function into H_final
- Boosting combines a set of weak learners to create a strong learner
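As an illustration of how weak learners combine into H_final, here is a small AdaBoost sketch with threshold "stumps" as weak learners. The slide does not say which boosting variant is used, so AdaBoost, the stump form, and the toy flow features (dst port, mean packet size) are all assumptions.

```python
import math


def stump_predict(stump, x):
    # A stump tests one feature against a threshold and answers +1 or -1.
    feature, threshold, polarity = stump
    return polarity if x[feature] > threshold else -polarity


def best_stump(X, y, w):
    # Exhaustively pick the stump with the lowest weighted error.
    best, best_err = None, float("inf")
    for f in range(len(X[0])):
        for thr in sorted({x[f] for x in X}):
            for pol in (1, -1):
                stump = (f, thr, pol)
                err = sum(wi for xi, yi, wi in zip(X, y, w)
                          if stump_predict(stump, xi) != yi)
                if err < best_err:
                    best, best_err = stump, err
    return best, best_err


def adaboost(X, y, rounds=3):
    n = len(X)
    w = [1.0 / n] * n
    ensemble = []
    for _ in range(rounds):
        stump, err = best_stump(X, y, w)
        err = min(max(err, 1e-10), 1 - 1e-10)    # avoid division by zero
        alpha = 0.5 * math.log((1 - err) / err)  # weight of this weak learner
        ensemble.append((alpha, stump))
        # Increase the weight of misclassified examples, then renormalize.
        w = [wi * math.exp(-alpha * yi * stump_predict(stump, xi))
             for xi, yi, wi in zip(X, y, w)]
        total = sum(w)
        w = [wi / total for wi in w]
    return ensemble


def predict(ensemble, x):
    # H_final(x) = sign(sum of alpha_t * h_t(x))
    score = sum(alpha * stump_predict(stump, x) for alpha, stump in ensemble)
    return 1 if score >= 0 else -1


# Toy flows: (dst port, mean packet size); +1 means the alarm was raised.
X = [(1434, 404), (1434, 404), (1434, 60), (80, 404), (80, 1500), (53, 60)]
y = [1, 1, -1, -1, -1, -1]
model = adaboost(X, y, rounds=3)
print([predict(model, x) for x in X])
```

No single stump separates the toy data, but the weighted vote of three does, which is the point of combining weak learners.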

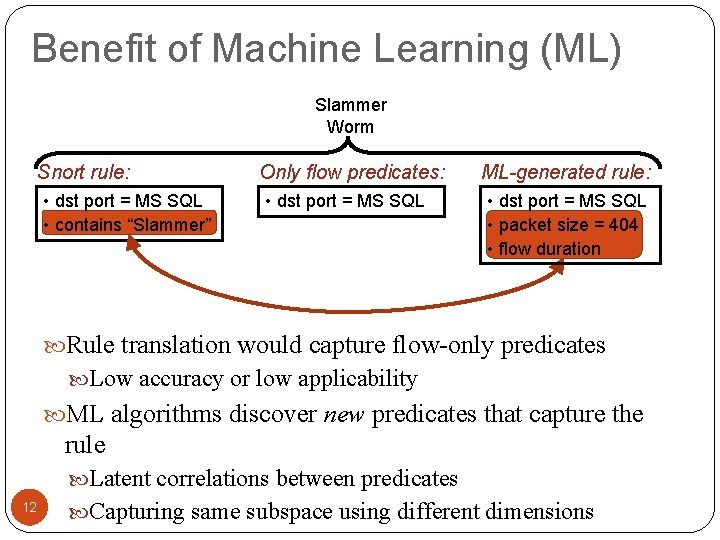

Benefit of Machine Learning (ML)

- Slammer worm example
  - Snort rule: dst port = MS SQL; contains “Slammer”
  - Only flow predicates: dst port = MS SQL
  - ML-generated rule: dst port = MS SQL; packet size = 404; flow duration
- Rule translation would capture flow-only predicates
  - Low accuracy or low applicability
- ML algorithms discover new predicates that capture the rule
  - Latent correlations between predicates
  - Capturing the same subspace using different dimensions

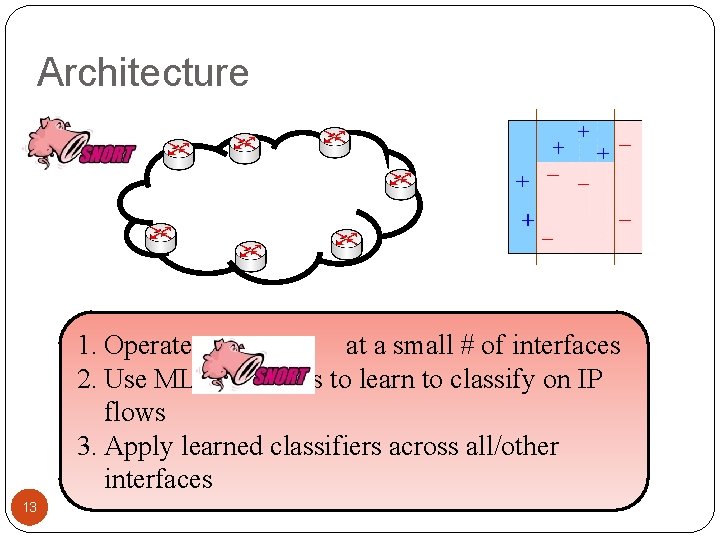

Architecture

1. Operate at a small number of interfaces
2. Use ML algorithms to learn to classify on IP flows
3. Apply learned classifiers across all/other interfaces

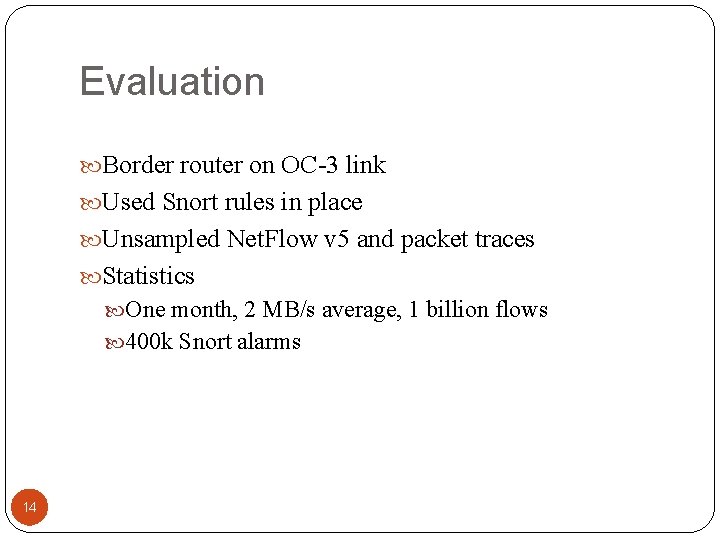

Evaluation

- Border router on OC-3 link
- Used Snort rules in place
- Unsampled NetFlow v5 and packet traces
- Statistics
  - One month, 2 MB/s average, 1 billion flows
  - 400k Snort alarms

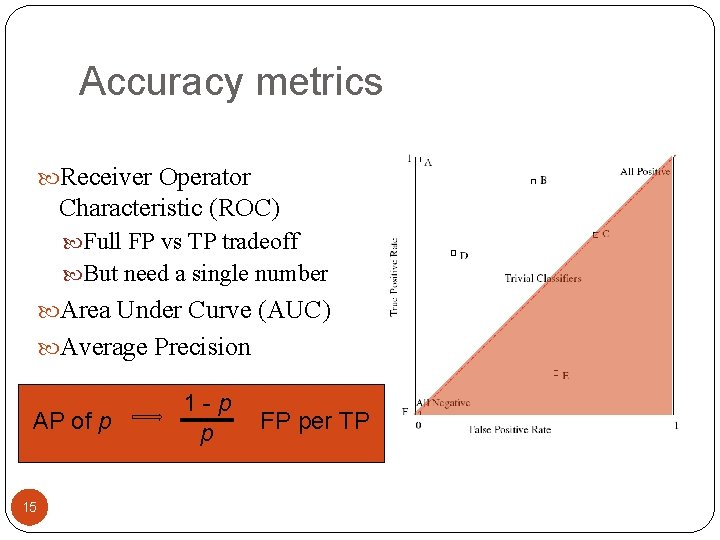

Accuracy metrics

- Receiver Operating Characteristic (ROC)
  - Full FP vs. TP tradeoff
  - But need a single number
    - Area Under Curve (AUC)
    - Average Precision (AP)
- An AP of p corresponds to (1 - p) / p FP per TP
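The conversion from AP to false positives per true positive is simple arithmetic, sketched below. The function name is hypothetical; the formula is the one on the slide.

```python
def fp_per_tp(ap: float) -> float:
    """False positives per true positive implied by an average precision of `ap`."""
    if not 0.0 < ap <= 1.0:
        raise ValueError("AP must be in (0, 1]")
    return (1.0 - ap) / ap


for ap in (0.95, 0.70):
    # Scale to "FP per 100 TP" for readability.
    print(ap, round(100 * fp_per_tp(ap)))
```

An AP of 0.95 works out to about 5 FP per 100 TP, and an AP of 0.70 to about 43 FP per 100 TP.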

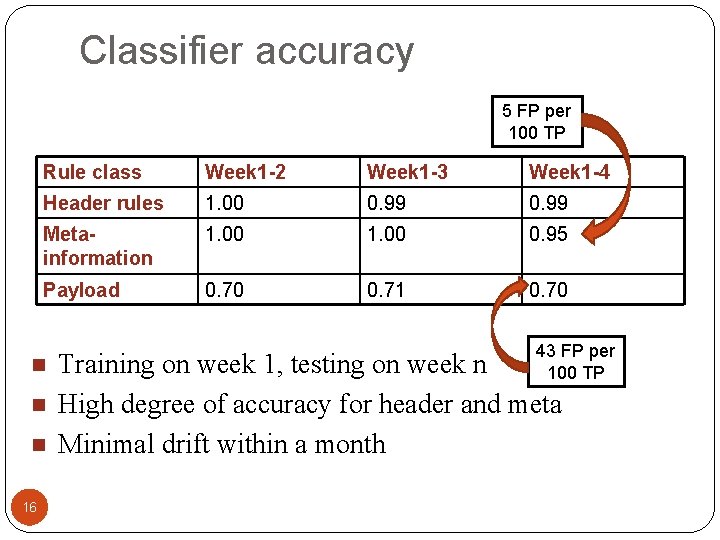

Classifier accuracy

- Training on week 1, testing on week n (columns: Week 1-2, Week 1-3, Week 1-4)
  - Header rules: 1.00, 0.99 (only two values survive in the source)
  - Meta-information: 1.00, 0.95 (only two values survive in the source)
  - Payload: 0.70, 0.71, 0.70
- Callouts: 5 FP per 100 TP; 43 FP per 100 TP
- High degree of accuracy for header and meta-information rules
- Minimal drift within a month

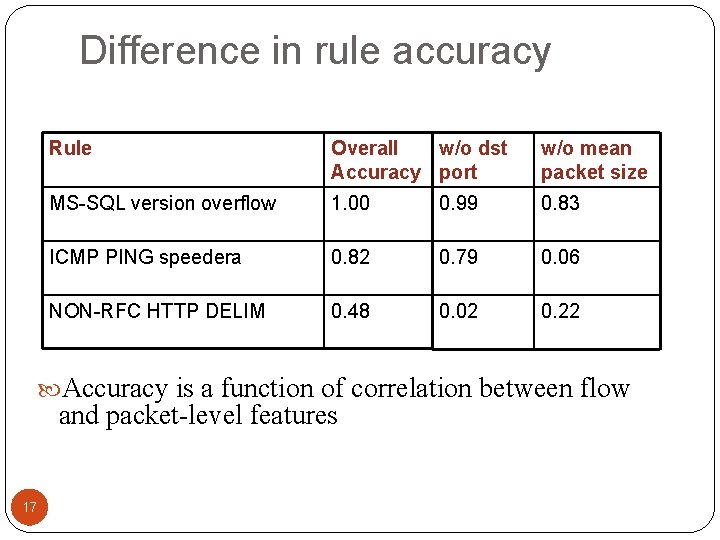

Difference in rule accuracy

| Rule                    | Overall accuracy | w/o dst port | w/o mean packet size |
|-------------------------|------------------|--------------|----------------------|
| MS-SQL version overflow | 1.00             | 0.99         | 0.83                 |
| ICMP PING speedera      | 0.82             | 0.79         | 0.06                 |
| NON-RFC HTTP DELIM      | 0.48             | 0.02         | 0.22                 |

- Accuracy is a function of correlation between flow and packet-level features

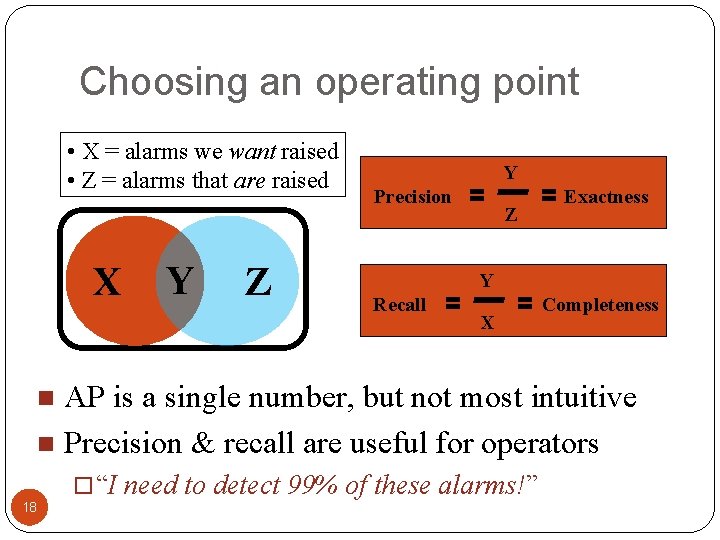

Choosing an operating point

- X = alarms we want raised
- Z = alarms that are raised
- Y = overlap of X and Z (alarms we want raised that are raised)
- Precision = Y / Z (exactness)
- Recall = Y / X (completeness)
- AP is a single number, but not the most intuitive
- Precision and recall are useful for operators: “I need to detect 99% of these alarms!”
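With alarms represented as sets of flow identifiers, the two ratios can be computed directly. The identifiers below are made up for illustration.

```python
def precision_recall(wanted: set, raised: set):
    """Return (precision, recall) for X = wanted alarms and Z = raised alarms."""
    overlap = wanted & raised                # Y in the slide's notation
    precision = len(overlap) / len(raised)   # exactness: Y / Z
    recall = len(overlap) / len(wanted)      # completeness: Y / X
    return precision, recall


X = {"flow1", "flow2", "flow3", "flow4"}     # alarms we want raised
Z = {"flow3", "flow4", "flow5"}              # alarms that are raised
precision, recall = precision_recall(X, Z)
print(precision, recall)
```

Here two of the three raised alarms are wanted (precision 2/3) and two of the four wanted alarms are raised (recall 1/2).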

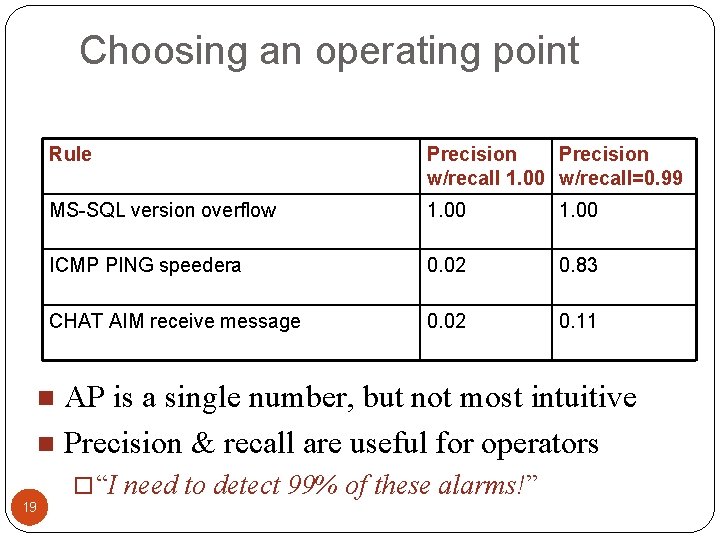

Choosing an operating point

- Precision per rule (columns: with recall = 1.00, with recall = 0.99)
  - MS-SQL version overflow: 1.00 (only one value survives in the source)
  - ICMP PING speedera: 0.02, 0.83
  - CHAT AIM receive message: 0.02, 0.11
- AP is a single number, but not the most intuitive
- Precision and recall are useful for operators: “I need to detect 99% of these alarms!”
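One way an operator might pick an operating point is to lower the classifier's score threshold until the target recall is reached, then read off the precision. The sketch below assumes each flow has a real-valued classifier score and a 0/1 label, and it ignores tied scores; the data is invented.

```python
def precision_at_recall(scores, labels, target_recall):
    """Return (precision, threshold) at the first point reaching target_recall."""
    total_pos = sum(labels)
    if total_pos == 0:
        raise ValueError("need at least one positive example")
    # Walk down the flows from highest to lowest score.
    ranked = sorted(zip(scores, labels), key=lambda t: -t[0])
    tp = fp = 0
    for score, label in ranked:
        if label:
            tp += 1
        else:
            fp += 1
        if tp / total_pos >= target_recall:
            return tp / (tp + fp), score
    raise ValueError("target recall must be at most 1")


scores = [0.9, 0.8, 0.7, 0.4, 0.2]   # hypothetical classifier outputs
labels = [1, 0, 1, 1, 0]             # 1 = the packet-level alarm was raised
print(precision_at_recall(scores, labels, 1.0))
print(precision_at_recall(scores, labels, 0.6))
```

Relaxing the recall target usually lets the threshold rise, which is how precision can jump between the two columns of the slide.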

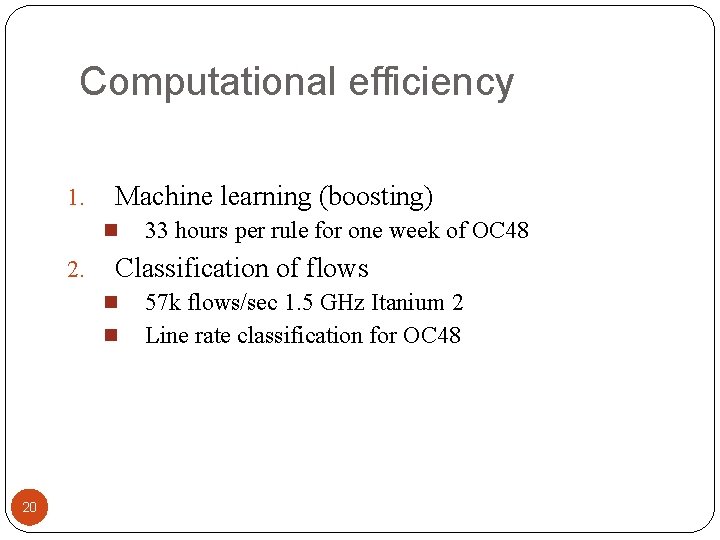

Computational efficiency

1. Machine learning (boosting)
   - 33 hours per rule for one week of OC48
2. Classification of flows
   - 57k flows/sec on a 1.5 GHz Itanium 2
   - Line rate classification for OC48

Conclusion

- Applying Snort alarms to flows is feasible
  - ML algorithms discover latent correlations between packet and flow predicates
  - High degree of accuracy for many rules
  - Minimal drift within a month
  - Prototype can scale up to OC48 speeds
- Qualitatively predictive rule taxonomy
- Future work
  - Performance on sampled NetFlow
  - Cross-site training/classification

Thank you! Questions?

Nick Duffield, Patrick Haffner, Balachander Krishnamurthy, Haakon Ringberg

- Slides: 22