
Responsible Conduct of Research. Part 1. Responsible data collection and management. Part 2. Responsible data analysis and reporting. Laurel A. Beckett, Ph.D., Professor and Chief, Division of Biostatistics, Department of Public Health Sciences, School of Medicine

Part 1. Responsible collection and management of the data. General principles: • Ensuring accuracy of the data • Accurate reporting of your methods • Preservation of records • Clarity on ownership and responsibility • Adequate provision for sharing

1. Ensuring accuracy of the data is easier if you plan it that way. Make it hard to do things wrong and easy to do them right. You can do this at various steps in the process: • Planning the study, • Carrying out the data collection, • Analyzing the data, • Preserving and sharing the data.

• Start by making sure you have a complete list of the information you need to collect. Check this list. Then check your forms against the list as you develop them to make sure something didn’t get inadvertently left out. • Simplify as much as possible; people do better when there is less stuff to fill out. • Some information is so important you might want internal checks by asking a question more than once or in different ways.

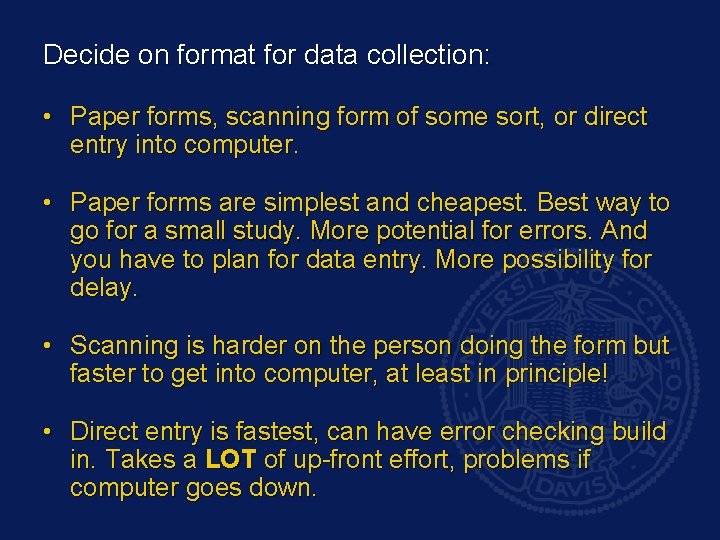

Decide on format for data collection: • Paper forms, scanning form of some sort, or direct entry into computer. • Paper forms are simplest and cheapest. Best way to go for a small study. More potential for errors. And you have to plan for data entry. More possibility for delay. • Scanning is harder on the person doing the form but faster to get into the computer, at least in principle! • Direct entry is fastest and can have error checking built in. Takes a LOT of up-front effort, and there are problems if the computer goes down.

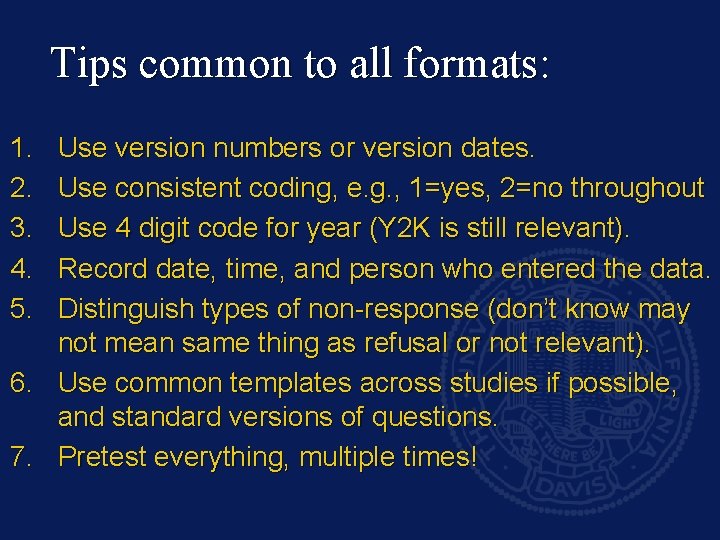

Tips common to all formats: 1. Use version numbers or version dates. 2. Use consistent coding, e.g., 1=yes, 2=no throughout. 3. Use 4-digit code for year (Y2K is still relevant). 4. Record date, time, and person who entered the data. 5. Distinguish types of non-response (don’t know may not mean same thing as refusal or not relevant). 6. Use common templates across studies if possible, and standard versions of questions. 7. Pretest everything, multiple times!

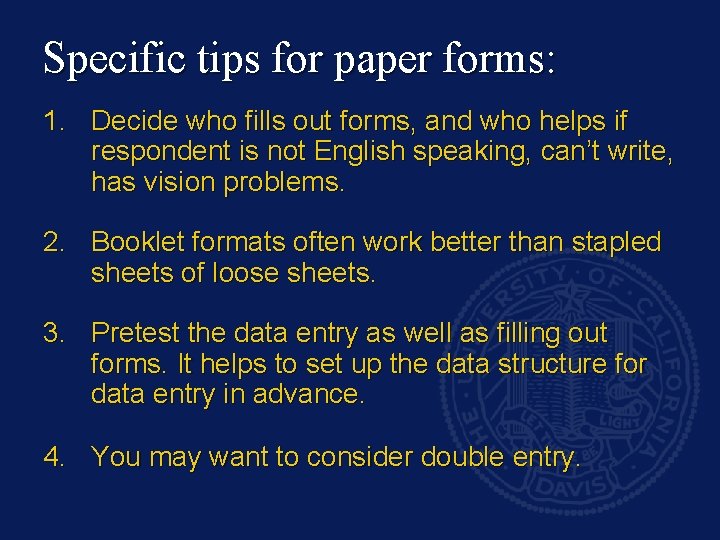

Specific tips for paper forms: 1. Decide who fills out forms, and who helps if respondent is not English speaking, can’t write, or has vision problems. 2. Booklet formats often work better than stapled sheets or loose sheets. 3. Pretest the data entry as well as filling out forms. It helps to set up the data structure for data entry in advance. 4. You may want to consider double entry.
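Double entry, the last tip above, can be checked mechanically once both passes are in the computer. The following is a minimal sketch, assuming each pass is stored as a dictionary keyed by a participant ID; the function name, field names, and values are all hypothetical.

```python
def double_entry_discrepancies(first_pass, second_pass):
    """Return (record_id, field, value_1, value_2) for every mismatch."""
    mismatches = []
    for record_id in sorted(set(first_pass) | set(second_pass)):
        rec1 = first_pass.get(record_id, {})
        rec2 = second_pass.get(record_id, {})
        for field in sorted(set(rec1) | set(rec2)):
            v1, v2 = rec1.get(field), rec2.get(field)
            if v1 != v2:
                mismatches.append((record_id, field, v1, v2))
    return mismatches


# Hypothetical data: two clerks keyed the same two paper forms.
entry_a = {"P001": {"age": 54, "smoker": 2}, "P002": {"age": 61, "smoker": 1}}
entry_b = {"P001": {"age": 45, "smoker": 2}, "P002": {"age": 61, "smoker": 1}}

for mismatch in double_entry_discrepancies(entry_a, entry_b):
    print(mismatch)
```

Each mismatch still has to be resolved by going back to the paper form; the comparison only tells you where to look.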

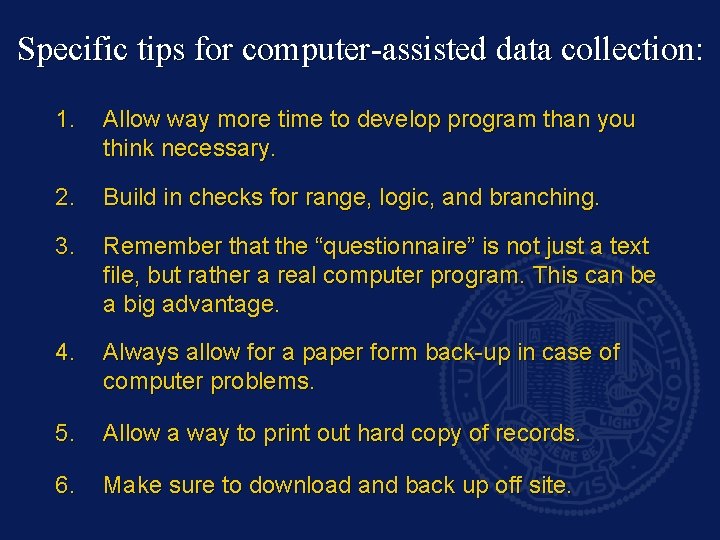

Specific tips for computer-assisted data collection: 1. Allow way more time to develop program than you think necessary. 2. Build in checks for range, logic, and branching. 3. Remember that the “questionnaire” is not just a text file, but rather a real computer program. This can be a big advantage. 4. Always allow for a paper form back-up in case of computer problems. 5. Allow a way to print out hard copy of records. 6. Make sure to download and back up off site.
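To illustrate tip 2, here is a small sketch of range, logic, and branching checks on a single record. The field names, the age limits, and the 1=yes, 2=no smoking item are assumptions made for the example, not part of any particular data entry package.

```python
def check_record(record):
    """Return a list of problems found in one interview record."""
    problems = []

    # Range check: age must be a plausible number of years (limits are hypothetical).
    age = record.get("age")
    if age is None or not 18 <= age <= 110:
        problems.append("age out of range")

    # Branching check: cigarettes per day is asked only of smokers
    # (coding follows a 1=yes, 2=no convention).
    smoker = record.get("smoker")
    cigs = record.get("cigs_per_day")
    if smoker == 1 and cigs is None:
        problems.append("smoker but cigs_per_day missing")
    if smoker == 2 and cigs is not None:
        problems.append("non-smoker but cigs_per_day filled in")

    # Logic check: the visit year cannot precede the birth year.
    if record.get("visit_year", 0) < record.get("birth_year", 0):
        problems.append("visit_year before birth_year")

    return problems


print(check_record({"age": 140, "smoker": 1, "birth_year": 1950, "visit_year": 2004}))
```

In a direct-entry system these checks would run while the respondent is still present, which is when an error is cheapest to fix.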

Anticipate possible sources of error and try to find ways to prevent them: 1. Non-response, refusal and errors by respondent. 2. Recording error by interviewer (failure to enter value, enter wrong value, misunderstand respondent). 3. Data entry errors when paper forms are transcribed. 4. Error in data management (wrong data stored or transmitted, or errors introduced). 5. Programming error (everyone enters something correctly but summary score or other programmed value is wrong).

2. Accuracy in reporting includes all aspects: • How you report the primary data, • Reporting how you collected the primary data, • And how you compiled, stored and audited the data after collection. This means keeping good records! For a small lab study this might just be a good lab notebook, but for a large clinical trial it will be many file cabinets and gigabytes of computer memory. Include this in your planning. Worse yet, you have to keep records for a very long time.

Reporting of primary data: • This means making sure that what the respondent said or what you measured in the lab is what shows up in your final analysis and paper. • You need to check all the steps along the way from interview or instrument to publication to make sure that the numbers at the end reflect the data at the beginning.

Reporting of primary data: Continued • Keep good records and report accurately how you collected the primary data. This means having copies of paper forms, computer forms, data entry instructions, computer programs, and audit forms. • This is one reason for having hard copies of computer data entry sessions. Most computer data entry programs have a feature that allows you to do this.

Reporting of primary data: Continued • Another useful form of record: a code book for the data collected. • For each data item collected the code book tells the variable name, interpretation, allowable values, whether there are any branching considerations, and labels of values if appropriate. • The code book should also have the name and contact information for the person who compiled it, the date last updated, and the version number to which it refers.
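A code book can be kept as a small machine-readable structure as well as a printed document. The sketch below is one possible layout, assuming hypothetical class names, a made-up compiler name and contact address, and illustrative codes (including separate codes for "don't know" and "refused").

```python
from dataclasses import dataclass, field


@dataclass
class CodeBookEntry:
    variable: str
    interpretation: str
    allowed_values: dict          # stored code -> label
    asked_if: str = "always"      # branching consideration, in words


@dataclass
class CodeBook:
    compiled_by: str
    contact: str
    last_updated: str             # date with a 4-digit year
    form_version: str
    entries: dict = field(default_factory=dict)

    def add(self, entry):
        self.entries[entry.variable] = entry

    def label(self, variable, code):
        """Translate a stored code into its label; KeyError if not allowed."""
        return self.entries[variable].allowed_values[code]


# Hypothetical content for illustration only.
book = CodeBook(compiled_by="A. Analyst", contact="analyst@example.org",
                last_updated="2024-01-15", form_version="v2.1")
book.add(CodeBookEntry(
    variable="smoker",
    interpretation="Current cigarette smoker",
    allowed_values={1: "yes", 2: "no", 8: "don't know", 9: "refused"},
))
book.add(CodeBookEntry(
    variable="tried_to_quit",
    interpretation="Tried to quit in the past year",
    allowed_values={1: "yes", 2: "no", 8: "don't know", 9: "refused"},
    asked_if="smoker == 1",
))

print(book.label("smoker", 9))
```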

Reporting of primary data: Continued • Some FDA guidelines require such things as double programming of data entry and data management programs. Be sure you can document who programmed computers, when, and have those programs available in case of audit. • I like to have a set of “good programming practice” guidelines and use that as part of evaluation of programmers. It is also useful to show your external reviewers.

Conduct regular audits and keep track of your results. This includes audits of: 1. Accuracy of the primary data and, 2. Accuracy of the process (consents, IRB annual renewal, storage and back-up of data). Audits typically do a percentage of the primary data and ALL of the consents, IRB and so on. Set a standard for accuracy, and if it is not met, do diagnostics, audit more stuff, and get it fixed.
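Drawing the audit sample and comparing the result with your standard can both be scripted. This is a rough sketch: the 10% audit fraction, the 0.5% error standard, and the function names are assumptions chosen for the example, not recommended values.

```python
import random


def draw_audit_sample(record_ids, fraction, seed):
    """Pick a reproducible random subset of records to audit."""
    n = max(1, round(len(record_ids) * fraction))
    rng = random.Random(seed)      # fixed seed so the draw can be documented
    return sorted(rng.sample(sorted(record_ids), n))


def audit_passes(n_fields_checked, n_errors, max_error_rate):
    """Compare the observed error rate with the preset standard."""
    return n_errors / n_fields_checked <= max_error_rate


# Hypothetical study with 200 participants; audit 10% of the records.
ids = [f"P{i:03d}" for i in range(1, 201)]
sample = draw_audit_sample(ids, fraction=0.10, seed=2024)
print(len(sample), "records selected for audit")
print(audit_passes(n_fields_checked=1000, n_errors=8, max_error_rate=0.005))
```

Recording the seed along with the audit results lets someone else reconstruct exactly which records were examined.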

If errors are found, they need to be corrected. Errors should be corrected on the “primary database,” not on copies. There should be limited permission to change the primary database and you should track who makes changes and why. Best to preserve ability to restore an older version if needed. Also track queries about data and how they were resolved. Keep good records of the error finding and correction process.
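The idea of tracking who changed what, and why, can be sketched as a toy in-memory database that logs every correction and keeps the old value. The class and field names are hypothetical, and a real system would also enforce the limited permissions mentioned above, which this sketch does not model.

```python
from datetime import datetime, timezone


class TrackedDatabase:
    """Toy primary database that logs every correction."""

    def __init__(self, records):
        self.records = {rid: dict(rec) for rid, rec in records.items()}
        self.change_log = []

    def correct(self, record_id, field, new_value, who, why):
        """Apply a correction and record when, who, why, old and new value."""
        old_value = self.records[record_id].get(field)
        self.records[record_id][field] = new_value
        self.change_log.append({
            "when": datetime.now(timezone.utc).isoformat(),
            "who": who, "why": why,
            "record_id": record_id, "field": field,
            "old": old_value, "new": new_value,
        })

    def history(self, record_id, field):
        """All logged changes to one field, oldest first."""
        return [c for c in self.change_log
                if c["record_id"] == record_id and c["field"] == field]


# Hypothetical correction following a data query.
db = TrackedDatabase({"P001": {"age": 45}})
db.correct("P001", "age", 54, who="LAB", why="query 17: digits transposed at entry")
print(db.history("P001", "age")[0]["old"], "->", db.records["P001"]["age"])
```

Because the old value is kept in the log, an earlier state can be restored by applying a further logged correction rather than by editing silently.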

3. Preserve records – if not forever, at least as long as the current NIH guidelines at the time you complete the study. Keep your original data (paper forms or copies of computer data entry) in a safe place, locked, and in a separate location from the primary computer. Back up your computer regularly and keep those backups off site. Update back-up media as needed. Tapes and diskettes are now obsolete; CDs could go that way, too. Check your back-ups occasionally and redo if necessary or make copies to new media.
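One simple way to check a back-up is to compare a checksum of the back-up file with a checksum of the original. The sketch below uses SHA-256 from the Python standard library; the file names are hypothetical, and the demonstration writes small temporary files rather than touching real study data.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large data files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def backup_matches(original_path, backup_path):
    """True when the back-up is byte-for-byte identical to the original."""
    return sha256_of(original_path) == sha256_of(backup_path)


# Demonstration with temporary files (contents are made up).
with tempfile.TemporaryDirectory() as folder:
    original = Path(folder) / "study.csv"
    good_copy = Path(folder) / "backup_ok.csv"
    bad_copy = Path(folder) / "backup_damaged.csv"
    original.write_bytes(b"id,age\nP001,54\n")
    good_copy.write_bytes(original.read_bytes())
    bad_copy.write_bytes(b"id,age\nP001,5")      # truncated copy
    good_result = backup_matches(original, good_copy)
    bad_result = backup_matches(original, bad_copy)

print(good_result, bad_result)
```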

4. Be clear on ownership of the data. The institution that received the grant typically “owns” the data. The PI has custody and is responsible for accuracy and protection. NIH now expects a copy of the data to be made public at some point. There is an expectation of accuracy. You need to decide which is the “primary” copy. This should not live somewhere that it can vanish from the PI’s control.

Note: Ownership does not necessarily include permission to peek at the data. Clinical trials that are masked typically restrict that to the study statisticians and the Data and Safety Monitoring Committee (DSMC). Who gets access for writing papers, and who gets to write which papers? Best to set up a mechanism in advance.

The PI is responsible for confidentiality but collaborators have to behave themselves. Collaborators, including trainees, have rights of access to the data. Typically there is a process of approval of publications (maybe a committee). Publications are expected to come out in a timely fashion. You should be able to produce the data that were used for the publication if so requested.

5. Researchers who want to replicate your results should be able to do so. This may require access to the original data. It may also require access to programs. And accurate documentation should be available. The code book is useful here.

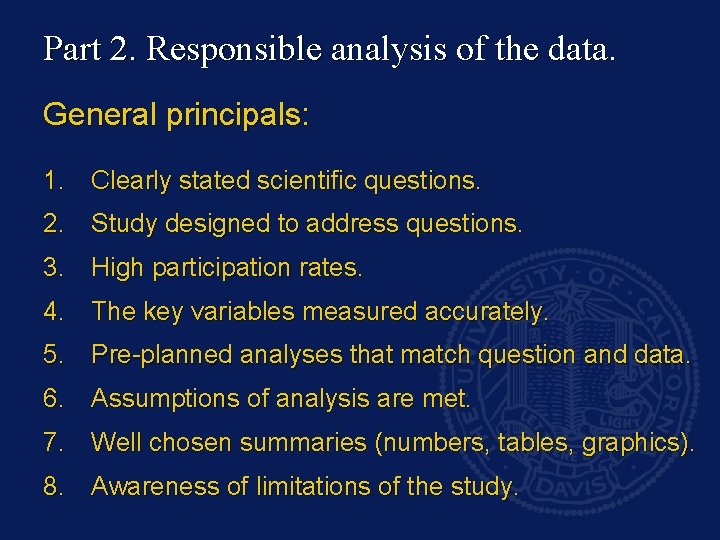

Part 2. Responsible analysis of the data. General principles: 1. Clearly stated scientific questions. 2. Study designed to address questions. 3. High participation rates. 4. The key variables measured accurately. 5. Pre-planned analyses that match question and data. 6. Assumptions of analysis are met. 7. Well chosen summaries (numbers, tables, graphics). 8. Awareness of limitations of the study.

2. Design your study to address your questions. Plan a sample size with adequate power for your hypotheses. Allow for multiple comparisons if needed. Use pilot data or previous literature to give you a basis for computing sample size and power. Make good use of ways to reduce variance and improve precision: clever sampling designs, repeated measures, regression adjustment for covariates, paired designs. The exact strategy will depend on your setting.
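As a rough illustration of a sample size calculation, the sketch below uses the standard normal-approximation formula for a two-sided comparison of two group means, n per group = 2 (z_{1-α/2} + z_{power})² σ² / δ², with a Bonferroni adjustment as one simple allowance for multiple comparisons. The effect size, standard deviation, and function name are hypothetical; a real design (paired, clustered, repeated measures) would need a different formula.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(delta, sigma, alpha=0.05, power=0.80, n_comparisons=1):
    """Approximate n per group for a two-sided, two-sample comparison of means."""
    alpha_each = alpha / n_comparisons      # Bonferroni allowance
    z_alpha = NormalDist().inv_cdf(1 - alpha_each / 2)
    z_power = NormalDist().inv_cdf(power)
    return ceil(2 * (z_alpha + z_power) ** 2 * sigma ** 2 / delta ** 2)


# Hypothetical pilot data: SD of 10 units, clinically important difference of 5.
print(n_per_group(delta=5, sigma=10))                     # one primary comparison
print(n_per_group(delta=5, sigma=10, n_comparisons=3))    # three comparisons
```

The pilot estimate of sigma drives the answer, so it is worth repeating the calculation for a plausible range of values.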

3. Attaining and keeping the desired sample size is really important! Try for a high participation rate, both to get sample size and to avoid selection bias. Try to avoid drop-out and loss to follow-up. Try to get complete data on participants. If you do have some losses or incomplete data, make use of special techniques that use partial data, but then be careful to check the assumptions.

4. Make sure your key outcomes and predictors are carefully measured. Check the precision of your measurements. If you are using trained raters, monitor and retrain as necessary. Check inter-rater reliability. Validate new instruments before using in a study. Think about face validity, within and between patient variation, within and between rater variation, bias, precision, reproducibility. Don’t just rely on r-square or kappa or phi or percent agreement. More detailed assessment can be very illuminating and help to improve the instrument.
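The point about not relying on a single agreement statistic can be illustrated by computing the full cross-tabulation alongside percent agreement and Cohen's kappa. This is a minimal sketch for two raters with made-up ratings; the function name is hypothetical, and kappa is undefined (division by zero) if chance agreement is exactly 1.

```python
from collections import Counter


def agreement_summary(ratings_a, ratings_b):
    """Cross-tabulation, percent agreement, and Cohen's kappa for two raters."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("need two equal-length, non-empty rating lists")
    n = len(ratings_a)
    table = Counter(zip(ratings_a, ratings_b))
    observed = sum(count for (a, b), count in table.items() if a == b) / n
    totals_a, totals_b = Counter(ratings_a), Counter(ratings_b)
    # Agreement expected by chance from each rater's marginal frequencies.
    expected = sum(totals_a[c] * totals_b[c] for c in totals_a) / n ** 2
    kappa = (observed - expected) / (1 - expected)
    return table, observed, kappa


# Hypothetical ratings of ten patients by two trained raters.
rater_1 = ["y", "y", "y", "y", "n", "n", "n", "n", "y", "n"]
rater_2 = ["y", "y", "y", "n", "n", "n", "n", "y", "y", "n"]
table, observed, kappa = agreement_summary(rater_1, rater_2)

for cell, count in sorted(table.items()):
    print(cell, count)
print(round(observed, 2), round(kappa, 2))
```

The table shows where the disagreements fall, which a single kappa value hides and which is what you need for retraining.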

I like to collect more detailed data and collapse into categories later. For example, ask about years of formal education rather than category, and measure SBP/DBP rather than just recording whether someone is hypertensive, borderline or normal. If the data are not normally distributed, consider transformation or nonparametric approaches. Look at your data before analysis, to assess for problems (both with data quality and with the statistical distribution assumptions).

5. Pre-plan the analyses to match the questions. If your specific aims are clear enough, it should be possible to write down a very careful analytic plan. The question should drive the design and the design should drive the analysis. If you will be doing interim analysis, plan for this by adjusting your alpha and sample size as needed, e.g., the O’Brien-Fleming rule for alpha spending. Be VERY wary of post-hoc hypotheses! If you write down your plan up front, these should be easily recognized.
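To give a feel for how little alpha an O'Brien-Fleming-type rule spends at early looks, the sketch below evaluates the Lan-DeMets spending function that approximates O'Brien-Fleming boundaries, α(t) = 2 − 2Φ(z_{α/2} / √t), where t is the fraction of total information accrued. Choosing this particular variant is an assumption for illustration. It returns cumulative alpha spent, not the critical values, which require group sequential software.

```python
from math import sqrt
from statistics import NormalDist


def obf_alpha_spent(info_fraction, alpha=0.05):
    """Cumulative two-sided alpha spent by information fraction t (0 < t <= 1)."""
    if not 0 < info_fraction <= 1:
        raise ValueError("information fraction must be in (0, 1]")
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return 2 * (1 - NormalDist().cdf(z / sqrt(info_fraction)))


# Hypothetical plan: looks at 25%, 50%, 75%, and 100% of the information.
for t in (0.25, 0.50, 0.75, 1.00):
    print(t, round(obf_alpha_spent(t), 5))
```

Very little alpha is spent early, so the final analysis is carried out at close to the nominal level.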

If the data violate the assumptions of your planned analysis, have a back-up plan such as transformation or more robust analysis. Don’t just throw out data until the data match the plan. Keep all analytic documents (the plan, univariate and bivariate looks at data, validation, programs). Programs should say who wrote them and when. I like lots of comments in programs. Cross reference programs, output and papers. I like to have output footnoted with the file name of the source code.

Irresponsible conduct of research: potential pitfalls in statistical analysis and reporting. Post-hoc hypotheses. Inappropriate analyses (method does not address question of interest or data violate assumptions). Fragmentary reports (incomplete summary of findings with results you don’t like suppressed). Data or experiments suppressed or trimmed to give desired results. Selective reports of findings, including misleading choice of summary statistics or graphics.

6. Check the assumptions and use analysis suited to the data you have. Classical assumptions: linearity, normality, homoscedasticity, independence, for example. You can get spurious results if these are violated – either Type I or Type II errors, too wide or too narrow confidence intervals. Possible fixes: transform data, choose a different statistical model and procedure, choose robust procedures (non-parametric or other).

7. Choose summaries that reveal and clarify, not obscure, your key findings. Graphics: Used for emphasis. Carry a big impact. Edward Tufte’s books have lots of good ideas. Tables: When people will want to have details available. They tend to obscure the message. Summary statistics: Measures of spread + center. Inferences: Include P value, magnitude of effect, precision, and some idea of how much variation there is left to explain. Use units that make sense and are interpretable.

8. Consider and discuss the limitations of your study. Try to assess impact of limitations. For example, you might be able to do sensitivity analysis to get an idea of impact of non-response. You can use your sample size and power to determine whether your null finding is informative, and how much (how big an effect might you have missed, with what probability).
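The question of how big an effect you might have missed can be answered by turning the sample size formula around. This is a sketch under the same normal-approximation assumptions as a two-sample comparison of means, δ = (z_{1-α/2} + z_{power}) σ √(2/n); the numbers and the function name are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def detectable_difference(n_per_group, sigma, alpha=0.05, power=0.80):
    """Smallest true difference in means detectable with the stated power."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return (z_alpha + z_power) * sigma * sqrt(2 / n_per_group)


# Hypothetical null study: 63 per group, SD of 10 units.
print(round(detectable_difference(63, 10), 2))                 # 80% power
print(round(detectable_difference(63, 10, power=0.95), 2))     # 95% power
```

If the detectable difference is larger than a clinically important one, the null finding says little about whether an important effect exists.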

Note for Clinical Trials: The CONSORT document (available on line, revised version, through many journals) outlines what you need to report. This can be used as a guideline for other kinds of studies, too. We pre-plan our study reports and give part of DSMC reports in outline form following this document. For example, the study flow charts are really helpful for tracking participation and loss to follow-up.

Responsible collaboration: guidelines for working with a statistician: 1. Consult the statistician early if possible! We can’t save a study that was designed wrong. 2. Agree up front on who will have responsibility for data accuracy, data management, back up, quality control. This might be the researcher, the statistical group, or a separate data management group. 3. Agree on goals of study and on a draft analytic plan early in the process. Try to understand what the statistician is proposing, and why.

4. Discuss whether statistician will provide just analytic results, or also draft tables, graphics, methods sections, and other components. 5. Discuss the possibility of regular meetings or statistician attending some research group meetings. 6. Plan to credit work appropriately. 7. Discuss who will keep the records of analysis and programs, and how any transition might be handled if it looks like that might occur (for example, if a grad student is doing analyses but is expected to graduate). 8. If there’s something you don’t understand, ASK!

Responsible Conduct of Research: Objective Reporting References: • Moher D, Schulz KF, Altman D. The CONSORT statement: revised recommendations for improving the quality of reports of parallel-group randomized trials. JAMA 2001; 285: 1987-1991. – This statement has been accepted in and published by a number of top-tier medical journals. It includes a 22-item checklist of items that should be included in a report of a clinical trial and a diagram of patient flow that can serve as a useful template. Many items apply not just to clinical trials, but to any clinical research. • The CONSORT statement has been used as a starting point for publication guidelines for other kinds of studies, too.

Responsible Conduct of Research: Objective Reporting References continued: • Stone SP, Cooper BS, Kibbler CC, Cookson BD, Roberts JA, Medley GF, Duckworth G, Lai R, Ebrahim S, Brown EM, Wiffen PJ, Davey PG. The ORION statement: guidelines for transparent reporting of outbreak reports and intervention studies of nosocomial infection. Lancet Infect Dis. 2007 Apr; 7(4): 282-8. – This paper extends the guidelines to infectious disease study reports. • McShane LM, Altman DG, Sauerbrei W, Taube SE, Gion M, Clark GM; Statistics Subcommittee of the NCI-EORTC Working Group on Cancer Diagnostics. Reporting recommendations for tumor marker prognostic studies (REMARK). J Natl Cancer Inst. 2005 Aug 17; 97(16): 1180-4. – This version addresses the reporting guidelines for tumor biomarker studies.

Responsible Conduct of Research: Objective Reporting References continued: • Campbell MK, Elbourne DR, Altman DG; CONSORT group. CONSORT statement: extension to cluster randomised trials. BMJ. 2004 Mar 20; 328(7441): 702-8. – The CONSORT guidelines have also been extended to cluster randomized designs. • Squires K, Pozniak AL, Pierone G, et al. Tenofovir disoproxil fumarate in nucleoside-resistant HIV-1 infection: a randomized trial. Ann Intern Med 2003; 139: 313-20. – This paper, in a journal that subscribes to the CONSORT principles, illustrates how to use the guidelines in reporting a clinical trial. Note the nice flow chart based on CONSORT.

Responsible Conduct of Research: Objective Reporting References continued: • Devereaux PJ, Manns BJ, Ghali WA, et al. The reporting of methodologic factors in randomized clinical trials and the association with a journal policy to promote adherence to the Consolidated Standards of Reporting Trials (CONSORT) checklist. Controlled Clinical Trials 2002; 23: 380-388. – This paper studied 105 RCTs in 29 medical journals and found that of the 11 methodological factors, the average was 6 reported in CONSORT-standard journals and 5 in non-CONSORT. There is still room for improvement in reporting. • Tufte, ER. The Visual Display of Quantitative Information. Graphics Press, Cheshire, CT: 1983. (Also Visual Explanations and Envisioning Information) – These three books provide a beautifully illustrated and thoughtful tutorial on how good graphics can inform readers, and bad graphics can mislead them. For a particularly dramatic example, read Tufte’s section on the Challenger explosion: the data were trying to warn people but were hidden in abysmal displays.

Responsible Conduct of Research: Objective Reporting References continued: • Lang TA, Secic M. How to report statistics in medicine: annotated guidelines for authors, editors, and reviewers. American College of Physicians, 1997. – This book is available in paperback and gives a good overview for the practicing physician of what to include and how to say or show it.

Responsible Conduct of Research: Objective Reporting Some web sites that may be helpful: http://onlineethics.org/reseth/mod/data.html This web site is very nice, has a lot of references and links. If you do biomedical research, it is useful to read the following brief sections of the International Committee of Medical Journal Editors' "Uniform Requirements for Manuscripts Submitted to Biomedical Journals." This statement was published in 1997 in the New England Journal of Medicine 335: 309-315, and was updated May 2000. * Corrections, Retractions, and "Expressions of Concern" about Research Findings, * Medical Journals and the Popular Media, * Human subjects protection, * Publication of industry-sponsored research. The UNC web site you can link to from this web site has an excellent “text” on statistical ethics and responsible analysis. NIH recently instituted a policy that requires that all proposals for contracts and grants for research involving human subjects submitted after October 1, 2000 certify that all key personnel have received education on the protection of human research subjects. This requirement applies to all applications for grants or proposals for contracts submitted to NIH after October 1st and to all new and all non-competing grants for which an award is issued after October 1st. NIH has posted a web series of "frequently-asked questions" regarding these new requirements. The frequently asked questions can be accessed at: http://grants.nih.gov/grants/policy/hs_educ_faq.htm. Here is one NIH certificate web site: http://cme.cancer.gov/clinicaltrials/learning/humanparticipant-protections.asp A terrific web site for statistical practice in medicine is Jerry Dallal’s “Little Handbook of Statistical Practice”: http://www.tufts.edu/~gdallal/LHSP.HTM Jerry also has links to all the articles in the BMJ series on statistical practice in medicine, a superb series, very well written.
