Radial Basis Function Networks

1. Introduction
2. Finding RBF Parameters
3. Decision Surface of RBF Networks
4. Comparison between RBF and BP

11/22/2020 RBF Networks M. W. Mak 1

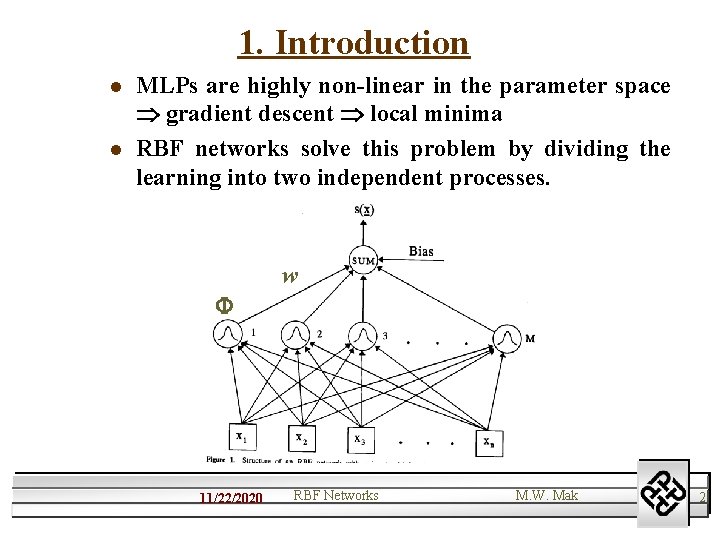

1. Introduction

- MLPs are highly non-linear in the parameter space, so gradient-descent training can get trapped in local minima.
- RBF networks solve this problem by dividing the learning into two independent processes.

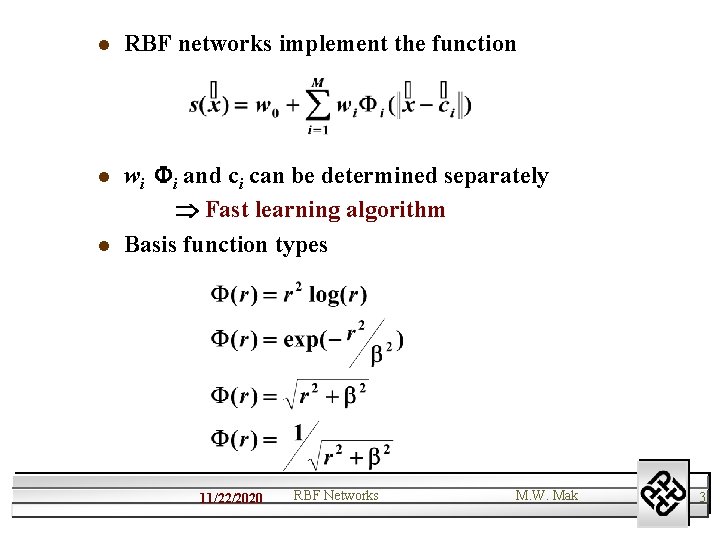

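- RBF networks implement the function

  $$y(\mathbf{x}) = \sum_{i=1}^{M} w_i\,\phi\left(\lVert \mathbf{x} - \mathbf{c}_i \rVert\right)$$

- $w_i$, $\sigma_i$ and $\mathbf{c}_i$ can be determined separately, which gives a fast learning algorithm.
- Basis function types: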

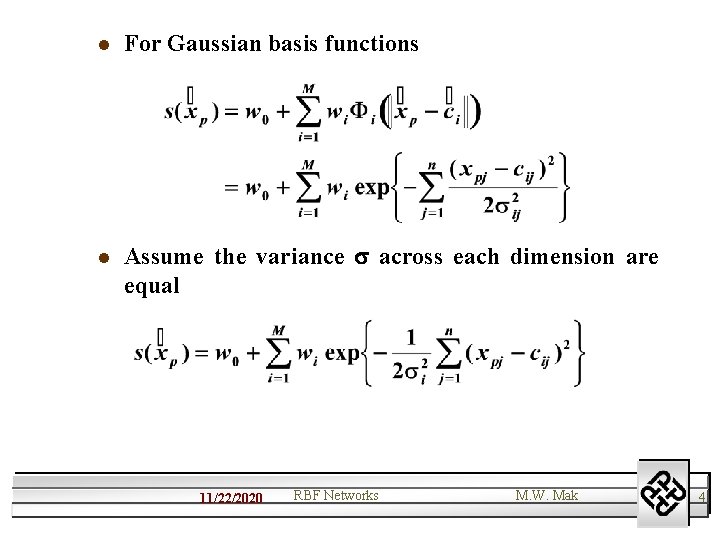

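- For Gaussian basis functions, assume the variances across all input dimensions are equal, so that each basis function has a single width $\sigma_i$:

  $$\phi_i(\mathbf{x}) = \exp\left(-\frac{\lVert \mathbf{x} - \mathbf{c}_i \rVert^2}{2\sigma_i^2}\right)$$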

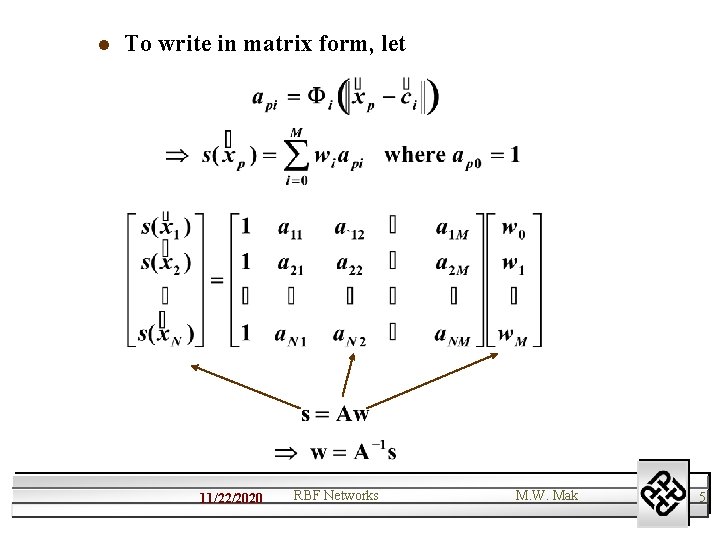
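The Gaussian basis function with equal variance in every dimension can be sketched in a few lines. This is a minimal illustration that assumes the standard form exp(-||x - c||^2 / (2 sigma^2)) and a weighted-sum output; the function names `gaussian_basis` and `rbf_output` and the toy numbers are illustrative, not taken from the slides.

```python
import numpy as np

def gaussian_basis(x, center, sigma):
    """Gaussian basis function with one shared width for all input dimensions."""
    diff = np.asarray(x, dtype=float) - np.asarray(center, dtype=float)
    return float(np.exp(-np.sum(diff ** 2) / (2.0 * sigma ** 2)))

def rbf_output(x, centers, sigmas, weights):
    """Network output: weighted sum of the basis-function responses."""
    phi = [gaussian_basis(x, c, s) for c, s in zip(centers, sigmas)]
    return float(np.dot(weights, phi))

# Toy network with two basis functions (values chosen only for illustration).
centers = [[0.0, 0.0], [1.0, 1.0]]
sigmas = [1.0, 0.5]
weights = [2.0, -1.0]

print(gaussian_basis([0.0, 0.0], centers[0], sigmas[0]))  # response is 1.0 at the center
print(rbf_output([0.0, 0.0], centers, sigmas, weights))
```

The response decays with distance from the center, and a smaller `sigma` makes the decay faster.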

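- To write in matrix form, let $A$ be the matrix whose entry $a_{pi}$ is the output of the $i$-th basis function for input pattern $\mathbf{x}_p$, so that the outputs for all patterns are given by $A\mathbf{w}$.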

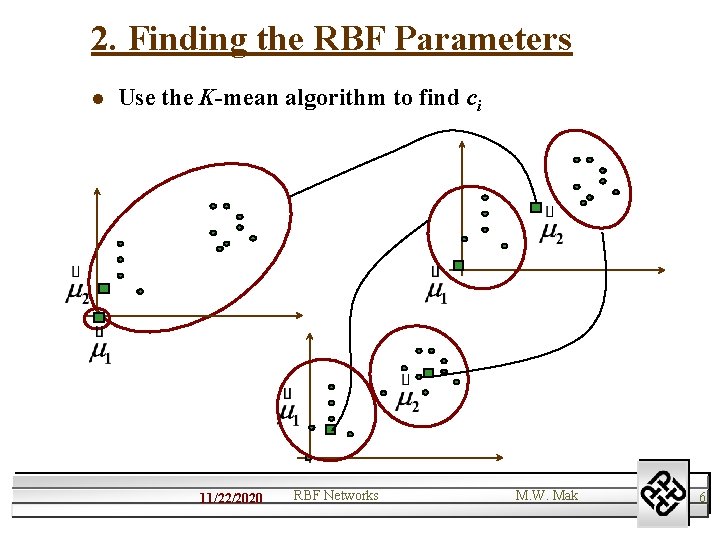
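As a sketch of the matrix form, the code below builds a matrix `A` with one row per training pattern and one column per basis function, assuming Gaussian basis functions. The helper name `design_matrix` and the sample values are assumptions made for illustration.

```python
import numpy as np

def design_matrix(X, centers, sigmas):
    """A[p, i] = Gaussian response of basis function i to pattern p."""
    X = np.asarray(X, dtype=float)
    centers = np.asarray(centers, dtype=float)
    sigmas = np.asarray(sigmas, dtype=float)
    # Squared distances between every pattern and every center, shape (P, M).
    sq_dist = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-sq_dist / (2.0 * sigmas[None, :] ** 2))

X = np.array([[0.0, 0.0], [1.0, 0.0], [1.0, 1.0]])   # three patterns
centers = np.array([[0.0, 0.0], [1.0, 1.0]])          # two basis functions
sigmas = np.array([1.0, 1.0])
w = np.array([0.5, -0.25])

A = design_matrix(X, centers, sigmas)
y = A @ w   # network outputs for all patterns at once
print(A.shape)
```

Once `A` is fixed, the output is linear in `w`, which is what makes the second learning stage a linear least-squares problem.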

2. Finding the RBF Parameters

- Use the K-means algorithm to find the centers $\mathbf{c}_i$.

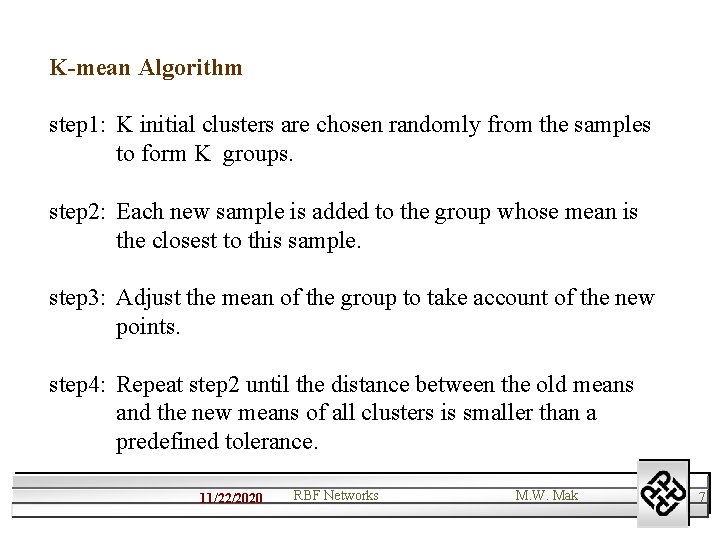

K-means Algorithm

- Step 1: K initial clusters are chosen randomly from the samples to form K groups.
- Step 2: Each new sample is added to the group whose mean is the closest to this sample.
- Step 3: Adjust the mean of the group to take account of the new points.
- Step 4: Repeat step 2 until the distance between the old means and the new means of all clusters is smaller than a predefined tolerance.

Outcome: There are K clusters, and the mean of each cluster represents its centroid.

Advantages:

- (1) A fast and simple algorithm.
- (2) Reduces the effects of noisy samples.

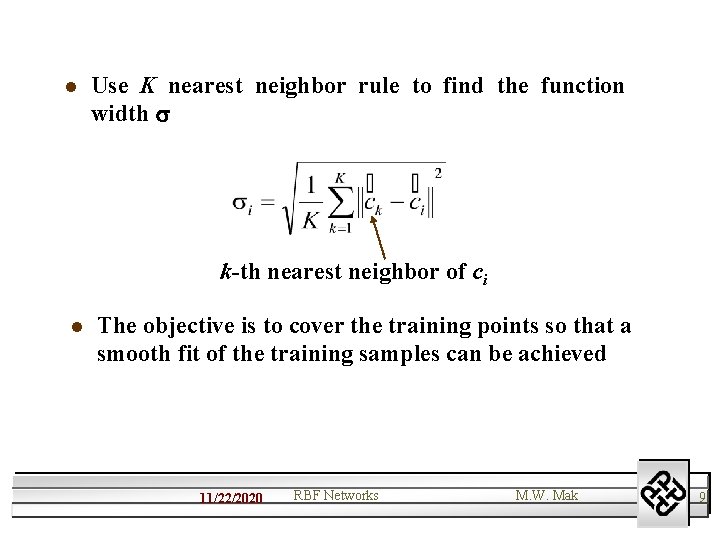
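The four K-means steps can be sketched as follows. This is a batch variant (all samples are reassigned in each pass rather than added one at a time), and the function name `kmeans`, the `tol`, `max_iter` and `seed` parameters, and the toy data are assumptions for illustration.

```python
import numpy as np

def kmeans(X, K, tol=1e-6, max_iter=100, seed=0):
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    # Step 1: pick K distinct samples at random as the initial means.
    means = X[rng.choice(len(X), size=K, replace=False)].copy()
    for _ in range(max_iter):
        # Step 2: assign every sample to the group with the closest mean.
        dist = np.linalg.norm(X[:, None, :] - means[None, :, :], axis=2)
        labels = dist.argmin(axis=1)
        # Step 3: recompute each mean (an empty group keeps its old mean).
        new_means = means.copy()
        for k in range(K):
            members = X[labels == k]
            if len(members) > 0:
                new_means[k] = members.mean(axis=0)
        # Step 4: stop once no mean has moved by more than the tolerance.
        shift = np.linalg.norm(new_means - means, axis=1).max()
        means = new_means
        if shift < tol:
            break
    return means, labels

# Two well-separated groups of three points each.
X = np.array([[0.0, 0.0], [0.2, 0.0], [0.0, 0.2],
              [5.0, 5.0], [5.2, 5.0], [5.0, 5.2]])
means, labels = kmeans(X, K=2)
print(means)
print(labels)
```

The returned means would then serve as the RBF centers.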

- Use the K-nearest-neighbor rule to find the function width $\sigma_i$, where $\mathbf{c}_k$ denotes the k-th nearest neighbor of $\mathbf{c}_i$.
- The objective is to cover the training points so that a smooth fit of the training samples can be achieved.

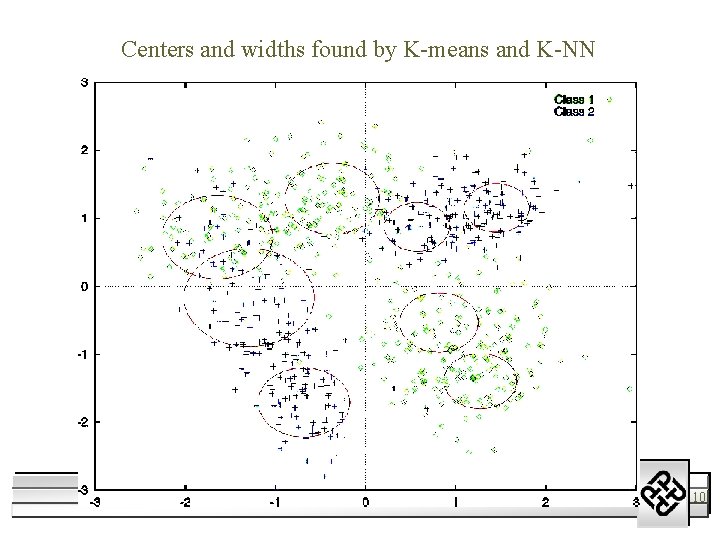
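One common form of the K-nearest-neighbor width rule sets each width to the root-mean-square distance from a center to its K nearest neighboring centers. The sketch below assumes that form; the exact expression on the slide is not recoverable from the text, and `knn_widths` is an illustrative name.

```python
import numpy as np

def knn_widths(centers, K=2):
    """Width of each basis function from its K nearest neighboring centers.

    Assumes sigma_i = sqrt(mean_k ||c_k - c_i||^2) over the K nearest
    neighbors c_k of c_i, and that K is smaller than the number of centers.
    """
    centers = np.asarray(centers, dtype=float)
    dist = np.linalg.norm(centers[:, None, :] - centers[None, :, :], axis=2)
    # Sort each row; column 0 is the zero distance from a center to itself.
    nearest = np.sort(dist, axis=1)[:, 1:K + 1]
    return np.sqrt((nearest ** 2).mean(axis=1))

centers = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 2.0], [10.0, 10.0]])
print(knn_widths(centers, K=2))
```

An isolated center (the last one here) gets a large width, so its basis function still reaches toward the neighboring regions.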

Centers and widths found by K-means and K-NN

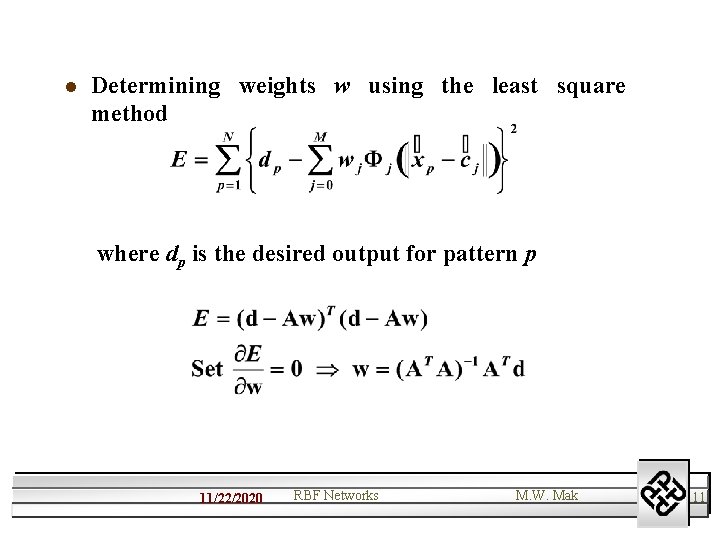

- Determine the weights $\mathbf{w}$ using the least-squares method, where $d_p$ is the desired output for pattern $p$.

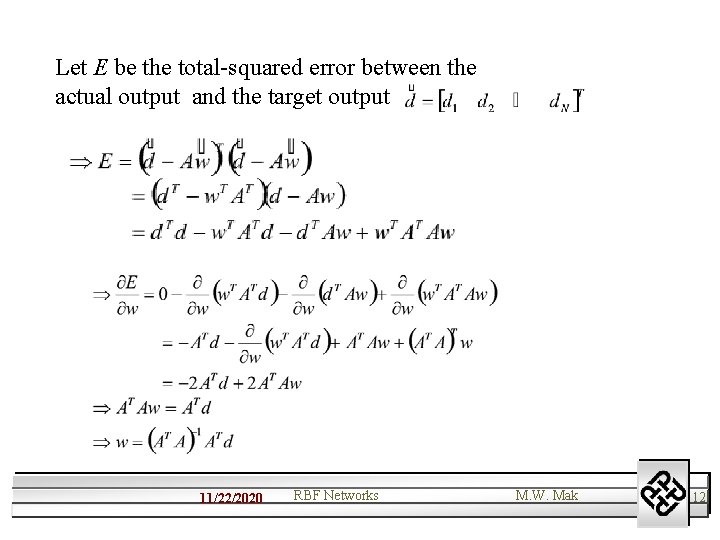

Let E be the total-squared error between the actual output and the target output

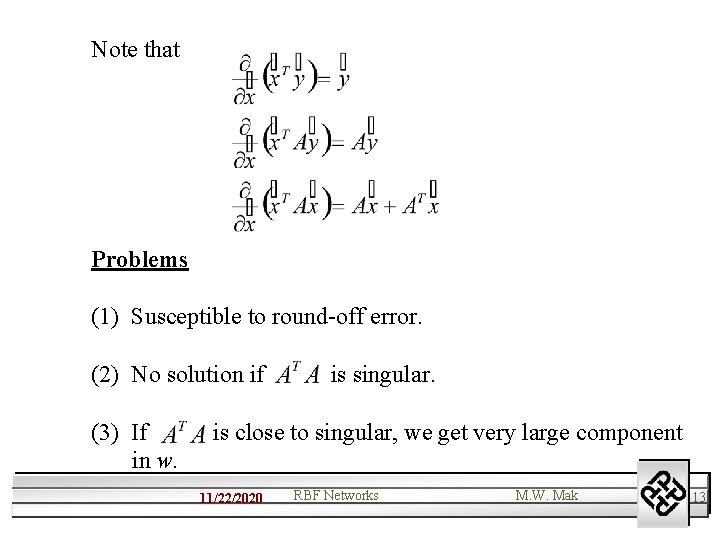
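Minimizing the total squared error of a model that is linear in the weights leads to the normal equations, (A^T A) w = A^T d. The sketch below solves them directly on a small made-up system; `weights_normal_equations` and the numbers are assumptions for illustration, and `A` here is a toy matrix rather than real basis-function outputs.

```python
import numpy as np

def weights_normal_equations(A, d):
    """Solve (A^T A) w = A^T d for the least-squares weights."""
    A = np.asarray(A, dtype=float)
    d = np.asarray(d, dtype=float)
    return np.linalg.solve(A.T @ A, A.T @ d)

A = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [1.0, 1.0]])        # 3 patterns, 2 basis functions (toy values)
d = np.array([1.0, 2.0, 3.0])     # desired outputs

w = weights_normal_equations(A, d)
E = np.sum((d - A @ w) ** 2)      # total squared error at the solution
print(w, E)
```

This direct approach works when A^T A is well conditioned; it becomes unreliable as A^T A approaches singularity.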

Note that $\mathbf{w} = (A^T A)^{-1} A^T \mathbf{d}$.

Problems:

- (1) Susceptible to round-off error.
- (2) No solution if $A^T A$ is singular.
- (3) If $A^T A$ is close to singular, we get very large components in $\mathbf{w}$.

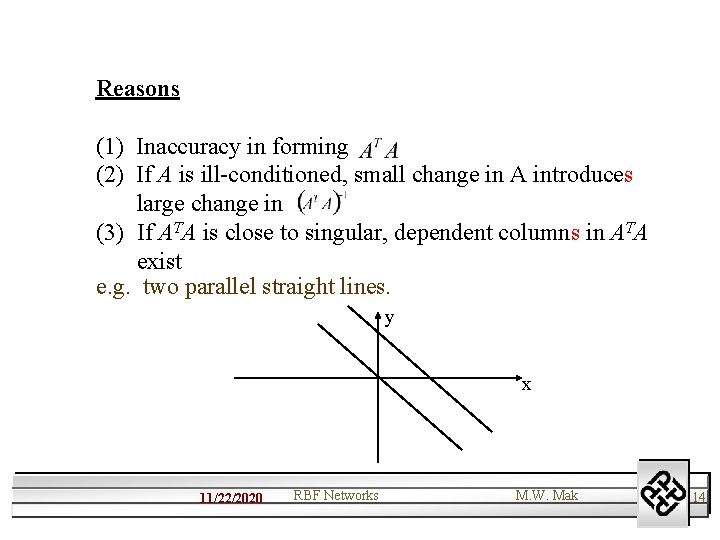

Reasons:

- (1) Inaccuracy in forming $A^T A$.
- (2) If $A$ is ill-conditioned, a small change in $A$ introduces a large change in the solution.
- (3) If $A^T A$ is close to singular, nearly dependent columns exist in $A^T A$, e.g. two parallel straight lines.

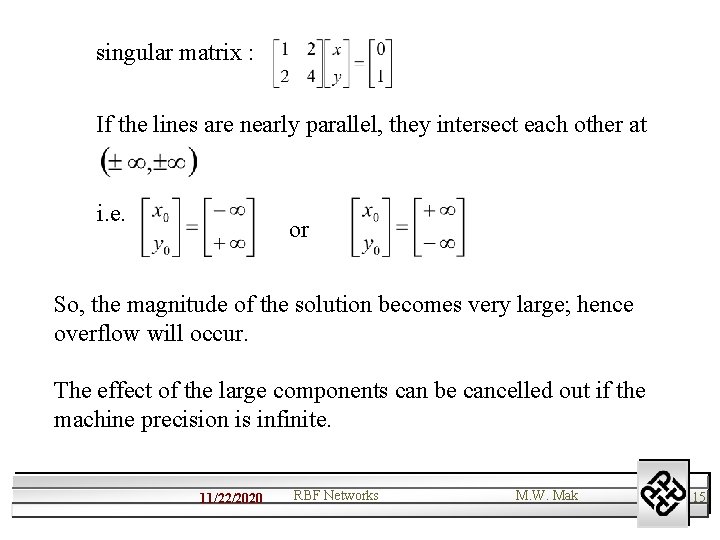

Singular matrix: the two lines are exactly parallel. If the lines are nearly parallel, they intersect each other at a point very far from the origin, so the magnitude of the solution becomes very large and overflow can occur. The effect of the large components can be cancelled out only if the machine precision is infinite.

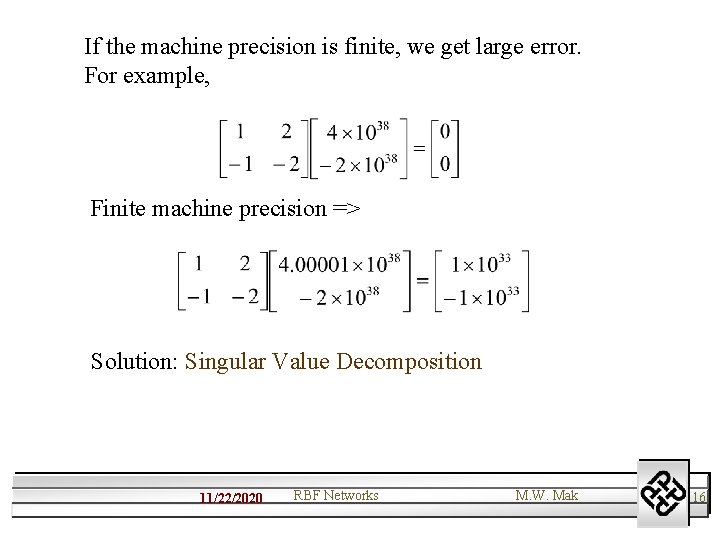
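The nearly-parallel-lines picture can be checked numerically. The sketch below uses two hypothetical lines, y = x and y = (1 + eps) x - 1, which intersect at x = 1/eps; as eps shrinks, the intersection point moves away and the condition number of the system grows.

```python
import numpy as np

def intersection(eps):
    """Intersection of y = x and y = (1 + eps) * x - 1, plus the condition number."""
    # Rewrite both lines as a linear system M @ [x, y] = rhs.
    M = np.array([[1.0, -1.0],
                  [1.0 + eps, -1.0]])
    rhs = np.array([0.0, 1.0])
    return np.linalg.solve(M, rhs), np.linalg.cond(M)

for eps in (1e-1, 1e-4, 1e-8):
    point, cond = intersection(eps)
    print(eps, point, cond)
```

With eps = 0 the matrix is exactly singular and `np.linalg.solve` raises an error, which corresponds to the "no solution" case.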

If the machine precision is finite, the large components no longer cancel and we get a large error.

Solution: Singular Value Decomposition (SVD)

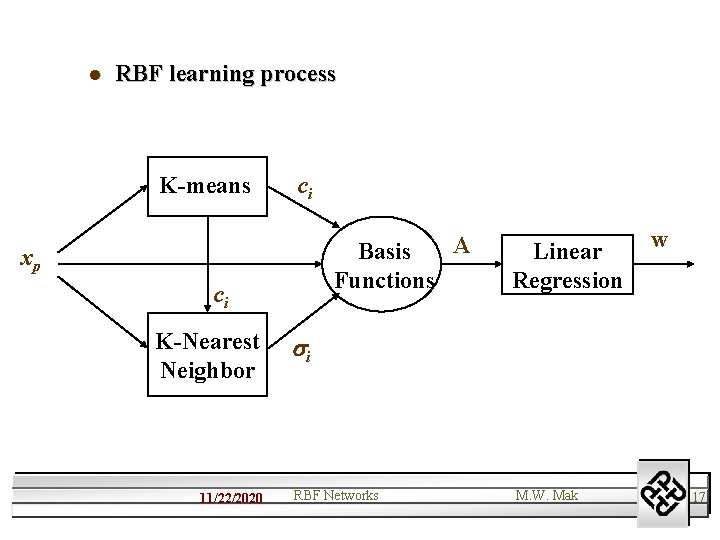
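A minimal sketch of the SVD approach: decompose A, invert only the singular values that are safely above zero, and discard the rest. The threshold `rcond` and the name `weights_svd` are assumptions; the slides do not specify how small singular values are handled.

```python
import numpy as np

def weights_svd(A, d, rcond=1e-10):
    """Least-squares weights via SVD, ignoring near-zero singular values."""
    A = np.asarray(A, dtype=float)
    d = np.asarray(d, dtype=float)
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    # Invert only the singular values that are not negligibly small.
    keep = s > rcond * s.max()
    s_inv = np.zeros_like(s)
    s_inv[keep] = 1.0 / s[keep]
    return Vt.T @ (s_inv * (U.T @ d))

# Two identical columns make A^T A exactly singular.
A = np.array([[1.0, 1.0],
              [2.0, 2.0],
              [3.0, 3.0]])
d = np.array([2.0, 4.0, 6.0])

w = weights_svd(A, d)
print(w)          # a finite, minimum-norm solution
print(A @ w)      # reproduces d
```

Because the unstable directions are dropped instead of inverted, the weights stay bounded even though the normal equations have no unique solution here. NumPy's `np.linalg.pinv` applies the same idea.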

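- RBF learning process: K-means gives the centers $\mathbf{c}_i$ and K-nearest neighbor gives the widths $\sigma_i$; the basis functions map each input $\mathbf{x}_p$ to a row of the matrix $A$; linear regression then gives the weights $w_i$.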

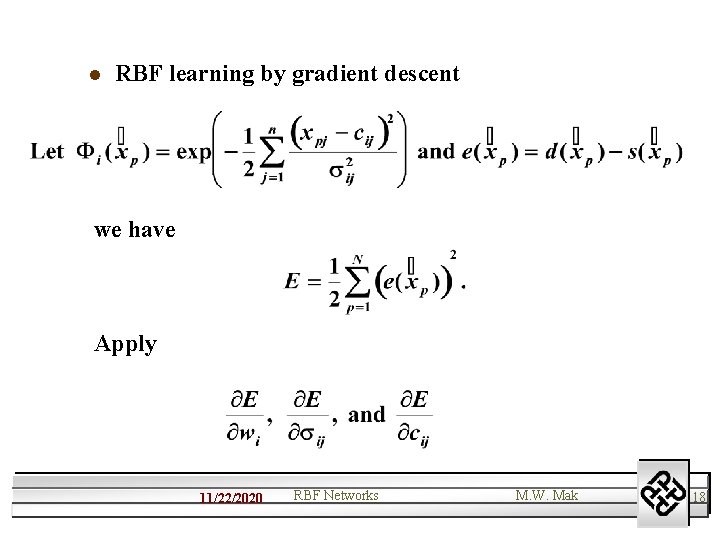

- RBF learning by gradient descent: starting from the error $E$, apply the gradient-descent rule to the network parameters;

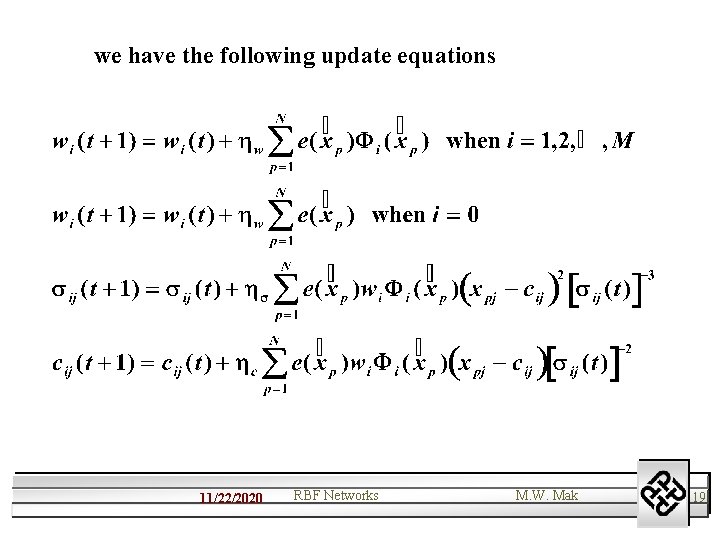

we have the following update equations

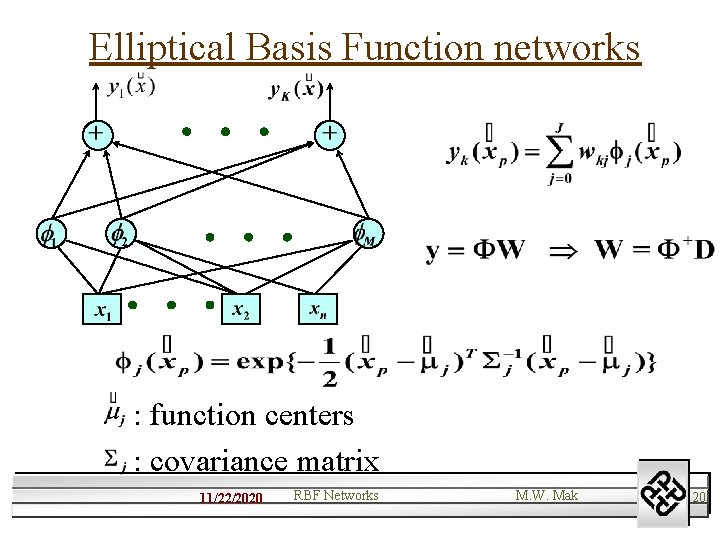
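A gradient-descent update for all three parameter sets can be sketched as below. It assumes Gaussian basis functions, a single output, the error E = 1/2 * sum_p (d_p - y_p)^2, and one learning rate `lr` for every parameter; the slide's own equations may use different scaling or separate learning rates. All names are illustrative.

```python
import numpy as np

def forward(X, w, C, sigma):
    sq_dist = ((X[:, None, :] - C[None, :, :]) ** 2).sum(axis=2)  # (P, M)
    Phi = np.exp(-sq_dist / (2.0 * sigma ** 2))                   # basis outputs
    return Phi, sq_dist, Phi @ w

def error(X, d, w, C, sigma):
    return 0.5 * np.sum((d - forward(X, w, C, sigma)[2]) ** 2)

def gradients(X, d, w, C, sigma):
    Phi, sq_dist, y = forward(X, w, C, sigma)
    e = d - y                                   # per-pattern error, shape (P,)
    G = e[:, None] * Phi * w[None, :]           # e_p * w_i * phi_i(x_p)
    grad_w = -Phi.T @ e
    diff = X[:, None, :] - C[None, :, :]        # x_p - c_i, shape (P, M, D)
    grad_C = -(G[:, :, None] * diff).sum(axis=0) / sigma[:, None] ** 2
    grad_sigma = -(G * sq_dist).sum(axis=0) / sigma ** 3
    return grad_w, grad_C, grad_sigma

def gradient_step(X, d, w, C, sigma, lr=0.01):
    gw, gC, gs = gradients(X, d, w, C, sigma)
    return w - lr * gw, C - lr * gC, sigma - lr * gs

# Toy XOR-like data and an arbitrary starting point.
X = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
d = np.array([0.0, 1.0, 1.0, 0.0])
w = np.array([0.5, -0.5])
C = np.array([[0.2, 0.1], [0.8, 0.9]])
sigma = np.array([1.0, 0.7])

w_new, C_new, sigma_new = gradient_step(X, d, w, C, sigma)
print(error(X, d, w, C, sigma), error(X, d, w_new, C_new, sigma_new))
```

Unlike the two-stage procedure, this objective is non-linear in the centers and widths, so the result depends on the starting point.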

Elliptical Basis Function networks

- $\boldsymbol{\mu}_j$: function centers
- $\Sigma_j$: covariance matrix

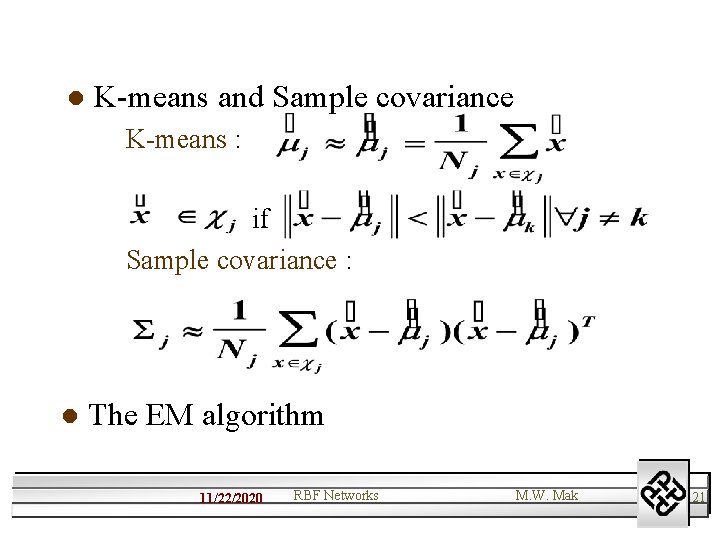
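An elliptical basis function replaces the Euclidean distance with a Mahalanobis distance defined by a covariance matrix. The sketch below assumes the unnormalized form exp(-1/2 (x - mu)^T Sigma^{-1} (x - mu)); whether the slide includes a normalizing constant is not recoverable from the text, and `ebf_basis` is an illustrative name.

```python
import numpy as np

def ebf_basis(x, mu, cov):
    """Elliptical basis function using a full covariance matrix."""
    diff = np.asarray(x, dtype=float) - np.asarray(mu, dtype=float)
    # Solve cov @ z = diff instead of forming the inverse explicitly.
    maha_sq = diff @ np.linalg.solve(np.asarray(cov, dtype=float), diff)
    return float(np.exp(-0.5 * maha_sq))

mu = np.array([0.0, 0.0])
cov = np.array([[4.0, 0.0],
                [0.0, 1.0]])   # elongated along the first axis

# Same Euclidean distance from the center, different responses.
print(ebf_basis([2.0, 0.0], mu, cov))
print(ebf_basis([0.0, 2.0], mu, cov))
```

With `cov` equal to sigma^2 times the identity, this reduces to the spherical Gaussian RBF.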

- K-means and sample covariance
  - K-means: a sample is assigned to cluster $j$ if the mean of cluster $j$ is the closest to it.
  - Sample covariance: computed from the samples assigned to each cluster.
- The EM algorithm

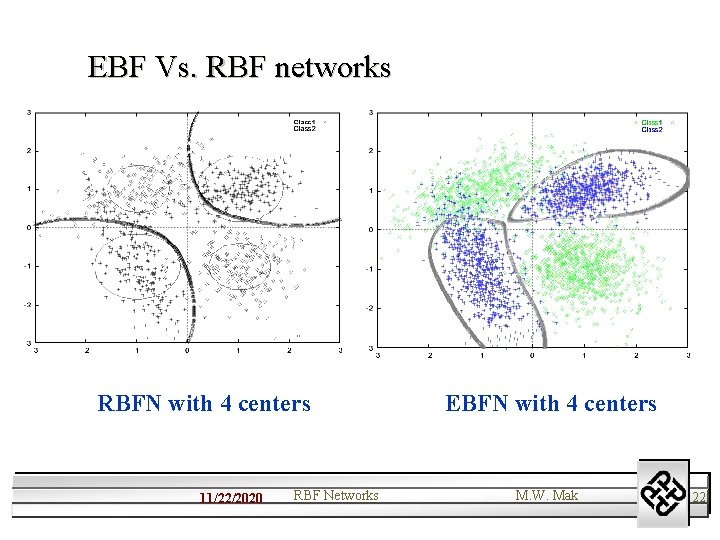
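Given cluster assignments (for example from K-means), the center and covariance of each elliptical basis function can be estimated from the members of the cluster. The sketch below divides by the cluster size N_j; whether the slide divides by N_j or N_j - 1 is an assumption, as are the function name and data.

```python
import numpy as np

def cluster_statistics(X, labels, K):
    """Mean and sample covariance of each of the K clusters."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    means, covs = [], []
    for j in range(K):
        members = X[labels == j]
        mu = members.mean(axis=0)
        diff = members - mu
        means.append(mu)
        covs.append(diff.T @ diff / len(members))   # divide by N_j
    return np.array(means), np.array(covs)

X = np.array([[0.0, 0.0], [2.0, 0.0], [0.0, 2.0], [2.0, 2.0],
              [10.0, 10.0], [12.0, 10.0], [11.0, 13.0]])
labels = np.array([0, 0, 0, 0, 1, 1, 1])

means, covs = cluster_statistics(X, labels, K=2)
print(means)
print(covs)
```

A cluster with too few members, or with members lying on a line, yields a singular covariance matrix that cannot be inverted.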

EBF vs. RBF networks (panels: RBFN with 4 centers; EBFN with 4 centers)

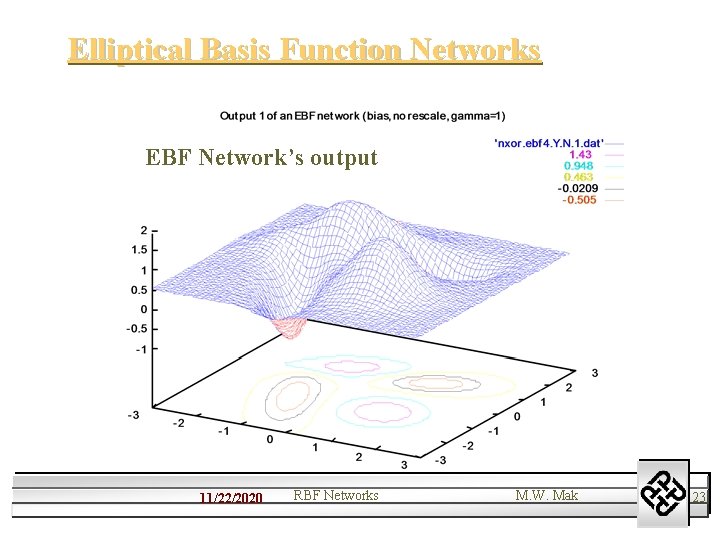

Elliptical Basis Function Networks: EBF network's output

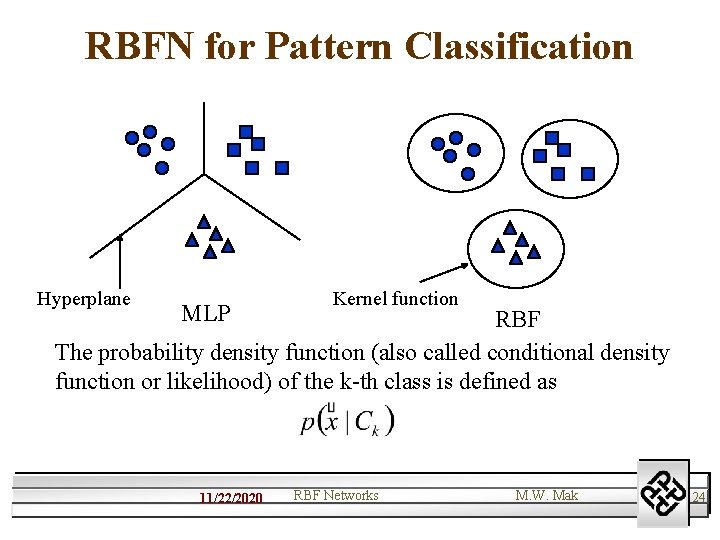

RBFN for Pattern Classification

- MLP: hyperplane
- RBF: kernel function

The probability density function (also called the conditional density function or likelihood) of the k-th class is defined as $p(\mathbf{x} \mid C_k)$.

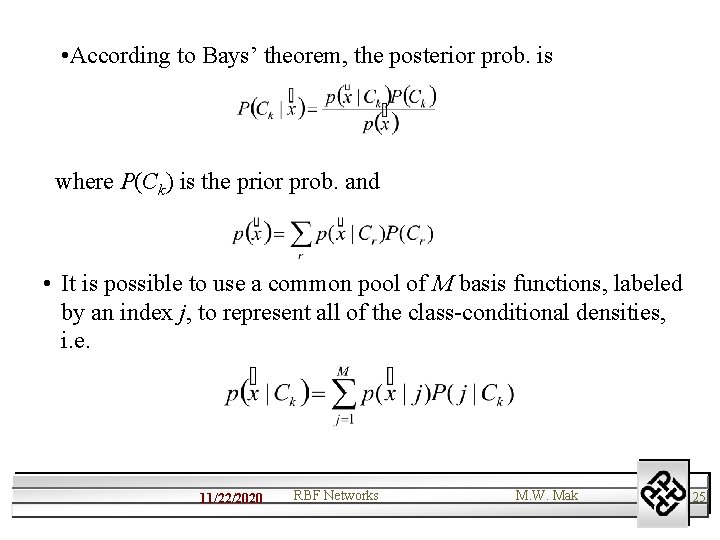

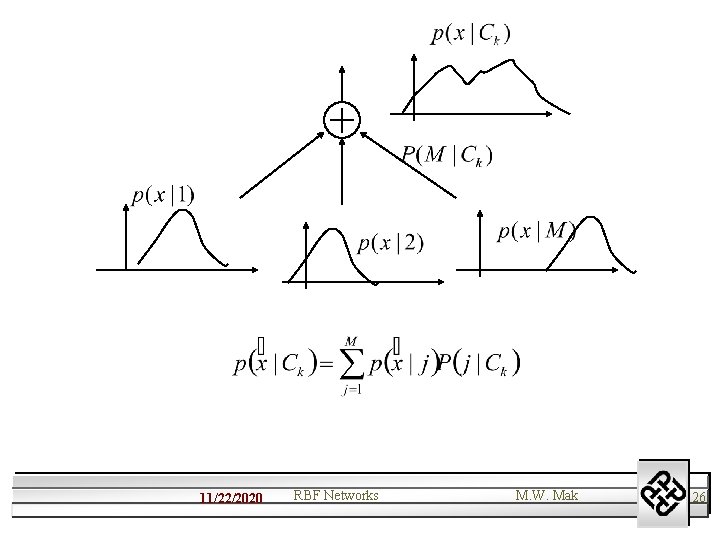

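- According to Bayes' theorem, the posterior probability is

  $$P(C_k \mid \mathbf{x}) = \frac{p(\mathbf{x} \mid C_k)\,P(C_k)}{p(\mathbf{x})}$$

  where $P(C_k)$ is the prior probability and $p(\mathbf{x}) = \sum_{r} p(\mathbf{x} \mid C_r)\,P(C_r)$.
- It is possible to use a common pool of $M$ basis functions, labeled by an index $j$, to represent all of the class-conditional densities, i.e.

  $$p(\mathbf{x} \mid C_k) = \sum_{j=1}^{M} p(\mathbf{x} \mid j)\,P(j \mid C_k)$$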
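A small numerical sketch of these two steps: class-conditional densities are formed from a shared pool of basis functions, then Bayes' theorem turns them into posteriors. The density values, mixing coefficients and priors below are made up for illustration, and `class_posteriors` is a hypothetical helper.

```python
import numpy as np

def class_posteriors(basis_density, mixing, priors):
    """Posterior P(C_k | x) from a common pool of basis functions.

    basis_density[j] = p(x | j), mixing[k, j] = P(j | C_k), priors[k] = P(C_k).
    """
    basis_density = np.asarray(basis_density, dtype=float)
    mixing = np.asarray(mixing, dtype=float)
    priors = np.asarray(priors, dtype=float)
    likelihood = mixing @ basis_density   # p(x | C_k) for each class k
    joint = likelihood * priors           # p(x | C_k) P(C_k)
    return joint / joint.sum()            # divide by p(x)

basis_density = [0.30, 0.10, 0.05]        # hypothetical p(x | j) at one input x
mixing = [[0.8, 0.2, 0.0],                # P(j | C_1)
          [0.0, 0.5, 0.5]]                # P(j | C_2)
priors = [0.5, 0.5]

post = class_posteriors(basis_density, mixing, priors)
print(post, post.sum())
```

The posteriors sum to one by construction, since the denominator p(x) is the sum of the numerators over all classes.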


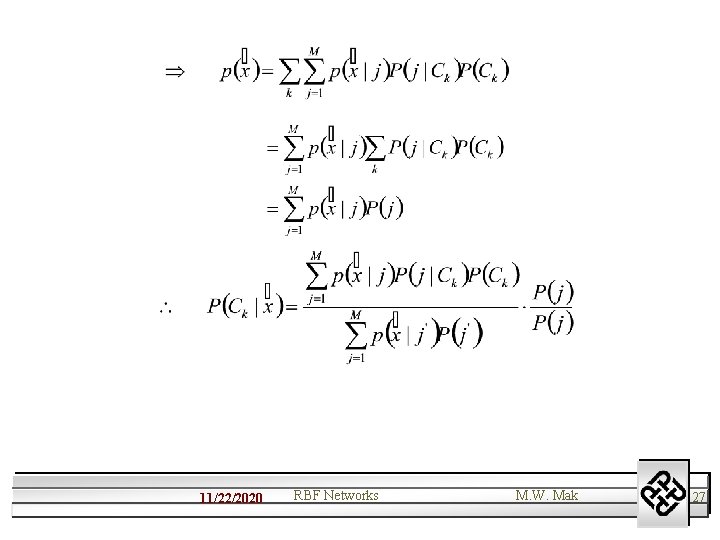


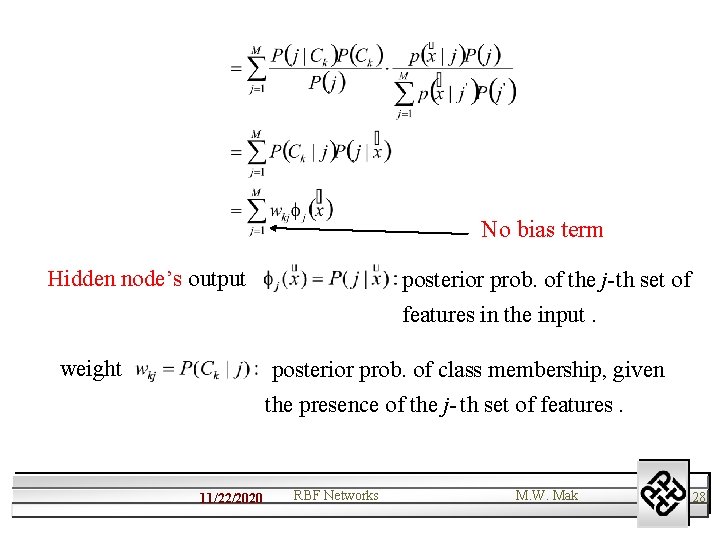

- No bias term.
- Hidden node's output: posterior probability of the j-th set of features in the input.
- Weight: posterior probability of class membership, given the presence of the j-th set of features.

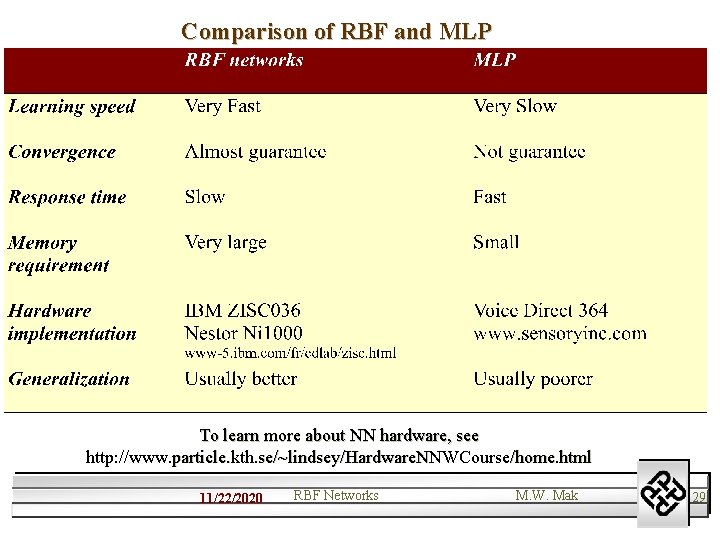
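The probabilistic reading of the hidden outputs and weights can be checked numerically. The sketch below assumes the hidden output is P(j | x) = p(x | j) P(j) / sum over j' of p(x | j') P(j') and the weight is P(C_k | j) = P(j | C_k) P(C_k) / P(j), with P(j) = sum over k of P(j | C_k) P(C_k). All numbers are made up, and the result is compared against a direct application of Bayes' theorem.

```python
import numpy as np

basis_density = np.array([0.30, 0.10, 0.05])   # hypothetical p(x | j)
mixing = np.array([[0.8, 0.2, 0.0],            # P(j | C_1)
                   [0.0, 0.5, 0.5]])           # P(j | C_2)
priors = np.array([0.5, 0.5])                  # P(C_k)

# Prior probability of each basis function: P(j) = sum_k P(j | C_k) P(C_k).
p_j = priors @ mixing

# Hidden node outputs: posterior of the j-th feature set given the input.
hidden = basis_density * p_j / np.sum(basis_density * p_j)

# Weights: posterior of class membership given the j-th feature set.
weights = mixing * priors[:, None] / p_j[None, :]

# Network output: linear combination of hidden outputs, with no bias term.
network_posterior = weights @ hidden

# Direct Bayes' theorem for comparison.
joint = (mixing @ basis_density) * priors
bayes_posterior = joint / joint.sum()

print(network_posterior, bayes_posterior)
```

Both routes give the same class posteriors, which is why the output layer needs no bias term under this interpretation.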

Comparison of RBF and MLP

To learn more about NN hardware, see http://www.particle.kth.se/~lindsey/Hardware.NNWCourse/home.html
