
Performance testing with JMeter
Adrian Ciobanu
20 August 2015

Agenda
• Performance/Load/Stress testing
• What is JMeter
• DEMO: JMeter GUI
• Main components
• Recording settings
• Recording tips
• DEMO: Parameterize script
• Tips & tricks
• Process considerations
• General tips
• Most used components
• Windows PerfMon

There is an A for everything
✓ Theoretical side of things
   ✓ Definitions
   ✓ Vocab
   ✓ ISTQB
✓ Practical (realistic) side of things
   ✓ Activities and their benefits
   ✓ Good practices
   ✓ Things to remember
   ✓ Lessons to learn
   ✓ Examples

Performance/Load/Stress
• Performance testing (how fast is the system?) is used to determine the speed or effectiveness of a computer, network, software program or device.
• Load testing (how much volume can the system process?) is the process of exercising the system under test by feeding it the largest tasks it can operate with. Load testing is sometimes called volume testing, or longevity/endurance testing.
• Stress testing tries to break the system under test by overwhelming its resources or by taking resources away from it (in which case it is sometimes called negative testing). The main purpose behind this madness is to make sure that the system fails and recovers gracefully; this quality is known as recoverability.

What is JMeter
• Apache JMeter is a 100% pure Java desktop application designed to load test client/server software (such as a web application) and measure its performance
• Initially developed for web apps, but later extended to:
   ✓ FTP
   ✓ LDAP
   ✓ Databases
   ✓ Java Objects

What is JMeter
• It is an open-source tool
• Can be used with different protocols and server types: SOAP, REST, JDBC, Mail
• User-friendly GUI design compared to other tools
• Test results can be displayed in various formats, such as Summary Report, Aggregate Report, View Results Tree and View Results in Table
• Short learning curve (basic HTML knowledge should be enough for a beginner)
• Can be extended through plug-ins
• Has excellent documentation

JMeter GUI

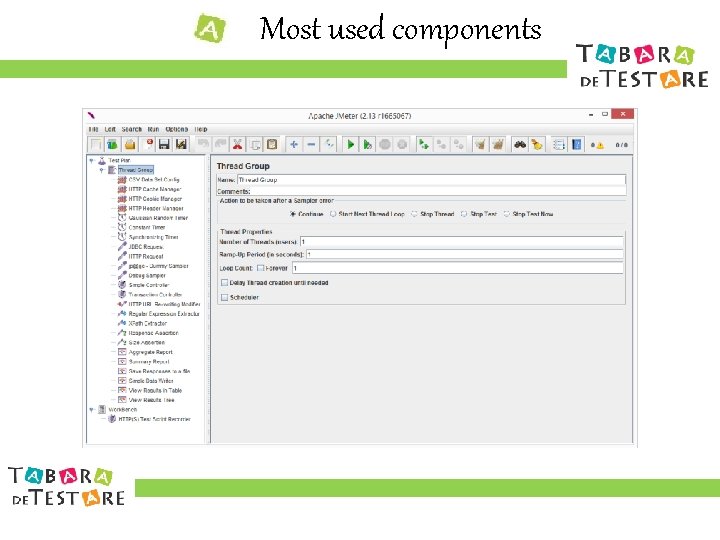

Main components
• Test Plan:
   • The main part of JMeter scripts
   • The “home” of thread groups, samplers, configuration elements and other specific components
• WorkBench:
   • Used mainly for generating script components with the “HTTP(S) Test Script Recorder” component
   • By default, its components are not saved with the script

Main components
• Samplers perform the actual work (requests)
• Logic Controllers determine the order in which samplers are processed
• Listeners provide means to view, save, and read saved test results
• Configuration elements can be used to set up defaults and variables for later use by samplers
• Assertions are used to perform additional checks on samplers
• Timers (waits) are applied before the sampler is executed
• Pre-/Post-Processors run before/after the samplers in their scope (e.g., to modify a request or to extract data from a response)
• Misc: Thread Group (plus setUp and tearDown Thread Groups), HTTP(S) Test Script Recorder
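To make the ordering rules above concrete, here is a toy Python model (not JMeter code) of the processing order the JMeter user manual lists for the elements in a sampler's scope: configuration elements, Pre-Processors, Timers, the Sampler, then Post-Processors, Assertions and Listeners. The names `ScopedElement` and `processing_order` are hypothetical, and the model ignores details such as configuration-element merging and the skipping of the later steps when there is no sample result.

```python
from dataclasses import dataclass
from typing import List

# Processing order of the elements in a sampler's scope, following the
# "Execution order" list in the JMeter user manual. Toy model only.
EXECUTION_ORDER = [
    "config",          # Configuration elements
    "pre_processor",   # Pre-Processors
    "timer",           # Timers
    "sampler",         # the Sampler itself
    "post_processor",  # Post-Processors
    "assertion",       # Assertions
    "listener",        # Listeners
]


@dataclass
class ScopedElement:
    kind: str  # one of EXECUTION_ORDER
    name: str


def processing_order(elements: List[ScopedElement]) -> List[str]:
    """Names of the in-scope elements in the order they are processed for one sample.

    Elements of the same kind keep their test-tree order (sorted() is stable).
    """
    rank = {kind: i for i, kind in enumerate(EXECUTION_ORDER)}
    return [e.name for e in sorted(elements, key=lambda e: rank[e.kind])]


# Tree order: the timer sits below the sampler in the tree, yet it still runs first.
tree = [
    ScopedElement("sampler", "GET /login"),
    ScopedElement("timer", "Think time"),
    ScopedElement("assertion", "Check no error text"),
    ScopedElement("pre_processor", "Set user id"),
]
print(processing_order(tree))
# ['Set user id', 'Think time', 'GET /login', 'Check no error text']
```

The point of the model is that position in the tree does not decide when a timer or assertion runs; its type and scope do.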

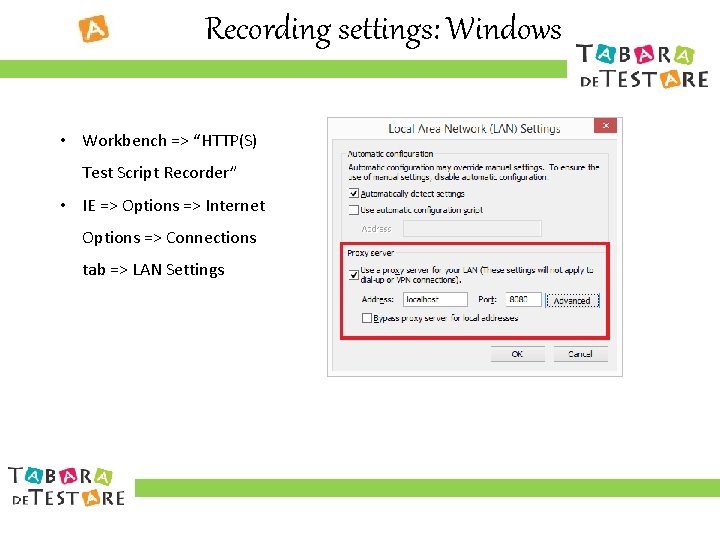

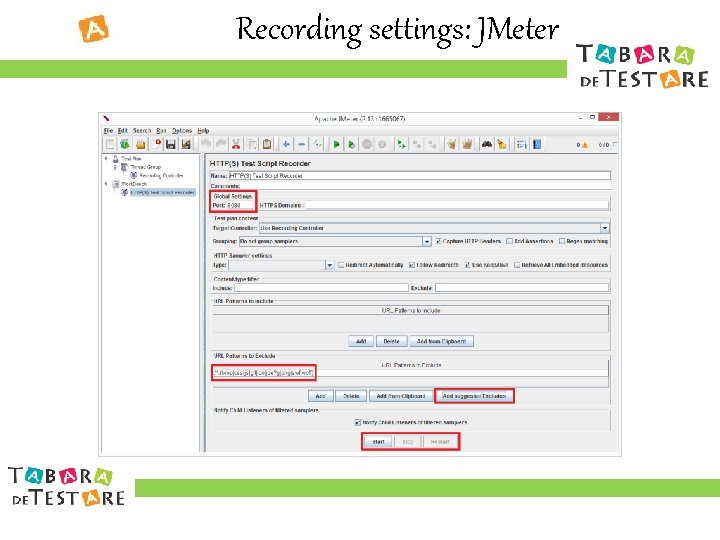

Recording settings: Windows
• WorkBench => “HTTP(S) Test Script Recorder”
• IE => Options => Internet Options => Connections tab => LAN Settings (point the proxy at the recorder's host and port, 8888 by default)

Recording settings: JMeter

Recording tips
• Set adequate URL exclude patterns (e.g., for static resources)
• Start clean sessions
• Group samplers in Logic Controllers during recording
• Name Logic Controllers after user actions, following Clean Code naming conventions, for better readability
• Remove duplicated requests (some requests captured by the Recording Controller are also made as a redirection from other requests)
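A minimal sketch of what exclude patterns and duplicate removal do to a recorded URL list, assuming (as the recorder does, to the best of my knowledge) that each pattern is a regular expression matched against the whole URL. The patterns and the helper `filter_recorded` are hypothetical examples; Python's `re` syntax is close to, but not identical to, the Java regex flavour JMeter uses.

```python
import re
from typing import Iterable, List

# Hypothetical exclude patterns for static resources and tracking calls;
# adjust them to the application being recorded.
EXCLUDE_PATTERNS = [
    r"(?i).*\.(bmp|css|js|gif|ico|jpe?g|png|svg|woff2?)(\?.*)?",
    r".*/analytics/.*",
]


def filter_recorded(urls: Iterable[str],
                    patterns: Iterable[str] = EXCLUDE_PATTERNS) -> List[str]:
    """Drop URLs fully matched by any exclude pattern, then drop exact repeats."""
    compiled = [re.compile(p) for p in patterns]
    kept: List[str] = []
    seen = set()
    for url in urls:
        if any(rx.fullmatch(url) for rx in compiled):
            continue  # excluded, e.g. an image or stylesheet
        if url in seen:
            continue  # e.g. a redirect target captured a second time
        seen.add(url)
        kept.append(url)
    return kept
```

Dropping every repeat of a URL is deliberately blunt: a page that legitimately calls the same URL twice (polling, for instance) needs a closer look before the second request is deleted.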

Parameterize script

Tips & tricks
• Maintainability and readability:
   • Keep config elements at the top (Test Plan level) for better readability
   • Keep hardcoding to a minimum (timer values, server name, server port and paths should be kept in global variables)
   • Keep global/static (per-thread) variables at Test Plan level
   • Use properties to share variables between threads (${__setProperty(..)}, ${__property(…)})
   • Extract date and time only once in the script and store them in a variable for later use
   • Assert against false positives: also check the response content of pages with status 200 OK for error messages
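The false-positive check in the last bullet boils down to the predicate below, sketched in plain Python rather than as a JMeter element. The marker strings and the function name are hypothetical placeholders; inside JMeter the same idea is usually expressed with a Response Assertion on the response text using the Contains rule together with Not.

```python
from typing import Iterable

# Hypothetical error markers: replace them with the messages your application
# really renders on its error pages.
ERROR_MARKERS = ("An error has occurred", "Exception", "Session expired")


def is_real_success(status_code: int, body: str,
                    markers: Iterable[str] = ERROR_MARKERS) -> bool:
    """Pass a sample only if it returned 200 OK *and* its body has no error marker."""
    if status_code != 200:
        return False
    return not any(marker in body for marker in markers)
```

A friendly error page served with 200 OK would pass a status-only check; the body check is what catches it.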

Tips & tricks
• Running:
   • Change the default JVM memory settings to avoid OutOfMemory (OOM) errors
   • Be careful with the “CSV Data Set Config” element settings
   • Use JMeter GUI mode to create the script and non-GUI mode for long test runs
   • Be careful with parent-child relationships between components (a timer set at controller level instead of sampler level applies to every sampler in that controller, which is usually not what you want)
   • Use the Ramp-Up Period and Timers to simulate real user-app interaction
   • Use setUp and tearDown logic from JMeter or Jenkins (Ant, Maven)
   • Use custom plugin elements as needed (jmeter-plugins.org)
   • Run the recorded script with a single user and with multiple concurrent users
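The Ramp-Up Period spreads thread starts evenly: the JMeter manual describes N threads with a ramp-up of R seconds as each thread starting R/N seconds after the previous one. A small helper (hypothetical name) that computes those start offsets, useful for sanity-checking a plan before a long run:

```python
from typing import List


def thread_start_offsets(num_threads: int, ramp_up_s: float) -> List[float]:
    """Start time, in seconds from test start, of each thread in a Thread Group.

    With N threads and a ramp-up of R seconds, thread i starts at i * R / N.
    """
    if num_threads <= 0:
        raise ValueError("num_threads must be positive")
    if ramp_up_s < 0:
        raise ValueError("ramp_up_s must be non-negative")
    step = ramp_up_s / num_threads
    return [i * step for i in range(num_threads)]
```

For example, 10 threads with a 100-second ramp-up start at 0, 10, 20, ... 90 seconds. The long run itself is then typically started in non-GUI mode, e.g. `jmeter -n -t plan.jmx -l results.jtl`, where `plan.jmx` and `results.jtl` are placeholder file names.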

Tips & tricks
• Reporting:
   • Check “Generate parent sample” on Transaction Controllers
   • Log errors as XML (whole response) and successes as CSV (response summary)
   • Use Simple Data Writer to save bulk results during long (non-GUI) runs instead of memory-intensive listeners like View Results Tree
• Other:
   • Use “/” instead of “\” before variables in script paths
   • Use jmeter-t.bat to open scripts with a double-click
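When successes are logged as CSV, the results file can be summarised offline instead of keeping heavy listeners open. A sketch assuming a CSV JTL with a header row that includes JMeter's default `label`, `elapsed` and `success` columns; the 90th percentile uses the nearest-rank method, which may not match JMeter's own Aggregate Report figure exactly, and `aggregate` is a hypothetical helper name.

```python
import csv
import io
from collections import defaultdict
from typing import Dict, List


def aggregate(jtl_csv: str) -> Dict[str, dict]:
    """Per-label sample count, average, 90th percentile and error % from a CSV JTL."""
    elapsed: Dict[str, List[int]] = defaultdict(list)
    failures: Dict[str, int] = defaultdict(int)
    for row in csv.DictReader(io.StringIO(jtl_csv)):
        label = row["label"]
        elapsed[label].append(int(row["elapsed"]))  # response time in ms
        if row["success"].strip().lower() != "true":
            failures[label] += 1
    report: Dict[str, dict] = {}
    for label, times in elapsed.items():
        ordered = sorted(times)
        n = len(ordered)
        rank = (90 * n + 99) // 100  # nearest-rank 90th percentile, 1-based
        report[label] = {
            "count": n,
            "avg_ms": sum(ordered) / n,
            "p90_ms": ordered[rank - 1],
            "error_pct": 100.0 * failures[label] / n,
        }
    return report
```

Reading the file with `csv.DictReader` keys everything by column name, so extra columns in the JTL (latency, bytes, thread names) are simply ignored.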

Process considerations
• Unstable environments or scripts lead to inconclusive results
• Agile (Scrum):
   • Obtaining a code freeze is “mission impossible” => code changes made after testing is done mean that performance testing has to be repeated
   • Not enough time for performance testing => it is mostly done at the end of the sprint and sometimes at the beginning of the next sprint

General tips
• Choose the appropriate testing tool (e.g., SoapUI for web services)
• Run tests on environments as close as possible to production in terms of processor, disks, memory and network
• Make sure the application is stable (run load tests after functional testing)
• Test the most business-critical areas of the application (don’t use JMeter for functional test automation, such as asserting the presence of every button and label on a page)
• Provide sufficient, but realistic, test data in the test environment (too much test data will only show potential bottlenecks that are not yet present in the real app)
• Simulate a realistic number of concurrent users of each type (e.g., admin, user)
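To simulate a realistic number of each user type, a total thread count can be split by an expected user mix, for instance one Thread Group per user type. The sketch below uses a largest-remainder split so the counts always add up to the total; the mix percentages and the `split_threads` name are illustrative assumptions, not values from this deck.

```python
from typing import Dict


def split_threads(total: int, mix: Dict[str, float]) -> Dict[str, int]:
    """Split `total` threads across user types by percentage (largest remainder)."""
    if abs(sum(mix.values()) - 100.0) > 1e-9:
        raise ValueError("mix percentages must add up to 100")
    raw = {name: total * pct / 100.0 for name, pct in mix.items()}
    counts = {name: int(value) for name, value in raw.items()}  # floor
    leftover = total - sum(counts.values())
    # Hand the remaining threads to the types with the largest fractional parts.
    by_remainder = sorted(raw, key=lambda n: raw[n] - counts[n], reverse=True)
    for name in by_remainder[:leftover]:
        counts[name] += 1
    return counts
```

For example, `split_threads(50, {"admin": 10, "user": 90})` gives 5 admin and 45 user threads.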

General tips
• Upgrading hardware is not a bullet-proof solution (it may make the problem go away for a while, but the problem will come back later)
• Don’t overload the CPU or memory of the JMeter and application machines => use distributed (master/slave) testing => keep total processor time below the 80% threshold to get consistent results
• Run a smoke test (1 thread, 1 loop) before each long test run
• Use version control software to manage script versions
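Two bits of arithmetic behind the distributed-testing tip. In JMeter's distributed mode each remote engine runs the whole test plan, so the effective number of threads is the plan's thread count multiplied by the number of engines; the second helper flags CPU samples that are not below the 80% threshold. Both function names are hypothetical, and the CPU samples are assumed to come from a monitor such as PerfMon.

```python
from typing import List, Sequence


def threads_per_engine(target_total: int, engines: int) -> int:
    """Threads to configure in the plan so that `engines` remote engines reach the target.

    Every remote engine runs the whole plan, so the effective load is
    (threads in the plan) x (number of engines). Rounds up.
    """
    if engines <= 0:
        raise ValueError("engines must be positive")
    return -(-target_total // engines)  # ceiling division


def samples_over_threshold(cpu_pct: Sequence[float], threshold: float = 80.0) -> List[int]:
    """Indices of CPU samples (in %) that are not below the threshold."""
    return [i for i, value in enumerate(cpu_pct) if value >= threshold]
```

A run whose load generators show any flagged samples is a candidate for adding another engine rather than for drawing conclusions about the application.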

Most used components

Windows PerfMon
• Examines how the programs you run affect your computer's performance, both in real time and by collecting log data for later analysis
• Uses performance counters, event trace data and configuration information, which can be combined into Data Collector Sets:
   • Performance counters are measurements of system state or activity
   • Event trace data is collected from trace providers
   • Configuration information is collected from key values in the Windows registry
• Start it with Run => perfmon
• Create templates for later use
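Counter logs are easiest to post-process when exported to CSV (for example with the `typeperf` or `relog` command-line tools). A sketch assuming the usual PDH-CSV layout: a header row whose first cell is `(PDH-CSV 4.0)` and whose other cells are counter paths, followed by one timestamped row per sample, with blank cells for missed samples. `read_counters` is a hypothetical helper.

```python
import csv
import io
from typing import Dict, List


def read_counters(perf_csv: str) -> Dict[str, List[float]]:
    """Map each counter path in a PDH-CSV export to its numeric samples.

    Assumes the first column holds timestamps and every other column one counter;
    blank cells (missed samples) are skipped.
    """
    rows = list(csv.reader(io.StringIO(perf_csv)))
    if not rows:
        return {}
    counters = rows[0][1:]  # skip the "(PDH-CSV 4.0)" timestamp header cell
    series: Dict[str, List[float]] = {name: [] for name in counters}
    for row in rows[1:]:
        for name, cell in zip(counters, row[1:]):
            cell = cell.strip()
            if cell:
                series[name].append(float(cell))
    return series
```

The resulting lists can then be averaged or checked against a threshold such as 80% processor time.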

Q&A

Thank you
Adrian Ciobanu, Senior Tester @Endava
