Public Performance Testing in Nutshell with Apache JMeter

Performance Testing in Nutshell with Apache JMeter Example
Tomas Veprek
Software Performance Tester
Tieto, Testing Services
tomas.veprek@tieto.com

Agenda
Performance Testing
1. What is it?
2. Why do we bother?
3. How do we do it?
4. When do we do it?
5. Who benefits from it?
6. Where do we do it?
© Tieto Corporation

About Me …
• Graduated from Technical University of Ostrava in 2003
• Since 2005 employed at Tieto Czech
• Functional software tester
• 3rd-tier support of EMC Documentum applications
• Performance tester (2007 - present)
• Proponent of Context-Driven School of Testing

1. What is performance testing?

Software testing is …
The process of evaluating a product by learning about it through exploration, experimentation, which includes to some degree: modelling, study, observation, inference, etc. -- James Bach

Performance testing is …
• Type of software testing that evaluates a software product mainly for the following qualities:
  • Performance
  • Stability
  • Scalability

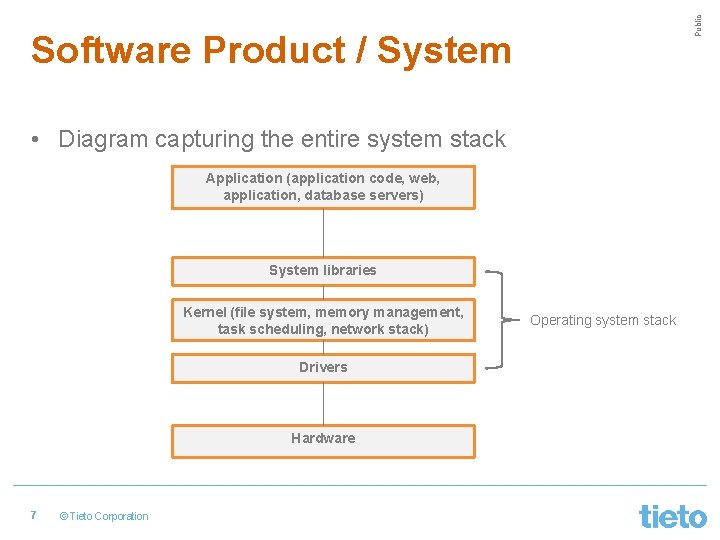

Software Product / System
• Diagram capturing the entire system stack:
  • Application (application code; web, application and database servers)
  • System libraries
  • Kernel (file system, memory management, task scheduling, network stack)
  • Drivers
  • Hardware
Diagram label: Operating system stack

Performance
• How fast are operations performed by the system under certain load?
• Operations:
  • Submitting data after clicking a button on an HTML page
  • REST API call
  • Disk read / write operation
• Different people see performance differently
• Different tasks imply different performance expectations

Key Performance Metrics
• Response time
• Latency
• Throughput
• IOPS
• Utilisation
• Saturation

Response Time and Latency
• Response time = time for an operation to complete, including the time spent waiting and being serviced
• Latency = time an operation spent waiting in a queue
• Response time = Latency + Service time
• Both metrics make it easy to quantify degradation and improvement
• Interpretation depends on the purpose of the operation
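The relation "Response time = Latency + Service time" can be sketched in a few lines of Python. The function name and the sample timings are illustrative assumptions, not measurements from the presentation.

```python
def response_time(latency_s: float, service_time_s: float) -> float:
    """Response time = latency (time waiting in a queue) + service time."""
    return latency_s + service_time_s


# Hypothetical timings for a single operation, in seconds.
queued_s = 0.12    # assumed time spent waiting in a queue
serviced_s = 0.30  # assumed time spent being serviced

print(f"Response time: {response_time(queued_s, serviced_s):.2f} s")
```

If only the response time and the service time are measured, the latency follows by subtraction.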

Latency - HTTP GET Request
• Assume that a GET request consists of:
  • DNS resolution
  • Establishing TCP connection
  • Data transfer from the server to the client
• What do we mean by latency and response time?

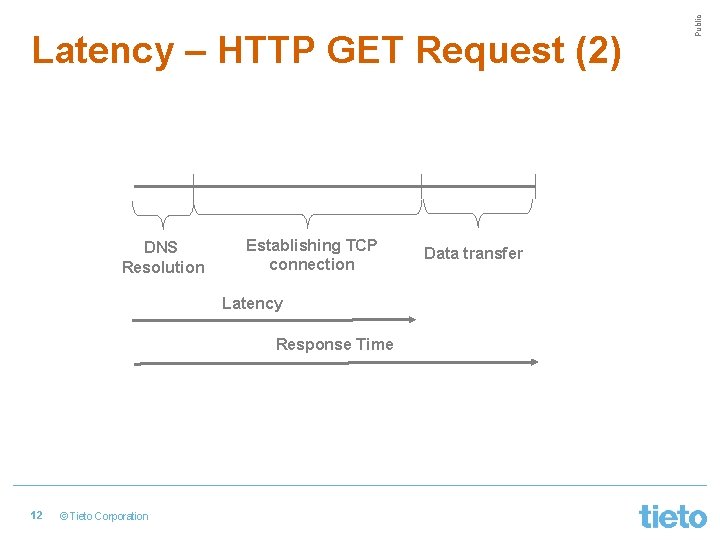

Latency – HTTP GET Request (2)
Diagram labels: DNS resolution, Establishing TCP connection, Data transfer, Latency, Response time

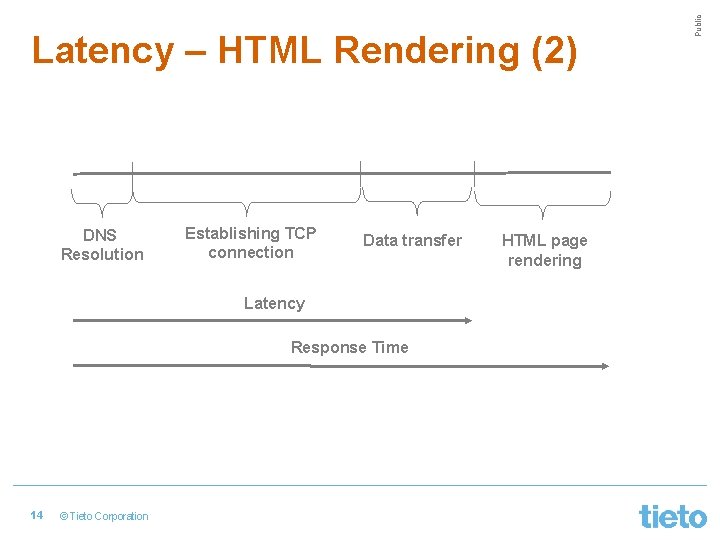

Latency – HTML Rendering
• User submits the data entered into a form on an HTML page and waits until the page is rendered with the server response.
• What do we mean by latency and response time?

Latency – HTML Rendering (2)
Diagram labels: DNS resolution, Establishing TCP connection, Data transfer, HTML page rendering, Latency, Response time

Throughput
• The rate at which work is completed
• The meaning depends on the target evaluated
• Examples:
  • Bytes / bits per second
  • SQL queries per second
  • REST calls per second
  • Transactions per second

IOPS
• Input / output operations completed per second
• Throughput-oriented metric
• The meaning depends on the target evaluated
• Examples:
  • Network devices (TCP) – packets received / sent per second
  • Block devices – reads / writes per second

Utilisation
• Time-based definition: How busy a resource was during a period of time
  • Queuing network theory: U = B / T * 100 [%], where B is the time the resource was busy and T is the length of the observation period
  • CPU utilisation
  • Disk utilisation
• Capacity-based definition: The extent to which the capacity of a resource was used during a time period.
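A minimal sketch of the time-based formula U = B / T * 100 [%]. The function name and the sample numbers are assumptions chosen for illustration.

```python
def utilisation_percent(busy_time_s: float, observation_time_s: float) -> float:
    """Time-based utilisation U = B / T * 100 [%]."""
    if observation_time_s <= 0:
        raise ValueError("observation period must be positive")
    return busy_time_s / observation_time_s * 100.0


# Hypothetical example: a disk was busy for 45 s of a 60 s observation window.
print(f"Disk utilisation: {utilisation_percent(45, 60):.1f} %")
```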

Saturation
• The extent to which a resource has queued work because it cannot accept more work
• Capacity-based utilisation >= 100%
• Increases latency and response time
• Bottleneck = the resource that limits performance

Scalability
• How does the performance of the product change under increasing load?
• Load:
  • Hourly number of users accessing the product
  • API calls per second
  • Amount of data uploaded to the product per second
• A system may perform well and still not be scalable

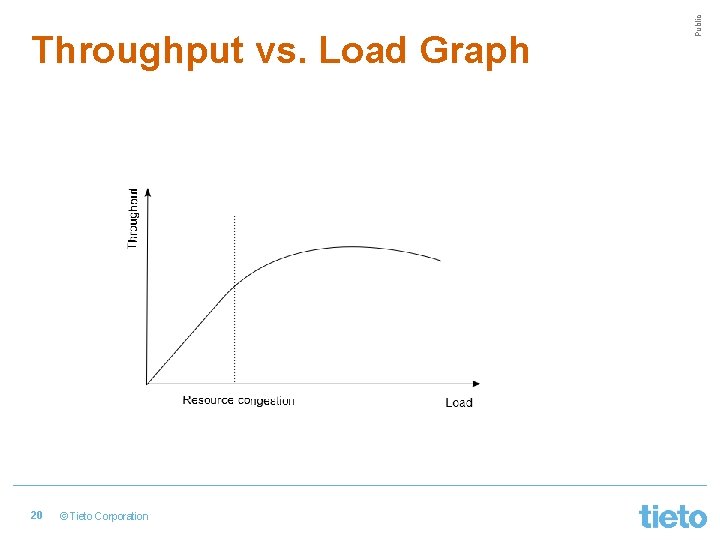

20 © Tieto Corporation Public Throughput vs. Load Graph

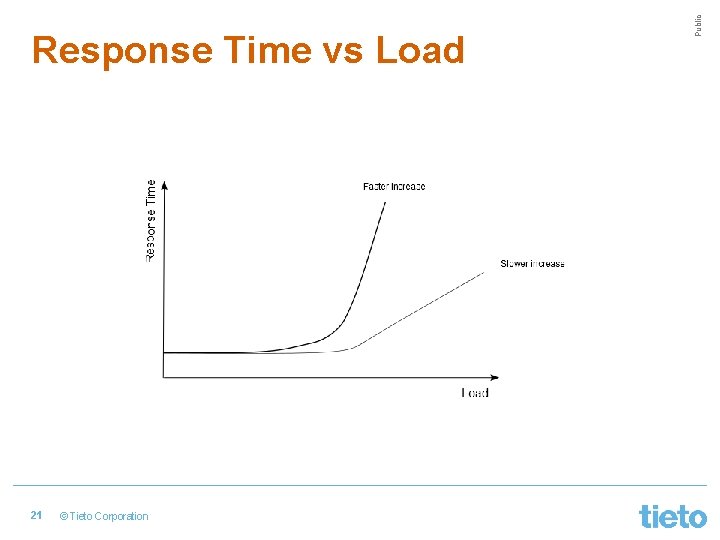

21 © Tieto Corporation Public Response Time vs Load

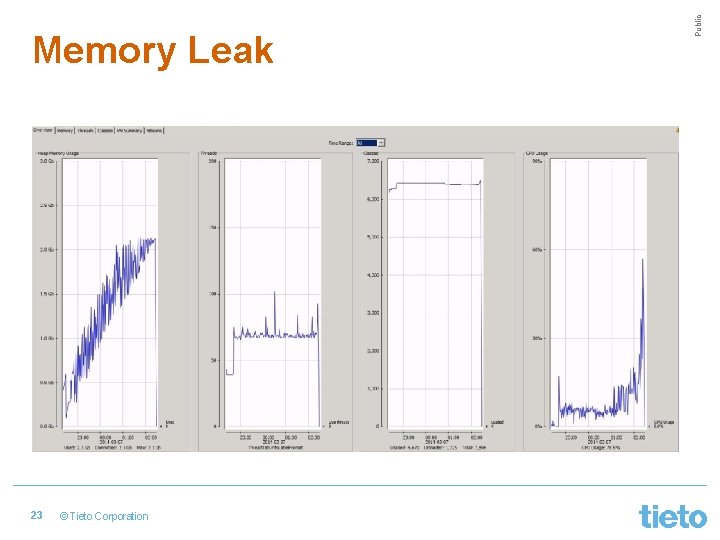

Stability
• Does the system perform over a long time?
• Does performance get back to normal after an exceptional situation?
• Memory leaks
• Excessive logging on disk devices

Memory Leak

2. Why do performance testing?

It is all about risks and consequences
Diagram labels: Costs, Benefits

Risks and Consequences
Risk: System may crash after being released for public use
Consequences:
• Company will lose money
• Company may lose its customers and its credit

Risks and Consequences (2)
Risk: System may be slower than our competitors’ systems
Consequences:
• Users may complain
• Company may lose money

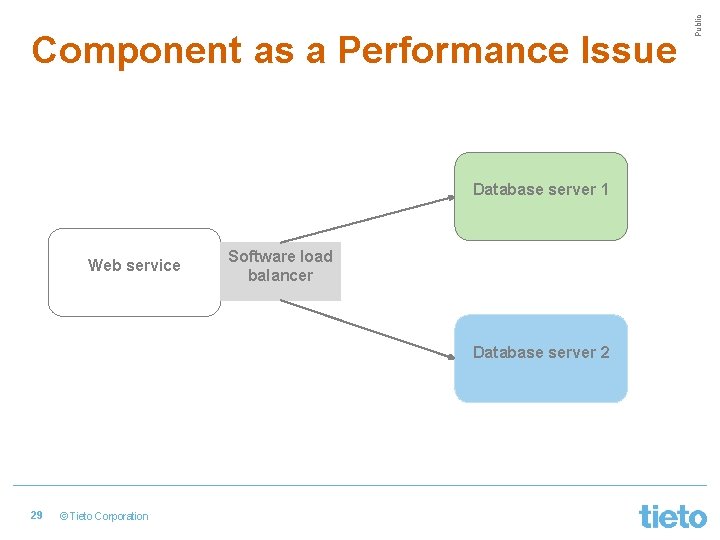

Risks and Consequences (3)
Risk: The new software component integrated in our system may degrade its performance
Consequences:
• User experience will be adversely affected
• Additional costs for the company to fix the problem

Component as a Performance Issue
Diagram labels: Web service, Software load balancer, Database server 1, Database server 2

Risks and Consequences (4)
Risk: System may not be able to handle the occasional high loads that occur throughout the year
Consequences:
• Customers will complain loudly
• Company will lose money and credit
• Customers may leave for competitors’ systems

Risks and Consequences (5)
Risk: System will not be able to run over a long time
Consequences:
• System has to be restarted during the evening hours
• Users may complain about system unavailability

3. How do we do performance testing?

Common Performance Testing Activities
• Problem statement clarification
• Workload modelling
• Understanding performance requirements
• Performance test design
• Load generation tool selection

Common Performance Testing Activities (2)
• Performance test implementation
• Test data creation
• Monitoring setup
• Performance test execution
• Test results analysis
• Reporting to stakeholders

How does it all begin?
Diagram labels: Client, Performance tester

Conversation with Client
• Client: “We are just about to release a new version of a software application and we want to do performance testing.”
• P. Tester: “When exactly are you releasing?”
• Client: “In one week.”
• P. Tester: “What do you want to learn from performance testing?”
• Client: “How fast the application is compared to the previous version.”

Conversation with Client (2)
• P. Tester: “Did you performance test the previous version?”
• Client: “No. We didn’t. I thought you would do it now.”
• P. Tester: “Do you realise there’s only one week left before deploying the new version in production?”
• Client: “Yes, the schedule is very tight, but you can just do simple performance tests.”
• P. Tester: “Uff!… What do you mean by simple tests?”
• ….

Activity #1: Clarify Problem Statement
• What is the system to be performance tested?
• What is the mission of performance testing (risks to be reduced, information to be collected)?
• What context will performance testing be done in?
  • Clients
  • Time and budget
  • System status
  • Development team & lifecycle
  • Experience and skills of performance testers

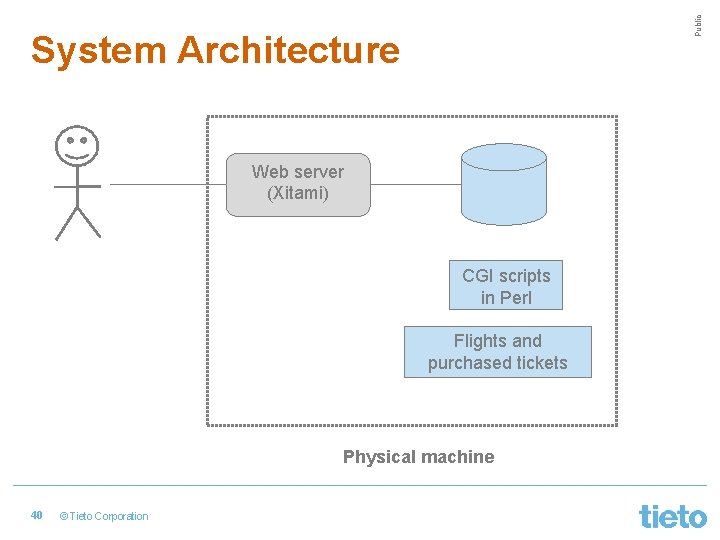

Problem Statement: Example
• Simple air ticket purchase system
• Web-based application
• Features:
  • User registration
  • Search for outbound and return flights
  • Purchase an air ticket
  • Browse the itinerary
• Mission: Test how the application performs under the anticipated load in production.

System Architecture
Diagram labels: Web server (Xitami), CGI scripts in Perl, Flights and purchased tickets, Physical machine

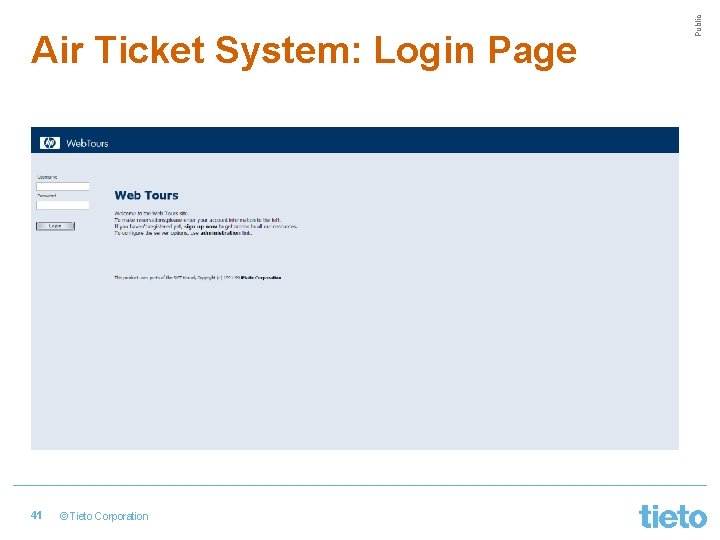

41 © Tieto Corporation Public Air Ticket System: Login Page

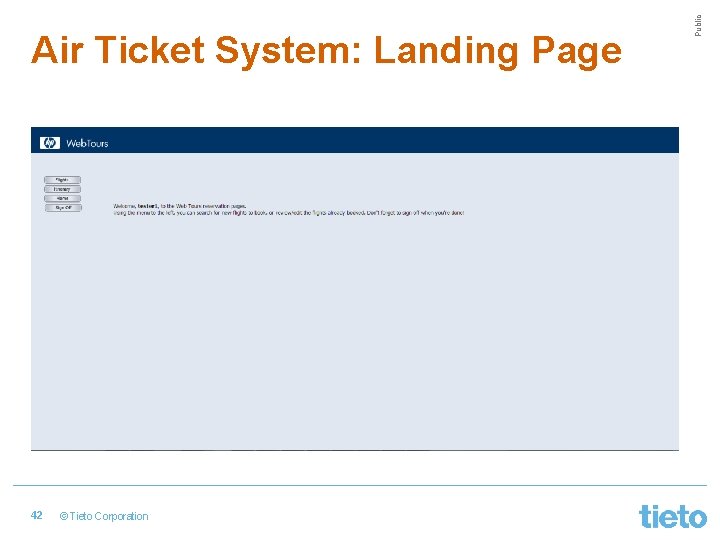

42 © Tieto Corporation Public Air Ticket System: Landing Page

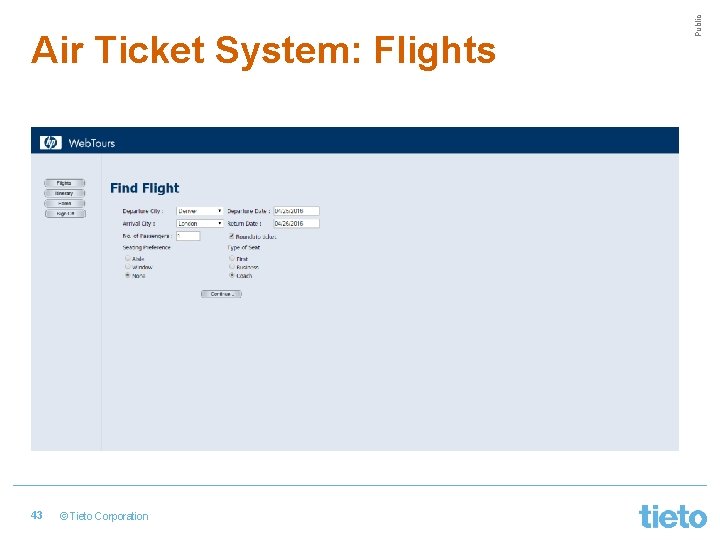

43 © Tieto Corporation Public Air Ticket System: Flights

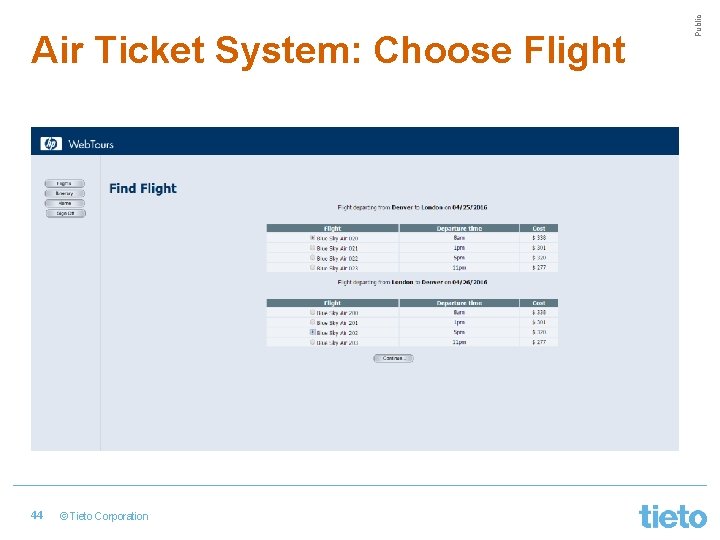

44 © Tieto Corporation Public Air Ticket System: Choose Flight

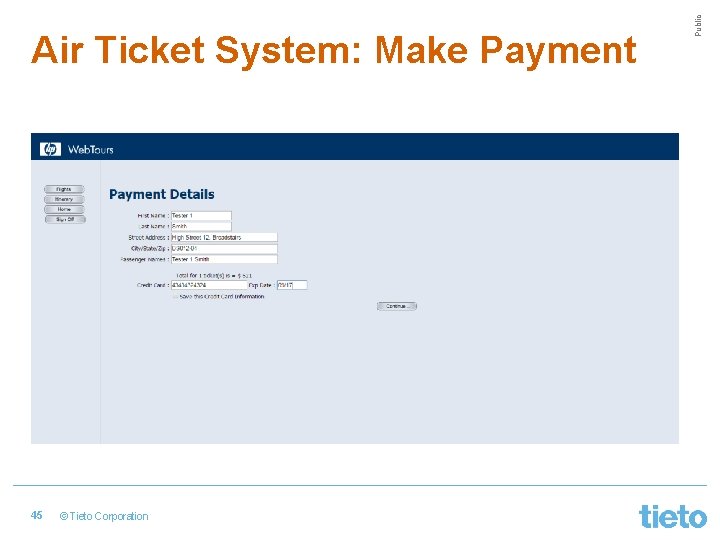

45 © Tieto Corporation Public Air Ticket System: Make Payment

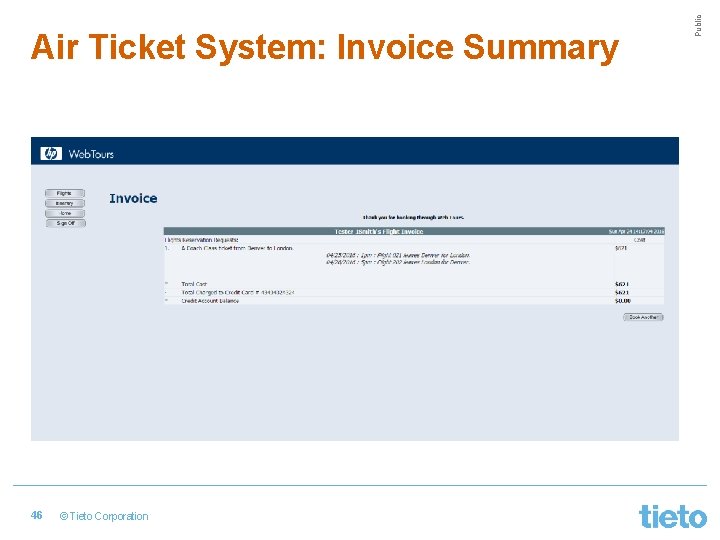

46 © Tieto Corporation Public Air Ticket System: Invoice Summary

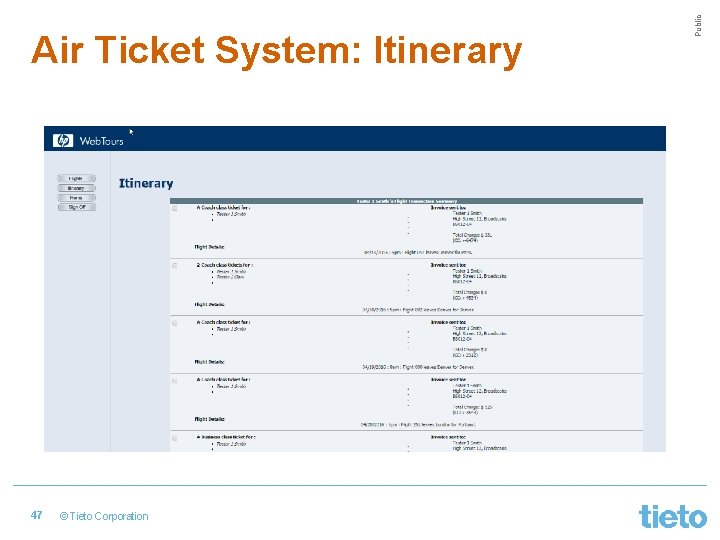

47 © Tieto Corporation Public Air Ticket System: Itinerary

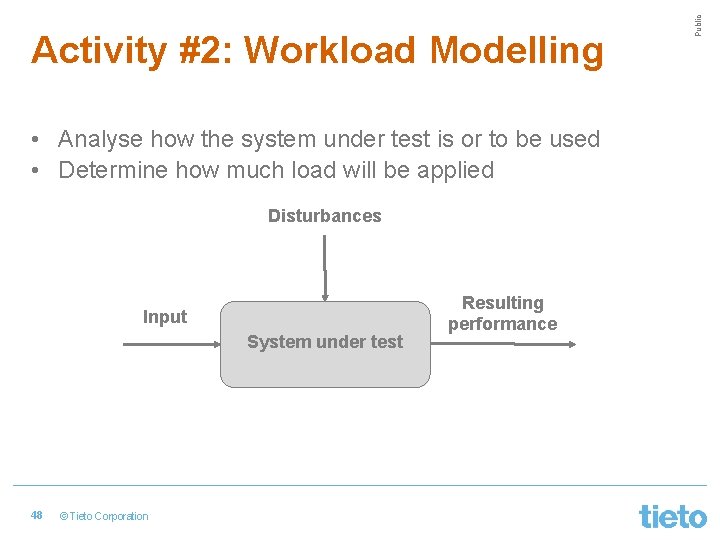

Activity #2: Workload Modelling
• Analyse how the system under test is, or is going to be, used
• Determine how much load will be applied
Diagram labels: Input, Disturbances, System under test, Resulting performance

Workload Modelling: Input
• Web applications: User actions over web pages
• Web services: REST / SOAP requests
• Relational database: SQL queries
• Java component: Method calls
• SMTP server: Requests to send emails

Workload Modelling: Input (2)
• Which operations do we consider as input?
1. Classify operations using the following criteria:
  • Frequency (popularity)
  • Business criticality
  • Data amount
  • Execution time

Workload Modelling: Input (3)
2. Determine the distribution of operations
  • Operations do not occur with the same frequency
  • Distribution may significantly affect performance
3. Determine the range of anticipated load
  • Focus on peak load (usually calculated per hour)
  • Sources of usage information:
    • Discussion with stakeholders
    • Analysis of access log files (e.g. Google Analytics)
    • Best guess based on similar software systems

Workload Modelling: Disturbances
• Scheduled jobs (e.g. database backups, search engine indexing)
• Multiple tenants on virtualised servers
• Distributed system architectures

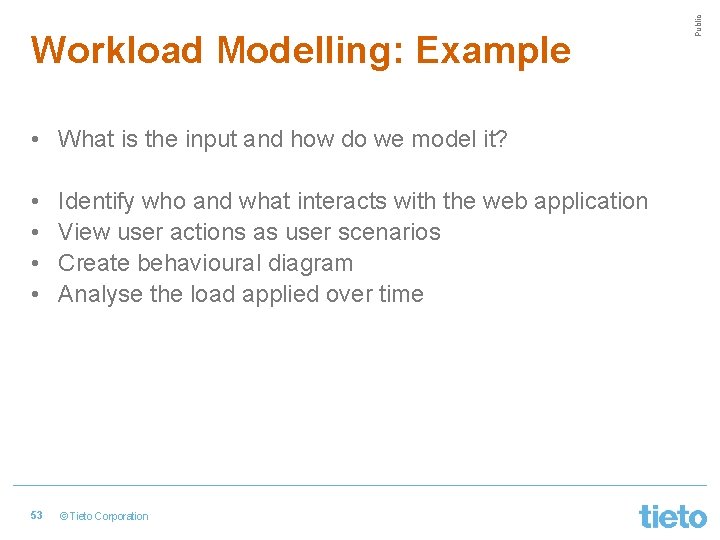

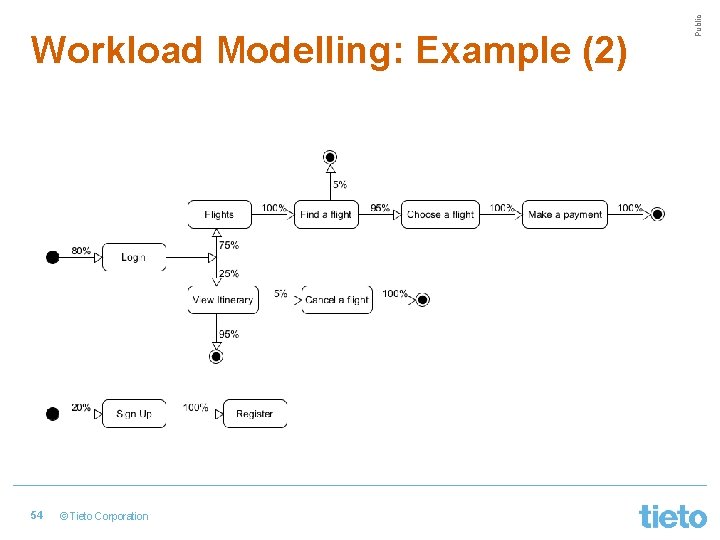

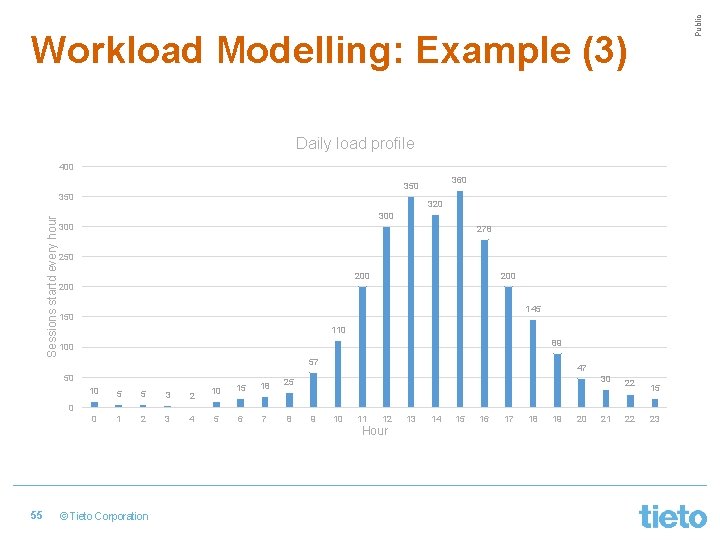

Workload Modelling: Example
• What is the input and how do we model it?
  • Identify who and what interacts with the web application
  • View user actions as user scenarios
  • Create behavioural diagram
  • Analyse the load applied over time

Workload Modelling: Example (2)

Workload Modelling: Example (3)
[Chart: Daily load profile. X-axis: Hour. Y-axis: Sessions started every hour.]

Activity #3: Understanding Performance Requirements
• Clients’ expectations of how the system should perform
• Reduce the subjectivity of performance
• Depend on the system under test
• Examples:
  • E-shop must handle 1200 orders every hour and 98% of all orders should be placed within 10 s.
  • Web service must handle 10000 searches for available phone numbers every hour, each in less than 5 s.
  • The system must have enough capacity to accommodate the load created by 500 more users without any effect on performance.

Performance Requirements: Example
• Air ticket sales application must handle the peak load of 400 users arriving every hour with the following conditions met:
  • Login must be completed in less than 3 s
  • In 98% of cases, available flights should be found in less than 5 s
  • Purchase of flight tickets should not take longer than 7 s
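A requirement such as "in 98% of cases, flights are found in less than 5 s" can be checked mechanically against measured response times. The sketch below is one possible reading of that check; the function names and the sample timings are assumptions, not data from the presentation.

```python
def fraction_within(samples_s, limit_s):
    """Return the fraction of response times strictly below the limit."""
    if not samples_s:
        raise ValueError("no samples to evaluate")
    return sum(1 for s in samples_s if s < limit_s) / len(samples_s)


def meets_requirement(samples_s, limit_s, required_fraction=1.0):
    """True if at least `required_fraction` of samples are below `limit_s`."""
    return fraction_within(samples_s, limit_s) >= required_fraction


# Hypothetical flight-search timings in seconds: 98 fast, 2 slow.
search_times_s = [1.2] * 98 + [6.0] * 2

print("Search requirement met:",
      meets_requirement(search_times_s, limit_s=5.0, required_fraction=0.98))
```

The login requirement (every login in less than 3 s) would use the same function with the default `required_fraction=1.0`.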

Activity #4: Performance Test Design
• What tests do we carry out in order to fulfil our mission?
• Load intensity and profile
• Test duration
• Ramp-up period
• Performance test types:
  • Load test
  • Scalability test
  • Stability test
  • High availability test

Performance Test Design: Example
• Load intensity: 400 sessions started every hour
• Think times: 10 s between page transitions (±25%)
• How many virtual users (threads) do we need?
• Ramp-up period: the longest session
• Duration: 2 hours
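One common way to estimate the number of virtual users is Little's law: concurrent sessions = arrival rate × average session duration. The sketch below applies it to the 400 sessions per hour from this example. The session length (6 page transitions, 3 s average response time) is an assumption made for illustration; the presentation does not state it.

```python
import math
import random


def virtual_users_needed(sessions_per_hour: float, session_duration_s: float) -> int:
    """Little's law: concurrency = arrival rate x time spent in the system."""
    arrival_rate_per_s = sessions_per_hour / 3600.0
    return math.ceil(arrival_rate_per_s * session_duration_s)


def think_time_s(base_s: float = 10.0, spread: float = 0.25) -> float:
    """Randomised think time, e.g. 10 s +/- 25 %."""
    return random.uniform(base_s * (1 - spread), base_s * (1 + spread))


# Assumed session shape (hypothetical): 6 page transitions, each with a
# 3 s response time followed by a 10 s think time.
pages = 6
session_duration_s = pages * (3.0 + 10.0)  # 78 s

print("Virtual users needed:", virtual_users_needed(400, session_duration_s))
print(f"Sample think time: {think_time_s():.1f} s")
```

Because the estimate scales linearly with session duration, longer sessions or slower responses raise the number of threads required for the same arrival rate.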

Activity #5: Load Generation Tool Selection
• In the majority of cases, a software tool for load generation is unavoidable
• Whether it is commercial or free depends on the budget
• The software tool is the tip of the iceberg => not the centre of performance testing
• Choose whichever load generation tool does the job

Load Generation Tool: JMeter
• Java-based, free and open source load generation tool
• Suitable for HTTP(S), FTP, SMTP, TCP, JDBC, MongoDB, shell scripts, …
• Scripts stored as XML files
• Currently version 2.13 (version 3.0 coming soon)
• Out-of-box bundle lacks reporting functions
• JMeter Plugins enhance JMeter functionalities
• JMeter cloud solutions: Blazemeter, Octoperf, flood IO
• Twitter: @ApacheJMeter, @jmeter_plugins
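JMeter test plans are usually executed without the GUI when generating load. The sketch below builds such a command line using JMeter's `-n` (non-GUI), `-t` (test plan) and `-l` (results log) options. The file names are hypothetical, and the `jmeter` launcher is assumed to be on the PATH.

```python
import subprocess


def build_jmeter_command(test_plan: str, results_file: str, jmeter_bin: str = "jmeter"):
    """Build a non-GUI JMeter command: -n (no GUI), -t (plan), -l (results log)."""
    return [jmeter_bin, "-n", "-t", test_plan, "-l", results_file]


if __name__ == "__main__":
    # Hypothetical file names for the air ticket example.
    command = build_jmeter_command("air_ticket_load_test.jmx", "results.jtl")
    print(" ".join(command))
    # Uncomment to actually launch JMeter (requires a local installation):
    # subprocess.run(command, check=True)
```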

Load Generation Tool: HP LoadRunner
• Commercial
• Only the community version, limited to 50 virtual users, is free
• Great range of supported protocols
• Scripts in the C language
• Advanced reporting of performance test results
• Applications: VuGen, Controller, Analysis, Load Agent
• Licences determined based on the number of virtual users and protocol bundles
• Licences are relatively costly

Activity #6: Performance Test Implementation
• Workload models transformed into test scripts (one-to-one relationship between scripts and paths or one complex script for web applications)
• Performance tests are composed of test scripts
• Supportive tools (e.g. HTTP proxies) used to implement test scripts

Performance Test Implementation: Example
• Paths in the workload model:
  • Path #1: Login -> Flights -> Find a flight -> exit
  • Path #2: Login -> Flights -> Find a flight -> Choose a flight -> Make a payment -> exit
  • Path #3: Login -> View Itinerary -> exit
  • Path #4: Login -> View Itinerary -> Cancel Flight -> exit
  • Path #5: Signup -> Register -> exit
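When a single script covers all paths, each virtual user has to pick a path according to the distribution of operations in the workload model. The sketch below shows weighted random selection over the five paths listed above. The percentages are hypothetical; the presentation does not give the actual distribution.

```python
import random
from collections import Counter

# Steps of each path, taken from the workload model above.
PATHS = {
    "Path #1": ["Login", "Flights", "Find a flight"],
    "Path #2": ["Login", "Flights", "Find a flight", "Choose a flight", "Make a payment"],
    "Path #3": ["Login", "View Itinerary"],
    "Path #4": ["Login", "View Itinerary", "Cancel Flight"],
    "Path #5": ["Signup", "Register"],
}

# Hypothetical share of sessions per path, in percent (assumption).
WEIGHTS = {"Path #1": 40, "Path #2": 25, "Path #3": 20, "Path #4": 5, "Path #5": 10}


def pick_path(rng: random.Random) -> str:
    """Choose a path name at random, respecting the assumed distribution."""
    names = list(PATHS)
    return rng.choices(names, weights=[WEIGHTS[n] for n in names], k=1)[0]


rng = random.Random(42)  # fixed seed so a run is repeatable
counts = Counter(pick_path(rng) for _ in range(1000))
for name in PATHS:
    print(name, counts[name], " -> ".join(PATHS[name]))
```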

Activity #7: Test Data Creation
• Amount, diversity and accuracy of test data may significantly affect performance test results
• Recyclable and non-recyclable data
• Static and dynamic data

Test Data: Example
• User names and passwords – CSV file
• Available flights – chosen randomly on the fly
• Seat preference and type – chosen randomly on the fly
• Number of passengers – kept constant during the test
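The CSV file with user names and passwords can be generated by a short script. The sketch below assumes a simple two-column layout and an invented naming scheme (`user0001`, `pass0001`); the real accounts must of course exist in the system under test.

```python
import csv
import io


def write_credentials(out, count: int) -> None:
    """Write `count` rows of username,password to a file-like object."""
    writer = csv.writer(out, lineterminator="\n")
    for i in range(1, count + 1):
        # Hypothetical naming scheme for test accounts.
        writer.writerow([f"user{i:04d}", f"pass{i:04d}"])


# Demonstration with an in-memory buffer; pass an open file to write to disk.
buffer = io.StringIO()
write_credentials(buffer, 3)
print(buffer.getvalue(), end="")
```

In JMeter, a file like this is typically read with the CSV Data Set Config element so that each thread logs in with a different account.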

Activity #8: Monitoring Setup
• Observing how the system performs
• System-level monitoring:
  • CPU, memory, disk, network, IOPS
• Application-level monitoring:
  • Heap memory utilisation
  • Web server active connections
  • Most time-consuming SQL queries
• Software tools: built-in OS tools, HP Sitescope, New Relic, Nagios

Activity #9: Performance Test Execution
• Ensure a sufficient amount of test data is created
• Activate the monitoring of the software system
• Launch the performance test manually or schedule its execution
• If possible, actively monitor the software system under load (e.g. log files, additional monitoring tools, profiling)
• The test may be stopped before its planned completion as a result of a high number of errors

Activity #10: Performance Test Result Analysis
• Depends on the purpose of the performance test
• Was the mission of the performance test met? If not, why?
• Load tests: Was the course of transaction response times stable during the test? If peaks occurred, what was the cause?
• In complex software systems, the interpretation of test results and any suspicious behaviour is team work
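A first pass over the results can be automated. The sketch below summarises a JMeter results file saved in CSV format, using the `label`, `elapsed` (milliseconds) and `success` columns. The sample rows are invented, and the columns actually present depend on how result saving is configured in JMeter.

```python
import csv
import io
from collections import defaultdict

# Invented sample in the shape of a CSV results file (subset of columns).
SAMPLE_JTL = """timeStamp,elapsed,label,responseCode,success
1460000000000,850,Login,200,true
1460000001000,1200,Login,200,true
1460000002000,4300,Find a flight,200,true
1460000003000,5200,Find a flight,500,false
"""


def summarise(jtl_file):
    """Aggregate count, errors, total and maximum elapsed time per label."""
    stats = defaultdict(lambda: {"count": 0, "errors": 0, "total_ms": 0, "max_ms": 0})
    for row in csv.DictReader(jtl_file):
        entry = stats[row["label"]]
        elapsed_ms = int(row["elapsed"])
        entry["count"] += 1
        entry["total_ms"] += elapsed_ms
        entry["max_ms"] = max(entry["max_ms"], elapsed_ms)
        if row["success"].lower() != "true":
            entry["errors"] += 1
    return dict(stats)


for label, entry in summarise(io.StringIO(SAMPLE_JTL)).items():
    average_ms = entry["total_ms"] / entry["count"]
    print(f"{label}: n={entry['count']} avg={average_ms:.0f} ms "
          f"max={entry['max_ms']} ms errors={entry['errors']}")
```

Averages hide peaks, so a summary like this is a starting point; the course of response times over the test still needs to be inspected.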

Activity #11: Reporting to Stakeholders
• Summarising the observations made from the performance test results in a way that is understandable and helpful for the stakeholders we report to
• Stakeholders:
  • Programmers
  • Product owner
  • Project manager
  • Users
• Form: verbal or written (agreed beforehand with the stakeholders)

Useful Links
• Apache JMeter plugins: http://jmeter-plugins.org/
• Scott Barber’s User Experience, not Metrics Series: http://www.perftestplus.com/pubs.htm
• Blazemeter JMeter Cloud: https://www.blazemeter.com/
• James Bach’s blog (Context-driven School of Testing): http://www.satisfice.com/blog/

Tomas Veprek
Software Performance Tester
Tieto, Testing Services
tomas.veprek@tieto.com

- Slides: 72