Parallel Computing
Andrew Tomko
COT 4810
12 February 2008

Parallel Computing
The simultaneous processing of different tasks by two or more microprocessors, as by a single computer with more than one central processing unit or by multiple computers connected together in a network.

Distributed Computing
A method of computer processing in which different parts of a program are run simultaneously on two or more computers that are communicating with each other over a network.
“Segmented” parallel processing

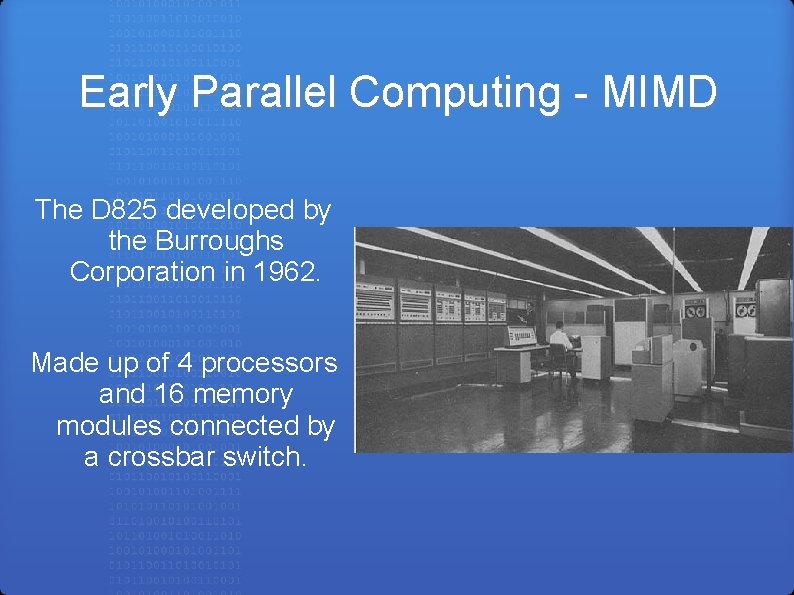

Early Parallel Computing - MIMD
The D825, developed by the Burroughs Corporation in 1962, consisted of 4 processors and 16 memory modules connected by a crossbar switch.

Early Parallel Computing - SIMD
The ILLIAC IV was developed by Daniel Slotnick in 1964 and was designed for up to 256 processors.
“The most infamous of supercomputers”

Today's Parallel Computers
IBM Blue Gene/L (2007 configuration): 106,496 compute nodes (212,992 processors), 596 teraFLOPS peak performance.

New Considerations
What is the best communication network?
How can the program be parallelized?
• Data Parallelism
• Functional Parallelism
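To make the two kinds of parallelism concrete, here is a minimal Python sketch. The function names (`square`, `data_parallel`, `functional_parallel`) are hypothetical, and threads from the standard `concurrent.futures` module stand in for separate processors. Under CPython's global interpreter lock a `ProcessPoolExecutor` would be needed for real CPU speedup, so this only illustrates how the work is decomposed.

```python
from concurrent.futures import ThreadPoolExecutor


def square(x: int) -> int:
    return x * x


def data_parallel(values: list[int], workers: int = 4) -> list[int]:
    """Data parallelism: the same operation applied to different pieces of the data."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(square, values))


def functional_parallel(values: list[int]) -> dict[str, float]:
    """Functional parallelism: different, independent operations run at the same time."""
    tasks = {"sum": sum, "max": max, "min": min, "mean": lambda v: sum(v) / len(v)}
    with ThreadPoolExecutor(max_workers=len(tasks)) as pool:
        futures = {name: pool.submit(fn, values) for name, fn in tasks.items()}
        return {name: f.result() for name, f in futures.items()}


if __name__ == "__main__":
    data = list(range(8))
    print(data_parallel(data))
    print(functional_parallel(data))
```

In the first function every worker runs `square` on a different element; in the second, each worker runs a different function over the same list.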

Popular Communication Networks
• 2-D Mesh
• Binary Tree
• Hypertree
• Butterfly
• Hypercube

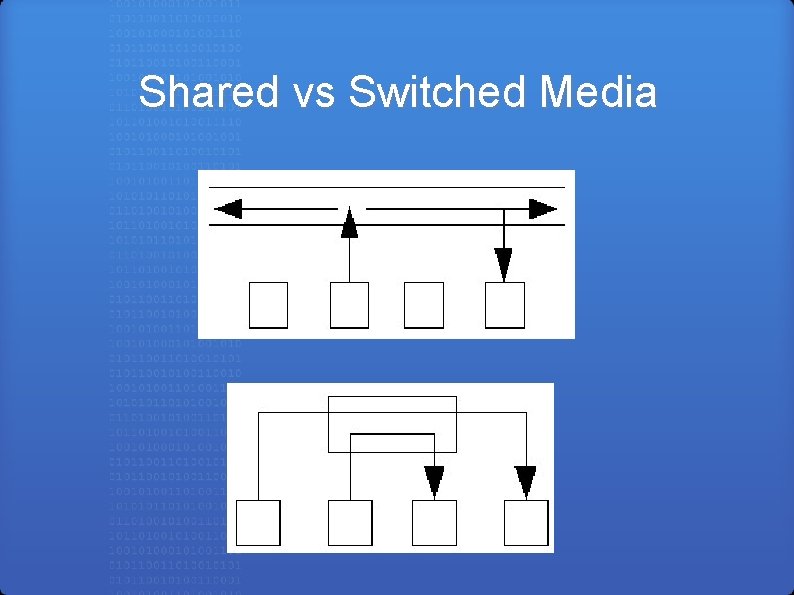

Shared vs Switched Media

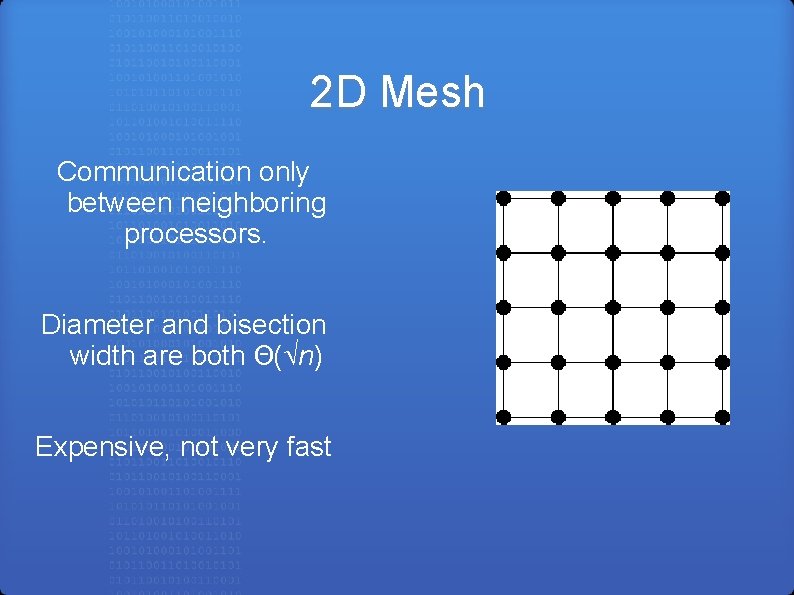

2-D Mesh
Communication is allowed only between neighboring processors.
For $n$ processors arranged as a $\sqrt{n} \times \sqrt{n}$ grid, diameter and bisection width are both $\Theta(\sqrt{n})$.
Expensive, not very fast.
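As a rough check on the $\Theta(\sqrt{n})$ diameter, the sketch below builds a $\sqrt{n} \times \sqrt{n}$ mesh without wraparound links (an assumption; a torus would behave differently), runs breadth-first search from every switch, and reports the longest shortest path, which comes out to $2(\sqrt{n} - 1)$ hops. Cutting the same mesh between its two middle columns severs one link per row, i.e. $\sqrt{n}$ links, which is where the $\Theta(\sqrt{n})$ bisection width comes from. The helper names are illustrative.

```python
from collections import deque


def mesh_neighbors(r: int, c: int, side: int):
    """Yield the up/down/left/right neighbors of switch (r, c) in a side x side mesh."""
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < side and 0 <= nc < side:
            yield nr, nc


def mesh_diameter(side: int) -> int:
    """Longest shortest path (in hops) in a side x side mesh, via BFS from every switch."""
    best = 0
    for start in ((r, c) for r in range(side) for c in range(side)):
        dist = {start: 0}
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nxt in mesh_neighbors(*node, side):
                if nxt not in dist:
                    dist[nxt] = dist[node] + 1
                    queue.append(nxt)
        best = max(best, max(dist.values()))
    return best


for side in (2, 4, 8, 16):
    n = side * side
    print(f"n={n:4d}  diameter={mesh_diameter(side):3d}  2*(sqrt(n)-1)={2 * (side - 1):3d}")
```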

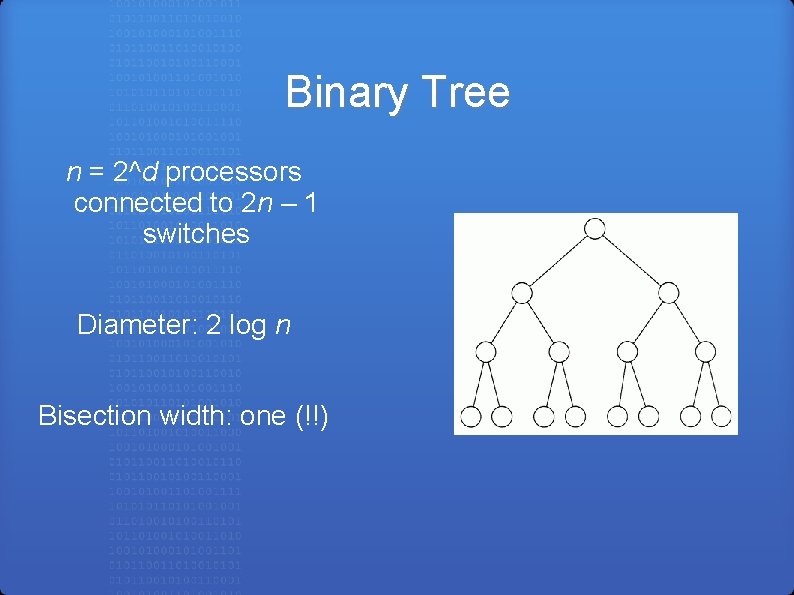

Binary Tree
$n = 2^d$ processors connected to $2n - 1$ switches
Diameter: $2 \log n$
Bisection width: one (!!)
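The $2 \log n$ diameter can be checked with a small sketch that numbers the $2n - 1$ switches heap-style (root 1, children of switch $i$ at $2i$ and $2i + 1$), so the $n$ leaf switches, one per processor, are $n$ through $2n - 1$. That numbering scheme and the helper names are assumptions for illustration, and hops are counted between leaf switches. The farthest pair of processors sits on opposite sides of the root, which is also why removing a single link at the root splits the processors in half.

```python
def tree_hops(a: int, b: int) -> int:
    """Number of links between switches a and b in a heap-numbered binary tree."""
    hops = 0
    while a != b:
        # A larger heap index is never shallower, so stepping it up toward the root
        # always moves toward the lowest common ancestor.
        if a > b:
            a //= 2
        else:
            b //= 2
        hops += 1
    return hops


def leaf_switch(p: int, n: int) -> int:
    """Leaf switch attached to processor p (0 <= p < n), assuming n is a power of two."""
    return n + p


def tree_diameter(n: int) -> int:
    """Largest processor-to-processor hop count, measured between leaf switches."""
    return max(tree_hops(leaf_switch(p, n), leaf_switch(q, n))
               for p in range(n) for q in range(n))


for d in range(1, 6):
    n = 2 ** d
    print(f"n={n:2d}  switches={2 * n - 1:2d}  diameter={tree_diameter(n):2d}  2*log2(n)={2 * d:2d}")
```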

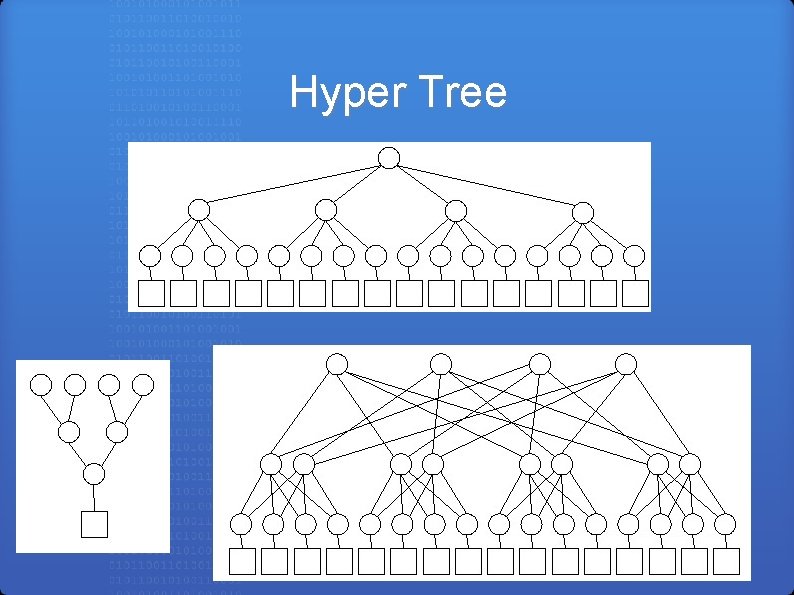

Hypertree
Has the low diameter of a binary tree, but an improved bisection width.
High speed and dependability, but also very expensive.

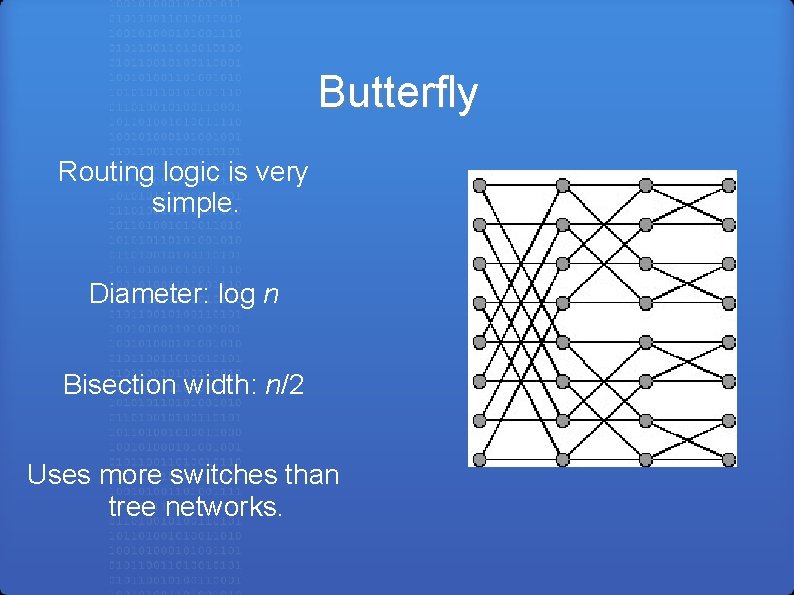

Butterfly
Routing logic is very simple.
Diameter: $\log n$
Bisection width: $n/2$
Uses more switches than tree networks.
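One way to see why butterfly routing is simple: with $n = 2^k$ columns and ranks $0$ through $k$, a message needs only one bit of the destination address at each rank to choose between the straight edge and the cross edge. The sketch assumes the common wiring in which switch $(i, j)$ links to $(i+1, j)$ and to $(i+1, j \oplus 2^{k-1-i})$; the function name is hypothetical. Every route takes exactly $k = \log n$ hops, and the $k + 1$ ranks of $n$ switches each are why the butterfly uses more switches than a tree.

```python
def butterfly_route(src: int, dst: int, k: int) -> list[tuple[int, int]]:
    """Switches (rank, column) visited from column src at rank 0 to column dst at rank k.

    Assumed wiring: switch (i, j) links to (i + 1, j) and (i + 1, j ^ 2**(k - 1 - i)).
    """
    path = [(0, src)]
    col = src
    for rank in range(k):
        bit = 1 << (k - 1 - rank)  # destination bit examined at this rank, most significant first
        if (col ^ dst) & bit:      # column disagrees with dst in this bit: take the cross edge
            col ^= bit
        path.append((rank + 1, col))  # record the switch reached at the next rank
    return path


k = 3  # n = 8 columns, k + 1 = 4 ranks, n * (k + 1) = 32 switches
print(butterfly_route(0b010, 0b111, k))  # [(0, 2), (1, 6), (2, 6), (3, 7)]
```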

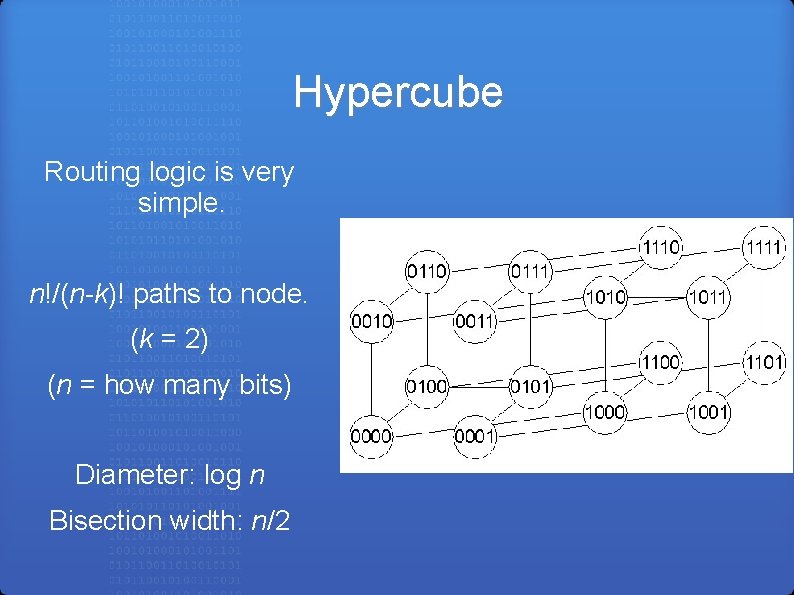

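Hypercube
Routing logic is very simple.
Number of paths of length $k$ leaving a node, each hop flipping a different bit: $d!/(d-k)!$, where $d = \log n$ is the number of bits in a node label (for example, $k = 2$ gives $d(d-1)$).
Diameter: $\log n$
Bisection width: $n/2$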
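A short sketch of hypercube routing, under the usual convention that two nodes are linked exactly when their $d$-bit labels differ in one bit. `ecube_route` fixes the differing bits from least to most significant (dimension-order, or e-cube, routing), and `shortest_paths` enumerates every minimal route: nodes whose labels differ in $h$ bits have $h!$ of them, one per ordering of those bits. `math.perm(d, k)` evaluates the $d!/(d-k)!$ count of length-$k$ paths that flip $k$ distinct bits. The helper names are hypothetical.

```python
from itertools import permutations
from math import perm


def ecube_route(src: int, dst: int, d: int) -> list[int]:
    """Dimension-order (e-cube) route in a d-dimensional hypercube: fix differing bits lowest first."""
    path = [src]
    node = src
    for bit in range(d):
        if (node ^ dst) >> bit & 1:  # labels still differ in this dimension
            node ^= 1 << bit         # cross the link along this dimension
            path.append(node)
    return path


def shortest_paths(src: int, dst: int) -> list[list[int]]:
    """Every minimal route: one per ordering of the bits in which src and dst differ."""
    diff = src ^ dst
    masks = [1 << b for b in range(diff.bit_length()) if diff >> b & 1]
    routes = []
    for order in permutations(masks):
        node, route = src, [src]
        for mask in order:
            node ^= mask
            route.append(node)
        routes.append(route)
    return routes


print(ecube_route(0b0000, 0b1011, 4))       # [0, 1, 3, 11]
print(len(shortest_paths(0b0000, 0b1011)))  # 6, i.e. 3! for labels differing in 3 bits
print(perm(4, 2))                           # 12 = 4!/(4-2)!
```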

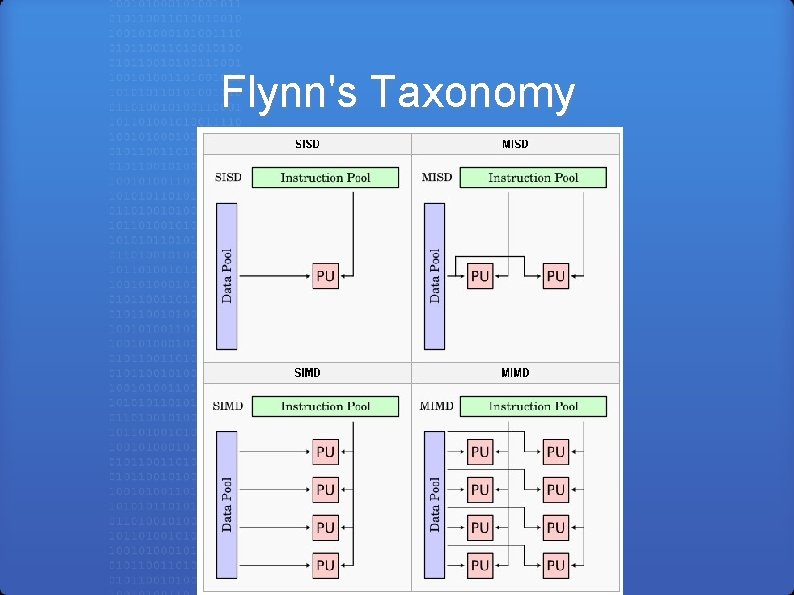

Flynn's Taxonomy

Parallel Algorithms
• Need a completely new mindset.
• Aim to keep all processors running at 100% utilization.
• Minimize communication as much as possible.

Foster's Design Methodology
• Partitioning
• Communication
• Agglomeration
• Mapping

Partitioning
The process of dividing the computation and the data into pieces.
• More tasks than processors
• Redundant computation and data storage minimized
• Tasks are about the same size
• Number of tasks increases with problem size
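A common way to meet the "tasks are about the same size" goal for array data is a block decomposition in which block sizes differ by at most one element. The sketch below is one such formula; the names `block_low`, `block_high`, `block_owner`, and `partition` are illustrative, and the example deliberately creates more tasks than the four processors assumed to be available.

```python
def block_low(i: int, tasks: int, n: int) -> int:
    """First index of block i when n items are split into `tasks` contiguous blocks."""
    return i * n // tasks


def block_high(i: int, tasks: int, n: int) -> int:
    """Last index (inclusive) of block i."""
    return block_low(i + 1, tasks, n) - 1


def block_owner(index: int, tasks: int, n: int) -> int:
    """Which block holds a given index, computed directly without searching."""
    return (tasks * (index + 1) - 1) // n


def partition(n: int, tasks: int) -> list[range]:
    """All blocks as index ranges; together they cover 0..n-1 exactly once."""
    return [range(block_low(i, tasks, n), block_high(i, tasks, n) + 1) for i in range(tasks)]


processors = 4
tasks = 3 * processors           # more tasks than processors
blocks = partition(50, tasks)
print([len(b) for b in blocks])  # sizes differ by at most one
```

Because every block is contiguous and its owner can be computed in constant time, no lookup table has to be replicated across processors.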

Communication
Local / global communication
• Communication balanced among tasks
• Each task communicates with only a small number of neighbors
• Tasks can perform communications concurrently
• Tasks can perform computations concurrently
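The sketch below illustrates purely local communication with a hypothetical one-dimensional smoothing problem: the data are split into blocks, and in each step every task receives just one boundary ("ghost") value from each neighbor, then updates its own block. Tasks are simulated sequentially in one Python process, so the two phases only mark where real message passing and concurrent computation would happen. The result matches an ordinary serial loop.

```python
def smooth_serial(u: list[float]) -> list[float]:
    """One smoothing step: endpoints fixed, each interior point becomes the mean of its neighbors."""
    if len(u) < 2:
        return list(u)
    return [u[0]] + [(u[i - 1] + u[i + 1]) / 2 for i in range(1, len(u) - 1)] + [u[-1]]


def smooth_tasks(blocks: list[list[float]]) -> list[list[float]]:
    """The same step with one task per non-empty block, talking only to its left and right neighbors."""
    p = len(blocks)
    # Communication phase: each task gets one ghost value per neighbor (None past the global ends).
    left = [blocks[t - 1][-1] if t > 0 else None for t in range(p)]
    right = [blocks[t + 1][0] if t < p - 1 else None for t in range(p)]
    # Computation phase: each task uses only its own block plus its two ghost values.
    result = []
    for t, block in enumerate(blocks):
        padded = [left[t]] + block + [right[t]]
        updated = []
        for i in range(1, len(padded) - 1):
            if padded[i - 1] is None or padded[i + 1] is None:
                updated.append(padded[i])  # a global endpoint stays fixed
            else:
                updated.append((padded[i - 1] + padded[i + 1]) / 2)
        result.append(updated)
    return result


u = [0.0, 4.0, 8.0, 0.0, 2.0, 6.0, 1.0]
blocks = [u[0:3], u[3:5], u[5:7]]
flat = [x for block in smooth_tasks(blocks) for x in block]
print(flat == smooth_serial(u))  # True
```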

Agglomeration
The process of grouping tasks into larger tasks to improve performance or simplify programming.
• Locality of the parallel algorithm has increased
• Replicated computations take less time than the communications they replace
• Amount of replicated data is small enough to allow scalability
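To see why agglomeration helps, consider summing $n$ numbers. If every element is its own task, combining them takes $n - 1$ messages no matter how the reduction tree is shaped; if the elements are first grouped into $p$ larger tasks that sum their blocks locally, only $p - 1$ messages remain. The sketch below counts messages under that simplified model (one message per pairwise combine), which is an assumption rather than a measurement; the function names are illustrative.

```python
def tree_reduce(partials: list[int]) -> tuple[int, int]:
    """Pairwise tree reduction of a non-empty list; returns (total, messages), one message per combine."""
    messages = 0
    while len(partials) > 1:
        merged = []
        for i in range(0, len(partials) - 1, 2):
            merged.append(partials[i] + partials[i + 1])  # one task sends its value to its partner
            messages += 1
        if len(partials) % 2:
            merged.append(partials[-1])  # the odd one out waits for the next round
        partials = merged
    return partials[0], messages


def agglomerated_sum(values: list[int], tasks: int) -> tuple[int, int]:
    """Group elements into `tasks` blocks, sum each block locally, then reduce the partial sums."""
    n = len(values)
    blocks = [values[i * n // tasks:(i + 1) * n // tasks] for i in range(tasks)]
    local = [sum(block) for block in blocks]  # no communication needed inside a block
    return tree_reduce(local)


values = list(range(1, 1025))
print(tree_reduce(values))          # (524800, 1023): one task per element
print(agglomerated_sum(values, 8))  # (524800, 7): eight agglomerated tasks
```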

Mapping
The process of assigning tasks to processors.
Goal: maximize utilization, minimize communication.
• Consider one task per processor and multiple tasks per processor
• Consider static and dynamic allocation of tasks
• Dynamic: the task allocator should not be a bottleneck
• Static: the ratio of tasks to processors should be at least 10:1
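The trade-off between static and dynamic allocation can be sketched with a toy simulation. The task costs are made-up numbers (every fourth task is ten times longer), communication cost is ignored, and the dynamic scheme is modeled as greedy list scheduling from a shared queue; all of these are assumptions. With 40 tasks on 4 processors (the 10:1 ratio above), a round-robin static mapping happens to place every long task on the same processor, while the dynamic queue spreads them out.

```python
import heapq


def static_cyclic(costs: list[float], p: int) -> float:
    """Static mapping: task t is assigned to processor t % p before execution. Returns the makespan."""
    loads = [0.0] * p
    for t, cost in enumerate(costs):
        loads[t % p] += cost
    return max(loads)


def dynamic_queue(costs: list[float], p: int) -> float:
    """Dynamic mapping: whichever processor becomes idle first takes the next queued task."""
    idle_at = [0.0] * p  # min-heap of idle times; a list of equal values is already a valid heap
    for cost in costs:
        start = heapq.heappop(idle_at)
        heapq.heappush(idle_at, start + cost)
    return max(idle_at)


def utilization(costs: list[float], p: int, makespan: float) -> float:
    """Fraction of total processor time spent doing useful work."""
    return sum(costs) / (p * makespan)


p = 4
costs = [10.0 if t % 4 == 0 else 1.0 for t in range(10 * p)]  # hypothetical task costs
for name, schedule in (("static", static_cyclic), ("dynamic", dynamic_queue)):
    span = schedule(costs, p)
    print(f"{name:8s} makespan={span:5.1f}  utilization={utilization(costs, p, span):.2f}")
```

The dynamic version needs a shared queue, which is exactly the component the slide warns must not become a bottleneck.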

Works Cited
Dewdney, A. K. The New Turing Omnibus. New York: Henry Holt and Company, LLC, 1993.
"parallel processing." The American Heritage® Dictionary of the English Language, Fourth Edition. Houghton Mifflin Company, 2004. Dictionary.com. 11 Feb. 2008. <http://dictionary.reference.com/browse/parallel processing>.
Quinn, Michael J. Parallel Programming. Boston: McGraw-Hill, 2004.

Questions
1) In a 4-dimensional hypercube, how many paths of length 3 are there from node 0110 to node 0001?
2) What are the four steps of Foster's process for designing parallel algorithms?
