Pano: Optimizing 360° Video Streaming with a Better Understanding of Quality Perception

Yu Guan, Chengyuan Zheng, Xinggong Zhang, Zongming Guo, Junchen Jiang

ACM SIGCOMM 2019, Beijing, China, August 2019

Presented by Kexiang Wang

Motivation

● VR expansion
  ○ 36.9 million VR users in the US (10% of the population)
  ○ There will be 55 million VR headsets in 2022
  ○ The delivery architecture is cheap and scalable.
● Conflicts between quality and bandwidth
  ○ Users’ attention is unevenly distributed.

Current Solution

● Observation: Users’ attention is unevenly distributed.
● Goal: Maximize the quality of the area that the user is watching, while lowering the quality of other areas.
  ○ Viewport-driven streaming
  ○ Spatially partitions a video into tiles and encodes each tile in multiple quality levels.
  ○ Dynamically assigns a higher quality level to tiles near the viewport.

Limitations of Viewport-driven streaming

● Each video chunk needs to be prefetched before the user watches it.
  ○ Viewport-driven streaming needs to anticipate the user’s viewport position before the user moves their head.
● Video size is significantly increased.

New quality-determining factors

● Users do not perceive the quality of 360° video the same way they do for non-360° video.
● Although the video size grows dramatically to create an immersive experience, a user’s span of attention remains largely constant.
● Key insight: the user-perceived quality of 360° videos is uniquely affected by users’ viewpoint movements.

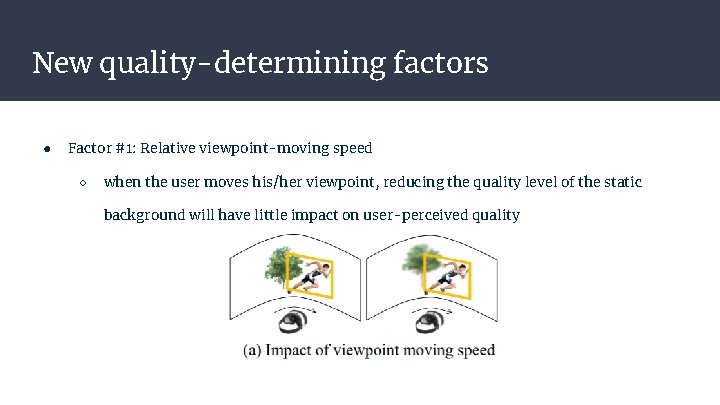

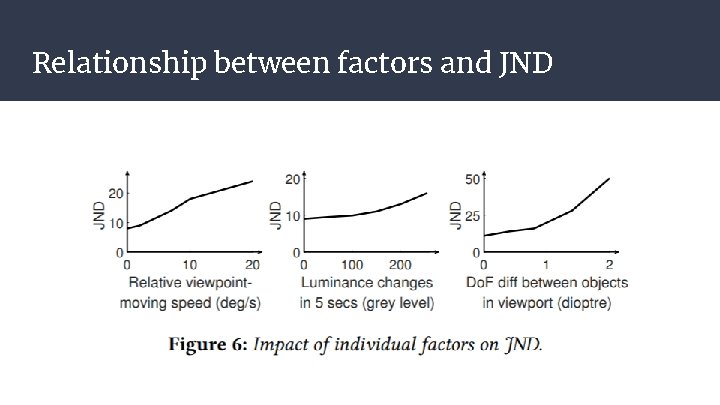

New quality-determining factors

● Factor #1: Relative viewpoint-moving speed
  ○ When the user moves his/her viewpoint, reducing the quality level of the static background has little impact on user-perceived quality.

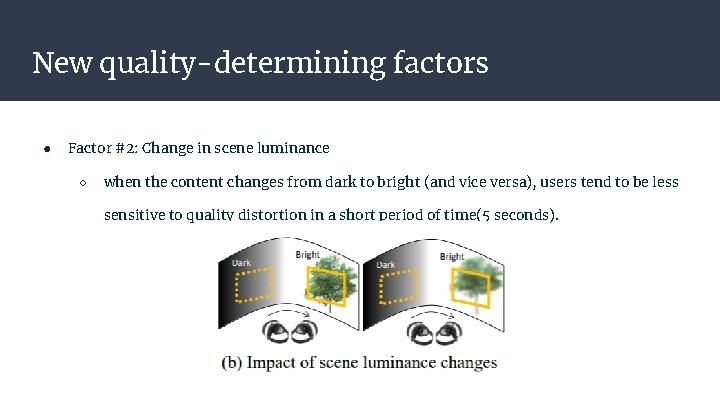

New quality-determining factors

● Factor #2: Change in scene luminance
  ○ When the content changes from dark to bright (or vice versa), users tend to be less sensitive to quality distortion for a short period of time (5 seconds).

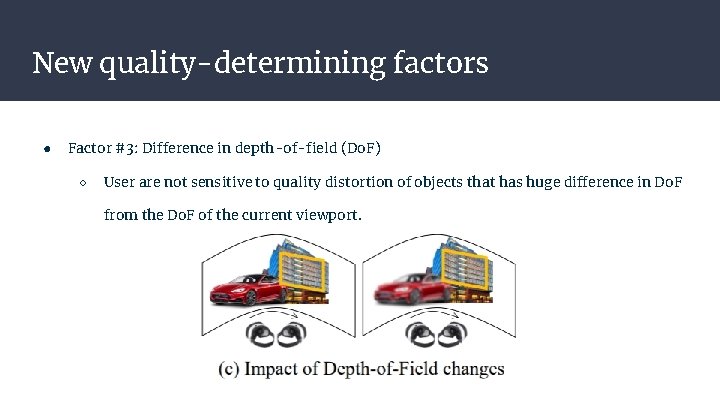

New quality-determining factors

● Factor #3: Difference in depth-of-field (DoF)
  ○ Users are not sensitive to quality distortion of objects whose DoF differs greatly from the DoF of the current viewport.

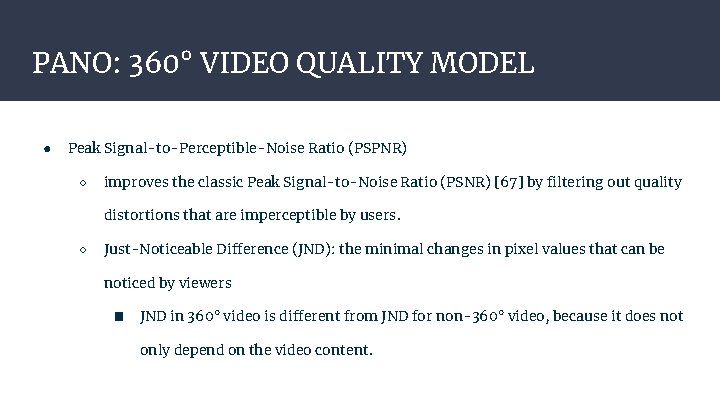

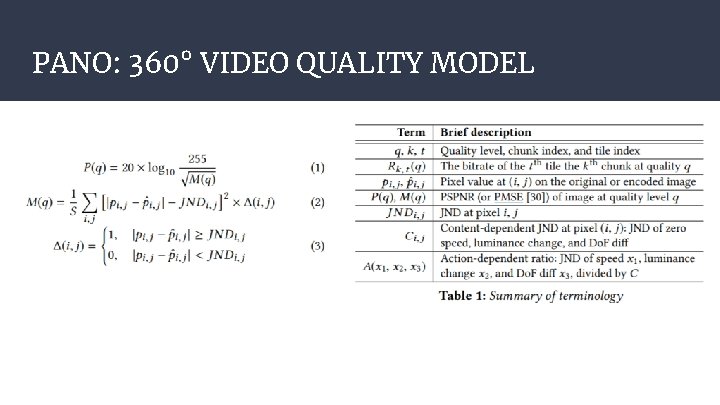

PANO: 360° VIDEO QUALITY MODEL

● Peak Signal-to-Perceptible-Noise Ratio (PSPNR)
  ○ Improves the classic Peak Signal-to-Noise Ratio (PSNR) [67] by filtering out quality distortions that are imperceptible to users.
  ○ Just-Noticeable Difference (JND): the minimal change in pixel values that can be noticed by viewers
    ■ JND in 360° video is different from JND in non-360° video, because it does not depend only on the video content.
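The filtering idea behind PSPNR can be sketched in a few lines. This is a minimal illustration, assuming the common formulation in which per-pixel error below the JND threshold counts as zero and error above it is reduced by the threshold. The function names `pspnr` and `psnr`, the flat-list pixel layout, and the sample values are hypothetical and are not taken from the authors' code.

```python
import math

def pspnr(original, encoded, jnd, peak=255.0):
    """PSPNR over flat lists of pixel values with a per-pixel JND threshold."""
    if not (len(original) == len(encoded) == len(jnd)):
        raise ValueError("inputs must have the same length")
    total = 0.0
    for p, q, threshold in zip(original, encoded, jnd):
        err = abs(p - q)
        # Only the part of the error that exceeds the JND is perceptible.
        if err >= threshold:
            total += (err - threshold) ** 2
    mse = total / len(original)
    if mse == 0:
        return math.inf  # no perceptible distortion at all
    return 20 * math.log10(peak / math.sqrt(mse))

def psnr(original, encoded, peak=255.0):
    """Classic PSNR is the special case where every JND threshold is zero."""
    return pspnr(original, encoded, [0.0] * len(original), peak)
```

With the same encoded pixels, a larger JND (for example, while the viewpoint is moving quickly) yields a higher PSPNR, which is what lets a quality model of this kind justify a lower quality level for such tiles.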

Relationship between factors and JND

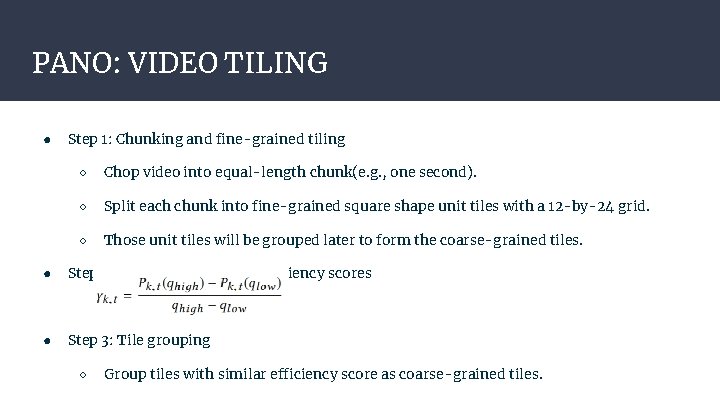

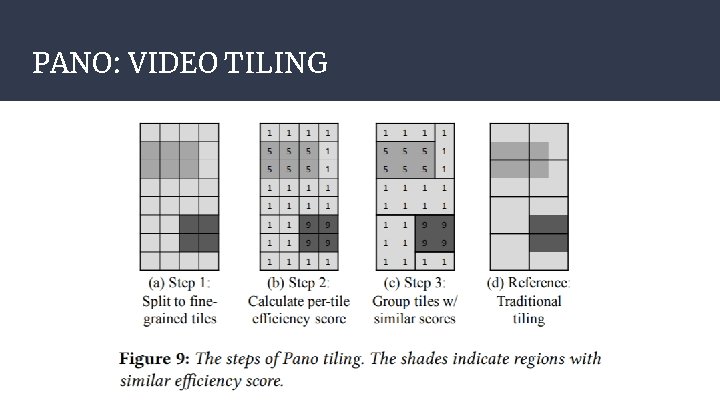

PANO: VIDEO TILING

● Step 1: Chunking and fine-grained tiling
  ○ Chop the video into equal-length chunks (e.g., one second).
  ○ Split each chunk into fine-grained, square unit tiles on a 12-by-24 grid.
  ○ Those unit tiles will be grouped later to form the coarse-grained tiles.
● Step 2: Calculating per-tile efficiency scores
● Step 3: Tile grouping
  ○ Group tiles with similar efficiency scores into coarse-grained tiles.
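The slides do not spell out how the grouping in Step 3 is done, so the following is only a sketch of one possible approach: a greedy top-down split of the unit-tile grid into rectangles, always taking the cut that most reduces the within-group squared deviation of efficiency scores. The scores are assumed to be given, and the names `sse`, `candidate_splits`, and `group_tiles` are hypothetical.

```python
def sse(scores, rect):
    """Sum of squared deviations from the mean inside rect.

    rect = (row0, col0, row1, col1), with row1 and col1 exclusive.
    """
    r0, c0, r1, c1 = rect
    vals = [scores[r][c] for r in range(r0, r1) for c in range(c0, c1)]
    mean = sum(vals) / len(vals)
    return sum((v - mean) ** 2 for v in vals)

def candidate_splits(rect):
    """Yield every horizontal and vertical cut of rect into two rectangles."""
    r0, c0, r1, c1 = rect
    for r in range(r0 + 1, r1):
        yield (r0, c0, r, c1), (r, c0, r1, c1)
    for c in range(c0 + 1, c1):
        yield (r0, c0, r1, c), (r0, c, r1, c1)

def group_tiles(scores, num_groups):
    """Greedily split the grid until num_groups rectangles exist."""
    rows, cols = len(scores), len(scores[0])
    groups = [(0, 0, rows, cols)]
    while len(groups) < num_groups:
        best = None  # (gain, group index, first part, second part)
        for i, rect in enumerate(groups):
            base = sse(scores, rect)
            for a, b in candidate_splits(rect):
                gain = base - sse(scores, a) - sse(scores, b)
                if best is None or gain > best[0]:
                    best = (gain, i, a, b)
        if best is None:
            break  # every group is already a single unit tile
        _, i, a, b = best
        groups[i:i + 1] = [a, b]
    return groups
```

Each returned rectangle covers unit tiles with similar scores and would be encoded as one coarse-grained tile; the number of groups is a free parameter in this sketch.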

PANO: QUALITY ADAPTATION

● Challenge #1: How to adapt quality in the presence of noisy viewpoint estimates?
  ○ DFS with branch pruning.
  ○ Estimate a lower bound using recent history.
● Challenge #2: How to be deployable on the existing DASH protocol?
  ○ Decouple the calculation of PSPNR into two phases.
  ○ Choose particular values of viewport-moving speed, luminance change, and DoF difference to compute a lookup table offline.
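As a generic illustration of DFS with branch pruning, the sketch below picks one quality level per tile to maximize a total quality score under a size budget. The slides do not state the exact objective or pruning rules, so the budget constraint, the two pruning bounds, and the name `allocate_quality` are all assumptions made for this example.

```python
def allocate_quality(sizes, scores, budget):
    """Pick one quality level per tile to maximize total score within budget.

    sizes[t][q] and scores[t][q] are the size and quality score of tile t
    at level q. Returns (best_score, levels), or (None, None) if even the
    cheapest assignment exceeds the budget.
    """
    n = len(sizes)
    # Suffix bounds for pruning: cheapest size and best score still reachable.
    min_size_left = [0.0] * (n + 1)
    max_score_left = [0.0] * (n + 1)
    for t in range(n - 1, -1, -1):
        min_size_left[t] = min_size_left[t + 1] + min(sizes[t])
        max_score_left[t] = max_score_left[t + 1] + max(scores[t])

    best = {"score": None, "levels": None}
    chosen = []

    def dfs(t, used, score):
        if used + min_size_left[t] > budget:
            return  # cannot fit even at the cheapest remaining levels
        if best["score"] is not None and score + max_score_left[t] <= best["score"]:
            return  # cannot beat the best complete assignment found so far
        if t == n:
            best["score"], best["levels"] = score, list(chosen)
            return
        # Try higher levels first so a good solution tightens the bound early.
        for q in reversed(range(len(sizes[t]))):
            chosen.append(q)
            dfs(t + 1, used + sizes[t][q], score + scores[t][q])
            chosen.pop()

    dfs(0, 0.0, 0.0)
    return best["score"], best["levels"]
```

In a Pano-like setting the scores could be per-tile PSPNR estimates, and a conservative (lower-bound) estimate of viewpoint speed from recent history would make those scores robust to prediction noise; that part is not modeled here.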

IMPLEMENTATION

● Implemented in 15K lines of code in C++, C#, Python, and Matlab.
● Video provider:
  ○ Use YOLO (a neural network-based multi-class object detector) to extract features from the video, such as object trajectories, content luminance, and DoF.
  ○ Use FFmpeg CropFilter to do the tiling and encode each tile in 5 quality levels.
  ○ Each tile includes the following information:
    ■ the coordinate of the tile’s top-left pixel
    ■ average luminance within the tile
    ■ average DoF within the tile
    ■ the trajectory of each visual object
    ■ the PSPNR lookup table
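The per-tile information listed above can be pictured as a small record with a precomputed table. The sketch below is an assumption-laden illustration: the grid values, their units, the `TileEntry` layout, and the nearest-grid-point lookup are hypothetical and do not reflect the actual manifest format.

```python
from bisect import bisect_left
from dataclasses import dataclass, field

# Hypothetical grid points at which PSPNR is precomputed offline.
SPEED_GRID = [0.0, 5.0, 10.0, 20.0]    # relative viewpoint speed
LUMA_GRID = [0.0, 50.0, 100.0, 200.0]  # luminance change
DOF_GRID = [0.0, 0.5, 1.0, 2.0]        # DoF difference

def nearest_index(grid, value):
    """Index of the grid point closest to value (grid must be sorted)."""
    i = bisect_left(grid, value)
    if i == 0:
        return 0
    if i == len(grid):
        return len(grid) - 1
    return i if grid[i] - value < value - grid[i - 1] else i - 1

@dataclass
class TileEntry:
    top_left: tuple       # (x, y) of the tile's top-left pixel
    avg_luminance: float
    avg_dof: float
    object_trajectories: list = field(default_factory=list)
    # (speed_idx, luma_idx, dof_idx) -> list of PSPNR values, one per quality level
    pspnr_table: dict = field(default_factory=dict)

    def lookup(self, speed, luma_change, dof_diff, level):
        """Snap the three factors to the grid and read the precomputed PSPNR."""
        key = (
            nearest_index(SPEED_GRID, speed),
            nearest_index(LUMA_GRID, luma_change),
            nearest_index(DOF_GRID, dof_diff),
        )
        return self.pspnr_table[key][level]
```

Keeping the table per tile means the client only needs the manifest and a dictionary lookup at playback time, which fits a standard DASH server that serves static files.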

IMPLEMENTATION

● Video server: the standard DASH video server.
● Client-side adaptation
  ○ The viewpoint estimator predicts the viewpoint location over the next 1-3 seconds, using a simple linear regression over recent viewpoint locations.
  ○ The client-side PSPNR estimator compares the predicted viewpoint movements with the information of the tile where the predicted viewpoint resides (extracted from the manifest file) to calculate the relative viewpoint speed, the luminance change, and the DoF difference.
  ○ Compute PSPNR using the lookup table.
● Client-side streaming
  ○ Fetch the tiles in each chunk separately and stitch them into a panoramic frame.
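A viewpoint estimator based on simple linear regression might look like the sketch below. It assumes the history is a list of `(time, yaw, pitch)` samples in seconds and degrees with yaw already unwrapped (no jump at ±180°); the function names and the sample format are hypothetical.

```python
def fit_line(ts, xs):
    """Ordinary least-squares fit x = slope * t + intercept."""
    n = len(ts)
    mean_t = sum(ts) / n
    mean_x = sum(xs) / n
    var_t = sum((t - mean_t) ** 2 for t in ts)
    if var_t == 0:
        return 0.0, mean_x  # a single timestamp: assume no movement
    slope = sum((t - mean_t) * (x - mean_x) for t, x in zip(ts, xs)) / var_t
    return slope, mean_x - slope * mean_t

def predict_viewpoint(history, horizon):
    """Extrapolate (yaw, pitch) to `horizon` seconds after the last sample."""
    ts = [h[0] for h in history]
    t_future = ts[-1] + horizon
    yaw_slope, yaw_icpt = fit_line(ts, [h[1] for h in history])
    pitch_slope, pitch_icpt = fit_line(ts, [h[2] for h in history])
    # Wrap yaw into [-180, 180) and clamp pitch to the valid range.
    yaw = (yaw_slope * t_future + yaw_icpt + 180.0) % 360.0 - 180.0
    pitch = max(-90.0, min(90.0, pitch_slope * t_future + pitch_icpt))
    return yaw, pitch
```

The fitted slopes are also a natural estimate of viewpoint speed in degrees per second, which could be compared against an object's trajectory to obtain a relative viewpoint-moving speed.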

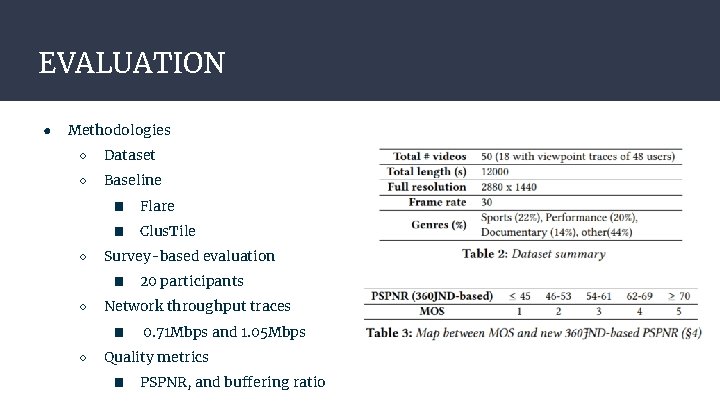

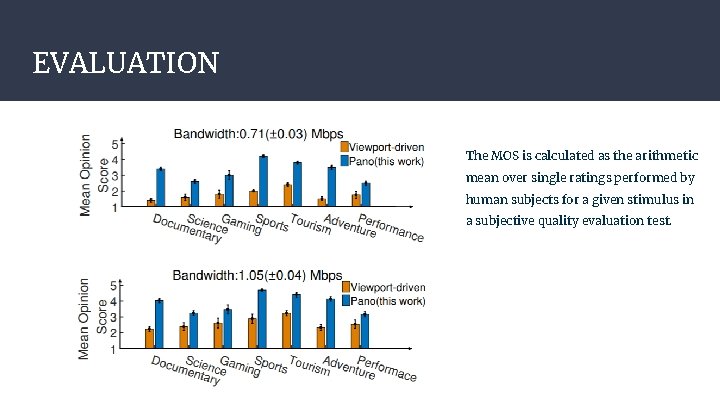

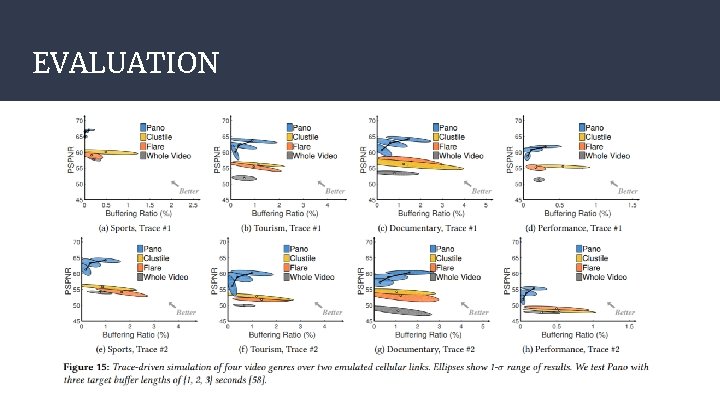

EVALUATION

● Methodologies
  ○ Dataset
  ○ Baseline
    ■ Flare
    ■ ClusTile
  ○ Survey-based evaluation
    ■ 20 participants
  ○ Network throughput traces
    ■ 0.71 Mbps and 1.05 Mbps
  ○ Quality metrics
    ■ PSPNR and buffering ratio

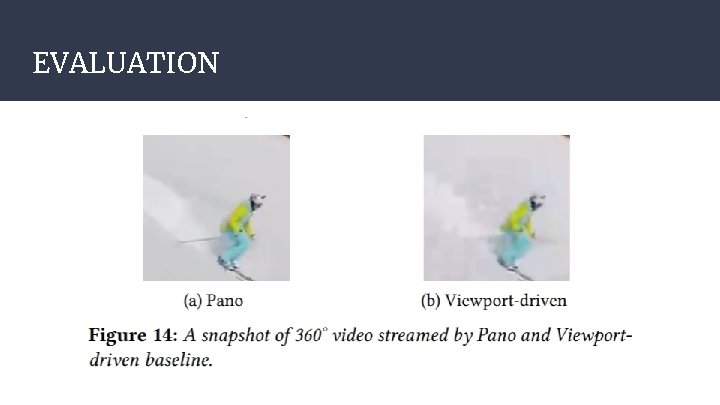

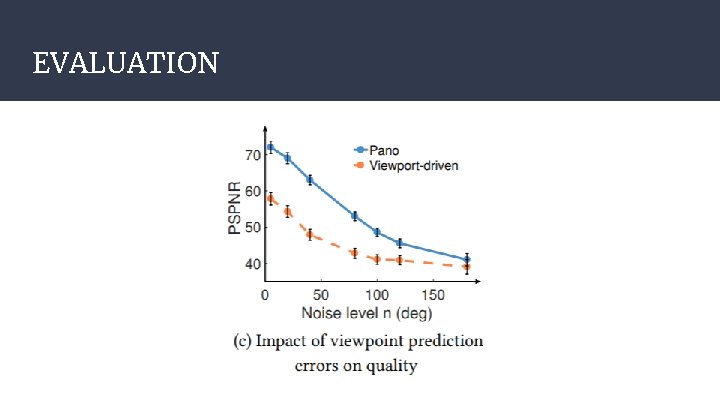

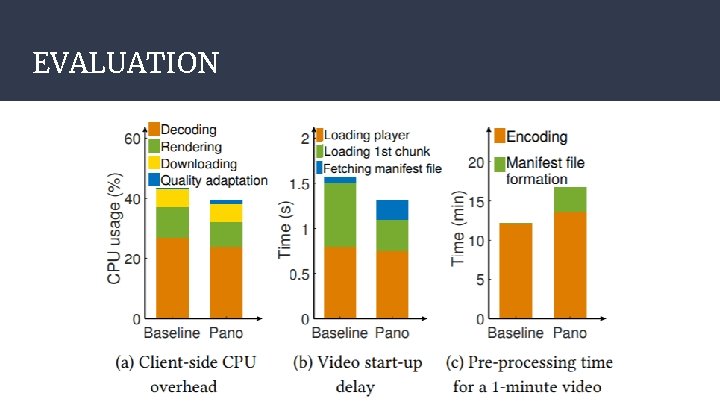

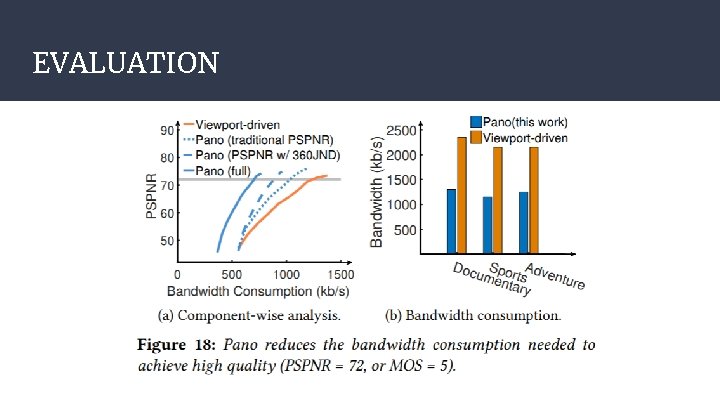

EVALUATION

● Key findings
  ○ Compared to the state-of-the-art solutions, Pano improves perceived quality without using more bandwidth: 25%-142% higher mean opinion score (MOS) or 10% higher PSPNR with the same or less buffering across a variety of 360° video genres.
  ○ Pano achieves substantial improvement even in the presence of viewpoint/bandwidth prediction errors.
  ○ Pano imposes minimal additional systems overhead and reduces the resource consumption on the client and the server.

EVALUATION

The MOS is calculated as the arithmetic mean over single ratings performed by human subjects for a given stimulus in a subjective quality evaluation test.
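Written as a formula, with the symbols chosen here for illustration ($N$ for the number of ratings and $R_n$ for the rating given by the $n$-th subject):

```latex
\mathrm{MOS} = \frac{1}{N} \sum_{n=1}^{N} R_n
```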

Pros and Cons

● Pros
  ○ The authors outline their methodology well and explain their ideas clearly.
  ○ The implementation works with the existing DASH server and is cheap to deploy.
● Cons
  ○ The evaluation is heavily survey-based.
  ○ The evaluation dataset does not seem comprehensive.
  ○ Even though Pano reduces the impact of viewport estimation error, it still does not solve this problem, which prevails in viewport-driven streaming.
