PAC-Learning and Reconstruction of Quantum States, Scott Aaronson

- Slides: 25

1 PAC-Learning and Reconstruction of Quantum States

Scott Aaronson (University of Texas at Austin)

COLT 2017, Amsterdam

2 What Is A Quantum (Pure) State?

A unit vector of complex numbers: $|\psi\rangle = \alpha|0\rangle + \beta|1\rangle$ with $|\alpha|^2 + |\beta|^2 = 1$.

Infinitely many bits in the amplitudes $\alpha, \beta$, but when you measure, the state “collapses” to just 1 bit! (0 with probability $|\alpha|^2$, 1 with probability $|\beta|^2$)

Holevo’s Theorem (1973): By sending $n$ qubits, Alice can communicate at most $n$ classical bits to Bob (or $2n$ if they pre-share entanglement).
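
The "collapse to 1 bit" point can be illustrated numerically. The following is a minimal sketch, not part of the talk: the function name `measure_qubit` and the example amplitudes are assumptions chosen for illustration. It samples computational-basis outcomes for a single qubit and shows that each measurement yields only one bit, with the amplitudes visible only through outcome frequencies.

```python
import numpy as np

def measure_qubit(alpha, beta, shots, seed=0):
    """Sample computational-basis outcomes for the state alpha|0> + beta|1>."""
    amps = np.array([alpha, beta], dtype=complex)
    probs = np.abs(amps) ** 2          # Born rule: |alpha|^2 and |beta|^2
    if not np.isclose(probs.sum(), 1.0):
        raise ValueError("state must be a unit vector")
    probs = probs / probs.sum()        # remove floating-point drift
    rng = np.random.default_rng(seed)
    return rng.choice([0, 1], size=shots, p=probs)

# Hypothetical example state with |alpha|^2 = 0.3 and |beta|^2 = 0.7.
outcomes = measure_qubit(np.sqrt(0.3), 1j * np.sqrt(0.7), shots=10_000)
print("fraction of 1 outcomes:", outcomes.mean())
```

Note that the complex phase of `beta` has no effect on these statistics; only the squared magnitudes matter for this measurement.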

3 The Problem for CS

A state of $n$ entangled qubits requires $2^n$ complex numbers to specify, even approximately:

$$|\psi\rangle = \sum_{x \in \{0,1\}^n} \alpha_x |x\rangle$$

To a computer scientist, this is probably the central fact about quantum mechanics.

Why should we worry about it?

4 Answer 1: Quantum State Tomography

Task: Given lots of copies of an unknown quantum state, produce an approximate classical description of it.

Central problem: To do tomography on a general state of $n$ particles, you clearly need $\sim c^n$ measurements.

Innsbruck group (2005): 8 particles / ~656,000 experiments!

5 Answer 2: Quantum Computing Skepticism

(Pictured: Levin, Goldreich, ‘t Hooft, Davies, Wolfram)

Some physicists and computer scientists believe quantum computers will be impossible for a fundamental reason.

For many of them, the problem is that a quantum computer would “manipulate an exponential amount of information” using only polynomial resources.

But is it really an exponential amount?

6 Can we tame the exponential beast?

Idea: “Shrink quantum states down to reasonable size” by viewing them operationally.

Analogy: A probability distribution over $n$-bit strings also takes $\sim 2^n$ bits to specify. But seeing a sample only provides $n$ bits.

In this talk, I’ll survey 14 years of results showing how the standard tools from COLT can be used to upper-bound the “effective size” of quantum states: [A. 2004], [A. 2006], [A.-Drucker 2010], [A. 2017], [A.-Hazan-Nayak 2017]

Lesson: “The linearity of QM helps tame the exponentiality of QM”

7 The Absent-Minded Advisor Problem

Can you hand all your grad students the same $n^{O(1)}$-qubit quantum state $|\psi\rangle$, so that by measuring their copy of $|\psi\rangle$ in a suitable basis, each student can learn the $\{0,1\}$ answer to their $n$-bit thesis question?

NO [Nayak 1999, Ambainis et al. 1999]

Indeed, quantum communication is no better than classical for this task as $n \to \infty$.

8 On the Bright Side…

Suppose Alice wants to describe an $n$-qubit quantum state $|\psi\rangle$ to Bob, well enough that, for any 2-outcome measurement $E$ in some finite set $S$, Bob can estimate $\Pr[E(|\psi\rangle) \text{ accepts}]$ to constant additive error.

Theorem (A. 2004): In that case, it suffices for Alice to send Bob only $\sim n \log n \cdot \log|S|$ classical bits (trivial bounds: $\exp(n)$, $|S|$).

9 (Diagram: the state $|\psi\rangle$, and all yes/no measurements performable using $\le n^2$ gates.)

10 Interlude: Mixed States

A mixed state $\rho$ is a $D \times D$ Hermitian psd matrix with trace 1.

It encodes (everything measurable about) a probability distribution where each superposition $|\psi_i\rangle$ occurs with probability $p_i$: $\rho = \sum_i p_i |\psi_i\rangle\langle\psi_i|$.

A yes-or-no measurement can be represented by a $D \times D$ Hermitian psd matrix $E$ with all eigenvalues in $[0,1]$.

The measurement “accepts” $\rho$ with probability $\mathrm{Tr}(E\rho)$, and “rejects” $\rho$ with probability $1 - \mathrm{Tr}(E\rho)$.
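
As a small illustration of these definitions (a sketch with assumed helper names `density_matrix` and `accept_probability`, not code from the talk), one can build a mixed state from an ensemble and evaluate the acceptance probability $\mathrm{Tr}(E\rho)$ directly:

```python
import numpy as np

def density_matrix(probs, states):
    """rho = sum_i p_i |psi_i><psi_i|, normalizing each psi_i first."""
    dim = len(states[0])
    rho = np.zeros((dim, dim), dtype=complex)
    for p, psi in zip(probs, states):
        psi = np.asarray(psi, dtype=complex)
        psi = psi / np.linalg.norm(psi)
        rho += p * np.outer(psi, psi.conj())
    return rho

def accept_probability(E, rho):
    """Probability that the yes/no measurement E accepts rho."""
    return float(np.real(np.trace(E @ rho)))

# Hypothetical ensemble: |0> with probability 1/2, |+> with probability 1/2.
rho = density_matrix([0.5, 0.5], [[1, 0], [1, 1]])
E = np.array([[1, 0], [0, 0]], dtype=complex)   # projector onto |0>
p_accept = accept_probability(E, rho)
print("Tr(E rho) =", p_accept)                  # 0.5 * 1 + 0.5 * 0.5 = 0.75
```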

11 How does theorem work?

(Diagram: Bob’s successive guesses $I, \rho_1, \rho_2, \rho_3$.)

Alice is trying to describe the $n$-qubit state $\rho = |\psi\rangle\langle\psi|$ to Bob.

In the beginning, Bob knows nothing about $\rho$, so he guesses it’s the maximally mixed state $\rho_0 = I$ (actually $I/2^n = I/D$).

Then Alice helps Bob improve, by repeatedly telling him a measurement $E_t \in S$ on which his current guess $\rho_{t-1}$ badly fails.

Bob lets $\rho_t$ be the state obtained by starting from $\rho_{t-1}$, then performing $E_t$ and postselecting on getting the right outcome.
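
The update loop can be sketched on a toy instance. This is an illustrative simplification, not the protocol from the theorem: it assumes projective measurements whose outcomes on the true state are deterministic, and the names `postselect`, `learn`, `threshold`, and `max_rounds` are hypothetical. "Alice" is simulated by picking the measurement on which the current guess is worst.

```python
import numpy as np

def postselect(rho, projector):
    """Condition rho on the outcome associated with `projector`."""
    p = float(np.real(np.trace(projector @ rho)))
    if p <= 1e-12:
        raise ValueError("cannot postselect on a zero-probability outcome")
    return projector @ rho @ projector / p, p

def learn(rho_true, measurements, threshold=0.25, max_rounds=100):
    dim = rho_true.shape[0]
    guess = np.eye(dim, dtype=complex) / dim      # maximally mixed start
    p_all, rounds = 1.0, 0
    while rounds < max_rounds:
        # "Alice": find the measurement where the guess fails worst.
        gaps = [abs(np.trace(E @ (guess - rho_true)).real) for E in measurements]
        worst = int(np.argmax(gaps))
        if gaps[worst] <= threshold:
            break
        E = measurements[worst]
        # The "right" outcome is the one the true state is more likely to give.
        accept = np.trace(E @ rho_true).real >= 0.5
        proj = E if accept else np.eye(dim) - E
        guess, p = postselect(guess, proj)
        p_all *= p                                # Pr[all postselections succeed]
        rounds += 1
    return guess, rounds, p_all

# Toy instance: two qubits, true state |00>, measurements "qubit j is 0".
ket0 = np.diag([1.0, 0.0]).astype(complex)
eye2 = np.eye(2, dtype=complex)
S = [np.kron(ket0, eye2), np.kron(eye2, ket0)]
rho_true = np.kron(ket0, ket0)
guess, rounds, p_all = learn(rho_true, S)
print(rounds, p_all)   # two rounds, success probability 1/4 = 1/D
```

In this toy run each postselection halves the success probability, and the total cannot fall below $1/D$ because the true state passes every postselection with certainty; that is the mechanism behind the iteration bound on the next slide.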

12 Question: Why can Bob keep reusing the same state?

To ensure that, we actually need to “amplify” $|\psi\rangle$ to $|\psi\rangle^{\otimes \log n}$, slightly increasing the number of qubits from $n$ to $n \log n$.

Gentle Measurement / Almost As Good As New Lemma: Suppose a measurement of a mixed state $\rho$ yields a certain outcome with probability $\ge 1-\varepsilon$. Then after the measurement, we still have a state $\rho'$ that’s $\sqrt{\varepsilon}$-close to $\rho$ in trace distance.
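
A quick numerical sanity check of the lemma, under stated assumptions: the lemma is read in its common form (outcome probability at least $1-\varepsilon$ implies trace distance at most $\sqrt{\varepsilon}$, with trace distance defined as half the trace norm), the measurement is a projector, and the helper names and the random test state are made up for illustration.

```python
import numpy as np

def trace_distance(rho, sigma):
    """Half the trace norm of rho - sigma."""
    diff = rho - sigma
    diff = (diff + diff.conj().T) / 2             # symmetrize against rounding
    return 0.5 * np.abs(np.linalg.eigvalsh(diff)).sum()

def random_state_near_subspace(dim, leak, rng):
    """Random mixed state with little weight on the last basis vector."""
    A = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    A[-1, :] *= leak
    rho = A @ A.conj().T
    return rho / np.trace(rho).real

rng = np.random.default_rng(1)
dim = 4
rho = random_state_near_subspace(dim, leak=0.1, rng=rng)
P = np.diag([1.0, 1.0, 1.0, 0.0]).astype(complex)  # the likely outcome

p_outcome = np.trace(P @ rho).real
eps = 1.0 - p_outcome
rho_after = P @ rho @ P / p_outcome                # state after seeing that outcome
dist = trace_distance(rho, rho_after)
print(f"eps = {eps:.4f}, distance = {dist:.4f}, sqrt(eps) = {np.sqrt(eps):.4f}")
```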

13 Crucial Claim: Bob’s iterative learning procedure will “converge” on a state $\rho_T$ that behaves like $|\psi\rangle^{\otimes \log n}$ on all measurements in the set $S$, after at most $T = O(n \log n)$ iterations.

Proof: Let $p_t = \Pr[\text{first } t \text{ postselections all succeed}]$. Then [lower bound on $p_t$; equation not preserved]. If $p_t$ wasn’t less than, say, $(2/3)\,p_{t-1}$, learning would’ve ended! Solving, we find that $t = O(n \log n)$.

So it’s enough for Alice to tell Bob about $T = O(n \log n)$ measurements $E_1, \ldots, E_T$, using $\log|S|$ bits per measurement.

Complexity theory consequence: $\mathsf{BQP/qpoly} \subseteq \mathsf{PostBQP/poly}$ (Open whether $\mathsf{BQP/qpoly} = \mathsf{BQP/poly}$)

14 Quantum Occam’s Razor Theorem

Let $|\psi\rangle$ be an unknown entangled state of $n$ particles.

Suppose you just want to be able to estimate the acceptance probabilities of most measurements $E$ drawn from some probability distribution $\mathcal{D}$.

Then it suffices to do the following, for some $m = O(n)$:

1. Choose $m$ measurements independently from $\mathcal{D}$
2. Go into your lab and estimate acceptance probabilities of all of them on $|\psi\rangle$
3. Find any “hypothesis state” approximately consistent with all measurement outcomes

“Quantum states are PAC-learnable”

15 To prove theorem, we use Kearns and Schapire’s Fat-Shattering Dimension

Let $C$ be a class of functions from $S$ to $[0,1]$. We say a set $\{x_1, \ldots, x_k\} \subseteq S$ is $\gamma$-shattered by $C$ if there exist reals $a_1, \ldots, a_k$ such that, for all $2^k$ possible statements of the form

$$f(x_1) \le a_1 - \gamma \;\wedge\; f(x_2) \ge a_2 + \gamma \;\wedge\; \cdots \;\wedge\; f(x_k) \le a_k - \gamma,$$

there’s some $f \in C$ that satisfies the statement.

Then $\mathrm{fat}_C(\gamma)$, the $\gamma$-fat-shattering dimension of $C$, is the size of the largest set $\gamma$-shattered by $C$.
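
For a finite function class the definition can be checked by brute force. The sketch below is illustrative only: the function name `is_gamma_shattered`, the representation of each function as a tuple of its values on the candidate points, and the example class are all assumptions. It takes the witness reals $a_1, \ldots, a_k$ as input rather than searching for them.

```python
from itertools import product

def is_gamma_shattered(function_values, witnesses, gamma):
    """Check gamma-shattering of k points for fixed witnesses a_1..a_k.

    function_values: iterable of tuples (f(x_1), ..., f(x_k)), one per f in C.
    """
    k = len(witnesses)
    for pattern in product([0, 1], repeat=k):      # all 2^k statements
        def satisfies(f):
            return all(
                f[i] >= witnesses[i] + gamma if bit else f[i] <= witnesses[i] - gamma
                for i, bit in enumerate(pattern)
            )
        if not any(satisfies(f) for f in function_values):
            return False
    return True

# Hypothetical class of four functions on two points.
C = [(0.1, 0.1), (0.1, 0.9), (0.9, 0.1), (0.9, 0.9)]
print(is_gamma_shattered(C, witnesses=(0.5, 0.5), gamma=0.3))    # True
print(is_gamma_shattered(C, witnesses=(0.5, 0.5), gamma=0.45))   # False
```

The second call fails because no function in the class drops to 0.05 or below, so the margin of 0.45 around the witness 0.5 cannot be met.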

16 Small Fat-Shattering Dimension Implies Small Sample Complexity

Let $C$ be a class of functions from $S$ to $[0,1]$, and let $f \in C$. Suppose we draw $m$ elements $x_1, \ldots, x_m$ independently from some distribution $\mathcal{D}$, and then output a hypothesis $h \in C$ such that $|h(x_i) - f(x_i)| \le \eta$ for all $i$. Then provided [condition involving “/7”; symbols not preserved] and [lower bound on $m$; equation not preserved], we’ll have [error guarantee; equation not preserved] with probability at least $1-\delta$ over $x_1, \ldots, x_m$.

Bartlett-Long 1996, building on Alon et al. 1993, building on Blumer et al. 1989

17 Upper-Bounding the Fat-Shattering Dimension of Quantum States

Nayak 1999: If we want to encode $k$ classical bits into $n$ qubits, in such a way that any bit can be recovered with probability $1-p$, then we need $n \ge (1 - H(p))\,k$.

Corollary (“turning Nayak’s result on its head”): Let $C_n$ be the set of functions that map an $n$-qubit measurement $E$ to $\mathrm{Tr}(E\rho)$, for some $\rho$. Then $\mathrm{fat}_{C_n}(\gamma) = O(n/\gamma^2)$.

No need to thank me!

Quantum Occam’s Razor Theorem follows easily…
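
To get a feel for Nayak's bound, here is a small worked computation. It is a sketch with assumed names (`binary_entropy`, `min_qubits`); $H$ is taken to be the binary entropy function in bits, and the bound is read as $n \ge (1 - H(p))k$.

```python
import math

def binary_entropy(p):
    """H(p) = -p log2 p - (1-p) log2 (1-p), with H(0) = H(1) = 0."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def min_qubits(k, p):
    """Smallest integer n with n >= (1 - H(p)) * k."""
    return math.ceil((1 - binary_entropy(p)) * k)

print(min_qubits(1000, 0.1))   # H(0.1) is about 0.469, so about 531.0 -> 532
print(min_qubits(1000, 0.5))   # recovery no better than guessing: bound is 0
print(min_qubits(10, 0.0))     # perfect recovery: need all 10 qubits
```

Even with a 10% chance of error on each bit, more than half as many qubits as bits are needed, so quantum encoding gives at most a constant-factor saving here.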

18 How do we find the hypothesis state?

Here’s one way: let $b_1, \ldots, b_m$ be the outcomes of measurements $E_1, \ldots, E_m$. Then choose a hypothesis state $\sigma$ to minimize

$$\sum_{i=1}^{m} \left( \mathrm{Tr}(E_i \sigma) - b_i \right)^2$$

This is a convex programming problem, which can be solved in time polynomial in $D = 2^n$ (good enough in practice for $n \le 15$ or so).

Optimized, linear-time iterative method for this problem: [Hazan 2008]
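
One simple way to attack such a convex program is projected gradient descent over the set of density matrices. The sketch below is not Hazan's algorithm and not the MATLAB code used in the talk; it assumes the objective is the squared error $\sum_i (\mathrm{Tr}(E_i\sigma) - b_i)^2$, and all function names and the synthetic data are hypothetical.

```python
import numpy as np

def project_to_simplex(v):
    """Euclidean projection of a real vector onto the probability simplex."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u) - 1.0
    idx = np.arange(1, len(v) + 1)
    k = idx[u - css / idx > 0][-1]
    return np.maximum(v - css[k - 1] / k, 0.0)

def project_to_density_matrix(M):
    """Nearest (Frobenius norm) psd, trace-1 matrix to Hermitian M."""
    M = (M + M.conj().T) / 2
    vals, vecs = np.linalg.eigh(M)
    return (vecs * project_to_simplex(vals)) @ vecs.conj().T

def squared_loss(sigma, Es, bs):
    return sum((np.trace(E @ sigma).real - b) ** 2 for E, b in zip(Es, bs))

def fit_hypothesis_state(Es, bs, steps=500):
    dim = Es[0].shape[0]
    sigma = np.eye(dim, dtype=complex) / dim
    # Step size 1/L, where L bounds the Lipschitz constant of the gradient.
    lipschitz = 2.0 * sum(np.linalg.norm(E) ** 2 for E in Es)
    for _ in range(steps):
        residuals = [np.trace(E @ sigma).real - b for E, b in zip(Es, bs)]
        grad = 2.0 * sum(r * E for r, E in zip(residuals, Es))
        sigma = project_to_density_matrix(sigma - grad / lipschitz)
    return sigma

def random_unit_vector(dim, rng):
    v = rng.normal(size=dim) + 1j * rng.normal(size=dim)
    return v / np.linalg.norm(v)

# Synthetic data: a random two-qubit pure state and 30 random projectors.
rng = np.random.default_rng(0)
dim = 4
psi = random_unit_vector(dim, rng)
rho_true = np.outer(psi, psi.conj())
Es = [np.outer(v, v.conj()) for v in (random_unit_vector(dim, rng) for _ in range(30))]
bs = [np.trace(E @ rho_true).real for E in Es]   # exact acceptance probabilities

sigma = fit_hypothesis_state(Es, bs)
print("loss at maximally mixed state:", squared_loss(np.eye(dim) / dim, Es, bs))
print("loss at fitted state:         ", squared_loss(sigma, Es, bs))
```

Each step costs an eigendecomposition of a $D \times D$ matrix, so this approach is only practical for small $n$, consistent with the slide's remark about running time polynomial in $D = 2^n$.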

![Slide 19: Numerical Simulation (A.-Dechter 2008)](https://slidetodoc.com/presentation_image_h2/987d61f5dfb41dc825960e0635e898f4/image-19.jpg)

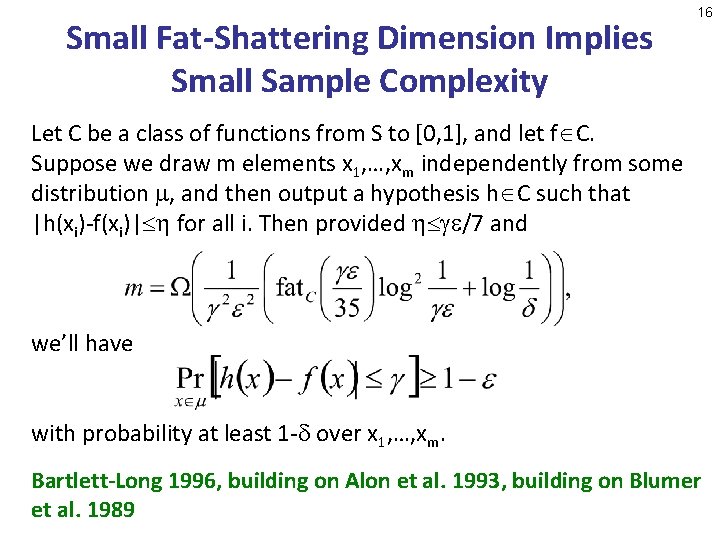

19 Numerical Simulation [A.-Dechter 2008]

We implemented Hazan’s algorithm in MATLAB, and tested it on simulated quantum state data.

We studied how the number of sample measurements $m$ needed for accurate predictions scales with the number of qubits $n$, for $n \le 10$.

Result of experiment: My theorem appears to be true…

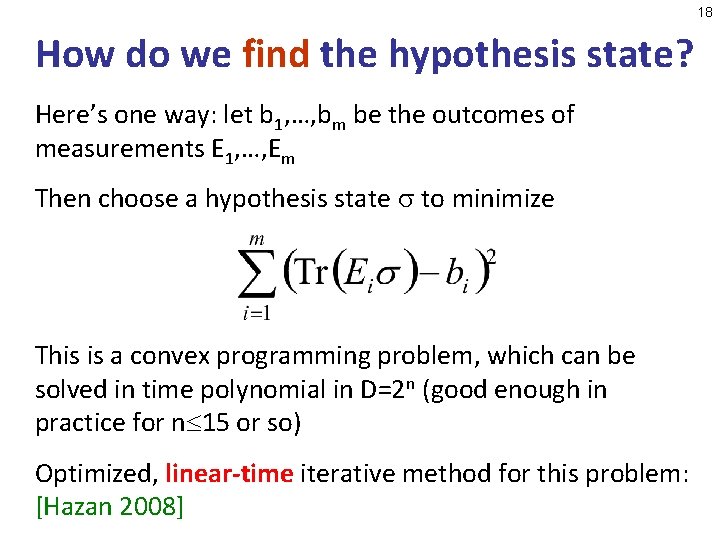

20 Now tested on real lab data as well! [Rocchetto et al. 2017, in preparation]

![Slide 21: Combining My Postselection and Quantum Learning Results (A.-Drucker 2010)](https://slidetodoc.com/presentation_image_h2/987d61f5dfb41dc825960e0635e898f4/image-21.jpg)

21 Combining My Postselection and Quantum Learning Results

[A.-Drucker 2010]: Given an $n$-qubit state $\rho$ and complexity bound $T$, there exists an efficient measurement $V$ on $\mathrm{poly}(n, T)$ qubits, such that any state that “passes” $V$ can be used to efficiently estimate $\rho$’s response to any yes-or-no measurement $E$ that’s implementable by a circuit of size $T$.

Application: Trusted quantum advice is equivalent to trusted classical advice + untrusted quantum advice.

In complexity terms: $\mathsf{BQP/qpoly} \subseteq \mathsf{QMA/poly}$

Proof uses boosting-like techniques, together with results on $\varepsilon$-covers and fat-shattering dimension.

![Slide 22: New Result (A. 2017, in preparation): Shadow Tomography](https://slidetodoc.com/presentation_image_h2/987d61f5dfb41dc825960e0635e898f4/image-22.jpg)

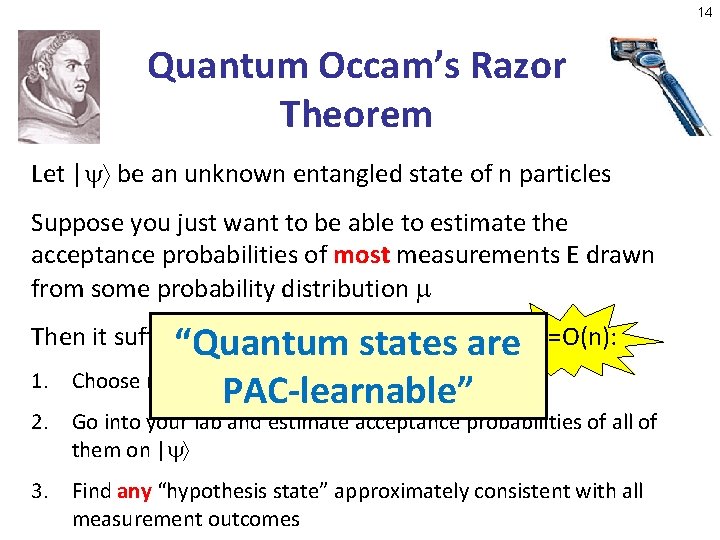

22 New Result [A. 2017, in preparation]: “Shadow Tomography”

Theorem: Let $\rho$ be an unknown $D$-dimensional state, and let $E_1, \ldots, E_M$ be known 2-outcome measurements. Suppose we want to know $\mathrm{Tr}(E_i\rho)$ to within additive error $\varepsilon$, for all $i \in [M]$. We can achieve this, with high probability, given only $k$ copies of $\rho$, where [bound on $k$; equation not preserved].

Open Problem: Dependence on $D$ removable?

Challenge: How to measure $E_1, \ldots, E_M$ without destroying our few copies of $\rho$ in the process!

![Slide 23: Proof Idea (A. 2006, fixed by Harrow-Montanaro-Lin 2016)](https://slidetodoc.com/presentation_image_h2/987d61f5dfb41dc825960e0635e898f4/image-23.jpg)

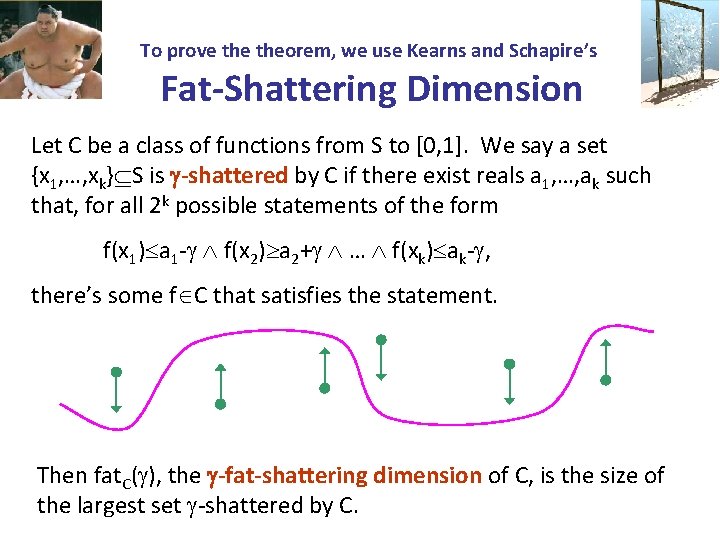

23 Proof Idea

Theorem [A. 2006, fixed by Harrow-Montanaro-Lin 2016]: Let $\rho$ be an unknown $D$-dimensional state, and let $E_1, \ldots, E_M$ be known 2-outcome measurements. Suppose we’re promised that either there exists an $i$ such that $\mathrm{Tr}(E_i\rho) \ge c$, or else $\mathrm{Tr}(E_i\rho) \le c - \varepsilon$ for all $i \in [M]$. We can decide which, with high probability, given only $k$ copies of $\rho$, where [bound on $k$; equation not preserved].

Indeed, can find an $i$ with $\mathrm{Tr}(E_i\rho) \ge c - \varepsilon$, if given $O(\log^4 M / \varepsilon^2)$ copies of $\rho$.

Now run my postselected learning algorithm [A. 2004], but using the above to find the $E_i$’s to postselect on!

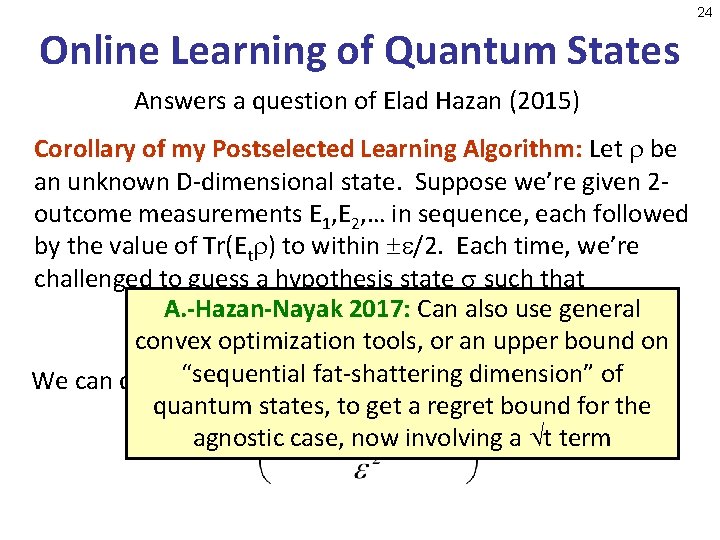

24 Online Learning of Quantum States

Answers a question of Elad Hazan (2015)

Corollary of my Postselected Learning Algorithm: Let $\rho$ be an unknown $D$-dimensional state. Suppose we’re given 2-outcome measurements $E_1, E_2, \ldots$ in sequence, each followed by the value of $\mathrm{Tr}(E_t\rho)$ to within $\varepsilon/2$. Each time, we’re challenged to guess a hypothesis state $\sigma$ such that $|\mathrm{Tr}(E_t\sigma) - \mathrm{Tr}(E_t\rho)| \le \varepsilon$. We can do this so that we fail at most $k$ times, where [bound on $k$; equation not preserved].

A.-Hazan-Nayak 2017: Can also use general convex optimization tools, or an upper bound on “sequential fat-shattering dimension” of quantum states, to get a regret bound for the agnostic case, now involving a $\sqrt{t}$ term.

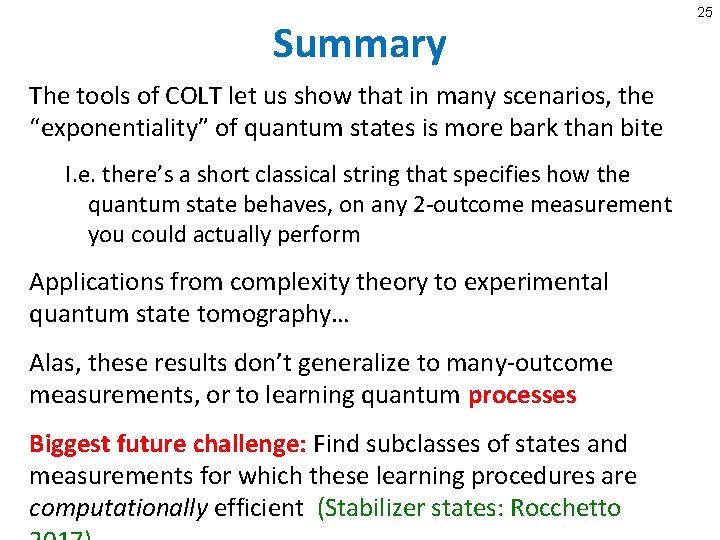

25 Summary

The tools of COLT let us show that in many scenarios, the “exponentiality” of quantum states is more bark than bite.

I.e., there’s a short classical string that specifies how the quantum state behaves, on any 2-outcome measurement you could actually perform.

Applications from complexity theory to experimental quantum state tomography…

Alas, these results don’t generalize to many-outcome measurements, or to learning quantum processes.

Biggest future challenge: Find subclasses of states and measurements for which these learning procedures are computationally efficient (Stabilizer states: Rocchetto)