Overview of deep learning Gregery T. Buzzard

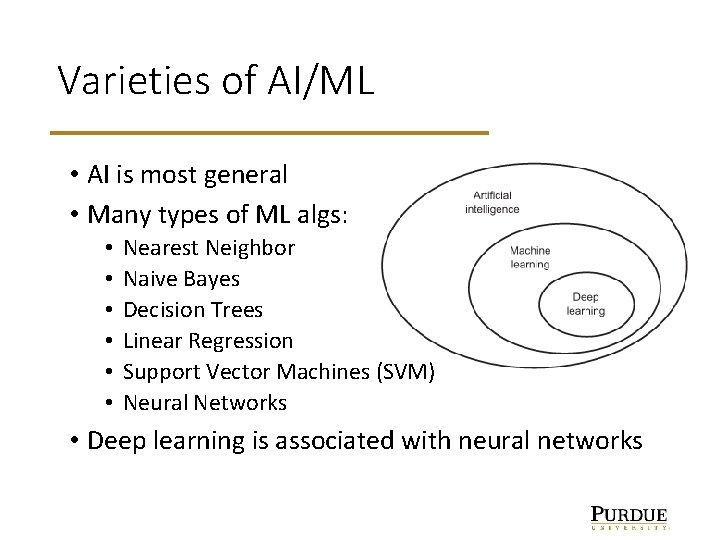

Varieties of AI/ML
• AI is most general
• Many types of ML algorithms:
  • Nearest Neighbor
  • Naive Bayes
  • Decision Trees
  • Linear Regression
  • Support Vector Machines (SVM)
  • Neural Networks
• Deep learning is associated with neural networks

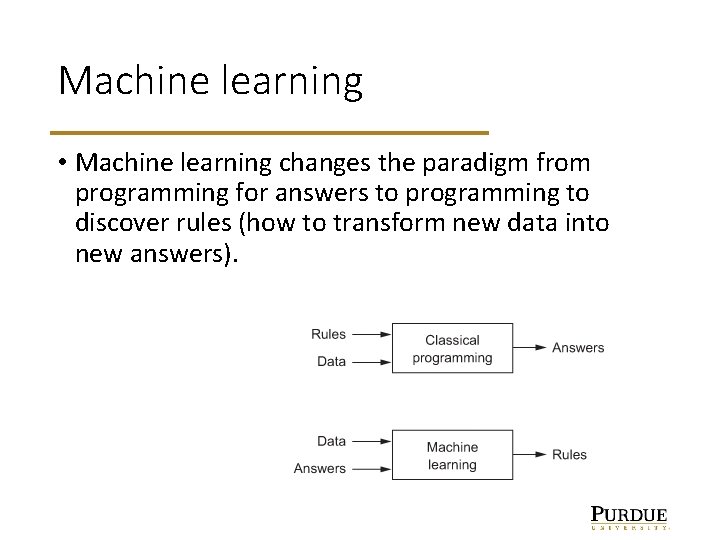

Machine learning
• Machine learning changes the paradigm from programming to produce answers to programming to discover rules (how to transform new data into new answers).

Shallow vs deep
• Shallow learning uses only 1 or 2 layers, which limits its ability to combine features into more sophisticated features, e.g., the concept of an eye.
• Deep learning allows a model to learn all layers of a representation jointly rather than sequentially, which works better than stacking shallow models.
• Two key points:
  • Increasingly complex representations are developed layer by layer
  • These representations are learned jointly

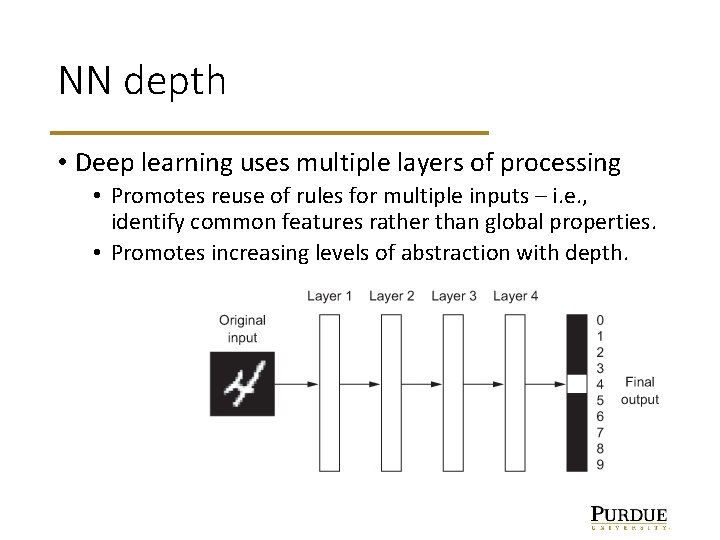

NN depth
• Deep learning uses multiple layers of processing.
• Promotes reuse of rules for multiple inputs, i.e., identifying common features rather than global properties.
• Promotes increasing levels of abstraction with depth.

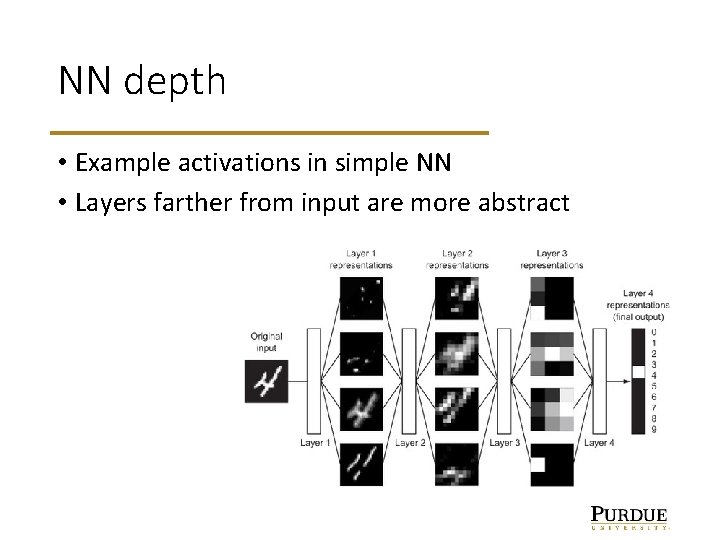

NN depth
• Example activations in a simple NN
• Layers farther from the input are more abstract

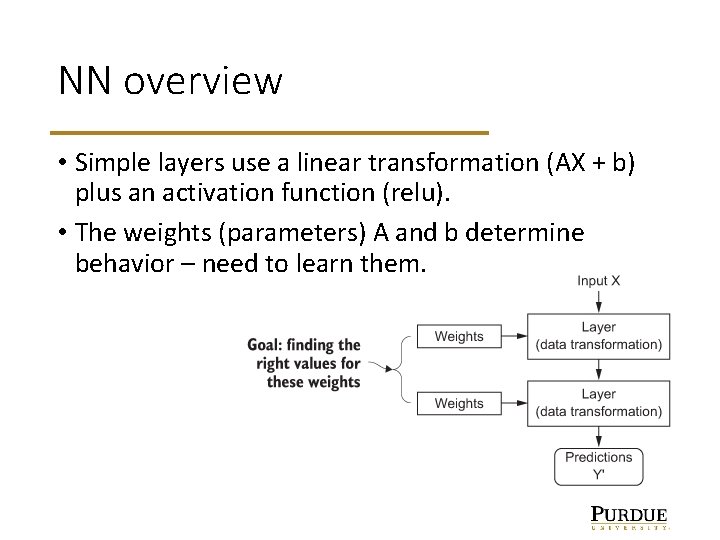

NN overview
• Simple layers use a linear transformation (AX + b) followed by an activation function (e.g., ReLU).
• The weights (parameters) A and b determine the layer's behavior, so they need to be learned.
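
The layer described above can be sketched in a few lines of plain Python. This is only an illustration, not code from the slides: the function names `relu` and `dense_layer` and the numeric values of `A`, `b`, and `x` are made up for the example, and a single input vector `x` stands in for X.

```python
def relu(values):
    """Apply ReLU elementwise: negative entries become 0."""
    return [max(0.0, v) for v in values]


def dense_layer(A, x, b):
    """Compute relu(A x + b) for a weight matrix A (list of rows),
    an input vector x, and a bias vector b."""
    pre_activation = [
        sum(a_ij * x_j for a_ij, x_j in zip(row, x)) + b_i
        for row, b_i in zip(A, b)
    ]
    return relu(pre_activation)


# Hypothetical weights for a layer with 3 inputs and 2 outputs.
A = [[1.0, -2.0, 0.5],
     [0.0, 1.0, -1.0]]
b = [0.5, -1.0]
x = [2.0, 1.0, 4.0]

output = dense_layer(A, x, b)
print(output)  # [2.5, 0.0]
```

The second output is 0 because its pre-activation value (-4) is negative and ReLU clips it. In practice a library such as NumPy or a deep learning framework would do the same computation on whole batches of inputs.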

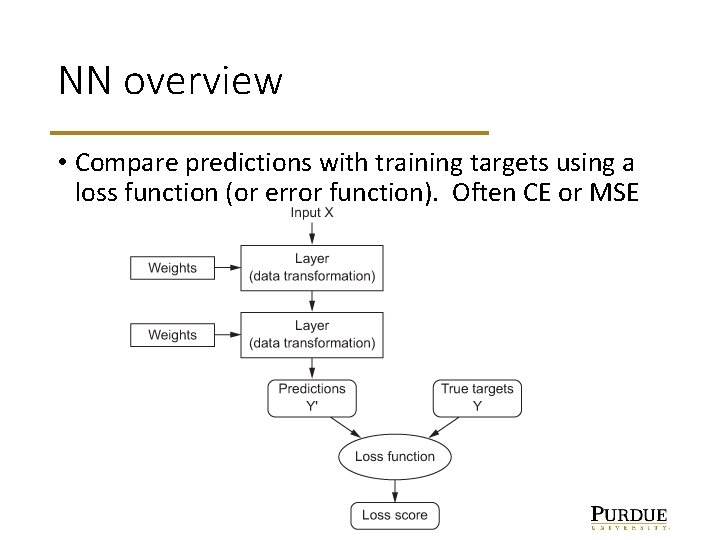

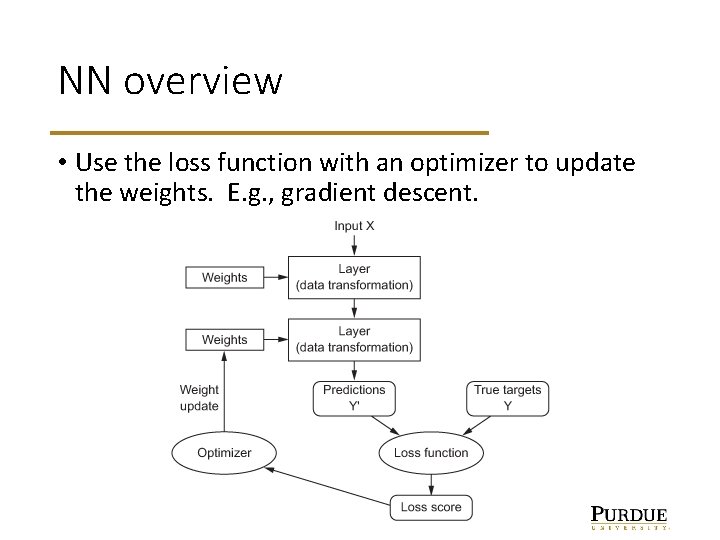

NN overview
• Compare predictions with training targets using a loss function (or error function), often cross-entropy (CE) or mean squared error (MSE).
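
As a rough illustration of the two losses named above, the sketch below computes MSE over a few predictions and CE for a single classification example. The function names and the sample numbers are assumptions made for this example; frameworks provide their own, more general versions.

```python
import math


def mean_squared_error(predictions, targets):
    """Average of squared differences between predictions and targets."""
    squared_errors = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(squared_errors) / len(squared_errors)


def cross_entropy(predicted_probs, true_class):
    """Cross-entropy for one example: the negative log of the
    probability the model assigned to the true class."""
    return -math.log(predicted_probs[true_class])


# Hypothetical regression predictions and targets: errors are 1, 0, -2.
mse = mean_squared_error([2.0, 0.0, 3.0], [1.0, 0.0, 5.0])

# Hypothetical class probabilities; the true class is index 1.
ce = cross_entropy([0.25, 0.5, 0.25], 1)

print(mse)  # (1 + 0 + 4) / 3
print(ce)   # -log(0.5) = log(2)
```

Both losses are 0 for a perfect prediction and grow as predictions move away from the targets, which is what lets an optimizer use them as a training signal.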

NN overview
• Use the loss function with an optimizer, e.g., gradient descent, to update the weights.
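
A minimal sketch of this update loop, assuming the simplest possible model (one weight `a` and one bias `b` fitting y = a*x + b) and an MSE loss. The data, learning rate, and step count are made-up values chosen for illustration; real networks compute the gradients automatically instead of by hand.

```python
def mse_loss(a, b, xs, ys):
    """Mean squared error of the model a*x + b on the data."""
    return sum((a * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient_descent_step(a, b, xs, ys, learning_rate):
    """One update: move a and b a small step against the MSE gradient."""
    n = len(xs)
    errors = [a * x + b - y for x, y in zip(xs, ys)]
    grad_a = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
    grad_b = 2.0 / n * sum(errors)
    return a - learning_rate * grad_a, b - learning_rate * grad_b


# Hypothetical training data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

a, b = 0.0, 0.0  # start from arbitrary weights
initial_loss = mse_loss(a, b, xs, ys)

for _ in range(2000):
    a, b = gradient_descent_step(a, b, xs, ys, learning_rate=0.05)

final_loss = mse_loss(a, b, xs, ys)
print(a, b, final_loss)  # a approaches 2, b approaches 1, loss approaches 0
```

The learning rate controls the step size: too large and the updates diverge, too small and training takes many more steps.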

Driving forces
Three technical forces are driving advances in machine learning:
• Hardware
• Datasets and benchmarks
• Algorithmic advances (and software platforms)
• Belief that it works

Neural networks – hype is not new!
• The perceptron was developed by Frank Rosenblatt in 1957 at Cornell under a grant from the Office of Naval Research.
• The New York Times reported the perceptron to be "the embryo of an electronic computer that [the Navy] expects will be able to walk, talk, see, write, reproduce itself and be conscious of its existence."
https://en.wikipedia.org/wiki/Perceptron
https://www.dexlabanalytics.com/wp-content/uploads/2017/07/The-Timeline-of-Artificial-Intelligence-and-Robotics.png

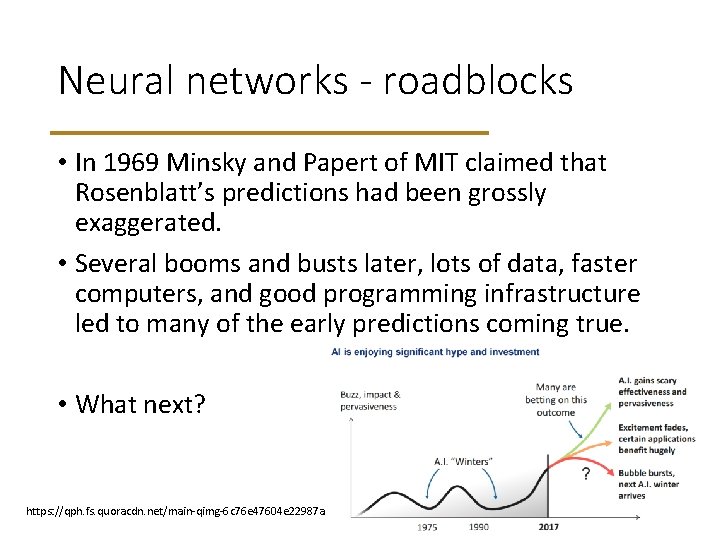

Neural networks - roadblocks
• In 1969, Minsky and Papert of MIT claimed that Rosenblatt's predictions had been grossly exaggerated.
• Several booms and busts later, lots of data, faster computers, and good programming infrastructure led to many of the early predictions coming true.
• What next?
https://qph.fs.quoracdn.net/main-qimg-6c76e47604e22987a0349e2d4c892646

• Example code on Google Colaboratory
