L-Store Distributed storage system Alan Tackett Vanderbilt University

Joint Project
- Vanderbilt - ACCRE (namespace and glue)
- LoCI - UTK - Micah Beck, Terry Moore (storage protocol)
- Nevoa Networks - Hunter Hagewood (end user tools)

L-Store Goals
- Scalable in both quantity and rate
  - Metadata (number of files and transactions/sec)
  - Data (amount of data and throughput)
- Reliable
- Secure
- Accessible

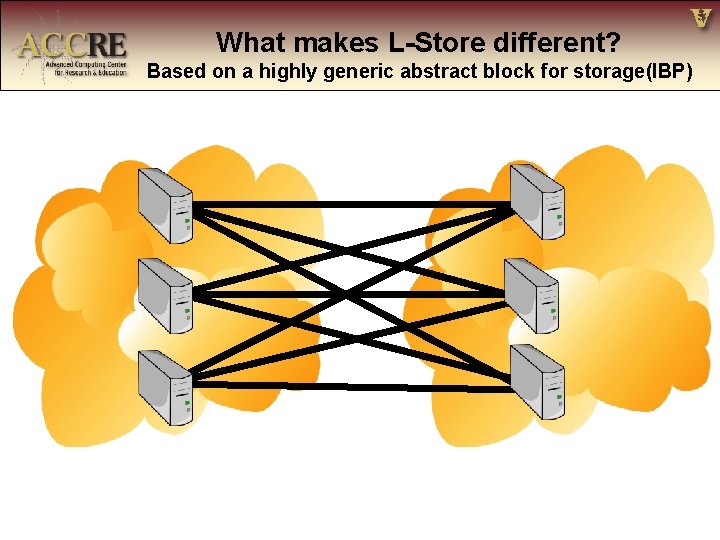

What makes L-Store different?
- Based on a highly generic abstract block for storage (IBP)

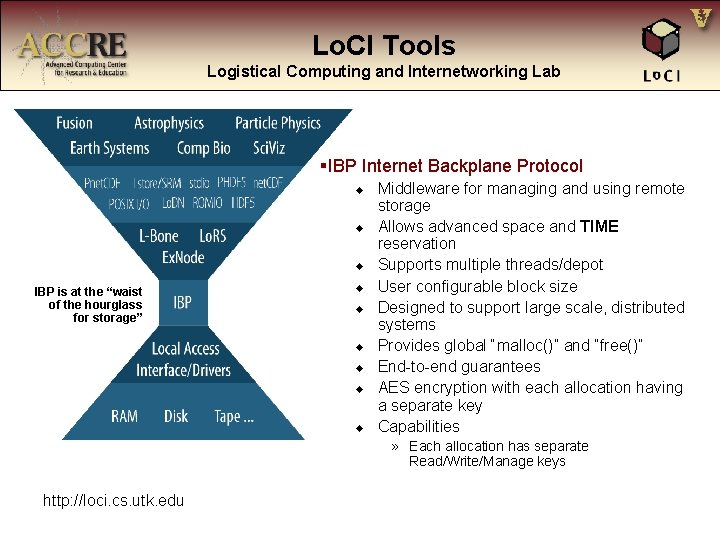

LoCI Tools (Logistical Computing and Internetworking Lab)
- IBP: Internet Backplane Protocol
  - Middleware for managing and using remote storage
  - Allows advanced space and TIME reservation
  - Supports multiple threads/depot
  - User configurable block size
  - Designed to support large scale, distributed systems
  - Provides global “malloc()” and “free()”
  - End-to-end guarantees
  - AES encryption with each allocation having a separate key
  - Capabilities
    - Each allocation has separate Read/Write/Manage keys
- IBP is at the “waist of the hourglass for storage”
- http://loci.cs.utk.edu
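The global “malloc()”/“free()” idea and the per-allocation Read/Write/Manage keys can be pictured with a toy in-memory depot. This is only a minimal sketch, not the IBP client API: the `ToyDepot` class, its method names, and the use of random tokens as capabilities are assumptions made for illustration, and encryption is left out.

```python
import secrets
import time


class ToyDepot:
    """In-memory stand-in for a storage depot (hypothetical, not the IBP API)."""

    def __init__(self):
        self._allocs = {}  # allocation id -> buffer, expiry time, capability keys

    def allocate(self, size, duration_s):
        """Reserve `size` bytes for `duration_s` seconds; return three capabilities."""
        alloc_id = secrets.token_hex(4)
        caps = {kind: secrets.token_hex(8) for kind in ("read", "write", "manage")}
        self._allocs[alloc_id] = {
            "buf": bytearray(size),
            "expires": time.monotonic() + duration_s,
            "caps": caps,
        }
        # Each capability names the allocation and carries its own secret key.
        return {kind: (alloc_id, key) for kind, key in caps.items()}

    def _check(self, cap, kind):
        """Look up the allocation and verify the capability is of type `kind`."""
        alloc_id, key = cap
        alloc = self._allocs.get(alloc_id)
        if alloc is None or time.monotonic() > alloc["expires"]:
            raise KeyError("allocation missing or expired")
        if not secrets.compare_digest(alloc["caps"][kind], key):
            raise PermissionError(f"not a valid {kind} capability")
        return alloc

    def store(self, write_cap, offset, data):
        buf = self._check(write_cap, "write")["buf"]
        if offset < 0 or offset + len(data) > len(buf):
            raise ValueError("write outside the allocation")
        buf[offset:offset + len(data)] = data

    def load(self, read_cap, offset, length):
        buf = self._check(read_cap, "read")["buf"]
        return bytes(buf[offset:offset + length])

    def free(self, manage_cap):
        """Release the allocation; only the manage capability may do this."""
        self._check(manage_cap, "manage")
        del self._allocs[manage_cap[0]]
```

In this sketch a holder of only the read capability can fetch bytes but cannot overwrite or free the allocation, which is the point of handing out separate keys.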

What makes L-Store different?
- Based on a highly generic abstract block for storage (IBP)

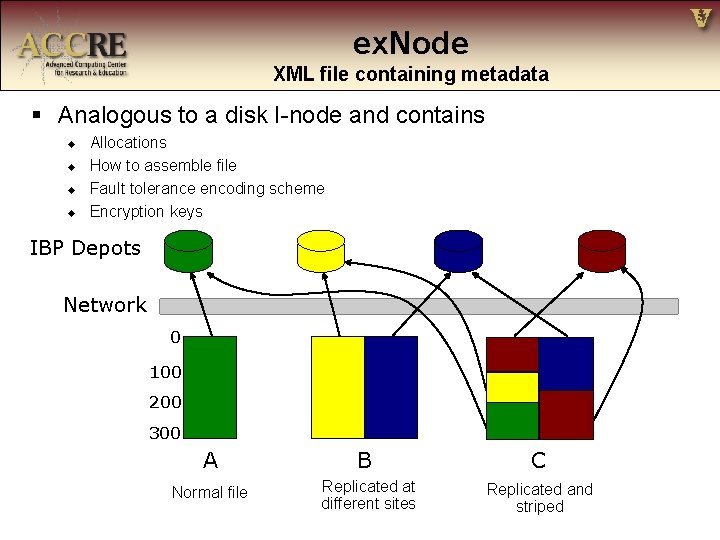

exNode
- XML file containing metadata
- Analogous to a disk I-node and contains
  - Allocations
  - How to assemble file
  - Fault tolerance encoding scheme
  - Encryption keys

Figure labels: IBP Depots; Network; offsets 0, 100, 200, 300; exNodes A, B, C; Normal file; Replicated at different sites; Replicated and striped
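One way to picture the exNode is as a list of mappings from logical byte ranges to allocations on depots. The sketch below is an assumption-laden illustration, not the exNode XML schema: the `Mapping` fields, depot names, and allocation ids are hypothetical, and the three layouts simply mirror the figure's "normal", "replicated", and "replicated and striped" cases for a 300-byte file.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mapping:
    offset: int   # logical byte offset within the file
    length: int   # number of bytes held by this allocation
    depot: str    # hypothetical depot name
    alloc: str    # hypothetical allocation identifier


def candidates(exnode, offset):
    """Return every mapping whose byte range covers `offset`."""
    return [m for m in exnode if m.offset <= offset < m.offset + m.length]


def is_complete(exnode, size):
    """True if every byte in [0, size) is covered by at least one mapping."""
    covered = 0
    for m in sorted(exnode, key=lambda m: m.offset):
        if m.offset > covered:
            return False  # gap before this mapping
        covered = max(covered, m.offset + m.length)
    return covered >= size


normal = [Mapping(0, 300, "depot-1", "a0")]
replicated = [Mapping(0, 300, "depot-1", "a0"), Mapping(0, 300, "depot-2", "b0")]
striped_replicated = [
    Mapping(0, 100, "depot-1", "a0"), Mapping(0, 100, "depot-2", "b0"),
    Mapping(100, 100, "depot-2", "b1"), Mapping(100, 100, "depot-3", "c1"),
    Mapping(200, 100, "depot-3", "c2"), Mapping(200, 100, "depot-1", "a2"),
]

# Drop one depot and check whether the file can still be assembled.
survivors = [m for m in striped_replicated if m.depot != "depot-2"]
print(is_complete(survivors, 300))
```

A reader would pick any of the `candidates` for each range, so a replicated layout can survive the loss of a depot while the normal layout cannot.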

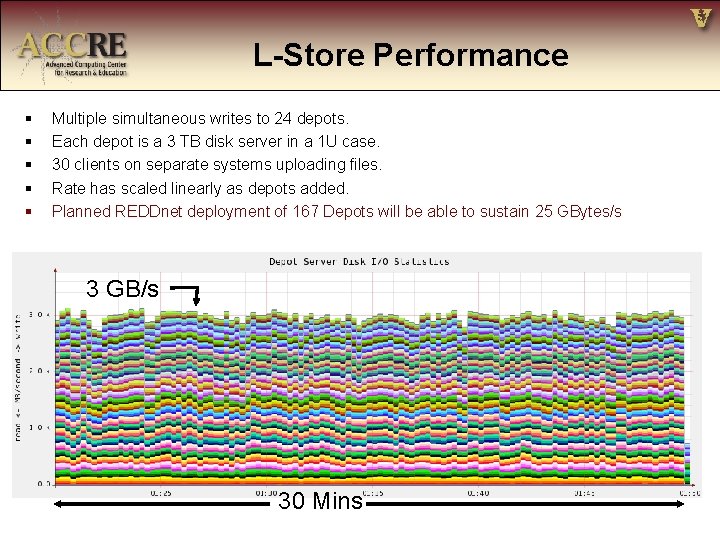

L-Store Performance
- Multiple simultaneous writes to 24 depots.
- Each depot is a 3 TB disk server in a 1U case.
- 30 clients on separate systems uploading files.
- Rate has scaled linearly as depots added.
- Planned REDDnet deployment of 167 depots will be able to sustain 25 GBytes/s.

Plot annotations: 3 GB/s; 30 mins
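The linear-scaling claim can be checked with a little arithmetic. The sketch below assumes that the "3 GB/s" plot annotation is the aggregate rate of the 24-depot test; the slide does not say so explicitly, so treat the numbers as illustrative.

```python
def per_depot_rate(aggregate_gb_s, depots):
    """Average rate per depot, assuming load is spread evenly."""
    return aggregate_gb_s / depots


def linear_projection(aggregate_gb_s, depots, target_depots):
    """Scale the measured aggregate rate linearly to a larger deployment."""
    return per_depot_rate(aggregate_gb_s, depots) * target_depots


measured = per_depot_rate(3.0, 24)           # 0.125 GB/s per depot
projected = linear_projection(3.0, 24, 167)  # 20.875 GB/s for 167 depots
implied = per_depot_rate(25.0, 167)          # about 0.150 GB/s per depot

print(measured, projected, round(implied, 3))
```

Under that assumption the straight-line projection is about 21 GB/s, and the quoted 25 GBytes/s corresponds to roughly 150 MB/s per depot.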

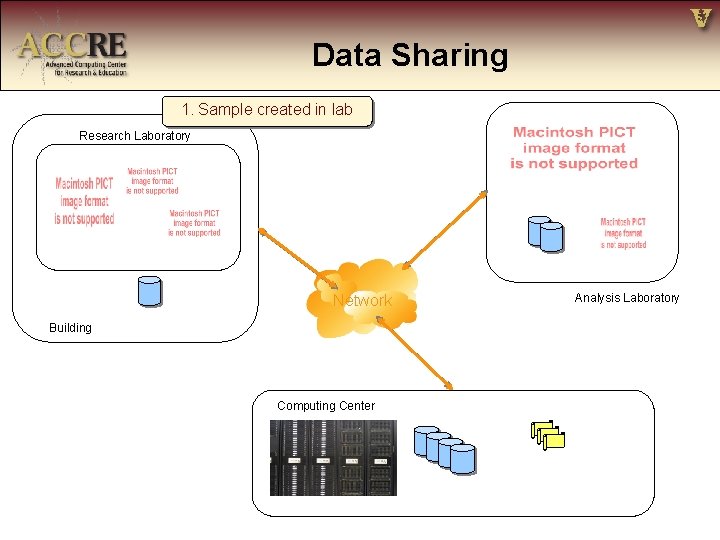

Data Sharing
1. Sample created in lab

Diagram labels: Research Laboratory; Network; Building; Computing Center; Analysis Laboratory

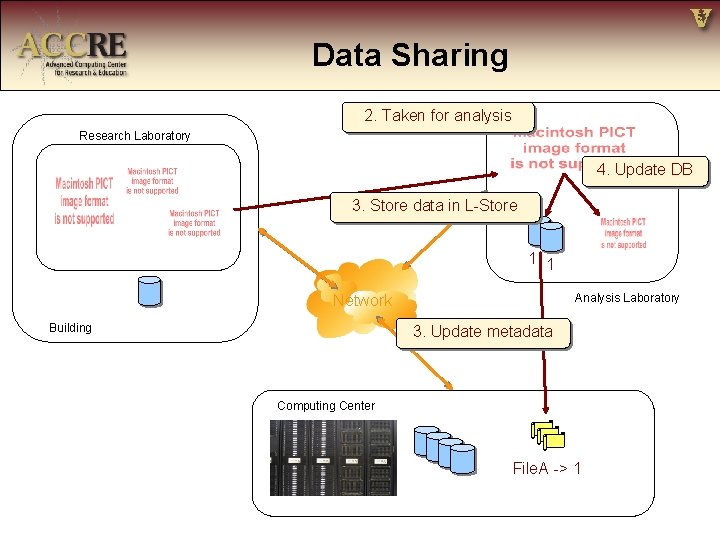

Data Sharing
2. Taken for analysis
3. Store data in L-Store
3. Update metadata
4. Update DB

FileA -> 1

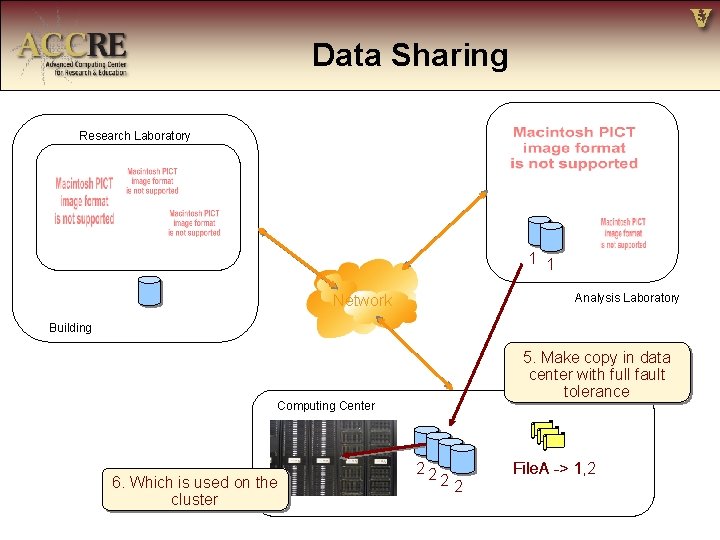

Data Sharing
5. Make copy in data center with full fault tolerance
6. Which is used on the cluster

FileA -> 1, 2

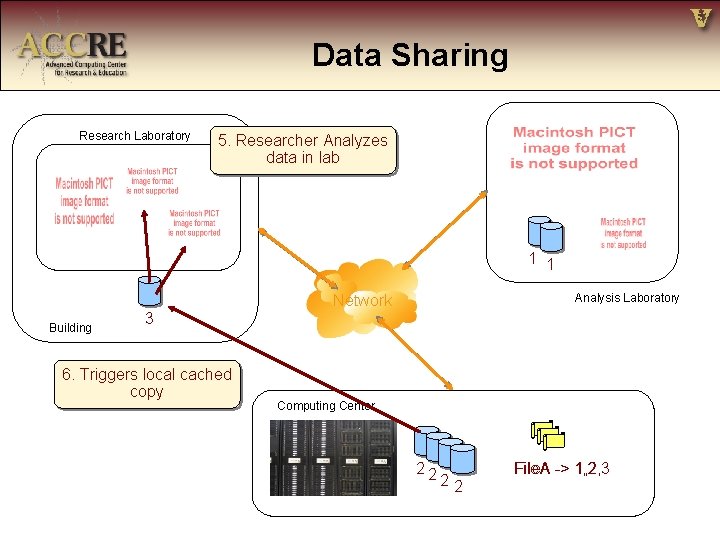

Data Sharing
5. Researcher analyzes data in lab
6. Triggers local cached copy

FileA -> 1, 2, 3
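The "FileA -> 1, 2, 3" annotations across the Data Sharing slides amount to a growing list of copies kept in the metadata. A minimal sketch of that bookkeeping follows; the `ReplicaCatalog` class is hypothetical and is not L-Store's metadata service.

```python
class ReplicaCatalog:
    """Maps a file name to the copies known for it (hypothetical helper)."""

    def __init__(self):
        self._copies = {}

    def register(self, name, copy_id):
        """Record that copy `copy_id` of `name` exists (idempotent)."""
        copies = self._copies.setdefault(name, [])
        if copy_id not in copies:
            copies.append(copy_id)

    def locate(self, name):
        """Return the known copies of `name`, oldest first."""
        return list(self._copies.get(name, []))


catalog = ReplicaCatalog()
catalog.register("FileA", 1)  # data stored in L-Store, metadata updated
catalog.register("FileA", 2)  # fault-tolerant copy made in the data center
catalog.register("FileA", 3)  # local cached copy triggered by the analysis
print(catalog.locate("FileA"))  # [1, 2, 3]
```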

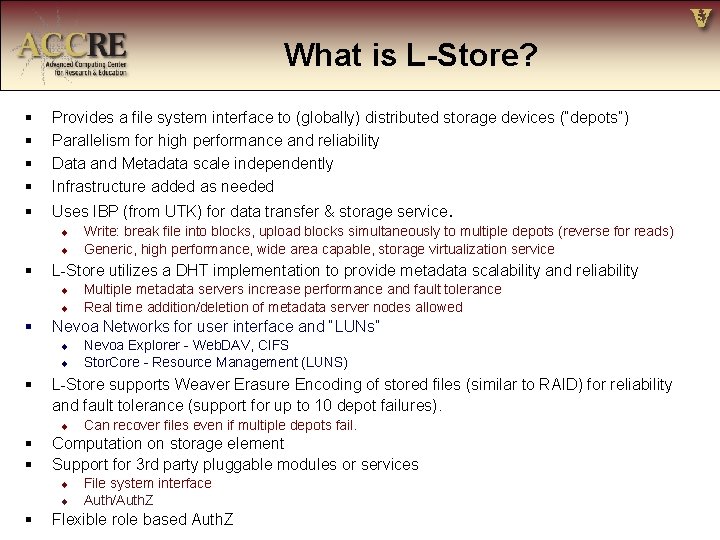

What is L-Store?
- Provides a file system interface to (globally) distributed storage devices (“depots”)
- Parallelism for high performance and reliability
- Data and metadata scale independently
- Infrastructure added as needed
- Write: break file into blocks, upload blocks simultaneously to multiple depots (reverse for reads)
- Uses IBP (from UTK) for data transfer & storage service
  - Generic, high performance, wide area capable, storage virtualization service
- L-Store utilizes a DHT implementation to provide metadata scalability and reliability
  - Multiple metadata servers increase performance and fault tolerance
  - Real time addition/deletion of metadata server nodes allowed
- L-Store supports Weaver Erasure Encoding of stored files (similar to RAID) for reliability and fault tolerance (support for up to 10 depot failures)
  - Can recover files even if multiple depots fail
- Nevoa Networks for user interface and “LUNs”
  - Nevoa Explorer - WebDAV, CIFS
  - StorCore - Resource Management (LUNs)
- Computation on storage element
- Support for 3rd-party pluggable modules or services
- File system interface
- Auth/AuthZ
  - Flexible role based AuthZ
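The write path described above (break the file into blocks, upload them simultaneously to multiple depots, reverse for reads) can be sketched with a thread pool. This is an illustration under stated assumptions, not L-Store's client: depots are plain dictionaries, placement is simple round-robin, and all function names are hypothetical. Metadata placement via the DHT and erasure coding are not covered here.

```python
from concurrent.futures import ThreadPoolExecutor


def split_blocks(data, block_size):
    """Cut `data` into consecutive blocks of at most `block_size` bytes."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def write_striped(data, depots, block_size):
    """Upload blocks concurrently, round-robin across depots; return the layout."""
    blocks = split_blocks(data, block_size)

    def upload(item):
        index, block = item
        depot_id = index % len(depots)
        depots[depot_id][index] = block  # stand-in for a network upload
        return (index, depot_id)

    with ThreadPoolExecutor(max_workers=len(depots)) as pool:
        # map() yields results in input order, so the layout is ordered by block.
        return list(pool.map(upload, enumerate(blocks)))


def read_striped(layout, depots):
    """Fetch blocks concurrently and reassemble them in their original order."""
    def download(entry):
        index, depot_id = entry
        return depots[depot_id][index]

    with ThreadPoolExecutor(max_workers=len(depots)) as pool:
        return b"".join(pool.map(download, layout))


depots = [{} for _ in range(4)]          # four in-memory "depots"
payload = bytes(range(256)) * 10         # 2560 bytes of sample data
layout = write_striped(payload, depots, block_size=100)
restored = read_striped(layout, depots)
print(restored == payload)
```

The returned layout plays the role of the file's metadata: it records which depot holds which block, which is all a reader needs to reverse the process.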

- Slides: 12