Lecture 20 of 41: Natural Language Processing (NLP)

- Slides: 16

Natural Language Processing (NLP) Survey: Automatic Speech Recognition (ASR), aka Speech-to-Text, & Machine Translation (MT)

William H. Hsu, Department of Computer Science, KSU

- Canvas course redirector: http://bit.ly/kstate-ai-class
- Course web site: http://kdd.cs.ksu.edu/Courses/CIS530/
- Instructor home page: http://www.cs.ksu.edu/~bhsu
- Reading for Today: §22.1 – 22.3, p. 860 – 873, and §23.4 – 23.5, p. 907 – 918, R&N 3e
- Reading for Next Class: §12.3, p. 446 – 450, R&N 3e

CIS 530 / 730 Artificial Intelligence, Lecture 20 of 41, Part A (1 of 2), Department of Computer Science, Kansas State University

Lecture Outline

- Last Class: Semantic Nets & Ontologies, §12.1 – 12.2 (p. 437 – 445), R&N 3e
  - Semantic networks
  - Role in knowledge representation (KR) & knowledge engineering (KE)
- Today: NLP Intro, §22.1 – 22.3 (p. 860 – 873), §23.4 – 23.5 (p. 907 – 918), R&N 3e
  - Natural Language Processing (NLP)
    - General tasks: translation, story understanding, speech, conversation
    - Specialized tasks: information extraction, question answering (QA)
    - Methods: parsing, learning for pattern recognition
    - Models: statistical (Bayesian) – Hidden Markov Models (HMMs), etc.
- Automatic Speech Recognition (ASR), aka Speech-to-Text
- Text-to-Speech and Conversation
- Statistical Machine Translation (SMT)
- Document Understanding: Topic Modeling, Classification
- Language Models
- Next: More on Production Systems, Implementing Reasoners for Frame Problem
- Later This Week: Analogy, Case-Based Reasoning; Probability Review

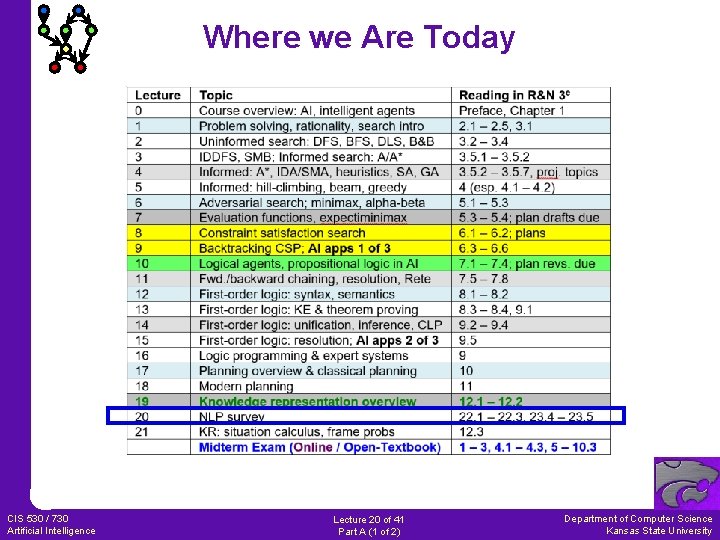

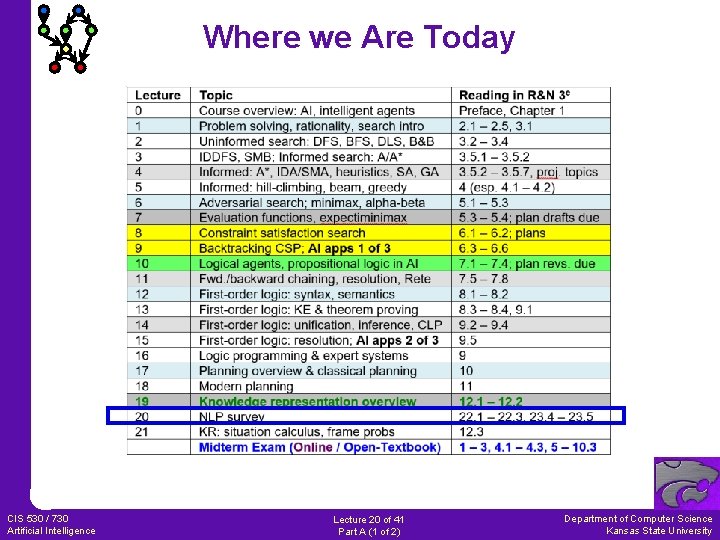

Where We Are Today

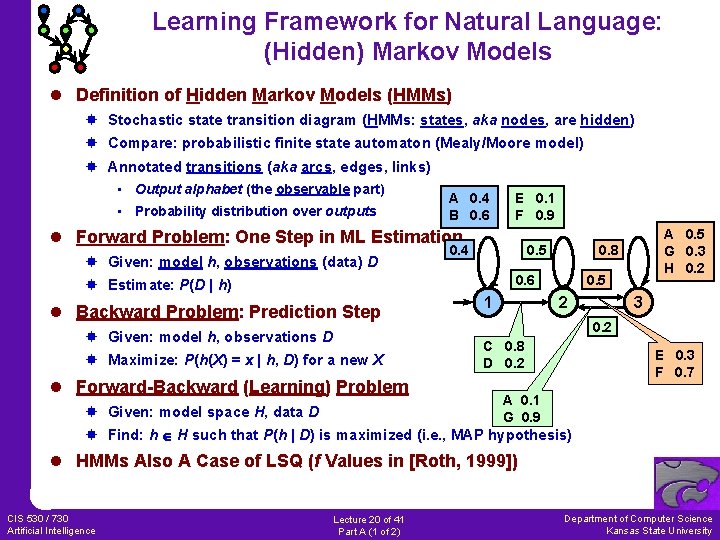

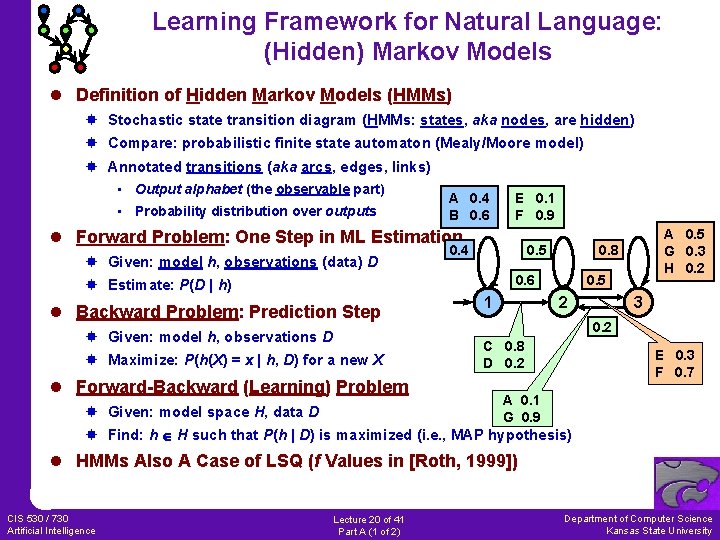

Learning Framework for Natural Language: (Hidden) Markov Models

- Definition of Hidden Markov Models (HMMs)
  - Stochastic state transition diagram (HMMs: states, aka nodes, are hidden)
  - Compare: probabilistic finite state automaton (Mealy/Moore model)
  - Annotated transitions (aka arcs, edges, links)
    - Output alphabet (the observable part)
    - Probability distribution over outputs
- Forward Problem: One Step in ML Estimation
  - Given: model h, observations (data) D
  - Estimate: P(D | h)
- Backward Problem: Prediction Step
  - Given: model h, observations D
  - Maximize: P(h(X) = x | h, D) for a new X
- Forward-Backward (Learning) Problem
  - Given: model space H, data D
  - Find: h ∈ H such that P(h | D) is maximized (i.e., MAP hypothesis)
- HMMs Also a Case of LSQ (f Values in [Roth, 1999])

[Figure: example HMM with states 1, 2, 3. Arc labels give transition probabilities (0.4, 0.5, 0.8, 0.6, 0.5, 0.2) and output distributions (A 0.4, B 0.6), (E 0.1, F 0.9), (A 0.5, G 0.3, H 0.2), (C 0.8, D 0.2), (E 0.3, F 0.7), (A 0.1, G 0.9).]
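The forward problem stated on this slide, estimating P(D | h) for a fixed model h, can be illustrated with a minimal sketch of the standard forward algorithm. This is only an illustration under stated assumptions: the slide's diagram attaches outputs to transitions, whereas the sketch uses the more common state-emission form for brevity, and the two-state model, its probabilities, and the function name `forward_likelihood` are hypothetical and not taken from the lecture.

```python
def forward_likelihood(init, trans, emit, observations):
    """Return P(observations | model) using the forward algorithm.

    init[s]      : probability of starting in state s
    trans[r][s]  : probability of moving from state r to state s
    emit[s][o]   : probability that state s outputs symbol o
    """
    if not observations:
        return 1.0  # the empty sequence has probability 1
    states = list(init)
    # alpha[s] = P(o_1 .. o_t, state at time t = s)
    alpha = {s: init[s] * emit[s].get(observations[0], 0.0) for s in states}
    for obs in observations[1:]:
        alpha = {
            s: emit[s].get(obs, 0.0)
            * sum(alpha[r] * trans[r].get(s, 0.0) for r in states)
            for s in states
        }
    # Sum out the hidden final state
    return sum(alpha.values())


# Hypothetical two-state model (all numbers are assumptions for illustration)
init = {"s1": 0.6, "s2": 0.4}
trans = {"s1": {"s1": 0.7, "s2": 0.3}, "s2": {"s1": 0.4, "s2": 0.6}}
emit = {"s1": {"A": 0.5, "B": 0.5}, "s2": {"A": 0.1, "B": 0.9}}

likelihood = forward_likelihood(init, trans, emit, ["A", "B"])
print(round(likelihood, 4))
```

Summing over the hidden states at each step keeps the cost linear in the sequence length, instead of enumerating every possible state path.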

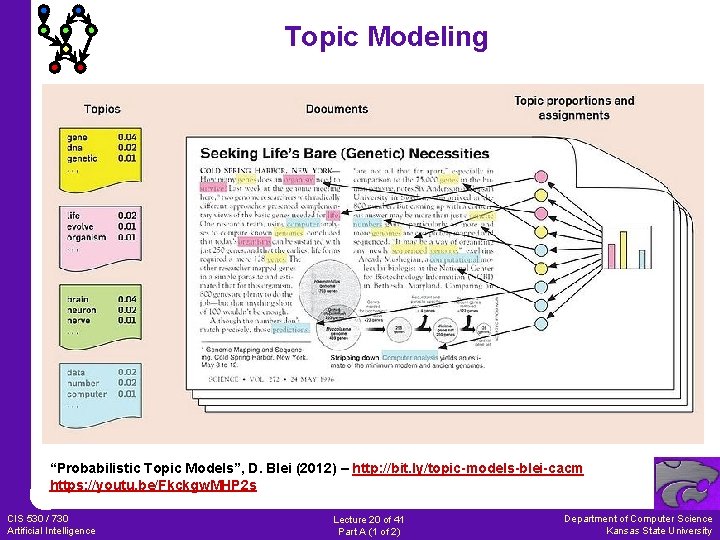

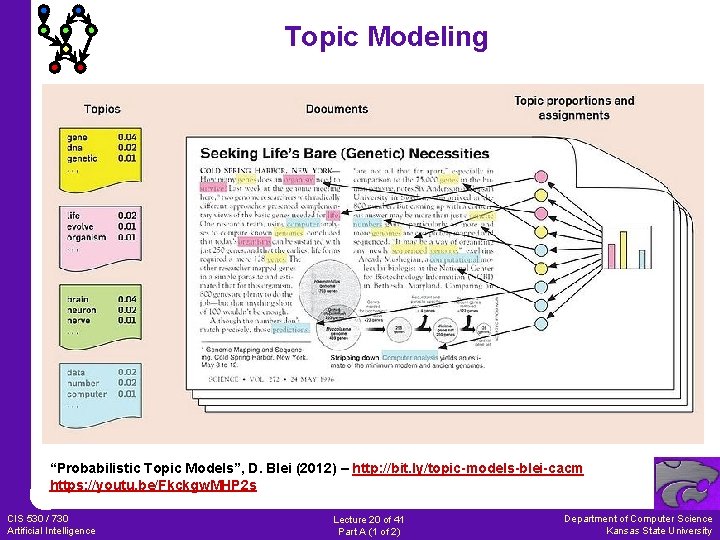

Topic Modeling

- “Probabilistic Topic Models”, D. Blei (2012) – http://bit.ly/topic-models-blei-cacm
- https://youtu.be/FkckgwMHP2s

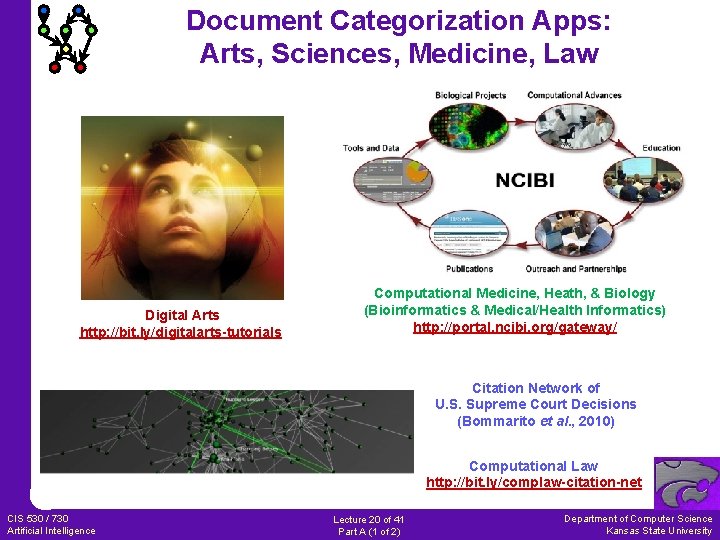

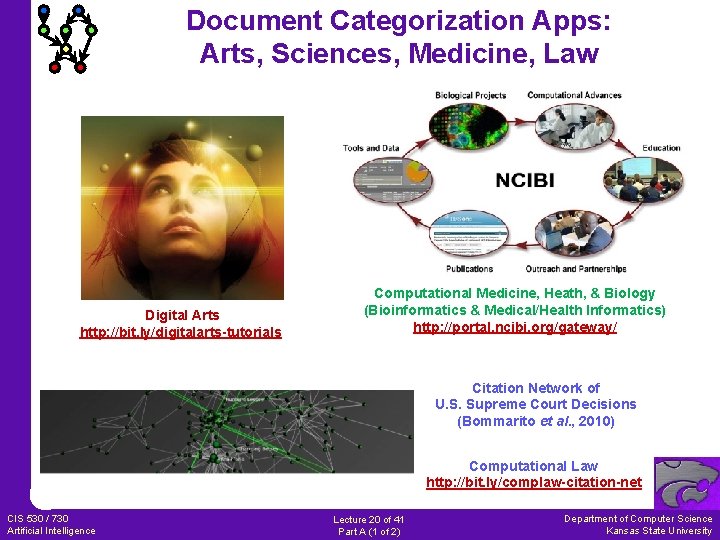

Document Categorization Apps: Arts, Sciences, Medicine, Law

- Digital Arts: http://bit.ly/digitalarts-tutorials
- Computational Medicine, Health, & Biology (Bioinformatics & Medical/Health Informatics): http://portal.ncibi.org/gateway/
- Computational Law: Citation Network of U.S. Supreme Court Decisions (Bommarito et al., 2010), http://bit.ly/complaw-citation-net

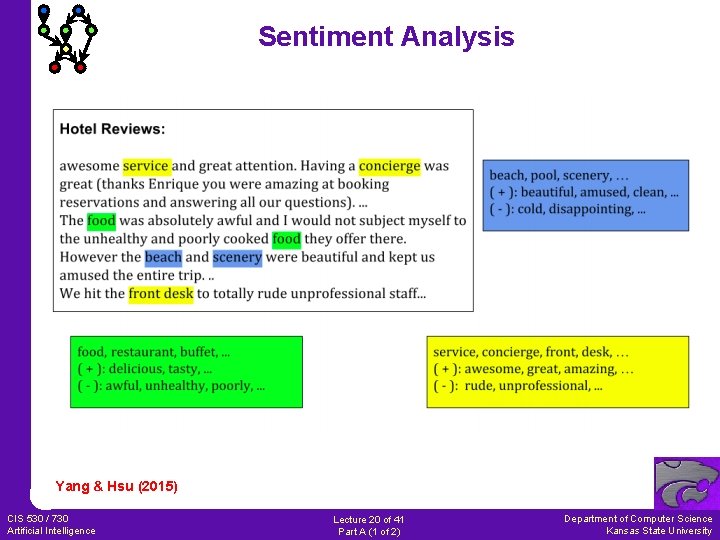

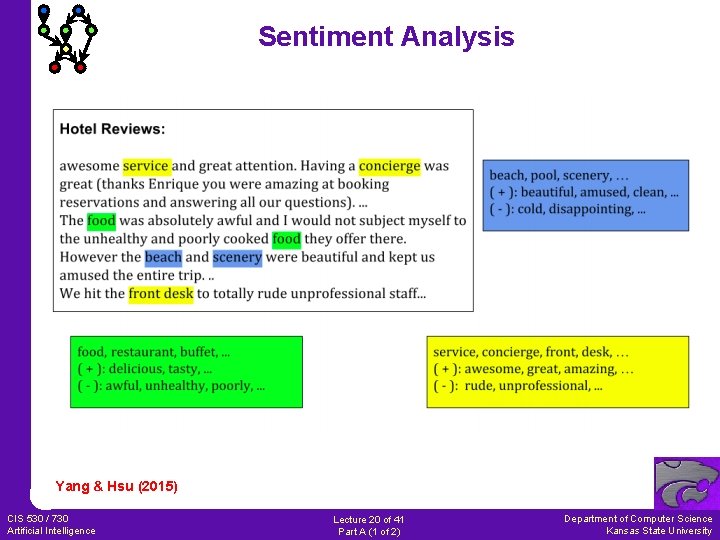

Sentiment Analysis

Yang & Hsu (2015)

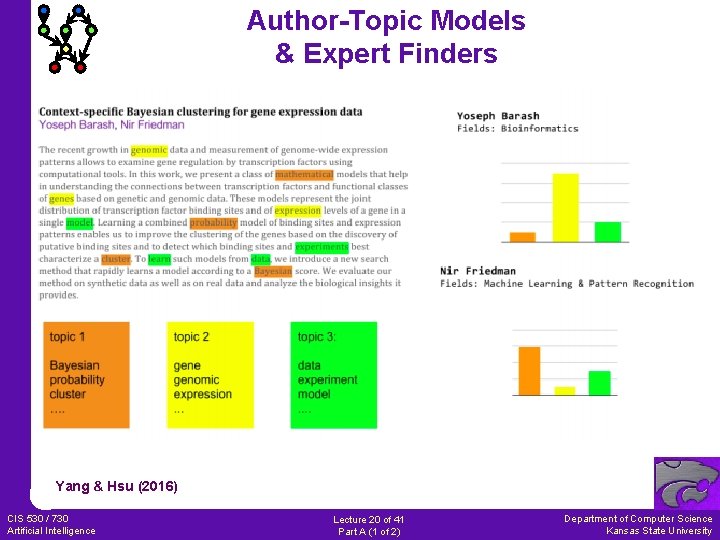

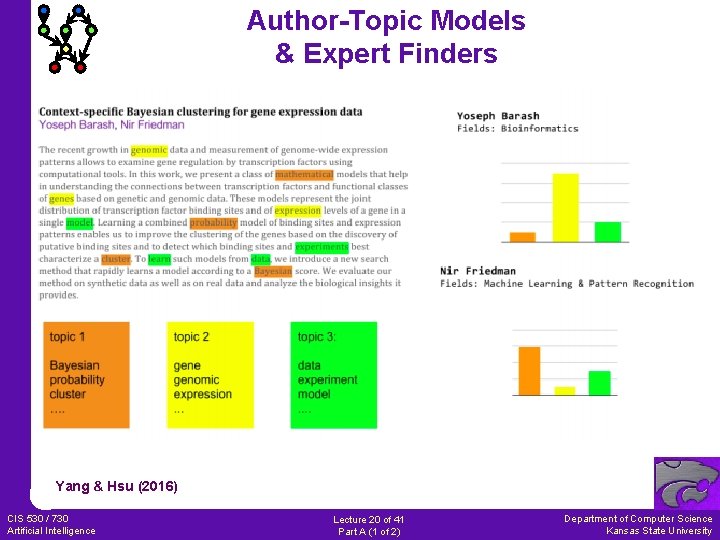

Author-Topic Models & Expert Finders

Yang & Hsu (2016)

Information Extraction Tasks: RTE, Summarization, QA

- Recognizing Textual Entailment (RTE)
  - Determine when the meaning of one text logically follows from that of another
  - Approaches: text categorization, semantic mapping, inference
  - Related to question answering: “true/false” questions
- Update Summarization
  - Produce a brief synopsis of the points in a text, given that the user has already read other texts
  - Approaches: formal summarization, natural language (NL) synthesis
  - Related to question answering: collect relevant documents, digest
- Question Answering (QA)
  - Respond to a query posed in natural language
  - Approaches: search, focused crawling, semantic mapping

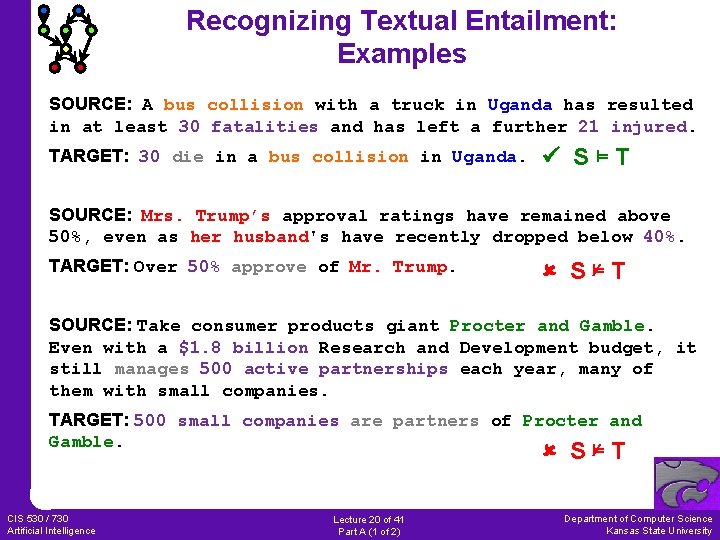

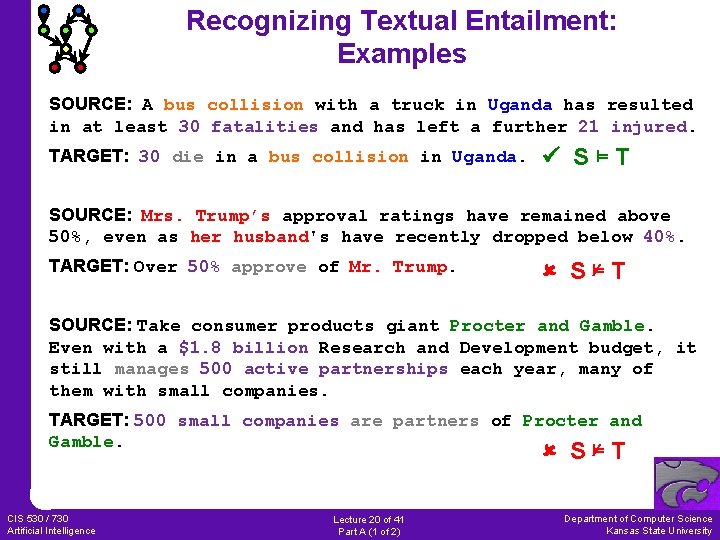

Recognizing Textual Entailment: Examples

- Example 1: S ⊨ T
  - SOURCE: A bus collision with a truck in Uganda has resulted in at least 30 fatalities and has left a further 21 injured.
  - TARGET: 30 die in a bus collision in Uganda.
- Example 2: S ⊭ T
  - SOURCE: Mrs. Trump’s approval ratings have remained above 50%, even as her husband's have recently dropped below 40%.
  - TARGET: Over 50% approve of Mr. Trump.
- Example 3: S ⊭ T
  - SOURCE: Take consumer products giant Procter and Gamble. Even with a $1.8 billion Research and Development budget, it still manages 500 active partnerships each year, many of them with small companies.
  - TARGET: 500 small companies are partners of Procter and Gamble.

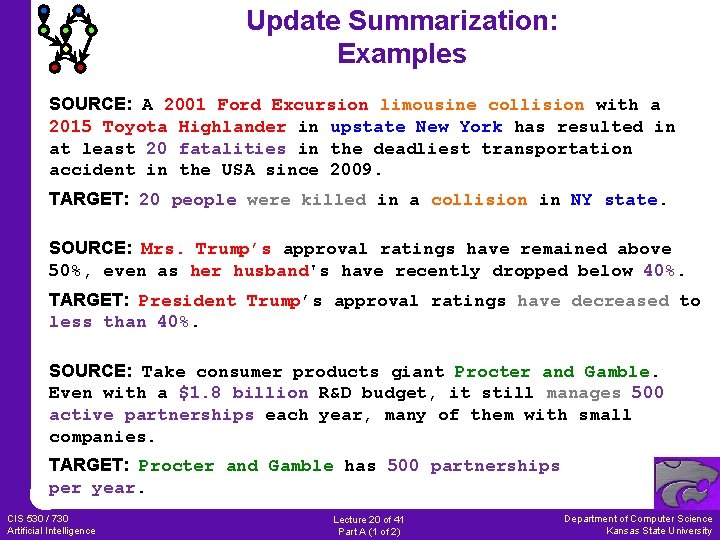

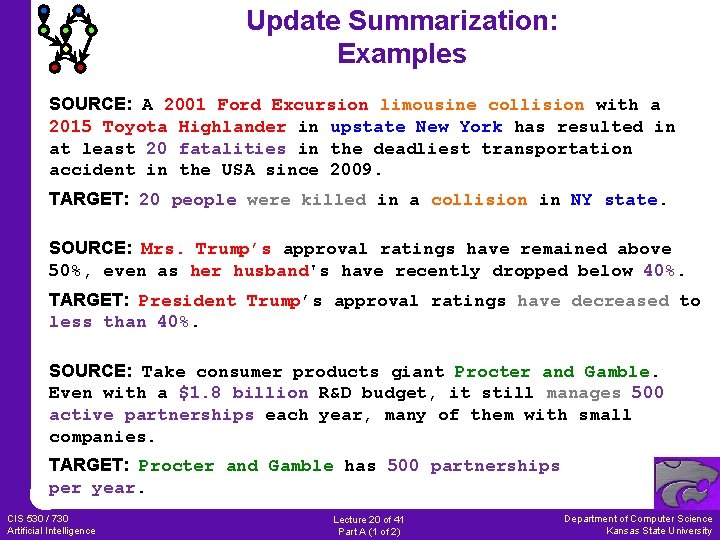

Update Summarization: Examples

- Example 1
  - SOURCE: A 2001 Ford Excursion limousine collision with a 2015 Toyota Highlander in upstate New York has resulted in at least 20 fatalities in the deadliest transportation accident in the USA since 2009.
  - TARGET: 20 people were killed in a collision in NY state.
- Example 2
  - SOURCE: Mrs. Trump’s approval ratings have remained above 50%, even as her husband's have recently dropped below 40%.
  - TARGET: President Trump’s approval ratings have decreased to less than 40%.
- Example 3
  - SOURCE: Take consumer products giant Procter and Gamble. Even with a $1.8 billion R&D budget, it still manages 500 active partnerships each year, many of them with small companies.
  - TARGET: Procter and Gamble has 500 partnerships per year.

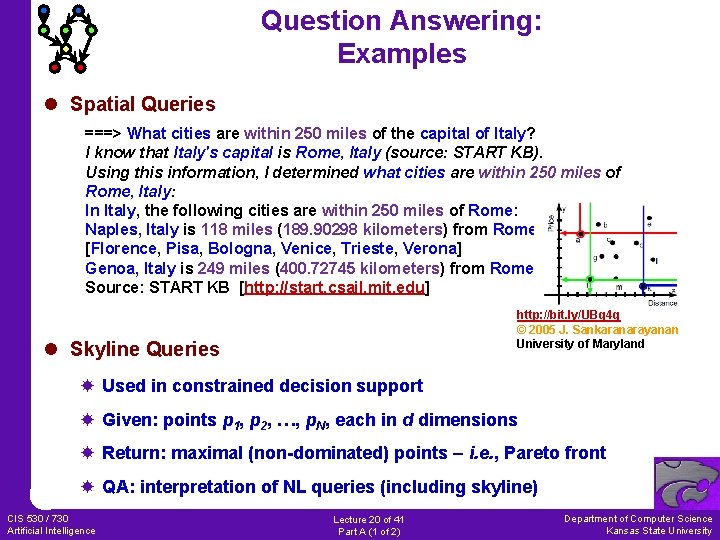

Question Answering: Examples

- Spatial Queries
  - Query: What cities are within 250 miles of the capital of Italy?
  - Response: I know that Italy's capital is Rome, Italy (source: START KB). Using this information, I determined what cities are within 250 miles of Rome, Italy. In Italy, the following cities are within 250 miles of Rome:
    - Naples, Italy is 118 miles (189.90298 kilometers) from Rome.
    - [Florence, Pisa, Bologna, Venice, Trieste, Verona]
    - Genoa, Italy is 249 miles (400.72745 kilometers) from Rome.
  - Source: START KB [http://start.csail.mit.edu]
  - http://bit.ly/UBq4q (© 2005 J. Sankaranarayanan, University of Maryland)
- Skyline Queries
  - Used in constrained decision support
  - Given: points p1, p2, …, pN, each in d dimensions
  - Return: maximal (non-dominated) points, i.e., the Pareto front
  - QA: interpretation of NL queries (including skyline)
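The skyline query defined on this slide (return the non-dominated points among p1, …, pN) can be sketched with a direct pairwise comparison. This is a minimal illustration under assumptions: larger values are taken to be better in every dimension, and the function names and the sample points are hypothetical rather than from the lecture.

```python
def dominates(p, q):
    """True if p is at least as good as q in every dimension
    and strictly better in at least one (larger is better)."""
    return all(a >= b for a, b in zip(p, q)) and any(a > b for a, b in zip(p, q))


def skyline(points):
    """Return the maximal (non-dominated) points, i.e., the Pareto front.

    Compares every pair of points, so the cost is O(N^2 * d).
    """
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical 2-dimensional data set
points = [(1, 9), (3, 7), (5, 5), (4, 4), (2, 6), (7, 1), (6, 1)]
front = skyline(points)
print(front)
```

The quadratic scan is the simplest correct formulation; for large N, sorting-based or divide-and-conquer skyline algorithms are typically preferred.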

Approach: Parsing for Semantic Role Labeling (SRL)

- Algorithms
  - SRL
  - Text generation
- Knowledge Representation: Parse Trees, Abstract Data Types
- Other Tasks
  - Filling in abstract data types (ADTs), aka frames or slot-filler structures
  - Natural language generation, content evaluation

Semantic Role Labeling Demo: http://l2r.cs.uiuc.edu/~cogcomp/srl-demo.php (© 2009 University of Illinois)

NLP Issues: Word Sense Disambiguation (WSD)

- Problem Definition
  - Given: m sentences, each containing a usage of a particular ambiguous word
  - Example: “The can will rust.” (auxiliary verb versus noun)
  - Label: vj ≡ s, the correct word sense (e.g., s ∈ {auxiliary verb, noun})
  - Representation: m examples (labeled attribute vectors <(w1, w2, …, wn), s>)
  - Return: classifier f: X → V that disambiguates new x ≡ (w1, w2, …, wn)
- Solution Approach: Use Bayesian Learning (e.g., Naïve Bayes)
  - Caveat: can’t observe s in the text!
  - A solution: treat s in P(wi | s) as a missing value, impute s (assign by inference)
  - [Pedersen and Bruce, 1998]: fill in using Gibbs sampling, EM algorithm (later)
  - [Roth, 1998]: Naïve Bayes, sparse networks of Winnows (SNoW), TBL
- Recent Research
  - T. Pedersen’s research home page: http://www.d.umn.edu/~tpederse/
  - D. Roth’s Cognitive Computation Group: http://l2r.cs.uiuc.edu/~cogcomp/
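The Naïve Bayes approach named on this slide can be sketched as a classifier over context words, choosing the sense s that maximizes P(s) · Π P(wi | s). This is a minimal sketch under assumptions: it is the fully supervised case, so it sidesteps the slide's caveat that s is not observed (no Gibbs sampling or EM is shown), it uses add-one smoothing, and the tiny training set and function names are hypothetical.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(examples):
    """examples: list of (context_words, sense) pairs with known senses."""
    sense_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for words, sense in examples:
        sense_counts[sense] += 1
        for w in words:
            word_counts[sense][w] += 1
            vocab.add(w)
    return sense_counts, word_counts, vocab


def classify(model, words):
    """Return the sense with the highest log posterior for the context words."""
    sense_counts, word_counts, vocab = model
    total = sum(sense_counts.values())
    best_sense, best_score = None, -math.inf
    for sense, count in sense_counts.items():
        score = math.log(count / total)  # log prior P(s)
        denom = sum(word_counts[sense].values()) + len(vocab)
        for w in words:
            if w in vocab:  # words never seen in training are ignored
                # add-one (Laplace) smoothed estimate of P(w | s)
                score += math.log((word_counts[sense][w] + 1) / denom)
        if score > best_score:
            best_sense, best_score = sense, score
    return best_sense


# Hypothetical labeled contexts for the ambiguous word "can"
examples = [
    (["the", "will", "rust"], "noun"),
    (["a", "of", "soup"], "noun"),
    (["she", "swim", "well"], "aux"),
    (["you", "go", "now"], "aux"),
]
model = train_naive_bayes(examples)
print(classify(model, ["the", "of", "beans"]))
print(classify(model, ["she", "go"]))
```

Working in log space avoids numeric underflow when many context-word probabilities are multiplied together.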

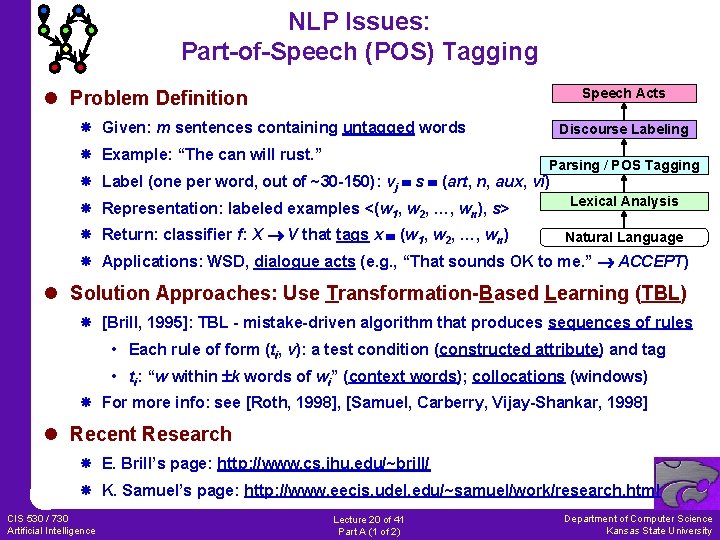

NLP Issues: Part-of-Speech (POS) Tagging

[Figure: levels of natural language analysis, labeled Natural Language, Lexical Analysis, Parsing / POS Tagging, Discourse Labeling, Speech Acts]

- Problem Definition
  - Given: m sentences containing untagged words
  - Example: “The can will rust.”
  - Label (one per word, out of ~30-150): vj ≡ s ∈ (art, n, aux, vi)
  - Representation: labeled examples <(w1, w2, …, wn), s>
  - Return: classifier f: X → V that tags x ≡ (w1, w2, …, wn)
  - Applications: WSD, dialogue acts (e.g., “That sounds OK to me.” → ACCEPT)
- Solution Approaches: Use Transformation-Based Learning (TBL)
  - [Brill, 1995]: TBL, a mistake-driven algorithm that produces sequences of rules
    - Each rule of form (ti, v): a test condition (constructed attribute) and tag
    - ti: “w within k words of wi” (context words); collocations (windows)
  - For more info: see [Roth, 1998], [Samuel, Carberry, Vijay-Shanker, 1998]
- Recent Research
  - E. Brill’s page: http://www.cs.jhu.edu/~brill/
  - K. Samuel’s page: http://www.eecis.udel.edu/~samuel/work/research.html
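The rule form (ti, v) on this slide, a test condition paired with a tag, can be illustrated by applying an ordered rule list to an initial tagging of “The can will rust.” This sketch covers only rule application, not the mistake-driven learning step that produces the rules. The initial tags, the two rules, and the function name `apply_rules` are hypothetical, and each rule is evaluated against a snapshot of the tags so that its own changes do not affect later positions in the same pass (one possible design choice).

```python
def apply_rules(words, initial_tags, rules):
    """Apply transformation rules in order.

    Each rule is (test, new_tag), where test(words, tags, i) decides
    whether position i should be retagged as new_tag.
    """
    tags = list(initial_tags)
    for test, new_tag in rules:
        snapshot = list(tags)  # tags as they stood before this rule fired
        for i in range(len(words)):
            if test(words, snapshot, i):
                tags[i] = new_tag
    return tags


words = ["The", "can", "will", "rust"]
# Hypothetical initial guess, e.g. the most frequent tag for each word
initial_tags = ["art", "aux", "aux", "n"]

# Hypothetical rules of the form (test condition, tag)
rules = [
    # an auxiliary directly after an article is retagged as a noun
    (lambda w, t, i: i > 0 and t[i] == "aux" and t[i - 1] == "art", "n"),
    # a noun directly after an auxiliary is retagged as an intransitive verb
    (lambda w, t, i: i > 0 and t[i] == "n" and t[i - 1] == "aux", "vi"),
]

print(apply_rules(words, initial_tags, rules))
```

Rule order matters: the second rule sees the output of the first, which is why TBL produces a sequence of rules and not an unordered set.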

NLP Applications: Info Retrieval (IR) and Digital Libraries

- Information Retrieval (IR)
  - One role of learning: produce classifiers for documents (see [Sahami, 1999])
  - Query-based search engines (e.g., for WWW: AltaVista, Lycos, Yahoo)
  - Applications: bibliographic searches (citations, patent intelligence, etc.)
- Bayesian Classification: Integrating Supervised/Unsupervised Learning
  - Unsupervised learning: organize collections of documents at a “topical” level
  - e.g., AutoClass [Cheeseman et al., 1988]; self-organizing maps [Kohonen, 1995]
  - More on this topic (document clustering) soon
- Framework Extends Beyond Natural Language
  - Collections of images, audio, video, other media
  - Five Ss: Source, Stream, Structure, Scenario, Society
  - Book on IR [van Rijsbergen, 1979]: http://www.dcs.gla.ac.uk/Keith/Preface.html
- Recent Research
  - M. Sahami’s page (Bayesian IR): http://robotics.stanford.edu/users/sahami
  - Digital libraries (DL) resources: http://fox.cs.vt.edu