Lecture 2 Intelligent Agents Reading AIMA Ch 2

- Slides: 24

Lecture 2: Intelligent Agents

Reading: AIMA, Ch. 2

Rutgers CS 440, Fall 2003

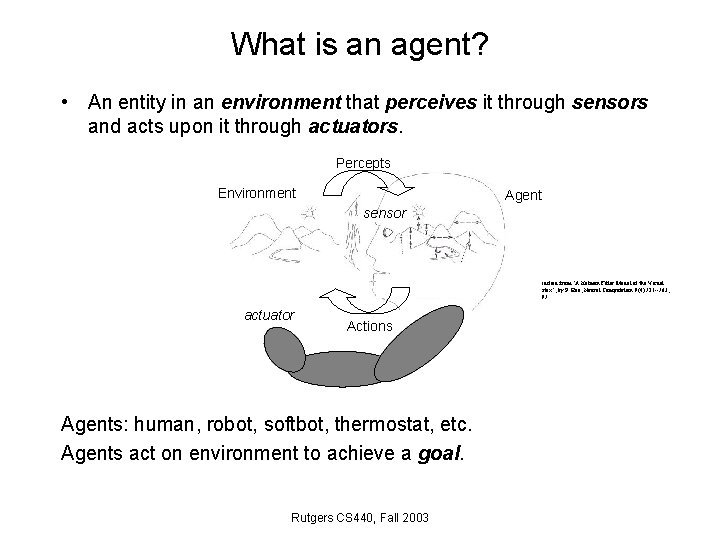

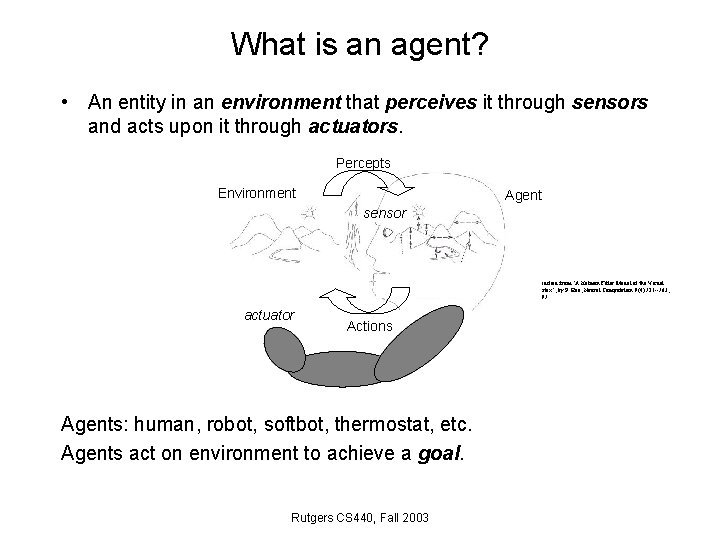

What is an agent?

- An entity in an environment that perceives it through sensors and acts upon it through actuators.
- Agents: human, robot, softbot, thermostat, etc.
- Agents act on the environment to achieve a goal.

Figure: the agent receives percepts from the environment through its sensors and performs actions on it through its actuators. Modified from "A Kalman Filter Model of the Visual Cortex", by P. Rao, Neural Computation 9(4): 721-763, 1997.

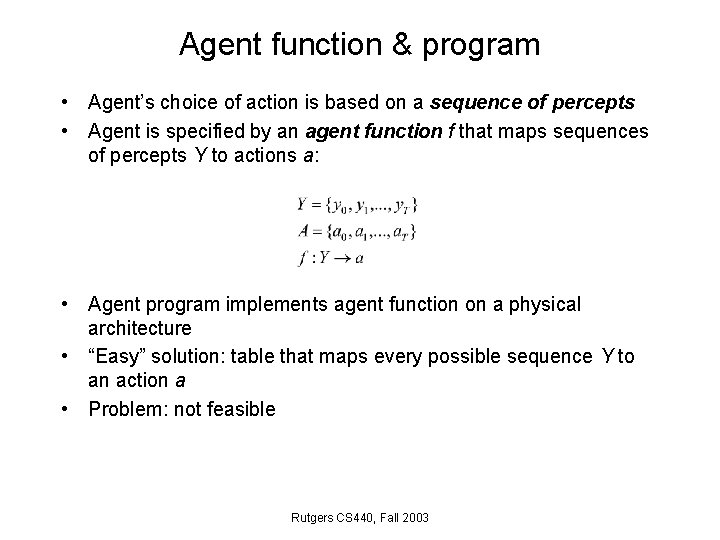

Agent function & program

- The agent’s choice of action is based on a sequence of percepts.
- The agent is specified by an agent function f that maps sequences of percepts Y to actions a: a = f(Y)
- The agent program implements the agent function on a physical architecture.
- “Easy” solution: a table that maps every possible sequence Y to an action a.
- Problem: not feasible, because the number of possible percept sequences grows exponentially with their length.
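To see why the table is infeasible, it helps to count its rows. The sketch below assumes a finite set of distinct percepts and a lifetime of a fixed number of steps; the function name `table_size` and the example numbers are illustrative and not taken from the lecture.

```python
def table_size(num_percepts: int, horizon: int) -> int:
    """Count the percept sequences of length 1..horizon.

    A sequence of length t can take num_percepts**t values, so a complete
    table needs the sum of num_percepts**t for t = 1..horizon rows.
    """
    return sum(num_percepts ** t for t in range(1, horizon + 1))


# Hypothetical example: 4 distinct percepts, lifetimes of 1, 5 and 20 steps.
for horizon in (1, 5, 20):
    print(horizon, table_size(4, horizon))
```

Even with only four distinct percepts, a 20-step lifetime already needs more than a trillion table entries.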

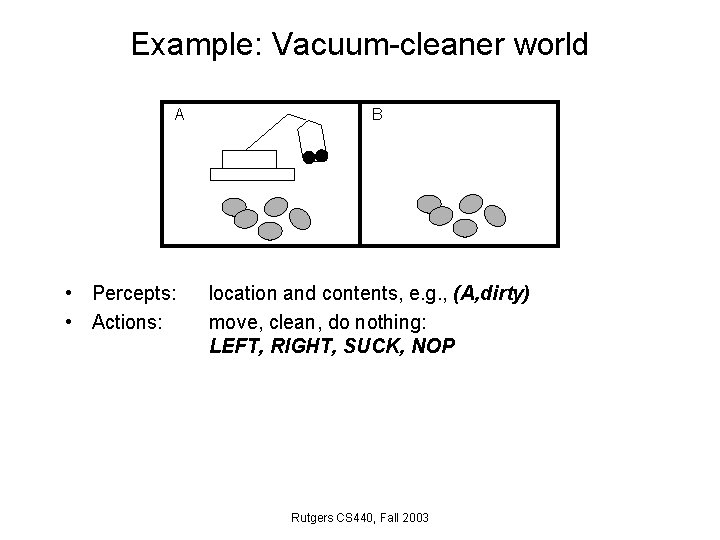

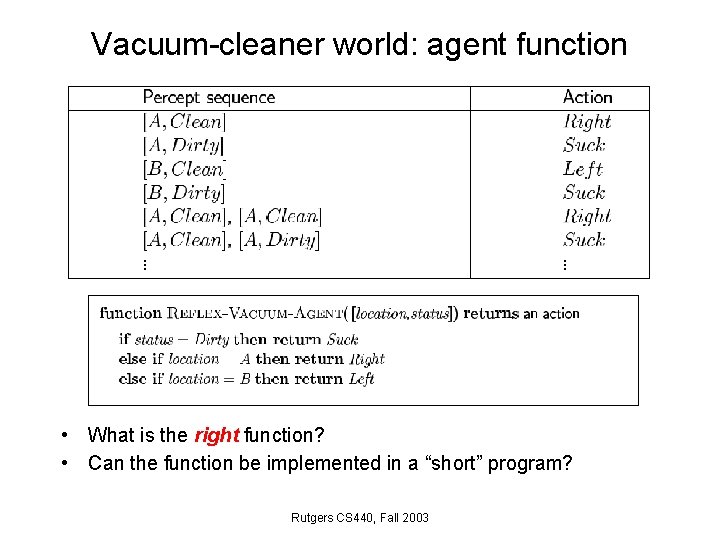

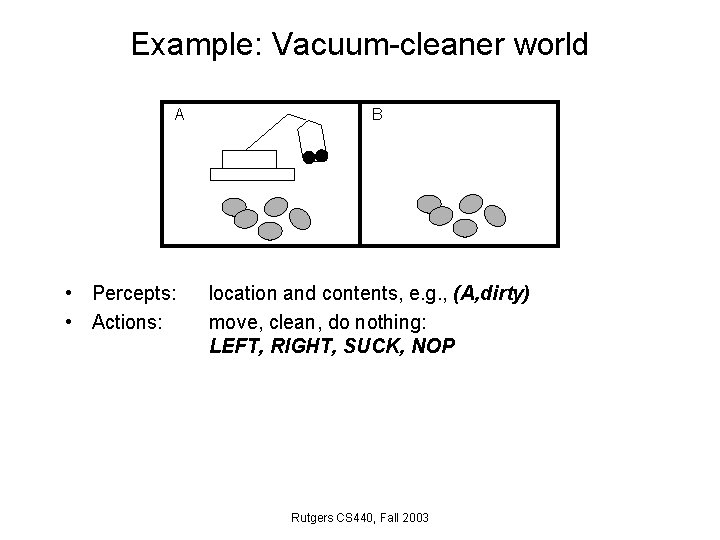

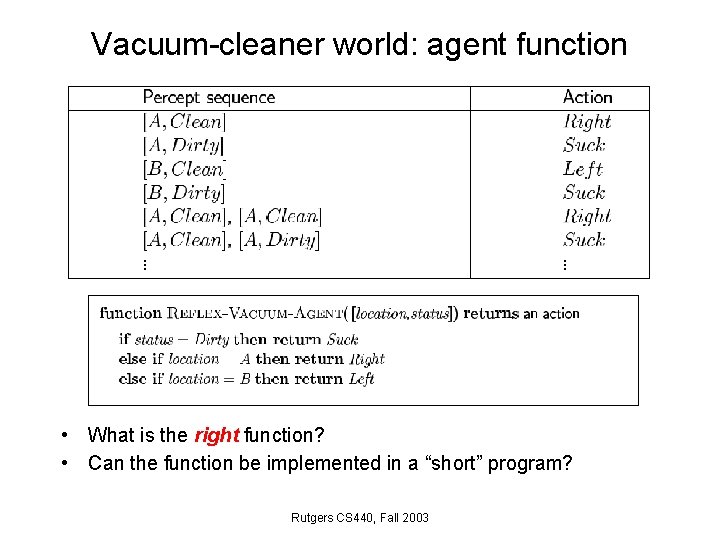

Example: Vacuum-cleaner world

- Two squares, A and B.
- Percepts: location and contents, e.g., (A, dirty)
- Actions: move, clean, do nothing: LEFT, RIGHT, SUCK, NOP
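A minimal sketch of this world as code may make the percepts and actions concrete. It assumes that A is the left square and B the right one, that actions always succeed, and that dirt never reappears; the names `percept` and `step` are hypothetical helpers, not part of the lecture.

```python
from typing import Dict, Tuple

# World state: the square the agent is on, plus the contents of each square.
World = Tuple[str, Dict[str, str]]


def percept(world: World) -> Tuple[str, str]:
    """Return (location, contents) for the agent's current square."""
    location, squares = world
    return location, squares[location]


def step(world: World, action: str) -> World:
    """Apply LEFT, RIGHT, SUCK, or NOP and return the new world state."""
    location, squares = world
    squares = dict(squares)  # copy so the caller's state is not mutated
    if action == "LEFT":
        location = "A"  # assumption: A is the leftmost square
    elif action == "RIGHT":
        location = "B"  # assumption: B is the rightmost square
    elif action == "SUCK":
        squares[location] = "clean"
    elif action != "NOP":
        raise ValueError(f"unknown action: {action}")
    return location, squares


world = ("A", {"A": "dirty", "B": "dirty"})
print(percept(world))  # ('A', 'dirty')
world = step(world, "SUCK")
world = step(world, "RIGHT")
print(percept(world))  # ('B', 'dirty')
```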

Vacuum-cleaner world: agent function

- What is the right function?
- Can the function be implemented in a “short” program?

The “right” agent function – rational behavior

- A rational agent is one that does the “right thing”: its function table is filled out correctly.
- What is the “right thing”?
- Define success through a performance measure, r.
- Vacuum-cleaner world, possible measures:
  - +1 point for each clean square in time T
  - +1 point for each clean square, -1 for each move
  - -1000 for more than k dirty squares
- Rational agent: an agent that selects an action that is expected to maximize the performance measure, given its percept sequence and its built-in knowledge.
- Ideal agent: maximizes actual performance, but needs to be omniscient. Impossible!
- Builds a model of the environment.
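The first two vacuum-world measures can be sketched as scoring functions over a run. This assumes one reading of the slide, namely that clean squares are counted at every time step up to T; the history format and the function names are hypothetical.

```python
from typing import Sequence, Tuple

# One entry per time step: (action taken, number of clean squares afterwards).
History = Sequence[Tuple[str, int]]


def clean_square_score(history: History) -> int:
    """+1 point for each clean square at each time step."""
    return sum(clean for _, clean in history)


def clean_minus_moves_score(history: History) -> int:
    """+1 point per clean square per step, -1 for each LEFT or RIGHT move."""
    moves = sum(1 for action, _ in history if action in ("LEFT", "RIGHT"))
    return clean_square_score(history) - moves


history = [("SUCK", 1), ("RIGHT", 1), ("SUCK", 2), ("NOP", 2)]
print(clean_square_score(history))       # 6
print(clean_minus_moves_score(history))  # 5
```

The same behavior scores differently under the two measures, so the choice of r determines which agent counts as rational.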

Properties of a rational agent

- Maximizes expected performance
- Gathers information – takes actions to modify future percepts
- Explores – in unknown environments
- Learns – from what it has perceived so far (counterexamples: dung beetle, sphex wasp)
- Autonomous – increases its knowledge by learning

Task environment

- To design a rational agent we need to specify a task environment: the problem to which the agent is a solution.
- P.E.A.S. = Performance measure, Environment, Actuators, Sensors
- Example: automated taxi driver
  - Performance measure: safe, fast, legal, comfortable, maximize profits
  - Environment: roads, other traffic, pedestrians, customers
  - Actuators: steering, accelerator, brake, signal, horn
  - Sensors: cameras, sonar, speedometer, GPS
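A PEAS description is just a four-field record, which the sketch below writes down for the taxi example. The class name `TaskEnvironment` and its field names are assumptions made for illustration; the field values are copied from the slide.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TaskEnvironment:
    """A PEAS description: Performance measure, Environment, Actuators, Sensors."""
    performance_measure: List[str]
    environment: List[str]
    actuators: List[str]
    sensors: List[str]


taxi = TaskEnvironment(
    performance_measure=["safe", "fast", "legal", "comfortable", "maximize profits"],
    environment=["roads", "other traffic", "pedestrians", "customers"],
    actuators=["steering", "accelerator", "brake", "signal", "horn"],
    sensors=["cameras", "sonar", "speedometer", "GPS"],
)
print(taxi.sensors)
```

Writing the four fields out explicitly is a quick check that nothing about the task has been left unspecified before the agent itself is designed.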

More PEAS examples

- College test-taker
- Internet shopping agent
- Mars lander
- The president
- …

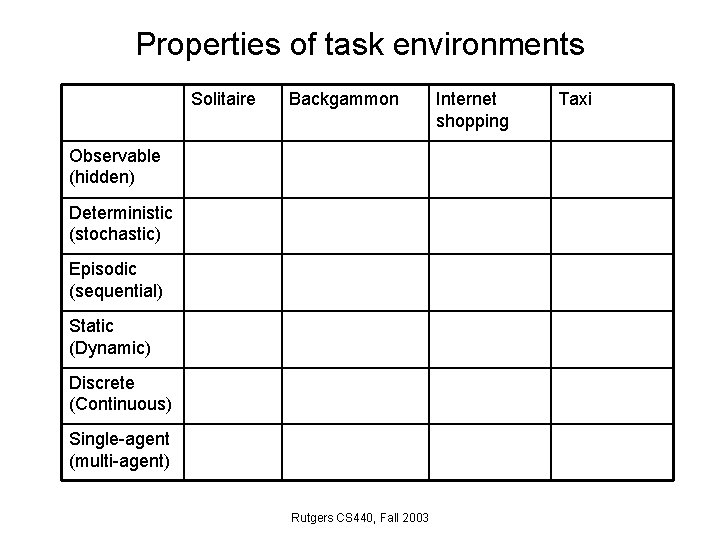

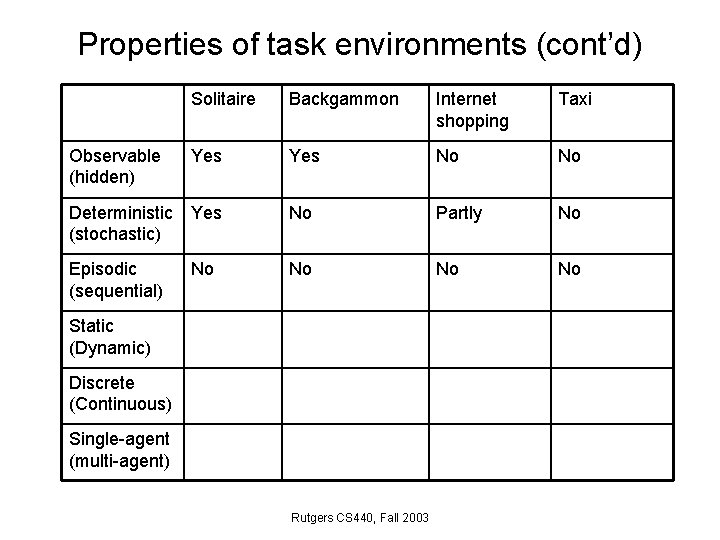

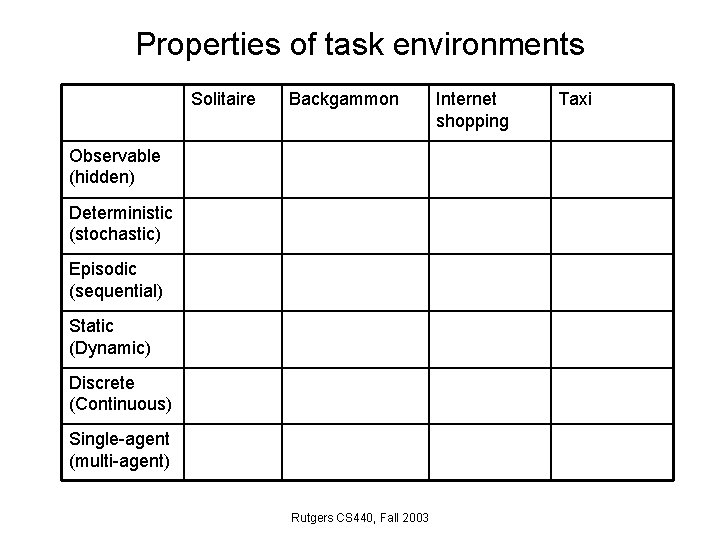

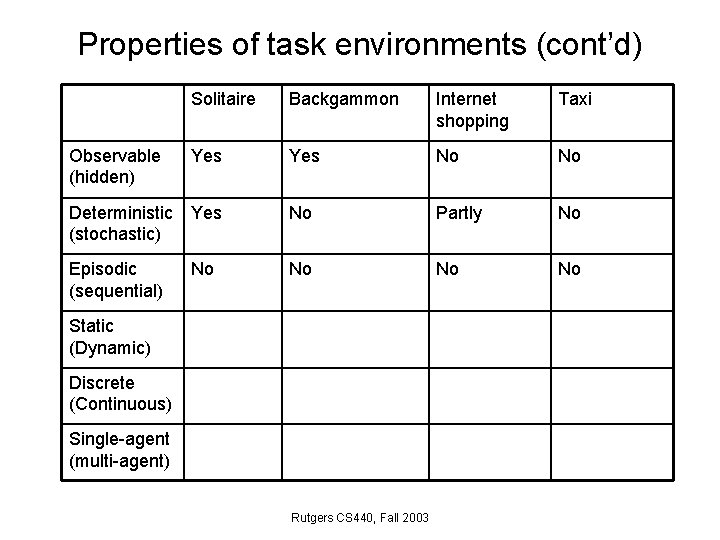

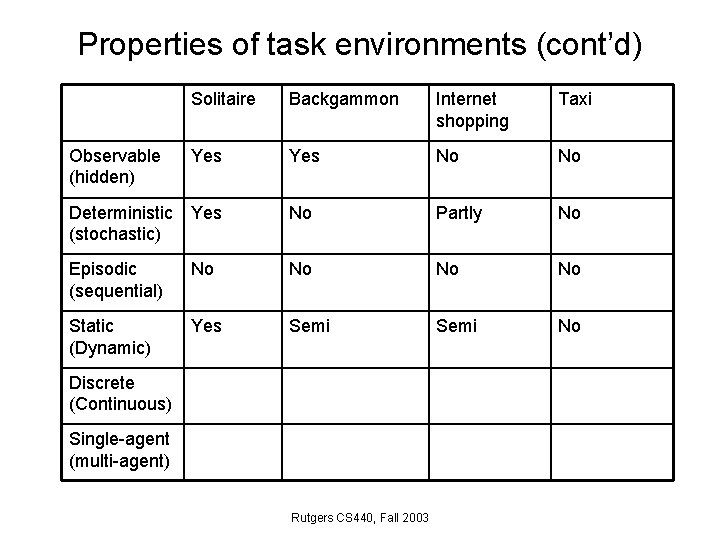

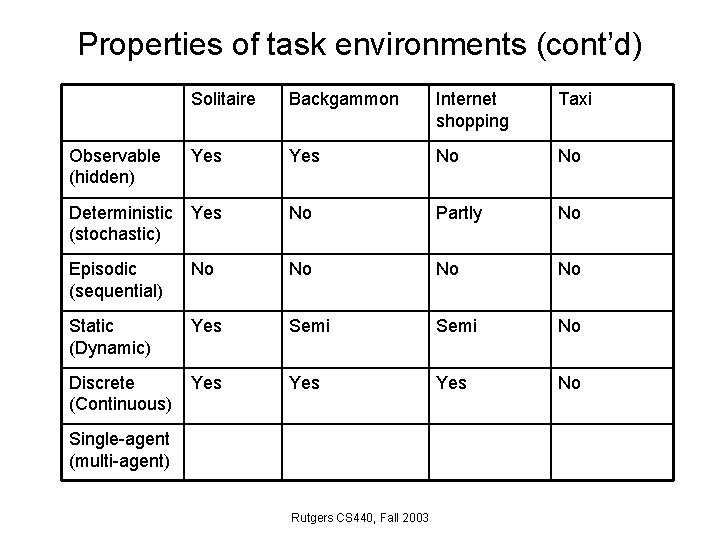

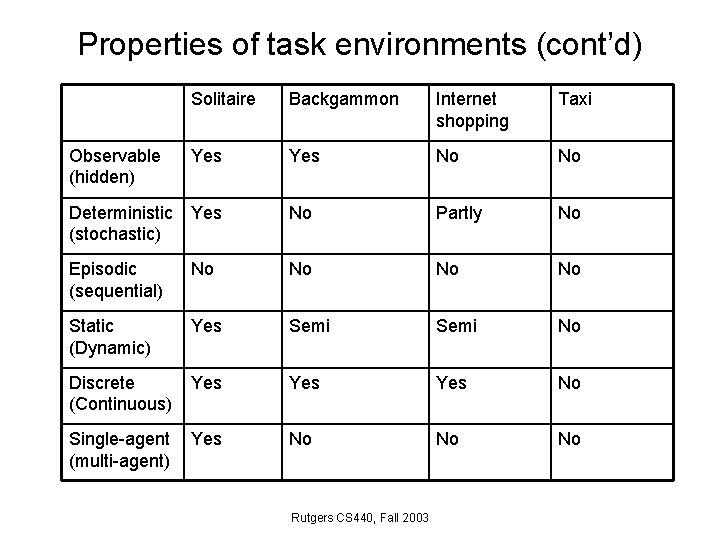

Properties of task environments

- Observable (hidden)
- Deterministic (stochastic)
- Episodic (sequential)
- Static (dynamic)
- Discrete (continuous)
- Single-agent (multi-agent)

Example environments: Solitaire, Backgammon, Internet shopping, Taxi

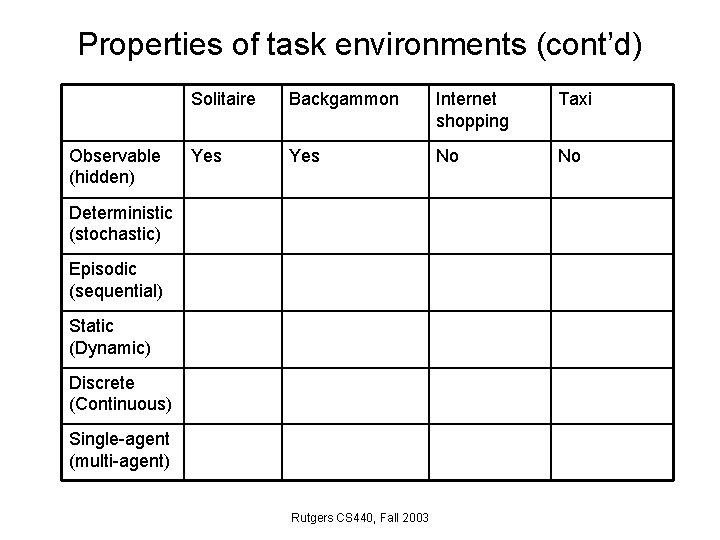

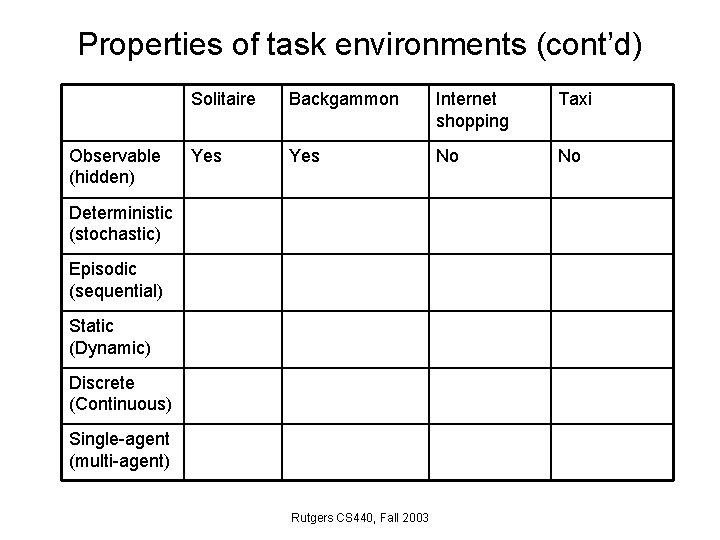



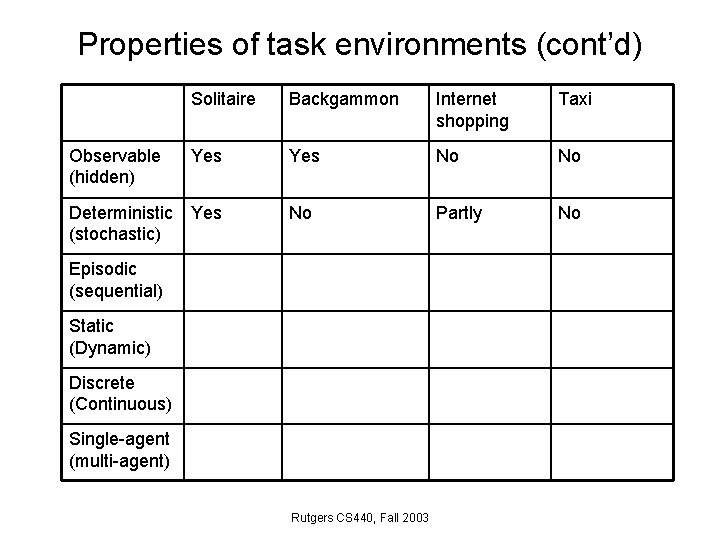

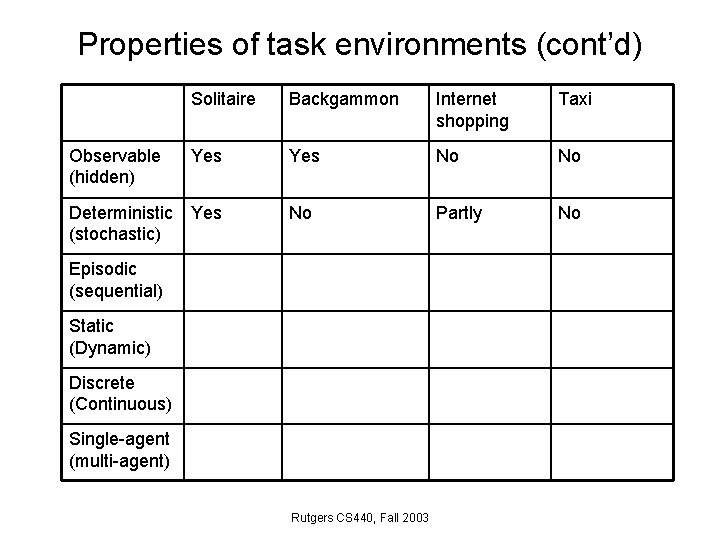


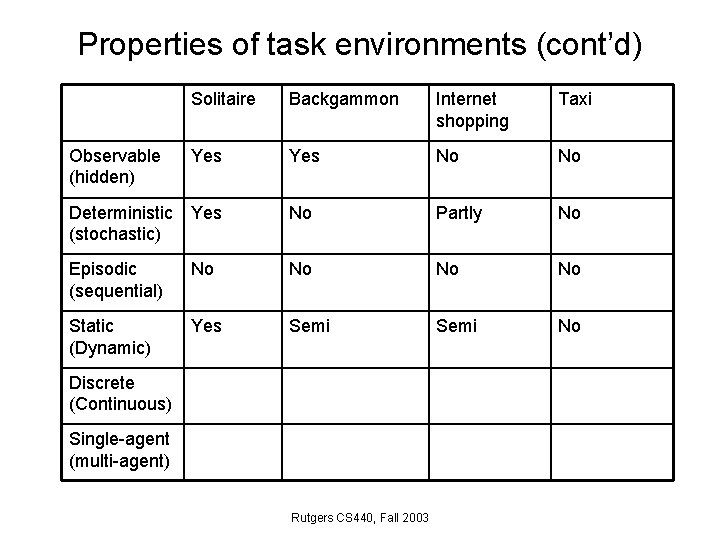


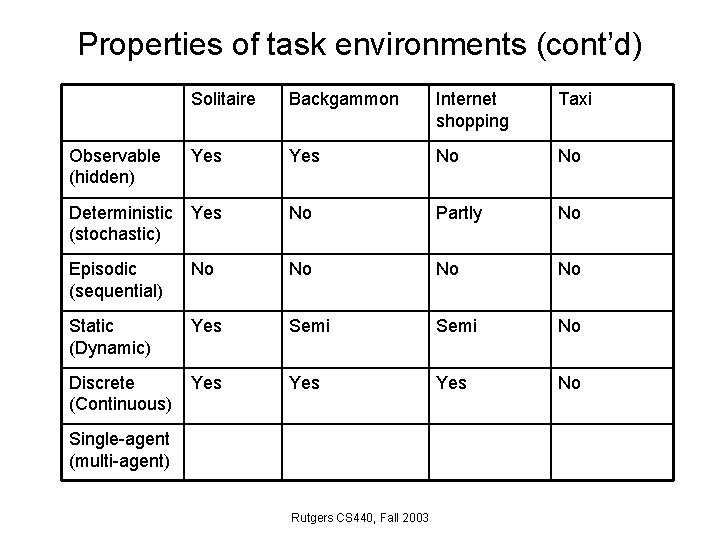


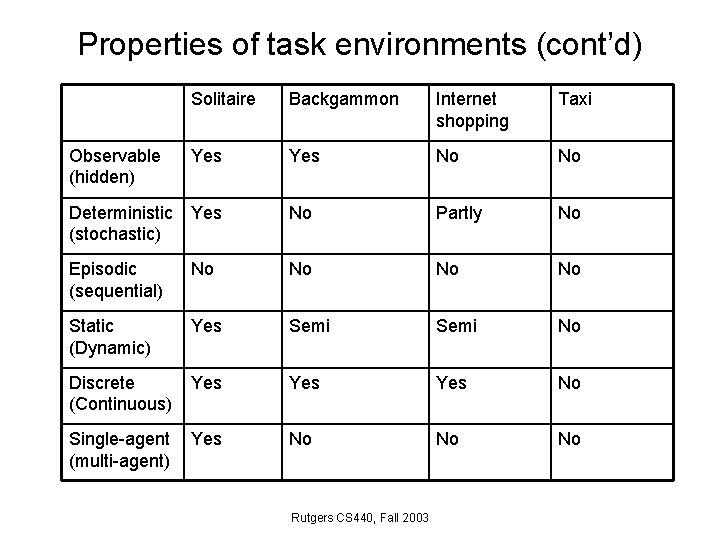

Properties of task environments (cont’d)

Example environments, in column order: Solitaire, Backgammon, Internet shopping, Taxi

- Observable (hidden): Yes, No, No
- Deterministic (stochastic): Yes, No, Partly, No
- Episodic (sequential): No, No
- Static (dynamic): Yes, Semi, No
- Discrete (continuous): Yes, Yes, No
- Single-agent (multi-agent): Yes, No, No, No

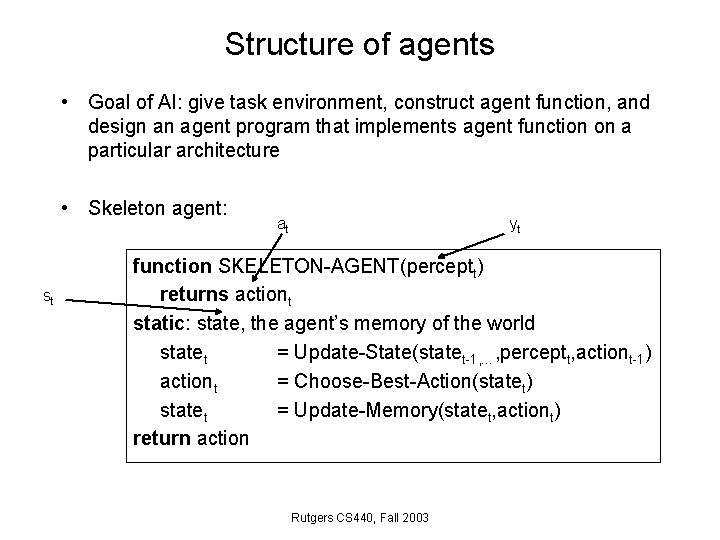

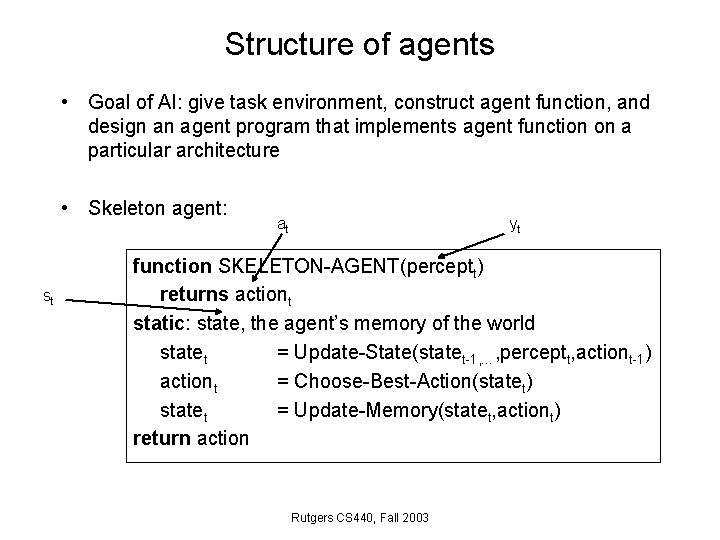

Structure of agents

- Goal of AI: given a task environment, construct an agent function, and design an agent program that implements the agent function on a particular architecture.

Skeleton agent, with state s_t, action a_t, and percept y_t:

    function SKELETON-AGENT(percept_t) returns action_t
      static: state, the agent’s memory of the world

      state_t = Update-State(state_{t-1}, …, percept_t, action_{t-1})
      action_t = Choose-Best-Action(state_t)
      state_t = Update-Memory(state_t, action_t)
      return action_t
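The skeleton pseudocode above can be sketched in Python as a class that holds the state between calls. This is only one possible rendering: the three callables are supplied by the user, the arguments elided by "…" in the pseudocode are not modelled, and the instantiation at the bottom is a hypothetical example.

```python
from typing import Any, Callable, Optional


class SkeletonAgent:
    """Runs the update-state / choose-action / update-memory cycle once per percept."""

    def __init__(
        self,
        update_state: Callable[[Any, Any, Any], Any],
        choose_best_action: Callable[[Any], Any],
        update_memory: Callable[[Any, Any], Any],
        initial_state: Any = None,
    ) -> None:
        self.state = initial_state
        self.last_action: Optional[Any] = None
        self._update_state = update_state
        self._choose_best_action = choose_best_action
        self._update_memory = update_memory

    def __call__(self, percept: Any) -> Any:
        # state_t = Update-State(state_{t-1}, percept_t, action_{t-1})
        self.state = self._update_state(self.state, percept, self.last_action)
        # action_t = Choose-Best-Action(state_t)
        action = self._choose_best_action(self.state)
        # state_t = Update-Memory(state_t, action_t)
        self.state = self._update_memory(self.state, action)
        self.last_action = action
        return action


# Hypothetical instantiation: the state is the list of percepts seen so far.
agent = SkeletonAgent(
    update_state=lambda state, percept, last_action: (state or []) + [percept],
    choose_best_action=lambda state: "SUCK" if state[-1][1] == "dirty" else "NOP",
    update_memory=lambda state, action: state,
)
print(agent(("A", "dirty")))  # SUCK
print(agent(("A", "clean")))  # NOP
```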

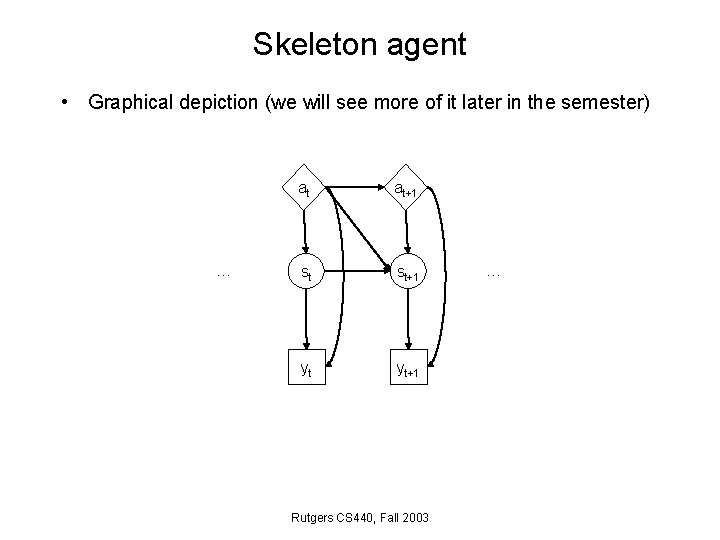

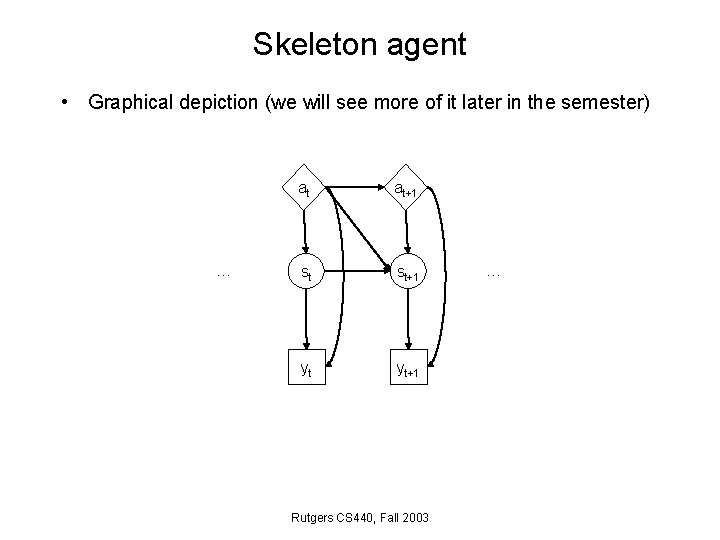

Skeleton agent

- Graphical depiction (we will see more of it later in the semester)

Figure: actions a_t, a_{t+1}, states s_t, s_{t+1}, and percepts y_t, y_{t+1}, unrolled over time.

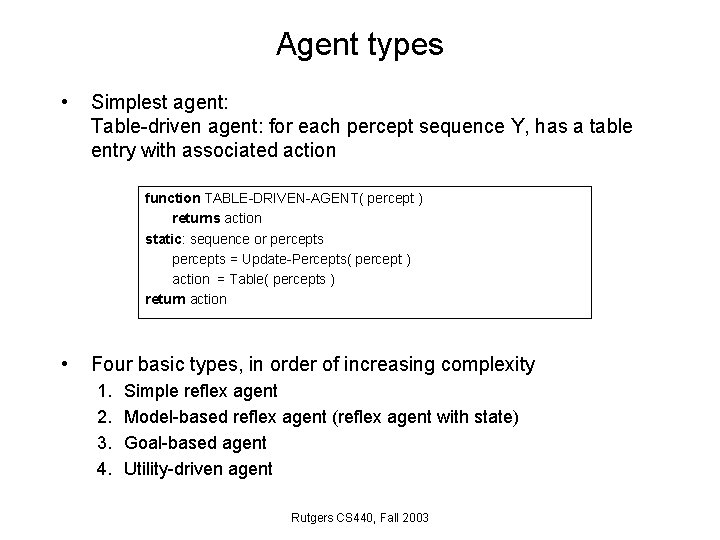

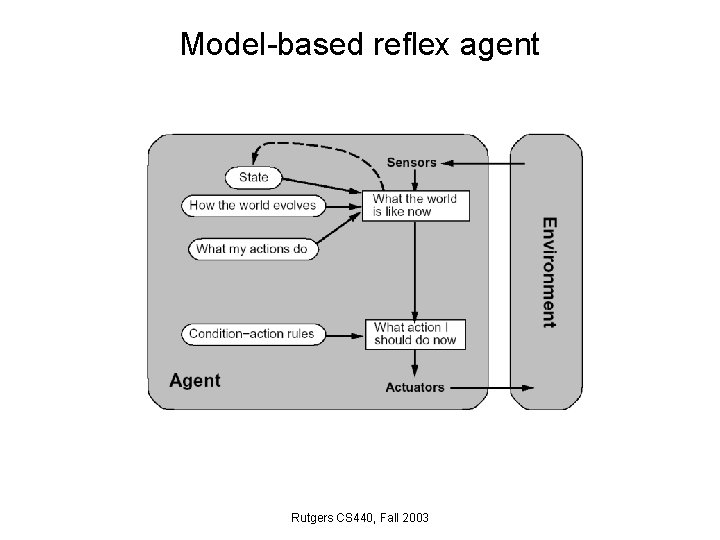

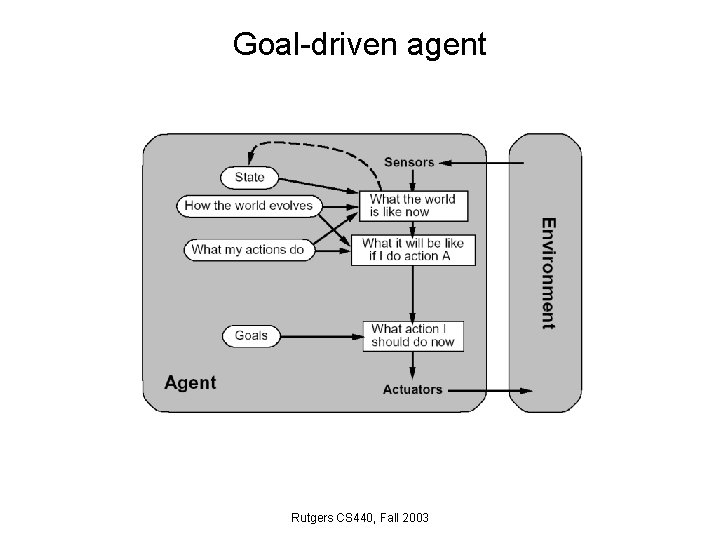

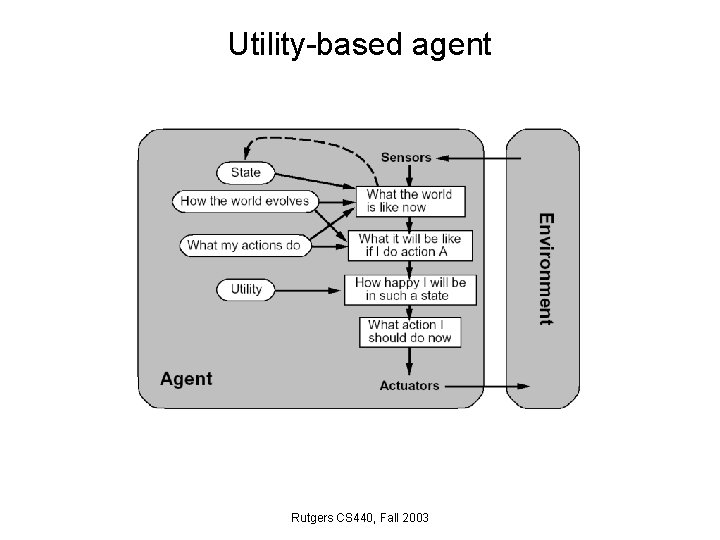

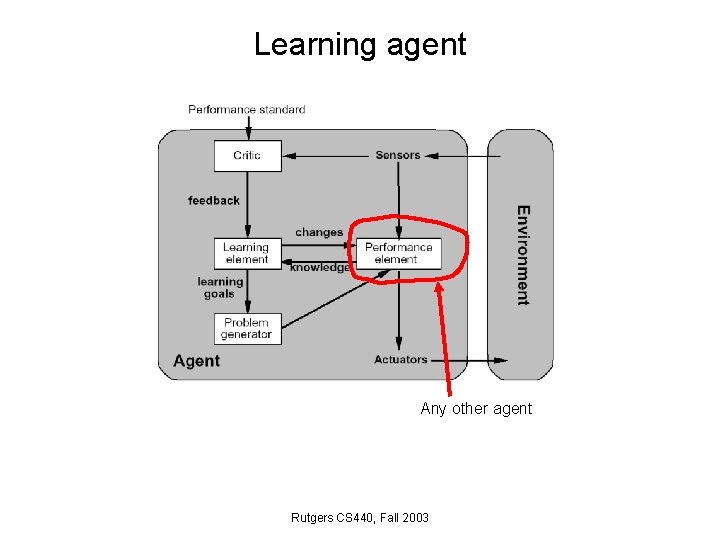

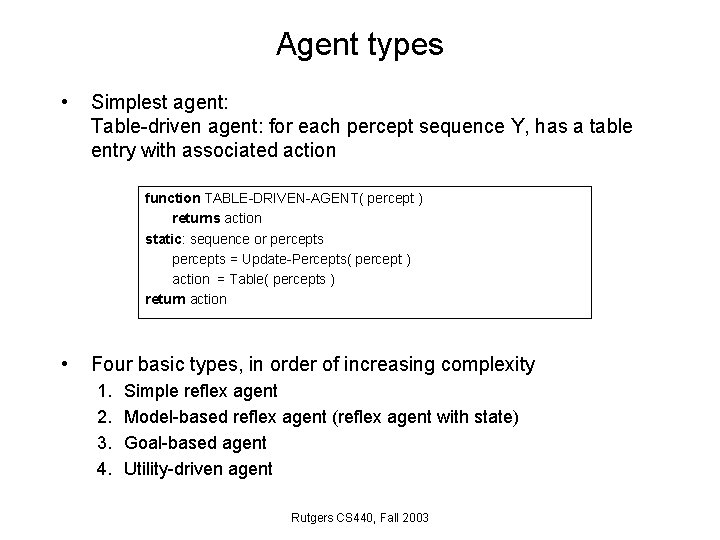

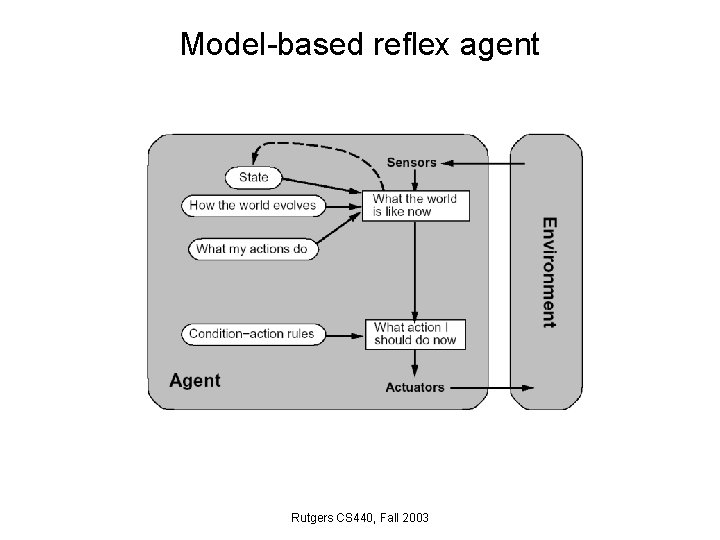

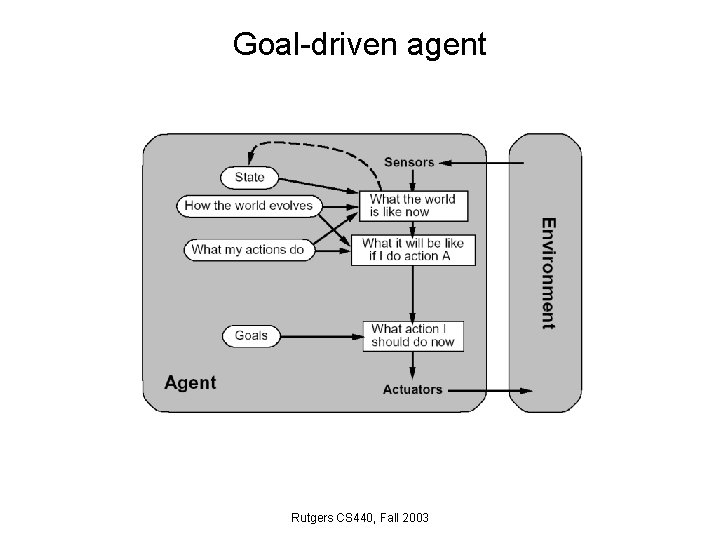

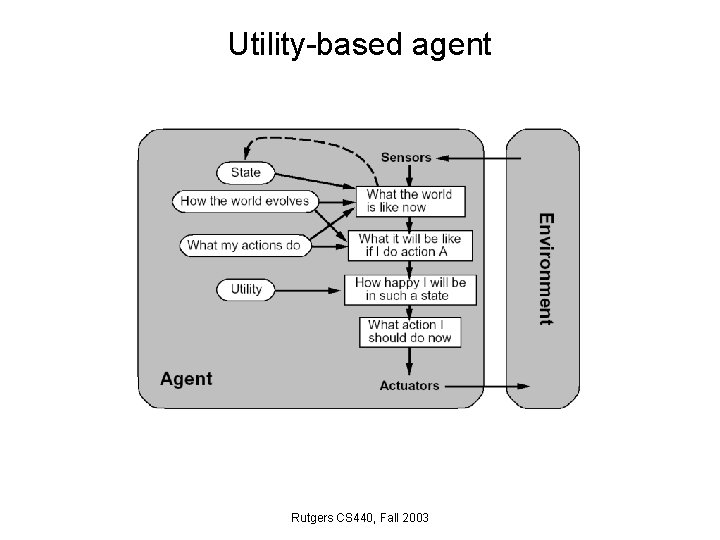

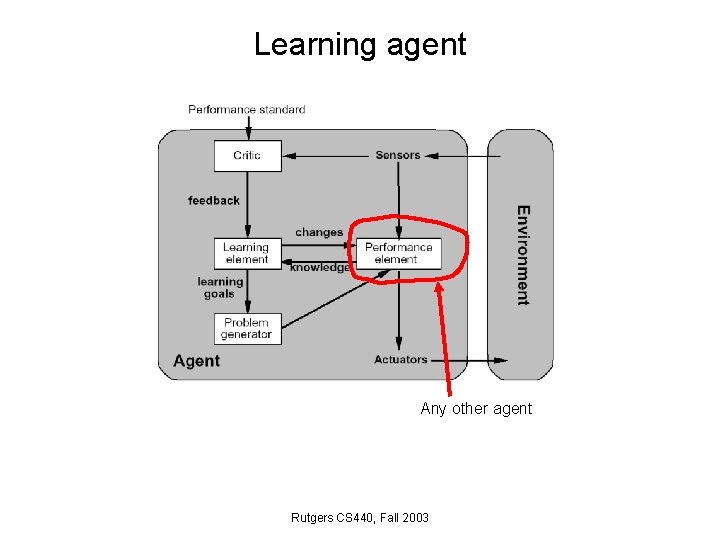

Agent types

Simplest agent: the table-driven agent. For each percept sequence Y, it has a table entry with the associated action.

    function TABLE-DRIVEN-AGENT(percept) returns action
      static: percepts, a sequence of percepts

      percepts = Update-Percepts(percept)
      action = Table(percepts)
      return action

Four basic types, in order of increasing complexity:

1. Simple reflex agent
2. Model-based reflex agent (reflex agent with state)
3. Goal-based agent
4. Utility-based agent
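A minimal Python sketch of the table-driven agent, assuming percepts are (location, status) tuples from the vacuum-cleaner world and the table is a dictionary keyed by the whole percept sequence. The factory name, the partial table, and the NOP fallback for missing entries are all assumptions made for illustration.

```python
from typing import Callable, Dict, List, Tuple

Percept = Tuple[str, str]
Table = Dict[Tuple[Percept, ...], str]


def make_table_driven_agent(table: Table) -> Callable[[Percept], str]:
    """Return an agent that looks up its full percept history in `table`."""
    percepts: List[Percept] = []  # persists across calls, like `static`

    def agent(percept: Percept) -> str:
        percepts.append(percept)
        # Assumption: fall back to NOP when the sequence has no table entry.
        return table.get(tuple(percepts), "NOP")

    return agent


# A hypothetical, deliberately partial table for the two-square world.
table: Table = {
    (("A", "dirty"),): "SUCK",
    (("A", "clean"),): "RIGHT",
    (("A", "dirty"), ("A", "clean")): "RIGHT",
    (("A", "dirty"), ("A", "clean"), ("B", "dirty")): "SUCK",
}
agent = make_table_driven_agent(table)
print(agent(("A", "dirty")))  # SUCK
print(agent(("A", "clean")))  # RIGHT
print(agent(("B", "dirty")))  # SUCK
```

Every additional time step needs its own set of keys, which is why the table can only ever be partial in practice.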

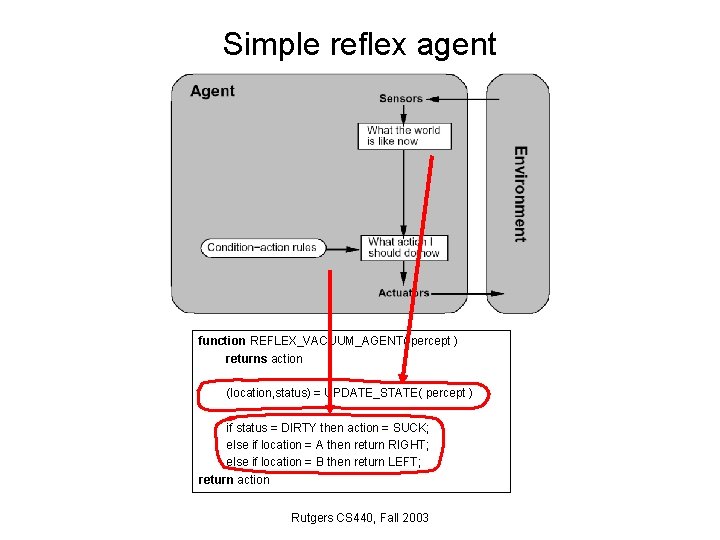

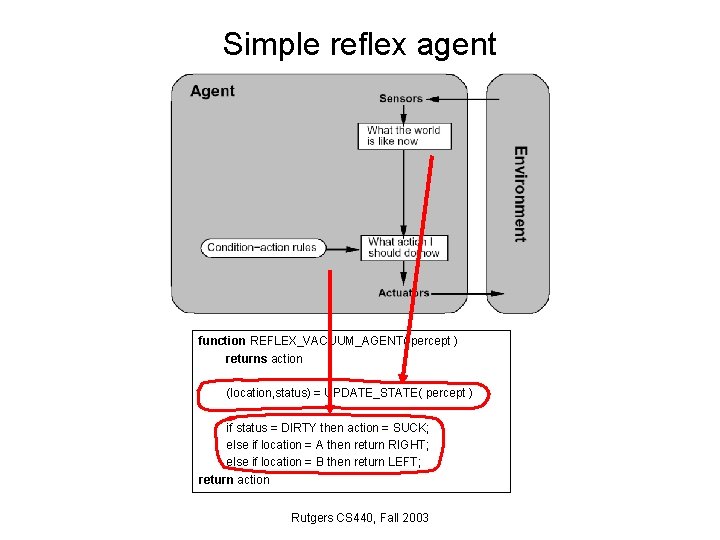

Simple reflex agent

    function REFLEX_VACUUM_AGENT(percept) returns action
      (location, status) = UPDATE_STATE(percept)
      if status = DIRTY then action = SUCK
      else if location = A then action = RIGHT
      else if location = B then action = LEFT
      return action
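The pseudocode above translates almost line for line into Python. The sketch assumes percepts are (location, status) tuples with lowercase status strings; the NOP fallback for an unrecognized location is an addition that the pseudocode does not specify.

```python
from typing import Tuple


def reflex_vacuum_agent(percept: Tuple[str, str]) -> str:
    """Choose an action from the current percept only; no memory is kept."""
    location, status = percept
    if status == "dirty":
        return "SUCK"
    if location == "A":
        return "RIGHT"
    if location == "B":
        return "LEFT"
    return "NOP"  # assumption: do nothing on an unrecognized location


for percept in [("A", "dirty"), ("A", "clean"), ("B", "dirty"), ("B", "clean")]:
    print(percept, "->", reflex_vacuum_agent(percept))
```

Because the decision depends only on the current percept, four condition-action rules cover every situation the agent can encounter in this world.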

Model-based reflex agent

Goal-based agent

Utility-based agent

Learning agent

Any other agent