
Large-Scale Iterative Data Processing
CS 525 Big Data Analytics
Paper: “HaLoop: Efficient Iterative Data Processing on Large Clusters” by Yingyi Bu, UC Irvine; Bill Howe, UW; Magda Balazinska, UW; and Michael Ernst, UW. VLDB 2010, Singapore.

Observation
- Observation: MapReduce has proven successful as a common runtime for non-recursive declarative languages
  - HIVE (SQL-like language)
  - Pig (relational algebra with nested types)
- Observation: Loops and iteration are everywhere
  - Graphs, clustering, mining
- Problem: MapReduce can’t express recursion/iteration
- Observation: Many roll their own loops
  - Iteration managed by a hard-coded script outside MapReduce

Bill Howe, UW 10/26/2021

![Twister – PageRank driver loop [Ekanayake HPDC 2010]](http://slidetodoc.com/presentation_image_h2/ebd43d72589f7201a81cd886c9471124/image-3.jpg)

Twister – PageRank [Ekanayake HPDC 2010]

    while (!complete) {
        // run MR: start the pagerank map reduce process
        monitor = driver.runMapReduceBCast(new BytesValue(tmpCompressedDvd.getBytes()));
        monitor.monitorTillCompletion();

        // get the result of process
        newCompressedDvd = ((PageRankCombiner) driver.getCurrentCombiner()).getResults();

        // decompress the compressed pagerank values
        // (this part is O(N) in the size of the graph)
        newDvd = decompress(newCompressedDvd);
        tmpDvd = decompress(tmpCompressedDvd);

        // get the difference between new and old pagerank values
        totalError = getError(tmpDvd, newDvd);

        // termination condition
        if (totalError < tolerance) {
            complete = true;
        }
        tmpCompressedDvd = newCompressedDvd;
    }

Key idea
- When the loop output is large…
  - transitive closure
  - connected components
  - PageRank (with a convergence test as the termination condition)
- …need a distributed fixpoint operator
  - typically implemented as yet another MapReduce job, on every iteration

Fixpoint
- A fixpoint of a function f is a value x such that f(x) = x
- Fixpoint queries can be expressed with relational algebra plus a fixpoint operator
- Map - Reduce - Fixpoint
  - Hypothesis: a model for all recursive queries
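To make the definition concrete, here is a minimal sketch of reaching a fixpoint by applying a function repeatedly until its output stops changing. The class `FixpointSketch`, the helpers `iterate` and `expand`, the tiny edge map, and the iteration cap are all illustrative assumptions; none of them come from HaLoop or the paper.

```java
import java.util.HashSet;
import java.util.Map;
import java.util.Set;
import java.util.function.UnaryOperator;

final class FixpointSketch {
    /** Applies f starting from x0 until f(x).equals(x) or maxIterations is reached. */
    static <T> T iterate(UnaryOperator<T> f, T x0, int maxIterations) {
        T x = x0;
        for (int i = 0; i < maxIterations; i++) {
            T next = f.apply(x);
            if (next.equals(x)) {
                return x; // f(x) = x, so x is a fixpoint
            }
            x = next;
        }
        return x; // cap reached; the result may not be a fixpoint
    }

    /** Hypothetical step function: add every node one edge away from the current set. */
    static Set<String> expand(Set<String> reached, Map<String, Set<String>> edges) {
        Set<String> next = new HashSet<>(reached);
        for (String node : reached) {
            next.addAll(edges.getOrDefault(node, Set.of()));
        }
        return next;
    }

    public static void main(String[] args) {
        // Assumed toy graph: a -> b -> c
        Map<String, Set<String>> edges = Map.of("a", Set.of("b"), "b", Set.of("c"));
        Set<String> reachable = iterate(s -> expand(s, edges), Set.of("a"), 100);
        System.out.println(reachable); // nodes reachable from "a"
    }
}
```

The equality test inside `iterate` plays the role of the fixpoint operator; in a distributed setting that comparison is the part that becomes expensive when the loop output is large.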

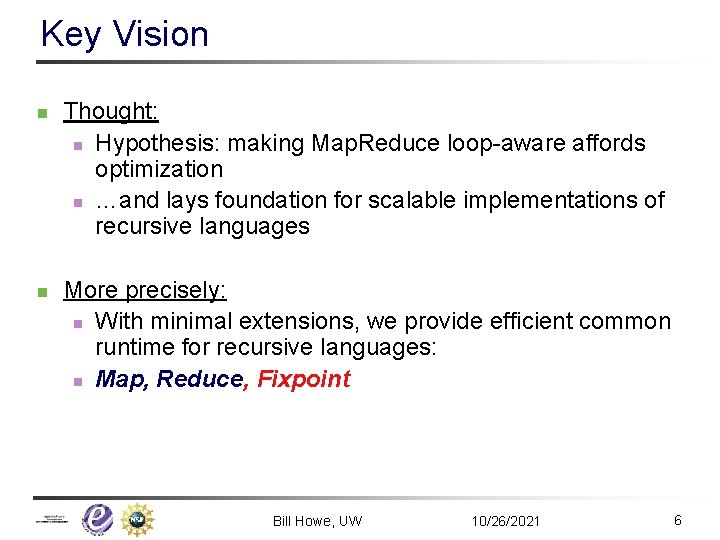

Key Vision
- Thought:
  - Hypothesis: making MapReduce loop-aware affords optimization
  - …and lays a foundation for scalable implementations of recursive languages
- More precisely:
  - With minimal extensions, we provide an efficient common runtime for recursive languages:
    - Map, Reduce, Fixpoint

Three Examples With Iteration

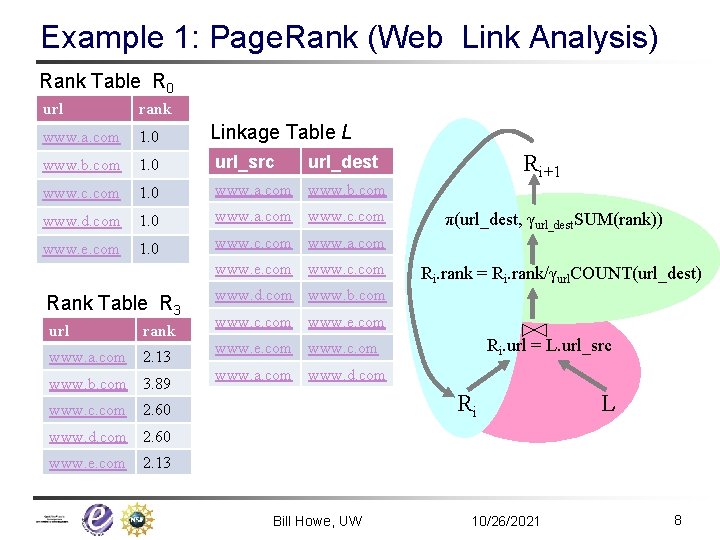

Example 1: PageRank (Web Link Analysis)

Rank Table R0

| url | rank |
|---|---|
| www.a.com | 1.0 |
| www.b.com | 1.0 |
| www.c.com | 1.0 |
| www.d.com | 1.0 |
| www.e.com | 1.0 |

Linkage Table L

| url_src | url_dest |
|---|---|
| www.a.com | www.b.com |
| www.a.com | www.c.com |
| www.c.com | www.a.com |
| www.e.com | www.d.com |
| www.d.com | www.b.com |
| www.c.com | www.e.com |
| www.e.com | www.c.com |
| www.a.com | www.d.com |

Loop body computing Ri+1 from Ri and L (query plan, bottom to top):
1. Join Ri and L on Ri.url = L.url_src
2. Ri.rank = Ri.rank / γurl COUNT(url_dest)
3. π(url_dest, γurl_dest SUM(rank))

Rank Table R3

| url | rank |
|---|---|
| www.a.com | 2.13 |
| www.b.com | 3.89 |
| www.c.com | 2.60 |
| www.d.com | 2.60 |
| www.e.com | 2.13 |
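The loop body above (join on url = url_src, divide each rank by the source's out-degree, then sum per url_dest) can be sketched in plain Java as follows. This is a single-process illustration, not a MapReduce job; the class `PageRankStepSketch` and its `step` method are hypothetical names. Any damping or normalization behind the R3 numbers is not shown on the slide and is left out, so this sketch should not be expected to reproduce those values.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class PageRankStepSketch {
    /**
     * One pass of the loop body: join ranks with links on url = url_src,
     * divide by out-degree, and sum the contributions per url_dest.
     * Each link is a two-element array {url_src, url_dest}.
     */
    static Map<String, Double> step(Map<String, Double> ranks, List<String[]> links) {
        // COUNT(url_dest) grouped by url_src
        Map<String, Integer> outDegree = new HashMap<>();
        for (String[] link : links) {
            outDegree.merge(link[0], 1, Integer::sum);
        }
        // SUM(rank / outDegree) grouped by url_dest
        Map<String, Double> next = new HashMap<>();
        for (String[] link : links) {
            Double rank = ranks.get(link[0]);
            if (rank == null) {
                continue; // no rank row for this source: the join produces nothing
            }
            next.merge(link[1], rank / outDegree.get(link[0]), Double::sum);
        }
        return next;
    }

    public static void main(String[] args) {
        // Assumed toy input, smaller than the tables above.
        Map<String, Double> r0 = Map.of("a", 1.0, "b", 1.0);
        List<String[]> links = List.of(
                new String[] {"a", "b"}, new String[] {"a", "c"}, new String[] {"b", "c"});
        System.out.println(step(r0, links));
    }
}
```

Note that `links` is read in full on every call to `step` even though it never changes, which is the inefficiency the following slides point out.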

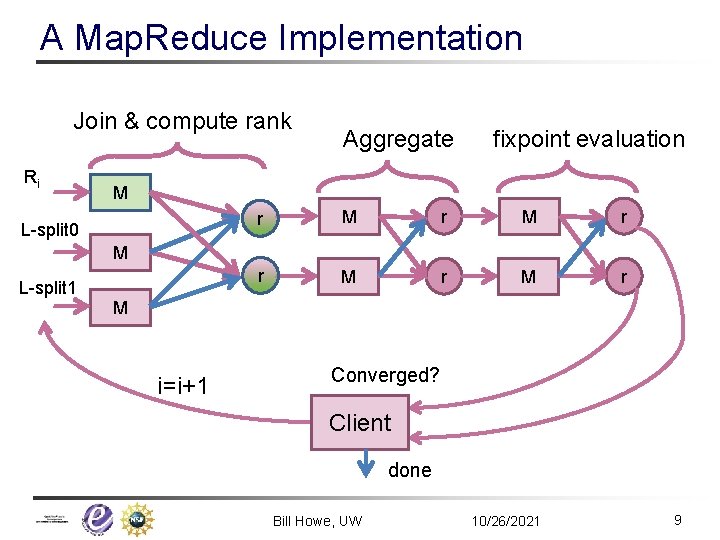

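A MapReduce Implementation

[Diagram: per-iteration dataflow in three MapReduce steps (M = map, r = reduce): (1) join Ri with L-split0 and L-split1 and compute rank, (2) aggregate, (3) fixpoint evaluation. The client then checks "Converged?": if not, i = i + 1 and the loop repeats; otherwise done.]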

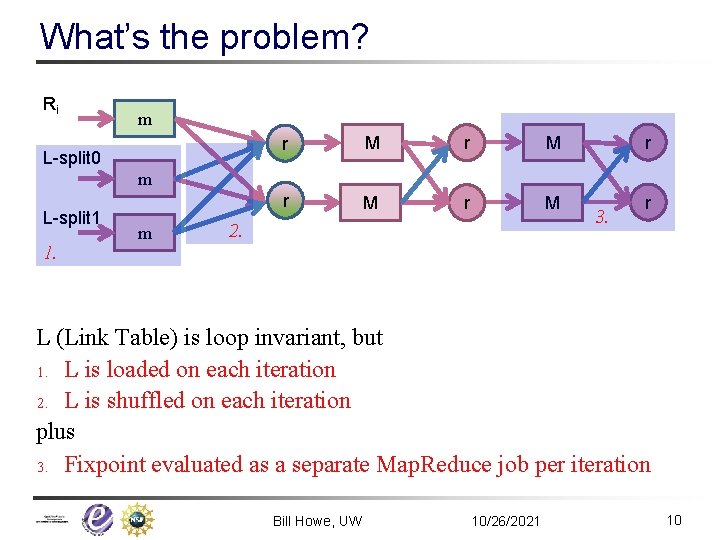

What’s the problem?

[Diagram: the same dataflow (Ri, L-split0, L-split1), with problem spots 1 to 3 marked.]

L (Link Table) is loop invariant, but
1. L is loaded on each iteration
2. L is shuffled on each iteration

plus

3. Fixpoint is evaluated as a separate MapReduce job per iteration

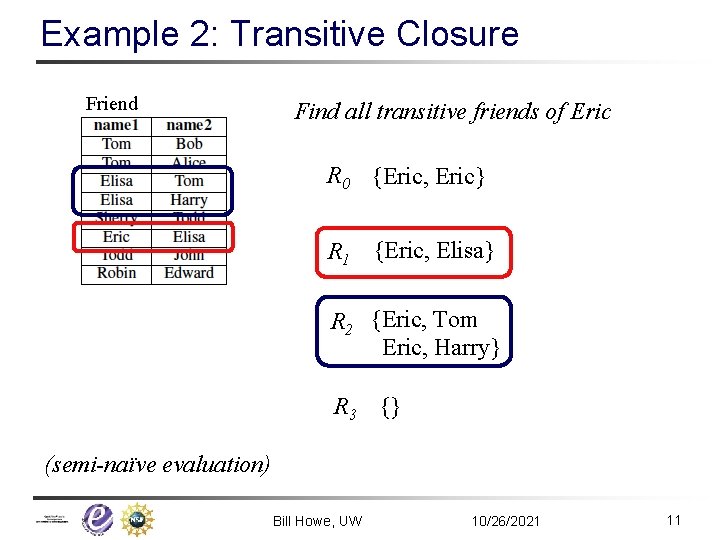

Example 2: Transitive Closure

Find all transitive friends of Eric, over the Friend relation (semi-naïve evaluation):
- R0: {Eric, Eric}
- R1: {Eric, Elisa}
- R2: {Eric, Tom; Eric, Harry}
- R3: {}
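Semi-naïve evaluation joins only the tuples discovered in the previous round with the Friend relation, then drops anything already seen. A minimal single-process sketch follows; the class `SemiNaiveClosureSketch`, the method `deltas`, and the Friend edges in `main` are assumptions chosen to be consistent with the R0 to R3 sets listed above (the actual graph is only in the slide image).

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

final class SemiNaiveClosureSketch {
    /** Returns the per-iteration deltas R1, R2, ... for start, ending with the empty delta. */
    static List<Set<String>> deltas(String start, Map<String, Set<String>> friend) {
        Set<String> seen = new HashSet<>(Set.of(start)); // R0 contains only start
        Set<String> delta = Set.of(start);
        List<Set<String>> result = new ArrayList<>();
        while (!delta.isEmpty()) {
            Set<String> next = new TreeSet<>();
            for (String person : delta) {
                // Join step: expand only the newest generation.
                next.addAll(friend.getOrDefault(person, Set.of()));
            }
            next.removeAll(seen); // dupe-elim: remove the ones already seen
            seen.addAll(next);
            result.add(next);
            delta = next;         // an empty delta ends the loop
        }
        return result;
    }

    public static void main(String[] args) {
        // Assumed edges: Eric -> Elisa, Elisa -> Tom, Elisa -> Harry
        Map<String, Set<String>> friend = Map.of(
                "Eric", Set.of("Elisa"),
                "Elisa", Set.of("Tom", "Harry"));
        System.out.println(deltas("Eric", friend));
    }
}
```

The `friend` map is consulted on every round but never modified, which is what makes it loop invariant.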

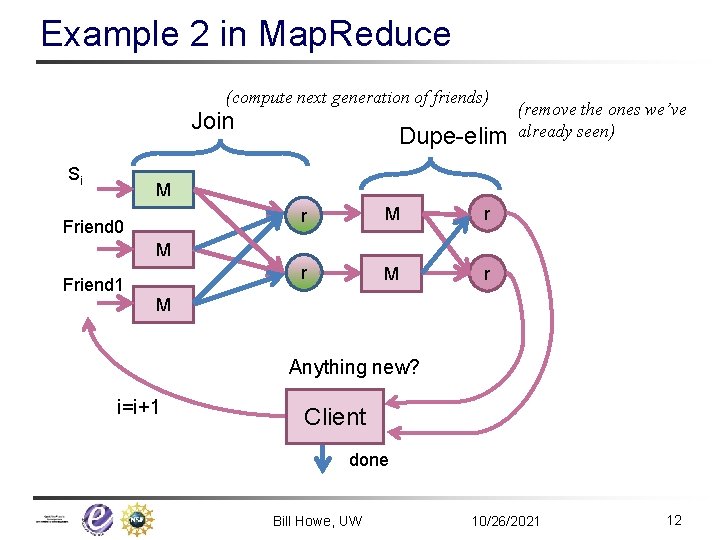

Example 2 in MapReduce

[Diagram: per-iteration dataflow in two MapReduce steps: (1) Join of Si with Friend0 and Friend1 (compute next generation of friends), (2) Dupe-elim (remove the ones we’ve already seen). The client then checks "Anything new?": if so, i = i + 1 and the loop repeats; otherwise done.]

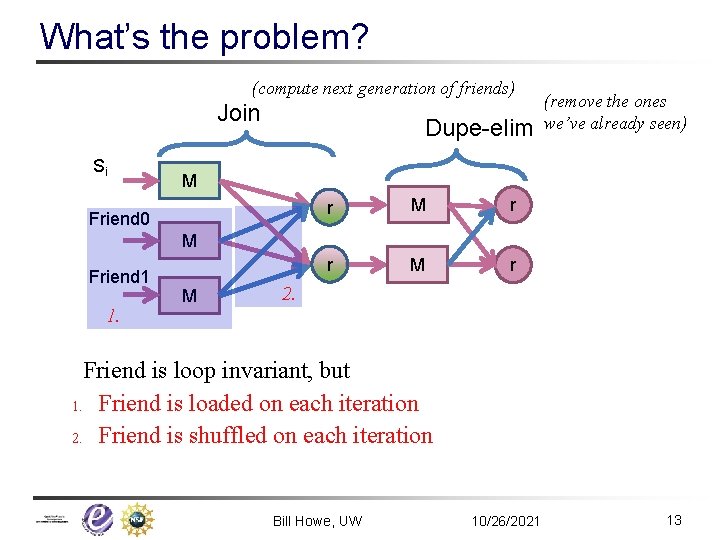

What’s the problem?

[Diagram: the same Join and Dupe-elim dataflow (Si, Friend0, Friend1), with problem spots 1 and 2 marked.]

Friend is loop invariant, but
1. Friend is loaded on each iteration
2. Friend is shuffled on each iteration

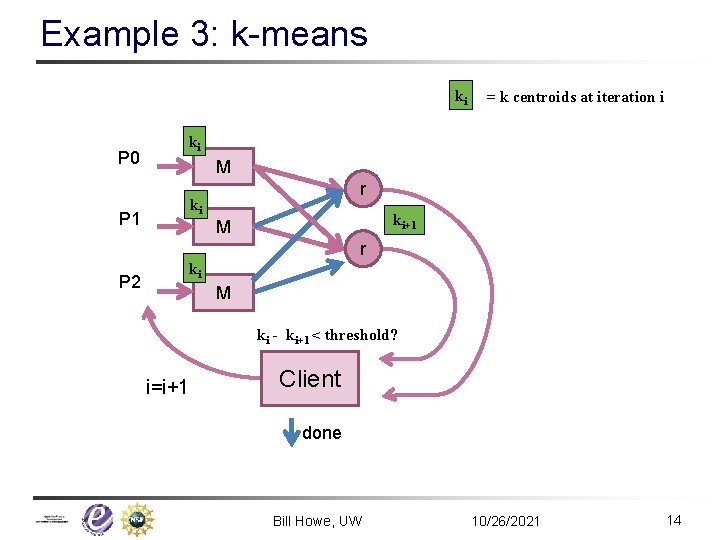

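Example 3: k-means

ki = k centroids at iteration i

[Diagram: the point partitions P0, P1, P2 are each mapped together with ki; the reduce step produces ki+1. The client checks "ki - ki+1 < threshold?": if not, i = i + 1 and the loop repeats; otherwise done.]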
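The loop in this example can be sketched as below: assign every point to its nearest centroid, average per centroid, and stop once the centroids move less than a threshold. This is a single-process illustration on one-dimensional points; the class `KMeansLoopSketch`, its method names, the use of the largest centroid movement as the distance, and the iteration cap are all assumptions made for brevity.

```java
final class KMeansLoopSketch {
    /** One iteration: assign each point to its nearest centroid, then average per centroid. */
    static double[] step(double[] points, double[] centroids) {
        double[] sum = new double[centroids.length];
        int[] count = new int[centroids.length];
        for (double p : points) {
            int best = 0;
            for (int c = 1; c < centroids.length; c++) {
                if (Math.abs(p - centroids[c]) < Math.abs(p - centroids[best])) {
                    best = c;
                }
            }
            sum[best] += p;
            count[best]++;
        }
        double[] next = new double[centroids.length];
        for (int c = 0; c < centroids.length; c++) {
            // A centroid with no assigned points stays where it is.
            next[c] = count[c] == 0 ? centroids[c] : sum[c] / count[c];
        }
        return next;
    }

    /** Largest per-centroid movement, used here as the distance between k_i and k_{i+1}. */
    static double distance(double[] a, double[] b) {
        double max = 0;
        for (int c = 0; c < a.length; c++) {
            max = Math.max(max, Math.abs(a[c] - b[c]));
        }
        return max;
    }

    /** Repeats step until the centroids move less than threshold or maxIterations is hit. */
    static double[] run(double[] points, double[] initial, double threshold, int maxIterations) {
        double[] k = initial;
        for (int i = 0; i < maxIterations; i++) {
            double[] next = step(points, k);
            boolean converged = distance(k, next) < threshold;
            k = next;
            if (converged) {
                break;
            }
        }
        return k;
    }
}
```

For instance, `KMeansLoopSketch.run(new double[] {1, 2, 3, 10, 11, 12}, new double[] {0, 5}, 1e-6, 50)` settles on centroids 2 and 11. The `points` array is scanned in full on every iteration while only the small centroid array changes.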

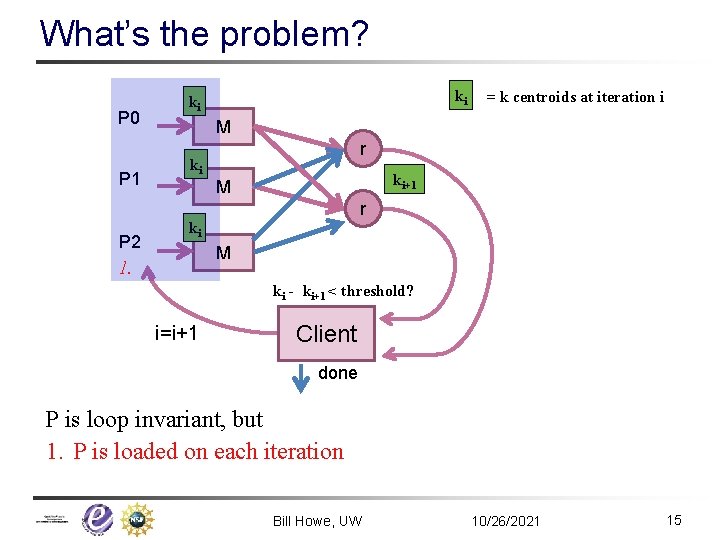

What’s the problem?

[Diagram: the same k-means dataflow (P0, P1, P2, ki, ki+1), with problem spot 1 marked.]

P is loop invariant, but
1. P is loaded on each iteration

Optimizations Enabled
- Caching (and Indexing)

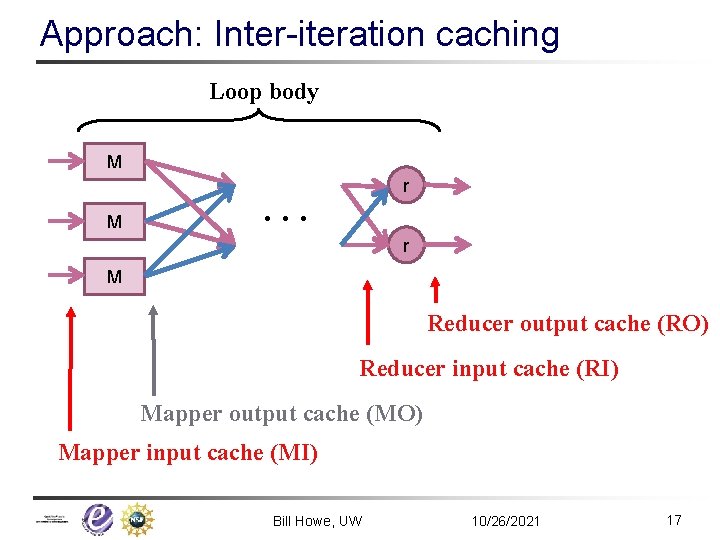

Approach: Inter-iteration caching

[Diagram: a loop body of map (M) and reduce (r) tasks, annotated with four cache locations:]
- Mapper input cache (MI)
- Mapper output cache (MO)
- Reducer input cache (RI)
- Reducer output cache (RO)

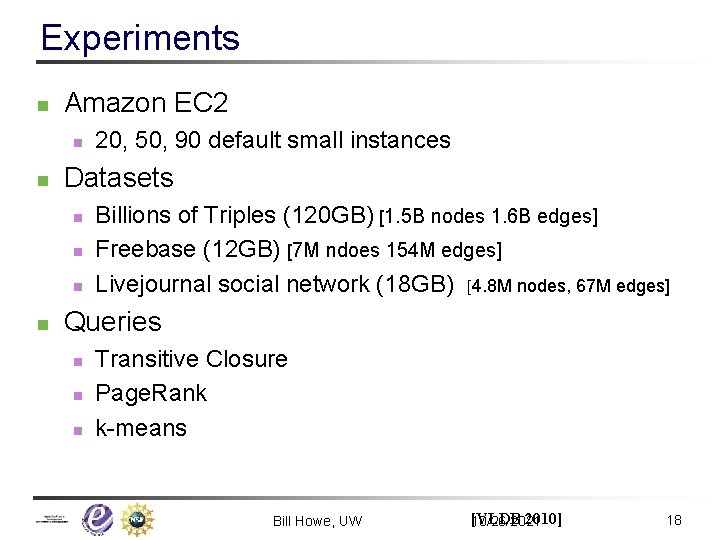

Experiments
- Amazon EC2
  - 20, 50, 90 default small instances
- Datasets
  - Billions of Triples (120 GB) [1.5B nodes, 1.6B edges]
  - Freebase (12 GB) [7M nodes, 154M edges]
  - Livejournal social network (18 GB) [4.8M nodes, 67M edges]
- Queries
  - Transitive Closure
  - PageRank
  - k-means

[VLDB 2010]

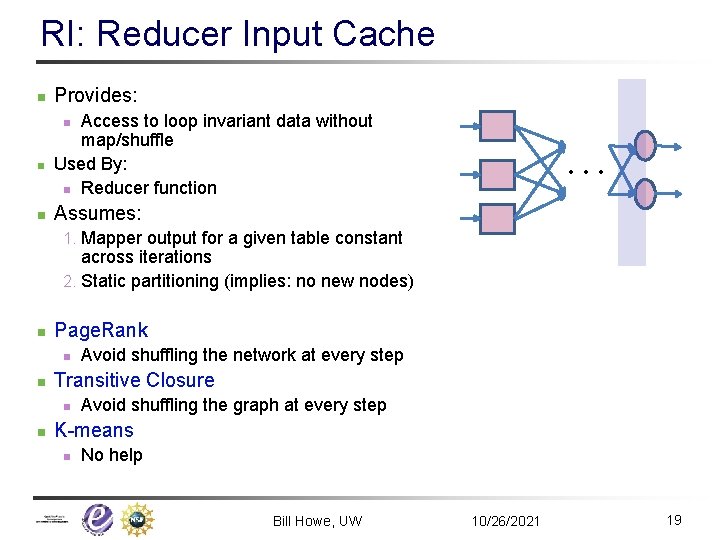

RI: Reducer Input Cache
- Provides: Access to loop-invariant data without map/shuffle
- Used by: Reducer function
- Assumes:
  1. Mapper output for a given table is constant across iterations
  2. Static partitioning (implies: no new nodes)
- PageRank
  - Avoid shuffling the network at every step
- Transitive Closure
  - Avoid shuffling the graph at every step
- K-means
  - No help
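The effect of a reducer input cache can be illustrated with a toy simulation: the invariant link table passes through the shuffle once, and later iterations shuffle only the changing rank records. Everything here (the class `ReducerInputCacheSketch`, its methods, the `shuffledRecords` counter) is a hypothetical single-process model written for this example; it does not reflect how HaLoop stores or indexes its cache.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class ReducerInputCacheSketch {
    // Cached loop-invariant reducer input: out-links grouped by url_src.
    private final Map<String, List<String>> cachedLinks = new HashMap<>();

    // Counts records that had to pass through the simulated shuffle.
    long shuffledRecords = 0;

    /** First iteration only: the invariant table is shuffled once and kept at the reducer. */
    void loadInvariant(List<String[]> links) {
        for (String[] link : links) {
            cachedLinks.computeIfAbsent(link[0], k -> new ArrayList<>()).add(link[1]);
            shuffledRecords++;
        }
    }

    /** Every iteration: only rank records are shuffled; links are read from the cache. */
    Map<String, Double> reduce(Map<String, Double> ranks) {
        Map<String, Double> contributions = new HashMap<>();
        for (Map.Entry<String, Double> e : ranks.entrySet()) {
            shuffledRecords++;
            List<String> dests = cachedLinks.getOrDefault(e.getKey(), List.of());
            for (String dest : dests) {
                contributions.merge(dest, e.getValue() / dests.size(), Double::sum);
            }
        }
        return contributions;
    }
}
```

With three links and two rank records, two iterations shuffle 3 + 2 + 2 = 7 records in this model, compared with (3 + 2) × 2 = 10 if the links were reshuffled every time. The model only works because of the two assumptions listed above: the cached grouping must stay valid, and each key must keep landing on the same reducer.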

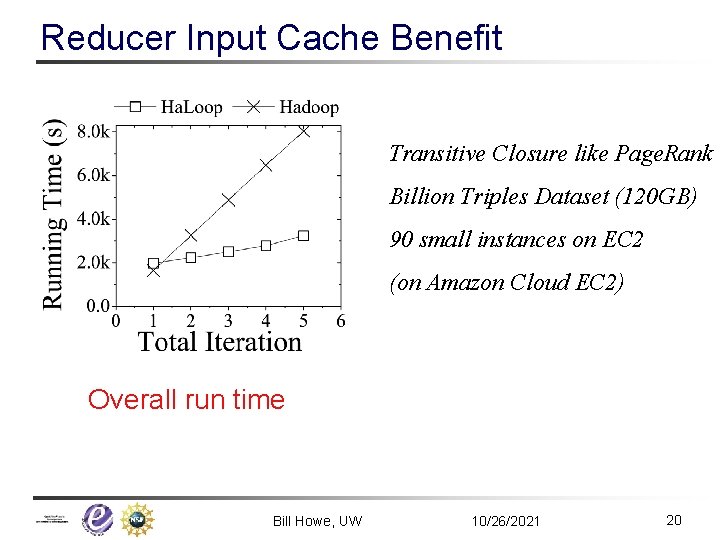

Reducer Input Cache Benefit

[Chart: overall run time. Transitive Closure (like PageRank), Billion Triples Dataset (120 GB), 90 small instances on Amazon EC2.]

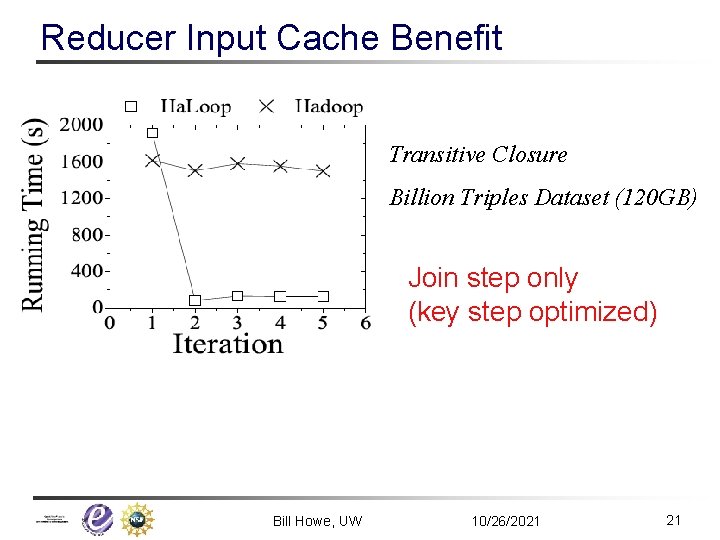

Reducer Input Cache Benefit

[Chart: join step only (the key step optimized). Transitive Closure, Billion Triples Dataset (120 GB).]

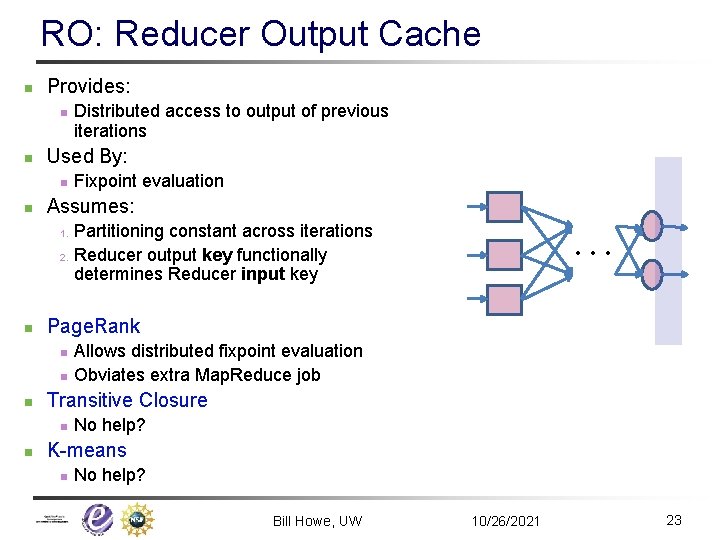

RO: Reducer Output Cache
- Provides: Distributed access to output of previous iterations
- Used by: Fixpoint evaluation
- Assumes:
  1. Partitioning constant across iterations
  2. Reducer output key functionally determines Reducer input key
- PageRank
  - Allows distributed fixpoint evaluation
  - Obviates extra MapReduce job
- Transitive Closure
  - No help?
- K-means
  - No help?
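One way to picture distributed fixpoint evaluation: each reducer partition keeps its own previous output, computes its share of the distance locally, and only the small partial distances are combined. The sketch below is a hypothetical single-process model; the class `ReducerOutputCacheSketch`, the nested `Partition` type, and the choice of a sum of absolute differences as the distance are assumptions for illustration, not HaLoop's implementation.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class ReducerOutputCacheSketch {
    /** One reducer partition that remembers its own output from the previous iteration. */
    static final class Partition {
        private Map<String, Double> previous = new HashMap<>();

        /** Returns this partition's share of the distance and replaces the cached output. */
        double localDistance(Map<String, Double> current) {
            double d = 0;
            for (Map.Entry<String, Double> e : current.entrySet()) {
                // Keys missing from the cache are compared against 0.0.
                d += Math.abs(e.getValue() - previous.getOrDefault(e.getKey(), 0.0));
            }
            previous = new HashMap<>(current);
            return d;
        }
    }

    /** Fixpoint test: add up the partial distances instead of running a separate comparison job. */
    static boolean converged(List<Partition> partitions,
                             List<Map<String, Double>> outputs,
                             double threshold) {
        double total = 0;
        for (int p = 0; p < partitions.size(); p++) {
            total += partitions.get(p).localDistance(outputs.get(p));
        }
        return total < threshold;
    }
}
```

The local comparison is only meaningful if a given key lands in the same partition on every iteration, which is the first assumption in the list above.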

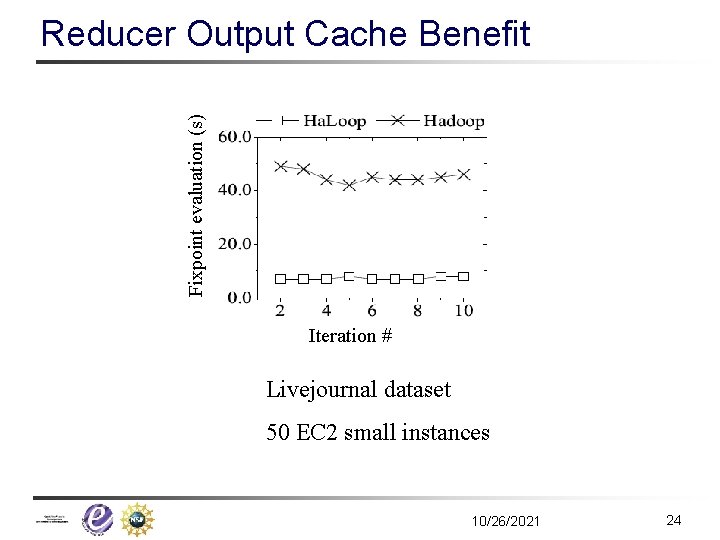

Reducer Output Cache Benefit

[Chart: fixpoint evaluation time (s) per iteration #. Livejournal dataset, 50 EC2 small instances.]

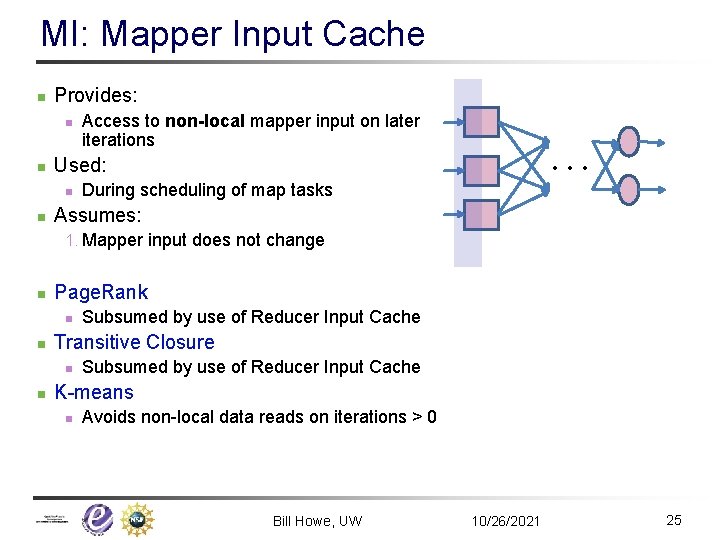

MI: Mapper Input Cache
- Provides: Access to non-local mapper input on later iterations
- Used: During scheduling of map tasks
- Assumes:
  1. Mapper input does not change
- PageRank, Transitive Closure
  - Subsumed by use of Reducer Input Cache
- K-means
  - Avoids non-local data reads on iterations > 0

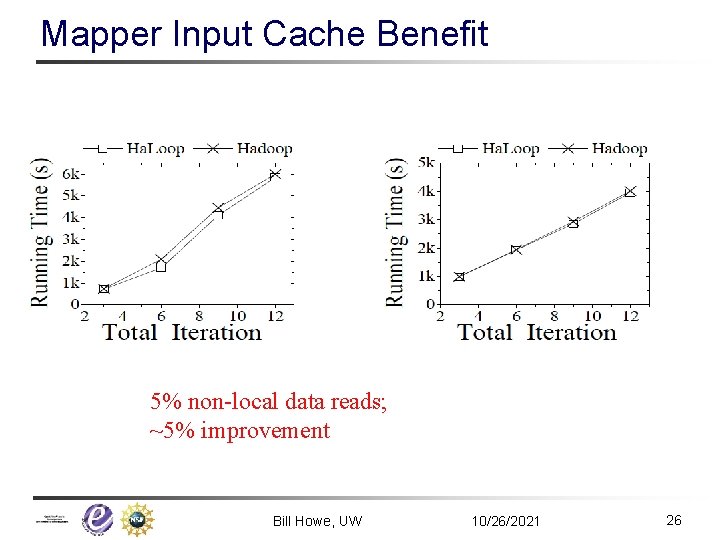

Mapper Input Cache Benefit

[Chart annotation: 5% non-local data reads; ~5% improvement]

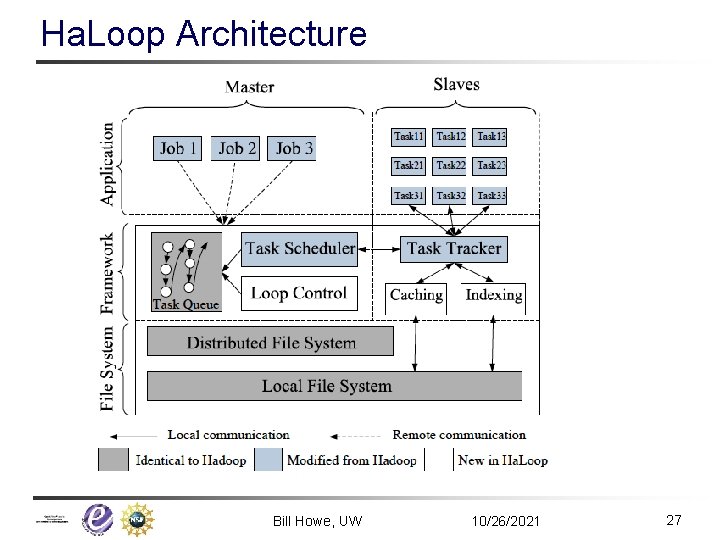

HaLoop Architecture

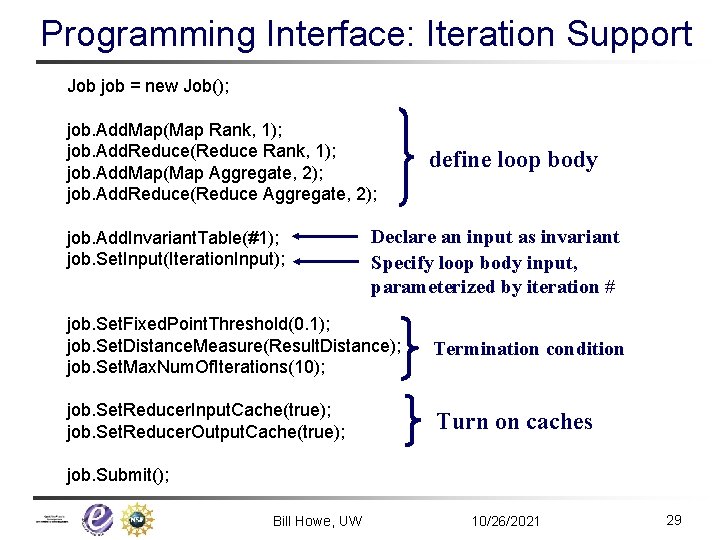

Programming Interface: Iteration Support

    Job job = new Job();

    // Define loop body
    job.AddMap(Map Rank, 1);
    job.AddReduce(Reduce Rank, 1);
    job.AddMap(Map Aggregate, 2);
    job.AddReduce(Reduce Aggregate, 2);

    // Declare an input as invariant
    job.AddInvariantTable(#1);

    // Specify loop body input, parameterized by iteration #
    job.SetInput(IterationInput);

    // Termination condition
    job.SetFixedPointThreshold(0.1);
    job.SetDistanceMeasure(ResultDistance);
    job.SetMaxNumOfIterations(10);

    // Turn on caches
    job.SetReducerInputCache(true);
    job.SetReducerOutputCache(true);

    job.Submit();

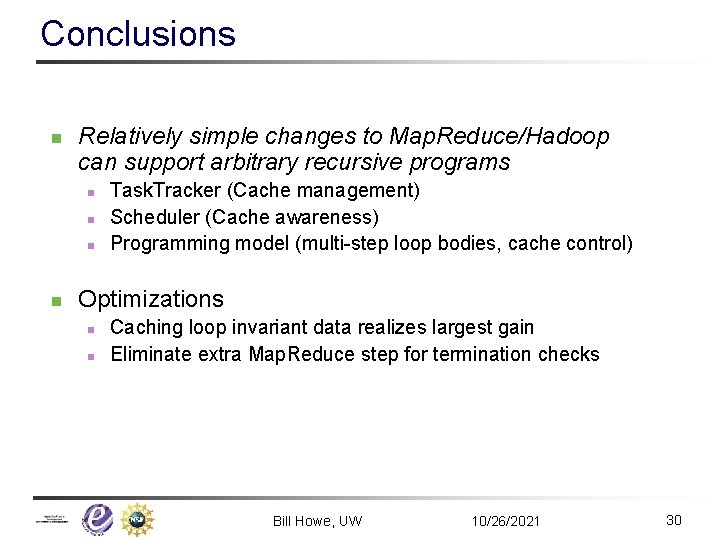

Conclusions
- Relatively simple changes to MapReduce/Hadoop can support arbitrary recursive programs
  - TaskTracker (Cache management)
  - Scheduler (Cache awareness)
  - Programming model (multi-step loop bodies, cache control)
- Optimizations
  - Caching loop-invariant data realizes the largest gain
  - Eliminate the extra MapReduce step for termination checks
