
Keep the Adversary Guessing: Agent Security by Policy Randomization

Praveen Paruchuri
University of Southern California
paruchur@usc.edu

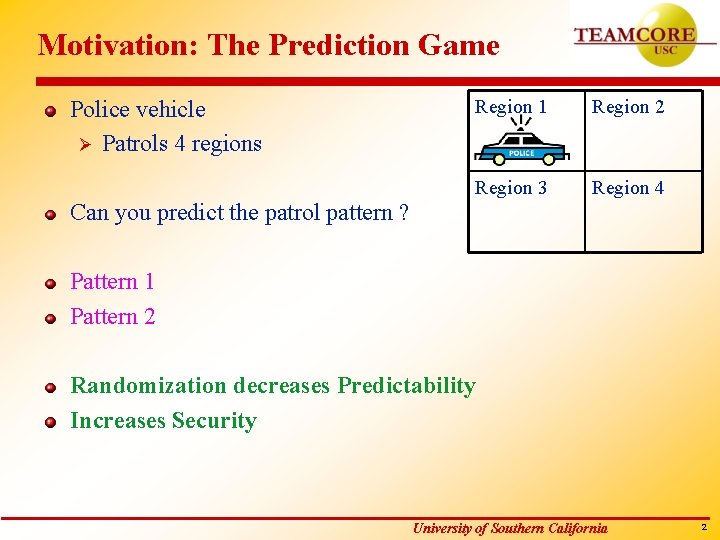

Motivation: The Prediction Game
- A police vehicle patrols 4 regions (Region 1, Region 2, Region 3, Region 4)
- Can you predict the patrol pattern? (Pattern 1 vs. Pattern 2)
- Randomization decreases predictability and increases security

Domains
- Police patrolling groups of houses
- Scheduled activities at airports, such as security checks and refueling
  - Adversary monitors activities
  - Randomized policies

Problem Definition
- Problem: security for agents in uncertain adversarial domains
- Assumptions for the agent or agent team:
  - Variable information about the adversary
    - Adversary cannot be modeled (Part 1): action/payoff structure unavailable
    - Adversary is partially modeled (Part 2): probability distribution over adversaries
- Assumptions for the adversary:
  - Knows the agent's plan/policy
  - Exploits action predictability

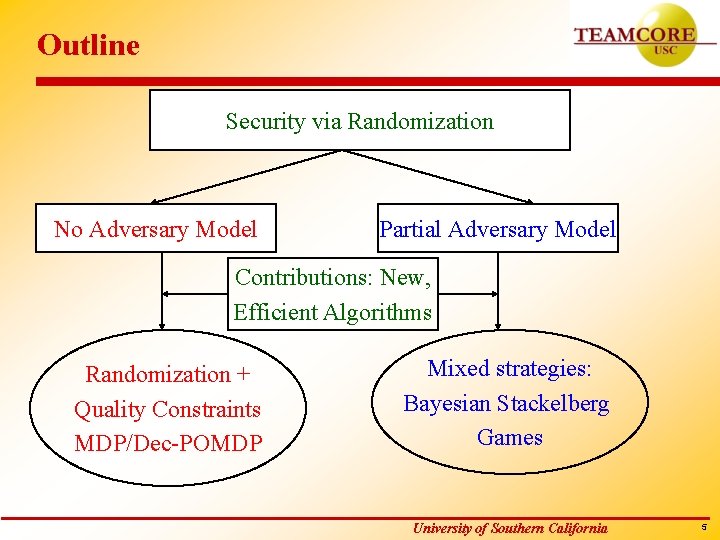

Outline
- Security via randomization
  - No adversary model: randomization + quality constraints (MDP/Dec-POMDP)
  - Partial adversary model: mixed strategies (Bayesian Stackelberg games)
- Contributions: new, efficient algorithms

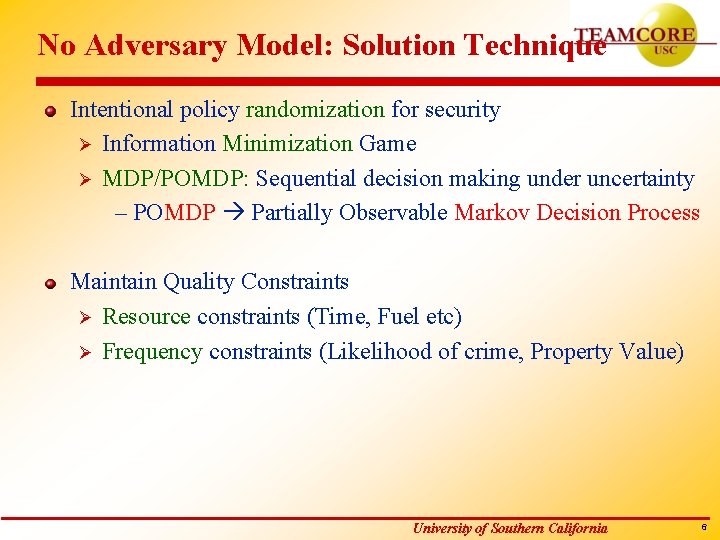

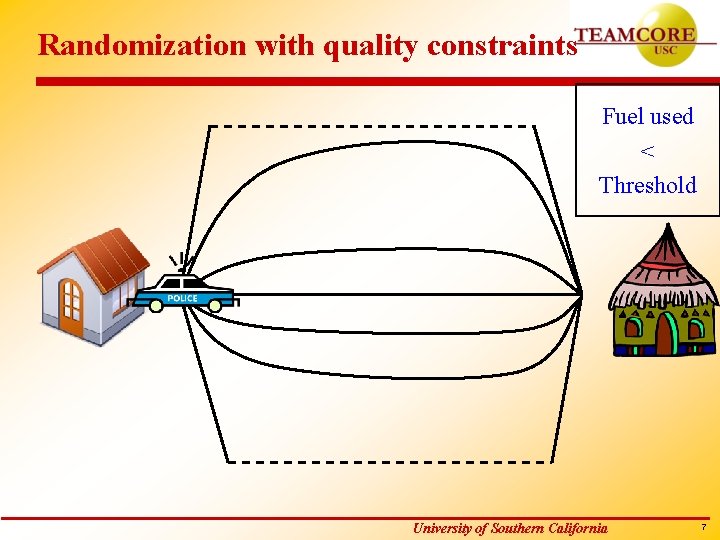

No Adversary Model: Solution Technique
- Intentional policy randomization for security
  - Information minimization game
  - MDP/POMDP: sequential decision making under uncertainty
    - POMDP: Partially Observable Markov Decision Process
- Maintain quality constraints
  - Resource constraints (time, fuel, etc.)
  - Frequency constraints (likelihood of crime, property value)

Randomization with Quality Constraints
- Fuel used < threshold

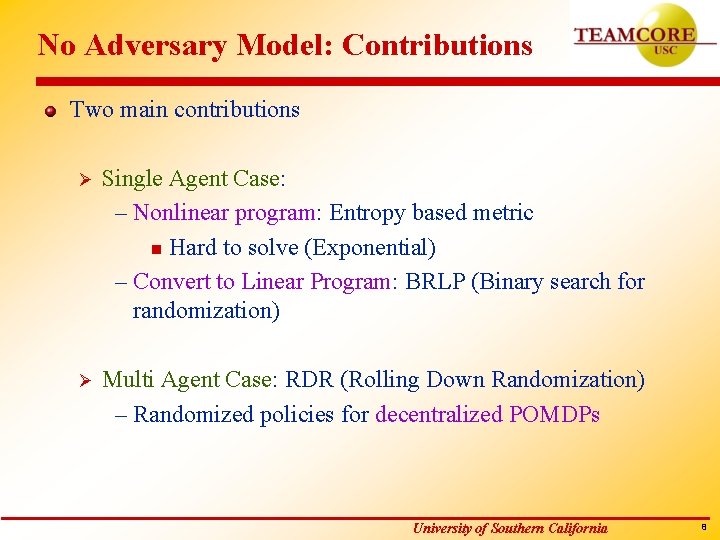

No Adversary Model: Contributions
Two main contributions:
- Single-agent case:
  - Nonlinear program with an entropy-based metric: hard to solve (exponential)
  - Converted to a linear program: BRLP (Binary Search for Randomization LP)
- Multi-agent case: RDR (Rolling Down Randomization)
  - Randomized policies for decentralized POMDPs

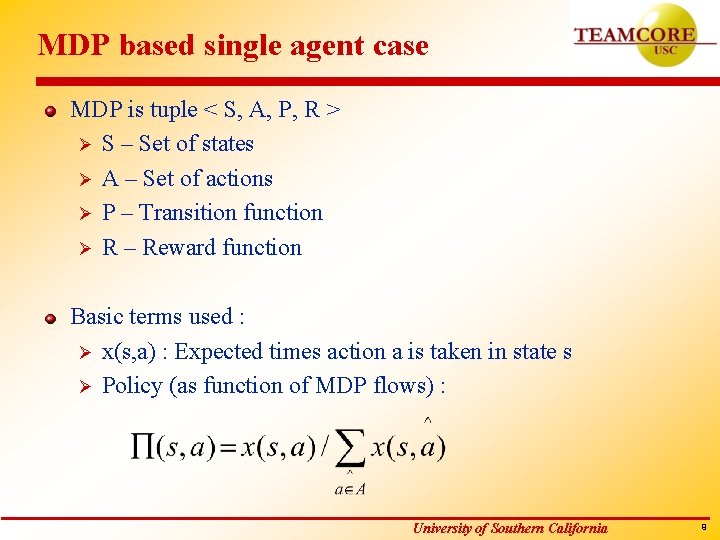

MDP-Based Single-Agent Case
- An MDP is a tuple <S, A, P, R>:
  - S: set of states
  - A: set of actions
  - P: transition function
  - R: reward function
- Basic terms used:
  - x(s, a): expected number of times action a is taken in state s
  - Policy (as a function of MDP flows): π(s, a) = x(s, a) / Σ_a' x(s, a')
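The link between flows and policies can be illustrated with a minimal sketch. It assumes the standard normalization, in which the probability of an action in a state is that action's flow divided by the total flow through the state. The function name `policy_from_flows` and the example flows are hypothetical, not taken from the talk.

```python
def policy_from_flows(x):
    """Turn flows x[s][a] into a stochastic policy pi[s][a].

    Assumes pi(s, a) = x(s, a) / sum over a' of x(s, a').
    """
    policy = {}
    for state, flows in x.items():
        total = sum(flows.values())
        if total > 0:
            policy[state] = {a: f / total for a, f in flows.items()}
        else:
            # State is never visited, so any choice works; use uniform (assumption).
            policy[state] = {a: 1.0 / len(flows) for a in flows}
    return policy


# Hypothetical flows for a two-state, two-action MDP.
flows = {
    "s0": {"left": 3.0, "right": 1.0},
    "s1": {"left": 0.0, "right": 2.0},
}
pi = policy_from_flows(flows)
```

Here `pi["s0"]` assigns probability 0.75 to `left` and 0.25 to `right`, while `pi["s1"]` is deterministic.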

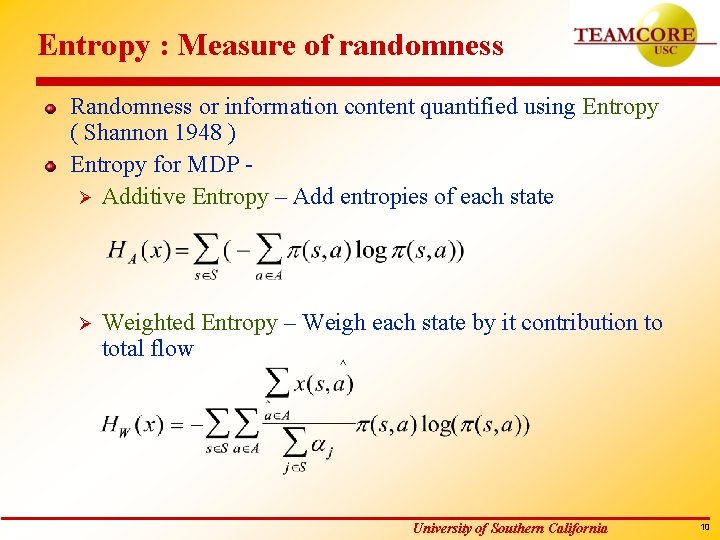

Entropy: Measure of Randomness
- Randomness or information content is quantified using entropy (Shannon 1948)
- Entropy for an MDP:
  - Additive entropy: add the entropies of each state
  - Weighted entropy: weigh each state by its contribution to total flow
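The two entropy measures can be sketched as follows. This is an illustration under assumptions: the per-state entropy is the Shannon entropy of the policy at that state (natural logarithm), and the weight of a state is taken to be its share of the total flow. The exact normalization used in the talk is not recoverable from the slide text, and all function names are hypothetical.

```python
import math


def state_entropy(flows):
    """Shannon entropy of the action distribution implied by one state's flows."""
    total = sum(flows.values())
    if total <= 0:
        return 0.0
    probs = [f / total for f in flows.values() if f > 0]
    return -sum(p * math.log(p) for p in probs)


def additive_entropy(x):
    """Sum of per-state entropies."""
    return sum(state_entropy(flows) for flows in x.values())


def weighted_entropy(x):
    """Per-state entropies weighted by each state's share of total flow (assumption)."""
    total_flow = sum(sum(flows.values()) for flows in x.values())
    return sum(
        (sum(flows.values()) / total_flow) * state_entropy(flows)
        for flows in x.values()
    )


# Hypothetical flows: s0 is fully random, s1 is deterministic.
x = {"s0": {"a": 1.0, "b": 1.0}, "s1": {"a": 2.0, "b": 0.0}}
h_add = additive_entropy(x)
h_weighted = weighted_entropy(x)
```

In this example only `s0` contributes entropy (ln 2), and since `s0` carries half the total flow, the weighted entropy is half the additive entropy.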

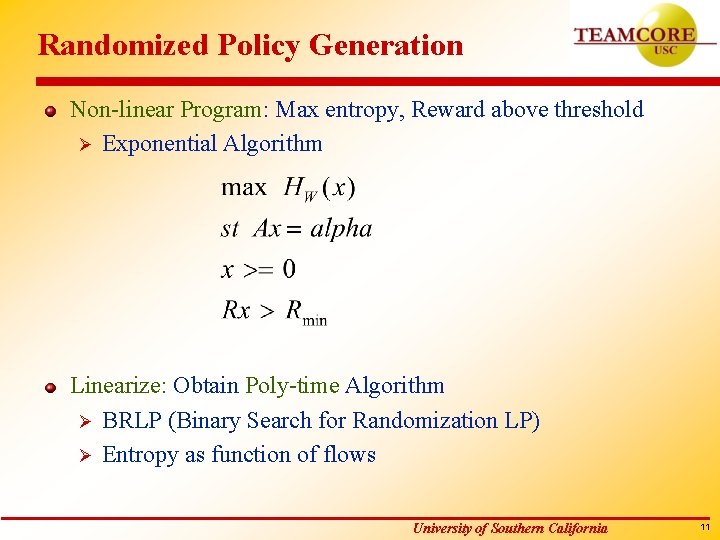

Randomized Policy Generation
- Nonlinear program: maximize entropy while keeping reward above a threshold
  - Exponential algorithm
- Linearize: obtain a polynomial-time algorithm
  - BRLP (Binary Search for Randomization LP)
  - Entropy as a function of flows

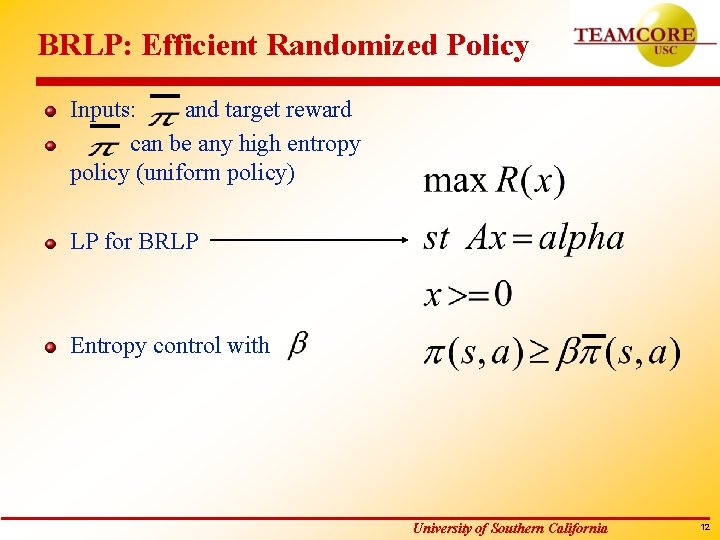

BRLP: Efficient Randomized Policy
- Inputs: a high-entropy policy and a target reward
  - The input policy can be any high-entropy policy (e.g., the uniform policy)
- LP for BRLP
- Entropy control with β
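The binary search over β can be sketched on a toy problem. The sketch below is not the BRLP implementation from the talk: it assumes a single-state problem whose constraints are that the flows sum to 1 and that each flow is at least β times the corresponding flow of the high-entropy input policy. In that special case the LP has a closed-form optimum (give every action its lower bound, then put the remaining mass on the best action), so no LP solver is needed. A full version would solve an LP over the MDP flow constraints at every step. All names are hypothetical.

```python
def solve_toy_lp(rewards, x_bar, beta):
    """Closed-form optimum of: max sum r[a]*x[a], sum x = 1, x[a] >= beta*x_bar[a].

    Assumes x_bar sums to 1 (single-state toy problem).
    """
    x = [beta * p for p in x_bar]
    best = max(range(len(rewards)), key=lambda a: rewards[a])
    x[best] += 1.0 - beta  # leftover mass goes to the highest-reward action
    return x


def expected_reward(rewards, x):
    return sum(r * p for r, p in zip(rewards, x))


def brlp_toy(rewards, x_bar, target_reward, tol=1e-9):
    """Binary search for the largest beta whose LP solution meets the target reward."""
    def reward_at(beta):
        return expected_reward(rewards, solve_toy_lp(rewards, x_bar, beta))

    if reward_at(0.0) < target_reward:
        raise ValueError("target reward exceeds the maximum achievable reward")
    if reward_at(1.0) >= target_reward:
        return 1.0, solve_toy_lp(rewards, x_bar, 1.0)
    lo, hi = 0.0, 1.0  # invariant: reward_at(lo) >= target > reward_at(hi)
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if reward_at(mid) >= target_reward:
            lo = mid
        else:
            hi = mid
    return lo, solve_toy_lp(rewards, x_bar, lo)


# Two actions with rewards 10 and 0, uniform input policy, target reward 7.5.
beta, flows = brlp_toy([10.0, 0.0], [0.5, 0.5], target_reward=7.5)
```

In this example the reward falls linearly from 10 at β = 0 to 5 at β = 1, so the search settles at β = 0.5 with flows close to [0.75, 0.25].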

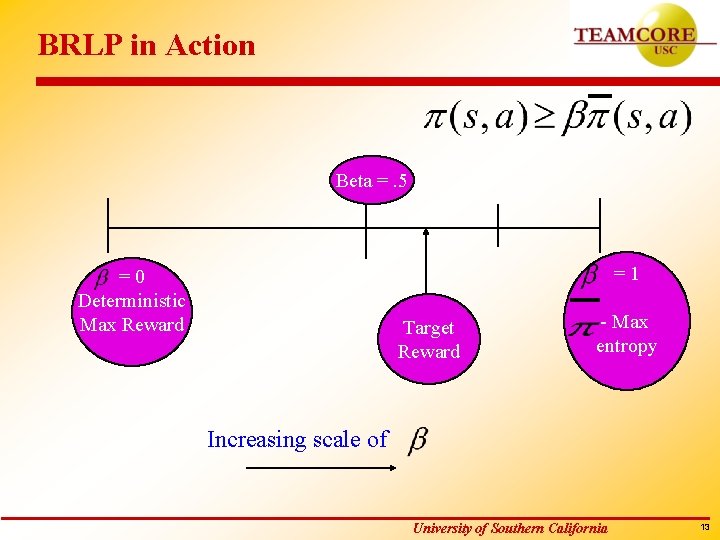

BRLP in Action
[Figure: increasing scale of β, from β = 0 (deterministic, max reward) through β = 0.5 (target reward) to β = 1 (max entropy).]

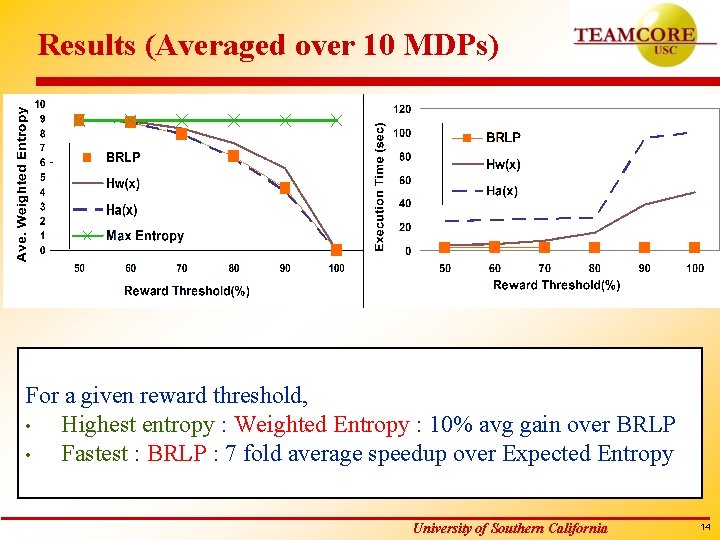

Results (Averaged over 10 MDPs)
For a given reward threshold:
- Highest entropy: Weighted Entropy, with a 10% average gain over BRLP
- Fastest: BRLP, with a 7-fold average speedup over Expected Entropy

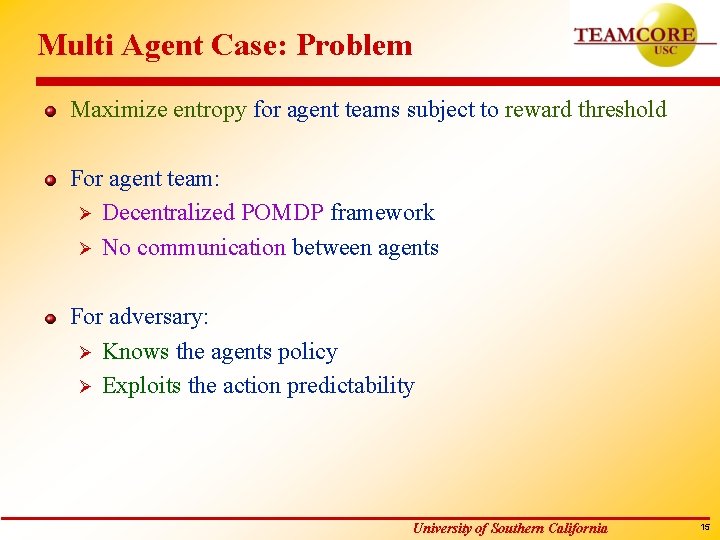

Multi-Agent Case: Problem
- Maximize entropy for agent teams subject to a reward threshold
- For the agent team:
  - Decentralized POMDP framework
  - No communication between agents
- For the adversary:
  - Knows the agents' policy
  - Exploits action predictability

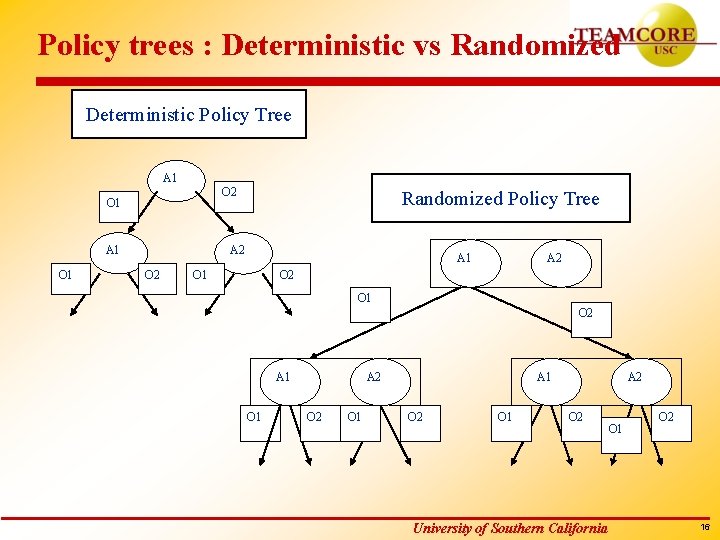

Policy Trees: Deterministic vs. Randomized
[Figure: a deterministic policy tree and a randomized policy tree, with nodes labeled by actions A1, A2 and branches labeled by observations O1, O2.]

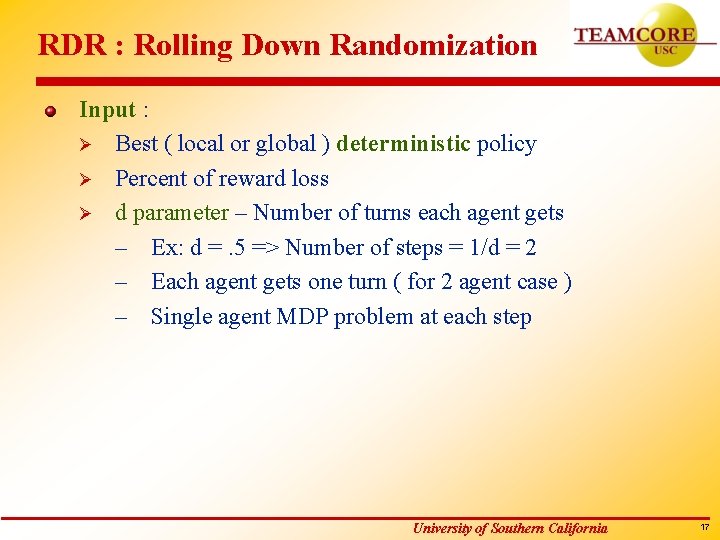

RDR: Rolling Down Randomization
Input:
- Best (local or global) deterministic policy
- Percent of reward loss
- d parameter: number of turns each agent gets
  - Ex: d = 0.5 => number of steps = 1/d = 2
  - Each agent gets one turn (for the 2-agent case)
  - Single-agent MDP problem at each step

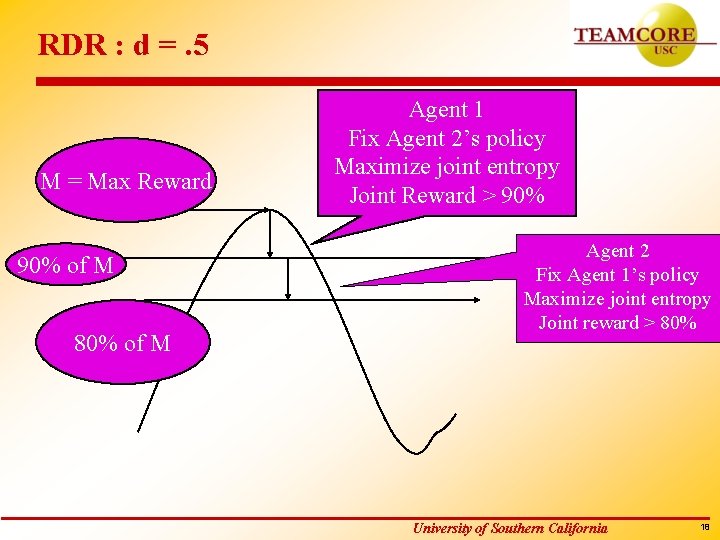

RDR: d = 0.5
- M = max reward
- Agent 1's turn: fix Agent 2's policy and maximize joint entropy, subject to joint reward > 90% of M
- Agent 2's turn: fix Agent 1's policy and maximize joint entropy, subject to joint reward > 80% of M
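The turn-taking in RDR can be sketched as a schedule of reward thresholds. The sketch assumes that the allowed reward loss is split evenly across the 1/d steps and that agents take turns in round-robin order, which matches the 90% and 80% thresholds shown for d = 0.5 with a 20% total loss. The single-agent entropy maximization performed at each step is not implemented here, and the function name is hypothetical.

```python
def rdr_schedule(max_reward, loss_fraction, d, num_agents):
    """Return (agent, reward_threshold) pairs for each RDR step.

    Assumes the reward loss is spread evenly over round(1/d) steps.
    """
    steps = round(1 / d)
    schedule = []
    for i in range(1, steps + 1):
        threshold = max_reward * (1 - loss_fraction * i / steps)
        agent = (i - 1) % num_agents + 1  # round-robin turns
        schedule.append((agent, threshold))
    return schedule


# d = 0.5, two agents, 20% allowed reward loss, max reward M = 100.
schedule = rdr_schedule(100.0, 0.20, 0.5, 2)
```

For these inputs the schedule has two steps: Agent 1 optimizes against a threshold of about 90, then Agent 2 against a threshold of about 80.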

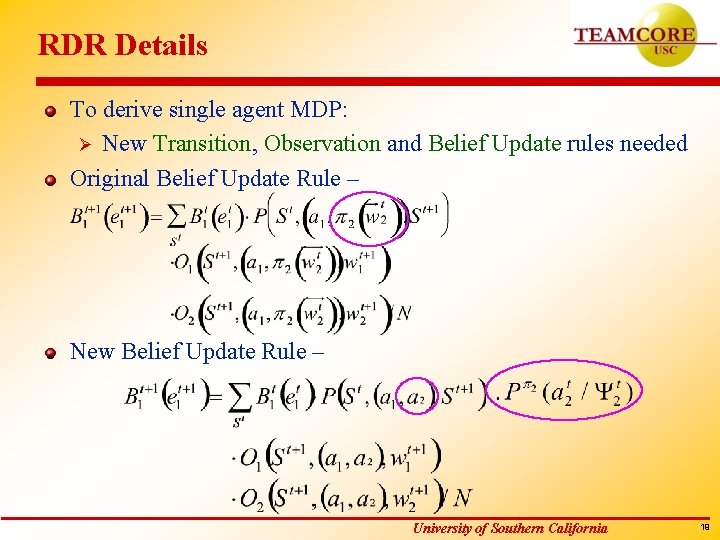

RDR Details
- To derive the single-agent MDP, new transition, observation, and belief update rules are needed
[Equations: original belief update rule; new belief update rule]

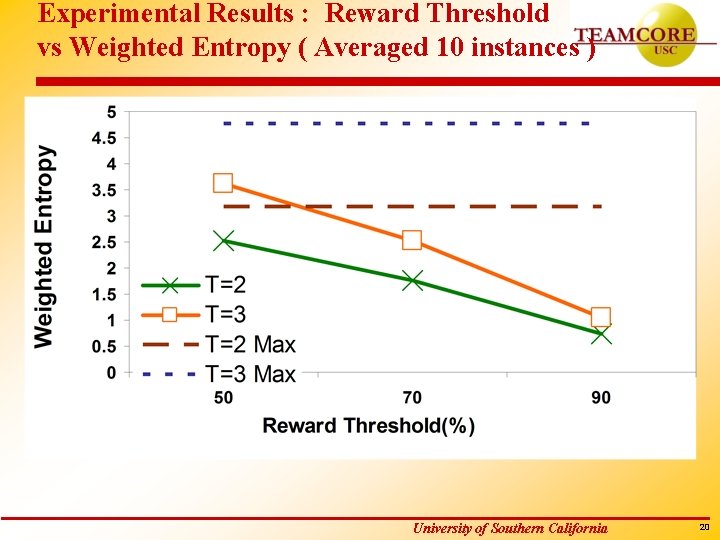

Experimental Results: Reward Threshold vs. Weighted Entropy (averaged over 10 instances)

Security with a Partially Modeled Adversary
- Police agent patrolling a region
- Many adversaries (robbers)
  - Different motivations, different times and places
- Model (action and payoff) of each adversary is known
- Probability distribution over adversaries is known
- Modeled as a Bayesian Stackelberg game

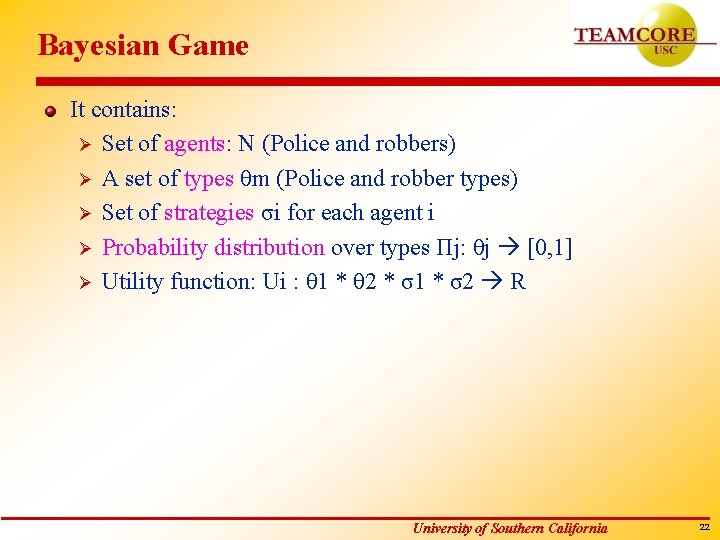

Bayesian Game
It contains:
- Set of agents: N (police and robbers)
- A set of types θ_m (police and robber types)
- Set of strategies σ_i for each agent i
- Probability distribution over types Π_j: θ_j → [0, 1]
- Utility function U_i: θ_1 × θ_2 × σ_1 × σ_2 → ℝ

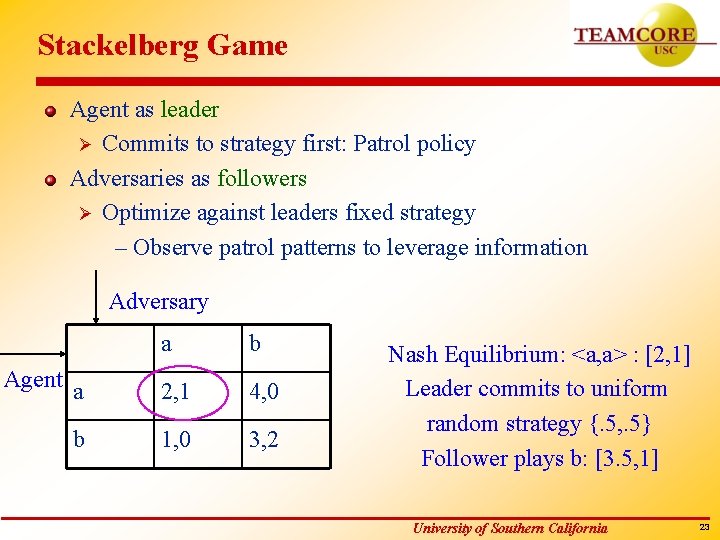

Stackelberg Game
- Agent as leader
  - Commits to a strategy first: patrol policy
- Adversaries as followers
  - Optimize against the leader's fixed strategy
    - Observe patrol patterns to leverage information

| Agent \ Adversary | a    | b    |
|-------------------|------|------|
| a                 | 2, 1 | 4, 0 |
| b                 | 1, 0 | 3, 2 |

- Nash equilibrium: <a, a> with payoffs [2, 1]
- Leader commits to the uniform random strategy {0.5, 0.5}; the follower plays b, giving payoffs [3.5, 1]
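The payoffs quoted for this example can be checked with a short sketch. It encodes the 2x2 game from the slide, computes the follower's best response to a committed leader strategy, and reports the resulting payoffs. Ties are broken in favor of the first listed action, which is an assumption that does not matter for the two strategies evaluated here. Function names are hypothetical.

```python
# PAYOFFS[leader_action][follower_action] = (leader_payoff, follower_payoff)
PAYOFFS = {
    "a": {"a": (2, 1), "b": (4, 0)},
    "b": {"a": (1, 0), "b": (3, 2)},
}
FOLLOWER_ACTIONS = ["a", "b"]


def follower_best_response(leader_mix):
    """Follower action maximizing expected follower payoff against leader_mix."""
    def follower_value(action):
        return sum(p * PAYOFFS[l][action][1] for l, p in leader_mix.items())
    return max(FOLLOWER_ACTIONS, key=follower_value)


def outcome(leader_mix):
    """Return (follower_action, leader_payoff, follower_payoff)."""
    f = follower_best_response(leader_mix)
    leader = sum(p * PAYOFFS[l][f][0] for l, p in leader_mix.items())
    follower = sum(p * PAYOFFS[l][f][1] for l, p in leader_mix.items())
    return f, leader, follower


pure = outcome({"a": 1.0, "b": 0.0})    # leader plays a with certainty
mixed = outcome({"a": 0.5, "b": 0.5})   # leader commits to the uniform strategy
```

Committing to pure strategy a reproduces the [2, 1] outcome, while committing to the uniform mix induces the follower to play b and raises the leader's payoff to 3.5.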

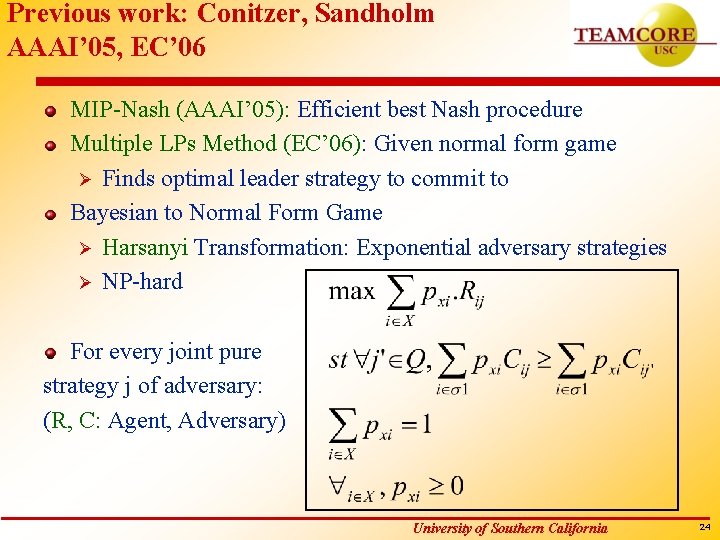

Previous Work: Conitzer, Sandholm AAAI'05, EC'06
- MIP-Nash (AAAI'05): efficient best-Nash procedure
- Multiple LPs method (EC'06): given a normal-form game
  - Finds the optimal leader strategy to commit to
- Bayesian to normal-form game
  - Harsanyi transformation: exponential number of adversary strategies
  - NP-hard
- For every joint pure strategy j of the adversary (R, C: agent, adversary): [LP formulation in slide image]

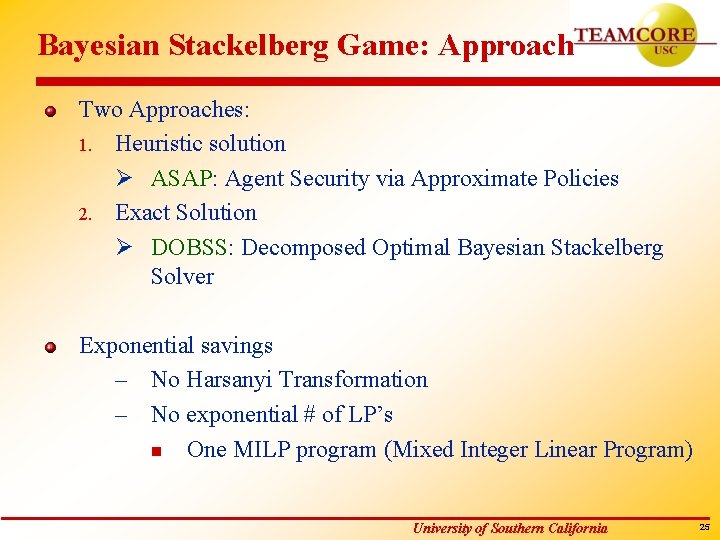

Bayesian Stackelberg Game: Approach
Two approaches:
1. Heuristic solution
   - ASAP: Agent Security via Approximate Policies
2. Exact solution
   - DOBSS: Decomposed Optimal Bayesian Stackelberg Solver

Exponential savings:
- No Harsanyi transformation
- No exponential number of LPs
  - One MILP (Mixed Integer Linear Program)

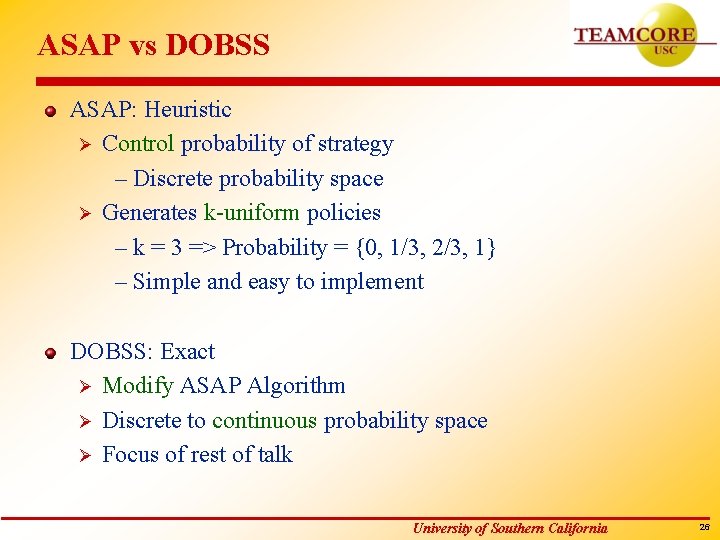

ASAP vs. DOBSS
- ASAP: heuristic
  - Controls the probability of each strategy
    - Discrete probability space
  - Generates k-uniform policies
    - k = 3 => probability ∈ {0, 1/3, 2/3, 1}
    - Simple and easy to implement
- DOBSS: exact
  - Modifies the ASAP algorithm
  - Discrete to continuous probability space
  - Focus of the rest of the talk
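The discrete probability space of k-uniform policies can be made concrete with a small enumeration. This sketch only lists the mixed strategies whose probabilities are integer multiples of 1/k. It is not the ASAP algorithm itself, which selects among such strategies by optimization. The function name is hypothetical.

```python
from fractions import Fraction
from itertools import product


def k_uniform_strategies(num_actions, k):
    """All mixed strategies over num_actions whose probabilities are multiples of 1/k."""
    strategies = []
    for counts in product(range(k + 1), repeat=num_actions):
        if sum(counts) == k:
            strategies.append(tuple(Fraction(c, k) for c in counts))
    return strategies


# Two actions, k = 3.
strategies = k_uniform_strategies(2, 3)
probabilities = {p for s in strategies for p in s}
```

With two actions and k = 3 there are four such strategies, and the probabilities that appear are exactly 0, 1/3, 2/3, and 1.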

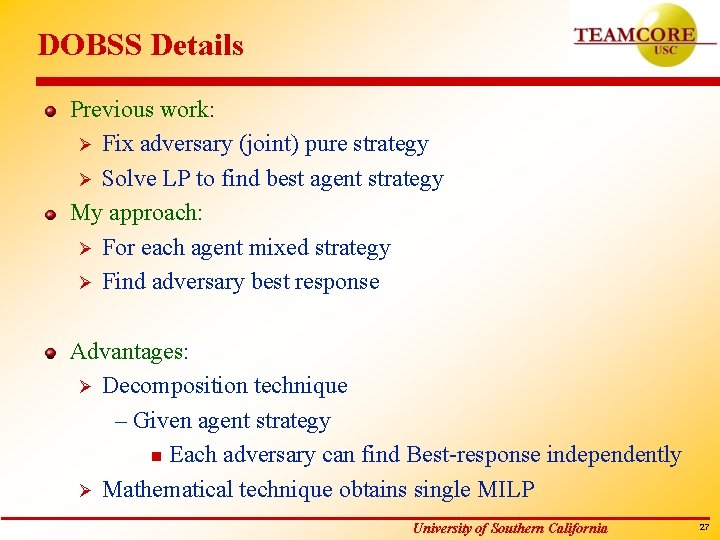

DOBSS Details
- Previous work:
  - Fix an adversary (joint) pure strategy
  - Solve an LP to find the best agent strategy
- My approach:
  - For each agent mixed strategy
  - Find the adversary's best response
- Advantages:
  - Decomposition technique
    - Given the agent strategy, each adversary can find its best response independently
  - Mathematical technique obtains a single MILP
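The decomposition idea can be sketched for a fixed agent strategy. Given the agent's mixed strategy, each adversary type's best response is computed on its own, and the agent's expected utility is the prior-weighted sum over types. This only evaluates one candidate strategy; the MILP that DOBSS uses to optimize over agent strategies is not implemented here. The second adversary type, the priors, and all function names are hypothetical.

```python
def best_response(follower_payoff, leader_mix):
    """Index of the follower action with the highest expected follower payoff.

    follower_payoff[i][j]: follower payoff when leader plays i and follower plays j.
    """
    num_follower_actions = len(follower_payoff[0])
    values = [
        sum(leader_mix[i] * follower_payoff[i][j] for i in range(len(leader_mix)))
        for j in range(num_follower_actions)
    ]
    return max(range(num_follower_actions), key=lambda j: values[j])


def leader_expected_utility(leader_mix, adversary_types):
    """adversary_types: list of (prior, leader_payoff, follower_payoff) per type."""
    total = 0.0
    for prior, leader_payoff, follower_payoff in adversary_types:
        j = best_response(follower_payoff, leader_mix)  # independent per type
        total += prior * sum(
            leader_mix[i] * leader_payoff[i][j] for i in range(len(leader_mix))
        )
    return total


# Type 1 uses the 2x2 game from the Stackelberg slide; type 2 is made up.
type1 = (0.5, [[2, 4], [1, 3]], [[1, 0], [0, 2]])
type2 = (0.5, [[1, 2], [0, 3]], [[1, 0], [1, 0]])
mix = [0.5, 0.5]
responses = [best_response(t[2], mix) for t in (type1, type2)]
utility = leader_expected_utility(mix, [type1, type2])
```

Because each type responds independently, the work grows with the number of types rather than with the number of joint adversary strategies.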

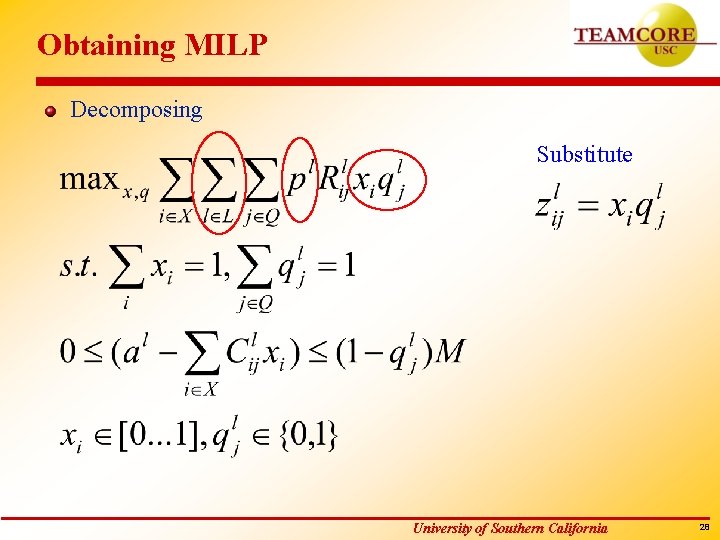

Obtaining the MILP
- Decomposing
- Substitute

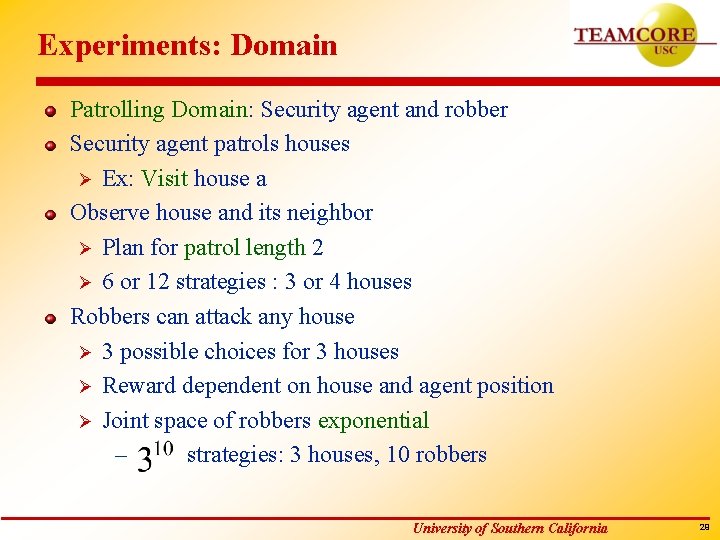

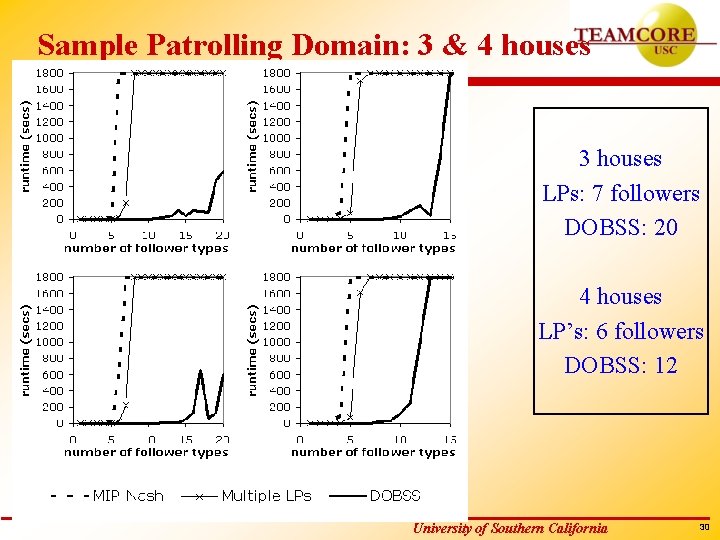

Experiments: Domain
Patrolling domain: security agent and robber
- Security agent patrols houses
  - Ex: visit house a, observe the house and its neighbor
  - Plan for patrol length 2
  - 6 or 12 strategies: 3 or 4 houses
- Robbers can attack any house
  - 3 possible choices for 3 houses
  - Reward depends on house and agent position
  - Joint space of robbers is exponential
    - 3^10 joint strategies: 3 houses, 10 robbers
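The growth of the robbers' joint strategy space can be checked with a short sketch. It assumes each robber independently picks one house to attack, so a joint pure strategy is one house choice per robber. Function names are hypothetical.

```python
from itertools import product


def joint_pure_strategies(num_houses, num_robbers):
    """Iterate over joint pure strategies: one attacked house per robber."""
    return product(range(num_houses), repeat=num_robbers)


def num_joint_strategies(num_houses, num_robbers):
    """Size of the joint space, without enumerating it."""
    return num_houses ** num_robbers


small = list(joint_pure_strategies(3, 2))  # small enough to enumerate
large = num_joint_strategies(3, 10)        # 3 houses, 10 robbers
```

Two robbers over 3 houses already give 9 joint strategies, and 10 robbers give 59049, which is why approaches that enumerate joint adversary strategies scale poorly.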

Sample Patrolling Domain: 3 & 4 Houses
- 3 houses: LPs, 7 followers; DOBSS, 20
- 4 houses: LPs, 6 followers; DOBSS, 12

Conclusion
- Agent cannot model the adversary
  - Intentional randomization algorithms for MDP/Dec-POMDP
- Agent has a partial model of the adversary
  - Efficient MILP solution for Bayesian Stackelberg games

Vision
- Incorporating machine learning
  - Dynamic environments
- Resource-constrained agents
  - Constraints might be unknown in advance
- Developing real-world applications
  - Police patrolling, airport security

Thank You
Any comments or questions?

