IBM Global Engineering Solutions

GPFS on IBM Blue Gene/P

© 2007 IBM Corporation

IBM Blue Gene/P System Administration

What is GPFS?

- A high-performance, shared-disk clustered file system
- Provides high-speed file access to applications running on:
  - AIX clusters
  - Linux clusters
  - Blue Gene systems
  - Mixed clusters (all of the above)
  - Possibly other platforms in the future
- Scalable data access from 1 node to 2000+ nodes
- Installed for:
  - High-performance computing
  - Relational databases
  - Digital media
  - Scalable file services

The GPFS file system

- Built from a collection of disks that hold data and metadata
- Can range from a single disk to thousands of disks
- No limit on the number of open files within a file system
- The file system is accessed through:
  - The standard UNIX POSIX file system interface
  - Parallel interfaces offering byte-range locking and token (lock) management

GPFS tested limits (GPFS 3.1)

- File system size: 2 PB (AIX and Linux)
- File systems per cluster: 64 (more in GPFS 3.2 and later)
- Number of files: about 2 billion (2,147,483,647)
- Nodes: 1530 (AIX), 2441 (Linux)
- Logical disks larger than 2 TB: supported on 64-bit AIX only
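The file-count limit quoted above is not an arbitrary round number. As a quick check of the arithmetic, the Python sketch below confirms that 2,147,483,647 equals 2^31 - 1 (the largest value a signed 32-bit integer can hold) and works out the average file size at which a file system would reach the 2 PB and file-count limits at the same time. Reading "2 PB" as 2 × 2^50 bytes is an assumption; the slide does not say whether decimal or binary petabytes are meant.

```python
# Tested GPFS 3.1 limits, as quoted on the slide.
MAX_FILES = 2_147_483_647
# Assumption: "2 PB" read as binary petabytes (2 * 2**50 bytes).
MAX_FS_BYTES = 2 * 2**50

# The file-count limit is exactly the largest signed 32-bit integer.
assert MAX_FILES == 2**31 - 1


def average_file_size(fs_bytes: int = MAX_FS_BYTES, files: int = MAX_FILES) -> float:
    """Average bytes per file if `files` files filled `fs_bytes` exactly."""
    return fs_bytes / files


if __name__ == "__main__":
    # About 1 MiB per file: with a smaller average, the file-count limit is hit first.
    print(f"{average_file_size():,.0f} bytes per file")
```

Running it prints `1,048,576 bytes per file`, so a file system full of files averaging under roughly 1 MiB would run out of file slots before it runs out of space.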


Why use GPFS?

- High-performance I/O, achieved by:
  - Striping data across multiple disks attached to multiple nodes
  - Efficient client-side caching
  - Support for large block sizes to match I/O requirements
  - Advanced algorithms that improve read-ahead and write-behind
  - Block-level locking, based on a token management system, which keeps data consistent while giving multiple application nodes concurrent access to the same files
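To make the striping bullet concrete, the following Python sketch maps a byte offset within a file to the disk that holds it, assuming blocks are laid out in simple round-robin order across the file system's disks. The real GPFS allocation policy is not described on this slide, and `locate`, `disks_touched`, and `BlockLocation` are hypothetical names. What it illustrates is that one large contiguous request fans out across several disks, which is where the parallel bandwidth comes from.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class BlockLocation:
    block_index: int      # logical block number within the file
    disk: int             # index of the disk that holds this block
    offset_in_block: int  # byte offset inside that block


def locate(byte_offset: int, block_size: int, num_disks: int, first_disk: int = 0) -> BlockLocation:
    """Map a file byte offset to a disk, assuming plain round-robin striping."""
    if byte_offset < 0 or block_size <= 0 or num_disks <= 0:
        raise ValueError("offset must be >= 0; block_size and num_disks must be > 0")
    block_index, offset_in_block = divmod(byte_offset, block_size)
    disk = (first_disk + block_index) % num_disks
    return BlockLocation(block_index, disk, offset_in_block)


def disks_touched(start: int, length: int, block_size: int, num_disks: int, first_disk: int = 0) -> set[int]:
    """Disks that one contiguous request of `length` bytes would hit in parallel."""
    if length <= 0:
        return set()
    first = locate(start, block_size, num_disks, first_disk).block_index
    last = locate(start + length - 1, block_size, num_disks, first_disk).block_index
    return {(first_disk + b) % num_disks for b in range(first, last + 1)}


if __name__ == "__main__":
    MiB = 2**20
    print(locate(5 * MiB + 10, MiB, 4))               # block 5 lands on disk 1
    print(sorted(disks_touched(0, 4 * MiB, MiB, 4)))  # a 4 MiB request spans all 4 disks
```

With 1 MiB blocks and four disks, the first call prints `BlockLocation(block_index=5, disk=1, offset_in_block=10)` and the second prints `[0, 1, 2, 3]`. A larger block size means fewer, bigger requests per disk, which is the trade-off behind the "large block sizes" bullet.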

Why use GPFS? (continued)

- High-performance I/O (continued):
  - GPFS recognizes typical access patterns (sequential, reverse sequential, and random) and optimizes I/O for each of them.
  - GPFS 3.1 and later allow multiple nodes to act as token managers for a single file system, which improves scalability for high-transaction workloads.
  - Scalable metadata management lets every node that accesses the file system perform file metadata operations.
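One way to picture the access-pattern bullet: keep a short history of the blocks a node has read, decide whether the stream is moving forward, backward, or jumping around, and prefetch accordingly. The Python sketch below is a toy heuristic under that assumption; the 80% threshold, the prefetch depth, and the names `classify` and `prefetch` are hypothetical and do not describe GPFS's actual detection algorithm.

```python
from __future__ import annotations

from enum import Enum


class Pattern(Enum):
    SEQUENTIAL = "sequential"
    REVERSE_SEQUENTIAL = "reverse sequential"
    RANDOM = "random"


def classify(blocks: list[int], threshold: float = 0.8) -> Pattern:
    """Classify recent block accesses by the share of +1 and -1 steps between them."""
    steps = [b - a for a, b in zip(blocks, blocks[1:])]
    if not steps:
        return Pattern.RANDOM  # too little history to detect a pattern
    forward = sum(1 for s in steps if s == 1) / len(steps)
    backward = sum(1 for s in steps if s == -1) / len(steps)
    if forward >= threshold:
        return Pattern.SEQUENTIAL
    if backward >= threshold:
        return Pattern.REVERSE_SEQUENTIAL
    return Pattern.RANDOM


def prefetch(pattern: Pattern, last_block: int, depth: int = 4) -> list[int]:
    """Blocks worth reading ahead (or behind) for the detected pattern."""
    if pattern is Pattern.SEQUENTIAL:
        return [last_block + i for i in range(1, depth + 1)]
    if pattern is Pattern.REVERSE_SEQUENTIAL:
        return [last_block - i for i in range(1, depth + 1) if last_block - i >= 0]
    return []  # random access: prefetching would mostly waste bandwidth


if __name__ == "__main__":
    history = [10, 11, 12, 13, 14]
    p = classify(history)
    print(p.value, prefetch(p, history[-1]))
```

For the history shown it prints `sequential [15, 16, 17, 18]`. Returning no prefetch for random access reflects the usual reasoning that read-ahead only pays off when the next request is predictable.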

More features

- GPFS commands can perform a file system function across the entire cluster, and most can be issued from any node in the cluster.
- Support for the Data Management API (DMAPI). This interface lets vendors of storage management applications such as IBM Tivoli® Storage Manager (TSM) provide Hierarchical Storage Management (HSM) for GPFS.
- Quotas let the administrator control and monitor file system usage by users and groups across the cluster. GPFS provides user, group, and fileset quotas on both inode and data block usage.
- A snapshot of an entire GPFS file system can be created to preserve its contents at a single point in time.
- On AIX 5L, GPFS supports NFS V4 access control lists (ACLs) in addition to traditional ACLs.
- GPFS data can be exported to clients outside the cluster through NFS or Samba.
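The quota bullet can be modeled as usage counters keyed by scope (user, group, or fileset), owner, and resource (inodes or data blocks). The Python sketch below is a hypothetical, simplified model: the soft/hard limit split follows common UNIX quota practice, grace periods are left out, and none of the names (`QuotaTable`, `charge`, `QuotaExceeded`) correspond to GPFS commands or data structures.

```python
from __future__ import annotations

from dataclasses import dataclass, field

Key = tuple[str, str, str]  # (scope, owner, resource), e.g. ("user", "alice", "blocks")


class QuotaExceeded(Exception):
    """Raised when an allocation would push some scope past its hard limit."""


@dataclass
class Limits:
    soft: int  # exceeding this only produces a notice (simplified: no grace period)
    hard: int  # allocations that would exceed this are refused


@dataclass
class QuotaTable:
    limits: dict[Key, Limits] = field(default_factory=dict)
    usage: dict[Key, int] = field(default_factory=dict)

    def set_limit(self, scope: str, owner: str, resource: str, soft: int, hard: int) -> None:
        self.limits[(scope, owner, resource)] = Limits(soft, hard)

    def charge(self, owners: dict[str, str], resource: str, amount: int) -> list[str]:
        """Charge `amount` inodes or blocks to every scope that owns the file.

        `owners` maps scope to owner, e.g. {"user": "alice", "group": "hpc"}.
        All hard limits are checked before any usage changes, so a refused
        request charges nothing.
        """
        keys = [(scope, owner, resource) for scope, owner in owners.items()]
        for key in keys:
            lim = self.limits.get(key)
            if lim is not None and self.usage.get(key, 0) + amount > lim.hard:
                raise QuotaExceeded(f"{key[0]} {key[1]!r} would exceed its hard {resource} limit")
        notices = []
        for key in keys:
            self.usage[key] = self.usage.get(key, 0) + amount
            lim = self.limits.get(key)
            if lim is not None and self.usage[key] > lim.soft:
                notices.append(f"{key[0]} {key[1]!r} is over its soft {resource} limit")
        return notices


if __name__ == "__main__":
    q = QuotaTable()
    q.set_limit("user", "alice", "blocks", soft=100, hard=150)
    owners = {"user": "alice", "group": "hpc"}
    print(q.charge(owners, "blocks", 90))  # no notices yet
    print(q.charge(owners, "blocks", 20))  # one soft-limit notice for alice
    try:
        q.charge(owners, "blocks", 50)     # 110 + 50 > 150: refused
    except QuotaExceeded as err:
        print("refused:", err)
```

Checking every applicable limit before updating any counter keeps the user, group, and fileset totals consistent with each other when a request is refused.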

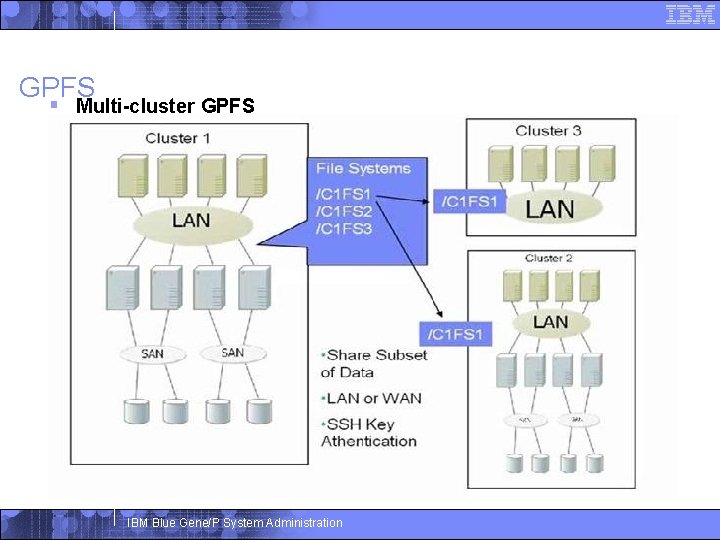

Even more features

- Data and metadata replication keeps data accessible even when cluster members or storage subsystems fail.
- Recovery actions run automatically when failures are detected. Extensive logging and recovery capabilities maintain metadata consistency when application nodes that hold locks or perform services fail.

GPFS cluster configurations

- Three types:
  - Shared disk
  - Network-based block I/O
  - Multi-cluster GPFS
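The replication bullet under "Even more features" is what keeps data readable through a disk or storage failure. The Python sketch below is a toy model, assuming two copies per block placed in different failure groups (the GPFS term for disks that share a single point of failure, such as one controller; the term is not introduced on these slides). Reads fall back to any surviving copy. `Disk`, `write_replicated`, and `read_replicated` are hypothetical names, and the first-fit placement is a simplification.

```python
from __future__ import annotations

from dataclasses import dataclass, field


class NoReplicaAvailable(Exception):
    """Raised when every copy of a block sits on a failed disk."""


@dataclass
class Disk:
    name: str
    failure_group: int  # disks sharing a point of failure get the same number
    up: bool = True
    blocks: dict[int, bytes] = field(default_factory=dict)


def write_replicated(disks: list[Disk], block_no: int, data: bytes, copies: int = 2) -> list[str]:
    """Store `copies` replicas of a block, each on a disk in a different failure group."""
    chosen: list[Disk] = []
    used_groups: set[int] = set()
    for disk in disks:
        if disk.up and disk.failure_group not in used_groups:
            chosen.append(disk)
            used_groups.add(disk.failure_group)
            if len(chosen) == copies:
                break
    if len(chosen) < copies:
        raise RuntimeError("not enough independent failure groups are available")
    for disk in chosen:
        disk.blocks[block_no] = data
    return [disk.name for disk in chosen]


def read_replicated(disks: list[Disk], block_no: int) -> bytes:
    """Return the block from the first live disk that holds a replica."""
    for disk in disks:
        if disk.up and block_no in disk.blocks:
            return disk.blocks[block_no]
    raise NoReplicaAvailable(f"block {block_no}: no replica on a live disk")


if __name__ == "__main__":
    disks = [Disk("d0", failure_group=1), Disk("d1", failure_group=1), Disk("d2", failure_group=2)]
    print(write_replicated(disks, 7, b"payload"))  # ['d0', 'd2']: never two copies in group 1
    disks[0].up = False                            # lose a disk in failure group 1
    print(read_replicated(disks, 7))               # still readable from d2
```

Placing copies in different failure groups is what makes the replication useful: two copies behind the same controller would both disappear in a single failure.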

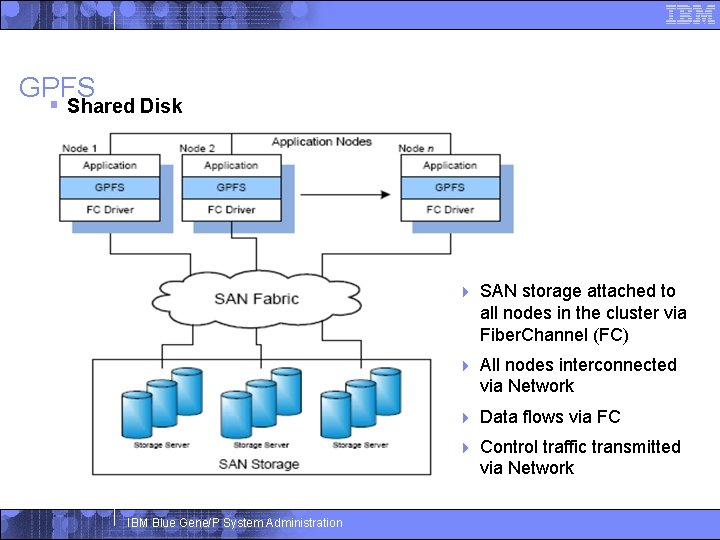

Shared disk

- SAN storage attached to all nodes in the cluster via Fibre Channel (FC)
- All nodes interconnected over a network
- Data flows over FC
- Control traffic travels over the network

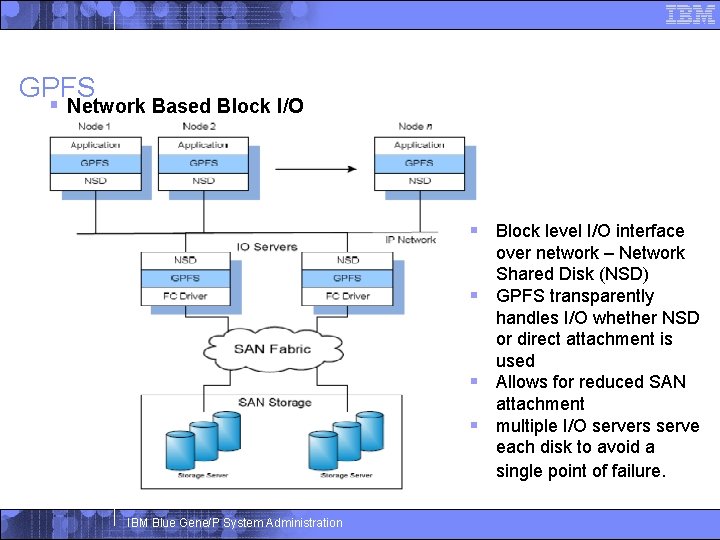

Network-based block I/O

- A block-level I/O interface over the network: Network Shared Disk (NSD)
- GPFS handles I/O transparently, whether a disk is reached through NSD or by direct attachment
- Reduces the amount of SAN attachment needed
- Multiple I/O servers serve each disk, avoiding a single point of failure
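The "transparent I/O" and "multiple I/O servers per disk" bullets together amount to a routing decision for every disk. The Python sketch below assumes a node uses its direct attachment when it has one and otherwise sends the request to the first live server in that disk's NSD server list. The names `choose_path`, `LOCAL`, `nsd-srv1`, and `nsd-srv2` are hypothetical, and real GPFS server selection may take more into account than simple availability.

```python
from __future__ import annotations

from collections.abc import Callable, Sequence

LOCAL = "local"  # marker meaning "use the direct (SAN) attachment"


class NoPathToDisk(Exception):
    """Raised when a disk is neither attached locally nor reachable through any NSD server."""


def choose_path(servers: Sequence[str], is_up: Callable[[str], bool], locally_attached: bool = False) -> str:
    """Pick how this node reaches one NSD.

    Assumptions: a direct attachment is preferred when present, and the NSD's
    servers are tried in list order, so the second server only takes over
    when the first one is down.
    """
    if locally_attached:
        return LOCAL
    for server in servers:
        if is_up(server):
            return server
    raise NoPathToDisk("no listed NSD server is reachable")


if __name__ == "__main__":
    servers = ["nsd-srv1", "nsd-srv2"]
    down = {"nsd-srv1"}
    print(choose_path(servers, lambda s: s not in down))  # nsd-srv2 takes over
```

Because the caller only receives a path, the application issuing the read or write never needs to know whether the block came over the SAN or through an NSD server.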

Multi-cluster GPFS

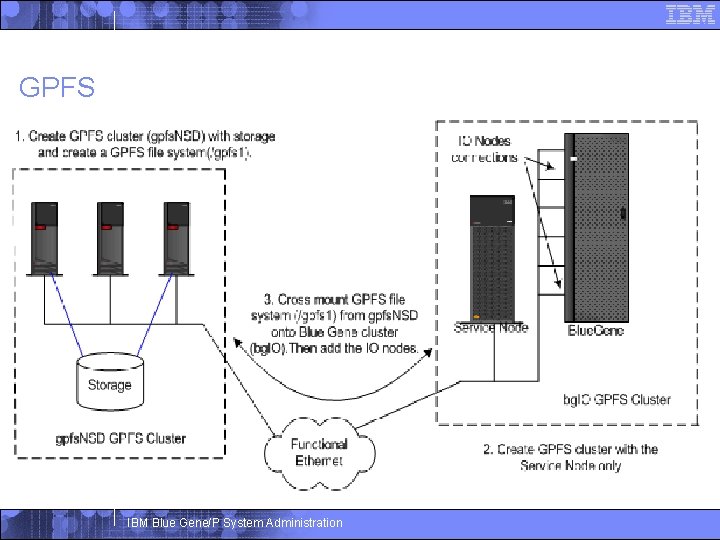

