
Human-Robot Teams. Chris Atkeson, CMU. With input from Florian Jentsch, UCF, and Jean Oh, CMU (Robotics Collaborative Technology Alliance, RCTA), and Katia Sycara, CMU.

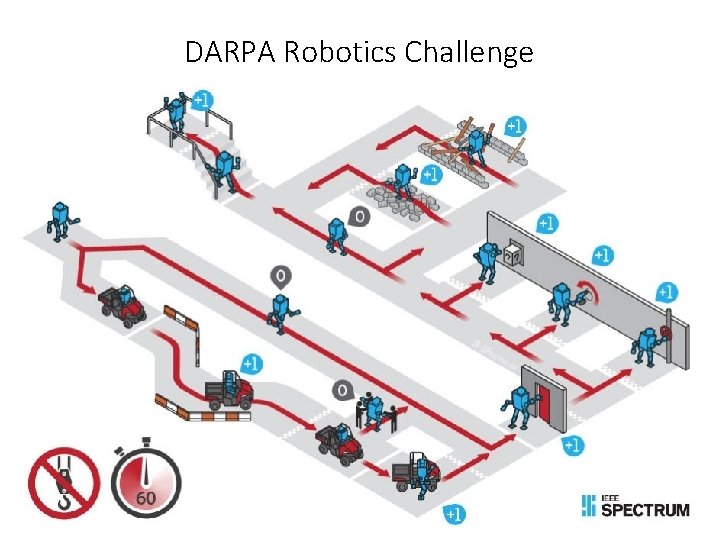

DARPA Robotics Challenge

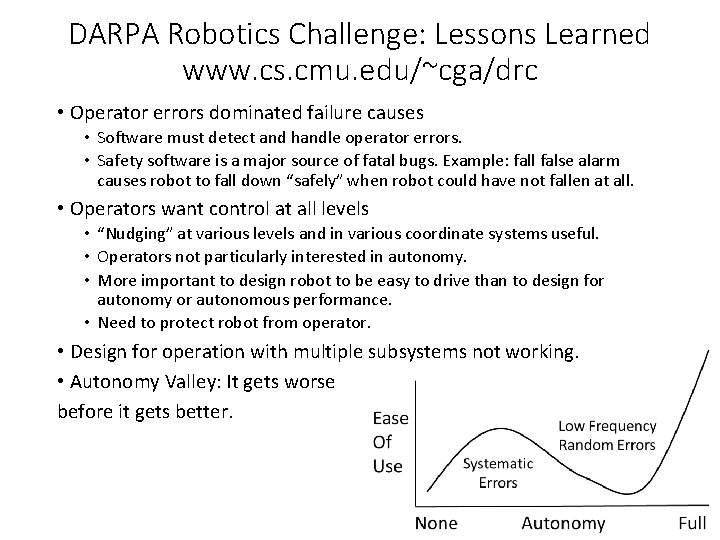

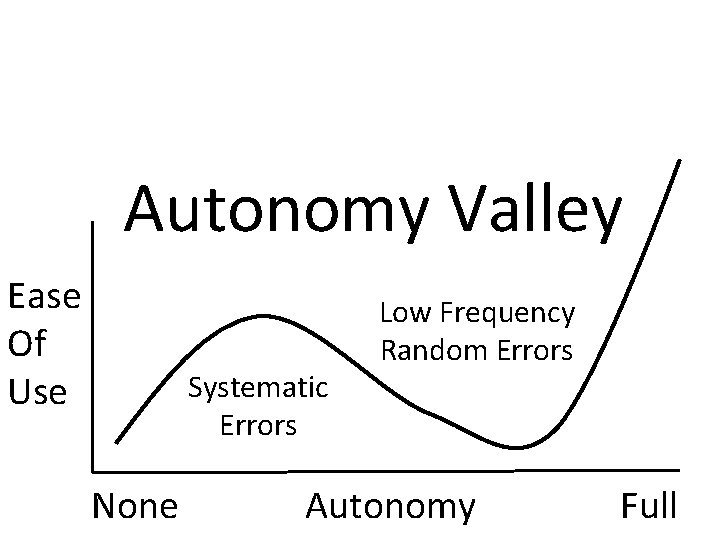

DARPA Robotics Challenge: Lessons Learned (www.cs.cmu.edu/~cga/drc)

- Operator errors dominated failure causes.
- Software must detect and handle operator errors.
- Safety software is a major source of fatal bugs. Example: a false fall alarm causes the robot to fall down “safely” when it would not have fallen at all.
- Operators want control at all levels.
- “Nudging” at various levels and in various coordinate systems is useful.
- Operators are not particularly interested in autonomy.
- It is more important to design the robot to be easy to drive than to design for autonomy or autonomous performance.
- The robot needs to be protected from the operator.
- Design for operation with multiple subsystems not working.
- Autonomy Valley: it gets worse before it gets better.
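The lessons about nudging in several coordinate systems and protecting the robot from the operator can be illustrated with a small command guard. This is a minimal sketch under stated assumptions, not the DRC team software: the robot pose is planar (x, y, heading), a nudge is given in either the robot frame or the world frame, and the per-nudge step limit `MAX_STEP` is a hypothetical value.

```python
import math
from dataclasses import dataclass

# Hypothetical safety limit: the largest translation (meters) one nudge may command.
MAX_STEP = 0.05


@dataclass
class Pose2D:
    x: float
    y: float
    heading: float  # radians, counterclockwise from the world x-axis


def nudge(pose: Pose2D, dx: float, dy: float, frame: str = "robot") -> Pose2D:
    """Return a new target pose after applying an operator nudge.

    The offset (dx, dy) is interpreted in the requested frame, rotated into
    the world frame if needed, and clamped so that a single operator input
    cannot command a large, possibly mistaken, jump.
    """
    if frame == "robot":
        # Rotate the robot-frame offset into the world frame.
        c, s = math.cos(pose.heading), math.sin(pose.heading)
        wx, wy = c * dx - s * dy, s * dx + c * dy
    elif frame == "world":
        wx, wy = dx, dy
    else:
        # Reject unknown frames instead of guessing what the operator meant.
        raise ValueError(f"unknown frame: {frame!r}")

    # Clamp the step length while preserving its direction.
    length = math.hypot(wx, wy)
    if length > MAX_STEP:
        scale = MAX_STEP / length
        wx, wy = wx * scale, wy * scale
    return Pose2D(pose.x + wx, pose.y + wy, pose.heading)
```

For a robot facing world +y, a 1 cm "forward" nudge in the robot frame moves the target 1 cm along world +y, while a mistyped 1 m nudge is silently reduced to `MAX_STEP`. Whether to clamp or reject oversized inputs is a design choice; clamping keeps the operator in control at the cost of hiding the error.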

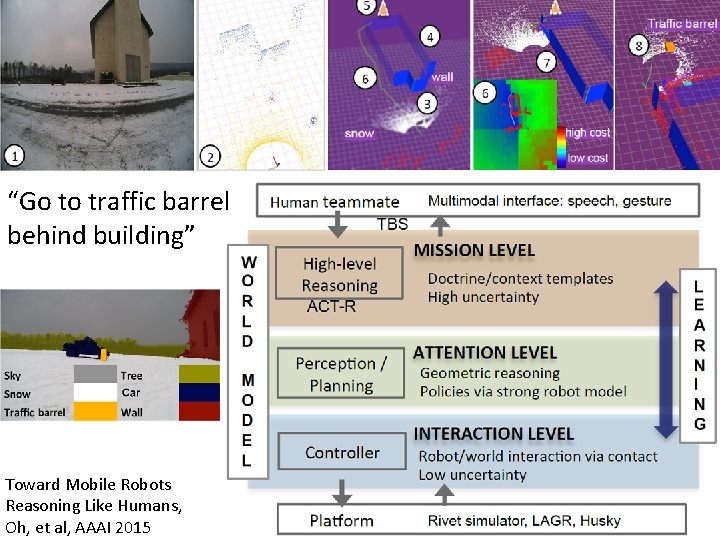

“Go to traffic barrel behind building” (Toward Mobile Robots Reasoning Like Humans, Oh et al., AAAI 2015)

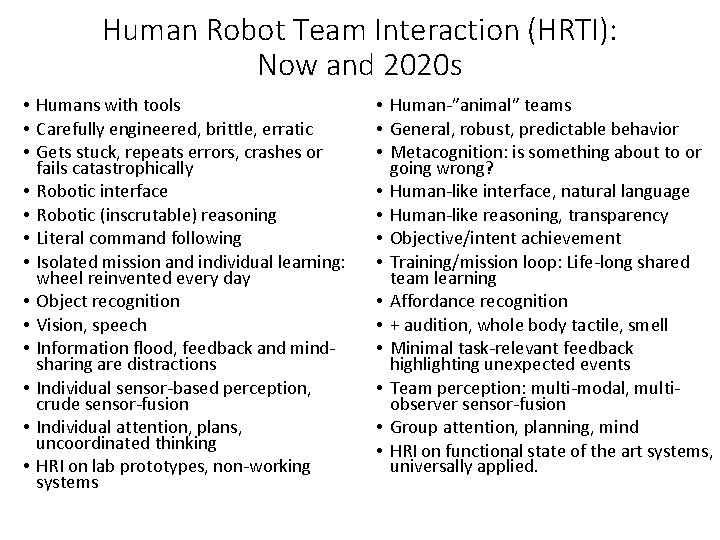

Human-Robot Team Interaction (HRTI): Now and 2020s

| Now | 2020s |
|---|---|
| Humans with tools | Human-“animal” teams |
| Carefully engineered, brittle, erratic | General, robust, predictable behavior |
| Gets stuck, repeats errors, crashes or fails catastrophically | Metacognition: is something going wrong, or about to? |
| Robotic interface | Human-like interface, natural language |
| Robotic (inscrutable) reasoning | Human-like reasoning, transparency |
| Literal command following | Objective/intent achievement |
| Isolated missions and individual learning: the wheel is reinvented every day | Training/mission loop: lifelong shared team learning |
| Object recognition | Affordance recognition |
| Vision, speech | + audition, whole-body tactile, smell |
| Information flood; feedback and mindsharing are distractions | Minimal task-relevant feedback highlighting unexpected events |
| Individual sensor-based perception, crude sensor fusion | Team perception: multi-modal, multi-observer sensor fusion |
| Individual attention, plans, uncoordinated thinking | Group attention, planning, mind |
| HRI on lab prototypes, non-working systems | HRI on functional state-of-the-art systems, universally applied |
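The 2020s goal of team perception through multi-observer sensor fusion can start from a standard building block: inverse-variance weighting of independent, unbiased Gaussian estimates of the same quantity. This is a sketch of that textbook technique, not the RCTA perception system; the observers and numbers are hypothetical.

```python
from typing import List, Tuple


def fuse(estimates: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse independent estimates given as (mean, variance) pairs.

    Each estimate is weighted by the inverse of its variance, so more
    certain observers count for more. The fused variance is never larger
    than the smallest input variance.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    mean = sum(w * m for w, (m, _) in zip(weights, estimates)) / total
    return mean, 1.0 / total


# Hypothetical range-to-target estimates (meters, meters^2) from one robot's
# lidar, a second robot's camera, and a soldier's laser rangefinder.
readings = [(10.2, 0.04), (9.8, 0.25), (10.0, 0.01)]
fused_mean, fused_var = fuse(readings)
```

The independence assumption matters: if two observers share the same underlying sensor data, this rule double-counts it and reports more confidence than is warranted.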

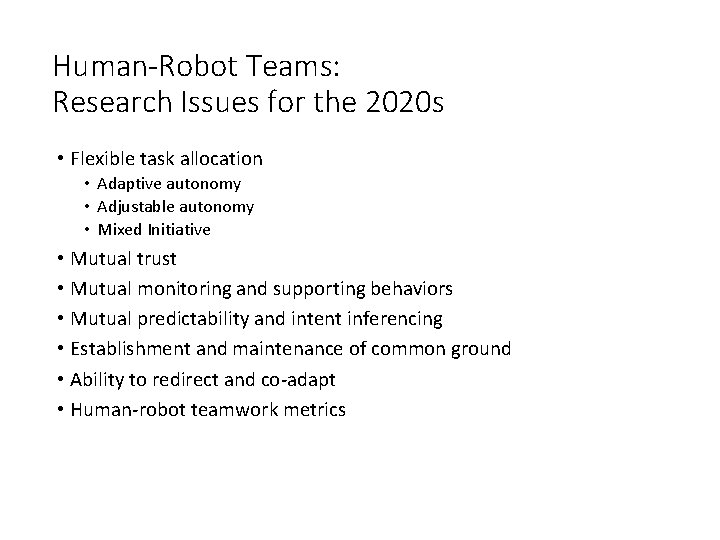

Human-Robot Teams: Research Issues for the 2020s

- Flexible task allocation
- Adaptive autonomy
- Adjustable autonomy
- Mixed initiative
- Mutual trust
- Mutual monitoring and supporting behaviors
- Mutual predictability and intent inferencing
- Establishment and maintenance of common ground
- Ability to redirect and co-adapt
- Human-robot teamwork metrics

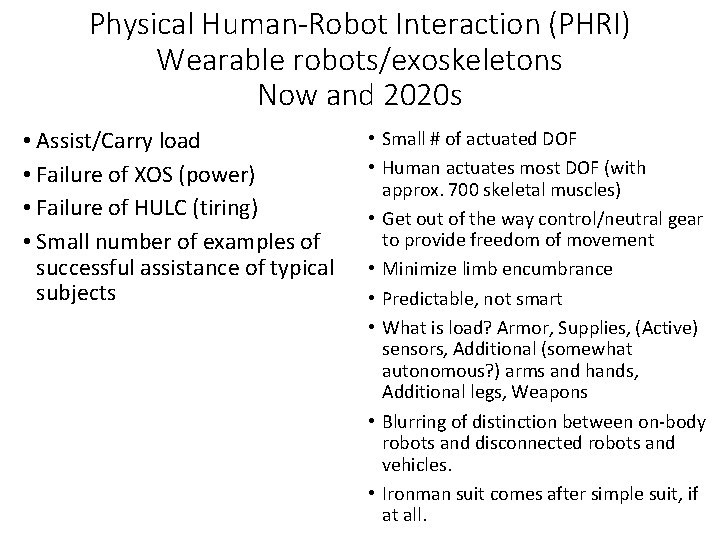

HULC XOS 3

Physical Human-Robot Interaction (PHRI): Wearable robots/exoskeletons, Now and 2020s

- Assist/carry load
- Failure of XOS (power)
- Failure of HULC (tiring)
- Small number of examples of successful assistance of typical subjects
- Small number of actuated degrees of freedom (DOF)
- Human actuates most DOF (with approx. 700 skeletal muscles)
- “Get out of the way” control/neutral gear to provide freedom of movement
- Minimize limb encumbrance
- Predictable, not smart
- What is load? Armor, supplies, (active) sensors, additional (somewhat autonomous?) arms and hands, additional legs, weapons
- Blurring of the distinction between on-body robots and disconnected robots and vehicles
- Ironman suit comes after simple suit, if at all.
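“Get out of the way” control, or neutral gear, can be sketched for a single exoskeleton joint. The motor supplies only the torque needed to cancel the device's own link weight and joint friction, so that, ideally, the wearer feels neither help nor resistance. This is a minimal sketch, assuming a rigid single link, a known mass and center-of-mass distance, and a simple viscous-plus-Coulomb friction model. All parameter names and values are hypothetical.

```python
import math
from dataclasses import dataclass

G = 9.81  # gravitational acceleration, m/s^2


@dataclass
class LinkParams:
    mass: float      # kg, mass of the exoskeleton link
    com_dist: float  # m, distance from the joint axis to the link's center of mass
    viscous: float   # N*m*s/rad, viscous friction coefficient
    coulomb: float   # N*m, Coulomb (dry) friction magnitude


def neutral_torque(p: LinkParams, angle: float, velocity: float) -> float:
    """Motor torque that cancels the link's own gravity load and joint friction.

    `angle` is measured from the downward vertical (0 = hanging straight down),
    so gravity pulls the link back toward 0 with torque m*g*d*sin(angle);
    the motor supplies that amount in the opposite direction.
    No estimate of user intent is involved, so the behavior stays simple and predictable.
    """
    gravity_comp = p.mass * G * p.com_dist * math.sin(angle)
    friction_comp = 0.0
    if velocity != 0.0:
        # Friction opposes motion, so compensation acts along the direction of motion.
        friction_comp = p.viscous * velocity + p.coulomb * math.copysign(1.0, velocity)
    return gravity_comp + friction_comp
```

A real controller would smooth the sign term near zero velocity to avoid chattering. The point of the sketch is that neutral gear is a fixed, model-based mapping the wearer can learn, not an attempt to guess what the wearer wants.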

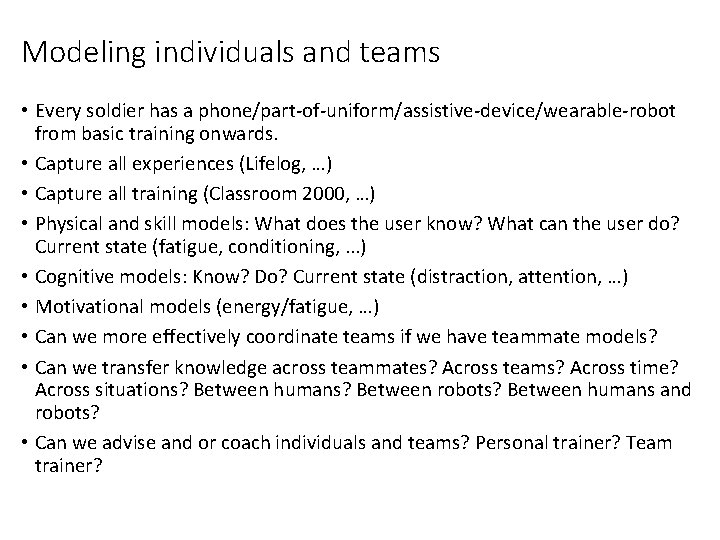

Modeling individuals and teams

- Every soldier has a phone/part-of-uniform/assistive device/wearable robot from basic training onwards.
- Capture all experiences (Lifelog, …)
- Capture all training (Classroom 2000, …)
- Physical and skill models: What does the user know? What can the user do? Current state (fatigue, conditioning, …)
- Cognitive models: Know? Do? Current state (distraction, attention, …)
- Motivational models (energy/fatigue, …)
- Can we coordinate teams more effectively if we have teammate models?
- Can we transfer knowledge across teammates? Across teams? Across time? Across situations? Between humans? Between robots? Between humans and robots?
- Can we advise and/or coach individuals and teams? Personal trainer? Team trainer?

Smart/Natural/Trustworthy or Simple/Predictable/Symbiotic

- Physical Human-Robot Interaction:
  - There is no existing model for how a “natural” exoskeleton should work. The field is dominated by the dream of an “invisible” suit that gives us superpowers but otherwise does not restrict us in any way (Ironman). We can’t build that suit with existing technology.
  - An alternative design goal is a limited exoskeleton that behaves predictably on short time scales and adapts to the user over longer time scales (“symbiotic”, such as an intelligent/adaptive bicycle, skateboard, windsurfer, …). The human operator learns to provide rich behavior on top of the underlying simple and predictable system.
- This debate applies to informational interaction: natural interface vs. symbiotic. Maybe a simple game-like interface is better in some cases?
- This debate applies to how smart a robot should be. Maybe stupid is better in some cases?
- This debate applies to how trustworthy a robot should be. Maybe a robot is trustworthy because its behavior is simple and predictable, not because it autonomously does a complex job well.
- Animal model: should the goal be doglike behavior?
- Should natural, smart, and trustworthy be the goals for the 2020s, or are symbiotic, simple, and predictable more realistic goals for the 2020s?
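The “symbiotic” design goal separates time scales: within a session the device's mapping is fixed and predictable, and only between sessions does it drift toward the user. A minimal sketch, assuming a single assist gain and a hypothetical per-step signal of the gain the user seemed to prefer; the slow update is a plain exponential moving average with a small learning rate.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SymbioticAssist:
    gain: float          # assist gain used unchanged for every step in a session
    rate: float = 0.1    # small learning rate: slow, long-time-scale adaptation
    min_gain: float = 0.0
    max_gain: float = 1.0

    def assist(self, user_effort: float) -> float:
        """Short time scale: a fixed, predictable mapping from effort to assistance."""
        return self.gain * user_effort

    def end_session(self, preferred_gains: List[float]) -> None:
        """Long time scale: move the gain a small step toward what the user preferred.

        `preferred_gains` is a hypothetical per-step signal; only its mean is
        used, and the updated gain is clamped to a safe range.
        """
        if not preferred_gains:
            return
        target = sum(preferred_gains) / len(preferred_gains)
        self.gain += self.rate * (target - self.gain)
        self.gain = min(self.max_gain, max(self.min_gain, self.gain))
```

Because `assist()` never changes during a session, the wearer can learn it the way one learns a bicycle; adaptation happens only at `end_session()`, and slowly enough that the user can follow it.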

One size does not fit all

- What are the tasks?
- What are the environmental characteristics?
- What robot abilities are needed?
- What human abilities (physical, cognitive, and attentional) are needed?

Research Questions for Multi-Robot HRI

- How can people control multiple robot teams of increasing size?
- What density of robots can a human (or humans) control?
- What kinds of command are possible for a particular density?

Key constraint: human attention is limited; attention is the budget.

- How many “things” can a human manage?
  - Robots
  - Tasks
  - Other people
  - Sources of information

Many forms of interaction are needed. Robots:

- must be individually controllable
- must function autonomously for long periods
- must be commandable as cooperating teams
- must adapt to the absence of human attention
- must incorporate humans in autonomous plans

Key idea: look at HRI from the viewpoint of the operator’s cognitive complexity of command. This framework allows systematic study of human control of multi-robot systems.

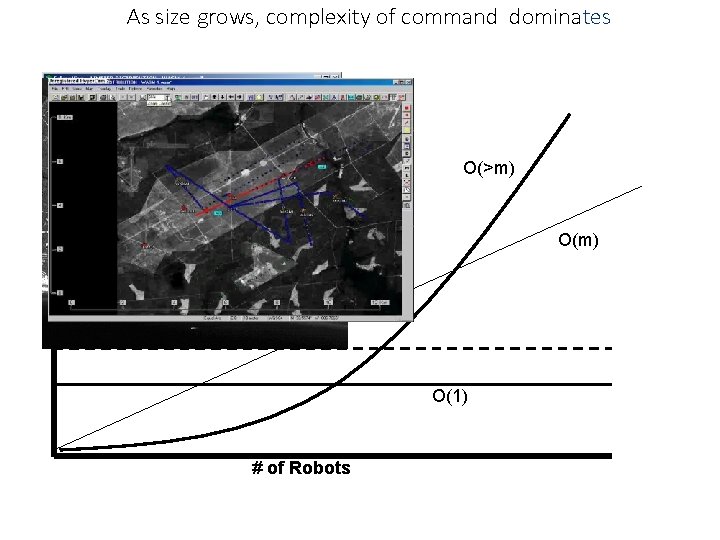

As size grows, complexity of command dominates.

(Figure: command complexity classes O(1), O(m), and O(>m) against # of robots, with the cognitive limit marked.)
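The claim that complexity of command dominates as team size grows can be made concrete with a toy calculation: fix an operator's attention budget, give each command-complexity class a cost as a function of team size m, and find the largest team that stays within the budget. The budget value, the unit costs, and the use of m² to stand in for O(>m) are assumptions chosen for illustration only.

```python
from typing import Callable, Dict

# Hypothetical attention cost of commanding a team of m robots, per complexity class.
COST_MODELS: Dict[str, Callable[[int], float]] = {
    "O(1)": lambda m: 1.0,            # e.g. one command addresses a whole swarm
    "O(m)": lambda m: float(m),       # one unit of attention per independent robot
    "O(>m)": lambda m: float(m * m),  # m^2 stands in for coordination overhead
}


def max_team_size(cost: Callable[[int], float], budget: float, limit: int = 10_000) -> int:
    """Largest m (capped at `limit`) whose command cost fits within the budget.

    Assumes cost is non-decreasing in m, so the search stops at the first
    team size that exceeds the budget.
    """
    m = 0
    while m < limit and cost(m + 1) <= budget:
        m += 1
    return m


BUDGET = 12.0  # hypothetical cognitive limit, in arbitrary attention units
sizes = {name: max_team_size(f, BUDGET) for name, f in COST_MODELS.items()}
```

With these numbers the O(m) class tops out at 12 robots and the O(>m) class at 3, while the O(1) class only stops at the search cap, because its command cost does not grow with team size.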

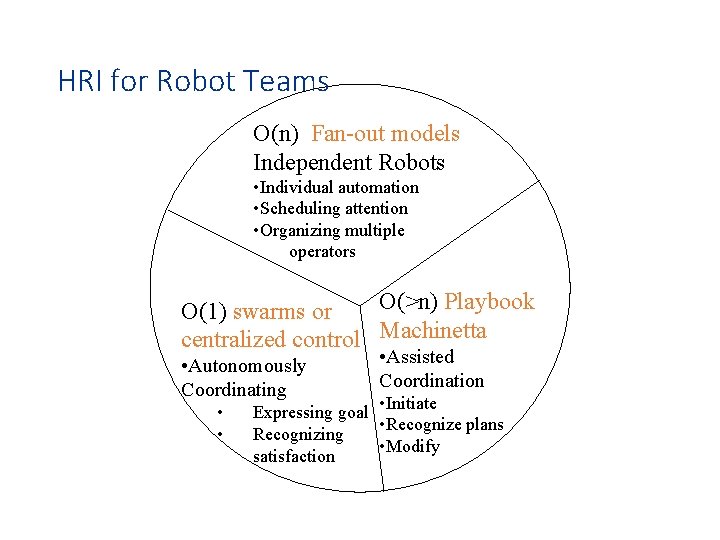

HRI for Robot Teams

- O(n): fan-out models; independent robots
  - Individual automation
  - Scheduling attention
  - Organizing multiple operators
- O(>n): Playbook; Machinetta
  - Autonomously coordinating
  - Assisted coordination: initiate, recognize, and modify plans; expressing goals and recognizing satisfaction
- O(1): swarms or centralized control
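Fan-out models estimate how many independent robots one operator can keep productive. A common formulation, assumed here rather than taken from the slide, is FO = (NT + IT) / IT, where neglect time NT is how long a robot performs acceptably without attention and interaction time IT is how long the operator needs to service it. The times below are hypothetical.

```python
import math


def fan_out(neglect_time: float, interaction_time: float) -> float:
    """Estimated number of robots one operator can service: (NT + IT) / IT."""
    if interaction_time <= 0:
        raise ValueError("interaction time must be positive")
    return (neglect_time + interaction_time) / interaction_time


def robots_supported(neglect_time: float, interaction_time: float) -> int:
    """Whole robots an operator can keep serviced, rounding the estimate down."""
    return math.floor(fan_out(neglect_time, interaction_time))


# Hypothetical robot: runs 60 s unattended, then needs 15 s of operator time.
fo = fan_out(60.0, 15.0)
```

Better individual automation raises NT and better interfaces lower IT; either raises fan-out, but the model still charges attention per robot, which is why independent-robot control is O(n) in command complexity.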

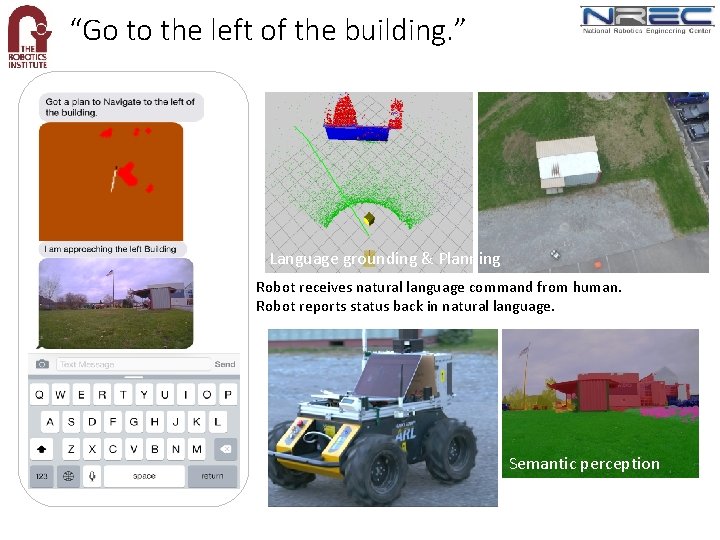

“Go to the left of the building.”

- Robot receives a natural language command from the human.
- Language grounding & planning
- Semantic perception
- Robot reports status back in natural language.
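Grounding a command such as “Go to the left of the building.” means turning a spatial phrase into a goal the planner can use. A minimal sketch, assuming a 2D map, a building reduced by semantic perception to a center point and radius, and “left” interpreted from the robot's current viewpoint. The function name and the `clearance` parameter are hypothetical, and this is not the grounding model from the cited work.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def left_of(robot: Point, landmark: Point, radius: float, clearance: float = 2.0) -> Point:
    """Goal point to the left of a landmark, as seen from the robot.

    "Left" is the robot-to-landmark direction rotated 90 degrees
    counterclockwise. The goal sits `radius + clearance` from the landmark
    center in that direction, so it clears the building's footprint.
    """
    vx, vy = landmark[0] - robot[0], landmark[1] - robot[1]
    dist = math.hypot(vx, vy)
    if dist == 0.0:
        raise ValueError("robot is at the landmark; 'left' is undefined")
    # Unit vector pointing left of the line of sight (counterclockwise rotation).
    lx, ly = -vy / dist, vx / dist
    offset = radius + clearance
    return landmark[0] + lx * offset, landmark[1] + ly * offset
```

A robot at the origin looking at a building centered 10 m ahead along +x gets a goal on the +y side of the building. An interpreter would also need to handle other frames of reference, such as the speaker's viewpoint or the building's own front, which is where dialogue and status reports back to the human come in.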

Autonomy Valley

(Figure: ease of use versus autonomy, from none to full; regions labeled “systematic errors” and “low frequency random errors”.)
