HDC-IM: Hyperdimensional Computing In-Memory Architecture based on RRAM

Jialong Liu, Mingyuan Ma, Zhenhua Zhu, Yu Wang, Huazhong Yang
Department of Electronic Engineering, BNRist, Tsinghua University, Beijing, China

Contents
• Introduction
• Encoding Method Adapted to RRAM
• HDC-IM Architecture
• Experimental Results
• Conclusion

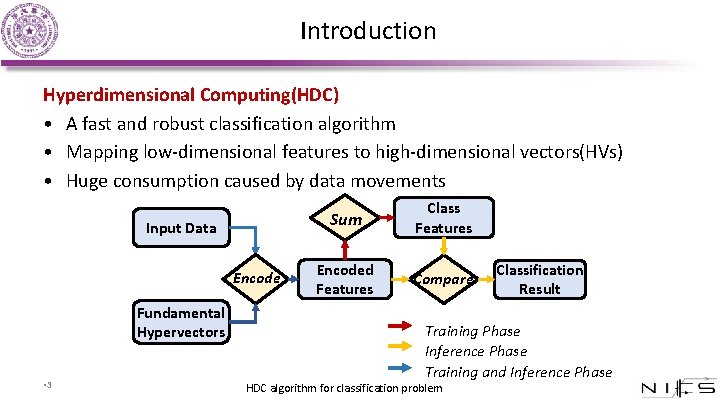

Introduction: Hyperdimensional Computing (HDC)
• A fast and robust classification algorithm
• Maps low-dimensional features to high-dimensional vectors (hypervectors, HVs)
• Data movement causes large time and energy overhead
Figure: HDC algorithm for a classification problem (blocks: Input Data, Encode, Fundamental Hypervectors, Sum, Class Features, Encoded Features, Compare, Classification Result; phases: Training, Inference)
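The Sum and Compare steps in the figure can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes bipolar (+1/-1) hypervectors that are already encoded, cosine similarity for the compare step, and synthetic data made of noisy copies of two random prototypes. The names `train`, `classify`, and `noisy` are hypothetical.

```python
import numpy as np

def train(encoded, labels, num_classes):
    """Training phase: sum the encoded hypervectors of each class."""
    class_hvs = np.zeros((num_classes, encoded.shape[1]), dtype=np.int64)
    for hv, label in zip(encoded, labels):
        class_hvs[label] += hv
    return class_hvs

def classify(hv, class_hvs):
    """Inference phase: pick the class hypervector most similar to hv."""
    norms = np.linalg.norm(class_hvs, axis=1) * np.linalg.norm(hv)
    sims = (class_hvs @ hv) / np.where(norms == 0, 1, norms)  # cosine similarity (assumed metric)
    return int(np.argmax(sims))

rng = np.random.default_rng(0)
D = 8192  # hypervector dimension used later in the deck

# Synthetic stand-in for encoded data: two random prototypes plus bit-flip noise.
prototypes = rng.choice([-1, 1], size=(2, D))

def noisy(proto, flip_prob=0.2):
    flips = rng.random(D) < flip_prob
    return np.where(flips, -proto, proto)

train_x = np.array([noisy(prototypes[c]) for c in (0, 1) for _ in range(5)])
train_y = np.array([c for c in (0, 1) for _ in range(5)])

class_hvs = train(train_x, train_y, num_classes=2)
pred = classify(noisy(prototypes[1]), class_hvs)
```

Because each class hypervector is a sum of many similar vectors, a noisy query still lands closest to its own class, which is the property the later robustness slides rely on.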

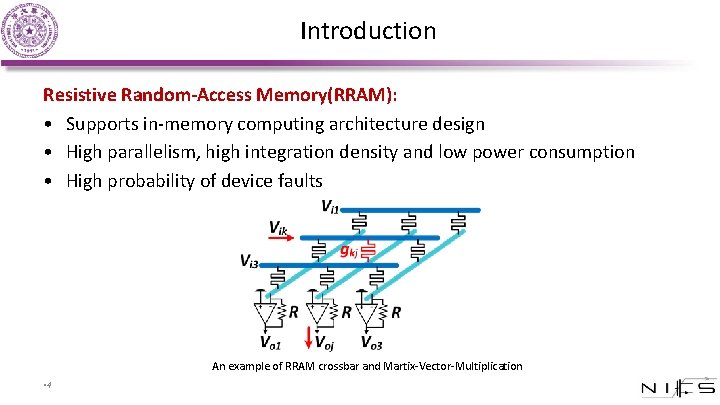

Introduction: Resistive Random-Access Memory (RRAM)
• Supports in-memory computing architecture design
• High parallelism, high integration density, and low power consumption
• High probability of device faults
Figure: An example of an RRAM crossbar and matrix-vector multiplication
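The crossbar matrix-vector multiplication named in the figure can be modeled as follows. This is an idealized sketch: it assumes ideal devices, no wire resistance, and hypothetical conductance values `G_ON` and `G_OFF` chosen only to give an on/off ratio of 100. Each column current is the sum of row voltage times cell conductance.

```python
import numpy as np

G_ON, G_OFF = 1e-4, 1e-6  # hypothetical conductances in siemens (on/off ratio 100)

def crossbar_mvm(voltages, conductances):
    """Column currents of an ideal crossbar: I_j = sum_i V_i * G_ij."""
    return voltages @ conductances

# A 4x3 crossbar storing a binary matrix as high/low conductance.
bits = np.array([[1, 0, 1],
                 [0, 1, 1],
                 [1, 1, 0],
                 [1, 0, 0]])
G = np.where(bits == 1, G_ON, G_OFF)

v = np.array([0.2, 0.0, 0.2, 0.2])  # read voltages applied to the 4 rows
currents = crossbar_mvm(v, G)       # one analog sum per column, all columns in parallel
```

All columns are evaluated in a single read, which is where the parallelism of the crossbar comes from.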

Introduction: Motivation for implementing HDC on RRAM
• Place HDC computations in or near memory to reduce data movement
• Use RRAM-based logic to run the HDC encoding operation with high parallelism
• HDC is fault-tolerant, which can compensate for RRAM device errors to some degree

Introduction: Overview of this work
• Propose HDC-IM, a hyperdimensional computing in-memory architecture based on RRAM
• Propose a new block encoding method adapted to RRAM that reduces the required random patterns and data movement
• HDC-IM provides more than 100x speedup and higher energy efficiency compared with HDC on CPU
• Experiments show that HDC-IM remains fault-tolerant when RRAM device faults are taken into account


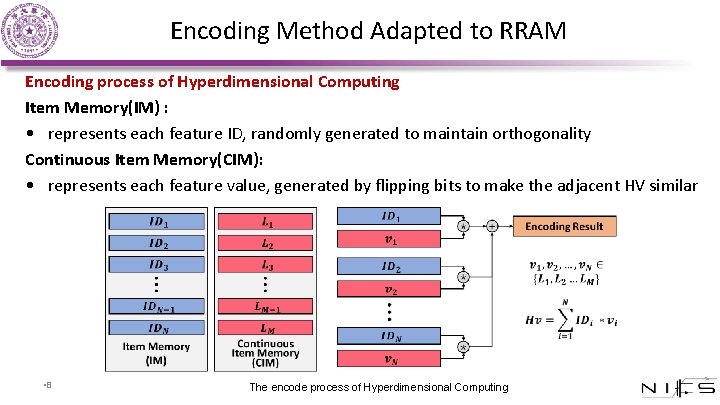

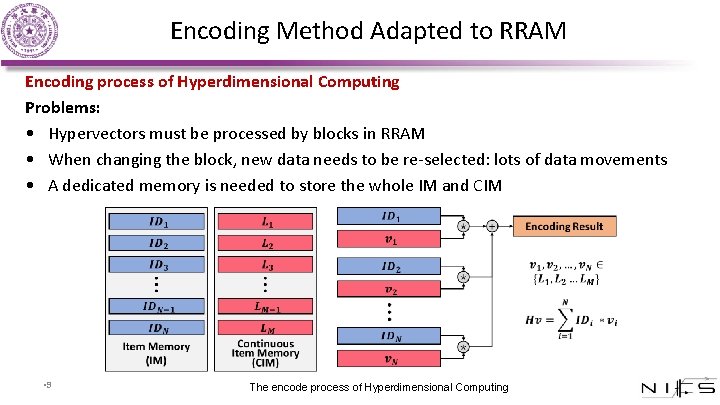

Encoding Method Adapted to RRAM: Encoding process of Hyperdimensional Computing
• Item Memory (IM): represents each feature ID; its hypervectors are randomly generated to maintain orthogonality
• Continuous Item Memory (CIM): represents each feature value; its hypervectors are generated by flipping bits so that adjacent HVs are similar
Figure: The encoding process of Hyperdimensional Computing
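A minimal sketch of the conventional IM/CIM scheme described above, using binary hypervectors. Several details are assumptions rather than anything stated in the deck: the CIM flips `D / (2(M-1))` fresh positions per level so the first and last levels end up roughly orthogonal, features are bound by XOR and bundled by a majority threshold, and the input levels are random stand-ins. The function names are hypothetical; `D = 8192` and `M = 12` follow the configuration given later in the deck, and 617 features matches the DNN input layer size.

```python
import numpy as np

def make_im(num_features, dim, rng):
    """Item Memory: one independent random binary HV per feature ID."""
    return rng.integers(0, 2, size=(num_features, dim), dtype=np.uint8)

def make_cim(num_levels, dim, rng):
    """Continuous Item Memory: each level flips a few bits of the previous one."""
    cim = np.empty((num_levels, dim), dtype=np.uint8)
    cim[0] = rng.integers(0, 2, size=dim, dtype=np.uint8)
    order = rng.permutation(dim)          # each position is flipped at most once
    step = dim // (2 * (num_levels - 1))  # bits flipped per level (assumed schedule)
    for level in range(1, num_levels):
        cim[level] = cim[level - 1]
        idx = order[(level - 1) * step : level * step]
        cim[level, idx] ^= 1
    return cim

def encode(levels, im, cim):
    """Bind each feature ID with its value level (XOR), then bundle by majority."""
    bound = im ^ cim[levels]
    counts = bound.sum(axis=0)
    return (2 * counts > len(levels)).astype(np.uint8)

rng = np.random.default_rng(0)
D, M, N = 8192, 12, 617
im = make_im(N, D, rng)
cim = make_cim(M, D, rng)
levels = rng.integers(0, M, size=N)  # quantized feature values (random stand-in)
hv = encode(levels, im, cim)
```

With this schedule, adjacent CIM levels differ in only a few hundred bits, while IM rows differ in about half of their bits, which is what "similar" and "orthogonal" mean in the bullets above.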

Encoding Method Adapted to RRAM: Problems with the original encoding process
• Hypervectors must be processed block by block in RRAM
• Each time the block changes, new data must be re-selected, which causes a lot of data movement
• A dedicated memory is needed to store the whole IM and CIM
Figure: The encoding process of Hyperdimensional Computing
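A rough back-of-the-envelope count shows the scale of these two problems. The numbers below reuse the configuration given later in the deck (D = 8192, d = 256, M = 12) and the 617 input features of the DNN baseline. Treating `d` as the block width and counting one CIM selection per feature per block are assumptions made for illustration only.

```python
D, d = 8192, 256  # hypervector dimension and (assumed) block width
N, M = 617, 12    # number of features and number of CIM levels

num_blocks = D // d                    # blocks needed to cover one hypervector
selections = N * num_blocks            # CIM slices re-selected for a single input
storage_bits = (N + M) * D             # bits to hold the whole IM and CIM
storage_kib = storage_bits / 8 / 1024  # same figure in KiB

print(num_blocks, selections, storage_kib)
```

Under these assumptions a single input needs 32 blocks and close to twenty thousand slice selections, and the full IM and CIM occupy about 629 KiB, which is the overhead the proposed block encoding aims to reduce.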

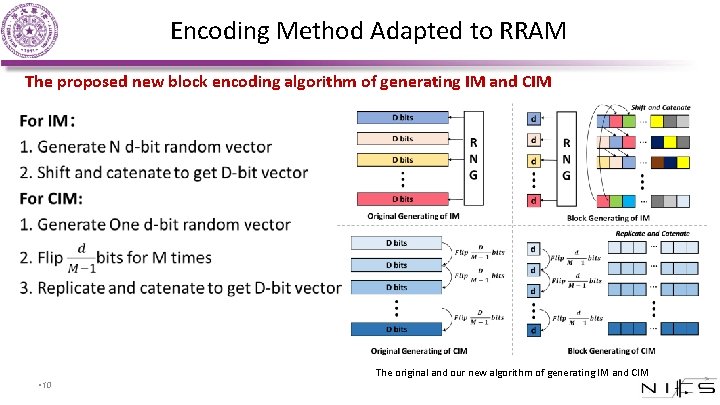

Encoding Method Adapted to RRAM: The proposed block encoding algorithm for generating IM and CIM
Figure: The original algorithm and our new algorithm for generating IM and CIM

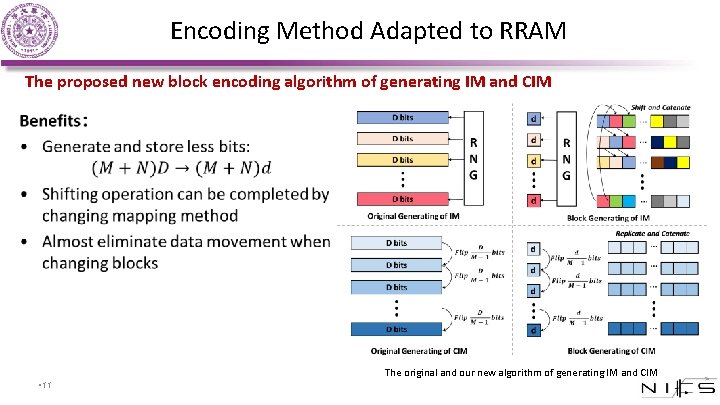

Encoding Method Adapted to RRAM: The proposed block encoding algorithm for generating IM and CIM (continued)
Figure: The original algorithm and our new algorithm for generating IM and CIM

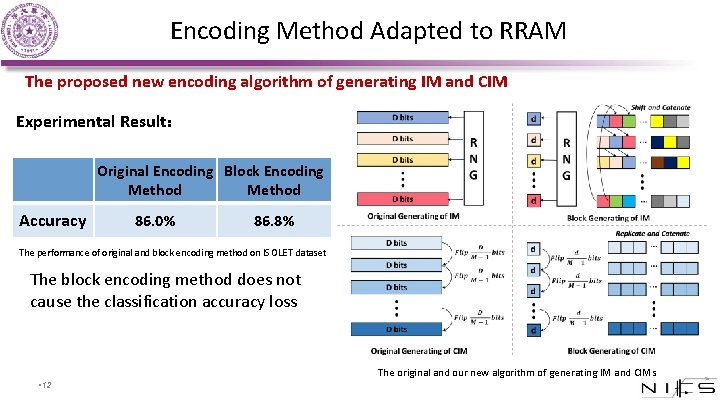

Encoding Method Adapted to RRAM: The proposed encoding algorithm for generating IM and CIM
Experimental result:

| Method | Original encoding | Block encoding |
|---|---|---|
| Accuracy | 86.0% | 86.8% |

Table: The performance of the original and block encoding methods on the ISOLET dataset
• The block encoding method does not cause a loss of classification accuracy
Figure: The original algorithm and our new algorithm for generating IM and CIM


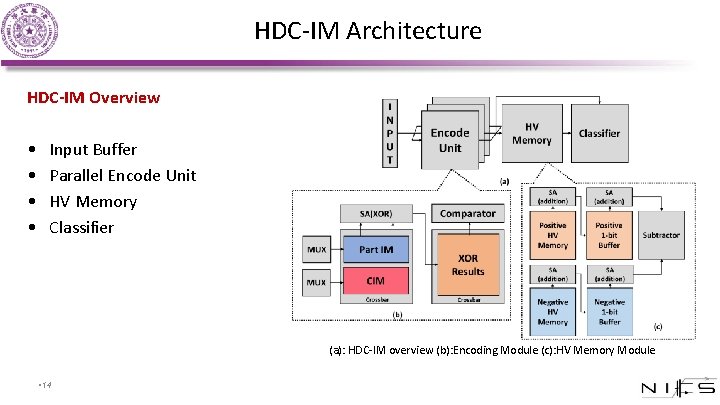

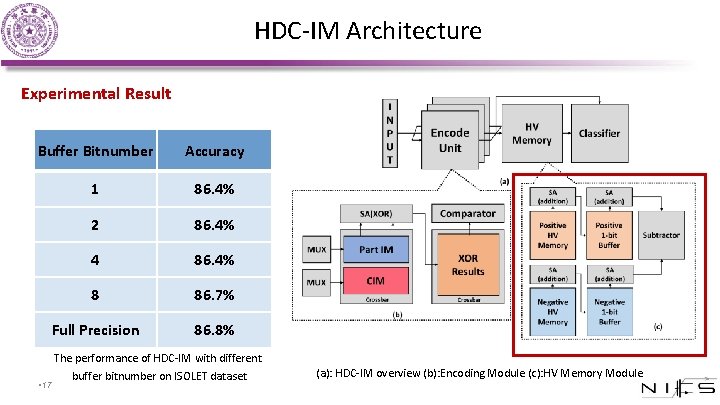

HDC-IM Architecture: HDC-IM overview
• Input Buffer
• Parallel Encode Unit
• HV Memory
• Classifier
Figure: (a) HDC-IM overview, (b) Encoding Module, (c) HV Memory Module

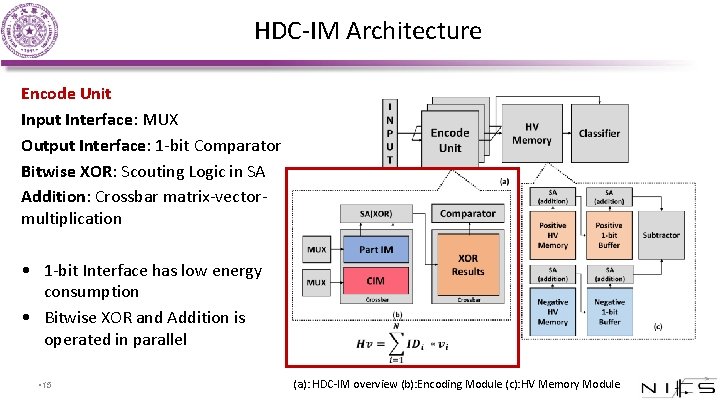

HDC-IM Architecture: Encode Unit
• Input interface: MUX
• Output interface: 1-bit comparator
• Bitwise XOR: scouting logic in the sense amplifier (SA)
• Addition: crossbar matrix-vector multiplication
• The 1-bit interface has low energy consumption
• Bitwise XOR and addition are performed in parallel
Figure: (a) HDC-IM overview, (b) Encoding Module, (c) HV Memory Module
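The dataflow of one encode unit can be mirrored in a behavioral model. This is a functional sketch only: it does not model scouting logic or analog currents, and the majority threshold at the comparator and the name `encode_unit` are assumptions. It shows the addition written as a product with an all-ones vector, which is the form a crossbar computes.

```python
import numpy as np

def encode_unit(levels, im_block, cim_block):
    """Behavioral model of one encode unit working on a single block."""
    selected = cim_block[levels]                 # input interface: MUX picks a CIM row per feature
    bound = np.bitwise_xor(im_block, selected)   # bitwise XOR (scouting logic on the slide)
    ones = np.ones(bound.shape[0], dtype=np.int64)
    sums = ones @ bound.astype(np.int64)         # addition as a matrix-vector product
    threshold = bound.shape[0] / 2               # assumed majority threshold
    return (sums > threshold).astype(np.uint8)   # output interface: 1-bit comparator

# Tiny example: 3 features, 2 value levels, block width 4.
im_block = np.array([[1, 0, 1, 0],
                     [0, 0, 1, 1],
                     [1, 1, 0, 0]], dtype=np.uint8)
cim_block = np.array([[0, 0, 0, 0],
                      [1, 1, 1, 1]], dtype=np.uint8)
levels = np.array([0, 1, 0])

block_out = encode_unit(levels, im_block, cim_block)
```

In the example the XOR results are `1010`, `1100`, and `1100`, so the column sums are 3, 2, 1, 0, and the comparator emits one bit per column.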

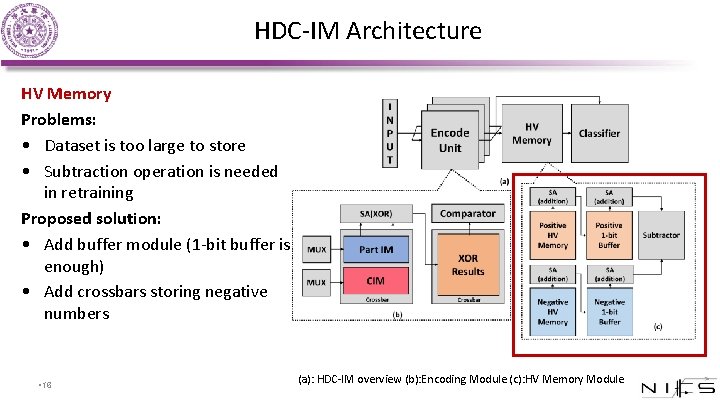

HDC-IM Architecture: HV Memory
Problems:
• The dataset is too large to store
• Retraining requires a subtraction operation
Proposed solution:
• Add a buffer module (a 1-bit buffer is enough)
• Add crossbars that store negative numbers
Figure: (a) HDC-IM overview, (b) Encoding Module, (c) HV Memory Module
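One way to see why separate crossbars for negative numbers support retraining: stored conductances cannot be negative, so a signed class hypervector can be kept as the difference of two non-negative arrays. The sketch below is an assumption-laden model, not the authors' design. The class `SignedHVMemory` is hypothetical, and the update rule (add the sample to the correct class, subtract it from the wrongly predicted class) is the retraining rule commonly used in HDC work, which the slides do not spell out.

```python
import numpy as np

class SignedHVMemory:
    """Class hypervectors stored as pos - neg, with both parts non-negative."""

    def __init__(self, num_classes, dim):
        self.pos = np.zeros((num_classes, dim), dtype=np.int64)
        self.neg = np.zeros((num_classes, dim), dtype=np.int64)

    def add(self, label, hv):
        self.pos[label] += hv

    def subtract(self, label, hv):
        self.neg[label] += hv  # subtraction becomes an addition on the negative array

    def scores(self, hv):
        return self.pos @ hv - self.neg @ hv

    def retrain_step(self, hv, label):
        pred = int(np.argmax(self.scores(hv)))
        if pred != label:      # assumed rule: update only on a misprediction
            self.add(label, hv)
            self.subtract(pred, hv)
        return pred

mem = SignedHVMemory(num_classes=2, dim=4)
sample = np.array([1, 1, 0, 0])
mem.add(0, sample)                          # class 0 was (wrongly) trained on this sample
first = mem.retrain_step(sample, label=1)   # mispredicted, so both arrays are updated
second = int(np.argmax(mem.scores(sample)))
```

Both stored arrays only ever grow, so each can be held in an ordinary crossbar, and the signed score is recovered by subtracting the two read-outs.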

HDC-IM Architecture: Experimental result

| Buffer bit number | Accuracy |
|---|---|
| 1 | 86.4% |
| 2 | 86.4% |
| 4 | 86.4% |
| 8 | 86.7% |
| Full precision | 86.8% |

Table: The performance of HDC-IM with different buffer bit numbers on the ISOLET dataset
Figure: (a) HDC-IM overview, (b) Encoding Module, (c) HV Memory Module


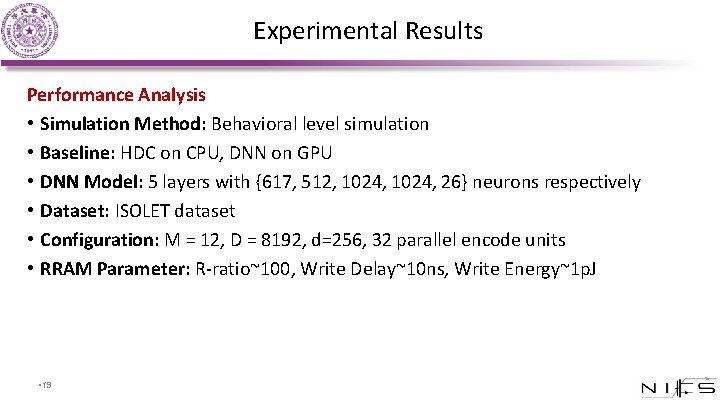

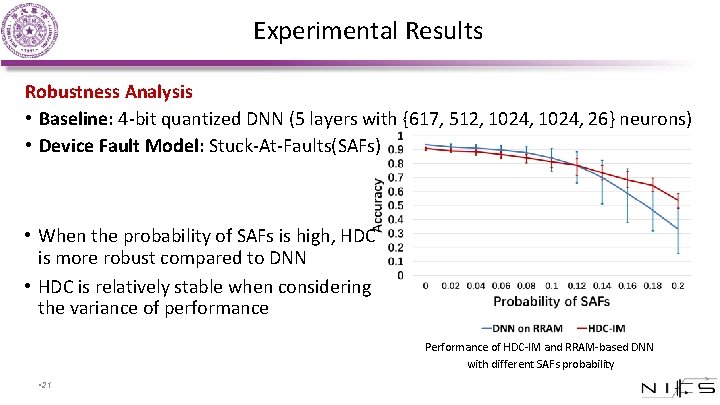

Experimental Results: Performance Analysis
• Simulation method: behavioral-level simulation
• Baselines: HDC on CPU, DNN on GPU
• DNN model: 5 layers with {617, 512, 1024, 26} neurons respectively
• Dataset: ISOLET
• Configuration: M = 12, D = 8192, d = 256, 32 parallel encode units
• RRAM parameters: R-ratio ~100, write delay ~10 ns, write energy ~1 pJ

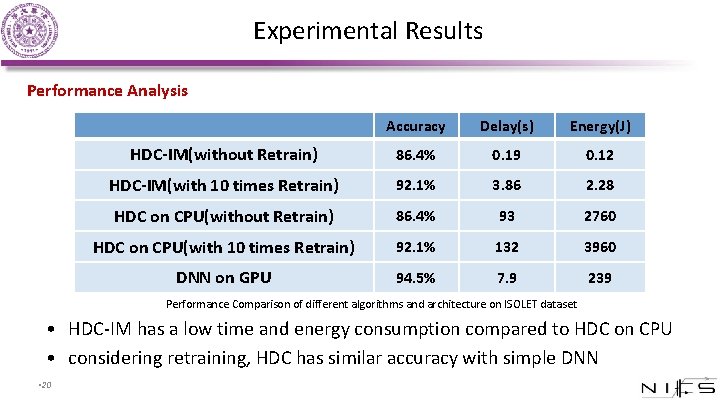

Experimental Results: Performance Analysis

| Configuration | Accuracy | Delay (s) | Energy (J) |
|---|---|---|---|
| HDC-IM (without retraining) | 86.4% | 0.19 | 0.12 |
| HDC-IM (with 10 retraining iterations) | 92.1% | 3.86 | 2.28 |
| HDC on CPU (without retraining) | 86.4% | 93 | 2760 |
| HDC on CPU (with 10 retraining iterations) | 92.1% | 132 | 3960 |
| DNN on GPU | 94.5% | 7.9 | 239 |

Table: Performance comparison of different algorithms and architectures on the ISOLET dataset
• HDC-IM has much lower delay and energy consumption than HDC on CPU
• With retraining, HDC reaches accuracy similar to that of a simple DNN
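The ratios behind these bullets follow directly from the table. The short script below only divides the reported delay and energy figures; the dictionary keys and the helper `ratio` are hypothetical names.

```python
# (delay in seconds, energy in joules), copied from the table above
results = {
    "hdc_im": (0.19, 0.12),
    "hdc_im_retrain": (3.86, 2.28),
    "hdc_cpu": (93.0, 2760.0),
    "hdc_cpu_retrain": (132.0, 3960.0),
    "dnn_gpu": (7.9, 239.0),
}

def ratio(baseline, target):
    """Return (speedup, energy reduction) of target relative to baseline."""
    (b_delay, b_energy), (t_delay, t_energy) = results[baseline], results[target]
    return b_delay / t_delay, b_energy / t_energy

speedup, energy_gain = ratio("hdc_cpu", "hdc_im")
speedup_rt, energy_gain_rt = ratio("hdc_cpu_retrain", "hdc_im_retrain")

print(f"without retraining: {speedup:.0f}x faster, {energy_gain:.0f}x less energy")
print(f"with retraining:    {speedup_rt:.1f}x faster, {energy_gain_rt:.0f}x less energy")
```

Read this way, the "more than 100x" speedup over HDC on CPU corresponds to the configuration without retraining (about 489x), while with 10 retraining iterations the reported delays give roughly 34x; the energy ratio stays above 1000x in both cases.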

Experimental Results: Robustness Analysis
• Baseline: 4-bit quantized DNN (5 layers with {617, 512, 1024, 26} neurons)
• Device fault model: stuck-at faults (SAFs)
• When the probability of SAFs is high, HDC is more robust than the DNN
• HDC is relatively stable in terms of the variance of its performance
Figure: Performance of HDC-IM and the RRAM-based DNN at different SAF probabilities
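A stuck-at fault model of this kind can be simulated by forcing a random subset of stored bits to a fixed value. The sketch below is a generic fault injector, not the authors' simulator: the even split between stuck-at-0 and stuck-at-1 cells (`sa1_fraction=0.5`) and the name `inject_safs` are assumptions.

```python
import numpy as np

def inject_safs(bits, fault_prob, rng, sa1_fraction=0.5):
    """Return a copy of bits where each cell is stuck with probability fault_prob."""
    faulty = bits.copy()
    stuck = rng.random(bits.shape) < fault_prob  # which cells are faulty
    stuck_values = (rng.random(bits.shape) < sa1_fraction).astype(bits.dtype)  # stuck-at-1 or stuck-at-0
    faulty[stuck] = stuck_values[stuck]
    return faulty

rng = np.random.default_rng(0)
hv = rng.integers(0, 2, size=8192, dtype=np.uint8)

faulty = inject_safs(hv, fault_prob=0.1, rng=rng)
changed_fraction = float(np.mean(hv != faulty))  # fraction of bits that actually flipped
```

A stuck cell only corrupts the stored bit when the stuck value differs from it, so with random data roughly half of the faulty cells cause an error. A hypervector with thousands of dimensions keeps most of its bits even at a 10% fault rate, which is consistent with the robustness claim on this slide.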


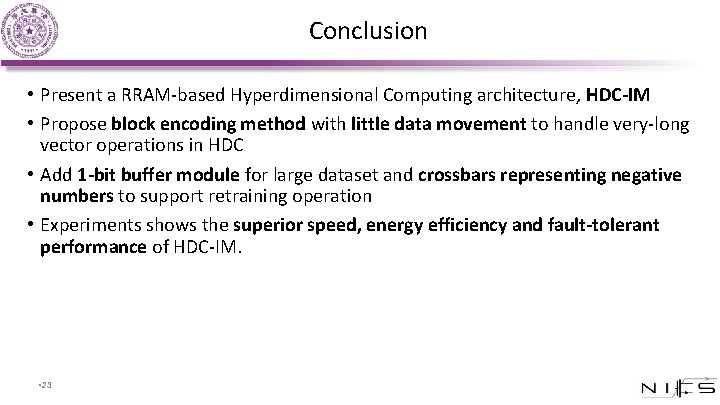

Conclusion
• Present HDC-IM, an RRAM-based hyperdimensional computing architecture
• Propose a block encoding method with little data movement to handle the very long vector operations in HDC
• Add a 1-bit buffer module for large datasets and crossbars that represent negative numbers to support retraining
• Experiments show the superior speed, energy efficiency, and fault tolerance of HDC-IM



