
Ethernet Fabrics

Extreme Networks ExtremeFabric is a fully meshed, auto-configuring routed fabric that uses host routes to allow seamless IP mobility for any host on the network. It supports logical L2 domains with VXLAN and logical L3 domains with VRF.

Mikael Holmberg, Sr. Global Consulting Engineer

Disclaimer

This product roadmap represents Extreme Networks® current product direction. All product releases will be on a when-and-if available basis. Actual feature development and timing of releases will be at the sole discretion of Extreme Networks. Not all features are supported on all platforms. Presentation of the product roadmap does not create a commitment by Extreme Networks to deliver a specific feature. Contents of this roadmap are subject to change without notice.

Agenda

- Why are Ethernet Fabrics needed?
- ExtremeFabric: what is it?
- Spine/Leaf Architecture: Summit X870/690

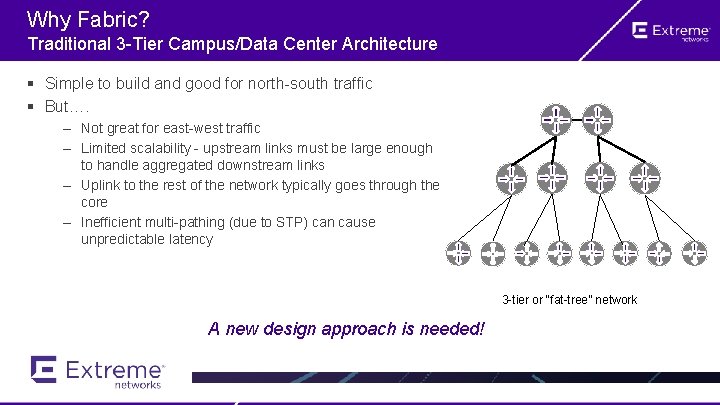

Why Fabric? Traditional 3-Tier Campus/Data Center Architecture

- Simple to build and good for north-south traffic
- But:
  - Not great for east-west traffic
  - Limited scalability: upstream links must be large enough to handle aggregated downstream links
  - Uplink to the rest of the network typically goes through the core
  - Inefficient multi-pathing (due to STP) can cause unpredictable latency

Diagram: 3-tier or "fat-tree" network.

A new design approach is needed!

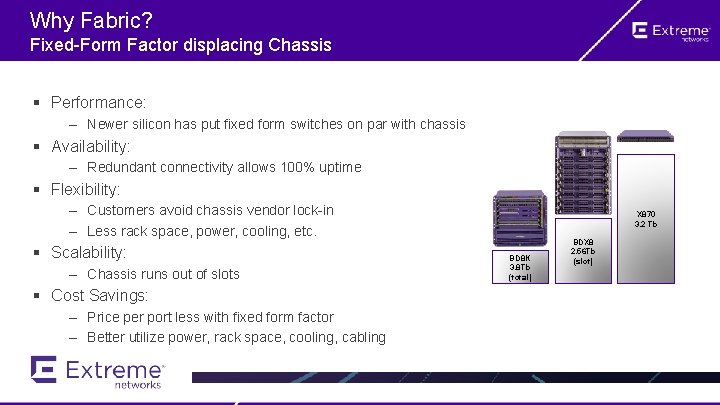

Why Fabric? Fixed-Form Factor Displacing Chassis

- Performance: newer silicon has put fixed-form switches on par with chassis
- Availability: redundant connectivity allows 100% uptime
- Flexibility:
  - Customers avoid chassis vendor lock-in
  - Less rack space, power, cooling, etc.
- Scalability: chassis runs out of slots
- Cost savings:
  - Lower price per port with fixed form factor
  - Better utilization of power, rack space, cooling, cabling

Capacity comparison: X870 3.2 Tb; BD8K 3.8 Tb (total); BDX8 2.56 Tb (slot).

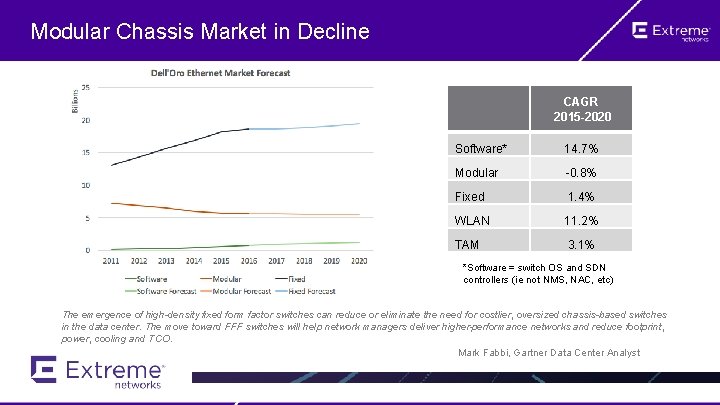

Modular Chassis Market in Decline

| Segment | CAGR 2015-2020 |
|---|---|
| Software* | 14.7% |
| Modular | -0.8% |
| Fixed | 1.4% |
| WLAN | 11.2% |
| TAM | 3.1% |

*Software = switch OS and SDN controllers (i.e., not NMS, NAC, etc.)

"The emergence of high-density fixed form factor switches can reduce or eliminate the need for costlier, oversized chassis-based switches in the data center. The move toward FFF switches will help network managers deliver higher-performance networks and reduce footprint, power, cooling and TCO."
Mark Fabbi, Gartner Data Center Analyst

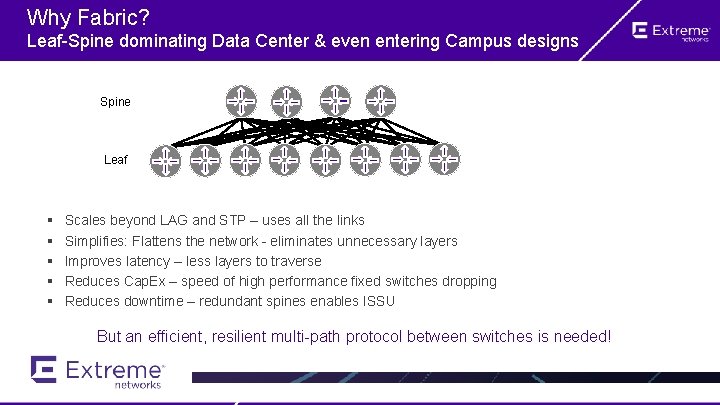

Why Fabric? Leaf-Spine Dominating Data Center and Even Entering Campus Designs

- Scales beyond LAG and STP: uses all the links
- Simplifies: flattens the network and eliminates unnecessary layers
- Improves latency: fewer layers to traverse
- Reduces CapEx: speed of high-performance fixed switches dropping
- Reduces downtime: redundant spines enable ISSU

Diagram: spine and leaf layers.

But an efficient, resilient multi-path protocol between switches is needed!

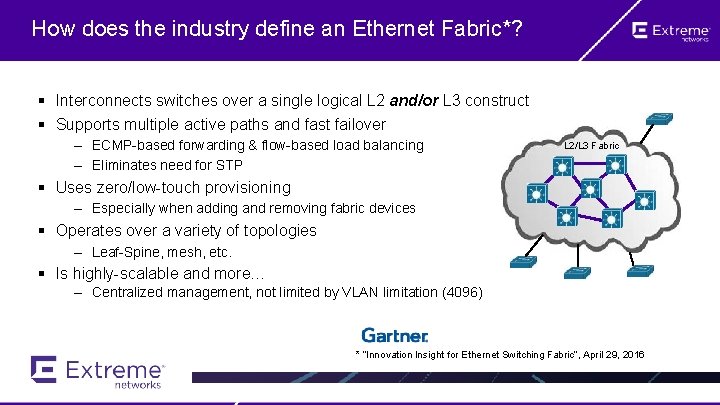

How does the industry define an Ethernet Fabric*?

- Interconnects switches over a single logical L2 and/or L3 construct
- Supports multiple active paths and fast failover
  - ECMP-based forwarding and flow-based load balancing
  - Eliminates need for STP
- Uses zero/low-touch provisioning
  - Especially when adding and removing fabric devices
- Operates over a variety of topologies
  - Leaf-Spine, mesh, etc.
- Is highly scalable, and more
  - Centralized management, not limited by the VLAN limit (4096)

Diagram: L2/L3 Fabric.

*"Innovation Insight for Ethernet Switching Fabric", April 29, 2016

ExtremeFabric

ExtremeFabric Introduction

ExtremeFabric is a network of cooperating interconnected devices that create a Fabric of any scale for any topology, providing fully redundant, multipath routing. The Fabric grows dynamically and freely and is not bound to any well-known topology such as Clos or Leaf/Spine. ExtremeFabric nodes build a secure Fabric by running the highly scalable BGP protocol to exchange topology information about the location of IP hosts. It uses IPv6 as the network layer to transport IPv4 and IPv6 traffic. Host addresses can be IPv4 /32 or IPv6 /128 addresses.

Summary

- Zero touch, zero local configuration
- RESTful API to manage the Fabric
- Secure with TLS and certificates and/or 802.1X
- If present, policy rules, configuration, and metadata are identical for all Fabric nodes
- Equal Cost Multi Path (ECMP) through the Fabric
- Automatic DHCP relay services
- LAG-attached bridges and servers with EasyLAG to multiple ExtremeFabric nodes
- IPv4/IPv6 attached hosts: 32/128-bit host routes
- Default gateway / static routes via REST configuration

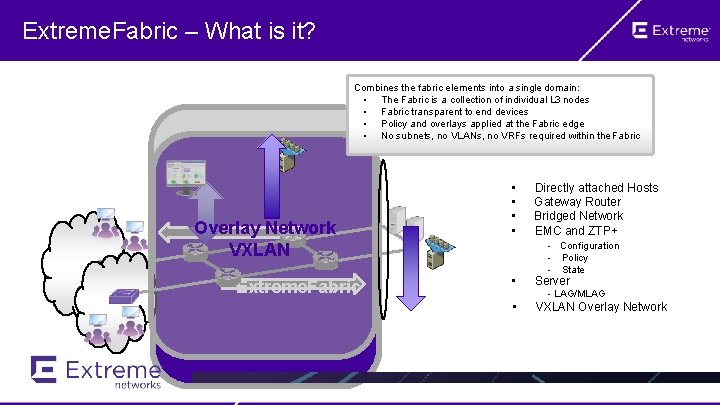

ExtremeFabric: What is it?

Combines the fabric elements into a single domain:

- The Fabric is a collection of individual L3 nodes
- Fabric transparent to end devices
- Policy and overlays applied at the Fabric edge
- No subnets, no VLANs, no VRFs required within the Fabric

Diagram labels: Overlay Network (VXLAN), ExtremeFabric, Directly attached Hosts, Gateway Router, Bridged Network, Server, VXLAN Overlay Network, EMC and ZTP+ (Configuration, Policy, State, LAG/MLAG).

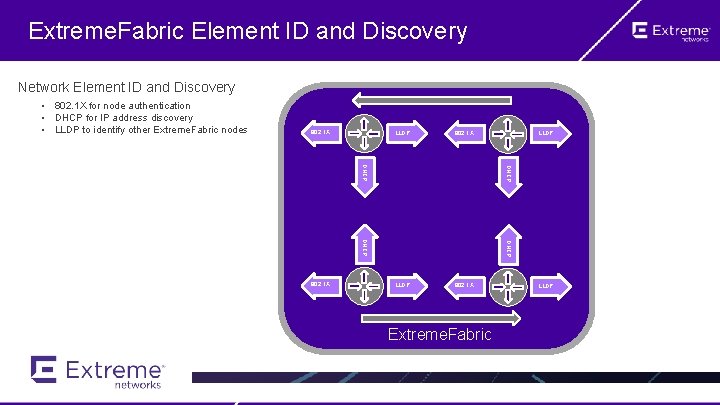

ExtremeFabric Element ID and Discovery

Network element ID and discovery:

- 802.1X for node authentication
- DHCP for IP address discovery
- LLDP to identify other ExtremeFabric nodes

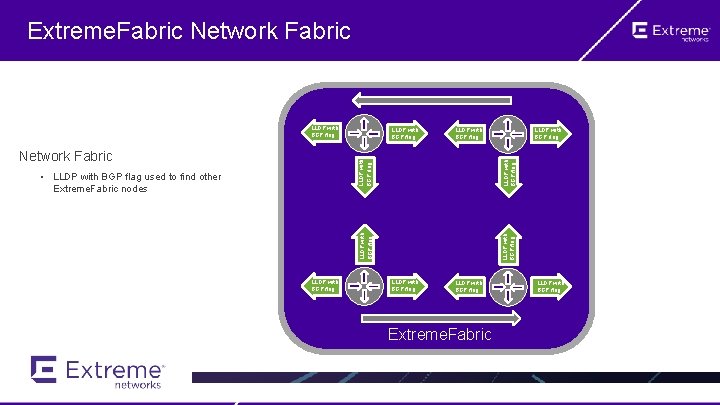

ExtremeFabric Network Fabric

- LLDP with BGP flag used to find other ExtremeFabric nodes

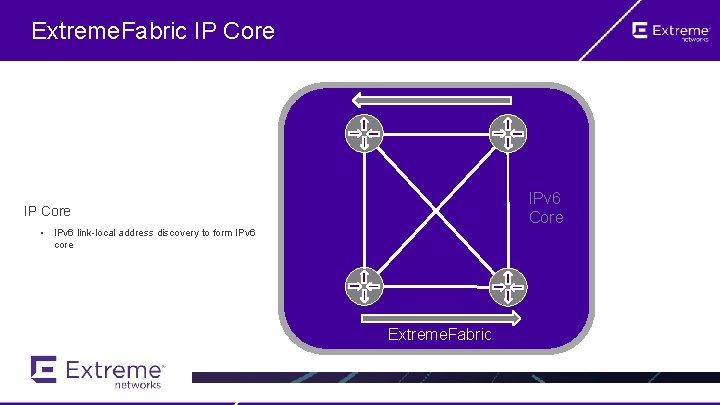

ExtremeFabric IP Core

- IPv6 link-local address discovery to form IPv6 core

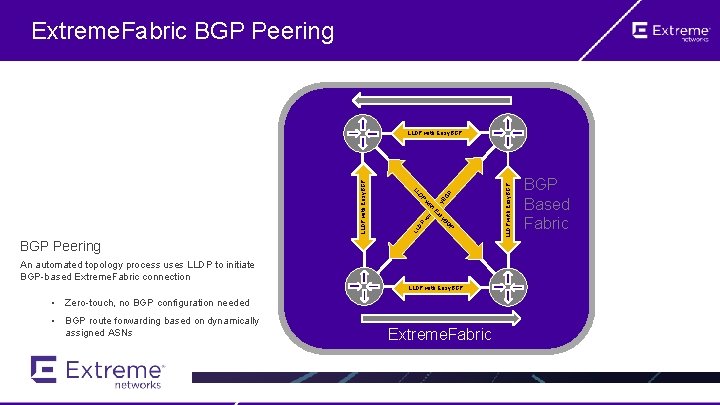

ExtremeFabric BGP Peering

An automated topology process uses LLDP to initiate BGP-based ExtremeFabric connections.

- Zero-touch, no BGP configuration needed
- BGP route forwarding based on dynamically assigned ASNs

Diagram: LLDP with EasyBGP on each link of the BGP-based Fabric.

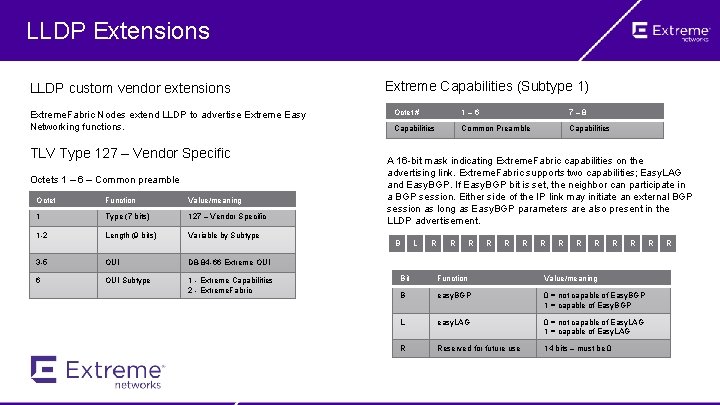

LLDP Extensions

LLDP custom vendor extensions: ExtremeFabric nodes extend LLDP to advertise Extreme Easy Networking functions.

TLV Type 127 (Vendor Specific), octets 1-6: common preamble

| Octet | Function | Value/meaning |
|---|---|---|
| 1 | Type (7 bits) | 127: Vendor Specific |
| 1-2 | Length (9 bits) | Variable by subtype |
| 3-5 | OUI | D8-84-66 (Extreme OUI) |
| 6 | OUI Subtype | 1: Extreme Capabilities; 2: ExtremeFabric |

Extreme Capabilities (Subtype 1)

| Octet # | Field |
|---|---|
| 1-6 | Common Preamble |
| 7-8 | Capabilities |

Capabilities: a 16-bit mask indicating ExtremeFabric capabilities on the advertising link. ExtremeFabric supports two capabilities: EasyLAG and EasyBGP. If the EasyBGP bit is set, the neighbor can participate in a BGP session. Either side of the IP link may initiate an external BGP session as long as EasyBGP parameters are also present in the LLDP advertisement.

Bit layout of the mask: B, L, then 14 reserved bits (R).

| Bit | Function | Value/meaning |
|---|---|---|
| B | easyBGP | 0 = not capable of EasyBGP; 1 = capable of EasyBGP |
| L | easyLAG | 0 = not capable of EasyLAG; 1 = capable of EasyLAG |
| R | Reserved for future use | 14 bits, must be 0 |
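As an illustration of the Extreme Capabilities TLV layout described on this slide, the following Python sketch packs and parses the eight octets. The OUI, subtype, and field widths come from the slide; the exact bit positions of B and L inside the 16-bit mask are an assumption (taken here as the two most significant bits, following the order in which the slide draws them), and the function names are hypothetical.

```python
import struct

EXTREME_OUI = bytes.fromhex("D88466")  # OUI D8-84-66 from the slide
TLV_TYPE_VENDOR = 127                  # vendor-specific TLV type
SUBTYPE_CAPABILITIES = 1               # Extreme Capabilities

# Assumption: B and L are the two most significant bits of the mask.
EASY_BGP = 0x8000
EASY_LAG = 0x4000
RESERVED = 0x3FFF                      # remaining 14 bits, must be 0


def build_capabilities_tlv(easy_bgp: bool, easy_lag: bool) -> bytes:
    """Pack the 2-octet TLV header followed by OUI, subtype, and mask."""
    mask = (EASY_BGP if easy_bgp else 0) | (EASY_LAG if easy_lag else 0)
    value = EXTREME_OUI + bytes([SUBTYPE_CAPABILITIES]) + struct.pack("!H", mask)
    # LLDP header: 7-bit type, then 9-bit length of the octets that follow.
    header = (TLV_TYPE_VENDOR << 9) | len(value)
    return struct.pack("!H", header) + value


def parse_capabilities_tlv(tlv: bytes) -> dict:
    """Unpack a capabilities TLV and report the two capability bits."""
    (header,) = struct.unpack("!H", tlv[:2])
    tlv_type, length = header >> 9, header & 0x1FF
    value = tlv[2:2 + length]
    if tlv_type != TLV_TYPE_VENDOR or value[:3] != EXTREME_OUI:
        raise ValueError("not an Extreme vendor-specific TLV")
    if length != 6 or value[3] != SUBTYPE_CAPABILITIES:
        raise ValueError("not an Extreme Capabilities TLV")
    (mask,) = struct.unpack("!H", value[4:6])
    return {
        "easy_bgp": bool(mask & EASY_BGP),
        "easy_lag": bool(mask & EASY_LAG),
        "reserved_ok": (mask & RESERVED) == 0,
    }


tlv = build_capabilities_tlv(easy_bgp=True, easy_lag=False)
print(tlv.hex())  # fe06d88466018000
```

The header value 0xFE06 is type 127 shifted left by nine bits plus a length of 6 (three OUI octets, one subtype octet, two mask octets).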

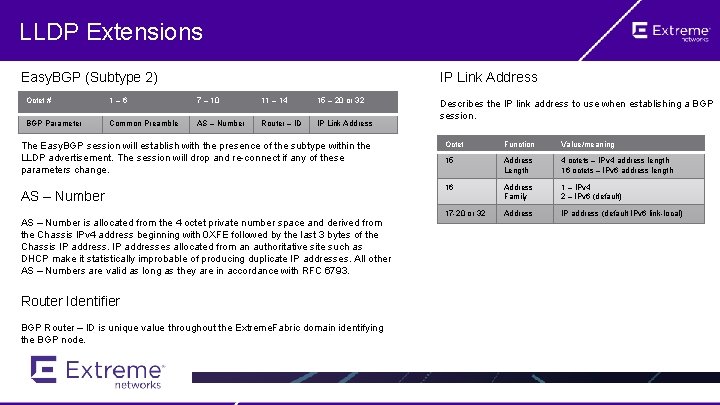

LLDP Extensions: EasyBGP (Subtype 2)

| Octet # | BGP Parameter |
|---|---|
| 1-6 | Common Preamble |
| 7-10 | AS-Number |
| 11-14 | Router-ID |
| 15-20 or 32 | IP Link Address |

The EasyBGP session is established when this subtype is present in the LLDP advertisement. The session will drop and reconnect if any of these parameters change.

AS-Number: allocated from the 4-octet private number space and derived from the chassis IPv4 address. It begins with 0xFE, followed by the last 3 bytes of the chassis IP address. Because chassis IP addresses are allocated from an authoritative source such as DHCP, duplicates are statistically improbable. All other AS numbers are valid as long as they are in accordance with RFC 6793.

Router Identifier: the BGP Router-ID is a unique value throughout the ExtremeFabric domain identifying the BGP node.

IP Link Address: describes the IP link address to use when establishing a BGP session.

| Octet | Function | Value/meaning |
|---|---|---|
| 15 | Address Length | 4 octets: IPv4 address length; 16 octets: IPv6 address length |
| 16 | Address Family | 1: IPv4; 2: IPv6 (default) |
| 17-20 or 32 | Address | IP address (default IPv6 link-local) |
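The AS-Number derivation on this slide (0xFE followed by the last three bytes of the chassis IPv4 address) can be worked through in a few lines of Python. This is a sketch of the arithmetic only; the function name is hypothetical, and the private-range bounds are taken from RFC 6996 rather than from the slide.

```python
import ipaddress

# 4-octet private AS range (RFC 6996), used only as a sanity check.
PRIVATE_ASN_MIN = 4200000000
PRIVATE_ASN_MAX = 4294967294


def easy_bgp_asn(chassis_ipv4: str) -> int:
    """Return 0xFE followed by the last 3 bytes of the chassis IPv4 address."""
    addr = int(ipaddress.IPv4Address(chassis_ipv4))
    return (0xFE << 24) | (addr & 0x00FFFFFF)


asn = easy_bgp_asn("10.1.2.3")
print(asn, hex(asn))  # 4261478915 0xfe010203
```

Every value of the form 0xFExxxxxx falls inside the private range. Because the first octet of the address is discarded, two chassis addresses that differ only in that octet (for example 10.1.2.3 and 172.1.2.3) map to the same AS number, which is why the slide describes duplicates as improbable rather than impossible.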

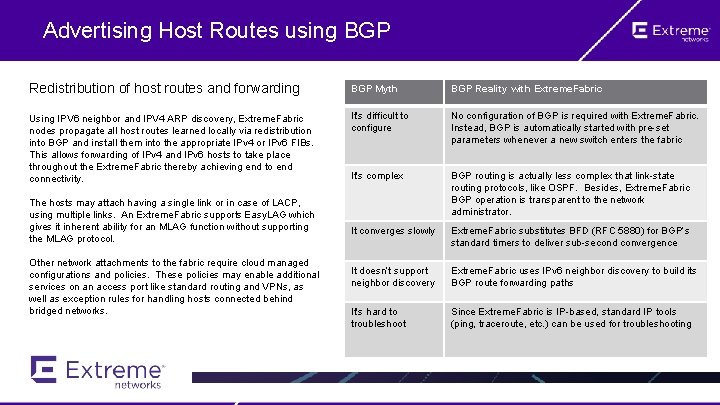

Advertising Host Routes using BGP

Redistribution of host routes and forwarding

Using IPv6 neighbor and IPv4 ARP discovery, ExtremeFabric nodes propagate all locally learned host routes via redistribution into BGP and install them into the appropriate IPv4 or IPv6 FIBs. This allows forwarding for IPv4 and IPv6 hosts to take place throughout the ExtremeFabric, thereby achieving end-to-end connectivity.

| BGP Myth | BGP Reality with ExtremeFabric |
|---|---|
| It's difficult to configure | No configuration of BGP is required with ExtremeFabric. Instead, BGP is automatically started with pre-set parameters whenever a new switch enters the fabric. |
| It's complex | BGP routing is actually less complex than link-state routing protocols like OSPF. Besides, ExtremeFabric BGP operation is transparent to the network administrator. |
| It converges slowly | ExtremeFabric substitutes BFD (RFC 5880) for BGP's standard timers to deliver sub-second convergence. |
| It doesn't support neighbor discovery | ExtremeFabric uses IPv6 neighbor discovery to build its BGP route forwarding paths. |
| It's hard to troubleshoot | Since ExtremeFabric is IP-based, standard IP tools (ping, traceroute, etc.) can be used for troubleshooting. |

Hosts may attach using a single link or, in the case of LACP, multiple links. ExtremeFabric supports EasyLAG, which gives it an inherent MLAG function without supporting the MLAG protocol. Other network attachments to the fabric require cloud-managed configurations and policies. These policies may enable additional services on an access port, such as standard routing and VPNs, as well as exception rules for handling hosts connected behind bridged networks.
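The redistribution step on this slide turns each locally discovered neighbor into a /32 or /128 host route. A minimal Python sketch of that mapping follows; the table shapes (IP address to MAC address dictionaries) and the function name are assumptions for illustration, not the EXOS data model, and the BGP advertisement itself is not shown.

```python
import ipaddress


def host_routes(arp_table: dict, nd_table: dict) -> list:
    """Turn ARP (IPv4) and neighbor-discovery (IPv6) entries into host routes."""
    routes = []
    for ip in list(arp_table) + list(nd_table):
        addr = ipaddress.ip_address(ip)
        # max_prefixlen is 32 for IPv4 and 128 for IPv6, giving a host route.
        routes.append(f"{addr}/{addr.max_prefixlen}")
    return routes


# Hypothetical neighbor tables learned on one fabric node.
arp = {"10.0.0.5": "00:11:22:33:44:55"}
nd = {"2001:db8::5": "00:11:22:33:44:66"}
print(host_routes(arp, nd))  # ['10.0.0.5/32', '2001:db8::5/128']
```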

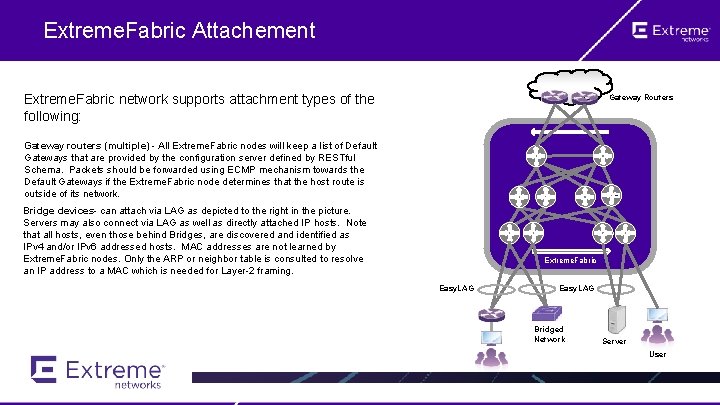

ExtremeFabric Attachment

An ExtremeFabric network supports the following attachment types:

- Gateway routers (multiple): all ExtremeFabric nodes keep a list of default gateways that is provided by the configuration server and defined by a RESTful schema. Packets should be forwarded using ECMP towards the default gateways if the ExtremeFabric node determines that the host route is outside of its network.
- Bridge devices: can attach via LAG, as depicted on the right in the picture.
- Servers: may also connect via LAG, as may directly attached IP hosts.

Note that all hosts, even those behind bridges, are discovered and identified as IPv4 and/or IPv6 addressed hosts. MAC addresses are not learned by ExtremeFabric nodes. Only the ARP or neighbor table is consulted to resolve an IP address to a MAC address, which is needed for Layer-2 framing.

Diagram labels: ExtremeFabric, EasyLAG, Gateway Routers, Bridged Network, Server, User.

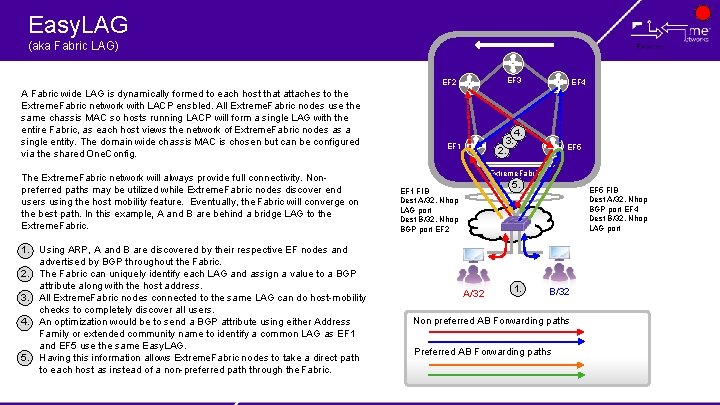

EasyLAG (aka Fabric LAG)

A Fabric-wide LAG is dynamically formed to each host that attaches to the ExtremeFabric network with LACP enabled. All ExtremeFabric nodes use the same chassis MAC, so hosts running LACP will form a single LAG with the entire Fabric, as each host views the network of ExtremeFabric nodes as a single entity. A domain-wide chassis MAC is chosen, but it can be configured via the shared OneConfig.

The ExtremeFabric network will always provide full connectivity. Non-preferred paths may be utilized while ExtremeFabric nodes discover end users using the host mobility feature. Eventually, the Fabric will converge on the best path.

In this example, A and B are behind a bridge LAG to the ExtremeFabric.

1. Using ARP, A and B are discovered by their respective EF nodes and advertised by BGP throughout the Fabric.
2. The Fabric can uniquely identify each LAG and assign a value to a BGP attribute along with the host address.
3. All ExtremeFabric nodes connected to the same LAG can do host-mobility checks to completely discover all users.
4. An optimization would be to send a BGP attribute, using either Address Family or extended community name, to identify a common LAG, as EF1 and EF5 use the same EasyLAG.
5. Having this information allows ExtremeFabric nodes to take a direct path to each host instead of a non-preferred path through the Fabric.

| FIB | Destination | Next hop |
|---|---|---|
| EF1 | A/32 | LAG port |
| EF1 | B/32 | BGP port |
| EF5 | A/32 | BGP port |
| EF5 | B/32 | LAG port |

Diagram: nodes EF1 through EF5, hosts A/32 and B/32, with non-preferred and preferred A-B forwarding paths.
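The optimization in steps 4 and 5 amounts to a next-hop choice: if a host route carries a LAG identifier and the local node is attached to that same LAG, forward out the LAG port; otherwise follow the BGP next hop. The Python sketch below illustrates only that decision. The `lag_id` field, the identifiers such as `lag-1`, and the node names used as BGP next hops are hypothetical, since the slide does not specify how the attribute is encoded.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class HostRoute:
    prefix: str             # host route, e.g. "B/32"
    bgp_next_hop: str       # next hop learned through BGP
    lag_id: Optional[str]   # hypothetical LAG identifier carried with the route


def select_next_hop(local_lags: set, route: HostRoute) -> str:
    """Prefer the local LAG port when this node shares the route's LAG."""
    if route.lag_id is not None and route.lag_id in local_lags:
        return "LAG port"
    return f"BGP port via {route.bgp_next_hop}"


# B was discovered behind a LAG that, in this example, EF1 also attaches to.
route_to_b = HostRoute(prefix="B/32", bgp_next_hop="EF5", lag_id="lag-1")

print(select_next_hop({"lag-1"}, route_to_b))  # EF1: LAG port
print(select_next_hop(set(), route_to_b))      # a node off the LAG: BGP port via EF5
```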

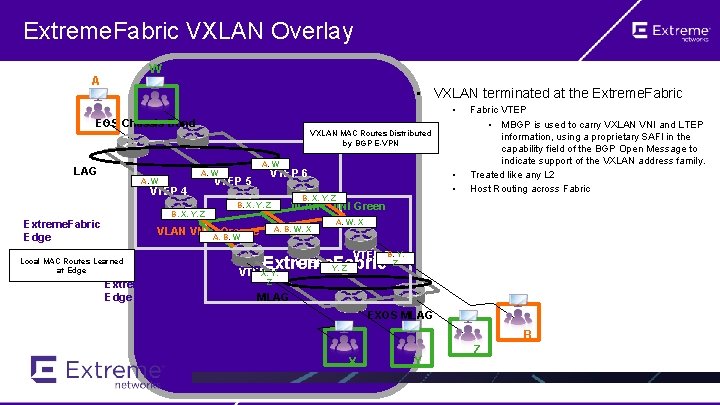

ExtremeFabric VXLAN Overlay

- VXLAN terminated at the ExtremeFabric
- VXLAN MAC routes distributed by BGP E-VPN
- Local MAC routes learned at the ExtremeFabric edge
- MBGP is used to carry VXLAN VNI and LTEP information, using a proprietary SAFI in the capability field of the BGP Open message to indicate support of the VXLAN address family
- Treated like any L2 host routing across the Fabric

Diagram labels: VTEP 1 through VTEP 6 and a Fabric VTEP; VNI Orange and VNI Green; VLAN and VNI at the ExtremeFabric edge; hosts A, B, W, X, Y, Z; EOS chassis bond LAG; EXOS MLAG.

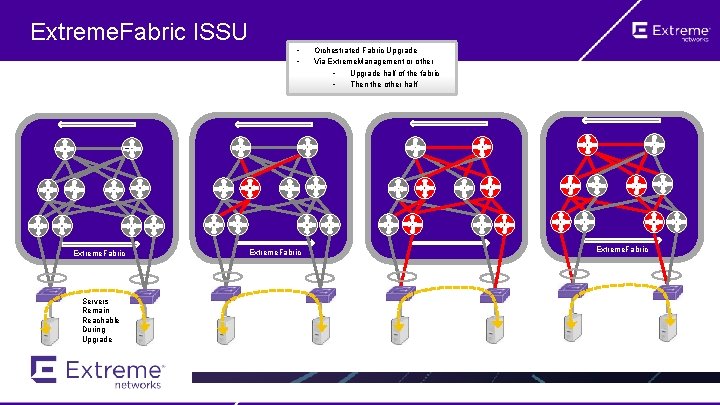

ExtremeFabric ISSU

- Servers remain reachable during upgrade
- Orchestrated fabric upgrade via ExtremeManagement or other
  - Upgrade half of the fabric
  - Then the other half

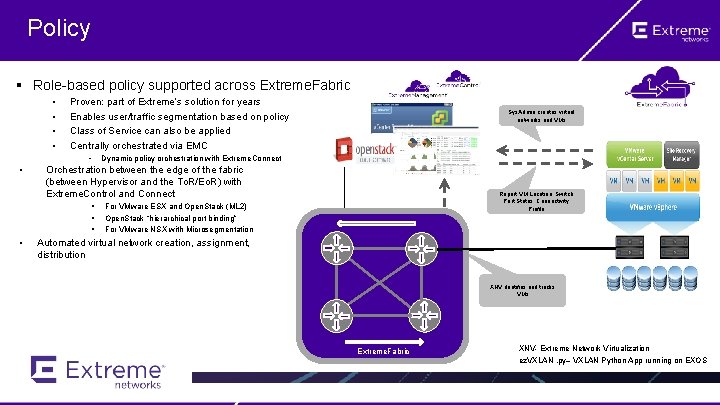

Policy

- Role-based policy supported across ExtremeFabric
  - Proven: part of Extreme's solution for years
  - Enables user/traffic segmentation based on policy
  - Class of Service can also be applied
  - Centrally orchestrated via EMC
- Dynamic policy orchestration with ExtremeConnect
  - Orchestration at the edge of the fabric (between hypervisor and the ToR/EoR) with ExtremeControl and Connect
  - SysAdmin creates virtual networks and VMs
  - Report VM location, switch port status, connectivity profile
  - For VMware ESX and OpenStack (ML2)
  - OpenStack "hierarchical port binding"
  - For VMware NSX with microsegmentation
  - Automated virtual network creation, assignment, distribution
- XNV (Extreme Network Virtualization): identifies and tracks VMs
- ezVXLAN.py: VXLAN Python app running on EXOS

Diagram labels: Compute Host, ExtremeFabric.

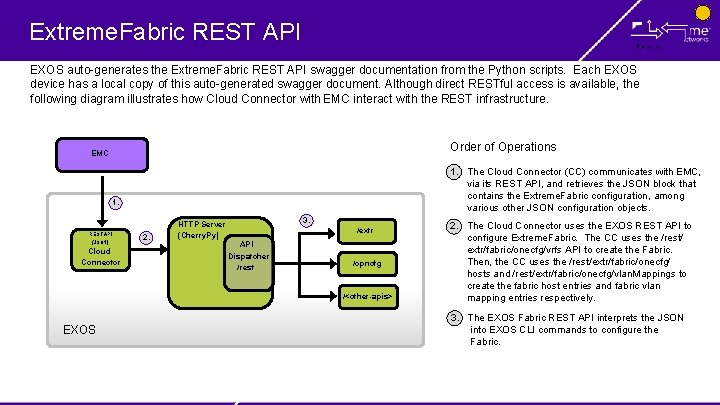

ExtremeFabric REST API

EXOS auto-generates the ExtremeFabric REST API Swagger documentation from the Python scripts. Each EXOS device has a local copy of this auto-generated Swagger document. Although direct RESTful access is available, the following diagram illustrates how Cloud Connector and EMC interact with the REST infrastructure.

Diagram: EMC, REST API (JSON), Cloud Connector, HTTP Server (CherryPy), API Dispatcher (/rest, /extr, /opncfg, /<other-apis>), EXOS.

Order of operations:

1. The Cloud Connector (CC) communicates with EMC via its REST API and retrieves the JSON block that contains the ExtremeFabric configuration, among various other JSON configuration objects.
2. The Cloud Connector uses the EXOS REST API to configure ExtremeFabric. The CC uses the /rest/extr/fabric/onecfg/vrfs API to create the Fabric. Then the CC uses /rest/extr/fabric/onecfg/hosts and /rest/extr/fabric/onecfg/vlanMappings to create the fabric host entries and fabric VLAN mapping entries, respectively.
3. The EXOS Fabric REST API interprets the JSON into EXOS CLI commands to configure the Fabric.
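To make the call order in step 2 concrete, the following Python sketch builds (but does not send) the three requests a client such as the Cloud Connector would issue. Only the three endpoint paths and their order come from the slide. The HTTP method, the use of HTTPS, the host name `switch.example`, and the JSON body contents are assumptions for illustration; the real schema is defined by the device's Swagger document.

```python
import json
from urllib.request import Request

FABRIC_BASE = "/rest/extr/fabric/onecfg"
# Order from the slide: create the Fabric (vrfs), then hosts, then VLAN mappings.
STEPS = ("vrfs", "hosts", "vlanMappings")


def build_fabric_requests(switch: str, config: dict) -> list:
    """Build one request per configuration step, in the documented order."""
    requests = []
    for step in STEPS:
        body = json.dumps(config.get(step, [])).encode("utf-8")
        requests.append(
            Request(
                url=f"https://{switch}{FABRIC_BASE}/{step}",
                data=body,
                headers={"Content-Type": "application/json"},
                method="POST",  # assumption: the slide does not name the method
            )
        )
    return requests


# Hypothetical JSON block as it might be retrieved from EMC in step 1.
config = {"vrfs": [{"name": "example-vrf"}], "hosts": [], "vlanMappings": []}
for req in build_fabric_requests("switch.example", config):
    print(req.get_method(), req.full_url)
```

Building the requests separately from sending them keeps the ordering testable without a device; passing each one to `urllib.request.urlopen` would be the sending step, subject to the authentication the device requires.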

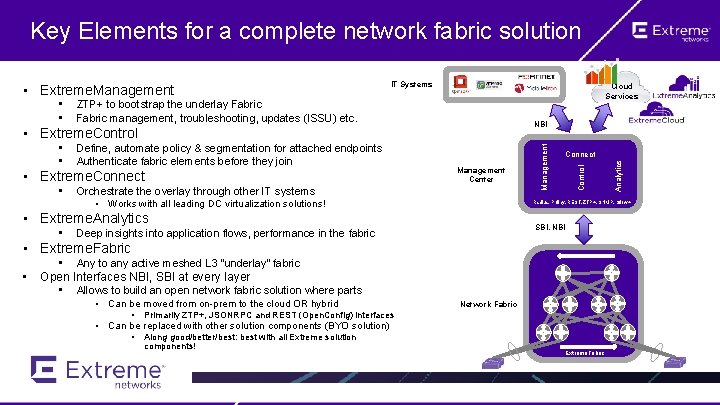

Key Elements for a Complete Network Fabric Solution

Components: Cloud Services, ExtremeManagement (Management Center), ExtremeControl, ExtremeConnect, ExtremeAnalytics, ExtremeFabric.

- ZTP+ to bootstrap the underlay
- Fabric management, troubleshooting, updates (ISSU), etc.
- Define and automate policy and segmentation for attached endpoints
- Authenticate fabric elements before they join
- Orchestrate the overlay through other IT systems
  - Works with all leading DC virtualization solutions!
- Deep insights into application flows and performance in the fabric
- Any-to-any active meshed L3 "underlay" fabric
- Open interfaces (NBI, SBI) at every layer
  - Primarily ZTP+, JSONRPC and REST (OpenConfig) interfaces
  - Allows building an open network fabric solution where parts:
    - Can be moved from on-prem to the cloud or hybrid
    - Can be replaced with other solution components (BYO solution)
    - Along good/better/best: best with all Extreme solution components!

Diagram labels: NBI, SBI; Radius, Policy, REST, ZTP+, SNMP, sFlow+; IT Systems; Analytics; Management; Network Fabric.

Spine/Leaf Architecture

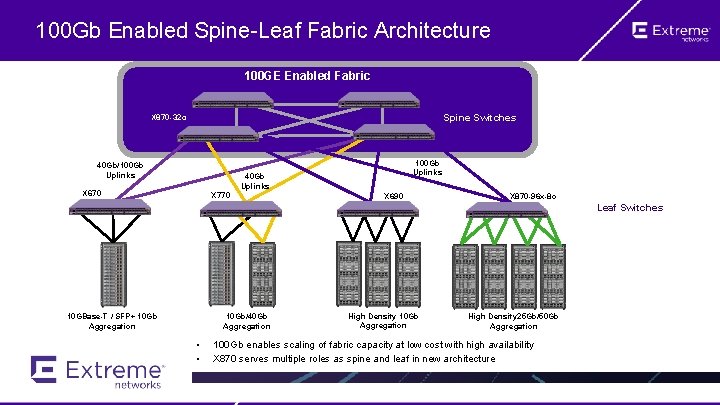

100 Gb Enabled Spine-Leaf Fabric Architecture

- 100 Gb enables scaling of fabric capacity at low cost with high availability
- X870 serves multiple roles as spine and leaf in the new architecture

Diagram labels: 100 GE Enabled Fabric; Spine Switches (X870-32c); 40 Gb/100 Gb Uplinks; 40 Gb Uplinks; Leaf Switches (X670, X770, X690, X870-96x-8c); 10GBase-T / SFP+ 10 Gb Aggregation; 10 Gb/40 Gb Aggregation; High Density 10 Gb Aggregation; High Density 25 Gb/50 Gb Aggregation.

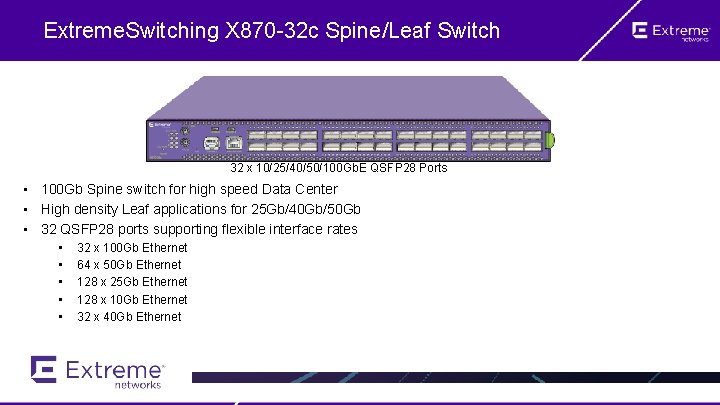

ExtremeSwitching X870-32c Spine/Leaf Switch

32 x 10/25/40/50/100 GbE QSFP28 ports

- 100 Gb spine switch for high-speed data center
- High-density leaf applications for 25 Gb/40 Gb/50 Gb
- 32 QSFP28 ports supporting flexible interface rates:
  - 32 x 100 Gb Ethernet
  - 64 x 50 Gb Ethernet
  - 128 x 25 Gb Ethernet
  - 128 x 10 Gb Ethernet
  - 32 x 40 Gb Ethernet
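The interface counts on this slide follow from how many interfaces each QSFP28 port yields at a given rate. The short Python sketch below reproduces that arithmetic; the per-port split (1, 2, or 4 interfaces) is inferred from the slide's totals rather than stated on it, and the function names are hypothetical.

```python
QSFP28_PORTS = 32

# Interfaces per physical port at each rate (Gb), inferred from the slide's totals.
INTERFACES_PER_PORT = {100: 1, 50: 2, 40: 1, 25: 4, 10: 4}


def interface_count(speed_gb: int) -> int:
    """Number of Ethernet interfaces available at the given rate."""
    return QSFP28_PORTS * INTERFACES_PER_PORT[speed_gb]


def aggregate_tb(speed_gb: int) -> float:
    """Total one-way capacity in Tb when every port runs at the given rate."""
    return interface_count(speed_gb) * speed_gb / 1000


for speed in (100, 50, 25, 10, 40):
    print(f"{interface_count(speed)} x {speed} Gb = {aggregate_tb(speed)} Tb")
```

At 100 Gb the total is 3.2 Tb, which matches the X870 figure quoted earlier in the deck; the 10 Gb and 40 Gb modes each total 1.28 Tb.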

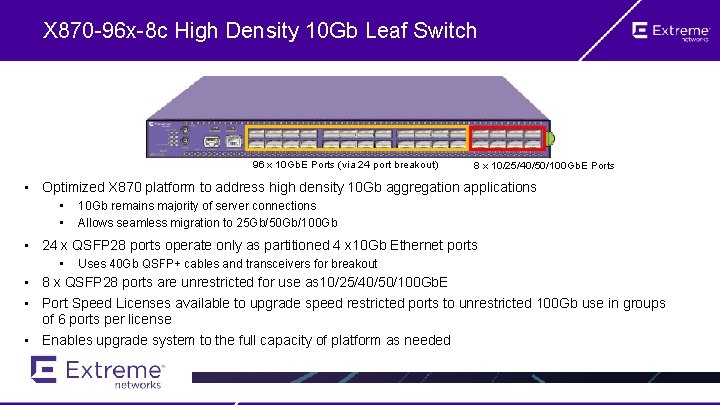

X870-96x-8c High Density 10 Gb Leaf Switch

96 x 10 GbE ports (via 24-port breakout); 8 x 10/25/40/50/100 GbE ports

- Optimized X870 platform to address high-density 10 Gb aggregation applications
  - 10 Gb remains the majority of server connections
  - Allows seamless migration to 25 Gb/50 Gb/100 Gb
- 24 x QSFP28 ports operate only as partitioned 4 x 10 Gb Ethernet ports
  - Uses 40 Gb QSFP+ cables and transceivers for breakout
- 8 x QSFP28 ports are unrestricted for use as 10/25/40/50/100 GbE
- Port Speed Licenses are available to upgrade speed-restricted ports to unrestricted 100 Gb use, in groups of 6 ports per license
  - Enables upgrading the system to the full capacity of the platform as needed

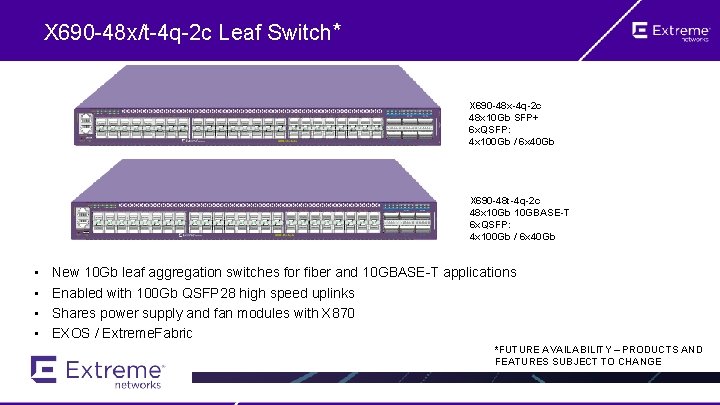

X690-48x/t-4q-2c Leaf Switch*

Models:

- X690-48x-4q-2c: 48 x 10 Gb SFP+; 6 x QSFP: 4 x 100 Gb / 6 x 40 Gb
- X690-48t-4q-2c: 48 x 10 Gb 10GBASE-T; 6 x QSFP: 4 x 100 Gb / 6 x 40 Gb

Highlights:

- New 10 Gb leaf aggregation switches for fiber and 10GBASE-T applications
- Enabled with 100 Gb QSFP28 high-speed uplinks
- Shares power supply and fan modules with X870
- EXOS / ExtremeFabric

*FUTURE AVAILABILITY: PRODUCTS AND FEATURES SUBJECT TO CHANGE

Thank You!
