
Empirically Revisiting and Enhancing IR-Based Test-Case Prioritization
Qianyang Peng, August Shi, Lingming Zhang
ISSTA 2020, 7/21/2020, Virtual Event
CNS-1646305, CCF-1763906, OAC-1839010

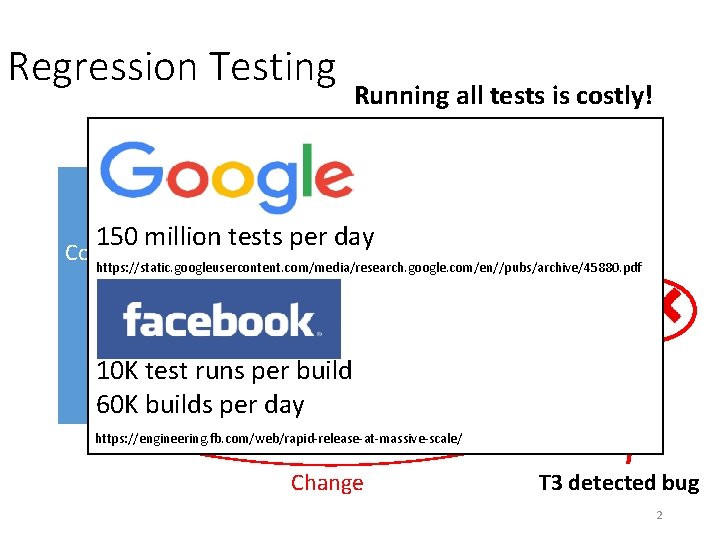

Regression Testing
Running all tests is costly!
• 150 million tests per day (https://static.googleusercontent.com/media/research.google.com/en//pubs/archive/45880.pdf)
• 10K test runs per build
• 60K builds per day (https://engineering.fb.com/web/rapid-release-at-massive-scale/)
Diagram: tests T0, T1, T2, T3, …, TN run on the code under test at V1 and again at V2 after a change; at V2, T3 detected a bug.

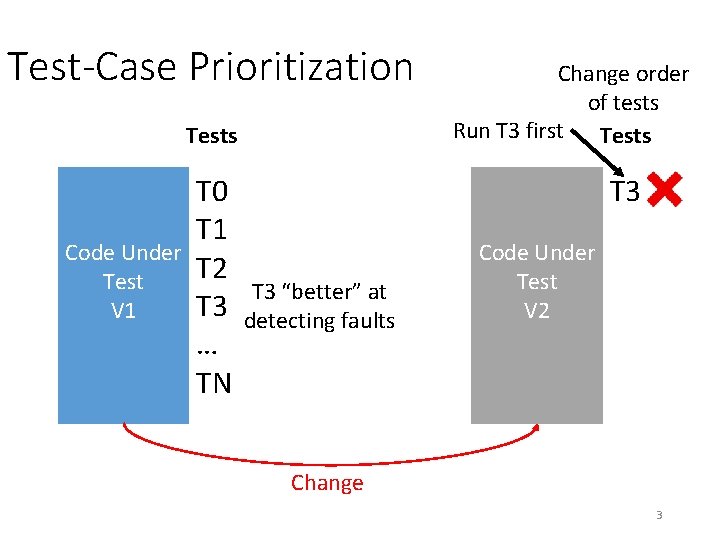

Test-Case Prioritization
T3 is “better” at detecting faults, so change the order of tests and run T3 first.
Diagram: at V1 the tests run in the order T0, T1, T2, T3, …, TN; after the change, at V2, they run in the order T3, T0, T2, T1, …, TN.

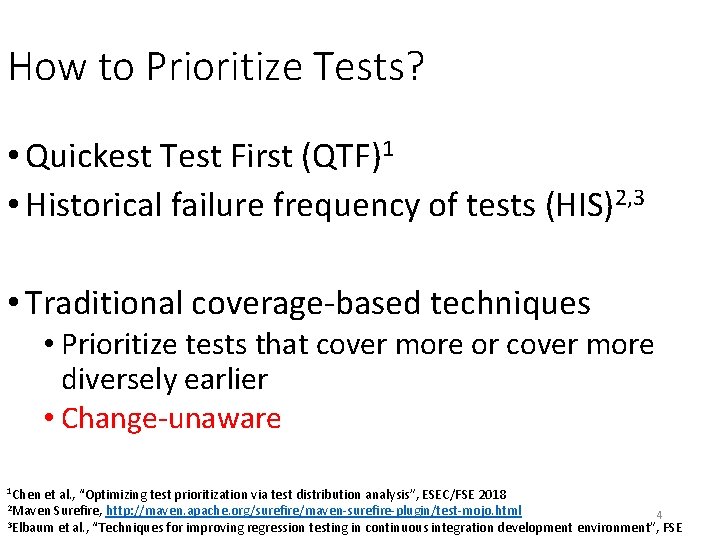

How to Prioritize Tests?
• Quickest Test First (QTF) [1]
• Historical failure frequency of tests (HIS) [2, 3]
• Traditional coverage-based techniques
  • Prioritize tests that cover more or cover more diversely earlier
  • Change-unaware
[1] Chen et al., “Optimizing test prioritization via test distribution analysis”, ESEC/FSE 2018
[2] Maven Surefire, http://maven.apache.org/surefire/maven-surefire-plugin/test-mojo.html
[3] Elbaum et al., “Techniques for improving regression testing in continuous integration development environment”, FSE
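The two simplest baselines, QTF and HIS, amount to sorting tests by a single recorded number. A minimal sketch, assuming each test has an average runtime and a count of past failures available from earlier builds (the function and parameter names are hypothetical, not taken from the slides):

```python
from typing import Dict, List


def quickest_test_first(avg_time: Dict[str, float]) -> List[str]:
    """QTF: run the tests with the smallest recorded runtime first."""
    # Ties are broken by test name so the order is deterministic.
    return sorted(avg_time, key=lambda test: (avg_time[test], test))


def historical_failure_first(tests: List[str],
                             past_failures: Dict[str, int]) -> List[str]:
    """HIS: run the tests that failed most often in earlier builds first."""
    # Tests with no recorded failures count as zero.
    return sorted(tests, key=lambda test: (-past_failures.get(test, 0), test))


if __name__ == "__main__":
    times = {"T0": 4.0, "T1": 0.5, "T2": 12.0, "T3": 2.0}
    failures = {"T3": 3, "T0": 1}
    print(quickest_test_first(times))
    print(historical_failure_first(list(times), failures))
```

Neither ordering looks at the code change itself, which is the gap the change-aware IR-based approach targets.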

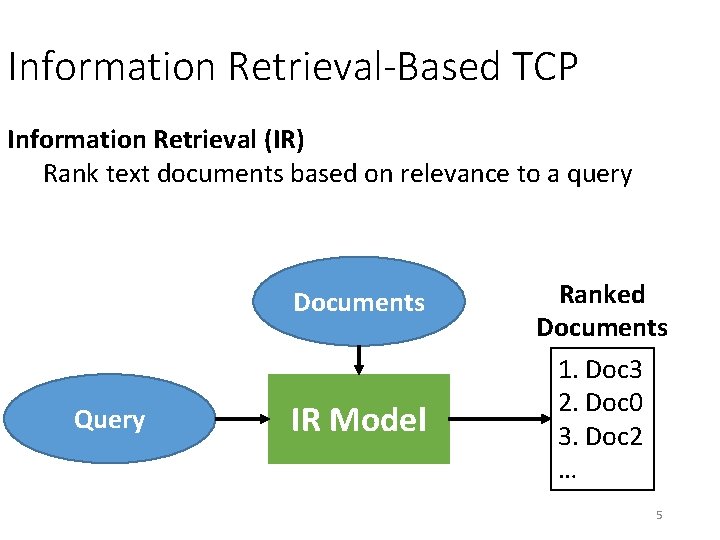

Information Retrieval-Based TCP
Information Retrieval (IR): rank text documents based on relevance to a query
Diagram: Documents + Query → IR Model → Ranked Documents (1. Doc 3, 2. Doc 0, 3. Doc 2, …)

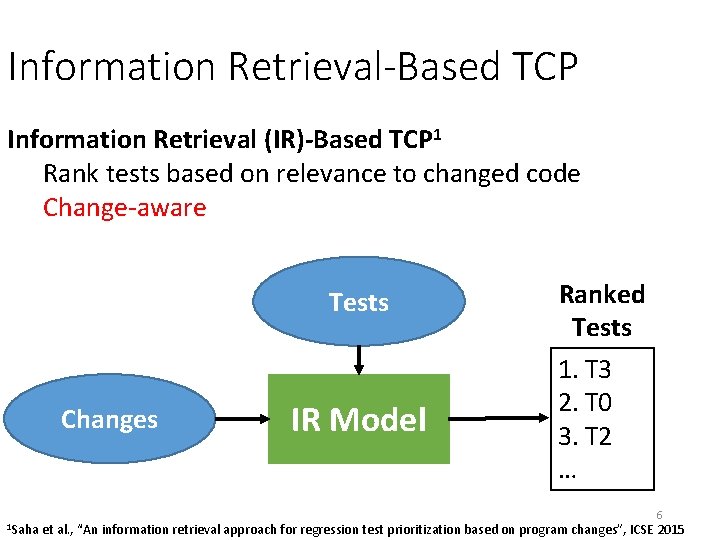

Information Retrieval-Based TCP
Information Retrieval (IR)-Based TCP [1]: rank tests based on relevance to changed code
• Change-aware
Diagram: Tests + Changes → IR Model → Ranked Tests (1. T3, 2. T0, 3. T2, …)
[1] Saha et al., “An information retrieval approach for regression test prioritization based on program changes”, ICSE 2015

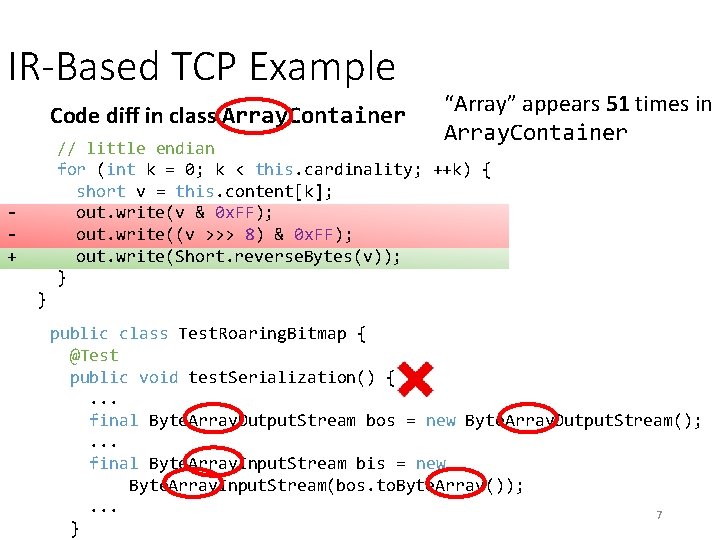

IR-Based TCP Example
Code diff in class ArrayContainer (“Array” appears 51 times in ArrayContainer):

    // little endian
    for (int k = 0; k < this.cardinality; ++k) {
      short v = this.content[k];
      out.write(v & 0xFF);
      out.write((v >>> 8) & 0xFF);
      out.write(Short.reverseBytes(v));
    }
    + }

Test code:

    public class TestRoaringBitmap {
      @Test
      public void testSerialization() {
        ...
        final ByteArrayOutputStream bos = new ByteArrayOutputStream();
        ...
        final ByteArrayInputStream bis = new ByteArrayInputStream(bos.toByteArray());
        ...
      }
    }

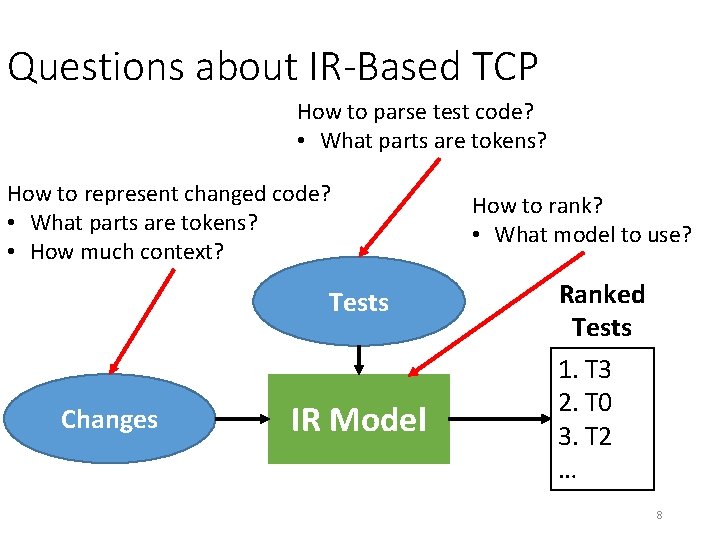

Questions about IR-Based TCP
• How to parse test code?
  • What parts are tokens?
• How to represent changed code?
  • What parts are tokens?
  • How much context?
• How to rank?
  • What model to use?
Diagram: Tests + Changes → IR Model → Ranked Tests (1. T3, 2. T0, 3. T2, …)

More Questions about IR-Based TCP
• How effective w.r.t. real test failures?
  • Previous work on TCP mainly evaluated using seeded faults/mutants
• How effective w.r.t. time?
  • Previous work on TCP mainly evaluated assuming time per test is the same
• Need to evaluate these factors!

Our Contributions
• Dataset for IR-based TCP
  • Collected real test failures from Travis CI builds
  • Collected test code and diffs from GitHub
• Evaluation of IR-based TCP w/ dataset
  • Effectiveness of different factors
  • Comparison against other TCP techniques
• New hybrid techniques

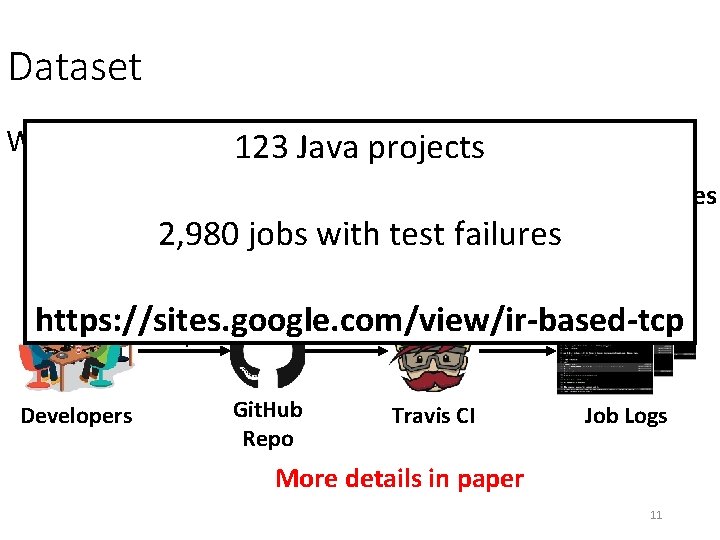

Dataset
What we need:
• Tests
• Code Diffs
• Test Failures
• Test Times
123 Java projects; 2,980 jobs with test failures
https://sites.google.com/view/ir-based-tcp
Diagram: developers push to a GitHub repo, the push triggers a Travis CI job, and the job outputs job logs.
More details in paper

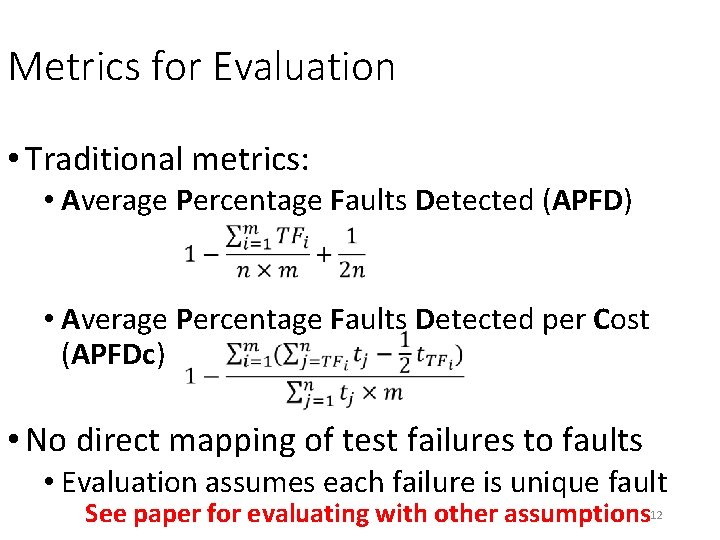

Metrics for Evaluation
• Traditional metrics:
  • Average Percentage Faults Detected (APFD)
  • Average Percentage Faults Detected per Cost (APFDc)
• No direct mapping of test failures to faults
  • Evaluation assumes each failure is a unique fault
See paper for evaluating with other assumptions
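The slides name the two metrics without stating them. As a sketch, the standard textbook definitions can be computed as below, under the slide's assumption that each failed test is a unique fault and with all faults given equal severity. The function names and the input shapes (a test order, a set of failed tests, a cost map) are illustrative choices, not the authors' tooling.

```python
from typing import Dict, List, Set


def apfd(order: List[str], failed: Set[str]) -> float:
    """APFD = 1 - (TF_1 + ... + TF_m) / (n * m) + 1 / (2 * n).

    n is the number of tests, m the number of faults, and TF_i the
    1-based position of the first test that detects fault i. Here each
    failed test is treated as its own fault.
    """
    n, m = len(order), len(failed)
    positions = [i + 1 for i, test in enumerate(order) if test in failed]
    return 1 - sum(positions) / (n * m) + 1 / (2 * n)


def apfdc(order: List[str], failed: Set[str], cost: Dict[str, float]) -> float:
    """Cost-cognizant APFD with equal fault severities.

    For each fault, credit the cost of all tests from the detecting test
    to the end of the order, minus half the detecting test's own cost,
    then normalize by (total cost * number of faults).
    """
    total = sum(cost[test] for test in order)
    remaining = total  # cost of the current test plus everything after it
    area = 0.0
    for test in order:
        if test in failed:
            area += remaining - 0.5 * cost[test]
        remaining -= cost[test]
    return area / (total * len(failed))


if __name__ == "__main__":
    order = ["T3", "T0", "T2", "T1"]
    costs = {"T0": 1.0, "T1": 1.0, "T2": 1.0, "T3": 10.0}
    print(apfd(order, {"T3"}), apfdc(order, {"T3"}, costs))
```

When every test has the same cost, the two functions return the same value; they diverge once the detecting test is much slower than the rest, which is why the time-aware question on the earlier slide matters.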

Evaluating IR-Based TCP on Dataset
• RQ 1: Which are the best options for IR-based TCP?
• RQ 2: How does IR-based TCP compare against other TCP techniques?

Evaluating IR-Based TCP on Dataset
• RQ 1: Which are the best options for IR-based TCP? See paper for details
  Best options (OptIR):
  • Parse with tokenizer on AST
  • Whole file context
  • BM25 model
• RQ 2: How does IR-based TCP compare against other TCP techniques?
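To make the BM25 option concrete, here is a rough sketch of ranking tests against a code change with the common Okapi BM25 formula. Several things here are assumptions rather than the authors' setup: the tokenizer is a simple regex that splits camelCase identifiers (the slides use a tokenizer on the AST), the parameters `k1=1.2` and `b=0.75` are conventional defaults, and all function names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Dict, List


def tokenize(text: str) -> List[str]:
    """Split source text into lowercase word tokens, breaking camelCase."""
    # Assumption: a regex stand-in for the AST-based tokenizer in the slides.
    words = re.findall(r"[A-Z]+(?![a-z])|[A-Z]?[a-z]+", text)
    return [word.lower() for word in words]


def bm25_rank(tests: Dict[str, str], diff: str,
              k1: float = 1.2, b: float = 0.75) -> List[str]:
    """Rank test names by BM25 relevance of their code to the diff text."""
    if not tests:
        return []
    docs = {name: Counter(tokenize(code)) for name, code in tests.items()}
    n_docs = len(docs)
    avg_len = sum(sum(tf.values()) for tf in docs.values()) / n_docs or 1.0
    query = set(tokenize(diff))
    # Document frequency: in how many tests each query term occurs.
    df = {term: sum(1 for tf in docs.values() if term in tf) for term in query}

    scores = {}
    for name, tf in docs.items():
        length = sum(tf.values())
        score = 0.0
        for term in query:
            if term not in tf:
                continue
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * length / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores[name] = score
    # Highest score first; ties broken by name for a stable order.
    return sorted(scores, key=lambda name: (-scores[name], name))


if __name__ == "__main__":
    tests = {
        "testSerialization": "final ByteArrayOutputStream bos = "
                             "new ByteArrayOutputStream(); bos.toByteArray();",
        "testAdd": "bitmap.add(1); assertTrue(bitmap.contains(1));",
    }
    diff = ("class ArrayContainer { short v = this.content[k]; "
            "out.write(Short.reverseBytes(v)); }")
    print(bm25_rank(tests, diff))
```

In the usage example, the test that mentions `ByteArrayOutputStream` shares the token "array" with the changed `ArrayContainer` class, so it ranks ahead of the unrelated test, mirroring the example slide.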

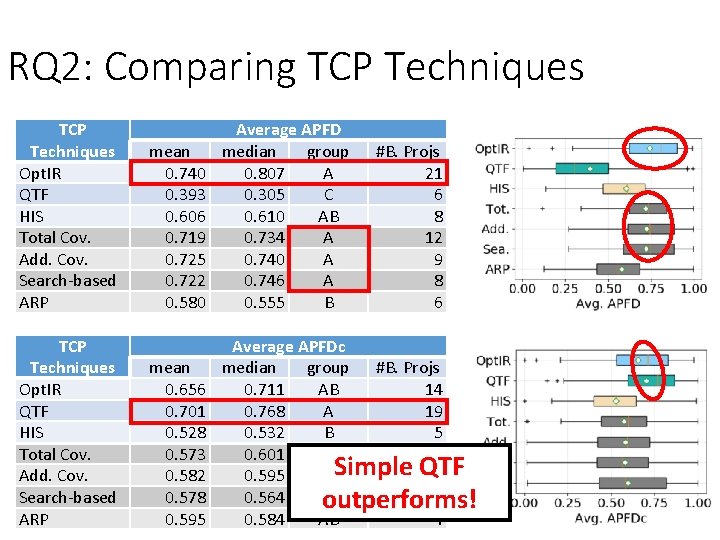

RQ 2: Comparing TCP Techniques

| TCP Technique | APFD mean | APFD median | APFD group | APFD #B.Projs | APFDc mean | APFDc median | APFDc group | APFDc #B.Projs |
|---|---|---|---|---|---|---|---|---|
| OptIR | 0.740 | 0.807 | A | 21 | 0.656 | 0.711 | AB | 14 |
| QTF | 0.393 | 0.305 | C | 6 | 0.701 | 0.768 | A | 19 |
| HIS | 0.606 | 0.610 | AB | 8 | 0.528 | 0.532 | B | 5 |
| Total Cov. | 0.719 | 0.734 | A | 12 | 0.573 | 0.601 | AB | 6 |
| Add. Cov. | 0.725 | 0.740 | A | 9 | 0.582 | 0.595 | AB | 4 |
| Search-based | 0.722 | 0.746 | A | 8 | 0.578 | 0.564 | AB | 6 |
| ARP | 0.580 | 0.555 | B | 6 | 0.595 | 0.584 | AB | 4 |

Simple QTF outperforms!

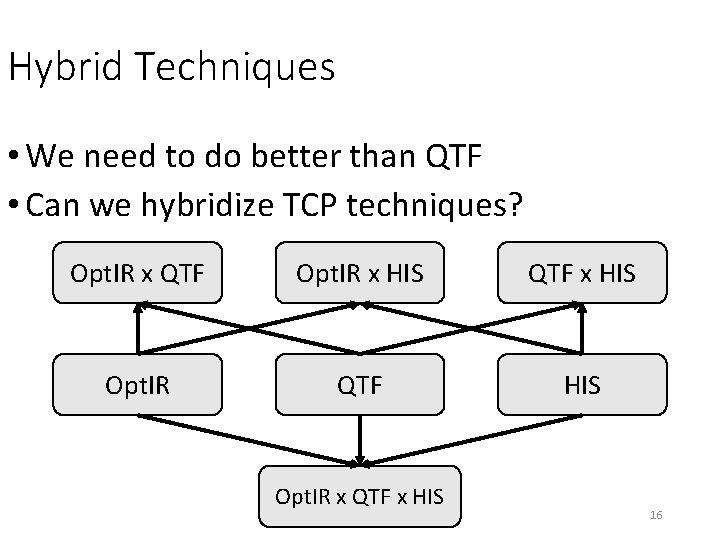

Hybrid Techniques
• We need to do better than QTF
• Can we hybridize TCP techniques?
Diagram: combinations of OptIR, QTF, and HIS: OptIR x QTF, OptIR x HIS, QTF x HIS, OptIR x QTF x HIS
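The slides do not spell out how the techniques are combined; the paper has the actual definitions. Purely as an illustration of one plausible reading of "OptIR x QTF x HIS", the sketch below scales each test's IR score up by its failure history and down by its runtime. The formula, the function name, and the parameters are all assumptions made for this example.

```python
from typing import Dict, List


def hybrid_rank(ir_score: Dict[str, float],
                avg_time: Dict[str, float],
                past_failures: Dict[str, int],
                epsilon: float = 1e-9) -> List[str]:
    """Order tests by IR relevance, boosted by history, per unit of time."""

    def score(test: str) -> float:
        # Assumed combination: relevance * (1 + past failures) / runtime.
        history_boost = 1 + past_failures.get(test, 0)
        return ir_score[test] * history_boost / max(avg_time[test], epsilon)

    # Highest combined score first; ties broken by name.
    return sorted(ir_score, key=lambda test: (-score(test), test))


if __name__ == "__main__":
    ir = {"T0": 0.2, "T1": 0.9, "T3": 0.8}
    times = {"T0": 1.0, "T1": 30.0, "T3": 2.0}
    failures = {"T3": 2}
    print(hybrid_rank(ir, times, failures))
```

In this example T1 has the highest IR score but is slow and has no failure history, so it drops behind both T3 and T0, which is the kind of trade-off a hybrid is meant to capture.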

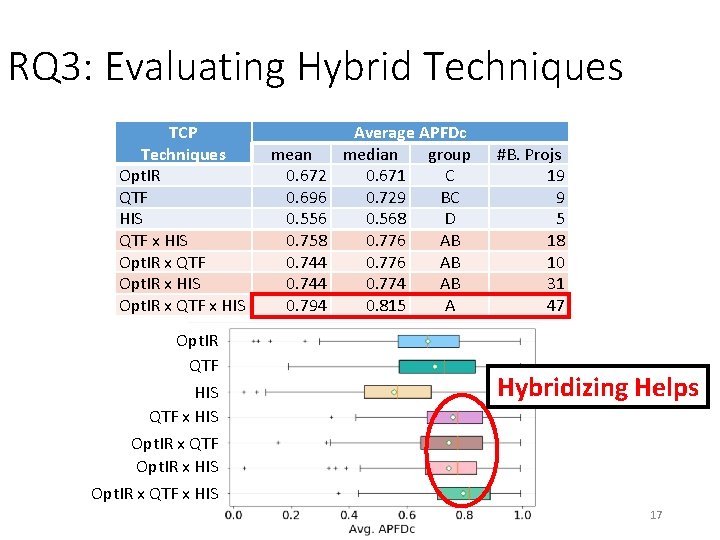

RQ 3: Evaluating Hybrid Techniques
TCP Techniques: OptIR, QTF, HIS, QTF x HIS, OptIR x QTF, OptIR x HIS, OptIR x QTF x HIS
Average APFDc, mean: 0.672, 0.696, 0.556, 0.758, 0.744, 0.794
Average APFDc, median (group): 0.671 (C), 0.729 (BC), 0.568 (D), 0.776 (AB), 0.774 (AB), 0.815 (A)
#B.Projs: 19, 9, 5, 18, 10, 31, 47
Hybridizing Helps

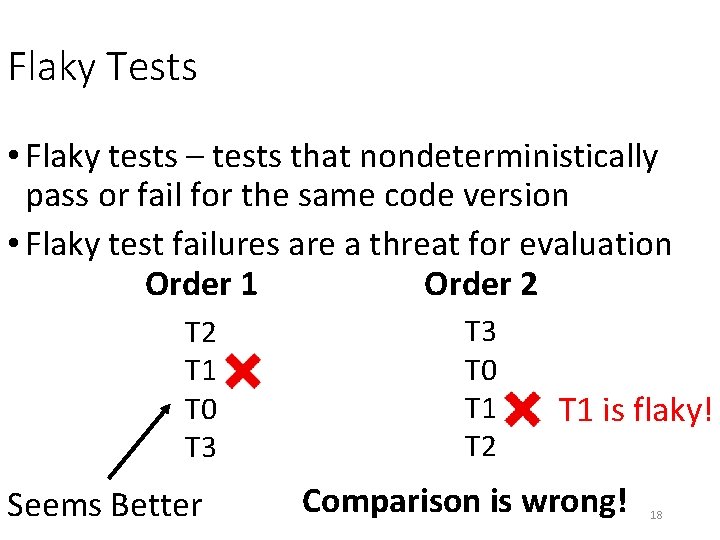

Flaky Tests
• Flaky tests: tests that nondeterministically pass or fail for the same code version
• Flaky test failures are a threat for evaluation
Diagram: two orderings of T0, T1, T2, T3 (Order 1 and Order 2). One order seems better, but T1 is flaky, so the comparison is wrong!

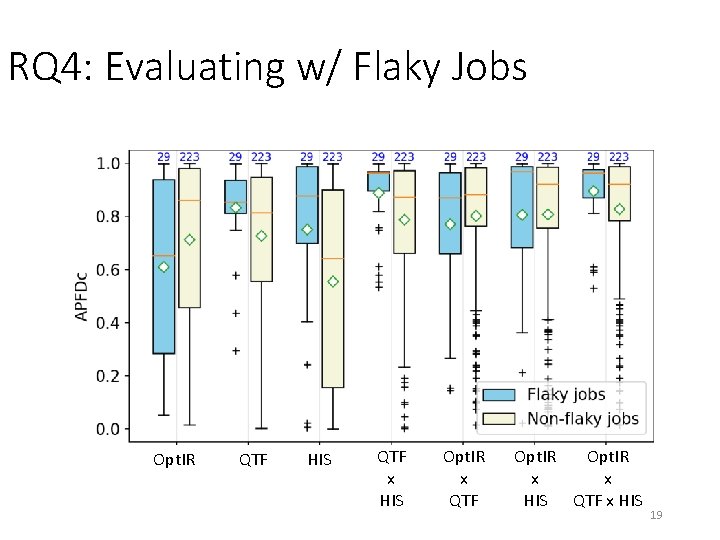

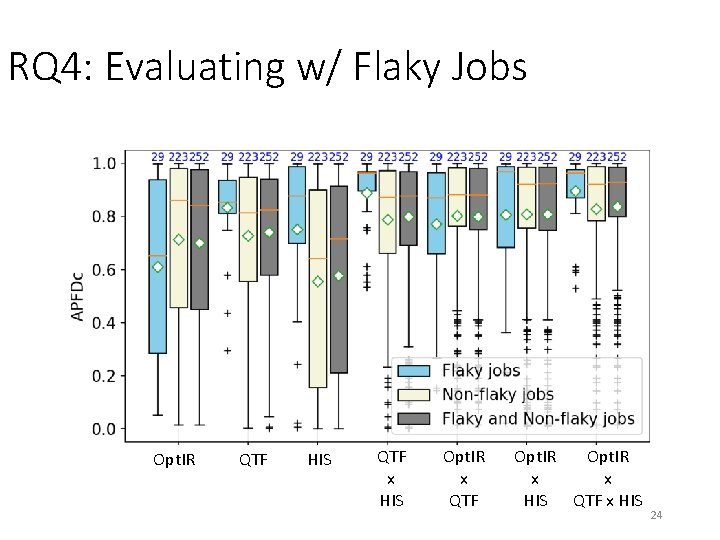

RQ 4: Evaluating w/ Flaky Jobs
Figure legend: OptIR, QTF, HIS, QTF x HIS, OptIR x QTF, OptIR x HIS, OptIR x QTF x HIS

Conclusions
https://sites.google.com/view/ir-based-tcp

BACKUP

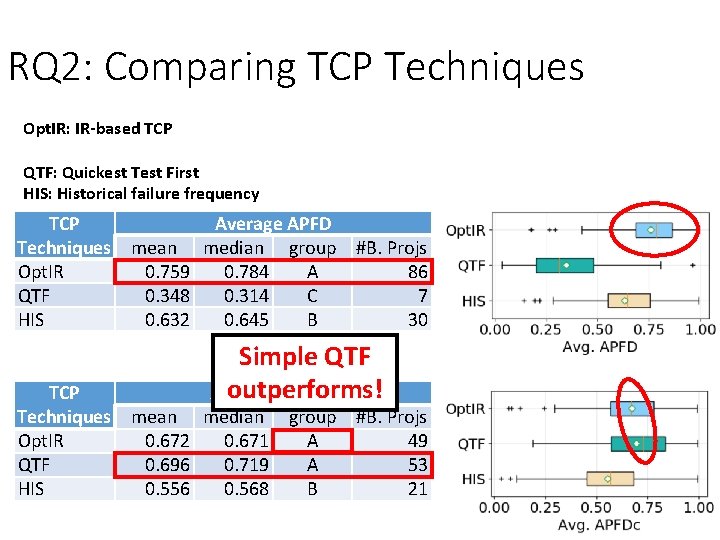

RQ 2: Comparing TCP Techniques
OptIR: IR-based TCP
QTF: Quickest Test First
HIS: Historical failure frequency

Average APFD

| TCP Technique | mean | median | group | #B.Projs |
|---|---|---|---|---|
| OptIR | 0.759 | 0.784 | A | 86 |
| QTF | 0.348 | 0.314 | C | 7 |
| HIS | 0.632 | 0.645 | B | 30 |

Average APFDc

| TCP Technique | mean | median | group | #B.Projs |
|---|---|---|---|---|
| OptIR | 0.672 | 0.671 | A | 49 |
| QTF | 0.696 | 0.719 | A | 53 |
| HIS | 0.556 | 0.568 | B | 21 |

Simple QTF outperforms!

Compare between Flaky and Non-Flaky
• Divide up dataset between flaky jobs and non-flaky jobs
• Consider jobs with single test failures
• Rerun jobs 6 times on Travis CI
• Flaky job if the test failure is a flaky test
• 252 total jobs: 29 flaky jobs, 223 non-flaky jobs
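The split described above can be sketched as a small classification step. This assumes the rerun outcomes of the originally failing test are available as a list of pass/fail booleans, and it treats the failure as flaky if the test passed in at least one rerun of the same code version; the exact criterion used by the authors is in the paper, and the names below are hypothetical.

```python
from typing import Dict, List


def is_flaky_failure(rerun_passed: List[bool]) -> bool:
    """The test failed originally; a pass in any rerun makes the failure flaky."""
    return any(rerun_passed)


def split_jobs(reruns: Dict[str, List[bool]]) -> Dict[str, List[str]]:
    """Partition job ids into flaky and non-flaky by their rerun outcomes."""
    groups: Dict[str, List[str]] = {"flaky": [], "non_flaky": []}
    for job_id, outcomes in reruns.items():
        key = "flaky" if is_flaky_failure(outcomes) else "non_flaky"
        groups[key].append(job_id)
    return groups


if __name__ == "__main__":
    reruns = {
        "job-1": [False] * 6,                               # fails every time
        "job-2": [False, True, False, False, True, False],  # sometimes passes
    }
    print(split_jobs(reruns))
```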

RQ 4: Evaluating w/ Flaky Jobs
Figure legend: OptIR, QTF, HIS, QTF x HIS, OptIR x QTF, OptIR x HIS, OptIR x QTF x HIS
