DISTRIBUTED MUTEX EE 324 Lecture 11 Vector Clocks


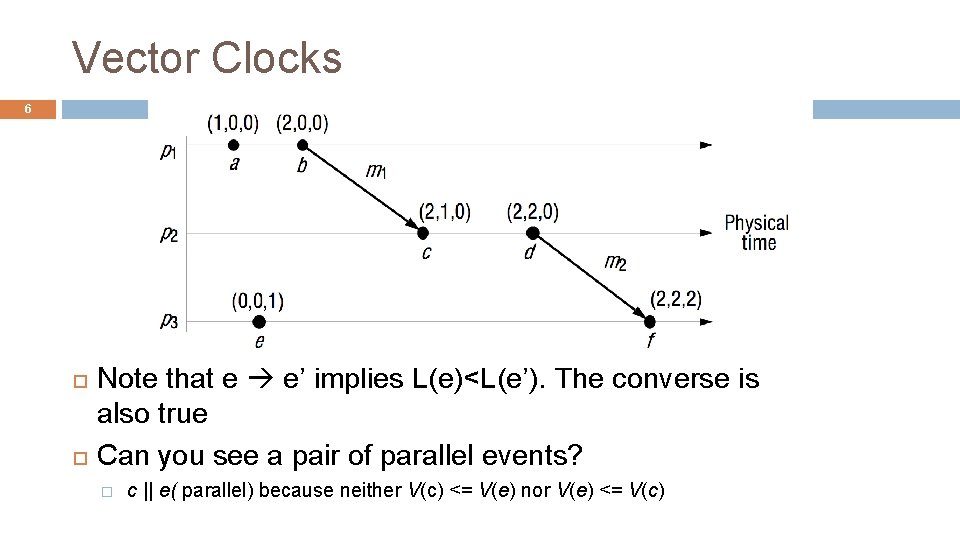

Vector Clocks
Vector clocks overcome the shortcoming of Lamport logical clocks:
- L(e) < L(e') does not imply that e happened before e'
Goal:
- We want an ordering that matches causality
- V(e) < V(e') if and only if e → e'
Method:
- Label each event e by a vector V(e) = [c_1, c_2, …, c_n], where c_i = # events in process i that causally precede e (including e itself if e occurs at process i)

![Vector Clock Algorithm](http://slidetodoc.com/presentation_image_h2/de67c2a5e6fec4ad10cd70a7800d2d6e/image-3.jpg)

Vector Clock Algorithm
- Initially, all vectors are [0, 0, …, 0]
- For each event on process i, increment its own entry c_i
- Label each message sent with the local vector
- When process j receives a message with vector [d_1, d_2, …, d_n]:
  - Set each local entry k to max(c_k, d_k)
  - Increment c_j
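The algorithm above can be sketched in Python as follows. This is a minimal illustration, not the lecture's code: the class name `VectorClock`, its method names, and the use of 0-based process indices (p1 is index 0, p2 is index 1) are assumptions. The usage at the bottom replays the exchange of m1 between p1 and p2 from the lecture's worked example.

```python
from typing import List, Tuple


class VectorClock:
    """Vector clock held by process `pid` in a system of `n` processes.

    Process indices are 0-based here (an assumption): p1 -> 0, p2 -> 1, ...
    """

    def __init__(self, pid: int, n: int) -> None:
        self.pid = pid
        self.clock: List[int] = [0] * n  # initially [0, 0, ..., 0]

    def local_event(self) -> Tuple[int, ...]:
        # Any event on process i increments its own entry c_i.
        self.clock[self.pid] += 1
        return tuple(self.clock)

    def send(self) -> Tuple[int, ...]:
        # A send is an event too; the updated vector is piggybacked on the message.
        return self.local_event()

    def receive(self, msg_vector: Tuple[int, ...]) -> Tuple[int, ...]:
        # Entry-wise max of the local vector and the received vector...
        self.clock = [max(c, d) for c, d in zip(self.clock, msg_vector)]
        # ...then increment our own entry c_j for the receive event.
        self.clock[self.pid] += 1
        return tuple(self.clock)


if __name__ == "__main__":
    p1, p2 = VectorClock(0, 3), VectorClock(1, 3)
    print(p1.local_event())  # event a: (1, 0, 0)
    m1 = p1.send()           # event b: (2, 0, 0), piggybacked on m1
    print(m1)
    print(p2.receive(m1))    # receipt of m1 at p2: (2, 1, 0)
```

Treating a send as an ordinary local event (increment, then attach the vector) is what makes b carry (2, 0, 0) on m1.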

Vector Clocks
- Vector timestamps are used to timestamp local events.
- They are applied in schemes for replication of data.

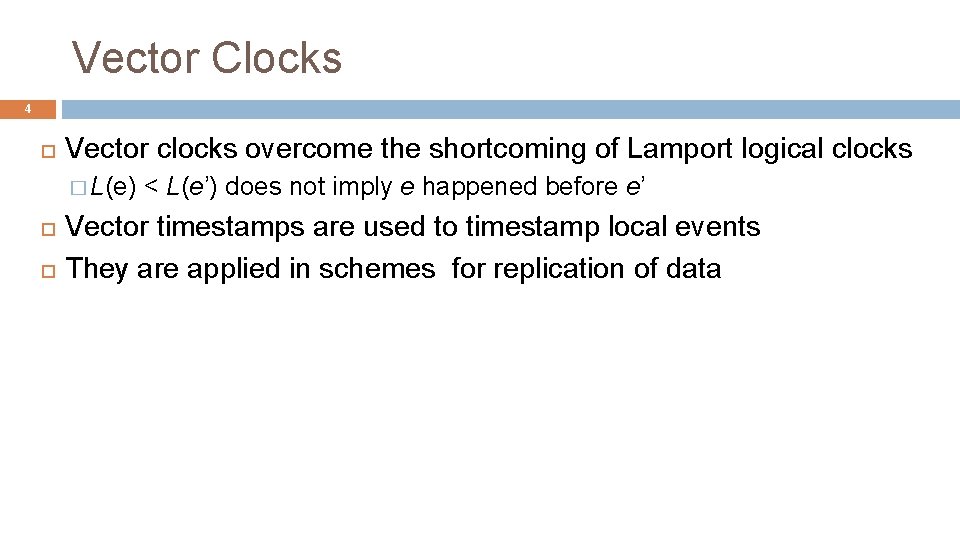

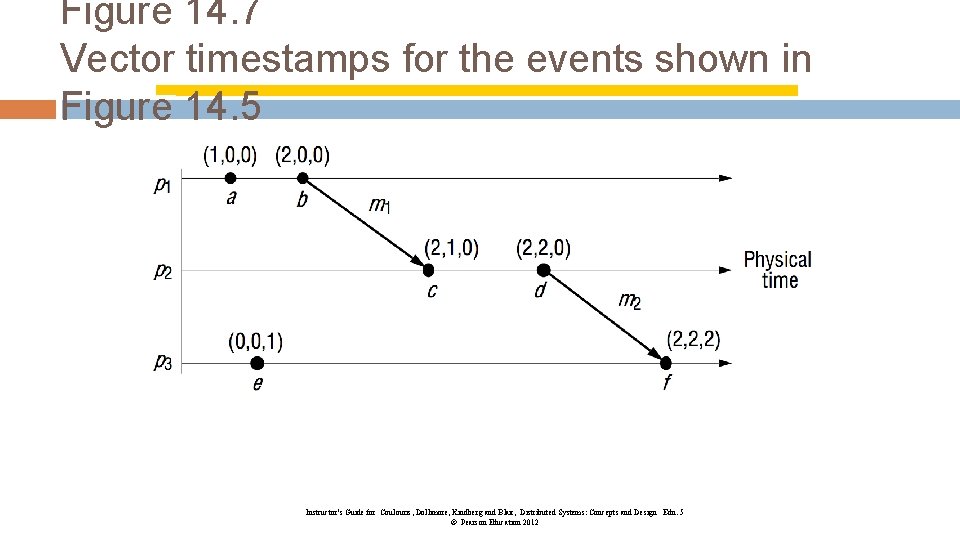

Vector Clocks
At p1:
- a occurs at (1, 0, 0); b occurs at (2, 0, 0)
- piggyback (2, 0, 0) on m1
At p2, on receipt of m1, use max((0, 0, 0), (2, 0, 0)) = (2, 0, 0) and add 1 to its own element: (2, 1, 0)
Meaning of =, <=, max, etc. for vector timestamps:
- compare elements pairwise

Vector Clocks
Note that e → e' implies V(e) < V(e'). The converse is also true.
Can you see a pair of parallel events?
- c || e (parallel) because neither V(c) <= V(e) nor V(e) <= V(c)
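Comparing vector timestamps pairwise can be sketched as below. The function names are hypothetical, and both vectors are assumed to have the same length n. The example values are assumptions for illustration: c is taken to be the receipt of m1 at p2, (2, 1, 0), and e a first event at p3, (0, 0, 1).

```python
from typing import Sequence


def leq(v: Sequence[int], w: Sequence[int]) -> bool:
    # V <= V' iff every entry of V is <= the corresponding entry of V'.
    return all(a <= b for a, b in zip(v, w))


def happened_before(v: Sequence[int], w: Sequence[int]) -> bool:
    # V < V' iff V <= V' and V != V'; with vector clocks this holds iff e -> e'.
    return leq(v, w) and tuple(v) != tuple(w)


def concurrent(v: Sequence[int], w: Sequence[int]) -> bool:
    # e || e' iff neither V(e) <= V(e') nor V(e') <= V(e).
    return not leq(v, w) and not leq(w, v)


if __name__ == "__main__":
    b = (2, 0, 0)  # send of m1 at p1
    c = (2, 1, 0)  # assumed: receipt of m1 at p2
    e = (0, 0, 1)  # assumed: first event at p3
    print(happened_before(b, c))  # True
    print(concurrent(c, e))       # True
```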

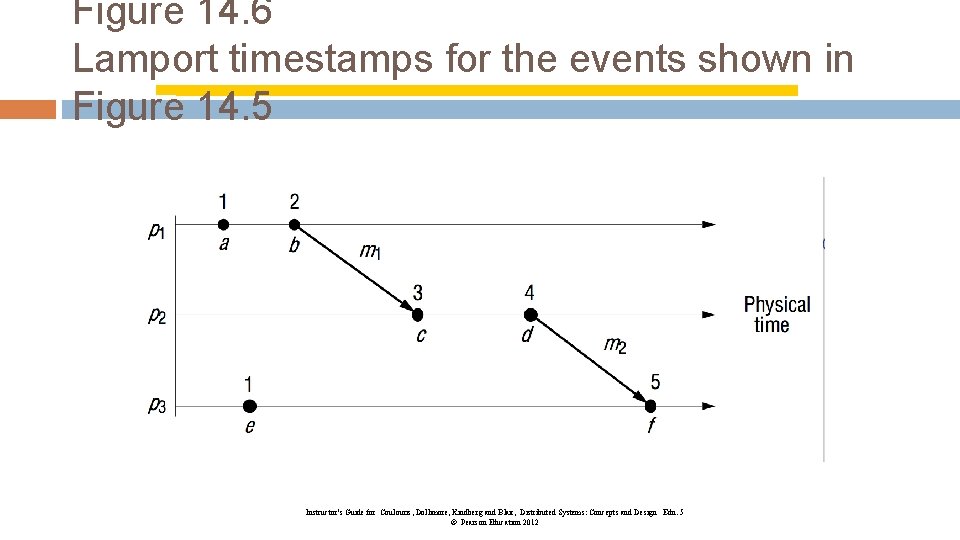

Figure 14.6: Lamport timestamps for the events shown in Figure 14.5. Instructor's Guide for Coulouris, Dollimore, Kindberg and Blair, Distributed Systems: Concepts and Design Edn. 5 © Pearson Education 2012

Figure 14.7: Vector timestamps for the events shown in Figure 14.5.

Logical clocks, including vector clocks, don't capture everything: e.g., out-of-band communication.

Where is the vector clock used?
- Data replication
  - Bayou: http://www.cs.berkeley.edu/~brewer/cs262b/updateconflicts.pdf
  - Amazon Dynamo DB: https://www.youtube.com/watch?v=oz-7wJJ9HZ0, https://www.youtube.com/watch?v=meBjA68DeIU, http://www.allthingsdistributed.com/files/amazon-dynamo-sosp2007.pdf (pages 210 and 211)

Distributed Mutex (Reading: CDK 5 15.2)
We learned about mutexes, semaphores, and CVs within a single system.
- What do they have in common?
- They require shared state, and we kept it in memory.
Distributed mutex
- No shared memory
- How do we implement it? Message passing
- Challenges: messages can be dropped and processes can fail.

Distributed Mutex (Reading: CDK 5 15.2)
Entering/leaving a critical section:
- Enter(): block if necessary
- ResourceAccesses(): access the shared resource (inside the CS)
- Leave()
Goals:
- Safety: at most one process may execute in the CS at a time.
- Liveness: requests to enter/exit the CS eventually succeed (no deadlock or starvation).
- Ordering: if one entry request "happened before" another, then entry to the CS must happen in that order.

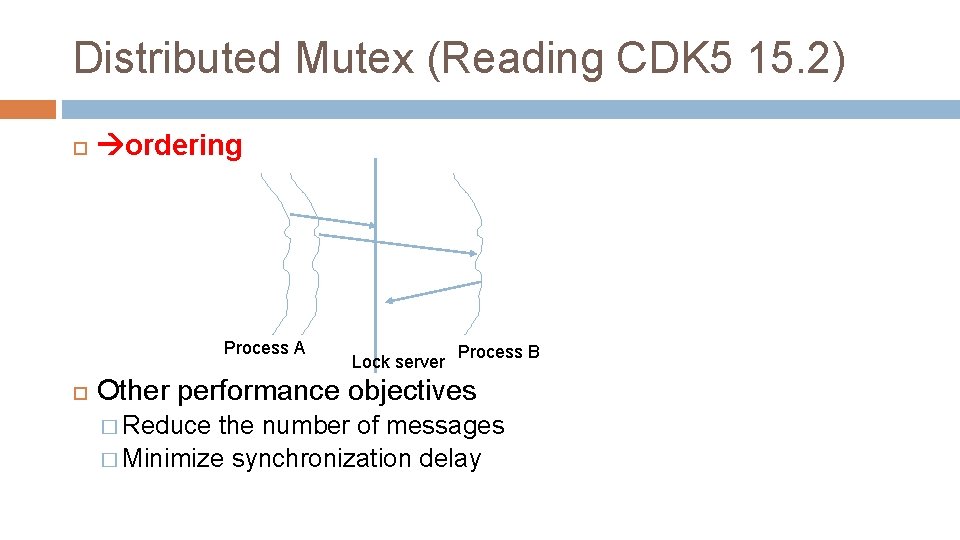

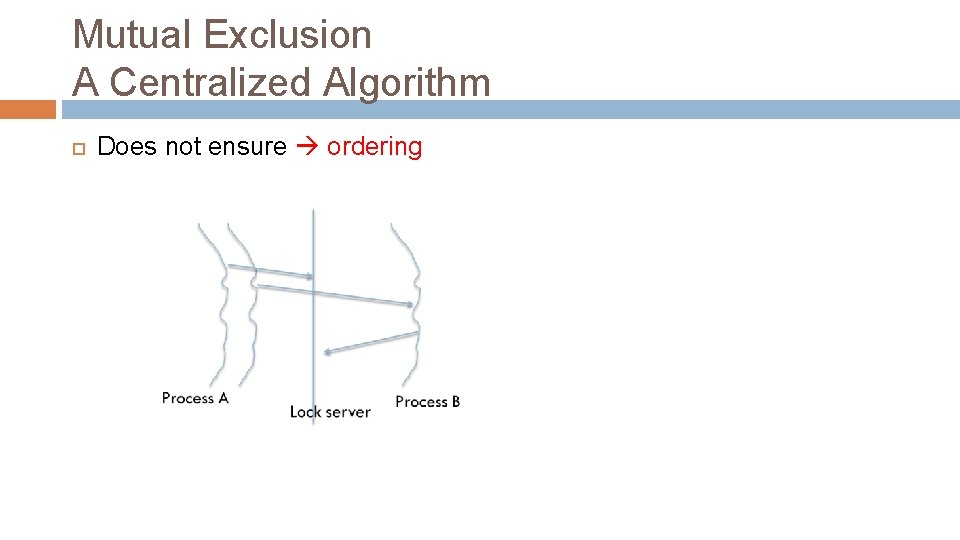

Distributed Mutex (Reading: CDK 5 15.2)
[Figure: ordering example with Process A, a lock server, and Process B]
Other performance objectives:
- Reduce the number of messages
- Minimize synchronization delay

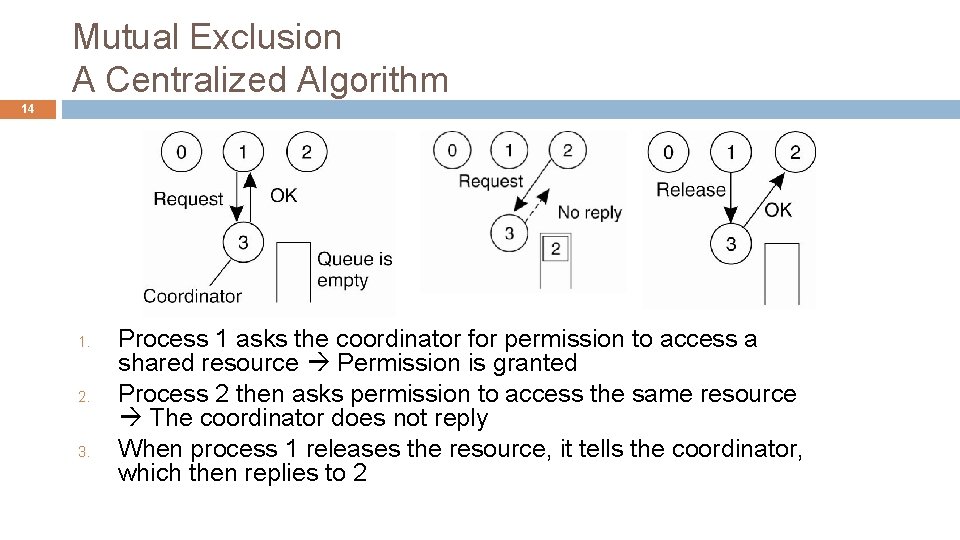

Mutual Exclusion: A Centralized Algorithm
1. Process 1 asks the coordinator for permission to access a shared resource. Permission is granted.
2. Process 2 then asks permission to access the same resource. The coordinator does not reply.
3. When process 1 releases the resource, it tells the coordinator, which then replies to 2.
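A minimal Python sketch of the coordinator's side of these three steps follows. The class name, method names, and the FIFO waiting queue are assumptions, not part of the lecture; the `sent` list simply records the grants the coordinator would send.

```python
from collections import deque
from typing import Deque, List, Optional


class Coordinator:
    """Central lock server: at most one holder; later requesters wait in FIFO order."""

    def __init__(self) -> None:
        self.holder: Optional[int] = None
        self.waiting: Deque[int] = deque()
        self.sent: List[str] = []  # messages the coordinator sends (for inspection)

    def on_request(self, pid: int) -> None:
        if self.holder is None:
            self.holder = pid
            self.sent.append(f"GRANT -> {pid}")  # permission is granted
        else:
            self.waiting.append(pid)  # resource busy: queue it and send no reply

    def on_release(self, pid: int) -> None:
        if pid != self.holder:
            raise ValueError(f"process {pid} does not hold the mutex")
        self.holder = None
        if self.waiting:  # hand the mutex to the next queued requester
            self.holder = self.waiting.popleft()
            self.sent.append(f"GRANT -> {self.holder}")


if __name__ == "__main__":
    coord = Coordinator()
    coord.on_request(1)   # step 1: granted immediately
    coord.on_request(2)   # step 2: no reply, process 2 is queued
    coord.on_release(1)   # step 3: coordinator now replies to 2
    print(coord.sent)     # ['GRANT -> 1', 'GRANT -> 2']
```

Because this coordinator queues requests in the order they arrive, a request that happened before another but is delayed in the network can still be granted later, which is why the scheme does not ensure ordering.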

Mutual Exclusion: A Centralized Algorithm
Advantages:
- Simple, small delay (one RTT) to acquire the mutex
- Only 3 messages required to enter and leave the critical section (request, grant, release)
- Safety and liveness are met (assuming the central server does not fail)
Disadvantages:
- Single point of failure
  - How do we make the system robust to failure? Elect a new master when one fails.
  - Must elect a master in a consistent fashion (how??)
- Central performance bottleneck
- Does not ensure ordering (example?)

Mutual Exclusion: A Centralized Algorithm
Does not ensure ordering

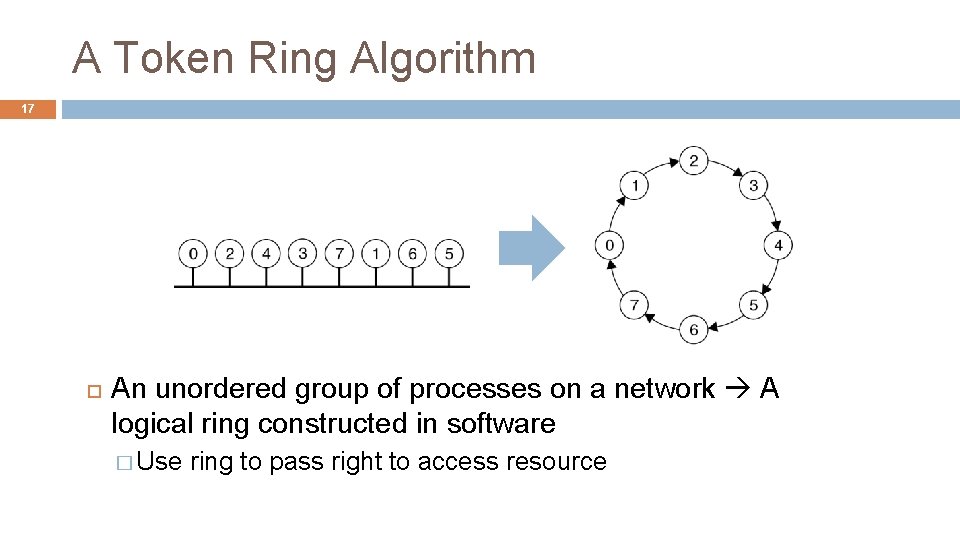

A Token Ring Algorithm
- An unordered group of processes on a network
- A logical ring constructed in software
  - Use the ring to pass the right to access the resource
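The token-passing idea can be sketched as a single circulation of the token. The function name, the 0-based ring positions, and the choice of which process starts with the token are assumptions for illustration.

```python
from typing import List, Set


def token_ring_pass(n: int, wants_cs: Set[int], start: int = 0) -> List[int]:
    """Circulate the token once around a logical ring of processes 0..n-1.

    Returns the order in which processes that want the CS get to enter it.
    """
    entered: List[int] = []
    holder = start  # assumed: this process holds the token initially
    for _ in range(n):
        if holder in wants_cs:
            # Only the token holder may enter; it leaves the CS before passing on.
            entered.append(holder)
        holder = (holder + 1) % n  # pass the token to the successor in the ring
    return entered


if __name__ == "__main__":
    # Even if process 3 asked before process 1, the ring visits 1 first.
    print(token_ring_pass(5, {3, 1}))  # [1, 3]
```

The CS is entered in ring order, not request order, and a process that just missed the token may wait while it travels through up to N-1 other processes.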

A Token Ring Algorithm
Benefits: simple
Problems:
- Failure recovery can be difficult.
  - A single process failure can break the ring.
  - However, a failure can be recovered from by dropping the failed process from the logical ring.
- Does not ensure ordering
- Long synchronization delay: may need to wait for up to N-1 messages, for N processes

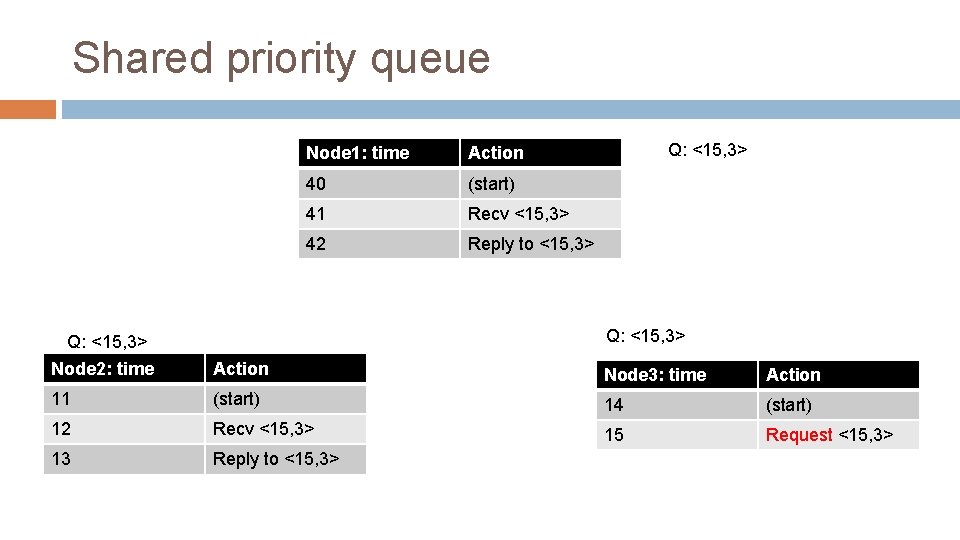

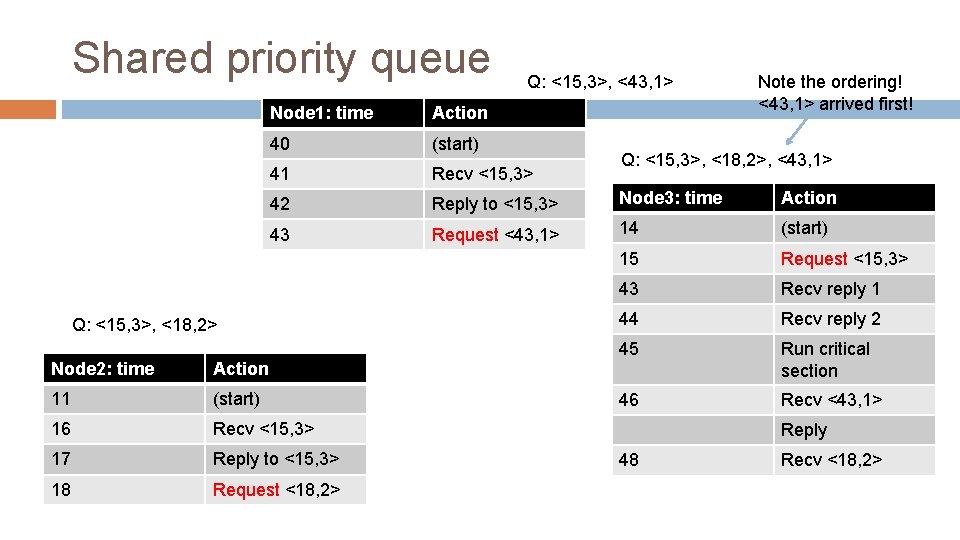

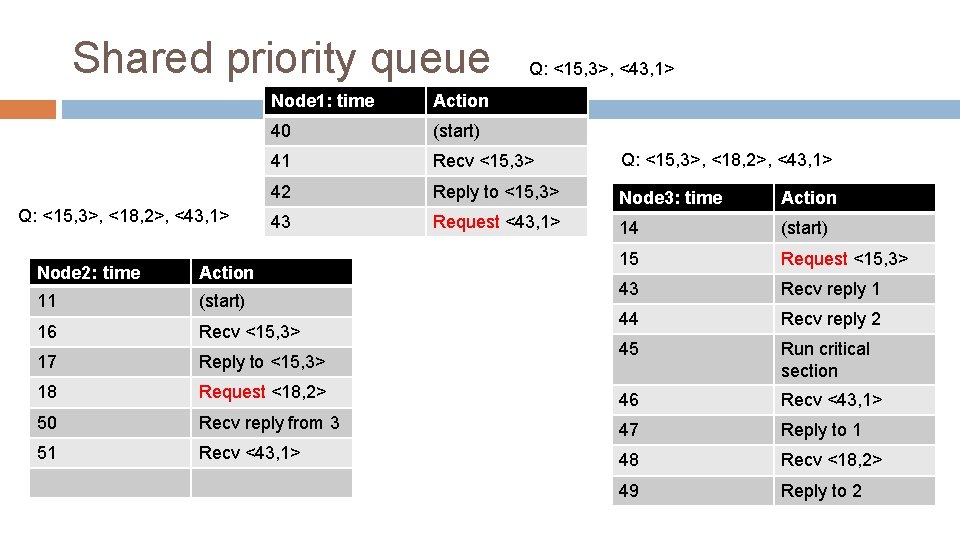

Lamport's Shared Priority Queue
Maintain a (logically) global priority queue of requests for the critical section, but each process keeps its own copy of the queue.
The ordering inside the queues is enforced by Lamport's clock:
- The request with the earlier timestamp appears first in the queue.
- Thus, we enforce ordering.
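A per-process queue ordered by Lamport timestamp can be sketched with Python's `heapq`. Representing a request as a `(timestamp, process id)` tuple, so that equal timestamps are broken by process id, is an assumption made here to get a total order; the function name is hypothetical.

```python
import heapq
from typing import Iterable, List, Tuple

Request = Tuple[int, int]  # (Lamport timestamp, process id)


def queue_order(arrivals: Iterable[Request]) -> List[Request]:
    """Insert requests in arrival order; return them in priority-queue order."""
    q: List[Request] = []
    for req in arrivals:
        heapq.heappush(q, req)  # tuples compare by timestamp, then by process id
    return [heapq.heappop(q) for _ in range(len(q))]


if __name__ == "__main__":
    # <43, 1> arrives before <18, 2>, but the earlier timestamp goes first.
    print(queue_order([(15, 3), (43, 1), (18, 2)]))  # [(15, 3), (18, 2), (43, 1)]
```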

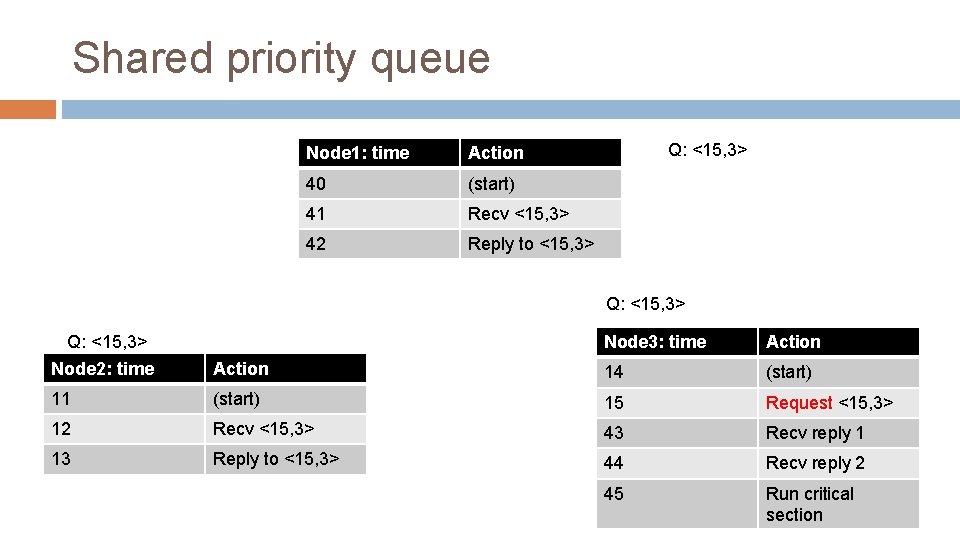

Lamport's Shared Priority Queue
Each process i locally maintains Q_i (its own version of the priority queue).
To execute the critical section, you must have replies from all other processes AND your request must be at the front of Q_i.
When you have all replies:
- All other processes are aware of your request (because the request happens before the response).
- You are aware of any earlier requests (assuming messages from the same process are not reordered).

Lamport's Shared Priority Queue
To enter the critical section at process i:
- Stamp your request with the current time T
- Add the request to Q_i
- Broadcast REQUEST(T) to all processes
- Wait for all replies and for T to reach the front of Q_i
To leave:
- Pop the head of Q_i and broadcast RELEASE to all processes
On receipt of REQUEST(T') from process k:
- Add T' to Q_i
- If waiting for a REPLY from k for an earlier request T, wait until k replies to you
- Otherwise, REPLY
On receipt of RELEASE:
- Pop the head of Q_i
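The steps above can be put together in a small single-threaded simulation. This is a sketch under stated assumptions, not the lecture's implementation: `Network` and `Process` are hypothetical names, messages are delivered one at a time in FIFO order (so messages from the same process are never reordered), processes never fail, requests are `(timestamp, process id)` tuples, and the deferred-REPLY branch follows the "wait until k replies to you" step.

```python
import heapq
from collections import deque
from typing import Deque, Dict, List, Optional, Set, Tuple

Req = Tuple[int, int]  # (Lamport timestamp, process id)


class Network:
    """Delivers messages one at a time in global FIFO order (so each channel is FIFO)."""

    def __init__(self) -> None:
        self.inbox: Deque[Tuple[str, int, int, int]] = deque()  # (kind, T, src, dst)
        self.procs: Dict[int, "Process"] = {}

    def send(self, kind: str, t: int, src: int, dst: int) -> None:
        self.inbox.append((kind, t, src, dst))

    def run(self) -> None:
        while self.inbox:
            kind, t, src, dst = self.inbox.popleft()
            self.procs[dst].on_message(kind, t, src)


class Process:
    def __init__(self, pid: int, n: int, net: Network) -> None:
        self.pid, self.net = pid, net
        self.peers = [k for k in range(n) if k != pid]
        self.clock = 0
        self.q: List[Req] = []  # Q_i, kept as a min-heap
        self.my_req: Optional[Req] = None
        self.replies: Set[int] = set()
        self.deferred: Set[int] = set()  # peers whose REPLY we are holding back
        net.procs[pid] = self

    def tick(self, seen: int = 0) -> int:
        self.clock = max(self.clock, seen) + 1  # Lamport clock update
        return self.clock

    def request(self) -> None:
        t = self.tick()
        self.my_req = (t, self.pid)
        heapq.heappush(self.q, self.my_req)
        self.replies = set()
        for k in self.peers:
            self.net.send("REQUEST", t, self.pid, k)

    def can_enter(self) -> bool:
        # All replies received AND our request is at the front of Q_i.
        return (self.my_req is not None and self.replies == set(self.peers)
                and self.q[0] == self.my_req)

    def release(self) -> None:
        heapq.heappop(self.q)  # our own request is the head of Q_i
        self.my_req = None
        t = self.tick()
        for k in self.peers:
            self.net.send("RELEASE", t, self.pid, k)

    def reply(self, k: int) -> None:
        self.net.send("REPLY", self.tick(), self.pid, k)

    def on_message(self, kind: str, t: int, src: int) -> None:
        self.tick(t)
        if kind == "REQUEST":
            heapq.heappush(self.q, (t, src))
            waiting_on_src = self.my_req is not None and src not in self.replies
            if waiting_on_src and self.my_req < (t, src):
                self.deferred.add(src)  # our request is earlier: reply after src does
            else:
                self.reply(src)
        elif kind == "REPLY":
            self.replies.add(src)
            if src in self.deferred:  # src has replied, so send the REPLY we held back
                self.deferred.discard(src)
                self.reply(src)
        elif kind == "RELEASE":
            # The slide pops the head of Q_i; removing src's entry should match it here.
            self.q = [r for r in self.q if r[1] != src]
            heapq.heapify(self.q)


if __name__ == "__main__":
    net = Network()
    procs = [Process(i, 3, net) for i in range(3)]
    procs[2].request()
    procs[1].request()
    net.run()
    print([p.pid for p in procs if p.can_enter()])  # [1]
    procs[1].release()
    net.run()
    print([p.pid for p in procs if p.can_enter()])  # [2]
```

In this run process 2 requests first, but both requests carry timestamp 1, so the tie goes to the lower process id and process 1 enters; process 2 enters only after process 1's RELEASE removes its entry from every queue.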

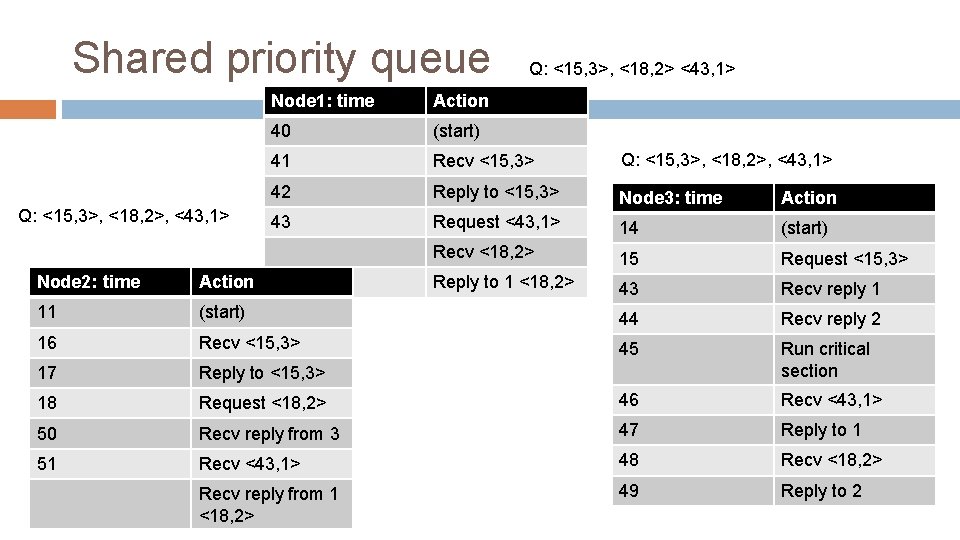

Shared priority queue
- Node 1: 40 (start); 41 Recv <15, 3>; 42 Reply to <15, 3>. Q: <15, 3>
- Node 2: 11 (start); 16 Recv <15, 3>; 17 Reply to <15, 3>
- Node 3: 14 (start); 15 Request <15, 3>

Shared priority queue
- Node 1: 40 (start); 41 Recv <15, 3>; 42 Reply to <15, 3>. Q: <15, 3>
- Node 2: 11 (start); 16 Recv <15, 3>; 17 Reply to <15, 3>
- Node 3: 14 (start); 15 Request <15, 3>; 43 Recv reply 1; 44 Recv reply 2; 45 Run critical section

Shared priority queue
- Node 1: 40 (start); 41 Recv <15, 3>; 42 Reply to <15, 3>; 43 Request <43, 1>. Q: <15, 3>, <43, 1>
- Node 2: 11 (start); 16 Recv <15, 3>; 17 Reply to <15, 3>; 18 Request <18, 2>. Q: <15, 3>, <18, 2>
- Node 3: 14 (start); 15 Request <15, 3>; 43 Recv reply 1; 44 Recv reply 2; 45 Run critical section; 46 Recv <43, 1>; 47 Reply to 1; 48 Recv <18, 2>. Q: <15, 3>, <18, 2>, <43, 1>
Note the ordering at node 3: <43, 1> arrived first, yet <18, 2> is ahead of it in Q.

Shared priority queue
- Node 1: 40 (start); 41 Recv <15, 3>; 42 Reply to <15, 3>; 43 Request <43, 1>. Q: <15, 3>, <43, 1>
- Node 2: 11 (start); 16 Recv <15, 3>; 17 Reply to <15, 3>; 18 Request <18, 2>; 50 Recv reply from 3; 51 Recv <43, 1>. Q: <15, 3>, <18, 2>, <43, 1>
- Node 3: 14 (start); 15 Request <15, 3>; 43 Recv reply 1; 44 Recv reply 2; 45 Run critical section; 46 Recv <43, 1>; 47 Reply to 1; 48 Recv <18, 2>; 49 Reply to 2. Q: <15, 3>, <18, 2>, <43, 1>

Shared priority queue
- Node 1: 40 (start); 41 Recv <15, 3>; 42 Reply to <15, 3>; 43 Request <43, 1>; Recv <18, 2>; Reply to <18, 2>. Q: <15, 3>, <18, 2>, <43, 1>
- Node 2: 11 (start); 16 Recv <15, 3>; 17 Reply to <15, 3>; 18 Request <18, 2>; 50 Recv reply from 3; 51 Recv <43, 1>; Recv reply from 1. Q: <15, 3>, <18, 2>, <43, 1>
- Node 3: 14 (start); 15 Request <15, 3>; 43 Recv reply 1; 44 Recv reply 2; 45 Run critical section; 46 Recv <43, 1>; 47 Reply to 1; 48 Recv <18, 2>; 49 Reply to 2. Q: <15, 3>, <18, 2>, <43, 1>
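The timestamps in this example follow the usual Lamport clock rules. The sketch below (the function names are hypothetical) recomputes a few of them, assuming each REPLY carries the timestamp of the event that sent it: 42 for node 1's reply and 17 for node 2's reply.

```python
def on_local(clock: int) -> int:
    # A local event (including sending a message) just increments the clock.
    return clock + 1


def on_receive(clock: int, msg_ts: int) -> int:
    # On receipt, jump past both the local clock and the message's timestamp.
    return max(clock, msg_ts) + 1


if __name__ == "__main__":
    # Node 2 starts at 11 and receives <15, 3>.
    print(on_receive(11, 15))  # 16
    # Node 3 starts at 14, requests, then gets replies stamped 42 and 17.
    t = on_local(14)           # 15: Request <15, 3>
    t = on_receive(t, 42)      # 43: Recv reply 1
    t = on_receive(t, 17)      # 44: Recv reply 2
    print(t)                   # 44
```

The same rule explains node 2's later entries: a reply stamped 49 arriving at local time 18 gives 50, and <43, 1> arriving next gives 51.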

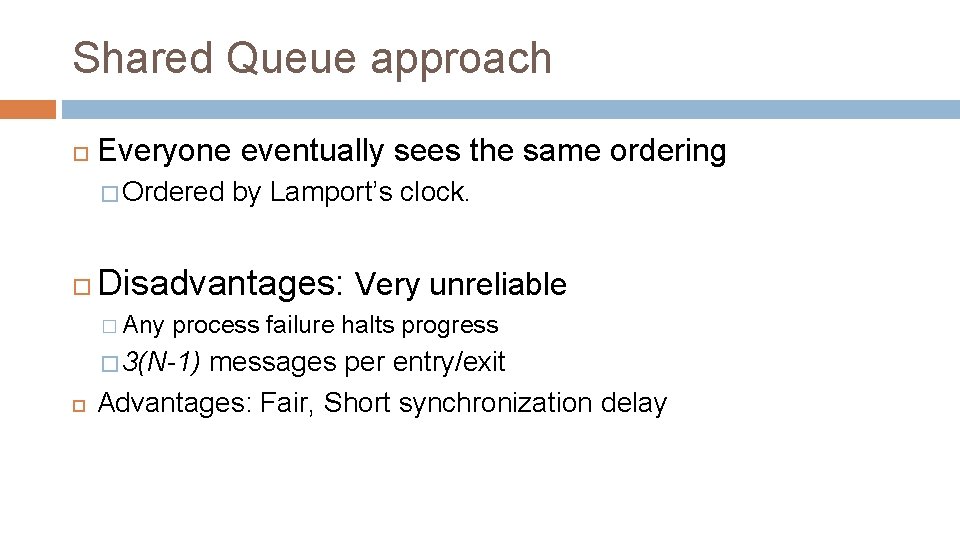

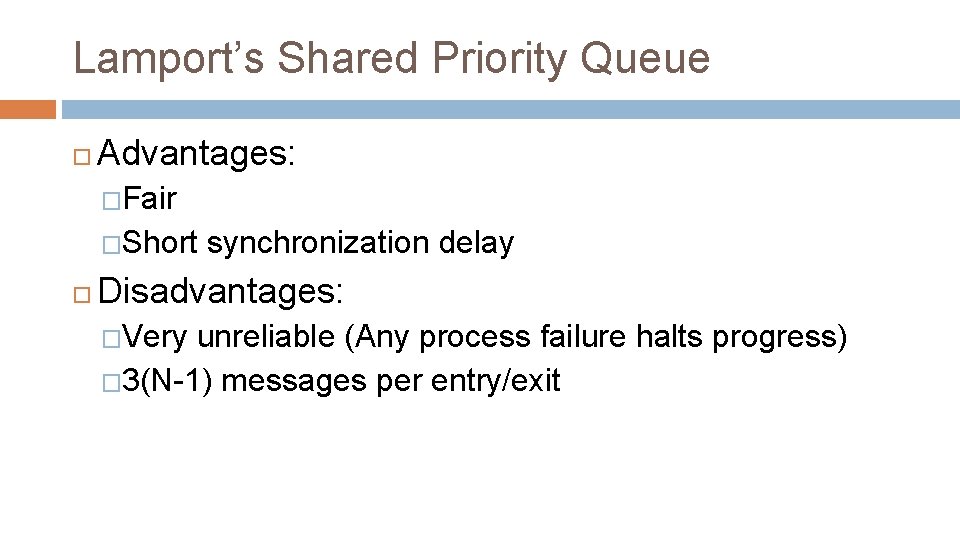

Shared Queue approach
- Everyone eventually sees the same ordering
  - Ordered by Lamport's clock
Disadvantages:
- Very unreliable: any process failure halts progress
- 3(N-1) messages per entry/exit (N-1 REQUESTs, N-1 REPLYs, and N-1 RELEASEs)
Advantages: fair, short synchronization delay

