
Distributed Dynamic Correctness Testing of MPI Programs

Bronis R. de Supinski and Jeffrey S. Vetter

Center for Applied Scientific Computing (CASC)

August 21, 2002

Umpire
- MPI Standard
  - Large and complex
  - Powerful
  - “Unsafe” or “erroneous” usage compiles and runs
- Writing correct MPI programs is hard
- Detect MPI usage errors that occur at runtime
  - Automatically
  - Dynamically
  - Unobtrusively
- Distributed memory, asynchronous, library

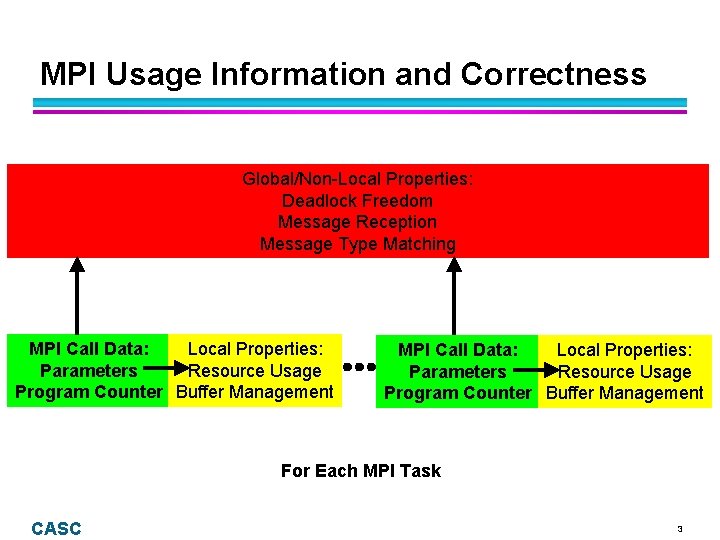

MPI Usage Information and Correctness
- Global/Non-Local Properties:
  - Deadlock Freedom
  - Message Reception
  - Message Type Matching
- For Each MPI Task:
  - MPI Call Data: Parameters, Program Counter
  - Local Properties: Resource Usage, Buffer Management

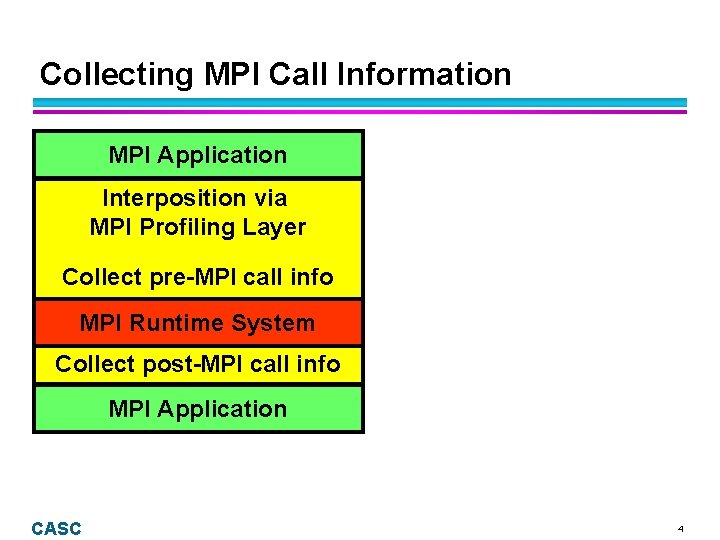

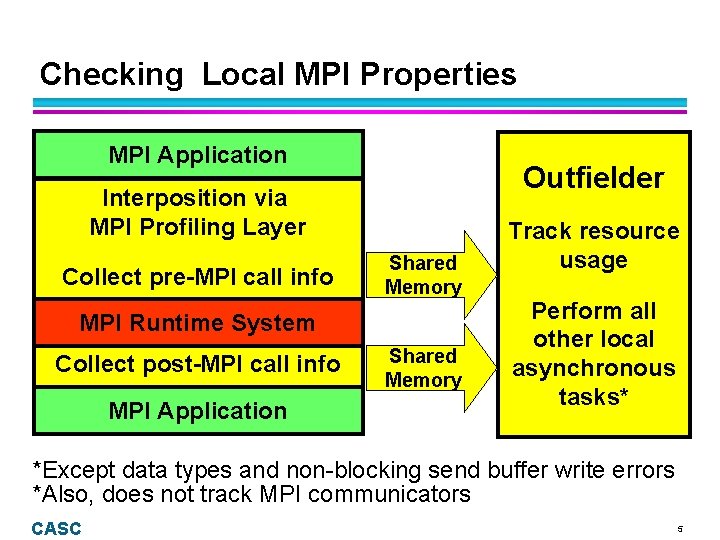

Collecting MPI Call Information
- Interposition via MPI Profiling Layer
- Flow of one call:
  - MPI Application
  - Collect pre-MPI call info
  - MPI Runtime System
  - Collect post-MPI call info
  - MPI Application
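The interposition pattern can be sketched in plain C. The MPI profiling interface lets a tool define its own `MPI_Send` and forward to the name-shifted `PMPI_Send`. To keep this sketch compilable without an MPI installation, `stub_PMPI_Send`, `traced_send`, and `struct call_record` are hypothetical stand-ins, not Umpire's code, and the argument list is shortened.

```c
/* Hypothetical record of what the wrapper saw around one call. */
struct call_record {
    const char *name;   /* which MPI call was intercepted */
    int count;          /* pre-call info: element count */
    int dest;           /* pre-call info: destination rank */
    int return_code;    /* post-call info: value returned by the runtime */
    int pre_seen;       /* set once pre-call info is collected */
    int post_seen;      /* set once post-call info is collected */
};

/* Stand-in for the runtime's real entry point (PMPI_Send in an MPI library).
 * The real call also takes a datatype, a tag, and a communicator. */
static int stub_PMPI_Send(const void *buf, int count, int dest)
{
    (void)buf; (void)count; (void)dest;
    return 0; /* pretend the send succeeded */
}

/* Wrapper. In a real tool this function would be named MPI_Send, so the
 * application calls it without any source changes. */
int traced_send(const void *buf, int count, int dest, struct call_record *rec)
{
    /* Collect pre-MPI call info. */
    rec->name = "MPI_Send";
    rec->count = count;
    rec->dest = dest;
    rec->pre_seen = 1;

    /* Hand the call to the MPI runtime system. */
    int rc = stub_PMPI_Send(buf, count, dest);

    /* Collect post-MPI call info. */
    rec->return_code = rc;
    rec->post_seen = 1;
    return rc;
}
```

Calling `traced_send(data, 4, 1, &rec)` fills `rec` with the arguments before the call and the return code after it; the application sees the same return value it would get from the runtime directly.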

Checking Local MPI Properties
- Interposition via MPI Profiling Layer
- Flow of one call:
  - MPI Application
  - Collect pre-MPI call info (Shared Memory link to Outfielder)
  - MPI Runtime System
  - Collect post-MPI call info (Shared Memory link to Outfielder)
  - MPI Application
- Outfielder
  - Track resource usage
  - Perform all other local asynchronous tasks*

*Except data types and non-blocking send buffer write errors

*Also, does not track MPI communicators
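Resource tracking of this kind can be sketched as a small table of outstanding handles. This is an illustration under stated assumptions, not Umpire's implementation: handles are modeled as small integers, and `struct resource_table`, `on_create`, `on_release`, and `leaks_at_finalize` are hypothetical names.

```c
#include <string.h>

#define MAX_HANDLES 64

/* Hypothetical table: in_use[h] is 1 while handle h is outstanding. */
struct resource_table {
    unsigned char in_use[MAX_HANDLES];
};

void table_init(struct resource_table *t)
{
    memset(t->in_use, 0, sizeof t->in_use);
}

/* Record a newly created resource (for example a request returned by a
 * non-blocking call). Returns 0, or -1 for a bad or duplicate handle. */
int on_create(struct resource_table *t, int h)
{
    if (h < 0 || h >= MAX_HANDLES || t->in_use[h])
        return -1;
    t->in_use[h] = 1;
    return 0;
}

/* Record that a resource was completed or freed. Returns 0, or -1 if the
 * handle was never created or was already released. */
int on_release(struct resource_table *t, int h)
{
    if (h < 0 || h >= MAX_HANDLES || !t->in_use[h])
        return -1;
    t->in_use[h] = 0;
    return 0;
}

/* Count resources still outstanding when the program shuts down. */
int leaks_at_finalize(const struct resource_table *t)
{
    int leaks = 0;
    for (int h = 0; h < MAX_HANDLES; h++)
        leaks += t->in_use[h];
    return leaks;
}
```

A checker would call `on_create` and `on_release` from the post-call hooks and report any nonzero result from `leaks_at_finalize` as leaked resources.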

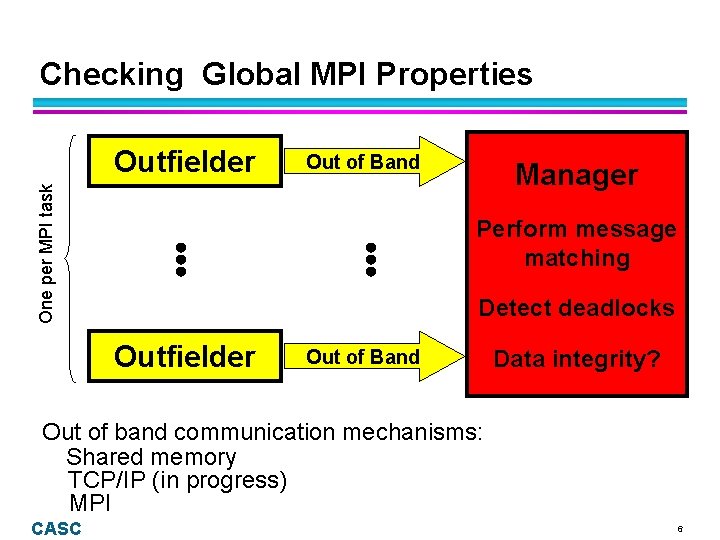

Checking Global MPI Properties
- Outfielder: one per MPI task
- Manager (connected to each Outfielder out of band)
  - Perform message matching
  - Detect deadlocks
  - Data integrity?
- Out of band communication mechanisms:
  - Shared memory
  - TCP/IP (in progress)
  - MPI

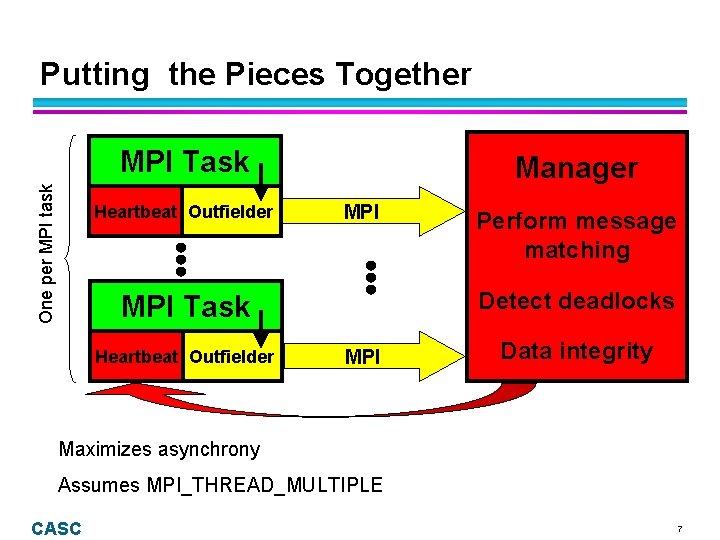

Putting the Pieces Together
- One Outfielder per MPI task, with a Heartbeat between each MPI Task and its Outfielder
- Outfielders connect to the Manager over MPI
- Manager
  - Detect deadlocks
  - Perform message matching
  - Data integrity
- Maximizes asynchrony
- Assumes MPI_THREAD_MULTIPLE

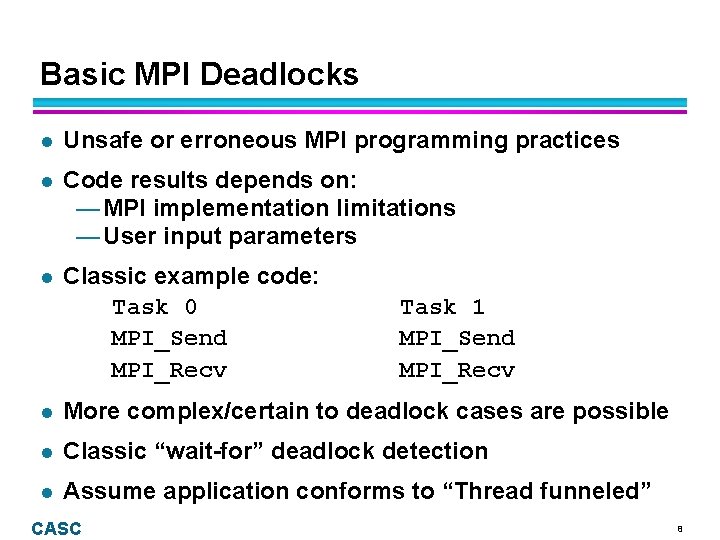

Basic MPI Deadlocks
- Unsafe or erroneous MPI programming practices
- Code results depend on:
  - MPI implementation limitations
  - User input parameters
- Classic example code:
  - Task 0: MPI_Send, then MPI_Recv
  - Task 1: MPI_Send, then MPI_Recv
- More complex/certain to deadlock cases are possible
- Classic “wait-for” deadlock detection
- Assume application conforms to “Thread funneled”
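Classic wait-for detection can be sketched as a cycle search over a graph with at most one outgoing edge per task. This is a minimal illustration, not Umpire's code: `find_deadlock_cycle` and the `waits_for` array are hypothetical, and the model assumes each blocked task waits on exactly one other task (as when both sends in the example above block because the implementation does not buffer them).

```c
/* waits_for[i] is the rank that task i is blocked on, or -1 if task i is
 * not blocked. Every non-negative entry must be a valid rank.
 * Returns the lowest rank that lies on a wait-for cycle, or -1 if none. */
int find_deadlock_cycle(const int *waits_for, int ntasks)
{
    for (int start = 0; start < ntasks; start++) {
        int cur = waits_for[start];
        /* A cycle through 'start' has at most ntasks edges, so ntasks
         * steps are enough to return to it if such a cycle exists. */
        for (int step = 0; step < ntasks && cur != -1; step++) {
            if (cur == start)
                return start;
            cur = waits_for[cur];
        }
    }
    return -1;
}
```

For the two-task example, Task 0 blocked in `MPI_Send` waits for Task 1 and vice versa, so `waits_for` is `{1, 0}` and the function reports rank 0. If one send completes (for instance because it was buffered), its entry becomes -1 and no cycle is found.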

Deadlocks with MPI Collectives
- Erroneous MPI programming practice
- Simple example code:
  - Tasks 0, 1, & 2: MPI_Bcast, then MPI_Barrier
  - Task 3: MPI_Barrier, then MPI_Bcast
- Possible code results:
  - Deadlock
  - Correct message matching
  - Incorrect message matching
  - Mysterious error messages
- “Wait-for” every task in communicator
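One simple way to flag this kind of error is to compare the order of collective calls across tasks. Note that this is a different, simpler technique than the wait-for approach named on the slide, shown only to make the example concrete; `enum collective` and `first_collective_mismatch` are hypothetical names, and the sketch assumes all tasks made the same number of calls on a single communicator.

```c
enum collective { COLL_BARRIER, COLL_BCAST };

/* calls holds ntasks rows of ncalls entries each, row-major:
 * calls[t * ncalls + k] is the k-th collective issued by task t.
 * Returns the index of the first call position at which some task
 * disagrees with task 0, or -1 if every task used the same order. */
int first_collective_mismatch(const enum collective *calls,
                              int ntasks, int ncalls)
{
    for (int k = 0; k < ncalls; k++) {
        for (int t = 1; t < ntasks; t++) {
            if (calls[t * ncalls + k] != calls[k])
                return k;
        }
    }
    return -1;
}
```

With the four-task example above (three tasks calling `MPI_Bcast` then `MPI_Barrier`, one calling them in the opposite order), the mismatch is reported at position 0, the very first collective.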

Non-blocking Completion Deadlocks
- Full completions: all requests must complete
  - MPI_Wait equivalent to blocking send/recv
  - MPI_Waitall essentially equivalent to collective
- Partial completions: (at least) one must complete
  - MPI_Waitany and MPI_Waitsome
  - Create “soft” wait-for relationships
  - Deadlock only if cycle for every request
- Implications of partial completions:
  - Minor modifications of recursive algorithm
  - Cannot report a single cycle when detected
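The difference between full and partial completions can be sketched with a fixed-point computation over "all" and "any" wait sets. This is a simplified model, not the recursive algorithm the slide refers to: it assumes a request can complete whenever the peer task it depends on can still make progress, and `struct task_state` and `count_deadlocked` are hypothetical names.

```c
#define MAX_TASKS 16

enum wait_mode {
    NOT_BLOCKED, /* task is running */
    WAIT_ALL,    /* full completion: every request must complete */
    WAIT_ANY     /* partial completion: at least one must complete */
};

struct task_state {
    enum wait_mode mode;
    int peers[MAX_TASKS]; /* ranks the outstanding requests depend on */
    int npeers;
};

/* Returns how many tasks can never be released, or -1 if n is too large. */
int count_deadlocked(const struct task_state *t, int n)
{
    int live[MAX_TASKS] = {0};
    int changed = 1;

    if (n > MAX_TASKS)
        return -1;
    for (int i = 0; i < n; i++)
        live[i] = (t[i].mode == NOT_BLOCKED);

    /* Repeatedly release blocked tasks whose wait condition is satisfied
     * by tasks already known to be able to progress. */
    while (changed) {
        changed = 0;
        for (int i = 0; i < n; i++) {
            if (live[i])
                continue;
            int nlive = 0;
            for (int k = 0; k < t[i].npeers; k++)
                nlive += live[t[i].peers[k]];
            int released = (t[i].mode == WAIT_ALL) ? (nlive == t[i].npeers)
                                                   : (nlive > 0);
            if (released) {
                live[i] = 1;
                changed = 1;
            }
        }
    }

    int dead = 0;
    for (int i = 0; i < n; i++)
        dead += !live[i];
    return dead;
}
```

A task in `WAIT_ANY` mode is released as soon as one peer is live, which is why a single cycle through it is not enough to declare deadlock: every one of its requests must lead back into the blocked set.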

MPI_ANY_SOURCE Receive Deadlocks
- Deadlock only if cycle for every task in communicator
- Complicates deadlock detection significantly
  - Must obtain actual source from implementation
  - Must make tentative matches
  - Timing dependent deadlocks
- Simple example code:
  - Task 0: MPI_Recv(ANY), MPI_Send(1), MPI_Recv(ANY)
  - Task 1: MPI_Send(0), MPI_Recv(0)
  - Task 2: MPI_Send(0)
- Umpire philosophy: detect errors that actually occur
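The timing dependence can be illustrated with a toy simulator. The sketch below rests on two assumptions that are not in the slides: the example is read as Task 0 issuing Recv(ANY), Send(1), Recv(ANY); Task 1 issuing Send(0), Recv(0); and Task 2 issuing Send(0); and every send is unbuffered, so it completes only together with its matching receive. All names (`struct op`, `runs_to_completion`, `slide_example_completes`) are hypothetical.

```c
#include <stddef.h>

#define ANY (-1)

enum kind { SEND, RECV };
struct op { enum kind kind; int peer; };
struct task { const struct op *ops; size_t n; size_t pc; };

/* True if task s is currently blocked in a send addressed to task d. */
static int sending_to(const struct task *t, int s, int d)
{
    return t[s].pc < t[s].n && t[s].ops[t[s].pc].kind == SEND
        && t[s].ops[t[s].pc].peer == d;
}

/* Pairs blocked sends with posted receives until nothing can move.
 * prefer_high picks which sender a wildcard receive matches when several
 * are ready. Returns 1 if every task finishes, 0 if some task is stuck. */
int runs_to_completion(struct task *t, int ntasks, int prefer_high)
{
    int progress = 1;
    while (progress) {
        progress = 0;
        for (int d = 0; d < ntasks; d++) {
            if (t[d].pc >= t[d].n || t[d].ops[t[d].pc].kind != RECV)
                continue;
            int want = t[d].ops[t[d].pc].peer;
            int src = -1;
            for (int s = 0; s < ntasks; s++) {
                if (s != d && (want == ANY || want == s)
                    && sending_to(t, s, d)) {
                    src = s;
                    if (!prefer_high)
                        break; /* lowest-ranked ready sender wins */
                }
            }
            if (src >= 0) { /* the send and the receive complete together */
                t[src].pc++;
                t[d].pc++;
                progress = 1;
            }
        }
    }
    for (int i = 0; i < ntasks; i++)
        if (t[i].pc < t[i].n)
            return 0;
    return 1;
}

/* Runs the three-task example under one wildcard matching order. */
int slide_example_completes(int prefer_high)
{
    static const struct op t0[] = { {RECV, ANY}, {SEND, 1}, {RECV, ANY} };
    static const struct op t1[] = { {SEND, 0}, {RECV, 0} };
    static const struct op t2[] = { {SEND, 0} };
    struct task tasks[3] = { {t0, 3, 0}, {t1, 2, 0}, {t2, 1, 0} };
    return runs_to_completion(tasks, 3, prefer_high);
}
```

When the first wildcard receive matches Task 1, the run completes; when it matches Task 2, Task 0 and Task 1 end up blocked in sends to each other. A tool that only reports errors that actually occur needs the real matched source from the MPI implementation to know which of these two runs it is observing.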

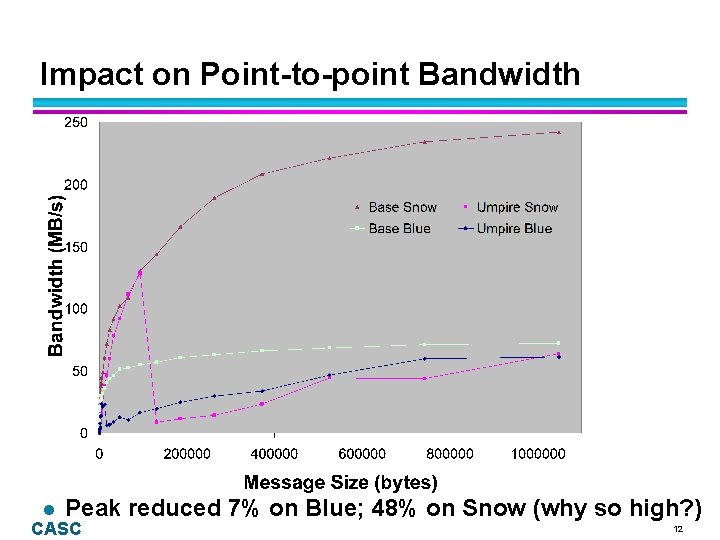

Impact on Point-to-point Bandwidth
- Peak reduced 7% on Blue; 48% on Snow (why so high?)

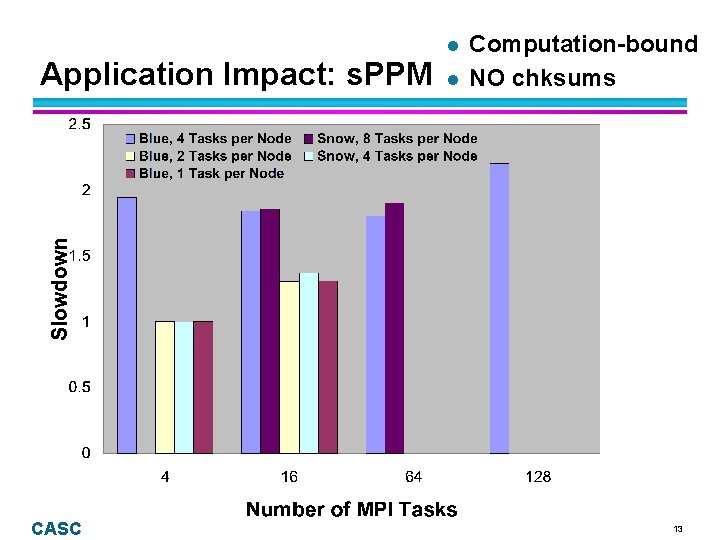

Application Impact: sPPM
- Computation-bound
- No checksums

Status
- Deadlock detection: nearly complete
  - All send/receive/collective operations
  - All derived communicator types
  - No MPI_Cancel, MPI_Probe support
  - Small issue for MPI_Intercomm_create
- Type matching machinery in place but not checked
- Send buffer overwrite detection is too expensive
- User interface needs improvement
- TCP/IP needed for MPI implementation verification
- Tool available for “hand-holding” testing

Conclusion
- First automated MPI debugging tool
  - Detects deadlocks
  - Eliminates resource leaks
  - Assures correct non-blocking sends
- Distributed memory implementation
- Bulk of MPI-1.2 Standard (considering parts of MPI-2)
- Results
  - Low overhead
  - Located resource errors in Sphinx

UCRL-VG-149019

Work performed under the auspices of the U.S. Department of Energy by University of California Lawrence Livermore National Laboratory under Contract W-7405-Eng-48.
