
Disk IO and Network Benchmark on VMs Qiulan Huang 26/11/2010

Benchmarking
- CPU benchmarks: HEP-SPEC06
- I/O benchmarks: IOZONE
- Network benchmarks: IPERF

Disk I/O Benchmark
- Running 8 iozone processes on the hypervisor
- Running an iozone process on each of 8 VMs at the same time
- IOZONE options: -Mce -I -+r -r 256k -s 8g -f /usr/vice/cache/iozone_$i.dat$$ -i 0 -i 1 -i 2
- Pay close attention to the read and write performance
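The parallel run described on this slide could be scripted roughly as follows. This is a minimal sketch, not the script used for these measurements: the helper names `build_iozone_cmd` and `launch_parallel` are hypothetical, the process index is assumed to run from 1 to 8, and the shell variable `$$` in the file name is assumed to be the PID of the launching process, replaced here by `os.getpid()`.

```python
import os
import subprocess


def build_iozone_cmd(index, pid, cache_dir="/usr/vice/cache"):
    """Return the iozone argument list for process number `index`."""
    target = f"{cache_dir}/iozone_{index}.dat{pid}"
    return [
        "iozone",
        "-Mce",        # -M: report uname info; -c, -e: include close() and flush in timing
        "-I",          # direct I/O, bypassing the page cache
        "-+r",         # open files with O_RSYNC|O_SYNC
        "-r", "256k",  # record size
        "-s", "8g",    # file size
        "-f", target,  # per-process test file
        "-i", "0",     # test 0: write / rewrite
        "-i", "1",     # test 1: read / re-read
        "-i", "2",     # test 2: random read / write
    ]


def launch_parallel(count=8):
    """Start `count` iozone processes at once and wait for all of them.

    Returns the list of exit codes, one per process.
    """
    pid = os.getpid()
    procs = [
        subprocess.Popen(build_iozone_cmd(i, pid))
        for i in range(1, count + 1)
    ]
    return [p.wait() for p in procs]
```

Calling `launch_parallel(8)` on the hypervisor would correspond to the bare-metal case; for the VM case, one process per VM would have to be started on each guest at about the same time, which this sketch does not coordinate.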

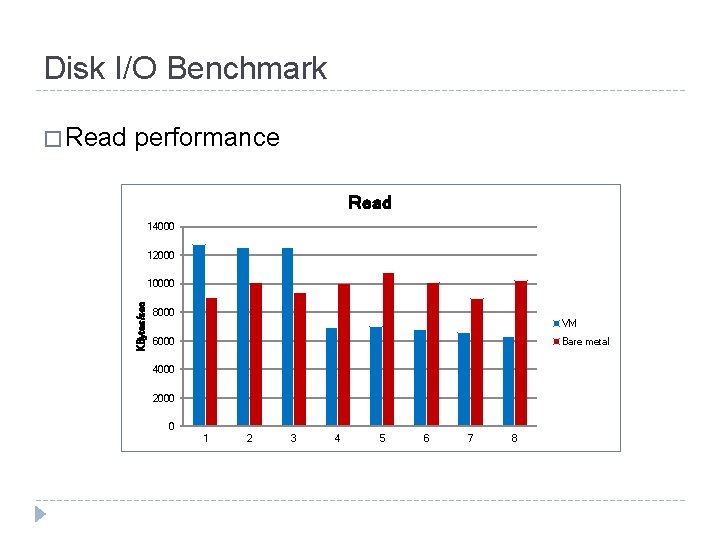

Disk I/O Benchmark
- Read performance

[Chart "Read": throughput in KBytes/sec (axis 0 to 14000), x-axis 1 to 8; series: VM, Bare metal]

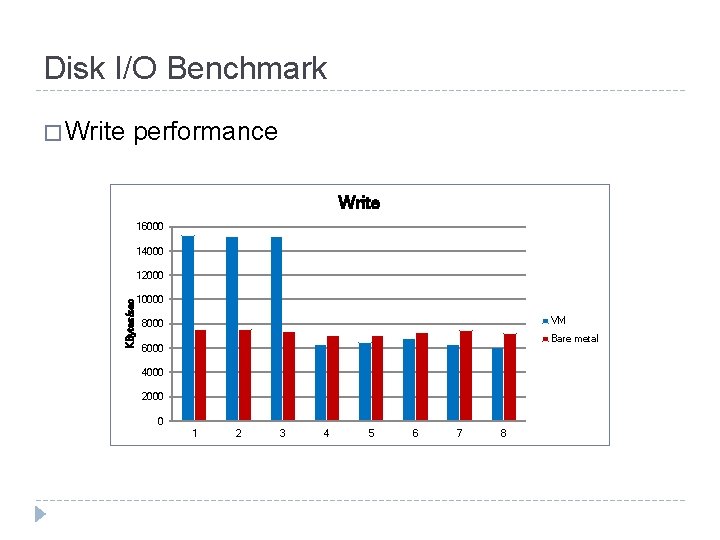

Disk I/O Benchmark
- Write performance

[Chart "Write": throughput in KBytes/sec (axis 0 to 16000), x-axis 1 to 8; series: VM, Bare metal]

Summary(1)
- For disk I/O, the write performance penalty on VMs is about 10%.
- The read performance loss is about 40%, much higher than the write penalty.
- Oddly, 3 VMs still get about twice the performance of bare metal.
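The percentages in this summary are relative losses against bare metal. A minimal sketch of that arithmetic follows; the function name `penalty_percent` and the input throughputs are hypothetical and are not values read from the charts.

```python
def penalty_percent(bare_metal, vm):
    """Relative performance loss of the VM versus bare metal, in percent.

    A negative result means the VM was faster than bare metal.
    """
    if bare_metal <= 0:
        raise ValueError("bare-metal throughput must be positive")
    return 100.0 * (bare_metal - vm) / bare_metal


# Hypothetical throughputs in KBytes/sec, chosen only to illustrate the formula.
write_penalty = penalty_percent(10000, 9000)   # VM reaches 90% of bare metal
read_penalty = penalty_percent(10000, 6000)    # VM reaches 60% of bare metal
faster_vm = penalty_percent(5000, 10000)       # VM twice as fast as bare metal
```

With this convention, a VM that runs twice as fast as bare metal shows up as a penalty of minus 100%, which makes outliers like the 3 VMs mentioned above easy to spot.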

Network Benchmark
- Tool: IPERF 2.0.4
- Options: '-p 11522 -w 458742 -t 60'
- TCP window size is 256 KB; the test duration is 60 secs (default is 10 secs)
- Physical server: lxbsq0910
- VM servers: vmbsq091000 to vmbsq091007
- Client: lxvmpool005

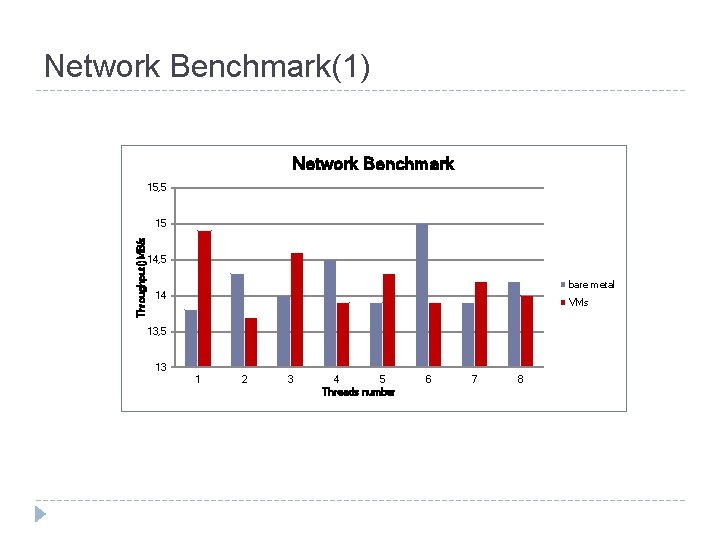

Benchmark Design(1)
- Run 8 parallel threads at the same time on the client to test the throughput of the hypervisor
- Start 8 VMs on the hypervisor and have them act as servers. On the client side, run 8 threads at almost the same time, each connecting to its own server
- Server side command:
  - iperf -s -p PortNumber -w 458742 -t 60
- Client side command:
  - iperf -c ServerIP -p PortNumber -w 458742 -P 8 -t 60
- The port number must be the same as the one used on the server side
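The server and client invocations on this slide can be assembled programmatically, which helps keep the port and window size identical on both sides. The following is a sketch under assumptions: the helper names `server_cmd` and `client_cmd` are hypothetical, and port 11522 and the host name are taken from the options listed on the Network Benchmark slide.

```python
def server_cmd(port, window=458742, duration=60):
    """Argument list for the iperf server side, mirroring the slide's options."""
    return ["iperf", "-s", "-p", str(port), "-w", str(window), "-t", str(duration)]


def client_cmd(server, port, window=458742, duration=60, parallel=None):
    """Argument list for the iperf client side.

    `parallel` maps to iperf's -P option (number of parallel client threads);
    leave it as None to run a single stream.
    """
    cmd = ["iperf", "-c", server, "-p", str(port), "-w", str(window)]
    if parallel is not None:
        cmd += ["-P", str(parallel)]
    cmd += ["-t", str(duration)]
    return cmd


# Example: 8 parallel threads against the physical server.
example_server = " ".join(server_cmd(11522))
example_client = " ".join(client_cmd("lxbsq0910", 11522, parallel=8))
```

The resulting lists can be passed to `subprocess.run` on the respective hosts; building both from the same `port` value avoids the mismatch the last bullet warns about.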

Network Benchmark(1)

[Chart "Network Benchmark": throughput (MB/s, axis 13 to 15.5) versus number of threads (1 to 8); series: bare metal, VMs]

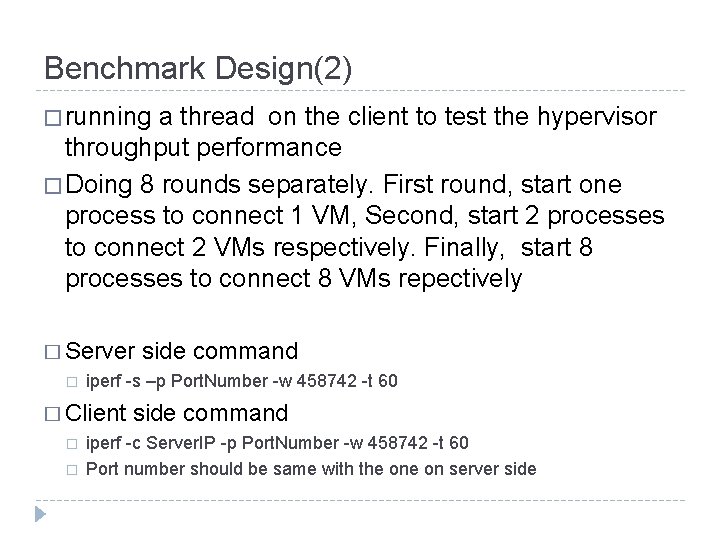

Benchmark Design(2)
- Run a single thread on the client to test the throughput of the hypervisor
- Run 8 separate rounds. In the first round, start 1 process connecting to 1 VM; in the second, start 2 processes connecting to 2 VMs, one each; and so on until the last round, which starts 8 processes connecting to 8 VMs, one each
- Server side command:
  - iperf -s -p PortNumber -w 458742 -t 60
- Client side command:
  - iperf -c ServerIP -p PortNumber -w 458742 -t 60
- The port number must be the same as the one used on the server side
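The round structure of this design can be written down as a simple schedule. The sketch below makes several assumptions that the slides do not state: round k targets the first k VMs in name order, every server listens on port 11522, and the names `round_plan` and `round_commands` are hypothetical.

```python
# VM server names from the Network Benchmark slide: vmbsq091000 .. vmbsq091007
VM_SERVERS = [f"vmbsq09100{i}" for i in range(8)]


def round_plan(servers=VM_SERVERS):
    """Return one list of target servers per round; round k uses the first k VMs."""
    return [servers[:k] for k in range(1, len(servers) + 1)]


def round_commands(port=11522, window=458742, duration=60, servers=VM_SERVERS):
    """Return, for each round, the client commands to start at the same time."""
    return [
        [
            ["iperf", "-c", host, "-p", str(port), "-w", str(window), "-t", str(duration)]
            for host in targets
        ]
        for targets in round_plan(servers)
    ]


plan = round_plan()
commands = round_commands()
```

Each inner list of `commands` holds the single-stream clients for one round, so the eighth entry contains eight commands, one per VM.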

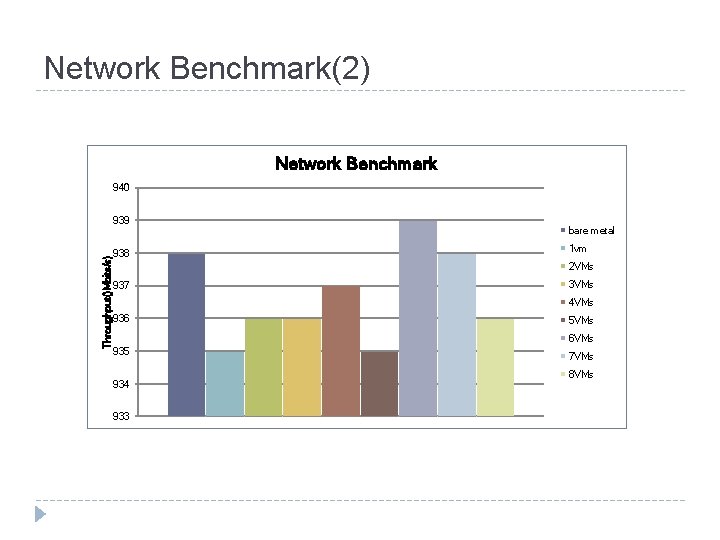

Network Benchmark(2)

[Chart "Network Benchmark": throughput (Mbits/s, axis 933 to 940); series: bare metal, 1 VM to 8 VMs]
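The two network charts use different units (MB/s in the first, Mbits/s in the second), so comparing them requires a conversion. The sketch below is illustrative only: the function name `mbytes_to_mbits` and the sample value are hypothetical, and since the slides do not say whether a megabyte means 10^6 or 2^20 bytes, that is left as a parameter.

```python
def mbytes_to_mbits(mbytes_per_s, bytes_per_mbyte=1_000_000):
    """Convert a throughput in MB/s to Mbits/s (1 Mbit = 10^6 bits)."""
    return mbytes_per_s * bytes_per_mbyte * 8 / 1_000_000


# Hypothetical per-thread value, not a number read from the charts.
decimal_mb = mbytes_to_mbits(14.5)                              # 1 MB = 10^6 bytes
binary_mb = mbytes_to_mbits(14.5, bytes_per_mbyte=1024 * 1024)  # 1 MB = 2^20 bytes
```

The two definitions differ by roughly 5%, which is larger than the spread shown in the Mbits/s chart, so the unit convention should be settled before comparing the two tests.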

Summary(2)
- The network performance penalty in VMs is about 3%, which is very encouraging.
- The second benchmark shows that network performance in VMs is nearly equal to that of the physical machine. Moreover, the run with 4 VMs gives a better value than bare metal.
- More study is needed to investigate and tune parameters to optimize network performance with real applications.

Questions?
