
CS 703 – Advanced Operating Systems By Mr. Farhan Zaidi

Lecture No. 9

Overview of today's lecture
- Shared variable analysis in multi-threaded programs
- Concurrency and synchronization
- Critical sections
- Solutions to the critical section problem
- Concurrency examples
- Re-cap of lecture

Shared Variables in Threaded C Programs
- Question: Which variables in a threaded C program are shared variables?
  - The answer is not as simple as "global variables are shared" and "stack variables are private".
- Answering it requires answers to the following questions:
  - What is the memory model for threads?
  - How are variables mapped to memory instances?
  - How many threads reference each of these instances?

Threads Memory Model
- Conceptual model:
  - Each thread runs in the context of a process.
  - Each thread has its own separate thread context: thread ID, stack pointer, program counter, condition codes, and general-purpose registers.
  - All threads share the remaining process context: the code, data, heap, and shared library segments of the process virtual address space, plus open files and installed handlers.
- Operationally, this model is not strictly enforced:
  - While register values are truly separate and protected, any thread can read and write the stack of any other thread.
- The mismatch between the conceptual and operational models is a source of confusion and errors.

What resources are shared?
- Local variables are not shared
  - They refer to data on the stack, and each thread has its own stack.
  - Never pass, share, or store a pointer to a local variable on another thread's stack!
- Global variables are shared
  - They are stored in the static data segment, accessible by any thread.
- Dynamic objects are shared
  - They are stored in the heap and are shared if you can name them.
  - In C, you can conjure up the pointer, e.g., `void *x = (void *) 0xDEADBEEF;`
  - In Java, strong typing prevents this; references must be passed explicitly.

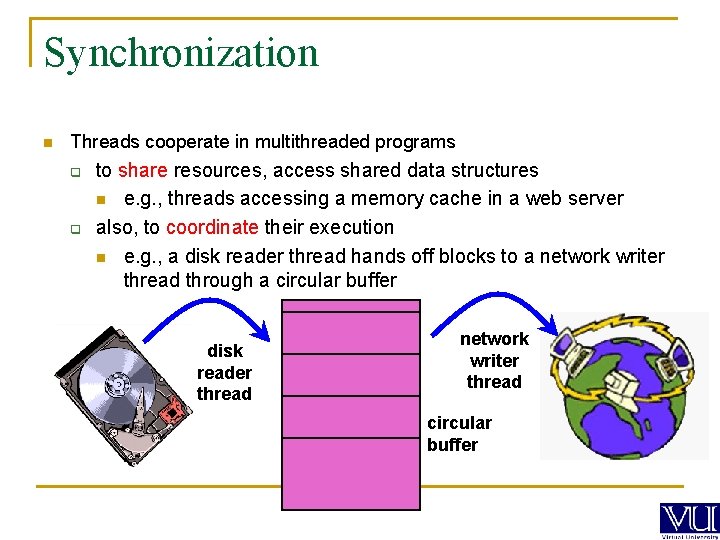

Synchronization
- Threads cooperate in multithreaded programs
  - to share resources and access shared data structures
    - e.g., threads accessing a memory cache in a web server
  - and to coordinate their execution
    - e.g., a disk reader thread hands off blocks to a network writer thread through a circular buffer

[Figure: disk reader thread → circular buffer → network writer thread]

- For correctness, we have to control this cooperation
  - We must assume threads interleave executions arbitrarily and at different rates.
    - Scheduling is not under the application writer's control.
- We control cooperation using synchronization
  - Synchronization enables us to restrict the interleaving of executions.
- Note: this also applies to processes, not just threads.
- It also applies across machines in a distributed system.

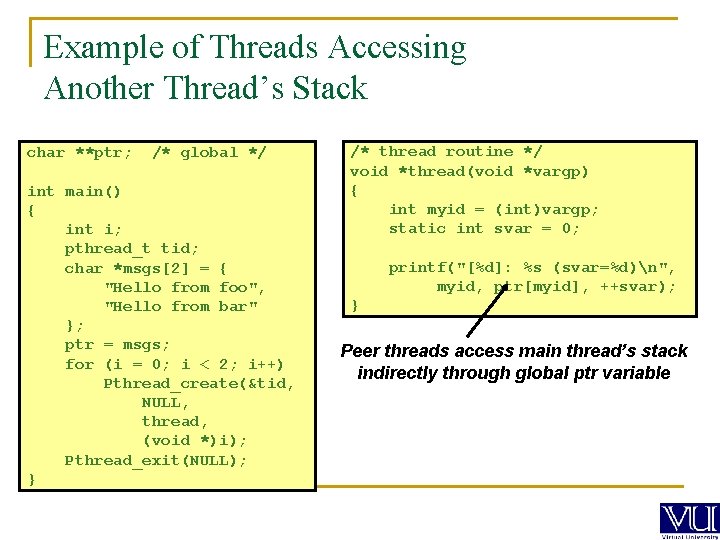

Example of Threads Accessing Another Thread's Stack

    #include <pthread.h>
    #include <stdint.h>
    #include <stdio.h>

    char **ptr; /* global */

    void *thread(void *vargp);

    int main(void)
    {
        int i;
        pthread_t tid;
        char *msgs[2] = {
            "Hello from foo",
            "Hello from bar"
        };
        ptr = msgs;
        for (i = 0; i < 2; i++)
            pthread_create(&tid, NULL, thread, (void *)(intptr_t)i);
        pthread_exit(NULL);
    }

    /* thread routine */
    void *thread(void *vargp)
    {
        int myid = (int)(intptr_t)vargp;
        static int svar = 0;
        printf("[%d]: %s (svar=%d)\n", myid, ptr[myid], ++svar);
        return NULL;
    }

Peer threads access the main thread's stack indirectly through the global ptr variable.


Mapping Variables to Memory Instances

For the program above (v.m denotes the instance of v in the main thread; v.p0 and v.p1 denote instances in peer threads 0 and 1):
- Global var: 1 instance (ptr [data])
- Local automatic vars in main: 1 instance each (i.m, msgs.m [main thread's stack])
- Local automatic var in thread: 2 instances (myid.p0 [peer thread 0's stack], myid.p1 [peer thread 1's stack])
- Local static var: 1 instance (svar [data])

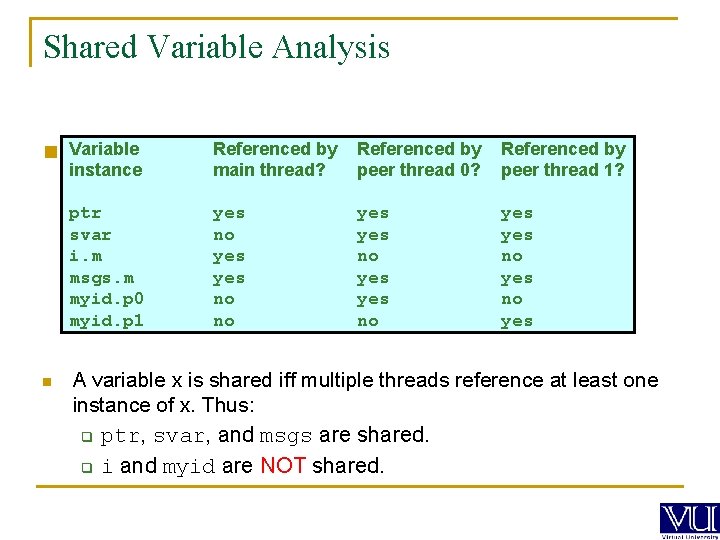

Shared Variable Analysis
- Which variables are shared?

| Variable instance | Referenced by main thread? | Referenced by peer thread 0? | Referenced by peer thread 1? |
|---|---|---|---|
| ptr | yes | yes | yes |
| svar | no | yes | yes |
| i.m | yes | no | no |
| msgs.m | yes | yes | yes |
| myid.p0 | no | yes | no |
| myid.p1 | no | no | yes |

- A variable x is shared iff multiple threads reference at least one instance of x. Thus:
  - ptr, svar, and msgs are shared.
  - i and myid are NOT shared.

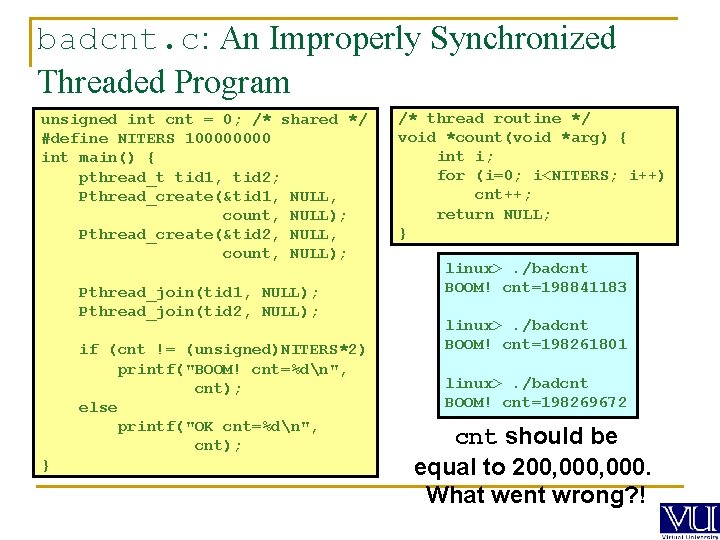

badcnt.c: An Improperly Synchronized Threaded Program

    #include <pthread.h>
    #include <stdio.h>

    #define NITERS 100000000

    unsigned int cnt = 0; /* shared */

    void *count(void *arg);

    int main(void)
    {
        pthread_t tid1, tid2;
        pthread_create(&tid1, NULL, count, NULL);
        pthread_create(&tid2, NULL, count, NULL);
        pthread_join(tid1, NULL);
        pthread_join(tid2, NULL);

        if (cnt != (unsigned)NITERS*2)
            printf("BOOM! cnt=%u\n", cnt);
        else
            printf("OK cnt=%u\n", cnt);
        return 0;
    }

    /* thread routine */
    void *count(void *arg)
    {
        int i;
        for (i = 0; i < NITERS; i++)
            cnt++;
        return NULL;
    }

    linux> ./badcnt
    BOOM! cnt=198841183
    linux> ./badcnt
    BOOM! cnt=198261801
    linux> ./badcnt
    BOOM! cnt=198269672

cnt should be equal to 2 × NITERS = 200,000,000. What went wrong?!

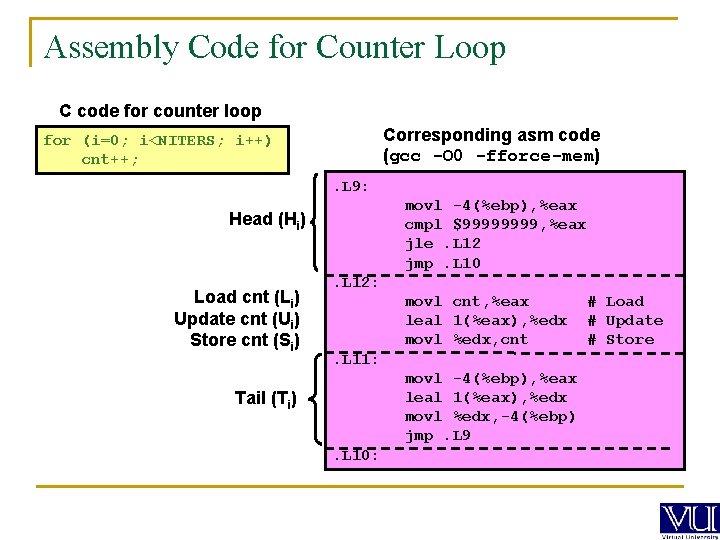

Assembly Code for Counter Loop

C code for counter loop:

    for (i=0; i<NITERS; i++)
        cnt++;

Corresponding asm code (gcc -O0 -fforce-mem):

    .L9:                          # Head (Hi)
        movl -4(%ebp),%eax
        cmpl $9999,%eax
        jle .L12
        jmp .L10
    .L12:
        movl cnt,%eax             # Load cnt (Li)
        leal 1(%eax),%edx         # Update cnt (Ui)
        movl %edx,cnt             # Store cnt (Si)
    .L11:                         # Tail (Ti)
        movl -4(%ebp),%eax
        leal 1(%eax),%edx
        movl %edx,-4(%ebp)
        jmp .L9
    .L10:

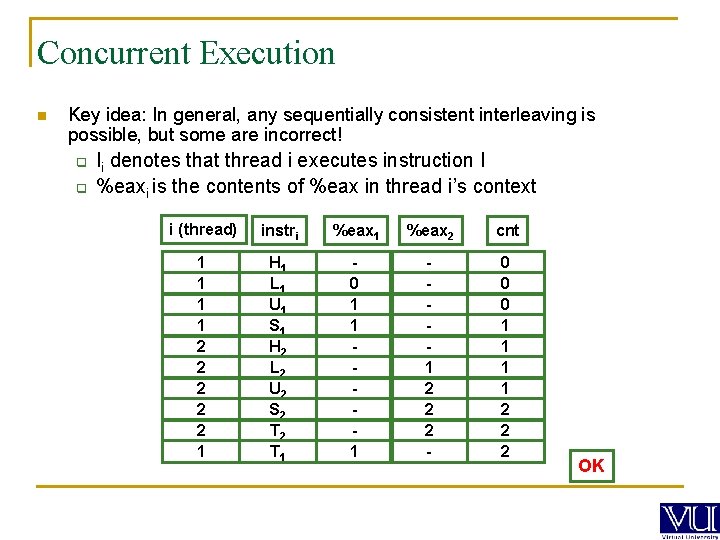

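Concurrent Execution
- Key idea: In general, any sequentially consistent interleaving is possible, but some are incorrect!
  - Ii (e.g., L1) denotes that thread i executes instruction I.
  - %eaxi (e.g., %eax1) is the contents of %eax in thread i's context.

| i (thread) | Instruction | %eax1 | %eax2 | cnt |
|---|---|---|---|---|
| 1 | H1 | - | - | 0 |
| 1 | L1 | 0 | - | 0 |
| 1 | U1 | 1 | - | 0 |
| 1 | S1 | 1 | - | 1 |
| 2 | H2 | - | - | 1 |
| 2 | L2 | - | 1 | 1 |
| 2 | U2 | - | 2 | 1 |
| 2 | S2 | - | 2 | 2 |
| 2 | T2 | - | 2 | 2 |
| 1 | T1 | 1 | - | 2 |

OK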

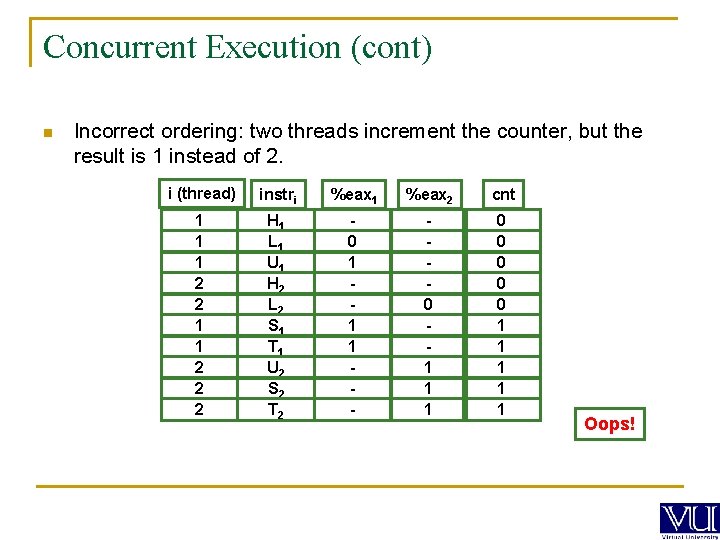

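Concurrent Execution (cont)
- Incorrect ordering: two threads increment the counter, but the result is 1 instead of 2.

| i (thread) | Instruction | %eax1 | %eax2 | cnt |
|---|---|---|---|---|
| 1 | H1 | - | - | 0 |
| 1 | L1 | 0 | - | 0 |
| 1 | U1 | 1 | - | 0 |
| 2 | H2 | - | - | 0 |
| 2 | L2 | - | 0 | 0 |
| 1 | S1 | 1 | - | 1 |
| 1 | T1 | 1 | - | 1 |
| 2 | U2 | - | 1 | 1 |
| 2 | S2 | - | 1 | 1 |
| 2 | T2 | - | 1 | 1 |

Oops!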

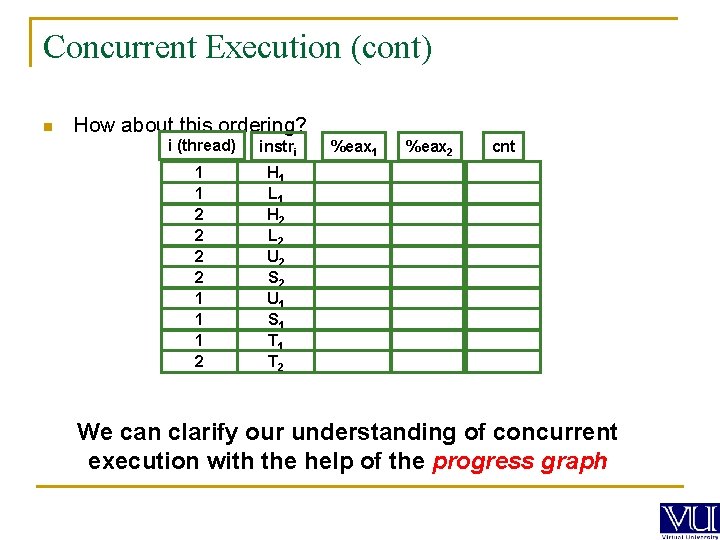

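Concurrent Execution (cont)
- How about this ordering?

| i (thread) | Instruction | %eax1 | %eax2 | cnt |
|---|---|---|---|---|
| 1 | H1 | - | - | 0 |
| 1 | L1 | 0 | - | 0 |
| 2 | H2 | - | - | 0 |
| 2 | L2 | - | 0 | 0 |
| 2 | U2 | - | 1 | 0 |
| 2 | S2 | - | 1 | 1 |
| 1 | U1 | 1 | - | 1 |
| 1 | S1 | 1 | - | 1 |
| 1 | T1 | 1 | - | 1 |
| 2 | T2 | - | 1 | 1 |

- Again the result is 1 instead of 2.
- We can clarify our understanding of concurrent execution with the help of the progress graph.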

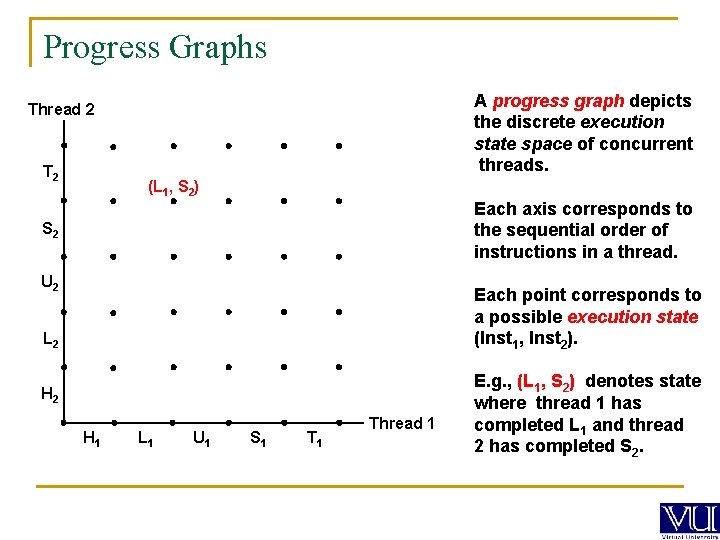

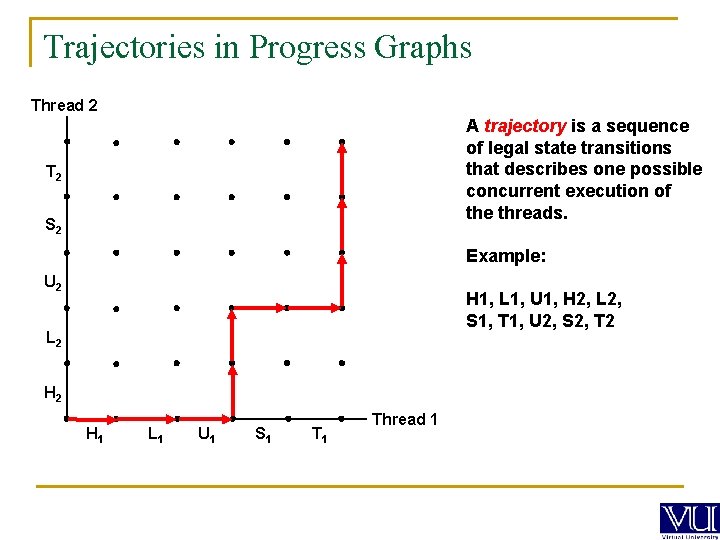

Progress Graphs
- A progress graph depicts the discrete execution state space of concurrent threads.
- Each axis corresponds to the sequential order of instructions in a thread.
- Each point corresponds to a possible execution state (Inst1, Inst2).
- E.g., (L1, S2) denotes the state where thread 1 has completed L1 and thread 2 has completed S2.

[Figure: progress graph with Thread 1's instructions on the horizontal axis and Thread 2's on the vertical axis; the point (L1, S2) is marked.]

Trajectories in Progress Graphs
- A trajectory is a sequence of legal state transitions that describes one possible concurrent execution of the threads.
- Example: H1, L1, U1, H2, L2, S1, T1, U2, S2, T2

[Figure: the example trajectory drawn as a path through the progress graph of Thread 1 vs. Thread 2.]

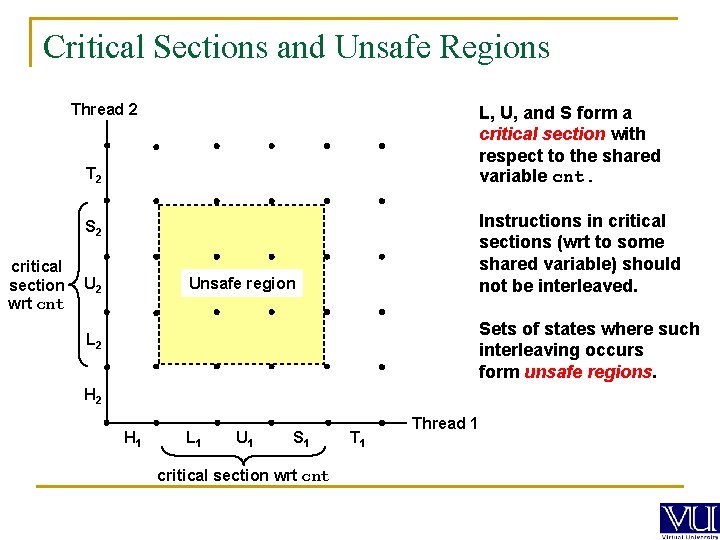

Critical Sections and Unsafe Regions
- L, U, and S form a critical section with respect to the shared variable cnt.
- Instructions in critical sections (with respect to some shared variable) should not be interleaved.
- Sets of states where such interleaving occurs form unsafe regions.

[Figure: progress graph in which L1 through S1 and L2 through S2 are marked as the critical sections with respect to cnt on each axis; the region where they overlap is the unsafe region.]

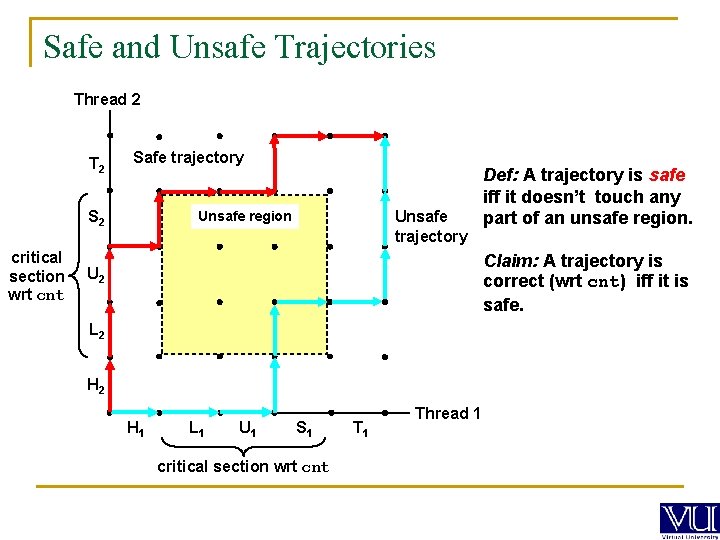

Safe and Unsafe Trajectories
- Def: A trajectory is safe iff it does not touch any part of an unsafe region.
- Claim: A trajectory is correct (with respect to cnt) iff it is safe.

[Figure: progress graph showing a safe trajectory that passes around the unsafe region and an unsafe trajectory that crosses it.]

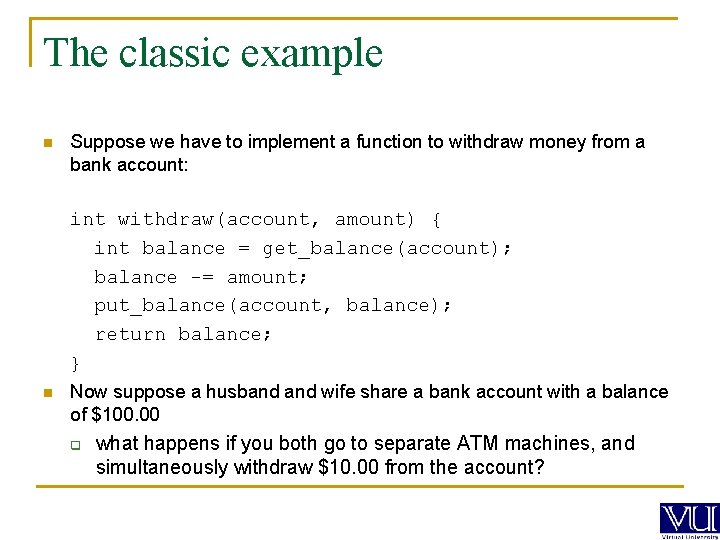

The classic example

Suppose we have to implement a function to withdraw money from a bank account:

    int withdraw(account, amount) {
        int balance = get_balance(account);
        balance -= amount;
        put_balance(account, balance);
        return balance;
    }

- Now suppose a husband and wife share a bank account with a balance of $100.00.
  - What happens if they both go to separate ATMs and simultaneously withdraw $10.00 from the account?

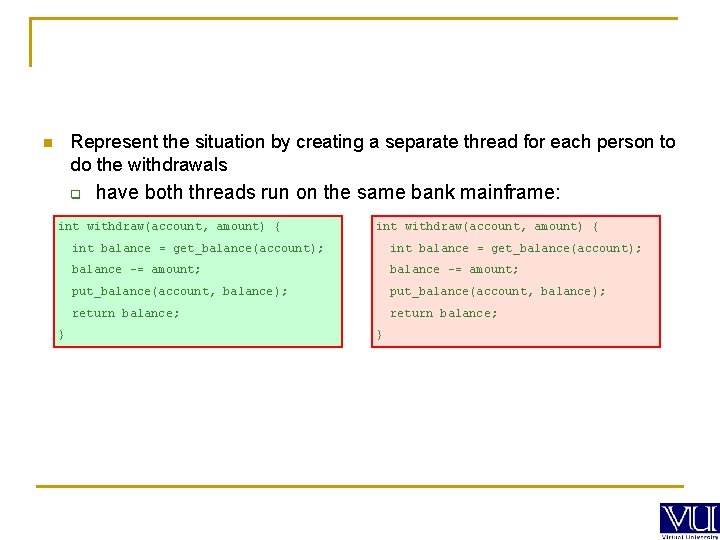

- Represent the situation by creating a separate thread for each person to do the withdrawals.
  - Have both threads run on the same bank mainframe: each thread executes the same withdraw() code shown above.

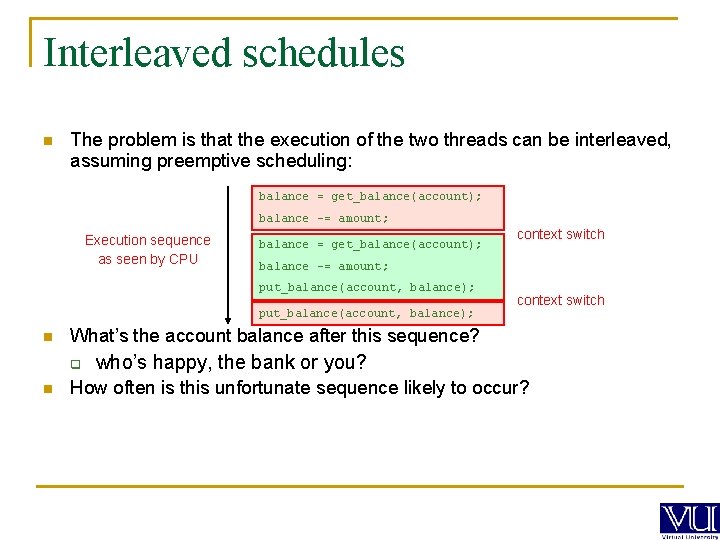

Interleaved schedules
- The problem is that the execution of the two threads can be interleaved, assuming preemptive scheduling.

Execution sequence as seen by the CPU:

    balance = get_balance(account);      /* thread 1 */
    balance -= amount;                   /* thread 1 */
        --- context switch ---
    balance = get_balance(account);      /* thread 2 */
    balance -= amount;                   /* thread 2 */
    put_balance(account, balance);       /* thread 2 */
        --- context switch ---
    put_balance(account, balance);       /* thread 1 */

- What's the account balance after this sequence?
  - Who's happy, the bank or you?
- How often is this unfortunate sequence likely to occur?

Race conditions and concurrency
- Atomic operation: an operation that always runs to completion, or not at all. It is indivisible and cannot be stopped in the middle.
- On most machines, memory references and assignments (loads and stores) of words are atomic.
- Many instructions are not atomic. For example, on many 32-bit architectures a double-precision floating-point store may not be atomic, because it can involve two separate memory operations.

The crux of the problem
- Two concurrent threads (or processes) access a shared resource (the account) without any synchronization.
  - This creates a race condition: the output is non-deterministic and depends on timing.
- We need mechanisms for controlling access to shared resources in the face of concurrency,
  - so that we can reason about the operation of programs,
    - essentially re-introducing determinism.
- Synchronization is necessary for any shared data structure:
  - buffers, queues, lists, hash tables, scalars, …

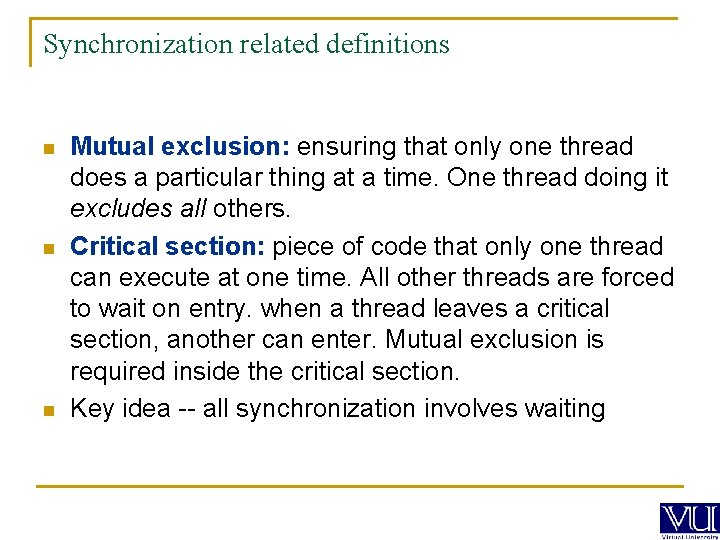

Synchronization-related definitions
- Mutual exclusion: ensuring that only one thread does a particular thing at a time. One thread doing it excludes all others.
- Critical section: a piece of code that only one thread can execute at a time.
  - All other threads are forced to wait on entry.
  - When a thread leaves a critical section, another can enter.
  - Mutual exclusion is required inside the critical section.
- Key idea: all synchronization involves waiting.

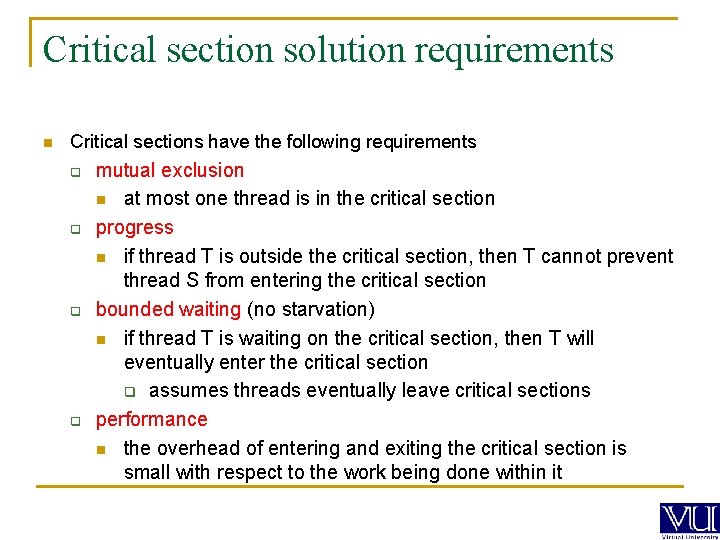

Critical section solution requirements
- Critical sections have the following requirements:
  - Mutual exclusion
    - At most one thread is in the critical section.
  - Progress
    - If thread T is outside the critical section, then T cannot prevent thread S from entering the critical section.
  - Bounded waiting (no starvation)
    - If thread T is waiting on the critical section, then T will eventually enter the critical section.
    - This assumes threads eventually leave critical sections.
  - Performance
    - The overhead of entering and exiting the critical section is small with respect to the work being done within it.

Synchronization: Too Much Milk

Person A:
- 3:00 Look in fridge. Out of milk.
- 3:05 Leave for store.
- 3:10 Arrive at store.
- 3:15 Buy milk.
- 3:20 Arrive home, put milk in fridge.

Person B (starts while A is away, finishing by 3:30):
- Look in fridge. Out of milk.
- Leave for store.
- Arrive at store.
- Buy milk.
- Arrive home, put milk in fridge.

Oops!! Too much milk.

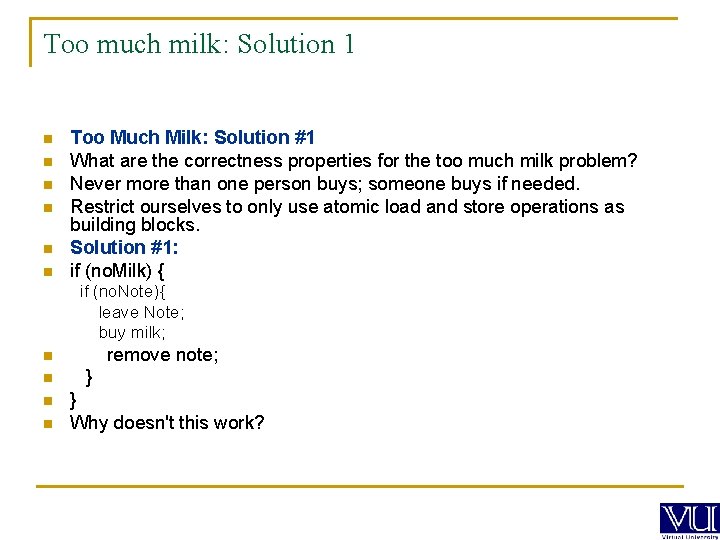

Too much milk: Solution 1
- What are the correctness properties for the too much milk problem?
  - Never more than one person buys; someone buys if needed.
- Restrict ourselves to only use atomic load and store operations as building blocks.

Solution #1:

    if (noMilk) {
        if (noNote) {
            leave Note;
            buy milk;
            remove note;
        }
    }

Why doesn't this work?

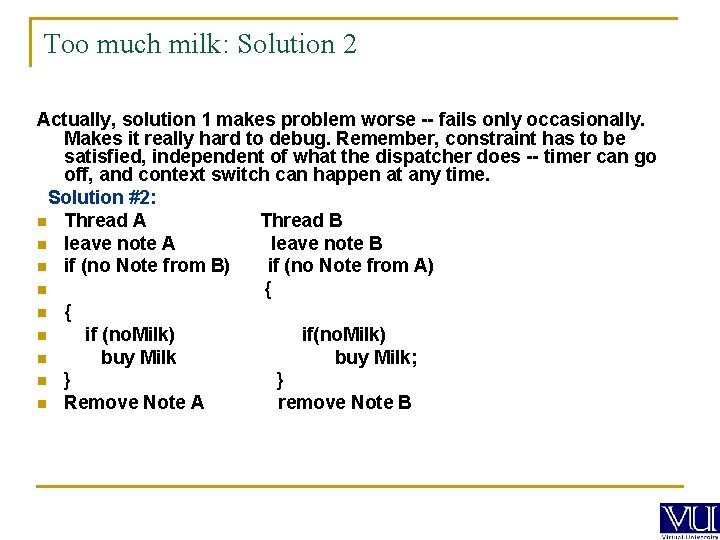

Too much milk: Solution 2

Actually, Solution 1 makes the problem worse: it fails only occasionally, which makes it really hard to debug. Remember, the constraint has to be satisfied independent of what the dispatcher does: the timer can go off, and a context switch can happen at any time.

Solution #2:

    Thread A                      Thread B
    leave note A;                 leave note B;
    if (noNote from B) {          if (noNote from A) {
        if (noMilk)                   if (noMilk)
            buy Milk;                     buy Milk;
    }                             }
    remove note A;                remove note B;
